Abstract

In this Methods article, we discuss and illustrate a unifying, principled way to analyze response time data from psychological experiments—and all other types of time-to-event data. We advocate the general application of discrete-time event history analysis (EHA) which is a well-established, intuitive longitudinal approach to statistically describe and model the shape of time-to-event distributions. After discussing the theoretical background behind the so-called hazard function of event occurrence in both continuous and discrete time units, we illustrate how to calculate and interpret the descriptive statistics provided by discrete-time EHA using two example data sets (masked priming, visual search). In case of discrimination data, the hazard analysis of response occurrence can be extended with a microlevel speed-accuracy trade-off analysis. We then discuss different approaches for obtaining inferential statistics. We consider the advantages and disadvantages of a principled use of discrete-time EHA for time-to-event data compared to (a) comparing means with analysis of variance, (b) other distributional methods available in the literature such as delta plots and continuous-time EHA methods, and (c) only fitting parametric distributions or computational models to empirical data. We conclude that statistically controlling for the passage of time during data analysis is

Keywords

Since the publication of the subtraction method (Donders, 1969) and the additive factors method (Sternberg, 1969), analysis of variance (ANOVA) has become the standard data analysis method in psychology and cognitive (neuro)science for the analysis of response times (RTs). Following these approaches, many researchers interpret differences in RTs between experimental conditions on a difference scale that is assumed to directly capture the time requirements of additional cognitive operations. However, differences in mean RT can only be interpreted that way when assuming that the nature of cognitive processing is captured by the serial information processing framework. Even though the serial information processing framework has been criticized repeatedly in the literature (Cisek & Kalaska, 2010; Eriksen & Schultz, 1979; McClelland, 1979; Pieters, 1983; Schöner et al., 2016), ANOVA continues to be the most popular method to analyze RTs to this day.

As discussed by Van Gelder (1995), there is a viable alternative view on the nature of cognitive processing: Cognition is the behavior of a dynamical system. To understand the behavior of a dynamical system, it is crucial to track its output over time (Schöner et al., 2016). We therefore promote and illustrate the use of a well-established longitudinal or distributional technique known as event history analysis (EHA) for analyzing time-to-event data such as RTs. EHA (also known as survival, hazard, duration, transition, and failure-time analysis) is the name of the standard set of statistical methods for studying

To apply EHA, we must be able to define the event of interest (any qualitative change that can be situated in time), to define time point zero, and to measure the passage of time between time zero and event occurrence in discrete or continuous time units. While sociologists are interested in the occurrence and timing of events such as marriage and divorce—note that some people never marry—and biostatisticians in death, experimental psychologists are interested in events such as button presses (RT analysis), saccade onsets (saccade latency analysis), fixation offsets (fixation duration analysis), and so forth. Typically, time point zero is defined as target display onset time in RT and saccade latency studies. However, sometimes the time of the last response can be defined as time zero for the next response, for example, when studying perceptual dominance durations in studies using ambiguous figures. The onset of fixation is time zero for fixation duration analysis.

The structure of this Methods article is as follows. First, we introduce and explain the concept of hazard, in continuous and discrete time units. Next, we illustrate how to calculate the descriptive statistics in discrete time using a life table, and we discuss two example data sets. We then describe different approaches for obtaining inferential statistics. We end with a discussion of the (dis)advantages of discrete-time EHA, compared with other existing distributional methods.

The Continuous-Time Hazard Rate Function of Event Occurrence

Luce (1986) mentions that there are several different, but mathematically equivalent, ways to present the information about a continuous random variable T denoting a particular person’s RT in a particular experimental condition, including (a) the cumulative distribution function In principle, we may present the data as estimates of any of these functions and it should not matter which we use. In practice, it matters a great deal, although that fact does not seem to have been as widely recognized by psychologists as it might be. (Luce, 1986, p. 17)

First, the hazard function of response occurrence is one of the most diagnostic functions when describing the distribution of a sample of time-to-event data (Allison, 2010; Luce, 1986; Townsend, 1990). For example, “the hazard function itself is one of the most revealing plots because it displays what is going on locally without favoring either short or long times, and it can be strikingly different for

Four views on four different waiting-time distributions in continuous time. The hazard rate function

Second, because RT distributions may differ from one another in multiple ways, Townsend (1990) developed a dominance hierarchy of statistical differences between two arbitrary distributions A and B. For example, if

Third, EHA does not discard right-censored observations when estimating hazard functions, that is, trials for which we do not observe a response during the data collection period so that we only know that the RT must be larger than some value. This is important because although a few right-censored observations are inevitable in most RT tasks, a lot of right-censored observations are expected in experiments on masking, the attentional blink, and so forth, for example.

There are other types of censoring. Left censoring occurs when all that is known about an observation on a variable T is that it is

Importantly, all standard statistical methods for time-to-event data require that random censoring be noninformative: For example, a trial that is censored at time c should be representative of all those trials with the same values of the explanatory variables that survive to c (Allison, 2010). For example, the occurrence of an equipment error during a trial will introduce random censoring that is uninformative. However, when estimating the hazard of correct response occurrence, error responses introduce random censoring (and vice versa) that is very likely informative, because response channels are known to compete with one another (Burle et al., 2004; Eriksen et al., 1985; Praamstra & Seiss, 2005). We therefore never recommend to describe or model the hazard of correct response occurrence independently from the hazard of error response occurrence but to extend the hazard of response occurrence with conditional accuracy functions (see later).

The most common type of right-censoring is “singly Type I censoring” that applies when the experiment uses a fixed response deadline for all trials. “Type I” means that the censoring time is fixed and is under the control of the experimenter, and “singly” refers to the fact that all observations have the same censoring time (Allison, 2010). Discarding such trials—or trials with very long RTs in case the experimenter waits for a response on each trial—may introduce a sampling bias that results in underestimation of the mean. In contrast, EHA can include the data information from all trials when estimating the descriptive statistics.

Fourth, hazard modeling allows incorporating

Finally, hazard is more suited as a measure of the concept of processing capacity, that is, the amount of work the observer is capable of performing within some unit of time (Wenger & Gibson, 2004). The hazard function can capture the notion of the instantaneous capacity of the observer for completing the task in the next instant, given that the observer has not yet completed the task.

The Discrete-Time Hazard Probability Function of Event Occurrence

Unfortunately, estimating the shape of the continuous-time hazard rate function for one observer in one experimental condition is not straightforward because one needs at least 1,000 trials for example (Bloxom, 1984; Luce, 1986; Van Zandt, 2000). However, by shifting to discrete time, we can trade-off some temporal resolution for increased applicability of EHA in RT studies that typically collect less than 1,000 trials per condition per participant. In this Methods article, we therefore focus on the application of

In Figure 2A, four hypothetical discrete-time population hazard functions are plotted with time divided in 10 discrete bins (1–10). Each function was constructed by selecting a series of 10 real numbers from the interval [0,1] with replacement, with the only restriction that once “1.0” is selected then the following numbers are set to “missing data”—the reader can construct her or his own example functions. Each hazard function completely describes the shape of a distribution of discrete waiting times. For example, the four theoretical functions in Figure 2A could reflect the true RT distributions of a single participant in four experimental conditions (studied with a small-

Four views on four different waiting-time distributions in discrete time. The hazard probability function

Figure 2C displays the corresponding discrete-time survivor functions, or

We constructed the hazard functions in Figure 2A in such a way that they show some symmetry. For example, Condition 1 (black line) might represent a neutral priming condition and Conditions 2 and 3 a congruent and incongruent priming condition, respectively. Let us assume for simplicity that each bin is 1 second wide and that the censoring time equals 10 seconds so that we have the following sequence of bins: (0,1], (1,2], … , (9,10]. For example, the discrete-time hazard for bin 2 in the neutral condition equals .20 (for bins 1–3, the hazard functions for the first three conditions lie on top of each other). In other words, given that time has passed until 1 second after target onset without response occurrence, then there is a conditional probability of .2 that the response occurs sometime during the next second, that is, in the second bin or time segment (1,2]. In short,

If we now compare Conditions 2 and 3 (green and red lines), we see a large positive priming effect in hazard for time segment (3,6] followed by a smaller negative (i.e., inverted) priming effect for time segment (7,8]. Note that while the hazard functions for Conditions 2 and 3 cross two times, the

Similarly, note that the symmetry present in the hazard functions for the first three conditions is also absent in the

Obtaining Descriptive Statistics for Discrete Time Units: The Life Table

To calculate the descriptive statistics—functions of discrete time—for a finite time-to-event data set, one has to set up a

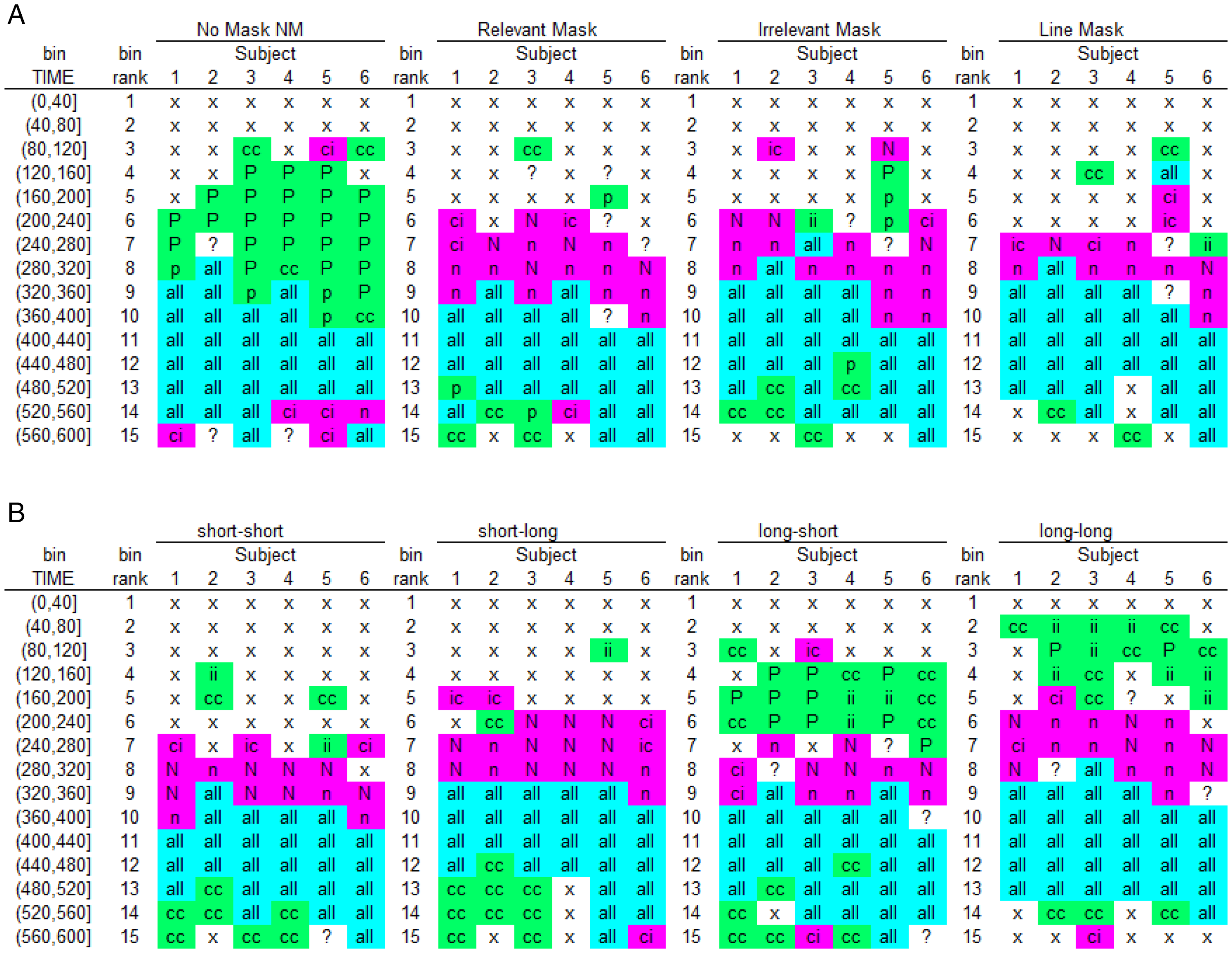

Masked Priming

Panis and Schmidt (2016) asked participants to perform speeded keypress responses to the direction of a 94 ms double arrow target (left/right), within 600 ms (Figure 3A). The central target could be preceded by a central 13 ms double arrow prime that was followed by a 94-ms pattern mask. The factors prime type and mask type were manipulated factorially. The prime could point in the same direction as the target (CONgruent), in the opposite direction (INCONgruent), or no prime was presented (NP). The mask stimulus could be response-relevant (REL), response-irrelevant (IRREL), a set of random lines (LIN), or no mask was presented (NM). Consistent with the literature, the mean correct RT (Figure 3B) and mean error rates (Figure 3C) show a positive priming or congruency effect (PCE) of about 100 ms and 20 percentage points when no mask was presented, but the reversed effect in the presence of relevant or irrelevant masks: a negative congruency effect (NCE) of about –40 ms and –10 percentage points.

Masked priming example. (A) Trial and mask designs used in Experiment 1 of Panis and Schmidt (2016). A trial with a congruent prime and a relevant mask is shown. Insets show three mask types. Time on the

Table 1 presents the life table for the data of a single participant in condition NP-NM (no prime, no mask). The first 600 ms after target onset are divided into 15 bins of 40 ms indexed by t = 1 to 15. After counting the number of responses in each bin, one can then directly estimate the discrete-time hazard probability function of response occurrence:

Example Life Table for Discrete-Time Statistics.

Because we are dealing with two-button discrimination data, the

Sample-based estimates of

Sample-based estimates for Participant 6 in Experiment 1 of Panis and Schmidt (2016). For each combination of mask type (no mask and relevant mask) and prime type (congruent, no prime, incongruent), the estimated discrete-time hazard function

Once the waiting time has reached 280 ms after target onset without response occurrence, response hazards continue to increase temporarily for congruent primes but start to decline for incongruent primes and eventually even reach zero: in bin (360,400] after target onset, no responses are emitted when the prime is incongruent. In our view, this temporary decline in hazard reflects—at least initially—response competition from the target, which is becoming overtly available in bin 280 and activates the opposite (correct) response as the prime. In other words, this is the phase where the target starts taking over response control from the prime. After bin 400,

But something else is going on in the relevant mask condition (right panels). The first overt responses only appear around 320 ms after target onset. Overall, response hazards increase faster in incongruent than in congruent trials (with the no-prime condition in between), demonstrating a reversed priming or NCE in response occurrence. Moreover, the earliest emitted responses are typically correct in incongruent trials and typically incorrect in congruent trials: a complete reversal of the pattern in the no mask condition. When the target information becomes available, it now delays responses in the congruent condition around 360 ms after target onset. Following this temporary dip,

The hazard functions for congruent and incongruent trials thus show a partial ordering (i.e., only for t > 280 ms in the no mask condition, and for t > 320 ms in the relevant mask condition). In other words, the hazard functions reveal the onset time, duration, and shape of the behavioral effect. The differences in means also typically underestimate the duration of the effect in terms of hazard. For example, the within-trial duration of the PCE when the mask is absent is at least 200 ms (5 bins) and that of the NCE when the mask is relevant is at least 160 ms (4 bins). Also, plotting hazard and conditional accuracy functions can reveal important interindividual differences and the time-locking of effects to stimuli or other events. For example, Panis and Schmidt (2016) compared the dynamics of the priming effect in the

Sample-based

Panis and Schmidt (2016) concluded that the NCE is neither caused by automatic self-inhibition of the primed response due to backward masking nor by updating response-relevant features of the mask, but by active, selective mask-triggered inhibition. The mask thus acts as a

Visual Search

Panis et al. (2020) reanalyzed the benchmark visual search data sets collected by Wolfe et al. (2010). For example, in the color-orientation conjunction search task, 10 participants searched a single display for a red vertical rectangle among green vertical and red horizontal rectangles. Four different set sizes (target plus distractors; 3, 6, 12, or 18) were randomly intermixed. Participants pressed one key if the target was present (50% of trials) and another if the target was absent. They were instructed to respond as quickly and correctly as possible and received feedback after each trial. Accuracy and RT in ms were recorded. Each participant provided approximately 10 blocks of 400 trials, leading to about 500 trials per participant and search condition. Figure 6 shows the data for one representative participant in the color-orientation conjunction search task with a set size of 18 objects, using bins of 40 ms and a censoring time of 2,400 ms.

Visual search example. The data for one representative participant in each of the target-present and target-absent conditions of the color-orientation conjunction search task with set size 18 of Wolfe et al. (2010) are plotted as (A) hazard function

First, there is only a partial ordering of the hazard functions with respect to the effect of target presence (only for t < 600 ms), and the hazard functions are relatively flat for the right tail of the RT distributions. Second, false alarms occur mostly early in time, while misses occur mostly for medium-latency responses. The miss rate peaks around 600 ms after search display onset. As far as we know, none of these features of visual search behavior are predicted by current cognitive models of visual search (Panis et al., 2020).

One tentative interpretation of these data is based on the idea that behavior at any point in time is determined not only by the outcome of the ongoing search process but also by response biases and reactive cognitive control processes (Panis et al., 2020). For example, we can distinguish five phases in the time-dispersed behavior of this observer (the gray surface areas in Figure 6 mark phases two and four). First, if the waiting time has increased until 360 ms after search display onset, then

Second, in the time range 400–480 ms, hazard further increases for both conditions, while

Third, in the time range 480–560 ms, performance is optimal in the sense that (a) hazard is at its highest level so far, and (b) conditional accuracy is high for both target presence conditions. Around this point in time after display onset, behavior is thus determined mostly by the outcome of the search process. However, for a subset of trials, no overt decision is made and time passes on.

Fourth, in the time range 560–640 ms, the difference in hazard disappears, and a no-bias develops as the miss rate reaches a maximum, and there are no false alarms. In other words, if the waiting time has increased until 560 ms, then

Finally, after 640 ms, hazard functions are flat and most emitted responses are correct. In other words, the system quits the search and finally transitions to a state with flat hazard functions without a systematic effect of target presence. Horizontally shaped hazard functions point to exponentially distributed RTs. Based on the findings of Shenoy et al. (2013), we assume that these flat right tails reflect RT outliers during decision making. Shenoy et al. (2013) described neuronal motor activity in macaque monkeys from a dynamical systems perspective by studying single-trial neural trajectories in a state space. They found that the neural state wanders before falling back on track in RT outlier trials so that the monkey hesitated for an abnormally long time before movement onset. Interestingly, Thompson et al. (1996) found that much of the RT variance in search tasks is due to postperceptual motor processing, perhaps to provide the adaptive advantage of allowing for subsequent visual processing and cognitive factors to alter the response choice before an irrevocable commitment is made. For example, one might keep inspecting a few more items even though the no-response is already selected in the target-absent condition. Similarly, one might explicitly compare the presumed target with a few surrounding distractors to confirm target presence, even though the yes-response is already selected in the target-present condition.

Both these and other discrete-time EHA studies of simultaneous masking (Panis & Hermens, 2014), object recognition (Panis et al., 2017; Panis & Wagemans, 2009; Torfs et al., 2010), spatial cueing (Panis, 2020; Panis & Schmidt, 2020), and priming (Wolkersdorfer et al., 2020) teach us three things: (a) Mean performance measures conceal crucial information about behavioral dynamics such as premature response activation, time-locking, response suppression, and how performance changes as time passes by within

Note that when you measure time in continuous units, the survivor function

Obtaining Inferential Statistics

There are several approaches for obtaining inferential statistics (Allison, 2010; Austin, 2017). When you simply want to compare survival functions between two groups in continuous time (large-

When you want to study how hazard depends on various predictors, you can fit regression models to the data (Singer & Willett, 2003). An example

The main predictor variable TIME is the time bin index t (see Table 1) that is centered on value 1 in this example. The complementary log-log link is preferred over the logit link when events can occur in principle at any time point within a bin, which is the case for RT data (Singer & Willett, 2003). The first set of terms within brackets, the alpha parameters multiplied by their polynomial specifications of (centered) time, represents the shape of the baseline cloglog-hazard function (i.e., when all predictors Xi take on a value of zero). The second set of terms (the beta parameters) represents the vertical shift in the baseline cloglog-hazard for a 1 unit increase in the respective predictor. Predictors can be discrete, continuous, and time-varying or time-invariant. For example, the effect of a 1 unit increase in X1 is to vertically shift the whole baseline cloglog-hazard function by β1 cloglog-hazard units. However, if the predictor interacts linearly with time (see X2 in the example), then the effect of a 1 unit increase in X2 is to vertically shift the predicted cloglog-hazard in bin 1 by β2 cloglog-hazard units (when TIME–1 = 0), in bin 2 by β2+ β3 cloglog-hazard units (when TIME–1 = 1), and so forth. To interpret the effects of the predictors, the parameter estimates are exponentiated, resulting in a hazard ratio (due to the use of the cloglog link).

In the case of a large-

When you treat time continuously, you can fit parametric models (e.g., a lognormal hazard model, an exponential hazard model, and so forth; Figure 1), semiparametric models such as the Cox regression model that ignores the shape of the hazard function and only tests the beta parameters, or piecewise exponential models (Allison, 2010). A piecewise exponential model is useful when (a) event times are measured precisely, (b) you want to estimate the shape of the hazard function, and (c) you do not want to impose a parametric model: Time is divided into intervals, and the hazard rate is assumed to be constant within each interval (i.e., exponentially distributed RTs within each interval).

The use of rather complex regression models to analyze hazard and conditional accuracy functions, and the employment of stepwise techniques to find the best model, harbor the danger of over- or underfitting the data, especially when the model is tested with the same data to which it was fitted.

We can shortly illustrate a very simple and immensely useful jackknifing procedure suggested by Ulrich and Miller (2001). Consider the data in Figure 4A (left panel), where we found that the hazard function for incongruent trials experiences a temporary drop in performance (Panis & Schmidt, 2016). If we know from previous experiments that such effects can take place in a certain time window, we can use that window as a region of interest (ROI). The jackknifing procedure now consists of extracting subsamples of the data, each of which contains the average curve for the incongruent trials within the ROI

Discussion

The Theoretical and Statistical Advantages of EHA

Many experimental psychologists are still reluctant to embrace EHA and to stop using ANOVA when dealing with time-to-event data. In part, this is due to historical reasons. The computer metaphor of cognition—serial information processing via consecutive stages—was developed by Donders (1969) and became very popular from 1960 onward (Sternberg, 1969, 1984, 2013). During the past decades, however, various distributional methods have been advertised to move beyond the mean (Balota & Yap, 2011; Ridderinkhof, 2002; van Maanen et al., 2019; VanRullen, 2011).

Nevertheless, while many still assume that RTs reflect the cumulative duration of all time-consuming cognitive operations involved in a task (e.g., Liesefeld, 2018; Song & Nakayama, 2009), the results from various discrete-time event history and conditional accuracy analyses show that fast, medium, and slow RTs can actually index different sets of cognitive operations (Figures 4 and 6; cf. van Zoest et al., 2010). Statistically controlling for the passage of time on multiple time scales during data analysis is therefore

The distributional data in Figures 4 to 6 are consistent with a dynamic systems approach to cognition according to which cognition involves sequential transitions between stable sensory, motor, and central states (Schöner et al., 2016). To understand the behavioral output of the brain, we must therefore measure quantities—

Statistical reasons in favor of EHA include the ability to deal with right-censored observations and time-varying covariates and the fact that hazard provides exactly the kind of information we want to extract from RT data: the instantaneous likelihood of event occurrence given no previous events. We thus recommend to always first plot the

Issues about bin size optimality play a secondary role at this moment in time in our view, because by working in discrete time—or using interval-censored data—we can make an informed trade-off between the availability of temporal information (smaller bins increase temporal resolution) with the feasibility to perform expensive data collection efforts (small bins can only be used with a large number of repeated measurements in case of a small-

As a standard method, EHA offers a unifying and principled approach to the analysis of time-to-event data that can be flexibly combined with other tools used by cognitive (neuro)scientists. For example, by transforming a sample of time-to-event data into time-series data—

Finally, as explained by Kelso et al. (2013), it is crucial to first have a precise description of the macroscopic behavior of a system (here:

Discrete-Time EHA Versus Other Distributional Methods

Continuous-Time EHA

Discrete-time methods treat time-to-event data as interval-censored data while continuous-time methods use the exact event times. While learning the discrete-time methods first will ease the learning of the more complex continuous-time methods, they also have a lower temporal resolution. Thus, although statistical modeling of continuous time-to-event data requires specialized software to either fit parametric hazard models that are rather restrictive in the shapes they allow (e.g., a Weibull hazard model), or semiparametric hazard models that completely ignore the shape of the hazard function, their use might be warranted in particular circumstances. Allison (2010) provides a useful list of considerations when choosing between discrete- and continuous-time methods to perform an EHA. An overview of R functions for a continuous-time EHA can be found here: https://cran.r-project.org/web/views/Survival.html.

Quantile Plots and Classic Delta Plots

A quantile plot visualizes a set of quantiles (e.g., the nine deciles) as a function of quantile order. A classic delta plot for RT compares two conditions by subtracting corresponding quantiles and plots each of these (e.g., nine) differences (

Procedures such as Vincentizing (construction of average RT distributions from the average of their quantiles) that are assumed to normalize the RT distributions across participants (Ratcliff, 1979) have not been evaluated positively (Rouder & Speckman, 2004). Instead, we believe that if, for example, the range of RTs and the time course in hazard of an effect are different across participants, then this is theoretically interesting and requires a substantial explanation. Even if it is possible to somehow average those distributions, that does not mean that the underlying processes should be lumped together. Note that individual differences (e.g., in working memory capacity, the time required to stop a response, and so forth) can be taken into account by adding relevant predictors to the participant level of a multilevel hazard model, thus allowing for participant effects and cross-level interactions.

Possible Disadvantages of Discrete-Time EHA

There are also possible disadvantages of discrete-time EHA.

First, the person-trial-bin-oriented data set can become very large.

Second, one needs to explore a few bin sizes to find the optimal size for a particular data set. The optimal bin size will depend on the censoring time, the overall rarity of event occurrence, and the number of repeated measures or trials (small-

Third, in hierarchical data from a small-

In general, analyzing single participants should be regarded as a safeguard against interpreting spurious effects in the pooled data that are actually only generated by a minority of participants while at the same time refraining from overinterpreting the individual data patterns. Note that systematic effects will be visible for a majority of participants, while occurrences due to noise will not.

Recommendations for Experimental Design of RT and Other Time-to-Event Data Studies

Two general recommendations can be made from the viewpoint of EHA when designing RT studies. First, always use the same fixed response deadline in each trial, for example, 500 ms for single-button detection and 800 ms for an easy two-button discrimination task. Because hazard analysis deals with right-censored observations, there is no need to wait for very slow responses that are considered meaningless and would be trimmed anyway. Also, using rather short and fixed response deadlines will lead to individual distributions that overlap in time, which is important for

Second, try to design as many trials as possible per condition because then you can use small bins and still obtain stable

Conclusions

RT and accuracy distributions are a rich source of information on the time course of cognitive processing. The changing effects of our experimental manipulations with increases in waiting time become strikingly clear when looking at response hazards and microlevel speed-accuracy trade-off functions. Indeed, working with hazard and conditional accuracy functions, you will discover a whole new layer of the data, and presumably the one where the processes live that actually interest you. An EHA of time-to-event data can strongly constrain the choice between cognitive models of the same psychological phenomenon. Due to the theoretical and statistical advantages of EHA, the fundamental simplicity of the method, and the availability of free software, there is currently no reason anymore to not start using EHA for time-to-event data.

Footnotes

Acknowledgements

We would like to thank Gillian Porter, Tim Meese, Peter Thompson, Frans Verstraten, and Johan Wagemans for inviting us to write a Methods article on event history analysis. We also thank Niko Troje and two anonymous reviewers for their useful comments on previous versions.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Deutsche Forschungsgemeinschaft (DFG, German Research Foundation)—Projektnummer PA 2947/1-1 (to S. P.).

Open practices statement

The data and R code for the event history analyses are available from the first author upon request.