Abstract

This study presents a systematic review and meta-analysis synthesising the existing research on the effectiveness of interventions featuring physical challenges for developing transferable skills and psychological health outcomes. Results from 47 independent samples across 44 studies revealed that the overall proximal effects of the interventions were medium (g = 0.51) and that effects gradually diminished over time (g = 0.39). Analyses across individual outcomes revealed interventions positively influenced interpersonal (g = 0.55), intrapersonal (g = 0.53), and cognitive skills (g = 0.53), as well as psychological health outcomes (g = 0.56). Moderator analyses indicate interventions can be potentially beneficial irrespective of design and participants involved. However, the current state of the literature does not truly allow for thorough conclusions to be made regarding the appropriateness and effectiveness of physical challenge interventions for organizational settings.

Plain Language Summary

Transferable skills, described as interpersonal (e.g., communication), intrapersonal (e.g., resilience), and cognitive skills (e.g., problem-solving), have been identified as indispensable human resources in modern-day workplaces. Researchers have found these skills to be associated with numerous desirable performance (e.g., productivity) and health outcomes (e.g., reduced burnout). Therefore, the demand for occupational initiatives which can develop transferable skills is growing and workplaces are increasingly looking outside of work settings for training and development opportunities. Physical challenge interventions which feature novel outdoor environments and recreational physical challenges (e.g., rock climbing and high ropes courses), in particular, are gaining popularity as a method for enhancing employees' transferable skills and psychological wellbeing. However, no review has attempted to synthesize this body of research relative to the occupational domain. This paper evaluated published literature to identify the types of transferable skills and psychological health-related outcomes that can be developed in working-age adults through participating in physical challenge interventions. Our findings suggest physical challenge interventions can have positive short- and long-term effects on transferable skills, particularly interpersonal and intrapersonal skills, and support psychological health. However, due to the current state of the literature, we are unable to determine whether positive changes transfer to workplaces or to draw firm conclusions on the appropriateness and effectiveness of physical challenge interventions for organizational settings. In our discussion, we highlight methodological limitations of the current evidence base and issues concerning intervention implementation in organizational settings, and provide recommendations for future research.

As the organizational landscape has evolved throughout the twenty-first century, an accompanying shift in the skills and competencies required by individuals to succeed in the workplace has been evident. Although technical, occupation-specific skills continue to be required, increasing value is being placed on transferable skills, such as communication, leadership, and resilience (Martin-Raugh et al., 2020; Nägele & Stalder, 2017; Sarfraz et al., 2018; Suarta et al., 2017) that support individuals in navigating today's complex work environment (Abidi, 2019; Lepeley et al., 2021). Transferable skills have been associated with positive work and health-related outcomes, such as enhanced performance, satisfaction, motivation, engagement and well-being (Avey et al., 2010; Donaldson et al., 2019; Mitchell et al., 2010; Pellegrino & Hilton, 2012; Peterson et al., 2011; van Woerkom et al., 2020). However, employers are frequently reporting that new recruits and long-standing employees are lacking in these skillsets (Hurrell, 2016; Winterbotham et al., 2019). The demand for occupational initiatives which target the development of transferable skills is growing and workplaces are beginning to look outside of work settings for training and development purposes. Yet, there remains limited research which has explored if this is an appropriate and effective approach for workforces.

Experiential interventions involving outdoor recreational physical challenges, commonly described as adventure programs, are gaining popularity as a method for enhancing employees' transferable skills and psychological well-being (Lee & David, 2021; Rhodes & Martin, 2014; Williams et al., 2003). Deeply rooted in the philosophy of experiential education, adventure programs offer an educational experience in which participants are required to draw upon their personal skills, push personal limits, and collaborate with team members to overcome an array of physically and psychologically challenging activities. The incorporated physical challenges are seen as more than conventional physical activity: they denote activities involving real or apparent risk to an individual (Houge Mackenzie & Hodge, 2020). Common examples include rock climbing, high ropes courses, and mountain biking, which typically take place in unique physical and social settings and are theorized to facilitate learning and personal growth (Hattie et al., 1997; Houge Mackenzie & Brymer, 2020; Priest, 2023).

Previous systematic and meta-analytical reviews have explored the effects of adventure-based interventions for a wide variety of populations (e.g., clinical groups, disadvantaged youths, pupils), outcomes (e.g., therapeutic, educational, psychological, personal, social), and specific applications (e.g., challenge courses, wilderness settings, adventure therapy) (Bowen & Neill, 2014; Cason & Gillis, 1994; Fleischer et al., 2017; Gillis & Speelman, 2008; Hattie et al., 1997; Holland et al., 2018). However, despite growing interest, no review has attempted to synthesize this body of research relative to the occupational domain to date. The purpose of the present study is to provide a systematic review and meta-analysis of physical challenge interventions and their effectiveness in developing transferable skills and psychological health-related outcomes that are relevant for personal and professional life contexts. Therefore, this paper intends to serve as a first look at physical challenge interventions as potential occupational training approaches.

Physical challenge interventions

Interventions involving outdoor recreational physical challenges have grown considerably in demand and now encompass a diverse range of experiential courses for recreational, educational, occupational, and therapeutic uses. Various terms are used interchangeably to describe these interventions within the literature, including adventure programming, adventure education, adventure therapy, outdoor experiential learning, Outward Bound, and rope or wilderness courses (Hattie et al., 1997; Neill & Dias, 2001; Rantala et al., 2018; Williams et al., 2003). Identifying a fixed and universally accepted definition is problematic due to the varying practices, philosophical underpinnings, and cultural influences (Blaine & Akhurst, 2021; Thomas, 2019). For example, interventions can incorporate activities that range from simple self-discovery such as residential camps and survival skills training, to intense adventure experiences involving more physically and mentally demanding activities such as rock climbing, rope courses, water sports, hiking, and wilderness experiences (Buckley, 2018). It is also recognized that individuals create their own perceptions as to what is deemed “adventure” and “challenging” based on their perceived skill level, expertise, attitudes and values gained through prior experiences, which can exist with or without formal structured training (Buckley, 2018; Ewert & Sibthorp, 2014). In this review, the term physical challenge interventions is used to describe interventions that take place in outdoor environments and involve instructor-led recreational physical challenges with elements of real or perceived risk. Notably, emphasis is placed on developing work-relevant transferable skills and fostering positive psychological health outcomes.

Despite the array of intervention designs, many have evolved from theoretical models (e.g., The Outward Bound Process Model; Walsh & Golins, 1976) and generally share common characteristics (Priest, 2023). These include the social (small groups) and physical environments (e.g., wilderness or backcountry settings) and the combination of challenging adventure activities (e.g., rock climbing, hiking, mountain biking, kayaking) and problem-solving tasks. Other typical features are elements of perceived or objective risk-taking, along with dedicated reflection techniques and the use of metaphors to encourage generalization to real-life situations (Mckenzie, 2003; Russell et al., 2017; Sibthorp, 2003). While researchers have debated the requisite experiential components, physically challenging experiences are generally considered the salient determinant for personal growth and development (Bailey et al., 2019; Ewert & Sibthorp, 2014; Tsaur et al., 2015, 2020).

Researchers have documented an array of positive outcomes achievable through these intervention types. Notable outcomes include the acquisition of new skills (e.g., leadership, communication, and problem-solving skills), positive changes in personal beliefs (e.g., self-efficacy) and self-esteem, improved group relationships and cohesion, and enhanced physical and psychological well-being. These outcomes have been suggested to be strongest immediately after participation and, although findings are inconsistent, intervention effects can be long-lasting and found months later (Bowen & Neill, 2014; Gillis & Speelman, 2008; Hattie et al., 1997; Holland et al., 2018; Pomfret et al., 2023).

Examining the individual and collective benefits attributed to physical challenge interventions reveals why these experiences are becoming an increasingly attractive option for a wide range of organizations in search of training and development opportunities (Williams et al., 2003). To date, research has primarily associated these interventions with workers in white-collar professions (e.g., Bronson et al., 1992; Priest, 1998) and current students (e.g., Anderson et al., 2020; Mutz & Müller, 2016). Yet, they are not limited to these demographics, as the aforementioned transferable skills are important throughout the occupational spectrum (Hurrell, 2016). Moreover, interventions have typically been framed as team building or leadership development initiatives, leveraging activities such as outdoor expeditions or rope courses to enhance interpersonal dynamics and leadership skills (Brymer et al., 2011; Hatch & McCarthy, 2005).

Defining transferable skills

Transferable skills are synonymous with various labels in the literature, including soft skills, generic skills, employability skills, and twenty-first-century skills (Nägele & Stalder, 2017). Collectively, these terms refer to a set of valuable, non-job-specific competencies, knowledge, and abilities that individuals acquire in one context and can apply in another (Messum et al., 2015; Sarfraz et al., 2018). A review by Pellegrino and Hilton (2012) classified these broadly defined skills into three groups. The first was interpersonal skills, involving the capacity to communicate and interact with others, such as leadership and teamwork skills. The second was intrapersonal skills, which relate to meta-cognitive skills that allow people to recognize and manage behavior, thoughts, and emotions, such as resilience and self-awareness. The third group was cognitive skills, which involve reasoning and memory, such as critical thinking, problem-solving, and innovation. Empirical studies have found that these skillsets positively influence a wealth of desirable workplace outcomes (e.g., job satisfaction, performance, well-being, motivation) and also contribute to reducing burnout and work-related stress (Avey et al., 2010; Donaldson et al., 2019; Giri & Pavan Kumar, 2010; Messum et al., 2015; Peterson et al., 2011; Sarfraz et al., 2018; Sato et al., 2019; van Woerkom et al., 2020).

Developing transferable skills for the workplace

Transferable skills are typically harder to observe and quantify than more technical, job-specific skills (e.g., IT skills). However, they are considered to be highly malleable and can be developed through carefully crafted interventions (Heckman & Kautz, 2012; Ibrahim et al., 2017; Michelle et al., 2020). Accordingly, a variety of training interventions have been documented and examined for their efficacy in advancing employee skill development (Martin-Raugh et al., 2020). These interventions are typically delivered “on-the-job” in the workplace or, more recently, through virtual means (e.g., email, telephone, video software) and involve a variety of lectures, audio-visual materials, one-to-one coaching, computer-based training, feedback sessions, focus groups, and role-play simulations which target specific employee skills, job tasks, and situations. Examples include interventions targeting teamwork (Lacerenza et al., 2018), communication (Barth & Lannen, 2011), leadership skills (Lacerenza et al., 2017), and psychological functioning (Donaldson et al., 2019; Vanhove et al., 2016). While the formats of these occupational training approaches vary, they offer distinct advantages, notably in terms of time and cost effectiveness (e.g., short online workshops), and are grounded in sound theoretical frameworks (e.g., positive psychology; Donaldson et al., 2019) and robust research. Furthermore, by employing meticulously designed job-relevant exercises (e.g., conflict resolution) and immersive environments (e.g., simulated office settings), employees are encouraged to apply their newly acquired knowledge and mirror the skills cultivated during training within their work environment (Botke et al., 2018; Lacerenza et al., 2018).

Organizations are also taking an interest in off-the-job training approaches, referring to activities and programs conducted outside of traditional occupational settings (Mahadevan & Yap, 2019). The growing prominence of such training methods can be attributed to the recognition of the importance of fostering employees’ holistic development since our life roles are inevitably intertwined (Malchelosse et al., 2023). Drawing on research concerning work-life enrichment, different spheres of our life can positively influence one another (Greenhaus & Powell, 2006). For example, individuals can gain valuable experiences and skills in non-work domains that can enrich their work-life, resulting in greater performance and satisfaction (Brough et al., 2014; Heskiau & McCarthy, 2020). Nature-based interventions (e.g., Gritzka et al., 2020) and physical challenge interventions are two similar and prevalent forms of “off-the-job” interventions that use non-workspace environments and aim to enhance participants’ skills and experiences beyond their current roles, leading to a positive spillover effect between work and personal life (Brough et al., 2014). Researchers have generally agreed that many of the experiential features of these interventions facilitate the work-life enrichment process, such as post-course reflection, active learning, receiving feedback from peers and instructors, and the use of isomorphic metaphors (similarities between behaviors at work and intervention activities) (Furman & Sibthorp, 2013; Gass et al., 2012; Pierce et al., 2017; Sibthorp et al., 2011).

Nevertheless, it should be noted for organizational contexts that one of the distinctive qualities of these interventions is that they are far removed from the individuals’ actual job tasks and environment, contrasting with many of the previously mentioned training approaches which can be classified as “near” transfer (Botke et al., 2018). While there are advantages to employees being far removed from their daily routine and away from the pressures of work (e.g., reduced stress; Mutz & Müller, 2016), it poses a challenge for positive outcomes that result from intervention engagement to transfer to the job and derive workplace benefits (Ford et al., 2018).

The present study

Existing literature reports on the positive effects of integrating outdoor recreational physical challenges within structured programs for a range of populations and personal, social, educational, and health outcomes. This study intends to contribute to the literature by examining whether physical challenge interventions deliver the development of transferable skills and psychological health-related outcomes that is claimed for them. The aim is to synthesize, through narrative and meta-analysis, trials of physical challenge interventions to appraise their quality and examine effects on pre-defined, work-relevant transferable skills and psychological health-related outcomes. Synthesis via meta-analysis will specifically add value by providing a quantitative assessment of the effectiveness of physical challenge interventions and by supporting conclusions about the strength and direction of variable relationships and moderators involved in physical challenge interventions (Paul & Barari, 2022).

Methods

The systematic review methods were informed by the Cochrane Handbook for Systematic Reviews of Interventions version 6.2 (Higgins et al., 2021) and reported in accordance with the Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) statement (Page et al., 2021). The protocol was registered in September 2021 in the Research Registry (ID: reviewregistry1224).

Inclusion and exclusion criteria

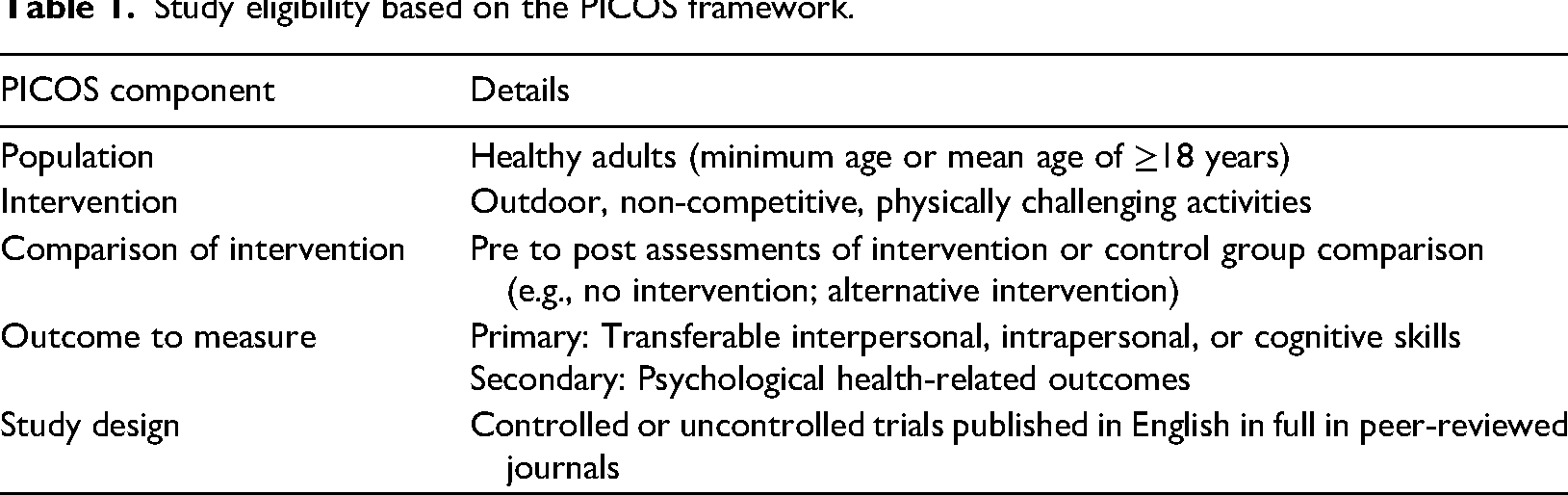

Study eligibility criteria are outlined in Table 1. Papers were included if they reported studies of healthy adults (minimum or mean age of ≥18 years) involved in outdoor, non-competitive, physical challenges. Interventions were not eligible if they were primarily designed as competitive events, for fitness training, or for therapeutic purposes, or if they incorporated controlled laboratory exercises. Study outcomes were transferable interpersonal, intrapersonal, or cognitive skills and/or psychological health outcomes. Studies that measured physical health, fitness, educational, environmental, or therapeutic outcomes were excluded. Studies were included if they had a pre-post intervention design, with or without a control condition involving no intervention or another intervention different to that of the experimental condition. Finally, included studies were required to be published in full in an English-language peer-reviewed academic journal.

Table 1. Study eligibility based on the PICOS framework.

Search strategies

To ensure comprehensive coverage of the existing literature, four approaches were used to identify relevant studies. First, searches of five electronic databases (SPORTDiscus, PsycARTICLES, PsycINFO, MEDLINE and Sport Education and Medicine Index) were performed in April 2022. A search string was developed with the help of an information specialist using keywords that appeared in the title and/or abstract of each database

Study selection

Studies identified through the electronic search and from additional sources were exported to systematic review software, Covidence. The process of study selection began with the lead reviewer screening the titles and abstracts of all studies and rejecting irrelevant ones. Two reviewers independently evaluated the full text of each study against the outlined eligibility criteria (Table 1). Inter-rater reliability was 0.975 for the full-text screening stage.

Data extraction

Data were extracted on study details, study design, intervention details, outcomes measured, sample information, findings, and methodological quality. Data extraction was performed by two independent reviewers, using a form that was developed and piloted with three of the included studies. For instances of missing data, authors were contacted to request relevant information. A total of 13 authors were contacted, five responded, and two provided relevant information. One author was contacted regarding inconsistencies in the follow-up assessment data but did not respond. Therefore, the follow-up data from Gómez et al. (2019) were excluded from the meta-analysis.

Risk of bias

Study quality was assessed using two validated critical appraisal tools from the Joanna Briggs Institute (Tufanaru et al., 2020). Randomized trials were assessed with the Joanna Briggs Institute Checklist for Randomized Controlled Trials which involves 13 criteria. Non-randomized and uncontrolled trials were assessed with the Joanna Briggs Institute Checklist for Quasi-Experimental Studies consisting of nine assessment criteria. For both checklists, items received a rating of “yes,” “no,” “unclear” or “not applicable.” Checklist items are listed in the key of Tables 3 and 4.

Data analyses

All included studies were initially summarized in tables outlining characteristics, findings, and methodological quality. To estimate the magnitude of change in outcomes, where possible, studies were statistically synthesized. Where statistical pooling was not possible (e.g., insufficient data), findings are only presented in table form.

Effect size estimation

Effect sizes were calculated with the statistical software R (R Core Team, 2020) using the “esc” package. Effect sizes were calculated for three analyses: (1) immediate assessments (i.e., measures administered before and immediately after the intervention); (2) follow-up assessments (i.e., measures administered before and at a later follow-up, days, weeks, or months after the intervention); (3) controlled effects (i.e., the difference in changes between intervention and control groups). Effect sizes are expressed as the standardised mean difference (SMD) to accommodate the heterogeneity of instruments used in studies. For immediate and follow-up effects, the within-group Cohen's d method was used with a Hedges' g correction to account for the bias that arises in small samples (Hedges, 1981). Controlled effects were calculated as the difference in standardised mean change between the intervention and control groups. Included trials did not report the correlation coefficients from pre-test to post-test and follow-up. Therefore, an imputed r = 0.5 was used, which is recommended as the default when the correlation is unknown and has been used in similar reviews (Bowen & Neill, 2014; Fleischer et al., 2017).
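As an illustration of the within-group calculation described above, the following Python sketch computes a pre-post standardized mean change with the small-sample correction and an imputed r = 0.5. It follows one common textbook formulation; the function name and the example numbers are hypothetical, and because the review's computations were run in R, the exact formulas used there may differ.

```python
import math


def hedges_g_prepost(m_pre, sd_pre, m_post, sd_post, n, r=0.5):
    """Within-group standardized mean change with Hedges' correction.

    Assumption: r is the (imputed) pre-post correlation; 0.5 mirrors the
    default described in the text. Returns (g, variance of g).
    """
    # Standard deviation of the difference scores implied by r.
    sd_diff = math.sqrt(sd_pre**2 + sd_post**2 - 2 * r * sd_pre * sd_post)
    # Rescale to the within-group standard deviation.
    s_within = sd_diff / math.sqrt(2 * (1 - r))
    d = (m_post - m_pre) / s_within
    var_d = (1 / n + d**2 / (2 * n)) * 2 * (1 - r)
    # Small-sample correction factor, with df = n - 1 for a single group.
    j = 1 - 3 / (4 * (n - 1) - 1)
    return j * d, j**2 * var_d


if __name__ == "__main__":
    # Hypothetical study: 30 participants, half an SD improvement.
    g, var_g = hedges_g_prepost(3.0, 1.0, 3.5, 1.0, n=30)
    print(f"g = {g:.3f}, SE = {math.sqrt(var_g):.3f}")
```

With r = 0.5 and equal pre and post standard deviations, the standardizer reduces to the common standard deviation, so the imputed correlation mainly affects the variance of the effect size rather than its point estimate.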

Effect size synthesis

All meta-analytic and meta-regression analyses were conducted in R (R Core Team, 2020) using the “robumeta” (Fisher & Tipton, 2015) and “metafor” (Viechtbauer, 2010) packages. Random-effects models were used for all quantitative syntheses as they assume variation exists between studies and their associated effects and are therefore able to account for systematic differences between studies (e.g., different interventions, populations, sample sizes, outcome measurements). The issue of dependent effects within the data was recognized, with most studies measuring multiple outcomes or including multiple measures of a single outcome. To handle the complexities of dependency, overall intervention effects (analyses including all transferable skills) were calculated using robust variance estimation (RVE). RVE provides a more accurate estimate of the standard errors for the intervention effects by using information from each outcome measure (Hedges et al., 2010). RVE also uses approximately inverse-variance weights, which were calculated using the correlated effects method. A small sample adjustment (Tipton, 2015) and an imputed ρ = 0.8, as recommended by Fisher and Tipton (2015), were applied to all RVE analyses. To ensure the robustness of the results to changing ρ values, sensitivity analyses were carried out (Hedges et al., 2010).
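For reference, the correlated-effects weights used in RVE are commonly written as below. This is a sketch of the standard formulation from the RVE literature, not a statement of the exact computation performed for this review. Here $k_j$ is the number of effect sizes contributed by study $j$, $\bar{v}_{\cdot j}$ is the mean sampling variance of those effect sizes, and $\hat{\tau}^2$ is the estimated between-study variance; the assumed within-study correlation ρ enters through the estimation of $\hat{\tau}^2$.

$$
w_{ij} = \frac{1}{k_j\left(\bar{v}_{\cdot j} + \hat{\tau}^2\right)}
$$

Because every effect size from the same study receives the same weight and the weights are divided by $k_j$, a study reporting many outcomes does not dominate the pooled estimate simply by contributing more effect sizes.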

We were also interested in the development of specific types of transferable skills. We conducted separate meta-analyses on interpersonal, intrapersonal, and cognitive skills and psychological health outcomes. These outcome variables were examined in the immediate analysis only, since there were insufficient data on separate outcomes for the follow-up or controlled analyses. RVE can be used to make inferences even with a small number of studies and is valid so long as the degrees of freedom exceed 4 (Polanin et al., 2017). Outcomes were analyzed through RVE when this criterion was met. Primary outcomes which did not meet the criterion were analyzed through traditional random-effects models using DerSimonian and Laird's method in the “metafor” package (Viechtbauer, 2010). Because these models do not account for dependent effect sizes, in studies which included multiple outcomes or measures of the same construct, the first measured outcome or measure was used to represent the study effect to ensure the assumption of effect size independence was not violated.
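The traditional random-effects approach mentioned above can be sketched in a few lines of Python. This is a minimal, standard-library illustration of the DerSimonian and Laird moment estimator with one effect size per study; the function name and input values are hypothetical, and the review itself relied on the "metafor" package in R rather than this code.

```python
import math


def dersimonian_laird(effects, variances):
    """Random-effects pooling with the DerSimonian-Laird tau^2 estimator.

    Assumption: one independent effect size per study.
    """
    k = len(effects)
    w = [1 / v for v in variances]  # fixed-effect (inverse-variance) weights
    sw = sum(w)
    fixed = sum(wi * yi for wi, yi in zip(w, effects)) / sw
    # Cochran's Q: weighted squared deviations from the fixed-effect mean.
    q = sum(wi * (yi - fixed) ** 2 for wi, yi in zip(w, effects))
    df = k - 1
    c = sw - sum(wi**2 for wi in w) / sw
    tau2 = max(0.0, (q - df) / c)  # between-study variance, truncated at 0
    # Random-effects weights add tau^2 to each sampling variance.
    w_star = [1 / (v + tau2) for v in variances]
    pooled = sum(wi * yi for wi, yi in zip(w_star, effects)) / sum(w_star)
    se = math.sqrt(1 / sum(w_star))
    i2 = max(0.0, (q - df) / q) * 100 if q > 0 else 0.0
    return {"pooled": pooled, "se": se, "tau2": tau2, "q": q, "i2": i2}


if __name__ == "__main__":
    # Hypothetical effect sizes (g) and sampling variances for three studies.
    print(dersimonian_laird([0.2, 0.5, 0.8], [0.04, 0.04, 0.04]))
```

The same function also returns Q and I², so the heterogeneity statistics can be read off alongside the pooled estimate.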

For all random-effects models, Tau squared (T²) was calculated to estimate the between-study variance. The square root of this number (T) is the estimated standard deviation of underlying effects across the studies. I² values are also presented, indicating the proportion of variance that is due to heterogeneity in effect sizes rather than chance (Higgins et al., 2021). Robust variance estimation methods do not provide a measure of heterogeneity significance (Q test) (Tanner-Smith & Tipton, 2014). To explore potential moderating influences on intervention effects, moderator analyses were conducted on five a priori determined variables related to demographics (sex and sample type) and intervention characteristics (duration, perceived difficulty, environment). These were selected based on previous research suggesting that variables such as perceived challenge (Tsaur et al., 2015) and sex (Overholt & Ewert, 2015) can influence program outcomes. Perceived difficulty was determined according to the intervention description and the activities used. Moderator analysis concerning the environment compared natural outdoor settings (e.g., kayaking) to the increasingly prevalent, purpose-built, outdoor adventure experiences (e.g., high rope courses). To increase statistical power, moderator variables were tested for all outcomes combined using immediate and follow-up effect sizes.

Publication bias

Publication bias is the phenomenon whereby studies which report small or nonsignificant findings are systematically under-represented in the published literature (Zelinsky & Shadish, 2018). The chance of a study being accepted and published by a scientific journal is regularly associated with the statistical significance of its results (Lin & Chu, 2018). Since this review included peer-reviewed, published studies only, detecting publication bias is a critical step in the review process, as such bias may lead to inaccurate conclusions (Lin & Chu, 2018). Robumeta does not yet allow for publication bias analysis. Accordingly, publication bias was assessed using study-level effect sizes so that the assumption of effect size independence was not violated (Zelinsky & Shadish, 2018). An independent effect size and its associated standard error were calculated for each study and a random-effects model was fit to synthesize study-level effects. Publication bias was assessed via Egger's regression test (Egger et al., 1997), visual inspection of a funnel plot, and trim-and-fill analysis to identify missing studies and calculate a new, adjusted meta-analytic average effect size (Zelinsky & Shadish, 2018).
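To make the logic of Egger's regression test concrete, the sketch below implements its classical form in Python: each study's standardized effect (effect divided by its standard error) is regressed on its precision (one over the standard error), and an intercept far from zero signals funnel-plot asymmetry. The function name and the data are hypothetical, and software packages may implement variants of the test, so this should be read as an illustration rather than the analysis code used in the review.

```python
import math


def egger_test(effects, std_errors):
    """Classical Egger regression: standardized effect on precision.

    Returns (intercept, se_intercept, t_statistic, df). The intercept is
    the asymmetry estimate; compare t against a t distribution with df
    degrees of freedom to obtain a p-value.
    """
    z = [y / s for y, s in zip(effects, std_errors)]  # standardized effects
    x = [1 / s for s in std_errors]                   # precisions
    k = len(z)
    mx, mz = sum(x) / k, sum(z) / k
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxz = sum((xi - mx) * (zi - mz) for xi, zi in zip(x, z))
    slope = sxz / sxx
    intercept = mz - slope * mx
    # Residual variance from the ordinary least squares fit.
    df = k - 2
    rss = sum((zi - intercept - slope * xi) ** 2 for xi, zi in zip(x, z))
    se_int = math.sqrt(rss / df * (1 / k + mx**2 / sxx))
    t_stat = intercept / se_int if se_int > 0 else math.nan
    return intercept, se_int, t_stat, df


if __name__ == "__main__":
    # Hypothetical data in which smaller studies report larger effects.
    effects = [0.9, 0.7, 0.5, 0.4]
    std_errors = [0.5, 0.4, 0.25, 0.2]
    print(egger_test(effects, std_errors))
```

In this made-up example the less precise studies report the larger effects, which pushes the intercept above zero, the pattern the test is designed to flag.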

Results

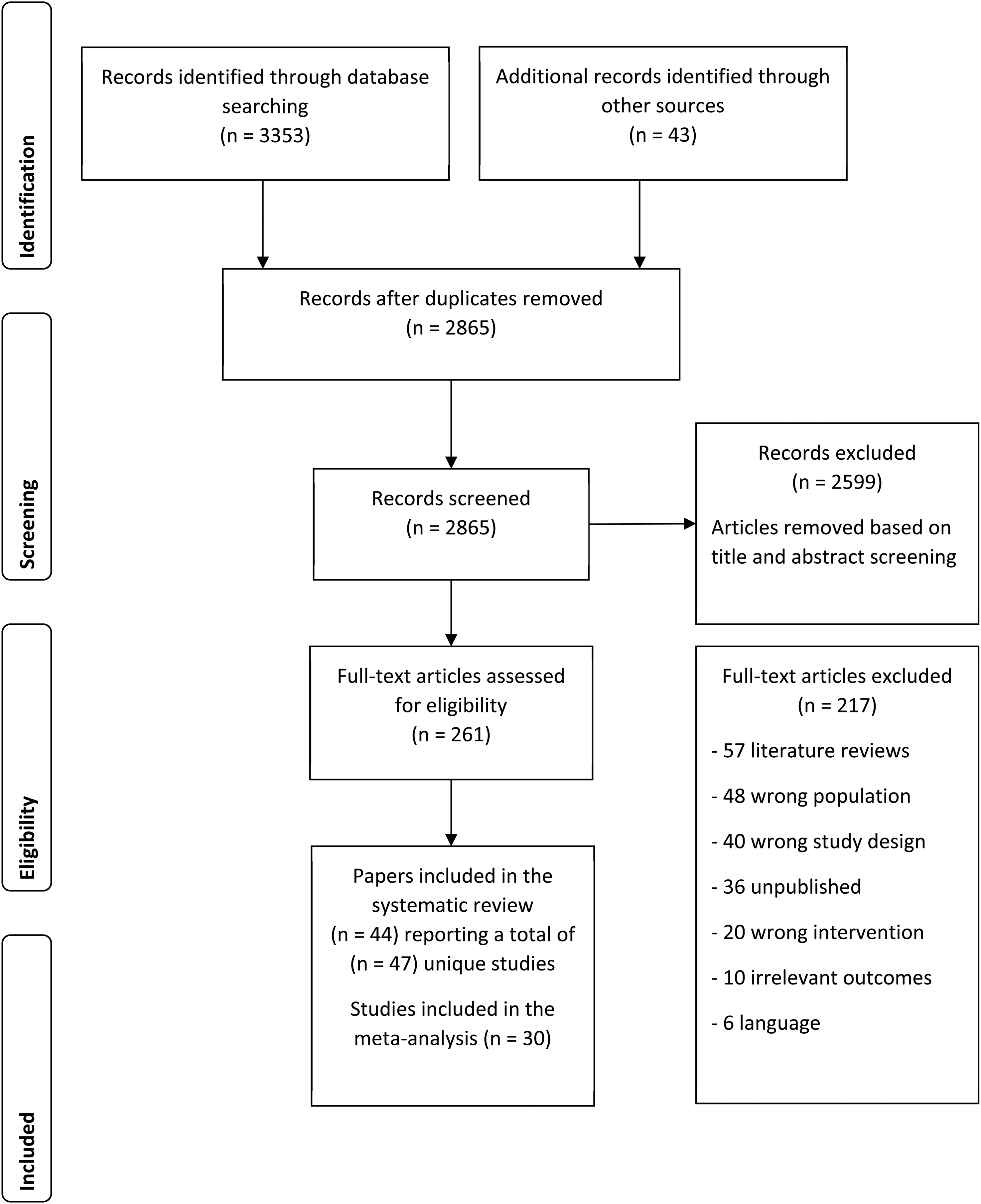

In total, the search process identified 2,865 potentially relevant papers after the removal of duplicates. After title and abstract screening, 261 papers were considered for full-text review, and 44 papers (47 independent studies) were considered eligible for the systematic review (Figure 1).

Figure 1. PRISMA study selection flowchart.

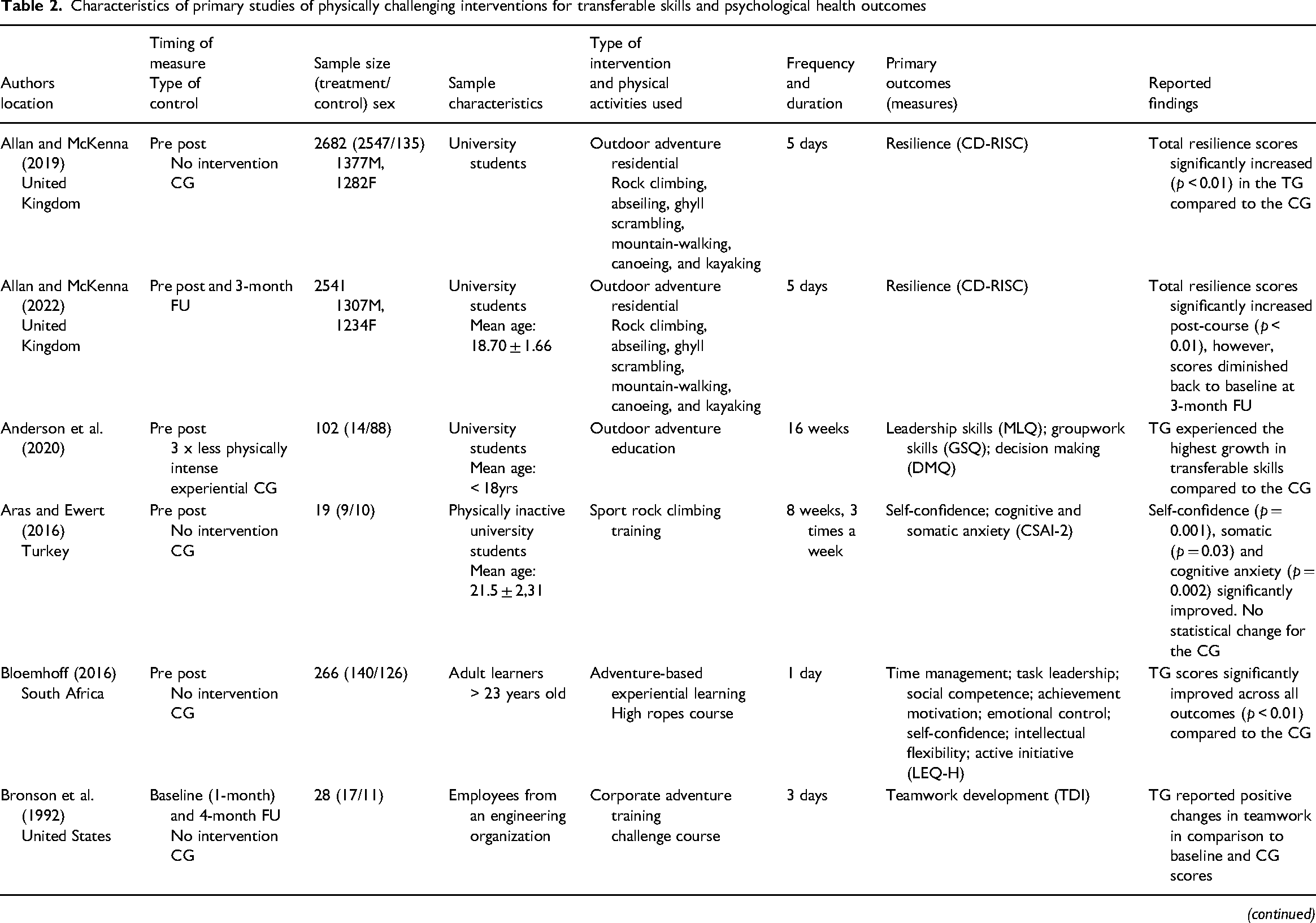

Characteristics of included studies

Table 2 shows the characteristics of the included studies. The research was conducted in 12 countries with the majority from the United States (48%) and Europe (30%). Across the 44 studies, there was a total of 10,194 participants. Of the studies that reported information on participant sex, 54% of participants were male (4,789) and 46% female (4,147). Thirty-two studies included university student samples, nine studies involved workforce samples, two studies used military samples, and the remaining four studies included working adults from unspecified backgrounds. Seven studies used a randomized controlled trial design, 17 were non-randomized controlled trials, and 25 studies had no control group. All studies collected data through self-report questionnaires. Twenty-five studies collected data before and immediately after the intervention and 22 studies collected data at a later follow-up time point ranging from 3 days to 12 months (mean length of 3 months). The mean intervention duration was 10 days, ranging from half a day to 112 days. Of the 46 studies, 16 used purpose-built facilities (e.g., challenge/rope courses and rock climbing walls) and 30 involved adventure activities in natural outdoor environments.

Table 2. Characteristics of primary studies of physically challenging interventions for transferable skills and psychological health outcomes.

Note: 1 = Study one; 2 = Study two; ATWG = Attitude Towards Working in a Group Scale; B = Baseline; CCEQ = Challenge Course Experience Questionnaire; CD RISC = Connor-Davidson Resilience Scale; CG = Control group; CSAI-2 = Competitive State Anxiety Inventory-2; CSDTI-2 = Student Development Task Inventory-2; DMQ = Decision Making Questionnaire; ECIQ = Emotional Competence Intelligence Inventory; EIQ = Emotional Intelligence Questionnaire; FU = Follow up; GEQ = Group Environment Questionnaire; GPSES = General Perceived Self-Efficacy Scale; GSES = Generalized Self-Efficacy Scale; GWSE = Groupwork Self-Efficacy Scale; GSQ = Groupwork Skills Questionnaire; ITM = Interpersonal Trust Measure; ITI-G = “Group” Interpersonal Trust Inventory; ITI-O = “Organizational” Interpersonal Trust Inventory; ITI-S = “Self-Version” Interpersonal Trust Inventory; LEQ-H = Life effectiveness Questionnaire-Version H; LOC = Rotter Locus of Control; LPQ = Leadership Profile Questionnaire; MAAS = Mindfulness Attention and Awareness Scale; MHC-SF = Mental Health Continuum Short Form; MLQ = Multifactor Leadership Questionnaire; MRQ = Modified Resilience Questionnaire; MSES = Multidimensional Self-Esteem Scale; NGSE = New General Self-Efficacy Scale; OBOI = Outward Bound Outcomes Instrument; PCQ-12 = Psychological Capital Questionnaire-Short Version; PCS = Perceived Cohesion Scale; PGE-O = Personal and Group Effectiveness Scale; PSQ = Perceived Stress Questionnaire; RCSSES = Ropes Course Specific Self-efficacy; RS = Resilience Scale; SDQ111 = Self-Description Questionnaire Scale; SFI = Situational Fears Inventory; SSI = Social Support Inventory; STAI = State-Trait Anxiety Inventory; TDI = Teamwork Development Inventory; TG = Treatment group; TSCS = Tennessee Self-Concept Scale

Included studies assessed a range of outcomes. For analysis, we categorized these as interpersonal skills, intrapersonal skills, cognitive skills, or psychological health. Due to the number of outcomes pertaining to interpersonal and intrapersonal skills, these were further broken down into two sub-categories of interpersonal skills (teamwork/collaboration and leadership) and four sub-categories of intrapersonal skills (self-efficacy, self-concept, resilience, and emotional intelligence).

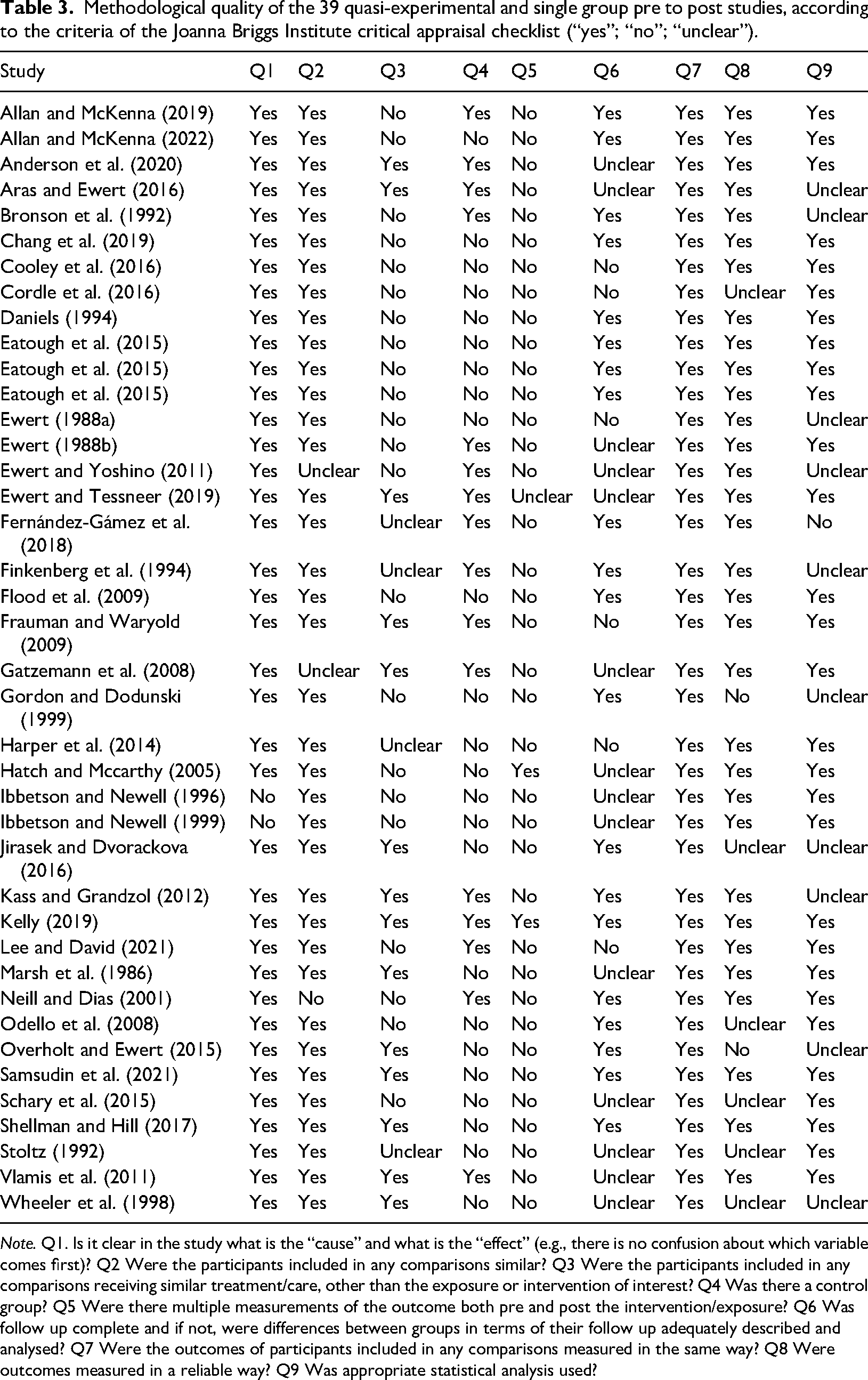

Quality assessment

The methodological quality assessments of quasi-experimental studies and randomized-controlled trials are presented in Table 3 and Table 4 respectively. Most quasi-experimental studies were considered moderate to low quality. Common methodological limitations included no control group, failing to measure outcomes at multiple time points, and failing to report participant retention rates. Likewise, all randomized controlled trials were of moderate to low quality. Three of the criteria were not deemed applicable to these studies (e.g., participants blinded to treatment) due to the nature of the interventions (Scrutton & Beames, 2015).

Methodological quality of the 39 quasi-experimental and single group pre to post studies, according to the criteria of the Joanna Briggs Institute critical appraisal checklist (“yes”; “no”; “unclear”).

Note. Q1. Is it clear in the study what is the “cause” and what is the “effect” (e.g., there is no confusion about which variable comes first)? Q2 Were the participants included in any comparisons similar? Q3 Were the participants included in any comparisons receiving similar treatment/care, other than the exposure or intervention of interest? Q4 Was there a control group? Q5 Were there multiple measurements of the outcome both pre and post the intervention/exposure? Q6 Was follow up complete and if not, were differences between groups in terms of their follow up adequately described and analysed? Q7 Were the outcomes of participants included in any comparisons measured in the same way? Q8 Were outcomes measured in a reliable way? Q9 Was appropriate statistical analysis used?

Methodological quality of the seven randomized controlled trials, according to the criteria of the Joanna Briggs Institute critical appraisal checklist (“yes”; “no”; “unclear”; not applicable “na”).

Note. Q1. Was true randomization used for assignment of participants to treatment groups? Q2. Was allocation to treatment groups concealed? Q3. Were treatment groups similar at the baseline? Q4. Were participants blind to treatment assignment? Q5. Were those delivering treatment blind to treatment assignment? Q6. Were outcomes assessors blind to treatment assignment? Q7. Were treatment groups treated identically other than the intervention of interest? Q8. Was follow-up complete and if not, were differences between groups in terms of their follow-up adequately described and analyzed? Q9. Were participants analyzed in the groups to which they were randomized? Q10. Were outcomes measured in the same way for treatment groups? Q11. Were outcomes measured in a reliable way? Q12. Was appropriate statistical analysis used? Q13. Was the trial design appropriate, and any deviations from the standard RCT design (individual randomization, parallel groups) accounted for in the conduct and analysis of the trial?

Quantitative review

Thirty studies were included in the meta-analysis (Table 5).

Effect sizes of the included studies in the meta-analysis.

Preliminary analysis

Studies included in the meta-analysis were first screened for outliers. Grubbs tests were carried out for immediate and follow-up comparisons and for controlled effects. The test on immediate effect sizes revealed that the effect for the study by Daniels (1994) was an outlier (G = 3.49, p < 0.01). This effect size, g = 2.09, was significantly larger than the mean effect size. Because standard deviations were not reported in the Daniels (1994) study, missing data were calculated following Cochrane guidance, which resulted in an overestimation of the treatment effect. Because this was the only effect size from the Daniels (1994) study, the whole study was removed. A Grubbs test also revealed that the effect sizes from Eatough et al. (2015) (g = 1.85; G = 3.37, p = 0.02) and Gómez et al. (2019) (g = 1.69; G = 3.18, p = 0.04) were outliers. However, we retained these effect sizes in the analysis after confirming that the calculations were accurate and finding no clear reason to exclude them. No outliers were identified among the follow-up and control group comparison effect sizes.
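As an illustration of the outlier screen described above, the following minimal sketch computes the two-sided Grubbs statistic, G = max|x − mean| / SD. The effect sizes are hypothetical values chosen for demonstration and are not the data analysed in this review; the function name is likewise an assumption. The critical value (which depends on the t distribution) is not computed here.

```python
import math


def grubbs_statistic(values):
    """Return (G, index) for the two-sided Grubbs statistic.

    G is the largest absolute deviation from the sample mean divided by
    the sample standard deviation (n - 1 denominator).
    """
    n = len(values)
    if n < 3:
        raise ValueError("Grubbs' test needs at least three observations")
    mean = sum(values) / n
    sd = math.sqrt(sum((v - mean) ** 2 for v in values) / (n - 1))
    deviations = [abs(v - mean) for v in values]
    # Index of the observation furthest from the mean
    idx = max(range(n), key=deviations.__getitem__)
    return deviations[idx] / sd, idx


# Hypothetical immediate effect sizes (Hedges' g), for illustration only
effects = [0.30, 0.45, 0.52, 0.48, 0.61, 0.39, 0.55, 2.09]
g_stat, suspect = grubbs_statistic(effects)
print(f"G = {g_stat:.2f} for effect size {effects[suspect]}")
```

In practice, G would be compared against a critical value for the chosen significance level and sample size before an effect size is flagged.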

Overall intervention effects

To investigate overall intervention effects (Table 6) on the development of transferable skills for the three comparisons, we fitted an intercept-only meta-regression model using RVE, with adjustments for small sample sizes. We calculated a total of 72 immediate effect sizes from 19 studies (24 independent samples). The average overall immediate effect size (g = 0.51, 95% CI [.38, .65]) was statistically significant (t(13.3) = 8.11, p < 0.001). Between-study heterogeneity was very small, T2 = 0.006, with approximately 5% of the variance in effect sizes due to between-study heterogeneity. Thus, studies had essentially the same magnitude of immediate effect. To check the robustness of this result, we ran a sensitivity analysis that varied the assumed correlation between within-study effect sizes (ρ = 0.8 in the main model) from ρ = 0 to ρ = 1; this had only a marginal impact on the estimated effect, standard error, and between-study heterogeneity (T2).
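To make the quantities reported above more concrete (pooled effect, T2, and the percentage of variance due to between-study heterogeneity), the sketch below uses a conventional DerSimonian-Laird random-effects estimator. This is a deliberately simplified stand-in: it assumes one independent effect size per study and is not the RVE model fitted in this review, which additionally handles dependent effect sizes. The input values and the function name are hypothetical.

```python
import math


def dersimonian_laird(effects, variances):
    """Pool effect sizes with a DerSimonian-Laird random-effects model.

    Returns (pooled effect, standard error, tau squared, I squared in %).
    """
    weights = [1.0 / v for v in variances]
    sum_w = sum(weights)
    fixed = sum(w * y for w, y in zip(weights, effects)) / sum_w
    # Cochran's Q: weighted squared deviations from the fixed-effect mean
    q = sum(w * (y - fixed) ** 2 for w, y in zip(weights, effects))
    df = len(effects) - 1
    c = sum_w - sum(w ** 2 for w in weights) / sum_w
    tau2 = max(0.0, (q - df) / c)
    i2 = max(0.0, (q - df) / q) * 100 if q > 0 else 0.0
    # Re-weight each study by its total (within + between) variance
    re_weights = [1.0 / (v + tau2) for v in variances]
    pooled = sum(w * y for w, y in zip(re_weights, effects)) / sum(re_weights)
    se = math.sqrt(1.0 / sum(re_weights))
    return pooled, se, tau2, i2


# Hypothetical study-level effect sizes and sampling variances
demo_effects = [0.35, 0.48, 0.62, 0.55, 0.51]
demo_variances = [0.04, 0.03, 0.05, 0.02, 0.06]
pooled_g, pooled_se, tau2_hat, i2_hat = dersimonian_laird(demo_effects, demo_variances)
print(f"g = {pooled_g:.2f}, SE = {pooled_se:.2f}, T2 = {tau2_hat:.3f}, I2 = {i2_hat:.0f}%")
```

When the observed spread of effects is no larger than expected from sampling error alone, T2 is truncated at zero and the random-effects estimate coincides with the fixed-effect estimate.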

Random effects meta-analyses for outcome categories based on immediate and follow-up assessments and compared with control conditions.

Note. **p < 0.01; *p < 0.05; k = Number of studies; n = Number of effect sizes; CI = Confidence intervals; T2 = Tau squared; df = Degrees of freedom

For the follow-up analysis, 34 effect sizes were calculated from the 12 studies that included follow-up assessments, to determine the enduring effects of the intervention. The reported length of time for follow-up assessments ranged from 1 week to 6 months after the intervention. The average overall follow-up effect size (g = 0.39, 95% CI [.003, .78]) was small and statistically significant (t(10.5) = 2.23, p = 0.049). Between-study heterogeneity was moderate, T2 = 0.22, with approximately 66% of the variance in effect sizes due to between-study heterogeneity. Results were robust to sensitivity analysis.

Twelve studies included a control group comparison, from which we calculated 30 effect sizes. The average overall mean change effect size (mean change for the intervention group versus mean change for the comparison group; g = 0.49, 95% CI [.15, .83]) was statistically significant (t(8.85) = 3.28, p = 0.01). Specifically, participants who engaged in the intervention had significantly greater positive changes in transferable skills than participants in a comparison group (e.g., waitlist group). The model presented zero between-study heterogeneity. These results were robust to sensitivity analysis.
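The reported estimates, t values, and confidence intervals for the three comparisons can be cross-checked with simple arithmetic. The sketch below back-calculates the standard error implied by each result (SE ≈ g / t) and the critical t value implied by the interval width (half-width / SE). The input numbers are those reported above; the function name and the back-calculation itself are illustrative assumptions, and rounding in the reported values limits precision.

```python
# Values as reported in the text for the three comparisons
reported = {
    "immediate": {"g": 0.51, "t": 8.11, "ci": (0.38, 0.65)},
    "follow-up": {"g": 0.39, "t": 2.23, "ci": (0.003, 0.78)},
    "controlled": {"g": 0.49, "t": 3.28, "ci": (0.15, 0.83)},
}


def implied_se_and_critical_t(g, t, ci):
    """Return the standard error implied by g / t and the critical t
    implied by the confidence interval half-width."""
    se = g / t
    half_width = (ci[1] - ci[0]) / 2
    return se, half_width / se


for label, r in reported.items():
    se, t_crit = implied_se_and_critical_t(r["g"], r["t"], r["ci"])
    print(f"{label}: implied SE = {se:.3f}, implied critical t = {t_crit:.2f}")
```

The implied critical values fall at roughly 2.15, 2.22, and 2.28, which is in line with two-sided 95% intervals based on approximately 9 to 13 degrees of freedom, as reported for these models.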

Outcome variable effects

We evaluated the effects of the interventions on different types of interpersonal, intrapersonal, and cognitive skills and the impact on psychological health (Table 6). For outcome analyses using RVE, sensitivity analysis revealed that varying the assumed correlation (ρ = 0 to ρ = 1) had no impact on estimated effect, standard error, and between-study heterogeneity.

Interpersonal Skills. A statistically significant (t(9.88) = 4.72, p < 0.01), medium pooled effect size (g = 0.55, 95% CI [.29, .81]) was estimated with some heterogeneity (T2 = 0.05, I2 = 29%) (Figure 2). Interpersonal skills pertaining to teamwork and collaboration were the most frequently cited, with 9 studies (12 independent samples) contributing 27 effect sizes. The random-effects model estimated a statistically significant (t(9.92) = 4.68, p < 0.01), medium intervention effect (g = 0.55, 95% CI [.29, .81]). Three studies (4 independent samples) incorporated a measure of leadership, for which a statistically significant (p < 0.01), medium treatment effect (g = 0.69, 95% CI [.32, 1.06]) was estimated.

Intervention effects on interpersonal skill development.

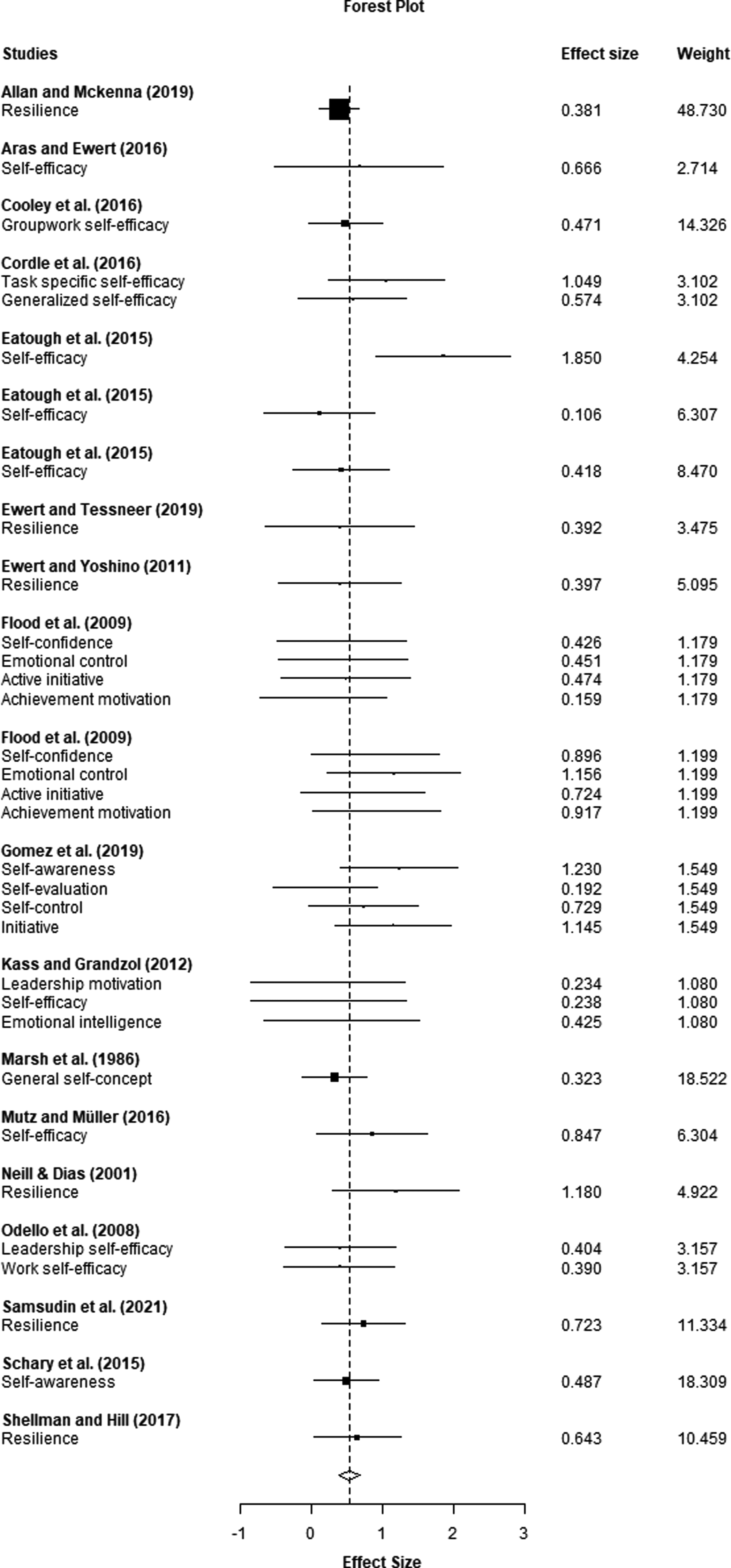

Intrapersonal Skills. The random-effects model estimated a statistically significant (t(8.93) = 8.08, p < 0.01), medium effect (g = 0.53, 95% CI [.38, .68]) with very limited heterogeneity (Figure 3). Frequently cited intrapersonal skills included self-efficacy (g = 0.61, 95% CI [.32, .90]), resilience (g = 0.61, 95% CI [.31, .91]), self-concept (g = 0.61, 95% CI [.50, 1.73]), and emotional intelligence (g = 0.71, 95% CI [.19, 1.23]).

Intervention effects on intrapersonal skill development.

Cognitive Skills. The random-effects model estimated a positive, medium intervention effect (g = 0.53, 95% CI [−.04, 1.10]) on the cognitive domain; however, this effect was not statistically significant (p = 0.059) and was considerably heterogeneous (T2 = 0.07, I2 = 90%).

Psychological Health. From the four studies (6 independent samples) that included a measure of psychological health, the random-effects model estimated a statistically significant (p < 0.01), medium effect (g = 0.56, 95% CI [.48, .63]) with no heterogeneity.

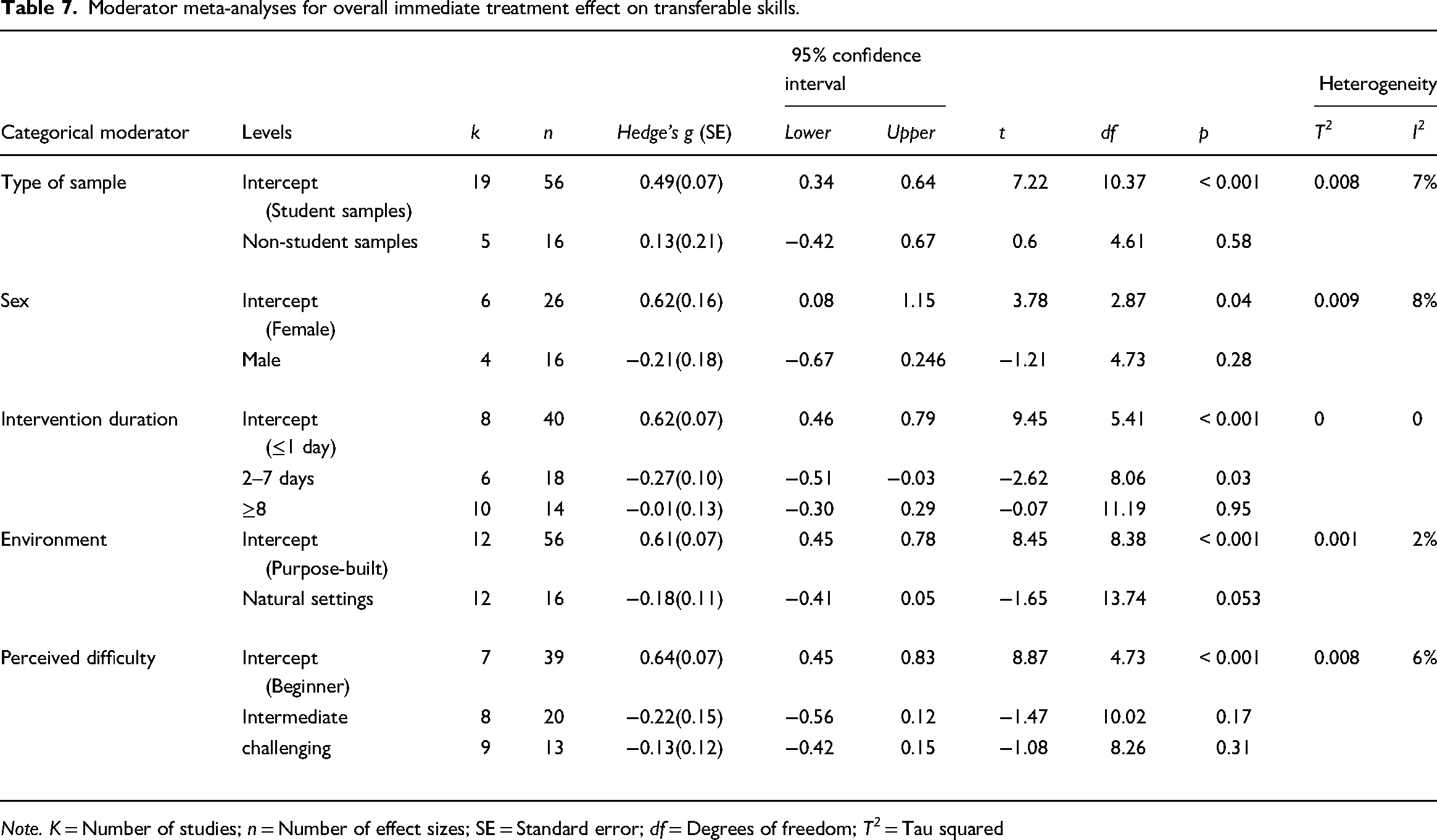

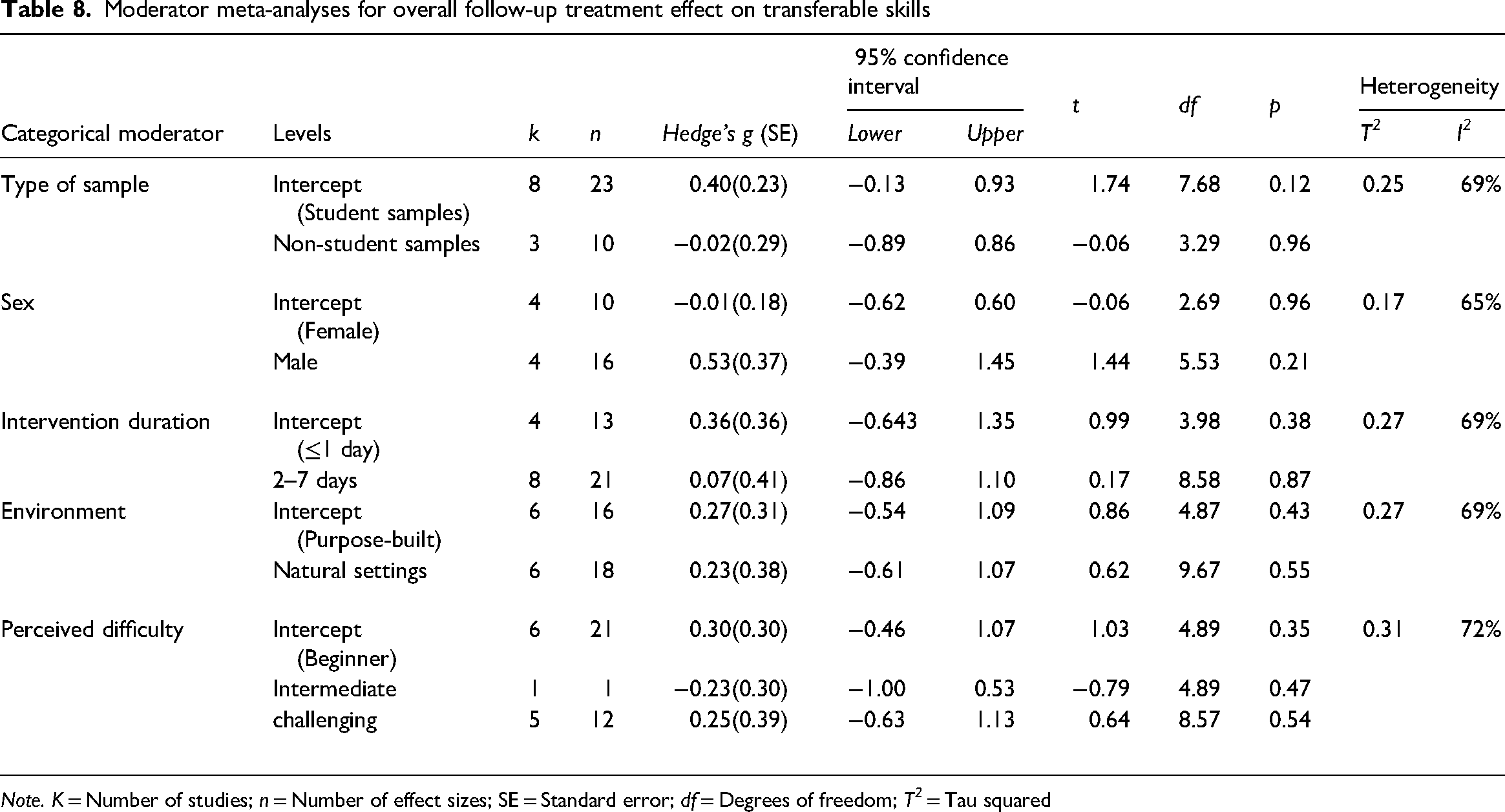

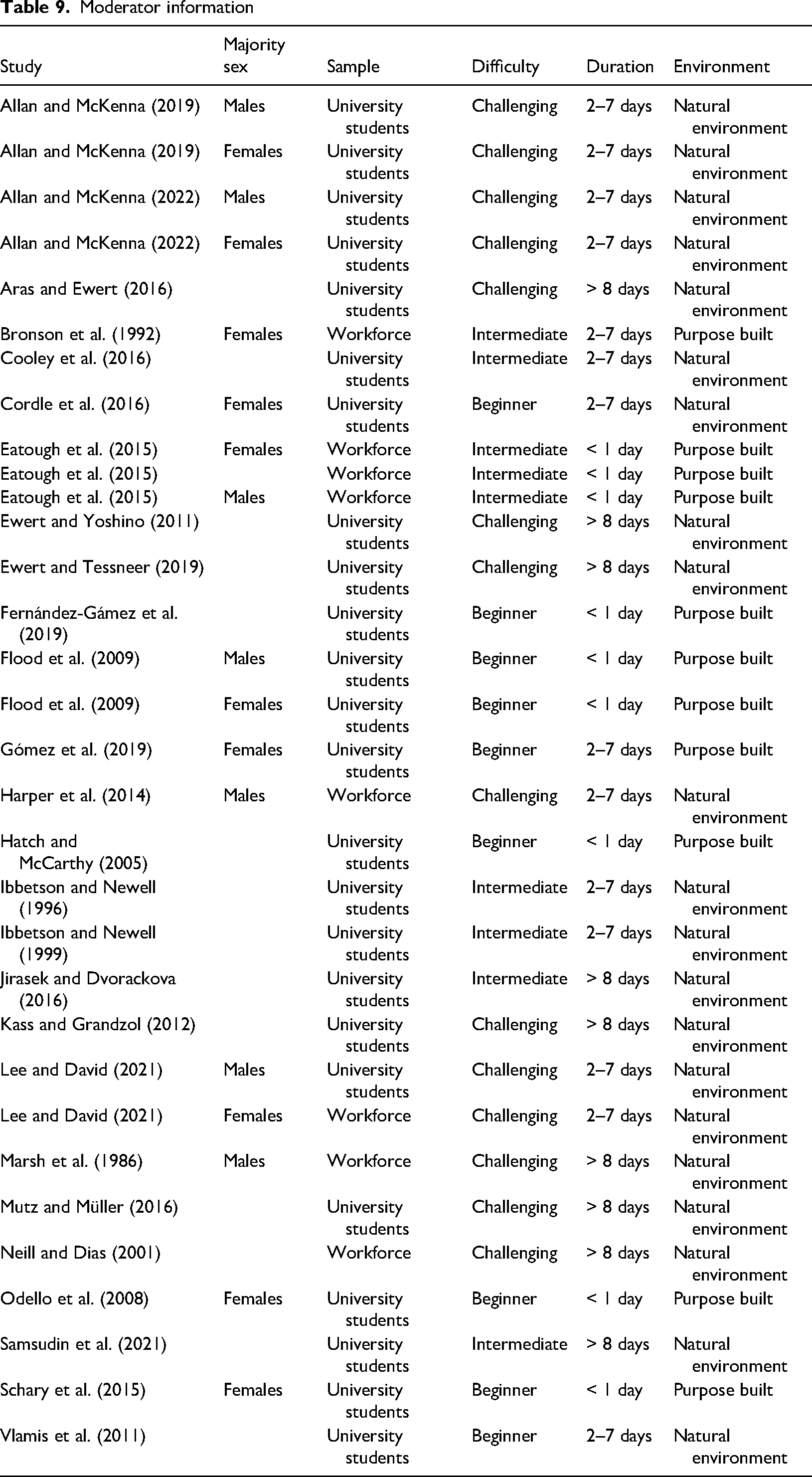

Moderator analysis

Moderator analyses were carried out using immediate and follow-up effect sizes (Table 7 and Table 8 respectively). Moderators included sample characteristics (sex and sample type), the difficulty and duration of the intervention, and the environment the intervention took place in (Table 9).

Moderator meta-analyses for overall immediate treatment effect on transferable skills.

Note. K = Number of studies; n = Number of effect sizes; SE = Standard error; df = Degrees of freedom; T2 = Tau squared

Moderator meta-analyses for overall follow-up treatment effect on transferable skills

Note. K = Number of studies; n = Number of effect sizes; SE = Standard error; df = Degrees of freedom; T2 = Tau squared

Moderator information

Sample Characteristics. Moderator analyses comparing student samples with non-student samples (e.g., working adults) revealed no statistically significant differences in effects at immediate or follow-up assessment. Only three studies presented their results split by sex (Allan & McKenna, 2019, 2022; Flood et al., 2009). To ensure a meaningful and sufficiently powered analysis, we inspected the sex distribution among the included papers and identified studies with a predominantly male sample and those with a predominantly female sample (> 70%). Eleven studies presented neutral sex splits, three studies did not report participant demographic information, five studies reported predominantly female samples (Cordle et al., 2016 (71% female); Eatough et al., 2015 (80% female); Gómez et al., 2019 (73% female); Odello et al., 2008 (72% female); Schary et al., 2015 (83% female at follow-up)), and five studies reported predominantly male samples (Eatough et al., 2015 (90% male); Harper et al., 2014 (87% male); Lee & David, 2021 (85% male), 2021 (71% male); Marsh et al., 1986 (75% male)). Studies with a predominantly female sample reported higher pooled immediate effects (g = 0.62) than studies with a predominantly male sample (g = 0.41). Interestingly, follow-up effects were greater in studies with a predominantly male sample (g = 0.52) than in studies with a predominantly female sample (g = −0.01). Nonetheless, these differences were not statistically significant.

Intervention Design. Moderator analyses explored the effects of intervention duration, environment, and reported difficulty. For duration, the moderator analysis of immediate effects revealed that the pooled effect for interventions lasting two to seven days (g = 0.35) was significantly lower (p = 0.03) than for interventions lasting one day (g = 0.62) and eight days or more (g = 0.61). Moderator analyses comparing purpose-built outdoor facilities (e.g., climbing walls and high ropes courses) with natural outdoor activities (e.g., rock climbing, trekking, and mountaineering), and analyses concerning the perceived difficulty of the intervention, revealed no statistically significant differences in effect sizes at either immediate or follow-up assessment.

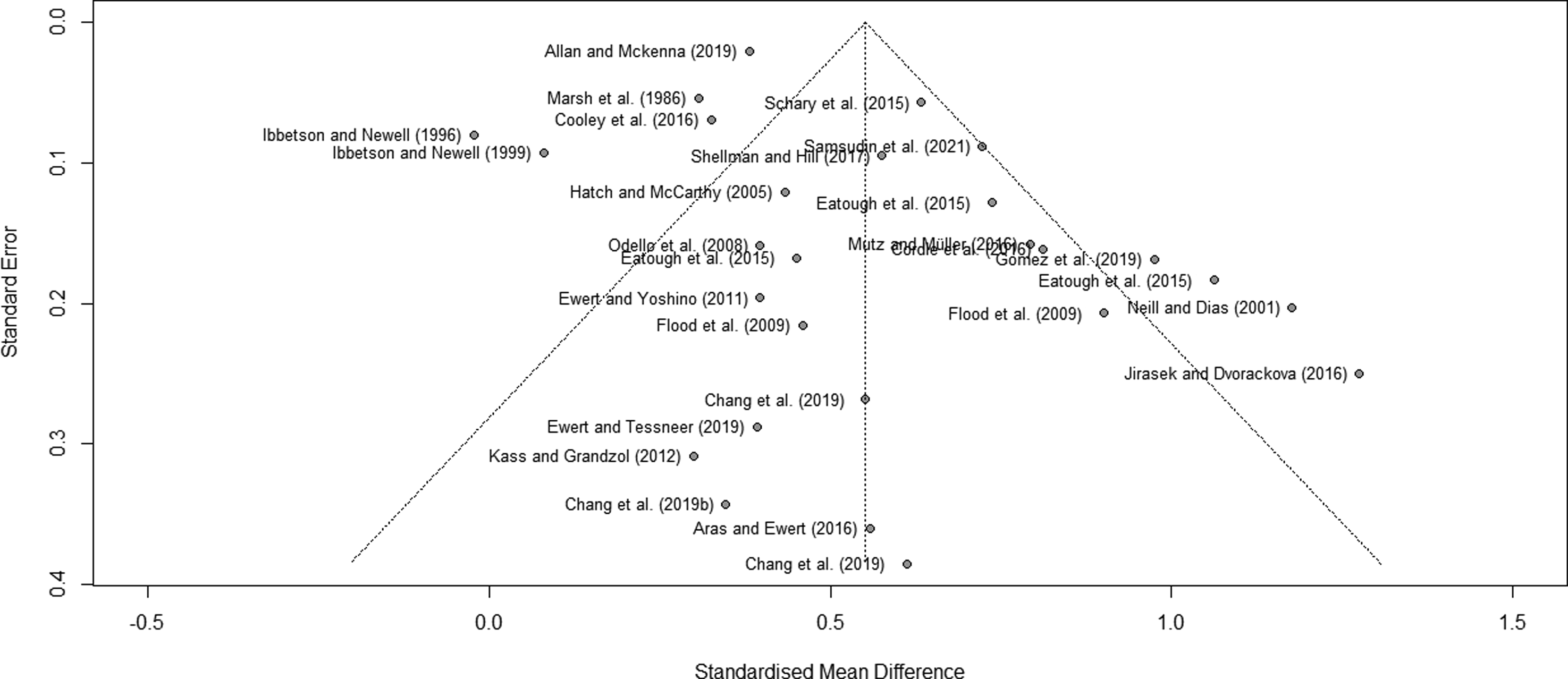

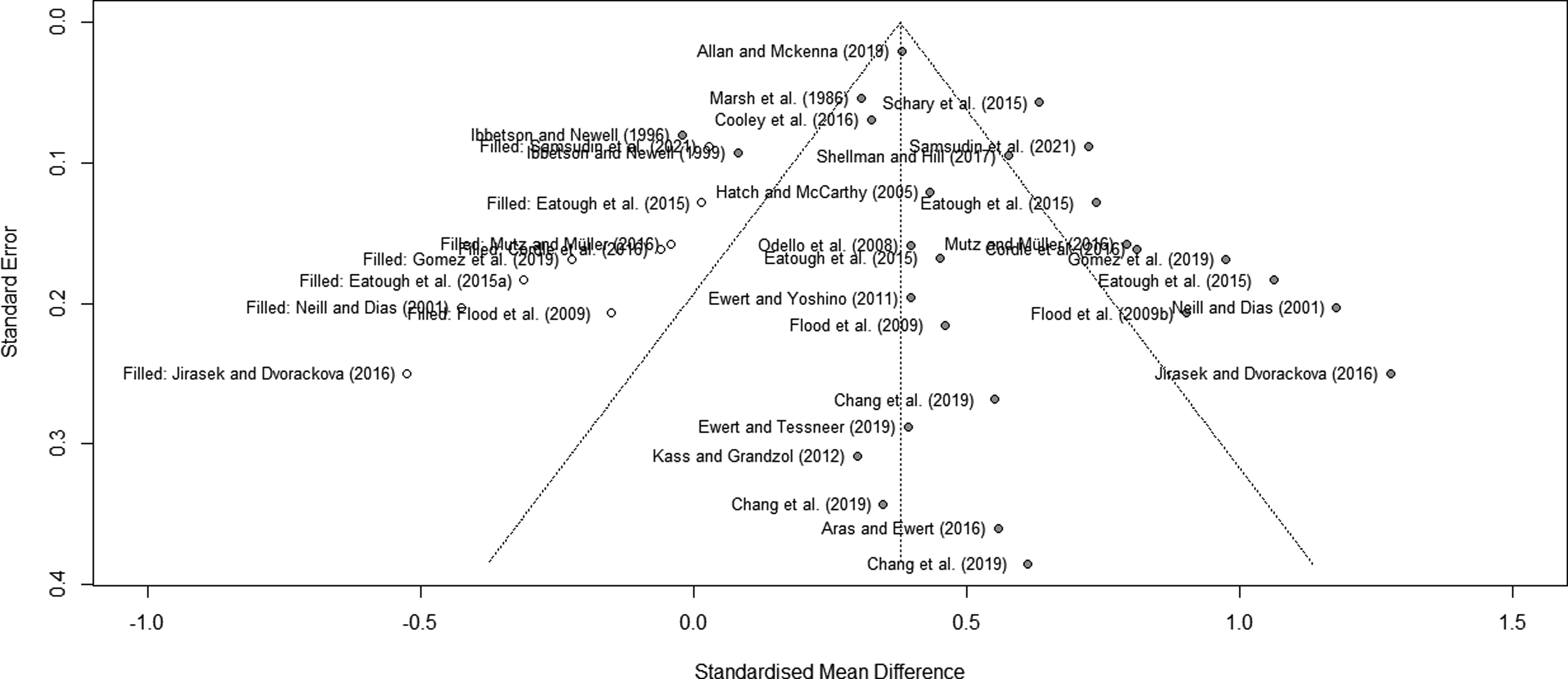

Publication bias

All of the following tests for publication bias were carried out using aggregated study-level effect sizes (immediate, follow-up, and controlled effects) synthesized through random-effects models. We found indications of publication bias when evaluating aggregated study-level immediate effect sizes. Publication bias was evident upon visual inspection of the asymmetric funnel plot (Figure 4), which showed a distinct lack of small studies with small effects. This observation was supported by Egger's regression test, which was statistically significant (t = 2.25, p = 0.034). Applying Duval and Tweedie's (2000) trim and fill analysis, nine studies were added to make the funnel plot symmetric (Figure 5), producing a new, adjusted meta-analytic average effect size (g = 0.38, 95% CI [.23, .52]) with significant heterogeneity (Q(35) = 262.73, p < 0.001). This effect size was still statistically significant (p < 0.001); however, including these nine imputed studies with smaller effects reduced the overall immediate effect size. No publication bias was evident for follow-up and controlled effects.
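For readers less familiar with Egger's regression test, the sketch below shows its classical form: each standardized effect (g / SE) is regressed on its precision (1 / SE), and an intercept that departs from zero suggests funnel plot asymmetry. The data are hypothetical and constructed so that smaller studies show larger effects; the function name is an assumption, and this simple ordinary least squares version may differ from the software implementation used for the analyses reported here.

```python
import math


def egger_intercept(effects, std_errors):
    """Return (intercept, t statistic) from Egger's regression of
    standardized effects on precision, fitted by ordinary least squares."""
    n = len(effects)
    if n < 3:
        raise ValueError("Egger's regression needs at least three studies")
    z = [y / s for y, s in zip(effects, std_errors)]  # standardized effects
    x = [1.0 / s for s in std_errors]                 # precision
    mean_x = sum(x) / n
    mean_z = sum(z) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    slope = sum((xi - mean_x) * (zi - mean_z) for xi, zi in zip(x, z)) / sxx
    intercept = mean_z - slope * mean_x
    residuals = [zi - (intercept + slope * xi) for xi, zi in zip(x, z)]
    resid_var = sum(r ** 2 for r in residuals) / (n - 2)
    se_intercept = math.sqrt(resid_var * (1.0 / n + mean_x ** 2 / sxx))
    if se_intercept == 0:
        raise ValueError("perfect fit; the t statistic is undefined")
    return intercept, intercept / se_intercept


# Hypothetical data: less precise (smaller) studies report larger effects
effects = [0.30, 0.35, 0.42, 0.55, 0.70, 0.95]
std_errors = [0.08, 0.10, 0.15, 0.20, 0.28, 0.35]
intercept, t_stat = egger_intercept(effects, std_errors)
print(f"Egger intercept = {intercept:.2f}, t = {t_stat:.2f}")
```

The t statistic would be compared against a t distribution with n − 2 degrees of freedom; a positive intercept, as in this constructed example, corresponds to the pattern in which small studies with small effects are missing.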

Asymmetric funnel plot indicating publication bias.

Symmetrical funnel plot with nine imputed studies.

Discussion

Transferable skills are highly valuable human resources that can give organizations a competitive advantage by contributing to more robust performance and improved health (Ibrahim et al., 2017). This study investigated the effectiveness of physical challenge interventions in developing transferable skills and associated psychological health outcomes. It adds to the literature by (a) focusing on the effects on current (i.e., employed samples) and future (i.e., prospective student) workforce samples, (b) exploring a priori defined transferable skills that are desirable in occupational settings, and (c) using advanced statistical analysis (RVE) to account for dependent effect sizes.

In this review, we systematically identified and evaluated 44 published papers (47 independent samples) that met the inclusion criteria. Thirty papers were eligible for quantitative synthesis. Results from the meta-analysis of immediate effects revealed a medium overall intervention effect, suggesting that interventions can lead to short-term changes in a range of targeted transferable skills. This finding is broadly comparable to previous reviews, albeit with different outcomes, which found moderate to large effects when isolating the effects of adventure experiences to adult samples (Bowen & Neill, 2014; Gillis & Speelman, 2008; Hattie et al., 1997). Controlled studies help to substantiate this evidence, indicating that participants who engaged in a physical challenge intervention showed significantly greater mean increases in transferable skills compared to a control group. Most controlled studies compared intervention effects with a “no intervention” group, and only four studies compared the effects of physical challenge interventions with alternative training courses (e.g., classroom-based learning; Lee & David, 2021). Although such comparisons were not the purpose of the review, the evidence base does not currently allow meaningful comparisons to be made regarding which training methods might be more effective at developing transferable skills.

Meta-analyses across the identified primary outcomes revealed fairly consistent effects. The most frequently studied category of dependent variables was intrapersonal skills, with reference to effects on self-efficacy, self-concept, resilience, and emotional intelligence. Interventions also had positive effects on interpersonal skills, including outcomes relating to leadership and teamwork/collaboration. Similarly, a positive medium effect was estimated for cognitive skills such as problem-solving and critical thinking. Although this effect was not statistically significant, this is likely due to the limited number of studies contributing to the effect. Psychological health was included as a secondary outcome given its importance within workplace environments (Ford et al., 2011), and a positive medium effect was estimated, encompassing enhanced well-being and reduced perceived stress and cognitive anxiety. Studies which were not included in the meta-analyses also support the meta-analytic results and recorded improvements in interpersonal (Bloemhoff, 2016; Gordon & Dodunski, 1999; Stoltz, 1992; Wheeler et al., 1998), intrapersonal (Bloemhoff, 2016; Finkenberg et al., 1994; Gatzemann et al., 2008; Kelly, 2019; Priest, 1996a, 1996b, 1998; Wheeler et al., 1998), and cognitive skills (Bloemhoff, 2016), and in psychological health (Ewert, 1988a, 1988b). These findings help to inform organizations about the types of transferable skills that can be developed through physical challenge interventions and also highlight the positive impact on psychological health. Notable support is provided for skills pertaining to interpersonal and intrapersonal domains, suggesting that these interventions can be a valuable tool for organizations to use for teambuilding or leadership development and as a means of developing employees’ psychological strengths.

A small, statistically significant effect size was observed for follow-up effects (baseline to follow-up). Previous reviews have also reported the positive sustained effects of adventure programs of up to two years post-intervention (see: Bowen & Neill, 2014; Fleischer et al., 2017; Gillis & Speelman, 2008; Hattie et al., 1997), indicating valuable changes in transferable skills can be maintained over time. For example, the findings demonstrate that physical challenge experiences can translate into long-term improvements in team development (Bronson et al., 1992), self-confidence (Priest, 1996a, 1996b, 1998), emotional intelligence (Gómez et al., 2019), and psychological capital (Lee & David, 2021). Therefore, current evidence suggests intervention effects may extend beyond the immediate context of the program and positively “spill over” into various aspects of the individual's personal and professional life. Enrichment theory provides valuable context for interpreting these findings (Greenhaus & Powell, 2006). It explains how experiences and activities in one domain, such as far-removed off-the-job training (e.g., physical challenge experiences), can enhance one's resources and positively impact other aspects of life, such as workplace relationships. The theory outlines an enrichment pathway, where skills, beliefs, and psychological resources are transferred across various life roles, thereby exerting influence on performance and broader outcomes (e.g., satisfaction) in receiving roles (e.g., skills gained through physical challenge interventions, such as interpersonal communication, can improve workplace team dynamics and relationships) (Heskiau & McCarthy, 2020). Accordingly, enrichment theory serves as a valuable conceptual framework to deepen current insights into the relevance of physical challenge interventions within workplace contexts and should be applied in further academic exploration.

The follow-up effects are the most notable findings, as the true value of training programs lies in effective implementation of learning for positive outcomes (Botke et al., 2018; McDonald et al., 2012). If participants are unable to retain and use their new skills and knowledge to improve workplace performance, for example, then the program would not represent good use of resources (Botke et al., 2018). We cannot conclude from the significant follow-up effects, however, that outcomes transferred positively to the workplace, as no studies directly measured transfer of learning. Based on current understanding, there are recommendations that practitioners and organizations can employ to improve transfer and the enrichment process. These include considering participant antecedents (e.g., ensuring participants are motivated, engaged, and understand the intended learning outcomes of the program), during-course factors (e.g., drawing analogies between the course content and applications to the workplace; instructors providing constructive feedback), and post-course factors (e.g., providing participants the opportunity to apply their learning in the workplace; employing group and individual processing and reflection activities) to support transfer of learning (Ford et al., 2018; Furman & Sibthorp, 2013; Rhodes & Martin, 2015; Sibthorp et al., 2011).

Analyses of potential moderators yielded only one significant finding across immediate and follow-up effects. Results for immediate effects suggest interventions lasting 2–7 days had a significantly lower impact compared to interventions lasting up to 1 day or more than 8 days. It is intuitive to assume longer interventions would be more effective because they allow sufficient cognitive and skill development (Lacerenza et al., 2017). However, it is encouraging to observe that shorter interventions, which are less time consuming, cheaper, more accessible to organizations, and in more demand (Rushford et al., 2020), are just as advantageous. While the perception of challenge is an essential component of physical challenge interventions (Houge Mackenzie et al., 2014; Houge Mackenzie & Brymer, 2020; Tsaur et al., 2015), findings suggest intervention outcomes may not be sensitive to increasing the degree of challenge. Indeed, an intervention which overemphasizes challenge is potentially counterproductive, or even harmful for the participants due to adverse responses (e.g., overwhelming stress) (Berman & Davis-Berman, 2005; Brown & Fraser, 2009). This finding reiterates the importance of observing a challenge-by-choice philosophy, allowing participants the autonomy to choose to physically take part in an activity. The observed sex differences are also noteworthy. Studies incorporating a majority female sample reported larger immediate effects in comparison to studies with a predominantly male sample. Studies with predominantly male samples, however, recorded larger follow-up effects than studies with predominantly female samples. Although these differences were not statistically significant, findings cautiously support previous research which suggests that sex may be a contributory factor in the way in which interventions are experienced and associated outcomes are constructed (Blaine & Akhurst, 2021; Overholt & Ewert, 2015).

Likewise, the environment did not moderate changes in transferable skills. Purpose-built facilities (e.g., climbing walls and high ropes courses), which attempt to emulate authentic adventure experiences by preserving the essence of unfamiliarity, have become increasingly popular as the demand for adventure-based experiences has grown (King et al., 2020; Van Bottenburg & Salome, 2010). However, they distinctively lack contact with the natural environment that is integral to many adventure-orientated programs. Nonetheless, although natural settings may be more efficacious for producing greater benefits for positive affect and psychological health-related outcomes (Zwart & Ewert, 2022), the study findings suggest the type of environment may not influence outcome change. Importantly, the lack of significant moderators does not imply that interventions involving physical challenges should follow a “one-size-fits-all” approach (Warner & Dillenschneider, 2019). Rather, organizations should tailor the intervention to meet the needs and abilities of the group by conducting a thorough needs analysis (Lacerenza et al., 2018) and adhere to a challenge-by-choice philosophy to ensure an enjoyable and inclusive experience for individuals and the team.

Although findings have suggested physical challenge interventions can be an effective way for organizations to target and develop an array of valuable employee skills, there are potential restrictions to implementation. A notable issue concerns the cost and resources involved compared with other training approaches (e.g., virtual workshops), and, therefore, organizations may be unable or hesitant to allocate resources to these interventions. Moreover, given the nature of participation requirements, these interventions may not be accessible to everyone in the organization, such as those with physical disabilities or medical conditions that prevent participation or those who feel severely uncomfortable with the prospect. However, with increasing interest and funding in outdoor adventure experiences, facilities and equipment are becoming more accessible for people with disabilities (e.g., specialized harnesses), and instructors are becoming more qualified and well-informed (Gibson, 2011). A further consideration relates to legal concerns for organizations, as the integrated physical challenges often (purposefully) carry some level of risk (e.g., rock climbing or mountain biking). These concerns, however, are mitigated by established organizations (e.g., European Ropes Course Association) and official licensing (e.g., Adventure Activities Licensing), which ensure not only the safety of facilities but also the qualifications of instructors for safeguarding participants. Organizations should consider these factors and decide whether these physically orientated interventions are appropriate for their workforce and whether they meet training objectives.

This review also highlights some limitations in the methodological quality of the evidence base. Conclusions of systematic reviews are dependent on the quality of the included studies, and, in this review, it is evident that high-quality research surrounding physical challenge interventions is scarce. The conclusions from the review, therefore, must be interpreted with caution. We acknowledge that there is a range of variables (e.g., assignment selection, statistical controls, the timing of measures, comparison group) involved in outdoor experiential programs that cannot always be accounted for, and researchers are required to make choices regarding what is feasible given the available resources (Ewert & Sibthorp, 2009; Scrutton & Beames, 2015). However, a common issue within this review, and the broader literature, concerns studies exploring immediate change (pre- to post-intervention). Measurements taken immediately after the intervention may simply capture an “artificial” inflation in scores due to participants experiencing euphoria, emotional highs, and a sense of achievement upon conclusion of the experience (Ewert & Sibthorp, 2009; Hattie et al., 1997; McEvoy, 1997; Scrutton & Beames, 2015). Data may, therefore, represent a manifestation of the post-experience high rather than true skill development or self-perception changes (Ewert & Sibthorp, 2009). However, these data do demonstrate that participants generally react favorably to adventure-based interventions, indicating their potential to foster peak learning experiences (Pomfret et al., 2023).

There is also a need for more studies that compare the effects of physical challenge interventions with other organizational approaches to training and development, to truly understand whether these experiences should be invested in and whether they provide meaningful unique variance in developing transferable skills. To address this concern, future research must endeavor to employ more robust study designs (e.g., multiple measures of dependent variables and randomization) with follow-up assessments and comparison groups to allow for more thorough inferences to be made. More attention should also be given to the standards for reporting studies, for example, reporting randomized controlled trials according to the Consolidated Standards of Reporting Trials (CONSORT) statement (Grant et al., 2018). Moreover, given the significant publication bias found within the studies, we encourage researchers to publish in peer-reviewed journals even if there is no effect or sample sizes are small.

Limitations and future directions

The systematic review adhered to rigorous methods; however, there are limitations that must be acknowledged. First, the findings from the review relied only on published literature and English language papers. We recognize that the omission of unpublished literature and conference abstracts may have contributed to the observable publication bias and concealed the impact of the “file drawer problem”. However, bias can be introduced into the review process by including studies which have not been through formal, rigorous peer review, and the difficulty of obtaining unpublished studies may lead to a poor representation of the unpublished literature (Higgins et al., 2021). Moreover, issues relating to the methodological quality of the outdoor and adventure education literature have been raised in prior reviews (e.g., Bowen & Neill, 2014; Holland et al., 2018); therefore, the review was based on literature that we were confident had been through a peer-review process. Additionally, language restrictions in systematic reviews have been found to present no evidence of systematic bias (Morrison et al., 2012).

Second, the review aimed to identify skills that were assumed to be transferable to occupational settings and contribute to desirable outcomes. Many reviewed studies, however, did not include follow-up assessments, did not measure transfer, and did not assess the work-related repercussions. What is noticeable from the review is that the instruments used to assess outcomes are rarely specific to certain life domains, but rather capture generalized constructs (e.g., general self-efficacy). With regard to the occupational domain, future research should make use of the available validated instruments in the occupational psychology literature that allow participants to report their newly formed skills in relation to workplace-relevant scenarios. Furthermore, research should explore the wider ramifications for organizations engaging in these interventions, for example, the impact on workplace performance. We acknowledge that qualitative inquiry has explored some of the implications for the workplace and highlighted improvements to desirable workplace behaviors and attitudes following adventure experiences (Holman & McAvoy, 2005; McEvoy, 1997; Rhodes & Martin, 2014); however, this is seldom investigated through larger quantitative methods. For organizational contexts, it would also be beneficial to explore intervention effects in more diverse working populations (e.g., blue-collar professions), rather than remaining focused on the white-collar professions which tend to dominate the organizational psychology field.

Finally, the designs of the included interventions were heterogeneous, and there are many variables which are not accounted for in the review. Various potentially important variables are seldom documented in the research, including the facilitators’ competence and delivery style, sample characteristics beyond traditional demographic information, the objectives and components of the intervention, the order in which the activities were delivered, and whether specific reflection techniques were used, to name a few. Many of these variables are considered to strongly influence the intervention outcomes, but due to the limited detail regarding the interventions and participants, we were unable to code for these moderator variables. Limited detail was also a concern for the pre-defined moderator variables, which made it challenging to code variables accurately (e.g., the perceived difficulty of the intervention) and avoid researcher bias. The lack of detail limits both the scope of the systematic review and the ability for interventions to be replicated and compared meaningfully. Future research, therefore, should aim to provide more complete descriptions of the interventions and participants to enhance our understanding of what works and for whom.

Conclusion

This paper has attempted to map the terrain for scholars and organizations interested in using physical challenge interventions to develop employees’ transferable skills and psychological health. The findings from this review suggest physical challenge interventions can lead to significant immediate increases in a variety of valuable transferable skills and psychological health outcomes, with particular reference to interpersonal and intrapersonal domains. Although limited, there is also evidence to suggest that these outcomes can be retained over the longer term, but the extent to which outcomes transfer to the workplace has not been thoroughly examined. Interestingly, moderator analyses indicate programs can be potentially beneficial irrespective of design and participants involved; however, it is highly recommended that organizations conduct a needs assessment and carefully consider the objectives of the intervention before participation. Considering the quality assessment of the literature, we recommend that researchers explore the effectiveness of physical challenge interventions through more rigorous study designs. Overall, the current state of the literature does not truly allow for thorough conclusions to be made regarding the appropriateness and effectiveness of physical challenge interventions for organizational settings. Therefore, this paper serves as a call for further investigation into physical challenge interventions to better understand the long-term effects and implications for workforces and the mechanisms which might support transfer of learning.

Footnotes

Data availability

The data that support the findings of this study are available from the corresponding author upon reasonable request.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by The Leadership High Organisation (External PhD funders).