Abstract

To the complex question of “What is the number one issue on which we should focus as producers, evaluators, and consumers of research?” our simple and blunt answer is:

In a 1992 interview, political strategist James Carville provided a simple answer to the very complex question of what would win Bill Clinton's US Presidential campaign: “It's the economy, stupid” (“It's the Economy, Stupid,” 2021). Extrapolating from Carville's statement, we argue that the number one and most important reason why research is meaningful and makes a useful and valuable contribution is theory. In other words, to the complex question of what is the number one issue on which we should focus as producers, evaluators, and consumers of research, our blunt answer is:

In their seminal article “What theory is not,” Sutton and Staw (1995) wrote that, “Unfortunately, the literature on theory building can leave the reader more rather than less confused about how to write a paper that contains strong theory” (p. 371). Further, they noted that the lack of consensus about what theory should be among journal editors and reviewers meant that peer review is not very reliable because different research evaluators and consumers use their own idiosyncratic “conceptual tastes” to judge new theory. Our view is that, 30 years later, the level of confusion has abated very little, and the focus of the debate seems to have shifted to how much theory to have (Antonakis, 2017; Hambrick, 2007; Miller, 2007; Pillutla & Thau, 2013), or what to theorize about (Barney, 2018; Makadok et al., 2018; Mathieu, 2016; Wright, 2017), rather than why theory is necessary and useful. Yet precisely why theory is so important and how good theory comes about are questions that are asked over and over again by producers of research (i.e., current and future scholars), evaluators of research (e.g., journal editors and reviewers), and consumers of research (e.g., other researchers, organization decision makers, policy makers).

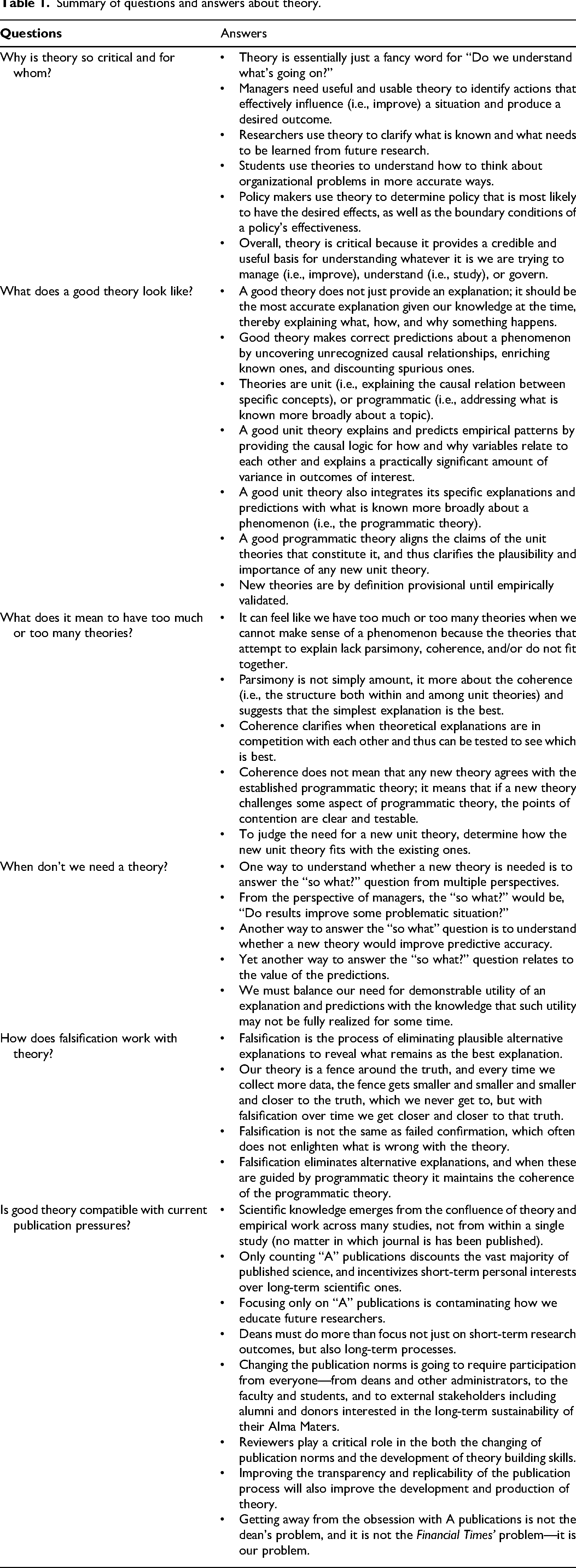

Our goal is to answer the following six questions about theory and its role: (1) Why is theory so critical and for whom? (2) what does a good theory look like? (3) what does it mean to have too much or too many theories? (4) when don’t we need a theory? (5) how does falsification work with theory? and (6) is good theory compatible with current publication pressures? As a preview, Table 1 includes a summary of our answers.

Summary of questions and answers about theory.

We anticipate that these questions will be familiar to most readers because we’ve all heard them in their exact or rephrased versions at presentations and workshops at professional meetings. Similarly, we have heard, and read, these recurring questions in our combined experience of about 50 years as editors, associate editors, and special-issue editors for several journals (e.g.,

Why is theory so critical and for whom?

Most journals expect that their articles make a contribution to theory. Reviewers evaluate contribution to theory, and this weighs heavily in editors’ decisions about a paper's publication deservingness. Thus, a manuscript that does not contribute to theory is unlikely to be published.

Yet

In our view, theory is essentially just a fancy word for, “Do we understand what's going on?” But, how we use this understanding depends on whether you are a manager, researcher, student, or policy makers.

Criticality of theory for managers

Managers wish to use theory to identify actions that effectively influence (i.e., improve) a situation and produce a desired outcome. For example, take the manager who notices high dysfunctional turnover (i.e., voluntary departure of high-performing employees). This manager seeks a theory that illuminates factors having direct and reliable causal effects. So, the theory should explain the factors most likely to be causing the high-performing employees to leave. A validated theory on dysfunctional turnover would provide knowledge on factors that the organization could potentially influence, and how those actions might reduce turnover and to what extent. This knowledge would be

Criticality of theory for researchers

Theories are obviously critical for researchers, but their needs and goals regarding theory are not necessarily the same as managers’. Using the same example, a theory relating personality to dysfunctional turnover is useful for researchers if it improves their understanding of dysfunctional turnover. It is even more useful if it leads to future research on the concept of dysfunctional turnover. For example, what other traits are related to dysfunctional turnover? Are there states (e.g., job dissatisfaction, uninteresting work) that may also be related to dysfunctional turnover? In sum, to the researcher, theory is critical because it clarifies what is known and illuminates what needs to be learned from future research.

Criticality of theory for students

Students, whether they are undergraduates, MBAs, or those enrolled in executive education courses, are expected to parse complex organizational problems. Theories are critical because they help students understand how to think about organizational problems in more accurate ways. Theories should encompass collections of validated findings that are woven into credible explanations for how and why organizational phenomena operate. Thus, they provide the frameworks for thinking about business problems. For instance, many undergraduates may not realize how or why turnover happens, or the processes involved; theories are useful because they put these components together into a coherent and valid framework that students can use do diagnose and respond to specific instances of turnover. While experienced executives may know all too well that dysfunctional turnover is expensive, they might not know how it actually happens or the extent of the problems it triggers, such as the departure of more high-performing employees in the near future.

Criticality of theory for policy makers

Finally, theories are also critical for yet another stakeholder group that, unfortunately, is overlooked in most management theory: policy makers (Aguinis et al., 2021). Many policies and laws relate to theories on employee well-being and performance, leadership behaviour, and firm structure and financial performance, among several highly relevant topics. So, there are many theories in management that are critical for policy makers because they address “governance principles that guide courses of action and behaviour in organizations and societies” (Aguinis et al., 2021, p. 4). We emphasize that implications for policy making are not the same as implications for practice, which are “explicit statements that the findings suggested the value of implementing some type of activity or practice” (Bartunek & Rynes, 2010, p. 102). Yet when it comes to writing policy that is most likely to have the desired effects, as well as highlighting the boundary conditions about the limits of a policy's effectiveness, a good theory will provide clarity and credibility to both issues.

In closing, theory is critical because it provides the basis for understanding whatever it is we are trying to manage (i.e., improve), understand (i.e., study), or govern (Bacharach, 1989; Whetten, 1989). Our goal as researchers is to understand organizations, to understand the function of organizations, and to understand why individuals do certain things. We then teach these theories to students who will become managers and possibly policy makers, who can in turn make better choices and policies for how organizations will run. Armed with theory, managers that notice high dysfunctional turnover in a unit within their firm are better equipped to diagnose and fix the problem. Such a theory comes from researchers, is taught to students, and can inform policy. While each role may use theory differently, they all use theory for what it is: a credible and useful explanation what is going on.

What does a good theory look like?

A theory should provide an

Explanation

A good theory does not just provide an explanation; it should be

Prediction

It is not enough to make predictions that are relatively obvious extensions of what is known to be true such as: people who do not like their jobs are more likely to seek greener pastures if they have alternatives. Good theory makes predictions about a phenomenon by uncovering unrecognized causal relationships, enriching known ones, and discounting spurious ones (Cortina, 2016; Mathieu, 2016). In all of these cases, the insights must be demonstrably correct, for accurate correspondence to reality is what distinguishes a

Good unit and good programmatic theory

Understanding what is good theory and what is not also involves making a critical distinction between

Yet a unit theory's strength also depends on its relationship with what is known more broadly about a phenomenon – the programmatic theory (Cronin et al., 2021; Wagner & Berger, 1985). Clear and coherent programmatic theory emerges from what Lakatos (1976) called progressive research programs, and is described as “the context of interrelated theories within which unit theoretical work occurs” (Wagner & Berger, 1985, p. 704). Programmatic theory comprises the settled science with respect to a domain or topic more broadly –in our running example, programmatic theory would encompass what is known about turnover more generally. So, unit theories would integrate into a body of programmatic theory.

For example, imagine that someone seeking to discover some novel insight proposed a theory that turnover was actually good and should be encouraged. Such a unit theory could certainly make an impact but

Programmatic theory aligns the claims of the unit theories that constitute it, and thus bears strongly on the plausibility and importance of any new unit theory. Continuing with our example, for functional turnover to be a plausible concept, researchers would need to explain how and why turnover benefits could outweigh its established costs. Even if the theory of functional turnover were empirically validated, without clear connection to the programmatic theory on turnover, it would be hard to understand what was incorrect about all the prior work on turnover that established it as harmful to organizations. This is why in addition to being testable, a good unit theory needs to be coherent with (i.e., understandable in light of) currently accepted programmatic theory. To use Huff’s (2008) research conversation metaphor, the meaning and value of any new sentence (unit theory) depends on what those in the current conversation are thinking (programmatic theory) at the time of its utterance; sentences that do not relate to what else has been said do not add much to the conversation. Sentences that bring up disconnected issues or contradict others only create confusion.

Finally, programmatic theory is also how we ultimately judge the theoretical contribution of any stream of research – does it advance the settled science on a topic and allow us to make new claims that have a high likelihood of being true? Thus, much in the same way a good unit theory clarifies the appropriateness of the methods used to test it, a good unit theory will also clarify how it relates to other unit theories within a programmatic theory (Cronin et al., 2021).

Good theory versus potentially good theory

We emphasize that a proposed unit theory as described in articles published regularly in journals such as

Thus, to produce good unit theory, we need to remember that

In addition, good unit theories are precise and explain a practically significant amount of variance in outcomes of interest.

What does it mean to have too much theory or too many theories?

While we never really know “too much” about a topic, it can sometimes feel like we have too much or too many theories. This happens when we cannot make sense of a phenomenon because the theories that attempt to explain it lack parsimony, coherence, or both. Explaining Y using a multitude of factors (X1, X2, X3, X4, and X5) is not really a problem if we can tell how each factor works separately, and how they all work together. There is trouble when we cannot tell which factor is at work, or if the factors provide contradictory predictions that cannot be adjudicated. Can the user understand how to apply the theory or is it unwieldy (i.e., too much)? Can the user discern clear predictions or are there multiple possibilities (i.e., too many)? One theory that proposes a four-way interaction that is mediated by three intervening variables is probably too much; two theories that explain Y in irreconcilable ways is one too many. Next, we discuss three criteria to more clearly understand whether we have too much or too many theories: lack of parsimony, lack of coherence, and lack of fit of unit into programmatic theory.

Lack of parsimony

Too much theory and too many theories actually speak to a common underlying problem, which is the lack of parsimony. Parsimony is typically thought of using Occam's razor – the simplest explanation is the best. Complicated and convoluted explanations are overwhelming and can easily feel like “too much.” Even if explanations are not complicated, multiple explanations for the same thing give the feeling that there are “too many” theories. A modern objection is that parsimony impinges on nuance, and marginalizes certain kinds of experiences within some broader phenomenon. But in actuality, nuance is often superficial (Healy, 2017) – representing a change in what happens but not how or why it happens – and the addition of the nuance via more explanation is often at the expense of parsimony. A lack of parsimony in either case will limit a theory's usability in terms of guidance for research, practice, or policy making (Antonakis, 2017; Cucina et al., 2014), and can easily limit or mislead rather than clarify understanding (Ferris et al., 2012; Tourish, 2019). It is intuitive to think of parsimony in terms of “less is more.” What sometimes gets lost is that parsimony is not about some magical number; rather, it is about the coherence. We address this point next.

Lack of coherence

Coherence is about structure both within and among unit theories. Coherence within unit theories means that the concepts and their causal mechanisms make sense. Yet the unit theories must also make sense when you consider them together, which is what makes programmatic theory coherent. Physics exemplifies coherence well. Specifically, one can study the topic of motion using different unit theories: classical Newtonian mechanics that measure the forces at play, Lagrangian mechanics that measure potential and kinetic energy, or the Hamiltonian approach that makes different assumptions about the space in which the motion is occurring. Each unit theory uses different constructs, but those constructs make it clear when each theory is appropriately applied, and all three are entirely consistent in the programmatic theory on motion; in fact, you can derive each unit theory from the others.

As a management example of coherence within and across unit theories, consider the programmatic theory on group brainstorming. There are three specific unit theories that collectively explain exactly why brainstorming in a group degrades the brainstorming process. Groups create (a) the capacity for social loafing (Comer, 1995), (b) the fear of evaluation by others (Geen, 1983), and (c) interference from what others were saying (Diehl & Stroebe, 1987). Each unit theory explains a distinct mechanism, but they do not contradict each other, they are unambiguous in their boundaries, and they integrate well with each other as well as what is known about groups more broadly. In fact, because the programmatic theory of group brainstorming is so coherent, it makes the explanations for why group brainstorming is ineffective seem like a small unit theory, and the specific mechanisms within theorized each seem like testable hypotheses. This is because the fit among the findings makes it easy for people to “chunk” the elements together into a single structure that fits within the limits of human working memory (Gobet et al., 2001). Yet this coherent programmatic theory on group brainstorming represents decades of research and scores of studies that refined and tested scores of alternative explanations (Paulus et al., 1993), and fit these together in a coherent way.

Achieving coherence helps reduce the stray theories that may be weaker than others, and thus helps parsimony in the “less is more” sense by clarifying when theoretical explanations are in competition with each other. In a very famous

Just to be clear, coherence does

Lack of fit among unit theories within a programmatic theory

For the author or reviewer weighing the need for a new unit theory among the existing ones, the question is really about how the new unit theory fits with the existing ones. If it cannot be connected to existing theory because it is overly complicated, overly circumscribed, or adds one more mediator or moderator to an already long laundry list, it is best to rework the theory until these problems are solved (or abandon it, see the next section). Having too many unit theories obscures rather than clarifies predictions, as in the case of what types of leadership behaviours predict direct-report performance (e.g., Gottfredson & Aguinis, 2017). Parsimony is the antidote to this situation, and it comes from coherence within and among unit theories. With coherence, unit theory predictions are more easily comprehended. More importantly, coherence reconciles unit theories with each other to produce clarity on the settled science which is the programmatic theory.

When don’t we need a theory?

It will take time and resources to create any validated unit theory, and even more time and energy to integrate multiple unit theories into a good programmatic theory. It needs to be worth the effort, and it is not always worth that effort. Yet most journals imply or mandate that every empirical study needs to enrich or build theory with the omnipresent question “What is a paper's theoretical contribution?” In fact, most journals require reviewers to answer this question on manuscript evaluation forms. Some scholars are pushing back against this so-called obsession with theory (Antonakis, 2017; Hambrick, 2007; Kim et al., 2018; Miller, 2007; Pillutla & Thau, 2013) by saying that a theoretical contribution is not always needed. While this view has merit, it does not tell us when we do and when we do not need theory—which is critical, because organizational phenomena ranging from the seemingly abstruse (e.g., liminality) to the seemingly mundane (e.g., meetings) could conceivably be explained, predicted, and improved. And while there are more and more papers on

Answering the “so what?” questions

From the perspective of managers, the “so what?” would be, “Do results improve some problematic situation?” If the answer is in the negative, a theory may not be needed. In addition, if the situations studied are rare, or the situations do not really matter much in terms of individual well-being or organizational performance, maybe the benefit of developing and a theory is not warranted by the cost. This argument sometimes emerges in discussions of the relevance of management research (Vermeulen, 2005).

Another way to answer the “so what” question is to understand whether a new theory would improve predictive accuracy. If the predictions are the same then the new theory is redundant, and offering an alternative explanation for the same prediction may reduce coherence among the unit theories (as we noted in the previous section). A new theory is useful if its predictions diverge from existing ones by either improving the accuracy of the prediction or by identifying new and useful explanatory variables. The comment “Don’t we know that already?” is often used to dismiss theory that explains known relationships (e.g., X has been shown to affect Y). But if a new theory makes it possible to more accurately predict Y using X by some practically significant margin, then the explanation for why that is should merit an investigation and, potentially, publication. Provided that new explanation was sufficiently tested against the existing one (per strong inference's “horse race”), this is an occasion to remove the weaker theory and increase parsimony.

A related way to answer the “so what?” question is about the value of the predictions. We believe that there is a mistaken assumption that the value of a theory should always be clear when it is proposed. Most often, scientific discovery does not work this way (Cagan et al., 2001; Cronin & Loewenstein, 2018). In other words, a theory typically starts with an important question that has no answer. For example, Mathieu (2016) proposed a good one: Why do some people stay in miserable jobs? It often takes time to realize the full value of a theory, especially when the theory is truly innovative, because the current programmatic theory can get in the way. For example, Kepler's new theory about how planets orbited at varying speed and in an elliptical shape was not simply accepted when it was presented because the current programmatic theory (i.e., the “clockwork universe” model) could not accommodate such a theory despite the fact that Kepler's questions were mathematically provable. New predictions are not valuable simply because they are new, so we must balance our need for demonstrable utility of an explanation and predictions with the knowledge that such utility may not be fully realized for some time. Theory should not fix problems we do not have, but it can also identify problems we did not realize we had. If it seems like a new theory might “stimulate future research that will substantially alter managerial theory and/or practice” (Hambrick, 2007, p. 1350) then we should be patient.

How does falsification work with theory?

Unfortunately, the vast majority of theories proposed are never tested (Edwards, 2010), in part because a proposal to test a theory is not seen as a novel contribution. For example, a study testing a theory that has been proposed in an article in conceptual journals such as

Falsification versus failed confirmation

In practice, falsification seems to be mistaken for failed confirmation: “We tested this theory and it did not work.” We believe this is a weak inferential strategy because we never prove a theory to be true, we can only prove that a theory is better than an alternative (e.g., Gottfredson & Aguinis, 2017). What is more, failed confirmation often does not enlighten what is wrong with the theory. For example, task conflict was theorized to improve team performance, yet a meta-analysis showed that typically it did not (De Dreu & Weingart, 2003). While this study was useful because it disconfirmed the theory, it did not explain what was wrong with the theory. It required the proposal of other unit theories to explain how and why task conflict was not helpful even though it seemed like it would be. Yet most of this research was confirmation, not falsification. In other words, research merely showed that whatever factor of interest was hypothesized to make task conflict helpful did, in fact, do so. But, as we discussed earlier, this creates lack of coherence and bloat at the level of programmatic theory because we ended up with a number of disconnected ways that task conflict could be made productive. Despite all these ways, a subsequent meta-analysis (De Wit et al., 2012) found support for the first prediction (i.e., most often, task conflict was not helpful). So, while the unit theories were confirmed individually, they did not really integrate into a true understanding of how task conflict actually worked.

What should have happened was that the various unit theories for what makes task conflict helpful should have each been seen as an alternative explanation for how task conflict works, and these could have been tested against or with each other in order to “shrink the fence.” If we believe that task conflict is only helpful when there is a moderate amount (De Dreu, 2006), then falsification should encourage research that discounts the alternative explanations to this one. Such research would not, for example, just confirm the curvilinear relationship between task conflict and performance; it would show that the amount of relationship conflict and trust predicted no variance above what was explained by the amount of task conflict. Falsification eliminates alternative explanations, and when these are guided by programmatic theory it maintains the coherence of the programmatic theory (e.g., Gottfredson & Aguinis, 2017)

Is good theory compatible with current publication pressures?

The “An A is an A” and “it does not count” challenges

Empirical validation of unit theory, when done correctly, does not simply confirm the unit theory; it helps weed out the inaccuracies in that theory and helps integrate a unit theory with the other unit theories within a programmatic theory. Over time, clarity about what is known with respect to a topic should emerge. So, scientific knowledge emerges from the confluence of theory and empirical work across many studies, not from within a single study (no matter in which journal is has been published).

Unfortunately, management and other fields seem to have lost sight of the collective nature of scientific progress. As described in detail by Aguinis et al. (2020b), one of the root causes of this problem is the obsession with A journal publications and, of course, counting them. In sports they say that “A win is a win.” It doesn’t matter if you won a basketball game by 30 points or a foul shot, or a soccer match by 3 goals during regulation or penalty kicks after two extra overtimes. It looks to us, and many others, that the field management has devolved to this state - where publishing an article in one of the few A journals has come to count as a win. The emphasis is not on what the study is about, what methods were used, or its implications for practice and future research. It needs to be published in an A journal, otherwise, we hear the phrase that the publication “does not count.” A textbook? It doesn’t count. A book chapter? It doesn’t count. An article published in a journal not on the A list? It does not count. Science does not accumulate if we ignore the vast majority of what is published. Further, if we are only counting and not reading, the short-term personal interest vastly outweighs the long term scientific one. Yet, unfortunately, this is the state of affairs that has emerged.

How did we get here?

We believe that the outsized emphasis on publication outlet as an indicator of a study's quality, rigour, and contribution to theory is a sad state of affairs. It is worth considering how we got to this point. In the 1950s two reports funded by the Ford foundation concluded that business schools were not doing a good job of training managers, and part of the problem was that teaching was based on opinion and subjective experiences rather than research and validated theories (Gordon & Howell, 1959; Pierson, 1959). Therefore, business schools began to hire social scientists including sociologists, political scientists, economists, and psychologists. As a result, research gradually became more rigorous, more theory oriented, and more methodologically sophisticated. Over time, business schools sought to measure whether a school was creating good, rigorous research. The demand for accountability was also created by outside stakeholders. For instance, state schools are influenced by legislators who want to be assured that faculty are spending their time wisely and doing quality research. Unfortunately, a fast and easy method was simply to count publications in specific journals deemed to be of high quality. Interestingly, this trend was also due to business school rankings and reporters who created, for example, the

So, we ended up with the current state of affairs where we judge an article's contribution and importance based on which journal it was published in—the proverbial judging a book by its cover. This is causing all kinds of problems with the fundamental process of science: creating clarity on what is and is not credible knowledge. As a result, in many business schools, the methodological and epistemological discussions that should guide the creation of research are being co-opted by the “Will this paper land in an A journal?” question. Innovative methods that may yield more accurate or insightful analyses are eschewed for fear of confusing reviewers. Actual debate on whether theories can be merged or falsified is never attempted for fear of offending reviewers’ pet theories. Academic socialization is becoming more about “landing an A publication” than substance. Or worse, a process of teaching future researchers how to game the system (i.e., do whatever it takes to publish in an A journal) rather than create and test useful and usable theories.

Proposed solutions: The role of researchers, deans, and other stakeholders

We are certainly not blaming researchers. All of us are part of a research ecosystem that heavily incentivizes junior and senior scholars to think and act in a certain way. We can start with the leadership of our schools and universities. To nurture a PhD programme that will produce star researchers 20 years out requires a long-term perspective, but business school deans typically remain at a school for 5 or 6 years and then move on. Even if one wanted to remain at a school for a long time, financial pressures, especially now, lead to thinking more about short-term strategies that will quickly improve what seems to be the prestige of the school, as signalled by rankings. It is a short-term outcome focus that needs to be complemented by a long-term process focus.

Journal lists make it easy to rank research quality and productivity, and so they are tools of convenience. But, the fact that this information is easy to get does not mean it is valid (Van Fleet et al., 2000). Even if deans do want to support theory advancements, they may balk at putting a full professor in a classroom with 4 non-paying PhD students versus 40 paying MBAs or undergraduate students. So, deans looking out for their own individual interests and short-term career goals, as opposed to the long-term sustainability of a business school as a generator of useful and usable knowledge, choose to reduce the number of doctoral courses. This is particularly problematic given that there is an increasing number of sophisticated methods and theories to learn, so more training is needed. For, even if a student learns sophisticated methods and all the latest unit theories, without learning the broader scientific process of falsification, the knowledge produced will be less useful and usable because it is only weakly tested. In short, changing the culture of counting is going to require participation from everyone—from deans and other administrators, to the faculty and students, and to external stakeholders including alumni and donors interested in the long-term sustainability of their Alma Maters.

We should also mention reviewers, for they are the gatekeepers for the development of unit theory (and ultimately the studies that test them). Reviewers play a critical role in the both the changing of publication norms and the development of theory building skills. Authors learn early on and quickly that contradicting reviewers is a losing strategy when the goal is to get an A journal publication, and when you are worried about tenure, it is a foolish risk to take. Reviewers, therefore, have an outsized influence on what gets published, and some of their bad habits can be very harmful. Reviewers need to understand how unit theories fit with data but also programmatic theory. This is a balancing act that relies heavily on falsification. On the one hand, reviewers need to analyze unit theory for its fit with data, and to understand that a theory is provisional until it is proven. When a reviewer, thinking of a published unit theory, asks, “Don’t we know this already?” unless that theory is validated by data then the answer is “no.” In fact, unless that theory has been validated by multiple studies, the answer should be “only provisionally.” Testing theories and then replicating those tests is how we arrive at settled science. This also means that reviewers need to be more welcoming of the explanation of unsupported effects. A reviewer who writes, “Hypothesis 3 was not supported and your paper is already long, so take it out” will rob the field of important information that other researchers should know before conducting their own research.

Aguinis, Banks, et al. (2020a) offered 10 actionable recommendations for journal editors to improve the transparency and replicability of the publication process aimed at improving theory. One that we believe would be particularly impactful is to make the journal publication process more like the doctoral dissertation process. Specifically, doctoral students submit a proposal based on the theory and methodological procedures before data are collected. The committee evaluates and approves the quality of the proposed research without seeing the results first. Thus, they affirm that the value and contribution of the study have more to do with the design than the results. Some journals are already implementing this procedure with a registered report, or preregistration, where researchers submit their paper using a similar format as in dissertation proposals. The paper includes information on theory and methods, but not the results. Then, if the proposal is accepted, unless something changes in the protocol, the full paper will be accepted once submitted. This is true even if the results are not statistically significant or contrary to the hypotheses. This is a publication process that allows for the falsification of theories without bruising reviewers’ egos, and certainly more likely to lead to the publication of results that are not statistically significant.

The converse of this is to realize that interpreting the findings of any study depends on the programmatic theory to which it speaks. One could have a pre-registered paper with data that support the hypotheses and substantial effect sizes, but if this paper's unit theory cannot be reconciled with programmatic theory on the topic, it should not be published. Empirically, the findings simply could be a fluke; theoretically, the findings will not advance the programmatic research on the topic. Both of these issues are remedied by clearly linking the empirics that test a unit theory to the broader programmatic theory. Importantly, if the argument can be made for how and why this newly tested unit theory should cause the field to reconsider what was considered to be settled science, then there may be a significant contribution to the field (provided more testing is done).

Improving the review process may actually help people produce better research in the future because it will provide more transparency into how science actually works. What gets published currently obscures this process because all people see is the polished finished product. Scholars see statistically significant effects with large effect sizes that support the proposed hypotheses. Thus, when anyone reads the articles published in our top journals, they look perfect. Yet, as most of us know, that is rarely how research actually happens. Thus, scholars do not get the benefit of seeing all the things that were tried but did not work, which not only provides a distorted view, it actually limits learning how those theories were actually developed. It would be like expecting a new chef to learn how to cook by ordering and eating a fabulous entrée at a French restaurant. While you can learn what the end product should look like - the textures, the colours, the placement of the different components, and the taste – this is far from instructive about how to produce such an outcome. And while it will still take many tries for the new chef to refine their skills, that process can be sped up if the new chef can see (and avoid) all the different mis-steps the head chef went through to come up with this fabulous entrée. This analogy applies equally well across studies, where the attempts are the empirical studies and the operation is the theory. We need to teach the field about that process as well. Many papers do not report all of the attempted tests that were failures. It takes away valuable information.

This is where the onus comes back to us as management scholars. Getting away from the obsession with A publications is not the dean's problem, and it is not the

Concluding remarks

Theory is essentially just a fancy word for “Do we understand what's going on?” It is why journals demand, reviewers evaluate, and publication depends on how an investigation relates to theory. Theory is critical because it provides the basis for understanding whatever it is we are trying to manage (i.e., improve), understand (i.e., study), or govern. A good theory yields improved understanding by offering the most accurate explanation given our knowledge at the time, and does not merely predict what will happen but how and why something happens. A unit theory explains the causal relation between specific concepts and a programmatic theory addresses what is known more broadly about a phenomenon. To be good, both need to be parsimonious and coherent. Feeling like there is too much and too many theories suggests a common underlying problem: Unit theories that are by themselves complicated and convoluted (i.e., lack of parsimony), and collectively yield programmatic theory that is disjointed or contradictory. Although we argue for the criticality of good theory, theory is not always needed. When a situation is well managed and well understood, a new theory may not be needed. If a theory does not offer divergent predictions in terms of either accuracy or the causal mechanism, it may not be necessary. The value of a new the theory rests on its predictions - the more accurate they are, the better the theory. Good theory is created through the process of falsification: we eliminate plausible alternative explanations to reveal what remains, which is accepted as the best explanation. Theory is a fence around the truth, and every time we collect more data, the fence gets smaller and closer to the truth. We can never actually get to that truth, but over time falsification brings us closer. We should remember that falsification is not the same as failed confirmation; the latter often does not enlighten what is wrong with the theory. We also need to remember that scientific knowledge emerges from the confluence of theory and empirical work across many studies, not from within a single study (regardless of which journal published it). We are facing a challenging “An A is an A” phenomenon, meaning that there is an almost exclusive emphasis on articles in A journal publications, and other publications rarely count (e.g., textbooks, articles in other journals, book chapters). Getting out of this situation means making the publication process more compatible with theory advancement, and this will require the involvement of multiple stakeholders: researchers, journal editors and reviewers, university administrators, and external stakeholders including alumni and donors.

In closing, theories are critical for anyone seeking to explain, predict, and influence what happens in organizations. So, theory is really what matters the most to both knowledge creators and knowledge users. Yes,

Footnotes

Author Note

Both authors contributed equally. We thank

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship and/or publication of this article.

Author biographies

![]() ” www.hermanaguinis.com

” www.hermanaguinis.com