Abstract

Workplace-based assessment (WBA) integrates assessment with clinical practice and ensures that healthcare professionals meet required competency standards. This essay discusses WBA's key concepts, such as competency, standards, principles of assessment, rules of evidence, and entrustable professional activities (EPAs). It highlights the importance of aligning assessments with learning objectives and setting clear standards to enhance healthcare education and patient care.

Introduction

Workplace-based assessment (WBA) plays a pivotal role in healthcare education by bridging the gap between learning and clinical practice. It ensures that healthcare professionals meet established competency standards by systematically evaluating learners’ performance in clinical settings. Central to WBA is competency, which encompasses observable abilities related to knowledge, skills, values, and attitudes. Competency frameworks, such as the one developed by the Accreditation Council for Graduate Medical Education (ACGME), structure these evaluations by grouping competencies into domains such as medical knowledge and patient care. Competencies from these domains are, in turn, integrated into entrustable professional activities (EPAs): units of professional practice that a trainee can be entrusted to perform once the relevant competencies have been demonstrated.

The effectiveness of WBA relies on adherence to established standards, principles of assessment, and rules of evidence. A thorough understanding of these foundational concepts is essential for its successful implementation. This commentary explores these key elements through the lens of EPAs, illustrating their role in the WBA process.

Competency and entrustable professional activities (EPAs)

Table 1. Examples of EPAs.

Integrating EPAs into WBA ensures that assessments are competency-based, contextually relevant, and reflect actual clinical practice. EPAs provide a clear framework for trainees to demonstrate their readiness to undertake professional responsibilities independently, which is critical for ensuring patient safety and effective healthcare delivery.

Standards

Standards are authoritative statements against which the quality of practice, service, or education is judged. They provide benchmarks for evaluating performance and ensuring consistent, high-quality care. Standards can be set by professional organizations, licensing bodies, institutions, or expert panels and should be based on agreed-upon levels of performance considered adequate for specific purposes. 3 Such standards help ensure that assessments are fair, valid, and aligned with the competencies required for professional practice. For instance, the Singapore Nursing Board (SNB) defines the core competencies required of nurses under four main domains: Legal and Ethical Nursing Practice, Professional Nursing Practice, Collaborative Practice and Teamwork, and Continuing Professional Education and Development.

To illustrate, consider EPA 1 from Table 1, “Conducting Patient Assessments.” This EPA is mapped to expected entrustment levels throughout residency training. For instance, Year 1 residents (R1) are expected to perform this task under direct supervision (Level 2), which represents the minimum acceptable standard for first-year residents. Linking standards to entrustment levels in this way makes explicit that junior residents are expected to perform the EPA safely with supervision, not yet independently.

Standard setting defines the level of achievement or proficiency required for competency and ensures that performance benchmarks are appropriate, achievable, and reflective of current practice. The process involves deciding on the type of standard (minimal, aspirational, or developmental); choosing a standard-setting method, such as the Angoff method or the Borderline Group method; selecting judges and assessors with relevant expertise; and engaging stakeholders, including learners, educators, professional bodies, and policymakers, so that the resulting benchmarks are clear and agreed upon. 3
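The two standard-setting methods named above reduce to simple arithmetic once judgements have been collected. The sketch below assumes a modified Angoff design, in which each judge estimates for every checklist item the probability that a borderline (minimally competent) candidate would perform it correctly, and a Borderline Group design, in which examiners give each candidate both a checklist score and a global rating. The function names, the "borderline" label, and the example data are hypothetical.

```python
from statistics import mean


def angoff_cut_score(ratings: list[list[float]]) -> float:
    """Modified Angoff cut score (hypothetical helper).

    ratings[j][i] is judge j's estimate (0 to 1) that a borderline
    candidate would perform checklist item i correctly. The cut score
    is the sum over items of the mean judge estimate, in item units.
    """
    if not ratings or not ratings[0]:
        raise ValueError("need at least one judge and one item")
    n_items = len(ratings[0])
    if any(len(judge) != n_items for judge in ratings):
        raise ValueError("every judge must rate every item")
    # Average the judges' estimates for each item, then add the items up.
    item_means = [mean(judge[i] for judge in ratings) for i in range(n_items)]
    return sum(item_means)


def borderline_group_cut_score(scores, global_ratings, borderline_label="borderline"):
    """Borderline Group cut score (hypothetical helper).

    The cut score is the mean checklist score of the candidates whom
    examiners rated as borderline on the global rating scale.
    """
    borderline = [s for s, g in zip(scores, global_ratings) if g == borderline_label]
    if not borderline:
        raise ValueError("no candidates were rated borderline")
    return mean(borderline)


if __name__ == "__main__":
    # Hypothetical data: three judges rating four checklist items.
    judges = [
        [0.8, 0.6, 0.7, 0.5],
        [0.7, 0.5, 0.8, 0.6],
        [0.9, 0.7, 0.6, 0.4],
    ]
    print("Angoff cut score:", round(angoff_cut_score(judges), 2))

    # Hypothetical data: five candidates' checklist scores and global ratings.
    scores = [14, 11, 9, 12, 10]
    globals_ = ["pass", "borderline", "fail", "borderline", "borderline"]
    print("Borderline Group cut score:", borderline_group_cut_score(scores, globals_))
```

In this example the Angoff cut score comes to about 2.6 of 4 items, and the three candidates rated borderline scored 11, 12, and 10, giving a Borderline Group cut score of 11. Some programmes use the median of the borderline group instead of the mean; swapping `mean` for `statistics.median` makes that change.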

Principles of assessment

Effective assessment in healthcare relies on key principles: validity, reliability, fairness, and flexibility. 4

Validity refers to the degree to which an assessment measures what it is intended to measure. It ensures that assessment activities directly address the abilities and knowledge required in practice. 5 Validity includes the assessment method’s suitability and the relevance and accuracy of the evidence collected. 6 Ensuring validity involves aligning assessment methods with learning outcomes and competencies, and using assessment tools that accurately reflect clinical scenarios. For example, EPA 3 “Performing Clinical Procedures” (Table 1) can be assessed through direct observation, using procedural checklists to ensure all necessary steps are followed.2,7

Reliability is the consistency and reproducibility of assessment outcomes. It ensures that assessments yield stable results across different assessors and contexts. This requires standardized assessment criteria and thorough training for assessors to interpret evidence consistently. Reliability can be enhanced through clear rubrics, inter-rater reliability checks, and repeated measures. 3
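Inter-rater reliability checks are often summarized with Cohen's kappa, which corrects the raw proportion of agreement between two assessors (p_o) for the agreement expected by chance (p_e): kappa = (p_o - p_e) / (1 - p_e). The sketch below assumes two assessors independently assign entrustment levels to the same trainees; the function name and example ratings are hypothetical. Because entrustment levels are ordinal, a weighted kappa may be more appropriate in practice; this unweighted version treats every disagreement equally.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Unweighted Cohen's kappa for two raters (hypothetical helper).

    rater_a[k] and rater_b[k] are the categories (e.g. entrustment
    levels) that each assessor assigned to the same trainee k.
    """
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("ratings must be non-empty and paired")
    n = len(rater_a)
    # Observed agreement: share of trainees given the same category.
    p_o = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: product of each rater's marginal proportions,
    # summed over categories.
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    p_e = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)
    if p_e == 1:
        # Both raters used one identical category throughout; kappa is
        # undefined, so return 1.0 by convention for perfect agreement.
        return 1.0
    return (p_o - p_e) / (1 - p_e)


if __name__ == "__main__":
    # Hypothetical entrustment levels given by two assessors to ten trainees.
    assessor_1 = [2, 2, 3, 3, 3, 4, 4, 2, 3, 4]
    assessor_2 = [2, 3, 3, 3, 3, 4, 3, 2, 3, 4]
    print("kappa:", round(cohens_kappa(assessor_1, assessor_2), 3))
```

In this example the assessors agree on 8 of 10 trainees (p_o = 0.80), chance agreement is 0.36, and kappa is about 0.69, noticeably lower than the raw agreement rate.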

Fairness in assessment means that it is equitable to all individuals, unbiased, and non-discriminatory. 8 Assessment outcomes should not be influenced by factors such as race or gender. Fair assessments use a variety of methods to accommodate diverse learner characteristics and learning approaches. 9 Strategies to promote fairness include blind marking and the use of multiple assessors. For EPA 4, “Communicating with Patients and Families,” fairness can be supported by using standardized patient encounters that present the same scenarios to all trainees. 10

Flexibility in assessment allows for variations in assessment contexts and methods. 11 Flexible assessments are adaptable to different delivery modes and accommodate different needs without compromising the validity and reliability of the assessment. 9 This approach includes assessments in multiple formats, such as written exams, oral presentations, and practical demonstrations. For example, in assessing EPA 5, “Collaborating with Interprofessional Teams” (Table 1), flexibility is demonstrated through teamwork simulations, virtual team meetings, and reflective practice documentation. 2

Rules of evidence

The rules of evidence ensure that the evidence collected is authentic, valid, sufficient, current, and consistent.12,13 Authentic evidence must be the individual’s own work and can be gathered through direct observation, practical demonstrations, and real patient interactions. Valid evidence should cover the breadth of knowledge and skills relevant to the standards. Sufficient evidence means that enough evidence has been collected to demonstrate the individual’s competence; this usually involves gathering multiple pieces of evidence to build a comprehensive picture of the learner’s competence. 13 Current evidence must reflect the individual’s present skills and knowledge. Consistent evidence should meet the specified standards and match the type of performance required.

In the context of EPAs in Table 1, consider EPA 2, “Developing and Implementing Patient Management Plans.” Authentic evidence includes a detailed patient management plan created by the trainee. To ensure the evidence is valid, the plan should address patient issues and integrate various aspects of care. Sufficient evidence involves multiple patient management plans developed over different clinical rotations. The plans should be current, reflecting the trainee’s most recent clinical experiences and knowledge. Consistency is achieved using a standardized template and criteria for evaluating each plan.

Types and forms of evidence

Types of evidence include direct evidence (collected through direct observation by the assessor), indirect evidence (obtained from sources such as written assignments or project work), and supplementary evidence (such as testimonials and 360-degree feedback, which are usually not collected directly by the assessor).13,14

In WBA, forms of evidence are categorized as product evidence (the results of the individual’s work), process evidence (how the individual performed the task, observable through direct actions), and knowledge evidence (information demonstrating the individual’s understanding and comprehension of a subject matter). 13

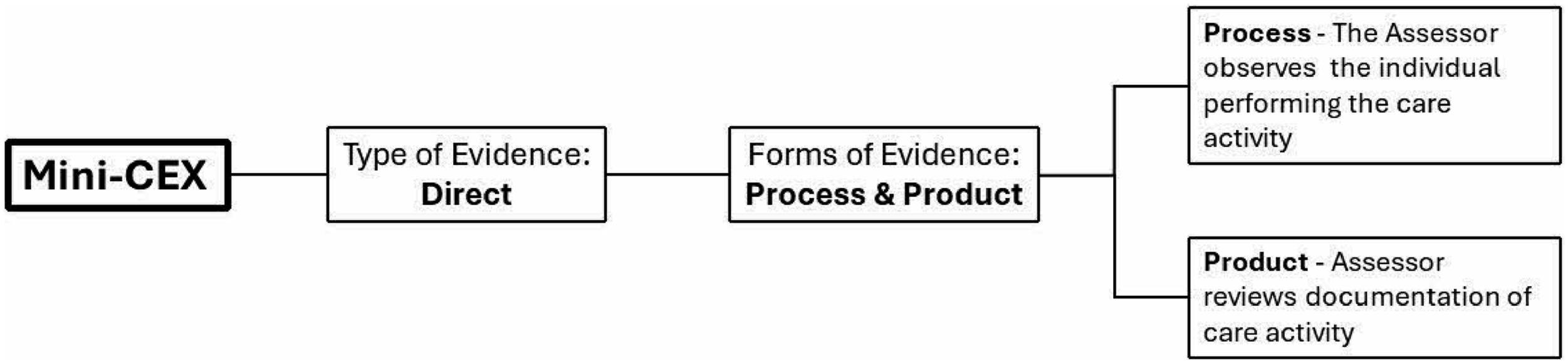

Together, these forms and types of evidence ensure a comprehensive assessment approach that captures different aspects of competency. Direct evidence is valuable in WBA as it provides immediate feedback on the learner’s performance in clinical settings. Indirect and supplementary evidence complements this by offering additional insights into the learner’s capabilities and behaviours. Figure 1 illustrates the mini Clinical Evaluation Exercise (mini-CEX) as an example of an assessment method, demonstrating how different types and forms of WBA evidence are used in healthcare. It emphasizes direct observation of clinical activities and the evaluation of clinical documentation. Using mini clinical evaluation exercise (mini-CEX) to illustrate the types and forms of evidence.

Aligning assessment with learning objectives

Aligning assessments with learning objectives ensures that the assessments accurately measure what learners are expected to achieve. This alignment is critical for providing meaningful feedback to learners, guiding their learning, and helping educators meet educational goals. In WBA, alignment is supported by writing learning objectives that meet the SMART criteria: specific, measurable, achievable, relevant, and time-bound. Such objectives not only clarify the purpose of the course but also guide the development of course materials and establish mutual accountability between learners and educators. 15

Learning objectives should be communicated to learners at the start of the educational process and revisited throughout to maintain focus on the intended outcomes. For instance, a SMART learning objective for EPA 1 (as shown in Table 1) could be: “Students will demonstrate the ability to independently conduct comprehensive patient assessments, including thorough history taking and a complete physical examination, for at least three patients.” This objective can be assessed through a checklist during direct observation in an Objective Structured Clinical Examination (OSCE) or clinical setting. It ensures that students’ performance is evaluated based on specific conditions and quality standards, in alignment with WBA competencies.

Conclusions

Workplace-based assessment is a cornerstone of healthcare education and is essential for ensuring that healthcare professionals meet the required competencies. By adhering to the principles of assessment and rules of evidence, aligning assessments with clear learning objectives and standards, and incorporating EPAs, WBA can effectively enhance learning and professional development in healthcare.

Footnotes

Acknowledgements

We thank all the participants in the AMEI workshop on Workplace-Based Assessment conducted by the authors.

Author contributions

CD, LSC and CY developed and conducted an AMEI workshop on this topic. Based on the workshop and the participants’ feedback, all three authors wrote the first draft of the manuscript, reviewed and edited it, and approved the final version.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.