Abstract

Background and Objectives

The purpose of this study is to examine differences in image quality, discrepancy rates, productivity and user experience between remote reporting over Virtual Application (VA) using visually calibrated monitors, and reporting using diagnostic grade workstations in hospital premises.

Methods

Three specialist accredited radiologists examined and provisionally reported outpatient CT and MR studies over PACS delivered as a VA, using visually calibrated monitors from their homes. They then proceeded to view the same studies within hospital premises and issue a final report. Surveys were filled out for each imaging study. Discrepancies were reviewed and assigned RADPEER scores.

Results

A total of 51 outpatient CT and MRI studies were read. Relative to hospital premise reporting, on a five-point Likert scale (the higher the better), average image quality was 3.9, speed of loading and image manipulation was 4.4 and productivity was 4.1. Remote reporting user experience did not differ significantly between CT and MRI studies. Complete concordance rate was 80.4% (41/51) and only one of the studies had a significant discrepancy, which may have been due to extra time given to interpretation. All three radiologists reported factors influencing image display and quality as the top factor impacting remote reporting throughput.

Conclusions

Remote reporting over VA with visually calibrated monitors for CT and MR can be useful in periods of staffing difficulty to augment on-site radiologists, though attention must be paid to its limitations and policies defined by local leadership with reference to relevant national position statements.

Introduction

The COVID-19 pandemic has forced radiology departments worldwide to rethink their working practices to ensure business continuity whilst safeguarding staff well-being through social distancing and avoiding daily commutes by public transport. A natural solution has been to promote teleradiology. Implementation of remote reporting, however, has its challenges for organisations that have not previously been set up for it.

Secure network connectivity is required to protect patient data at endpoints and in transit. However, connectivity and mode of application delivery may not always preserve image quality. Specifically, Virtual Applications (VA) transmit data to the user in the form of bitmaps, and when they are used without graphic enhancement features this results in image degradation.

If a Bring Your Own Device (BYOD) strategy is chosen by the hospital, hardware may not be standardised in radiologists’ homes. Key hardware components include monitor specifications and quantity, personal computer (PC) Random Access Memory (RAM), Graphics Processing Units (GPU) and Central Processing Units (CPU). Commercial off-the-shelf monitors may also not be calibrated to the DICOM Grayscale Standard Display Function (GSDF), a calibration which typically requires a photometer and dedicated calibration software.

In our institution, we previously used Citrix Gateway (Citrix Systems, Inc.) to deliver RIS and PACS in the form of VA, and in most cases, radiologists use their own devices. Due to legacy hardware, Citrix High Definition eXperience (HDX) graphics enhancement could not be implemented. Given concerns regarding image quality, discrepancy rates, productivity and user experience, and a general paucity of data, we initiated a study to find out whether there are significant differences in these factors compared to reporting on-premise using diagnostic grade workstations and the hospital intranet.

Methods

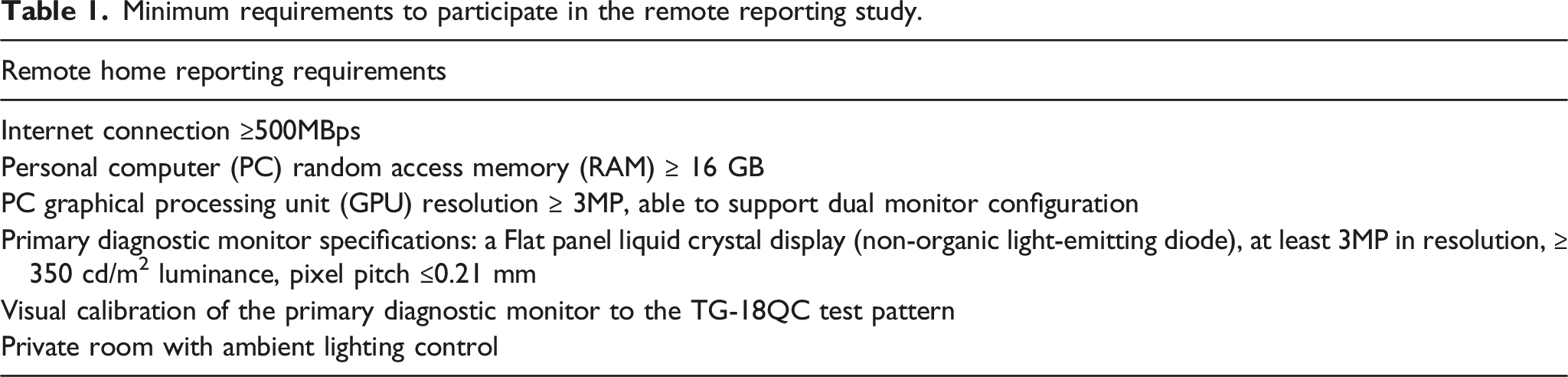

Minimum requirements to participate in the remote reporting study.

This was a within-subject task analysis involving CT and MRI studies whereby participants provisionally reported outpatient cross-sectional imaging from their homes over two sessions (1 day). RIS (Philips Vue RIS 11.2), PACS (Philips Vue PACS 12.2) and Electronic Medical Record (Sunrise Clinical Manager) were accessed over Citrix Gateway. Participants then issued a final report on the same studies within the hospital premises using diagnostic grade workstations over two additional sessions. It is important to note that all radiologists had remote access to the full patient electronic medical record as well as prior studies.

Following each study, the participants completed a user survey on their reporting experience using a five-point Likert scale. Questions surveyed included ease of remote reporting, speed of loading/image manipulation, image quality perception, differences in length of time to report between remote and in-premise reporting, and discrepancies between provisional and final reports. Survey forms were filled out using Google Forms. Data were presented as aggregate averages and per subspeciality radiologist. Statistical analysis was performed using the independent-samples t-test to test for differences in user experience between CT and MRI studies.
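As an illustration of the independent-samples t-test named above, the following minimal sketch computes a pooled-variance t statistic for two groups of Likert ratings. The ratings shown are hypothetical and are not the study data, and the text does not state whether equal variances were assumed, so the pooled (Student) form used here is an assumption.

```python
from math import sqrt
from statistics import mean, variance


def student_t(a, b):
    """Return the pooled-variance t statistic and degrees of freedom
    for two independent samples a and b."""
    n1, n2 = len(a), len(b)
    dof = n1 + n2 - 2
    # Pooled estimate of the common variance (equal variances assumed).
    pooled = ((n1 - 1) * variance(a) + (n2 - 1) * variance(b)) / dof
    t = (mean(a) - mean(b)) / sqrt(pooled * (1 / n1 + 1 / n2))
    return t, dof


# Hypothetical Likert ratings for illustration only, NOT the study data.
ct_scores = [4, 5, 4, 3, 4]
mri_scores = [3, 4, 3, 4, 3, 4]

t_stat, dof = student_t(ct_scores, mri_scores)

# Two-sided critical value of Student's t at alpha = 0.05 for 9 degrees
# of freedom (standard table value).
T_CRIT_DOF9 = 2.262
significant = abs(t_stat) > T_CRIT_DOF9
print(f"t = {t_stat:.3f}, dof = {dof}, significant at 0.05: {significant}")
```

With real survey data, a statistics package would normally also report the exact p-value; the table lookup here only keeps the sketch dependency-free.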

A single independent associate consultant radiologist then reviewed both the provisional and final reports of studies flagged as discrepant. RADPEER scores were assigned and tabulated. 2 A score of one indicates concordance, a score of two indicates a discrepancy in interpretation where the diagnosis is not ordinarily expected to be made (understandable miss), and a score of three indicates a discrepancy in interpretation where the diagnosis should be made most of the time. Scores 2 and 3 are further assigned A if the discrepancy is clinically significant or B if the discrepancy is without clinical significance.

Results

A total of 51 outpatient cross-sectional studies were reported, 16 CT and 35 MRI, of which 20 were Body, 22 were Musculoskeletal and 9 were Neuroradiology cases.

Survey of user experience with remote home reporting. Likert Scale: 5, very good; 4, good; 3, acceptable; 2, poor; 1, very poor. Neuro Rad: Neuroradiologist, MSK Rad: Musculoskeletal Radiologist, Body Rad: Body Radiologist.

CT versus MR remote user reporting experience. Data based on Likert scale with no significant difference detected at the p = 0.05 level between the two modalities. Error bars reflect 95% confidence intervals.

For body studies, there were 12 CT and 8 MRI studies, with the two most common diagnoses being no tumour recurrence (n = 4) and diverticulosis (n = 3). For musculoskeletal studies, there were 2 CT and 20 MRI studies, with the two most common diagnoses being normal (n = 7) and meniscus tear (n = 3). For neuroradiology, there were 2 CT and 7 MRI studies, and the most common diagnosis was normal (n = 5).
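The case mix reported above can be cross-checked with a short tally. This is a minimal sketch using only the counts stated in this section; the variable names are illustrative.

```python
# Study counts per subspeciality and modality, as stated in the Results.
case_mix = {
    "Body": {"CT": 12, "MRI": 8},
    "Musculoskeletal": {"CT": 2, "MRI": 20},
    "Neuroradiology": {"CT": 2, "MRI": 7},
}

# Totals per subspeciality (row sums).
per_subspecialty = {name: sum(counts.values()) for name, counts in case_mix.items()}

# Totals per modality (column sums).
per_modality = {
    modality: sum(counts[modality] for counts in case_mix.values())
    for modality in ("CT", "MRI")
}

total = sum(per_subspecialty.values())

print(per_subspecialty)
print(per_modality)
print(total)
```

The row sums, column sums and grand total agree with the 20 Body, 22 Musculoskeletal and 9 Neuroradiology cases, and the 16 CT and 35 MRI studies, reported for the 51 studies overall.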

List of discrepancies between provisional (remote) versus final reports (in-hospital premise). Category C: Change in interpretation in final report. Category A: Additional finding in final report.

Concordance between provisional reports (remote reporting over Virtual Application and using visually calibrated monitors) vs. final reports (in-hospital premise).

Feedback was sought regarding the factors impacting remote reporting throughput. All three radiologists reported factors influencing image display and quality as the top factor. Speed of applications, voice recognition and lack of on-site technical support were cited as the second biggest factors.

Discussion

Most comparative studies examine concordance for remote reporting with study designs not specifically examining implications of image delivery over VA and viewing using visually calibrated monitors. To the best of our knowledge, our study is the first specifically examining remote reporting under these parameters. 3

Significant resources and agility are required to implement measures to preserve the image quality presented to the radiologist. It may not be possible to institute these measures quickly, for example, VA graphic enhancements, network connectivity such as a Secure Socket Layer Virtual Private Network (SSL VPN) or deploying diagnostic grade workstations to radiologists’ homes. The paucity of major discrepancies bolsters confidence in reporting over VA with the necessary hardware requirements, though the presence of discrepancies does support the addition of a reporting caveat as recommended by the Royal College of Radiologists. 4 Nonetheless, the major discrepancy rate of 1.96% (1/51) in our study does not exceed published radiology reporting discrepancy rates of approximately 3–5%. 5 Radiologists who are not confident to issue a final report are encouraged to return to the hospital premises to do so.
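The rates quoted above follow directly from the study counts. The sketch below reproduces that arithmetic and, as an addition that is not part of the original analysis, computes a Wilson score interval to show how much uncertainty a single event in 51 studies carries. The choice of the Wilson method and of z = 1.96 (approximately 95% coverage) are assumptions made for illustration.

```python
from math import sqrt


def rate_percent(events, n):
    """Proportion of events among n studies, expressed as a percentage."""
    return 100 * events / n


def wilson_interval(events, n, z=1.96):
    """Wilson score interval for a binomial proportion.
    z = 1.96 corresponds to roughly 95% coverage (assumed here)."""
    p = events / n
    denom = 1 + z**2 / n
    centre = (p + z**2 / (2 * n)) / denom
    half_width = z * sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return centre - half_width, centre + half_width


N_STUDIES = 51       # total studies reported
N_CONCORDANT = 41    # completely concordant reports
N_MAJOR = 1          # major discrepancies

concordance = rate_percent(N_CONCORDANT, N_STUDIES)
major_rate = rate_percent(N_MAJOR, N_STUDIES)
low, high = wilson_interval(N_MAJOR, N_STUDIES)

print(f"Complete concordance: {concordance:.1f}%")
print(f"Major discrepancy rate: {major_rate:.2f}%")
print(f"Wilson interval for major discrepancy: {100 * low:.1f}% to {100 * high:.1f}%")
```

An interval of this kind is wide for small samples, which is consistent with the sample-size limitation acknowledged later in the Discussion.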

Remote reporting productivity was not on par with hospital premise reporting. This is not in keeping with two other studies, one by Quraishi et al. 6 and another by Dick et al. 7 The study by Quraishi et al. demonstrated that the majority of respondents to their survey across the United States of America reported no change or improved turnaround time, though it did not reveal what mode of network connectivity and hardware the respondents used. The study by Dick et al. of a single radiologist using VMWare’s VA and monitors calibrated to the DICOM GSDF standard showed 140% productivity in a 4-week period. In our study, our three radiologists were specifically briefed about the limitations of VA in image quality, which may have led to increased caution and scrutiny of images. Primary diagnostic monitors were not calibrated to DICOM GSDF in our study, which may lower reading efficiency and confidence. Hardware was also not completely standardised, though minimum requirements were met, which could lead to heterogeneity in results. These factors limit direct comparison and may also account for differences in productivity.

Interestingly, application speed was not the foremost reason cited by our participants for impact on productivity. This could have been due to an improvement in the capacity of the country’s Internet Service Providers since the national push for remote working. Instead, image display and image quality were the top reasons. Image quality is discussed above. Pertaining to image display, our findings corroborate a study showing that dedicated medical displays improve reading efficiency relative to commercial off-the-shelf monitors. 8 The authors recommend that radiologists considering working from home on a frequent/permanent basis use a dedicated medical display. This not only guarantees resolution, but also assures high luminance, uniformity, regular calibration to the DICOM GSDF standard and longevity.

On a side note, tabulated templated reporting can be implemented to foster productivity gains. The radiologist who used macOS was not handicapped by the lack of voice recognition as he could touch-type and also used tabulated templated reporting.

Technical support can be difficult to render remotely. Despite our best efforts to send detailed instructions on remote reporting and visual calibration and to make our imaging informatics team available over phone and messaging, participants still reported that remote support was suboptimal. The situation is akin to the inability of clinicians to perform a physical examination over telemedicine. For instance, our neuroradiologist had a sudden inability to receive a video signal on his primary diagnostic monitor, which he eventually had to bring to hospital for our imaging informatics team to troubleshoot. Failure of the DisplayPort cable was identified, but this had already resulted in the productivity loss of a reporting session. Departments embarking on remote reporting need to factor into their planning the potential productivity losses if a radiologist encounters severe technical difficulty.

There are several limitations to this study. Firstly, this is a small study covering 51 studies and only three participants, due to manpower constraints. Secondly, it only involved outpatients, as the strict turnaround time for inpatient and emergency studies was not compatible with our study design. Thirdly, it only examined cross-sectional imaging and did not involve X-rays, due to the higher resolution required to view X-rays. Fourthly, there was a skew towards MRI studies compared to CT, as the musculoskeletal and neuroradiology subspecialities inherently have a greater number of MRI studies. We note, however, in our analysis that user experience did not differ significantly between CT and MRI. Fifthly, only general subspeciality work was involved, not studies requiring 3D applications such as CT colonography, as our 3D applications were not included in our VA suite. Lastly, the single major discrepancy may not have been due to the technical limitations of the remote reporting setup, but rather to the extra time given to reporting, which led to a change in the final interpretation of an abnormality that had already been detected in the provisional report. The authors believe that larger studies addressing these limitations are needed.

The value proposition of remote reporting is apparent. It is a natural form of social distancing, promoting operational resilience during pandemics. It is a win-win for departments and radiologists when seeking to extend services whilst maintaining a form of work-life balance. It may also be used as a way for organisations to address hospital physical space shortages due to the large footprint diagnostic workstations occupy. It is against this backdrop that we concur with international recommendations that reporting over VA and visually calibrated monitors can be used during periods of staffing difficulty, 9 but should be limited to cross-sectional studies and as a means to augment a core group of on-site radiologists.

In conclusion, our study comparing remote reporting over VA using visually calibrated monitors with reporting using diagnostic grade workstations in hospital premises showed limitations in image quality and productivity, with a complete concordance rate of 80.4% and only one major discrepancy out of 51 studies reported. We note that there was no significant difference in user experience between CT and MRI reporting. In times of urgent need, remote reporting of CT and MR studies over VA using visually calibrated monitors is a viable option to augment a core group of on-location radiologists. Policies for remote reporting should be defined by local leadership and guided by relevant national position statements/regulations. 10

Footnotes

Acknowledgements

The authors would like to thank our institution’s imaging informatics team led by Mr Danny Fooh Choon Hock, especially Mr James Cheong Chee Hong, Mr Francis Tan Ser Yew, Mr Andik Irwan Amirullah Bin Mohd Jelani, and Ms Dian Liyana Moezar. We are grateful to our Integrated Health Information Systems colleagues, particularly Mr Peh Cheng Lam, Mr Chai Chun Wei, Ms Lei Yanli, Mr Wong Yoon Wah, Ms Tan-Leong Woon Lan and Mr Pravin Concessio. We are thankful for the support rendered by our Head of Department, Dr Lai Peng Chan.

Author Contributions

Guarantor of integrity of the entire study- Yusheng Keefe Lai

Study concepts and design- Yusheng Keefe Lai, Png Meng Ai

Literature research- Yusheng Keefe Lai, Benjamin Jyhhan Kuo

Clinical studies- NA

Experimental studies/data analysis- NA

Statistical analysis- Benjamin Jyhhan Kuo

Manuscript preparation- Yusheng Keefe Lai, Benjamin Jyhhan Kuo, Kheng Choon Lim, Chee Yeong Lim, Albert Su Chong Low, Meng Ai Png

Manuscript editing- Yusheng Keefe Lai, Benjamin Jyhhan Kuo, Meng Ai Png.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Data availability

All data and material were available to all authors, and are available to reviewers upon request. The authors declare that they had full access to all of the data in this study and the authors take complete responsibility for the integrity of the data and the accuracy of the data analysis.