Abstract

“You must stay at home!” This is how the UK Prime Minister announced lockdown in March 2020. Many countries used similarly assertive messages. Research, however, suggests that authoritative language can backfire by inciting psychological reactance (i.e., feelings of anger arising from threats to one’s autonomy). In a series of three studies, we therefore tested whether commanding versus control and noncommanding messages influence several cognitive and affective indicators of reactance, intentions to comply with COVID-19 recommendations, and compliance behavior itself. Although people found commanding messages threatening and felt angry and negative toward them, these messages affected only intentions, and there was no evidence of behavioral reactance. Overall, our research constitutes the most comprehensive examination to date of cognitive–affective and behavioral indicators of reactance to commands and offers new insights into both reactance theory and COVID-19 communication.

On March 23, 2020, UK Prime Minister Boris Johnson exclaimed “You must stay at home!” to announce lockdown (British Broadcasting Corporation, 2020). Such authoritative language may seem necessary to convey the seriousness of the situation and to convince people to comply with governmental recommendations. However, research indicates that assertive messages can negatively affect behavior by evoking psychological reactance (J. W. Brehm, 1966; Rosenberg & Siegel, 2018). Experts have warned that reactance may in fact be the main threat to compliance with social distancing measures, rather than the widely publicized and critiqued behavioral fatigue (Sibony, 2020). There has not, however, been any empirical investigation of whether the type of messages that governments have used to enforce lockdown can backfire. In the present research, we therefore investigated how commanding messages affect compliance with COVID-19 behavioral recommendations. Researchers have not examined whether messages aimed at enhancing compliance might influence other activities not directly relevant to COVID-19, such as leisure. Psychological reactance is also known to evoke emotional mechanisms that shape various behaviors (Rosenberg & Siegel, 2018). We therefore also explored potential “spillover” and “spillunder” effects of the messages (Dolan & Galizzi, 2015; Krpan et al., 2019). Because these analyses generally yielded null effects, the corresponding variables and results are presented in Supplementary Materials (pp. 22–31, 79–88). We next review previous research on reactance theory to develop our hypotheses.

Psychological Reactance

Psychological reactance theory posits that, if people’s freedom of action has been undermined, a motivational state of reactance marked by anger will be activated, thus prompting them to restore their freedom by undertaking the forbidden or discouraged behaviors (Miron & Brehm, 2006). The main assumption of the theory is that reactance effects occur when a behavior that a person can typically freely undertake, such as going out, is suddenly restricted: for example, by telling them they must stay at home (S. S. Brehm & Brehm, 2013).

Crucially, psychological reactance depends on how the restriction on behavior is communicated to people (Rosenberg & Siegel, 2018). This can be through language that is either commanding (e.g., “must”) or creates an impression of free choice (e.g., “may”). One of the most robust findings from the literature is that using commanding compared to noncommanding language instigates reactance (Rains, 2013; Rosenberg & Siegel, 2018). For example, commanding (vs. noncommanding) health messages were perceived as less persuasive and decreased people’s intention to undertake the targeted health behaviors (Miller et al., 2007; Quick & Considine, 2008). Based on the previous findings regarding the consequences of message language, we therefore predict the following:

It is also important to address the mechanisms behind the hypothesized effects of commands on COVID-19 compliance. In a meta-analysis involving 20 studies and 4,942 participants, Rains (2013) found that reactance is typically experienced as anger, and this emotional state contributes to its undesirable behavioral effects. We therefore predict the following:

Overview of the Present Research

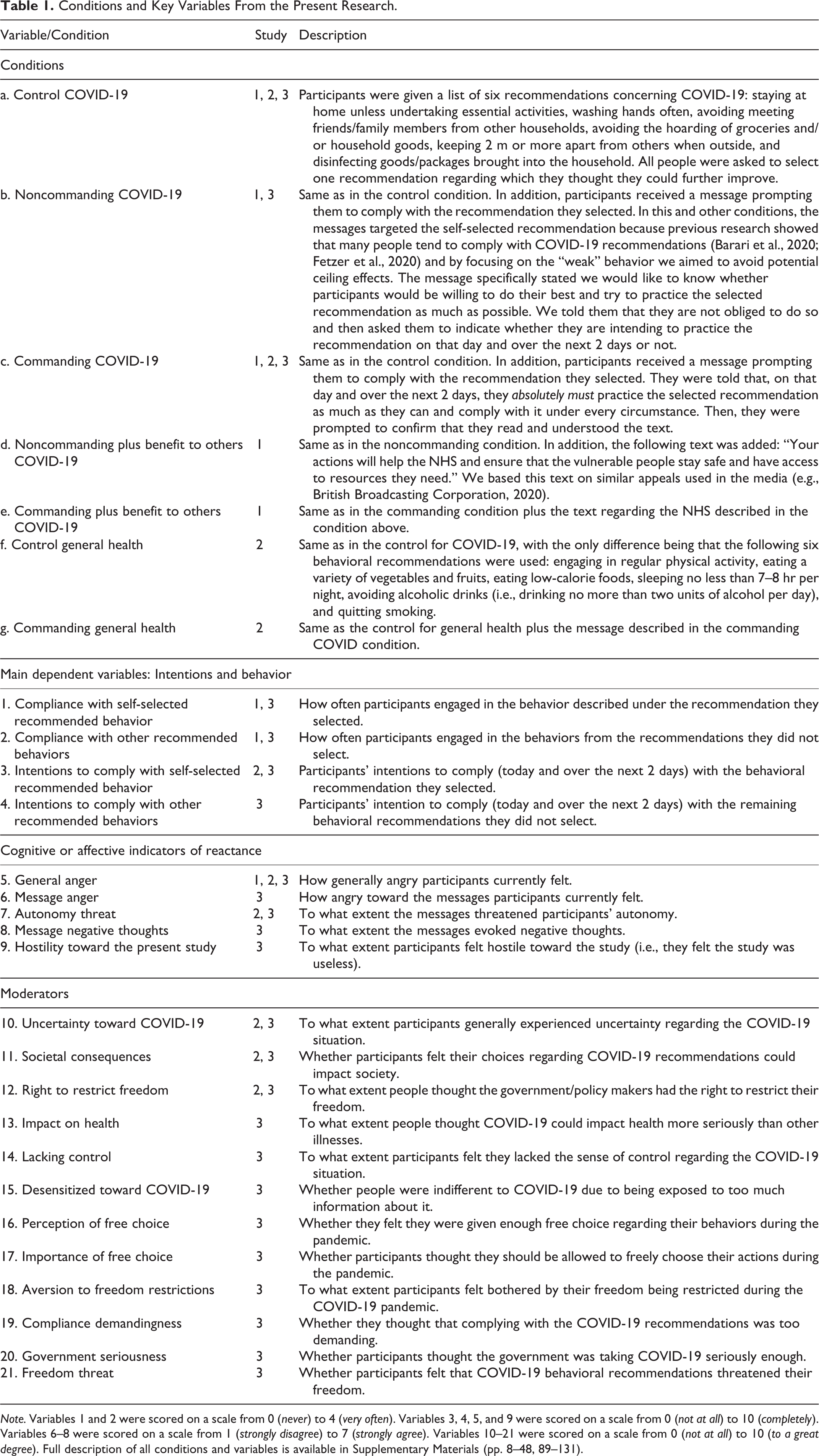

The first study we conducted to test the hypotheses generally yielded null effects. Study 1 is therefore reported in Supplementary Materials (SM; pp. 5–88). The main measures assessed in that study are outlined in Table 1 for reference. The table also summarizes the measures from Studies 2 and 3, the main studies presented in this article. These studies drew on the insights from Study 1 to gain a more nuanced understanding of when reactance to commanding (vs. control and noncommanding) messages might occur. We considered two main explanations for the failure to detect reactance in Study 1. One is that our measures were not sufficiently sensitive. For example, previous research captured reactance via intentions (Rosenberg & Siegel, 2018), whereas Study 1 focused on actual behaviors. A second possibility is that reactance does not occur in response to COVID-19 messages. In that case, it would be important to understand why, given that message-related reactance has been documented in other health domains (Miller et al., 2007).

Conditions and Key Variables From the Present Research.

Note. Variables 1 and 2 were scored on a scale from 0 (never) to 4 (very often). Variables 3, 4, 5, and 9 were scored on a scale from 0 (not at all) to 10 (completely). Variables 6–8 were scored on a scale from 1 (strongly disagree) to 7 (strongly agree). Variables 10–21 were scored on a scale from 0 (not at all) to 10 (to a great degree). A full description of all conditions and variables is available in Supplementary Materials (pp. 8–48, 89–131).

To address the first possibility, across Studies 2 and 3 we measured all the important indicators of reactance that we could identify in the literature (Table 1; Rosenberg & Siegel, 2018). In addition to assessing the main dependent variables that tap into behavior (actual compliance and intentions to comply; Table 1), we measured several cognitive and affective indicators of reactance. These included general anger, as in Study 1. They also included anger directed specifically toward the messages, negative thoughts experienced upon reading the messages, and autonomy threat (Dillard & Shen, 2005; Rosenberg & Siegel, 2018). Moreover, we assessed hostility toward the present study (Table 1), given that reactance can also manifest itself as hostility toward the source of threat (Nezlek & Brehm, 1975; Rains, 2013). In this case, the source of threat was the study in which participants took part.

To address the second possibility, we measured all relevant variables that, according to reactance theory, should determine the likelihood of reactance (S. S. Brehm & Brehm, 2013; Rains & Turner, 2007; Rosenberg & Siegel, 2018). These variables may therefore moderate the impact of commanding (vs. control or noncommanding) language on variables indicative of this phenomenon. Reactance should occur under the following conditions:

- acting freely is important to people (Variable 17, Table 1);
- they are averse to someone attempting to restrict their freedom (Variables 12 and 18, Table 1);
- they feel that their freedom is being threatened or eliminated (Variables 16 and 21, Table 1);
- the behaviors in question are too demanding (Variable 19, Table 1) or do not have serious (e.g., life-threatening) consequences (Variables 11, 13, and 20, Table 1);
- people feel that they have control over their actions (Variable 14, Table 1) or feel certain about the situation (Variable 10, Table 1).

We also measured whether people were desensitized to COVID-19 (Variable 15, Table 1). We reasoned that people might not experience reactance toward commanding language if they were generally exposed to too much COVID-related information in the media. Finally, in Study 2, we manipulated commanding versus control messages regarding general health, a domain in which reactance has been frequently documented (Rosenberg & Siegel, 2018). This allowed us to test whether the effects differed from those of COVID-19-related messages.

Overall, the general approach in Studies 2 and 3 was first to test whether the commanding (vs. control or noncommanding) condition would affect any of the behavioral or cognitive–affective indicators of reactance. In Study 2, we also probed whether the effects of COVID-19-related messages on these variables differed from the effects of messages regarding general health. For any significant effects of the commanding (vs. control or noncommanding) COVID-19 messages on intentions or behavior, we then tested the mediating role of the cognitive–affective variables. Next, we probed potential moderators of the impact of commanding (vs. control or noncommanding) COVID-19 conditions on reactance variables. Finally, we meta-analyzed any main effects of message language on dependent variables that were probed in more than one study.

Method

Participants

In Study 2, which had only one part, 1,719 of the 1,763 UK participants recruited passed the inclusion criteria and were included in analyses (male = 622; female = 1,091; other = 6; M age = 41.127; SD age = 13.105). There were therefore 427, 433, 433, and 426 participants in the health control, COVID-19 control, health commanding, and COVID-19 commanding conditions (Table 1), respectively. In Study 3, which had two parts, 2,112 UK participants were recruited for Part 1. Of these, 1,969 were included in analyses because they completed both parts and passed the inclusion criteria (male = 632; female = 1,331; other = 6; M age = 37.045; SD age = 12.879). There were therefore 662, 658, and 649 participants in the control, commanding, and noncommanding conditions (Table 1), respectively. In both studies, participants were included if they met three criteria:

- passed the seriousness checks at the end of the study (Aust et al., 2013);
- correctly answered the instructed response items (Meade & Craig, 2012);
- gave permission for us to use their data (SM, pp. 132–135).

For both studies, sample size was set to achieve high power (.90) to detect small effects (Cohen’s f² ≤ .02; Cohen, 1988). Detailed power analyses are available in SM (pp. 142–146). The data were collected via Prolific.co on June 22, 2020 (Study 2), and between September 29 and October 5, 2020 (Study 3).
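The power target above (.90 power for f² = .02) can be approximated with a short back-of-the-envelope calculation. The sketch below is not the authors' power analysis, which is reported in SM. It assumes a single 1-df contrast, a two-sided α = .05, a noncentrality parameter λ = f² × N (a common convention, used for example by G*Power), and a normal approximation to the noncentral F distribution. The function name `approx_total_n` is hypothetical.

```python
import math
from statistics import NormalDist

def approx_total_n(f2, alpha=0.05, power=0.90):
    """Approximate total N for a 1-df regression test of effect size f2.

    Assumes lambda = f2 * N and replaces the noncentral F(1, N - k - 1) test
    by a two-sided z-test (large-sample approximation). The result therefore
    tends to fall slightly below what exact power software reports.
    """
    std_normal = NormalDist()
    z_alpha = std_normal.inv_cdf(1 - alpha / 2)  # two-sided critical value
    z_power = std_normal.inv_cdf(power)          # quantile for the target power
    # Target power is reached when sqrt(lambda) = z_alpha + z_power,
    # i.e. N = (z_alpha + z_power)^2 / f2
    return math.ceil((z_alpha + z_power) ** 2 / f2)

# Small effect (f2 = .02), alpha = .05, power = .90, as targeted in the text
print(approx_total_n(0.02))
```

Several factors can raise the actual sample-size target well above this single-contrast figure: more predictors, multiple planned tests, or allowances for exclusions. The number is therefore not expected to match the recruited samples.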

Study Design, Procedure, and Measures

The study design involved one between-subjects variable (message language) with four conditions in Study 2 and three conditions in Study 3 (Table 1). The Part 1 procedure was similar in both studies. All participants first completed the consent form. We then measured two covariates, age and gender (male vs. female vs. other), given their links to compliance with COVID-19 recommendations (Galasso et al., 2020; Levkovich, 2020). Participants were then randomly allocated to one of the message language conditions and read the corresponding messages (see Table 1 and SM, pp. 89–93, 103–106). Next, they answered the questions measuring compliance intentions, cognitive–affective indicators of reactance, and the moderator variables (Table 1). Finally, at the end of Part 1, participants answered the seriousness check and indicated whether they allowed us to use their data.

In Study 3, which also had a Part 2, participants were contacted on the third day after completing Part 1. They first completed the consent form and then answered the questions measuring their compliance with behavioral recommendations (Table 1). At the end, they answered the seriousness check and indicated whether they allowed us to use their data. Study materials and all variables are detailed in SM (pp. 89–135) and available via the Open Science Framework (OSF; https://osf.io/a2jnb/).

Results

All analyses reported in this section were computed using linear regression models. The data and the analysis code that produced the results are available via OSF (https://osf.io/a2jnb/).

Influence of Messages on Reactance Variables and Comparison Between COVID-19 and General Health

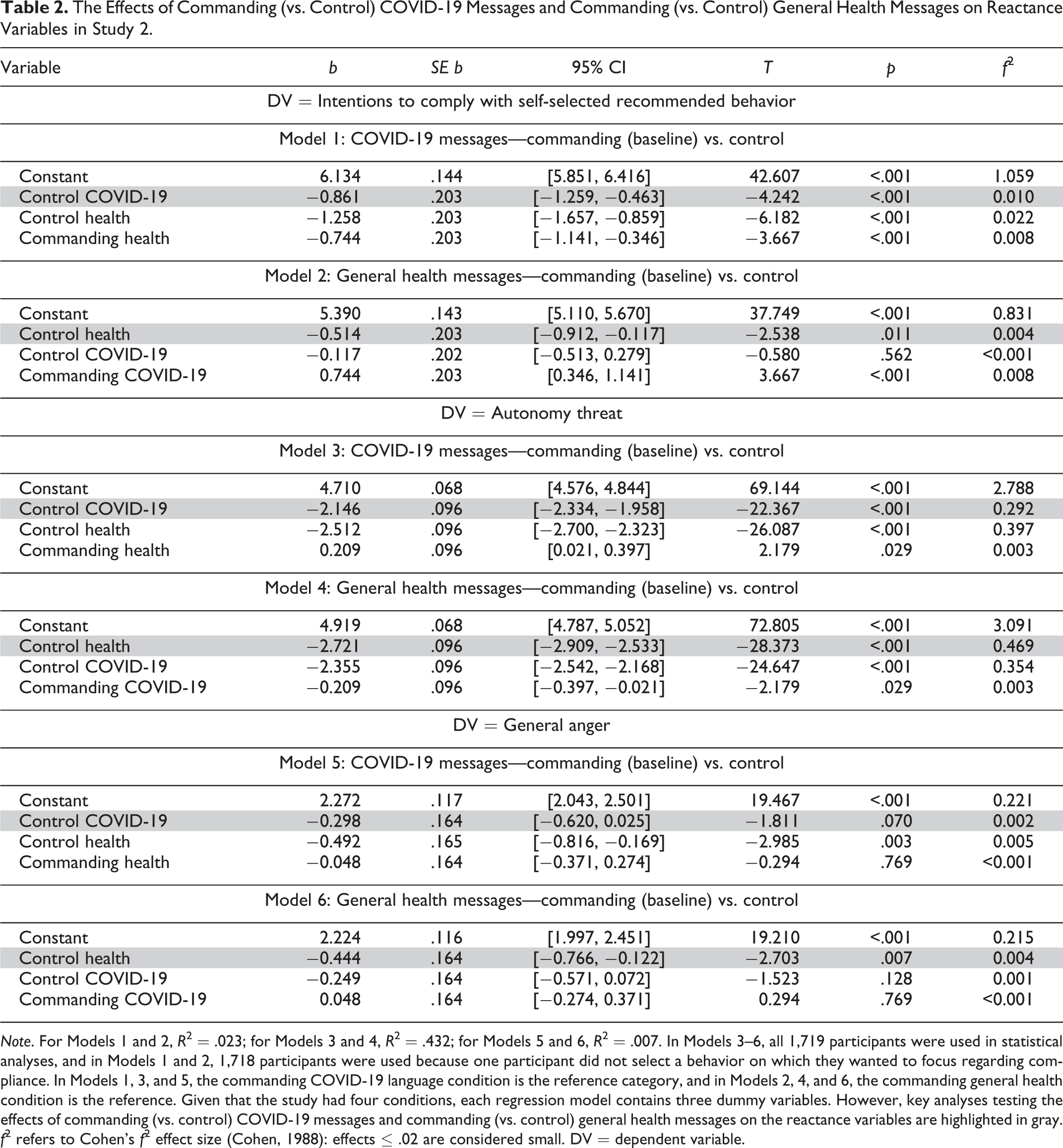

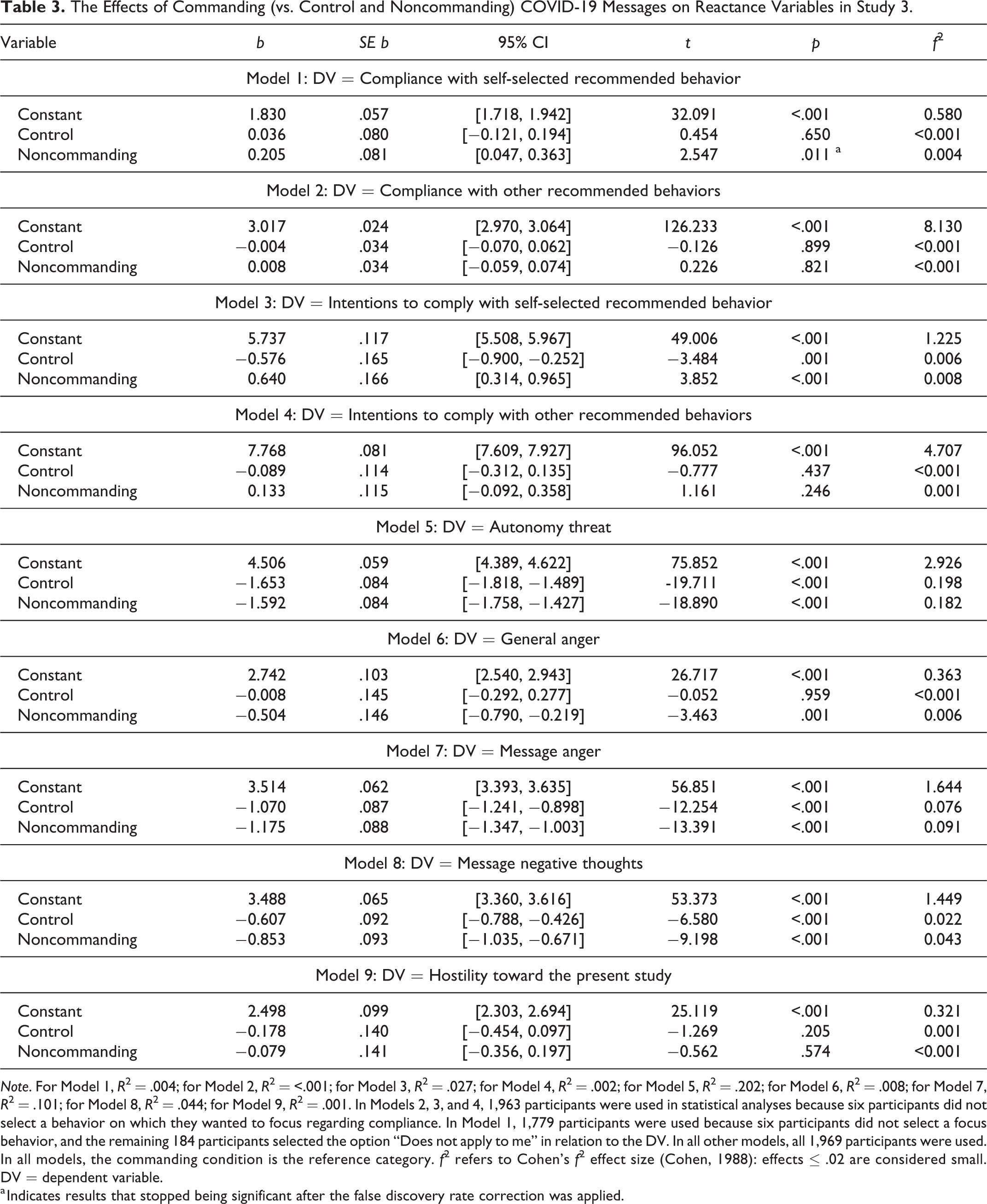

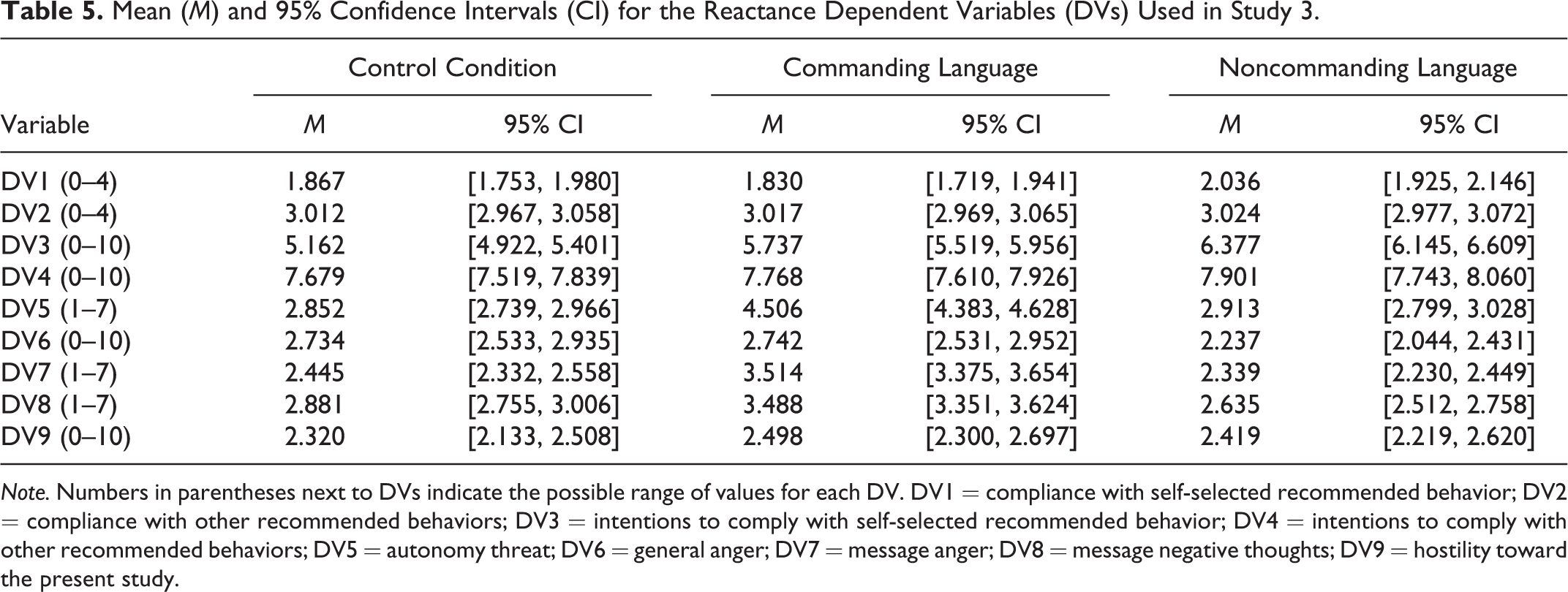

Regression models testing the impact of messages on reactance variables in Studies 2 and 3 are presented in Tables 2 and 3. The means and 95% CIs for the variables are reported in Tables 4 and 5. To reduce the risk of Type I errors, effects were deemed significant only if they survived the false discovery rate (FDR; Benjamini & Hochberg, 1995) correction (SM, pp. 142–146). Overall, the commanding condition influenced various cognitive–affective indicators of reactance compared to the other conditions. However, it lowered intentions, as reactance theory predicts, only relative to the noncommanding condition. It also did not change behavior, which is inconsistent with Hypothesis 1.
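The FDR correction mentioned above follows the Benjamini–Hochberg step-up procedure, which can be illustrated in a few lines. In this minimal sketch, the FDR level q = .05 is an assumption (the exact settings are in SM), the p-values are hypothetical, and `benjamini_hochberg` is an illustrative name.

```python
def benjamini_hochberg(p_values, q=0.05):
    """Benjamini–Hochberg step-up procedure.

    Returns a list of booleans (same order as p_values) marking which
    hypotheses are rejected while controlling the FDR at level q.
    """
    m = len(p_values)
    # Indices of the p-values, sorted from smallest to largest
    order = sorted(range(m), key=lambda i: p_values[i])
    # Largest rank k whose p-value satisfies p_(k) <= (k / m) * q
    k_max = 0
    for rank, idx in enumerate(order, start=1):
        if p_values[idx] <= rank / m * q:
            k_max = rank
    # Reject every hypothesis ranked at or below k_max
    rejected = [False] * m
    for rank, idx in enumerate(order, start=1):
        if rank <= k_max:
            rejected[idx] = True
    return rejected

# Hypothetical p-values: .039 and .041 pass an uncorrected .05 threshold
# but not the BH thresholds (.03 and .04 for ranks 3 and 4 of 5)
print(benjamini_hochberg([0.001, 0.008, 0.039, 0.041, 0.20]))
# prints [True, True, False, False, False]
```

Because the procedure is step-up, a p-value that misses its own rank threshold is still rejected if a larger p-value further down the ranking meets its threshold.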

The Effects of Commanding (vs. Control) COVID-19 Messages and Commanding (vs. Control) General Health Messages on Reactance Variables in Study 2.

Note. For Models 1 and 2, R² = .023; for Models 3 and 4, R² = .432; for Models 5 and 6, R² = .007. In Models 3–6, all 1,719 participants were used in statistical analyses. In Models 1 and 2, 1,718 participants were used because one participant did not select a behavior on which they wanted to focus regarding compliance. In Models 1, 3, and 5, the commanding COVID-19 condition is the reference category, and in Models 2, 4, and 6, the commanding general health condition is the reference category. Because the study had four conditions, each regression model contains three dummy variables. The key analyses testing the effects of commanding (vs. control) COVID-19 messages and commanding (vs. control) general health messages on the reactance variables are highlighted in gray. f² refers to Cohen’s f² effect size (Cohen, 1988): effects ≤ .02 are considered small. DV = dependent variable.
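The dummy coding described in this note can be sketched as follows. This sketch assumes treatment coding with one 0/1 column per non-reference condition and plain OLS via NumPy. The condition labels, scores, and function names are hypothetical toy values, not study data. With only dummy predictors, the intercept equals the reference-group mean, and each slope equals that group's mean minus the reference mean.

```python
import numpy as np

def dummy_code(conditions, reference):
    """Treatment (dummy) coding: one 0/1 column for every level except the reference."""
    levels = [lvl for lvl in dict.fromkeys(conditions) if lvl != reference]
    X = np.column_stack([[1.0 if c == lvl else 0.0 for c in conditions] for lvl in levels])
    return levels, X

def fit_ols(y, X):
    """Ordinary least squares with an intercept; returns [intercept, slopes...]."""
    design = np.column_stack([np.ones(len(y)), X])
    coef, *_ = np.linalg.lstsq(design, np.asarray(y, dtype=float), rcond=None)
    return coef

# Hypothetical toy data: two participants per condition, made-up labels and scores
conds = (["covid_commanding"] * 2 + ["covid_control"] * 2
         + ["health_commanding"] * 2 + ["health_control"] * 2)
y = [6, 8, 5, 5, 7, 7, 4, 6]
levels, X = dummy_code(conds, reference="covid_commanding")
coef = fit_ols(y, X)
# Intercept = reference-group mean; each slope = group mean minus reference mean
print(dict(zip(["intercept"] + levels, coef.round(3))))
```

Switching `reference` to `"health_commanding"` gives the Models 2, 4, and 6 parameterization. The fitted values are unchanged; only the contrasts reported by the coefficients change.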

The Effects of Commanding (vs. Control and Noncommanding) COVID-19 Messages on Reactance Variables in Study 3.

Note. For Model 1, R² = .004; for Model 2, R² < .001; for Model 3, R² = .027; for Model 4, R² = .002; for Model 5, R² = .202; for Model 6, R² = .008; for Model 7, R² = .101; for Model 8, R² = .044; for Model 9, R² = .001. In Models 2, 3, and 4, 1,963 participants were used in statistical analyses because six participants did not select a behavior on which they wanted to focus regarding compliance. In Model 1, 1,779 participants were used: six participants did not select a focus behavior, and a further 184 participants selected the option “Does not apply to me” in relation to the DV. In all other models, all 1,969 participants were used. In all models, the commanding condition is the reference category. f² refers to Cohen’s f² effect size (Cohen, 1988): effects ≤ .02 are considered small. DV = dependent variable.
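The table notes report Cohen's f². Two standard definitions are sketched below: a global f² for a whole model, and a local f² for the unique contribution of a predictor or predictor set. Which definition underlies the per-predictor values in Tables 2 and 3 is not stated here, so treat the mapping as an assumption. The function names are hypothetical.

```python
def cohens_f2_model(r2):
    """Global f^2 for a whole model: R^2 / (1 - R^2)."""
    return r2 / (1 - r2)

def cohens_f2_increment(r2_full, r2_reduced):
    """Local f^2 for a predictor (set): (R^2_full - R^2_reduced) / (1 - R^2_full)."""
    return (r2_full - r2_reduced) / (1 - r2_full)

# Global f^2 for Table 3, Model 3 (R^2 = .027): about .028, i.e. a small effect
print(round(cohens_f2_model(0.027), 4))  # prints 0.0277
```

The local version requires the R² of a model fitted without the predictor of interest, which the notes do not report.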

a Indicates results that stopped being significant after the false discovery rate correction was applied.

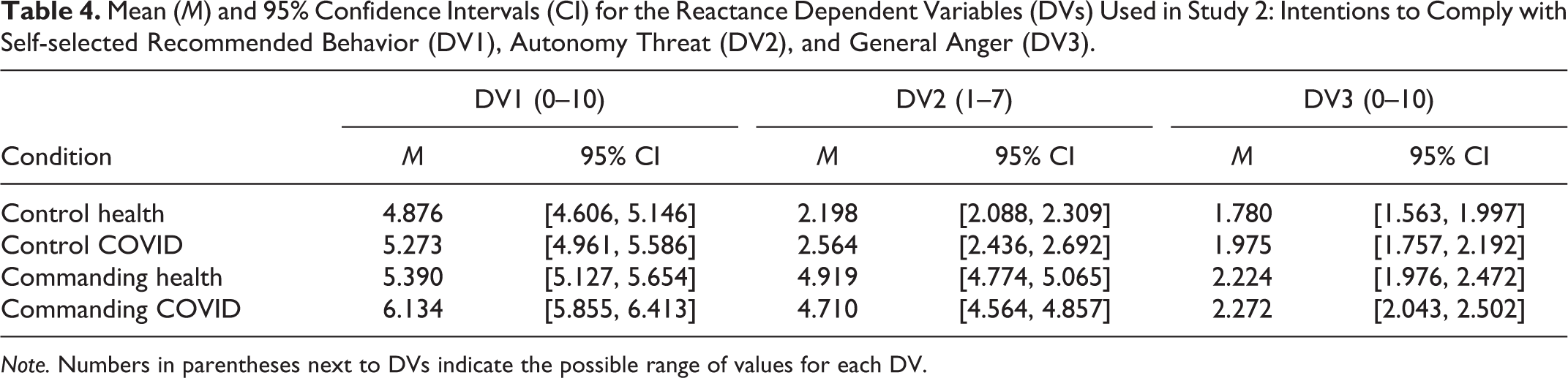

Mean (M) and 95% Confidence Intervals (CI) for the Reactance Dependent Variables (DVs) Used in Study 2: Intentions to Comply with Self-selected Recommended Behavior (DV1), Autonomy Threat (DV2), and General Anger (DV3).

Note. Numbers in parentheses next to DVs indicate the possible range of values for each DV.

Mean (M) and 95% Confidence Intervals (CI) for the Reactance Dependent Variables (DVs) Used in Study 3.

Note. Numbers in parentheses next to DVs indicate the possible range of values for each DV. DV1 = compliance with self-selected recommended behavior; DV2 = compliance with other recommended behaviors; DV3 = intentions to comply with self-selected recommended behavior; DV4 = intentions to comply with other recommended behaviors; DV5 = autonomy threat; DV6 = general anger; DV7 = message anger; DV8 = message negative thoughts; DV9 = hostility toward the present study.

More specifically, consider first the cognitive–affective indicators of reactance regarding COVID-19. In both Study 2 (Table 2: Model 3) and Study 3 (Table 3: Model 5), participants experienced higher autonomy threat in the commanding (vs. control) COVID-19 condition. Moreover, in Study 3 (Table 3: Model 5), the commanding (vs. noncommanding) condition also increased autonomy threat. In neither study did the commanding (vs. control) condition influence general anger. In Study 3, however, participants in the commanding (vs. noncommanding) condition reported higher general anger, although the effect size was small (Table 2: Model 5; Table 3: Model 6). In contrast, in Study 3, the commanding (vs. both control and noncommanding) condition increased message-specific anger, with larger effect sizes (Table 3: Model 7). Finally, in this study, the commanding (vs. control and noncommanding) condition also increased message negative thoughts (Table 3: Model 8). No significant effects were obtained regarding hostility toward the present study (Table 3: Model 9).

Turning to the variables capturing COVID-related intentions and behavior, in Study 3 (Table 3: Model 3), participants in the commanding (vs. noncommanding) condition had lower intentions to comply with the self-selected recommended behavior, in line with Hypothesis 1. In both Study 2 (Table 2: Model 1) and Study 3 (Table 3: Model 3), however, the commanding (vs. control) condition increased these intentions, which would not be expected based on Hypothesis 1. The effects on intentions to comply with other recommended behaviors (Table 3: Model 4) and on actual compliance behaviors (Table 3: Models 1 and 2) were not significant. Overall, all significant effects reported in Tables 2 and 3 concerning cognitive–affective variables and intentions remained significant when covariates were included (SM, pp. 201–204).

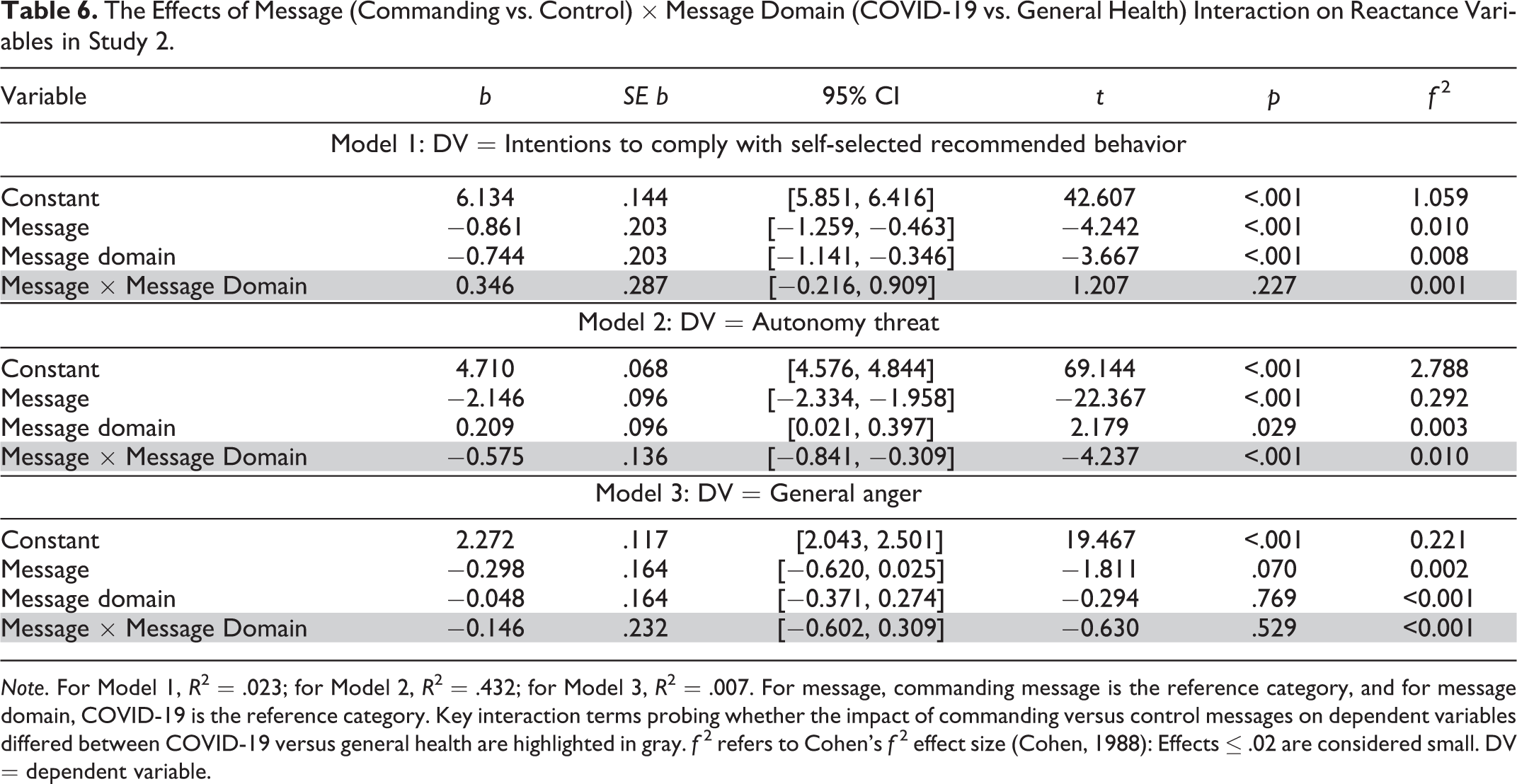

In addition, we probed whether the effects of the health messages in Study 2 differed from those of the COVID-19 messages. As shown in Table 2, the findings for general health were comparable. Participants experienced higher autonomy threat in the commanding (vs. control) condition (Table 2: Model 4) but had higher intentions to comply with the self-selected recommended behavior (Table 2: Model 2). The effect on general anger was significant for the health messages but not for the COVID-19 messages. Nevertheless, it was in the same direction in both domains (Table 2: Models 5 and 6). The significant effects were robust to covariates (SM, pp. 201–202). To investigate more precisely whether the effects differed between the COVID-19 and general health domains, we conducted moderation analyses (Table 6). In these analyses, message (commanding vs. control) was the independent variable and message domain (COVID-19 vs. health) was the moderator. The effects on anger and intentions did not differ between domains. The effects on autonomy threat did differ, given that the interaction was significant (Table 6: Model 2). However, the influence of the commanding (vs. control) messages on autonomy threat was highly significant and in the same direction in both domains (Table 2: Models 3 and 4). The main conclusion from these analyses is therefore that commanding messages are unlikely to affect reactance-related variables only for general health and not for COVID-19.
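The message × domain moderation model described above can be illustrated with a small sketch. Assume both factors are coded 0/1 with commanding and COVID-19 as the reference categories, as in Table 6. In a saturated 2 × 2 model, the interaction coefficient is then the control-minus-commanding difference for general health minus the same difference for COVID-19. The toy scores and the function name below are hypothetical, not data from the studies.

```python
import numpy as np

def fit_message_by_domain(y, control, health):
    """OLS of y on message, domain, and their product (commanding and COVID-19 coded 0)."""
    control = np.asarray(control, dtype=float)  # 1 = control message, 0 = commanding
    health = np.asarray(health, dtype=float)    # 1 = general health, 0 = COVID-19
    design = np.column_stack([np.ones_like(control), control, health, control * health])
    coef, *_ = np.linalg.lstsq(design, np.asarray(y, dtype=float), rcond=None)
    return dict(zip(["intercept", "message", "domain", "message_x_domain"], coef))

# Hypothetical toy data, two participants per cell
control = [0, 0, 1, 1, 0, 0, 1, 1]
health  = [0, 0, 0, 0, 1, 1, 1, 1]
y       = [6, 8, 4, 4, 6, 6, 4, 6]
print(fit_message_by_domain(y, control, health))
```

In this toy example, the control-minus-commanding difference is -3 for COVID-19 and -1 for general health, so the interaction term is 2. A significant interaction therefore indicates that the size of the message effect differs between domains. It does not, on its own, indicate that the effect changes direction.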

The Effects of Message (Commanding vs. Control) × Message Domain (COVID-19 vs. General Health) Interaction on Reactance Variables in Study 2.

Note. For Model 1, R² = .023; for Model 2, R² = .432; for Model 3, R² = .007. For message, the commanding message is the reference category, and for message domain, COVID-19 is the reference category. Key interaction terms probing whether the impact of commanding versus control messages on dependent variables differed between COVID-19 and general health are highlighted in gray. f² refers to Cohen’s f² effect size (Cohen, 1988): effects ≤ .02 are considered small. DV = dependent variable.

Cognitive–Affective Indicators of Reactance as Mediators of Effects on Intentions

In this section, we examine whether the cognitive–affective indicators of reactance from Studies 2 and 3 (Table 1) mediated the three significant effects of COVID-19 messages on intentions reported in the previous section. These are the effects of the commanding (vs. control) conditions in Studies 2 and 3 and the effect of the commanding (vs. noncommanding) condition in Study 3. Hypothesis 2 concerned the mechanism behind significant effects of COVID-19 commands on compliance, so we did not probe mediation for the nonsignificant effects on intentions and behavior. We conducted parallel mediation analyses (i.e., with all potential mediators included simultaneously) using the PROCESS macro (Model 4; Hayes, 2018). Significance was assessed with percentile bootstrapping based on 20,000 samples. Because each mediation analysis included several regression models, as presented in Table 7, we used 99% CIs to reduce the risk of Type I errors (for the full analysis output, see SM, pp. 207–218).
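The logic of the parallel mediation analysis can be sketched with a percentile bootstrap in NumPy. This is not PROCESS itself: it omits covariates, uses plain OLS, and all function names are hypothetical. In this sketch, a_i is the slope of X when mediator M_i is regressed on X alone. b_i is the coefficient of M_i when Y is regressed on X and all mediators together. The indirect effect is a_i × b_i, and a 99% interval takes the 0.5th and 99.5th percentiles of the bootstrapped indirect effects.

```python
import numpy as np

def _ols(y, *predictors):
    """OLS coefficients (intercept first) via least squares."""
    design = np.column_stack([np.ones(len(y)), *predictors])
    coef, *_ = np.linalg.lstsq(design, y, rcond=None)
    return coef

def indirect_effects(x, mediators, y):
    """a_i * b_i for each mediator column of an (n, k) array in a parallel mediation model."""
    # a paths: each mediator regressed on X alone
    a = np.array([_ols(m, x)[1] for m in mediators.T])
    # b paths: Y regressed on X and all mediators together
    b = _ols(y, x, *mediators.T)[2:]
    return a * b

def bootstrap_indirect(x, mediators, y, n_boot=20_000, level=0.99, seed=0):
    """Point estimates plus percentile-bootstrap lower and upper bounds per mediator."""
    x, mediators, y = (np.asarray(v, dtype=float) for v in (x, mediators, y))
    rng = np.random.default_rng(seed)
    n = len(y)
    boots = np.empty((n_boot, mediators.shape[1]))
    for i in range(n_boot):
        idx = rng.integers(0, n, size=n)  # resample participants with replacement
        boots[i] = indirect_effects(x[idx], mediators[idx], y[idx])
    tail = (1 - level) / 2 * 100          # 0.5 for a 99% interval
    lower, upper = np.percentile(boots, [tail, 100 - tail], axis=0)
    return indirect_effects(x, mediators, y), lower, upper
```

A call such as `bootstrap_indirect(x, np.column_stack([threat, anger]), intentions)` would return one indirect effect and CI per mediator. The variable names in that call are placeholders. An indirect effect is deemed significant when its interval excludes zero. Because resampling is random, the bounds shift slightly with the seed, although with 20,000 resamples this variation is small.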

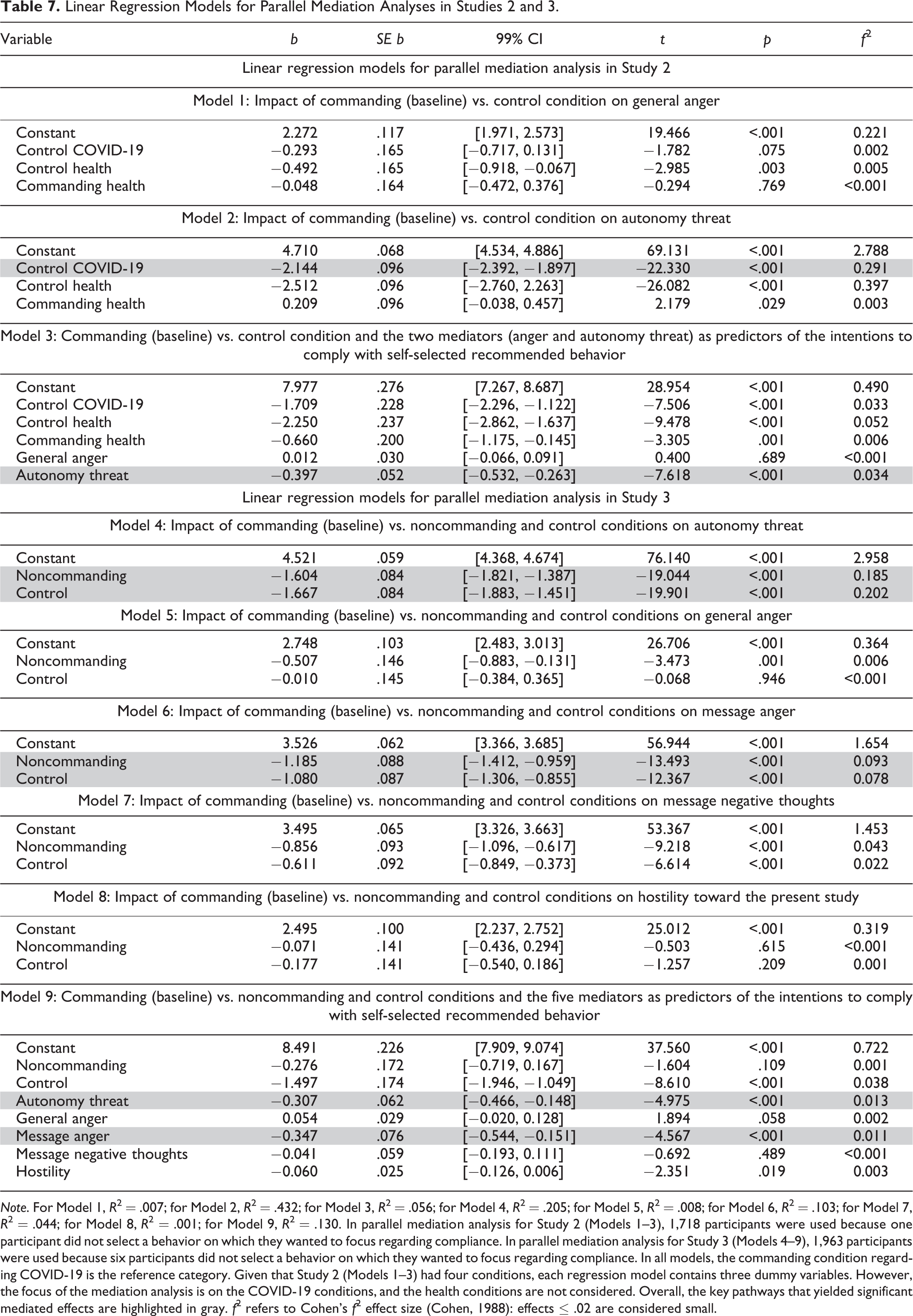

Linear Regression Models for Parallel Mediation Analyses in Studies 2 and 3.

Note. For Model 1, R² = .007; for Model 2, R² = .432; for Model 3, R² = .056; for Model 4, R² = .205; for Model 5, R² = .008; for Model 6, R² = .103; for Model 7, R² = .044; for Model 8, R² = .001; for Model 9, R² = .130. In the parallel mediation analysis for Study 2 (Models 1–3), 1,718 participants were used because one participant did not select a behavior on which they wanted to focus regarding compliance. In the parallel mediation analysis for Study 3 (Models 4–9), 1,963 participants were used because six participants did not select a behavior on which they wanted to focus regarding compliance. In all models, the commanding COVID-19 condition is the reference category. Because Study 2 (Models 1–3) had four conditions, each regression model contains three dummy variables. However, the mediation analysis focuses on the COVID-19 conditions, and the health conditions are not considered. The key pathways that yielded significant mediated effects are highlighted in gray. f² refers to Cohen’s f² effect size (Cohen, 1988): effects ≤ .02 are considered small.

We first discuss the findings regarding the mediation for commanding versus noncommanding condition in Study 3. The analyses showed that both autonomy threat (a1b1 = .492, 99% CI = [0.218, 0.784]) and message anger (a2b2 = .412, 99% CI = [0.164, 0.678]) contributed to explaining lower behavioral intentions in the former condition, given that participants exposed to commanding (vs. noncommanding) messages had higher autonomy threat and message anger (Table 7: Models 4 and 6) and that the two mediators negatively predicted the intentions (Table 7: Model 9). The results remained significant despite covariates (SM, pp. 216–218). Overall, this finding is consistent with Hypothesis 2, given that one of the anger components we measured contributed to explaining reactance effects, but it also provides additional insights, given that another cognitive–affective indicator of reactance—autonomy threat—was established as an important mediator.

Parallel mediation analyses computed to examine the mechanism behind higher behavioral intentions in the commanding versus control condition (Studies 2 and 3) produced a more complex picture, given that “inconsistent mediation” was obtained (MacKinnon et al., 2007, p. 602). Indeed, although mediated effects were significant for autonomy threat (Study 2: a3b3 = .852, 99% CI = [0.544, 1.196]; Study 3: a4b4 = .511, 99% CI = [0.222, 0.810]) and message anger (Study 3: a5b5 = .375, 99% CI = [0.146, 0.626]), these effects were in the opposite direction to the main effect and indicated that the commanding (vs. control) condition indirectly lowered behavioral intentions. This is because the commanding condition increased autonomy threat and message anger (Table 7: Models 2, 4, and 6), and these variables negatively predicted the compliance intentions (Table 7: Models 3 and 9). The results remained significant despite covariates (SM, pp. 208–210, 216–218). This finding suggests that commanding language, compared to control, evokes message anger and autonomy threat that undermine intentions, consistent with Hypothesis 2 and the obtained mediated effect of the commanding (vs. noncommanding) conditions on intentions. Because the commanding language condition, however, contained explicit instructions prompting participants to change their behavior, whereas the control condition did not, it is plausible that these instructions overcame the negative reactance effect. The same conclusion applies to the impact of commanding (vs. control) general health messages on the behavioral intentions (SM, pp. 210–213).
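The inconsistent mediation described above can be made concrete with the standard decomposition of the total effect in a linear parallel mediation model. This identity holds for OLS estimates computed on the same sample. The notation is a sketch and not necessarily the authors' own: X codes condition, M_1 to M_k are the mediators, and Y is compliance intentions.

```latex
\begin{aligned}
M_i &= i_{M_i} + a_i X + e_{M_i}, \qquad i = 1, \dots, k,\\
Y   &= i_{Y} + c' X + \sum_{i=1}^{k} b_i M_i + e_{Y},\\
Y   &= i_{Y}^{*} + c X + e_{Y}^{*},\\
c   &= c' + \sum_{i=1}^{k} a_i b_i .
\end{aligned}
```

Inconsistent mediation arises when the direct effect c' and the summed indirect effects have opposite signs. The total effect c can then take the sign of c' even though every a_i b_i points the other way. With the commanding condition as the reference category (as in Table 7), positive a_i b_i values correspond to commands indirectly lowering intentions.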

Moderation Analyses

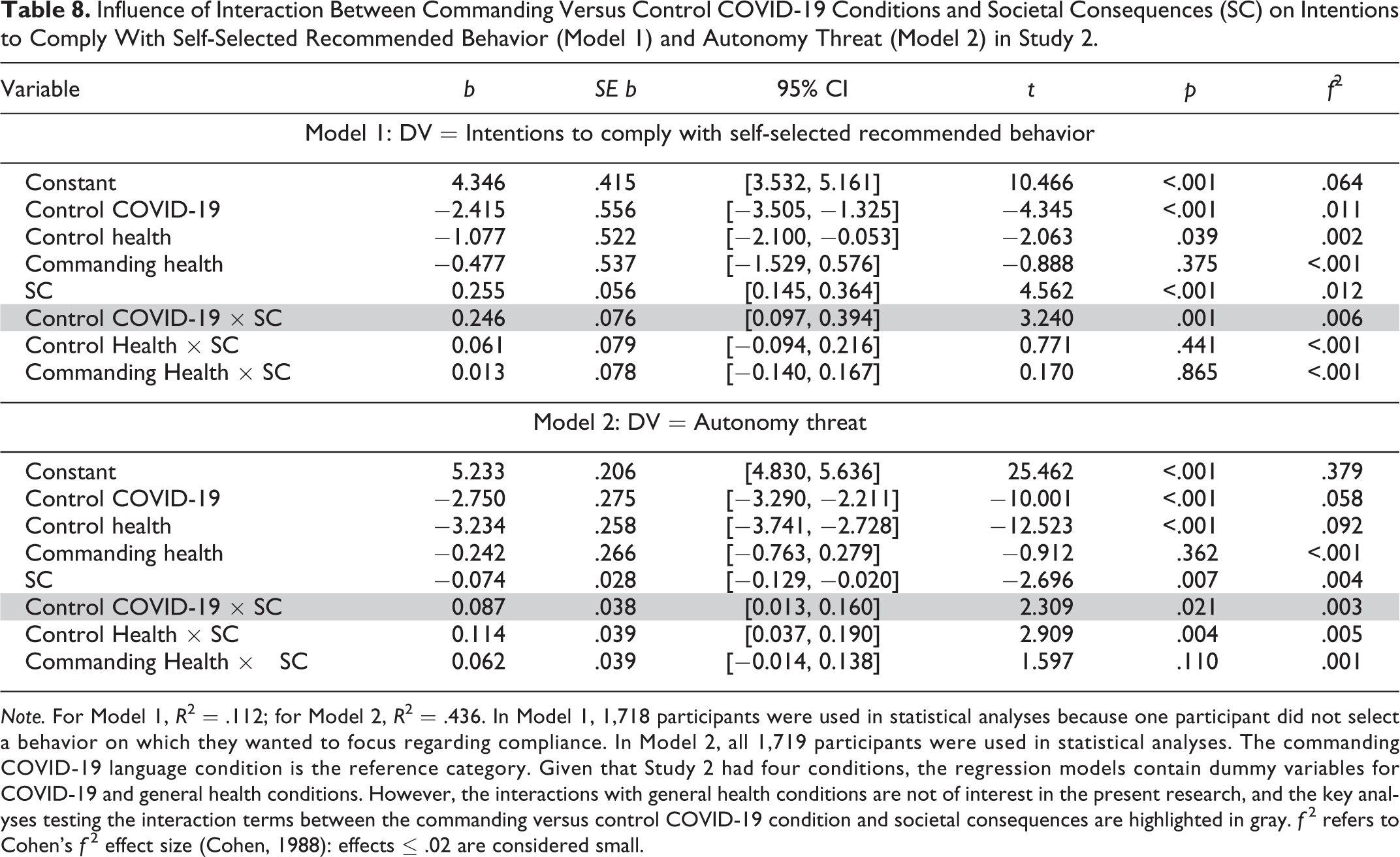

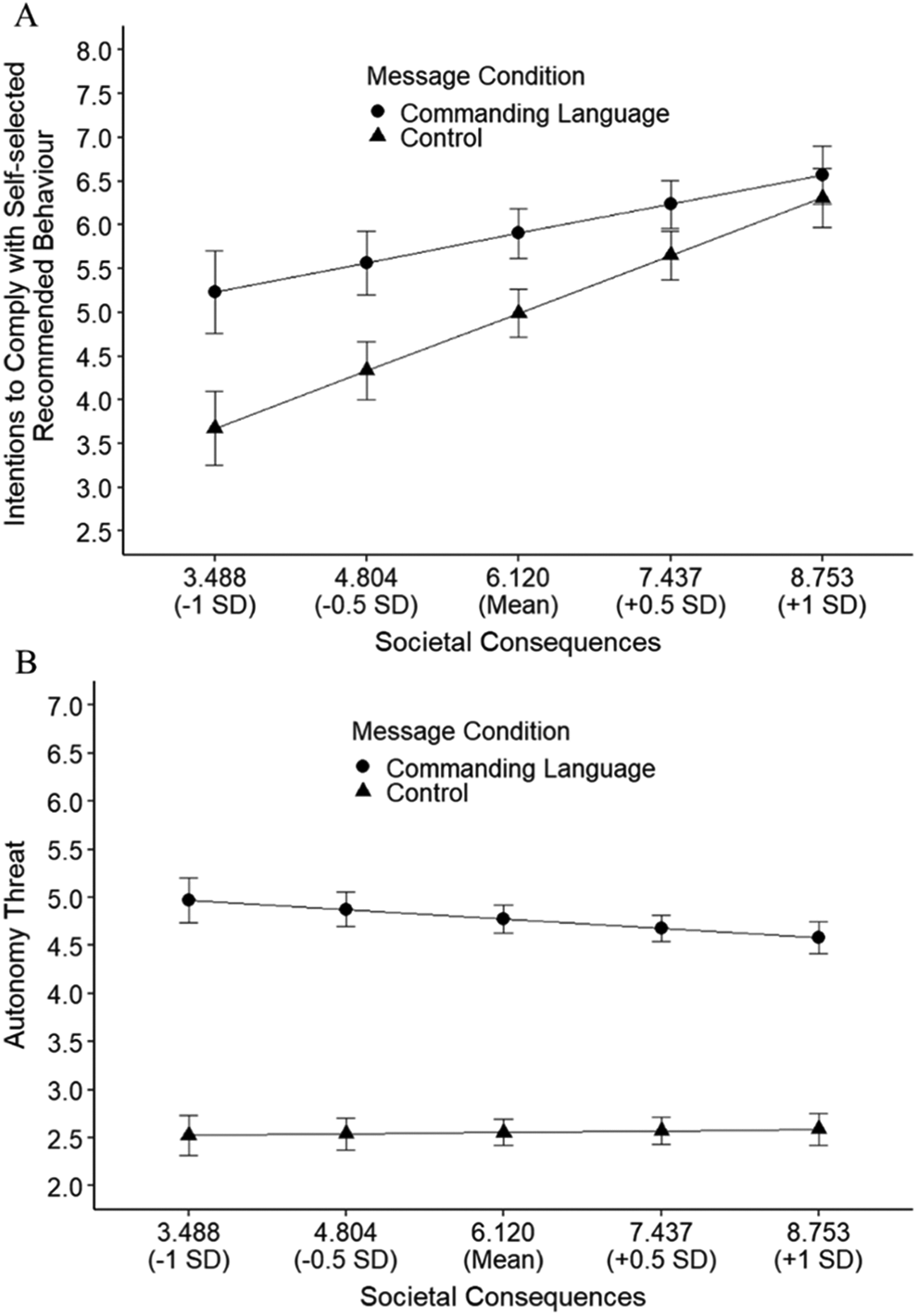

We tested whether the commanding (vs. control or noncommanding) COVID-19 conditions interacted with any of the moderators (Table 1) in influencing reactance variables. We first computed the interaction effects using linear regressions and then examined the patterns of significant interactions using the Johnson–Neyman technique (Esarey & Sumner, 2018; Hayes, 2018; Johnson & Fay, 1950). Interaction effects were deemed significant only if they survived the FDR correction (Benjamini & Hochberg, 1995; SM, pp. 142–146). Twenty-one initially significant interactions emerged (two in Study 2 and 19 in Study 3). Nineteen of them (all in Study 3), however, did not survive the FDR correction and are therefore reported in SM (pp. 157–200). The two interactions that remained significant after the FDR correction and when controlling for covariates (SM, pp. 147–156) are reported in Table 8. Their patterns are presented in Figure 1. For both interactions, the moderator was societal consequences. The differences between the commanding and control conditions in compliance intentions and autonomy threat became smaller as moderator scores increased (Figure 1). These patterns are broadly consistent with reactance theory. According to the theory, people should feel it is more justified for someone to restrict their behavior when the negative consequences of this behavior for society could be severe. In such cases, the type of language used to communicate behavioral restrictions (e.g., commanding or noncommanding) should be less relevant (Rosenberg & Siegel, 2018). Despite these broadly consistent patterns, however, the direction of the influence of the commanding (vs. control) condition on compliance intentions was inconsistent with reactance theory, as noted above. The theory predicts that commands should decrease compliance intentions.
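The Johnson–Neyman technique referred to above finds the moderator values at which the conditional effect of condition crosses the significance threshold. Assume a model of the form Y = b0 + b1·X + b2·M + b3·X·M. The effect of X at moderator value M is then b1 + b3·M, with variance var(b1) + 2M·cov(b1, b3) + M²·var(b3). The boundaries solve (b1 + b3·M)² = t²·Var. In this minimal sketch, the coefficient inputs and the critical t (for example, from `scipy.stats.t.ppf(0.975, df)`) must come from the fitted model. The function name and example numbers are hypothetical.

```python
import math

def johnson_neyman(b1, b3, var_b1, var_b3, cov_b1_b3, t_crit):
    """Moderator values where the conditional effect b1 + b3*M is exactly at the
    significance boundary, i.e. solutions of (b1 + b3*M)^2 = t^2 * Var(b1 + b3*M)."""
    # Quadratic in M: a*M^2 + b*M + c = 0
    a = b3**2 - t_crit**2 * var_b3
    b = 2 * (b1 * b3 - t_crit**2 * cov_b1_b3)
    c = b1**2 - t_crit**2 * var_b1
    if a == 0:  # degenerate case: at most one boundary
        return [] if b == 0 else [-c / b]
    disc = b**2 - 4 * a * c
    if disc < 0:
        return []  # no boundary: significant everywhere or nowhere
    root = math.sqrt(disc)
    return sorted([(-b - root) / (2 * a), (-b + root) / (2 * a)])

# Illustrative values chosen so the answer is easy to check by hand:
# effect 1 - 0.5*M with constant SE 0.2 and t = 2 crosses +/-0.4 at M = 1.2 and 2.8
print(johnson_neyman(1.0, -0.5, 0.04, 0.0, 0.0, 2.0))
```

The boundaries alone do not show on which side the effect is significant. Evaluating the effect-to-SE ratio at a moderator value inside each region, as PROCESS output does, resolves this.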

Influence of Interaction Between Commanding Versus Control COVID-19 Conditions and Societal Consequences (SC) on Intentions to Comply With Self-Selected Recommended Behavior (Model 1) and Autonomy Threat (Model 2) in Study 2.

Note. For Model 1, R² = .112; for Model 2, R² = .436. In Model 1, 1,718 participants were used in statistical analyses because one participant did not select a behavior on which they wanted to focus regarding compliance. In Model 2, all 1,719 participants were used. The commanding COVID-19 condition is the reference category. Because Study 2 had four conditions, the regression models contain dummy variables for both the COVID-19 and general health conditions. The interactions with the general health conditions are not of interest in the present research. The key interaction terms between the commanding versus control COVID-19 condition and societal consequences are highlighted in gray. f² refers to Cohen’s f² effect size (Cohen, 1988): effects ≤ .02 are considered small.

The influence of commanding versus control COVID-19 condition on intentions to comply with self-selected recommended behavior (Panel A) and autonomy threat (Panel B) at different levels of societal consequences (Study 2). Note. Moderator levels in the figures were selected arbitrarily for effective visualization; detailed output of the Johnson–Neyman analyses depicting the interaction patterns is available in Supplementary Materials (pp. 147–156). Error bars correspond to the 95% confidence intervals.

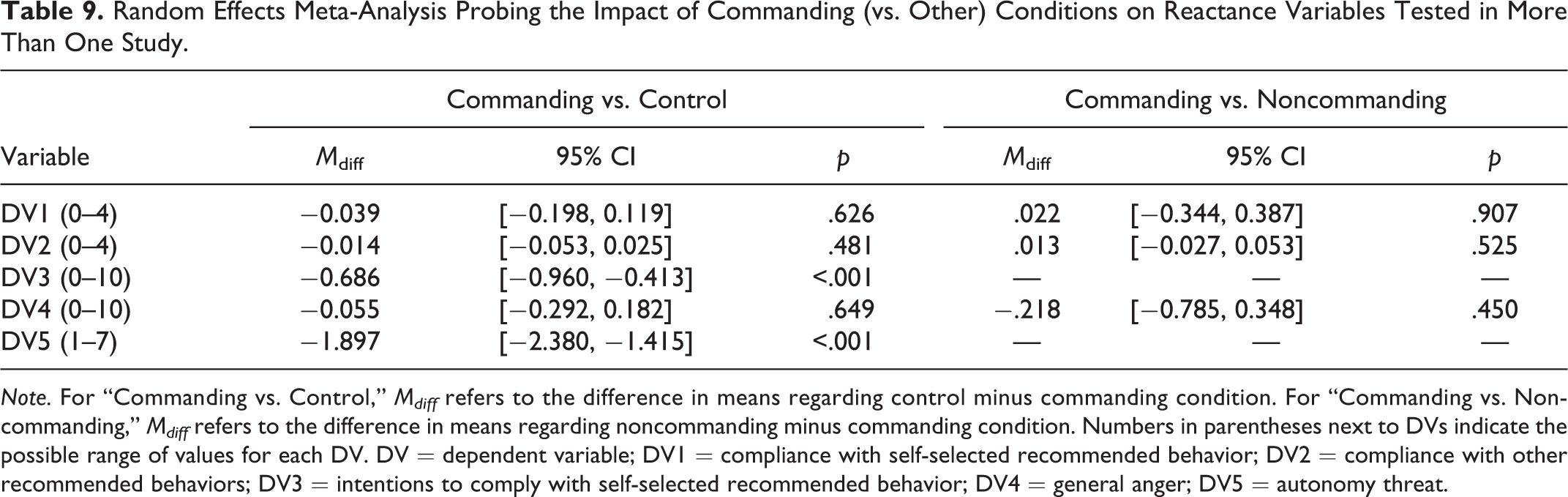

Meta-Analysis

A random-effects meta-analysis (Table 9) examined the impact of commanding (vs. other) conditions on reactance variables probed in more than one study (including Study 1). It was conducted using “esci” (Cumming & Calin-Jageman, 2016). As indicated in Table 9, autonomy threat and intentions to comply with the self-selected recommended behavior were generally higher in the commanding (vs. control) condition, whereas the other variables showed no significant differences.
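A random-effects pooled estimate can be sketched with the DerSimonian–Laird estimator of between-study variance (τ²). This is a swapped-in, widely used method. It is not necessarily the estimator implemented in esci, so results may differ slightly from Table 9. Each study contributes an effect (here, a mean difference such as Mdiff) and its sampling variance. For two independent groups, that variance can be taken as s1²/n1 + s2²/n2. The example numbers and the function name are hypothetical.

```python
import math

def random_effects_dl(effects, variances):
    """DerSimonian–Laird random-effects meta-analysis.

    Returns (pooled estimate, its standard error, tau^2).
    """
    w = [1 / v for v in variances]  # fixed-effect (inverse-variance) weights
    fixed = sum(wi * yi for wi, yi in zip(w, effects)) / sum(w)
    q = sum(wi * (yi - fixed) ** 2 for wi, yi in zip(w, effects))  # Cochran's Q
    df = len(effects) - 1
    c = sum(w) - sum(wi**2 for wi in w) / sum(w)
    tau2 = max(0.0, (q - df) / c) if c > 0 else 0.0  # between-study variance
    w_star = [1 / (v + tau2) for v in variances]     # random-effects weights
    pooled = sum(wi * yi for wi, yi in zip(w_star, effects)) / sum(w_star)
    se = math.sqrt(1 / sum(w_star))
    return pooled, se, tau2

# Hypothetical mean differences and sampling variances from three studies
pooled, se, tau2 = random_effects_dl([0.40, 0.15, 0.30], [0.010, 0.012, 0.009])
print(pooled, pooled - 1.96 * se, pooled + 1.96 * se, tau2)
```

The interval in the usage line is z-based. Some software uses t-based or adjusted intervals instead, which widens the CI when only a few studies are pooled.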

Random Effects Meta-Analysis Probing the Impact of Commanding (vs. Other) Conditions on Reactance Variables Tested in More Than One Study.

Note. For “Commanding vs. Control,” Mdiff refers to the difference in means regarding control minus commanding condition. For “Commanding vs. Noncommanding,” Mdiff refers to the difference in means regarding noncommanding minus commanding condition. Numbers in parentheses next to DVs indicate the possible range of values for each DV. DV = dependent variable; DV1 = compliance with self-selected recommended behavior; DV2 = compliance with other recommended behaviors; DV3 = intentions to comply with self-selected recommended behavior; DV4 = general anger; DV5 = autonomy threat.

General Discussion

The present research investigated psychological reactance toward commanding messages regarding COVID-19. Because our studies arguably constitute the most comprehensive examination to date of reactance theory concerning message language, here we discuss the findings in relation to the theory. We showed that the commanding condition (vs. control or noncommanding) influenced compliance intentions and several cognitive–affective indicators of reactance. In this regard, two main insights go beyond previous research.

First, a cognitive–affective measure may be more likely to capture reactance if it is phrased in relation to the messages rather than generally. Indeed, we detected robust reactance effects for measures phrased in relation to the messages (message anger, autonomy threat, and message negative thoughts). This was not the case for general anger, which was not directed specifically at the messages. On a conceptual level, these findings indicate that reactance-related cognitive and affective states are experienced specifically in relation to the messages rather than as general states. To our knowledge, previous studies have not addressed this subtle distinction, but it may have important implications for how reactance influences decision making. For example, emotions (e.g., anger) induced in one context can influence people’s decisions in other contexts (Andrade & Ariely, 2009). If commanding (vs. other) messages evoke general emotions, they may therefore plausibly affect decisions on topics not targeted by the messages. If, however, these emotions are message specific, they may shape only decisions that are directly relevant to the messages. We encourage future research to test this premise more directly.

The second main insight of the present research is that, whereas commanding messages decreased intentions to comply with self-selected recommended behavior versus noncommanding messages, they increased the intentions compared to control, which would not be expected based on reactance theory. Previous research on reactance, however, generally compared commanding and noncommanding messages but failed to probe a control condition where no behavioral instructions were given. The present research therefore indicates that, even if people may feel threatened in response to the type of commanding messages regarding COVID-19 we used in the present research, they may be more likely to intend to comply with the recommended behaviors than if given no behavioral prompts.

Concerning the influence of messages on actual behavior, which has not previously been tested in the context of reactance evoked via commanding language (Rosenberg & Siegel, 2018), we found no evidence that commanding (vs. other) messages affected COVID-19 compliance, either in individual studies or when meta-analyzing the behavioral effects tested in more than one study. One of the main conclusions of the present research is therefore that, even if commanding messages influence intentions and cognitive–affective variables that have implications for behavior, these effects may not be strong enough to convincingly change the behavior people undertake over the several days after receiving the messages. This finding is in line with previous research on the intention–behavior gap, especially given that intentions are less likely to translate into behaviors that require self-control, such as COVID-19 compliance (Sheeran & Webb, 2016; Wallace et al., 2005).

In relation to the psychological mechanisms we examined, the present research showed that the negative influence of commanding (vs. noncommanding) messages on compliance intentions was explained by autonomy threat and message anger. This is consistent with reactance theory, although that theorizing has focused more extensively on anger as the core mechanism (Rosenberg & Siegel, 2018). Moreover, although commands (vs. control) had a negative indirect effect on compliance intentions via autonomy threat and message anger, their overall effect on the intentions was positive. The most plausible explanation is that the commanding (vs. control) condition did activate reactance regarding compliance intentions, but the explicit behavioral prompts, which were given only in this condition, outweighed the negative reactance effect. Finally, of all potential moderators of the influence of commanding (vs. other) messages that we tested, only two significant interactions, both involving societal consequences, remained robust after false discovery rate (FDR) correction. This moderator also produced the largest number of significant interactions if the initially significant interactions that did not survive FDR correction are also considered (SM, pp. 157–200). Although this suggests that societal consequences may be the main moderator of the messages’ effects on reactance, we failed to detect consistent moderation effects overall, indicating that further theoretical and empirical work is needed to uncover the most important moderators.
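The point that a negative indirect effect can coexist with a positive overall effect can be made concrete with the standard decomposition of a linear mediation model. The sketch below assumes ordinary least-squares regression with a dummy-coded condition $X$ (commanding = 1, control = 0), two parallel mediators $M_1$ (autonomy threat) and $M_2$ (message anger), and outcome $Y$ (compliance intentions); these symbols are illustrative, and the exact model estimated in the studies is not specified here.

$$
\begin{aligned}
M_j &= i_j + a_j X + e_j, \quad j = 1, 2 \\
Y &= i_Y + c' X + b_1 M_1 + b_2 M_2 + e_Y \\
c &= c' + a_1 b_1 + a_2 b_2
\end{aligned}
$$

Here $c$ is the total effect of condition on intentions, $c'$ is the direct effect, and $a_j b_j$ is the indirect effect via mediator $j$. If the combined indirect effect $a_1 b_1 + a_2 b_2$ is negative while $c$ is positive, then $c' = c - (a_1 b_1 + a_2 b_2) > |a_1 b_1 + a_2 b_2|$. Under the interpretation offered above, the direct path would carry the effect of the explicit behavioral prompts, and it must be positive and larger in magnitude than the reactance pathway.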

Limitations

One of the main limitations of this research concerns ecological validity (Coolican, 2009). The messages we tested were not officially published by the government, and people may not have reacted to them as they would to official governmental communication. Most previous studies investigating reactance to commanding messages, however, were conducted in settings of limited ecological validity (Rosenberg & Siegel, 2018), and this has not been an obstacle to detecting reactance. It is thus unlikely that the absence of evidence for behavioral effects in our research can be attributed to ecological validity. Another limitation is that, despite the large sample sizes, our participants were not representative of the UK population. For example, they may have differed from the general population on personality traits such as conscientiousness and agreeableness that shape compliance with COVID-19 recommendations (e.g., Clark et al., 2020), and their responses to our messages may therefore have differed to some degree. It is thus not certain that the present findings would generalize across the population. Nevertheless, online participants tend to be reasonably representative of the general population in terms of psychological characteristics (e.g., McCredie & Morey, 2019; Mullinix et al., 2015; Redmiles et al., 2019), suggesting that generalizability may not be a major limitation of the present research.

Conclusion

Overall, although people experienced more anger and negative thoughts toward commanding (vs. control or noncommanding) messages and found them threatening to their autonomy, there was no convincing evidence that these messages hindered COVID-19 compliance behaviors. In fact, commands increased intentions to comply compared to control. When communicating COVID-19 policies to the public, policy makers may therefore be better off using either commanding or noncommanding language rather than giving no behavioral prompts, but it will be crucial for them to provide appropriate support to translate these intentions into behavior.

Supplemental Material

Supplemental Material, sj-docx-1-spp-10.1177_19485506211005582 for You Must Stay at Home! The Impact of Commands on Behaviors During COVID-19 by Dario Krpan and Paul Dolan in Social Psychological and Personality Science

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Supplemental Material

The supplemental material is available in the online version of the article.

Handling Editor: Lisa Libby
