Abstract

The global reach of misinformation has exacerbated harms in low- and middle-income countries faced with deficiencies in funding, platform engagement, and media literacy. These challenges have reiterated the need for the development of strategies capable of addressing misinformation that cannot be countered using popular fact-checking methods. Focusing on Kenya’s contentious 2022 election, we evaluate a novel method for democratizing debunking efforts termed “social truth queries” (STQs), which use questions posed by everyday users to draw reader attention to the veracity of the targeted misinformation with the aim of minimizing its impact. In an online survey of Kenyan participants (N ~ 4,000), we test the efficacy of STQs in reducing the influence of electoral misinformation which could not have been plausibly fact-checked using existing methods. We find that STQs reduce the perceived accuracy of misinformation while also reducing trust in prominent disseminators of misinformation, with null results for sharing propensities. While effect sizes are small across conditions, assessments of the respondents most susceptible to misinformation reveal larger potential effects if targeted at vulnerable users. These findings collectively illustrate the potential of STQs to expand the reach of debunking efforts to a wider array of actors and misinformation clusters.

Introduction

The dissemination of information and communications technologies (ICTs) has allowed individuals from previously disconnected areas to engage in politics through digital networks at a fraction of prior costs. At the same time, ICTs have also facilitated the spread and amplification of political misinformation targeting politicians, political parties, and the integrity of elections (Chakrabarti et al. 2018; Guess and Lyons 2020). The spread of political misinformation has introduced new challenges, including threats to equitable participation (Ohme et al. 2021; Valenzuela et al. 2019; White et al. 2006), the polarization of domestic electorates (Azzimonti and Fernandes 2023; Del Vicario et al. 2016; Törnberg 2018), and reduced trust in the legitimacy of electoral outcomes (Allcott and Gentzkow 2017; Lee 2019; Tambini 2018).

Growing concern over the role played by misinformation in facilitating these deleterious outcomes has increased the salience of research on the efficacy of efforts to address misinformation. Despite this recent research and the continued efforts of dedicated fact-checking organizations to reduce the impact of misinformation in low- and middle-income countries (LMICs), this work remains limited by several interrelated structural, temporal, and financial factors. This leaves the following question: How can individuals counter the spread of misinformation when claims cannot be easily fact-checked?

To address this puzzle, we draw on recent collaborative research conducted with the Africa-based Centre for Analytics and Behaviour Change (CABC) to test a novel method for addressing misinformation that is beyond the reach of typical debunking strategies: “Social Truth Queries” or STQs (Jalbert et al. 2023). STQs are user responses to posts containing misinformation that include questions which draw attention to truth or the criteria used to assess information truth, such as the presence of evidence or the credibility of the source of the information. We test this approach on misinformation spread about the Kenya 2022 election. Our findings have important implications for addressing the spread of political misinformation in LMICs.

Addressing Online Misinformation in LMICs

Research from high-income democracies around the world has provided evidence of the detrimental consequences of misinformation on the conduct and outcome of elections. In the United States, for example, survey data from the 2008 presidential election found that the endorsement of negative rumors about Barack Obama was associated with a reduced likelihood of voting for him (Weeks and Garrett 2014). Similar evidence from Washington State (Reedy et al. 2014) and Oregon (Gastil et al. 2018) utilizing cross-sectional and panel data, respectively, revealed that disinformation influenced voter support for ballot measures on each state’s electoral ticket. Similarly, in Italy researchers have used variation in local languages to link political misinformation to increased support for populist parties (Cantarella et al. 2023).

Though research on the influence of misinformation on electoral outcomes from LMICs is limited, the work that has been done suggests that the consequences could be acutely impactful in these contexts due in part to lower levels of partisanship (Mainwaring and Scully 1999; Samuels and Zucco 2018), digital literacy (Cruz-Jesus et al. 2018; Guess and Munger 2023), and the widespread use of encrypted messaging applications (EMAs), which complicate efforts to use public channels to quickly identify misinformation (Badrinathan et al. 2023; Machado et al. 2019). These factors have collectively contributed to the proliferation of political misinformation, with the prevalence of misinformation in LMICs often outpacing high-income counterparts (Madrid-Morales et al. 2021; Resende et al. 2019; Jalli and Idris 2019). This abundance of local misinformation has been linked to instances of violence (Akinyetuna et al. 2021; Banaji et al. 2019), support for populist candidates (Ong and Tapsell 2022; Ricard and Medeiros 2020), along with elevated xenophobic attitudes (Chenzi 2021; Nkabane and Mutereko 2021).

Current efforts to address the spread of misinformation in LMICs have revolved primarily around official fact-checking efforts, which involve trained professionals who investigate the source and veracity of select claims (Graves 2018; Schiffrin and Cunliffe-Jones 2022). While there is evidence that professional fact-checking efforts can counter belief in rumors and conspiracy theories across diverse cultural and political contexts (e.g., Amazeen 2020; Nyhan and Reifler 2010; Porter and Wood 2021), researchers have raised concerns regarding the practical limitations facing this approach in LMICs. First, fact-checking is costly (Jolly 2022; Karagiannis et al. 2020). While funding does exist, it is limited in comparison to funding in high-income countries, and these resource constraints make it difficult to sustain the robust fact-checking efforts necessary to counter misinformation tailored to exploit local political factors (Vraga et al. 2020; Bowles et al. 2022; Wasserman and Madrid-Morales 2019). Beyond funding constraints, the diversity of social media platforms, local languages, and sheer volume of online communications present in LMICs requires fact-checking organizations to cover a more complex media ecosystem (Graves 2018; Nielsen and McConville 2022). These challenges have been exacerbated further by regional use of EMAs such as WhatsApp, Telegram, and Signal (Gursky and Woolley 2021; Kazemi et al. 2022; Liu et al. 2021).

Given limits to funding and access to social media data, fact-checking organizations operating in LMICs, as elsewhere, have had to prioritize misinformation they perceive to pose the greatest risk to the public (Graves and Cherubini 2016; Nakov et al. 2021). However, it is difficult to determine a priori which misinformation is likely to be the most influential, and misinformation that starts on local platforms may take a long time to reach fact-checkers. Moreover, the misinformation that poses the greatest offline threat is often not obviously false (Barrera et al. 2020; Hameleers 2022), and it requires substantial investigative work by highly trained staff to ensure fact-checks are correct and institutional credibility is maintained (Graves and Cherubini 2016).

These constraints limit the reach of regional fact-checking efforts and slow their implementation. For example, in a preliminary effort to track the reach of fact-checks during Kenya’s 2022 Election, discussed further below, we find that less than half of the misinformation identified (39%) could be addressed by fact-checking efforts, resulting in low rates of coverage (15%). With regard to this specific political subset, this gap reiterates the need for greater attention to how best to counter different types of misinformation across diverse platforms. Collectively, while fact-checking organizations have made large recent strides in LMICs, the limitations placed on organizations operating in LMICs have hindered their reach and influence. As a result, fact-checkers, policymakers, and researchers have begun to explore new methods to address the proliferation of misinformation in LMICs.

Beyond Fact-Checking: Social Truth Queries

In light of the limitations of existing methods, there is widespread acknowledgment of the need for the development of additional strategies for addressing online election misinformation. Organizations have started to develop their own community-driven strategies to combat the spread of harmful election-related misinformation online that take local conditions into account. One such organization is the CABC, an NGO in South Africa. At the CABC, experts train volunteers to respond to misinformation online by following a set of guidelines developed through their experience in dialogue facilitation. Many of these replies are formed as questions that draw attention to the truth of the original post. Drawing on this approach, we have developed the term STQs to refer to questions posed in response to misinformation that draw attention to truth or the criteria used to assess truth, such as the credibility of the information’s source or the social consensus for that information (for an overview of truth criteria, see Schwarz and Jalbert 2020). For instance, when encountering a post containing a false statement about the election’s outcome, an STQ can take the form of a reply probing the veracity of the information by asking about its source (e.g., “Where did you learn about this?”) or its wider social consensus (e.g., “Do many Kenyans believe this?”), among other strategies discussed further below.

An initial series of laboratory studies by Jalbert et al. (2023) found that the presence of STQs consistently reduced belief in and intent to share false information, and that a variety of different STQs were effective. STQs are hypothesized to work through two main pathways. First, STQs orient other users to pay attention to and consider the truth of information when they would not have otherwise. In fact, evidence suggests that people tend to accept information they encounter as true (Grice 1989; Schwarz 1994, 1996; Sperber and Wilson 1986), and that people do not always consider information accuracy prior to deciding to share it (Epstein et al. 2021; Pennycook et al. 2021). The presence of a cue that draws attention to information truth—such as an STQ—can change the way that people process that information, drawing their attention to potential aspects of the information which suggest it may be false (for a related discussion, see Lin et al. 2022). Indeed, previous research has found that cues that get users to consider information truth can be an effective way to protect people from later belief in false information (Jalbert et al. 2020). In addition, these cues can also increase the quality of information that users share. For example, one study found that asking users to explain how they knew information was true or false before sharing it reduced their intent to share false information (Fazio 2020; Pillai and Fazio 2023), while other research has found that asking users to assess the underlying truth of a single headline can reduce the intent to share subsequent false headlines (Pennycook et al. 2020).

Second, as STQs are not promoted by official organizations but rather individuals, this separate point of communication may suggest that the accompanying misinformation is not universally accepted by a reader’s peers. Perceptions of what others believe play an important role in guiding individual beliefs (Festinger 1954; Schwarz and Jalbert 2020). As a result, by disrupting perceptions that there may be consensus support, STQs may decrease the acceptance of the targeted misinformation. In addition, given individuals’ motivation to affiliate with others (e.g., Cialdini and Goldstein 2004), people may also be less willing to share a post when it comes with a comment from another user questioning its veracity.

Building on these psychological foundations, STQs hold several additional advantages over existing methods. These include the ease and speed with which they can be applied to potentially problematic content, their transferability to EMAs, and, perhaps most importantly, the ease with which individuals and organizations can deploy them. In addition, STQs can be used to address types of misinformation that cannot be currently addressed through fact-checking approaches. These differences could be particularly salient in LMICs, where a more diverse set of languages and local costs reduce the availability and generalizability of other forms of debunking content. However, thus far, the effectiveness of STQs has only been experimentally tested in U.S. populations to address information that could be fact-checked. The Kenyan election presented an opportunity to test the potential of these STQs as a novel strategy for minimizing the spread and influence of misinformation in LMICs on complex forms of electoral misinformation that could not be fact-checked.

Study Context: Kenya’s 2022 General Election

The present study revolves around Kenya’s contentious 2022 Election. By focusing on this widely tracked and researched election, we were provided with an opportunity to study the influence of STQs in the type of highly contested political environment which is thought to be at greatest risk from the influence of misinformation. In addition to enabling us to test the efficacy of STQs amidst a real-world event involving widespread misinformation, the historical context in which the election took place provided further relevance. The election was only two elections removed from the 2007 vote, which was marred by extensive postelection violence (Gibson and Long 2009). This violence has been linked to the salience of ethnic ties (Fjelde and Höglund 2018), which have played a critical role in the country’s previous elections (Horowitz 2019). In past elections, these ethnic ties have also heightened political partisanship in a manner which is not reflective of weaker regional trends (Harding et al. 2021).

Yet, although this recent domestic context heightened international concern regarding the potential for postelection violence (Jung and Long 2023), the lack of an ethnic distinction between the two main presidential competitors saw ethnicity become far less central to the contest than in previous iterations (Iraki 2022). Following the vote, which saw five-time candidate and democracy advocate Raila Odinga narrowly defeated by then-incumbent Deputy President William Ruto, fear of ethnic violence was quickly replaced by concern over the vote’s integrity. Given the intense competition and an absence of trusted media networks with broad national appeal, observers were aware that the contest had likely created “fertile ground” for electoral misinformation (Kamau and Shiundu 2024: 212). In confirmation of these fears, William Ruto’s victory served as a lightning rod for misinformation (Allen et al. 2023), which previous investigations have shown to have involved both organic speculation and “coordinated” efforts to sway Kenyan electoral behavior (Madung and Obilo 2021). Misinformation involving the 2022 poll, exacerbated by further penetration of social media, saw both top-down forms of narrative segmentation aimed at promoting delegitimizing information (Abboud et al. 2024), as well as forms of bottom-up collective sensemaking (Munene and Oloo 2024). Attachment to the online narratives distributed in the aftermath of the election has become increasingly important to Kenyan politics in part due to declining trust in mainstream media outlets (Agbele 2023). Bolstered by these narratives, though the Supreme Court of Kenya eventually struck down Raila Odinga’s appeal, protests regarding the legitimacy of Ruto’s victory persisted long after the election, providing further relevance to the study context (Galava and Kanyinga 2024).

While Kenya’s political and historical context is distinct, the election’s outcome and aftermath are reflective of wider global trends related to electoral hesitation in the aftermath of democratic elections. Given that we expected this election to involve the proliferation of online political misinformation, we viewed this as a useful context to test the effectiveness of STQs. We view this specific context as critical for the function of STQs to serve as a complement to fact-checking efforts as it involved the spread of misinformation that could not be addressed through current fact-checking efforts, with concerning impacts on perception of election legitimacy.

Present Investigations

We use this context to examine five related hypotheses which each speak to the potential for STQs to serve as an effective strategy for addressing misinformation when fact-checks are unavailable.

We predict that STQs will reduce the perceived accuracy and intent to share misinformation because they orient readers to consider the truth of the information. These effects would be consistent with the findings of other types of interventions that operate by drawing attention to information truth (e.g., Fazio 2020; Pillai and Fazio 2023; Pennycook et al. 2021), and would replicate the effects of STQs observed by Jalbert et al. (2023). In addition to drawing attention to information truth, STQs may also work to reduce accuracy judgments and sharing intent because they decrease perceptions of others’ consensus on that information, and individuals use consensus as a criterion to judge truth (Schwarz and Jalbert 2020) and are motivated to align their behaviors with others (Cialdini and Goldstein 2004).

In addition to considerations of perceived accuracy and sharing intentions, we have also introduced a third treatment focused on perceptions of the trustworthiness of the initial poster. While a less immediate output, given the prominence of “repeat spreaders” of misinformation across digital environments (Kennedy et al. 2022), strategies that can induce readers to reduce their trust in individuals that spread misinformation have the potential to extend more immediate effects. We envision the influence of STQs on trustworthiness perceptions to track those of H1 and H2. We expect that if an STQ reduces misinformation belief, it may also reduce the perceived trustworthiness of the source, as more credible sources are expected to share more true information (Traberg and van der Linden 2022). If the results match this hypothesis, it could limit the spread not only of the identified misinformation but also the reliance of the reader on the poster for future information.

Individuals are more likely to believe political information that aligns with their partisan beliefs and less likely to believe information that does not align with these beliefs (Lodge and Taber 2013; Weeks and Garrett 2014), as partisan motivated reasoning drives individuals with strong political attachments to defend their existing beliefs even in the face of otherwise compelling evidence (Flynn et al. 2017; Peterson and Iyengar 2021). Political misinformation can be more difficult to counter than apolitical misinformation due to partisan attachments (Kunda 1990; Garrett et al. 2013), with the effectiveness of fact-checking methods yielding mixed results (Hameleers and van der Meer 2020; Iyengar and Hahn 2009; Nyhan and Reifler 2010).

As STQs are expected to work because they draw attention to information truth, we expect that STQs will be most effective when paired with misinformation that would otherwise be interpreted uncritically, as is often the case with information that is aligned with partisan leanings. Because individuals are already more likely to question and reject information that is misaligned with their partisan leanings, we expect the addition of STQs to have less of an effect—that is, if an individual is already unlikely to believe or share a message—or trust the source of that message—the addition of an STQ may not change things further. Given the continued protestation of results and the polarization of the public, the highly politicized context of Kenya’s 2022 presidential election serves as a “least likely” test of the efficacy of STQs on misaligned misinformation.

One of the primary weaknesses of the fact-checking methods available to the public remains the necessity of a distinct claim to counter. While specific false claims represent a unique threat to political legitimacy, other types of misinformation are also prevalent during election periods. As STQs do not rely on fact-checks, the persistence of their effects to misinformation that cannot be fact-checked would allow them to be uniquely suited to a wider set of circumstances than current methods. In order to test this possibility, we tested the effect of STQs on two types of nonfact-checkable misinformation: unproven and ambiguous misinformation.

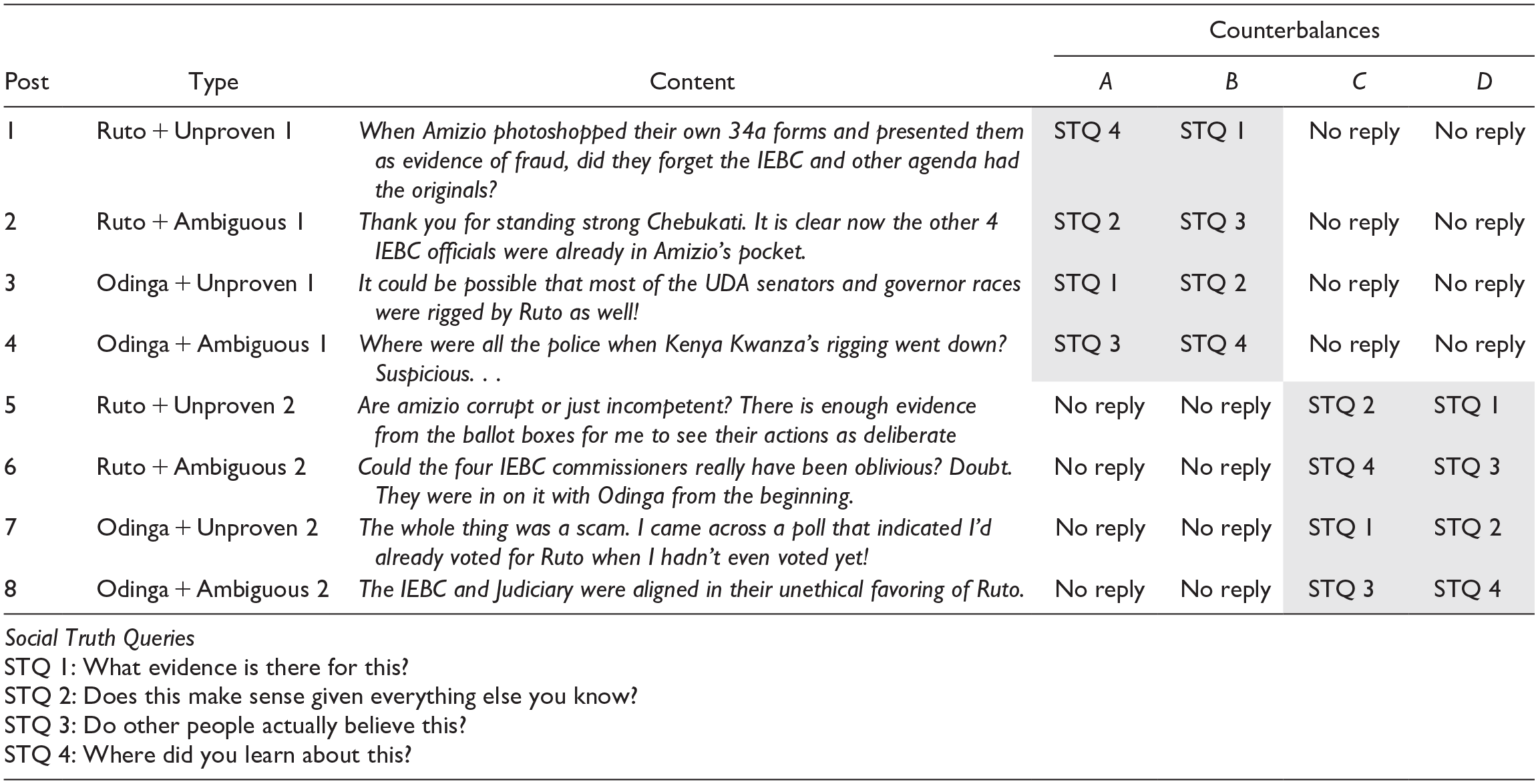

We define unproven misinformation as misinformation that contains discrete claims beyond the reach of fact-checking methods (Row 1 of Table 1). This includes misinformation that relies on either private information or information that is too costly to investigate. Although it is possible these claims could become fact-checked at a later time when resources or evidence become available, they could not be feasibly fact-checked at the time of their circulation. Second, we define ambiguous misinformation as misinformation that contains claims with vague references or hints at illegitimacy but does not present a coherent theory, narrative, or evidence that could be fact-checked (Row 2 of Table 1). The distinction between these groups was in part based on discussions with fact-checkers actively investigating rumors during electoral periods in LMICs. The simplicity of each category should improve opportunities for the replication of this classification process for labeling political misinformation. 1

Example Posts by Type of Misinformation.

We hypothesize that STQs will be beneficial in combating both unproven and ambiguous misinformation. Theoretically, as with fact-based claims, STQs should similarly push readers to consider the truth of unproven and ambiguous misinformation even when they may otherwise not consider the posts to contain slanted or biased information. An overview of the composition of misinformation present on social media platforms during Kenya’s 2022 election is contained in Supplemental Information file, Appendix B. In total, reflecting our concern regarding the potential limitations of fact-checking in LMICs and Kenya more specifically, we find that 61% of identified misinformation was categorized as either unproven (32%) or ambiguous (29%). We rely on data from this subset for our analyses to ensure that we are testing STQs on the types of misinformation that could not have been addressed by fact-checking efforts.

Research Methods

Survey Design

We constructed a survey to test these hypotheses among Kenyans in the aftermath of the 2022 general election (N ~ 4,000). 2 Participants were randomized to one of three versions of our survey, one for each of our outcomes of interest: Accuracy (N = 1,297), Sharing (N = 1,338), and Trust (N = 1,376). The three surveys were identical except for which of these three dependent variables we measured. We chose to only ask about one of these measures for each participant because preceding questions can influence responses to later questions (Schwarz 1999). Specific to our outcomes of interest, asking participants to make judgments about a post’s accuracy can change participants’ sharing behavior or intent to share (e.g., Pennycook et al. 2021).

Participants were paid 101 Kenyan Shillings (KES) for their participation in the survey, which was estimated to take 10 minutes to complete. Participants were recruited using online advertisements on Facebook, with targeting for recruitment stratified by region, age, and gender based on information contained in their public profiles (Rosenzweig and Zhou 2021). 3 Recruitment advertisements were distributed from an account associated with the Center for an Informed Public (CIP). 4 To ensure high quality in our survey responses, we removed participants who failed our attention check as well as participants who completed the survey in under three minutes.
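The exclusion rule described above can be sketched as a simple filter. This is a minimal illustration rather than the authors' actual cleaning pipeline: the `Response` record, its field names, and the sample data are hypothetical, and the only details taken from the text are the attention check and the three-minute threshold.

```python
from dataclasses import dataclass
from typing import List

MIN_DURATION_SECONDS = 3 * 60  # completions faster than three minutes are dropped


@dataclass
class Response:
    # Hypothetical record layout; the field names are illustrative assumptions.
    participant_id: str
    passed_attention_check: bool
    duration_seconds: float


def is_valid(response: Response) -> bool:
    """Keep a response only if it passed the attention check and was not rushed."""
    return (
        response.passed_attention_check
        and response.duration_seconds >= MIN_DURATION_SECONDS
    )


def filter_responses(responses: List[Response]) -> List[Response]:
    """Return the subset of responses that satisfy both quality criteria."""
    return [r for r in responses if is_valid(r)]


# Made-up sample data for illustration only.
sample = [
    Response("p1", passed_attention_check=True, duration_seconds=540),
    Response("p2", passed_attention_check=False, duration_seconds=600),
    Response("p3", passed_attention_check=True, duration_seconds=120),
]
kept = filter_responses(sample)
```

Treating exactly three minutes as acceptable is an assumption of this sketch; the text only states that completions under three minutes were removed.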

Post Creation

Our survey employed a two (STQ: treatment or control) by two (political alignment: aligned vs. misaligned) by two (misinformation type: unproven vs. ambiguous) within-subjects design. Each participant judged eight key posts, half aligned and half misaligned with their political orientation, half containing ambiguous and half containing unproven misinformation, and half appearing with and half appearing without an STQ reply (see Figure 1).
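As a rough illustration of this design, the eight cells can be enumerated by crossing the three two-level factors, so that each participant's eight key posts fill one cell each. The factor labels below are our own shorthand, not identifiers from the study materials.

```python
from itertools import product

# Factor levels; the string labels are illustrative shorthand.
STQ_CONDITION = ("stq_present", "stq_absent")
ALIGNMENT = ("aligned", "misaligned")
MISINFO_TYPE = ("unproven", "ambiguous")

# Full factorial crossing: 2 x 2 x 2 = 8 within-subject cells.
cells = list(product(STQ_CONDITION, ALIGNMENT, MISINFO_TYPE))

for stq, alignment, misinfo_type in cells:
    print(f"{stq:12s} {alignment:11s} {misinfo_type}")
```

Because every factor is crossed with every other, half of the cells fall under each level of each factor, which matches the "half and half" description of the eight key posts.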

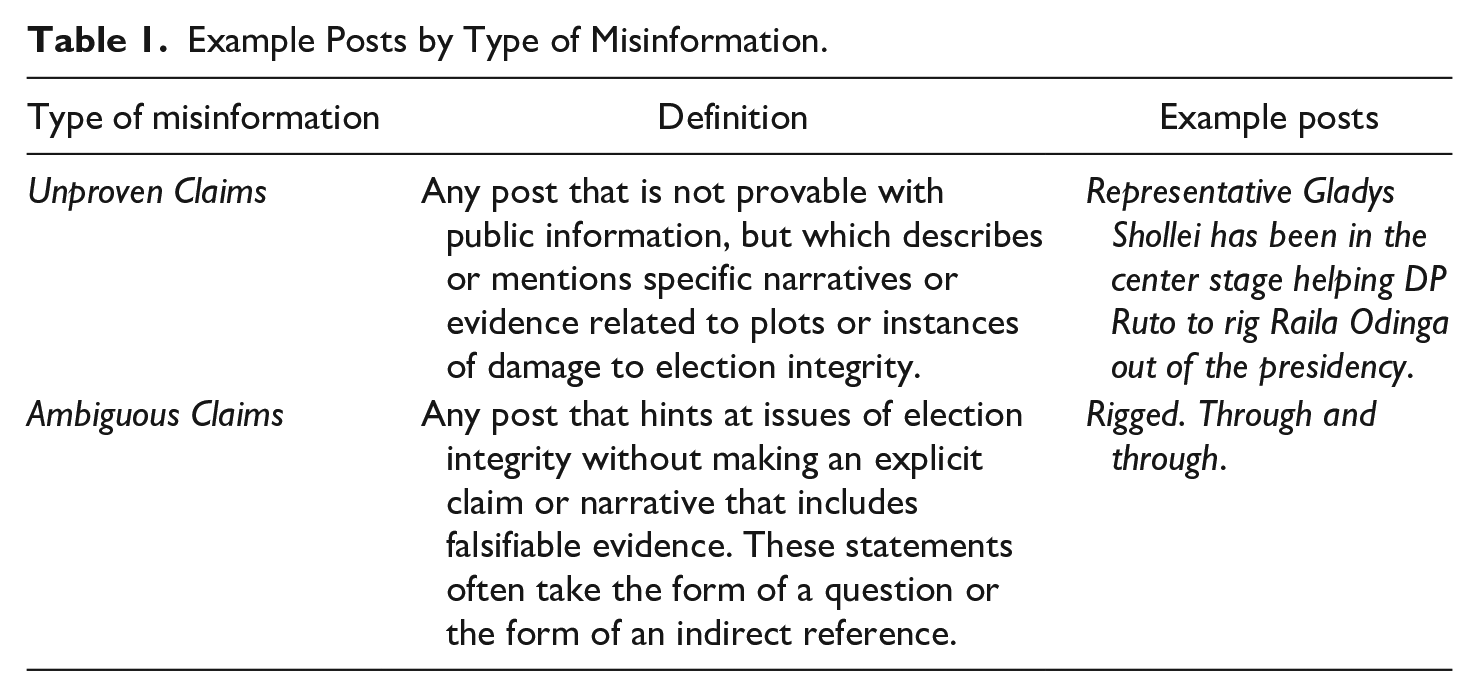

An example of the STQ present (treatment) and STQ absent (control) versions of a post.

Starting in June 2022 and continuing through the postelection appeals in September, we collected a set of over two million Twitter posts relating to the election in order to identify election misinformation using Twitter’s (now deprecated) Academic API. 5 From this collection, 2,600 posts were randomly selected and coded by the authors to identify posts containing false or misleading election rumors. In addition to this Twitter collection, we recruited a set of local volunteers to identify potential misinformation across both mainstream social media platforms and EMAs, including WhatsApp, Telegram, and TikTok. To simplify the process and to ensure that it had been widely publicized, we narrowed our focus to misinformation present in both the initial Twitter collection and the crowdsourced submission process. Of the matching sets, each claim was examined by at least one of the authors for accuracy and the set was further narrowed to ensure that only false and misleading election claims were eligible for inclusion. By focusing on this subset, we attempt to test the applicability of STQs on the misinformation they are best suited theoretically to counter. In this context, STQs do not represent a substitute for fact-checking efforts, but rather a complement capable of extending the reach of debunking methods to improve the coverage of debunking strategies in resource-scarce contexts. A final set of 72 claims was integrated into a pre-survey to enable norming by accuracy and partisanship. From this process, which is explained in more detail in Supplemental Information file, Appendix E, a final set of eight key posts was selected.

To test and account for the role of partisanship, we selected four posts aligned with William Ruto and four aligned with Raila Odinga. Depending on the partisanship of the respondent, these were coded as either aligned (Ruto-Ruto; Odinga-Odinga) or misaligned (Ruto-Odinga). We code posts containing misinformation as aligned or misaligned based on their political subject and target, with discussions taking place between the researchers and local experts to adjudicate the partisan lean of narratives for which the alignment was uncertain. For both the four Ruto-aligned posts and the four Odinga-aligned posts, half of the posts (two) contained ambiguous misinformation and half of the posts (two) contained unproven misinformation.

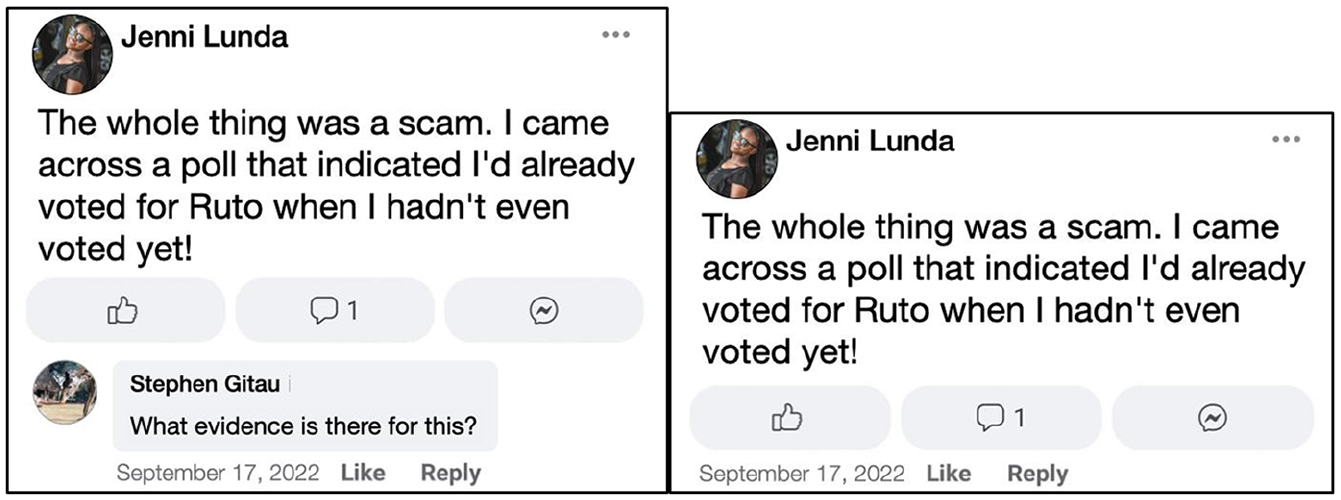

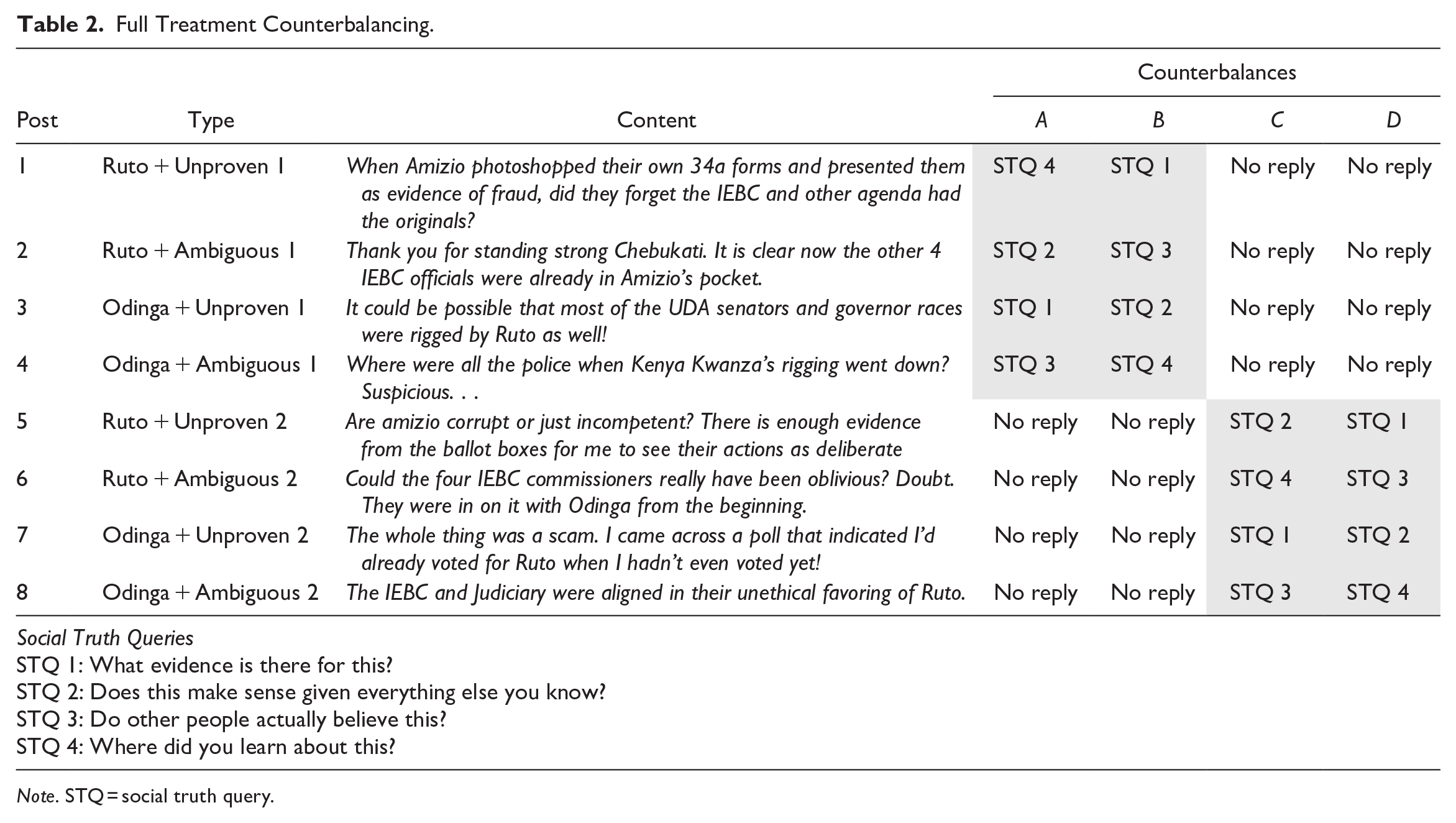

To test the effects of STQs on these posts, we wanted participants to see STQs on half of each type of post. Thus, we divided our key posts into two sets of four, with each set of four containing one post of each of the following types (Ruto-aligned/ambiguous, Ruto-aligned/unproven, Odinga-aligned/ambiguous, Odinga-aligned/unproven). Each participant saw one of these sets of four posts with an STQ. In doing this testing, we also wanted to ensure that any differences observed between our STQ-present and STQ-absent posts were due specifically to the presence of an STQ reply and not due to any variation in the posts themselves (i.e., item effects). To do this, we varied sets of posts so that each post appeared approximately the same number of times, with and without an STQ, between participants. In addition, we wanted to make sure any STQ findings were generalizable and not an idiosyncratic result of assigning specific STQs to specific posts. To do so, we varied which of our four STQs were matched with each post between participants so that, when a post did appear with an STQ, there were two separate STQ selections. To vary both sets of potential outcomes, we created four different counterbalances of our stimuli and assigned participants to one at random. Full details of the posts and STQs in each of these four counterbalances can be viewed in Table 2.

Full Treatment Counterbalancing.

Note. STQ = social truth query.

More information on the process used to create stimuli from these posts can be found in Supplemental Information file, Appendix G.
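The counterbalancing logic described in this section can be sketched as follows. This is a hedged illustration, not the authors' actual stimulus assignment: the post and STQ labels are hypothetical placeholders, and the rotation used to pair STQs with posts is an assumed scheme chosen only to satisfy the stated constraints (two sets of four posts, four counterbalances, and two different STQs per post). The actual pairings are those reported in Table 2.

```python
import random
from itertools import product

CANDIDATES = ("Ruto", "Odinga")
MISINFO_TYPES = ("ambiguous", "unproven")
STQS = ("STQ-1", "STQ-2", "STQ-3", "STQ-4")  # hypothetical labels for the four STQs

# Two sets of four key posts; each set holds one post per candidate/type cell.
SET_A = [f"{c}/{t}/A" for c, t in product(CANDIDATES, MISINFO_TYPES)]
SET_B = [f"{c}/{t}/B" for c, t in product(CANDIDATES, MISINFO_TYPES)]


def build_counterbalances():
    """Cross 'which set carries STQs' with 'which STQ pairing' to get four versions."""
    versions = []
    for treated, control in ((SET_A, SET_B), (SET_B, SET_A)):
        for shift in (0, 1):  # assumed rotation: gives each post two different STQs
            version = {
                post: STQS[(i + shift) % len(STQS)]
                for i, post in enumerate(treated)
            }
            version.update({post: None for post in control})  # None = no STQ reply
            versions.append(version)
    return versions


COUNTERBALANCES = build_counterbalances()


def assign_counterbalance(rng: random.Random) -> dict:
    """Assign a participant to one of the four counterbalances at random."""
    return rng.choice(COUNTERBALANCES)
```

Under this scheme each post appears with an STQ in two of the four versions and without one in the other two, so item effects are balanced across the treatment and control conditions when participants are spread evenly over versions.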

Survey Procedure

Participants were informed that our interest was to learn more about Kenyan perceptions of recent social media posts and that they would be presented with a series of twelve recent social media posts. They were also told that some of the posts contained true information while other posts contained false or unproven information and asked to refrain from looking up answers during the survey.

Participants then saw twelve posts: the eight key posts described above (half appearing with STQs, half appearing without STQs) and four filler posts. 6 These posts were presented in a randomized order. Participants in the accuracy version of the survey answered, “How accurate is the information in this post?” on an unnumbered six-point scale ranging from “very accurate” (coded as 6) to “very inaccurate” (coded as 1). Participants in the sharing version of the survey answered, “How likely would you be to share this post?” on an unnumbered six-point scale ranging from “very likely” (coded as 6) to “very unlikely” (coded as 1). Finally, participants in the trustworthiness version of the survey were asked, “How trustworthy is the author of this post?” with answers ranging from “very trustworthy” (coded as 6) to “very untrustworthy” (coded as 1).

Following this judgment task, we asked participants across all three survey versions to indicate who they voted for in the recent election with the following response options: William Samoei Ruto - Kenya Kwanza Alliance, Raila Amolo Odinga - Azimio la Umoja, I didn’t vote, and Other. Participants who answered that they did not vote or who selected Other were then asked if, of the top two candidates, there was a party or candidate they preferred. We additionally collected measures of whether participants thought the election was free and fair and how likely it was that one of the primary candidates had attempted to use fraud to win the election.

Participants then completed demographic questions, which included the collection of self-reported data on ethnicity, gender, age, and education, among others. 7 Finally, participants were debriefed regarding the facticity of the posts they had seen, including a reminder that there has been no evidence that widespread fraud took place during the Kenyan election.

Results

Can social truth queries limit the influence of electoral misinformation when fact-checks are unavailable? We first assess the consistency of the results with Hypotheses 1–3, which examine the effect of the inclusion of any one of the utilized STQs on the perceived accuracy (H1), sharing intentions (H2), and the perceived trustworthiness of the poster (H3). That is, this analysis looks at how much the inclusion of an STQ in the comments altered survey responses.

To assess the effects of STQs on these outcomes, we first compare the ratings of all treatment posts (posts that appeared with STQs) and all control posts (posts appearing without STQs), pooling together posts aligned and misaligned with the partisanship of the reader. Accuracy, sharing, and trust responses were coded from our six-point scales such that higher values represent higher accuracy, higher willingness to share, and higher perceived trustworthiness of the poster. All subsequent tables present outputs from regression models with clustered heteroskedasticity-consistent standard errors (HC1) to help account for within-subject variation. All models include controls for gender, age, region, education level, ethnicity, employment, religion, and misinformation type. 8
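As a rough illustration of the pooled treatment-versus-control comparison with participant-clustered standard errors, the sketch below estimates the difference in ratings and a cluster-robust standard error in plain Python. It is not the authors' code: the row layout, the function name `clustered_treatment_effect`, the omission of the control variables, and the specific small-sample correction G/(G-1) · (N-1)/(N-K) are all assumptions made for illustration.

```python
from collections import defaultdict
from math import sqrt


def clustered_treatment_effect(rows):
    """Estimate the STQ effect from (participant_id, treated, rating) rows.

    `treated` must be the integer 0 (control post) or 1 (post with an STQ).
    Fits rating ~ 1 + treated by OLS; with a single binary regressor the
    slope equals the difference in group means. Returns (effect, se).
    """
    treated = [y for _, t, y in rows if t == 1]
    control = [y for _, t, y in rows if t == 0]
    n1, n0 = len(treated), len(control)
    mean1, mean0 = sum(treated) / n1, sum(control) / n0
    effect = mean1 - mean0

    # Sum OLS residuals within each participant, separately by arm.
    resid_sums = defaultdict(lambda: [0.0, 0.0])  # pid -> [control, treated]
    for pid, t, y in rows:
        resid_sums[pid][t] += y - (mean1 if t == 1 else mean0)

    # Sandwich variance of the slope: for this two-column design matrix each
    # cluster's contribution simplifies to (U1/n1 - U0/n0) squared.
    meat = sum((u1 / n1 - u0 / n0) ** 2 for u0, u1 in resid_sums.values())

    # Assumed small-sample correction (an illustrative choice, see lead-in).
    n, k, g = len(rows), 2, len(resid_sums)
    correction = (g / (g - 1)) * ((n - 1) / (n - k))
    return effect, sqrt(correction * meat)
```

Calling the function on rows in which each participant contributes both treated and control ratings returns the pooled effect together with a standard error that allows responses from the same participant to be correlated.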

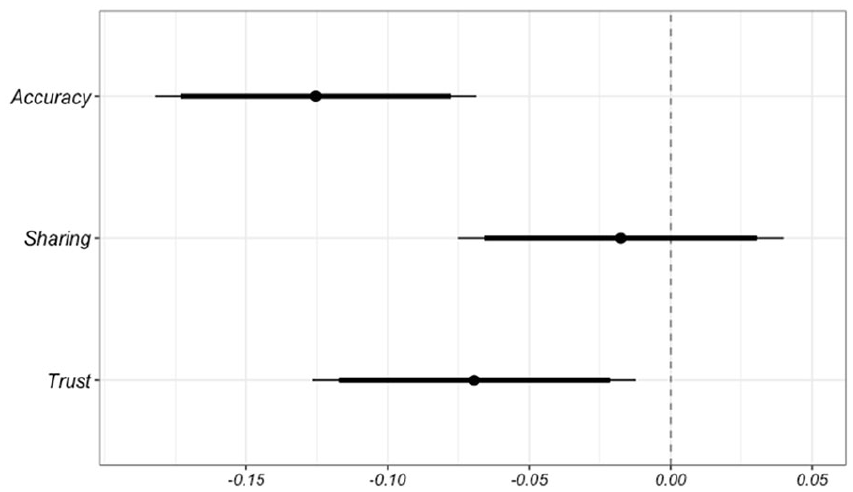

See Figure 2 for the aggregated results by treatment group, with the longer and shorter confidence intervals representing significance at the 95% and 90% levels, respectively. 9

Aggregate effect of STQ inclusion across treatment groups (H1–H3).

In assessing H1, we see that the addition of STQs to social media posts significantly reduces the likelihood that a respondent assesses rumors to be accurate relative to the control group. With respect to H2, although the direction of the results is consistent with the hypothesis, the effect on participants’ sharing intentions is indistinguishable from zero. Finally, with respect to H3, the results show that STQs also significantly reduce respondent perceptions of the trustworthiness of the misinformation posters. Overall, considering low initial ratings and the highly politicized domestic electoral environment, STQs appear most helpful in reducing perceptions of rumor accuracy (H1) with additional evidence that they serve to lower perceptions of poster trustworthiness (H3).

Though not pre-registered, due to high rates of disbelief in the misinformation stimuli, we also derive an observed measure of each respondent’s susceptibility to misinformation to assess the influence of the treatment on the participants most likely to be influenced. To develop this score, we employ a linear regression model to predict participant susceptibility using only the control stimuli (the posts which did not contain STQs), based on the following set of demographic variables: respondent gender, age, education, ethnicity, employment, urbanization, province of residence, and religion. This data-driven framework allows us to generate continuous measures of susceptibility grounded in the participants’ own responses, providing distinct susceptibility scores for each of the three survey groups (Accuracy, Sharing, and Trust) which are not confounded by treatment effects. Using these generated coefficients, we can calculate a susceptibility score for each participant which is not dictated by their own survey responses. Cohort selection in the main analyses contained in Figure 3 uses a threshold of one standard deviation greater than the mean. 10
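The scoring procedure described above can be sketched as follows: fit a linear regression on control-post ratings only, use the fitted coefficients to predict a score for every participant from their demographics, and flag those scoring more than one standard deviation above the mean. This is a minimal sketch under stated assumptions rather than the authors' implementation: all function names are hypothetical, demographics are assumed to be already numerically coded (categorical variables would need dummy coding with a reference category), and the sample standard deviation is an assumed choice.

```python
from statistics import mean, stdev


def solve(a, b):
    """Solve the linear system a x = b by Gauss-Jordan elimination."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        # Partial pivoting; a zero pivot means the predictors are collinear.
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                f = m[r][col] / m[col][col]
                m[r] = [x - f * y for x, y in zip(m[r], m[col])]
    return [m[i][n] / m[i][i] for i in range(n)]


def fit_ols(X, y):
    """Return OLS coefficients via the normal equations (X'X) beta = X'y."""
    k = len(X[0])
    xtx = [[sum(r[i] * r[j] for r in X) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * yi for r, yi in zip(X, y)) for i in range(k)]
    return solve(xtx, xty)


def susceptibility_scores(control_rows, participants):
    """Predict a score per participant from a model fit on control posts.

    control_rows: list of (features, rating) for posts shown without STQs.
    participants: dict mapping participant id -> features.
    """
    X = [[1.0] + list(f) for f, _ in control_rows]  # prepend intercept
    y = [rating for _, rating in control_rows]
    beta = fit_ols(X, y)
    return {pid: sum(b * x for b, x in zip(beta, [1.0] + list(f)))
            for pid, f in participants.items()}


def susceptible_cohort(scores):
    """Return ids scoring more than one SD above the mean score."""
    values = list(scores.values())
    cutoff = mean(values) + stdev(values)
    return {pid for pid, s in scores.items() if s > cutoff}
```

Because the model is fit only on control posts and scores are predicted from demographics, a participant's score does not depend on how that participant responded to the treated posts.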

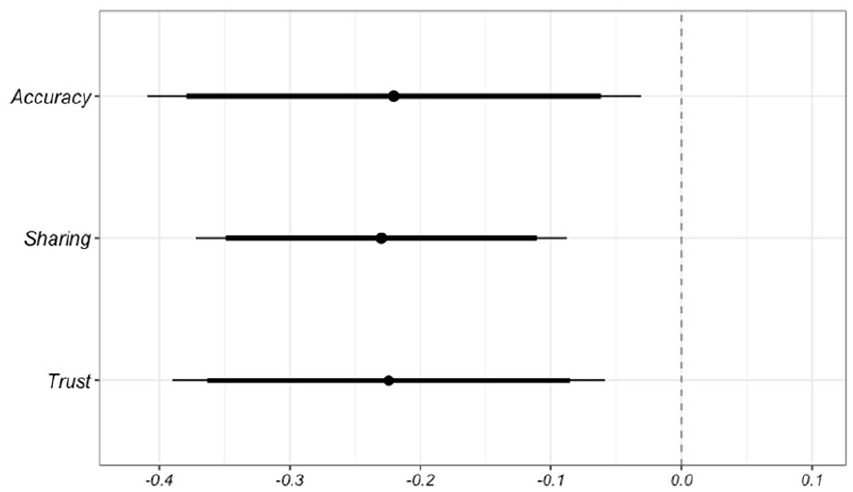

Effects of STQ inclusion among susceptible participants.

Figure 3 details the results of the main STQ analyses conducted among this susceptible subset.

As illustrated in Figure 3, the effects of STQs among this high-priority group are at least twice as large across the main dependent variables for each survey (Accuracy, Sharing, and Trust). 11 These results are consistent with the theory that STQs work by drawing attention to the truth of information among users who would otherwise accept it as valid. We find the amplified effects among this subset to be one of the most promising results related to the future impact of STQs.

Partisan Alignment

We next assess whether the efficacy of STQs depends on whether targeted misinformation is aligned or misaligned with the partisanship of the respondent (H4) in order to address challenges related to partisan-motivated reasoning. Partisanship was taken from self-reported data on voting history in the 2022 election, resulting in 1,070 Odinga voters, 1,990 Ruto voters, and 951 third-party or nonvoters. For the analysis, we coded claims as “1” when they were political claims aligned with the respondent’s partisan preferences, and as “0” when they were misaligned. 12 Third-party and nonvoter respondents were not included in this analysis. 13
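The alignment coding described above can be sketched as a small helper. The field names and the idea of tagging each claim with the candidate whose supporters it favors (`claim_favors`) are assumptions made for illustration; the exact rule for deciding which claims count as aligned is given in the authors' notes rather than here.

```python
MAJOR_CANDIDATES = {"Ruto", "Odinga"}


def code_alignment(vote, claim_favors):
    """Code a respondent-claim pair as aligned (1) or misaligned (0).

    vote: the respondent's self-reported 2022 vote (hypothetical labels).
    claim_favors: the candidate whose supporters the claim is congenial to
        (a hypothetical tag attached to each post).
    Returns None for third-party voters and nonvoters, who are excluded.
    """
    if claim_favors not in MAJOR_CANDIDATES:
        raise ValueError("claim must be tagged with a major candidate")
    if vote not in MAJOR_CANDIDATES:
        return None
    return 1 if vote == claim_favors else 0


def alignment_rows(responses):
    """Keep only codable responses, attaching the alignment indicator."""
    rows = []
    for r in responses:
        aligned = code_alignment(r["vote"], r["claim_favors"])
        if aligned is not None:
            rows.append({**r, "aligned": aligned})
    return rows
```

Returning `None` for excluded respondents, rather than silently coding them as misaligned, keeps the exclusion explicit in any downstream analysis.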

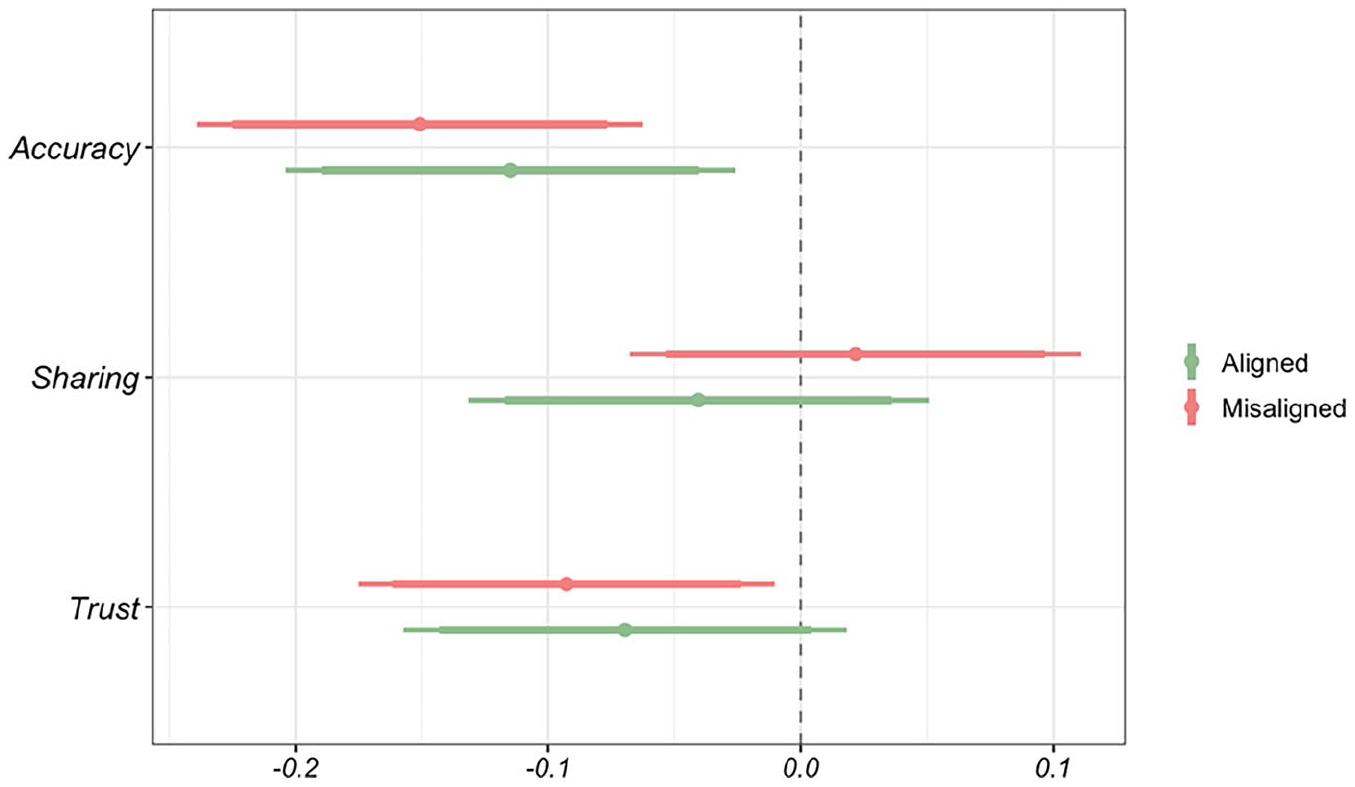

Figure 4 details the effect of STQs on aligned and misaligned misinformation posts. 14

Effects of STQ inclusion on misaligned versus aligned posts.

Contrary to expectations presented in H4, the results presented in Figure 4 show that the effect of STQs did not differ depending on whether the targeted misinformation was aligned or misaligned with the reader’s partisanship. Rather, STQs had similar effects on accuracy and trustworthiness perceptions across both claim types, speaking to the potential utility of STQs in partisan environments. In terms of real-world influence, as misinformation is often spread by a small proportion of active posters (Kennedy et al. 2022), reducing trust in these posters could potentially reduce interactions with these posters in the future. In addition, the persistence of the direction of the effect across all three treatment groups in pairings with posts from co-partisans (aligned posts) illustrates the potential for long-term benefits from STQs.

Finally, with candidate supporter splits depicted in full in Supplemental Information file, Appendix O, we find that the effects are most prominent among supporters of Raila Odinga. We also find the persistence of the well-documented “winner’s effect,” in which trust in electoral legitimacy differs depending on whether a respondent’s supported candidate won (Berlinski et al. 2023; Sinclair et al. 2018). Given the potential consequences of elevated belief in misinformation among this cohort, the persistence of the effects of STQ inclusion across treatment groups among Odinga supporters is highly encouraging. Collectively, the results of the political alignment analyses show that the efficacy of STQs on accuracy and trustworthiness perceptions is not substantially influenced by partisanship.

Misinformation Type

In addition to the alignment of the post, we examine the comparative efficacy of STQs across two prominent forms of misinformation: unproven and ambiguous misinformation (H5). This comparison tests whether STQs can serve as a substitute for fact-based corrections when those corrections are unavailable because the relevant information is private or prohibitively costly to obtain (unproven), and whether STQs can address misinformation to which fact-based corrections do not apply (ambiguous).

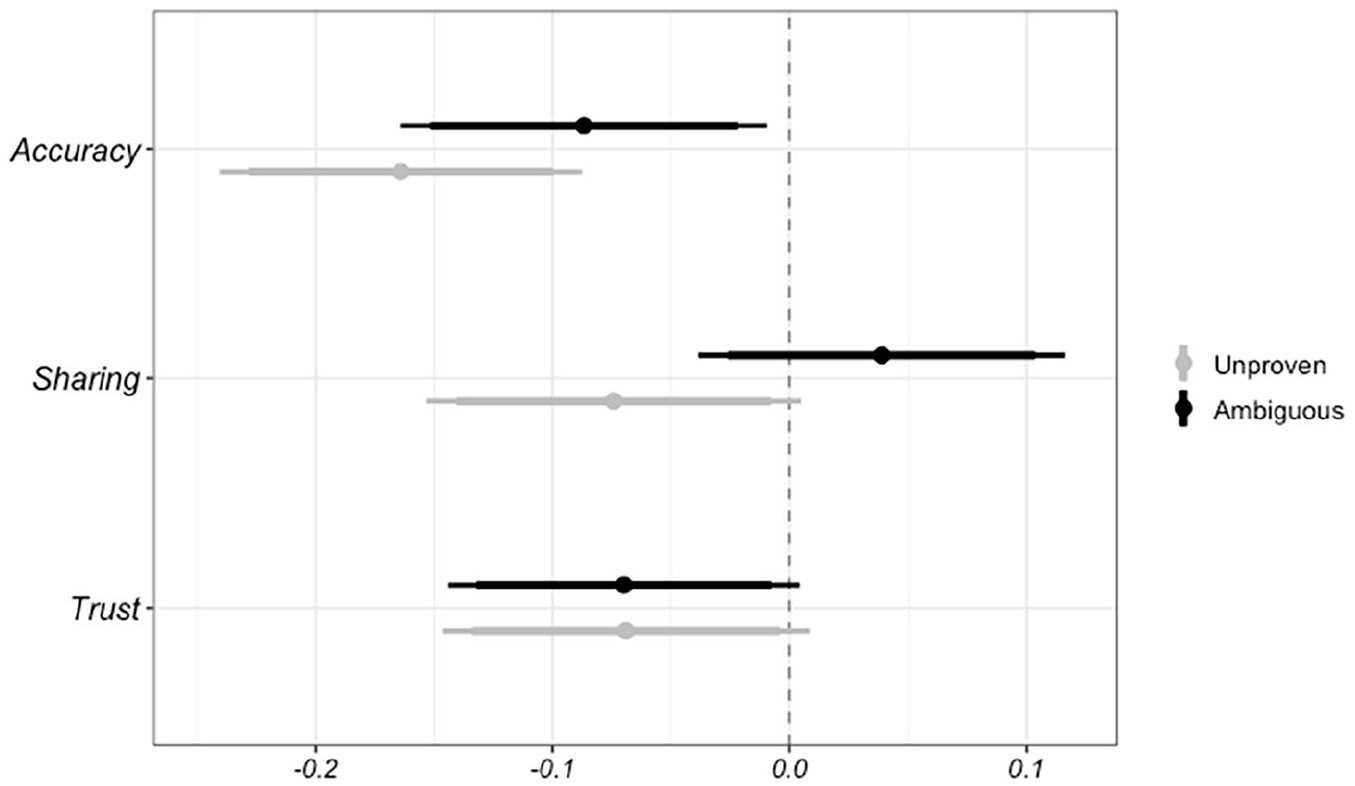

Figure 5 notes the effect of STQs on unproven and ambiguous misinformation. 15

STQ inclusion effects across unproven (gray) & ambiguous (black) misinformation.

The results suggest that STQs retain their effectiveness across both types of nonfact-checked misinformation, with the exception of the sharing condition. Specifically, in the sharing condition STQs were more influential in reducing sharing intentions when paired with unproven misinformation. That is, when the misinformation targets a specific actor and details the intent of the conspiracy, respondents were less likely to signal their intention to share the post than in the control condition. The direction of this effect was reversed when the misinformation was ambiguous. Though determining the reason for this split would require more research, we speculate that the low rates of reported sharing at baseline resulted in shifts that reflect emotional responses to claims that do not rely on specific targets. By contrast, perceptions of accuracy and trustworthiness show consistent directional effects across both forms of misinformation.

Taken in full, these results are encouraging as they suggest that STQs may serve as a complement to fact-checking across a range of misinformation narratives. Consistent with H5, the split results suggest that STQs are capable of countering multiple forms of noncheckable political misinformation.

Discussion and Conclusion

The primary contribution of the study is a test of “social truth queries” (STQs) as a novel strategy to address the spread of political misinformation. We specifically focus on the use of STQs to address the spread of misinformation in LMICs that could not be addressed using existing fact-checking approaches. In a survey of ~4,000 Kenyan participants, we find that the presence of STQs on social media posts containing misinformation reduced participants’ ratings of information accuracy and trust in the poster of the misinformation. Crucially, the effects of STQs on accuracy and trust judgments were similar for both posts containing misinformation aligned and misaligned with participant partisanship, as well as for two types of noncheckable misinformation (ambiguous and unproven). Encouragingly, these results were observed when using several different STQs and when varying STQ-post pairing, providing evidence that different types of STQs may be effective in addressing a variety of types of misinformation. However, we did not find STQs to be effective at shifting sharing intentions in this context.

STQs sidestep many of the challenges facing fact-checking efforts in LMICs, providing a flexible, user-driven approach that can be quickly implemented to address a variety of different types of misinformation, including misinformation that lies beyond the reach of fact-checking work. As such, STQs have the potential to extend the reach of debunking methods to additional forms of pernicious misinformation that threaten the legitimacy of elections in nascent democracies.

This work extends initial investigations of STQs (Jalbert et al. 2023) by demonstrating the effectiveness of STQs in reducing belief in partisan misinformation in the context of Kenya’s 2022 election. We demonstrate that STQs can be effective in reducing perceptions of the trustworthiness of misinformation purveyors, which is an important outcome given that an outsized proportion of misinformation is often shared by small but hyperactive groups of individual “repeat spreaders” (Kennedy et al. 2022). The persistence of the direction of the effect across all three treatment groups and within pairings with posts from co-partisans (aligned posts) illustrates the potential for STQs to depress trust in these posters.

Although Jalbert et al. (2023) found that the presence of STQs consistently reduced intent to share false information, we did not replicate these findings in our overall results. We suspect that these disparate findings are due to differences in the types of misinformation being targeted. While Jalbert et al. (2023) tested STQs on unfamiliar false claims that were unlikely to feel identity-relevant, we tested STQs on more familiar, identity-relevant misinformation narratives that were expected to be more difficult to address. Because Jalbert et al. (2023) generally found smaller effects on sharing intent judgments than on truth judgments, our lack of observed effects here may reflect both the particular difficulty of addressing this form of misinformation and the greater difficulty of shifting sharing intent. In addition to these insights, in differentiating between two types of electoral misinformation, we illustrate that STQs can be used when fact-based corrections are either inapplicable due to the content of the information (ambiguous misinformation) or unavailable due to time, cost, or accessibility constraints (unproven misinformation). That these results were generated following the contentious Kenyan election, when misinformation would be expected to be particularly difficult to address, provides further confidence regarding the potential portability of STQs to other contexts.

In addition to looking at the effects of STQs across all of our participants, we also conducted exploratory analyses looking at “susceptible” users within each survey group (accuracy, trust, or sharing). When looking at the impact of STQs among just these users, we observed more substantive effects across all three judgments. That the observed effects of STQs are largest among the people who were at baseline the most susceptible to misinformation is consistent with the idea that STQs are effective when they get users to consider information truth when they would not otherwise do so. If readers are already suspicious, then STQs are unlikely to further influence their judgment. These observations indicate that, while the effects of STQs may appear relatively small among all participants, figuring out a way to target STQs to contexts where users may be most susceptible may increase their impact.

While these results are encouraging, the specifics of the intervention necessitate caution before applying it broadly in the real world. Further research is needed to assess how users would deploy STQs in real time as well as how different platform affordances may alter the appearance (and influence) of STQs. For example, future examinations of the persistence of STQ effects should consider the number of comments present under each post, the emotional valence of accompanying comments, and the timing of the STQ comments. Relatedly, our methods were set up to broadly test the effectiveness of different STQ replies on a variety of posts rather than to provide a controlled test of different individual characteristics. Given this limitation, which led us to compare posts appearing with STQs only to posts with no replies, it is possible that our observed effects are driven by aspects of these replies which are not specific to the STQs, such as in-group trust or co-ethnicity. This possibility was tested more thoroughly and ruled out by Jalbert et al. (2023), who found that only replies containing STQs, and not unrelated replies from the same posters, reduced belief in and intent to share misinformation. However, it is worth confirming in future studies in additional contexts.

An additional consideration is the potential effect of STQs on true information. In our study, we only tested the impact of STQs on false information to mirror how this strategy is being implemented by the CABC, who rely on expert-trained volunteers to identify and respond to problematic posts and misinformation. One concern is that STQs may have negative effects if posted in response to credible information. As this possibility has not yet been tested, we currently recommend caution with implementing STQs in contexts where they are likely to appear on true information. Moreover, though we expect the electoral context has limited the ceiling of STQs, we cannot be certain that this is the case until they are examined in the real world beyond political settings. As a final consideration, we expand on ethical challenges related to the conduct of research using STQs and related interventions in Supplemental Information file, Appendix R.

Notwithstanding these areas for further research, the results from this initial study illustrate the broad applicability of STQs for moderating underlying attitudes toward misinformation in polarized political contexts and across various types of misinformation. Additional studies are needed to assess the applicability of STQs across contexts and social media platforms and to ensure that the messages selected for deployment as STQs are culturally relevant and politically neutral to maximize their influence among localized audiences.

Supplemental Material

sj-docx-1-hij-10.1177_19401612241301671 – Supplemental material for Social Truth Queries as a Novel Method for Combating Misinformation: Evidence From Kenya

Supplemental material, sj-docx-1-hij-10.1177_19401612241301671 for Social Truth Queries as a Novel Method for Combating Misinformation: Evidence From Kenya by Morgan Wack and Madeline Jalbert in The International Journal of Press/Politics

Footnotes

Acknowledgements

We would like to thank Jenna-Lee Strugnell and Stef Snel from the Centre for Analytics and Behaviour Change and Tales of Turning for their continued assistance in helping to design the intervention. We would also like to thank Luke Williams for his assistance coding data for the project.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research is supported by the John S. and James L. Knight Foundation through a grant to the Institute for Data, Democracy & Politics at The George Washington University.

The preparation of this article was supported by the University of Washington’s Center for an Informed Public and the John S. and James L. Knight Foundation.

Supplemental Material

Supplemental material for this article is available online.
