Abstract

Can residents of Ukraine discern between pro-Kremlin disinformation and true statements? Moreover, which pro-Kremlin disinformation claims are more likely to be believed, and by which audiences? We present the results from two surveys carried out in 2019, one online and the other face-to-face, that address these questions in Ukraine, a country that the Russian government and its supporters have heavily targeted with disinformation campaigns. We find that, on average, respondents can distinguish between true stories and disinformation. However, many Ukrainians remain uncertain about the truthfulness of a variety of disinformation claims. We show that the topic of the disinformation claim matters: disinformation about the economy is more likely to be believed than disinformation about politics, historical experience, or the military. Additionally, Ukrainians with partisan and ethnolinguistic ties to Russia are more likely to believe pro-Kremlin disinformation across topics. Our findings underscore the importance of evaluating the multiple types of disinformation claims present in a country and examining these claims’ target audiences.

Introduction

Sharing politically relevant information online and via social media is easier than ever (Tucker et al., 2017, 2018). As a result, however, people now routinely encounter a growing supply of untrue and misleading content, including disinformation: false or distorted claims deployed strategically to mislead people. Disinformation’s global explosion (Lazer et al., 2018) 1 has led to fears that it is eating away at the foundations of democratic societies, because it destroys the factual basis on which citizens predict the consequences of their choices (Rid, 2020). This degrades debates over public policy (Kuklinski et al., 2000) and hampers citizens’ ability to choose elected officials (Zimmermann and Kohring, 2020).

Beyond these theoretically driven governance concerns, media reports are rife with examples of disinformation associated with dangerous, tragic, or otherwise undesirable societal outcomes. In Myanmar, disinformation spread by the military was instrumental in the ethnic cleansing campaign carried out against the Rohingya community (Mozur, 2018). In the United States, a sustained disinformation campaign by former President Donald Trump and his political allies preceded his supporters’ January 6, 2021, attack on the U.S. Capitol, and, in 43 states, Republican lawmakers are using this disinformation campaign as a pretext to restrict access to voting (Gardner et al., 2021). In many countries across Europe, disinformation about the safety of vaccines has coincided with a resurgence of measles (Burki, 2019).

The theoretical concerns and empirical examples above raise a set of questions. First, can people tell the difference between factually based stories and disinformation, and are some people better at this than others? Writing in The Lancet about the prevalence of vaccine disinformation and the rise of measles, Burki (2019) points out that the impact of disinformation is difficult to assess. Understanding whether people can, in general, distinguish between true stories and disinformation is a critical step in assessing that impact. Second, given the wide range of topics subject to disinformation, can people distinguish between factual claims and disinformation more easily for some topics than for others? Third, does the apparent strategic objective of the disinformation shape whether people believe it? We address these questions using survey data from Ukraine.

Ukraine, intensively targeted by pro-Kremlin disinformation campaigns (Lange-Ionatamišvili, 2015), provides an ideal environment for this research. While an emerging research agenda tackles belief in fake news coverage in the United States (e.g., Bronstein et al., 2019; Clayton et al., 2020; Pennycook and Rand, 2018), including disinformation emanating from Russia (e.g., Porter et al., 2018), there is a paucity of research on how the public absorbs or reacts to pro-Kremlin disinformation elsewhere. Conducting this research in Central and Eastern Europe is particularly important, since many of these countries are theorized to have low levels of resilience to online disinformation (Humprecht et al., 2020) and high levels of exposure. These are the regions where researchers are best positioned to study the effect of disinformation and to determine whether Russian efforts succeed in getting people to pay attention to, and believe, disinformation that serves Russia’s interests. Ultimately, measuring the conditions under which disinformation is effective will enable policy-makers and others to design efficient countermeasures, thereby mitigating its negative impact.

In two separate studies, we ask Ukrainian respondents to assess the truth of each item in a battery of claims drawn from true news stories and from statements identified as pro-Kremlin disinformation by EU vs Disinfo (EUD), a monitoring website that is part of the European Union’s efforts to track and respond to Russian disinformation. We randomly assign statements to each respondent; each disinformation claim corresponds to one of four topics and one of three strategic objectives characteristic of pro-Kremlin disinformation. Each true statement that respondents rate also clearly corresponds to one of the four topic areas.

Many definitions of disinformation exist, not all of which are consistent with each other. 2 For our study, we selected statements that qualified as disinformation because they were either demonstrably false or asserted that improbable future events would occur. We limited our study to these types of disinformation because of our interest in how embracing false or improbable beliefs could erode the quality of a society’s democracy.

We find that the average respondent is usually able to distinguish between verifiably true news stories and pro-Kremlin disinformation claims. However, for one topic area, economics, subjects are less able to distinguish between true stories and disinformation, and for the other three topics respondents report low levels of certainty about the truth propositions of true stories. We do not find significant differences in respondents’ belief in stories across strategies of disinformation.

In addition to examining the relationship between topic, strategy, and belief, we also examine respondent-specific correlates of belief in disinformation. We find that those who have partisan or contextual connections with Russia, such as supporting a political party that advocates closer relations with Russia, preferring to communicate in Russian instead of Ukrainian, and claiming Russian ethnicity, are more susceptible to believing pro-Kremlin disinformation. In line with previous literature (e.g., Pereira et al., 2018), we also find that those who are more politically sophisticated are less likely to believe Russian disinformation.

We make two contributions to the existing literature. First, we build on previous classification schemes of pro-Kremlin disinformation claims that we test in two ways. The items in our disinformation battery span a variety of strategies associated with Russia’s goals (promoting Russia’s image, undermining Ukraine, and disparaging the West). Each item in both the true news stories and disinformation claims also falls into one of four topic areas (economics, history, military, or politics). Both the strategies and topics chosen were derived from a close reading of contemporary pro-Kremlin disinformation stories and background research on KGB disinformation strategies. Second, we build on prior literature by examining two sets of individual-level correlates (contextual closeness with Russia, and political sophistication and awareness) of belief in disinformation that have been shown to matter in other contexts (e.g., Nyhan, 2010; Allcott and Gentzkow, 2017), but have yet to be tested in Eastern Europe. These tests help us situate our findings in ongoing cross-national research on the correlates of belief in disinformation.

Pro-Kremlin Disinformation Campaigns

Like many authoritarian regimes, the Russian government effectively controls mass information domestically, but it cannot impose widespread censorship on foreign media outside its reach. Still, the Russian government and other pro-Kremlin actors want people abroad to believe pro-Kremlin claims about events, because people who believe Russia’s claims are more likely to support Russia’s actions (Lewandowsky et al., 2013). When persuasion is unfeasible, these campaigns aim to obfuscate or confuse, overwhelming individuals until they cannot distinguish between fact and fiction (Paul and Matthews, 2016; Pomerantsev and Weiss, 2014). Authoritarian regimes such as Russia that want to shape views abroad by spreading untrue claims internationally, whether directly or through their supporters, can use disinformation techniques within open media environments (Walker, 2016).

In the Putin era, Russia has vastly expanded its media presence outside its borders by offering media content in multiple languages. RT (formerly Russia Today), a Kremlin-backed global news channel, was created in 2005 as a counterweight to Western international media. Following the Ukrainian crisis, Russia also launched Sputnik, a Kremlin-backed news agency.

Pro-Kremlin actors have also spread disinformation on social media platforms. The 2016 U.S. presidential election provides one dramatic example of a coordinated Russian government effort to spread disinformation on social media. In late 2017, Facebook announced that 470 inauthentic accounts linked to Russian operations spent $100,000 on advertising to publish roughly 3,000 ads during the election period (Stamos, 2017). Similarly, Twitter notified 1.4 million users about potential interactions with accounts linked to the Internet Research Agency, a Kremlin-linked Russian organization (Twitter, 2018). These efforts targeting the U.S. presidential election are emblematic of Russia’s global disinformation efforts, which have affected countries worldwide (Helmus et al., 2018, 14–21).

Researchers have documented a diverse array of story subjects that Russian disinformation campaigns have targeted in recent years. These include attempts to promote hoaxes about Ebola outbreaks and chemical accidents in the U.S. (Chen, 2015) and interference in the 2016 U.S. presidential race (e.g., Grinberg et al., 2019; Jensen, 2018). Russia’s disinformation campaigns can also accompany its overt or covert military operations, as in Russia’s 2008 war with Georgia and its 2014 invasion of Ukraine’s Crimean peninsula (Iasiello, 2017). There is also substantial evidence that modern Russian disinformation campaigns have their roots in Soviet-era “active measures” (e.g., Shultz and Godson, 1984; Abrams, 2016). 3 Indeed, descriptions of Soviet efforts (e.g., Bittman, 1985) sound remarkably similar to journalistic accounts of Russian disinformation tactics today.

Russia’s disinformation campaign targeting Ukraine is well documented (e.g., Jaitner and Mattsson, 2015; Mejias and Vokuev, 2017). Russia has been described as taking a “4D approach”: dismiss, distort, distract, and dismay (Snegovaya, 2015). In addition to promoting Russia and attacking the West, these campaigns also seek to undermine Ukraine. They have been marked by consistently repeated claims, for example, the Russian media’s multiple false explanations for the downing of Malaysia Airlines flight MH17 (e.g., the U.S. shot down the aircraft in order to damage Russia’s reputation; the aircraft was hit by a Ukrainian missile fired from the ground), many of which were subsequently discredited by the Ukrainian government (Lange-Ionatamišvili, 2015).

Topics and Strategies of Russian Disinformation

Our first contribution is to classify pro-Kremlin disinformation statements according to the topic they cover and the strategic objective they advance, and to measure belief in each type quantitatively. Since these disinformation campaigns have covered a wide range of events, we sought to categorize them systematically. Our close reading of pro-Kremlin disinformation articles leads us to a topic categorization similar to that of Meiselman et al. (1987), who wrote of Soviet disinformation during the Cold War, “There are basically three types of disinformation: political disinformation, military disinformation, and economic disinformation” (33). We argue that many contemporary pro-Kremlin disinformation stories can be grouped into these three high-level topics. To them we add a fourth category, historical/cultural claims or statements (labeled historical for brevity), which we found abundant and which did not fit into the other three. We chose these four areas because of their relevance to both historical and contemporary Russian disinformation efforts. We present each category below; the categories should apply generally to pro-Kremlin disinformation, but we focus on Ukrainian examples, given that our study is carried out in Ukraine.

Economic coverage may be a fecund area in which to sow disinformation, particularly in countries with a history of economic mismanagement such as Ukraine. Citizens may be susceptible to stories that disparage the domestic economy because they are accustomed to hearing true stories of economic mismanagement and corruption. Such mismanagement has pervaded Ukraine’s post-Soviet history (Åslund, 2005) and continues to plague contemporary Ukraine (Denisova-Schmidt and Prytula, 2017).

Citizens of nascent and noninstitutionalized democracies, such as Ukraine, may also believe political disinformation because of the weakness of political and democratic institutions (Way, 2015). By analogy with the relationship between weakening political institutions in OECD democracies and increased belief in disinformation (Bennett and Livingston, 2018), a lack of political institutional authority may make citizens more likely to believe disinformation about politics. Indeed, Ukrainians often view political actors as likely to deceive them and engage in unscrupulous behavior (Chudowsky and Kuzio, 2003, 278). As with the economic topic, disinformation about Ukrainian politicians or governing structures may sway audiences accustomed to hearing negative news about their politicians.

Pro-Kremlin disinformation claims about the military deserve particular attention in Ukraine. Military-related disinformation forms a recurring part of Russia’s global disinformation efforts and was used to distract or mislead the international community and the Ukrainian population during Russia’s invasion of Crimea and its support for insurgents in eastern Ukraine (Iasiello, 2017). Additionally, Russia has used disinformation aimed at discrediting NATO and other Western institutions to reduce their allure within Ukraine (Lange-Ionatamišvili, 2015). Finally, Russia has spread falsehoods about the actions of the Ukrainian military (Khaldarova and Pantti, 2016). Prior research suggests that military-related disinformation could be highly believable because, as with rumors, citizens usually do not have firsthand information about a conflict, and latching onto the disinformation claim can be a mechanism for coping with anxiety (Greenhill and Oppenheim, 2017).

We also find a large quantity of pro-Kremlin disinformation attempting to taint Ukrainian leaders with unsavory historical or cultural connections. This type of disinformation leverages the propaganda technique that Sproule (2001) has classified as “name-calling,” which has been found to be prevalent in other contemporary propaganda contexts (e.g., Lakomy, 2020). Russia’s use of “name-calling” in the history topic likely also emerges out of what have been dubbed the “memory wars” (Koposov, 2017). Russian leadership maintains the Soviet view that Russia was a liberating victor of World War II that vanquished the Nazis. Therefore, disinformation claims that fall in the historical topic tend to paint individuals or governments as having fascist or Nazi connections and attempt to reinforce Russia’s historical propaganda.

Along with varying topics, pro-Kremlin disinformation also appears to pursue varying strategic objectives. In one analysis, Kragh and Åsberg (2017) investigate a corpus of 3,963 Swedish Sputnik articles published in 2015 and find ten strategies, such as sowing “crisis in the West,” promoting a “positive image of Russia,” highlighting “Western aggressiveness,” and others. In a meta-analysis of annual reports from security services in 11 countries, Karlsen (2019, 10) similarly finds that a common strategy is to blame problems on Western actions. In the Baltic States, common claims focus on the return of Nazism and fascism and emphasize Soviet heroism in WWII and NATO aggression. We categorize all of these strategies into three types emerging from both recent scholarship and the literature on Soviet disinformation practices: “Undermine the government of the country” (undermine Ukraine), “Build up Russia” (pro-Russia), and “Disparage Western partners” (anti-West).

Which Audiences Believe pro-Kremlin Disinformation?

Our second contribution is to examine two sets of correlates of belief in disinformation. The first comprises correlates that link individuals to Russia, which we term contextual traits. The second set relates to political sophistication and awareness. Both sets allow us to place our study of Ukraine in cross-national perspective.

Supporting pro-Russia political parties is the first contextual trait we explore. Evidence from the United States finds partisans are more likely to believe information congruent with their political beliefs (Pereira et al., 2018), and Russian disinformation appears to target those already most supportive of the Russian government’s views (Kragh and Åsberg, 2017). Additionally, there is evidence that while RT sought to make its message compelling to a broad audience, it appealed primarily to those who distrust the American government (Yablokov, 2015). Evidence also exists that, in polarized environments such as the United States and Ukraine, supporters of parties that are out of power (the relatively pro-Russia parties in Ukraine at the time of our studies) are less likely to trust statements in the news media (Humprecht, 2019), which can lead to more uncertainty and less accuracy about whether statements are true or false. Given this partisanship and polarization, it stands to reason that Ukrainians who support pro-Russia political parties should be less likely than those who do not to think true claims are true, and more likely to believe pro-Kremlin disinformation claims are true.

Language and ethnicity are two other individual-level characteristics that may also correlate with belief in pro-Kremlin disinformation. On the one hand, studies have shown that Ukrainians tend not to make political choices on the basis of ethnicity or language (Erlich and Garner, 2021; Frye, 2015), part of a larger accommodation of difference (Wanner, 2014, 431) and “nonmobilization” along these cleavages (Giuliano, 2018, 170). On the other hand, Russia’s current foreign policy seeks to reassemble “the fragmented world of Russian-speakers” (Makarychev, 2014, 186) and to protect the Russian language (Tsygankov, 2015). Indeed, there is evidence that the Russian government sees the ethnic and linguistic diaspora as a potentially supportive and sympathetic force for its foreign policy (Lange-Ionatamišvili, 2015, 9). Given Russian policy towards language and ethnicity, and given that ethnicity and language use are increasingly a matter of choice in an increasingly bilingual Ukraine (Wanner, 2014), identification with Russian ethnicity and Russian language use may correlate with belief in pro-Kremlin claims and disbelief in true statements. 4

There is also a burgeoning literature on other covariates that may influence individuals’ ability to discern whether a piece of news is true (e.g., Allcott and Gentzkow, 2017; Pennycook et al., 2018). Many of these covariates relate to political sophistication and awareness (Delli Carpini and Keeter, 1993; Zaller, 1992). Those who are more politically sophisticated and aware should be better able to discern true from false news stories. 5 Sophistication and awareness should also be positively correlated with educational attainment, which has been found to be related to belief in untrue news (Seo et al., 2020). Research in North America also suggests that greater overall news consumption may increase belief in false news (Nyhan, 2010), though social media consumption may play a larger role than traditional media consumption (Bridgman et al., 2020). Since less news is generally available in rural areas, and fewer rural residents use social media given poor internet access, those in rural areas may also be less vulnerable to pro-Kremlin claims. U.S. research on Facebook users has found that age also plays an important role, with older Americans more likely to share disinformation (Guess et al., 2019). Other sociodemographic characteristics, such as gender, income, and employment, may also be related to belief and are standard control variables regardless.

Research Design

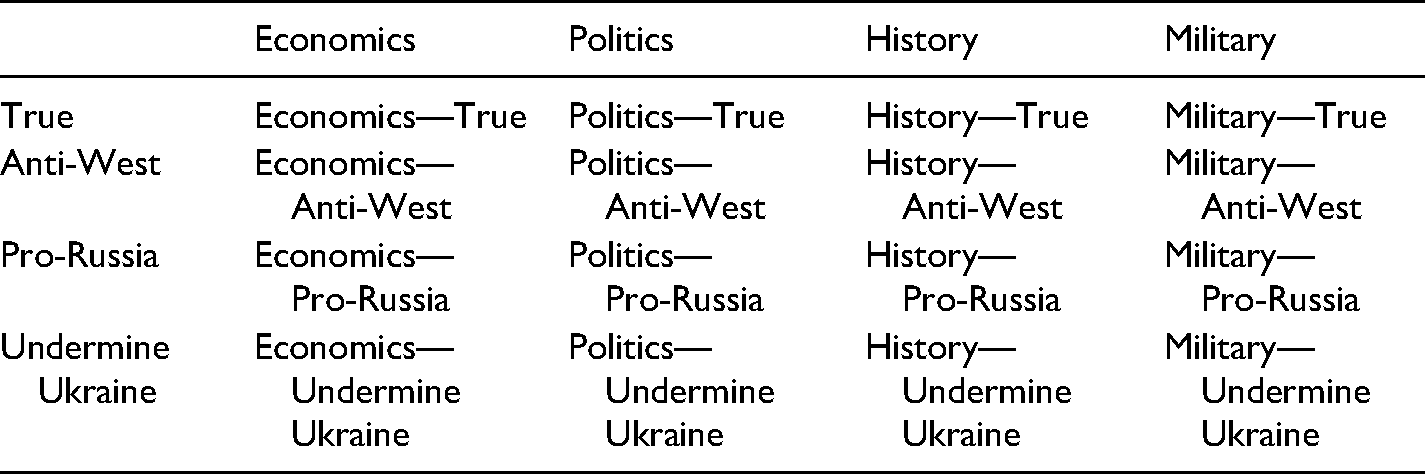

Our classification scheme yields 16 unique combinations of topic and strategy, as seen in Table 1. For example, a claim could be historical and true, or historical and deploy an anti-Western strategy (e.g., accusing NATO allies of having Nazi connections).
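As a quick check on the arithmetic of the story matrix, the sketch below crosses the four topics with three disinformation strategies plus a “true” category, giving 4 × 4 = 16 cells, 12 of which hold disinformation claims. The labels come from the text; the code itself is only an illustration, not the authors’ materials.

```python
from itertools import product

# Topic and category labels as described in the text.
TOPICS = ["Economics", "History", "Military", "Politics"]
# "True" marks verified news stories, which carry no strategy component.
CATEGORIES = ["True", "Undermine Ukraine", "Pro-Russia", "Anti-West"]


def story_matrix():
    """Return every (topic, category) cell of the story matrix."""
    return list(product(TOPICS, CATEGORIES))


cells = story_matrix()
disinformation_cells = [cell for cell in cells if cell[1] != "True"]
print(len(cells), len(disinformation_cells))  # 16 12
```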

Testing whether respondents could discern truth from disinformation within the 16 different combinations of topic and strategy required us first to identify a large number of relevant disinformation claims that Ukrainian respondents might plausibly believe. 6 To do so, we draw from the disinformation claims collected by the website EU vs Disinfo (EUD), part of the European Union’s efforts to track and respond to Russian disinformation. EUD’s goal is to “focus on messages in the international information space that are identified as providing a partial, distorted, or false depiction of reality and spread key pro-Kremlin messages.” EUD specifically notes, however, that it cannot always explicitly link sources to the Kremlin or prove that a source’s goal is to disinform. 7 One potential criticism of using EUD as a source for pro-Kremlin disinformation claims is therefore that the link between the media outlet spreading the false claim and the Russian government is not always clear. Given that Russian disinformation tactics include deliberately obscuring the source and laundering disinformation through media outlets that do not appear to have explicit links to the Russian government (Global Engagement Center, 2020; Lange-Ionatamišvili, 2015; Paul and Matthews, 2016), the inability to draw clear links in all cases is not surprising. Nonetheless, for this reason we use the terms “pro-Kremlin disinformation” and “false or improbable statements consistent with Russian disinformation,” rather than “Russian disinformation,” in reference to our empirical tests and findings.

Story matrix.

In collaboration with Ukrainian partners, we chose claims from the EUD database that had circulated in Ukraine and double-checked that they met the definition of disinformation that this paper and EUD propose. The authors then collaboratively determined how to classify each disinformation claim with respect to topic and strategy, omitting claims on which they could not agree. An example of a claim drawn from the EUD is: “Ukraine will have to cancel pensions to comply with IMF requirements for reserve funds,” which we classify as being in the Economics topic and the Anti-West strategy. A second example of a disinformation claim is, “Ukrainian armed forces are firing on their own positions in order to pin it on separatists.” We classify this claim as possessing a Military topic with an Undermine Ukraine strategy. A full list of all stories from both studies can be found in the Supplemental Information file (Appendix H). We collaborated with our partners in Ukraine to validate that these claims had circulated in Ukraine and to translate them back into Russian and Ukrainian, the two languages in which respondents could elect to take the survey (EU vs. Disinfo had translated them into English from the language(s) in which they were originally published). We also consulted with our Ukrainian partners to identify stories from the Ukrainian daily news that had been verified as true by multiple sources. These claims cover all four topics but have no strategy component, since we assume they are true and are not part of an apparent strategy.

Within disinformation claims, we focus on whether certain topics or strategies of disinformation are more or less believed. We hypothesized that respondents would be more vulnerable to disinformation stories related to economics and politics, given that the Ukrainian political system and economy have been widely disparaged in recent years. We also hypothesized that historical disinformation stories would not find particular resonance among Ukrainian citizens. For disinformation strategies, we had no strong ex ante hypotheses.

Additionally, we examine a set of demographic covariates that indicate potentially vulnerable populations. We hypothesized that those with contextual and partisan relationships to Russia and lower levels of political sophistication would be less likely to distinguish truth from falsehood. These variables also let us examine potential sources of bias in the original online study.

We employ our classification scheme in two studies commissioned by the National Democratic Institute, the first conducted online and the second conducted face-to-face. For both studies, following Pennycook and Rand (2019), we create a dataset where each observation is one respondent’s rating of one claim.

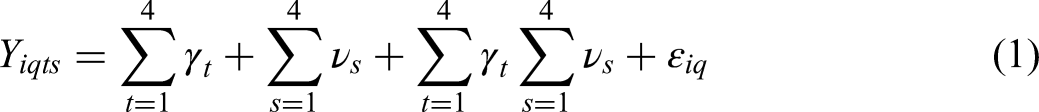

To examine potential correlates of belief in disinformation and investigate potential differences within our two samples, we run OLS regression models to examine interactions between whether a story is true and theoretically-motivated covariates. Mathematically, in addition to

Study 1

Study 1 was carried out online. While online panels are relatively new in Ukraine, survey firms have taken significant steps towards creating sizable subject pools from which they can sample for online studies. We collaborated with Infosapiens to field the survey. The Infosapiens online panel contained more than 30,000 active subjects at the time of fieldwork. We fielded a pre-test on March 4 and 5, 2019. Fieldwork occurred between March 11 and 14, 2019, and included 1,974 respondents who rated 31,584 claims.

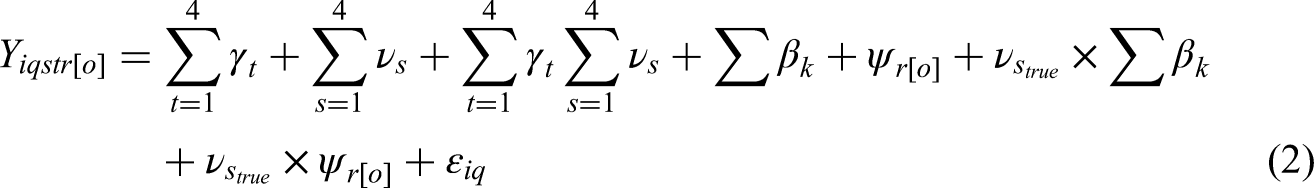

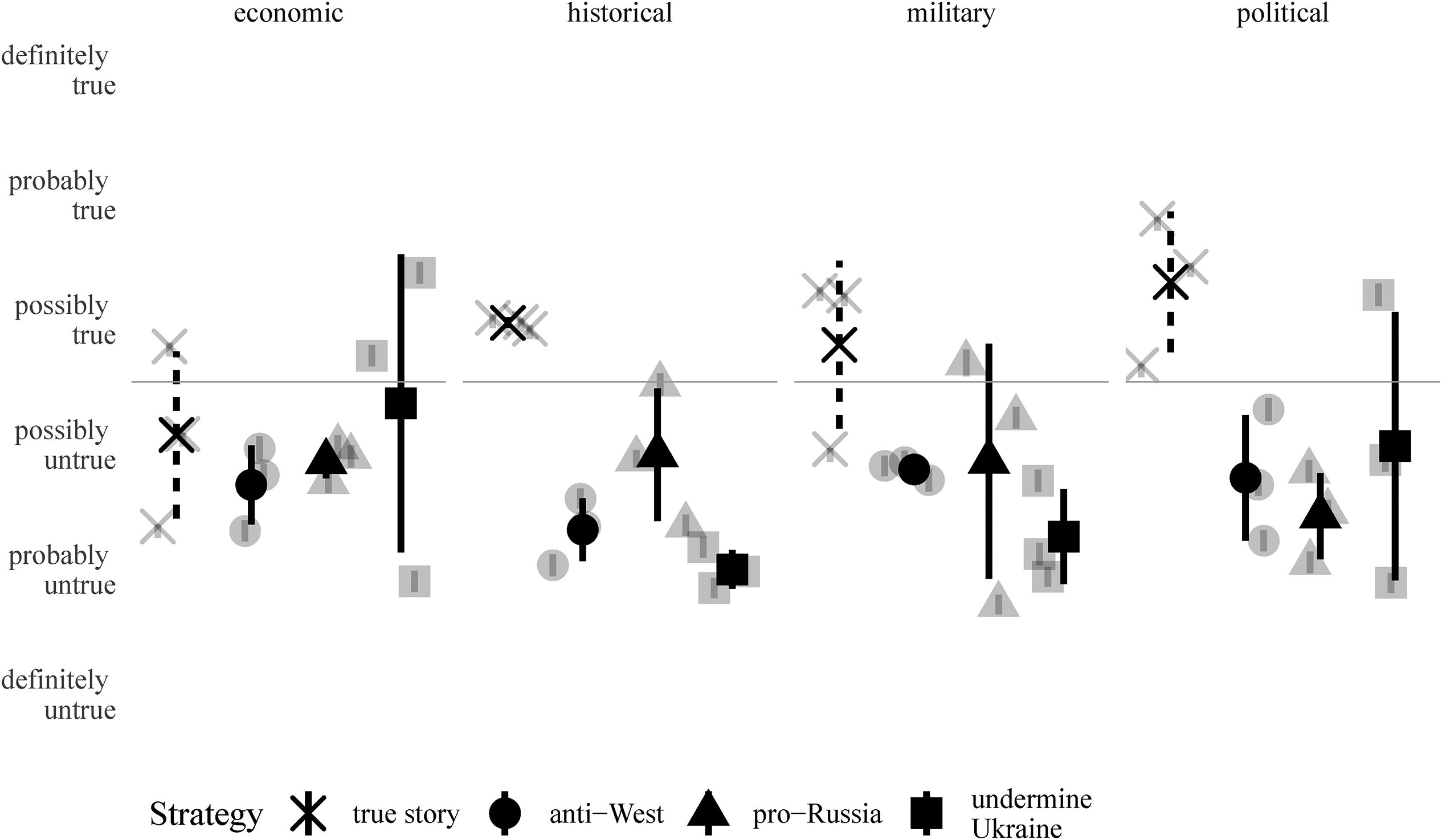

In Study 1, we tested respondents’ belief in 98 claims divided between our 16 cells, of which 79 were disinformation claims identified by EUD and 19 were true stories. The number of claims in each of the 12 disinformation cells given to respondents varied between five and ten, and the four true cells varied between four and six stories. 8 In Study 1, each respondent was assigned one story chosen at random from each of the 16 cells of the matrix of topics and strategies (12 disinformation, 4 true), and cell order was randomized. The Supplemental Information file (Appendix H) enumerates these claims. After seeing each story, respondents were asked whether they thought the story was true or false on a six-point scale from completely true (1) to completely false (6). Since each respondent rates 16 stories, Study 1 contains 31,584 evaluations of disinformation claims and true stories. Figure 1 shows the estimates for each of the 98 claims grouped by topic and strategy overlaid with estimates from our OLS model for each of the 16 combinations of strategy and topic. 9
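The Study 1 assignment procedure just described, and the resulting count of evaluations (1,974 respondents × 16 stories = 31,584), can be sketched as follows. The story IDs and the uniform pool of five stories per cell are placeholders rather than the actual claims, and `assign_study1` is a hypothetical helper, not the survey firm’s software.

```python
import random
from itertools import product

TOPICS = ["Economics", "History", "Military", "Politics"]
CATEGORIES = ["True", "Undermine Ukraine", "Pro-Russia", "Anti-West"]


def assign_study1(stories_by_cell, rng):
    """Draw one story at random from each cell and randomize cell order."""
    cells = list(stories_by_cell)
    rng.shuffle(cells)  # randomized cell order
    return [(cell, rng.choice(stories_by_cell[cell])) for cell in cells]


# Hypothetical story pool: five placeholder IDs per cell (the real pool had
# 79 disinformation claims and 19 true stories spread unevenly over cells).
pool = {
    cell: [f"{cell[0]}/{cell[1]}/{k}" for k in range(5)]
    for cell in product(TOPICS, CATEGORIES)
}
rng = random.Random(2019)
ratings = assign_study1(pool, rng)
print(len(ratings))         # 16 stories per respondent
print(1974 * len(ratings))  # 31584 evaluations across 1,974 respondents
```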

Pooled estimates for OLS models of each strategy-topic combination are shown in black with 95% confidence intervals. Estimates for each of the 98 stories in Study 1 grouped by topic and strategy are shown in gray.

Graphically, a clear pattern emerges: respondents generally report that they believe the true stories are true. All of the disinformation cells, on the other hand, are rated as untrue on average, regardless of strategy. We do note, however, that stories using the Undermine Ukraine strategy had the most variance. These stories may have resonated with some subsets of respondents while alienating others. For example, those who opposed the government at the time of data collection may have been more inclined to believe stories in this strategy that spread (negative) falsehoods about the country’s officials or elected leaders, while supporters of the government may have been less likely to believe them, driving higher variance in average levels of belief.
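OLS point estimates from a regression of belief on a full set of cell dummies (without an intercept) equal the cell means, so a rough way to reproduce estimates like those in Figure 1, and to inspect the spread of ratings within each cell, is sketched below. This is an illustration under simplifying assumptions (independent ratings, a normal-approximation interval), not the authors’ estimation code; their confidence intervals may rest on different standard errors, and the scores here are made up.

```python
import math
from collections import defaultdict
from statistics import mean, stdev


def cell_estimates(ratings, z=1.96):
    """Per-cell mean belief, normal-approximation 95% CI, and rating SD.

    ratings: iterable of (cell, score) pairs, with higher scores meaning the
    story was rated as more true. Ratings are treated as independent, which
    ignores the clustering of ratings within respondents.
    """
    by_cell = defaultdict(list)
    for cell, score in ratings:
        by_cell[cell].append(score)
    estimates = {}
    for cell, scores in by_cell.items():
        m = mean(scores)
        sd = stdev(scores)  # requires at least two ratings per cell
        se = sd / math.sqrt(len(scores))
        estimates[cell] = (m, m - z * se, m + z * se, sd)
    return estimates


# Hypothetical scores for a single cell.
demo = [(("Economics", "True"), s) for s in (1.0, 0.0, -1.0)]
m, lo, hi, sd = cell_estimates(demo)[("Economics", "True")]
print(m, sd)  # 0.0 1.0
```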

We also see a pattern related to the topic of each disinformation claim and true story. Respondents appear least able to distinguish true stories from disinformation claims when the topic is the economy. Two economy-related disinformation claims were judged, on average, as true: the claim that the shipbuilding industry in Ukraine had “all but disappeared,” and the claim that Ukraine unilaterally withdrew from a trade agreement with Russia. Moreover, all of the other economic disinformation stories had average ratings that showed a high degree of uncertainty about their truth proposition. Respondents, on average, also thought two true stories about the economy were untrue: (1) a story reporting that real wages had grown in Ukraine between January and October of 2018; and (2) a story reporting that Ukraine had record-breaking agricultural exports in 2018.
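One simple way to summarize this pattern is a per-topic discernment gap: mean belief in true stories minus mean belief in disinformation claims on the same topic, where a small gap signals weak discernment. The sketch below computes it on made-up scores; the measure is our illustration and not necessarily how the estimates in the figures were produced.

```python
from collections import defaultdict
from statistics import mean


def discernment_by_topic(ratings):
    """Mean belief in true stories minus mean belief in disinformation, by topic.

    ratings: iterable of (topic, is_true, score), where higher scores mean the
    story was rated as more true. Larger gaps mean better discernment.
    """
    groups = defaultdict(list)
    for topic, is_true, score in ratings:
        groups[(topic, is_true)].append(score)
    topics = {topic for topic, _ in groups}
    return {
        topic: mean(groups[(topic, True)]) - mean(groups[(topic, False)])
        for topic in topics
        if (topic, True) in groups and (topic, False) in groups
    }


# Hypothetical scores, chosen only to illustrate a narrow economic gap.
demo = [
    ("Economics", True, 0.25), ("Economics", False, 0.0),
    ("Military", True, 0.75), ("Military", False, -0.5),
]
print(discernment_by_topic(demo))
```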

Study 2

Study 1 may be subject to several criticisms. First, a different set of true stories might generate different comparisons with our validated false claims. Second, though we randomly sampled from the Infosapiens online panel, the panel itself is not representative of the population as a whole. To address these potential criticisms, in Study 2 we change many of the true stories (several remain the same) and conduct a nationally representative study with a subset of the disinformation claims used in Study 1. The National Democratic Institute (NDI) funded a face-to-face, nationally representative survey of 9,474 respondents (who make 167,237 ratings) in Ukraine with several large oversamples, which led to unequal probabilities of selection. We use survey weights to correct for these unequal probabilities in the analysis. Fieldwork occurred between September 18 and October 31, 2019.
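The weighting step can be illustrated with a weighted mean. In the simplest case each respondent’s weight is the inverse of their selection probability, so oversampled groups count for less; the study’s actual weights also involve population-based post-stratification, which this sketch does not attempt. Kish’s effective sample size is included as a common diagnostic of how much precision unequal weights cost; it is our addition, not a quantity reported in the text, and the numbers below are hypothetical.

```python
def weighted_mean(values, weights):
    """Survey-weighted mean: sum(w * y) / sum(w)."""
    if not values or len(values) != len(weights):
        raise ValueError("values and weights must be non-empty and equal length")
    total = sum(weights)
    return sum(w * y for w, y in zip(weights, values)) / total


def kish_effective_n(weights):
    """Kish's approximate effective sample size, (sum w)^2 / sum(w^2)."""
    return sum(weights) ** 2 / sum(w * w for w in weights)


# Hypothetical example: two oversampled respondents receive smaller weights.
scores = [1.0, -1.0, 0.0, 0.0]
weights = [2.0, 1.0, 0.5, 0.5]
print(weighted_mean(scores, weights))  # 0.25
print(kish_effective_n(weights))
```

With equal weights the effective sample size equals the number of respondents; the more unequal the weights, the smaller it becomes.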

Study 2 differed in its outcome variable: a midpoint “don’t know” (4) was a possible response, making it a seven-point (1-7) scale rather than a six-point (1-6) scale. Given the face-to-face nature of the study and time constraints, we chose only 48 stories on which to concentrate, with three stories in each of the 16 cells, which maintained coverage of all three disinformation strategies, true stories, and all four topics. Additionally, each respondent was randomly assigned one cell in which to rate all three stories, for a total of 18 stories per respondent.
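The 18-story total is consistent with one reading of this design, which we sketch here as an assumption: each respondent rates one randomly chosen story from each of 15 cells plus all three stories from one randomly chosen cell (15 + 3 = 18). Randomized presentation order is likewise assumed, by analogy with Study 1. `assign_study2` and the story IDs are hypothetical.

```python
import random
from itertools import product

TOPICS = ["Economics", "History", "Military", "Politics"]
CATEGORIES = ["True", "Undermine Ukraine", "Pro-Russia", "Anti-West"]


def assign_study2(stories_by_cell, rng):
    """One reading of the Study 2 design: one story from each of 15 cells,
    plus all three stories from one randomly chosen cell (15 + 3 = 18)."""
    cells = list(stories_by_cell)
    full_cell = rng.choice(cells)  # the cell whose stories are all rated
    assigned = []
    for cell in cells:
        if cell == full_cell:
            assigned.extend((cell, story) for story in stories_by_cell[cell])
        else:
            assigned.append((cell, rng.choice(stories_by_cell[cell])))
    rng.shuffle(assigned)  # assumed: presentation order is randomized
    return assigned


# Hypothetical pool: three placeholder story IDs in each of the 16 cells.
pool = {
    cell: [f"{cell[0]}/{cell[1]}/{k}" for k in range(3)]
    for cell in product(TOPICS, CATEGORIES)
}
stories = assign_study2(pool, random.Random(7))
print(len(stories))  # 18
```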

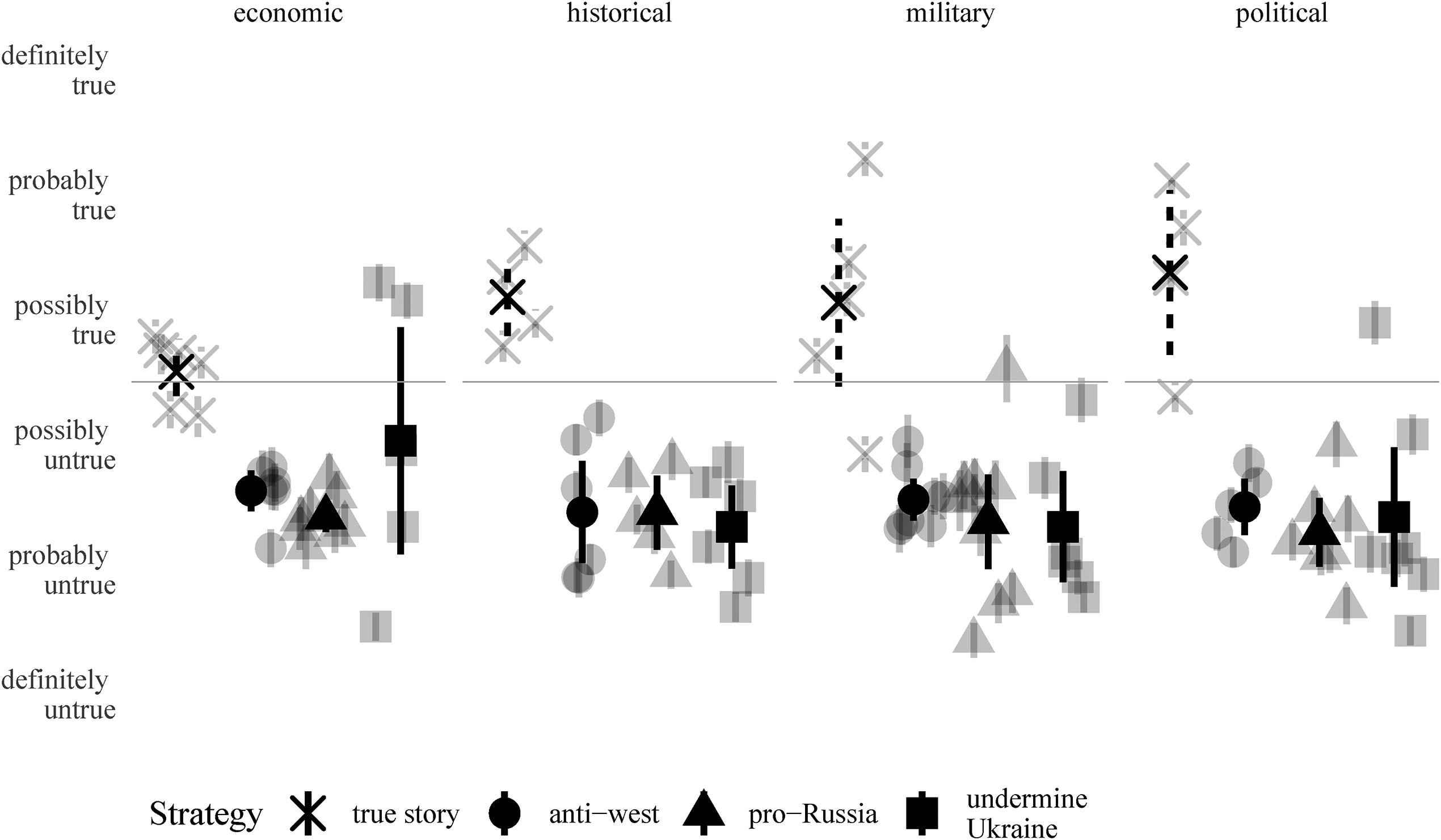

Figure 2 displays the results for Study 2 in the same manner as Study 1. As in Study 1, Study 2 respondents are, on average, able to distinguish between true and disinformation stories. Again, respondents have the most trouble distinguishing truth from disinformation for economic claims. Indeed, the pooled OLS estimate for true economic claims in Study 2 is slightly negative (though not statistically significant). On average, respondents rate two true stories as more false than true: one, present only in Study 2, stated that the Ukrainian economy has been experiencing stable growth; the other, also presented in Study 1, stated that real wages in Ukraine had grown.
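Reading a pooled estimate as “slightly negative” implies a coding in which zero separates “true” from “false.” The text does not state the coding, so the sketch below is an assumption: it maps both the six-point (Study 1) and seven-point (Study 2) scales onto a common range from -1 (completely false) to 1 (completely true), which also puts the two studies on comparable footing. `belief_score` is a hypothetical helper.

```python
def belief_score(response, scale_points):
    """Map a rating to [-1, 1], with positive values meaning "rated as true".

    Assumes 1 = completely true and scale_points = completely false: six
    points in Study 1, seven in Study 2 (where the midpoint 4 is "don't know").
    """
    if scale_points not in (6, 7):
        raise ValueError("expected a six- or seven-point scale")
    if not 1 <= response <= scale_points:
        raise ValueError("response outside the scale")
    midpoint = (scale_points + 1) / 2    # 3.5 or 4
    half_range = (scale_points - 1) / 2  # 2.5 or 3
    return (midpoint - response) / half_range


print(belief_score(1, 6), belief_score(6, 6))  # 1.0 -1.0
print(belief_score(4, 7))                      # 0.0
```

Under this coding, a six-point rating can never land exactly on zero, while the Study 2 “don’t know” option maps to zero by construction.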

Pooled estimates for OLS models of each strategy-topic combination are shown in black with 95% confidence intervals. Estimates for each of the 48 stories in Study 2 grouped by topic and strategy are shown in gray. Estimates include population-based post-stratification weights.

Results Across Both Studies

In both studies, across three of the four topics, respondents are generally able to distinguish true stories from disinformation claims, though they are somewhat less able to do so in Study 2. 10 In both studies, there is little variation among the different disinformation strategies, although the greatest variation is around the claims we categorized as part of the Undermine Ukraine strategy, particularly economic claims aimed at undermining Ukraine. 11 Additionally, in both studies respondents are much more certain about the truthfulness of stories related to history, politics, or the military than about economic stories. Indeed, the economy is the topic on which Ukrainian survey respondents appear particularly vulnerable to disinformation. One limitation of our finding about true economic stories is that economic news was generally positive when we fielded both studies, so respondents may have particular trouble believing positive economic news.

Subgroup Effects

Our two surveys were fielded via different modes, and Study 2 contains a more extensive set of control variables. 12 We examine three sets of context-specific dummy variables identifying respondents who may be more susceptible to Russian disinformation. First, we measure whether respondents identify themselves as ethnic Russian (Russian = 1, 0 otherwise). 13 Second, we employ a behavioral measure: the language in which the respondent answered the survey (Russian = 1, Ukrainian = 0). 14 Third, we group respondents' political party support into a six-level categorical variable (Anti-Russia, Pro-Russia, Servant of the People, Nationalist Party, No Party, Other), and we focus on the comparison between supporters of Anti-Russia and Russia-leaning (Pro-Russia) parties, a long-standing classification of parties in Ukraine (Abdelal, 2005). 15
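One way to code these contextual variables is treatment (dummy) coding with Anti-Russia supporters as the reference category, so that each party indicator is read relative to Anti-Russia supporters, matching the comparisons reported in the results. This is a minimal sketch under that assumption; the function and field names are hypothetical, not the authors' code.

```python
PARTY_LEVELS = [
    "Anti-Russia", "Pro-Russia", "Servant of the People",
    "Nationalist Party", "No Party", "Other",
]
REFERENCE_PARTY = "Anti-Russia"  # omitted category; absorbed by the model intercept


def code_context(ethnicity: str, survey_language: str, party: str) -> dict[str, int]:
    """Return 0/1 indicators for one respondent (hypothetical field names)."""
    if party not in PARTY_LEVELS:
        raise ValueError(f"unknown party category: {party!r}")
    if survey_language not in ("Russian", "Ukrainian"):
        raise ValueError(f"unexpected survey language: {survey_language!r}")
    row = {
        "ethnic_russian": int(ethnicity == "Russian"),          # Russian = 1, 0 otherwise
        "russian_language": int(survey_language == "Russian"),  # Russian = 1, Ukrainian = 0
    }
    # One indicator per non-reference party level.
    for level in PARTY_LEVELS:
        if level != REFERENCE_PARTY:
            row[f"party_{level}"] = int(party == level)
    return row


baseline = code_context("Ukrainian", "Ukrainian", "Anti-Russia")
pro_russia = code_context("Russian", "Russian", "Pro-Russia")
```

An Anti-Russia supporter who is ethnically Ukrainian and answered in Ukrainian gets zeros on every indicator, which is what makes that profile the baseline for comparison.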

To model political awareness and sophistication in both studies, we use an electoral turnout measure: stated retrospective turnout in 2014 in Study 1, and an 11-point scale of prospective turnout in a hypothetical upcoming municipal election (normalized between 0 and 1) in Study 2. We also include settlement type (Village = 1, 0 otherwise) and a six-point education scale normalized between 0 and 1. In Study 2, we additionally use an additive six-question political knowledge battery (normalized between 0 and 1) and four measures of news consumption indicating whether a respondent consumes news daily from television, radio, online sources, or social media.
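The rescaling to the 0 to 1 range can be sketched as simple min-max normalization. The coding ranges below (0 to 10 for the 11-point turnout scale, 1 to 6 for education, and a count of correct answers for the six-item knowledge battery) are assumptions for illustration; the text states only that each measure is normalized between 0 and 1.

```python
def normalize(x: float, lo: float, hi: float) -> float:
    """Min-max rescale x from [lo, hi] to [0, 1]."""
    if hi <= lo:
        raise ValueError("hi must exceed lo")
    if not lo <= x <= hi:
        raise ValueError(f"{x} lies outside [{lo}, {hi}]")
    return (x - lo) / (hi - lo)


def knowledge_score(correct: list[bool]) -> float:
    """Additive six-item battery: number correct, rescaled so 6 of 6 maps to 1."""
    if len(correct) != 6:
        raise ValueError("expected six knowledge items")
    return normalize(sum(correct), 0, 6)


turnout = normalize(7, 0, 10)    # assumed 0-10 coding of the 11-point scale
education = normalize(4, 1, 6)   # assumed 1-6 coding of the six-point scale
knowledge = knowledge_score([True, True, False, True, False, False])
```

With this coding, moving from 0 to 1 on any normalized measure spans its full range, which is how the political knowledge effect is expressed in the results.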

Additionally, we control for other standard demographic variables: gender (Female = 1, Male = 0), economic well-being (a socio-economic status index in Study 1 and an income question in Study 2), and age. To make the size of the coefficients easier to gauge, and because the two studies used slightly different outcome scales (6- versus 7-point), we report effects in standard deviation units.
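Reporting effects in standard deviation units amounts to dividing the outcome by its standard deviation, so a coefficient equals the raw-scale effect divided by the outcome's SD. The bivariate sketch below, with hypothetical toy ratings and a single 0/1 predictor, illustrates this. It uses the population SD from Python's `statistics` module and ignores survey weights and controls, so it is not the authors' estimator.

```python
from statistics import mean, pstdev


def ols_slope(x: list[float], y: list[float]) -> float:
    """Slope from a bivariate least-squares regression of y on x."""
    mx, my = mean(x), mean(y)
    num = sum((a - mx) * (b - my) for a, b in zip(x, y))
    den = sum((a - mx) ** 2 for a in x)
    return num / den


def standardize(values: list[float]) -> list[float]:
    """Center at the mean and divide by the (population) standard deviation."""
    mu, sd = mean(values), pstdev(values)
    return [(v - mu) / sd for v in values]


# Hypothetical data: x = 1 for a pro-Russia party supporter, 0 otherwise;
# y = rating on a 1-6 scale (higher = more believed, an assumed coding).
x = [0, 0, 1, 1]
y = [2, 3, 4, 6]

raw_effect = ols_slope(x, y)              # effect in scale points
sd_effect = ols_slope(x, standardize(y))  # effect in SD units
```

Because the second regression only rescales the outcome, `sd_effect` equals `raw_effect / pstdev(y)`.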

Before examining the results, we note that on some dimensions, such as political party support, the two samples are relatively similar. However, they diverge in other areas. The online sample is approximately 10 years younger, on average, than the representative sample; more importantly, the online sample is more educated and more connected to Russia. There are also almost no village residents in the online sample, which is unsurprising given the less developed internet infrastructure in rural Ukraine. Given these differences, similar estimates across the two studies lend further support to our findings.

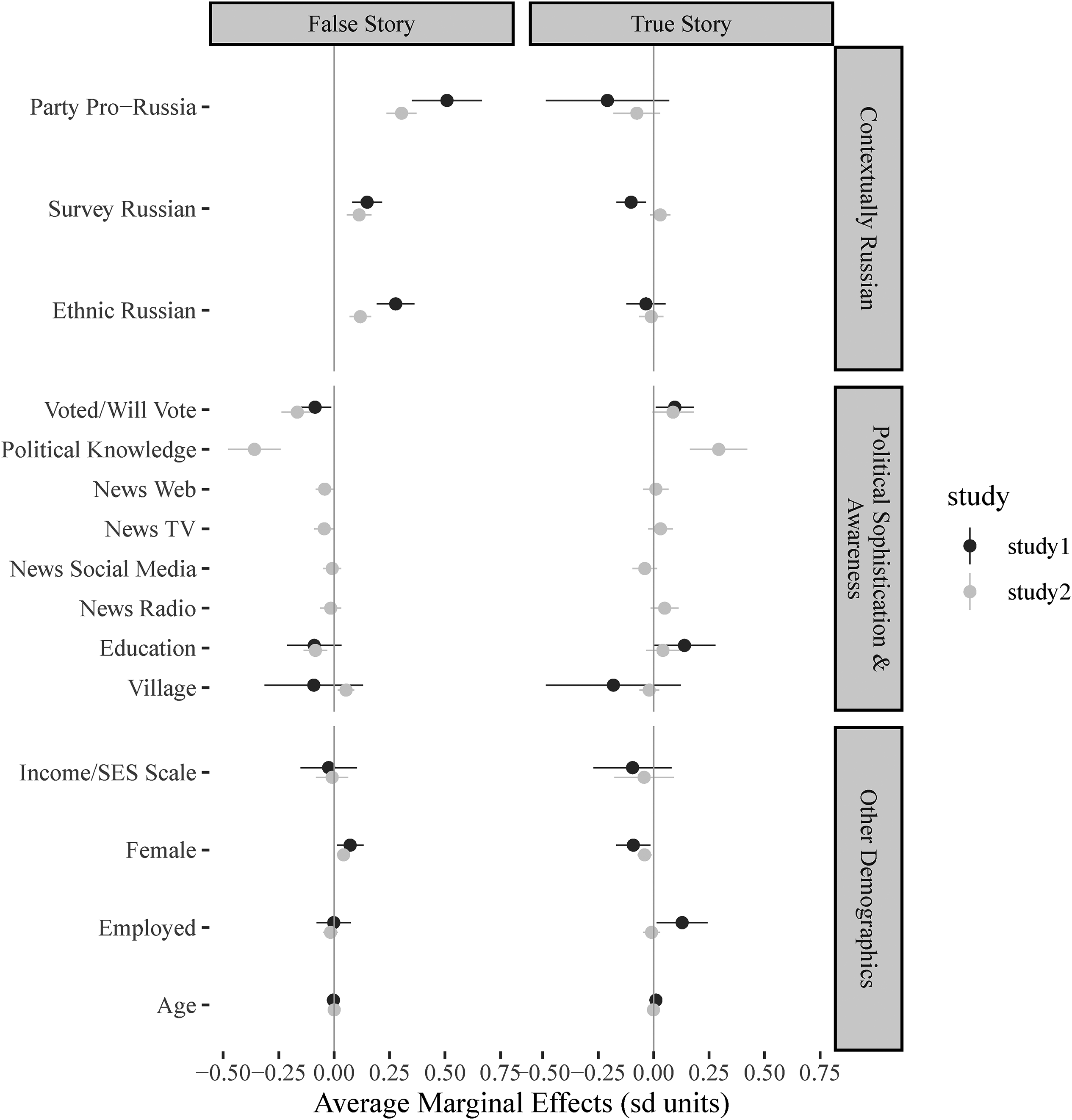

Figure 3 shows average marginal effects at representative values, in standard deviation units, for our three categories of covariates. Contextual variables are strongly correlated with belief in disinformation stories. In line with research on partisanship in the U.S. (Pereira et al., 2018), those who support pro-Russia political parties (relative to anti-Russia parties) are more likely to believe pro-Kremlin disinformation. Those who answer the survey in Russian (relative to Ukrainian), or who identify as ethnic Russians (relative to ethnic Ukrainians), are also more likely to believe pro-Kremlin disinformation. The magnitude and statistical significance of these coefficients are similar across both studies. For example, relative to supporting an Anti-Russia party, supporting a pro-Russia party is associated with a 0.51 standard deviation increase in belief in the disinformation stories in Study 1 and a 0.31 standard deviation increase in Study 2. This difference is equivalent to raising the average respondent’s belief in disinformation by 0.74 points on the 6-point scale in Study 1 and 0.57 points on the 7-point scale in Study 2. In most cases, those who are contextually closer to Russia are also less likely to believe true stories, though not always at traditional levels of statistical significance. The Supplemental Information (Appendix E) shows that these findings do not vary substantially by disinformation topic or strategy.

Average marginal effects for demographic covariates.
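Converting between standard deviation units and scale points is a multiplication by the outcome's standard deviation, so each reported pair implies a rough outcome SD. The sketch below back-calculates it from the rounded party-support figures in the text (0.51 SD and 0.74 points in Study 1; 0.31 SD and 0.57 points in Study 2). The resulting SDs are approximations derived here, not values reported by the authors.

```python
def sd_to_points(effect_sd: float, outcome_sd: float) -> float:
    """Convert an effect in SD units to points on the original rating scale."""
    return effect_sd * outcome_sd


def implied_outcome_sd(effect_sd: float, effect_points: float) -> float:
    """Back out the outcome SD implied by an effect reported both ways."""
    return effect_points / effect_sd


# Rounded estimates for pro-Russia vs. Anti-Russia party support, from the text.
sd_study1 = implied_outcome_sd(0.51, 0.74)  # roughly 1.45 points (6-point scale)
sd_study2 = implied_outcome_sd(0.31, 0.57)  # roughly 1.84 points (7-point scale)

# Round trip: converting back recovers the reported point effects.
points_study1 = sd_to_points(0.51, sd_study1)
points_study2 = sd_to_points(0.31, sd_study2)
```

Applying the same arithmetic to the Study 2 political knowledge estimate (0.35 SD and 0.64 points) gives about 1.83, close to the value implied by the party estimate; small discrepancies are expected from rounding.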

Political awareness variables also generally behave as research in the United States and elsewhere would lead us to expect. Those who are more knowledgeable are more likely to believe true stories and less likely to believe disinformation. Most notably, our political knowledge battery is strongly correlated with rejecting disinformation and believing true stories. Moving from 0 to 1 on this scale is associated with a 0.35 standard deviation decrease in belief in disinformation and a 0.37 standard deviation increase in belief in true stories. These effects translate into approximately two-thirds of a point (0.64 and 0.68, respectively) on the seven-point scale. Our retrospective and prospective turnout measures also have the expected signs and are statistically significant at traditional levels. Turnout and education behave in the same way as political knowledge: more educated and more politically involved respondents are better able to distinguish between true and false. Media consumption, however, has notably small correlations with belief in our news stories, and these correlations are generally not statistically significant at traditional levels, which is at odds with some research from North America (Bridgman et al., 2020). Because there are very few online respondents living in villages, estimates of the association between living in a rural area and belief in disinformation are highly uncertain in Study 1, and they are not statistically significant in Study 2.

The remaining demographic variables, such as age, income, and gender, appear only weakly correlated with the ability to distinguish between true and false. The magnitudes of these relationships tend to be small, and most are not statistically significant. The age finding differs from some previous work in the United States (Guess et al., 2019), which finds that older people may be more susceptible to fake news. We further probe how these correlations vary by topic in the Supplemental Information (Appendix E) and do not find that they vary substantially by topic.

Conclusion

Contrary to some theoretical expectations (e.g., Humprecht et al., 2020), we find that Ukrainians, despite years of sustained exposure to Russian disinformation, are, on average, able to distinguish between true stories and pro-Kremlin disinformation claims. This ability holds in both an online poll and a nationally representative, face-to-face survey, but it comes with important caveats. Our preliminary findings indicate that Ukrainians are not equally able to identify all disinformation and have particular trouble distinguishing economic disinformation from truth. Further research could explore whether this is due to Ukraine’s historically poor economic performance, which may have rendered economic disinformation more believable and true economic stories (which were positive in our study) less believable. If our findings hold cross-nationally, we would expect other countries with histories of economic mismanagement or weak economic growth to be relatively more susceptible to disinformation about the economy. Alternatively, the pattern could be related to whether the economic disinformation and true statements were perceived as “good” or “bad” news and how that perception interacts with respondents’ prior understanding of the state of the economy. It could also be promising to investigate how the presence of multiple strategies or multiple topics in a single disinformation claim affects respondents’ ability to correctly label disinformation as untrue. Clearly, more research is needed to explain the mechanism, but we posit that this finding alone justifies comprehensively exploring different strategies and themes.

Consistent with other cross-national research, we find that partisanship is an important predictor of belief in disinformation. Those who support pro-Russia political parties appear less able to distinguish between true claims and pro-Kremlin disinformation. This finding builds on prior work and reinforces the notion that political attitudes are important for identifying groups that may be more vulnerable to disinformation. Perhaps more concerning, those who speak Russian and identify as ethnic Russian appear more likely to believe pro-Kremlin disinformation claims. This finding underscores the importance of studying Russian speakers and ethnic Russians in research on Russia’s disinformation campaigns in countries with substantial Russian minority populations. More broadly, we would expect this finding to be relevant in other contexts where ethnic or linguistic minority populations are exposed to disinformation through the mass media of their ethnic or linguistic homeland.

We emphasize that we do not view those with contextual connections to Russia, or members of any other minority group, as uniquely susceptible to disinformation in general. Rather, this is likely an example of identity and partisanship playing important roles in explaining who believes a particular type of disinformation, in this case pro-Kremlin disinformation. Had we instead focused on Ukrainian ultra-nationalist disinformation, there is theoretical reason to expect that this same group of Ukrainian citizens with ties to Russia would have been better able to label true stories as true and to reject disinformation. Indeed, we believe that testing respondents’ ability to distinguish between disinformation and truth from a variety of sources could be an interesting avenue for further research.

Additionally, future work could probe whether the finding about language and ethnic identity reflects past exposure to disinformation claims or a vulnerability to certain types of messaging. Those who speak Russian and self-identify as ethnic Russian may be more likely to consume Russian-language media that, compared with Ukrainian-language media, are more inundated with disinformation claims, even though disinformation exists in both languages. Thus, these Russian-speaking respondents may already have encountered, and come to believe, the pro-Kremlin disinformation statements that we present. Alternatively, Russian-speaking and ethnically Russian respondents may, on average, be more likely to hold a worldview consistent with the Russian disinformation claims. In this case, prior exposure may be no different, but the effects of the disinformation claims would be heterogeneous with respect to ethnic identity and language use.

Beyond raising key questions for future research, our findings make important contributions to the study of public opinion and disinformation. First, they underscore the importance of taking a historically informed view of disinformation and of the impact it may have on its target audiences. Second, they show the utility of testing multiple dimensions of disinformation claims. While we observe little difference along the strategy dimension, we gain new insights by examining the topic dimension, insights that can guide future efforts. Third, our findings highlight that, on many questions, respondents may be correct but are not certain. One potential explanation for the low level of confidence in responses is that one of the Russian government’s objectives, “engaging in obfuscation, confusion, and the disruption or diminution of truthful reporting” (Paul and Matthews, 2016, 3), may be working, although we note that more empirical tests would be required to support such a conclusion.

Supplemental Material


Supplemental material, sj-pdf-1-hij-10.1177_19401612211045221 for Is pro-Kremlin Disinformation Effective? Evidence from Ukraine by Aaron Erlich and Calvin Garner in The International Journal of Press/Politics

Footnotes

Acknowledgments

The authors collected the data presented in this article in collaboration with the National Democratic Institute, which funded the research. The analysis and conclusions contained here solely represent those of the authors. We thank Kevin Aslett, Jordan Gans-Morse, Beatrice Magistro, Dietlind Stolle, Amanda Wintersiek, attendees at the annual Southern Political Science Association (SPSA) meetings, 2020 American Political Science Association (APSA) meetings, and Severyns-Ravenholt Seminar in Comparative Politics at the University of Washington for feedback on earlier drafts of this paper. We thank Tim Roy for excellent research assistance. This research was carried out under Research Ethics Board (REB) no. #390-0217. Replication data can be found at ![]() .


Declaration of Conflicting Interests

The data for this article were gathered as part of a larger project by NDI, a nongovernmental, nonprofit organization. Both authors have worked as consultants for NDI, but neither believes that this relationship poses a conflict of interest. NDI has no control over the content of the authors’ published academic research. The analysis and conclusions contained here solely represent those of the authors.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.


Notes

Author Biographies

References
