Abstract

The adoption of artificial intelligence (AI) in academia is an emerging field of interest. However, there is scant literature that explores the phenomenon of AI adoption by graduate students in doctoral education. This study employs collaborative autoethnography to explore and better understand the nuances of how doctoral students experience AI technologies within academic pursuits. A critical analysis of data revealed that the collective researcher-participant experiences offered the primary overarching theme of adoption strategy, with four distinct subthemes: adoption fear, adoption resistance, adoption feasibility, and adoption ethics. The findings suggest a balanced approach to AI adoption depends on the development of comprehensive strategies that are informed by a deep understanding of both the technological capabilities and the human factors involved. We urge both doctoral students and educators involved in doctoral programs to think critically about these identified themes. For doctoral students, this analysis offers valuable insights into challenges associated with integrating AI technologies into formal learning environments, potentially enhancing a management strategy for their doctoral studies. Educators tasked with integrating and evaluating AI technologies for doctoral coursework may develop a deeper understanding of the challenges their students may encounter during the adoption process.

Current doctoral students are pursuing their academic careers during an era of significant digital disruption (Skog et al., 2018), and they are increasingly adopting a variety of digital technologies to enhance the efficiency, depth, and scope of their research. These technologies not only facilitate data collection and analysis but also improve communication and collaboration. For example, software such as SPSS, SAS, and R is widely used for statistical analysis. NVivo and ATLAS.ti are popular choices for managing, coding, and analyzing qualitative data such as interviews, focus groups, and textual content. Tools like Zotero, EndNote, and Mendeley assist doctoral students in managing literature reviews and citations. In fields like engineering and physics, software such as MATLAB, Simulink, and ANSYS is used for simulating experiments and modeling scenarios that are either too costly or impractical to conduct in real life. As emerging scholars integrate such technology into their research methodologies, they are not only enhancing their own academic capabilities but also shaping the future landscape of scholarly research (Ivanashko et al., 2024).

The integration of artificial intelligence (AI) technologies into doctoral studies has the potential to transform how research is conducted across various disciplines (Fauzi et al., 2023). While there is scant literature that examines the adoption of AI technologies by doctoral students, the readiness of educators and students to embrace AI technologies in higher education has been a subject of investigation, with mixed results (Kerridge, 2023; Kim et al., 2022). On the one hand, integrating AI technologies into research methods can achieve higher accuracy, improve productivity, and enhance the dissemination and presentation of research findings (Fauzi et al., 2023). On the other hand, the adoption of AI technologies for academic studies is hampered by apprehension surrounding uncertainties about use, lack of guidance, scholarly resistance, and potential ethical implications (Yu & Yu, 2023). Schiff (2020) explored the dual aspects of AI technologies in education and highlighted the potential for democratizing learning through increased accessibility and efficiency while concurrently cautioning against the risk of fostering a highly industrialized learning environment. Such an environment could cause excessive dependence on technologies, thereby diminishing the crucial human elements of education and learning.

Yet the use of AI technologies in academic research and coursework has become increasingly common, providing advanced capabilities across various fields such as medicine, education, and engineering. While AI technologies offer numerous advantages like enhanced data assessment, predictive analytics, and automation of routine tasks, their adoption also raises concerns about the potential impact on the rigor and integrity of doctoral education (Bozkurt et al., 2021). These exemplars highlight the need for a sensible approach to AI adoption in higher education and support the purpose of this study: to better understand the adoption phenomenon of AI technology by graduate students within doctoral studies, advocating for strategies that address both the benefits and challenges of integrating AI technologies into academic research and learning environments. Accordingly, the following research questions guided this study:

How do doctoral students perceive the benefits and challenges of adopting AI technologies in their academic research and coursework?

What factors influence the readiness and apprehension of doctoral students toward integrating AI technologies into their doctoral education?

For this purpose, we believe a good start is to better understand the phenomenon of AI adoption by exploring doctoral students’ experiences with AI technologies rooted in academic contexts (Press & Rossi, 2022). To advance this understanding, this paper employs a critical lens to examine current doctoral student experiences. We do this by utilizing collaborative autoethnography (CAE) to explore the phenomenon of AI adoption to more comprehensively grasp the concerns and consequences associated with its use. Based on our findings, we present key themes and insights that highlight opportunities for both students and educators to improve the adoption process of AI technologies in advanced academic settings.

Background

The participants constitute a select yet varied group of academics, all of whom are either currently enrolled in a Human Resource Development (HRD) doctoral program or possess a Doctorate in HRD. This study was prompted by the observation that each participant has experienced challenges associated with adopting AI technologies, along with varying degrees of success in incorporating these technologies into their formal learning environments. Understanding how experiences with AI technologies influence our development as emerging scholars is essential for enhancing our effectiveness and success in academic roles.

Artificial intelligence is a field within computer science that focuses on developing systems capable of performing tasks that traditionally require human intelligence, such as understanding natural language, recognizing patterns, making decisions, and learning from data (Patole et al., 2024). In doctoral education, students utilize various AI tools to enrich their coursework and research projects. TensorFlow and PyTorch are prominent machine learning libraries that empower the creation of intricate AI models for applications like natural language processing and computer vision. Jupyter Notebook provides an interactive platform for data visualization and analysis, which is crucial for data science projects. Tools like ChatGPT aid in content generation and idea brainstorming, while GitHub Copilot assists in software development by offering code suggestions. Applications such as Grammarly and Turnitin ensure the quality and originality of written work, showcasing the diverse applications of AI technologies in supporting and enhancing academic pursuits. Emerging research has suggested AI adoption by graduate students can enhance their learning experiences, drive innovative research, and contribute to the advancement of knowledge in their respective fields (Fauzi et al., 2023).

However, research gaps persist in understanding how to effectively integrate AI technologies into higher education studies. For example, while Nunez and Lantada (2020) explored the state of AI technology in engineering education and emphasized its potential to enhance doctoral training through personalized learning experiences and innovative research methodologies, they also highlighted a significant gap. Specifically, the effectiveness of AI technology in delivering truly personalized learning experiences remains underexplored, particularly in accommodating the diverse learning styles and backgrounds of doctoral students. Chan and Zary (2019) provided an integrative review on the applications of AI technology in medical education, highlighting the importance of incorporating AI technology to improve learning outcomes and prepare students for AI-driven medical practices. However, they also noted that research on the best practices for integrating AI technology into the medical curriculum is sparse and that studies should focus on identifying the most effective ways to incorporate AI tools without overwhelming the existing curriculum. Abulibdeh et al. (2024) discussed the integration of AI tools to support personalized learning and enhance the quality of education, preparing students with competitive skills for the job market. However, they also highlighted that the effectiveness of AI tools in supporting personalized learning across various educational disciplines, beyond engineering and technical fields, requires further investigation. Despite the transformative potential of AI technologies for doctoral research, their adoption also raises uncertainties that students must navigate as these technologies become more integral to academic endeavors.

Method

Collaborative autoethnography represents a variation of traditional autoethnography, relevant when engaging multiple researcher-participants (Hernandez et al., 2017). Unlike autoethnography, where an individual researcher leverages personal experiences to explore sociocultural phenomena (Carpenter, 2022), CAE allows multiple researchers to explore their personal experiences within a cultural context while also reflecting on the collective experience. This method facilitates a joint exploration of personal stories, enabling participants to collectively analyze, interpret, and contextualize their shared sociocultural experiences in relation to both practice and theory (Chang et al., 2013; Grenier, 2016). Scholars have employed CAE within higher education as a method to investigate experiences (Gates et al., 2020; Munn et al., 2023) and as an “authentic learning activity” (Lee, 2020, p. 570).

In CAE, participants function as primary peer reviewers for each other, facilitating a group discussion where participants can compare and contrast their experiences, identify common themes, and explore differences. This process helps to manage individual biases through intersubjectivity, ensuring that multiple perspectives are integrated and scrutinized. Therefore, CAE transforms personal narratives into comprehensive, cohesive stories that enhance our collective understanding of sociocultural phenomena (Hernandez et al., 2010; Wężniejewska et al., 2019). Through this approach, the group of researcher-participants can gain insights into the sociocultural meanings of their experiences and contribute to a deeper understanding of the phenomena under investigation (Hernandez et al., 2017).

Four current HRD doctoral students who are enrolled in a cohort PhD model of education participated in the study as researcher-participants, while a fifth researcher-participant who holds a PhD in HRD from the same university program participated as a collaborator (Chang et al., 2013), serving as a guiding facilitator (Grenier & Collins, 2016) and expert coder (e.g., Carpenter et al., 2022). Among the participants, three were Caucasian Americans, one was an Asian American, and one was an African American, offering a diverse representation. The age range varied from mid-forties to mid-fifties, reflecting a mix of life experiences. The group consisted of four males and one female. Each participant brought a unique background to the study, with experiences spanning corporate, medical, and academic leadership roles, contributing to a rich and multifaceted perspective on AI technologies in workplace and university settings. Participants’ work environments included a mix of fully virtual conditions, hybrid settings that combine electronic and in-person elements, and entirely in-person contexts.

Data collection and analysis proceeded in five steps. (1) Each participant conducted a free-writing exercise, prompted to describe their experiences using AI technologies in doctoral studies. Unedited self-reported narratives offer a unique window into an individual’s subjective experience at a specific point in time (Chang et al., 2013). This task was undertaken with explicit instructions to focus solely on the articulation of their experiences, disregarding concerns related to content coherence, structural organization, grammatical accuracy, and adherence to other scholarly conventions (Kostopulos Nackoney et al., 2011). (2) The researcher-participants shared their narratives with one another to facilitate a group discussion in which they compared and contrasted their experiences, explored differences, and collaboratively identified key phrases and concepts (i.e., process codes; Chun Tie et al., 2019) within the data. (3) After the guiding facilitator tabled the process codes, each participant reviewed them, and follow-up questions were generated to probe deeper into key relationships between the process codes and to validate that each researcher-participant engaged in reflexivity by examining their own role in both the experience and the research process. This approach facilitated the identification of cause-and-effect or sequential connections (Saldaña, 2021) within process codes, enabling researchers to systematically organize relationships into descriptive categories. (4) The researchers then made sense of the descriptive categories by referring back to the study’s intent. This reflexive approach helped the researchers to align categories with final themes in accordance with the scholarly purpose of the study (Byrne, 2022): to better understand the adoption phenomenon of AI technology by doctoral students within doctoral studies. By interlinking the themes with educational outcomes and institutional goals, the researchers aimed to explicate final themes that support the effective integration of AI technologies into doctoral education. Accordingly, each final theme was critically evaluated for its capacity to offer comprehensive insights into the AI adoption process, ensuring that it encapsulated dimensions of the phenomenon of interest. (5) Finally, each of the final themes was discussed via group email to ensure clarity and consensus among the research group.

Findings

The participants’ narrative reflections highlighted a general uncertainty regarding the adoption of AI technology within doctoral studies. Initial coding of key phrases and concepts (process codes) began to illuminate their experiences and perspectives on the integration of AI technology in their academic work with the following process codes emerging (Table 1): initial skepticism, practical applications, ethical concerns, technological dependence, AI technology within academic context, efficiency and precision, ethical implications, transformative potential, emotional responses, learning curve, integration challenges, and collaboration and discourse.

Table 1. Initial Process Codes from Participant Narrative Reflections.

Analysis of the data also revealed connections between processes, highlighting cause-and-effect or sequential relationships in process codes (Saldaña, 2021), observed both within and between classifications. For example, initial skepticism among the participants, particularly evident in one participant’s early encounters with AI tools like ChatGPT, often led to emotional responses as they grappled with the authenticity and viability of such tools. This skepticism was rooted in experiences where the AI-generated content appeared manufactured and detached from genuine human articulation, reinforcing doubts about its utility. However, as participants engaged more deeply with AI, practical applications began to demonstrate efficiency and precision, such as one participant’s use of AI for grammar checks and idea generation, which significantly influenced their perceptions of AI’s transformative potential. Ethical concerns and implications were deeply intertwined in these experiences, necessitating a balanced approach to integrating AI technology in academic contexts, particularly as another participant’s experimentation with AI raised questions about authorship and intellectual creativity. Additionally, the learning curve presented challenges, but these were mitigated through collaboration and discourse, as seen in the collective reflections and shared experiences during PhD group discussions. These discussions highlighted the importance of peer support and shared knowledge in navigating the complexities of AI integration, emphasizing the vital role of community in addressing the ethical and practical challenges posed by technological dependence.

These insights led the researcher-participants to organize structured relationships into descriptive categories (Table 2). This categorization process facilitated a better understanding of the varied dimensions of AI adoption among doctoral students. By grouping the process codes into structured relationships, the researcher-participants highlighted key areas of concern and interest that emerged from the narrative reflections. These structured relationships yielded descriptive categories that encapsulated the initial skepticism and ethical concerns voiced by participants, as well as the practical challenges and operational dynamics of integrating AI technology into academic environments. This helps to set the stage for better understanding how AI’s efficiency and precision can transform academic tasks and the broader implications for the future of research, teaching, and learning. Additionally, the emotional responses and ethical implications associated with AI adoption were contextualized, offering a holistic view of its impact on both individual and collective academic practices (Gardner, 2017). Ultimately, this structured approach aided in developing final themes that inform strategies (Dwivedi et al., 2021) to navigate the complexities of AI integration, ensuring that its adoption is both beneficial and aligned with the core values of academic scholarship.

Table 2. Structured Relationships from Process Codes into Descriptive Categories.

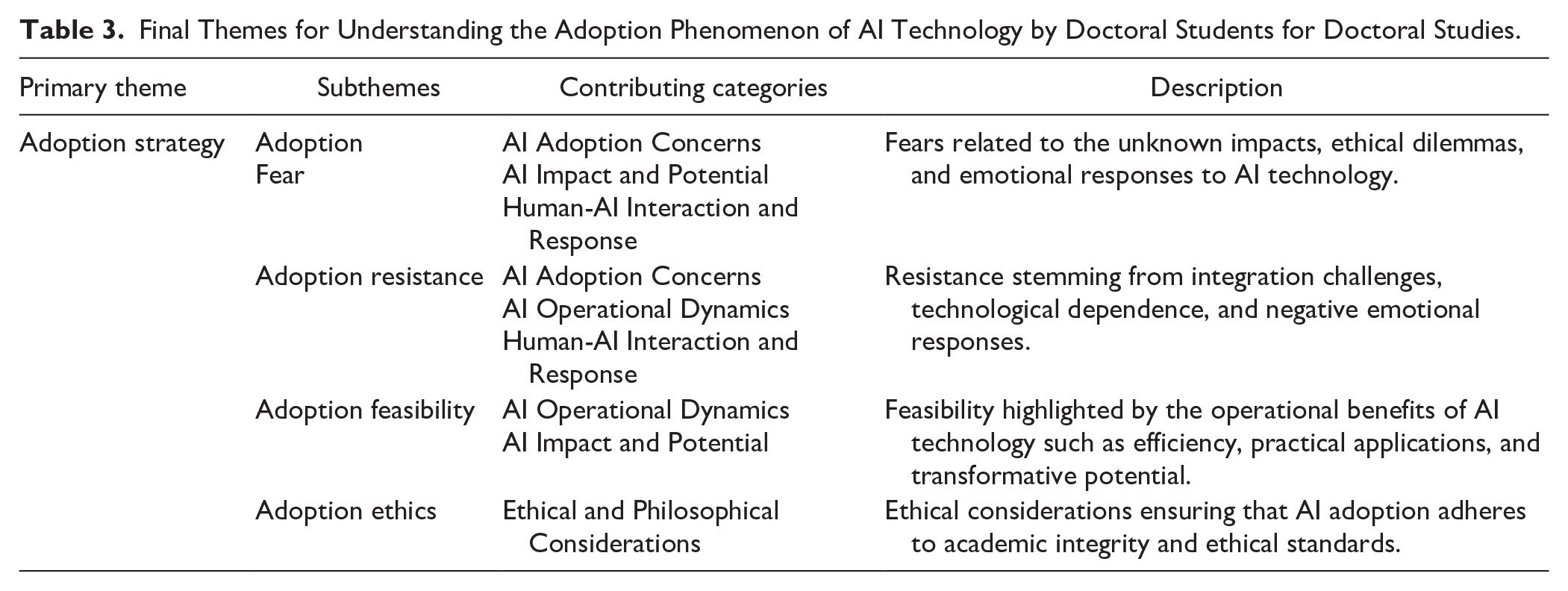

The derivation of final themes (Table 3) was approached to complement our methodological basis, focusing on the phenomenon of AI adoption by doctoral students within doctoral studies—leading to a comprehensive primary theme of adoption strategy. In this context, adoption strategy can be defined as a comprehensive framework designed to facilitate the successful integration of AI technologies within doctoral studies. This strategy encompasses understanding and addressing the emotional, ethical, operational, and practical dimensions associated with AI adoption. It includes identifying and mitigating fears (adoption fear) and resistance (adoption resistance), evaluating the feasibility of implementation (adoption feasibility), and ensuring adherence to ethical standards and academic integrity (adoption ethics). The goal of the adoption strategy is to balance the benefits of AI technology with the challenges it presents, ultimately enhancing the efficiency, depth, and scope of doctoral research and education.

Table 3. Final Themes for Understanding the Adoption Phenomenon of AI Technology by Doctoral Students for Doctoral Studies.

To explain how the process codes and descriptive categories contributed to the development and framing of subthemes, we provide the following perspectives. AI adoption concerns, such as initial skepticism and ethical dilemmas, contribute to adoption fear due to uncertainties about AI’s implications (Oprea et al., 2024). Emotional responses to AI integration could feed into this fear, especially if the rapid changes introduced by AI technology are perceived negatively (Kar & Kushwaha, 2023). Fears related to the unknown impacts and potential ethical dilemmas associated with AI technologies are significant contributors to adoption fear. Human-AI interaction and response also play a role, as doctoral students may be anxious about how AI technology could affect their academic practices and future career prospects. Integration challenges often lead to adoption resistance (Manuel Cyrus, 2023) among students and faculty, who may feel that AI technology does not fit well with existing academic practices or values. Resistance is also fostered by technological dependence and the steep learning curve required for effective AI use. If AI tools are perceived as overly complex or disruptive, students and educators are more likely to resist integrating them into their workflows.

Additionally, negative emotional responses toward the operational dynamics of AI technology, such as concerns about its impact and potential, can further fuel this resistance. The operational dynamics of AI technology, including efficiency and precision, highlight its adoption feasibility, showcasing how these tools can enhance academic productivity and learning experiences. The practical applications and transformative potential of AI technology demonstrate its significant benefits for reshaping academic research and methodologies (Bahroun et al., 2023; Mohamed Hashim et al., 2022). The feasibility of AI adoption is underscored by its ability to streamline complex tasks and improve research outcomes. Doctoral students who recognize these operational benefits are more likely to view AI technology as a valuable asset to their academic pursuits, while remaining mindful of personal and institutional academic constraints such as data integrity, responsible use, and the need for critical evaluation of AI-generated content. Finally, adoption ethics requires that AI use align with academic integrity and ethical standards. This involves addressing the ethical considerations comprehensively to ensure that AI adoption adheres to principles of fairness, transparency, and accountability. Ethical concerns related to data privacy, algorithmic bias, and the potential for AI technology to perpetuate existing inequalities must be carefully managed (Patole et al., 2024). Ensuring that AI technologies are used responsibly and ethically is crucial for maintaining the integrity of academic research and fostering trust in AI applications within doctoral studies.

Discussion

By crafting a comprehensive overview of the participant perspectives of AI adoption for doctoral studies, our final themes highlight potential barriers, facilitators, and ethical considerations that could inform future strategies for the effective integration of AI technology within doctoral programs. The primary overarching theme, adoption strategy, along with its four distinct subthemes—adoption fear, adoption resistance, adoption feasibility, and adoption ethics—serves as a foundational framework for doctoral students and educators. We urge both doctoral students and educators involved in doctoral programs to think critically about these identified themes. For doctoral students, this analysis offers valuable insights into challenges associated with integrating AI technologies into formal learning environments, potentially enhancing a management strategy for their doctoral studies. Educators tasked with integrating and evaluating AI technologies for doctoral coursework may develop a deeper understanding of the challenges their students may encounter during the adoption process. Next, we explore each theme in greater detail to foster a deeper understanding and discussion.

Adoption Strategy

A technology adoption strategy is a framework designed to facilitate the successful integration of new technologies within a specific context. In higher education, AI adoption strategies have been considered for teaching AI (Stadelmann et al., 2021), curricular development and design (Bae et al., 2020), learning analytics (Singh et al., 2022), and for automating administrative processes (Chen et al., 2020). Accordingly, it has been demonstrated that by establishing technology adoption strategies, learning institutions and educators alike can ensure that AI adoption not only enhances educational outcomes but also aligns with their broader academic and operational goals.

Making and enacting strategy is fundamentally a psychological activity (Payne, 2015). Here, strategy is offered as student decision-making as it relates to the sociocultural institution of doctoral studies. These decisions are intended to further student goals and employ a means to advance their academic pursuits. For this reason, it is crucial to not only select appropriate methods and resources to achieve desired outcomes but also to strategically conceptualize how legitimate uses of AI technologies can shape student behavior (Yorke, 2023). This concept is echoed by one participant who reflects on the complexity of integrating AI technology:

“At first, I was unsure how to even start using these tools. What would my professors think? But as I started incorporating AI into my research, I realized it was about more than just technology. It’s about how we, as students, craft our academic identities in this new landscape. It’s about striking a balance between AI-driven insights and our own intellectual contributions.”

Other such behavioral examples include research planning (Goff & Getenet, 2017), resource leveraging (Soltis et al., 2023), self-regulated learning (Lin & Wang, 2018; Moran, 2005), and time management (Lim et al., 2019). This implies that doctoral students’ efforts to structure their strategies for adopting AI technologies reflect a deeper psychological impulse: the need to impose narrative coherence on their experiences. That is, the decision-making process involved in adopting AI technologies for the participants was not only about choosing the right technologies but also about crafting a personal and professional identity in a digitally evolving landscape. This aligns with Magolda’s (2001) notion that constructing a coherent personal narrative is essential for students to make sense of technological changes impacting their academic and professional environments. Therefore, developing a structured strategy for AI adoption in doctoral studies must address both a behavioral need for order and understanding and the achievement of practical research outcomes. One participant put it like this:

“Doctoral students must find that balance between the old ways and the new to keep our work authentic and ethical. It’s like this crazy mix of the past and the future, challenging us to write the next chapter where our instincts and AI come together in a cool way.”

To deepen our understanding of AI adoption strategies, it is essential to critically examine the key subthemes: adoption fear, adoption resistance, adoption feasibility, and adoption ethics. Each of these subthemes significantly influences the comprehensive strategy for adopting AI technologies within doctoral studies, helping to navigate the complex interplay between behavior and technology (Tondeur et al., 2017). For instance, one participant expressed their initial fear and resistance:

“Honestly, the idea of AI taking over parts of my research was terrifying at first. I worried it would diminish the value of my work, make it less ‘mine.’ But as I engaged with AI tools, that fear slowly turned into curiosity and then into strategic thinking: how can I use this to my advantage while staying true to my own academic voice?”

These reflections support the importance of understanding AI adoption not just as a technical challenge but as a complex, multifaceted process that intertwines with students’ personal and professional identities.

Adoption Fear

AI adoption fear merits consideration, particularly because the literature links fear, in the form of technophobia, to the acceptance of technology (Khasawneh, 2018). With the rapid evolution of AI technologies, public fear concerning AI adoption is expected to escalate. Li and Huang (2020) expanded on AI anxiety and fear by introducing the concept of integrated fear acquisition theory within the context of AI technologies. This approach aimed to uncover the nature of technophobia, emphasizing its shared origins and outcomes with fear, and providing a deeper understanding of how these anxieties arise in response to technological advancements. This theory portrays fear as a mixed form of emotions that serves to shield individuals from potential future threats. Indeed, the participant narratives implied that adoption fear referred to the emotional and psychological apprehension or anxiety about incorporating AI technologies into academic practices. Each narrative provided valuable insights into how this fear manifested and influenced their approach to AI adoption. For instance, several participants expressed concerns about how AI technology might disrupt established methods of teaching, learning, and researching, which are deeply ingrained in academic practices. As one participant noted:

“When I first started using AI tools, my biggest fear was how my professors would react. Would they see it as a shortcut, something that undermines the hard work we put into our research? That fear made me hesitant to fully explore what AI could do for me academically.”

This sentiment reflects a broader anxiety among doctoral students about the potential dilution or obsolescence of their existing skills. Doctoral students, who often invest significant time in developing specialized research skills, may worry that AI technologies could render their hard-earned expertise redundant or less valued in an increasingly automated academic landscape (Chamorro-Premuzic, 2023). For instance, Storey (2023) expressed concerns about the potential impact of AI technologies on critical thinking skills, questioning whether their integration into educational settings might inadvertently diminish students’ abilities to analyze and evaluate information independently. This concern highlights the need to carefully assess how AI technologies are adopted in academic curricula to ensure they complement rather than replace the cognitive processes essential for rigorous academic inquiry. It is echoed by another participant who shared:

“AI feels like both a tool and a threat. On one hand, it can streamline so many tasks; on the other, I worry that it might make my analytical skills obsolete. What if I start relying too much on AI and lose my ability to think critically?”

Such fears of diminishing critical thinking skills can create barriers to embracing technologies that might otherwise enhance research efficiency and depth. Furthermore, considerations about use play a critical role in the fear of adopting AI technologies. Participants grappled with questions about the integrity of AI-driven research processes, such as the reliability of generated data, the transparency of AI methodologies, and the potential for AI technologies to perpetuate biases present in existing datasets. This is summed up by one participant who expressed:

“For me, adoption fear set in with ethical concerns; worries about unclear university policies regarding AI usage. I’ve also feared developing a technological dependence on AI, losing my own intellectual capacity and ability to think.”

These reflections illustrate how concerns regarding the integrity and transparency of AI technologies directly contribute to adoption fear, as students must evaluate how these technologies adhere to institutional guidelines and academic standards (Alqahtani et al., 2023). Hence, the phenomenon of adoption fear is compounded by the need to maintain rigor and authenticity in scholarly work, making AI adoption for doctoral studies not merely a practical choice but a behavioral decision. These fears are primarily emotional responses that can prevent doctoral students from even attempting to engage with AI technologies, regardless of the potential benefits.

Adoption Resistance

Participant responses suggested that adoption resistance involved a more active pushback or reluctance toward embracing AI technologies—where existing norms and values within the academic institution favored traditional methods over new, potentially disruptive technologies. Resistance was characterized by a deliberate choice not to adopt or support AI technologies in doctoral studies, influenced by rational analysis, emotional response, or a combination of both. As one participant explained: To give control to machines in an academic or work setting goes against everything I know or that I’ve been trained to do. Where’s the authenticity or reliability? Intentional words, not algorithms, breathe thoughtfulness, trust, and genuine connection.

This resistance is often rooted in a deeply ingrained preference for traditional methods, reflecting a broader skepticism toward the reliability and authenticity of AI-generated outputs. The participant’s concern about the lack of intentionality and human touch in AI-generated content underscores a key factor in the reluctance to embrace these technologies. A prominent factor for student resistance was the lack of familiarity with AI technologies, with participants uncertain about how to effectively incorporate them into their academic activities (Dwivedi et al., 2021). One participant pointed out that this unfamiliarity can lead to adoption resistance as students may perceive the learning curve as steep and the risk of academic failure as high: When I first tried to use AI for a project, I was overwhelmed by how complex it seemed. It felt like there was so much to learn just to get started, and the idea of possibly failing because I didn’t understand the technology made me hesitant to even try again.

This fear of failure and the perceived complexity of AI tools contribute significantly to resistance among participants when considering the integration of AI technologies into their studies. The steep learning curve, coupled with the high stakes of academic success, can make students reluctant to experiment with unfamiliar tools. Additionally, the participants reported that educators’ skepticism about whether AI technologies can truly enhance or effectively replace existing methods contributed to adoption resistance. For example, one participant reflected on the hesitation they observed among faculty members: Some of my professors are really skeptical about AI. They worry it might undermine the critical thinking skills we’re supposed to develop. If the people teaching us aren’t on board, it’s hard to feel confident about using these tools ourselves.

This skepticism among educators can significantly influence students’ willingness to adopt AI technologies in academic settings. Research by Yuan et al. (2022) demonstrated that AI-generated peer reviews, while comprehensive, are generally less constructive and less factual than human-written reviews. Findings of this kind can shape educators’ perceptions and, by extension, their willingness to adopt AI technologies in academic settings. This scenario aligns with the broader theme of adoption resistance, where skepticism about the efficacy of AI technologies compared to traditional methods can deter educators and students from embracing these technologies for doctoral studies (Alasadi & Baiz, 2023).

The feedback from participant responses indicated a clear pattern: educators and students might resist adopting AI technologies not merely out of habit or fear but because of legitimate concerns about the quality and utility of AI outputs. The fact that AI-generated texts tend to be less factual and constructive (Wach et al., 2023) could reinforce existing doubts about the ability of AI technologies to match or enhance the depth and rigor of human effort in academic evaluations. As one participant aptly summarized: I think the resistance comes from a place of protecting the integrity of our work. If AI can’t produce the same level of critical analysis that we can, then why should we trust it with something as important as our research?

However, there is evidence that educators “… are willing to adopt new technology if they believe students learn better when they are engaged with technology” (Carpenter et al., 2023, p. 29). Similarly, research has consistently demonstrated that if educators perceive an instructional method as beneficial, they are more likely to engage in adoption strategies to integrate that method into their classroom practices (Backfisch et al., 2021). By recognizing the potential advantages of a new instructional approach, educators can motivate themselves to overcome initial hurdles and resistance, thereby facilitating a smoother transition and more effective adoption for themselves and their students.

Adoption Feasibility

The subtheme adoption feasibility refers to the practicality and viability of integrating AI technologies into the existing academic and research environments of doctoral programs (Baglivo et al., 2023; Grover et al., 2022). It encompasses various factors that determine how easily and effectively AI technologies can be implemented to enhance doctoral education and research. While it is crucial to understand adoption feasibility in terms of its endpoint, implementation, this perspective may overlook the complexities inherent in the adoption process itself and how these complexities influence both the implementation and the eventual sustainability of AI technologies in doctoral studies. Because successful adoption is a precursor to successful implementation (Panzano & Roth, 2006), it becomes imperative to better understand how doctoral students approach adoption feasibility. One participant described AI adoption this way: Starting my research adventure with AI was like stepping into a room full of cool stuff. I was totally amazed not just by how AI tools made things super-efficient but how they kind of became my go-to for school stuff. Sorting through tricky data and finding new ideas for projects, AI was like my secret helper, turning the boring school stuff into something really cool!

This enthusiasm highlights the initial appeal of AI technologies, particularly in how they can simplify complex tasks and inspire new approaches to research. However, the participants also described the adoption process as typically beginning with the recognition of a specific academic or research need. This recognition is followed by a thorough exploration of viable AI technologies that could address that need. The process advances through an initial decision to experiment with a chosen AI technology and to engage in pilot testing, where the technology is applied on a small scale within specific parts of the student’s research or coursework. This allows students to observe the impacts and potential disruptions, thereby assessing practicality before advanced implementation. If the technology is deemed suitable, the process ends in a decision to integrate it more comprehensively into their doctoral studies (Damanpour & Schneider, 2006). One participant explained this phased approach: Before fully committing to using AI in all my research, I tried it out in smaller tasks, like data analysis for a single project. This way, I could see how it worked without risking too much. Once I saw how much time it saved me and how accurate it was, I knew it was something I wanted to use more extensively.

Understanding this sequence enhances the ability to pinpoint and tackle feasibility challenges and facilitate the actual adoption of AI technologies.

The participants also highlighted that compliance with institutional guidelines was a significant aspect that influenced the feasibility of AI adoption. However, guidelines concerning data privacy, ethical use of AI technologies, and research integrity were interpreted as vague with limited direction on how AI technologies may be integrated into doctoral research and coursework. One participant expressed frustration with this lack of clarity: I wanted to make sure I was following all the rules, but when it came to AI, the guidelines were really unclear. There was nothing specific about how or when to use these tools in my research, so I was constantly second-guessing myself.

Furthermore, it was observed that variations in professors’ adoption philosophies introduced further complexity to grasping adoption feasibility. Embracing new processes becomes particularly challenging when decision-makers within organizations fail to effectively communicate the details of the change process (Reio, 2015). As a result, doctoral students must have a thorough understanding of these guidelines as part of their assessment of AI adoption. Another participant remarked on this challenge by saying “… some professors are all for using AI technology, while others are totally against it. This makes it hard to know what’s acceptable and what’s not, especially when the institution doesn’t provide clear guidance.”

Feasibility must address the alignment of AI technologies with the program’s strategic academic goals, ensuring that their integration actively contributes to enhancing research quality, teaching methodologies, and learning outcomes (Pedro et al., 2019). This understanding helps with anticipating and mitigating scholarly challenges that could derail the adoption process. Additionally, it ensures that student use of AI technologies not only enhances their academic work but also complies with all necessary academic standards, which is critical for the legitimacy and credibility of their research outcomes. Therefore, the feasibility of adopting AI technology hinges not only on assessing its practical implementation but also on a thorough understanding of, and adherence to, the (often underdeveloped) guidelines set by institutions, departments, and individual professors regarding decision-making for AI adoption within academic and research environments (Gupta et al., 2022).

Adoption Ethics

Navigating the ethical challenges of AI technologies in higher education has been an active area of research over the last few years (Holmes et al., 2022). Much of the ethical concern centers on data privacy, algorithmic bias, and the integrity of automated decision-making processes. These concerns underscore the need for robust ethical frameworks and guidelines that can govern the deployment and use of AI technologies in educational settings. The call for a deeper academic framework is further reinforced by Hunkenschroer and Luetge (2022), who stressed the scarcity of papers offering a theoretical underpinning for ethical discussions in AI adoption, and by Prikshat et al. (2023), who noted the lack of comprehensive ethical frameworks addressing the roles and responsibilities of diverse stakeholders throughout AI implementation. Ensuring that AI applications respect the privacy rights of students and faculty, provide equitable outcomes, and maintain transparency in their operations is crucial (Nguyen et al., 2023). Therefore, as the adoption of AI technology continues to expand in higher education, institutions must prioritize the development and implementation of comprehensive ethical standards to safeguard against potential abuses and to foster an environment of trust and fairness that is conducive to the academic mission.

The participants indicated that they struggled with the ethical adoption of AI technologies for doctoral studies and that this struggle was compounded by concerns that such adoption may dilute academic rigor. One participant shared: I worry that by relying too much on AI, I might miss out on really learning the material. It’s easy to let the AI do the heavy lifting, but then what am I actually gaining? There’s a fear that we could end up with a shallow understanding of our subjects.

This apprehension stemmed from the potential for AI technologies to perform tasks traditionally requiring deep cognitive engagement, such as data analysis and critical thinking, thus potentially reducing the need for participants to develop these fundamental skills themselves. There was a real fear that reliance on AI technologies for complex intellectual tasks might lead to a superficial understanding of subject matter and weaken the thoroughness of scholarly training. Another participant expressed this concern: Using AI to handle complex tasks like data analysis feels like cheating, almost like I’m bypassing the hard work that would help me really understand the research. It’s a slippery slope, and I’m worried it might compromise the depth of my academic experience.

Consequently, it is imperative for educational institutions to ethically balance the integration of AI technologies with the maintenance of rigorous academic standards (Nguyen et al., 2023). The educator who helped guide this study of doctoral students stated this: As we continue to adopt AI technologies in higher education, it is important that we develop comprehensive ethical frameworks to guide its deployment and use. This ensures that AI applications not only respect privacy rights but also deliver equitable outcomes and maintain operational transparency. Amidst the rapid adoption of these technologies, we must also safeguard academic rigor, ensuring that AI technologies aid rather than replace critical cognitive processes necessary for scholarly training. The ultimate goal should be to harness AI technologies as a tool that complements traditional learning methods, thereby enhancing both the quality and integrity of doctoral education.

By developing curricula that incorporate AI technologies as a supplementary tool rather than a replacement for critical academic processes, educators can equip doctoral students with the ethical acumen needed to navigate the complexities of AI usage in their respective fields. This ensures that their approach not only enhances their research but also adheres to the standards of ethical conduct expected from doctoral scholarship (Berg, 2016; Halse & Bansel, 2012). One participant put it well: It’s all about balance—using AI to help, but not to the point where it takes over. We need to be smart about how we integrate these tools into our work, making sure we’re still learning and growing as scholars.

Table 4 summarizes the implications for both doctoral students and educators, organized by the primary theme of adoption strategy and its subthemes: adoption fear, adoption resistance, adoption feasibility, and adoption ethics. These insights provide a framework for understanding the barriers to and facilitators of AI integration and can guide future adoption strategies.

Table 4. Summary of Implications for Doctoral Students and Educators.

Implications for Practice

The integration of AI technologies into doctoral studies presents complex challenges and unique opportunities for doctoral students. It necessitates a thoughtful approach to adoption that accounts for strategy, fear, resistance, feasibility, and ethics. In particular, it is important to doctoral students that academic institutions develop clear strategic plans for AI adoption that include both short-term and long-term goals (Hou et al., 2012). This involves not only choosing the right technological tools but also addressing the broader impact on the academic culture and individual learning processes (Laurillard, 2013). Effective planning helps align AI adoption with institutional goals and student learning objectives. Comprehensive training programs for both students and faculty would enhance familiarity with AI technologies, focusing on both technical skills and ethical considerations. This training should also include strategies for integrating AI technologies in a way that complements existing skills rather than replacing them, ensuring that AI technologies are leveraged to enhance, rather than undermine, academic rigor.

Furthermore, doctoral students need academic institutions to develop and continuously update ethical guidelines governing the use of AI technologies, addressing privacy, bias, and the integrity of research. Making these guidelines transparent and accessible to all stakeholders in the academic community is important for maintaining trust and ethical standards in the use of AI technologies (Laurillard, 2013). Establishing support systems will also aid students in navigating the complexities of AI adoption. This support should address both emotional and practical challenges, providing a safety net as students explore and integrate new technologies into their academic work. Finally, students need a safe pathway for making ongoing adjustments and improvements, ensuring that AI technologies meet the evolving needs of their academic pursuits.

Implications for Research

There is a need for more research to explore the psychological and behavioral aspects of AI adoption (Emon et al., 2023; Nwankwo et al., 2021). Studies should focus on how AI technologies influence the formation of academic identity and the narrative coherence of students’ academic and professional lives. More empirical research is needed to evaluate the efficacy of AI technologies in enhancing learning outcomes, academic rigor, and research productivity. This research should also examine the potential cognitive downsides of over-reliance on AI technologies for critical thinking and problem-solving. Longitudinal studies of the effects of AI adoption on academic practices and outcomes would provide deeper insights into the sustainability and evolution of AI technology use in higher education.

Investigating how AI adoption affects different demographic groups within the academic community is vital (Kelly et al., 2023), with a focus on ensuring equitable access and addressing potential biases that may affect underrepresented groups. Moreover, engaging in comparative studies that examine the differences in AI adoption strategies across various academic disciplines and institutions can provide a broader understanding of the factors that facilitate or hinder successful integration.

Conclusion

The unique contribution of this study lies in its CAE exploration of student experiences with AI adoption within doctoral studies. As a practical lesson learned, our findings highlight the critical need to develop AI adoption strategies that address doctoral students’ concerns regarding fear, resistance, feasibility, and ethics. The findings suggest a balanced approach to AI adoption depends on developing comprehensive strategies that are informed by a deep understanding of both the technological capabilities and the human factors involved. Accordingly, academic institutions and higher education classrooms must prioritize the creation of environments that support ethical practices and guidelines, foster technological adeptness, and encourage critical engagement with AI technologies. By doing so, doctoral students can better navigate the complexities of AI adoption, ensuring that these technologies are used to enhance, rather than undermine, the academic integrity and rigor of doctoral education.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.