Abstract

Background:

Systematic and comprehensive data acquisition from the electronic health record (EHR) is critical to the quality of data used to improve patient care. We described EHR tools, workflows, and data elements that contribute to core quality metrics in the Type 1 Diabetes Exchange Quality Improvement Collaborative (T1DX-QI).

Method:

We conducted interviews with quality improvement (QI) representatives at 13 T1DX-QI centers about their EHR tools, clinic workflows, and data elements.

Results:

All centers had access to structured data tools, nine had access to patient questionnaires, and two had integration with a device platform. There was significant variability in EHR tools, workflows, and data elements; as a result, the number of available metrics ranged from four to 17 per center. Thirteen centers had information about glycemic outcomes and diabetes technology use. Seven centers had measurements of additional self-management behaviors. Centers captured patient-reported outcomes including social determinants of health (n = 9), depression (n = 11), transition to adult care (n = 7), and diabetes distress (n = 3). Various stakeholders captured data, including health care professionals, educators, medical assistants, and QI coordinators. A paired staffing model in clinic encounters distributed the burden of data capture across the health care team and was associated with a higher number of available data elements.

Conclusions:

The lack of standardization in EHR tools, workflows, and data elements captured resulted in variability in available metrics across centers. Further work is needed to support measurement and subsequent improvement in quality of care for individuals with type 1 diabetes.

Introduction

The T1D Exchange Quality Improvement Collaborative (T1DX-QI), formed in 2016, represents a learning health system of more than 50 pediatric and adult diabetes centers actively participating in interventions to improve processes and outcomes of care for individuals with type 1 diabetes.1-3 Deidentified electronic health record (EHR) data contributed by the centers and securely stored in the T1DX-QI database are a core part of the Collaborative, critical for measuring patient processes of care and outcomes, establishing benchmarks of care, supporting peer-to-peer learning, and ensuring high reliability of the system over time.1 The aggregated data are made visible to members of the collaborative through the T1D Exchange Portal, which allows centers to track the progress of their patient population on key metrics and to compare themselves with other centers and with the collaborative as a whole. The number of centers contributing to the T1DX-QI database grew from 10 in 2016 to 55 as of 2023, and the number of data elements being contributed grew as well.

To create the database, the collaborative created a set of database specifications and worked with each center to map their data to the standard. Although laboratory studies, patient demographics, encounters, and medications are captured in a structured manner across EHRs, many of the key metrics that are important for diabetes care (e.g. diabetes device data and self-management metrics) are captured uniquely at each center. The variability of data specifications across centers caused the data mapping process to become a large and laborious undertaking, typically taking between 3 and 12 months at each center.

We sought to draw attention to the structure and design of EHR tools and workflows in the T1DX-QI collaborative. Typically, the design is an afterthought, the “pain point” that health care professionals (HCPs; physicians or advanced practice providers) often complain about regarding EHR use. However, these tools and workflows affect both the quantity and quality of data being collected by centers and are key for ensuring that data elements for key metrics are transformed into a “clean” standardized data source for the collaborative. Our objective was to describe the EHR tools, workflows, and core data elements captured across the collaborative, to inform best practices for data-driven clinic workflows and optimal design of the EHR for quality improvement (QI).

Methods

The study involved 13 centers from the collaborative contributing data to the T1DX-QI. Participation was offered to all centers in the T1DX-QI; those included were the centers that were available for an interview. These centers encompassed a broad geographic representation, used at least two different EHR brands, and represented differing levels of informatics capacity: some were mature in their data tools and workflows, and others were nascent. For this analysis, we asked centers to share screenshots of EHR tools/interfaces used to capture and contribute to 15 core data elements that are queried from all T1DX-QI centers plus 2 additional elements. Core data elements included glycemic metrics (time in range and time in low range), diabetes self-management habits including the six associated with improved glycemic outcomes (continuous glucose monitor and pump use, blood glucose checks four times per day, timing of insulin, number of boluses per day, data review and insulin adjustments between visits),4 patient-reported outcomes (i.e. social determinants of health [SDOH], depression, and diabetes distress), a documented plan for transition to an adult center, and vital patient-level data elements (e.g. demographics, diabetes type, and date of diagnosis).

We then conducted interviews over Zoom with a clinical representative from each site, reviewing the screenshots and capturing clinic workflows, which are defined as “a set of tasks, grouped chronologically into processes, and the set of people or resources needed for those tasks that are necessary to accomplish a given goal.”5 Two authors (JL and DE) conducted the interviews and synthesized the findings and summary statistics related to EHR tools used, workflows, and core data elements captured. Centers were classified as having (1) structured data tools if they had flowsheets or electronic forms filled out by health care personnel, (2) questionnaires if they were integrated into the EHR platform (captured by health care team members or directly from patients), and (3) automated data tools if a technology platform directly inputted the metric into the EHR without human involvement. This study was approved by the Western Institutional Review Board (IRB), and each clinic obtained its own approvals per institutional policy.
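The three-way classification described above can be sketched in a few lines of Python. This is only an illustration of the stated rules, not the authors' actual procedure: the `CenterTools` record and its field names are hypothetical and do not come from the study.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class CenterTools:
    """Hypothetical summary of one center's EHR tooling (field names are illustrative)."""
    has_flowsheets_or_forms: bool        # flowsheets/electronic forms filled out by health care personnel
    has_integrated_questionnaires: bool  # questionnaires integrated into the EHR platform
    has_device_auto_import: bool         # a platform writes metrics to the EHR without human involvement


def classify_tools(center: CenterTools) -> set[str]:
    """Return every tool category a center falls into; categories are not mutually exclusive."""
    categories: set[str] = set()
    if center.has_flowsheets_or_forms:
        categories.add("structured data tools")
    if center.has_integrated_questionnaires:
        categories.add("questionnaires")
    if center.has_device_auto_import:
        categories.add("automated data tools")
    return categories


# A made-up center with flowsheets and questionnaires but no device integration.
example = CenterTools(
    has_flowsheets_or_forms=True,
    has_integrated_questionnaires=True,
    has_device_auto_import=False,
)
print(sorted(classify_tools(example)))
```

Returning a set rather than a single label reflects that a center can hold more than one type of tool at once.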

Results

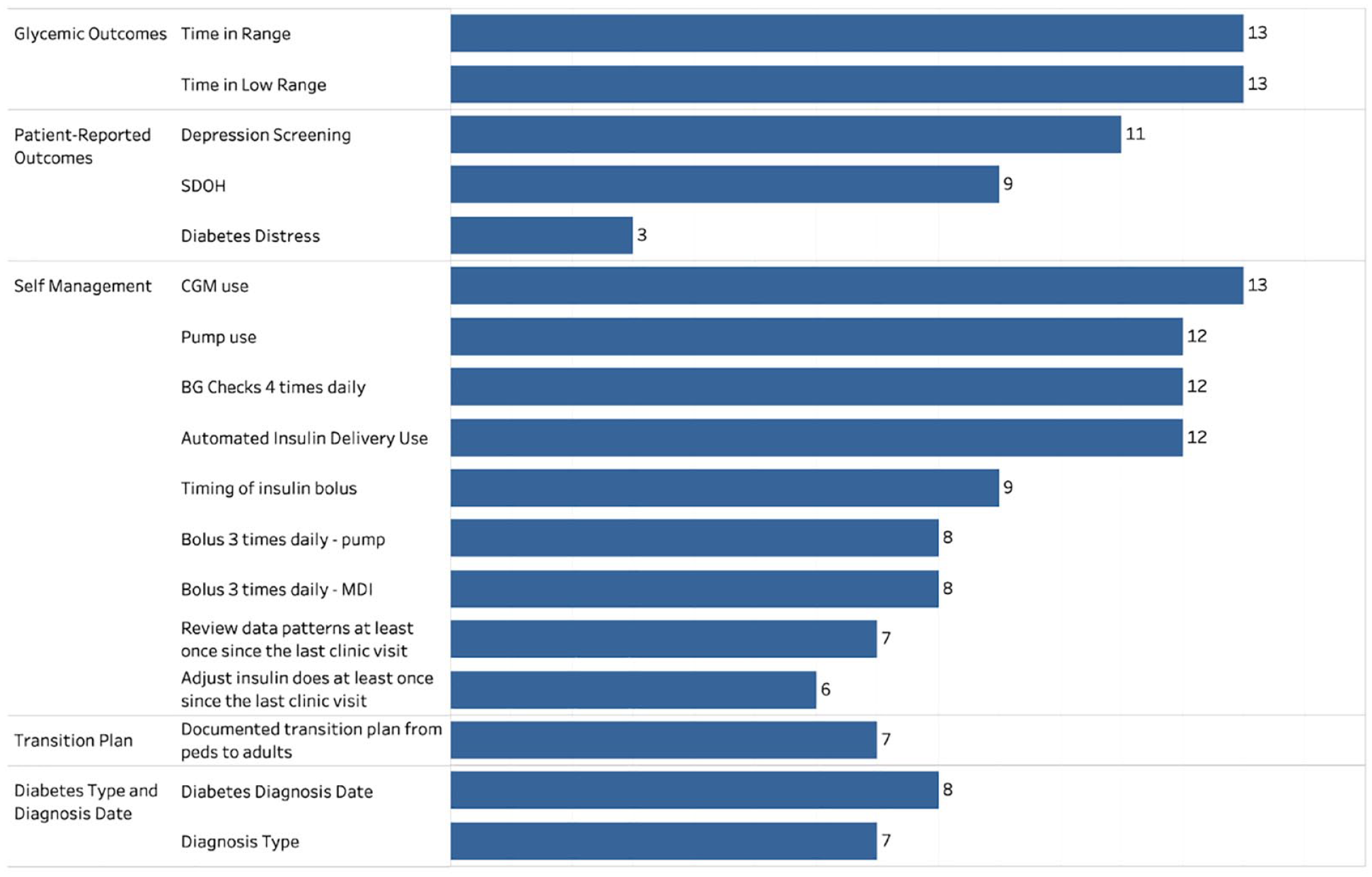

Thirteen centers (12 pediatric and one adult; 10 using Epic and 3 using Cerner) provided screenshots and engaged in interviews. Figure 1 shows the number of centers with structured EHR data elements by domain and metric. Data elements were grouped into descriptive domains of similarly characterized data elements. All centers had structured items for glycemic outcomes. Of the self-management “six habits,” a greater number of centers had information about technology use, such as continuous glucose monitoring (CGM) and insulin pump use, but fewer centers had measurement of behaviors like bolusing, timing of insulin delivery, reviewing data patterns, or adjusting insulin doses. A majority of centers captured data about SDOH, depression, and transition from pediatric to adult diabetes care, but just a few centers were measuring diabetes distress. Eight centers had an EHR field to capture diabetes type, and seven centers had an EHR field to capture diagnosis date. The remaining centers relied on capturing ICD-10 codes in the problem list, billing codes, or medical history. At least five centers reported that they have either a research assistant or a diabetes team member verify the diagnosis and the diagnosis date.

Figure 1. The number of centers with structured electronic health record data elements, by domain and metric.

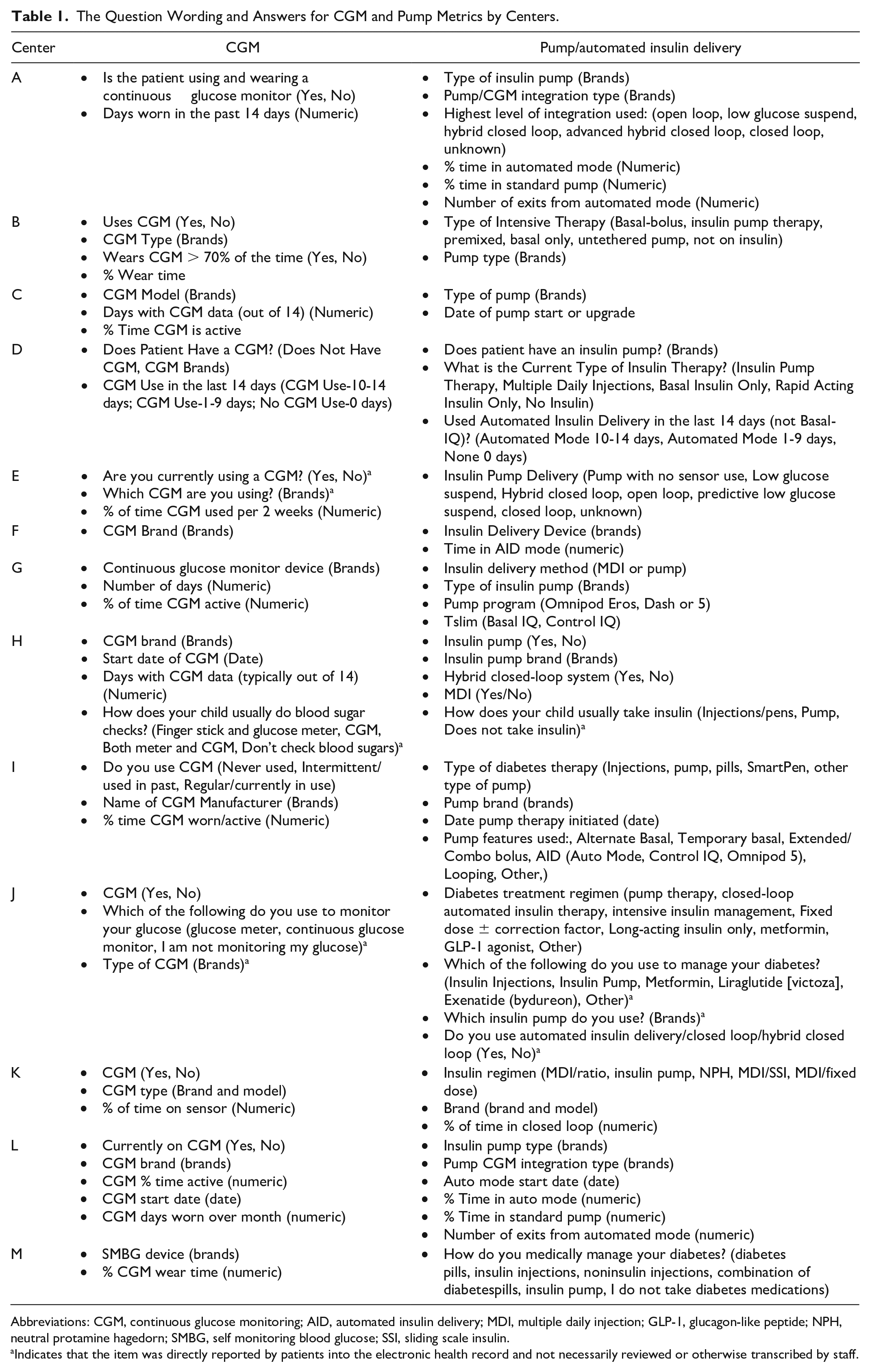

There was wide variability in the questions used for structured data elements, which impacted the availability of data elements in the database. Table 1 illustrates this variability, showing the question wording and answers for CGM, insulin pump, and automated insulin delivery (AID) metrics across centers. Some centers asked only about the brand of CGM used, whereas other centers also asked about recent CGM use (i.e. within the last 14 days). This impacted the completeness of data in the overall T1DX-QI database, as many more centers were able to contribute information about CGM company and model, but fewer were able to capture information about use or percentage of time CGM was used. Similarly, although most centers asked about pump brand, only a subset could identify recent use of a pump or AID system (i.e. in the last 14 days or percentage of time used).

Table 1. The Question Wording and Answers for CGM and Pump Metrics by Center.

Abbreviations: CGM, continuous glucose monitoring; AID, automated insulin delivery; MDI, multiple daily injection; GLP-1, glucagon-like peptide-1; NPH, neutral protamine Hagedorn; SMBG, self-monitoring of blood glucose; SSI, sliding scale insulin.

Indicates that the item was directly reported by patients into the electronic health record and not necessarily reviewed or otherwise transcribed by staff.

Regarding patient-reported outcomes, at least 11 centers performed depression screening using the PHQ-9, PHQ-4, PHQ-8, ASQ4, or the PROMIS pediatric short form. Nine centers collected SDOH in a structured format. Over half of the centers had adolescent transition metrics, although the measures used varied across centers. Two centers recorded readiness for transition to adult care using the Readiness of Emerging Adults with Diabetes Diagnosed in Youth (READDY) tool,6 one center used a general documentation tool for transition across the hospital, one center asked if transition readiness was discussed, and one center asked a series of questions about transition-related competencies. Only three centers were measuring diabetes distress, using either the Teen or Child Problem Areas in Diabetes (PAID) or the Teen PAID.7

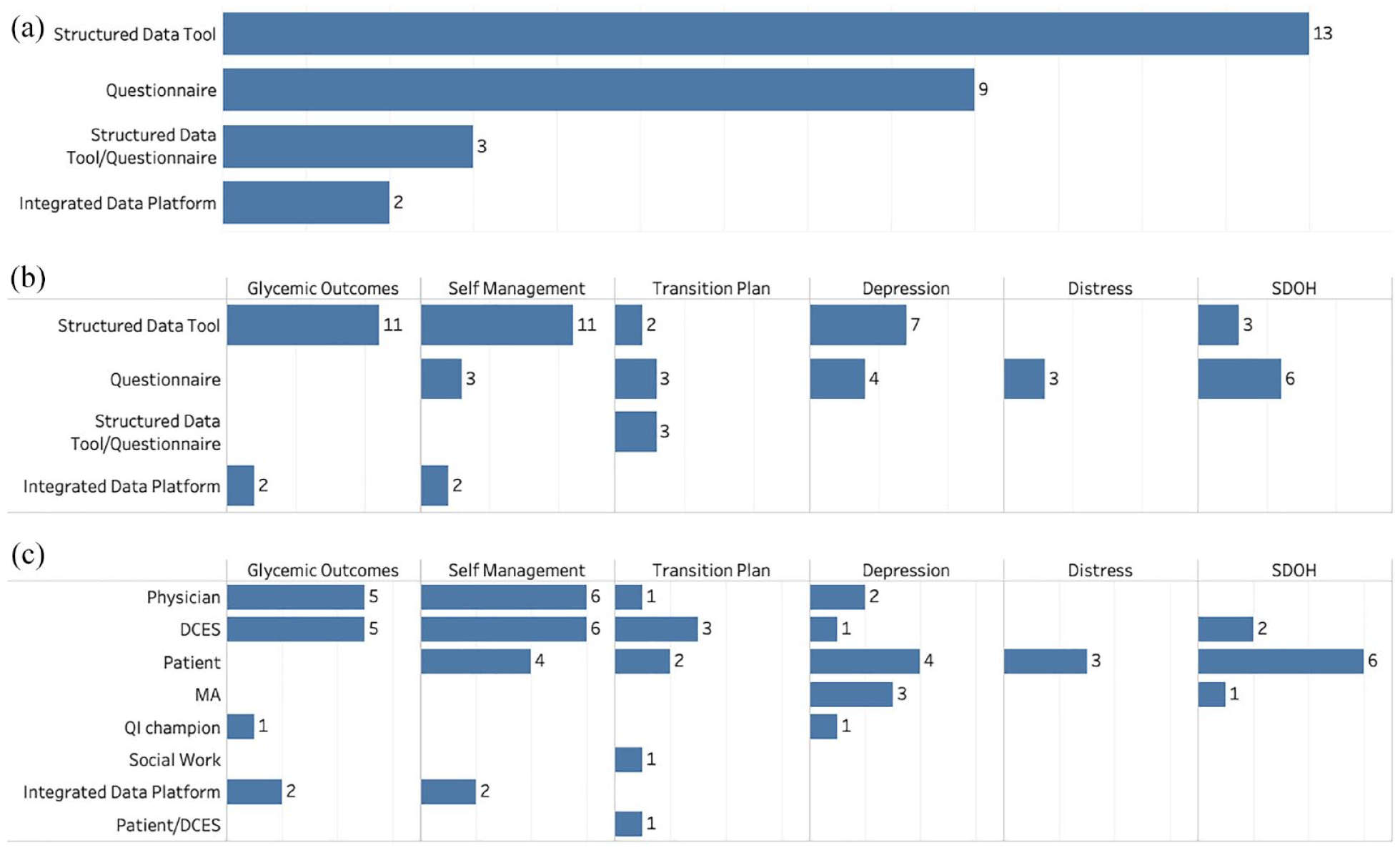

Figure 2a shows the number of centers with different EHR tools. All 13 centers had health care personnel-facing data entry tools such as flowsheets or electronic forms. These tools allowed for the capture of diabetes-specific structured data at clinical visits that could be later shared for analytic purposes. Nine centers had questionnaires directly filled out by the patient, either using the patient portal or clinic tablets (native to the EHR), or through integration of a REDCap database, a commercial survey tool which sends a survey to patient mobile phones. Of these, three brought the questionnaire elements into the HCP structured data entry tool, so that HCPs could edit or accept the patient-reported data, whereas the other centers did not require HCP validation of questionnaires. Only two centers had automated integration of measures from a diabetes device platform directly to the EHR. All centers that had structured data tools were able to automatically import these responses into their notes, easing the burden of documentation for HCPs. To ensure accurate data (for example, regarding diabetes type and date of diagnosis), three centers had a “hard stop” requiring HCPs to fill out these data elements before they could close their clinic encounters.

Figure 2. (a) The number of centers with different EHR tools. (b) Data elements captured by each EHR tool. (c) Stakeholders responsible for capturing each data element.

Figure 2b shows specific data elements captured by the different EHR tools. Health care personnel-facing structured data tools captured glycemic outcomes, self-management metrics, and depression tools, whereas questionnaires captured patient-reported outcomes such as depression, distress, and SDOH. Figure 2c shows the different stakeholders who were responsible for capturing data elements. In the vast majority of centers, HCPs and diabetes care and education specialists (DCES) or nurses were the individuals most commonly responsible for entering data using flowsheets/electronic forms, but there was variability across centers. Patients directly reported psychosocial measures (depression, distress, or SDOH) and self-management metrics using questionnaires. Medical assistants were largely involved with inputting data for depression and SDOH screening. Interestingly, two centers had QI coordinators who were responsible for inputting the data for certain metrics.

Depression screening had the most diverse set of stakeholders and workflows involved in data capture, including HCPs, DCESs, patients, medical assistants, and QI champions. For example, some centers had patients answering questions using the patient portal or tablets in clinic, which would go directly into the EHR, but at least 7 of the centers had a workflow which started with the patient filling out a paper questionnaire in clinic for depression screening, and ended with a medical assistant (n = 3), a DCES (n = 1), or an HCP (n = 2) entering the patient responses into a flowsheet. One center using a REDCap survey automatically imported the results into the EHR. The other center using a REDCap survey had a QI coordinator who was responsible for monitoring an email inbox where the questionnaire responses would be sent in real time during clinic, inputting the screening result into a flowsheet to record the score and generate the charge, and sending a secure message to the HCPs during the clinic visit. The other center that used a QI coordinator for data capture had them review the diabetes device download and enter glucose metrics into a flowsheet on behalf of the HCP.

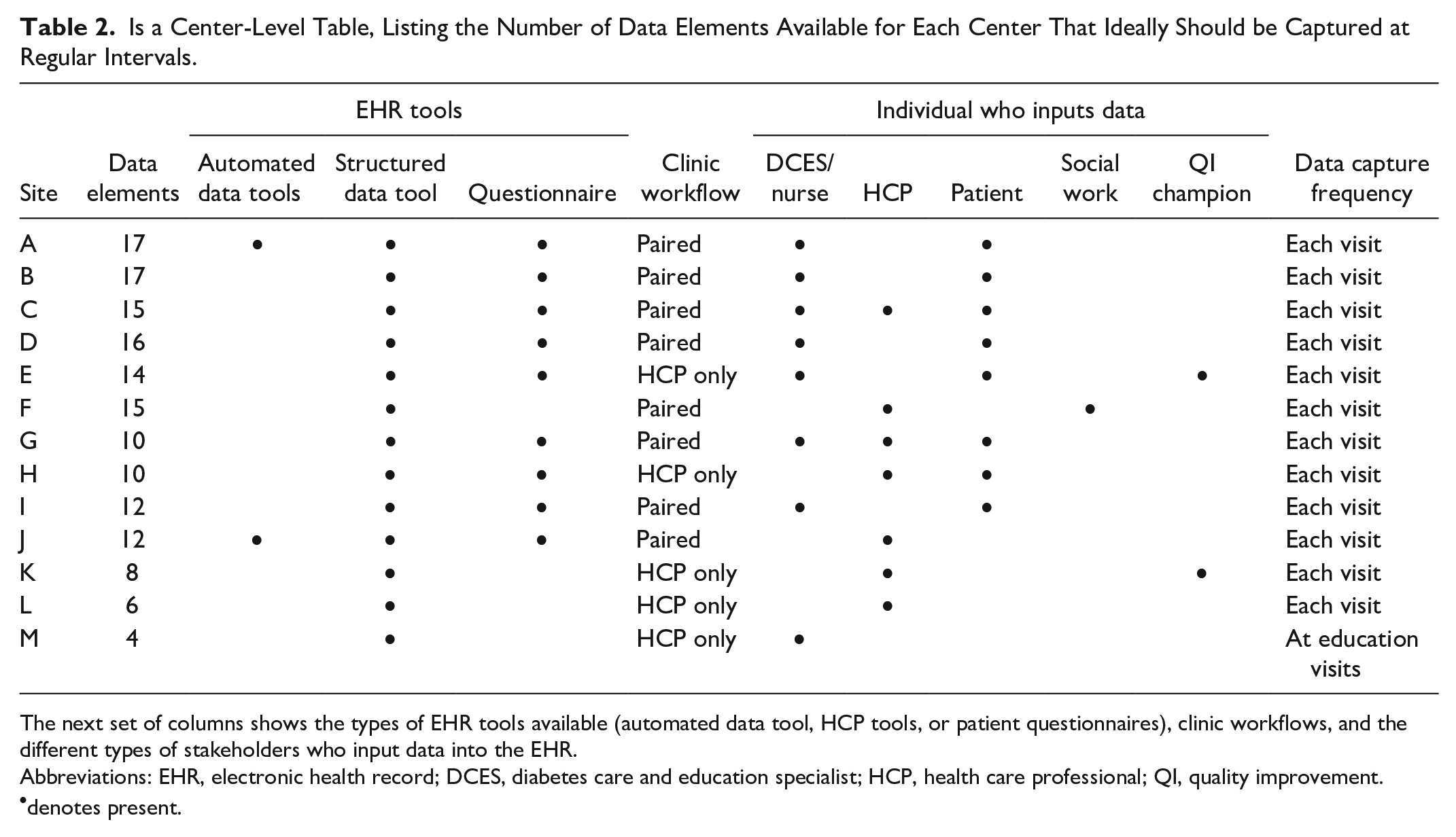

Table 2 is a center-level table, showing the number of encounter-level data elements reported, EHR tools available and individuals who input data at each site. The number of data elements available varied greatly among centers, ranging from 4 to 17.

Table 2. Center-Level Table Listing the Number of Data Elements Available for Each Center That Ideally Should Be Captured at Regular Intervals.

The next set of columns shows the types of EHR tools available (automated data tool, HCP tools, or patient questionnaires), clinic workflows, and the different types of stakeholders who input data into the EHR.

Abbreviations: EHR, electronic health record; DCES, diabetes care and education specialist; HCP, health care professional; QI, quality improvement.

denotes present.

There were two main types of clinic workflows: eight centers had a paired DCES/nurse-HCP workflow, in which a DCES/nurse was available to see the patient in addition to the HCP at every diabetes clinic visit; five centers had an HCP-only workflow. This structure largely determined responsibility for data capture. Among the seven centers with the highest number of available data elements, six had a paired clinic workflow and six shared data input responsibility. In contrast, among the six centers with fewer data elements available, only two had a paired clinic workflow, and three had DCESs or patients entering data.
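As a quick arithmetic check on the comparison above, the short Python sketch below converts the reported counts into proportions. The counts are taken from the text; the grouping labels and variable names are illustrative only. With 13 centers this is purely descriptive and does not amount to a statistical test.

```python
# Counts of centers with a paired DCES/nurse-HCP workflow, as reported in the text.
groups = {
    "more data elements": {"centers": 7, "paired_workflow": 6},
    "fewer data elements": {"centers": 6, "paired_workflow": 2},
}


def paired_share(group: dict) -> float:
    """Fraction of centers in a group that used a paired clinic workflow."""
    return group["paired_workflow"] / group["centers"]


for name, group in groups.items():
    print(f"{name}: {group['paired_workflow']}/{group['centers']} paired ({paired_share(group):.0%})")
# more data elements: 6/7 paired (86%)
# fewer data elements: 2/6 paired (33%)

# Consistency check against the overall totals (eight paired centers out of 13).
total_paired = sum(g["paired_workflow"] for g in groups.values())
total_centers = sum(g["centers"] for g in groups.values())
print(total_paired, total_centers)  # 8 13
```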

Finally, the existence of a structured data item does not guarantee that data will be available. Twelve of the centers captured data at every visit. The exception was center M, where DCESs used the structured tools only for diabetes education visits, which do not occur at systematic or standard intervals for patients. Hence, the majority of data were missing for that center's patient population.

Discussion

Systematic and comprehensive data acquisition from the EHR to capture information about overall processes and outcomes of care for diabetes is critical for QI, but in the current state, the design of EHR tools and workflows inadequately supports HCPs and teams wishing to deliver high-quality patient care.8,9

Our findings demonstrate that the current design of EHR tools, in terms of metric specification, tool design, and integration into workflows, is variable, lacks standardization, and negatively impacts data availability. This challenge is even greater in the context of diabetes care, which is typically delivered and supported by multidisciplinary teams. The need to access and integrate patient-reported diabetes device data and patient-reported outcomes as part of diabetes care represents an additional barrier.

The ability of centers to report on a metric depends on the type of question that is asked, the frequency with which it is asked, and the consistency with which the team captures the data. Based on our analysis, paired clinic workflows appear to be key to ensuring more comprehensive data capture. Sites that had a staffing model to support joint DCES involvement in care had DCESs entering some or all of the data elements, which distributed the burden of data collection across the team. In contrast, sites that had an HCP-only workflow at clinic visits could rely only on HCPs to perform the data capture, which multiple centers subjectively reported as a challenge for reliable and regular use of the tools during visits. As a result, sites reported deliberately minimizing the number of data elements requested to reduce the burden, making certain items required before closing the note, or relying on other workarounds on the back end, such as involving other stakeholders (e.g. QI coordinators or medical assistants) to ensure reliable entry of the data.

Many sites now have the ability to capture patient-reported outcomes from their patients through automated questionnaires, either native to or integrated with the EHR. It is well recognized that addressing depression and diabetes distress is a key component of the care required for achieving optimal diabetes management, and tools like these increase the capacity of sites to assess and capture patient-reported outcomes systematically at the individual and population level. This automation decreases the work of data capture because it does not rely on documentation by clinicians, allows for measurement and scoring, and permits identification of high-risk patients in real time during the visit. However, not all sites reported having this capability, owing to a lack of local resources such as access to tablets in the clinic.

There was a particularly diverse set of EHR workflows for depression screening, with a large number of centers using paper questionnaires and a wide variety of individuals inputting the data. Medicolegal risk and patient portal rules were reported as factors influencing workflow choice. For example, patient-reported questionnaires are often released to patients up to 1 week before a clinic visit, but health systems do not have individuals monitoring for positive responses ahead of time, which poses a risk if a patient affirms suicidality before arriving. Furthermore, many adolescents do not have their own portal accounts but rather a parent proxy account; health systems may not release the questionnaire to proxy accounts because of privacy concerns.

The variation in questions captured by centers contributes to differences in data availability across the wider collaborative. For example, of 28 T1DX-QI centers contributing data, 20 can provide information on the CGM brand and model, 14 can provide information on a CGM start date, and 15 can provide information on CGM use. These differences raise the question of standardization across centers. There are promising developments at one of the EHR vendors, including the ability to share prebuilt data tools between centers, and to create universal electronic forms that can be automatically installed at an upgrade without requiring additional IT resources, mitigating the need for teams to “wait in the queue” for IT support. However, this does require agreement and standardization of data elements across centers. There is ongoing work to support data standards for CGM integration into the EHR;10 a similar type of measurement and standardization is needed to capture additional diabetes metrics apart from glycemic outcomes, such as key self-management metrics like the “six habits.”
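To make the CGM availability figures cited above easier to compare, the following Python sketch expresses each count as a share of the 28 contributing centers. The counts come from the text; the dictionary keys and function name are illustrative only.

```python
TOTAL_CENTERS = 28  # T1DX-QI centers contributing data, as cited in the text

# Number of centers able to provide each CGM-related data element.
centers_reporting = {
    "CGM brand and model": 20,
    "CGM start date": 14,
    "CGM use": 15,
}


def availability(n_reporting: int, n_total: int = TOTAL_CENTERS) -> float:
    """Share of contributing centers that can provide a given data element."""
    return n_reporting / n_total


shares = {element: availability(n) for element, n in centers_reporting.items()}
for element, share in shares.items():
    print(f"{element}: {share:.0%}")
# CGM brand and model: 71%
# CGM start date: 50%
# CGM use: 54%
```

Put this way, roughly half of the contributing centers cannot report on CGM start date or CGM use, even though most can report the device brand and model.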

We did not evaluate data quality, but other studies have demonstrated that both the clinical workflows and the clinical stakeholders involved in generating EHR data impact clinical data availability and quality.11 Optimally designed workflows in the EHR are central for improving HCP efficiency and reducing HCP burnout, but unfortunately in the transition from paper records to the EHR, most health systems simply replicated their tools and workflows from paper, constructing the “note” as the artifact of focus for the HCP. A redesign of local workflows with change management is needed to pivot HCPs from the old “note-centric” approach to the EHR to a more data-oriented approach. Learning health systems will ultimately benefit from a “data-in-once” strategy,12 in which data are captured once at the point of care and then are used for clinical care, operations, QI, and research. A standardized set of structured tools to capture key metrics at the point of care can support shared decision-making between patients and HCPs, standardize processes of care for all patients across multiple centers, ensure systematic capture and learning from data through entities like the T1DX-QI, and reduce documentation burden for clinicians, as the data can be incorporated into a note through a simple shortcut or smartphrase. These tools are available through one of the major vendors and can reduce the work of information technology build at individual centers, but realizing this benefit will require the diabetes community to converge on standard elements and workflows and to adopt these tools in practice.

This study has several limitations. First, we did not talk to all centers but rather to a convenience sample of centers in the T1DX-QI, which may be skewed toward those with more health IT capacity in the system; however, the wide variation in the availability of tools and metrics in this group was still notable. Second, we did not do video capture or electronic capture of workflows due to privacy concerns and therefore had to rely on screenshots and narrative descriptions of workflows, which may not generalize to all HCPs at the local site. Access to an EHR test environment could have been an alternative, but this was not universally accessible to individuals at all sites. Third, we did not evaluate data quality but focused on data availability at centers. Fourth, we did not ask about availability of tablets in the clinics, which is another technology tool for capturing patient-reported outcomes. Fifth, there was a greater representation of one specific brand of EHR among centers, but this is simply reflective of EHR use in the larger group of centers in the T1DX-QI collaborative.

The EHR is an essential tool for measurement of overall quality of care and outcomes in learning health systems like the T1DX-QI collaborative, but the EHRs used by centers are not currently optimized for data collection. Improvements in the design of measures, tools, and workflows to support seamless and standardized data collection and learning are needed, so that centers can focus on action toward improving health outcomes and quality of life for all patients with T1D.

Footnotes

Acknowledgements

We thank Andrew Lavik for his contributions.

Abbreviations

AID, automated insulin delivery; CGM, continuous glucose monitoring; DCES, diabetes care and education specialist; EHR, electronic health record; HCP, health care professional; QI, quality improvement; SDOH, social determinants of health; T1DX-QI, Type 1 Diabetes Exchange Quality Improvement Collaborative.

Declaration of Conflicting Interests

The author(s) declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: JL is on the GoodRx medical advisory board, is a consultant for Tandem Diabetes Care and has participated on a Sanofi Digital Advisory Board. OE is an advisor for Medtronic Diabetes and Sanofi Diabetes. He has received research support through his institution (T1D Exchange) from Abbott, Vertex, Eli Lilly, Dexcom and Medtronic. RW receives research support through her institution (Johns Hopkins) from Novo Nordisk as the center PI of a trial.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The T1DX-QI Collaborative is supported through the Leona M. and Harry B. Helmsley Charitable Trust. Support was also received from P30DK020572 (MDRC), P30DK092926 (MCDTR), and P30DK089503 (MNORC) from the National Institute of Diabetes and Digestive and Kidney Diseases and the Elizabeth Weiser Caswell Diabetes Institute at the University of Michigan.