Abstract

Background:

In this work, we leverage state-of-the-art deep learning–based algorithms for blood glucose (BG) forecasting in people with type 1 diabetes.

Methods:

We propose stacks of convolutional neural network and long short-term memory units to predict BG levels for 30-, 60-, and 90-minute prediction horizons (PHs), given historical glucose measurements, meal information, and insulin intakes. The evaluation was performed on two data sets, Replace-BG and DIAdvisor, representative of free-living conditions and an in-hospital setting, respectively.

Results:

For 90-minute PH, our model obtained mean absolute error of 17.30 ± 2.07 and 18.23 ± 2.97 mg/dL, root mean square error of 23.45 ± 3.18 and 25.12 ± 4.65 mg/dL, coefficient of determination of 84.13 ± 4.22% and 82.34 ± 4.54%, and in terms of the continuous glucose-error grid analysis 94.71 ± 3.89% and 91.71 ± 4.32% accurate predictions, 1.81 ± 1.06% and 2.51 ± 0.86% benign errors, and 3.47 ± 1.12% and 5.78 ± 1.72% erroneous predictions, for Replace-BG and DIAdvisor data sets, respectively.

Conclusion:

Our investigation demonstrated that our method achieved superior glucose forecasting compared with existing approaches in the literature, and thanks to its generalizability showed potential for real-life applications.

Keywords

Introduction

Over the past 40 years, major efforts have been undertaken in the field of diabetes technology to develop decision support systems and automated insulin delivery systems for blood glucose (BG) management in individuals with type 1 diabetes (T1D). These systems are generally built around a model of glucose-insulin metabolism that forecasts future BG levels, which are in turn used to compute therapy interventions.

In such contributions, past glucose values, typically recorded by a continuous glucose monitoring (CGM) device, along with various physiological data including carbohydrate (CHO) intakes, fast-acting and slow-acting insulin doses, 1 or recorded physical activity (PA) and daily routines 2 are considered to predict future BG levels for different prediction horizons (PHs).

Numerous approaches have been taken in the diabetes technology literature to propose glucose predictive models, the majority being based on polynomial and state-space models.3-10 Thanks to the increased availability of real-life data collected for long-period trials, machine learning (ML) techniques have become increasingly popular and have been successfully employed to solve the BG prediction problem. In particular, early prior studies exploited random forest, multivariate adaptive regression splines,

11

More recently, the diabetes technology community has witnessed a booming interest in the application of artificial neural networks to BG prediction, mainly due to their ability to perform automatic feature extraction, which eliminates the need for feature engineering. For example, Zhu et al 18 proposed a convolutional neural network (CNN)-based model, and Li et al 19 introduced GluNet, a deep neural network (DNN) framework that leverages dilated convolutional layers to improve on the CNN performance, and demonstrated that GluNet outperformed rival models, including autoregressive with exogenous inputs (ARX), SVR, and neural network models, for predicting glucose on in silico patients. Mirshekarian et al 20 proposed a recurrent neural network (RNN) architecture with long short-term memory (LSTM) units, trained and evaluated on a data set of five patients containing approximately 400 days’ worth of data, for predicting BG up to a 60-minute PH. They claimed that an LSTM trained on raw data from real patients may outperform SVR and polynomial models that were trained using manually derived features from the same data set. Li et al 21 introduced a convolutional recurrent neural network (CRNN) to estimate the BG level for PHs of up to 60 minutes based on prior CGM data and information on meal and insulin intakes, presenting results for both simulated and real patients.

Some of these studies have used simulated data to evaluate their proposed methodologies. Although simulated data are important for evaluating the feasibility of the models, they lack some of the challenges present in real-life data, such as missing data points, extreme or unusual environmental conditions, medication interferences, and outliers. Moreover, in cases where data from real T1D patients were used, the sample size was small, which, albeit adequate for initial evaluation, precludes application to a large number of T1D patients due to the high intersubject variability in glucose trends.

That said, our objective in this research is to build on our past work 22 to develop a new forecasting model for BG, built using a CNN-LSTM stacked architecture, that overcomes the limitation of manual feature engineering, and to evaluate its effectiveness on real patients’ data collected both in-hospital and in the outpatient setting for predicting future BG at PHs of 30, 60, and 90 minutes.

The remainder of the article is structured as follows. The "Experimental Conditions" section describes the details and preprocessing of the data sets. The "Methods" section details our proposed architecture and prediction approach. The obtained results are then presented in the "Experimental Results" section and discussed in detail in the "Discussion" section. Finally, the "Conclusion and Future Work" section summarizes this study.

Experimental Conditions

Two distinct data sets of patients with T1D were used in this study, each of which contained information about meals, insulin boluses (slow and fast acting), and CGM values:

Data Preprocessing

Missing CGM data points in the Replace-BG data set, for gaps shorter than 60 minutes, were estimated using linear interpolation. 25 No interpolation was attempted when the interval between two consecutive points was greater than 60 minutes, as this could cause the model to learn the estimated sequence rather than the genuine values; in such cases, the entire day containing the gap was discarded. 26 Following that, the CGM variable was uniformly resampled every 15 minutes in both data sets, and insulin and meal characteristics were averaged within each 15-minute time interval. Next, we normalized each time series with respect to its own minimum and maximum values, so that the entire data set lay in the range [0, 1], to improve the prediction accuracy. 27 At the output of the model, an inverse transform was used to map the values back to their original scale.
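The interpolation and normalization steps described above can be illustrated with a minimal sketch. It is not the implementation used in this study: the function names, the use of `None` to mark missing samples, and the example values are assumptions made for illustration only.

```python
from typing import List, Optional, Sequence, Tuple


def interpolate_short_gaps(
    times_min: Sequence[float],
    values: Sequence[Optional[float]],
    max_gap_min: float = 60.0,
) -> List[Optional[float]]:
    """Linearly fill missing samples (None) between two valid readings,
    but only when those readings are less than max_gap_min minutes apart."""
    filled = list(values)
    known = [i for i, v in enumerate(values) if v is not None]
    for left, right in zip(known, known[1:]):
        if right - left == 1:
            continue  # no missing sample between these two readings
        span = times_min[right] - times_min[left]
        if span >= max_gap_min:
            continue  # long gap: left unfilled (the text discards that day)
        for k in range(left + 1, right):
            frac = (times_min[k] - times_min[left]) / span
            filled[k] = values[left] + frac * (values[right] - values[left])
    return filled


def min_max_scale(series: Sequence[float]) -> Tuple[List[float], float, float]:
    """Scale a series to [0, 1] using its own minimum and maximum."""
    lo, hi = min(series), max(series)
    if hi == lo:
        raise ValueError("constant series cannot be min-max scaled")
    return [(x - lo) / (hi - lo) for x in series], lo, hi


def min_max_inverse(scaled: Sequence[float], lo: float, hi: float) -> List[float]:
    """Map scaled values back to the original units (for example, mg/dL)."""
    return [s * (hi - lo) + lo for s in scaled]
```

Keeping the per-series minimum and maximum alongside the scaled values is what makes the inverse transform at the model output possible.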

Train-Test Splitting: Forward Chaining (FC)

In time-series prediction problems, conventional cross-validation based on random train/test splitting cannot be used because there is a temporal dependency between the data samples. Hence, to account for this temporal dependency as well as to avoid data leakage from training to test sets, we employ

To train the model, the training set is divided into 80/20 training and validation subsets using the FC algorithm, which allows for an unbiased evaluation of the model’s fit to the training data during the training process and helps ensure consistent performance.
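As a rough illustration of time-ordered splitting, the sketch below shows a generic expanding-window forward-chaining scheme and a simple chronological 80/20 split. The fold construction, the function names, and the number of folds are assumptions; the exact FC configuration used in this study may differ.

```python
from typing import Iterator, Tuple


def forward_chaining_splits(
    n_samples: int, n_folds: int
) -> Iterator[Tuple[range, range]]:
    """Yield (train_indices, validation_indices) pairs in which the training
    block always precedes the validation block in time."""
    fold = n_samples // (n_folds + 1)
    if fold == 0:
        raise ValueError("not enough samples for the requested number of folds")
    for k in range(1, n_folds + 1):
        train_end = k * fold
        # The last fold absorbs any remainder so that no sample is dropped.
        val_end = n_samples if k == n_folds else train_end + fold
        yield range(0, train_end), range(train_end, val_end)


def chronological_split(
    n_samples: int, train_fraction: float = 0.8
) -> Tuple[range, range]:
    """Split indices so that the first part is training and the rest validation."""
    cut = int(n_samples * train_fraction)
    return range(0, cut), range(cut, n_samples)
```

In both helpers every validation index is strictly later than every training index, which is the property that prevents leakage of future information into training.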

Methods

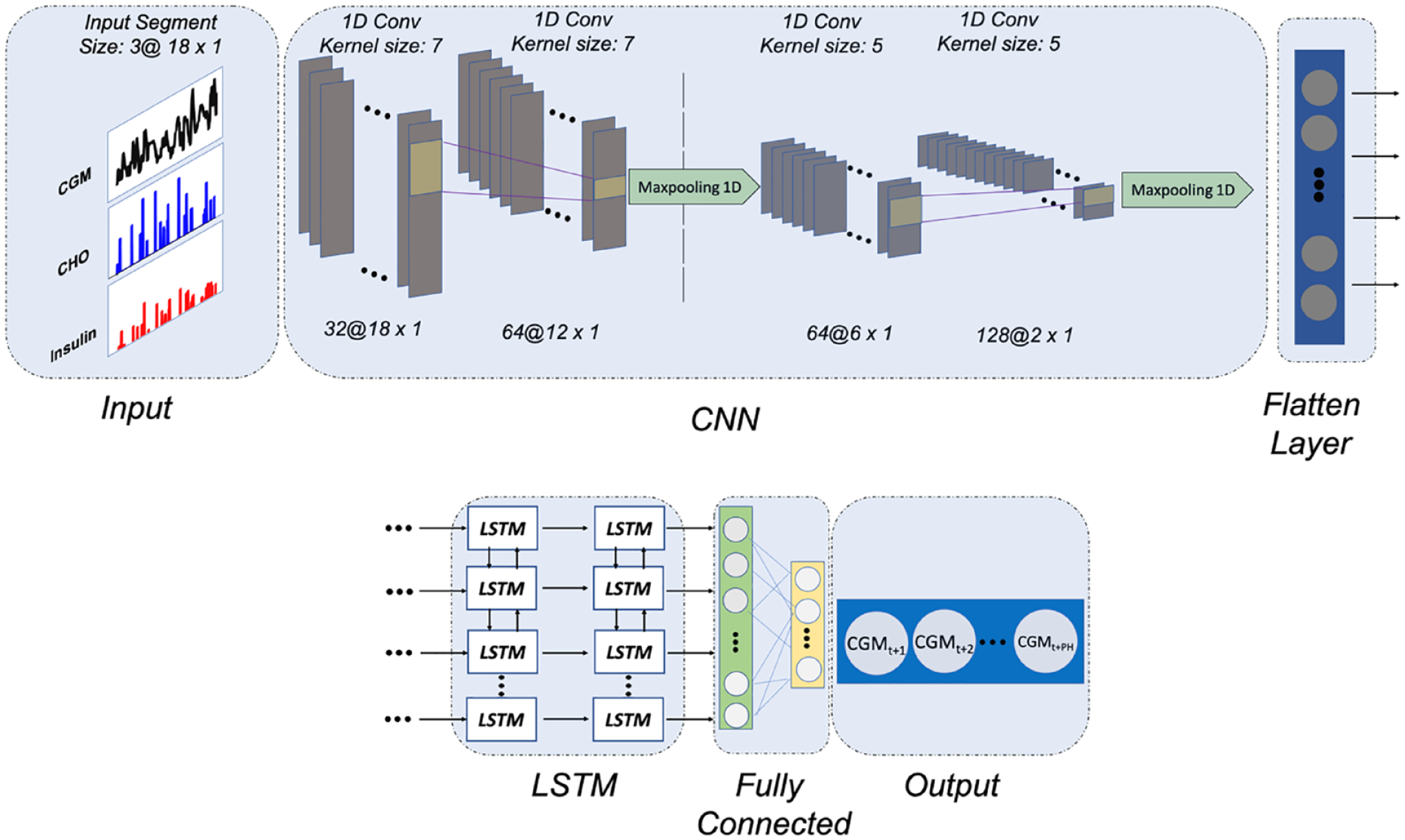

Figure 1 depicts the proposed CNN-LSTM model. Windowed Samples of past data with length three times the PH (

where

The proposed CNN-LSTM architecture for 90-minute PH BG prediction.
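The windowing of past data can be sketched as follows, assuming the 15-minute sampling described in the "Data Preprocessing" subsection and a history length of three times the PH. The sketch is univariate for brevity, whereas the model also receives meal and insulin inputs; `make_windows` and its parameters are hypothetical names.

```python
from typing import List, Sequence, Tuple


def make_windows(
    series: Sequence[float],
    ph_min: int,
    step_min: int = 15,
    history_factor: int = 3,
) -> Tuple[List[List[float]], List[float]]:
    """Build (history window, target) pairs from a uniformly sampled series.

    The history covers history_factor * PH minutes; the target is the sample
    located PH minutes after the last sample of the history window.
    """
    horizon = ph_min // step_min          # PH expressed in samples
    history = history_factor * horizon    # window length in samples
    inputs: List[List[float]] = []
    targets: List[float] = []
    for start in range(len(series) - history - horizon + 1):
        end = start + history
        inputs.append(list(series[start:end]))
        targets.append(series[end + horizon - 1])
    return inputs, targets
```

For a 90-minute PH this gives windows of 18 samples (270 minutes) and a target 6 samples after the end of each window.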

To avoid overfitting the model to the training data set and to obtain a more generalizable model, dropout layers are used between consecutive LSTM layers. In addition, after each convolutional layer, a batch normalization operation is performed to re-center and rescale the layer’s input, reducing the internal covariate shift.
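To make the re-centering and rescaling concrete, the following is a minimal sketch of the training-mode batch normalization computation on a small batch. It is a generic illustration rather than the layer used in this study; `gamma`, `beta`, and `eps` stand for the usual scale, shift, and numerical-stability terms, and their default values here are assumptions.

```python
import math
from typing import List, Sequence


def batch_norm(
    batch: Sequence[Sequence[float]],
    gamma: float = 1.0,
    beta: float = 0.0,
    eps: float = 1e-5,
) -> List[List[float]]:
    """Normalize each feature (column) of a batch to zero mean and unit
    variance, then apply the scale gamma and the shift beta."""
    n = len(batch)
    n_features = len(batch[0])
    out = [[0.0] * n_features for _ in range(n)]
    for j in range(n_features):
        column = [row[j] for row in batch]
        mean = sum(column) / n
        var = sum((x - mean) ** 2 for x in column) / n
        scale = gamma / math.sqrt(var + eps)
        for i in range(n):
            out[i][j] = (batch[i][j] - mean) * scale + beta
    return out
```

Features with very different ranges, such as glucose values and insulin doses, end up on a comparable scale after this step.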

Training Hyperparameters

We use the TensorFlow 2.0 framework 29 to implement the proposed CNN-LSTM model. Hyperparameters were tuned manually using expert knowledge: more than 20 different sets of hyperparameters, including number of neurons, activation functions, optimizers, learning rates, and batch sizes, were tested to retain control over the process and to see how different hyperparameter scenarios affect the model performance. Due to the length differences between the two data sets, batch sizes of 32 and 256 are used to optimize the parameters using the root mean square propagation method with an initial learning rate of 0.0001 and a moving average value of 0.9. Training is carried out for up to 500 epochs, with early stopping based on monitoring changes in the validation loss over a 50-epoch period.
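The early-stopping rule can be sketched in plain Python as below. The sketch assumes that the 50-epoch period acts as a patience window (training stops once the validation loss has not improved for that many epochs); the function name, the `min_delta` parameter, and the return convention are illustrative choices, not details taken from this study.

```python
from typing import Sequence, Tuple


def early_stopping_epoch(
    val_losses: Sequence[float],
    patience: int = 50,
    max_epochs: int = 500,
    min_delta: float = 0.0,
) -> Tuple[int, int]:
    """Return (last_epoch_run, best_epoch), both zero-based.

    Training stops once the validation loss has failed to improve on its
    best value for `patience` consecutive epochs, or after `max_epochs`.
    """
    best = float("inf")
    best_epoch = -1
    for epoch, loss in enumerate(val_losses[:max_epochs]):
        if loss < best - min_delta:
            best, best_epoch = loss, epoch
        elif epoch - best_epoch >= patience:
            return epoch, best_epoch
    return min(len(val_losses), max_epochs) - 1, best_epoch
```

In practice the weights from the best epoch would typically be restored after stopping, which is why the helper reports both indices.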

To evaluate the accuracy of the prediction, three metrics were employed: MAE, the root mean square error (RMSE), and the coefficient of determination (R2), given in Equations (1-3):

where

The choice of the abovementioned metrics enables us to compare our suggested predictor to previously published methods. In addition, to assess the suggested algorithm’s clinical acceptability, we used the continuous glucose-error grid analysis (CG-EGA).
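For reference, the three accuracy metrics can be computed as in the sketch below, which uses their standard textbook definitions. The function names are hypothetical, and R2 is expressed as a percentage to match the way it is reported in this article.

```python
import math
from typing import Sequence


def mae(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Mean absolute error, in the units of the inputs (for example, mg/dL)."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def rmse(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Root mean square error, in the units of the inputs."""
    return math.sqrt(
        sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)
    )


def r2_percent(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Coefficient of determination, expressed as a percentage."""
    mean_true = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return 100.0 * (1.0 - ss_res / ss_tot)
```

Because RMSE squares the residuals before averaging, it penalizes large errors more heavily than MAE, which is why the two are usually reported together.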

Comparison With Other Methods

The predictive performance of our proposed CNN-LSTM model was compared against the ARX in,6 the SVR model proposed in,32 the LSTM model and the CRNN model proposed in.21

Autoregressive with exogenous inputs is a good reference model since it has been used in many studies in the diabetes literature. Moreover, the choice of SVR models was motivated by the fact that, among ML methods, SVR has demonstrated promising clinical acceptability,33 whereas LSTM, designed with the same architecture as the LSTM section of the proposed CNN-LSTM model, allows us to demonstrate the advantage of the added convolutional layers for prediction. In addition, since CRNN, which comprises three convolutional layers and one LSTM layer, and its modifications have shown promising results in various studies,21,34 we implemented it based on the code repository provided in.34

For the SVR model, we use the radial-basis function as the kernel function, with a value of 0.0002 and

Experimental Results

Population-Wise Analysis

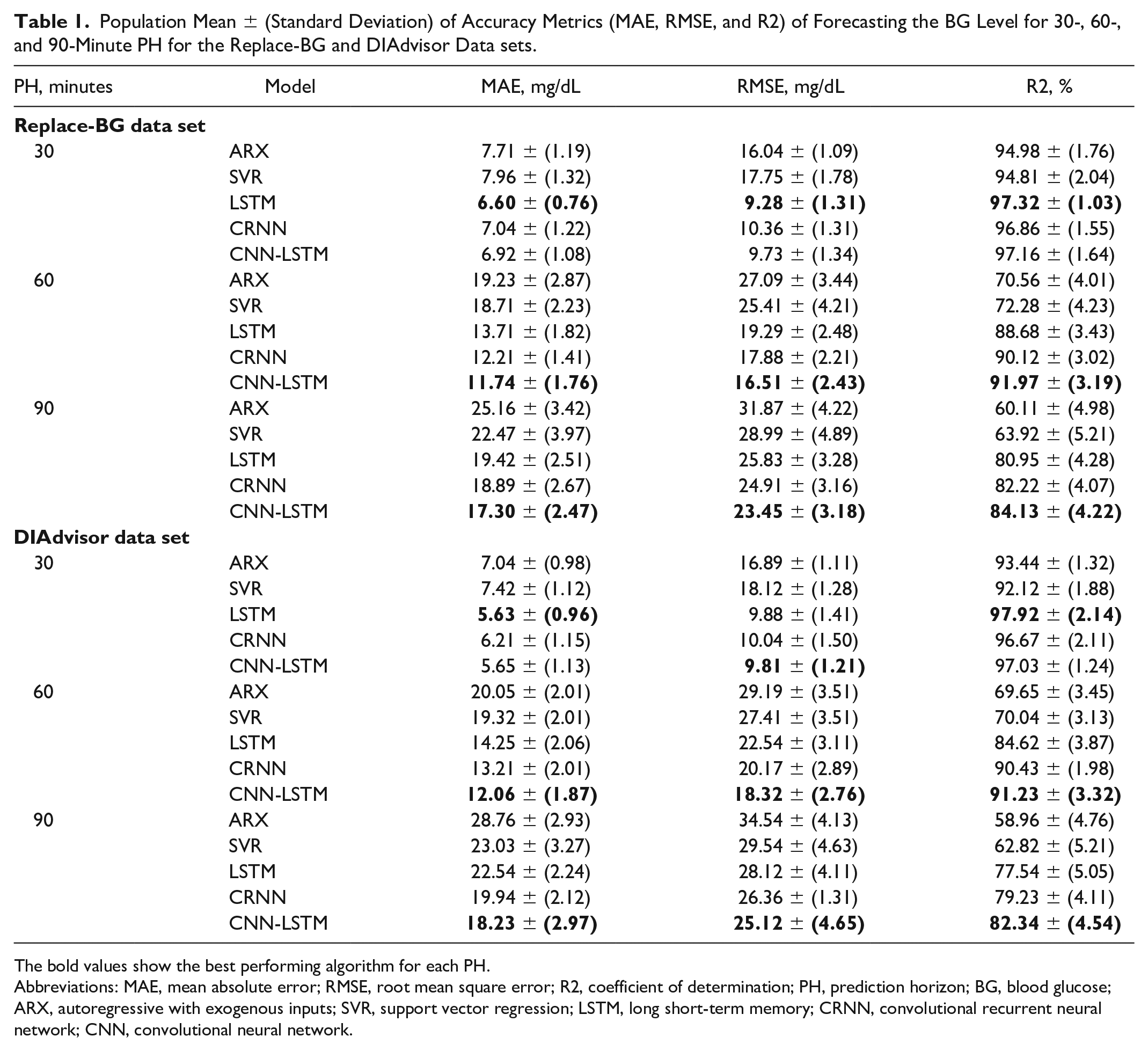

One of the major challenges for the glucose predictive models is the intersubject variability of the effect of insulin intake, meals, and other events on glucose dynamics. In this study, given the two data sets with large sample size, we can investigate the robustness of our model in dealing with this challenge. Table 1 shows population mean and standard deviation (STD) values of MAE (mg/dL), RMSE (mg/dL), and R2 (%) metrics when predicting future BG level for all T1D patients in both data sets. The highlighted values show the best performing algorithm for each PH. We observe that the performance of the proposed CNN-LSTM model is comparable with that of the LSTM for 30-minute PH for both data sets; however, for longer PHs, our method consistently outperforms all the other models by significantly reducing MAE and RMSE and increasing R2 values, in both data sets.

Population Mean ± (Standard Deviation) of Accuracy Metrics (MAE, RMSE, and R2) of Forecasting the BG Level for 30-, 60-, and 90-Minute PH for the Replace-BG and DIAdvisor Data sets.

The bold values show the best performing algorithm for each PH.

Abbreviations: MAE, mean absolute error; RMSE, root mean square error; R2, coefficient of determination; PH, prediction horizon; BG, blood glucose; ARX, autoregressive with exogenous inputs; SVR, support vector regression; LSTM, long short-term memory; CRNN, convolutional recurrent neural network; CNN, convolutional neural network.

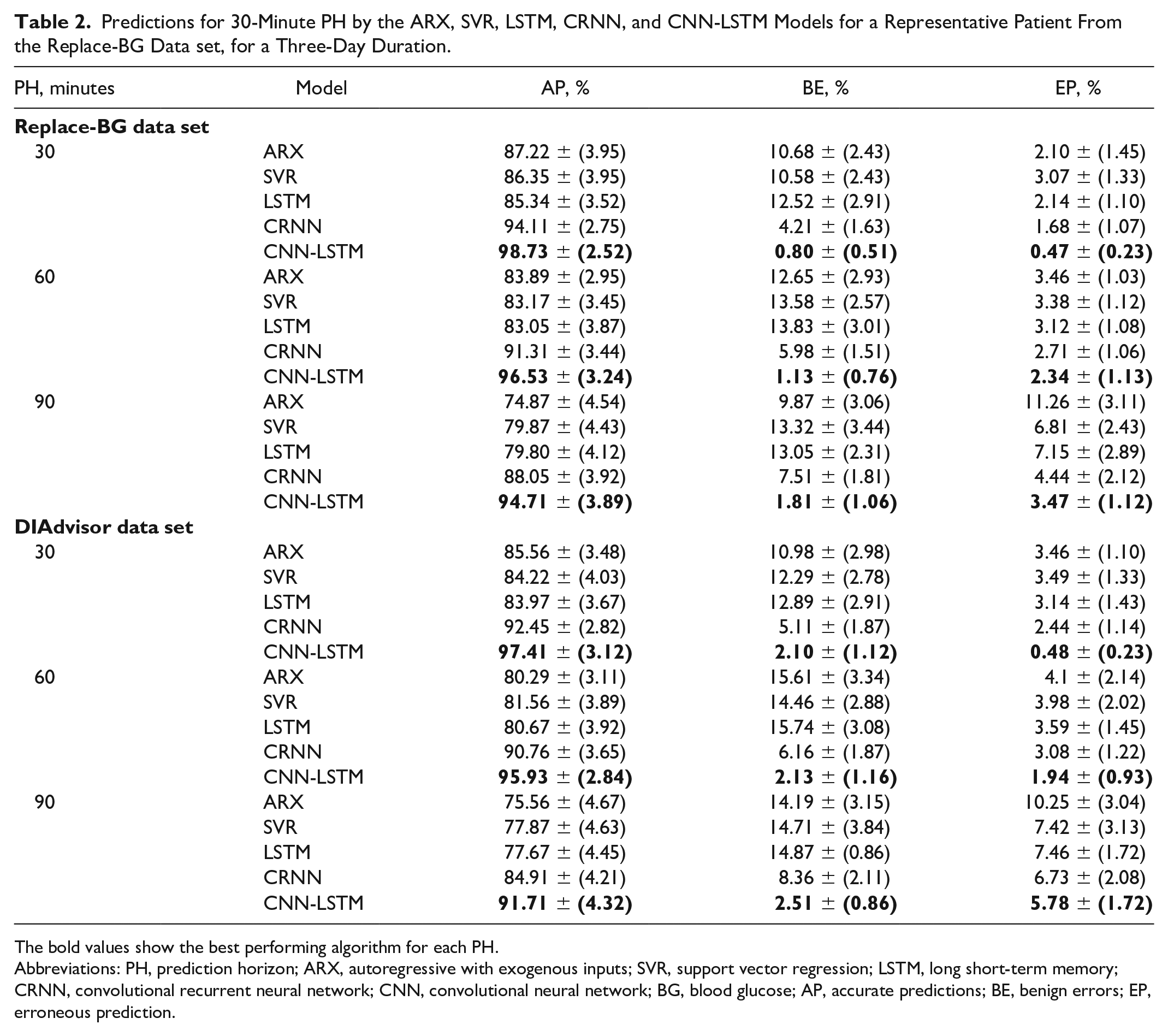

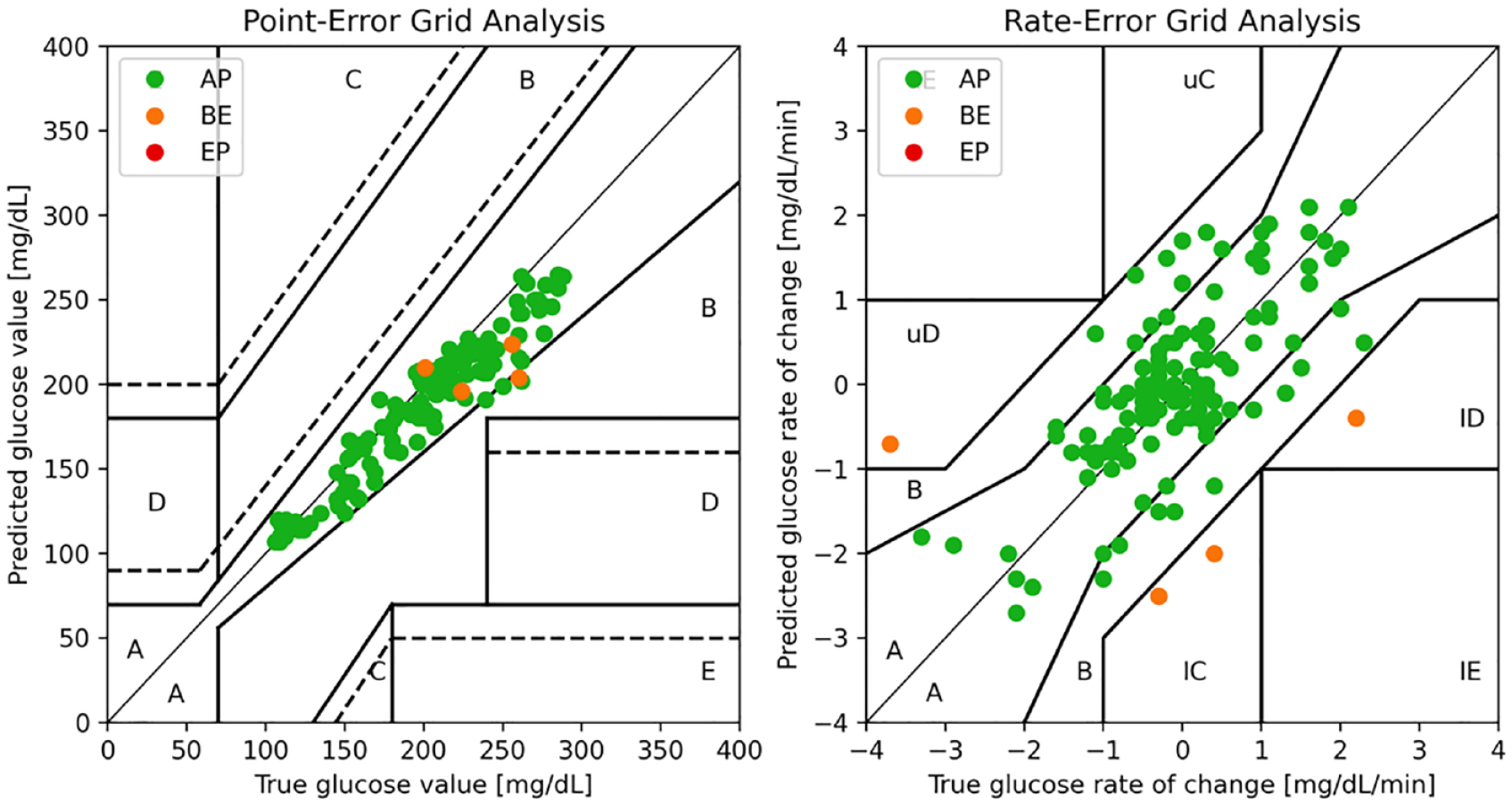

Table 2 summarizes the results in terms of the CG-EGA. The proposed CNN-LSTM model outperforms the reference models across all PHs, with a higher percentage of accurate predictions (for instance, 94.71 ± 3.89% for the Replace-BG data set and 91.71 ± 4.32% for the DIAdvisor data set at the 90-minute PH) and fewer erroneous predictions (3.47 ± 1.12% for Replace-BG and 5.78 ± 1.72% for DIAdvisor at the 90-minute PH), which supports its acceptability for clinical applications. Figure 2 illustrates an example CG-EGA plot for a representative patient from the Replace-BG data set. A large proportion of the predicted samples fall within zone A of both the P-EGA and the R-EGA when compared with the associated true glucose values.

Predictions for 30-Minute PH by the ARX, SVR, LSTM, CRNN, and CNN-LSTM Models for a Representative Patient From the Replace-BG Data set, for a Three-Day Duration.

The bold values show the best performing algorithm for each PH.

Abbreviations: PH, prediction horizon; ARX, autoregressive with exogenous inputs; SVR, support vector regression; LSTM, long short-term memory; CRNN, convolutional recurrent neural network; CNN, convolutional neural network; BG, blood glucose; AP, accurate predictions; BE, benign errors; EP, erroneous prediction.

A representative example of CG-EGA with P-EGA (left) and R-EGA (right) components.

Our results demonstrate that our suggested strategy outperforms the polynomial technique (ARX), the conventional ML-based technique (SVR), and the LSTM model, confirming the advantage of adding the CNN to the LSTM network. In addition, due to the optimized model structure and hyperparameter selections, our model performs better in terms of both prediction accuracy and clinical acceptability compared with CRNN, which also comprises a CNN and an RNN.

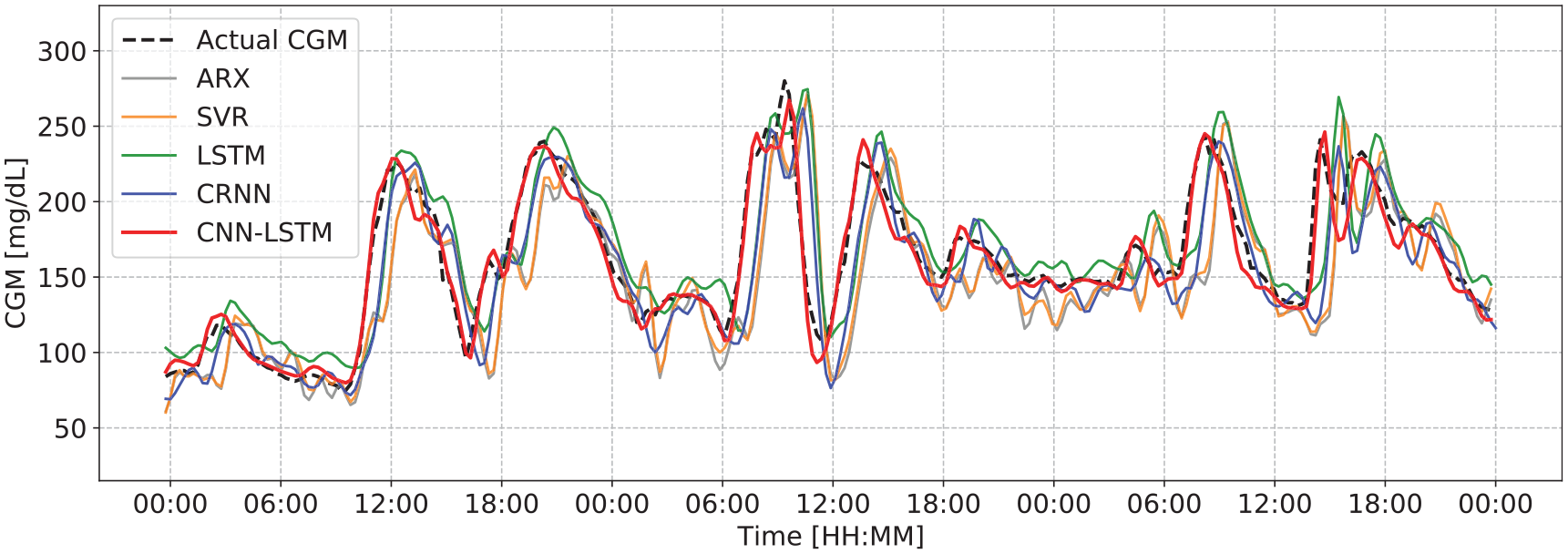

Figure 3 depicts a three-day period of recorded data for a representative participant from the Replace-BG data set, compared against the 60-minute-ahead predictions obtained with the considered models. It is observable that CNN-LSTM outperforms all the other methods in capturing the trends and fluctuations with greater accuracy, which is congruent with the corresponding MAE, RMSE, and R2 values. We would like to remind readers that inference results are generated by testing each model on data from previously unseen patients.

Actual CGM value compared with the predictions for 60-minute PH by the ARX, SVR, LSTM, CRNN, and CNN-LSTM models for a representative patient from the Replace-BG data set, for a three-day duration.

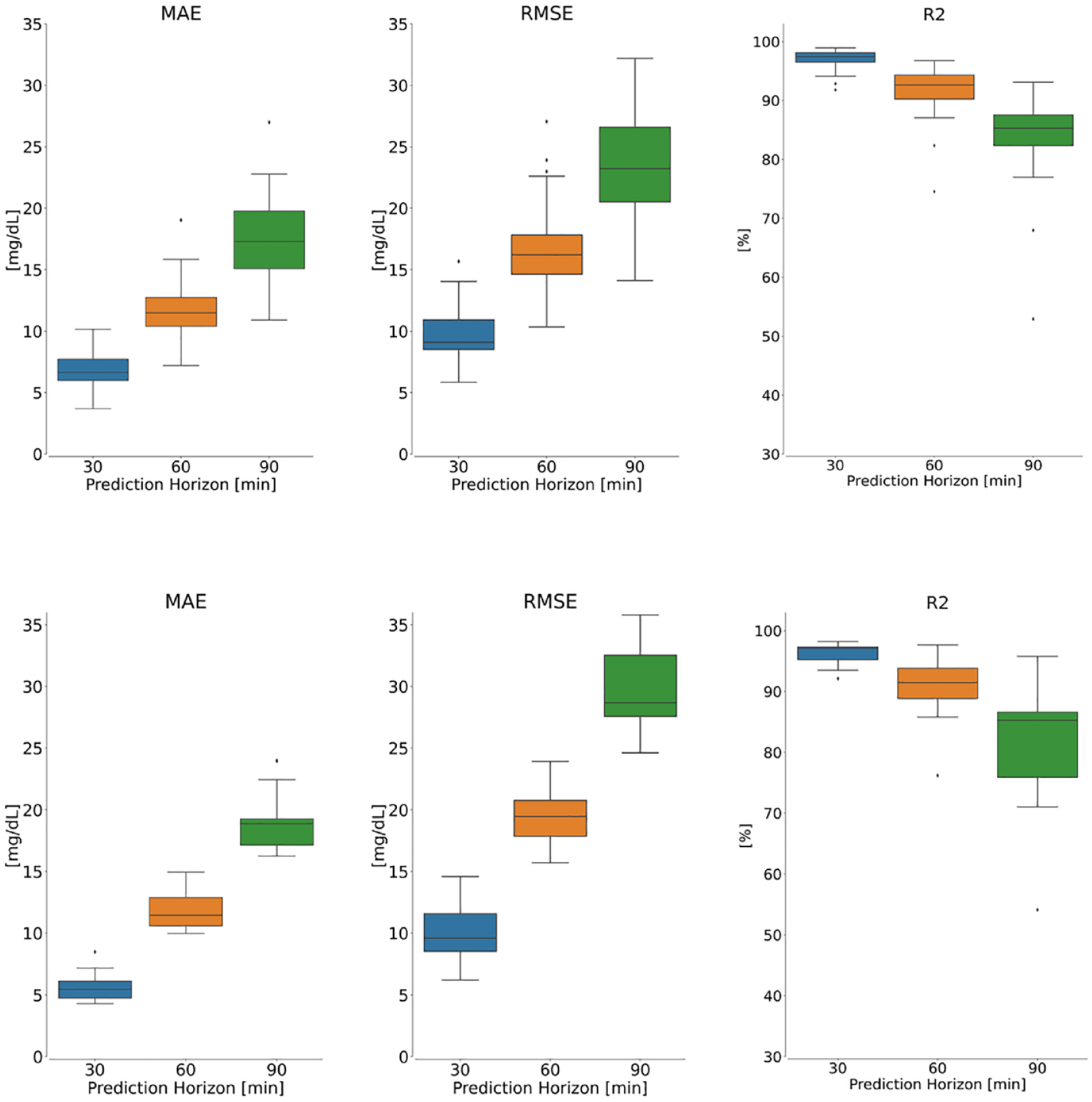

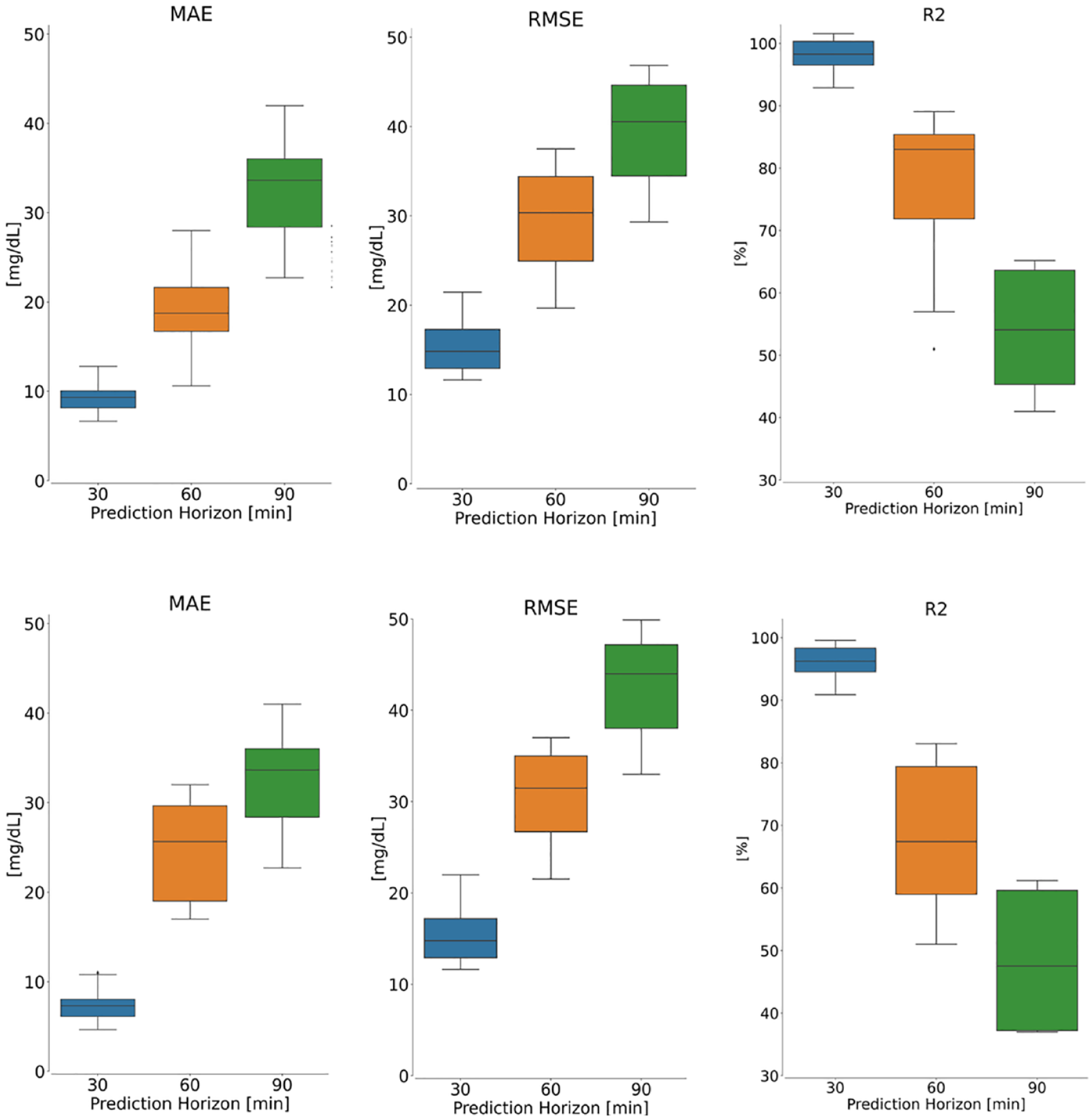

The boxplots in Figure 4 depict the results obtained from our proposed model for each metric across all patients in the Replace-BG (top) and DIAdvisor (bottom) data sets. Although performance degrades for longer PHs, the prediction errors remain within a reasonable range, demonstrating the robustness of the proposed model against intersubject variability of glucose dynamics in both data sets.

Performance evaluation of the proposed model on the Replace-BG (top panel) and DIAdvisor (bottom panel) data sets for three different PHs over test patients. Left: MAE, middle: RMSE, and right: R2.

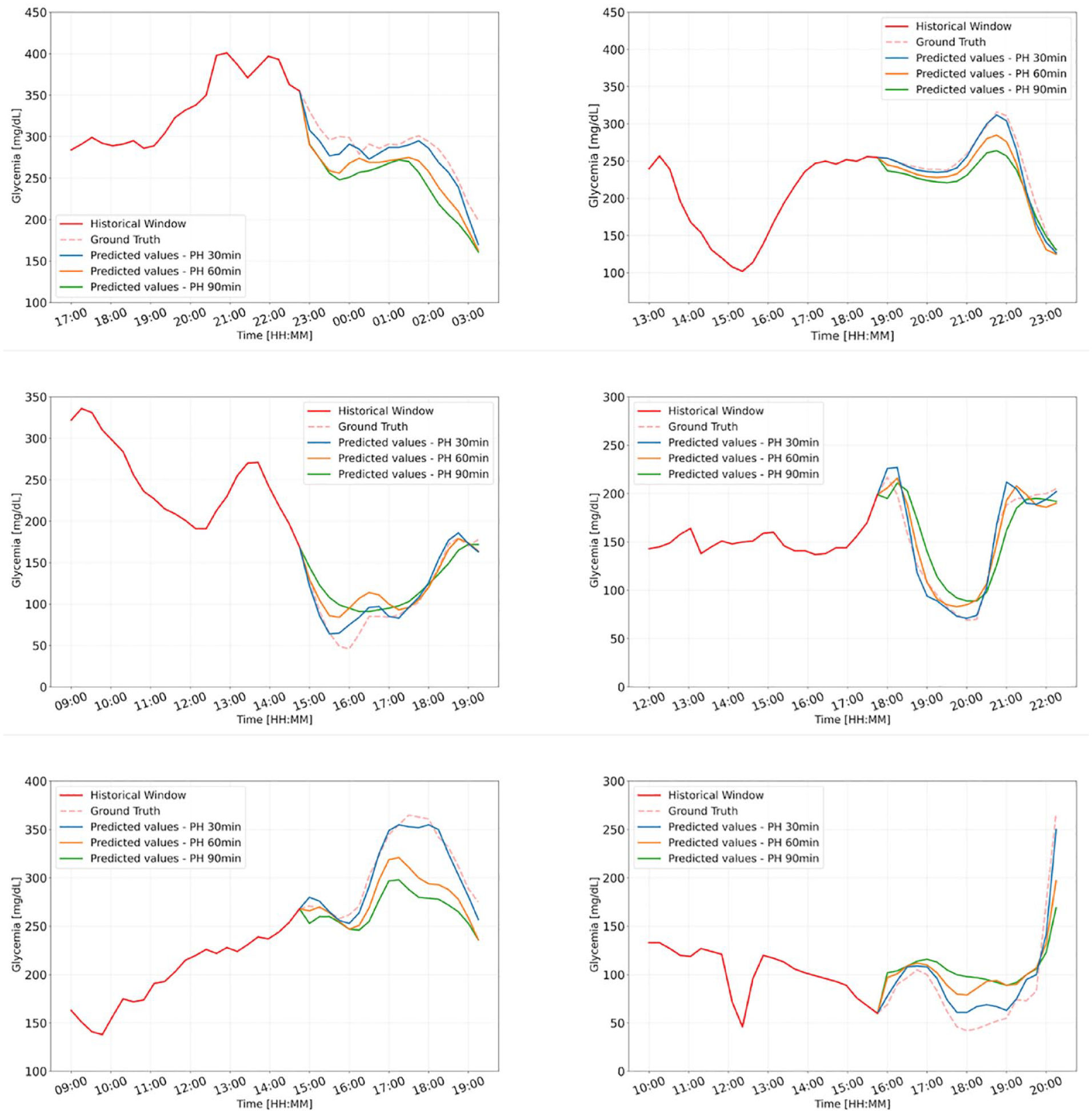

To assess the performance of the proposed model for different PHs, Figure 5 shows examples of CGM prediction using CNN-LSTM with different PHs for six representative patients. In each case, the solid red line represents the historical window, while the blue, orange, and green lines represent the CNN-LSTM forecast time series for 30-, 60-, and 90-minute PH, respectively. While the model for 30-minute PH clearly outperforms the others, the models for longer PHs continue to perform well by forecasting the rapid increases and decreases in the ground truth (dashed red line), which may result in hyperglycemia or hypoglycemia, respectively, and are therefore the events of greatest clinical interest.

Representative examples of the CGM prediction by the proposed CNN-LSTM for different PHs, for six patients from Replace-BG data set.

For completeness, Supplemental Table 1 in Online Appendix provides the range of RMSE (mg/dL) obtained by applying other methodologies proposed in the literature to different data sets collected from real subjects.

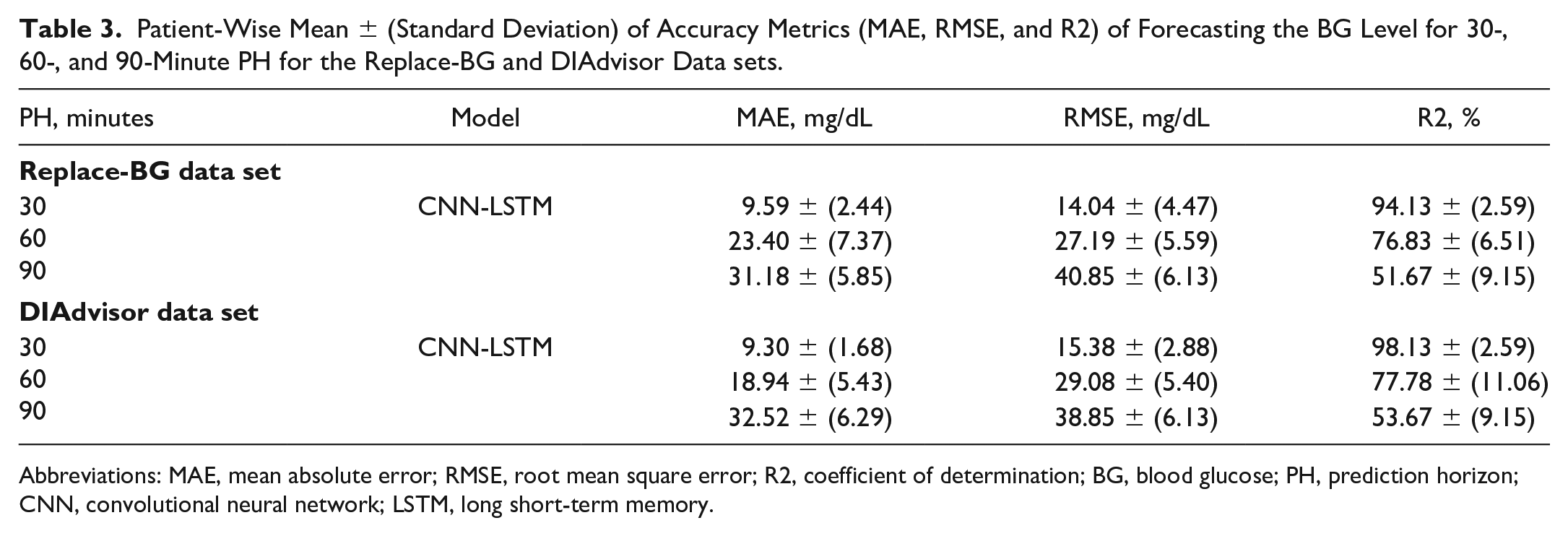

Patient-Wise Analysis

To account for the intrasubject variability of BG dynamics, we train and test the proposed method on each patient’s data set separately; that is, the model is trained, evaluated, and tested on each patient’s data using an 80/20 split between the train and the test data sets. Table 3 reports the results in terms of mean and STD values of the MAE (mg/dL), RMSE (mg/dL), and R2 (%) metrics when predicting the future BG level of each patient given the training data for the same patient. Figure 6 shows the distribution of the metrics obtained for each patient in the Replace-BG and DIAdvisor data sets. Although the model performance deteriorates compared with the population-wise metrics, it still produces encouraging results, particularly for the short-term PH. However, as the PH increases, the spread of the results widens as well, which is expected given the small data set available for patient-wise training.

Patient-Wise Mean ± (Standard Deviation) of Accuracy Metrics (MAE, RMSE, and R2) of Forecasting the BG Level for 30-, 60-, and 90-Minute PH for the Replace-BG and DIAdvisor Data sets.

Abbreviations: MAE, mean absolute error; RMSE, root mean square error; R2, coefficient of determination; BG, blood glucose; PH, prediction horizon; CNN, convolutional neural network; LSTM, long short-term memory.

Patient-wise performance evaluation of the proposed model on the Replace-BG (top panel) and DIAdvisor (bottom panel) data sets for three different PHs over the test data set. Left: MAE, middle: RMSE, and right: R2. In each boxplot, the central mark is the median.

Discussion

Advantages and Limitation of the Proposed CNN-LSTM Model

In this article, we proposed a hybrid CNN-LSTM algorithm, for the prediction of BG concentration in people with T1D. Consisting of an automatic feature extraction component, CNN, and a sequence learner part, LSTM, our proposed CNN-LSTM demonstrated superior performance in extracting hidden features and correlations between various physiological variables, as well as learning their causal effect, to be used for forecasting future BG values.

Nonetheless, as seen in the “Patient-Wise Analysis” subsection, to obtain acceptable performance, CNN-LSTM, like all other DNN-based architectures, needs to be trained on a large enough data set. That is why the model generally performs better on the Replace-BG than on the DIAdvisor data set. In addition, one of the primary drawbacks of dealing with physiological data sets is the large number of missing data points, which can have a substantial impact on model performance. As noted in the “Data Preprocessing” subsection, we used linear interpolation on the training set to address this issue when gaps in CGM data were shorter than 60 minutes; however, it would be preferable to have a data set with the fewest possible missing data points.

Comparison With Existing Algorithms

This study demonstrated the superiority of our proposed CNN-LSTM model over the ARX, SVR, LSTM, and CRNN models, in terms of both predictive accuracy metrics and clinical acceptability. This higher performance is due to a more sophisticated architecture comprised of stacks of convolutional and LSTM layers, which results in a more robust method for learning complex and hidden features in multivariate data sets as well as learning to predict abrupt changes in the CGM level caused by alteration in other variables, like food or insulin intakes. In addition, as illustrated in Figure 3, our proposed CNN-LSTM model is more capable of capturing rapid and abrupt changes in the CGM trend, owing to its capacity for learning the complex dynamics and correlations between variables in the data set. Sufficient data are required to produce the desired results, however, which accordingly raises the computational cost of the CNN-LSTM model as opposed to the reference models.

Conclusion and Future Work

In this article, we proposed a hybrid deep learning–based model, comprised of convolutional and LSTM layers, and demonstrated its superior performance in predicting future BG levels over previously published models in the literature on two multivariate in vivo data sets of T1D patients, Replace-BG and DIAdvisor.

To account for intersubject variability, we used the

Supplemental Material

Supplemental material, sj-docx-1-dst-10.1177_19322968221092785 for Long-term Prediction of Blood Glucose Levels in Type 1 Diabetes Using a CNN-LSTM-Based Deep Neural Network by Mehrad Jaloli and Marzia Cescon in Journal of Diabetes Science and Technology

Footnotes

Abbreviations

CGM, continuous glucose monitoring; CHO, carbohydrate; ANN, Artificial neural network; DNN, deep neural network; ML, machine learning; CNN, convolutional neural network; LSTM, long short-term memory; RNN, recurrent neural networks; MAE, mean absolute error; RMSE, root mean square error; SVR, support vector regression; R2, coefficient of determination; STD, standard deviation; CRNN, convolutional recurrent neural network; CG-EGA, continuous glucose-error grid analysis.

Declaration of Conflicting Interests

The author(s) declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: Dr Cescon serves on the advisory board for Diatech Diabetes, Inc. Mehrad Jaloli declares no conflict of interest relevant to this project.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the University of Houston through a start-up grant.

Supplemental Material

Supplemental material for this article is available online.

References
