Abstract

Background

Parkinson's disease (PD) is the most common movement disorder, and patients increasingly use YouTube to obtain health-related information.

Objective

This study aimed to assess the content quality and informational reliability of YouTube videos on PD exercises.

Methods

A total of 150 English-language YouTube videos were screened using the search terms “Parkinson exercises”, “Parkinson physiotherapy exercises”, and “Parkinson home exercise program”. For each video, the source, upload date, number of views, likes, dislikes, and comments were recorded. The Video Power Index (VPI) was assessed using the view ratio (views/day) and like ratio (likes × 100 / [likes + dislikes]). The clinical quality, reliability, and educational value of PD–specific exercise videos were assessed using the Global Quality Scale (GQS), modified DISCERN (mDISCERN), and guideline-based criteria derived from the European Physiotherapy Guideline for Parkinson's Disease (PD-GEC).

Results

A total of 29 videos met the inclusion criteria and were analyzed. Videos explaining how and why exercises were performed demonstrated higher mDISCERN and GQS scores, while providing repetition, duration, and intensity information was associated with higher GQS scores but not with higher mDISCERN scores (p = 0.080); no differences were observed for disease specificity, functional linkage, or safety warnings (all p > 0.05). PD-GEC scores were not significantly related to video engagement metrics.

Conclusion

Higher-quality videos tended to provide clear explanations of exercise rationale and dosage, while guideline-based clinical features, including PD-GEC criteria, were not associated with viewer engagement.

Plain language summary

Many people with Parkinson's disease use YouTube to learn about exercise, but it is not always clear whether these videos provide reliable and high-quality information. In this study, we examined exercise videos for Parkinson's disease that were created by physiotherapists and available on YouTube. We evaluated 29 videos using established tools that measure information reliability, educational quality, and clinical relevance. Our findings showed that videos clearly explaining how and why exercises are performed were of higher quality and provided more reliable information. Videos that included clear exercise dosage details—such as repetition, duration, and intensity—also demonstrated better overall quality. However, features based on clinical guidelines were not linked to how popular the videos were, such as the number of views or likes. These results suggest that videos with clear explanations and practical exercise guidance are more helpful for viewers, even if they are not the most popular. Improving digital health literacy and supporting content created by healthcare professionals may help people with Parkinson's disease access more accurate and trustworthy exercise information online.

Introduction

Parkinson's disease (PD) is a rapidly growing neurodegenerative disorder worldwide. 1 It arises from complex interactions between genetic susceptibility and environmental factors in the aging brain, leading to dopaminergic neuron degeneration and reduced dopamine levels. 2 These pathological changes result in both motor and non-motor symptoms, ultimately contributing to progressive declines in daily functioning and quality of life. 3

Pharmacological therapy remains the cornerstone of PD management; however, persistent gait and balance impairments increase fall risk even with optimal medication. 4 Strong evidence from the past two decades indicates that exercise and physiotherapy improve motor function, balance, gait, and quality of life, supporting their role as evidence-based complementary strategies alongside levodopa.5,6

With technological advancements, digital platforms such as YouTube are widely used by both patients and healthcare professionals to access information about exercise and treatment. 7 YouTube, the second most widely used search engine after Google, stands out in digital content consumption and user engagement with over 2 billion monthly visitors. 8 The platform contributes to patient education and treatment processes through its wide range of content related to medical information and exercises. 9 In this regard, YouTube offers a significant opportunity for disseminating health-related information to a broad audience. 10 However, research clearly indicates that YouTube is the most common source of health-related misinformation. 11 The lack of quality control or academic peer review of the content may lead to professional disorganization and, consequently, to the spread of misinformation, particularly on health-related topics. 12

In recent years, the quality and reliability of YouTube videos have been evaluated across a range of health conditions, including musculoskeletal disorders, chronic pain, scoliosis, and rheumatologic diseases. 13–15 These studies have consistently reported substantial variability in informational accuracy and clinical relevance. Despite this expanding body of research, Parkinson's disease–specific exercise videos remain insufficiently examined. Although German-language informational PD videos have been assessed, the clinical quality and educational value of exercise-focused PD content on YouTube have not been systematically evaluated using guideline-based criteria. 16 The aim of this study was therefore to assess the content quality, information presentation, and overall characteristics of videos related to PD exercises, using guideline-based evaluation criteria derived from the European Physiotherapy Guideline for Parkinson's Disease (PD-GEC). The primary objective was to analyze the exercises presented in the videos regardless of PD subtype or stage.

Materials and methods

Our cross-sectional study included YouTube videos that were accessible during the systematic search and provided therapeutic exercise recommendations for patients with PD. In this study, exercises were operationally defined as structured, therapeutic, and health-oriented physical activities. Eligible videos were categorized into the following exercise domains: aerobic and cardiovascular training, strength training, balance–agility–coordination exercises, flexibility and mobility exercises, home-based exercise programs, posture training, upper extremity and fine motor skill exercises, and education- or well-being-focused physical activity.

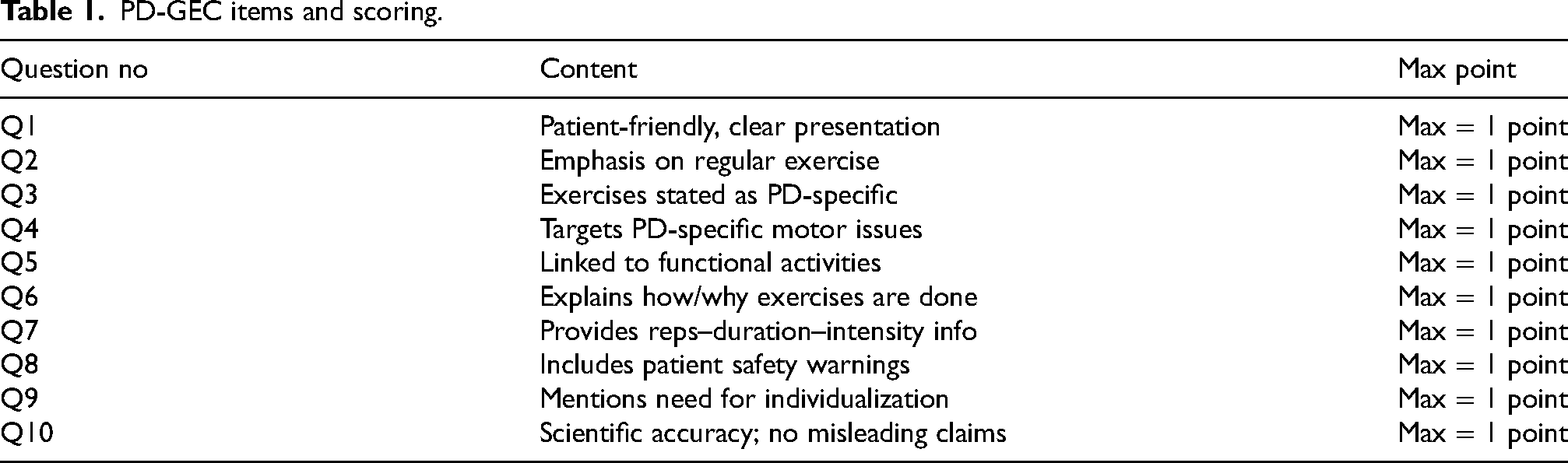

Before the video evaluation process began, two researchers jointly reviewed the first three videos to clarify the evaluation procedure and gain familiarity with the measurement tools. These three videos were excluded from the study. 17 Subsequently, all English-language videos were independently evaluated by two physiotherapists with advanced expertise in neurological rehabilitation using criteria derived from the European Physiotherapy Guideline for Parkinson's Disease (PD-GEC), a rigorously developed, evidence-based framework that informs best physiotherapy practice in Parkinson's disease. 18 To ensure methodological transparency and reproducibility, core recommendations from the guideline were systematically translated into a structured 10-item dichotomous checklist (Q1–Q10). Each item was scored as 0 (absent) or 1 (present), generating a maximum possible score of 10. The checklist encompassed the domains of patient-centeredness, Parkinson's disease–specific exercise content, functional relevance, safety considerations, individualization, and scientific accuracy (Table 1). Agreement between the two reviewers was assessed using Cohen's kappa, which indicated strong agreement (κ = 0.82); any discrepancies were resolved through discussion. Other assessment tools were applied by a single researcher using the same predefined evaluation framework.

Table 1. PD-GEC items and scoring.
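The checklist scoring and agreement statistic described above can be illustrated with a minimal sketch. The function names and the example ratings are hypothetical, and the unit of agreement (item-level ratings from two raters) is an assumption; the article does not state exactly which ratings entered the kappa calculation.

```python
def pd_gec_total(items):
    """Sum a 10-item dichotomous checklist (0 = absent, 1 = present)."""
    if len(items) != 10 or any(v not in (0, 1) for v in items):
        raise ValueError("expected ten ratings, each 0 or 1")
    return sum(items)


def cohen_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters assigning categorical labels."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must rate the same non-empty set")
    n = len(rater_a)
    categories = set(rater_a) | set(rater_b)
    # Observed agreement: share of ratings on which the raters coincide.
    p_observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: product of each rater's marginal proportions.
    p_expected = sum(
        (rater_a.count(c) / n) * (rater_b.count(c) / n) for c in categories
    )
    if p_expected == 1:
        return 1.0  # both raters used a single identical category
    return (p_observed - p_expected) / (1 - p_expected)


# Hypothetical ratings, for illustration only (not study data).
rater_1 = [1, 1, 1, 0, 0, 1, 0, 1, 1, 0]
rater_2 = [1, 1, 0, 0, 0, 1, 0, 1, 1, 1]
print(pd_gec_total(rater_1), round(cohen_kappa(rater_1, rater_2), 3))
```

Kappa corrects raw agreement for the agreement expected by chance, which matters for checklists where most items are rated "present".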

Ethical considerations and informed consent

Since all videos analyzed in this study were publicly available on YouTube and no direct involvement of human or animal participants occurred, ethical approval and informed consent were not required.

YouTube search

In September 15–25, 2025, we systematically searched YouTube.com using the Firefox browser with the keywords “Parkinson exercise”, “Parkinson physiotherapy exercises”, and “Parkinson rehabilitation exercises”. No personal Google or YouTube accounts were used during the searches. In addition, the browser was set to “incognito mode,” and the search history was cleared. To minimize bias related to upload date, the daily view rate was calculated for each video. For each video, quantitative characteristics, such as total number of views, number of comments, channel subscribers, video duration, likes, dislikes, time since upload, and the Video Power Index (VPI) was recorded. To calculate daily engagement, the total numbers of views, likes, dislikes, and comments were divided by the number of days the video had been available on YouTube.

19

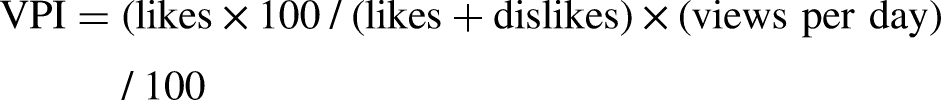

The VPI was computed using the following formula

20

:
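As a rough illustration of the engagement metrics described above, the sketch below computes the view ratio and like ratio as defined in the abstract. The VPI expression itself is not reproduced in the text, so the commonly used form (like ratio × view ratio / 100) is assumed here; the function name and the example values are hypothetical.

```python
from datetime import date


def engagement_metrics(views, likes, dislikes, upload_date, access_date):
    """Return view ratio, like ratio, and VPI for one video."""
    days_online = (access_date - upload_date).days
    if days_online <= 0:
        raise ValueError("access date must be after upload date")
    view_ratio = views / days_online  # views per day
    votes = likes + dislikes
    like_ratio = likes * 100 / votes if votes else 0.0  # percentage of likes
    # Assumption: VPI = like ratio * view ratio / 100 (common definition).
    vpi = like_ratio * view_ratio / 100
    return {"view_ratio": view_ratio, "like_ratio": like_ratio, "vpi": vpi}


# Hypothetical video, for illustration only (not study data).
metrics = engagement_metrics(
    views=10_000,
    likes=95,
    dislikes=5,
    upload_date=date(2025, 1, 1),
    access_date=date(2025, 4, 11),
)
print(metrics)
```

Under this assumed definition the VPI can never exceed the view ratio, which is consistent with the ranges reported for the two variables in the Results.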

Inclusion criteria

Videos were included if they met the following criteria: (1) were published in English, (2) were uploaded within the past 15 years, (3) had at least 250 views, (4) were directly related to exercise or physiotherapy for PD, and (5) were prepared by physiotherapists providing exercise content for individuals with Parkinson's disease. In the included videos, providers either performed exercises on camera while explaining them or shared knowledge and experiences about the exercises. Videos that did not meet these criteria, were duplicates, or were unrelated to PD were excluded. All English-language videos were independently evaluated by two physiotherapists specializing in neurological rehabilitation using the Global Quality Scale (GQS).

Exclusion criteria

Videos that were duplicates, unrelated to PD, or failed to meet the inclusion criteria—particularly those lacking structured therapeutic exercise content (e.g., general lifestyle advice without practical demonstration, non-exercise educational material, promotional content, or unrelated physical activities)—were excluded. Videos with low view counts may fail to represent actual public exposure to information and are often newly uploaded or have limited visibility. Therefore, a minimum view threshold was applied to reduce sampling bias and to ensure that the analyzed videos reflect content that users are more likely to encounter in real-world settings.

Assessment of video reliability and quality

For each included video, we extracted data on the narrator type, video quality, content category, number of views, likes, comments, and duration, and recorded them in a standardized Excel file. To evaluate quality and reliability, we applied two established instruments alongside the PD-GEC checklist described above:

Global Quality Scale (GQS): The overall quality of the videos was assessed using the GQS, a 5-point Likert scale developed by Bernard et al. (2007) and commonly used to evaluate the quality, flow, and clinical usefulness of online health information. Scores of 1–2 indicate low quality, 3 indicates moderate quality, and 4–5 indicate high quality. 21 Higher scores reflect greater overall educational value.

Modified DISCERN (mDISCERN): The modified DISCERN (mDISCERN) criteria, developed by Charnock et al. and revised by Singh et al., were used to evaluate the reliability of the video content. 22,23 The instrument evaluates the purpose of the video content, the reliability and neutrality of the information provided, the presence of additional resources, and whether any uncertainties or controversial aspects related to the topic are addressed. It comprises five items, each answered “yes” (1 point) or “no” (0 points), yielding a total score between 0 and 5, with higher scores indicating greater reliability. A score of 1 denoted “unreliable,” scores of 2 to 4 denoted “partially reliable,” and a score of 5 denoted “highly reliable.”
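The score bands described for the two instruments can be expressed as a short sketch. The function names are hypothetical, and because the text does not assign a label to an mDISCERN total of 0, treating it as "unreliable" is an assumption made here for completeness.

```python
def gqs_category(score):
    """Map a GQS score (1-5) to the quality band described in the text."""
    if score not in (1, 2, 3, 4, 5):
        raise ValueError("GQS score must be an integer from 1 to 5")
    if score <= 2:
        return "low"
    if score == 3:
        return "moderate"
    return "high"


def mdiscern_total(answers):
    """Sum five yes/no answers (True = yes = 1 point)."""
    if len(answers) != 5:
        raise ValueError("mDISCERN has five items")
    return sum(1 for a in answers if a)


def mdiscern_category(total):
    """Map an mDISCERN total (0-5) to a reliability band."""
    if total not in range(6):
        raise ValueError("mDISCERN total must be between 0 and 5")
    if total <= 1:
        # Assumption: a total of 0 is grouped with 1 ("unreliable").
        return "unreliable"
    if total <= 4:
        return "partially reliable"
    return "highly reliable"


# Hypothetical ratings, for illustration only (not study data).
total = mdiscern_total([True, True, False, True, False])
print(total, mdiscern_category(total), gqs_category(4))
```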

Statistical analyses

Statistical analyses were conducted using IBM SPSS Statistics version 27.0 for Windows (IBM Corp., Armonk, NY, USA). Continuous variables were presented as median (minimum–maximum) values. Before group comparisons, distributional assumptions were evaluated using the Shapiro–Wilk test for normality and Levene's test for homogeneity of variances. Accordingly, independent-samples t-tests were applied when parametric assumptions were satisfied, whereas the Mann–Whitney U test was used for non-parametric comparisons. Relationships between variables were examined using Spearman's rank correlation analysis, which was selected to assess the strength and direction of associations under non-normal distributional conditions. Inter-rater agreement for PD-GEC categories assigned by the two researchers was assessed with the kappa coefficient. 24 A p-value of <0.05 was considered statistically significant.
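To make the non-parametric comparison concrete, the following sketch computes mean ranks and the Mann–Whitney U statistic in plain Python. It illustrates the technique only and is not the SPSS procedure used in the study; the function names and example scores are hypothetical, and p values are deliberately omitted.

```python
def average_ranks(values):
    """Return 1-based ranks; tied values share the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values tied with position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1  # i and j are 0-based positions
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def mann_whitney_u(group_a, group_b):
    """Return mean ranks of both groups and the smaller U statistic."""
    n_a, n_b = len(group_a), len(group_b)
    if n_a == 0 or n_b == 0:
        raise ValueError("both groups must be non-empty")
    ranks = average_ranks(list(group_a) + list(group_b))
    rank_sum_a = sum(ranks[:n_a])
    rank_sum_b = sum(ranks[n_a:])
    u_a = rank_sum_a - n_a * (n_a + 1) / 2
    u_b = n_a * n_b - u_a
    return {
        "mean_rank_a": rank_sum_a / n_a,
        "mean_rank_b": rank_sum_b / n_b,
        "U": min(u_a, u_b),
    }


# Hypothetical scores, for illustration only (not study data).
result = mann_whitney_u([1, 2, 2], [3, 4, 5, 5])
print(result)
```

Because the test works on ranks rather than raw values, it is well suited to ordinal scores such as GQS and mDISCERN, where ties are frequent.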

Results

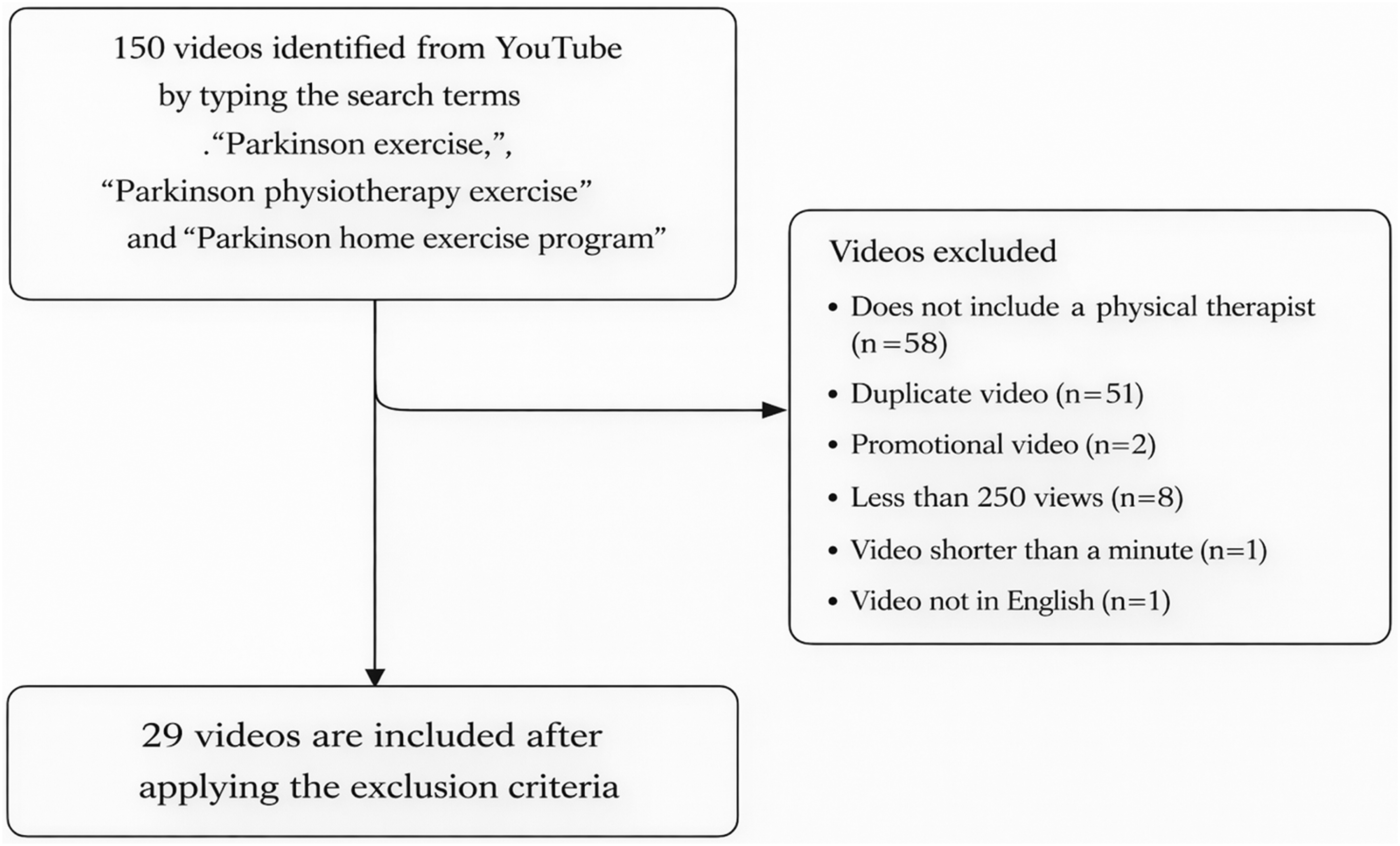

In total, 150 videos were screened. Of these, 58 videos not featuring a physical therapist, 51 duplicate videos, 2 promotional videos, 8 videos with fewer than 250 views, 1 video shorter than one minute, and 1 non-English video were excluded. Consequently, 29 videos were included in the final analysis (Figure 1). Most videos scored in the upper range of the 10-point PD-GEC checklist, with the highest concentration at 9/10 (10 videos). Five videos achieved a perfect score of 10/10, and 10 videos received mid-range scores (6/10 to 8/10); no video scored below 5/10. Overall, the included videos showed predominantly high adherence to the guideline-based criteria.
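The screening arithmetic above can be checked with a few lines. The counts are taken from the paragraph; the dictionary keys are illustrative labels only.

```python
# Counts as reported in the screening paragraph.
screened = 150
excluded = {
    "no physical therapist": 58,
    "duplicate": 51,
    "promotional": 2,
    "fewer than 250 views": 8,
    "shorter than one minute": 1,
    "non-English": 1,
}

total_excluded = sum(excluded.values())
included = screened - total_excluded
print(total_excluded, included)
```

The exclusions sum to 121, leaving the 29 videos analyzed.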

Figure 1. Flow chart.

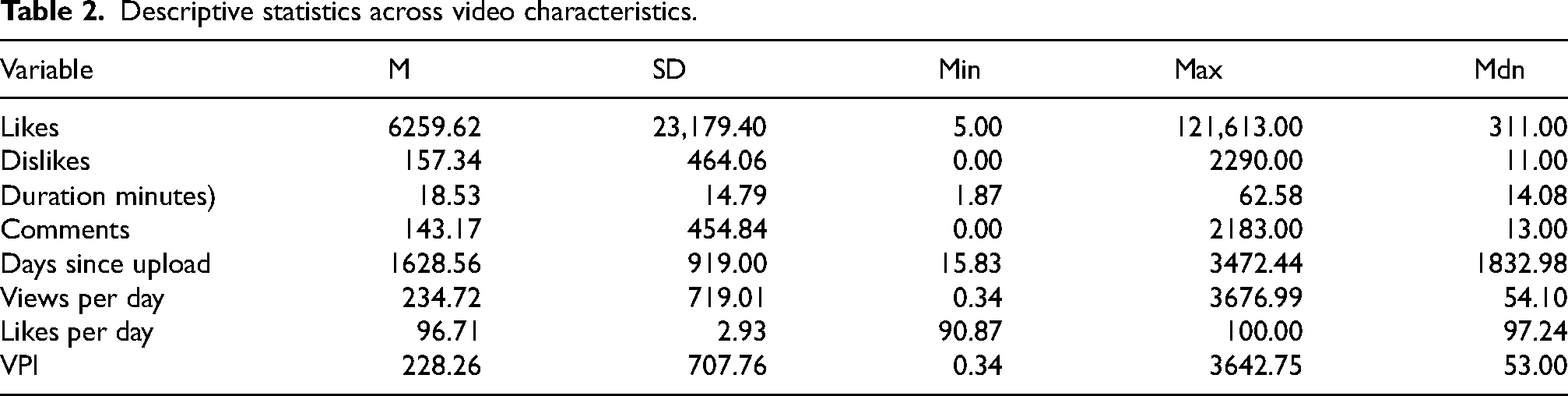

Interobserver agreement between the two evaluators was high, with a Cohen's kappa coefficient of 0.82, indicating almost perfect agreement. Descriptive statistics indicated considerable variability across video characteristics. The number of Likes ranged from 5 to 121,613 (Mdn = 311), while Duration (minutes) varied between 1.87 and 62.58 (Mdn = 14.08). The number of Comments ranged from 0 to 2183 (Mdn = 13), and Age (days) ranged from 15.83 to 3472.44 (Mdn = 1832.98). VPI values varied between 0.34 and 3642.75 (Mdn = 53), whereas Dislikes ranged from 0 to 2290 (Mdn = 11). View ratio (views per day) ranged from 0.34 to 3676.99 (Mdn = 54.10), and Like ratio values were high across all observations, ranging from 90.87 to 100.00 (Mdn = 97.24). Detailed summary statistics are presented in Table 2.

Table 2. Descriptive statistics across video characteristics.

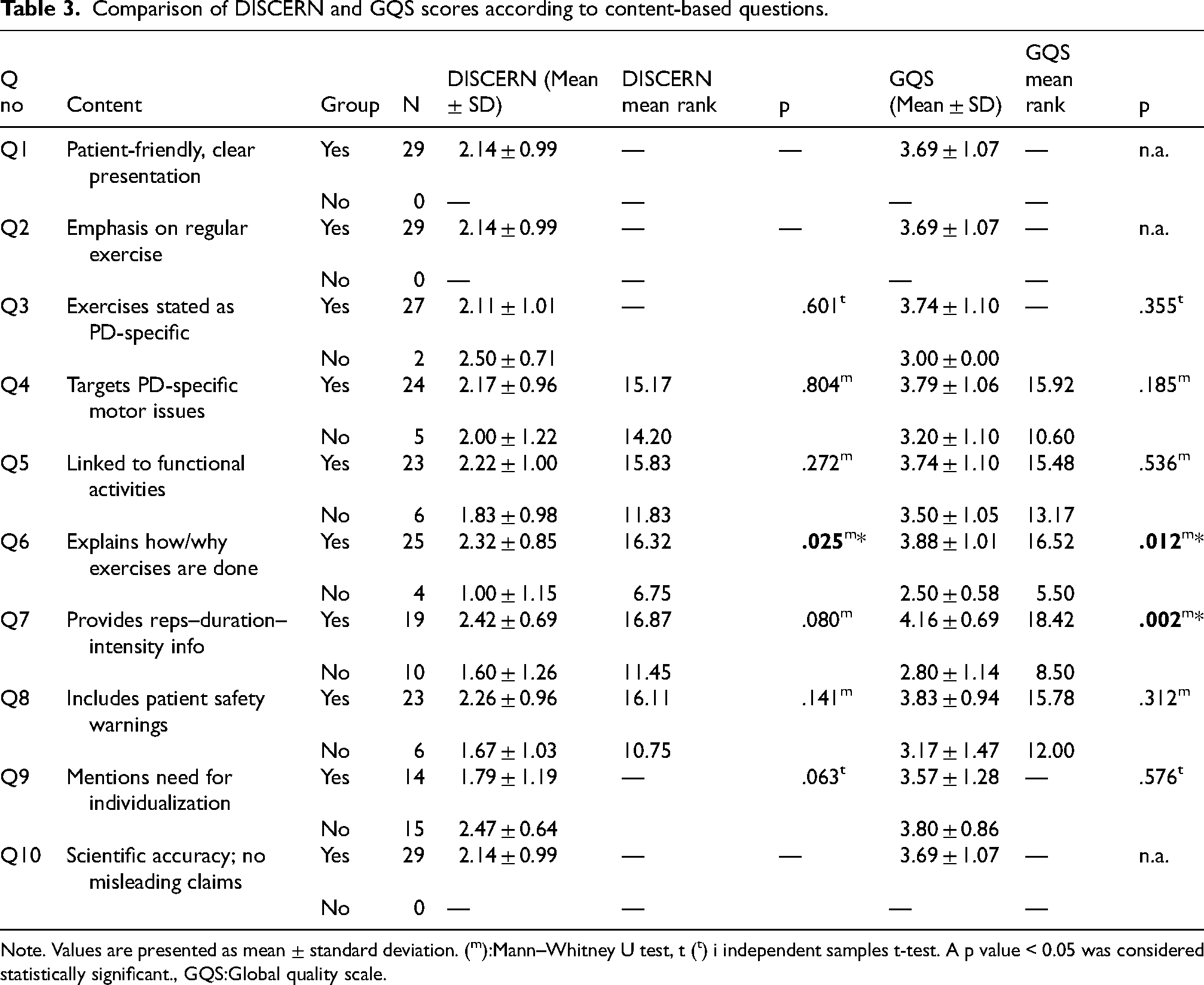

For Q1, Q2, and Q10, all videos were categorized as “Yes”; therefore, group comparisons could not be conducted for these items (p = n.a.). For the remaining criteria, mDISCERN and GQS scores were compared between videos that met the criterion (“Yes”) and those that did not (“No”). Across the evaluated content-based criteria, two-tailed Mann–Whitney U tests revealed that videos explaining how and why the exercises were performed (Q6) demonstrated significantly higher scores for both mDISCERN (mean rank = 16.32 vs. 6.75, U = 17.00, z = −2.24, p = .025) and GQS (mean rank = 16.52 vs. 5.50, U = 12.00, z = −2.51, p = .012) compared with videos lacking such explanations. Additionally, videos that provided clear information regarding repetition, duration, and exercise intensity (Q7) achieved significantly higher GQS scores (mean rank = 18.42 vs. 8.50, U = 30.00, z = −3.11, p = .002), although the corresponding difference in mDISCERN scores did not reach statistical significance (p = .080). In contrast, no statistically significant differences were observed for videos targeting Parkinson's disease–specific motor issues (Q4), being linked to functional activities (Q5), or including patient safety warnings (Q8) for either mDISCERN or GQS outcomes (all p > .05) (Table 3).
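The mean ranks and U statistics reported for Q6 are linked by the identity U = n × (mean rank) − n(n + 1)/2 for a group of size n. The sketch below applies it to the reported Q6 values. The group sizes (4 "No" and 25 "Yes" videos) are not stated in the text; they are an assumption inferred from the reported figures and the total of 29 videos.

```python
def u_from_mean_rank(mean_rank, n):
    """Mann-Whitney U for a group of size n with the given mean rank."""
    return n * mean_rank - n * (n + 1) / 2


N_TOTAL = 29            # videos analyzed (reported)
N_NO = 4                # assumption: inferred, not reported
N_YES = N_TOTAL - N_NO  # 25 under the same assumption

# Reported Q6 mean ranks for the "No" group.
u_mdiscern = u_from_mean_rank(6.75, N_NO)
u_gqs = u_from_mean_rank(5.50, N_NO)

# Rank sums of both groups should add up to N(N + 1)/2.
rank_sum_all = N_TOTAL * (N_TOTAL + 1) / 2
rank_sum_mdiscern = N_YES * 16.32 + N_NO * 6.75
rank_sum_gqs = N_YES * 16.52 + N_NO * 5.50

print(u_mdiscern, u_gqs, rank_sum_all)
print(round(rank_sum_mdiscern, 2), round(rank_sum_gqs, 2))
```

Under the assumed group sizes, the identity reproduces the reported U values of 17.00 and 12.00, and both rank sums match the expected total for 29 videos.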

Table 3. Comparison of mDISCERN and GQS scores according to content-based questions.

Note. Values are presented as mean ± standard deviation. (m): Mann–Whitney U test; (t): independent-samples t-test. A p value < 0.05 was considered statistically significant. GQS: Global Quality Scale.

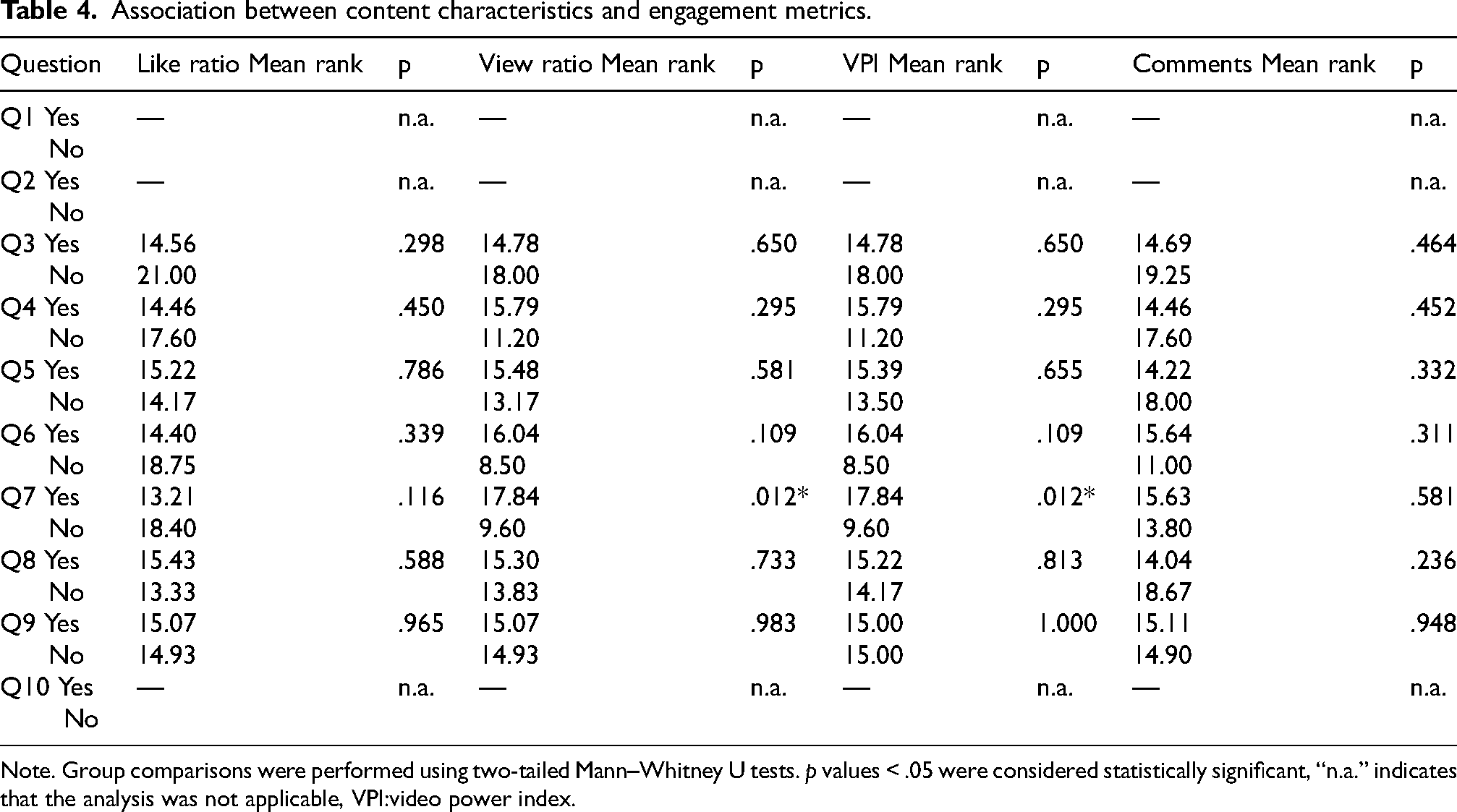

Across Q3 to Q9, two-tailed Mann–Whitney U tests revealed no statistically significant differences between the 0:No and 1:Yes groups for Like ratio, View ratio, VPI, or Comments in most comparisons (p > .05). The exception was Q7, for which videos categorized as 1:Yes showed significantly higher engagement than those categorized as 0:No for both View ratio (U = 41.00, z = −2.51, p = .012) and VPI (U = 41.00, z = −2.51, p = .012). No statistically significant differences were observed for Comments across any of the questions (Table 4).

Table 4. Association between content characteristics and engagement metrics.

Note. Group comparisons were performed using two-tailed Mann–Whitney U tests. p values < .05 were considered statistically significant. “n.a.” indicates that the analysis was not applicable. VPI: Video Power Index.

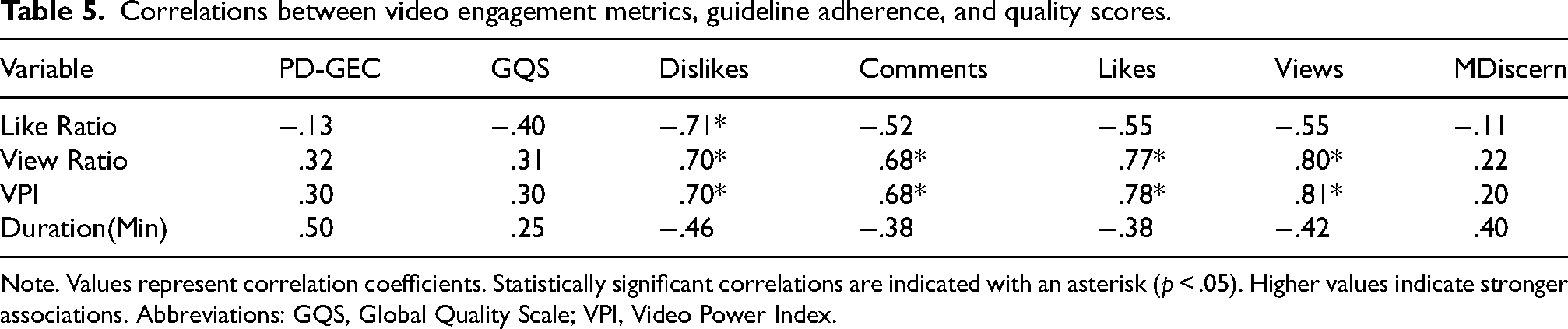

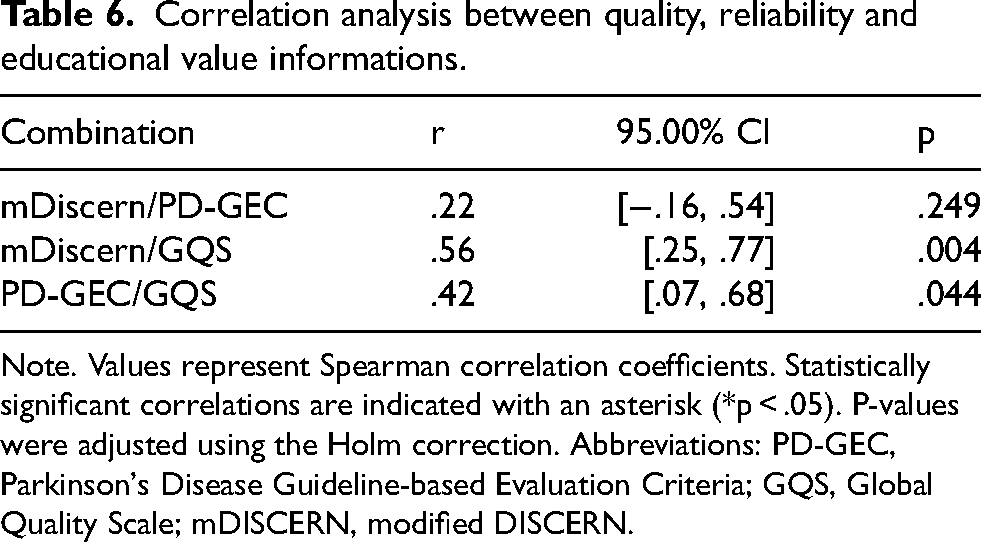

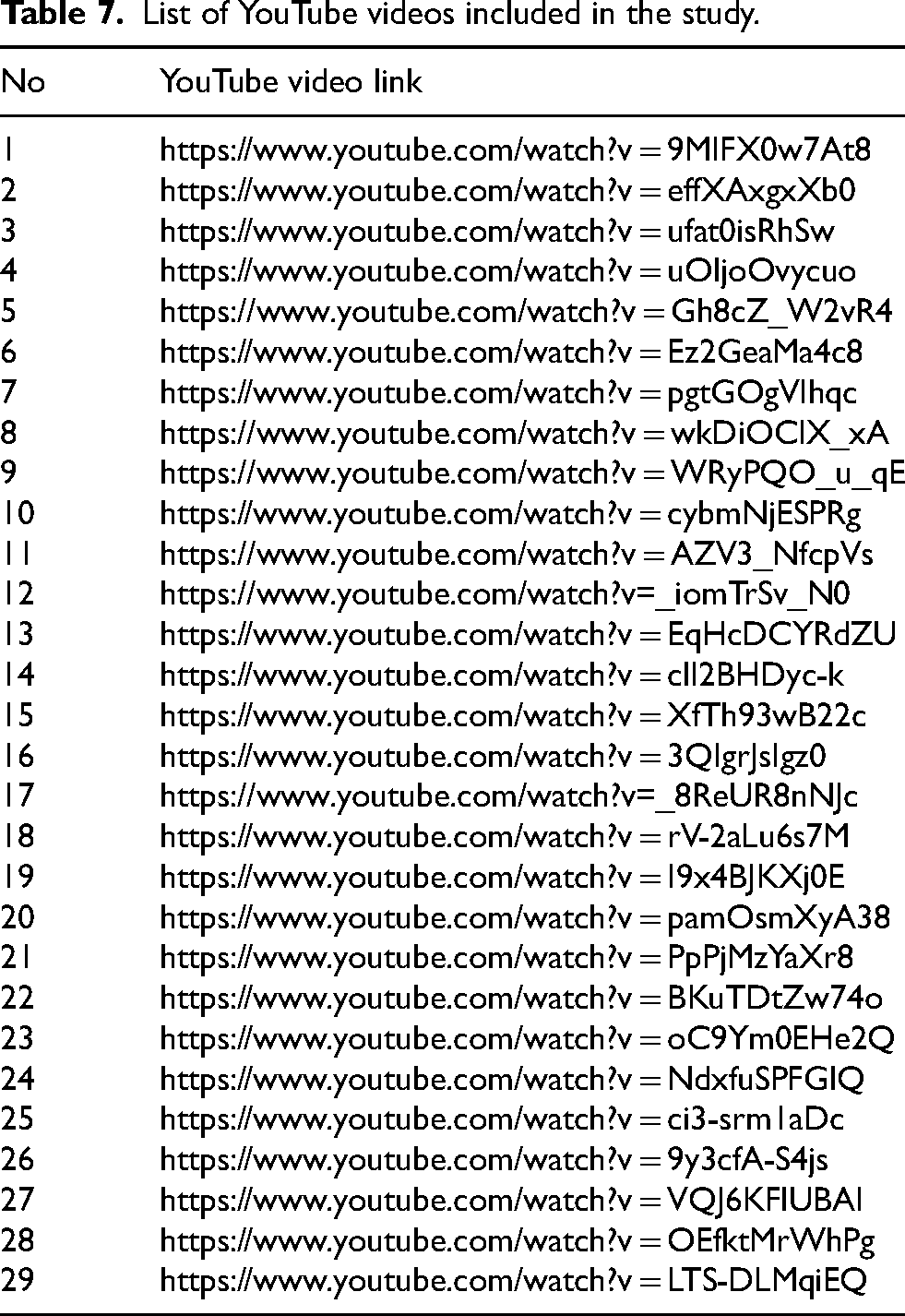

Correlation p values were adjusted for multiple comparisons using the Holm correction at an alpha level of .05. Significant positive correlations were found between VPI and several engagement metrics, including Dislikes (r = .70, p = .001), Likes (r = .78, p < .001), Comments (r = .68, p = .002), View ratio (r = 1.00, p < .001), and Views (r = .81, p < .001), indicating large effect sizes. Dislikes also showed significant positive correlations with Likes (r = .95, p < .001), Comments (r = .91, p < .001), View ratio (r = .70, p = .001), and Views (r = .95, p < .001), while demonstrating a significant negative correlation with Like ratio (r = –.71, p < .001). Likes were significantly and positively correlated with Comments (r = .94, p < .001), View ratio (r = .77, p < .001), and Views (r = .98, p < .001). Similarly, Comments were positively associated with View ratio (r = .68, p = .002) and Views (r = .90, p < .001), and View ratio was significantly positively correlated with Views (r = .80, p < .001) (Table 5). No significant correlations emerged for Duration or Like ratio with the remaining variables. Overall, these findings indicate that PD-GEC scores were not significantly related to video engagement metrics (Tables 6 and 7).
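The Holm step-down adjustment mentioned above can be sketched as follows. This is a generic illustration of the procedure, not the software routine used in the study; the function name and the example p values are hypothetical.

```python
def holm_adjust(p_values):
    """Return Holm-adjusted p values in the original order."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_max = 0.0
    for rank, idx in enumerate(order):
        # The smallest p value is multiplied by m, the next by m - 1, etc.
        candidate = min(1.0, (m - rank) * p_values[idx])
        # Enforce monotonicity: an adjusted p never falls below an earlier one.
        running_max = max(running_max, candidate)
        adjusted[idx] = running_max
    return adjusted


# Hypothetical raw p values, for illustration only (not study data).
raw = [0.01, 0.04, 0.03]
adjusted = holm_adjust(raw)
significant = [p < 0.05 for p in adjusted]
print([round(p, 4) for p in adjusted], significant)
```

In this example only the smallest p value remains below .05 after adjustment, which shows how the correction guards against false positives when many correlations are tested at once.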

Table 5. Correlations between video engagement metrics, guideline adherence, and quality scores.

Note. Values represent correlation coefficients. Statistically significant correlations are indicated with an asterisk (p < .05). Higher values indicate stronger associations. Abbreviations: GQS, Global Quality Scale; VPI, Video Power Index.

Table 6. Correlation analysis between quality, reliability, and educational value.

Note. Values represent Spearman correlation coefficients. Statistically significant correlations are indicated with an asterisk (*p < .05). P-values were adjusted using the Holm correction. Abbreviations: PD-GEC, Parkinson's Disease Guideline-based Evaluation Criteria; GQS, Global Quality Scale; mDISCERN, modified DISCERN.

Table 7. List of YouTube videos included in the study.

Discussion

Evaluating the reliability and quality of exercise videos related to Parkinson's disease is crucial for ensuring that patients can access accurate and trustworthy information. The present study demonstrated that most YouTube videos met high educational standards according to the PD-GEC, with none classified as low quality. These findings suggest that content prepared by healthcare professionals, particularly physiotherapists, generally provides a reliable and structured source of information. A notable finding was that explaining the rationale and execution of exercises was associated not only with higher perceived quality but also with higher informational reliability. In contrast, although providing parameters related to repetition, duration, and intensity was associated with higher overall video quality, it may not exert a decisive influence on the transparency or reliability of health information as assessed by mDISCERN.

Previous studies evaluating exercise-related YouTube videos for conditions such as fibromyalgia, neck pain, and low back pain have consistently reported predominantly low-quality content,11,25–27 whereas videos focusing on knee osteoarthritis tend to demonstrate moderate quality. 28 In contrast, the higher-quality scores observed in the present study may reflect the decision to include only videos produced by physiotherapists with expertise in Parkinson's disease. Unlike investigations based on more heterogeneous samples, this focused approach likely captured content developed within a stronger clinical and educational framework. Parkinson's rehabilitation is typically guided by structured recommendations and requires disease-specific expertise, which may encourage the production of more standardized and reliable educational materials. Differences across studies may also be partially explained by methodological variation.

The narrower scoring range of the modified DISCERN scale, compared with the original version, can influence how quality categories are defined. A similar pattern has been reported in rheumatoid arthritis research, where DISCERN scores showed minimal variation but significant differences emerged when alternative evaluation tools were applied. 29 Together, these findings suggest that both the professional background of content creators and the selection of assessment instruments can substantially shape conclusions regarding the quality of online health information.

Consistent with prior research on scoliosis videos, content uploaded by healthcare professionals tends to demonstrate higher transparency and reliability than videos produced by non-professionals. 13 The relatively homogeneous PD-GEC scores observed in this study were therefore not unexpected, given that all evaluated videos originated from a single professional group. While DISCERN primarily assesses the balance and presentation of treatment information, 30 PD-GEC enabled a more clinically oriented evaluation of Parkinson's-specific exercise content. Most PD-GEC domains, including patient-centeredness, safety considerations, and scientific accuracy, showed parallel trends with DISCERN and GQS scores, suggesting general agreement between informational and clinical quality indicators. Notably, videos that clearly explained both the rationale and execution of exercises were associated with higher quality ratings, whereas the inclusion of dosage parameters such as repetition, duration, and intensity mainly strengthened general educational value. This pattern suggests that viewers may prioritize clarity and instructional guidance when selecting digital exercise resources.

Engagement metrics provided an additional perspective. Videos presenting explicit dosage information achieved higher view ratios and VPI values, indicating greater audience reach. However, the lack of a meaningful association between engagement indicators and PD-GEC or mDISCERN scores points to a persistent gap between clinical quality and popularity on digital platforms, underscoring the growing importance of digital health literacy. Although the VPI has attracted increasing scholarly attention, 31,32 research examining its relationship with clinical quality remains limited. 31,33,34 As engagement metrics continue to influence algorithmic visibility and content dissemination, understanding how popularity interacts with informational reliability represents an important direction for future research.

The strength of this study is that, to the best of our knowledge, it is the first to systematically evaluate the quality and reliability of exercise videos prepared by healthcare professionals—specifically physiotherapists—for individuals with PD. One of the main limitations of this study is that although the quality analysis was conducted in accordance with European guidelines, further studies are recommended to validate the psychometric properties of the PD-GEC instrument. In addition, the research was limited to videos published exclusively on YouTube's official platform; content from other online media, such as Vimeo, Instagram, and TikTok, was excluded from the evaluation.

This study included 29 videos selected through strict inclusion and exclusion criteria, focusing exclusively on content produced by physiotherapists with expertise in Parkinson's disease. While this approach enhanced clinical relevance and scientific rigor, it may limit the generalizability of the findings to videos created by other healthcare professionals or content producers. The relatively small sample size may also have reduced statistical power, and the results should therefore be interpreted cautiously despite the use of appropriate nonparametric analyses. Future research incorporating larger and more diverse video samples is needed to validate and extend these findings. The integration of artificial intelligence–based analytic tools may further enable more comprehensive assessments of digital health content. Given the expanding influence of platforms such as YouTube, healthcare professionals are encouraged to develop exercise videos that are evidence-based, clearly structured, and supported by explicit explanations of rationale and dosage parameters to ensure both engagement and clinical integrity.

Conclusion

Although videos prepared by healthcare professionals were generally of acceptable quality, the absence of a relationship between clinical quality indicators and viewer engagement suggests that popularity does not necessarily reflect reliability; therefore, developing digital therapeutic content led by healthcare professionals may help reduce misinformation and improve access to trustworthy information.

Footnotes

Acknowledgments

We would like to thank Dr Mustafa Ferit Akkurt for his expert opinion, and Sumeyye Akcay and Mine Seyyah for their contributions to editing the article.

Ethical approval

Institutional ethical approval and informed consent were not considered necessary, as the study used only publicly available YouTube videos.

Author statement

Anıl Tosun designed the study, obtained the data, drafted and wrote the article, and approved the final version for submission. Duygu Aktar Reyhanioglu designed the study, analyzed and interpreted the data, drafted the article, critically reviewed it for important intellectual content, and approved the final version for submission.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data availability

The data that support the findings of this study are available from the corresponding author upon reasonable request.