Abstract

The COVID-19 pandemic has highlighted the powerful influence of social media in shaping public opinion, particularly in spreading vaccine misinformation. This study investigates the dynamics of disinformation during the pandemic, focusing on its unprecedented scale and rapid proliferation, as well as its implications for political stability and public trust. It emphasizes the crucial role of Information Systems (IS) in addressing these challenges, utilizing advanced technologies such as algorithms, data analytics, and artificial intelligence for real-time tracking, analysis, and countermeasures against misinformation. The study employs a data analytics approach to analyze over 2 million vaccine-related tweets, classifying them as misinformation or reliable information. The findings reveal that misinformation spikes often coincide with periods of public uncertainty, while central nodes (highly connected social media users) play a key role in amplifying both misinformation and reliable content. Despite the challenges, users tend to engage more with trustworthy information, offering opportunities to amplify factual content. By examining the influence of disinformation cascades and reviewing existing counter strategies, this study aims to illuminate the complexities of managing misinformation in today’s digital landscape. Furthermore, it underscores the need for an integrated, multidisciplinary approach that combines insights from Political Science and IS. This approach fosters media literacy, enhances transparency, and promotes trust in credible sources, thereby strengthening the resilience of information ecosystems against disinformation threats.

Keywords

Introduction

In an era where the digital and physical realms increasingly intersect, the COVID-19 pandemic has not only highlighted the vulnerabilities of global health systems but also underscored the pervasive impact of social media on shaping public discourse and opinion. 1 Amidst this backdrop, social media platforms have emerged as double-edged swords, capable of both uniting and dividing, informing and misleading. The rapid proliferation of vaccine-related misinformation has created significant challenges for public health communication, policy implementation, and social trust in scientific expertise. As misinformation spreads in real-time, fueled by algorithmic amplification and user engagement patterns, understanding the mechanisms behind its dissemination is critical to formulating effective intervention strategies.

The role of disinformation in swaying public opinion is not a novel phenomenon; however, the scale and speed at which it has proliferated during the COVID-19 crisis are unprecedented.2,3 The consequences extend beyond individual decision-making, influencing policy responses, international relations, and trust in governmental institutions.4,5 Democracies worldwide now face the challenge of protecting the free flow of information while combating falsehoods that undermine public health and societal trust. 6 Despite growing research on disinformation, limited empirical studies have examined the interplay between misinformation, social media engagement, and the role of influential users in shaping online narratives.

Recent research by McLoughlin, Brady, Goolsbee, Kaiser, Klonick, and Crockett 7 highlights that misinformation exploits emotional triggers, particularly outrage, to drive rapid engagement and dissemination. Their findings suggest that the virality of misinformation is not solely determined by content accuracy but also by its ability to provoke strong emotional reactions. This underscores the need for analytical frameworks that capture both content virality and the psychological mechanisms fueling disinformation cascades.

In this context, Information Systems (IS) play a pivotal role in identifying, tracking, and mitigating the spread of disinformation. Through sophisticated algorithms, data analytics, and artificial intelligence, IS provides tools for real-time monitoring, analysis, and countermeasures against disinformation campaigns. 8 However, existing research often lacks a structured methodological framework for analyzing large-scale social media data in the context of misinformation spread. To address this gap, this study employs the CRISP-eSNeP framework 9 as a structured approach to extract, transform, and analyze large datasets from social media platforms. The framework provides a systematic approach for examining the velocity, volume, and virality of misinformation and reliable information, offering insights into how social media users engage with different types of content. Given the centrality of social media networks in amplifying information—both reliable and misleading—the framework provides a methodological foundation for investigating the role of influential users (central nodes) and engagement mechanisms in the dissemination of vaccine-related content.

By leveraging the CRISP-eSNeP framework, this study investigates the mechanisms driving disinformation spread, its impact on public opinion and engagement, and the effectiveness of past interventions in countering misinformation. Given the role of central nodes (highly connected users) in amplifying content, the study explores how these actors influence information flow on social media platforms. This interdisciplinary approach—integrating insights from Political Science and Information Systems—aims to identify strategic intervention points that can enhance real-time misinformation detection, mitigation, and prevention. Furthermore, the study highlights the importance of data-driven strategies in upholding democratic values and safeguarding public health, particularly in strengthening the resilience of information ecosystems against evolving disinformation threats.

Building on these foundations, this study seeks to understand the dynamics of information dissemination related to COVID-19 vaccines on social media, with a particular focus on the spread, interaction, and engagement of both reliable information and misinformation. Key research questions driving this analysis include: What are the temporal patterns in the spread of reliable information and misinformation? How do engagement levels differ between reliable information, misinformation, and other categories of content? What roles do influential users, as evidenced by central nodes, play in the dissemination and amplification of vaccine-related tweets?

Therefore, this paper makes several important contributions to understanding the landscape of vaccine-related discussions on social media during the COVID-19 pandemic, employing an integrated, multidisciplinary approach that combines insights from Political Science and Information Systems. First, by analyzing the daily distribution and engagement levels of reliable information and misinformation tweets, we offer a nuanced understanding of the temporal relationships between significant public events and the reactive spread of misinformation. This analysis highlights how major socio-political developments shape the flow of information, emphasizing the importance of timely, strategic communication from trusted sources. Second, the examination of engagement patterns reveals critical insights into the mechanisms through which both factual content and misinformation gain traction online. These findings help identify how social media algorithms and user behaviors contribute to the virality of content, providing a foundation for developing targeted strategies to amplify credible information and curb misinformation. Finally, our identification of central and non-central nodes within the social media network illustrates their distinct roles in spreading both reliable information and misinformation. This understanding of social media dynamics reveals strategic points for intervention, underscoring the potential for targeted engagement to mitigate misinformation effectively.

Current state of information cascades: A multidisciplinary perspective

The proliferation of disinformation on social media platforms, particularly in the context of the COVID-19 pandemic, presents a complex challenge at the intersection of technology, society, and politics. Information cascades, a phenomenon where a piece of information is rapidly spread across a network, regardless of its veracity, have been significantly amplified by the unique features and algorithms of social media platforms. This section explores the mechanisms behind these cascades, their implications for public discourse, and the pivotal role of information systems in mitigating their impact.

Mechanisms of disinformation spread

At the heart of social media platforms lies a sophisticated algorithmic structure designed to maximize user engagement.10,11 These algorithms, by prioritizing content that elicits strong emotional reactions, inadvertently facilitate the rapid spread of disinformation. 12 The concept of echo chambers, digital environments where users are exposed primarily to viewpoints that echo their own, further exacerbates this issue.13,14 This algorithm-driven segregation diminishes cross-ideological discourse and amplifies misinformation, creating fertile ground for disinformation cascades.

Today, the term “disinformation” has broadened its meaning, referring to any deceptive message that could be harmful but spreads unintentionally. 15 On social media, the public does not always call it disinformation, and one of its most common labels itself emerged from a disinformation campaign. That campaign was a presidential one focused on discrediting large media corporations, and the term coined during it was “fake news.” The realm of fake news constitutes its own unique reality, a distinct universe filled with intense agitation, where drama has become the norm and sensational revelations are an everyday occurrence. 16 The sphere of reality in which “fake news” exists is one example of an established echo chamber and illustrates a disinformation cascade.

Echo chambers are a product of the algorithms that exist within social media. 17 These algorithms place individuals within groups of people who share similar interests, a mechanism the average social media user does not understand. As Bail 18 explains, “As we get pulled deeper into networks that include only like-minded people, we begin to lose perspective.” This matters because the public is losing its ability to distinguish credible sources from untrustworthy ones. That loss can be traced to how social media structures timelines or “For You” pages, the feeds the algorithm generates for each user from what it deems relevant to them. The platforms allow all information to flow freely without any noticeable differentiation between a credible source and an untrustworthy source. Twitter, now known as X, is the focus of this study and remains one of the largest social networking platforms. 19

Political implications

The political ramifications of unchecked disinformation are profound. Misinformation regarding COVID-19 vaccines has not only undermined public health efforts but has also served as a tool for geopolitical actors to influence domestic and foreign policy discourse. 20 In democratic societies, where free expression is a cornerstone, the manipulation of information to sway public opinion poses a significant threat to the integrity of electoral processes, trust in public institutions, and the very fabric of democratic deliberation. Furthermore, a 2016 analysis revealed that up to 75% of Americans confronted with fake news were unable to easily identify it as false, believing instead that it was a fairly accurate depiction of reality. 16 The impact of disinformation is not limited to average citizens, whether in the United States or globally. In one notable case, Pakistan’s defense minister, Khawaja Asif, accepted a fabricated article circulating online as fact. 18 The article, which he treated as a credible source, included fake military commentary from another country, and he took the threat to be real.

The role of information systems

Information Systems (IS) offer a beacon of hope in the tumultuous sea of digital misinformation. 21 Through the deployment of data analytics, natural language processing, and machine learning, IS can discern patterns of disinformation spread, identify misinformation sources, and flag content for review. Moreover, IS can aid in the development of counter-narratives and the promotion of accurate information, effectively inoculating the public against misinformation. The creation of digital literacy tools, fact-checking resources, and transparent content moderation policies, powered by IS, is essential in fostering a more informed and resilient public discourse.22,23

Toward a comprehensive strategy

Addressing the challenge of disinformation cascades requires a multifaceted approach that combines the strengths of Political Science and Information Systems. This involves not only implementing technological solutions but also enacting regulatory measures, public awareness campaigns, and international cooperation to combat cross-border disinformation campaigns. By understanding the current state of information cascades through a multidisciplinary lens, we can better navigate the complexities of the digital age, ensuring that social media becomes a platform for constructive dialogue rather than misinformation.

Lessons of previous disinformation cascades

The digital age has enabled the rapid spread of information but has also facilitated the unchecked dissemination of disinformation. Two notable cases—Pizzagate and COVID-19 pseudoscience—exemplify how disinformation cascades gain traction and shape public discourse, leading to real-world consequences.

The Pizzagate conspiracy falsely alleged that a Washington, D.C. pizzeria was at the center of a child sex trafficking ring. This baseless claim, fueled by social media echo chambers and algorithm-driven engagement, spread rapidly, culminating in an armed individual attempting to “investigate” the accusations. 24 At its peak, #pizzagate trended at a rate of five tweets per minute, illustrating how digital misinformation can escalate into offline action. 8 This case highlights the dangers of political disinformation, the role of digital polarization, and the need for robust digital literacy and critical thinking skills.

Similarly, COVID-19 misinformation evolved into an “infodemic,” where pseudoscientific claims regarding vaccines and public health measures spread widely, exacerbating vaccine hesitancy and public distrust.25,26 Unlike Pizzagate, which was politically charged, COVID-19 misinformation leveraged scientific uncertainty to reinforce pre-existing conspiracy theories, making it more difficult to counter.15,27 Research suggests that attempts to debunk misinformation often backfire, reinforcing the beliefs of misinformation adopters rather than correcting them. 18

Both cases illustrate the wider social and political ramifications of unchecked disinformation. They erode trust in institutions, distort democratic decision-making, and fuel public division. 28 These challenges call for an integrated approach that combines Political Science insights on persuasion, misinformation resistance, and public trust with Information Systems solutions for real-time detection, tracking, and intervention against digital falsehoods.

Leveraging political science and information systems

Addressing the challenges posed by disinformation cascades requires leveraging the analytical tools and theoretical frameworks of both Political Science and Information Systems. Political Science offers insights into the motivations behind disinformation campaigns and their impact on public opinion and democratic integrity. Concurrently, Information Systems provide the technological means to detect, analyze, and counteract the spread of false information. Together, these disciplines can guide the development of holistic strategies that not only mitigate the immediate effects of disinformation but also address the underlying societal vulnerabilities it exploits.

Previous strategies for addressing the spread: A historical to modern perspective

The battle against disinformation is as old as communication itself, but the advent of the digital era has significantly transformed the landscape in which this battle is waged. Over the years, a variety of strategies have been deployed by governments, media organizations, academic institutions, and technology platforms to mitigate the spread of disinformation. This section explores these strategies, tracing their evolution from historical approaches to contemporary solutions, while emphasizing the role of Political Science and Information Systems in shaping effective countermeasures.

Historical context and evolution

Traditionally, efforts to counter disinformation were primarily focused on media literacy and public education campaigns. These strategies were rooted in the belief that an informed populace, capable of critical thinking, would be resilient to misinformation. Governments and educational institutions played a central role in these initiatives, emphasizing the importance of discerning reliable sources and verifying information before sharing. Political Science provides a framework for understanding the socio-political implications of these strategies, highlighting how they reflect democratic values of freedom of expression and an informed citizenry.

With the rise of the internet and social media, the dynamics of disinformation spread have changed dramatically, necessitating innovative approaches. The digital age has seen the introduction of fact-checking organizations and collaborative efforts between tech companies and journalistic entities to identify and label false information. These efforts represent an intersection between traditional media literacy campaigns and the technological capabilities provided by Information Systems to analyze and counteract disinformation at scale.

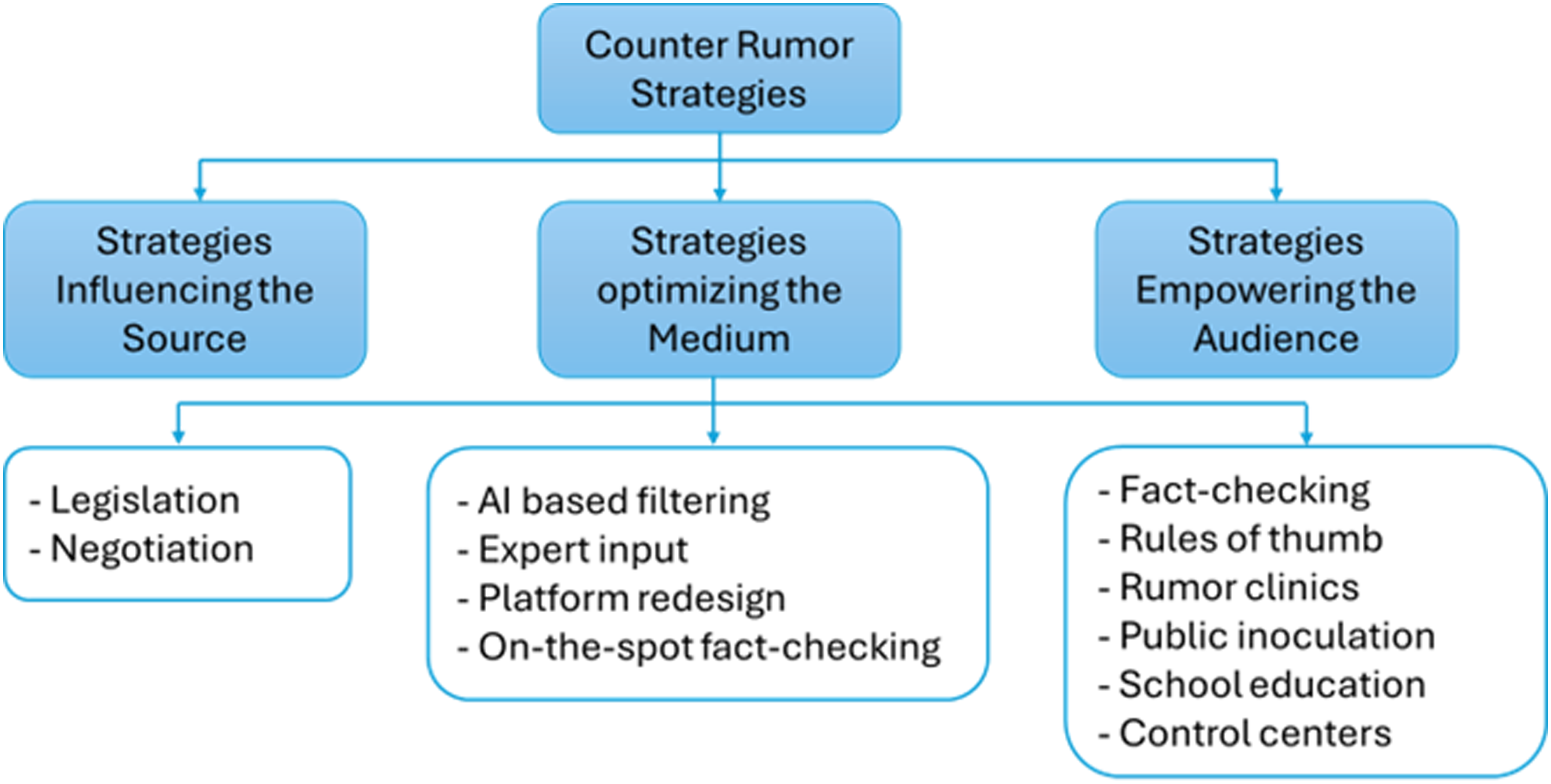

In the contemporary landscape of information dissemination, understanding the historical and current strategies employed to counter the spread of rumors is paramount. The evolution of these strategies, as delineated in the work of Fard and Verma, 15 reveals a sophisticated array of tactics developed over decades by various stakeholders, including media organizations, academic institutions, social media platforms, and governments. Central to this discussion is the categorization of counter-rumor strategies, as captured in a pivotal figure from Fard and Verma’s article (Figure 1). This figure serves as a visual representation, dividing strategies into three main categories based on their influence on the fundamental components of the communication process: the sender, the channel, and the receiver.

Figure 1. The classification of counter-rumor strategies (adapted from Fard and Verma 15).

The first category addresses strategies aimed at the source of rumors, implementing measures to deter initiators, especially in cases where rumors are spread deliberately. The second focuses on securing the channels through which communication flows, aiming to curb the emergence and circulation of rumors. The third category targets the receivers of these rumors, with strategies designed to shield the audience from the harmful effects of misinformation. This tripartite classification not only simplifies the understanding of the diverse approaches taken to mitigate rumor spread but also highlights the necessity of a multifaceted approach that addresses all aspects of the communication process. By dissecting the strategies in this manner, the figure underscores the complexity of rumor control and the importance of a comprehensive strategy that encompasses sender, channel, and receiver to effectively counteract the spread of disinformation in our increasingly digital world.

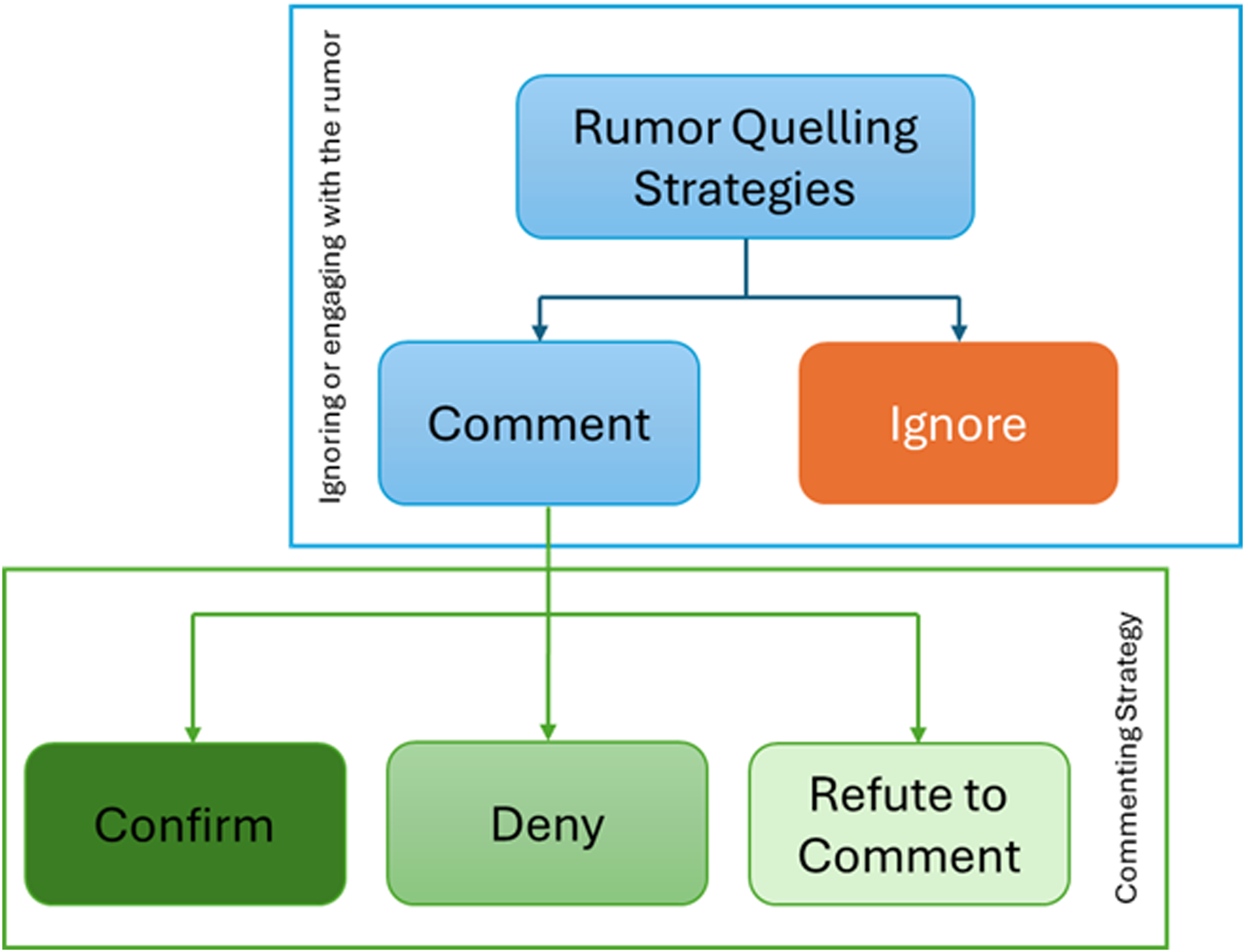

Within the intricate battle against rumors, particularly those targeting specific entities such as individuals, organizations, or countries, the strategic response to such rumors is critical. This nuanced approach to rumor management is elegantly depicted in Figure 2, which outlines the potential responses an entity can have when confronted with a rumor. The decision matrix presented in the figure begins with a fundamental choice: to address the rumor or to remain silent. Engaging with the rumor opens three pathways: confirming the rumor with details, denying the rumor with a rebuttal, or choosing to withhold comment. Each of these responses carries its own set of implications and strategic considerations.

Figure 2. The classification of counter-rumor strategies (adapted from Fard and Verma 15).

Choosing to ignore the rumor is typically the least effective method of quelling it. The rationale is that rumors, by their nature, find fertile ground in the varied perceptions of different individuals—what may seem implausible to one might be entirely believable to another, perpetuating the cycle of misinformation. In other words, while some individuals may stop spreading the rumor, others continue to take it up. The figure further illustrates that when parts of a rumor hold some truth, acknowledging these truths can significantly reduce the likelihood of further dissemination. 15

Denial, a commonly employed strategy, is fraught with complexity. The figure underscores that the effectiveness of a denial hinges on several critical factors: the truthfulness of the denial, the credibility and trust in the source issuing the denial, and the alignment of the denial with the context of the rumor. Importantly, the figure also highlights the pitfalls of inadvertently reinforcing the rumor through repetition in attempts to deny it, emphasizing the necessity for timely, clear, and evidence-supported rebuttals. Conversely, a no-comment stance is depicted as similarly ineffective, often interpreted as tacit acknowledgment or as evidence of something to conceal, thus potentially exacerbating the situation.

This figure serves as a strategic guide, illustrating the careful considerations entities must navigate when responding to rumors. It not only outlines the options available but also delves into the intricate dynamics of rumor management, offering insights into the factors that influence the efficacy of each potential response. Discussion of these two figures is necessary for understanding how rumors disseminate and past strategies for responding to them.

Modern strategies and technological innovations

In recent years, the focus has shifted toward leveraging technology to combat disinformation. Artificial Intelligence (AI) and machine learning algorithms have been employed to detect patterns indicative of false information, enabling social media platforms to flag or remove misleading content proactively. Information Systems play a crucial role in this strategy, providing the tools and frameworks necessary to process vast amounts of data and identify disinformation campaigns. Socially, social media emerges as an expansive network characterized by its hyper-connectivity and power-law degree distribution, significantly broadening the audience for rumors. This vast network facilitates the formation of communities bound by shared values and beliefs, heightening the potential for rumor proliferation among like-minded individuals. Furthermore, the diversity inherent in these large networks increases the range of rumors that can circulate, appealing to varied interests and perspectives.

From an institutional standpoint, social media platforms uniquely empower users to engage in the spread of information under the veil of anonymity. This anonymity provides a protective layer for those initiating or spreading unverified information, enabling them to broadcast rumors without accountability. The democratization of content creation and distribution further complicates this landscape. Users can circulate their content with little to no governmental oversight or platform supervision, contributing to an environment ripe for rumor spreading. The platforms’ minimal enforcement of codes of conduct or journalistic standards, under the guise of protecting free speech, only exacerbates this issue, creating an almost lawless space where misinformation can thrive.

Technologically, the use of recommendation systems and social bots deepens the polarization within these digital communities. By filtering content to align with users’ existing beliefs, recommendation algorithms ensure that individuals are less likely to encounter opposing viewpoints, thus reinforcing existing biases. Social bots, particularly in political contexts, play a pivotal role in amplifying content that resonates with certain groups, further entrenching users within their echo chambers. These technological tools, designed to enhance user experience and manage information overload, inadvertently contribute to the fragmentation of discourse on social media, making the platform an ideal ground for the unchecked spread of rumors and misinformation.

Furthermore, blockchain technology has been explored to ensure the integrity of information by creating immutable records of data and news sources. This innovative approach underscores the potential of Information Systems to offer solutions that not only address the spread of disinformation but also enhance transparency and trust in digital content. 29 Moreover, the deployment of sophisticated tools such as Debunk.eu’s algorithmic identification of disinformation and Semantic Visions’ AI-driven monitoring highlights the critical role of technology in the contemporary counter-disinformation toolkit. This technological advancement, coupled with grassroots documentation and community vigilance, represents a significant stride toward understanding and mitigating the impact of disinformation. However, the effectiveness of these measures is contingent upon a baseline understanding of disinformation’s prevalence in “calm” periods, which remains difficult to establish. The integration of strategic models by experts like Ben Nimmo, who assesses the dissemination pathways of disinformation across platforms and communities, alongside empirical analyses of disinformation’s engagement rates, offers insightful perspectives on the resilience of false narratives. 30 The success stories in combating disinformation, evidenced by heightened public awareness and strategic debunking efforts, underscore the potential for mitigating the spread of false narratives.

The role of policy and international cooperation

The fight against disinformation also involves regulatory measures and international cooperation. The European Union’s Digital Services Act represents a significant legislative effort to hold online platforms accountable for the content they host. This approach highlights the importance of policy in complementing technological solutions, a domain where insights from Political Science are invaluable. Similarly, global initiatives like the Paris Call for Trust and Security in Cyberspace exemplify how international collaboration is critical in addressing the cross-border nature of disinformation campaigns.

In the dynamic and often contentious arena of information operations, strategies to counter hostile disinformation have crystallized into four fundamental lines of defense, revealing a comprehensive approach to safeguarding the information environment. These strategies are documenting the threat for deeper understanding, raising public awareness, repairing systemic vulnerabilities, and directly challenging information aggressors; together they underscore the multifaceted battle against disinformation. Initiatives like StopFake’s rigorous documentation efforts and NATO’s fact-checking factsheets exemplify proactive measures taken to counteract and debunk widespread disinformation narratives, particularly in the face of geopolitical tensions. 31 The current great-power conflict has already shown that disinformation campaigns are at the forefront of modern tensions, as each side tries to control the narrative about itself and its adversary.

The Global Engagement Center’s “counter-brand approach” and the push for self-regulatory standards among major social media platforms reflect a broader recognition of the need for robust counter-disinformation measures. Yet, the enduring challenge remains the inherent vulnerability of open, democratic societies to disinformation attacks—a vulnerability that demands continuous, innovative strategies to defend the integrity of the information space. As we transition from discussing these foundational strategies to exploring specific data-driven insights, the enduring complexity of countering disinformation in the digital age is evident, necessitating a vigilant, multi-pronged approach to preserve the veracity of the information ecosystem.

Integrated approach

Combating disinformation in the digital age requires an integrated framework that merges insights from Political Science with the technological capabilities of Information Systems. This comprehensive strategy not only focuses on detecting and mitigating false information but also addresses the underlying vulnerabilities that enable the spread of disinformation. By enhancing digital literacy, ensuring transparency on social media platforms, and fostering public trust in credible sources, we can build a more resilient information ecosystem.

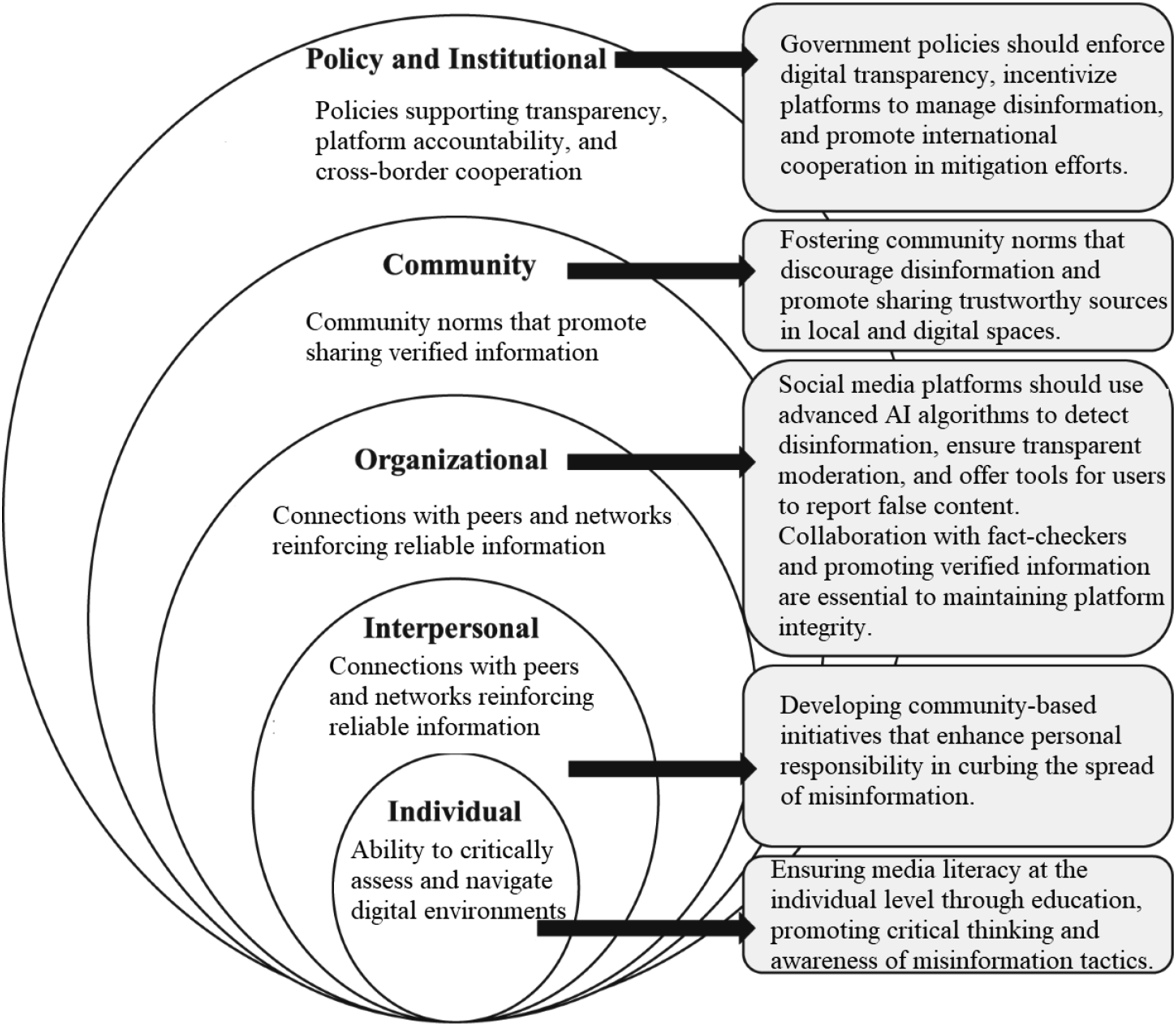

The framework, as illustrated in Figure 3, operates across multiple levels. At the core, the individual level emphasizes empowering individuals through media literacy and critical thinking skills, enabling users to navigate online information with discernment and avoid falling victim to disinformation. The interpersonal level focuses on the influence of close networks and peers, fostering environments where users engage with accurate information and challenge disinformation within their communities. At the organizational (platform) level, social media platforms play a critical role by employing advanced AI algorithms to detect and filter disinformation, promoting transparency in content moderation, and providing tools for users to report false content. Collaboration with fact-checking organizations further strengthens platform integrity. The community level involves promoting norms, both online and offline, that discourage the spread of disinformation and encourage the sharing of verified information, achieved through collective efforts from trusted community leaders and influencers. Finally, the policy and institutional level involves government and institutional policies that enforce digital transparency, regulate platforms, and promote cross-border cooperation in combating disinformation, ensuring a robust defense against global disinformation threats. This multi-level approach ensures that interventions occur at all key points within the information ecosystem, from individual actions to large-scale policy measures, creating a comprehensive defense against the spread of disinformation.

Figure 3. A multi-level integrated framework for combating disinformation.

Data and method

To ensure the effectiveness of this framework in real-world applications, a detailed understanding of how disinformation spreads and can be countered requires rigorous data analysis. This leads us to the methodological backbone of this study, where we employ the CRISP-eSNeP framework developed by Asamoah and Sharda 9 for processing and analyzing large-scale social media data.

CRISP-eSNeP framework

In our modern world, where data is analogous to gold ore, the real value is unlocked when this massive data is mined, producing meaningful insights that can refine business processes. This transformative process of refining raw data into valuable insights echoes the paradigm of transforming gold ore into refined gold. The CRISP-eSNeP framework embodies this paradigm. It addresses the challenges posed by the 5 V’s of big data: volume, velocity, variety, veracity, and value. This model focuses on the efficient acquisition, preprocessing, management, and analysis of large volumes of social media data, ensuring that the data accurately reflects the characteristics of the specific social network platform in question.

For data-driven fields such as analytics, it is essential to understand the project domain, the source of the data, and the validity of the intended variables for the problem being addressed. In the realm of big data, possessing more data is only advantageous if the data is free of unnecessary elements that might distort the outcome of the analysis. 32 Hence, understanding the mechanism of information dissemination, especially on social media platforms, becomes pivotal. Notably, in studies parallel to ours, this understanding aids healthcare practitioners in designing effective disease self-management strategies through these platforms. 9

In the vast networks of social media, some highly connected users serve as vital connection points between other, less connected users. Within this study, we define a central node as a user having more than 100 friends or adjacent connections.9,33 In contrast, a non-central node is defined as a user with fewer than 100 friends. Our objective is to explore the influence these central nodes exert on the spread of information. According to our analysis, influential users on platforms like X dominate discussions, with notably higher-quality discourse than their less influential counterparts.
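The node definition above can be expressed as a simple threshold rule. The following Python sketch is illustrative only and is not the code used in the study: the `User` record and its field names are hypothetical, and because the definition does not say how a user with exactly 100 connections is treated, the sketch assigns such users to the non-central group as an assumption.

```python
from dataclasses import dataclass

CENTRAL_THRESHOLD = 100  # connection cut-off stated in the text


@dataclass
class User:
    user_id: str      # hypothetical field name
    connections: int  # number of friends or adjacent connections


def is_central(user: User, threshold: int = CENTRAL_THRESHOLD) -> bool:
    """Return True when the user has more than `threshold` connections."""
    return user.connections > threshold


def split_nodes(users):
    """Partition users into (central, non_central) lists.

    Assumption: a user with exactly `threshold` connections is treated
    as non-central, since the text leaves this case undefined.
    """
    central = [u for u in users if is_central(u)]
    non_central = [u for u in users if not is_central(u)]
    return central, non_central
```

Making the threshold a parameter allows the sensitivity of later results to the cut-off to be checked without changing the rest of the pipeline.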

The CRISP-eSNeP framework stands as an invaluable tool for two key reasons. First, it is adept at managing and analyzing vast datasets sourced from social network platforms. Second, it promotes efficient data management for practice-oriented projects. In the context of overwhelming data on social networks, CRISP-eSNeP’s methodological process provides structure, aiding in the management, analysis, and derivation of valuable insights.

Data

The foundational dataset for this study was initially segmented across 30 Excel files, each containing approximately 100,000 tweets related to COVID-19. This extensive collection of data required a meticulous Extraction, Transformation, and Load (ETL) process to ensure its readiness for in-depth analysis. The primary goal was to consolidate these disparate data sources into a coherent, analyzable dataset without losing the granularity of the original data.

Extraction

The initial step involved a thorough extraction of tweets from Twitter. This phase was essential for capturing all relevant data, including tweets, retweets, user IDs, and associated metadata, to facilitate comprehensive analysis. We used the Twitter API to search for all vaccine-related tweets posted from November 15 to December 15, 2021. This period was critical for COVID-19 vaccine campaigns due to several significant developments. Notably, during this time, the global vaccine rollout intensified, especially with booster shot approvals and updates to public health guidelines in response to the Omicron variant. This period also saw an uptick in misinformation efforts, driven by anti-vaccine movements that exploited uncertainties surrounding new variants and booster doses. As a result, both vaccine support and opposition surged, making this a key period for public discourse.

Transformation

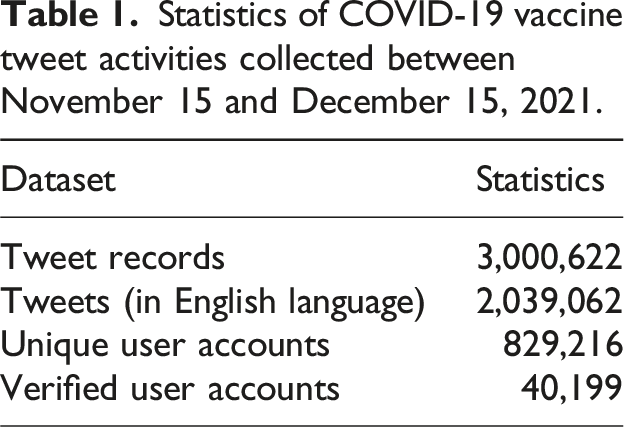

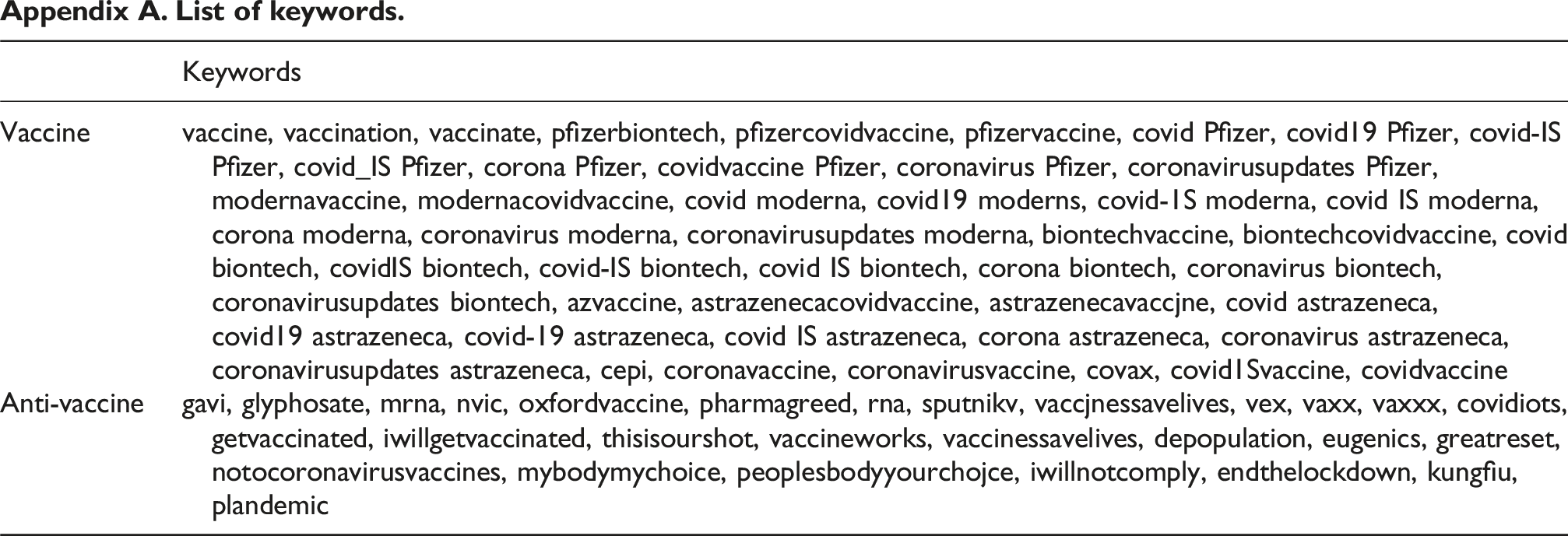

Following the extraction phase, the transformation stage aimed to standardize the data structure and content. Given the large volume of data, we consolidated individual daily vaccine-related tweets, stored in Excel files, into three master files, each containing one million records. This consolidation was instrumental in simplifying the data handling process. Key transformation steps included:

• Language Filtering: We applied a filter to retain only English-language tweets, using a descriptive column that indicated the language of each original entry. This choice was made to focus our analysis on the discourse primarily within English-speaking segments of Twitter, resulting in a refined dataset of 2,039,062 tweet activities. Table 1 presents details about the collected tweets and the associated user accounts. The initial dataset comprised 3 million tweets written in 65 different languages, with the subset of English tweets accounting for approximately 67.9% of the total records. For further analysis, we utilized the tweets written in English. Table 1 also reports the fraction of English tweets that include unique user accounts associated with the records, as well as the number of verified Twitter accounts among those users, which is also 48.5%.

• Classification: Each tweet was then classified into one of three categories: anti-vaccine misinformation (Label 1), reliable vaccine information (Label 2), and others (Label 3). The classification criteria were grounded in a predefined list of keywords relevant to vaccine discourse and anti-vaccine sentiments (see Appendix A for the list of keywords).

Table 1. Statistics of COVID-19 vaccine tweet activities collected between November 15 and December 15, 2021.
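The language filter and keyword-based classification described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the study's implementation (which was carried out in R). The record layout, the `lang` field name, and the precedence rule (an anti-vaccine keyword match takes priority over a vaccine keyword match) are assumptions, and the keyword sets are small subsets of Appendix A chosen for brevity.

```python
import re

# Small illustrative subsets of the Appendix A keyword lists.
ANTI_VACCINE_KEYWORDS = {"plandemic", "depopulation", "iwillnotcomply", "greatreset"}
VACCINE_KEYWORDS = {"vaccine", "vaccination", "covidvaccine", "covax"}

LABEL_MISINFORMATION = 1  # anti-vaccine misinformation
LABEL_RELIABLE = 2        # reliable vaccine information
LABEL_OTHER = 3           # others


def keep_english(records, lang_field="lang"):
    """Retain only records whose language column is English.

    `lang_field` is a hypothetical column name.
    """
    return [r for r in records if r.get(lang_field) == "en"]


def tokenize(text):
    """Lower-case the text and split it into word-like tokens.

    Hashtags such as '#plandemic' yield the token 'plandemic'.
    """
    return set(re.findall(r"[a-z0-9_]+", text.lower()))


def classify(text):
    """Assign Label 1, 2, or 3 from single-word keyword matches.

    Assumption: anti-vaccine keywords take precedence when both
    lists match; the source does not state the precedence rule.
    """
    tokens = tokenize(text)
    if tokens & ANTI_VACCINE_KEYWORDS:
        return LABEL_MISINFORMATION
    if tokens & VACCINE_KEYWORDS:
        return LABEL_RELIABLE
    return LABEL_OTHER
```

The sketch matches single-word keywords only; multi-word entries in Appendix A (for example, "covid moderna") would need phrase matching, which is omitted here.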

Load

The final stage involved loading the transformed data into R, a decision motivated by R’s robust data processing capabilities and its adeptness at handling large datasets. This platform served as the analytical backbone for the subsequent exploration and visualization of the data.

Several challenges emerged during the ETL process, notably:

• Data volume: The sheer volume of data presented initial challenges in handling and processing. This was mitigated by breaking down the data into manageable segments and utilizing powerful computational resources to ensure efficient processing.

• Data quality and consistency: Variations in data quality and format across the original Excel files necessitated rigorous data cleaning and normalization efforts. Custom scripts and manual review processes were employed to standardize the data format and resolve discrepancies.

• Classification accuracy: Ensuring accurate classification of tweets into the three categories required the development of a nuanced keyword list, informed by both current literature on vaccine discourse and manual review of sample tweets. Ongoing refinement of the keyword list and classification criteria helped enhance the precision of the categorization.

Results

The analysis of COVID-19-related tweets has unearthed critical insights into the temporal patterns in the spread of reliable information and misinformation, differences in engagement levels, and the role of influential users in information dissemination. These findings not only illuminate the dynamics of information spread on social media but also offer strategic avenues for combating misinformation.

What are the temporal patterns in the spread of reliable information and misinformation?

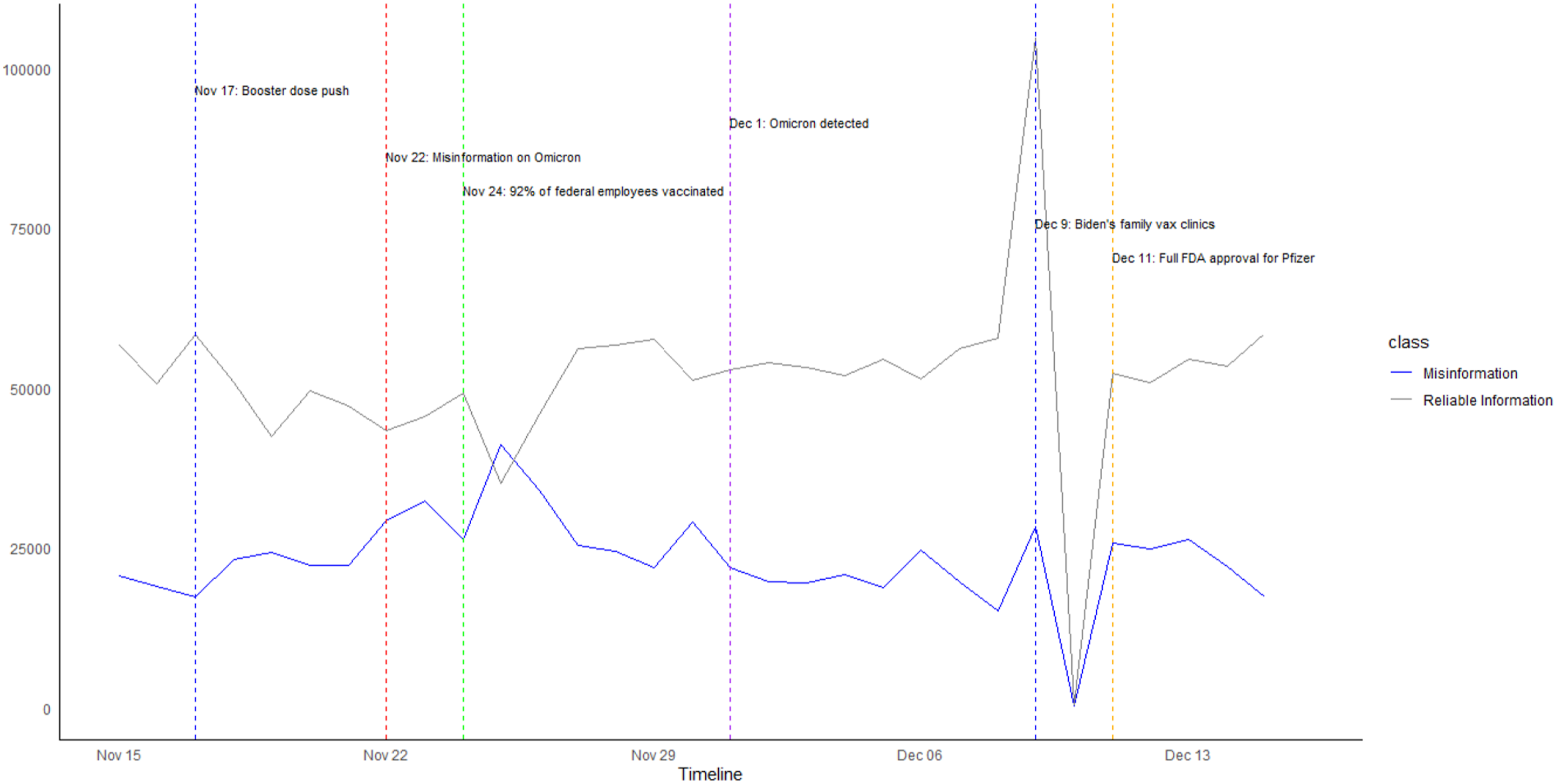

The examination of daily tweet distribution (Figure 4) revealed pronounced fluctuations in the volume of tweets categorized as reliable information and those tagged as misinformation.

Figure 4. Tweet volume timeline from the emergency use.

Peaks in reliable information tweets often coincided with official health announcements or significant developments in the COVID-19 pandemic. For instance, on December 9, 2021, a surge in reliable tweets was linked to President Biden’s announcement of family vaccination clinics. Similarly, a peak on November 17 corresponded with the public interest in booster dose approvals, and another on December 1 aligned with heightened concern over the Omicron variant.

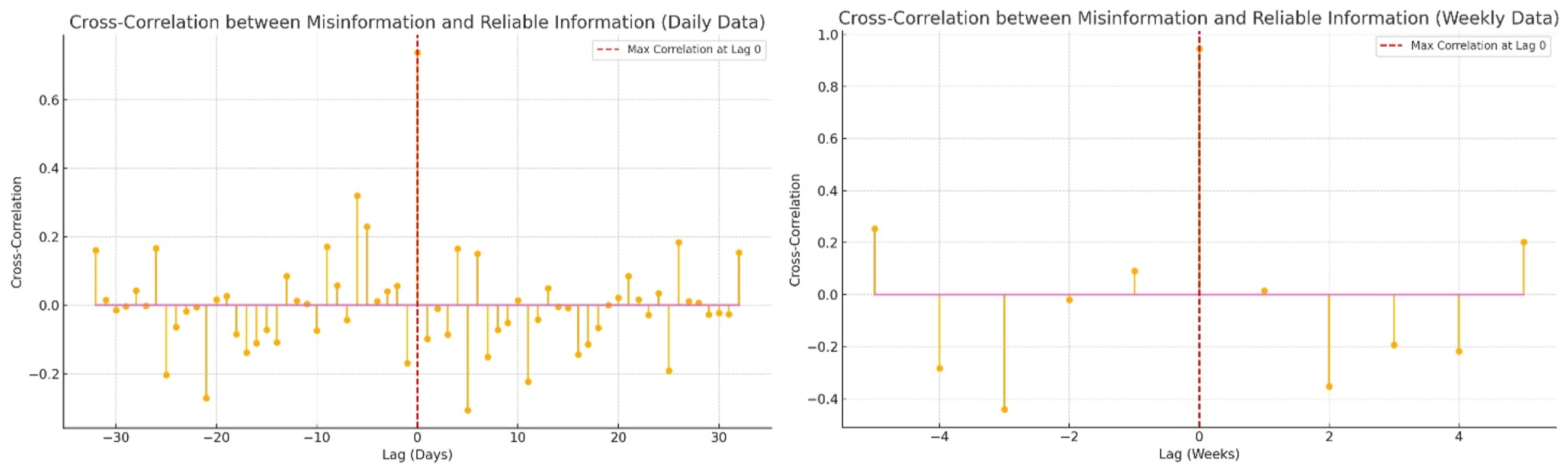

Conversely, spikes in misinformation often followed closely behind major health updates, indicating a reactionary pattern. The largest misinformation peak occurred on November 22, 2021, a few days after reliable information increased. This trend suggests that misinformation thrives on uncertainty, particularly in the aftermath of new health policies or scientific developments.

Figure 5. Cross-correlation between misinformation and reliable information.

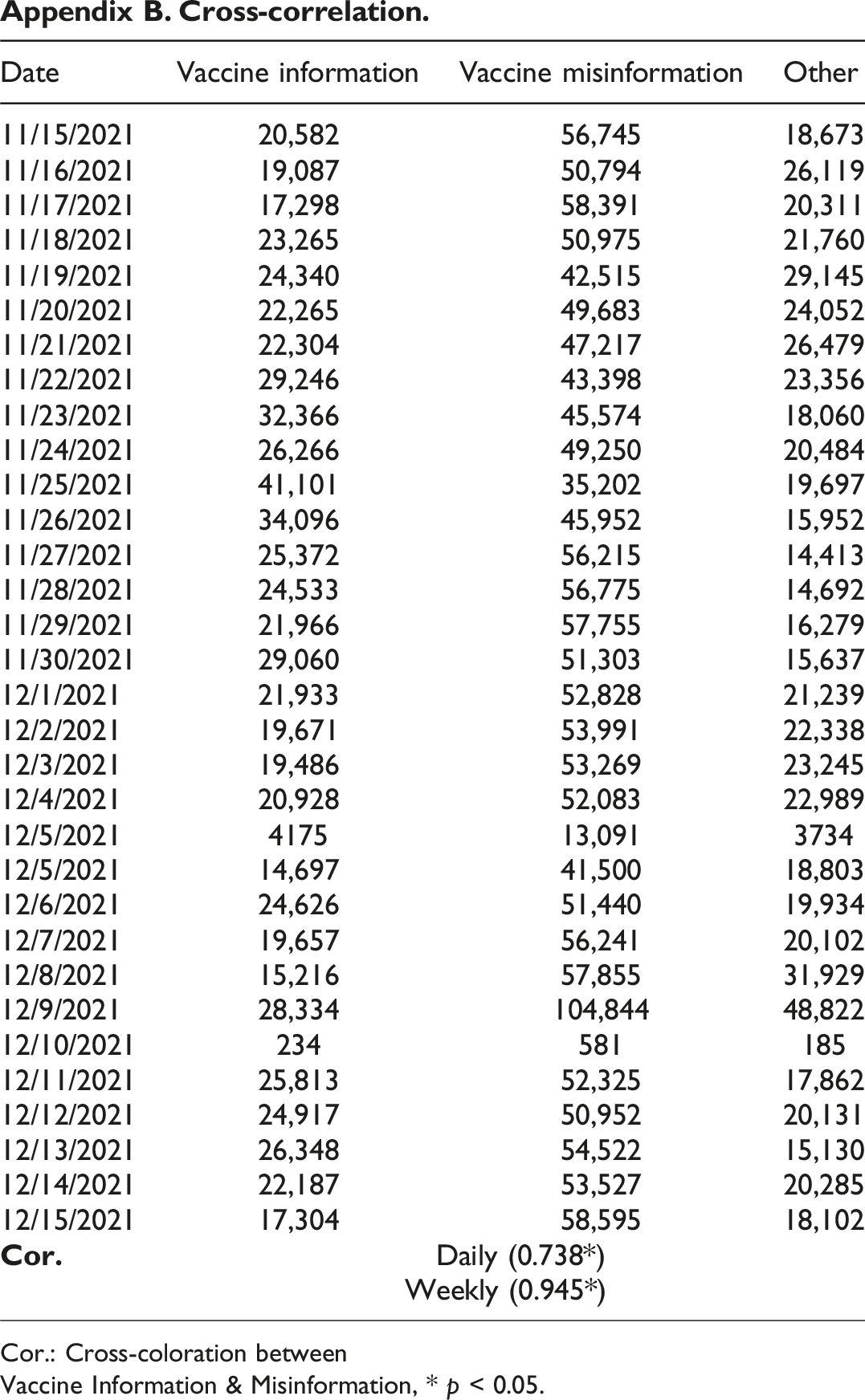

The cross-correlation analysis, 34,35 shown in Figure 5, further confirms this reactive dynamic, showing a strong simultaneous relationship between misinformation and reliable information, with the highest correlation occurring at lag 0 (daily: 0.738, weekly: 0.945). This indicates that both misinformation and reliable information tend to spike together, responding to the same external triggers. The stronger weekly correlation suggests that these patterns persist beyond immediate daily fluctuations, reinforcing the importance of proactive communication strategies to mitigate misinformation before it takes hold (see Appendix B for a detailed analysis of the cross-correlation results).
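To make the notion of a lagged cross-correlation concrete, the following Python sketch computes the Pearson correlation between two daily series after shifting one of them by a given number of days. It is a simplified illustration rather than the estimator used in the study: it recomputes the correlation on the overlapping window at each lag, whereas standard cross-correlation routines typically normalize by the full-series mean and variance. The function names and the toy series used in testing are hypothetical.

```python
from math import sqrt


def pearson(x, y):
    """Pearson correlation of two equal-length numeric sequences."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / sqrt(var_x * var_y)


def cross_correlation(x, y, lag):
    """Correlate x[t] with y[t + lag] on the overlapping window.

    A positive lag means y trails x by `lag` time steps.
    """
    if lag > 0:
        return pearson(x[:-lag], y[lag:])
    if lag < 0:
        return pearson(x[-lag:], y[:lag])
    return pearson(x, y)


def best_lag(x, y, max_lag):
    """Return the lag in [-max_lag, max_lag] with the highest correlation."""
    lags = range(-max_lag, max_lag + 1)
    return max(lags, key=lambda k: cross_correlation(x, y, k))
```

A peak at lag 0, as reported above, corresponds to `best_lag` returning 0: the two series rise and fall on the same days rather than one trailing the other.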

How do engagement levels differ between reliable information and misinformation?

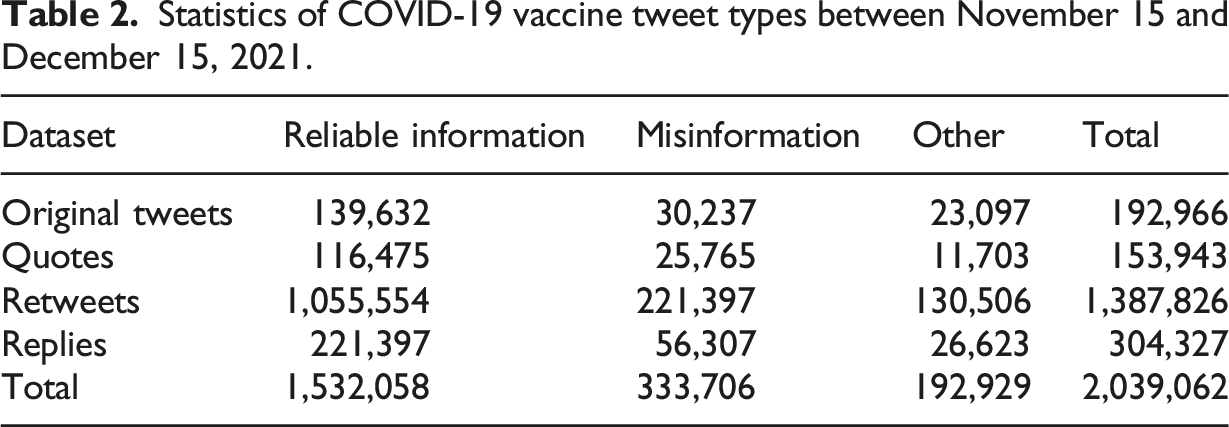

Statistics of COVID-19 vaccine tweet types between November 15 and December 15, 2021.

Reliable information tweets received significantly more retweets (1,055,554) compared to misinformation (221,397), suggesting that fact-based content was shared more frequently. However, misinformation tweets attracted more replies (56,307), indicating that users were more likely to engage critically or debate misleading content.

This engagement pattern was further validated by a Chi-squared test (χ² = 45.67, df = 3, p < 0.0001), which revealed a statistically significant difference in engagement types between reliable information and misinformation. While reliable information spread more through retweets, misinformation was still amplified through shares and replies, highlighting its potential for virality despite the lower absolute volume.
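For readers unfamiliar with the test, the Pearson Chi-squared statistic for a contingency table can be computed as in the sketch below. The reported df = 3 would be consistent with a 2 × 4 table (two content types by four engagement types), although the full table is not reproduced in the text. The counts in the example are hypothetical placeholders and are not the study's data; the function returns only the statistic and degrees of freedom, and a p-value would require a Chi-squared distribution function from a statistics library.

```python
def chi_squared(table):
    """Pearson Chi-squared statistic and degrees of freedom.

    `table` is a list of rows of observed counts, e.g. one row per
    content type and one column per engagement type.
    """
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    grand_total = sum(row_totals)

    statistic = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            # Expected count under independence of rows and columns.
            expected = row_totals[i] * col_totals[j] / grand_total
            statistic += (observed - expected) ** 2 / expected

    dof = (len(table) - 1) * (len(table[0]) - 1)
    return statistic, dof


# Hypothetical counts for illustration only (not the study's data):
# rows = content type, columns = four engagement types.
hypothetical_counts = [
    [120, 45, 30, 15],
    [60, 50, 25, 20],
]
statistic, dof = chi_squared(hypothetical_counts)
print(f"chi-squared = {statistic:.2f}, df = {dof}")
```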

Additionally, 128,464 tweets fell under the “Others” category, comprising content that neither promoted misinformation nor actively provided reliable vaccine information. The presence of this neutral category suggests a diverse range of vaccine-related discourse beyond polarized narratives of factual versus false content.

What roles do influential users (central nodes) play in the dissemination of vaccine-related tweets?

To assess the role of influential users, the dataset was segmented into central nodes (users with more than 100 connections) and non-central nodes (users with fewer than 100 connections), following Asamoah and Sharda. 9 Findings indicate that 635,411 central nodes dominated discussions, accounting for a significant portion of both reliable information and misinformation dissemination. Meanwhile, 266,632 non-central nodes played a smaller but still notable role, contributing to localized spread within niche communities.
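As a quick arithmetic check on the node counts reported above, the share of central nodes among all classified users can be computed directly. The sketch assumes that the two groups together make up the full set of classified users, and it counts accounts rather than tweets, so it does not by itself measure the share of tweet volume.

```python
central_nodes = 635_411      # reported in the text
non_central_nodes = 266_632  # reported in the text

# Assumption: the two groups together are the full set of classified users.
total_users = central_nodes + non_central_nodes
central_share = central_nodes / total_users

print(f"{central_share:.1%} of classified users are central nodes")
# prints "70.4% of classified users are central nodes"
```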

This suggests that central nodes serve as primary amplifiers, shaping the broader discourse around vaccines. Their extensive reach makes them pivotal in both spreading and combating misinformation. Targeting these influential users—either by engaging trusted figures or adjusting platform algorithms to prioritize credible sources—could be an effective strategy in mitigating disinformation.

Additionally, while central nodes influence large-scale discourse, non-central nodes help sustain misinformation within insular echo chambers. This highlights the importance of intervention strategies that address both large-scale influencers and smaller, tightly knit communities.

Discussion: Implications for counter-misinformation strategies

This study highlights several key implications for counter-misinformation strategies, particularly in leveraging structured analytical frameworks such as CRISP-eSNeP to track and combat disinformation cascades. First, the importance of timeliness and clarity in communication is emphasized. Drawing on insights from Political Science, the findings stress that rapid and clear communication from trusted authorities during crises is crucial. By filling the information void proactively, governments and health organizations can reduce the spread of misinformation.

By leveraging Information Systems and data-driven insights from CRISP-eSNeP, authorities can identify misinformation surges in real-time and tailor interventions accordingly. Social media analytics can pinpoint which narratives are gaining traction, who the key influencers are, and which demographic groups are most affected. Targeted campaigns addressing specific misinformation themes (e.g., vaccine safety, long-term effects) can preemptively counter disinformation with factual, accessible content.

The findings indicate that reliable information generally receives higher levels of engagement through retweets, while misinformation attracts more direct replies and quote tweets. This suggests that while factual content spreads organically, misinformation tends to provoke discourse. Optimizing reliable information through social media algorithms, influencer collaborations, and engaging multimedia formats (e.g., infographics and videos) can increase its reach.

CRISP-eSNeP’s framework highlights the potential of AI-driven real-time misinformation detection. Integrating machine learning algorithms into social media monitoring systems can help authorities monitor emerging misinformation trends and deploy swift countermeasures when necessary.

Finally, despite the power of data analytics, long-term solutions require improving public digital literacy through ongoing education in digital literacy and critical thinking. These initiatives, informed by the interdisciplinary insights of Political Science and Information Systems, can empower individuals to critically evaluate the information they encounter online, thereby reducing the influence of misinformation.

Conclusion and strategic recommendations

The exploration of COVID-19 vaccine misinformation on social media has underscored the complex interplay between technology, society, and governance in shaping public discourse. By employing the CRISP-eSNeP framework, this study has demonstrated the effectiveness of systematic social media analysis in identifying information diffusion patterns, engagement dynamics, and key misinformation amplifiers. Drawing on the interdisciplinary insights of Political Science and Information Systems, the following strategic recommendations are proposed for policymakers, social media platforms, and public health officials.

Managerial implications for policymakers

The implications for policymakers highlight several critical actions to address misinformation. First, developing and enforcing legislative frameworks for digital transparency is essential. Policymakers must mandate that social media platforms provide transparency regarding their algorithms and content moderation policies. This will enable users to understand how information is filtered and presented, fostering a more informed and responsible digital citizenry.

Additionally, there is a need for increased support for fact-checking organizations. By providing more funding and resources to independent fact-checking entities, policymakers can ensure that misinformation is debunked efficiently and accurately. Integrating these efforts into public awareness campaigns, especially during health crises, will help build public trust in verified information.

Lastly, cross-border collaboration on misinformation is critical, as disinformation campaigns often transcend national boundaries. International cooperation is necessary for the effective monitoring, tracking, and countering of these campaigns. Diplomatic efforts should aim at creating shared databases and rapid response mechanisms to address misinformation on a global scale.

Managerial implications for social media platforms

The implications for social media platforms highlight several key strategies to effectively combat misinformation. First, platforms should invest in advanced misinformation detection algorithms. By developing and deploying AI and machine learning algorithms capable of identifying and flagging misinformation in real-time, platforms can respond more effectively to false content. These technologies must be regularly updated to adapt to the evolving tactics of those spreading misinformation.

Second, platforms should focus on user empowerment through digital literacy tools. Implementing features such as interactive guides, direct links to verified sources, and prompts that encourage users to question the credibility of information will help users make more informed decisions. These tools can foster a more discerning and responsible digital public.

Lastly, social media platforms must prioritize transparent content moderation policies. By clearly explaining why certain content is flagged, removed, or demoted, platforms can build trust with their user base. This transparency helps mitigate perceptions of bias or censorship and ensures that content moderation processes are seen as fair and accountable.

Managerial implications for public health officials

The managerial implications for public health officials focus on strategies to enhance communication and combat misinformation. First, adopting a proactive communication strategy is essential. Public health officials should directly and transparently address public concerns about vaccines, utilizing multiple media channels, including social media, to disseminate accurate information and counter prevailing myths. Preemptive messaging campaigns based on real-time misinformation tracking (such as insights from CRISP-eSNeP) can help shape public discourse before falsehoods dominate online spaces. This approach helps build public trust and provides timely, factual updates.

Second, collaboration with influencers and community leaders can significantly boost the reach of public health messages. By partnering with trusted figures such as influencers, celebrities, and community leaders, public health officials can amplify accurate health messages and engage broader, more diverse audiences. This leverages the established trust these figures have with their followers, making public health communications more relatable and impactful.

Lastly, monitoring and rapid response teams should be established to track misinformation trends in real-time. These teams can work closely with social media platforms to ensure accurate information is prioritized in users’ feeds, enabling a quick response to emerging disinformation and ensuring that public health messages remain at the forefront of the conversation.

In conclusion, the fight against vaccine misinformation requires an integrated approach that combines the analytical frameworks of Political Science with the technological solutions of Information Systems. By leveraging political insights to decode the motivations behind misinformation campaigns and employing structured analytical models like CRISP-eSNeP to track and mitigate their spread, stakeholders can develop more effective, data-driven countermeasures. A multidisciplinary approach—rooted in transparency, digital literacy, and technological innovation—is essential to safeguarding public health and preserving the integrity of digital discourse.

Statements and declarations

Footnotes

Conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Data Availability Statement

The data that support the findings of this study are available from the corresponding author upon reasonable request.

Appendix

Appendix A. List of keywords.

| Category | Keywords |
| --- | --- |
| Vaccine | vaccine, vaccination, vaccinate, pfizerbiontech, pfizercovidvaccine, pfizervaccine, covid Pfizer, covid19 Pfizer, covid-19 Pfizer, covid_19 Pfizer, corona Pfizer, covidvaccine Pfizer, coronavirus Pfizer, coronavirusupdates Pfizer, modernavaccine, modernacovidvaccine, covid moderna, covid19 moderna, covid-19 moderna, covid_19 moderna, corona moderna, coronavirus moderna, coronavirusupdates moderna, biontechvaccine, biontechcovidvaccine, covid biontech, covid19 biontech, covid-19 biontech, covid_19 biontech, corona biontech, coronavirus biontech, coronavirusupdates biontech, azvaccine, astrazenecacovidvaccine, astrazenecavaccine, covid astrazeneca, covid19 astrazeneca, covid-19 astrazeneca, covid_19 astrazeneca, corona astrazeneca, coronavirus astrazeneca, coronavirusupdates astrazeneca, cepi, coronavaccine, coronavirusvaccine, covax, covid19vaccine, covidvaccine |
| Anti-vaccine | gavi, glyphosate, mrna, nvic, oxfordvaccine, pharmagreed, rna, sputnikv, vaccinessavelives, vax, vaxx, vaxxx, covidiots, getvaccinated, iwillgetvaccinated, thisisourshot, vaccineworks, vaccinessavelives, depopulation, eugenics, greatreset, notocoronavirusvaccines, mybodymychoice, peoplesbodyyourchoice, iwillnotcomply, endthelockdown, kungflu, plandemic |

Appendix B. Cross-correlation. Cor.: cross-correlation between vaccine information and misinformation; * p < 0.05.

| Date | Vaccine information | Vaccine misinformation | Other |
| --- | --- | --- | --- |
| 11/15/2021 | 20,582 | 56,745 | 18,673 |
| 11/16/2021 | 19,087 | 50,794 | 26,119 |
| 11/17/2021 | 17,298 | 58,391 | 20,311 |
| 11/18/2021 | 23,265 | 50,975 | 21,760 |
| 11/19/2021 | 24,340 | 42,515 | 29,145 |
| 11/20/2021 | 22,265 | 49,683 | 24,052 |
| 11/21/2021 | 22,304 | 47,217 | 26,479 |
| 11/22/2021 | 29,246 | 43,398 | 23,356 |
| 11/23/2021 | 32,366 | 45,574 | 18,060 |
| 11/24/2021 | 26,266 | 49,250 | 20,484 |
| 11/25/2021 | 41,101 | 35,202 | 19,697 |
| 11/26/2021 | 34,096 | 45,952 | 15,952 |
| 11/27/2021 | 25,372 | 56,215 | 14,413 |
| 11/28/2021 | 24,533 | 56,775 | 14,692 |
| 11/29/2021 | 21,966 | 57,755 | 16,279 |
| 11/30/2021 | 29,060 | 51,303 | 15,637 |
| 12/1/2021 | 21,933 | 52,828 | 21,239 |
| 12/2/2021 | 19,671 | 53,991 | 22,338 |
| 12/3/2021 | 19,486 | 53,269 | 23,245 |
| 12/4/2021 | 20,928 | 52,083 | 22,989 |
| 12/5/2021 | 4175 | 13,091 | 3734 |
| 12/5/2021 | 14,697 | 41,500 | 18,803 |
| 12/6/2021 | 24,626 | 51,440 | 19,934 |
| 12/7/2021 | 19,657 | 56,241 | 20,102 |
| 12/8/2021 | 15,216 | 57,855 | 31,929 |
| 12/9/2021 | 28,334 | 104,844 | 48,822 |
| 12/10/2021 | 234 | 581 | 185 |
| 12/11/2021 | 25,813 | 52,325 | 17,862 |
| 12/12/2021 | 24,917 | 50,952 | 20,131 |
| 12/13/2021 | 26,348 | 54,522 | 15,130 |
| 12/14/2021 | 22,187 | 53,527 | 20,285 |
| 12/15/2021 | 17,304 | 58,595 | 18,102 |

Cor.: Daily (0.738*); Weekly (0.945*)