Abstract

Early childhood educators may screen for mild motor delay in 4-year-old children but require appropriate resources. This study developed and evaluated the feasibility and acceptability of an instructional resource designed for educators to screen for mild motor delay. An explorative sequential mixed methods study was used to co-design an instructional resource through focus groups with early childhood educators, then pilot test and evaluate the feasibility and acceptability of the instructional resource using a survey. Two focus groups guided the development of the instructional resource, a website, and manual. Eighteen educators assessed 18 children using the resources. There was good concordance in scores between educators and the physiotherapist (Bland-Altman: M diff = −0.5, limits = −3.5 to 2.5). Educators ‘strongly agreed’ or ‘agreed’ that the instructional resource was ethical (90%) and efficient (75%), liked its use (75%), and believed it achieved its intended purpose (70%). These findings suggest that the instructional resource is feasible and acceptable.

Keywords

Introduction

Motor development is a continuous and flexible process, marked by key milestones that children must reach to promote typical everyday functioning (Dosman et al., 2012). The World Health Organization’s International Classification of Functioning, Disability and Health (ICF) framework conceptualises the impact a child’s impairment can have on their function, activities, and participation (Holloway & Long, 2019). This framework details important considerations including contextual, personal, and environmental factors. The ICF defines the acquisition of basic and complex movement skills as a key component of development (Ferguson et al., 2014). The acquisition of these motor skills is problematic for children with motor delay. Inadequate motor skill competence may compromise a child’s social and emotional development in their later years (Cantell et al., 1994; Chang, 2007; Loprinzi et al., 2015).

Not all motor delay is overt in early childhood. Mild motor delay may remain undetected (Williams & Holmes, 2004) until the first few years of schooling (Glascoe, 1999), which is also a critical time for physical, emotional, cognitive, and social development. For example, children with mild motor delay may be at risk of speech delays, academic failure (Alloway & Archibald, 2008), motor activity avoidance, low self-esteem (Mandich et al., 2003), and behavioural problems (Shevell et al., 2005), and may participate less frequently in play than typically developing children (Kennedy-Behr et al., 2013; Rosenblum et al., 2017). Due to the link between motor and social-emotional skills, and since attainment of motor skills provides the foundations for more complex skills needed in later years, the assessment of motor performance at four years of age is vital (Piek et al., 2012). If left unidentified and untreated, a child’s mild motor delay could potentially develop into a more severe delay (Molinini et al., 2021; Sullivan & McGrath, 2003). Therefore, early screening for mild motor delay may be critical to the well-being of the child, as it may enable comprehensive assessment, diagnosis, and treatment for the child and their family early on.

Developmental screening tools enable the ongoing wellbeing of all children by identifying the need for further testing quickly, early, and often without direct costs. Motor developmental screening tools need to be standardised to ensure reliable methods to detect and monitor children with motor impairments. In contrast to screening, surveillance relies on checklists and longitudinal observations, which may not reliably detect mild motor delay (Aly et al., 2010; Mackrides & Ryherd, 2011). Although validated developmental testing by qualified healthcare professionals is more accurate in determining the presence of mild motor delay, it is lengthy and costly, and requires a level of expertise to administer (Mackrides & Ryherd, 2011). Screening tools, on the other hand, have the benefit of being able to assess a larger number of children, reduce the costs and time needed to administer comprehensive testing, and enable non-healthcare professionals to screen and refer children of concern for further assessment.

Effective screening for mild motor delay may be suited to everyday settings like early childhood settings. Early childhood educators and teachers, referred to in this paper as educators, often spend many hours with children, which allows them the opportunity to observe a child’s behaviours and growth. Educators are also in an optimal environment to observe children of similar ages to recognise delay in age-appropriate motor development (Gulsrud et al., 2019). Currently, educators are utilising surveillance questionnaires (Branson, 2009; Filgueiras et al., 2013) such as the Denver-II screening test (Frankenburg, 1994), Brigance Screens (Brigance, 1990, 1997, 1998), and Battelle Developmental Inventory (Newborg, 2020), but these may have several shortfalls. While previous research has investigated how healthcare professionals (Chiu & DiMarco, 2010; Downs et al., 2020; Tieman et al., 2005) and parents (Chiu & DiMarco, 2010; El Elella et al., 2017; Rydz et al., 2006) use developmental screening, none have explored the use of performance-based motor screening by educators. Furthermore, several studies have demonstrated the effectiveness of training non-healthcare professionals in the community (Jocelyn et al., 1998; Kaale et al., 2014; Shire et al., 2017); however, none have explored whether educators can use a performance-based screening tool to assess for mild motor delay in children.

Therefore, the aim of this study was to co-design, develop, and assess the feasibility and acceptability of an instructional resource for educators to screen for mild motor delay in 4-year-old children.

Methods

Study Design

A three-stage, explorative sequential mixed methods study design was used to (1) develop an instructional resource through focus groups which could be used by educators, and (2) then evaluate its feasibility and acceptability through pilot testing and a survey.

Ethics and Consent

The study was approved by the Macquarie University Human Research Ethics Committee (Reference number 520221150239973). All educators provided written informed consent prior to participation. All parents or guardians provided written informed consent to have their child undergo the motor assessment by an educator from their facility, and all children provided assent to the assessment.

Recruitment

All participants were recruited by convenience sampling at various centre-based childcare services within 1 hour from Sydney Central Business District (CBD) through targeted emails and phone calls. All educators were employees of the centre-based service where recruitment occurred. Advertisement posters were provided to centre-based service facilities and were posted on educator-specific Facebook pages.

Stage 1: Preliminary Development of the Instructional Resource

The initial stage aimed to (1) review the current literature to explore current outcome measures available for educators in centre-based service settings to screen for developmental delay, (2) review tools used by physiotherapists to identify motor delay in children, and (3) develop brief examples of various formats to be included in the instructional resource to present to the focus group.

A preliminary version of the instructional resource was developed to enable educators to conduct screening using the rapid, newly developed, and validated Targeted Motor Control (TMC) screening tool (Brown et al., 2024). This tool is used by physiotherapists to identify children 3 years 9 months to 4 years 5 months in age with possible mild motor delay. It was developed from the Neurosensory Motor Developmental Assessment (NSMDA), a validated (Burns et al., 1989a; 1989b) and reliable, yet lengthy assessment. The TMC is an 11-item tool that includes an assessment of age-appropriate gross motor, fine motor, and sensory-motor skills. Illustrative tasks include hopping and catching to evaluate gross motor skills; drawing to evaluate fine motor skills, in this example, grasp and execution; and eye movement tasks to assess visual tracking, and a head-righting task to assess the ability to dissociate head and body movements as evaluations of sensorimotor function. All items on the TMC are scored as either 0 or +1, with higher scores reflecting better performance. Two items include an additional −1 scoring option to account for markedly immature age-related responses. The term ‘instructional resource’ in this study refers to both the TMC and instructions on how to use the TMC.
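The scoring scheme described above can be illustrated with a minimal sketch. It assumes only what is stated in the text: 11 items, each scored 0 or +1, with two items that also permit −1. The text does not say which two items carry the −1 option, so the item numbers in `HYPOTHETICAL_MINUS_ONE_ITEMS` and the function name `total_tmc_score` are placeholders for illustration, not part of the published tool.

```python
def total_tmc_score(item_scores, minus_one_items):
    """Sum the 11 item scores after checking each against its permitted values.

    item_scores: dict mapping item number (1 to 11) to the score awarded.
    minus_one_items: set of item numbers that also permit a score of -1.
    """
    if set(item_scores) != set(range(1, 12)):
        raise ValueError("expected exactly one score for each of items 1 to 11")
    for item, score in item_scores.items():
        allowed = {-1, 0, 1} if item in minus_one_items else {0, 1}
        if score not in allowed:
            raise ValueError(f"item {item}: score {score} is not permitted")
    return sum(item_scores.values())


# Placeholder item numbers: the source does not state which two items allow -1.
HYPOTHETICAL_MINUS_ONE_ITEMS = {2, 9}

# Hypothetical child: full marks except a -1 on item 2 and a 0 on item 4.
scores = {item: 1 for item in range(1, 12)}
scores[2] = -1
scores[4] = 0
total = total_tmc_score(scores, HYPOTHETICAL_MINUS_ONE_ITEMS)
print(total)
```

Under these assumptions the total can range from −2 to 11, and validating each item before summing guards against a −1 being entered for an item that does not allow it.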

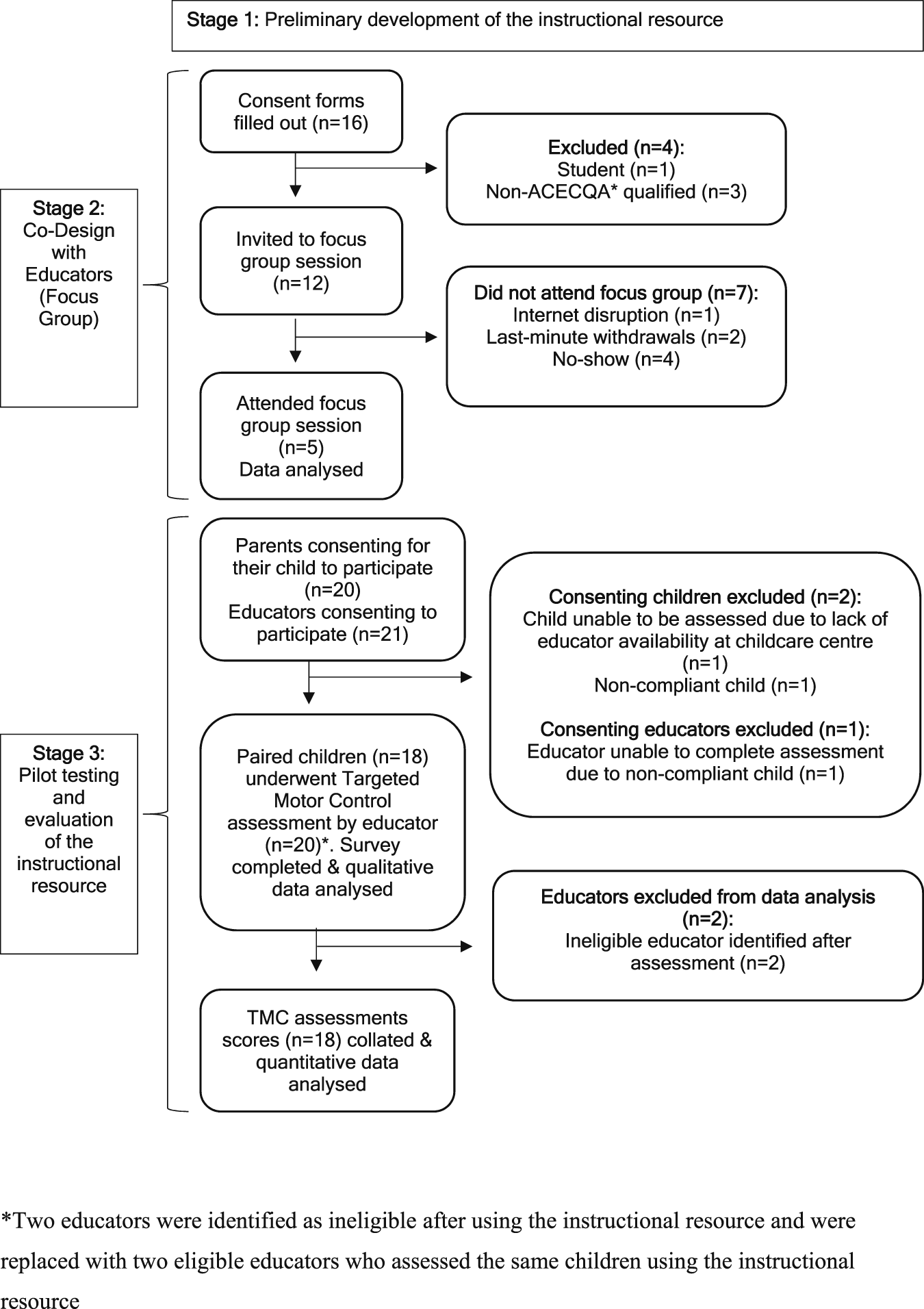

Preliminary versions of the instructional resource were created in various formats including a website, a written document, an online form for scoring, videos, photos, and audio-recordings. These versions were presented to the focus groups in Stage 2 for feedback. The flow of stages and inclusion of participants through this study is illustrated in Figure 1.

Figure 1. Recruitment procedure for the creation and evaluation of the instructional resource. ACECQA: Australian Children’s Education & Care Quality Authority

Stage 2: Co-Design with Educators (Focus Groups)

Focus groups were conducted to (1) determine participants’ experiences and perspectives with screening for motor delay in children, and (2) gain information regarding the desired format of the instructional resource. Based on these aims, semi-structured interview questions were developed by the research team to guide the focus group discussions (Appendix A).

Educators were eligible for inclusion if they (a) were early childhood educators or teachers as recognised by the Australian Children’s Education and Care Quality Authority (ACECQA) (Australian Children’s Education & Care Quality Authority, 2022), (b) worked interactively with children 3 to 4 years of age, (c) were able to communicate in English, and (d) had internet access.

Educators completed a survey created by the research team prior to participating in the focus groups to determine their confidence levels, experience, and the strategies used when identifying children with mild developmental delay. Educators were then interviewed at a mutually convenient time using Microsoft Teams videoconferencing. Up to 7 participants were invited to each focus group to ensure adequate facilitation of group discussion.

Stage 3: Pilot Testing and Evaluation of the Instructional Resource

Pilot testing was conducted to determine the feasibility and acceptability of the instructional resource. Educators were eligible for the study if they met eligibility criteria (a)-(c) listed in Stage 2 and (e) worked in a centre-based childhood service facility that was within an hour’s drive from Sydney CBD. Educators who were unable to perform any items on the TMC for any reason, such as musculoskeletal injuries, despite the addition of manual handling modifications were excluded.

Children were eligible to be assessed by educators if they (a) were 3 years 9 months to 4 years 5 months of age at the time of assessment, (b) attended a formal centre-based childcare service, and (c) were able to communicate in English. Children with confirmed moderate to severe gross motor impairments or intellectual or behavioural disabilities were excluded from the study.

Assessments were conducted once eligibility was confirmed and both educator and child participants were available in the same centre-based service facility. Once the educator and child were paired, the educator was provided the instructional resource in order to administer the TMC assessment items to score the child’s motor performance. A physiotherapist who was trained in the TMC by a TMC-experienced physiotherapist observed and simultaneously scored the child, blind to the scoring of the educator. A minimum sample size of 15 educator-child pairs was required as published in similar research evaluating the inter-rater reliability of a screening tool (Heyrman et al., 2011; Jayaraman & Puckree, 2009; Morelli et al., 2014; Tustin et al., 2016). Parents were provided a summary report of their child’s motor performance on the TMC by the physiotherapist.

After using the instructional resource, feedback was sought from the educator who completed an online survey via Qualtrics that assessed the feasibility (Weiner et al., 2017) and acceptability (Sekhon et al., 2017) of using the instructional resource to perform the assessment (Appendix B). A five-point Likert scale ranging from 1 (strongly agree) to 5 (strongly disagree), with a neutral option of 3, was used to quantify the feasibility and acceptability of the instructional resource. Open-ended questions were employed to identify problems, barriers, and suggestions for improving the instructional resource.
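As a minimal sketch of how the ‘strongly agreed’ or ‘agreed’ percentages reported for each survey item could be derived from the coding above (1 = strongly agree, 5 = strongly disagree), the following counts responses coded 1 or 2. The function name `percent_agree` and the response values are hypothetical and are not the study data.

```python
VALID_CODES = {1, 2, 3, 4, 5}  # 1 = strongly agree ... 5 = strongly disagree


def percent_agree(responses):
    """Return the percentage of responses coded 1 or 2 on the five-point scale."""
    if not responses:
        raise ValueError("no responses supplied")
    invalid = [r for r in responses if r not in VALID_CODES]
    if invalid:
        raise ValueError(f"responses outside the 1-5 scale: {invalid}")
    agree = sum(1 for r in responses if r in (1, 2))
    return 100 * agree / len(responses)


# Hypothetical responses to a single survey item (not the study data).
item_responses = [1, 2, 2, 1, 3, 2, 4, 2]
print(percent_agree(item_responses))
```

Neutral responses (3) are counted in the denominator but not as agreement, which is one reasonable reading of how such percentages are usually reported.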

Data Analysis

Descriptive and survey statistics were calculated using Microsoft Excel and reported as frequencies, percentages, means and standard deviations (SD). Focus group interviews were video-recorded and auto-transcribed using MS Teams (Microsoft Software, version 1.5.00.27260). Transcripts were analysed by one investigator to determine overarching themes and sub-themes. Focus groups ceased when thematic saturation was achieved. A narrative synthesis was used to summarise the findings of the focus groups (Popay et al., 2006).

To further evaluate the feasibility of the instructional resource in enabling reliable assessment by educators, the level of agreement between the total TMC scores of educators and the physiotherapist was determined. This was achieved using a Bland-Altman plot, in which the difference between each pair of total TMC scores was plotted against the pair’s mean to determine the mean of all the differences in scores and the 95% limits of agreement. This analysis was performed in JASP (JASP Team, 2022). For the open-ended questions in the survey, an inductive thematic analysis was conducted independently by two investigators, and discrepancies were discussed and resolved, with a third investigator available if consensus could not be reached.
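The agreement statistics described above can be sketched in a few lines. This is an illustrative calculation, not the JASP procedure used in the study: it assumes the difference is taken as educator score minus physiotherapist score (so a negative mean difference indicates educator under-scoring) and uses the conventional mean ± 1.96 SD for the 95% limits of agreement. The function name `bland_altman` and the scores are hypothetical.

```python
from statistics import mean, stdev


def bland_altman(educator_scores, physio_scores):
    """Return (mean difference, lower limit, upper limit) for paired scores.

    Differences are educator minus physiotherapist (an assumption);
    limits of agreement are mean difference +/- 1.96 * sample SD.
    """
    if len(educator_scores) != len(physio_scores):
        raise ValueError("scores must be supplied as matched pairs")
    diffs = [e - p for e, p in zip(educator_scores, physio_scores)]
    bias = mean(diffs)
    sd = stdev(diffs)  # sample standard deviation of the differences
    return bias, bias - 1.96 * sd, bias + 1.96 * sd


# Hypothetical paired total TMC scores (not the study data).
educator = [7, 8, 5, 9, 6, 10]
physio = [8, 8, 6, 9, 8, 10]
bias, lower, upper = bland_altman(educator, physio)
print(round(bias, 2), round(lower, 2), round(upper, 2))
```

Plotting each difference against the mean of its pair, with horizontal lines at `bias`, `lower`, and `upper`, gives the Bland-Altman plot itself.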

Results

Stage 2: Co-Design with Educators (Focus Groups)

Participants

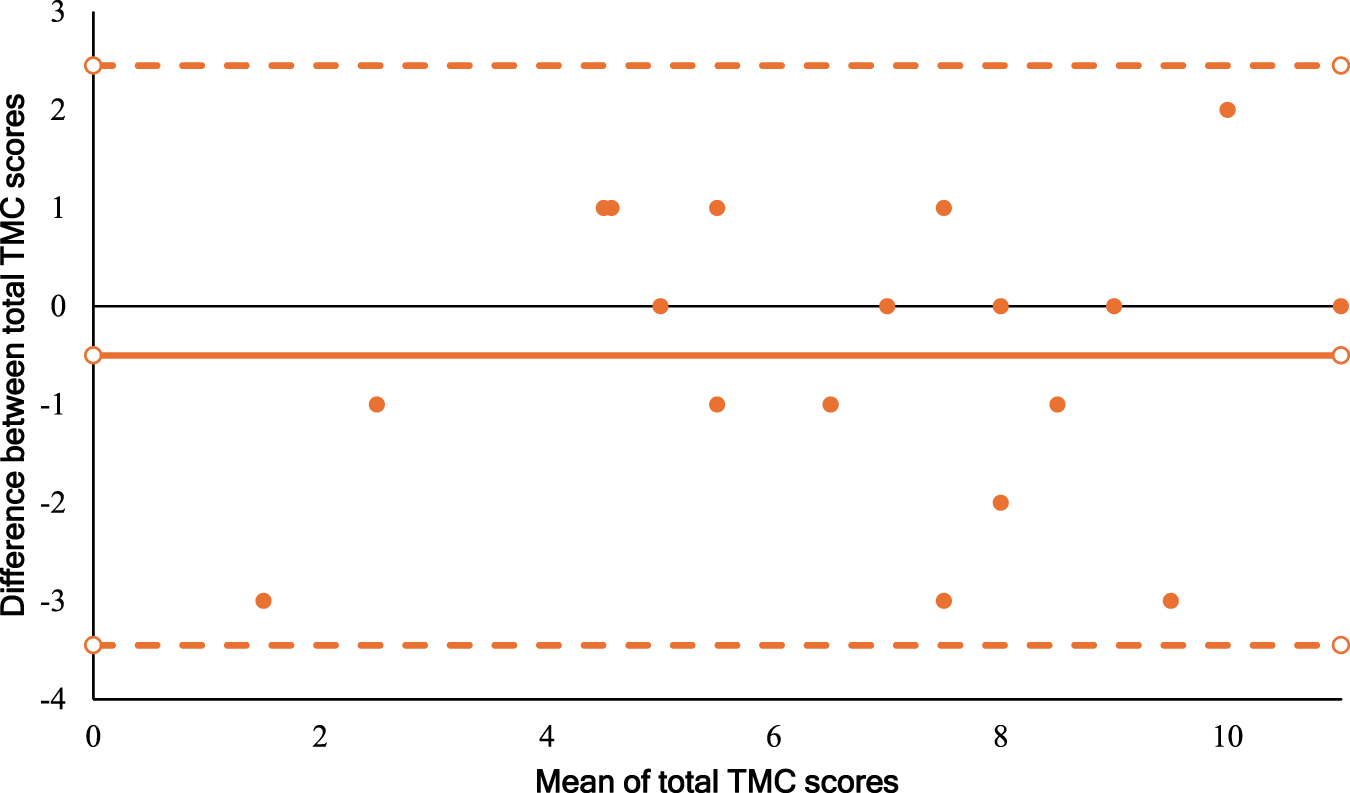

Demographic Characteristics of Educators Interviewed in the Focus Group

Outcomes of the Focus Groups

After in-depth consultation with educators regarding the design of an instructional resource to administer the TMC, four key themes were identified to help develop the instructional resource: (1) training, (2) the role of the educator around the screening process, (3) the content in the instructional resource, and (4) the formatting and graphics of the instructional resource.

(1) Training

All participants agreed that they did not require formal training prior to using the instructional resource; however, one group suggested that educators may benefit from a forum page to discuss and pose questions about the use of the TMC.

(2) The Role of the Educator Around the Screening Process

All participants understood the importance of discussing their concern for a child’s development with the parents when identified from the screening tool. Both groups noted that once a concern was raised, referring to physiotherapists was not common practice, and they wished to have more active involvement: ‘I’d like to know why [an area of development] is important and then what we can do as an environment to support’.

(3) Instructional Resource Content

Educators wanted evidence-based and up-to-date information, with inclusion of appropriate referral services when concerns were identified. One educator noted that child developmental resources that are currently available tend to be outdated. All educators believed that the instructional resource should include information about developmental milestones. When asked about whether research articles or literature should be included, participants emphasised the importance of simple and concise information so as not to ‘lose’ or ‘…overwhelm people’.

(4) The Formatting and Graphics of the Instructional Resource

All participants preferred paper over electronic formatting of the resource to enable physical filing. Some educators highlighted that an online instructional resource would be more accessible, allow easy and widespread distribution between child-service facilities, and be more environmentally and economically sustainable to reduce paper printing and cost. Participants raised the concern that not all facilities had access to several electronic devices such as laptops. Therefore, a printable manual that combined the website information and the TMC assessment was recommended. All participants agreed on the benefit of photographs, additional commentary space on the scoring sheet, and a neutral colour scheme throughout to appear more professional and enable focus on the content.

Stage 3: Pilot Evaluation

Participants

Eighteen children (M age = 48.3 months, SD = 2.5 months) (10 boys) were assessed by 18 educators (all women) using the instructional resource developed following the focus groups.

Outcomes of the Pilot Testing

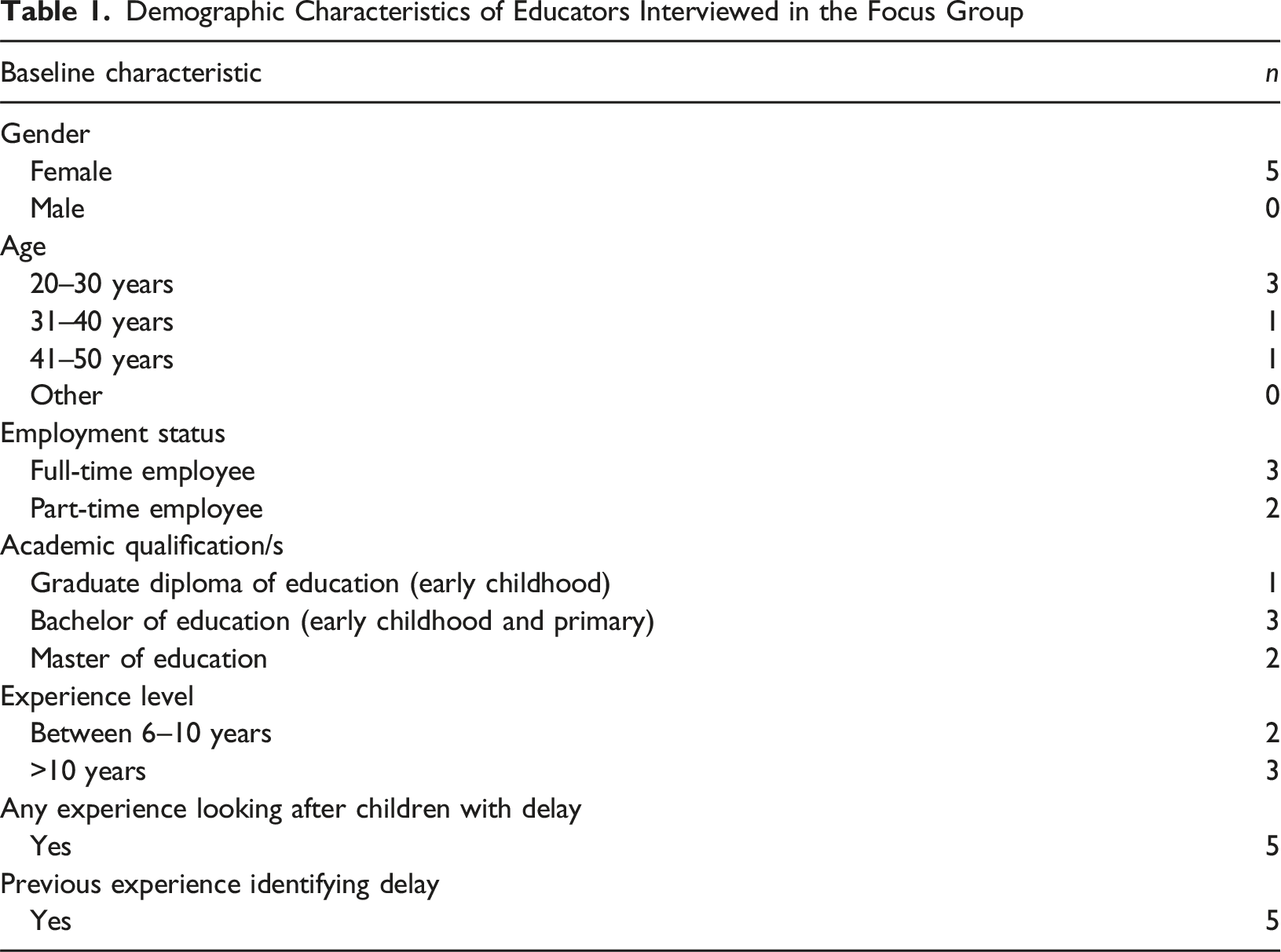

Errors are evident regardless of the magnitude of the averaged scores, with a mean difference of −0.5 in total TMC score (95% CI −1.2 to 0.2) (Figure 2). All points lie within the upper (2.5, 95% CI 1.2 to 3.7) and lower (−3.5, 95% CI −4.7 to −2.2) limits of agreement. There was complete agreement between raters on 5 out of 18 occasions. Educators were more likely to under-score than over-score children. In particular, children who had a mean TMC score of less than 4 were all under-scored by the educator.

Figure 2. Bland-Altman plot for total TMC score level of agreement analysis between educator and physiotherapist (n = 18)

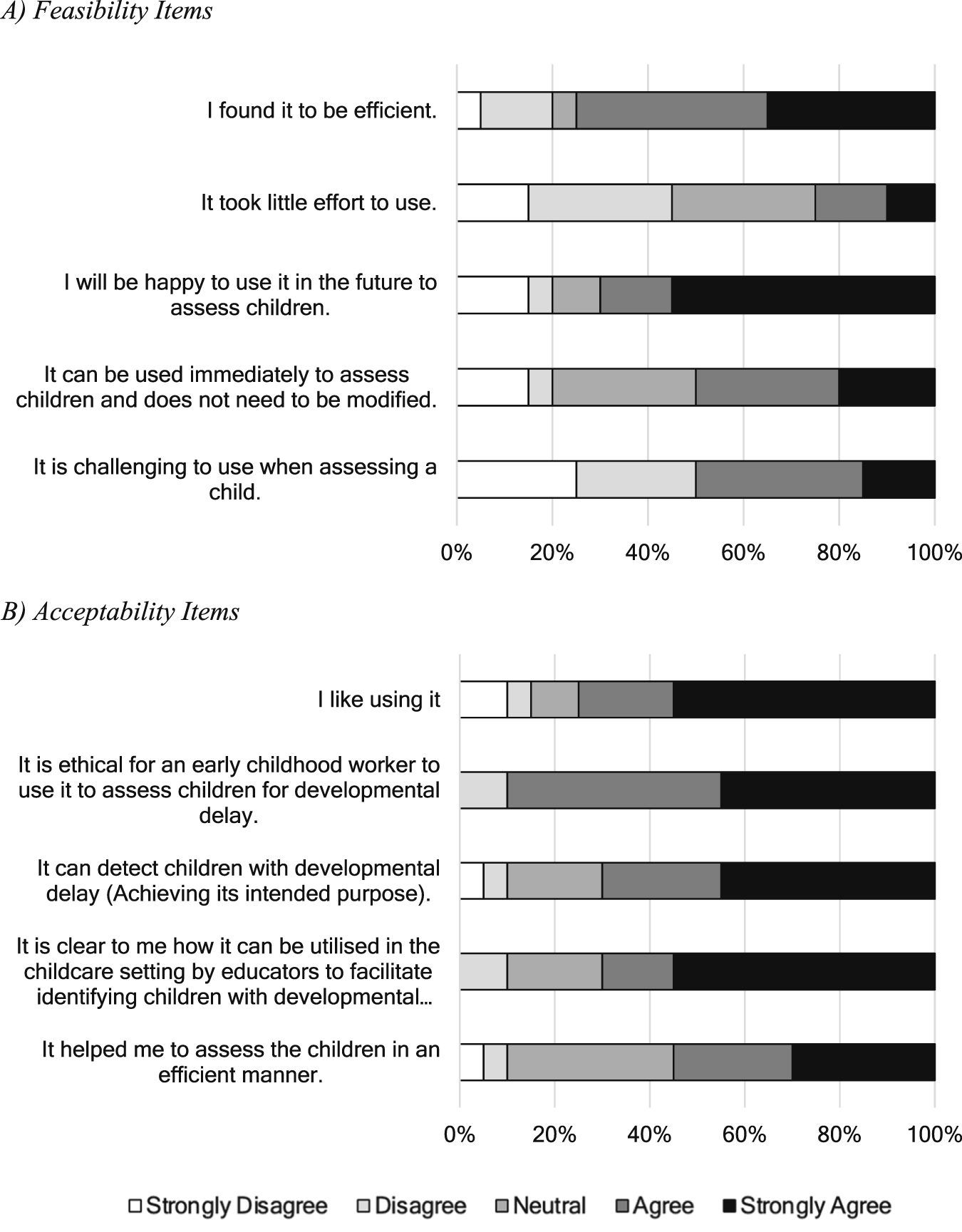

Feasibility and acceptability of the instructional resource were further evaluated in a survey following the assessment (Figure 3). Participants agreed that the instructional resource was feasible (Figure 3, panel A) in enabling educators to perform the TMC to screen for mild motor delay in 4-year-old children in centre-based settings. Namely, educators ‘strongly agreed’ or ‘agreed’ that the instructional resource was efficient to use to assess children (75%), and they would be happy to use the instructional resource in the future to assess children (70%). Educators provided mixed responses when asked whether the instructional resource was challenging, with 50% who ‘strongly agreed’ or ‘agreed’ that it was challenging. Educators required 30–60 minutes to screen despite being provided with the website link and additional reading time prior to the assessment to familiarise themselves with the resource.

Figure 3. Stacked 100% bar chart representing the frequency of responses on a five-point scale for 10 items on the survey

In terms of the acceptability of the instructional resource (Figure 3, panel B) in assessing children using the TMC, 90% of educators either ‘strongly agreed’ or ‘agreed’ that it was ethical for educators to use the instructional resource to assess children, and most thought the instructional resource was likeable (75%), clear (70%), and achieved its intended purpose (70%). Despite this, only 25% of educators ‘strongly agreed’ or ‘agreed’ that the instructional resource took little effort to use, and 50% ‘strongly agreed’ or ‘agreed’ that it was difficult to use.

Suggestions for Improvement of the Instructional Resource

Three key themes were identified in the open-ended survey responses which highlight areas of further improvement in the use of the instructional resource: (1) need for training, (2) refinement of the contents in the instructional resource, and (3) the formatting and graphics of the instructional resource.

(1) Need for Training

The majority of the problems identified by educators were due to a lack of familiarity with the instructional resource, limited knowledge about how to screen in general, and difficulty with scoring after completing an item. Educators raised concerns around whether they were conducting the screening correctly, citing their ‘…lack of knowledge of the tool’ as a barrier, and suggested a ‘…training session on how to…’ perform the assessment items would be beneficial to overcome these problems. In particular, multiple educators noted that ‘head righting’ (item 5) was difficult to perform.

(2) Refinement of the Contents in the Instructional Resource

Educators found that the resource had ‘…lots of information…’ and ‘…chunky text’ which increased the difficulty with screening confidently and consequently led to confusion with how some items should be performed. Educators recommended providing detailed explanations for more challenging items such as head-righting but otherwise being ‘more concise’ in the instructions.

(3) The Formatting and Graphics of the Instructional Resource

Educators noted that the sentence structures and sequence of the TMC items made it difficult to perform the assessment. To overcome these barriers, educators recommended providing instructional videos on the website, video examples to aid scoring, and providing clearer dot-point instructions rather than blocks of text to improve clarity. Several educators preferred less text and more visual components.

Discussion

This study showed that an instructional resource co-designed with early childhood educators to enable screening for mild motor delay in 3-to-4-year-old children is feasible and acceptable. This is the first study to explore whether screening of mild motor delay can be performed by early childhood educators. Moreover, the findings of this study suggest that standardised screening for mild motor delay can be performed in the centre-based service setting.

While the instructional resource was deemed feasible and acceptable overall by educators, there was inconsistency in the scoring of the TMC between educators and the physiotherapist. A degree of disagreement may stem from educators scoring based on their prior knowledge of the child’s usual capabilities, uncertainty with assessment items, or striving for ‘perfect form’ and therefore under-scoring for conservative decision-making. This suggests that the instructional resource may not enable reliable assessment by educators using the TMC. The implications of a difference in scoring are that children who are considered to be ‘at-risk’ may be missed by educators who tend to over-estimate performance. Although less problematic, educators who under-estimate performance on the TMC may refer on for comprehensive testing more often, highlighting the potential for ‘over-assessment’ in this population. Analysis of the impact of differences in scoring on need for further assessment is an area of future research.

A lack of agreement between raters is not uncommon and therefore defining the limits of agreement can be challenging (Dalton et al., 2012). Some assessor variability is expected given the requirements of the TMC that includes observing a child’s motor performance, interpretation, and scoring of performance, especially when performed by non-healthcare professionals. This could potentially be minimised by including a greater level of detailed information in the instructional resource; however, this will increase the resource’s complexity, compromise its ease of use, and affect its overall acceptability. Future efforts to minimise rater disagreement may involve designing a short training session for educators before using the TMC in centre-based service settings, as suggested by educators in this study. Part of this session could include practicing scoring from videos and receiving feedback. This is particularly the case for performing certain assessment items such as head-righting that were difficult to execute in this study. Implementing an informative training program alongside provision of the instructional resource can maximise understanding and consistency. Other co-design studies (Ahern et al., 2022; Dennett et al., 2022; Revenäs et al., 2015; Woods et al., 2019) that have developed physiotherapy programs report a benefit in providing visual feedback or informative training programs prior to formal commencement of the program.

Overall, the results of this study suggest that the instructional resource may be beneficial in centre-based childhood service settings. A majority of feasibility and acceptability items were highly agreed upon by the educators, particularly its future use, efficiency, and ethicality. Conversely, our results suggest that educators found the instructional resource to be challenging and required a considerable amount of effort to use. Another feasibility contributor included time taken to assess. Whilst we anticipated that it would take up to 20 minutes to conduct the assessment, it took educators up to 60 minutes. Reasons for this may include a lack of familiarity with the online and paper versions of the resource and the TMC itself, lack of understanding of assessment items, or unavoidable disruptions at centre-based facilities. Given the time constraints in these settings, concerns were raised regarding the time required to deliver a one-to-one assessment in an environment that relies heavily on adequate educator-to-child staffing ratios for safety (Boyd, 2012; Coleman & Dyment, 2013).

We note some limitations in our study, including the lengthy administration time and the need for one-to-one assessments, which may make the assessment impractical in the centre-based service setting. Furthermore, our study had limited focus group attendance and a relatively homogeneous participant demographic, which may have restricted the emergence of additional themes. A training program may reduce the assessment time, or future developments could include a screening tool that takes less time and can screen larger groups of children. Future efforts to facilitate up-to-date and relevant professional learning sessions for educators on mild developmental delay, screening, and providing support within these centre-based services may increase professional confidence, enhancing scoring ability and inter-rater reliability. Furthermore, the educators willing to participate may be a specific group of highly motivated individuals, and educators may only opt to assess children they have concerns about, who are more likely to have moderate or severe delays.

Conclusion

This study provides initial feasibility and acceptability data for implementation of an instructional resource to screen for mild motor delay in 4-year-old children in the centre-based service setting. The pilot evaluation of the instructional resource demonstrates satisfactory levels of agreement between educators and the physiotherapist, and an adequate level of feasibility and acceptability; however, further efforts are required to improve the reliability and ease-of-use of the TMC through training where educators are given time to grasp more challenging assessment items and are provided formative feedback prior to using the tool.

Footnotes

Acknowledgements

We would like to acknowledge all research participants. We would also like to acknowledge Macquarie University for providing funding support for this research project.

Ethical Considerations

The study was approved by the Macquarie University Human Research Ethics Committee (Reference number 520221150238072).

Consent to Participate

All educators provided written informed consent prior to participation. All parents or guardians provided written informed consent to have their child undergo the motor assessment by an educator from their facility, and all children provided assent to the assessment.

Author Contributions

TH: conception of project, design of methodology, data collection, analysis, writing of the first draft, critical revision of draft; EI: conception of project, design of methodology, data collection, analysis, critical revision of draft, supervision; LB: conception of project, design of methodology, data collection, analysis, critical revision of draft, supervision.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This study received funding for research participants from Macquarie University.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

Data and material are available upon reasonable request to the authors.

Permission to Reproduce Material From Other Sources

No material was reproduced from other sources.

Clinical Trial Registration

This study was not a clinical trial.