Abstract

Leadership competencies in early childhood (EC) education present an intricate policy and practice landscape. This article provides insights into these complexities and shares a novel survey evaluation tool that can be used in services for identifying leadership competencies and gaps for ongoing professional development. The research employed a two-phase approach involving thematic analysis of governing documents and focus group interview data. This resulted in the development of five core domains of leadership competencies represented throughout a survey tool. The subsequent validation of the survey revealed both self-identified roles and demonstrated competencies among educators in Western Australian EC services. The article sheds light on discrepancies between perceived and observed leadership, suggesting the need for tailored professional development and succession planning. Insights into these dynamics contribute to a deeper understanding of effective leadership practices, offering a foundation for ongoing discourse and continual enhancement of leadership competencies in the context of EC education.

Quality leadership is an integral component of Early Childhood Education and Care (ECEC). Leadership is instrumental in shaping services and teams, in providing high quality educational processes and outcomes, and in creating the conditions for educators to be successful in their important work with young children and families (Brooks & Normore, 2017). Critically, the ways in which leadership is understood and enacted in ECEC have significant implications for young children and how they experience pedagogy and practice in long-day-care services (Nicholson et al., 2018).

In Australia, the National Quality Framework (NQF) governs ECEC, incorporating the National Quality Standards (NQS), which set the benchmark and articulate expectations concerning quality service delivery in ECEC. These frameworks include provisions for effective leadership and governance of services, with an emphasis on culture-building, and shaping and sustaining quality environments that support young children’s wellbeing, learning, and development (Togher & Fenech, 2020). It is noted that leadership in ECEC is “complex, multi-faceted, and diverse” (ACECQA, 2024, p. 334), with certain unifying aspects identified. For instance, there is a consistent focus on quality leadership being characterised by collaboration and reflection. Further specific expectations are set out within Quality Area Seven - “Governance and leadership” - which addresses the role of effective leadership in building and promoting a positive culture and a professional learning community (Standard 7.2). Within Standard 7.2, key elements are delineated: continuous improvement, educational leadership, and development of professionals. In terms of educational leadership, the element signals the role of the educational leader and support required for the position (ACECQA, 2024). While specific leader responsibilities are contextual and vary across different service settings, the NQF maintains a strong focus on quality leadership as an essential dimension of providing quality education and care.

Emphasis on quality leadership is also apparent in the revised Australian Early Years Learning Framework (EYLF; Australian Government Department of Education [AGDE], 2022), which includes a new principle: “Collaborative leadership and teamwork.” Here, the EYLF emphasises the importance of leadership for all educators in their daily work and their ongoing ethical practice, with collaboration premised on “shared responsibility and professional accountability” (p. 19) for the care and education of children, engaging professionally, respectfully, and with a disposition toward critical reflection and collegial conversations (AGDE, 2022). Attention is also paid to peer mentoring and the collective contribution of colleagues toward each other’s professional learning and advancement.

While the NQS and EYLF provide some parameters around what may comprise quality leadership, recent research has highlighted limitations around its conceptualisation and potential points of conflict and ambiguity in the information and resources made available (Cross et al., 2022). There is a need to progress further research that explores leadership in ECEC by examining the challenges, and the experiences and expectations of leaders working in services.

It is evident that quality leadership is a pivotal factor in ensuring that all children have safe and sustainable access to excellent pedagogy and practice, which affords them the best possible opportunity to belong, learn, and flourish. This article explores the significance of leadership and its complex nature in ECEC, and provides a validated survey tool that can be used to compare educators’ self-perception of leadership responsibilities with their leadership competencies.

Literature review

Quality leadership by EC Educational Leaders (ECELs) is crucial for improving organisational culture and supporting professional learning for educators (ACECQA, 2019). Despite the growth in literature available, a disconnect with National Quality Standards causes confusion as to what leadership looks like in an ECEC service (Klevering & McNae, 2019). In the context of ECEC services, providing education and care to children aged birth to five years, a critical distinction emerges between organisational structure and organisational culture. Traditional leadership models in ECEC services, reinforced by the National Quality Standards, often feature hierarchical structures with designated positions and authority (Bøe & Hognestad, 2015; Fleet et al., 2015; Heikka & Hujala, 2013). Recent trends, however, emphasise dynamic, democratic and collaborative education environments (Brooks & Kensler, 2011; Brooks et al., 2007), with distributed leadership approaches (Bøe & Hognestad, 2015; Clarkin-Phillips, 2011; Krieg et al., 2014; Spillane, 2012). This highlights the need to explore educators’ perceptions of leadership in their work and service.

Identifying as a leader is crucial for developing leadership skills (Cruz-González et al., 2021; Miscenko et al., 2017; Yeager & Callahan, 2016). Those who see themselves as leaders are more likely to be empowered and engage in development opportunities and strengthen their own leadership foundations (Miscenko et al., 2017; Yeager & Callahan, 2016). Cooper (2020) studied this in a New Zealand ECEC Service, finding that teachers who embraced a leader identity aligned their teacher and leader roles confidently. Alternatively, teachers who did not identify as leaders felt uncertain about pursuing leadership identities and roles, often due to discomfort with perceived power dynamics. This research highlights the importance of leader identity in facilitating dynamic distribution of leadership (Bøe & Hognestad, 2017; Male & Palaiologou, 2015; Sims et al., 2017). Regardless of identity, fundamental leadership practices require a certain level of competency (Normore & Brooks, 2011).

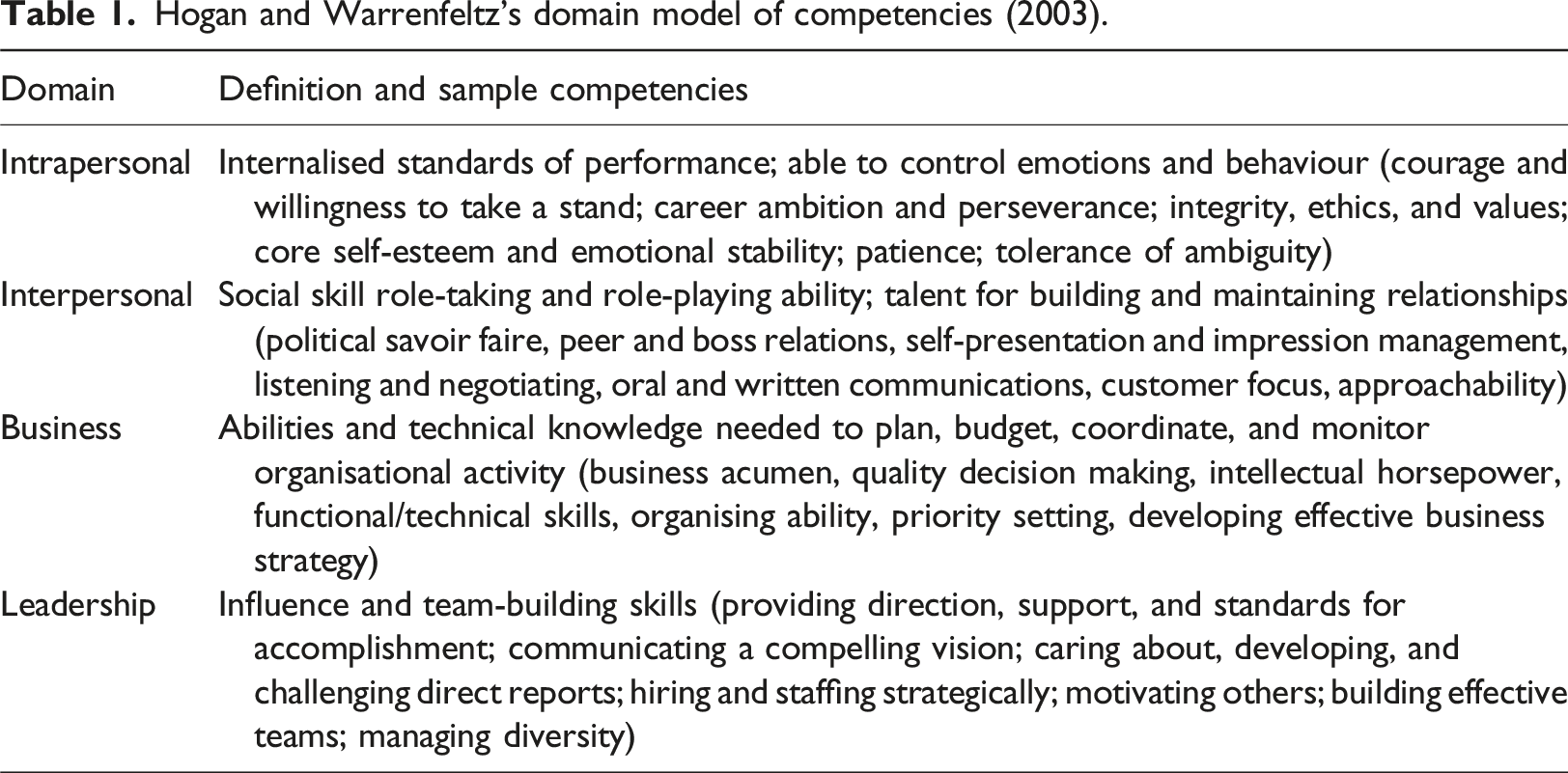

Hogan and Warrenfeltz’s domain model of competencies (2003).

The model comprises four types of competencies: intrapersonal, interpersonal, business, and leadership. Each of these is related to an attendant set of knowledge and skills (Leithwood, 2007) and aligns with the expectations outlined within the National Quality Standards’ Quality Area 7.2: Leadership, whereby individuals are expected to demonstrate continuous improvement, educational leadership, and development of professionals. These guiding principles mirror the competency domains outlined by Hogan and Warrenfeltz (2003) as leaders in ECEC services develop and promote intrapersonal, interpersonal, business, and leadership skills. Hogan and Kaiser (2005) explored the leadership competencies model, emphasising three key dynamics. First, the model is developmental, with skills evolving over a lifespan. Second, it is hierarchically organised by trainability, with intrapersonal skills being the most difficult to train. Finally, it is comprehensive, incorporating all existing leadership competency models available at the time of development (Hogan & Kaiser, 2005). However, it overlooks the complexities of ECEC leadership environments.

Leaders in the Western Australian ECEC sector face operational complexities due to internal and external factors; critically, these complexities are inextricably linked to the privatisation of the sector. Bronfenbrenner’s ecological perspective (1981) and Smith et al.’s (2017) work on instructional teacher leadership provide frameworks for understanding these challenges. However, while these models offer foundational insights, they fail to fully capture the complexities specific to the Western Australian EC environment. Our research adapts the leadership competencies model, integrating Hogan and Warrenfeltz’s domain model of competencies (2003) with the Western Australian ECEC sector’s ecology. Acknowledging the Australian Early Years Learning Framework (EYLF) and the National Quality Framework (NQF), we developed and validated a multidimensional survey tool. This tool measures leadership competencies in Long Day Care services, addressing the specific complexities of the Western Australian ECEC environment.

Methods

This research adopted an ontological perspective that recognised reality as socially constructed, acknowledging the complex nature of the ECEC sector and the various factors that influence quality leadership practices (Adams, 2006). It employed interpretivist (Creswell, 2013; Rehman & Alharthi, 2016) and critical theories of education (Apple et al., 2009; Kellner, 1989; Young, 1990) to critique sector governance and relational dynamics.

This article elaborates on the development and validation of a multidimensional survey tool capable of measuring an individual’s leadership competencies. The development and validation of the survey followed Hinkin’s (1998) six steps to survey development and validation. Exploratory, confirmatory, and discriminant factor analysis assessed the tool’s validity (Farrell & Rudd, 2009). Data included governing documents and focus group interviews. The methods are detailed in two phases: Phase 1: Item Generation, describing data collection and item creation, and Phase 2: Survey Validation, explaining the collection of survey data for tool validation.

Ethics approval was provided by Curtin University’s Human Research Ethics Committee prior to commencement of research activities (HRE2021-0705). All participating services provided informed consent from a service representative and participating staff members. To preserve participant and service provider anonymity, no identifying information is included in this article.

Phase 1

Participants

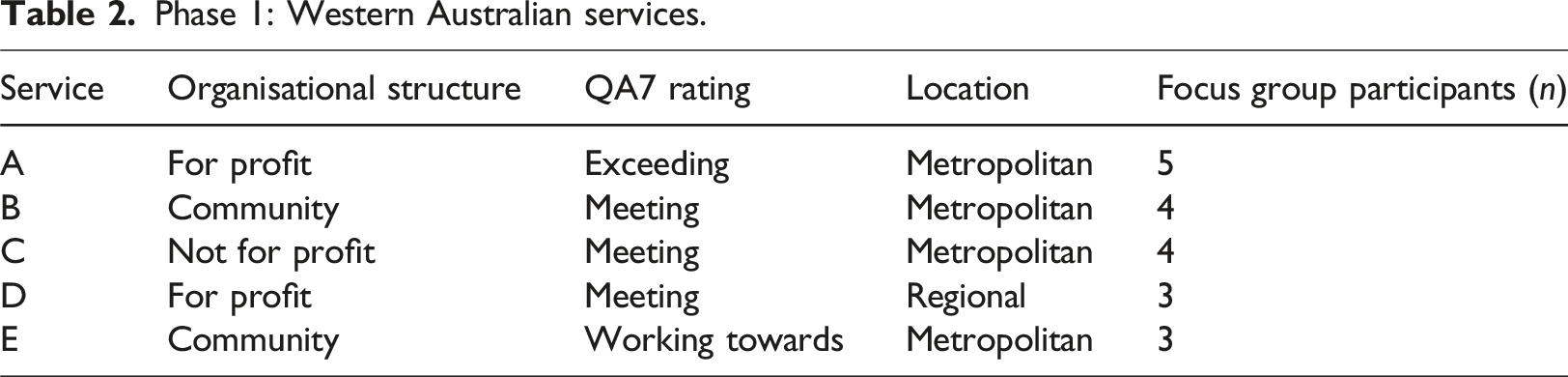

Phase 1: Western Australian services.

Data collection

The initial research phase involved generating survey items through data collection (Burton & Mazerolle, 2011). Data sources included governing documents and focus group interviews. We examined leadership documents, policies, procedures, organisational structures, and position descriptions from five participating services, obtained via email and desktop audit. Additionally, five focus group interviews explored leadership policies, organisational structures, and their impact, offering educators’ perspectives on quality leadership and ECEL roles.

Data analysis

Governing documents and focus group interviews underwent thematic analysis through inductive and deductive coding (Braun & Clarke, 2012), helping identify latent codes which reflected a more in-depth and implicit level of meaning (Braun et al., 2019). Inductive coding led to the development of coding categories, followed by deductive recoding using the initial inductive codes (Braun & Clarke, 2012; Braun et al., 2019). These codes assisted in identifying themes. These themes were then used for cross-case analysis, employing NVivo software’s coding matrix. This data informed the generation of survey questions.

Results

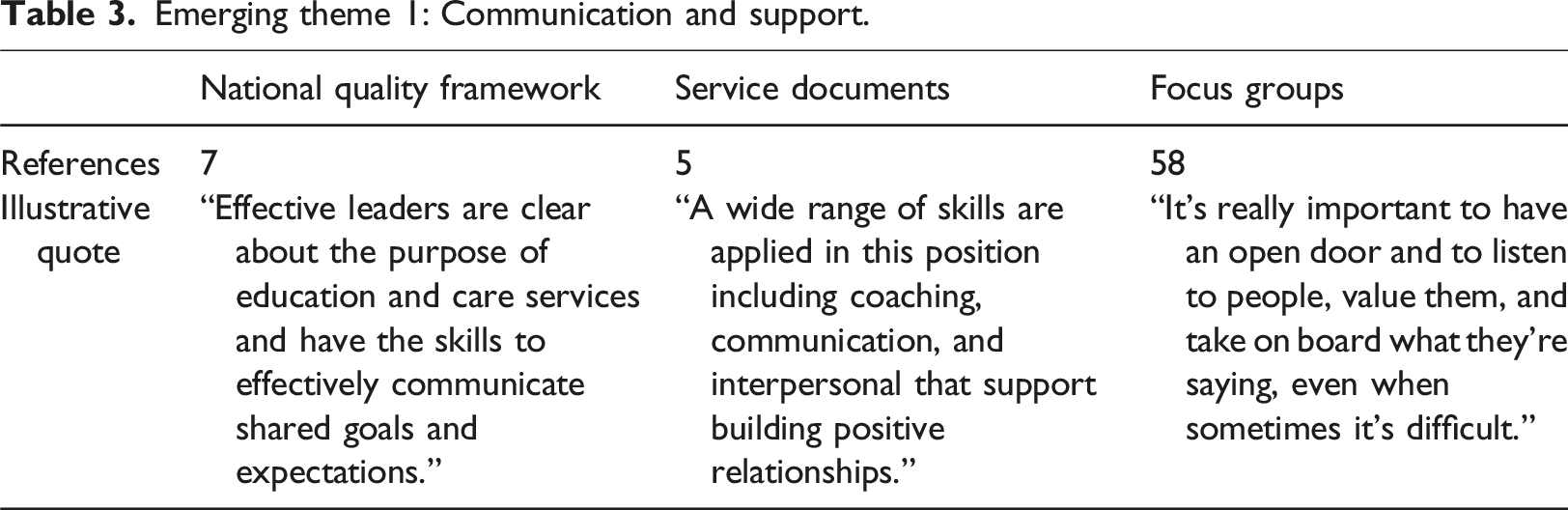

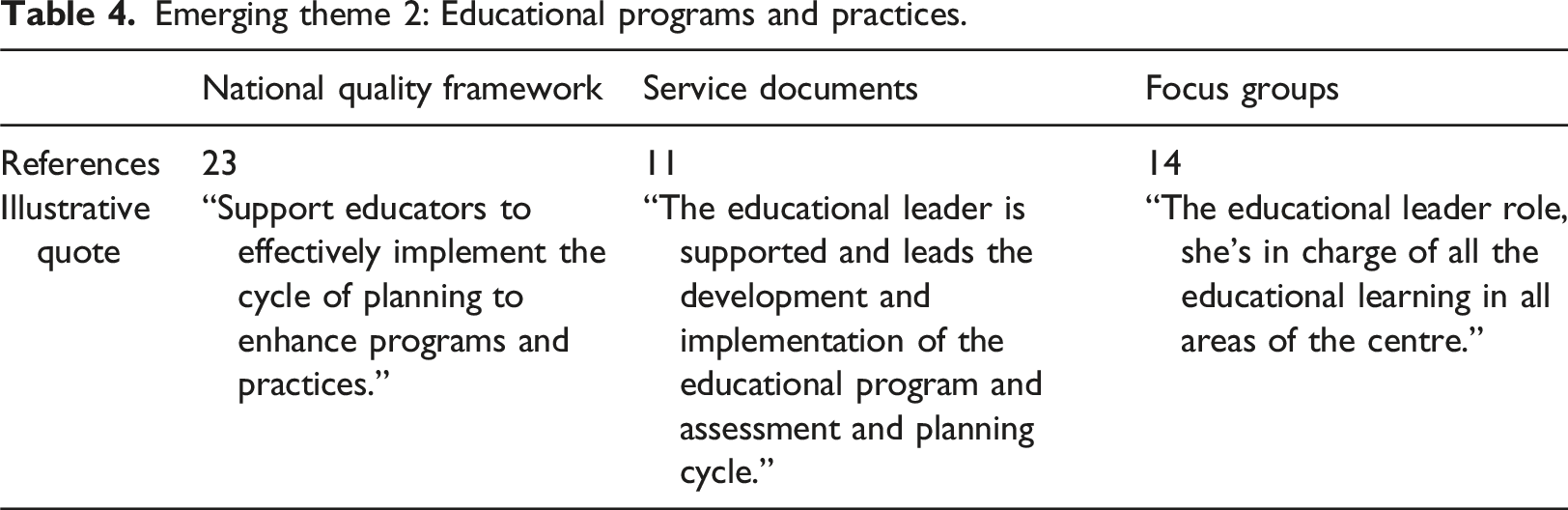

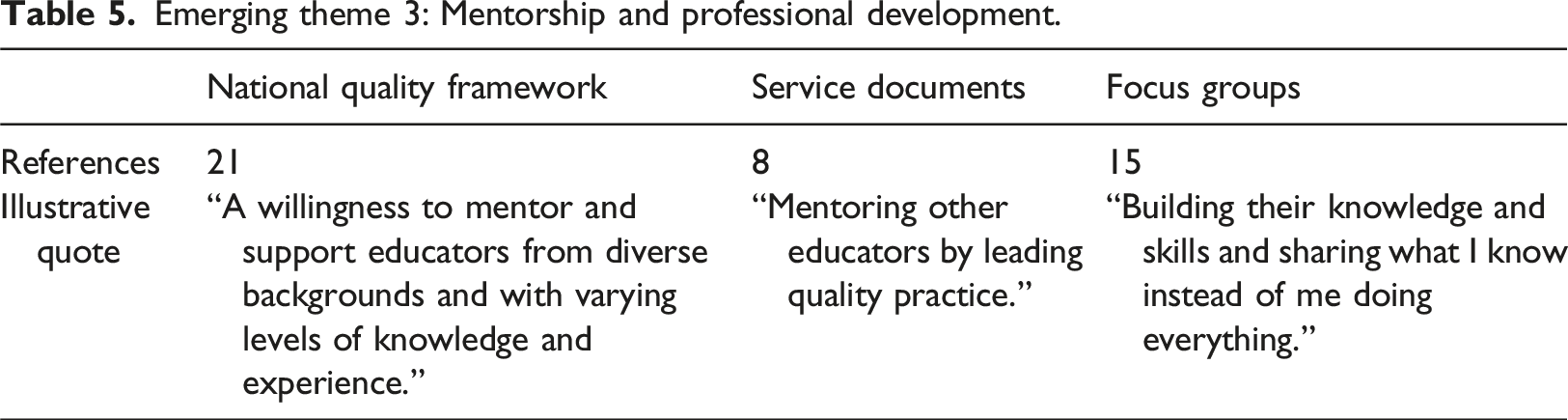

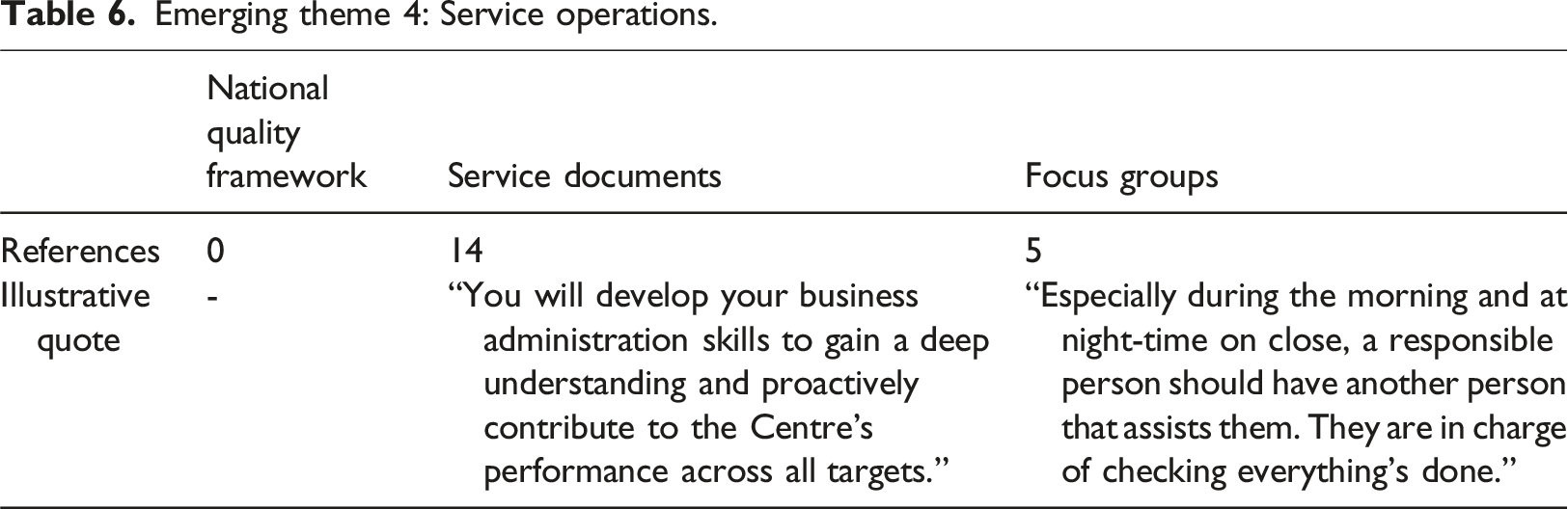

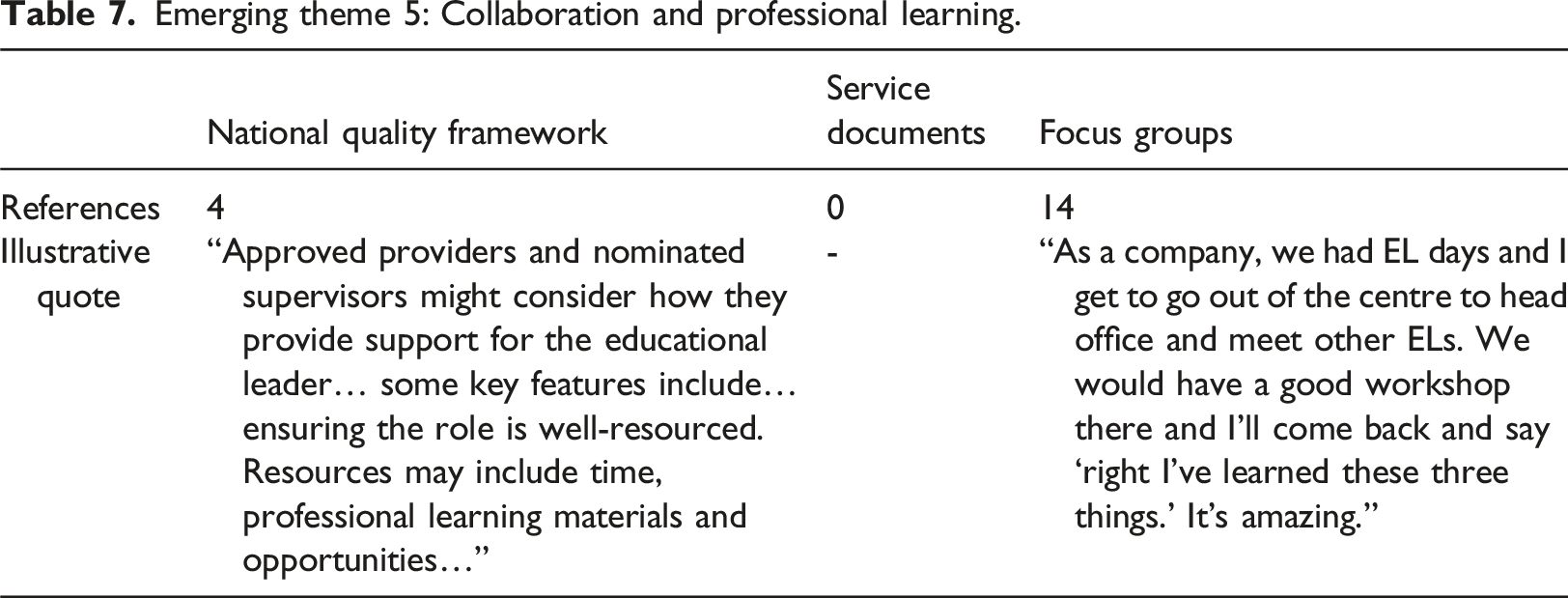

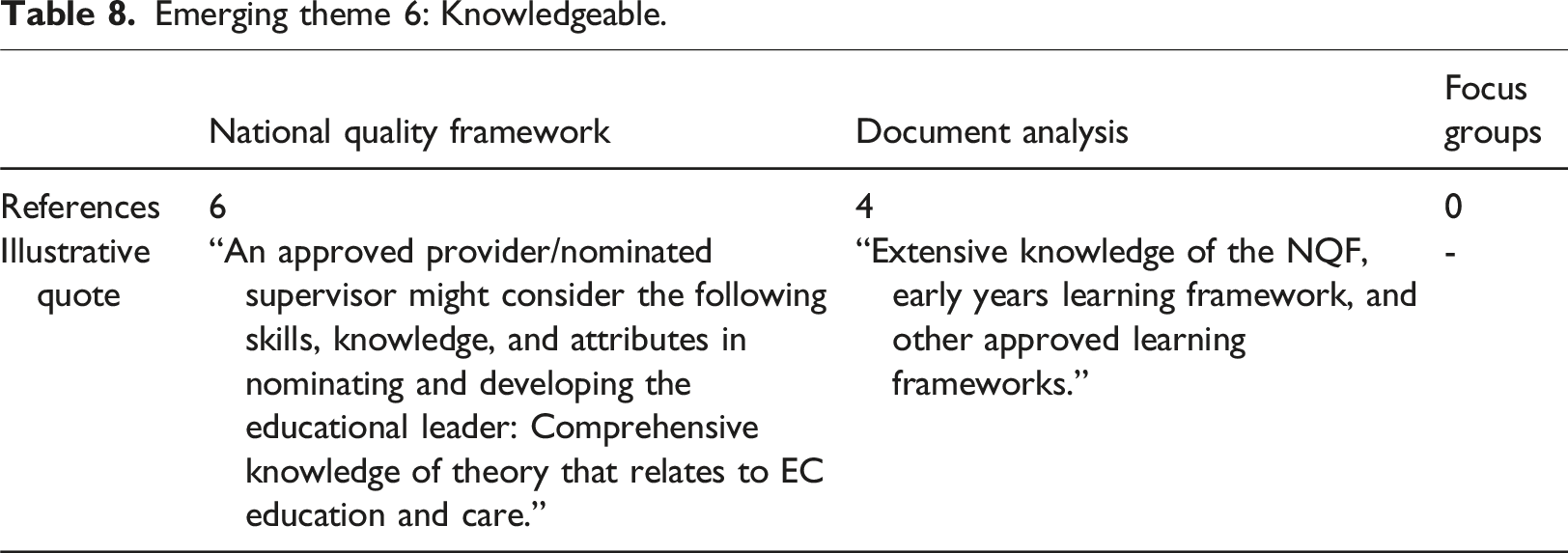

Data from governing documents and focus groups, collected in the initial research phase, informed item generation and was thematically analysed using both inductive and deductive approaches (Braun & Clarke, 2012). Six themes were identified related to the competencies of a quality leader in an ECEC service: communication and support, educational programs, mentorship, service operations, collaboration, and knowledge. These themes were substantiated with descriptors and illustrative quotes.

Emerging theme 1: Communication and support.

Emerging theme 2: Educational programs and practices.

Emerging theme 3: Mentorship and professional development.

Emerging theme 4: Service operations.

Emerging theme 5: Collaboration and professional learning.

Emerging theme 6: Knowledgeable.

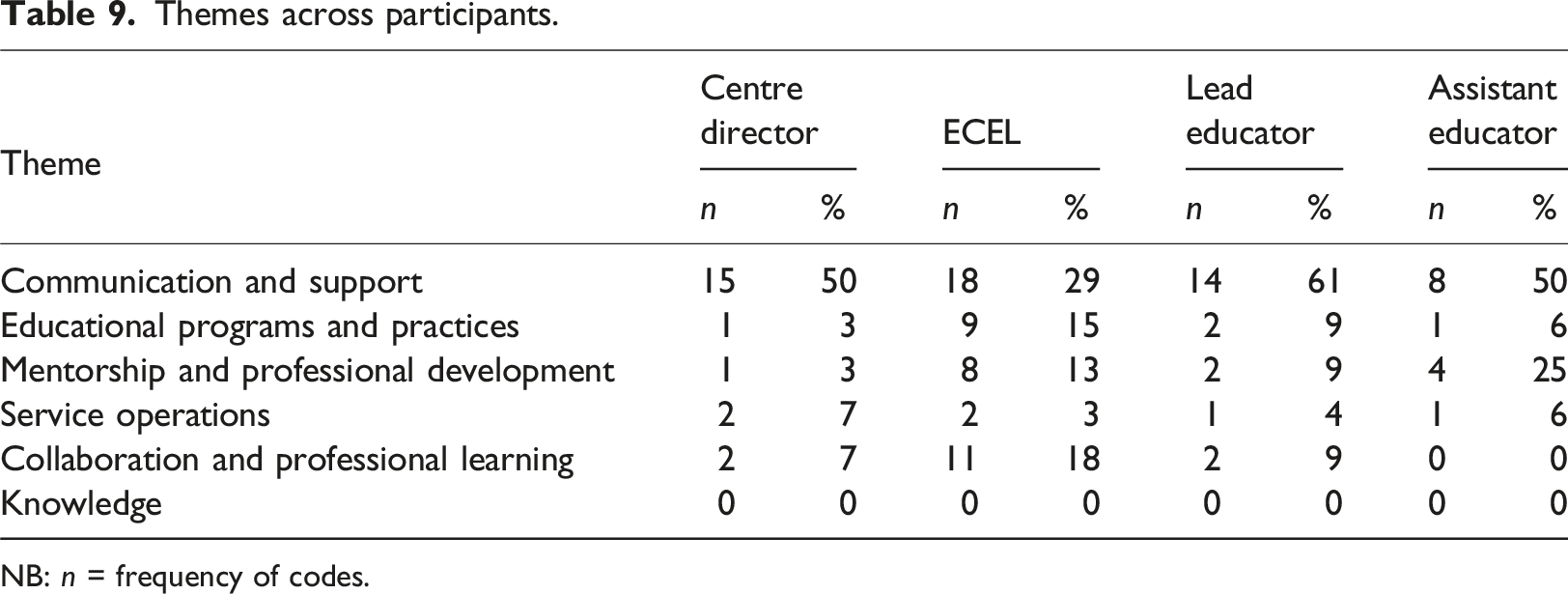

Themes across participants.

NB: n = frequency of codes.

Table 9 presents the themes raised by focus group participants, broken down by position. Because the positions were represented by unequal numbers of participants, percentages were calculated to allow accurate comparisons. These findings highlight the diverse perspectives on quality leadership characteristics. Notably, the analysis showed that a leader’s communication and support was the most frequently discussed characteristic of a quality leader across all participant positions. These emerging themes underpinned the generation of items included within the survey tool, as elaborated in the Discussion.
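The normalisation described above can be illustrated with a minimal sketch. It assumes that each percentage is a theme's share of all codes recorded for a given position; the article does not state the denominator, so this is one plausible reading. The function name `theme_percentages` and all frequencies below are hypothetical and are not the study's data.

```python
def theme_percentages(code_counts):
    """Convert raw code frequencies per theme into percentages of one position's total codes."""
    total = sum(code_counts.values())
    if total == 0:
        raise ValueError("no codes recorded for this position")
    return {theme: 100.0 * n / total for theme, n in code_counts.items()}


# Hypothetical code frequencies (n = frequency of codes); NOT taken from Table 9.
directors = {"communication and support": 12, "service operations": 6, "knowledge": 2}
assistants = {"communication and support": 3, "service operations": 1, "knowledge": 1}

for position, counts in (("Centre Director", directors), ("Assistant Educator", assistants)):
    shares = theme_percentages(counts)
    print(position, {theme: round(pct, 1) for theme, pct in shares.items()})
```

In this invented example the raw counts differ fourfold between the two positions, yet communication and support accounts for the same share of codes in both, which is the kind of comparison that percentages make possible.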

Phase 2

Participants

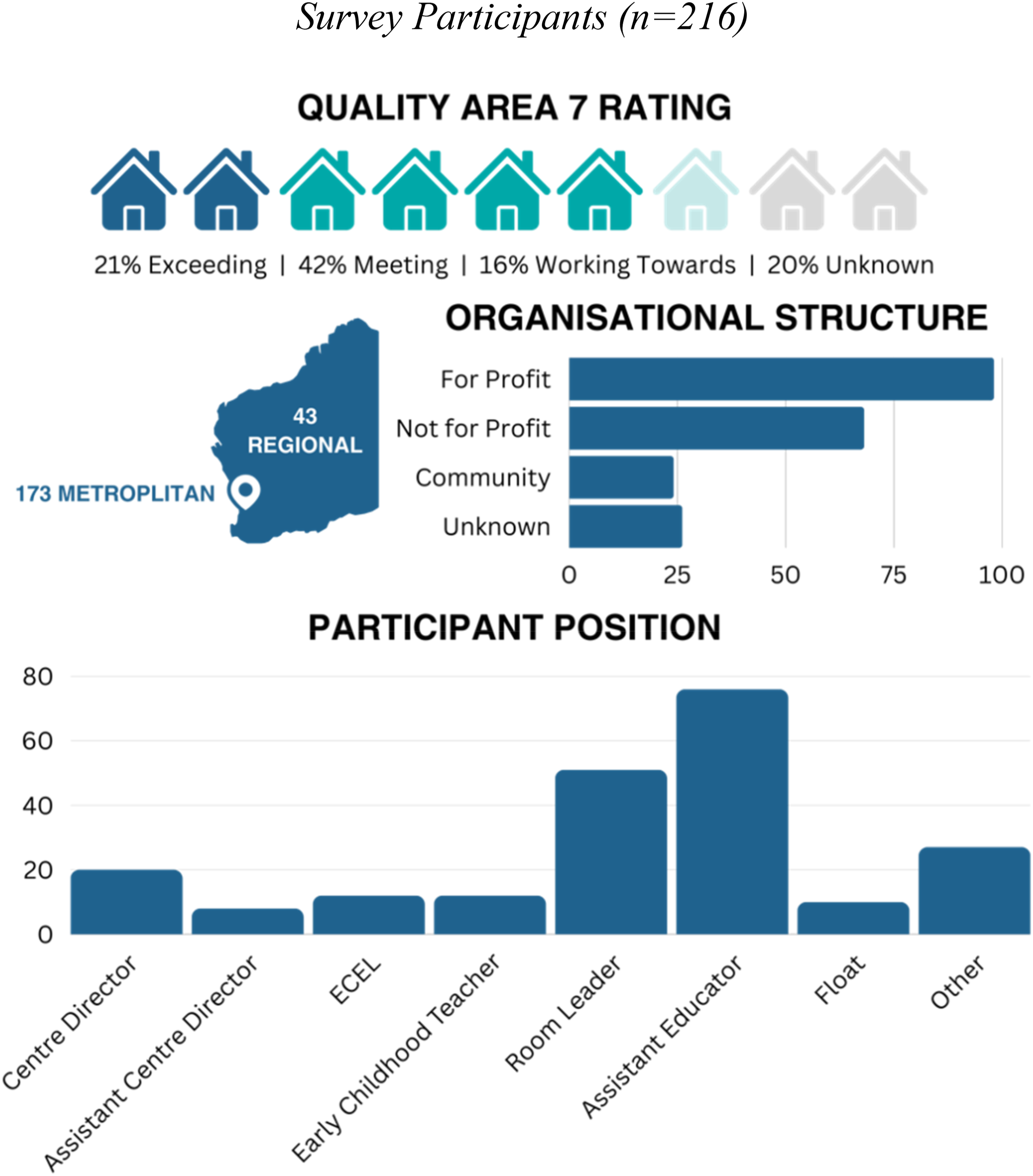

Survey participants were selected based on meeting criteria of working in long-day-care services assessed and rated after 2018 in Western Australia. Hinkin (1998) advised a minimum of 200 participants for robust analysis. Recruitment occurred in small groups to encourage relationships and support during data collection. Participant details are documented in Figure 1.

Survey Participants (n = 216).

NB: *ECEL = early childhood educational leader.

Participants self-nominated their Quality Area 7 ratings, with 16 (20%) choosing Excellent and one opting for Significant Improvement Required. However, no Western Australian ECEC services had these ratings per National Service Registers at the time. Therefore, these responses are treated as ‘unknown’.

Data collection

In the second phase, we validated the survey tool through data collection, starting with two pilot surveys. Insights from these pilots refined the final survey, which was then distributed to eligible ECEC services and educators. The researcher visited many services in person, while others were contacted via email, virtual meetings, or provided with an anonymous Qualtrics link for independent completion.

Data analysis

The survey data underwent thorough validation, encompassing exploratory factor analysis, and tests of convergent and discriminant validity (Hinkin, 1998). Variables with correlations below 0.4 were excluded to reduce potential errors (Churchill, 1979; Kim & Mueller, 1978). Internal consistency was assessed using Cronbach’s Alpha, with a coefficient of 0.7 indicating strong item covariance and satisfactory domain representation (Nunnally, 1976; Price & Mueller, 1986).

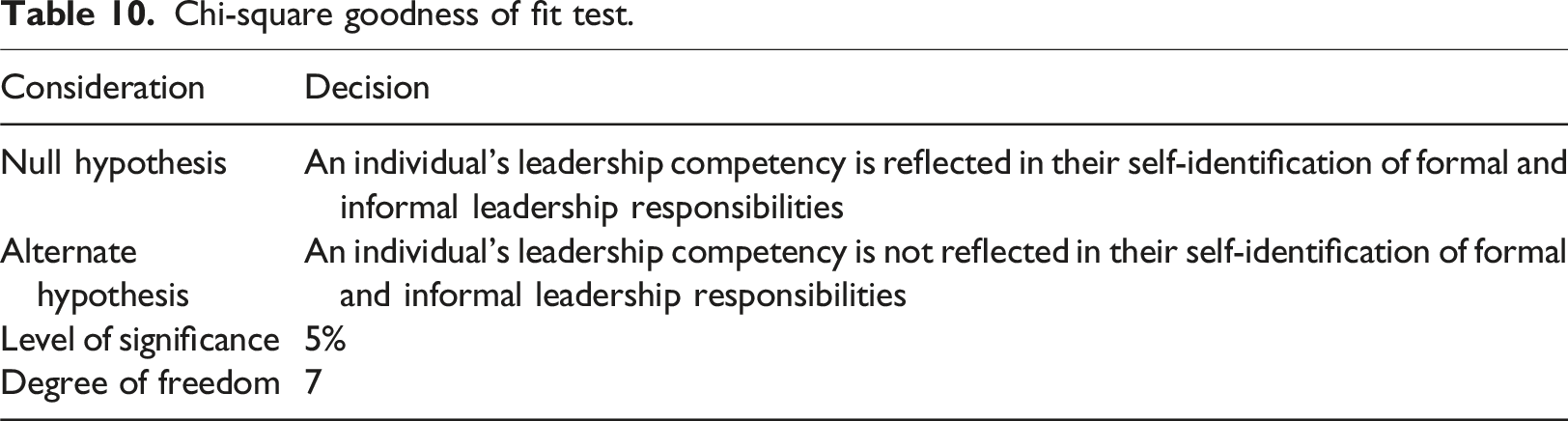

Chi-square goodness of fit test.

In the absence of similar construct measures, principal component analysis was employed for discriminant validation (Hinkin, 1998). This technique analysed inter-correlated dependent variables to extract key information and represent it as orthogonal principal components (Abdi & William, 2010).

Results

Data collected from 216 responses supported the validation of the survey tool, across multiple statistical approaches, beginning with exploratory factor analysis (EFA). To begin the EFA process, the survey items were analysed to understand how they related to each other. If the items did not demonstrate sufficient similarity (an interitem correlation lower than 0.4), they were removed (Churchill, 1979; Kim & Mueller, 1978). The survey began with five main areas (constructs) being measured, each containing ten items (questions). Interitem correlation calculations identified items for removal or re-distribution to more suitable construct areas. After this process, there were 9 items in the intrapersonal construct, 10 in interpersonal, 11 in business, 9 in society, and 3 in governance.
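The screening step can be sketched as follows. This is an illustrative sketch only: it assumes the criterion is each item's mean Pearson correlation with the other items in its construct, which is one common reading of "inter-item correlation" and is not confirmed by the article. The item names, the responses, and the function names `pearson` and `screen_items` are hypothetical.

```python
from math import sqrt


def pearson(x, y):
    """Pearson correlation between two equally long lists of scores."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    ss_x = sum((a - mean_x) ** 2 for a in x)
    ss_y = sum((b - mean_y) ** 2 for b in y)
    return cov / sqrt(ss_x * ss_y)


def screen_items(responses, threshold=0.4):
    """Split items into (kept, flagged) by mean correlation with the other items.

    responses maps an item name to its scores, aligned by respondent.
    """
    names = list(responses)
    kept, flagged = [], []
    for name in names:
        others = [pearson(responses[name], responses[o]) for o in names if o != name]
        mean_r = sum(others) / len(others)
        (kept if mean_r >= threshold else flagged).append(name)
    return kept, flagged


# Hypothetical 5-point Likert responses from six respondents; NOT the study's data.
construct = {
    "item_a": [1, 2, 3, 4, 5, 4],
    "item_b": [2, 2, 3, 5, 5, 4],
    "item_c": [1, 3, 3, 4, 4, 5],
    "item_d": [3, 4, 2, 4, 3, 3],  # barely related to the other three items
}

kept, flagged = screen_items(construct)
print("kept:", kept)
print("flagged for removal or re-distribution:", flagged)
```

A flagged item would then be either removed or tested against another construct, mirroring the removal or re-distribution described above.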

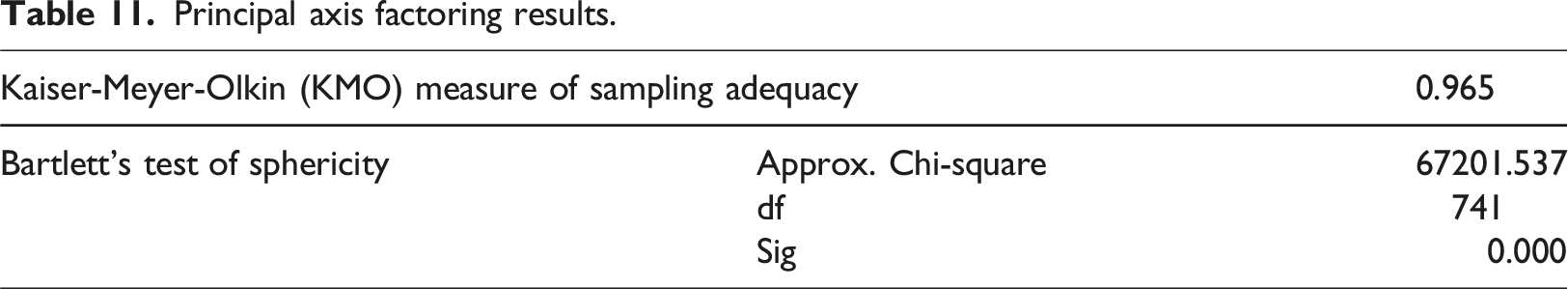

Principal axis factoring results.

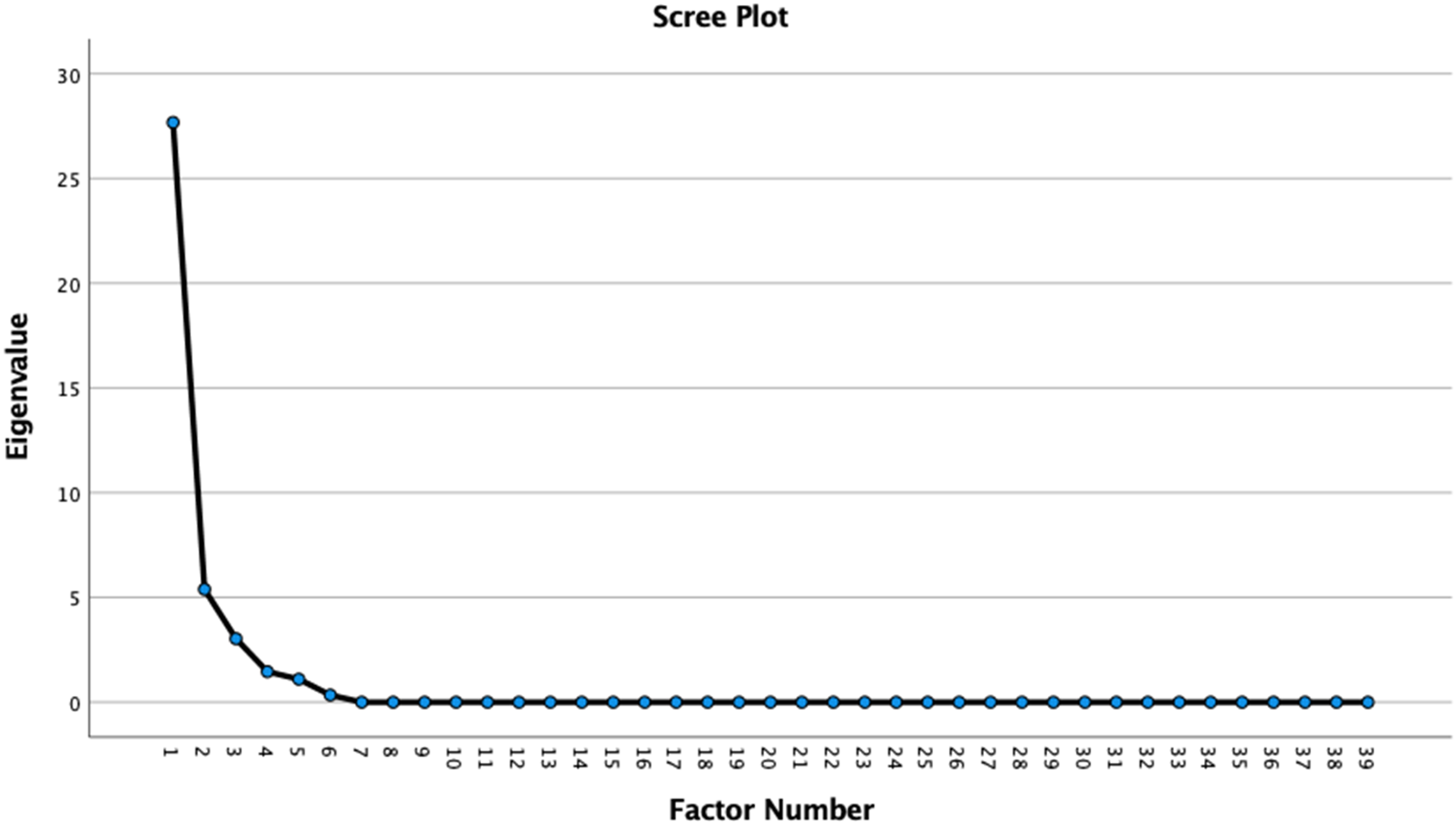

Table 11 displays results from KMO and Bartlett’s tests. KMO yielded a robust result surpassing the 0.6 threshold, while Bartlett’s Test significance was <0.001, indicating significant item correlation. All items exhibited anti-image correlation and communality exceeding 0.5. Exploratory factor analysis guided by quantitative results and theory (Hinkin, 1998) determined factors retained, without a specified number. The KMO and scree testing, per Hinkin (1998), confirmed researcher expectations. KMO testing identified five components with eigenvalues over 1, a finding supported by scree testing (Figure 2). Relevant literature and theory were also scrutinised to justify the retained factors.

Scree test results.

The results of scree testing corroborated KMO test results showing that the survey contained five main areas (constructs), in alignment with what was expected based on preliminary analysis of theory. As such, all leadership competency domains were retained within the survey tool.

The exploratory factor analysis assessed internal consistency, crucial for survey tool validation (Kerlinger, 1986). To ensure that the survey was reliable, Cronbach’s alpha was used (Price & Mueller, 1986). A high alpha, recommended by Nunnally (1976) as 0.7, signifies robust item covariance, ensuring adequate domain representation. This threshold serves as the minimum for new instruments, considering sensitivity to item count (Cortina, 1993). The 39 items in the tool achieved a Cronbach’s Alpha of 0.982, surpassing the 0.7 minimum for sufficient domain representation in a new survey tool. As such, this testing showed a high level of consistency across the items and confirmed that the items covered the five areas (constructs) well. This marked the completion of EFA testing, paving the way for the initiation of Confirmatory Factor Analysis (CFA).
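For readers unfamiliar with the statistic, Cronbach's alpha for k items is k / (k - 1) multiplied by one minus the ratio of the summed item variances to the variance of respondents' total scores. The sketch below applies that standard formula to a small invented data set; the responses and the function name `cronbach_alpha` are hypothetical, and the article does not report which software produced its coefficient.

```python
from statistics import variance


def cronbach_alpha(items):
    """Cronbach's alpha for a list of item score lists, aligned by respondent."""
    k = len(items)
    if k < 2:
        raise ValueError("need at least two items")
    item_variance_sum = sum(variance(scores) for scores in items)
    totals = [sum(row) for row in zip(*items)]  # each respondent's total score
    return (k / (k - 1)) * (1 - item_variance_sum / variance(totals))


# Hypothetical 5-point Likert responses from six respondents; NOT the study's data.
responses = [
    [1, 2, 3, 4, 5, 4],
    [2, 2, 3, 5, 5, 4],
    [1, 3, 3, 4, 4, 5],
]

alpha = cronbach_alpha(responses)
print(f"alpha = {alpha:.3f}; meets 0.7 minimum: {alpha >= 0.7}")
```

Because alpha rises with the number of items as well as with their covariance, a coefficient from a long instrument should be read alongside its item count, which is the sensitivity Cortina (1993) describes.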

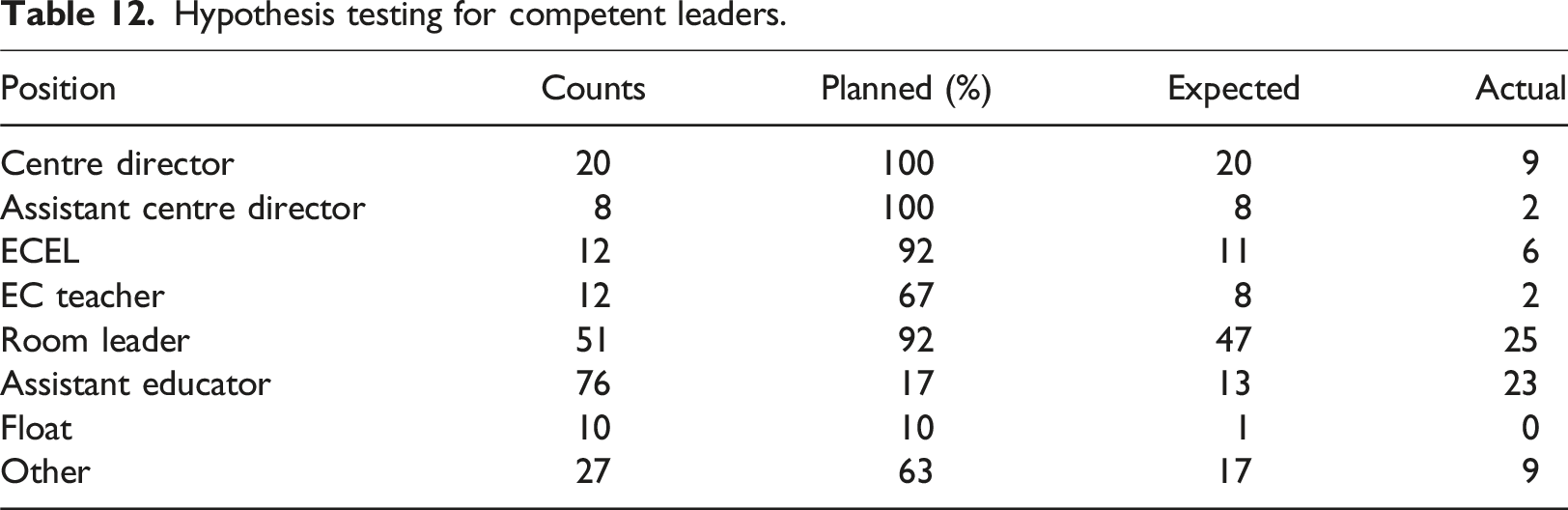

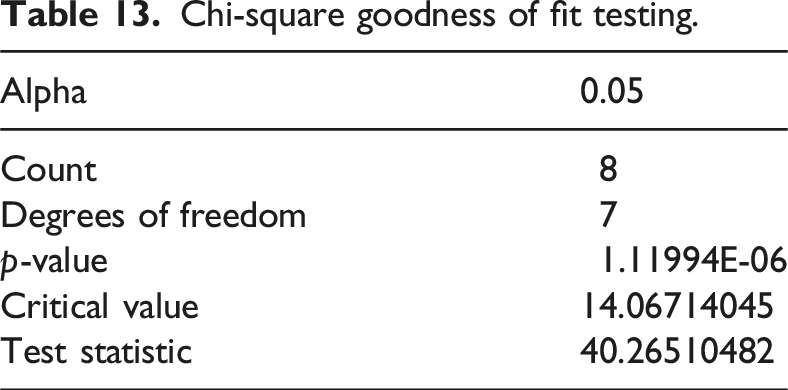

Confirmatory factor analysis, the fourth step in survey development, ensures that the survey tool measures what it is supposed to (validity). Previous survey development steps enhance validation and reliability, but Confirmatory Factor Analysis (CFA) is crucial for affirming their thoroughness. The chi-square statistic, assessing goodness of fit, validates if the theoretical distribution aligns with sample data (Cochran, 1952; Lancaster & Seneta, 2005). This project applied the chi-square approach to test whether educators not identifying as leaders exhibit leadership competencies.

Hypothesis testing for competent leaders.

Chi-square goodness of fit testing.

The chi-square goodness-of-fit test established a rejection threshold of 14.07. With a test statistic of 40.27, the null hypothesis was rejected, suggesting the plausibility of the alternate hypothesis. Through these stages of testing, it was confirmed that the survey identifies leadership competencies, even among individuals who do not see themselves as leaders. The final stage of validation involved discriminant validation; a check used to ensure the survey accurately measures different components of leadership. This research employed a principal component analysis approach to discriminant validation.
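The decision rule can be sketched in a few lines. The statistic is the sum over categories of (observed - expected)² / expected, and the null hypothesis is rejected when the statistic exceeds the critical value. The article does not report the significance level or degrees of freedom, although 14.07 matches the tabulated chi-square critical value for α = 0.05 with 7 degrees of freedom; that pairing is an inference, not a reported detail. The category counts below and the function names are hypothetical.

```python
def chi_square_statistic(observed, expected):
    """Pearson chi-square goodness-of-fit statistic for matched category counts."""
    if len(observed) != len(expected):
        raise ValueError("observed and expected must have the same length")
    return sum((o - e) ** 2 / e for o, e in zip(observed, expected))


def reject_null(statistic, critical_value):
    """Reject the null hypothesis when the statistic exceeds the critical value."""
    return statistic > critical_value


CRITICAL_VALUE = 14.07  # rejection threshold reported in the article

# Hypothetical counts across eight categories (7 degrees of freedom); NOT the study's data.
observed = [18, 22, 25, 15, 30, 10, 20, 20]
expected = [20] * 8

hypothetical_stat = chi_square_statistic(observed, expected)
print(round(hypothetical_stat, 2), reject_null(hypothetical_stat, CRITICAL_VALUE))

# The article's reported test statistic, checked against the same threshold.
print(40.27, reject_null(40.27, CRITICAL_VALUE))
```

The invented counts fall below the threshold, so the null hypothesis would be retained in that case, whereas the reported statistic of 40.27 exceeds it and leads to rejection.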

Discriminant validity in the competence domains was rigorously assessed using a principal components analysis. The resulting five-factor structure, supported by Nunnally’s (1976) criterion, demonstrated clear distinctions. Notably, one factor captured all Society items (except one), while Intrapersonal items revealed nuanced facets across three factors. Interpersonal items loaded on two factors, and Business and Governance items on two and one factor, respectively. Therefore, discriminant validity analysis confirmed that the survey contains five distinct competence domains (constructs) and measures each one individually.

Discussion

Phase 1: Item generation

The first phase of this research involved an exploration of the competencies required for quality leadership in ECEC services. Leveraging data from governing documents and focus groups, we conducted a thematic analysis, drawing on both inductive and deductive approaches (Braun & Clarke, 2012). The emerging themes informed the development of a survey tool designed to measure leadership competencies compared to leadership position identification. The findings of the thematic analysis identified six emerging themes: communication and support, educational programs and practices, mentorship and professional development, service operations, collaboration and professional learning, and knowledge.

Communication and support were identified as central competencies through thematic analysis. The focus group data highlighted a distinct emphasis on the expectation that leaders should be communicative and supportive (Brooks & Normore, 2017). By contrast, governing documents gave these competencies relatively little attention. The discrepancy between identified competencies and emphasis on these skills underlines the importance of interrogating existing ECEC leadership literature to capture the diverse nature of leadership competency across the sector landscape (Fenech, Giugni, & Bown, 2012). Emergent themes highlighted the diverse competencies expected of quality leaders in ECEC services and guided the development of survey items. Insights from this phase informed a framework for effective leadership in ECEC (Sims et al., 2017), adapting Hogan and Warrenfeltz’s Domain Model of Competencies (2003) to include five competency domains: Intrapersonal, Interpersonal, Business, Governance, and Society. Prior to validation, the survey comprised 63 items, including 10 items per domain, demographic questions, and an open-ended question.

Phase 2: Survey Validation

The second phase of the research focused on validating the survey tool derived from the thematic analysis. With a dataset comprising 216 responses, the validation process involved a series of statistical analyses, commencing with EFA. The EFA began by examining interitem correlations, leading to the refinement of the survey tool. Items with correlations below 0.4 were excluded, resulting in leadership competency domains (constructs) with varying item counts: 9 in intrapersonal, 10 in interpersonal, 11 in business, 9 in society, and 3 in governance.

To ensure the data’s suitability for factor analysis, Bartlett’s Test of Sphericity and the Kaiser-Meyer-Olkin (KMO) measure were applied. The KMO value surpassed the 0.6 threshold, and Bartlett’s test results were significant, confirming the data’s appropriateness for EFA. The analysis confirmed five components, aligning with the hypothesised constructs based on the adaptation of Hogan and Warrenfeltz’s (2003) Domain Model of Competencies and the thematic analysis. The high Cronbach’s alpha of 0.982 indicated strong internal consistency within the survey tool. This demonstrated the robustness of the items and affirmed the reliability of the survey tool.

Following EFA, CFA was used to validate the theoretical constructs. The chi-square goodness-of-fit test assessed whether the theoretical distribution aligned with the sample data. The rejection of the null hypothesis indicated that an individual’s leadership competency was not reflected in their self-identification of formal and informal leadership responsibilities, supporting the validity of the survey. Significantly, this process highlighted a noteworthy observation: all participating Centre Directors and Assistant Centre Directors self-identified with formal and/or informal leadership responsibilities (Woodrow, 2013). However, the manifestation of leadership competency was less prevalent among individuals occupying these roles. Conversely, a minority of Assistant Educators acknowledged either formal or informal leadership responsibilities, yet a greater proportion of these participants exhibited evident leadership competency. These findings highlight the need for improved professional development and recognition of leadership competencies, especially for assistant educators.

Discriminant validity was addressed through principal components analysis (Farrell & Rudd, 2009), showing clear separation among the competency domains. Society items grouped into one factor (except one), while Intrapersonal items spanned three factors, and Interpersonal, Business, and Governance items loaded on distinct factors. This analysis confirmed the model’s robustness and the distinctiveness of each competence construct.

Limitations and recommendations

The research is limited by sampling bias within Western Australian ECEC services. It is recommended that the survey be deployed nationally and internationally to establish broader validity and reliability (Mellinger & Hanson, 2020). Additionally, self-report measures may introduce bias, with participants possibly providing socially desirable responses (Donaldson & Grant-Vallone, 2002). A follow-up study could include an observational checklist to reduce self-reporting bias and triangulate results (Zenk et al., 2007).

Two key recommendations emerged: tailored professional development and succession planning interventions (Ryan & Gallo, 2011). Survey validation revealed gaps in leadership competence, stressing the need for bespoke professional learning programs in the Australian ECEC sector (Thornton & Cherrington, 2019). Further research should explore Assistant Educators’ self-efficacy, focusing on formal succession plans and enhancing potential leaders’ identity and capacity (Liou & Daly, 2020). Mentorship and targeted training could support quality leadership in ECEC services (Kupila et al., 2017).

Conclusion

This research has contributed to our understanding of ECEC educational leadership by examining the complexities of leadership competencies in ECEC services, and by developing a survey tool validated through robust statistical analysis. Notably, the findings explored the nature of leadership within diverse roles. The identified limitations emphasise avenues for future research to broaden the scope. Recommendations for tailored professional development and succession planning interventions offer actionable strategies that may support and enhance leadership across the sector. As the exploration of leadership in the ECEC sector continues, this survey tool contributes a foundation for ongoing discourse, encouraging a deeper understanding of effective leadership practices and fostering the continual enhancement of leadership competencies in EC education.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.