Abstract

Child observation is a critical component of quality pedagogy in early childhood education and care (ECEC). The ORICL (Observe, Reflect, Improve Children’s Learning) tool was co-designed by ECEC researchers, policymakers, leaders, and practitioners to support this work. Educators rate the experiences of individual children, and the responses of educators and peers, on 118 items across five domains. In this study of the utility of ORICL, the tool was used by 21 educators across 12 ECEC services for a total of 66 children. Descriptive statistical analyses were used to determine how educators used the full range of the ORICL rating scale, and the psychometric properties of the tool were explored. Findings suggest that the ORICL items can be readily observed and rated by educators for children aged under 3 years, the rating scale is appropriate, and there is early evidence to support the domain structure of the tool.

Introduction

In 2013, the World Health Organization (WHO) made a strong case for global investment in very early child development: “the first 3 years of a child’s life are the time when a child has the greatest plasticity for growth and development, even under adverse circumstances” (Chan, 2013, p. 1515). In many developed nations, an important context for this investment is early childhood education and care (ECEC). Across high-income countries, on average, 34% of 1-year-old children and 46% of 2-year-old children were enrolled in ECEC in 2018 (OECD, 2020). Ensuring that children are exposed to the highest quality of experiences in these services is critical for optimal child development. However, educators commonly report receiving insufficient training on the developmental and pedagogical needs of children under 3-years (Chu, 2016).

A cornerstone of high-quality early childhood pedagogical practice is the ability of educators to observe and reflect on children’s experiences to extend their learning (Melvin et al., 2019), yet few tools exist to support educators to do this. While multiple approaches to measuring and enhancing ECEC quality for children aged under 3-years have been developed and implemented (e.g., Infant-Toddler Environmental Rating Scale [ITERS], Harms et al., 2006; Classroom Assessment Scoring System [CLASS-Infant], Jamison et al., 2014), none of these tools aim to build capability in educators of all qualification levels to observe and reflect on the experiences of individual young children in their care (MeMoQ, 2015). Specifically, tools like ITERS and CLASS need specialised training to implement, and are designed to reflect quality at the room or group level, rather than at the individual child level. This paper documents the first study of a new and innovative tool, ORICL (Observe, Reflect, Improve Children’s Learning), co-designed with the field, for the field (Janus et al., 2018). ORICL is a 118-item educator-report tool that aims to gauge and improve ECEC quality through a focus on educator observations of individual children aged under 3-years. This study aimed to establish the feasibility of implementing ORICL in centre-based and home-based ECEC, as both a practice tool and research instrument, using Australia as a case study.

Early childhood education and care for children aged under 3-years

Globally, participation rates for very young children in ECEC have increased in developed countries over the last few decades. For example, in Australia, the site of the current study, 24% of 1-year-old children and 37% of 2-year-old children attend ECEC services (ABS, 2017). Growing evidence shows the important role these early learning experiences have for longer term developmental outcomes (Hotz & Wiswall, 2019). The strongest evidence for universal ECEC services comes from several robust quasi-experimental studies in Europe that link early centre-based care with enhanced motor and language development (Blau, 2021). Population data from Australia also suggest greater exposure to centre-based care prior to 3-years is associated with cognitive skill increases at age 7, but also poorer behavioural and social functioning (Coley et al., 2015), echoing findings in the United States (Coley et al., 2013). No doubt, the conferral of ECEC benefits will rely on a complex interaction among attendance, dosage, child characteristics, and the quality of ECEC experienced (Gilley et al., 2015). Quality is in part related to educator qualification and skill (Schaack et al., 2017), which varies among and within countries (Oberhuemer & Schreyer, 2018). For example, in much of Europe, infant and toddler educators are required to hold a 4-year bachelor’s degree (Blau, 2021), whereas in Australia and many US states, a vocational certificate is sufficient (Macfarlane & Lewis, 2012).

Australia, in recognition of disparities in qualifications and quality across state jurisdictions, introduced a national system of quality assurance for all ECEC services, which includes the National Quality Standard (NQS), comprising seven quality areas. Although the NQS has resulted in steady improvements in quality since its introduction, currently 11% of long day care services and 36% of family day care schemes do not meet all standards (ACECQA, 2023). Under-performance on the NQS is most evident in Standard 1.3 (Assessment and planning), with 8% of services rated as not meeting (ACECQA, 2023). Assessment and Planning requires educators “to take a planned and reflective approach to implementing the program for each child”, specifically to ensure that “each child’s learning and development is assessed or evaluated as part of an ongoing cycle of observation, analysing learning, documentation, planning, implementation, and reflection” (ACECQA, n.d., QA1 Element 1.3.2).

Achieving quality for early childhood education and care programs for children under three years

The extent to which ECEC provisions are universally of high quality is a key concern of Australian and international governments and regulators (EACEA, 2019; OECD, 2017). ECEC quality can be understood across two overarching dimensions: structural quality (e.g. group size, educator qualifications, ratios) and process quality (e.g. learning opportunities, interactions, materials; Howes et al., 2008). However, the empirical evidence for the role of ECEC quality in terms of children’s outcomes has been somewhat mixed. While some research finds that duration and dosage of ECEC exposure are more highly predictive of child outcomes than quality measures (Coley et al., 2013), other studies have linked higher quality to enhanced learning trajectories for children when compared to children experiencing low quality care (Ruzek et al., 2014), particularly for socio-economically disadvantaged children (van Huizen & Plantenga, 2015). Noteworthy here are criticisms of the way that quality is measured in ECEC, including concerns about a strong focus on structural and compliance issues, without due attention to aspects of process quality including nuanced measures of teacher-educator relationships and interactions (Sabol et al., 2013; Siraj et al., 2019). Australia’s seven Quality Areas cover both structural and process quality aspects (ACECQA, 2012). Regardless of the challenges of measuring ECEC quality, and the nuances in the evidence for the role of quality in child outcomes, any systematic efforts to enhance the quality that children experience must be welcomed.

Indeed, it is possible that the experience of poorer quality ECEC by many children, particularly before age 3, is at least partially responsible for poor outcomes at school age (Shuey & Kankaraš, 2018). In Australia, for example, despite decades of significant government investment and a focus on access in the preschool sector (Hurley et al., 2020), there have been continuing or even increasing rates of developmental vulnerability for children at school entry (Australian Government Department of Education, Employment and Workplace Relations, 2009). The mechanisms of this relationship are difficult to pinpoint, as they can be a result of several factors that act to compound disadvantage for very young children. ECEC settings for children aged under 3-years generally have educators with lower qualifications (Macfarlane & Lewis, 2012) than those for children aged 3–5 years. Further, children under 3 years are more likely to attend home-based, rather than centre-based, ECEC, in which poor quality is more common (ACECQA, 2021) and, importantly, children from socio-economically disadvantaged households are more likely to attend poorer quality care (Ruzek et al., 2014).

Taken together, it is clear there is a need for improvement in quality of ECEC for very young children, particularly in relation to assessment and planning, given the increasing number of young children participating. Further, there is a need for enhanced approaches to researching children’s experiences over time which can contribute to the evaluation of the educational impact of ECEC. We suggest the best way to enhance quality is to provide educators with tools to improve their understanding of children aged under 3-years and the skills they need to support children’s learning, development, and wellbeing, and that this approach can also provide researchers with important data to understand children’s experiences across time. Existing approaches to measuring and enhancing ECEC quality are not designed to be used by all educators (regardless of qualification level) or to build capability in skills of observation, reflection, interpretation, documentation and planning. Nor are they designed to focus attention, and provide comprehensive research data, on learning experiences of an individual child, despite wide recognition that child-centred pedagogy is essential to high quality ECEC (Melvin et al., 2019).

The current study

In this paper we document our effort to fill the gaps described above and report on quantitative findings of the first study of a new tool, ORICL (Observe, Reflect, Improve Children’s Learning), developed to support and enhance educator practice and, through this, children’s learning. ORICL’s relevance and potential are underlined by the fact that it was co-designed with the field, for the field (Janus et al., 2018), as a tool to both gauge and improve ECEC quality for children aged under 3-years.

The Observe, Reflect, Improve Children’s Learning Tool: a new tool

ORICL was co-designed with industry stakeholders and end-users through several stages of development aligned with implementation science (Metz et al., 2015). Specifically, we explored the need for such a tool with industry stakeholders through a one-day workshop. In attendance were senior leaders in ECEC government departments from every state and territory, national peak bodies including advocacy groups and unions, and non-government organisations and providers of centre- and home-based ECEC services. Participants identified a gap in tools for children from birth to 3 and flagged the need for a tool to improve child wellbeing and help educators better understand and recognise indicators of development. Participants also felt that such a tool needed to be aligned with the EYLF learning outcomes. Following this workshop, we developed the desired characteristics of the tool, drawing on a thorough search of existing related literature to develop an initial set of potential ORICL items. We then tested these for fit and acceptability through a Delphi panel comprising practitioners working with children under 3 years, service provider managers and practice leaders, government policymakers, consultants, researchers, and representatives from Indigenous children’s services and family support services. Drawing on two rounds of feedback from panel members, we revised and refined the ORICL to 118 items and initiated the pilot testing in the field, which yielded the data for this report.

The ORICL tool was created to fill a significant gap in ECEC educator capabilities, with four key points of innovation. First, the ORICL focusses specifically on children in the first three years of life, an age group that is under-emphasised in early childhood educator training compared to children aged 3-years and over (Chu, 2016). This age group is seen by educators as more difficult (compared to older children) to plan for, and/or ‘find’ in national early years curriculum frameworks (Davis & Dunn, 2019). Second, the ORICL focusses on observations of young children’s experiences within the natural ecology of ECEC, rather than context-generic observations focussed on children’s developmental competency in specific areas as other tools do (e.g., Ages and Stages Questionnaire; Martinez-Nadal et al., 2021). ORICL provides a guide for educators to attend to actions and communications of individual children, as well as ways peers and educators respond to children. Used systematically, ORICL can inform planning targeted to each child’s interests and pace, as a unique individual learner, and as a participant within a group care setting. Third, ORICL focusses on the quality of individual children’s experiences in ECEC rather than overall quality of the ECEC environment at room or centre level. Existing tools, such as ITERS, CLASS-infant and the NQS, provide ratings at room level and are based on all educators and children present. This approach assumes that quality in a room affects all children equally, and that quality is a property of a room, rather than the individual, dialectic relationship between a child and educator. The approach taken in ORICL recognises that each child experiences and responds to the ECEC environment in their own way, and in turn educators respond differently to individual children. This is aligned with current (and foundational) thinking about individualised pedagogy in ECEC (Melvin et al., 2019). 
Fourth, the ORICL is designed to be completed by educators of all qualification levels, even those with a minimal level of pre-service training, and does not require certification to use the tool. This means the ORICL has higher potential than the existing tools to build capability in educators themselves to engage in observations that tap into ECEC quality. Further, there is strong potential for the tool to support widespread research data collection, enabling researchers to understand the experiences of children in ECEC without the need for expensive and time-consuming in-situ observational measures.

The focus and domain structure of Observe, Reflect, Improve Children’s Learning

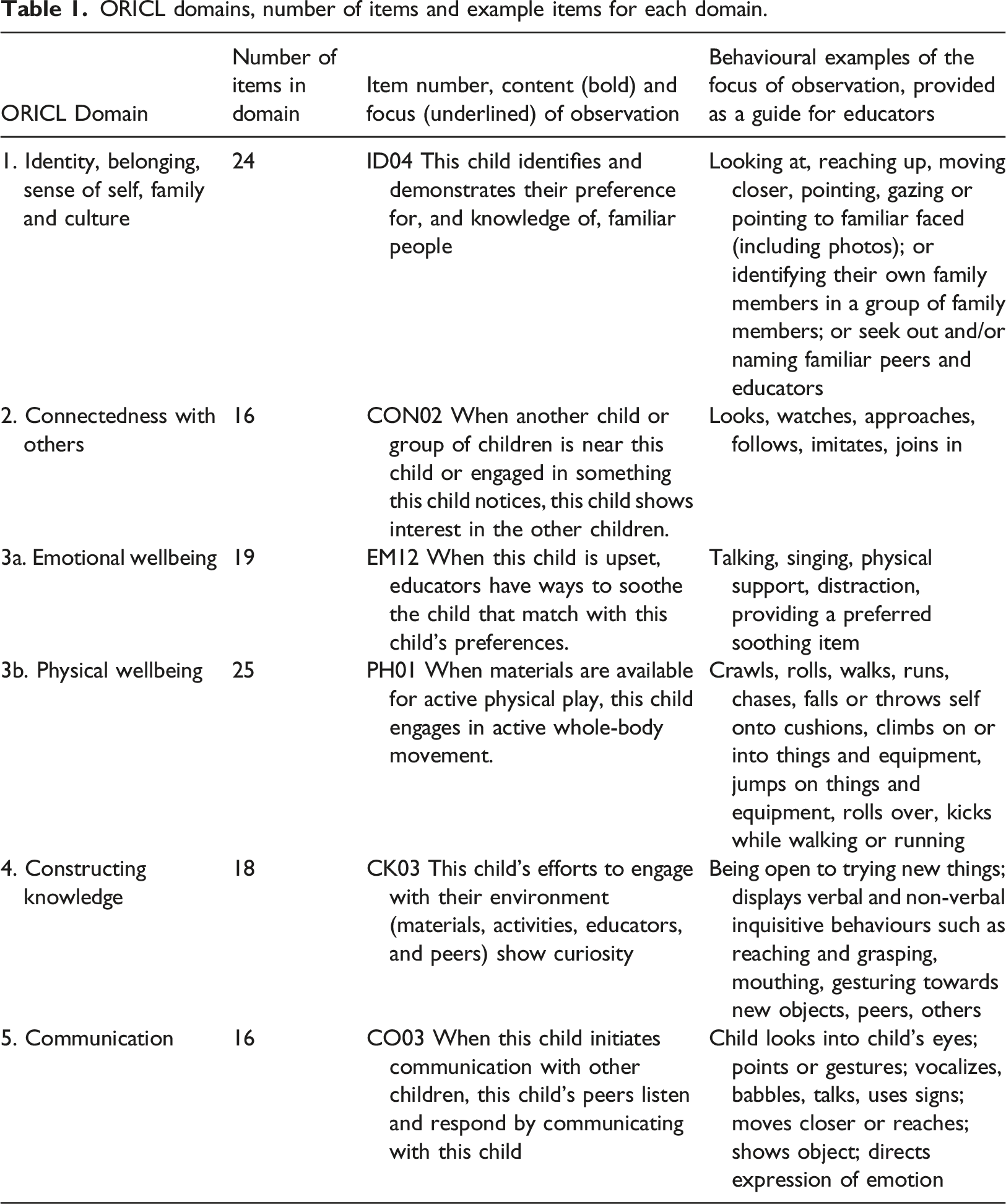

The ORICL items, 118 in total, were designed to capture three aspects of an individual child’s experiences: the child’s demonstration of actions, communications and responses to materials and others’ invitations (64 items); other children’s (

Table 1. ORICL domains, number of items and example items for each domain.

Instructions for using Observe, Reflect, Improve Children’s Learning

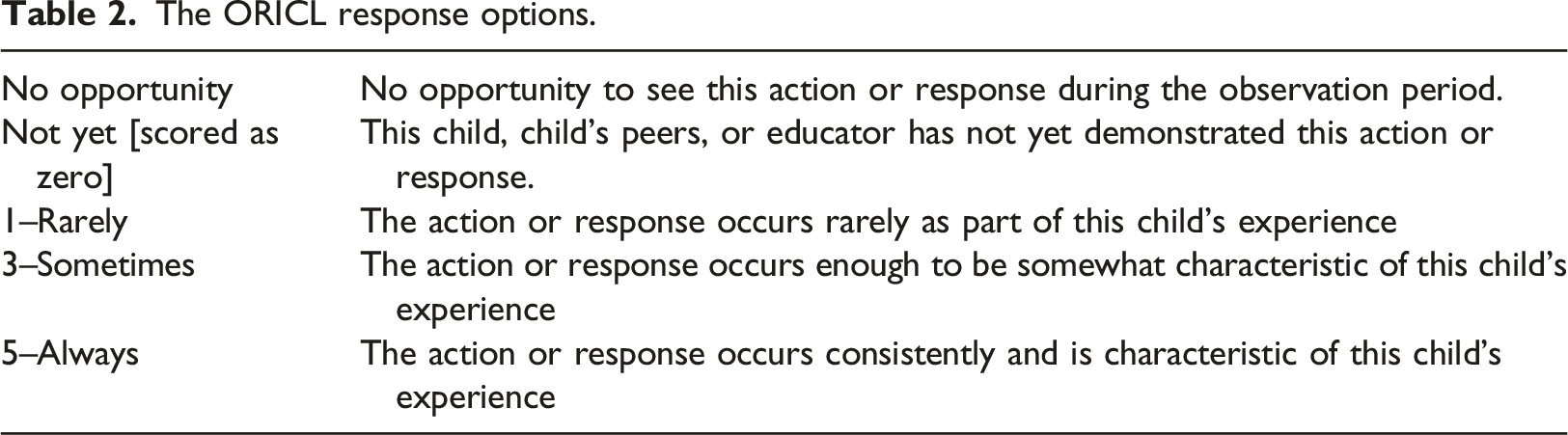

ORICL has been designed for use by educators during their regular work. Item ratings are meant to capture what life is like for the child on a typical day in their ECEC setting. Completing the tool does not require educators to do anything different from what is typically provided in their usual program and it was anticipated that observations of no more than 1 hour for each child would be sufficient to complete the tool. For each item an example of the kinds of behaviours that might be observed is provided before the response scale (see final column of Table 1 for examples). While each item includes examples of child, peer, or educator behaviours, these are only suggestions, included as observational triggers; for example, seeing a child perform one of the examples given or a similar one is sufficient for an educator to rate the item.

Table 2. The ORICL response options.

Table 3. Educator characteristics.

Instructions encouraged educators to consider the child in the whole context of their ECEC experience; collaboration and discussion with other educators to complete ORICL was therefore encouraged. The items related to educator interactions were designed as a global rating of how educators overall responded to the child, not limited to the educator completing the ORICL tool. Educators were able to choose not to respond to items across the tool; however, for this pilot, all items were generally completed.

Research questions

This feasibility study is part of a larger, overarching project that developed and piloted the ORICL. It is the first use of ORICL by educators in the field and analysis focusses on child data provided by educators on ORICL and what this tells us about how educators used the tool, and psychometric properties of ORICL. The study aimed to explore: (1) To what extent were educators able to make observations for each of the ORICL items? (2) To what extent did educators use the full range of responses available on each item? (3) How do the items within each domain perform and relate to each other? (4) How are the ORICL subsets of items that focus on the child, educator responses to the child, and peer responses used?

Method

Study design

We used a cross-sectional design with clustered sampling. First, ECEC services were recruited, then infant-toddler educators within each service were invited to participate in the research. Each educator then recruited a number of children to the study and completed ORICL data for each participating child. Ethics approval was sought and received from the Human Research Ethics Committee of Charles Sturt University (H18082; approval number 1800000758).

Recruitment of early childhood services

Recruitment took place in five states and territories within Australia. Early childhood services were recruited through ECEC organisations that participated in the co-design of the ORICL tool (Janus et al., 2018) and through researchers’ professional networks. Services recruited catered for children aged from 6-weeks to 5-years: centre-based long day care (LDC) and home-based family day care (FDC). In Australia, LDC and FDC are licensed services that participate in the NQS Assessment & Rating process, and provide an approved educational program aligned with the EYLF. FDC educators provide care in their own home for up to four children under the age of 5-years, including their own children, and are supported by the coordination unit of the FDC scheme, where staff must hold at least a 2-year Diploma-level qualification in education and care. Licensed LDC centres vary in numbers and ages of children they enrol but must adhere to ratios of 1 educator per 4 children for children under 24-months, and 1 educator per 5 children aged 25- to 36-months. In LDC, educators must hold at least a 6-month Certificate III ECEC qualification and at least 50% of educators hold a Diploma qualification. LDC services caring for children over 3-years must also employ a Degree qualified early childhood teacher.

For LDC, three research team members approached the head office of overarching service organisations with which an existing professional relationship was established. Managers who had a broad view across the organisation then recommended possible research sites, based on centre-level interest in birth to three research, and introduced the researcher to centre Directors. In addition, a research team member with an existing professional relationship with a stand-alone service made a direct approach to the centre Director. Once the research team had provided full information to Directors and gained their consent, Directors then invited eligible educators at the service (those working with children aged 6 weeks to 36 months) to consent to the research. For FDC, a research team member with an existing professional relationship approached each FDC scheme coordination unit, which in turn approached individual FDC educators on behalf of the research team.

A total of 12 different services agreed to participate. Five were FDC services, in New South Wales (NSW) and Victoria (VIC), and seven were LDC centres (two in NSW, two in Queensland [QLD], and three in Western Australia [WA]). Eight services were in major cities, two in inner regional areas (both FDC) and two in outer regional areas (both LDC). Three services were in postcodes ranked in the lowest four deciles of socio-economic advantage (one was an LDC, two were FDC services), with the remaining eight services in postcodes ranked in the top five deciles of socio-economic advantage (Australian Bureau of Statistics, 2016).

Participants

Educators

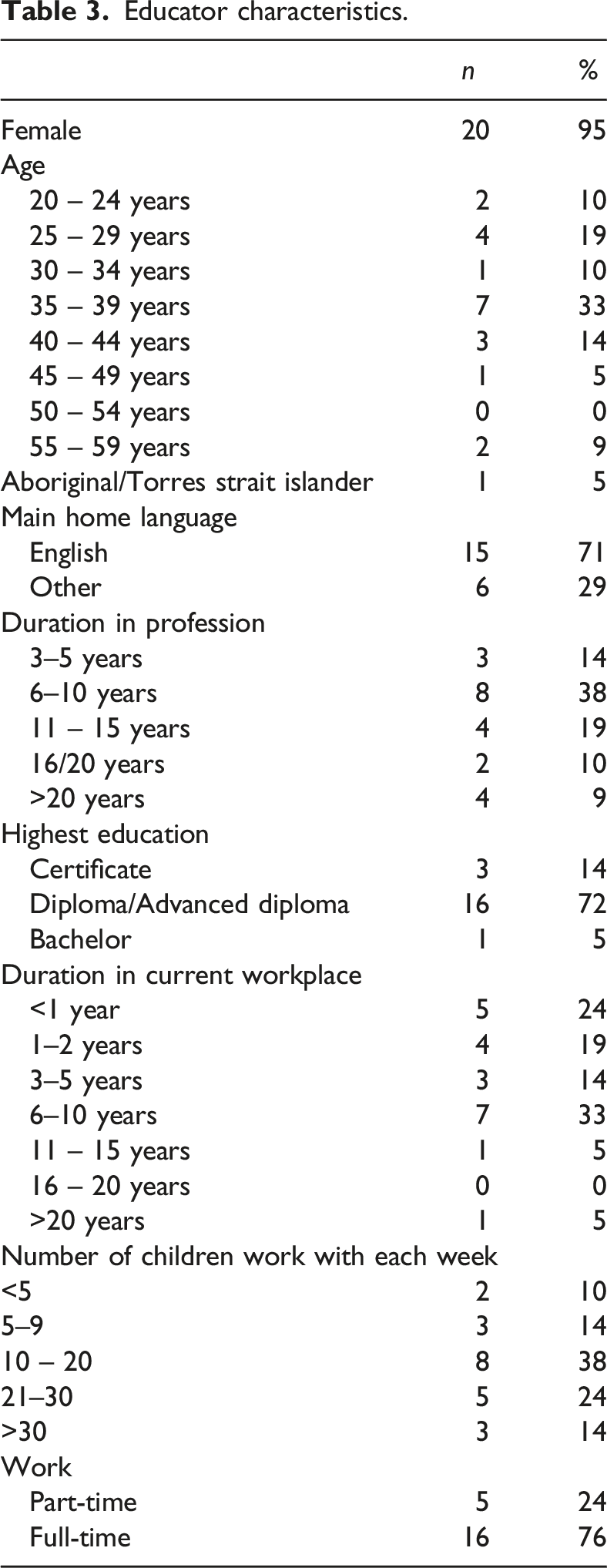

At each of the services recruited, any educators working in the infant-toddler age group were invited to participate in the research. A total of 21 educators participated in the study. At each of the five FDC settings, one educator was recruited. Educator recruitment numbers at the LDC centres were as follows: two and five educators at each of the NSW centres, one and four educators at each of the QLD centres, one educator at each of two WA centres, and two educators at the third WA centre. Descriptive information on educator participants is found in Table 3. Of those educators who spoke a language other than English at home, spoken languages included Portuguese, Bangla, Burmese, Gujarati, Mandarin, Punjabi, and Swahili. Six educators also indicated they used a language other than English at their workplace including French, Portuguese, Hindi, and Bangla.

Children

Eligible families for recruitment at each service were those with a child aged between 6-weeks and 36-months. Directors at LDCs and educators at FDCs invited all eligible families to consent to the research. This resulted in 66 children participating in the research; most children (n = 57) attended the seven LDC centres, and nine children attended the five FDC homes. ORICL data were provided by each educator in the study for between one and seven children, depending on consents received. Specifically, there needed to be a match between a consenting educator and consenting eligible children well known to that educator. Educators working in LDC tended to complete the ORICL for more children than educators working in FDC (average number of children per LDC educator = 3.6; per FDC educator = 1.8).

The 66 children (58% girls, n = 38) ranged in age from 7- to 33-months, with a mean age of 20.7 months (SD = 7.2). There were 10 children aged <12 months (16%); 33 children aged between 13- and 24-months (52%), and 23 children aged 25- to 33-months (32%). Three children spoke a language other than English (Chinese, Greek, French) and there were no Aboriginal or Torres Strait Islander children in the sample. Four children were described as having a disability or developmental delay with descriptions including ‘hip’, physical, sensory and anxiety concern, late walking, and right eye palsy. Children attended their ECEC service for one to five days a week with the modal days per week being three. Hours attended across the week ranged from 8 to 50 with a mean of 30.3 hours (SD = 12.03).

Data collection procedure

Educators completed a short survey about their work, training and experience in ECEC, read the ORICL instructions and completed a paper copy of the ORICL for each child in the study over a four-week period. A research assistant contacted the educators with reminders after two weeks. Completed forms were checked for completeness and entered into an Excel database, then exported to SPSS for analyses. While the focus in this paper is the data provided across the sample for children, educators also provided data on their experiences with the tool, including how long it took to complete and the benefits and challenges of its use (Elwick et al., 2023; Harrison et al., 2021). Briefly, most educators reported spending up to 1 hour observing children to complete ORICL, and most reported completing the actual tool in 1 hour or less. ORICL instructions alerted educators to the fact that observations did not have to be undertaken in any specific circumstances other than usual daily practice, and that both the observations and completion of the tool could be spread over several days or weeks.

Analysis

ORICL is not designed as a developmental assessment but as a tool to reflect on and improve learning experiences, and it is meant to reflect educators’ idiosyncratic interactions with children. Because its purpose is professional learning and practice enhancement for educators, the usual psychometric expectations (such as test-retest reliability, inter-rater reliability, or strong correlation with age) may not apply. Our analyses therefore focused on the aspects of feasibility and structure that would allow us to move on to a larger implementation.

First, the distribution of missing values (“not observed”) was examined for each item and domain (RQ 1), along with a set of descriptive analyses for all ORICL items (RQ 2). Cronbach’s alpha was then calculated for each domain to establish internal reliability. Domain summary scores (the average of all items within each domain) were computed, and bivariate correlations among these scores, and between these scores and children’s age and sex, were examined (RQ 3). Finally, we produced Cronbach’s alphas for the substructure of ORICL that groups together items related to the child, peers, and educator behaviours (RQ 4). All analyses were conducted using SPSS Version 26.
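The counting of “not observed” responses and the internal reliability estimate described above can be illustrated with a minimal sketch. It is not the authors’ SPSS procedure. The 1 to 5 rating range, the use of `None` to code “not observed”, the listwise exclusion of incomplete rows, the function names, and the example ratings are all assumptions made for illustration; none of the values are study data.

```python
from statistics import variance

NOT_OBSERVED = None  # hypothetical coding for a "not observed" response


def not_observed_counts(ratings):
    """Count 'not observed' responses for each item (column)."""
    n_items = len(ratings[0])
    return [sum(row[i] is NOT_OBSERVED for row in ratings) for i in range(n_items)]


def cronbach_alpha(ratings):
    """Cronbach's alpha for a children-by-items table.

    Rows containing a 'not observed' response are dropped (listwise
    deletion); this handling is an assumption of the sketch.
    """
    complete = [row for row in ratings if NOT_OBSERVED not in row]
    k = len(complete[0])  # number of items in the domain
    item_vars = [variance([row[i] for row in complete]) for i in range(k)]
    total_var = variance([sum(row) for row in complete])
    return k / (k - 1) * (1 - sum(item_vars) / total_var)


# Invented ratings: six children (rows) on a three-item domain (columns).
example_ratings = [
    [1, 2, 1],
    [2, 3, 2],
    [3, 3, 4],
    [4, 5, 4],
    [5, 4, 5],
    [3, NOT_OBSERVED, 2],
]

print(not_observed_counts(example_ratings))        # [0, 1, 0]
print(round(cronbach_alpha(example_ratings), 2))   # 0.94
```

Sample variances (n − 1 denominator) are used for both the item and total scores, which is the usual convention for this statistic.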

Results

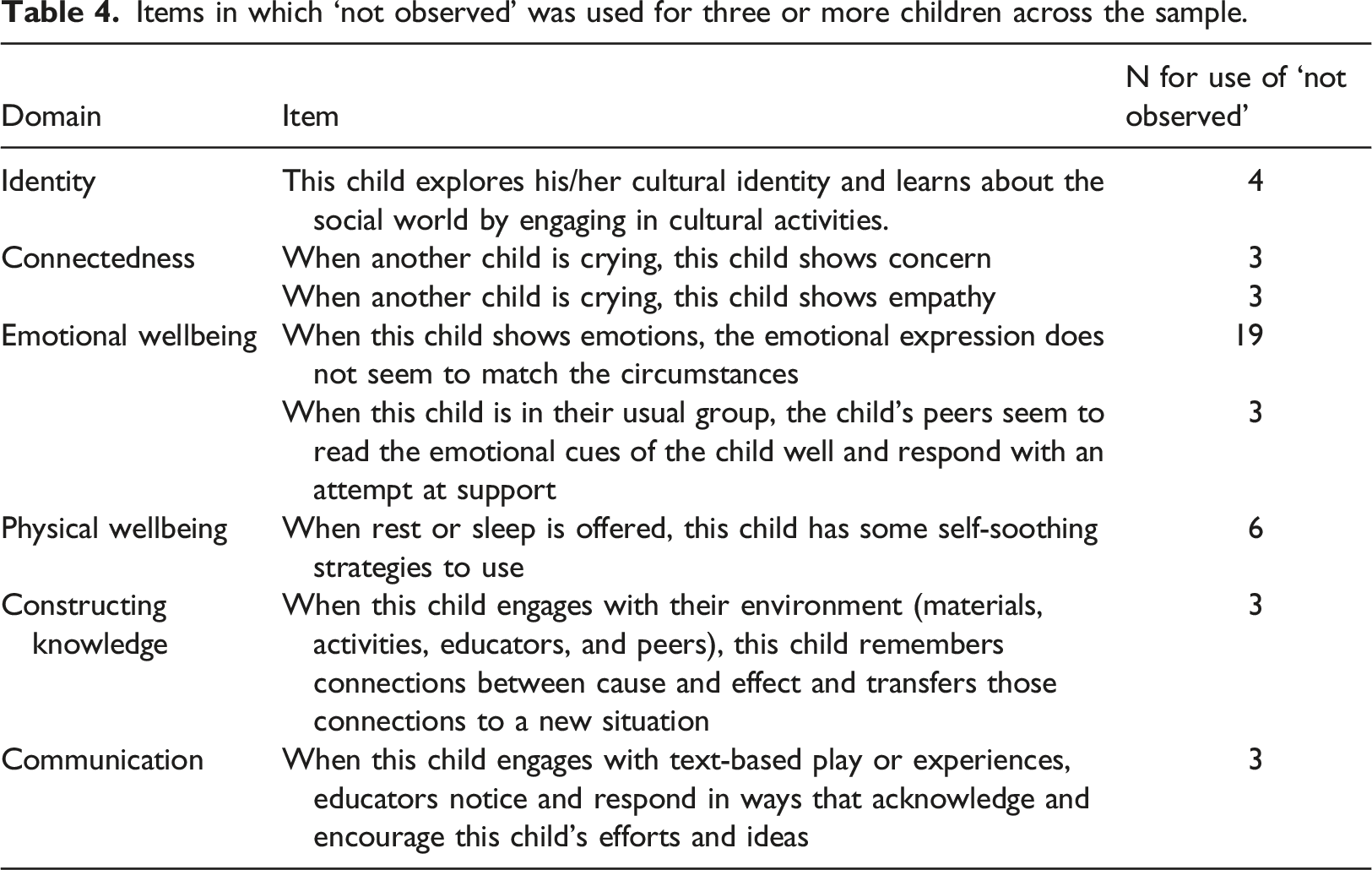

To what extent were the educators able to observe and rate ORICL items?

Table 4. Items in which ‘not observed’ was used for three or more children across the sample.

To what extent did educators use the full range of responses available on each item?

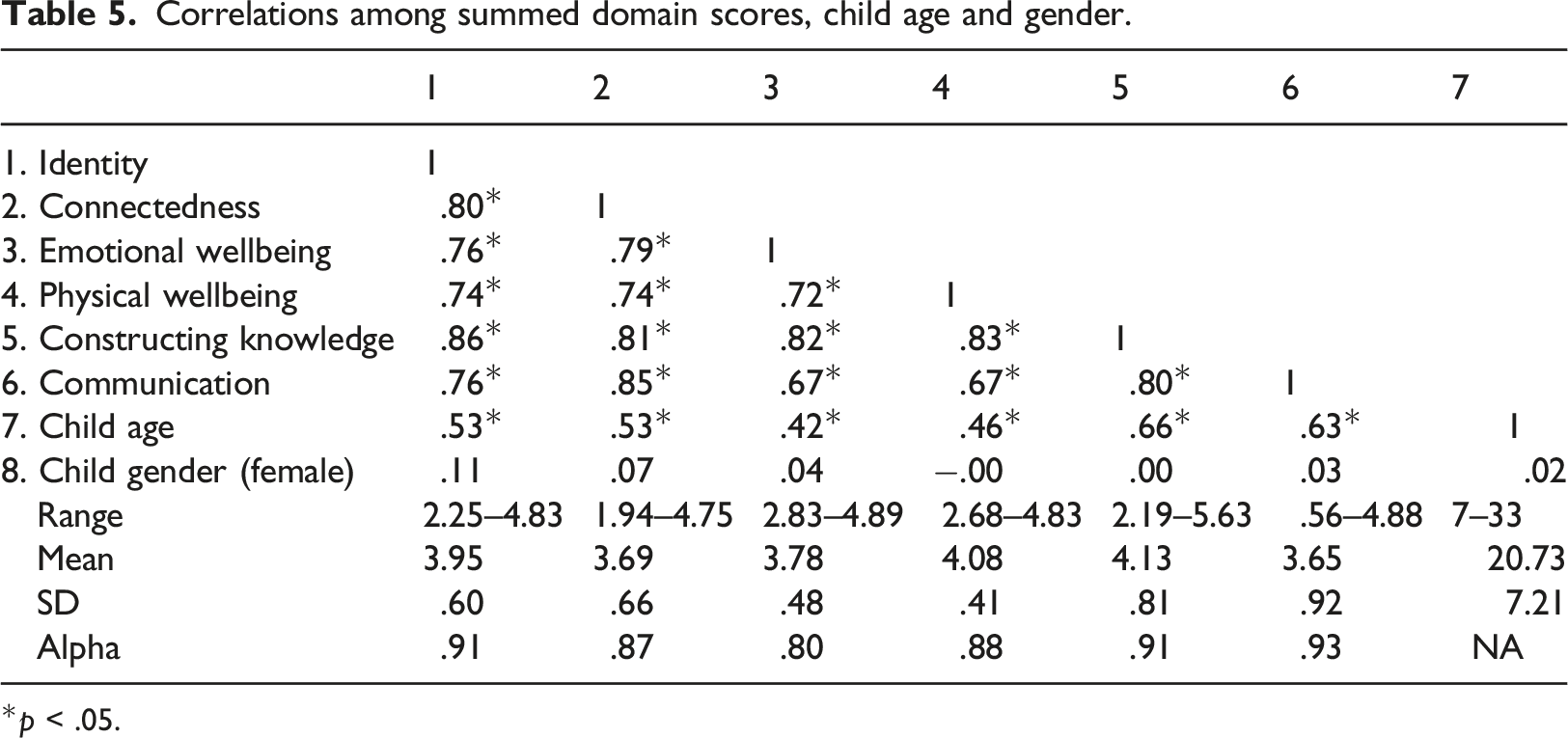

Table 5. Correlations among summed domain scores, child age and gender.

*p < .05.

How do the items within each domain perform and relate to each other?

Internal reliability within each of the ORICL domains was high (see final row of Table 5). Scores within each domain were totalled and averaged, and bivariate correlations among the domain totals, child age, and gender were examined (Table 5). There were strong correlations (>.70) among the domains and moderate correlations between domain scores and child age in months (.42–.66). The strongest correlations with age were for the Constructing knowledge and Communication domain scores.
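As a rough illustration of how a domain summary score and its correlation with child age might be computed, the sketch below averages each child’s item ratings within one domain and then calculates a Pearson correlation with age in months. The choice of Pearson’s coefficient, the averaging over observed items only, the function names, and the example values are assumptions made for illustration and are not study data.

```python
from math import sqrt
from statistics import mean


def domain_score(item_ratings):
    """Average of one child's observed item ratings within a domain.

    'Not observed' responses (coded here as None) are skipped; this
    handling is an assumption of the sketch.
    """
    observed = [r for r in item_ratings if r is not None]
    return mean(observed)


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


# Invented data: five children, their ages, and ratings on a three-item domain.
ages_months = [8, 14, 20, 26, 32]
domain_ratings = [
    [1, 2, None],
    [2, 2, 3],
    [3, None, 3],
    [3, 4, 4],
    [4, 5, 4],
]

scores = [domain_score(child) for child in domain_ratings]
print(scores)
print(pearson_r(ages_months, scores))
```

The same `pearson_r` function could be applied to pairs of domain scores to obtain the cross-domain correlations.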

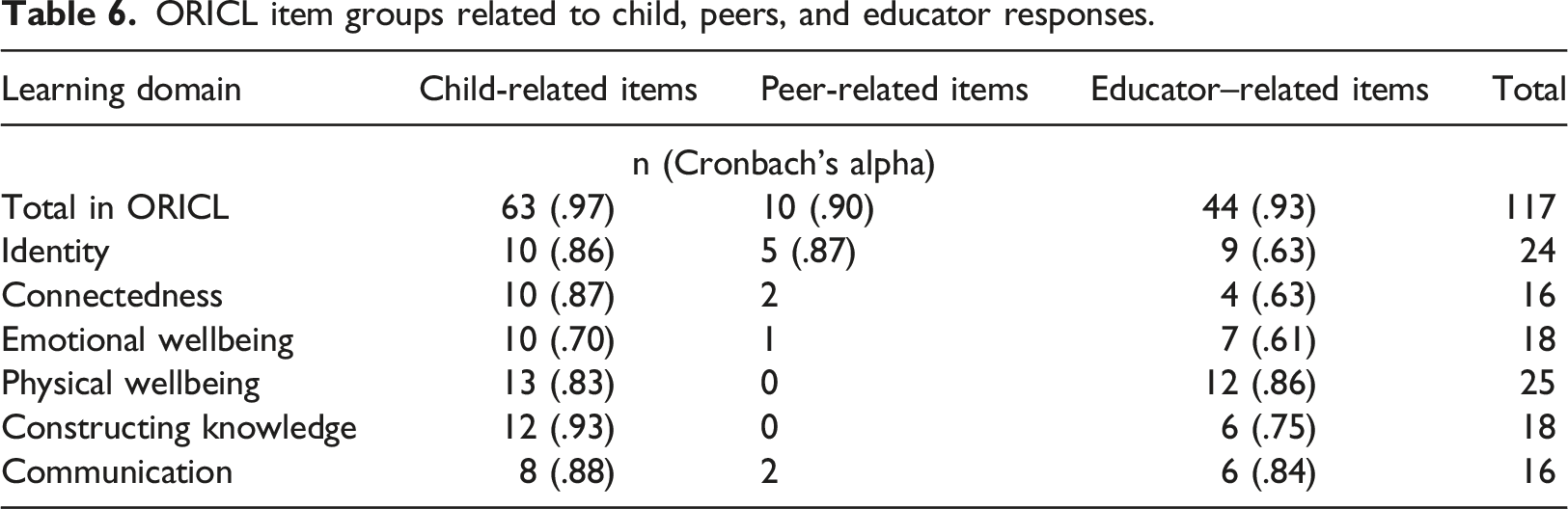

How are the ORICL subsets of items that focus on the child, educator responses to the child, and peer responses used?

Table 6. ORICL item groups related to child, peers, and educator responses.

Discussion

This paper has presented findings of the first feasibility study of a new tool designed specifically for educators of infants and toddlers, ORICL. Overall, the findings are promising and demonstrate the feasibility of ORICL for the field, and for future research programs. Specifically, the 118 items of the ORICL were able to be completed by educators, regardless of their prior education level, for children under 3-years. The response scale appeared appropriate, with data showing usage right across the scale. The structure of the tool, designed to align with domains of the national early childhood curriculum, was supported by the data, with strong internal reliability estimates and cross-domain correlations as would be expected. However, data from a larger sample are required to undertake exploratory and confirmatory factor analyses to confirm this structure.

The ORICL presents several contributions and addresses important existing gaps in infant and toddler education research and practice. First, the tool was designed with the field, for the field. Using educators’ own observations and experience directly, rather than relying on external observers, has been the cornerstone of ORICL philosophy and the co-development process (Janus et al., 2018). It is likely that the high relevance of ORICL items, as evidenced by completion rates, reflects the extensive input of educators in the development of these items. Second, ORICL was designed to measure contextual ECEC quality at the individual child level, rather than the group level. This is achieved through focussing not only on individual children and their observable behaviours, but also on the responses of peers and educators within that context. Findings here suggest educators were as able to rate items focussed on peers and educators as they were to rate items focussed on the target child. Third, unlike other existing tools, ORICL was designed to be used by staff of various qualification levels, not just by trained assessors. The sample of educators in this study had a range of education levels as well as cultural and language backgrounds, working in a range of sociodemographic regions, and all were able to complete ORICL.

In view of existing gaps in the professional development available for educators of the youngest children, our results are very promising, suggesting that the ORICL tool may be useful in supporting educators to observe, reflect and report on children’s interactions within the early education setting. A recent study by Romo-Escudero et al. (2021) measured observational ability of toddler educators in centre-based childcare by having them note their observations of pre-recorded videos of classroom activity. A key finding was that educators’ ability to notice markers of toddler social-emotional development and effective educator-child interactions was limited overall. However, educators were not provided with prompts of what to look for, and thus the task differed substantially from ORICL observations, which are scaffolded with concrete examples and undertaken in naturalistic settings. Importantly, there was some evidence that educators’ ability to notice effective educator-child interactions in the pre-recorded videos was associated with more effective educator practice observed in the classroom (Romo-Escudero et al., 2021). This provides some support for our argument that, should the ORICL support educators’ capacity to observe and reflect on children’s learning, it may also improve practice.

Prior to further research, one ORICL item will be changed given the data presented here. Item 9 in the Emotional Wellbeing domain had many uses of ‘not observed’ (n = 20) with the negative wording of the item making it difficult for educators to score. In the next ORICL iteration this item will read “when this child shows emotions, the emotional expression tends to match the circumstances.” No other findings suggested specific changes are needed to the ORICL before further study is conducted.

Limitations

Taken together, the early findings presented here indicate the feasibility of ORICL and are important for guiding further research; however, this small study is not without limitations. Specifically, a much larger sample size is required to (a) fully confirm the internal reliability of the ORICL, (b) understand any shared variance as a function of clustering of children within educators, and educators within centres, (c) consider whether educators of different qualification levels, or in different setting types (e.g. LDC, FDC) or geographic areas, show systematic differences in their use of ORICL, and (d) understand whether there are child age-effects in the way ORICL is used. Finally, it is important to note that in future studies, repeated completion of the ORICL items will be required to understand (a) whether or not the act of using ORICL achieves the desired goal of enhancing educator capability and therefore children’s learning, and (b) whether the child-level ORICL data collected are useful for any other purpose, including predicting concurrent or future developmental outcomes for children.

Future implications

ORICL was designed as a data collection tool, and as an intervention to enhance educator practice, and ultimately the quality of experiences in ECEC for young children. While this study is not able to provide evidence for any of these anticipated positive outcomes, the educator feedback (qualitative and quantitative) strongly suggests that the potential for such effectiveness in terms of enhancing educator practice is high (Harrison et al., 2021). We argue that through guiding educators to observe how and what young children are learning, and enhancing their capacity to reflect on this, ORICL has the potential to lead to enhanced educator practice, individually targeted educational programs and enhanced child outcomes. Further, the tool has strong potential as a research data collection approach to track children’s experiences and educator practices over time. Given continuing rates of developmental vulnerability at school entry (Australian Government Department of Education & Training, 2022), a new approach to promoting and researching quality in early childhood education services is needed. Future ORICL studies should involve a longitudinal, experimental design, including measures of educator practice and child outcomes over time, to establish evidence for effectiveness.

Conclusion

The findings presented here demonstrate the feasibility and relevance of ORICL and suggest high potential for its use in the infant and toddler early childhood education and care sector for both practice and research. Further research on ORICL’s psychometric properties, as well as on its implementation and the extent to which its use stimulates the desired enhancements to educator practice, is warranted. Despite our study’s limitations, it firmly establishes ORICL as an innovative tool for the early childhood education and care field. The ongoing testing and wider use of ORICL will assist services and educators to understand and evaluate the enablers and barriers to learning for each child in their setting, and to modify these as needed to enhance the care and education provided for each child. Ultimately, higher quality education and care for our youngest citizens is likely to have far-reaching benefits.

Supplemental Material

Supplemental Material for Feasibility and initial psychometric properties of the observe, reflect, improve children’s learning tool (ORICL) for early childhood services: A tool for building capacity in infant and toddler educators by Kate E. Williams, Magdalena Janus, Linda J. Australasian Journal of Early Childhood.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Funding was provided for this study by Charles Sturt University Grant Development Funding.

Ethical Approval

The data that support the findings of this study are not available due to ethical restrictions. This study was approved by the Human Research Ethics Committee of Charles Sturt University. Approval number 1800000758.

Supplemental Material

Supplemental material for this article is available online.
