Abstract

Because students’ academic English levels are well documented to positively influence their academic success in English-medium universities, it is common practice for these universities to provide general English for academic purposes (EAP) support for linguistically weaker students. However, little is known about the importance that instructors attach to different EAP skills, and this might limit how well EAP provisions prepare students from different academic backgrounds for their degree programmes. The current study aimed to fill this gap by examining instructors’ perceptions of students’ academic English skills in tertiary education, specifically which academic English skills and areas are perceived as more important in a particular subject of the degree programme. The 42-item Faculty Survey Instrument developed by Rosenfeld et al. to assess the importance of English competency in reading, writing, listening and speaking for academic success in university studies was adapted, and the Rasch analyses confirmed four skills perceived by instructors across disciplines as important for students’ university studies. The findings could provide direct insights into the development of EAP provisions in English-medium universities to better help students prepare for their academic studies in different disciplines.

Introduction

As research on English for academic purposes (EAP) support in English-medium universities has grown, its focus has largely been on the predictive role of students’ EAP levels in their academic performance at university (Bo et al., 2023; Neumann et al., 2019). The perceptions of important EAP skills (specifically the sub-skills in reading, writing, listening and speaking) are under-researched, especially those of faculty instructors across disciplines in tertiary education. Without sufficient knowledge of the perceived importance of different EAP sub-skills, it is challenging to provide EAP support that prepares students well for the academic studies in their degree programmes at English-medium universities. In practice, students who are considered linguistically weak based on their language test scores (e.g., IELTS or university-developed tests) often receive the same EAP support before or during their degree programmes (Dunworth, 2009; Elder et al., 2007; Harrington & Roche, 2014). Differentiating EAP support across disciplines has been advocated because of the different requirements and expectations of the EAP sub-skills (Monbec, 2018), but for various reasons, such as the lack of resources, most universities keep the standard practice of offering general EAP courses to students from different disciplines. To provide such EAP support more efficiently to a large number of students with diverse academic backgrounds, it is essential to know, based on robust measures, how instructors differ in the importance they attach to EAP sub-skills, so that the content of the EAP provisions can be adjusted to better support students’ academic studies in their degree programmes.

Literature review

Academic English skills, or EAP as it is often called in language education research, is a subfield of English for specific purposes (Hamp-Lyons, 2011). As its name suggests, the cornerstone of EAP is preparing learners for their academic endeavours (i.e., university studies, scholarly exchange and research) through the teaching of a variety of English used specifically in academic contexts (Flowerdew & Peacock, 2001). This core aspect of EAP gives it a learner-centred focus (Link, 2018; Savignon & Wang, 2003); for EAP provisions to evolve as such, it is within expectation that needs analysis, defined as the collection and analysis of information about learners to inform design and implementation of EAP programmes, is imperative for developing EAP support (Brown, 2007; Grier, 2005; Nunan, 1988; Yalden, 1987).

Needs analyses

The literature on EAP and needs analysis has evolved significantly, reflecting the dynamic nature of academic language instruction and the increasing complexity of learners’ needs in diverse educational contexts. Works by Hafner and Miller (2018), Nafissi et al. (2017), Baştürkmen (2022) and Brown (2016) provide a comprehensive overview of the multidimensional aspects of EAP course design and the critical role of needs analysis in tailoring educational programmes to meet specific learner requirements. These texts underscore the necessity for educators to engage in systematic needs assessments to ensure that EAP curricula are responsive to the unique challenges faced by students in their respective disciplines.

Recent studies have further foregrounded the importance of needs analysis in EAP contexts. For instance, Nafissi et al. (2017) emphasised that the integration of needs analysis into EAP course design is essential for aligning educational outcomes with the linguistic and communicative demands of specific academic fields. Their research highlights the effectiveness of needs analysis in improving student performance through targeted curriculum adjustments. Similarly, Dou et al. (2023) discuss how globalisation has transformed the landscape of language education, necessitating a shift from traditional English instruction to more specialised EAP frameworks that address the specific needs of learners in various academic and professional domains.

Moreover, the literature indicates a growing recognition of the diverse linguistic competencies required for academic success. Alhassan (2019) pointed out that while early research predominantly focused on reading and writing skills, there is now an increasing emphasis on listening and speaking tasks within EAP programmes. This shift reflects a broader understanding of academic literacy that encompasses a range of communicative practices essential for effective participation in academic discourse. The need for a holistic approach to EAP instruction is further supported by the findings of Mazgutova and Kormos (2015), who investigate the syntactic and lexical development of students in intensive EAP programmes, illustrating the intricate relationship between language proficiency and academic writing skills.

Hutchinson and Waters (1987) divided needs into necessities (skills needed to function in a target situation), lacks (skills that students lack) and wants (skills students think they lack and want to work on). Brindley (1989) distinguished between needs (what students lack) and wants (students’ desire to reach a certain goal), while Brown (2016) compared needs that reflect “what students currently can do” with those that reflect “what students should be able to do” (p. 14). To specify, Brown (2016) discussed the concept from four perspectives, including the dramatic view (wants and expectations), the discrepancy view (deficiencies and gaps), the analytic view (what students should learn next) and the diagnostic view (necessities) (Atai & Hejazi, 2019). Various contemporary needs analysis studies have drawn on the aforementioned notions of needs, most notably Bosuwon and Woodrow (2009), Huang (2019), Gholaminejad (2022), and Chemir and Kitila (2022).

Scholars also differ over what needs analysis does. According to Dudley-Evans and St John (1998), the purpose of needs analysis is to determine learners’ current levels of proficiency, activities or tasks they will be using English for, and skills and knowledge they need to gain to efficiently carry out such activities or tasks. Hyland and Hamp-Lyons (2002) argued that needs analysis should identify “sets of skills, texts, linguistic forms, and communicative practices [that a] particular group of learners must acquire” (p. 5). Yuvayapan and Bilginer (2020) viewed needs analysis as a process of identifying and negotiating between various stakeholders’ perspectives – what students need support for or want to improve on one hand, and the expectations of their academic communities on the other. While needs analysis studies may vary in purposes, their findings, as Brown (2016) asserted, should be used to inform the learning objectives, materials, and assessment criteria and procedures of EAP support; to add to this, among the different stakeholders, teachers, students and the local administrators are the critical target (Atai & Hejazi, 2019).

In conclusion, the literature on EAP and needs analysis reflects a rich tapestry of research that underscores the importance of contextually relevant, learner-centred approaches to language instruction. The call for methodological advancements in needs analysis is also noteworthy. Serafini et al. (2015) argued for essential improvements in the methodologies employed in needs analysis, emphasising the need for a more nuanced understanding of learner populations. Their insights are crucial for ensuring that EAP programmes are designed with a comprehensive awareness of the specific contexts and challenges faced by students. This is echoed by the findings of Harper and Sun (2022), who highlighted the significance of learner perceptions in shaping EAP curricula, reinforcing the idea that student voices must be integral to the needs analysis process. As the field continues to evolve, it is imperative for educators and researchers to remain attuned to the changing landscape of academic communication and the diverse needs of learners. The integration of contemporary methodologies, the recognition of disciplinary differences and the incorporation of technology will be essential in shaping the future of EAP instruction.

EAP needs and cognate measures

Aspects of EAP in various contexts have been investigated in the existing literature. Some studies looked at the relationship between academic performance and English proficiency, which was measured through English test scores (Ghenghesh, 2015; Soruc et al., 2021) or vocabulary knowledge (Masrai & Milton, 2018). Another strand of research focused on EAP-related support, examining students and teaching staff's strategies to tackle language-related challenges in the learning process (Evans & Morrison, 2011; Jiang et al., 2016) or their perceptions of existing support (Galloway & Ruegg, 2020). Other studies investigated content area instructors and students’ attitudes towards English-medium instruction (Chang, 2010). Needs analysis studies, which will be discussed subsequently, also constitute a part of this literature.

Existing EAP needs analysis research varies greatly in its measurement and operationalisation of needs. Dimensions of EAP learning needs that have been explored include the perceived importance of communicative tasks (Alhadiah, 2021; Furka, 2024; Mak, 2021), language components (Gholaminejad, 2022) or areas identified as problematic in existing EAP provisions (Eslami, 2010), the perceived frequency of communicative tasks (Alhadiah, 2021; AlHashemi et al., 2017) or instructional activities (Akyel & Ozek, 2010; Yuvayapan & Bilginer, 2020), the perceived difficulty of communicative tasks (Akyel & Ozek, 2010; Alhadiah, 2021), and the perceived necessity of communicative tasks (Youn, 2018) or communication topics (Bosuwon & Woodrow, 2009). Other studies have conceptualised needs in terms of the perceived proficiency of students (Huang, 2010, 2013), their learning motivations (Chemir & Kitila, 2022), language resources they often used (AlHashemi et al., 2017) or the level of support they needed to develop certain skills (AlHashemi et al., 2017; Yuvayapan & Bilginer, 2020).

EAP learning needs have also been studied from the perspectives of different groups of stakeholders. Some needs analysis research drew on the perceptions of several stakeholder groups such as students and subject lecturers (Chemir & Kitila, 2022; Furka, 2024) or instructors, students, and industry employers and employees (Bosuwon & Woodrow, 2009). On the other hand, some studies focused on the perspective of a single group, usually students, such as Youn (2018) or Yuvayapan and Bilginer (2020). More research is required to examine the perceptions of EAP needs across various disciplines from the instructors’ perspectives at the university level (Furka, 2024; Huang, 2013) for a more comprehensive understanding and more concrete implications for improving the EAP provisions for university students. It is also critical to explore possible differences across subjects in the EAP needs for literacy development, since there are subject-specific expectations (Hyland, 2009). Indeed, earlier research (Bo et al., 2023) identified disciplinary differences in how students’ EAP proficiency levels shaped their academic performance in the English-medium university; this points to the need to understand how various disciplines might hold different expectations of students’ EAP skills, which will be addressed in the current study.

Though EAP needs analysis can take a range of approaches, research that disambiguates English-based communicative tasks relevant to academic studies and their perceived importance from the perspectives of subject lecturers is imperative. This is because EAP programmes and EMI courses have been widely criticised for inadequately preparing students for their academic studies (Chang, 2010; Galloway & Ruegg, 2020; Kuteeva & Airey, 2014). This inadequacy suggests that the design and implementation of English medium instruction in many universities as well as the linguistic support resources to prepare students for it are misaligned with actual requirements of university studies and students’ needs across subjects, a common finding in the literature (Karakas, 2017; Kirkgoz et al., 2021). Ignorance of or misconceptions about what content instructors expect of students in terms of academic English skills has been recognised as a common learning challenge faced by students at the tertiary education level, leading to misinterpretations of many learning activities and assessment requirements on students’ part (Booth, 2005; Itua et al., 2014). Most prior research seemed to focus on learners and learning content while overlooking the role of instructors. The lack of research about EAP instructors has been mentioned by different scholars (Ding & Bruce, 2017; Nazari, 2020), who advocate for more studies in diverse learning contexts. Within the limited research about EAP instructors, a recent study in the Iranian context (Kaivanpanah et al., 2021) sought to understand EAP teachers’ knowledge, trajectories and practice, which provided insights into the training and support that could help those EAP teachers enhance the quality of EAP provisions. Similarly, Kaivanpanah et al. (2021) explored the differences in self-adjudged EAP practice competences and professional development activities between Iranian teachers with EAP backgrounds and subject teachers who teach EAP; it was found that teachers with EAP backgrounds indicated higher self-adjudged competences regarding aspects such as feedback and error correction. This type of research is particularly important in English-medium universities whose students might be multilingual speakers, such as Singapore universities, where even the domestic students just speak English as a lingua franca and speak a different ethnic language such as Chinese, Malay and Tamil (Bo et al., 2023). Examining the beliefs and perceptions of instructors across subjects in the multilingual context can provide insights into how the English-medium university can better prepare students for the academic studies in their degree programmes.

Given this context, the Faculty Survey Instrument (FSI) by Rosenfeld et al. (2001) was adapted for this study, as it was developed to measure the perceived importance of various academic English tasks grouped under the four categories of listening, reading, writing and speaking from the perspectives of teaching staff. Apart from its suitability, the FSI has other advantages. Originally designed to examine academic English skills thought to be important for both native and non-native speakers in their university studies, the instrument would fit this study since the student population at the university are multilingual speakers who speak English as their first language. Further, the original questionnaire was designed to measure EAP needs across different disciplines (i.e., chemistry, computer and information studies, history, psychology, electrical engineering and business/management), and this study in part aimed to investigate if there are underlying differences between the perspectives of instructors from different academic fields.

Prior needs analysis studies have also faced several methodological limitations, which this study aimed to address. Although there was no lack of research that assessed needs based on the perceptions of teaching staff, most used a relatively small sample of instructors. Studies by Akyel and Ozek (2010), Huang (2010), Huang (2013) and Furka (2024), for instance, sampled only 125, 93, 88 and 126 subject lecturers respectively. In response to this methodological shortcoming, this study used data collected from a larger sample of 401 instructors at a publicly funded autonomous university in Singapore. Further, some research examined only one aspect of EAP: AlHashemi et al. (2017), for example, investigated academic writing needs, and Mak (2021) examined academic presentation skills. In adapting the FSI, this study could measure the importance of all four skill domains of academic English, namely listening, reading, writing and speaking, to achieve a more robust needs analysis.

Finally, while studies assessing needs across the four skill domains of academic English exist, the instruments employed have been validated either by just reporting reliability coefficients or via classical factor analytic procedures, which are sample dependent and prone to misleading outcomes (Linacre, 1998). For example, in their study Akyel and Ozek (2010) reported a Cronbach's alpha of 0.75 and 0.70 for the student and teaching staff questionnaires on the perceived frequency and difficulty of various language tasks and concluded that the questionnaires were adequately reliable. Likewise, Huang (2013) adapted the FSI but did not validate it. Instead, the study presented a Cronbach's alpha of 0.89, 0.83, 0.93 and 0.91 for the perceived importance of listening, reading, writing and speaking skills respectively. Such approaches to validating the scores arising from questionnaires have well-established limitations. For example, according to Lim and Chapman (2022), when applied to instruments with rating scales (e.g., Likert scale), classical factor analytic procedures provide no basis for the additivity of scores. Furthermore, the applicability of such procedures across a wide range of contexts is limited due to (1) dependence of person statistics on item sample, (2) the assumption that person statistics are normally distributed, and (3) dependence of item statistics on person sample. Rasch analysis would complement such procedures: it makes no assumptions about the distribution of person statistics, and the item parameters of the instrument to be validated do not depend on the characteristics of respondents. Rasch analysis also enables researchers to interpret results in reference to a latent trait and to identify misfitting items in an instrument so that the necessary adjustments can be made. To this end, Rasch analysis was used to validate the FSI in this study.
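To make concrete what a reliability coefficient of this kind summarises, the following is a minimal sketch of Cronbach's alpha in its usual variance-ratio form. It is an illustration only, not the computation used in any of the cited studies; the function name and the toy response matrix are hypothetical, and sample variances (dividing by n − 1) are an assumption.

```python
import statistics

def cronbach_alpha(responses):
    """Cronbach's alpha for a list of respondents, each a list of k item scores.

    alpha = k / (k - 1) * (1 - sum of item variances / variance of total scores)
    """
    k = len(responses[0])
    # Variance of each item across respondents (sample variance, an assumption).
    item_variances = [
        statistics.variance([row[i] for row in responses]) for i in range(k)
    ]
    # Variance of each respondent's summed score.
    total_variance = statistics.variance([sum(row) for row in responses])
    return (k / (k - 1)) * (1 - sum(item_variances) / total_variance)

# Hypothetical toy data: four respondents rating three items identically.
toy_responses = [[1, 1, 1], [2, 2, 2], [3, 3, 3], [4, 4, 4]]
toy_alpha = cronbach_alpha(toy_responses)
```

The coefficient depends only on the variances in the particular sample at hand, which is one way of seeing why it is sample dependent and says nothing about whether summed ratings form an interval-level measure.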

The literature review undertaken identified several gaps in the existing literature. First, there is a lack of research into how university instructors across disciplines perceive EAP sub-skills (i.e., whether one EAP sub-skill is more important than another, and whether instructors from a particular discipline view an EAP sub-skill as more important than another). Second, measures employed for EAP needs analysis have focused more on the perceptions of students. Third, measures developed and used so far have not been validated via Rasch measurement theory (RMT). These gaps set the key research aims of this study which are: (1) to validate an existing EAP skills measure (i.e., the FSI) via RMT and hence, enable the provision of information about EAP sub-skills from instructors’ perspectives and (2) to identify differences, if any, in how instructors across disciplines view the importance of EAP sub-skills. The corresponding research questions are: (1) to what extent does the FSI yield valid and reliable measures? (2) are there differences in how university instructors perceive the importance of EAP sub-skills based on the FSI?

Methodology

Among 1,322 university instructors invited for this study, 442 instructors responded, of which 401 provided complete questionnaire responses which were used for the Rasch analysis. As highlighted, the questionnaire adapted for this study was the FSI by Rosenfeld et al. (2001). The following sub-sections detail the adapted questionnaire, sampling strategy, how data were collected, and how the data were analysed.

Adapted questionnaire

This study examined teaching staff's perspectives on the issue of students’ academic English in tertiary education, with the intent to discover which academic English skills and areas are perceived as more important for competent academic performance. We used and adapted the 42-item FSI originally developed in order to translate theoretical frameworks underlying the TOEFL test into specific listening, reading, writing and speaking proficiency tasks. Participants in the original questionnaire were asked to rate the tasks in terms of their importance to the successful completion of university coursework.

The tasks or items in this questionnaire were generated and defined by the four TOEFL 2000 Framework Teams based on the task statements used in previous needs analysis studies and after that were reviewed by 26 teaching staff and students at both the graduate and undergraduate levels. The Framework Teams then revised the task statements based on the feedback given before developing them into the final questionnaire. A total of 360 university instructors and 345 students from various disciplinary areas (e.g., chemistry, computer and information studies, history, psychology, electrical engineering and business/management) from 21 universities in Canada and the US completed the original questionnaire, which can be found in Supplemental Annex C.

Sample

This study used a mix of convenience and stratified sampling strategies. This provided sampling variability that enabled a robust validation and comparisons between sub-groups, given that convenience sampling alone suffers from generalisability issues (Lewis-Beck et al., 2004). A convenience sample of content area instructors at the university was first drawn. All instructors at the university who had been deployed for teaching in the past four semesters, and taught courses in English, were included (N = 1322). Teaching staff in the sample were distributed across the School of Human Development, School of Business, School of Science & Technology, School of Humanities & Behavioural Sciences, and other schools and departments (e.g., School of Law, Centre for Continuing & Professional Education, etc.) at the selected university.

As the data collection process had two phases, we further divided the convenience sample into two subsamples based on six stratification variables, namely gender, age, school/department, highest qualification, years of teaching experience and teaching position (i.e., course leader, consultant and instructor). The two demographic variables of age and gender were cross-tabulated with the four variables on teaching staff's academic background. The number of instructors in each cross-tabulated category was then divided to create two subsamples representative of the convenience sample. Following the creation of two subsamples, we distributed the questionnaire to the first subsample during the first phase of data collection and to the second subsample during the second phase of data collection. Over a period of six months, we received a total of 442 responses (i.e., a response rate of 33.43%). The 41 incomplete responses were excluded while the remaining 401 complete responses were analysed. Table 1 details information about the sample characteristics.

Table 1. Sample characteristics of respondents.

Data collection

We obtained ethics approval from the Institutional Review Board (IRB) of the university chosen for this study before inviting instructors to complete the questionnaire. We encouraged participation by reimbursing each respondent with a S$20 voucher. While we collected respondents’ names and emails to issue them the vouchers and for auditing purposes, their personally identifiable information was collected through a survey separate from our study's questionnaire and therefore could not be traced back to their questionnaire responses. Separation of respondents’ confidential information from research data/questionnaire data allowed us to use incentives to increase response rates while also ensuring anonymity for research participants.

We entered the questionnaire items into Qualtrics and sent the questionnaire's link to respondents via their university emails, which we obtained from the relevant department in the chosen university and with permission from its IRB. The front page of the questionnaire contained an embedded information sheet detailing key information about our research and an informed consent form that allowed participants to indicate their consent to participate in the questionnaire by selecting the appropriate answer. Only those who indicated their consent through this step would be taken to the questionnaire. The questionnaire comprised 42 items intended to assess the importance instructors assigned to academic competencies categorised by language skills (i.e., reading, listening, writing and speaking). The categories of reading and listening each contained 11 items while the writing and speaking categories each comprised 10 items. Responses were expressed through a 6-point rating scale (i.e., 0 = not important; 1 = slightly important; 2 = moderately important; 3 = important; 4 = very important; 5 = extremely important). The last six selected-response items were questions about participants’ demographic and academic profiles. Please refer to the link for details of data and analysis in the manuscript: https://osf.io/h6sg4/?view_only=7f6caa6d764243168f184710358c1e54.

Analysis of questionnaire data

RMT was used to analyse the psychometric properties of the administered questionnaire. This choice was informed in part by the literature review: it addresses the methodological gap identified there (i.e., that most EAP needs measures were either not validated or validated via classical factor analytic approaches) as well as the research questions. The sample size was deemed adequate based on the recommendation by Linacre (1994) (i.e., 243).

Rasch measurement theory

RMT is a probabilistic measurement model that is used to measure latent variables in the social and medical sciences (Andrich & Marais, 2019). The theory is based on the idea that the probability of a person giving a certain response to an item is a function of the difference between the person's ability and the item's difficulty or in the case of this study, the difference between the person's willingness to endorse an item and the item's endorsability. Rasch analysis has been used to analyse data from questionnaires, tests and surveys. It is used to determine the quality of the measurement instrument, the quality of the data and the quality of the responses. Rasch analysis is also used to determine the reliability and validity of the measurement instrument.

Rasch analysis was selected for analysing the data from the questionnaire in this study as: (1) Rosenfeld et al. (2001) had earlier proposed an a priori structure of the questionnaire (i.e., four domains of reading, writing, listening and speaking); (2) RMT provides a unified approach to instrument design, measurement theory and data analysis; (3) RMT makes no distributional assumptions of the data; and (4) RMT allows for ordinal rating scales such as the “importance” rating scale used in this study to be analysed by presenting appropriate elements of interval measurement on a log-linear scale (Lim & Chapman, 2022). In view of the “importance” rating scale used in this study, the polytomous Rasch model expressed by Equation (1) was applied:
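For reference, a standard way of writing the polytomous Rasch model in its threshold form is sketched below. The notation is an assumption made here for illustration and may differ from the symbols the authors used in Equation (1): \(\beta_n\) is the location of person \(n\) (willingness to endorse), \(\delta_i\) is the location of item \(i\) (endorsability), \(\tau_{ki}\) is the \(k\)-th threshold of item \(i\) expressed relative to \(\delta_i\), and \(m_i\) is the maximum score of item \(i\). The empty sum for \(x = 0\) is taken to be zero.

```latex
\begin{equation}
P\{X_{ni} = x\} =
\frac{\exp\!\left( x(\beta_n - \delta_i) - \sum_{k=1}^{x} \tau_{ki} \right)}
     {\sum_{j=0}^{m_i} \exp\!\left( j(\beta_n - \delta_i) - \sum_{k=1}^{j} \tau_{ki} \right)},
\qquad x = 0, 1, \ldots, m_i .
\end{equation}
```

Under this form, the log-odds of responding in category \(x\) rather than \(x - 1\) equal \(\beta_n - \delta_i - \tau_{xi}\), so each threshold marks the point on the continuum where two adjacent categories are equally likely.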

With the intent to identify which areas of academic English in tertiary education were perceived by academics as important, RUMM2030 (RUMM Laboratory Pty Ltd, Perth, Australia) was used for the Rasch analyses, within which the following areas of the questionnaire were investigated: (1) threshold ordering, (2) item fit, (3) response dependence, (4) dimensionality, (5) item invariance and (6) targeting.

Threshold ordering

In RMT, threshold ordering refers to the property of a measurement instrument where the items are ordered in such a way that the probability of a correct or more endorsable response increases monotonically as the person's ability or willingness to endorse an item increases. Threshold ordering is an important characteristic of the measurement instrument because it ensures that the instrument is measuring an intended construct, as supported by validity evidence. The threshold parameters in the Rasch model represent the points on the latent trait continuum where the probability of a response changes from one response category to the next and hence, threshold ordering is essential for the Rasch model to work correctly and for the measurement instrument to yield valid and reliable outcomes (Andrich & Marais, 2019).
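The role of thresholds can be illustrated with a short sketch that computes category probabilities under the threshold form of the polytomous Rasch model and checks whether a set of thresholds is ordered. This is a minimal illustration under stated assumptions, not the RUMM2030 estimation routine: the function names and the threshold values are hypothetical, and thresholds are assumed to be expressed relative to the item location.

```python
import math

def category_probabilities(beta, delta, taus):
    """Return P(X = x) for x = 0..m under the polytomous Rasch model.

    beta  : person location (willingness to endorse), in logits
    delta : item location (endorsability), in logits
    taus  : list of m thresholds, expressed relative to delta (assumption)
    """
    # Exponent for category x is x * (beta - delta) minus the first x thresholds.
    exponents = [0.0]
    running_threshold_sum = 0.0
    for x, tau in enumerate(taus, start=1):
        running_threshold_sum += tau
        exponents.append(x * (beta - delta) - running_threshold_sum)
    peak = max(exponents)  # subtract the maximum for numerical stability
    weights = [math.exp(e - peak) for e in exponents]
    total = sum(weights)
    return [w / total for w in weights]

def thresholds_ordered(taus):
    """True when every threshold is strictly higher than the one before it."""
    return all(lower < upper for lower, upper in zip(taus, taus[1:]))

# Hypothetical thresholds for a six-category "importance" item (five thresholds).
ordered_taus = [-2.0, -1.0, 0.0, 1.0, 2.0]
disordered_taus = [-2.0, 0.5, -0.5, 1.0, 2.0]
probabilities = category_probabilities(beta=1.0, delta=0.0, taus=ordered_taus)
```

With ordered thresholds, each response category is the most likely one somewhere along the continuum; when two thresholds are reversed, the category between them is never the most probable response, which is the usual motivation for collapsing adjacent categories.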

Item fit

Item fit in RMT refers to the degree to which the observed data (i.e., item responses) conform to the expected response patterns predicted by the Rasch model. Fit statistics are commonly used to evaluate item fit, though it is noteworthy that there are no absolute criteria for interpreting fit statistics and their corresponding values (Andrich & Marais, 2019). The χ2 statistic, item-fit residual statistics and the item characteristic curves, all of which are provided within RUMM2030, were used to evaluate item fit.

Response dependence

Response dependence refers to the situation where the response to an item is influenced by the response to another item. This is a violation of the assumption of local independence, which is a fundamental assumption of RMT. Response dependence can reduce the evidence of change and bias the person estimates, leading to misinterpretation of actual changes due to treatments or other reasons. Therefore, response dependence should be avoided in RMT for measurement outcomes to be valid and reliable.
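One common screening step for response dependence is to correlate the standardised residuals of each pair of items and flag pairs whose correlation is high. The sketch below assumes the residuals have already been exported from the Rasch software; the function names and the toy residuals are hypothetical, and the 0.40 cut-off is taken from the value reported in the Results.

```python
import math
from itertools import combinations

def pearson(xs, ys):
    """Pearson correlation between two equal-length lists of numbers."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)

def flag_dependent_pairs(residuals, cutoff=0.40):
    """Return (item_a, item_b, r) for item pairs whose residual correlation exceeds cutoff.

    residuals : dict mapping an item label to its list of standardised
                residuals, one value per respondent (same respondent order).
    """
    flagged = []
    for item_a, item_b in combinations(sorted(residuals), 2):
        r = pearson(residuals[item_a], residuals[item_b])
        if r > cutoff:
            flagged.append((item_a, item_b, r))
    return flagged

# Hypothetical residuals for three items and five respondents.
toy_residuals = {
    "R1": [1.0, -1.0, 0.5, -0.5, 0.0],
    "R2": [0.9, -1.1, 0.6, -0.4, 0.0],
    "W1": [-0.5, 0.5, 1.0, -1.0, 0.0],
}
flagged_pairs = flag_dependent_pairs(toy_residuals)
```

In the toy data only the pair R1 and R2 is flagged, because their residuals rise and fall together beyond what the latent trait explains.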

Dimensionality

In RMT, dimensionality refers to the extent to which a measurement instrument measures a single construct or trait. If the instrument is unidimensional, the Rasch model can be used to estimate the person's ability level or willingness to endorse with greater precision. A very conservative way of evaluating dimensionality in RUMM2030 is to first divide the items into two subscales based on their loadings on the first principal component from a principal components analysis (PCA) of the residuals, and then apply a paired t-test to the person estimates obtained from the two subscales (Andrich & Marais, 2019).
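The person-level comparison behind this check can be sketched as follows. This is an illustration under stated assumptions, not the RUMM2030 routine itself: it assumes person estimates and their standard errors have already been obtained separately from the two item subsets, and the function name, the 1.96 critical value and the toy numbers are hypothetical.

```python
import math

def proportion_significant(estimates_a, errors_a, estimates_b, errors_b, critical=1.96):
    """Share of respondents whose two subscale estimates differ significantly.

    For each respondent, t = (a - b) / sqrt(se_a^2 + se_b^2), where a and b are
    the person estimates from the two item subsets and se_a, se_b their
    standard errors.
    """
    significant = 0
    for a, se_a, b, se_b in zip(estimates_a, errors_a, estimates_b, errors_b):
        t = (a - b) / math.sqrt(se_a ** 2 + se_b ** 2)
        if abs(t) > critical:
            significant += 1
    return significant / len(estimates_a)

# Hypothetical estimates (logits) for four respondents on the two subscales.
subscale_a = [0.0, 1.0, 2.0, -1.0]
subscale_b = [0.1, 1.0, 0.0, -1.2]
standard_errors = [0.5, 0.5, 0.5, 0.5]
share = proportion_significant(subscale_a, standard_errors, subscale_b, standard_errors)
```

A commonly used rule of thumb treats a share of significant tests well above about 5% as a sign that the two subsets are measuring different things; with realistic sample sizes a confidence interval around the share would normally accompany it.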

Item invariance

Item invariance refers to the property of a measurement instrument where the item parameters are invariant across different groups of people. In this study, the respondents were grouped in terms of gender, the school they taught in, age, qualification and years of teaching experience at the university (see Table 1). If the items are not invariant across different groups of people, then the instrument is said to exhibit differential item functioning (DIF), in particular, adverse DIF as opposed to benign DIF (Breslau et al., 2008).

Targeting

Targeting refers to the degree to which the items in a measurement instrument are appropriately matched to the ability level of the respondents being measured. If the items are too difficult or too easy (or too difficult or easy to endorse in the context of this study) for the intended population, the instrument may not provide sufficient information with regard to the construct measured, leading to less precise estimates of the person's ability level. In RUMM2030, the person separation index (PSI), together with the person-item distribution can be used to evaluate how well targeted the instrument is. A higher PSI (e.g., between .70 and 1.00) indicates that the instrument is more reliable and can distinguish between respondents with greater precision.
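The PSI is commonly described as the proportion of variance in the person estimates that is not attributable to measurement error. The sketch below follows that common formulation, (variance of estimates minus mean squared standard error) divided by variance of estimates. The function name and the toy values are hypothetical, and the handling of extreme scores in RUMM2030 is not reproduced here.

```python
from statistics import mean, variance


def person_separation_index(estimates, standard_errors):
    """PSI = (observed variance - mean error variance) / observed variance."""
    observed_variance = variance(estimates)  # sample variance of person locations
    error_variance = mean(se ** 2 for se in standard_errors)
    return (observed_variance - error_variance) / observed_variance


# Toy person locations (logits) and standard errors for four hypothetical persons.
toy_estimates = [-1.0, 0.0, 1.0, 2.0]
toy_errors = [0.5, 0.5, 0.5, 0.5]

psi = person_separation_index(toy_estimates, toy_errors)
print(f"PSI = {psi:.2f}")
```

Larger standard errors relative to the spread of person locations pull the index down, which is why poorly targeted items (which yield imprecise person estimates) tend to lower the PSI.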

Results

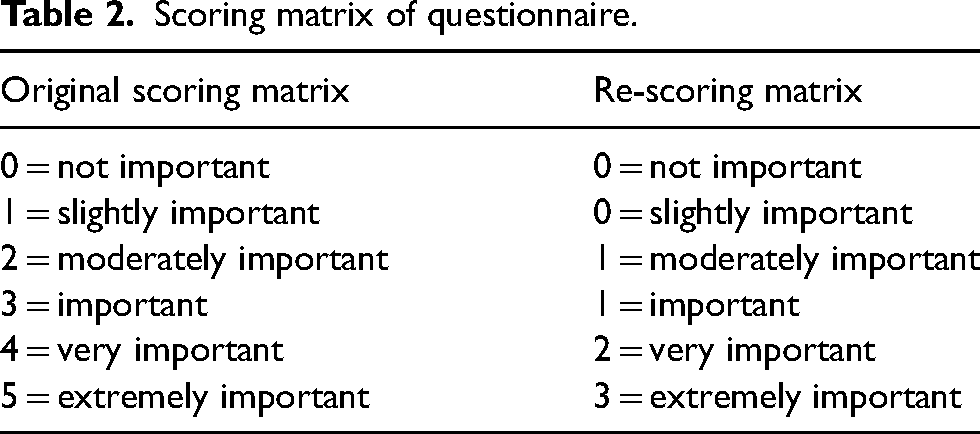

The Rasch analyses were performed progressively. All 42 FSI items were first considered as manifest variables of an overarching construct labelled “Important academic English skills in tertiary education.” This initial Rasch analysis resulted in violations of RMT. For example, all except four items displayed disordered thresholds, and the values of the item-fit residual (M = .42, SD = 2.34) and person-fit residual (M = −.50, SD = 2.32) suggested that the observed data did not conform to the expected response patterns predicted by the Rasch model. Violations of RMT remained even after adjacent categories were collapsed and all the items re-scored (see Table 2), noting that collapsing adjacent categories is one way of overcoming disordered thresholds (Andrich & Marais, 2019). As an example, the residual correlation matrix reported 15 standardised item residual pairs above 0.40, suggesting the presence of response dependency between 15 pairs of items. To resolve the violation of RMT due to response dependence, a total of 17 items that had high item-fit residuals or high standardised item residual correlations, and that were perceived as similar to other items, were disregarded (see Table 3).

Scoring matrix of questionnaire.

Items disregarded and similar items retained.

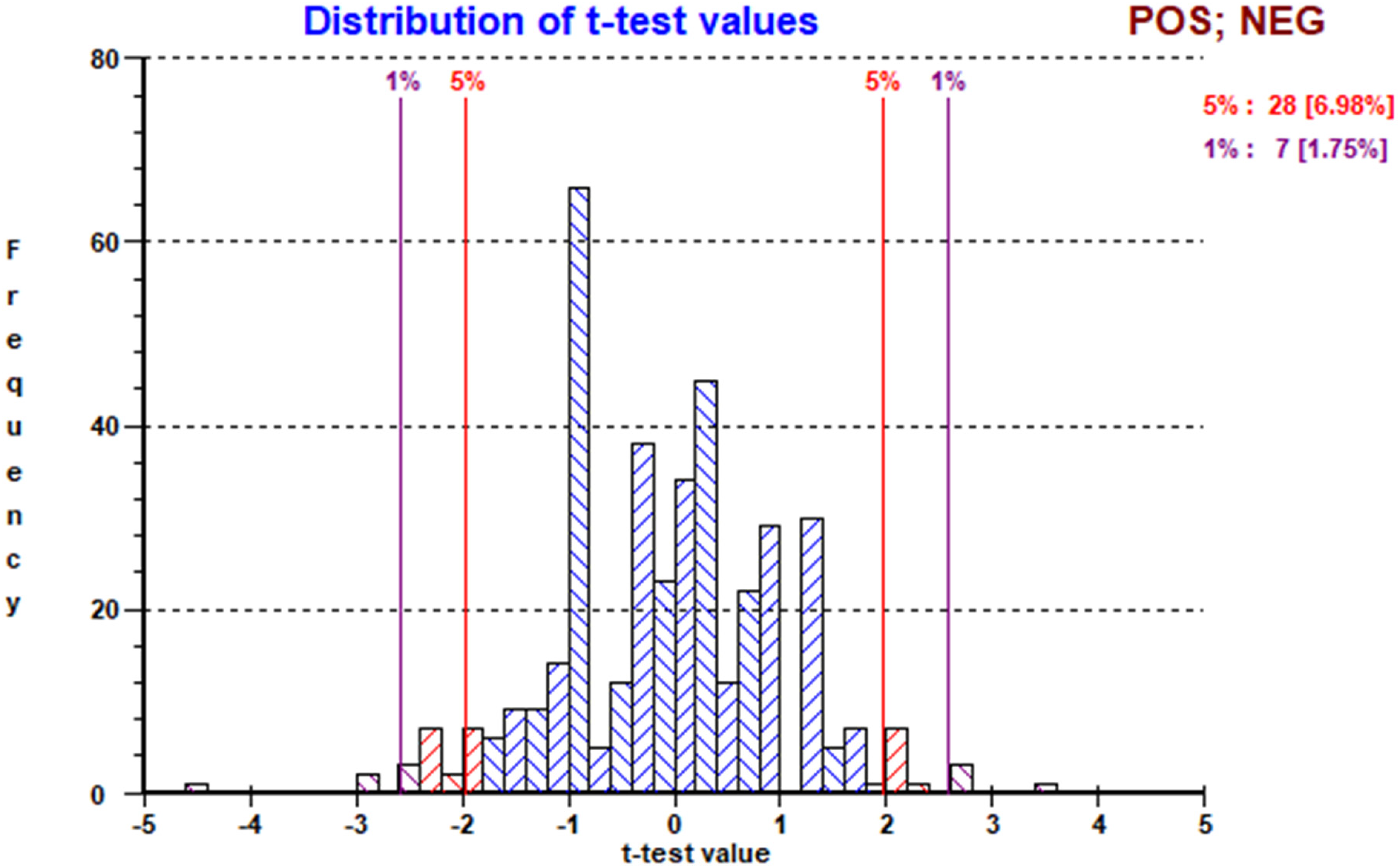

Despite collapsing categories to re-score the items and disregarding poor fitting items and items displaying response dependency, the Rasch analysis did not support unidimensionality. Figure 1 shows, based on the approach of applying a paired t-test to two subscales derived from the first principal component in RUMM2030, that 17.71% of respondents, well above the 5% critical value, showed a significant difference between the person locations based on the two subscales. Hence, it cannot be concluded that all the remaining 25 items could be regarded as manifest variables of an overarching construct.

Paired t-test result of two subscales derived from first principal component of initial Rasch analysis.

Subsequently, each domain (i.e., reading, writing, listening, and speaking) was analysed separately. Considering the a priori structure proposed by Rosenfeld et al. (2001) and to determine the presence of a general factor, a subtest analysis was also performed, similar to a bifactor analysis. The following sections present the results of the separate Rasch analyses performed, based on threshold ordering, item fit, response dependence, dimensionality, item invariance and targeting.

Reading

Comprising 11 items originally, the “reading” skill was reduced to five items after the initial Rasch analysis found six items that violated assumptions of the RMT (i.e., disordered thresholds, high standardised item residual correlations and high item-fit residuals). The Rasch analysis on the remaining five items yielded sound psychometric properties, suggesting that reading, as perceived by academics for tertiary education students, could be represented by the skills identified through the items. The absence of disordered thresholds, the non-significant item–trait interaction χ2 statistic (χ2 (25, n = 401) = 29.44, p = .25), and the item and person-fit residual statistics (M = .37, SD = .75; M = −.28, SD = .96) indicated a fit between the observed responses and the Rasch model. There was also no evidence of response dependence, based on the standardised item residual correlation matrix that reported no values greater than 0.30 (Andrich & Marais, 2019).

The analysis also found evidence to support unidimensionality of this “reading” factor, as only 3.99% of respondents showed a significant difference between the person locations based on the two subscales derived from the first principal component. There was also no evidence of differential item functioning across all categories (i.e., gender, age, school the respondents taught with, qualification and years of teaching experience in the university in this study) and hence, item invariance was achieved. The items within this skill were also adequately targeted, with a PSI of .69.

Writing

This skill comprised eight items after re-scoring and removing three items due to the issue of high standardised item residual correlation. The absence of disordered thresholds upon collapsing adjacent rating categories, the non-significant item–trait interaction χ2 statistic (χ2 (40, n = 401) = 55.61, p = .05), and the item and person-fit residual statistics (M = .15, SD = 1.20; M = −.45, SD = 1.50) indicated a fit between the observed responses and the Rasch model. Response dependence was also not detected as the standardised item residual correlation matrix reported no values greater than 0.30. This skill was also unidimensional, based on the paired t-test on the two subscales derived from the first principal component, with only 3.99% of respondents showing a significant difference between the person locations. Only one of the eight items (i.e., item 20, “Demonstrate a command of standard written English, including grammar, phrasing, effective sentence structure, spelling and punctuation”) displayed DIF by age group, though this was judged to be benign DIF (Breslau et al., 2008) as the item characteristic curve (Figure 2) did not suggest any systematic difference between age groups. Items within this skill were also well-targeted, with a PSI of 0.83.

Item characteristic curve of item WL020.

Speaking

This skill comprised five items, after five items were disregarded due to violations of the RMT (i.e., disordered thresholds, high standardised item-residual correlations and high item-fit residuals). The Rasch analysis on the remaining five items found: (1) a non-significant item–trait interaction χ2 statistic (χ2 (25, n = 401) = 34.82, p = .09); (2) ordered thresholds for all items; and (3) acceptable item- and person-fit residual statistics (M = −.19, SD = 1.94; M = −.41, SD = 1.04). All these suggested that the observed responses fit the Rasch model. In addition, there was no evidence of response dependency as the standardised item residual correlation matrix reported no values greater than 0.30. This speaking skill was also considered unidimensional as only 2.00% of respondents showed a significant difference between the person locations based on the two subscales derived from the first principal component. While all the items within this skill were well targeted with a PSI of 0.83, two items (i.e., 25d, “Demonstrate facility with standard spoken English including grammar, word choice, fluency and sentence structure while giving and supporting opinions”, and 25g, “Demonstrate facility with standard spoken English including grammar, word choice, fluency and sentence structure while explaining or informing”) reported DIF by the school respondents taught with and by age category respectively (see Figures 3 and 4).

Item characteristic curve for item 25d.

Item characteristic curve for item 25g.

The DIF for 25d was considered benign as it was within reasonable expectation that respondents (i.e., academics) from the School of Science & Technology presented a lower probability of endorsing this item, as the programmes this school offered were largely related to the hard sciences that involve quantifiable and measurable phenomena. This is in contrast to the programmes related to the social sciences offered by the School of Humanities & Behavioural Sciences, which often require students to give and support opinions. The DIF presented by item 25g was also assessed as benign, as this item was about “explaining or informing” using proper grammar, and it was reasonable to expect that those aged 55 and above would present a lower tendency to endorse this item as important. This expectation could reflect the fact that the youngest of this age group (i.e., those born in 1968 and aged 55 as of 2023) would have completed their general education by 1988 at the latest, and it was only in the 1980s that vernacular schools dwindled, and by 1987 all Singapore schools taught English as a first language (Ho, 2016). This being the case, it is within reasonable expectation that some of the respondents in this age group received their education in vernacular schools that might not have emphasised the proper use of English grammar.

Listening

This skill comprised seven re-scored items after four items were disregarded (i.e., items 26, 29, 35 and 36) due to high standardised item residual correlation values that suggested response dependency. The Rasch analysis on these seven items found a significant item–trait interaction χ2 statistic (χ2 (35, n = 401) = 68.16, p < .05). Nonetheless, noting the minimum sample size recommended by Andrich and Marais (2019) (i.e., 10 persons for each threshold), and that the χ2 statistic is sensitive to sample size, the sample was adjusted to 275 using the algebraic option in RUMM2030, well above the minimum required sample of 210 in this case. Using the updated sample size, the analysis yielded a non-significant item–trait interaction χ2 statistic (χ2 (35, n = 275) = 49.98, p = .05). Along with the ordered thresholds on all the items, and the acceptable item and person-fit residual statistics (M = −.31, SD = 1.90; M = −.47, SD = 1.30), it was concluded that the observed responses fitted the Rasch model.
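The minimum sample of 210 follows from the rule of 10 persons per threshold. A small sketch of the arithmetic is given below; the figure of three thresholds per item is an inference from 210 / (10 × 7 items) and is not stated explicitly in this section, and the function name is hypothetical.

```python
def minimum_sample_size(n_items, thresholds_per_item, persons_per_threshold=10):
    """Minimum sample under a 'persons per threshold' rule of thumb."""
    return n_items * thresholds_per_item * persons_per_threshold


# Listening: seven re-scored items; three thresholds per item is an assumption
# inferred from the reported minimum of 210.
listening_minimum = minimum_sample_size(n_items=7, thresholds_per_item=3)
adjusted_sample = 275  # adjusted sample size reported in the text

print(listening_minimum, adjusted_sample >= listening_minimum)
```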

No response dependency between the items was detected, based on the standardised item residual correlation matrix. While there was also no DIF across all categories, there was a minor violation of unidimensionality as the paired t-test indicated that the estimates from the two subscales derived from the first principal component were different for 6.98% of the persons (see Figure 5). As this was a very conservative approach to determining dimensionality (Andrich & Marais, 2019), it could be concluded that the seven items within this skill represented manifest variables of a unidimensional construct.
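One way to gauge how minor such a violation is would be to place a confidence interval around the observed proportion and check whether it includes 5%. The sketch below does this with a normal-approximation binomial interval. It is offered as an illustration only: the source does not state that this check was performed, the function name is hypothetical, and the counts (28 of 401 for listening, 71 of 401 for the initial 25-item analysis) are inferred from the reported percentages.

```python
import math


def proportion_ci(successes, n, z=1.96):
    """Normal-approximation 95% confidence interval for a proportion."""
    p = successes / n
    half_width = z * math.sqrt(p * (1.0 - p) / n)
    return p - half_width, p + half_width


# Listening: 28 of 401 persons (about 6.98%) with a significant difference.
listening_lower, listening_upper = proportion_ci(28, 401)

# Initial analysis of all remaining items: 71 of 401 persons (about 17.71%).
initial_lower, initial_upper = proportion_ci(71, 401)

print(f"listening: {listening_lower:.3f} to {listening_upper:.3f}")
print(f"initial:   {initial_lower:.3f} to {initial_upper:.3f}")
```

Under these assumptions the listening interval includes 0.05 while the interval for the initial analysis lies entirely above it, which is consistent with treating the former as a minor violation and the latter as clear multidimensionality.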

Paired t-test result of two subscales derived from first principal component of listening factor.

Subtest analysis

To determine if each of the 25 items (i.e., five on reading, eight on writing, five on speaking and seven on listening) was influenced by a general factor, a subtest analysis was performed in RUMM2030, noting that a subtest analysis presents a bifactor equivalent solution (An & Yu, 2021). The subtest analysis was performed by sub-testing the items from each factor, forming four subtests in all. The proportion of common variance retained arising from the subtest analysis was 0.91. This suggested that it was tenable to also consider all 25 items as manifest variables of a general factor and use a total sum score, apart from the individual sum scores of each factor. As assessment surfaced as a common phenomenon based on the skills reflected by all 25 items, it was concluded that labelling this general factor as “importance of EAP skills for university assessment” would be appropriate, with the subtests, from least to most endorsable (as important skills), being reading (ST1), listening (ST4), writing (ST2) and speaking (ST3) (see Figure 6). This suggests that the respondents rated speaking as the most important academic English skill for university assessment, and reading as the least; this was within expectation, as most of the university assessments were weighted significantly more heavily towards assignments that required speaking (e.g., presentation) and writing (e.g., essay) proficiencies.

Subtest analysis item map.

Discussion and practical implications

In this study, Rasch analyses were undertaken to address the two specified research questions: (1) to what extent does the FSI yield valid and reliable measures? (2) are there differences in how university instructors perceive the importance of EAP sub-skills based on the FSI? As described, the Rasch analyses performed on the original FSI found items that violated assumptions of the RMT. Based on the analyses, the questionnaire was revised to a 25-item questionnaire (see Supplemental Annex B) that represented the four skills perceived by academics as important for tertiary education, as proposed by Rosenfeld et al. (2001); it can be concluded that the revised 25-item FSI (FSI-R) would yield valid and reliable measures across the four EAP sub-skills (i.e., reading, writing, speaking and listening). As presented in Table 4, the analyses also identified the most and least endorsable items per skill, just as the subtest analysis identified the most and least endorsable skills shown in Figure 6. With this information, institutions could focus on speaking, writing, listening and reading skills in that order, based on perceived importance. It is worth mentioning that no significant differences in the perceived importance of academic English skills were found across subjects, which might challenge the common claim in the literature that there are subject-specific expectations (Dou et al., 2023; Hyland, 2009). This could be due to beliefs shared among the instructors in the context of the current study about which academic English skills lay the basic foundation for the further development of academic literacy in different subjects.

Most and least endorsable items per skill.

The items in Table 4 suggest skills or areas relevant to assessment at the university level. Based on these, academics can prioritise skills such as understanding assignment instructions, supporting ideas with reasons and explaining concepts over synthesising ideas or describing objects. Taking a written assignment such as a standardised test for example, it would be reasonable for academics to expect that it would be most important for students to be able to read and understand assignment instructions (item 5), failing which, the ability to synthesise ideas across texts (item 11) might be futile, for the effort undertaken for this could come to nought, if the student did not understand the assignment instructions clearly. In terms of writing, it is also within character that academics view the use of relevant reasons and examples to support a position or idea (item 18) as most important, compared with students being concerned about audience needs (item 13) in a written assignment, since all markers are required to follow a marking scheme or rubric, and all marking requires rigorous standardisation and moderation processes.

At the university level, it is within reasonable expectation that students have the ability to articulate and explain (as represented by items 25g and 30), rather than just describe an object (item 25c). Hence, the Rasch analyses found that academics identified item 25g as most important (endorsable) as opposed to item 25c within the speaking factor; this is consistent with the desired outcomes of education stipulated by the Singapore Ministry of Education for post-secondary education (i.e., students should be able to think critically and communicate persuasively at the end of post-secondary education) (Singapore Ministry of Education, n.d.). In this regard, EAP courses should emphasise critical skills such as understanding main ideas in speaking and supporting arguments in writing.

As part of the Rasch analyses, no item-level adverse DIF was found within the FSI-R. This suggested that there were no differences in how instructors across the schools sampled viewed the importance of the four EAP sub-skills, which addressed research question (2). While there was slight DIF for the reading skill at the subtest level (see Figure 7), this DIF was judged to be benign, as it was within expectation that instructors from the School of Humanities & Behavioural Sciences would tend to perceive the reading skill as more important than instructors from other schools, noting that the reading skill comprised items such as “determine the basic theme (main idea) of a passage” and “synthesise ideas in a single text and/or across texts.”

Item characteristic curve for reading skill.

There are several practical implications based on what was analysed and discussed. Firstly, there are benefits of offering and assessing academic English courses addressing one or more of the four EAP skills identified (i.e., reading, writing, speaking and listening) (Galaczi, 2018). Despite the common belief that each skill has its unique benefits and plays a crucial role in learning processes, where there are resource constraints, universities could consider focusing, in order of perceived importance, on speaking, writing, listening and reading, for the purpose of training students to complete assessments. Provision for each skill could also be designed around the items that instructors perceived as most and least important.

At a more micro level, information from Table 4 can be used to design and emphasise areas of academic English courses. To begin with, prior research found that speaking skills were much more valued by university instructors than by students (who speak English as the first language) at both the undergraduate and the graduate level (Huang, 2013). The current study echoed the perceived importance of speaking by the university teaching staff who consistently pointed out the significance of speaking skills expected from their students (who are multilingual speakers with English as the first language). Specifically, understanding the main ideas and the supporting information in speaking is considered the most important aspect, and any EAP speaking course should ensure the training of this aspect to help students meet the expectations of the disciplinary instructors in the university. Similarly, for a writing course that also aims to cultivate students’ skills to produce content, the training could focus more on the use of relevant reasons and examples to support a position or idea, along with more opportunities for students to practise it. The importance of argumentative writing has been pointed out among second-language speakers of English (Hirvela, 2017), but the current study shows that the ability to write good arguments with supporting ideas is also perceived to be the most significant writing skill by the faculty members in an English-medium university with first-language speakers of English who have multilingual backgrounds. Hence, it is critical to ensure the topic of building arguments is well covered in all the EAP writing provisions across subjects at the university level. In addition, based on Table 4, EAP provisions about listening could focus more on explaining or informing than describing objects, supporting previous findings about instructors’ perceptions among international students (Furka, 2024).
Our study examining faculty members’ perceptions among domestic students in the English-medium university echoes the importance across various subjects in a multilingual context. Regarding reading skills, our findings suggest that students’ reading comprehension of assessment instructions is perceived to be the most critical, which can directly shape student performance in various assessments across disciplines. This indirectly confirms the finding in the literature that misconceptions about what content instructors expect of students in terms of academic English skills were recognised as a common learning challenge faced by students at the tertiary education level, leading to misinterpretations of assessment requirements on students’ part (Booth, 2005; Itua et al., 2014). However, the repeated emphasis on assessment performance by the subject instructors does not seem to be highlighted in the literature, and it requires more attention when it comes to the EAP training of students’ reading skills, especially in contexts where assessments are highly valued, such as Singapore. This could be due to cultural differences, since in exam-oriented contexts instructors could readily interpret student performance in assessments as a demonstration of the skills (or the lack of them).

As with all research, there are some limitations in this study. Despite the sampling approach, there were relatively more respondents from the Schools of Business and Humanities & Behavioural Sciences. While Rasch analyses are sample independent, a more balanced sample (i.e., equal number of instructors from each school) could present more precise item-level estimates and measures, and enable a more robust sub-group analysis. Further, it would have been useful to gain access to data with more granularity, specifically, at the programme and even course level; the data used for this study were at the school level. It is recommended that with more granular data, information from the FSI-R could be interpreted at the programme or course level; EAP provisions could then be more targeted. As instrument validation is a never-ending process, it is recommended that a qualitative approach involving interviews with the respondents be undertaken subsequently to corroborate the findings of this study. Following the qualitative approach, the instrument can be refined and deployed regularly (e.g., triennially) to assess whether the most and least endorsable (important) EAP skills remain the same. With this information, EAP courses can then be further refined to better meet the learning needs of students.

Conclusion

This study was intended to investigate the extent to which the FSI yields valid and reliable measures of EAP sub-skills as perceived by university instructors, and to identify any differences in how university instructors perceive the importance of EAP sub-skills. The Rasch analyses undertaken confirmed the four EAP skills, proposed by Rosenfeld et al. (2001), that instructors across subjects perceive as important for students’ university studies. In this regard, the validated instrument (i.e., FSI-R) would be able to provide insights into the development of EAP support in English-medium universities for both domestic and international students. While there was no adverse DIF and hence no differences between how instructors from different schools perceived the importance of the EAP sub-skills, the FSI-R presents, at the item level for each sub-skill, which item is perceived as more important. This would be useful for designing or revising EAP provisions. For instance, the reading skill perceived as most important by the instructors, understanding written instructions of assessments, should be emphasised in the guidance from both the EAP trainers and the subject instructors in the university. In terms of writing skills, the use of relevant reasons and examples to support a position or idea has always been considered a significant aspect of university learning across disciplines (Hirvela, 2017). However, most students were found to struggle with this skill (Hirvela, 2013; Miller & Pessoa, 2016), and unfortunately the subject instructors might not be well equipped to provide specialised support to students in their subjects (Pessoa et al., 2017). This calls for continuous training within the EAP support provided by the EAP trainers in the university, so that students can be sufficiently prepared to meet the expectations of their academic studies in the disciplines.
Similarly, the perceived importance for students to listen for clear explanations and information echoed the previous study examining faculty instructors’ perceptions about international students (Furka, 2024); the current study extended the findings to the domestic students in the English-medium university who are also multilingual speakers. Last but not least, instructors in the present study appear to value the speaking skill of understanding the main ideas with the supporting information most, which was not evidently shown in the limited prior research, likely due to the previous study's focus on the presentation skills rather than speaking skills in general (Mak, 2021). Based on the current study's findings about the university instructors’ comprehensive perceptions of important skills in academic reading, writing, listening and speaking across subjects, it is imperative to take those insights into account to develop more suitable EAP support to facilitate students’ academic success across disciplines in the English-medium universities.

Supplemental Material

Supplemental material, sj-docx-1-pac-10.1177_18344909241305749 for The validation of a measure to identify academic English skills that matter for tertiary education by Lyndon Lim and Wenjin Vikki Bo in Journal of Pacific Rim Psychology

Footnotes

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship and/or publication of this article: This work was supported by the Singapore Ministry of Education Start-up Research Funding (grant number RF10018L).

Supplemental material

Supplemental material for this article is available online.

References
