Abstract

Higher education institutions in the UK have organised into mission groups for the advocacy of shared interests and ideologies. Although research productivity is claimed as a key point of difference between these groups, this claim has received relatively little empirical scrutiny. The current study examined the clustering of UK universities based on the research productivity of academic psychologists. It found evidence for the Russell Group’s (RG’s) claim that it represents leading, research-intensive universities, at least with respect to the discipline of psychology. Productivity metrics of a representative sample of 1339 academic psychologists were extracted from the Scopus and Scimago databases and were averaged to derive department-level productivity indicators. Results from cluster analysis provided evidence in favour of the RG’s research superiority claim. Cluster-level averages of the cluster comprising RG universities were approximately 50–300% higher than those of the cluster comprising non-RG universities. As anticipated, the universities of Oxford and Cambridge surpassed all others to form separate clusters representing an ‘elite’ within the RG.


Universities are major producers of scientific knowledge (Youtie and Shapira, 2008). A university’s research performance impacts its capacity to secure funding, influences its national and international profile and rankings and helps drive student demand for its degree programmes. In this climate, the assessment of research productivity matters (McNally, 2010). Among the available measures of productivity, ‘objective’ bibliometric indicators such as publication counts, citation counts and the h-index are used most extensively (Sabharwal, 2013). Another bibliometric indicator of research quality is the Scimago Journal Rank (SJR). The calculation of a journal’s SJR takes into account both the quantity and influence of citing sources (unlike the Impact Factor, which only considers quantity), and higher SJRs reflect greater journal prestige (Falagas et al., 2008). From this, it is reasonable to assume that higher quality research tends to be published in journals with higher SJRs. Using cluster analysis, such metrics can be used to identify commonalities and differences between universities and university departments.
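For readers unfamiliar with the h-index mentioned above, the following minimal sketch illustrates its standard definition: the largest h such that h of a researcher’s papers have each received at least h citations. The citation counts are hypothetical and are not drawn from the study data; Scopus reports this value directly, so the function is illustrative only.

```python
def h_index(citations):
    """Return the largest h such that h papers have at least h citations each."""
    ranked = sorted(citations, reverse=True)  # most-cited paper first
    h = 0
    for rank, cites in enumerate(ranked, start=1):
        if cites >= rank:
            h = rank  # the top `rank` papers all have >= `rank` citations
        else:
            break
    return h


# Hypothetical per-paper citation counts for one academic (not study data).
example_citations = [25, 8, 5, 3, 3, 1, 0]
example_h = h_index(example_citations)
```

Because the h-index depends on both the number of papers and their citations, it cannot exceed the publication count, which is one reason it is usually reported alongside, rather than instead of, raw publication and citation counts.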

Psychology is a research-intensive discipline (American Psychological Association, 2010). Research forms the backbone of psychology, and psychologists, both with clinical and non-clinical expertise, are encouraged to continuously engage in research to develop evidence-based strategies for solving human problems. Relatedly, the higher education sector in the UK promotes research-informed learning in the classroom. As a result, research skills and statistical awareness have emerged as an instrumental part of the psychology curriculum (Robertson et al., 2011). To further enhance the quality and quantity of research, funding bodies are increasingly injecting funds into psychological research. For example, UK Research and Innovation has invested more than £140 million in the last 5 years to fund mental health research (https://www.ukri.org). The expectation to continually engage in research is particularly high in academia, where the career success of academic psychologists hinges on their research performance, which is frequently quantified using bibliometric indicators like publication counts, citation counts and h-index scores. Bibliometric research productivity ‘norms’ for academic psychologists at different levels of seniority at different types of higher education institutions in the US (Joy, 2006) and Australia (Haslam et al., 2017; Mazzucchelli et al., 2019) have been published in recent years. However, in the UK, the research productivity of academic psychologists remains a relatively under-researched topic (McNally, 2010).

As in the United States and Australia, universities in the UK are organised into ‘mission groups’ to advance shared interests and ideologies (Filippakou and Tapper, 2019: 87). Among these groups, the Russell Group (RG) represents a group of elite universities which claim to be ahead of others in terms of research volume and quality (Gilbert, 2008). A recent study by Boliver (2015) examined the classification of UK universities in relation to this assertion. Using cluster analysis, Boliver assessed the clustering of UK universities by examining the quality of research, teaching, economic resources, academic selectivity as well as the socioeconomic student mix. It was reported that RG universities came together to form a cluster representing high research output of ‘international excellence’. This was consistent with their 2008 RAE (Research Assessment Exercise) scores. Notably, Boliver found that two RG universities, Oxford and Cambridge, formed a separate cluster owing to exceptional research performance surpassing the rest of the RG. This finding corresponds with Wakeling and Savage’s (2015) claim that even within the elite RG, a ‘golden’ class comprising these two universities exists. Similarly, Adams and Gurney (2010) reported that academics from golden triangle institutions (comprising University of Oxford, University of Cambridge, University College London, London School of Economics and Imperial College London) were co-authors on 43% of the highest impact papers with UK affiliations published between 2002 and 2006. During that time, the University of Cambridge alone was listed as an affiliation on 15% of all UK research outputs. To complement this, the Universities of Oxford and Cambridge have been at the forefront in receiving quality-based research funds in the UK (Times Higher Education, 2015). Finally, the recent 2021 Research Excellence Framework exercise also indicates that Oxford and Cambridge are likely to be the first and third in terms of market share percentage of quality-based funding (Times Higher Education, 2022).

Within the discipline of psychology, the evidence supporting claims that ‘elite’ institutions demonstrate the greatest levels of research productivity is more mixed. In the US, Joy (2006) observed only small productivity differences between psychology academics in ‘elite’ and ‘strong’ research universities. In Australia, Mazzucchelli et al. (2019) observed clear productivity differences between psychology academics within and outside of the ‘Group of Eight’, with the magnitude of these differences decreasing as seniority increased. In the UK, Lai et al. (2022) observed consistent differences between the productivity levels of psychology academics within and outside of the RG, which were due primarily to the very strong research performance of the RG professoriate. At lower academic levels, differences were more modest, and mostly non-significant. To our knowledge, an analysis akin to Boliver’s (2015), but at the psychology discipline level and focused solely on research performance, has never been undertaken.

Therefore, the aims of this study were to use cluster analysis to (a) examine the grouping of UK universities based on the research performance of their psychology departments and, in doing so, (b) assess the research-intensity claims of the RG. We hypothesised that the RG universities would cluster together (H1), with the exception of the Universities of Oxford and Cambridge, which would form a separate cluster (H2).

Methods

Design and data sources

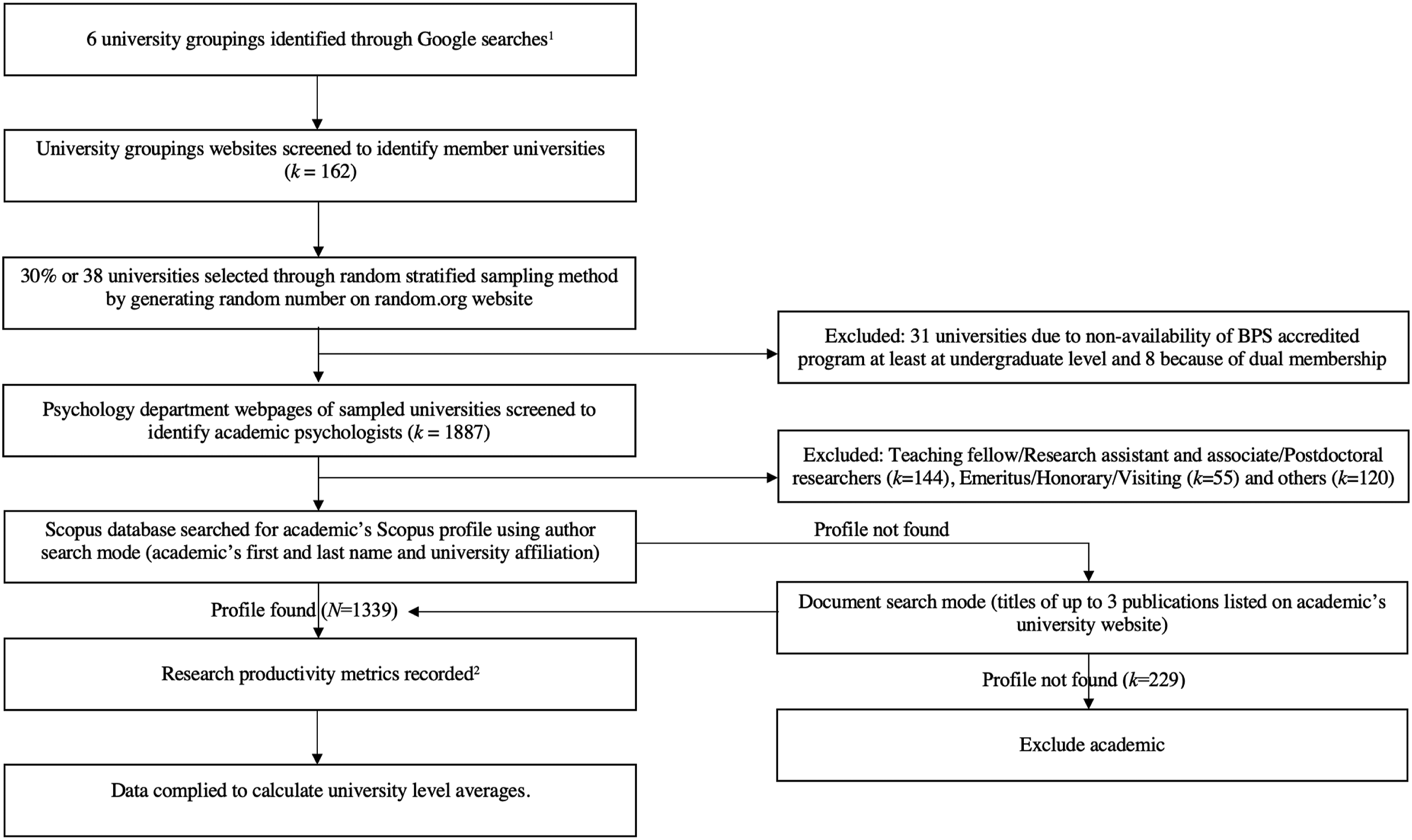

In this exploratory pre-registered study (https://osf.io/e93v5/), the cluster analysis was based on five indicators of research productivity: lifetime publications, lifetime citations, lifetime h-index scores, lifetime first author publications and the SJRs of the journals in which the academics had published. This information was extracted from the Scopus and Scimago databases between May and June 2020 for all psychology academics with Scopus profiles employed at lecturer level or above (excluding emeritus, honorary, adjunct and visiting professors; N academics = 1339) for a stratified random sample of 30% of UK universities (N universities = 38) within each university mission group (Russell Group, Cathedrals Group, GuildHE, MillionPlus and University Alliance) as well as a random sample of 30% of unaffiliated UK universities, many of which were once members of the disbanded 1994 Group. Figure 1 presents the flow diagram of the full data collection process. In situations where we were unable to determine an academic’s job title from their university web profile, we searched ResearchGate, Google Scholar and LinkedIn. If these searches were unsuccessful, we ran a Google search of the academic’s name and university affiliation. If we were unable to determine their job role within the first two pages of search results, they were excluded from our sample. After each academic psychologist who met our inclusion criteria was identified, their research productivity metrics were retrieved from their Scopus profile. Prior to the collection of any data, this study was approved by the School of Psychological Science Human Research Ethics Committee at the University of Bristol (Approval Code: 102862).

Figure 1. Flow diagram showing the data collection process.
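The step from academic-level metrics to department-level indicators can be sketched as a simple group-wise mean. The records, university labels and field names below are hypothetical placeholders, not the study data, and the sketch assumes each indicator is averaged with equal weight per academic.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical field names for the five productivity indicators.
INDICATORS = ("publications", "citations", "h_index", "first_author", "sjr")

# Hypothetical academic-level records (not study data).
academics = [
    {"university": "A", "publications": 40, "citations": 900,
     "h_index": 15, "first_author": 12, "sjr": 1.8},
    {"university": "A", "publications": 20, "citations": 300,
     "h_index": 9, "first_author": 6, "sjr": 1.2},
    {"university": "B", "publications": 10, "citations": 80,
     "h_index": 4, "first_author": 5, "sjr": 0.7},
]


def department_means(records):
    """Average each indicator over all academics at the same university."""
    by_university = defaultdict(list)
    for record in records:
        by_university[record["university"]].append(record)
    return {
        university: {name: mean([row[name] for row in rows])
                     for name in INDICATORS}
        for university, rows in by_university.items()
    }


dept_means = department_means(academics)
```

Each university then contributes one row of five averaged indicators to the cluster analysis, regardless of how many academics it employs.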

Data analysis

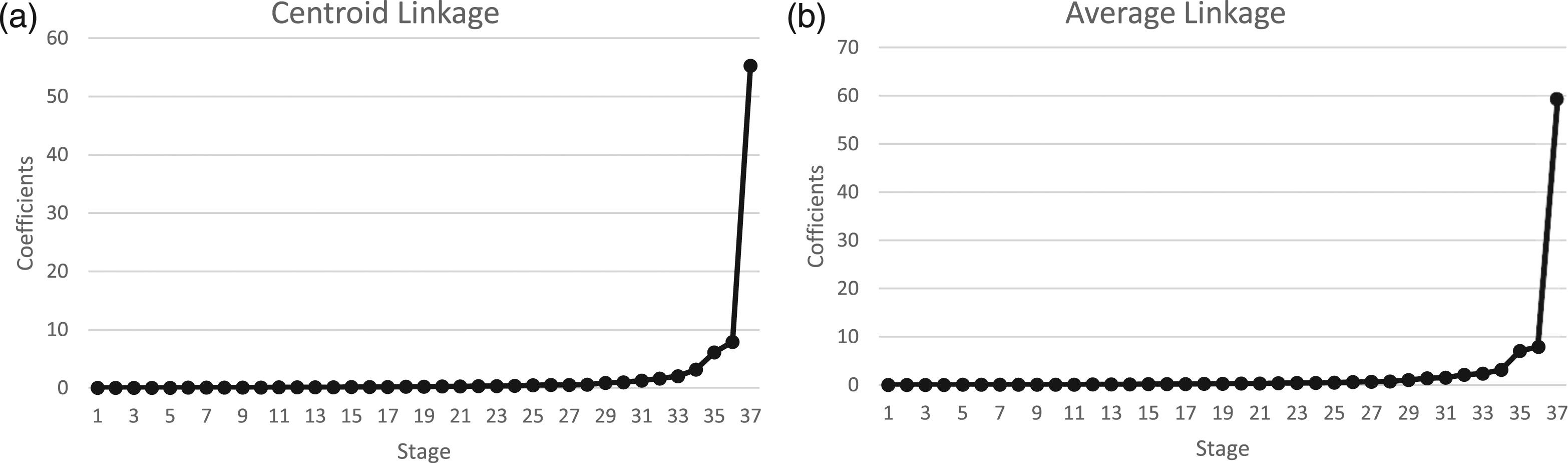

A hierarchical agglomerative cluster analysis using the centroid and average linkage methods, based on squared Euclidean distance (Yim and Ramdeen, 2015), was performed to (a) classify the universities into clusters based on the five research productivity indicators and (b) identify the optimum number of clusters with high within-cluster homogeneity and between-cluster heterogeneity. Prior to analysis, the indicators were standardised, since they were measured on different metrics. The agglomeration schedule was used to identify an ideal ‘elbow point’ or cut-off point by examining, through a scree plot, when the difference between the coefficients of two consecutive stages increased suddenly (indicating an increase in heterogeneity). Further, the dendrogram was used to verify the ‘elbow point’ and identify cluster members.
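The procedure described above can be sketched in a few lines. This is an illustrative, unoptimised implementation of the average linkage variant only, written under stated assumptions: the six departments and two indicators are hypothetical, standardisation uses the population standard deviation, and the ‘elbow’ is taken as the largest jump between consecutive agglomeration coefficients. It is not the software or the exact settings used in the study, and a statistical package would normally be preferred in practice.

```python
from itertools import combinations
from statistics import mean, pstdev


def standardise(rows):
    """Convert each column to z-scores so indicators share a common scale."""
    columns = list(zip(*rows))
    means = [mean(col) for col in columns]
    sds = [pstdev(col) for col in columns]
    return [[(v - m) / s for v, m, s in zip(row, means, sds)] for row in rows]


def sq_euclidean(a, b):
    """Squared Euclidean distance between two points."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def average_linkage(points):
    """Return the agglomeration schedule as (cluster_a, cluster_b, coefficient)."""
    clusters = [[i] for i in range(len(points))]
    schedule = []
    while len(clusters) > 1:
        best = None
        for (i, a), (j, b) in combinations(enumerate(clusters), 2):
            # Average of all pairwise distances between the two clusters.
            d = mean([sq_euclidean(points[p], points[q]) for p in a for q in b])
            if best is None or d < best[0]:
                best = (d, i, j)
        d, i, j = best
        schedule.append((clusters[i], clusters[j], d))
        merged = clusters[i] + clusters[j]
        clusters = [c for k, c in enumerate(clusters) if k not in (i, j)]
        clusters.append(merged)
    return schedule


def elbow_clusters(schedule):
    """Number of clusters remaining just before the largest coefficient jump."""
    coeffs = [coefficient for _, _, coefficient in schedule]
    jumps = [later - earlier for earlier, later in zip(coeffs, coeffs[1:])]
    stage = jumps.index(max(jumps))   # 0-based stage preceding the jump
    n_cases = len(schedule) + 1
    return n_cases - (stage + 1)      # one cluster is lost per completed stage


# Hypothetical department-level means on two indicators (not study data).
raw = [[10, 1.0], [12, 1.1], [11, 0.9], [30, 2.0], [32, 2.1], [31, 1.9]]
schedule = average_linkage(standardise(raw))
n_clusters = elbow_clusters(schedule)
```

In this toy example the first four merges join similar departments at small coefficients, and the final merge forces two dissimilar groups together, so the scree-style rule retains two clusters.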

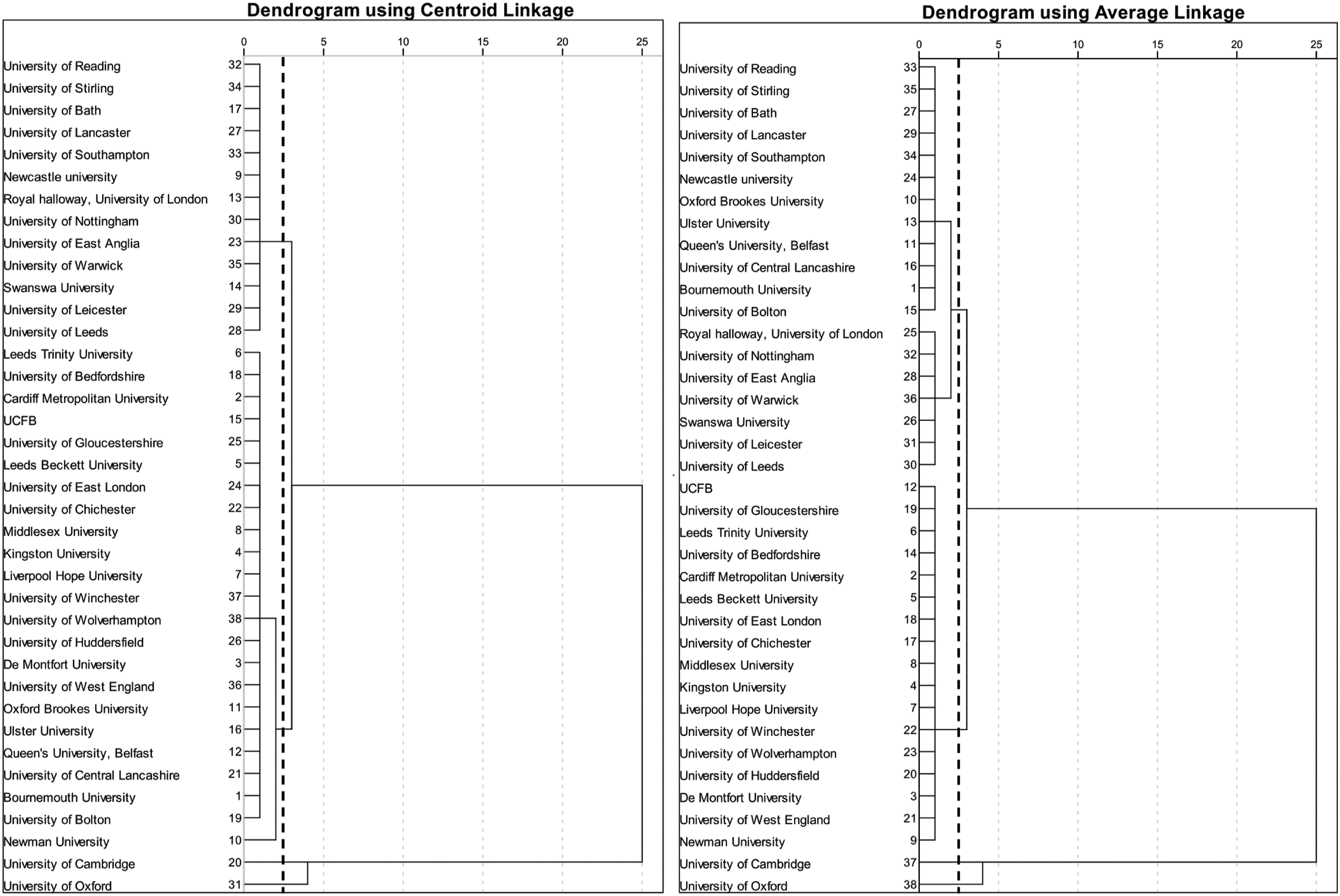

Results

Clustering started with each university representing a cluster, such that the dissimilarity accounted for by each cluster was 100%. Then, individual clusters (universities) fused in several stages to ultimately form one cluster, reducing the dissimilarity accounted for by each to 0%. At the ‘elbow point’, a further reduction in the number of clusters led to a drastic fall in the dissimilarity accounted for by the clusters (Boliver, 2015). To ensure robustness, we checked the convergence of our findings using two clustering methods: the centroid and average linkage methods. Since there were no notable differences between the clustering produced by the two methods, the results discussed herein pertain to the centroid method, wherein the mean value (centroid) for each cluster is computed, and cases are fused based on their distance from this centroid. The scree plots and dendrograms for both methods are presented in Figures 2 and 3, respectively.

Figure 2. Scree plots showing the change in coefficients by stage.

Figure 3. Dendrograms showing the solution of cluster analysis.

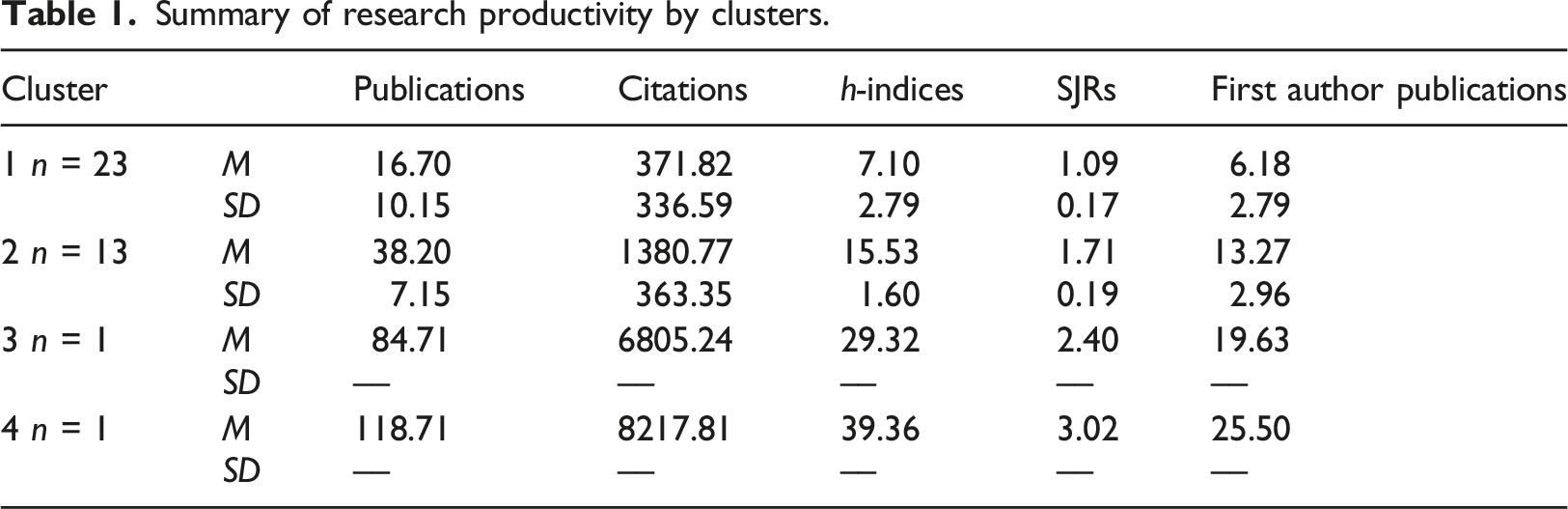

Table 1. Summary of research productivity by clusters.

Clusters three and four were the Universities of Cambridge and Oxford, respectively. Cluster two comprised five of the six RG universities (83.3%) and eight unaffiliated universities (including former members of the 1994 Group). Cluster one represented a group of universities with below-average research performance. Queen’s University Belfast was the only RG university in cluster one. However, all RG universities clustered together when the average linkage method was used.

Discussion

This study aimed to assess the clustering of UK universities based on the research productivity of academic psychologists. The five indicators of research productivity used in the analysis were extracted from Scopus and Scimago for academic psychologists employed at lecturer level or above at a stratified random sample of 38 universities. These were publication counts, citation counts, h-index scores, first author publication counts and SJRs for the identified publications.

Findings from our study support the research-intensity claim of the RG, at least with respect to the discipline of psychology. As hypothesised, the organisation of universities into clusters was such that all RG universities came together into the clusters representing above-average research productivity when the average linkage clustering method was used. However, when the centroid method was used for the analysis, Queen’s University Belfast emerged as an exception. Its productivity means were below the overall average and it joined the first cluster, comprising universities with below-average levels of research productivity.

While the differential clustering of Queen’s University Belfast reflects the choice of clustering method, its placement in the below-average research productivity cluster when using the centroid method is not entirely surprising. Queen’s University Belfast is an old and reputed university with good national and international standings. However, it occupies one of the lowest ranks in the subject of psychology amongst all RG universities (Times Higher Education, 2023). The psychology department at Queen’s University Belfast is known for its doctoral programmes in clinical and educational psychology, the admission process of which is handled autonomously by the university. Although several other RG universities also deliver such professional training in the UK, it can be argued that Queen’s University Belfast’s particular emphasis on professional psychology education has been at the expense of research productivity, at least as defined in our study.

Furthermore, since the unaffiliated group included reputed universities with high performing (research-wise) psychology faculties (e.g. University of Leicester and Swansea University), their inclusion in cluster two with most of the RG universities was not surprising. As expected, our study observed a branching out of the University of Cambridge and University of Oxford from the rest of the RG, a finding also reported by Boliver (2015). However, instead of forming a single cluster as seen in Boliver (2015), our results showed that they formed two single-member clusters. A closer examination of their research productivity metrics in Table 1 indicates that, although both represent an ‘elite’ class, Oxford surpasses Cambridge to represent an ‘elite within elite’ class, with its research productivity indicators being extraordinarily high.

Boliver (2015) focused her analysis on variables indicating university-level excellence in teaching, academic selectivity, demographic mix and economic resources, along with research excellence. The current study based its clustering solely on research productivity at the psychology department level. This may explain some of the differences between our findings and Boliver’s. Moreover, Wakeling and Savage’s (2015) view of a ‘golden’ class comprising Oxford and Cambridge within the RG also corresponds with our findings. Support also comes from their idea of classification within the ‘golden’ class, where Oxford surpasses Cambridge in various aspects, a finding which is highlighted in this study. Our results, therefore, offer considerable support for H1 and H2.

Limitations and future directions

The interpretation of our findings warrants caution because of several limitations. First, while Scopus-derived metrics have clear advantages over metrics derived from other academic databases (see Aghaei Chadegani et al., 2013), some inaccuracies were observed during sampling (e.g. incorrect or out-of-date affiliations). Second, although more comprehensive than other academic databases (e.g. PsycINFO), Scopus does not capture all academic outputs. We then further limited our searches to just ‘article’ and ‘review’ type journal articles. Consequently, the figures we have reported almost certainly underestimate true levels of research productivity amongst UK academic psychologists. Replications using other academic databases and search criteria, as well as other indicators of research productivity and research impact (Smith et al., 2013), are needed to validate and extend our findings. Third, even though journal metrics such as SJRs and impact factors are commonly used as proxies for the quality of journals (Diaz et al., 2021), their use when evaluating individual research papers or the work of individual researchers or research groups is controversial (Lariviere et al., 2016). Additionally, these metrics have been critiqued on many grounds, including a lack of clear operationalisation, unreliable, outdated and nonreplicable rankings and concerns with the management of self-citation (see Mañana-Rodríguez, 2015). Finally, the present study sampled academic psychologists to examine variation in research productivity across universities. We cannot, therefore, claim that our findings hold true for other disciplines. Further studies can assess the generalisability of our findings by sampling academics from different disciplines.

Conclusion

The aims of this study were to use cluster analysis to examine the grouping of UK universities based on the research productivity of their psychology departments and, in doing so, assess the research-intensity claims of the RG. To the best of our knowledge, only Boliver (2015) has investigated the grouping of UK universities in this way previously, albeit at an institution rather than discipline level. Furthermore, research activity was just one of the variables in Boliver’s analyses, and was primarily operationalised with 2008 RAE scores. We found that, whilst almost all RG universities came together in a cluster representing above-average research productivity, the universities of Oxford and Cambridge formed separate clusters, giving evidence of their exceptional research productivity. These findings support RG’s claims of research intensity within the domain of psychology. Future research extending to other academic databases and other disciplines is now needed.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.