Abstract

Estimating distance traveled is a frequently arising problem in robotic applications designed for use in environments where GPS is only intermittently or not at all available. In UAVs, the presence of weight and computational power constraints makes it necessary to develop odometric strategies based on minimalistic equipment. In this study, a hexarotor was made to perform up-and-down oscillatory movements while flying forward in order to test a self-scaled optic flow based odometer. The resulting self-oscillatory trajectory generated a series of contractions and expansions in the optic flow vector field, from which the flight height of the hexarotor could be estimated using an Extended Kalman Filter. For the odometry, the downward translational optic flow was scaled by this current visually estimated flight height before being mathematically integrated to obtain the distance traveled. Here we present three strategies based on sensor fusion requiring no, precise or rough prior knowledge of the optic flow variations generated by the sinusoidal trajectory. The “rough prior knowledge” strategy is based on the shape and timing of the variations in the optic flow. Tests were performed first in a flight arena, where the hexarotor followed a circular trajectory while oscillating up and down over a distance of about

Keywords

Introduction

Estimating distance traveled by an aerial robot is a problem which frequently arises when designing applications for use in situations where GPS is available only intermittently or not at all. In UAVs (Unmanned Aerial Vehicles), reducing the Size, Weight and Power (SWaP) of the perceptual equipment is often of great importance in order to ensure that the robot’s task will be performed successfully.

Several visual odometric approaches involving the use of either optic flow,1,2 events, images & IMU (Inertial Measurement Unit) combinations 3 or the sparse-snapshot method 4 have been successfully tested on flying robots. All these approaches require ground height information providing the factor used to scale the visual information. This scaling factor can be determined separately using a static pressure sensor 5 or stereovision,1,4 or it can be integrated when using the hybrid approach, 3 for example. One of the approaches used to estimate the 2D position of a drone is a combination of onboard odometry and visual mapping, known as SLAM (Simultaneous Localisation and Mapping).6–8 Most of these approaches require the use of computationally intensive algorithms and feedback from the environment (such as the detection of a beacon or feedback from a map). A minimalistic alternative is IMU based dead reckoning – i.e. inertial integration. 9 A dead reckoning signal could be used by a UAV to return to the close proximity of its base station before reaching it a second time using other means of perception. In this case, the landing of the UAV on its base station can provide a new known starting point. Another minimalistic alternative consists in using optic flow cues, such as translational optic flow and optic flow divergence cues. Translational optic flow has been used on UAVs to control landing visually, 10 to follow uneven terrain 11 and to attempt visual odometry and localisation12,13 (see Serres and Ruffier 14 for a review).

Self-oscillations have been observed in honeybees flying forward in horizontal,15,16 doubly tapered 17 and high-roofed18,19 tunnels. The self-oscillatory motion generates a series of expansions and contractions in the optic flow vector field, providing the optic flow divergence cue. Visually controlled landing has been achieved based on the optic flow divergence cue.20–23 The instabilities due to oscillatory movements have been used to determine the flight height of a micro-flyer based on the linear relationship between the oscillation and the fixed control gain. 22 The instabilities due to depth variations have been used to assess the optic flow scale factor of the scene observed to perform visual odometry onboard an underwater vehicle. 24 The local optic flow divergence was measured by means of two optic flow magnitudes perceived by two basic optic flow sensors placed on a chariot performing back-and-forth oscillatory movements in front of a moving panorama. 25 The local optic flow divergence was then used to estimate the local distance between the chariot and the moving panorama by means of an Extended Kalman Filter (EKF). 25

A SOFIa (

Here we investigated how to include some knowledge about the oscillations occurring during the trajectory in an odometric strategy based on optic flow cues alone. For this purpose, the optic flow based odometric scheme called SOFIa was tested both indoors and outdoors on a hexarotor equipped with optic flow sensors (see Figure 1).

First we applied the SOFIa method using only 2 optic flow measurements perceived along the longitudinal axis of the drone, with no prior knowledge of the optic flow variations. In order to improve the odometric accuracy, a sensor fusion strategy based on the parameters of the self-oscillation using 4 optic flow sensors embedded in the hexarotor was then tested. The idea was to use some prior knowledge about the oscillations imposed on the drone in order to measure the optic flow divergence and the translational optic flow cues more accurately. Two different sensor fusion strategies, based on precise and on rough prior knowledge of the optic flow variations, respectively, were tested. The sensor fusion strategy based on rough prior knowledge consisted solely in using the shape and timing of the variations in the optic flow. All three optic flow based odometric processing methods were tested first indoors on bouncing circular trajectories about

In Section 2, the hexarotor used to perform both indoor and outdoor experiments is described. In Section 3, the measurement of the local translational and divergence optic flow cues is discussed. In Section 4, the minimalistic visual odometric method is discussed. In Section 5, the odometric processing method based on raw measurements of 2 optic flow sensors without any prior knowledge of the optic flow variations is discussed. In Section 6, the sensor fusion odometric processing method based on 4 optic flow sensors is discussed, both with precise and with rough prior knowledge of the optic flow variations. In Section 7, the indoor experimental setup is first described, and experiments are then presented showing that the two sensor fusion strategies based on the knowledge of optic flow variations increased the measurement quality of the local optic flow cues. Lastly, the performance of the three minimalistic in-flight optic flow based odometric processing methods is compared. In Section 8, we first discuss the outdoor experimental setup and then present experiments showing that the same considerations also apply to preliminary flight tests performed outdoors. In Section 9, conclusions are drawn and projects for future studies are discussed.

The SOFIa hexarotor

The hexarotor was developed together with Hexadrone

(a) Hexarotor equipped with 4 optic flow sensors oriented towards the ground flying along a bouncing circular trajectory in the Mediterranean Flight Arena. (b) 2 optic flow sensors were set along the longitudinal axis

Characteristics of the optic flow sensors equipped on the hexarotor.

Measurement of the local optic flow cues

The translational optic flow is the angular speed magnitude of the optic flow vector field generated by the translational motion of a drone flying above the ground. 29

The theoretical local translational optic flow

the sum of the two optic flow magnitudes perceived by the two optic flow sensors set along the longitudinal axis

the sum of the two optic flow magnitudes perceived on the

the median value of the four optic flow magnitudes sensed along the hexarotor’s longitudinal axis by the 4 optic flow sensors, scaled by a

the difference between the two optic flow magnitudes perceived by the two optic flow sensors set along the longitudinal axis

the difference between the two optic flow magnitudes perceived by the two optic flow sensors set along the lateral axis

The series of contractions and expansions generated in the optic flow vector field by up-and-down oscillatory movements is known as the optic flow divergence. When a drone flies forward while oscillating up and down above the ground, the optic flow divergence is superimposed on the translational optic flow in the optic flow vector field. Due to the oscillatory movements, the state vector

Hexarotor oscillating up and down while flying forward over the ground at the flight height

The SOFIa visual odometer method

A model for the honeybee’s visual odometer called SOFIa (

State space representation of the hexarotor along the vertical axis: The hexarotor’s system was modeled in the form of a double integrator receiving as its input the acceleration
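The odometric principle described in this study, in which the translational optic flow is scaled by the visually estimated flight height and then integrated over time, can be illustrated with a minimal sketch. The function name `integrate_distance`, the fixed sampling period `dt` and the simple rectangular integration rule are assumptions made here for illustration only; they are not taken from the SOFIa implementation.

```python
def integrate_distance(omega_t, height_est, dt):
    """Accumulate distance from translational optic flow scaled by height.

    omega_t    : translational optic flow samples (rad/s)
    height_est : estimated flight height samples (m)
    dt         : sampling period (s), assumed constant (hypothetical)
    """
    if len(omega_t) != len(height_est):
        raise ValueError("omega_t and height_est must have the same length")
    distance = 0.0
    for omega, h in zip(omega_t, height_est):
        # omega * h approximates the ground speed (m/s) at this sample;
        # multiplying by dt gives the distance covered during the sample.
        distance += omega * h * dt
    return distance


if __name__ == "__main__":
    # Constant 0.5 rad/s seen from 2 m for 100 samples at 10 Hz.
    print(integrate_distance([0.5] * 100, [2.0] * 100, 0.1))
```

Because the scaling is applied sample by sample, any error in the height estimate translates directly into an error in the distance increment, which is why the quality of the flight height estimate matters for the odometry.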

Odometric method based on 2 optic flow sensors with No Prior Knowledge (NPK) of the optic flow variations

The local optic flow divergence

input: the acceleration of the drone

measurement: the local optic flow divergence

See Appendix B for the EKF calculations.

Fusion strategies based on 4 optic flow sensors

Here we investigated how to use prior knowledge about the self-oscillations to further improve the accuracy of the distance traveled estimates with 4 optic flow sensors.

The optic flow divergence induced by the self-oscillation serving as an input to a Kalman Filter (KF) was expressed as follows (see Figure 4(a)):

(a) The sensor fusion based on 2 Optic Flow (OF) sensors is achieved using an Extended Kalman Filter (EKF). The embedded computer handles the outputs of the optic flow sensors set on the hexarotor, whose outputs are used to measure the local optic flow divergence

Inputs

The translational optic flow induced by the forward motion serving as the input to a KF was expressed as follows (see Figure 4(b)):

Fusion strategy using Rough Prior Knowledge (RPK) of the optic flow variations

Here we investigated how to implement the sensor fusion strategy based on 4 optic flow sensors without any knowledge of the oscillation amplitude

For this purpose, we approximated very roughly both the optic flow divergence and the translational optic flow cues in the form of a sinusoidal signal serving as the input to both KFs as follows (see Figure 4):

By using as KF input

Extended Kalman Filter for the fusion strategy with 4 optic flow sensors

To estimate the drone’s flight height

input: the acceleration of the drone

measurement: the local optic flow divergence

See Appendix B for the EKF calculations.

Indoor experimental flight tests

Indoor experimental setup

Indoor flight tests were performed in the Mediterranean Flight Arena (see Figure 5(a)). The position and orientation used in the hexarotor’s control system were taken from the motion-capture (MoCap) system installed in the flight arena, consisting of 17 motion-capture cameras covering a

Hexarotor flying in the Mediterranean Flight Arena (a). The same dataset taken at

Indoor experimental results

The sensor fusion strategies based on

(a) Comparison of the position of the hexarotor on the vertical plane (

As shown in Figure 5, the optic flow measurements were processed with the three strategies (NPK, RPK and PPK), taking the same dataset recorded under an illuminance of

The flight height estimates

Overall, the final percentage error in the distance traveled estimates

Distributions of the final percentage errors in the distance traveled estimates

Preliminary outdoor experimental flight tests

Outdoor experimental setup

Outdoors, for trajectory tracking purposes, the hexarotor was equipped with a TeraRanger Evo 3m distance sensor to measure the flight height of the drone and with a Pixhawk GPS sensor (from Holybro) to measure the horizontal position. These 2 sensors were connected directly to the PX4 flight controller. To validate the precision of the TeraRanger Evo 3m distance sensor against the MoCap system, a test was performed in the flight arena, in which the TeraRanger Evo 3m proved very reliable. This reliability was confirmed by the quality of the TeraRanger Evo 3m sensor’s output, which was devoid of high frequency noise when measured in the field (see Section 2 of Supp. Information). According to the PX4 documentation, the standard deviation of the horizontal position error is

Top view of the drone’s horizontal trajectory during an outdoor flight test (outdoor flight n

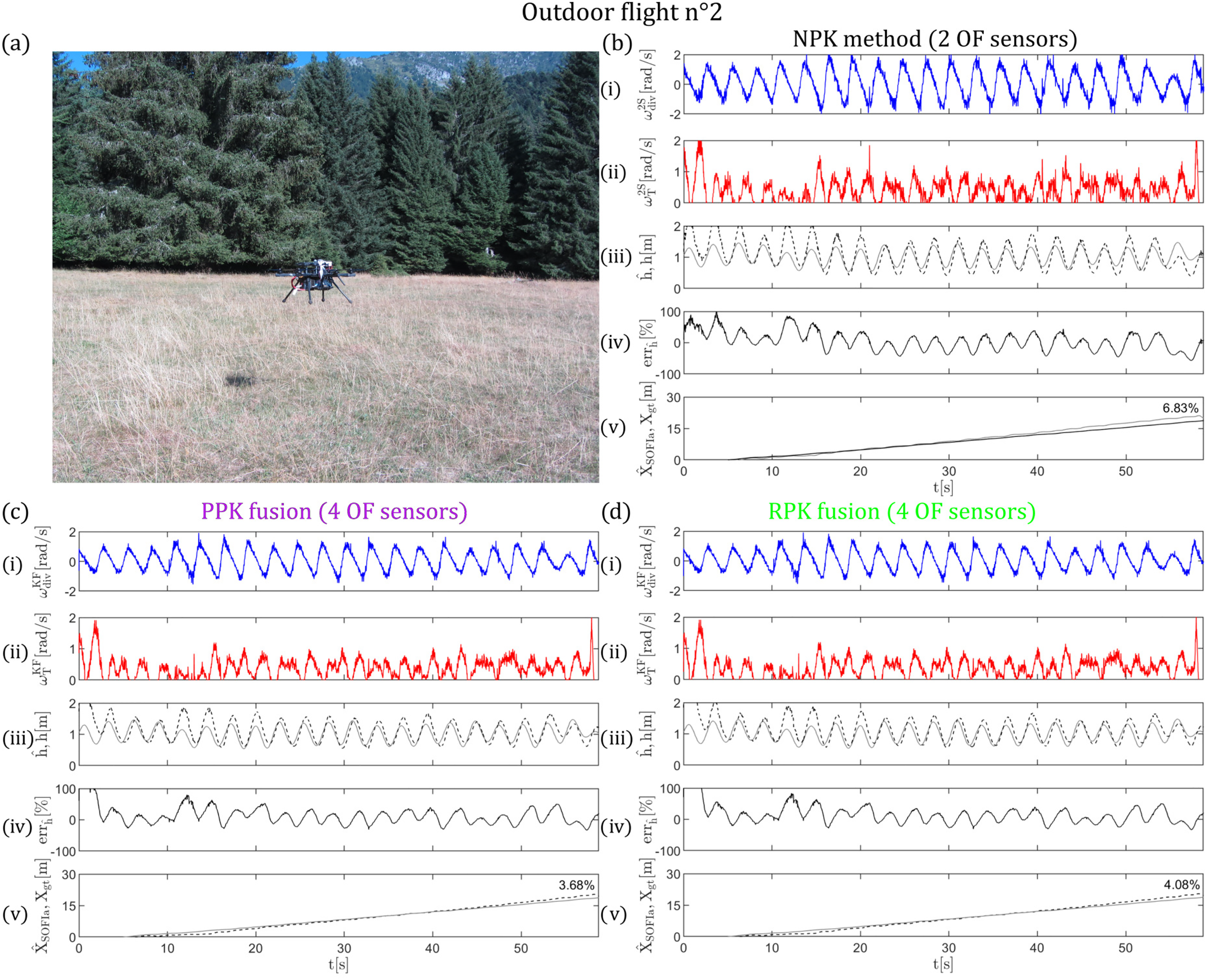

Hexarotor flying over a field irregularly covered with grass (outdoor flight n

(a) Comparison between the position of the hexarotor on the vertical plane (

In the 4 datasets recorded outdoors, the final percentage error in the distance traveled estimates

The outdoor experiments were performed using the same set of Pixart PAW3903 optic flow sensors, but adding a neutral density filter of 2 in front of the lenses to attenuate the solar luminosity. Without these filters, the optic flow sensors would have been saturated.

Results of preliminary outdoor experiments

4 bouncing longitudinal flight tests over a distance of about

Due to the presence of wind disturbances and the greater convergence time required by the EKF in the outdoor visual setting, the distance traveled

Conclusion

In this study, we investigated how to use information about the oscillating trajectory to improve a minimalistic odometry based on optic flow cues. The experiments were performed onboard a hexarotor first indoors, following circular bouncing trajectories at a frequency of

The findings obtained in this study show that the sensor fusion strategies based on the use of 4 optic flow sensors make it possible to measure the optic flow divergence and the translational optic flow cues more reliably thanks to the use of additional Kalman Filters. This was the case even when taking only rough prior knowledge about the optic flow variations into account, and more specifically, only the general shape and timing of the oscillations during the trajectory. This prior knowledge can be considered acceptable since the general shape and timing of the oscillations are imposed by the drone itself on its own forward trajectory. The sensor fusion strategies presented decreased the error in the flight height estimates, and thus decreased the percentage error in the distance traveled estimates in all the cases considered, improving the odometric performances. These considerations also applied in the case of the few outdoor flight tests performed in the presence of wind and an irregular pattern of grass.

With all three odometric processing methods, the final distance traveled estimates were admittedly subject to small errors as the odometric strategy is a dead reckoning method involving no feedback from the environment. Nevertheless, we show here that the SOFIa model can be accurate and precise enough to move in close proximity to a target without GPS, indoors and outdoors. Likewise, this highly minimalistic optic flow based odometric strategy could also be used to enable a future drone to assess whether it is returning near its base station without any need for a GPS. So far, the present findings can be said to constitute the first experimental proof-of-concept of the SOFIa model 26 before this optic flow based odometric strategy is implemented on a nanodrone requiring very little computational power. 30 We now intend to test the robustness of these strategies over a range of forward speeds, in cases where a large drone pitch occurs and in the presence of pronounced relief.

Future studies will also include the implementation of an optic flow regulator keeping the translational optic flow around a given setpoint.

Supplemental Material

sj-pdf-1-mav-10.1177_17568293221148380 - Supplemental material for Indoor and outdoor in-flight odometry based solely on optic flows with oscillatory trajectories

Supplemental material, sj-pdf-1-mav-10.1177_17568293221148380 for Indoor and outdoor in-flight odometry based solely on optic flows with oscillatory trajectories by L Bergantin, C Coquet, J Dumon, A Negre, T Raharijaona, N Marchand and F Ruffier in International Journal of Micro Air Vehicles

Footnotes

Acknowledgements

We thank Dr. J. Blanc for improving the English manuscript and J.M. Ingargiola for his help with designing the printed circuit boards for the optic flow sensors. We are grateful to the three anonymous Referees, whose suggestions have helped us to greatly improve the manuscript.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Financial support was provided via a ProxiLearn project grant (ANR-19-ASTR-0009) to F.R. from the ANR (Astrid Program). The participation of L.B. in this research project was supported by a joint PhD grant from the Délégation Générale de l'Armement (DGA) and Aix Marseille University. The participation of C.C. was also supported by the CNRS Innovation via a pre-maturation project. L.B., C.C., and F.R. were also supported by Aix Marseille University and the CNRS (Life Science, Information Science Institute as well as Engineering Science & Technology Institute). The equipment for the experimental tests was mainly supported by ROBOTEX 2.0 (Grants ROBOTEX ANR-10-EQPX-44-01 and TIRREX ANR-21-ESRE-0015).

Supplemental material

Supplementary material for this article is available online.

Notes

Appendix A: Kalman Filter calculations

In the PPK strategy, the optic flow divergence and the translational optic flow cues were expressed as in equations (7) and (8), respectively. In the RPK strategy, the optic flow divergence and the translational optic flow cues were both expressed as in equation (9). With each optic flow cue, at each

(a) One-step ahead prediction

(b) Covariance matrix of the state prediction error vector

(c) Measurement update

(d) Covariance matrix of state estimation error vector
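The four steps (a) to (d) listed above follow the standard Kalman Filter recursion. As a minimal sketch, they can be written for a scalar state as follows. The scalar model `x_k = a * x_{k-1} + b * u_k`, the direct measurement model `z = x + noise` and the default values of `a`, `b`, `q` and `r` are assumptions chosen for illustration; the matrices actually used in this study are those of the equations of this appendix, which are not reproduced in this sketch.

```python
def kf_step(x, p, u, z, a=1.0, b=1.0, q=1e-3, r=1e-2):
    """One iteration of a scalar Kalman Filter (illustrative model only).

    x, p : previous state estimate and its error variance
    u    : model input (e.g. the modelled optic flow variation)
    z    : new measurement of the optic flow cue
    a, b : hypothetical state and input coefficients
    q, r : hypothetical process and measurement noise variances
    """
    # (a) One-step-ahead prediction
    x_pred = a * x + b * u
    # (b) Variance of the state prediction error
    p_pred = a * p * a + q
    # (c) Measurement update, using the Kalman gain k
    k = p_pred / (p_pred + r)
    x_new = x_pred + k * (z - x_pred)
    # (d) Variance of the state estimation error
    p_new = (1.0 - k) * p_pred
    return x_new, p_new


if __name__ == "__main__":
    x, p = 0.0, 1.0
    for z in [0.9, 1.1, 1.0]:
        x, p = kf_step(x, p, u=0.0, z=z)
    print(x, p)
```

With equal prediction and measurement variances the gain is 0.5, so the updated estimate lies halfway between the prediction and the measurement, which is a quick sanity check on any implementation of these steps.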

Appendix B: Extended Kalman Filter calculations

The discretized model for the hexarotor along the vertical axis (see equation (6)) can be expressed as follows:

(a) One-step ahead prediction

(c) Measurement update
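To make the prediction and update steps more concrete, the sketch below implements a two-state Extended Kalman Filter for a double integrator driven by the vertical acceleration, with the optic flow divergence as the measurement. It assumes that the divergence is the ratio of vertical speed to flight height, `v / h`, which makes the measurement nonlinear in the state; this expression, the function names and the diagonal process noise `q` are assumptions for illustration and may differ from the equations of this study.

```python
def ekf_predict(x, P, accel, dt, q):
    """Prediction for the state x = [h, v] of a double integrator."""
    h, v = x
    x_pred = [h + v * dt + 0.5 * accel * dt * dt, v + accel * dt]
    # P_pred = F P F^T + Q with F = [[1, dt], [0, 1]] and Q = q * I
    p00, p01, p10, p11 = P[0][0], P[0][1], P[1][0], P[1][1]
    P_pred = [
        [p00 + dt * (p01 + p10) + dt * dt * p11 + q, p01 + dt * p11],
        [p10 + dt * p11, p11 + q],
    ]
    return x_pred, P_pred


def ekf_update(x, P, z, r):
    """Update with a divergence measurement z, assumed equal to v / h."""
    h, v = x
    if h <= 0.0:
        raise ValueError("flight height estimate must be positive")
    # Jacobian of v / h with respect to [h, v]
    H = [-v / (h * h), 1.0 / h]
    PHt = [P[0][0] * H[0] + P[0][1] * H[1], P[1][0] * H[0] + P[1][1] * H[1]]
    S = H[0] * PHt[0] + H[1] * PHt[1] + r  # innovation variance
    K = [PHt[0] / S, PHt[1] / S]           # Kalman gain
    innovation = z - v / h
    x_new = [h + K[0] * innovation, v + K[1] * innovation]
    # P_new = (I - K H) P
    HP = [H[0] * P[0][0] + H[1] * P[1][0], H[0] * P[0][1] + H[1] * P[1][1]]
    P_new = [
        [P[0][0] - K[0] * HP[0], P[0][1] - K[0] * HP[1]],
        [P[1][0] - K[1] * HP[0], P[1][1] - K[1] * HP[1]],
    ]
    return x_new, P_new


if __name__ == "__main__":
    x, P = [1.0, 0.5], [[1.0, 0.0], [0.0, 1.0]]
    x, P = ekf_predict(x, P, accel=2.0, dt=0.1, q=1e-4)
    x, P = ekf_update(x, P, z=0.6, r=1e-2)
    print(x)
```

In this formulation the height only becomes observable when the vertical acceleration is non-zero, which is consistent with the role given to the up-and-down oscillations in the text.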

Appendix C: Computation of the local divergence and translational optic flow cues

The local optic flow divergence can be measured as the difference between two optic flow magnitudes
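As a worked illustration of how a sum and a difference of two optic flow magnitudes can separate the two cues, the sketch below uses a simplified geometry: flat ground, no rotation, and two sensors tilted by `+phi` and `-phi` from the vertical along the direction of travel. Under these assumptions the flow seen at angle `phi` is `(vx*cos(phi) + vz*sin(phi)) * cos(phi) / h`, so the sum isolates `vx / h` and the difference isolates `vz / h`. The function names and the scaling factors `2*cos(phi)**2` and `sin(2*phi)` come from this simplified geometry and are not taken from the equations of this study.

```python
import math


def flow_at_angle(vx, vz, h, phi):
    """Optic flow (rad/s) seen along a ray tilted by phi from the vertical.

    vx, vz : forward and upward speeds (m/s); h : flight height (m).
    Flat ground and pure translation are assumed.
    """
    return (vx * math.cos(phi) + vz * math.sin(phi)) * math.cos(phi) / h


def local_cues(omega_front, omega_rear, phi):
    """Recover the translational flow (vx/h) and divergence (vz/h)."""
    omega_t = (omega_front + omega_rear) / (2.0 * math.cos(phi) ** 2)
    omega_div = (omega_front - omega_rear) / math.sin(2.0 * phi)
    return omega_t, omega_div


if __name__ == "__main__":
    phi = math.radians(30.0)  # hypothetical sensor tilt
    front = flow_at_angle(1.0, 0.3, 1.5, phi)
    rear = flow_at_angle(1.0, 0.3, 1.5, -phi)
    print(local_cues(front, rear, phi))
```

The sign convention here gives a positive divergence value while climbing; a convention based on the expansion of the flow field would flip that sign.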

References
