Abstract

The development of vision-based navigation systems for mobile robotics applications in outdoor scenarios is a very challenging problem due to frequent changes in contrast and illumination, image blur, pixel noise, lack of image texture, low image overlap and other effects that lead to ambiguity in the interpretation of motion from image data. To mitigate the problems arising from multiple possible interpretations of the data in outdoor stereo egomotion, we present a fully probabilistic method denoted as the probabilistic stereo egomotion transform. Our method is capable of computing 6-degree-of-freedom motion parameters based solely on probabilistic correspondences, without the need to track or commit to key point matches between two consecutive frames. The use of probabilistic correspondence methods allows several match hypotheses to be maintained for each point, which is an advantage when ambiguous matches occur (which is the rule in image feature correspondence problems), because no commitment is made before analysing all image information. Experimental validation is performed in simulated and real outdoor scenarios in the presence of image noise and image blur. A comparison with another current state-of-the-art visual motion estimation method is also provided. Our method achieves a significant reduction of estimation errors, mainly in harsh conditions of noise and blur.

Introduction

In this article, we focus on the inference of robot self-motion (egomotion) based on visual observations of the environment. Although egomotion can be estimated without visual information using sensors such as inertial measurement units (IMUs) or global positioning systems (GPSs), the use of visual information plays an important role, especially in IMU/GPS-denied environments, for example, crowded urban areas or other environments where there are challenging imaging conditions such as aerial and underwater scenarios. In Figure 1, we present some examples of mobile robotic platforms equipped with vision sensors, spanning applications on land, at sea, in the air and underwater (courtesy of INESC TEC).

INESC-TEC mobile robotics platforms on land, sea and air application scenarios. All robotic platforms are equipped with one or more visual sensors to perform visual navigation or other complementary tasks.

Egomotion estimation from outdoor imagery is extremely challenging due to multiple factors that generate blur, ambiguities and low signal-to-noise ratio in images. In land robots, camera vibration produces significant motion blur. In sea and underwater robots, repetitive image patterns and low texture generate serious matching ambiguities. In all cases, low lighting conditions, shadows and other illumination artefacts lead to unfavourable signal-to-noise ratios. It is thus essential to develop robust algorithms capable of mitigating some of the aforementioned effects.

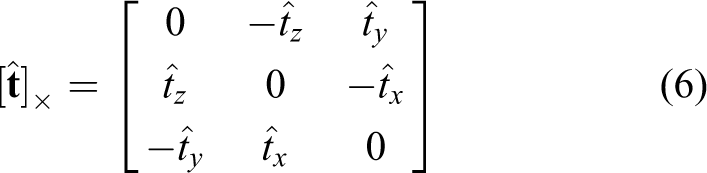

This article is an extension of the work by Silva et al. 1 where we introduced the probabilistic stereo egomotion transform (PSET), a fully probabilistic algorithm for the computation of image motion from stereo vision systems that provides better estimates than alternative approaches. This article provides a deeper explanation, analysis and performance evaluation of PSET. In particular, it focuses on the advantages of PSET in images with severe amounts of noise and blur, which often characterize outdoor operating conditions.

The article outline is as follows: in the following section, the related work is presented. We then give a brief introduction to the probabilistic egomotion estimation problem and outline the rationale of the method. Afterwards, we present in detail the steps of the probabilistic stereo egomotion approach and the results obtained on both synthetic and real image data sets, with emphasis on the results obtained under extreme image conditions (presence of image noise and blur). In the final section, we present the conclusions and future work.

Related work

In robotics applications, egomotion estimation is directly linked to visual odometry (VO) applications as described by Scaramuzza. 2 The use of VO methods for estimating robot motion has been a subject of research by the robotics community in recent years. One way of performing VO is by determining the instantaneous camera displacement between consecutive frames and integrating the estimated linear and angular velocities over time. The need to develop such applications arose from the increased use of mobile robots in modern-world tasks across different application scenarios. Robots need to extend their perception capabilities to be able to navigate in complex scenarios where typical inertial navigation system information cannot be used, for example, urban areas or underwater GPS-denied environments.
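The integration step mentioned above can be sketched as simple dead reckoning. This is a minimal illustration, assuming body-frame velocities sampled at a fixed interval `dt`; the function names are hypothetical and not taken from any VO library.

```python
import numpy as np

def skew(w):
    """Skew-symmetric matrix such that skew(w) @ x == np.cross(w, x)."""
    return np.array([[0.0, -w[2], w[1]],
                     [w[2], 0.0, -w[0]],
                     [-w[1], w[0], 0.0]])

def rodrigues(rotvec):
    """Rotation matrix for a rotation vector (axis * angle)."""
    theta = np.linalg.norm(rotvec)
    if theta < 1e-12:
        return np.eye(3)
    K = skew(rotvec / theta)
    return np.eye(3) + np.sin(theta) * K + (1.0 - np.cos(theta)) * (K @ K)

def integrate_velocities(lin_vels, ang_vels, dt):
    """Accumulate body-frame velocities into a world-frame trajectory."""
    R = np.eye(3)          # orientation of the body in the world frame
    p = np.zeros(3)        # position of the body in the world frame
    trajectory = [p.copy()]
    for v, w in zip(lin_vels, ang_vels):
        p = p + R @ (np.asarray(v, dtype=float) * dt)      # move along current heading
        R = R @ rodrigues(np.asarray(w, dtype=float) * dt)  # then update the heading
        trajectory.append(p.copy())
    return np.array(trajectory), R
```

Because each step compounds the previous pose, any bias in the per-frame velocity estimates accumulates as drift, which is why the accuracy of the instantaneous estimate matters.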

Visual motion perception is achieved by measuring image point displacement on consecutive frames. In monocular egomotion estimation, there is translation scale ambiguity, that is, in the absence of other sources of information, only the linear velocity direction can be measured in a reliable manner. Whenever a calibrated stereo setup is used, the full angular and translational velocity components can be extracted, which is denoted by stereo VO.

Most of the work on stereo VO methods was started by Maimone et al. 3 and Maimone et al. 4 on the famous Mars Rover Project. The proposed method was able to determine all 6 degrees of freedom (DOF) of the rover (

The stereo VO method implemented in the Mars Rover Project was inspired by Olson et al. 8 At the time, VO methods appeared as replacements for wheel odometry dead reckoning methods to overcome long-distance limitations. To avoid large drift in robot position over time, Olson's method combined a primitive form of the stereo egomotion estimation procedure also used by Maimone et al. 3 with absolute orientation (AO) sensor information.

The taxonomy adopted by the robotics and computer vision community classifies stereo VO methods into two categories based on either feature detection scheme or pose estimation procedure. The most utilized methods for pose estimation are 3D AO methods and perspective-n-point (PnP) methods.

The AO method consists of triangulating 3D points for every stereo pair and then solving for the motion using point alignment algorithms, for example, the Procrustes method, 9 the AO using unit quaternions method by Horn, 10 the iterative-closest-point method 11 or the one utilized by Milella and Siegwart 12 for estimating the motion of an all-terrain rover.
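As an illustration of the point alignment step, the following sketch uses the SVD-based least-squares solution (often called the Kabsch or orthogonal Procrustes solution). It is not one of the specific implementations cited above, and it assumes the two point sets are already matched row by row.

```python
import numpy as np

def rigid_align(P, Q):
    """Find R, t minimising sum ||R @ P[i] + t - Q[i]||^2 for (N, 3) arrays."""
    cp, cq = P.mean(axis=0), Q.mean(axis=0)
    H = (P - cp).T @ (Q - cq)                 # 3x3 cross-covariance
    U, _, Vt = np.linalg.svd(H)
    # Guard against a reflection (determinant -1) in the optimal orthogonal matrix.
    d = np.sign(np.linalg.det(Vt.T @ U.T))
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T
    t = cq - R @ cp
    return R, t
```

With triangulated points from two consecutive stereo pairs as `P` and `Q`, the returned `R` and `t` describe the rigid motion between the two instants. Triangulation noise enters `P` and `Q` directly, which is consistent with the sensitivity to small baselines reported for AO methods.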

In the study by Alismail et al., 13 a benchmark study is performed to evaluate both AO and PnP techniques for robot pose estimation using stereo VO methods. The authors concluded that PnP methods perform better than AO methods due to stereo triangulation uncertainty, especially in the presence of small stereo rig baselines.

The influential work of Nister et al. 14 was one of the first PnP method implementations. It utilized the perspective-three-point method (P3P) developed by Haralick et al. 15 combined with an outlier rejection scheme (RANSAC). Despite the fact of having instantaneous 3D information from a stereo camera setup, the authors use a P3P method instead of a more easily implementable AO method. The authors concluded that P3P pose estimation deals better with depth estimation ambiguity, which corroborates the conclusions drawn by Alismail et al. 13

In a similar line of work, and in order to avoid a heavy dependence on feature matching and tracking algorithms, Kai and Dellaert 16 tested both three-point and one-point stereo VO implementations using a quadrifocal setting within a RANSAC framework. Later on, Ni et al. 17 decoupled the rotation and translation estimation into two different estimation problems. The method starts with the computation of a stereo putative matching, followed by a classification of features based on their disparity. Afterwards, distant points are used to compute the rotation using a two-point RANSAC method. The underlying idea is to reduce the problem of the rotation estimation to the monocular case. The closer points, with a disparity above a given threshold, are used together with the estimated rotation to compute the translation with a one-point RANSAC.

Recent efforts on stereo VO are being driven by novel intelligent vehicles and by automotive industry applications. One example is the work developed by Kitt et al. 18 The proposed method is available as an open-source VO library named LIBVISO. The stereo egomotion estimation approach is based on image triples and online estimation of the trifocal tensor. 19 It uses rectified stereo image sequences and outputs a 6D vector with linear and angular velocity estimation using an iterative extended Kalman filter. Comport et al. 20 also developed a stereo VO method based on the quadrifocal tensor. 19

Other recent developments on VO have been achieved by the extensive research conducted at the Autonomous Systems Laboratory of ETH Zurich. 21–25 The work developed by Scaramuzza and Fraundorfer 26 and Scaramuzza et al. 21 takes advantage of motion constraints (planar motion) to reduce model complexity and allow a much faster estimation. Also, since the camera is installed on a non-holonomic wheeled vehicle, motion complexity can be further reduced to a single-point correspondence. More recently, the work of Kneip et al. 27 introduced a novel parameterization for the P3P problem. The method differs from standard algebraic solutions for the P3P estimation problem 15 by computing the aligning transformation directly in a single stage without the intermediate derivation of the points in the camera frame. This pose estimation method combined with key point detectors 28–30 and with IMU information was used to estimate monocular VO 22 and stereo VO by Voigt et al. 23 In the study by He et al., 31 a visual-inertial egomotion estimation method is used to estimate an arbitrary body motion in an indoor environment. Vision is used to estimate the camera motion from a sequence of feature correspondences using bundle adjustment, while the inertial estimation outputs the orientation using an adaptive-gain orientation filter.

Most of the previously mentioned state-of-the-art algorithms use deterministic methods to find matches between images and then compute the motion. Our approach, on the contrary, does not define the correspondence at an early stage but keeps multiple correspondence hypotheses that together contribute to a more accurate egomotion estimation, especially when the images contain many ambiguous and unreliable correspondences due to non-ideal imaging conditions.

Probabilistic monocular egomotion estimation

The seminal work of Domke and Aloimonos 32 has introduced the notion of probabilistic correspondence in the context of the single camera egomotion estimation problem. The authors introduced the term probabilistic (which is actually a belief) to code the distance between Gabor filters using an exponential transformation. In this setting, it is possible to compute the angular velocity of the vehicle and the direction of the linear velocity (5-DOF) overall, but it is not possible to determine the amplitude (scale) of the linear velocity.

In this section, we briefly describe Domke and Aloimonos’ 32 approach and introduce the notation required for the remaining sections.

Probabilistic correspondence

Given two images taken at different times,

The belief image

where

So that, it maps to the range 0–1.
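A belief of this kind can be sketched as an exponential of the squared distance between local descriptors. The Gaussian-style form and the bandwidth `sigma` below are assumptions made for illustration; the source only states that an exponential transformation of the Gabor filter distance is used and that the result lies between 0 and 1.

```python
import numpy as np

def belief_from_distance(desc_s, desc_candidates, sigma=1.0):
    """Map descriptor distances to beliefs in (0, 1].

    desc_s:          (D,) descriptor of the point in the first image
    desc_candidates: (M, D) descriptors of candidate points in the second image
    sigma:           hypothetical bandwidth controlling how fast belief decays
    """
    d2 = np.sum((np.asarray(desc_candidates) - np.asarray(desc_s)) ** 2, axis=-1)
    return np.exp(-d2 / (2.0 * sigma ** 2))
```

A candidate with an identical descriptor receives belief 1, and the belief decreases monotonically as the descriptors diverge, so no candidate is discarded outright.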

Probabilistic motion

Motion hypotheses are defined as a set of incremental rotation matrices

where

where

In order to obtain an estimate of the essential matrix (

For each point

If one assumes statistical independence between the measurements obtained at each point

In Figure 2, an illustration of these steps is presented.
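The scoring of a single motion hypothesis can be sketched as follows. This is an illustrative reading of the steps described in this section, assuming normalised image coordinates, the convention E = [t]× R, and a per-point belief supplied as a callable; the sampling of the epipolar line and all function names are hypothetical.

```python
import numpy as np

def skew(t):
    return np.array([[0.0, -t[2], t[1]],
                     [t[2], 0.0, -t[0]],
                     [-t[1], t[0], 0.0]])

def essential_matrix(R, t):
    """Essential matrix for the motion X2 = R @ X1 + t."""
    return skew(t) @ R

def epipolar_samples(E, s, n_samples=201, extent=1.0):
    """Sample points on the epipolar line of s in the second image.

    The degenerate case where the line coefficients a and b are both zero
    (point at the epipole) is not handled in this sketch.
    """
    a, b, c = E @ np.array([s[0], s[1], 1.0])
    u = np.linspace(-extent, extent, n_samples)
    if abs(b) >= abs(a):
        return np.column_stack([u, -(a * u + c) / b])
    return np.column_stack([-(b * u + c) / a, u])

def hypothesis_log_likelihood(E, points, beliefs, eps=1e-12):
    """Sum over points of the log of the best belief found on each epipolar line."""
    total = 0.0
    for s, rho in zip(points, beliefs):
        best = np.max(rho(epipolar_samples(E, s)))
        total += np.log(best + eps)   # independence: product becomes a sum of logs
    return total
```

Working with log-likelihoods avoids numerical underflow when the product runs over thousands of points.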

Left: a point

Finally, having computed all the motion hypotheses, an optimization method 34 is used to refine the motion estimate around the highest scoring samples

where

Then, the output of the algorithm is the solution with the highest likelihood as defined

Probabilistic stereo egomotion estimation

Now we extend the notion of probabilistic correspondence and probabilistic egomotion estimation to the stereo case. This allows us to compute the whole 3D motion information in a probabilistic way. Let us consider images

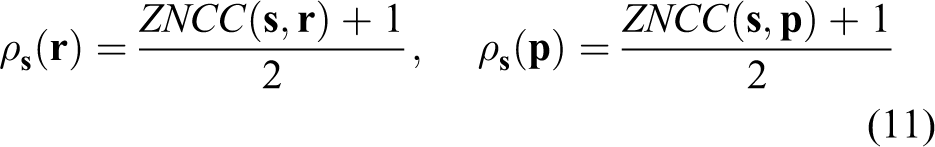

ZNCC matching used to compute the PSET transform. ZNCC: zero-mean normalized cross-correlation function; PSET: probabilistic stereo egomotion transform.
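A minimal sketch of a ZNCC-based correspondence probability is given below. The ZNCC score itself is standard; the affine mapping (ZNCC + 1) / 2 from [-1, 1] to [0, 1] is an assumption made here for illustration, and the function names are hypothetical.

```python
import numpy as np

def zncc(a, b):
    """Zero-mean normalized cross-correlation of two equally sized patches."""
    a = np.asarray(a, dtype=float) - np.mean(a)
    b = np.asarray(b, dtype=float) - np.mean(b)
    denom = np.sqrt(np.sum(a * a) * np.sum(b * b))
    if denom == 0.0:
        return 0.0            # a flat patch carries no correlation information
    return float(np.sum(a * b) / denom)

def correspondence_probability(patch, candidate_patches):
    """Map the ZNCC score of each candidate from [-1, 1] to [0, 1]."""
    return np.array([(zncc(patch, c) + 1.0) / 2.0 for c in candidate_patches])
```

Because ZNCC removes the patch mean and normalises by the patch energy, the score is invariant to affine brightness changes, which is useful under the illumination variations typical of outdoor imagery.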

Example of probabilistic correspondence images (

For the sake of computational efficiency, analysis can be limited to subregions of the images given prior knowledge about the geometry of the stereo system or bounds on the motion given by other sensors such as IMUs. In particular, for each point

The geometry of stereo egomotion

In this section, we describe the geometry of the stereo egomotion problem, that is, we analyse how world points project onto the four images acquired by the stereo setup at two consecutive instants of time according to its motion. This analysis is required to derive the expressions for computing the translational motion amplitude.
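To make the four-image geometry concrete, the sketch below projects one 3D point into the left and right images at two instants for a parallel stereo rig. It assumes an ideal pinhole model, a right camera displaced by `baseline` along the x axis of the left camera, and a motion convention X' = R X + t; these conventions and the names used are illustrative choices, not the notation of the article.

```python
import numpy as np

def project(K, X):
    """Pinhole projection of a 3D point X (camera frame) to pixel coordinates."""
    x = K @ X
    return x[:2] / x[2]

def four_view_projections(X, K, baseline, R, t):
    """Project X (left camera frame at time k) into the four stereo images."""
    b = np.array([baseline, 0.0, 0.0])
    X_next = R @ X + t                      # same point in the left frame at time k+1
    return {
        "L_k": project(K, X),
        "R_k": project(K, X - b),
        "L_k1": project(K, X_next),
        "R_k1": project(K, X_next - b),
    }

K = np.array([[500.0, 0.0, 320.0],
              [0.0, 500.0, 240.0],
              [0.0, 0.0, 1.0]])
views = four_view_projections(np.array([0.0, 0.0, 5.0]), K, 0.1,
                              np.eye(3), np.array([0.0, 0.0, -1.0]))
disparity_k = views["L_k"][0] - views["R_k"][0]      # f * baseline / Z at time k
disparity_k1 = views["L_k1"][0] - views["R_k1"][0]   # f * baseline / Z at time k+1
```

In this example the point moves from a depth of 5 m to 4 m, so the disparity grows from 10 to 12.5 pixels. The change in disparity between the two instants is what carries the information about the translation amplitude.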

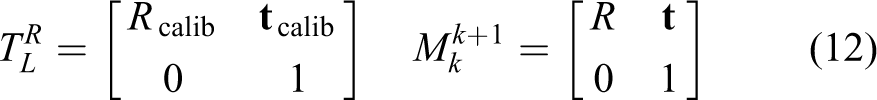

Let us consider the 4 × 4 rototranslations

We factorize the translational motion

The rotational motion

Let us consider an arbitrary 3D point

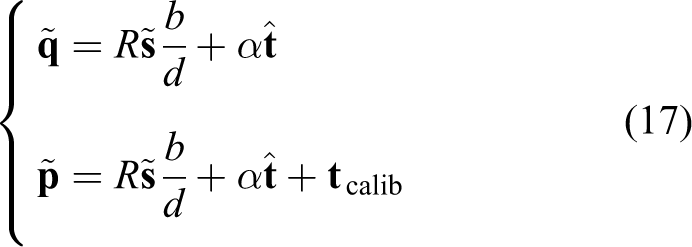

To illustrate the solution, let us consider the particular case of parallel stereo. This will allow us to obtain the form of the solution with simple equations but does not compromise generality because the procedure to obtain the solution in the non-parallel case is analogous. In parallel stereo, the cameras are displaced laterally with no rotation. The rotation component is 3 × 3 identity

Introducing the disparity

Replacing equation (16) in the last two equations of equation (14), we obtain

Let us write

Solving for

Solutions exist whenever disparity

This corresponds to the case when

One special case to take into account is when the translational component of motion is zero. When this happens, the value of

Probabilistic scale estimation

In the previous section, we demonstrated how to estimate the translation scale factor

Previously in Silva et al., 35 this was achieved by computing the rigid transformation between point clouds obtained from stereo reconstruction at times

In this article, we instead apply the notion of probabilistic correspondence to the stereo case. Rather than committing to matches in space and time, we create a probabilistic observation model for possible matches

where we assume statistical independence in the measurements obtained in the pairwise probabilistic correspondence functions
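Under the independence assumption, the likelihood of a joint match across the four images factorises into a product of pairwise correspondence probabilities. The sketch below illustrates this with three hypothetical arrays; the particular pairing of images (stereo at time k, left image across time, stereo at time k+1) is an assumption made for illustration.

```python
import numpy as np

def best_four_tuple(rho_stereo_k, rho_time, rho_stereo_k1):
    """Return the index triple and likelihood of the most likely joint match.

    rho_stereo_k:  (A,)   probabilities of A candidates in the right image at time k
    rho_time:      (B,)   probabilities of B candidates in the left image at time k+1
    rho_stereo_k1: (B, C) for each temporal candidate, probabilities of C
                          candidates in the right image at time k+1
    """
    joint = (rho_stereo_k[:, None, None]
             * rho_time[None, :, None]
             * rho_stereo_k1[None, :, :])
    idx = np.unravel_index(np.argmax(joint), joint.shape)
    return idx, float(joint[idx])
```

The full `joint` array retains every hypothesis with its weight, so a commitment to a single match is only made at the very end, after all pairwise evidence has been combined.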

Probabilistic correspondence

From the pairwise probabilistic correspondence, we obtain all possible combinations of corresponding matches. Then, because each four-tuple

PSET accumulator

For computing the accumulator, we assume

First, a large set of points

After all probabilistic correspondences have been computed for a point

Finally, for a particular

From this best match, we retrieve from

and associated likelihood

Thus, each point

being

Dealing with calibration errors

A common source of errors in a stereo setup is the uncertainty in the calibration parameters. Errors in both intrinsic and extrinsic parameters shift the epipolar lines away from their nominal positions and influence the computed correspondence probability values. To minimize these effects, we modify the correspondence probability function when evaluating sample points such that a neighbourhood of the point is analysed, instead of using only the exact coordinate of the sample point

where

Another method used to diminish the uncertainty of the correspondence probability function when performing ZNCC is to use subpixel refinement methods, for example, parabola fitting and Gaussian fitting as presented by Debella-Gilo and Kaab. 37
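Parabola fitting can be sketched in a few lines: a parabola is fitted through the best integer-position score and its two neighbours, and the vertex gives the subpixel location. The function name is hypothetical, and the example operates on a one-dimensional array of correlation scores for simplicity.

```python
import numpy as np

def refine_peak(scores):
    """Subpixel location of the maximum of a 1D score array by parabola fitting."""
    scores = np.asarray(scores, dtype=float)
    i = int(np.argmax(scores))
    if i == 0 or i == len(scores) - 1:
        return float(i)                    # no neighbours on both sides
    left, mid, right = scores[i - 1], scores[i], scores[i + 1]
    denom = left - 2.0 * mid + right       # second difference (curvature)
    if denom == 0.0:
        return float(i)                    # flat neighbourhood, keep integer peak
    return i + 0.5 * (left - right) / denom
```

For a two-dimensional correlation surface, the same fit can be applied independently along each axis around the integer peak.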

Velocities estimation

The linear and angular velocities are then estimated using the same procedure applied by Silva et al. 35 After having obtained the rotation (

where Δ

Likewise, the angular velocity is computed by

where
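One common way to turn an incremental rotation, a translation direction and a scale into velocities is sketched below. It assumes that the linear velocity is the scaled unit translation divided by the sampling interval and that the angular velocity is the rotation vector (axis times angle) divided by the same interval; this is an illustrative convention, and the names are hypothetical.

```python
import numpy as np

def rotation_vector(R):
    """Axis-angle vector of a rotation matrix (not robust near 180 degrees)."""
    cos_theta = np.clip((np.trace(R) - 1.0) / 2.0, -1.0, 1.0)
    theta = np.arccos(cos_theta)
    if theta < 1e-12:
        return np.zeros(3)
    axis = np.array([R[2, 1] - R[1, 2],
                     R[0, 2] - R[2, 0],
                     R[1, 0] - R[0, 1]]) / (2.0 * np.sin(theta))
    return theta * axis

def velocities(R, t_dir, scale, dt):
    """Linear and angular velocity from an incremental motion over dt seconds."""
    t_dir = np.asarray(t_dir, dtype=float)
    v = scale * t_dir / np.linalg.norm(t_dir) / dt
    w = rotation_vector(R) / dt
    return v, w
```

At 10 fps, for instance, `dt` would be 0.1 s, so a 0.5 m displacement between frames corresponds to 5 m/s.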

Kalman filter

In order to achieve a smoother estimate, we filter the linear and angular velocity estimates using a Kalman filter with a constant velocity model. The state transition model with zero-mean stochastic acceleration is given by

where the state transition matrix is the identity matrix,

The observation model considers state observations with additive noise

where the observation matrix

We set the covariance matrices

where

The
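A constant velocity Kalman filter of this kind can be sketched as follows. The state is taken to be the six velocity components, the state transition matrix is the identity as stated above, and the observation matrix is assumed to be the identity as well since the state is observed directly. The noise levels `q` and `r` are hypothetical placeholders, not the values used in the experiments.

```python
import numpy as np

class VelocityKalmanFilter:
    """Kalman filter with identity transition and identity observation models."""

    def __init__(self, dim=6, q=1e-2, r=1e-1):
        self.x = np.zeros(dim)          # filtered linear and angular velocities
        self.P = np.eye(dim)            # state covariance
        self.Q = q * np.eye(dim)        # process noise (stochastic acceleration)
        self.R = r * np.eye(dim)        # observation noise

    def step(self, z):
        # Predict: with F = I the state is unchanged and the covariance grows by Q.
        P_pred = self.P + self.Q
        # Update: with H = I the innovation is simply z - x.
        K = P_pred @ np.linalg.inv(P_pred + self.R)
        self.x = self.x + K @ (np.asarray(z, dtype=float) - self.x)
        self.P = (np.eye(len(self.x)) - K) @ P_pred
        return self.x.copy()
```

The ratio between `q` and `r` sets the trade-off between smoothness and responsiveness: a smaller `q` trusts the constant velocity assumption more and yields smoother but slower-reacting estimates.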

Results

In order to evaluate the accuracy of PSET, we performed evaluation tests with synthetic and real image data. For comparison purposes, we used LIBVISO 18 as a state-of-the-art deterministic egomotion estimation method. The choice was based on the fact that it is an open-source 6D VO library with a filtering step equivalent to ours (constant velocity model).

Synthetic images results

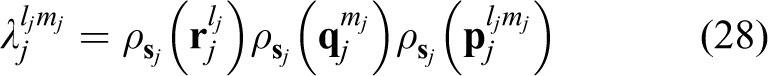

As a first test for evaluating the egomotion estimation accuracy of the PSET method, we utilized a sequence of synthetic stereo images. The sequence was created using a VRML-based simulator and depicts a quite difficult scene (see Figure 6) in which the images contain a great deal of repetitive structure that causes ambiguity in image point correspondence. The sequence is composed of four linear tracks (see Figure 7), as we are more interested in evaluating the performance of the method in the estimation of the translation scale factor.

Synthetic images stereo pairs for translation scale motion estimation

Generated and estimated trajectories in the synthetic image experiment.

We assume a calibrated stereo camera pair with a 10-cm baseline, 576 × 380 image resolution, with ZNCC window

In Figure 7, one can observe the generated and the estimated trajectories obtained using PSET and LIBVISO.

From Table 1, we can observe that PSET obtains a more accurate egomotion estimation, having less root mean square (RMS) error than LIBVISO in all velocity components. This turns out to be more evident in the computation of the velocity norm over the global motion trajectory, where PSET results are almost 50% more accurate than the ones displayed by LIBVISO.

Comparison of the standard mean squared error between PSET and LIBVISO.

PSET: probabilistic stereo egomotion transform.

In this experiment, we focused on the evaluation of translational motion estimation, since the angular velocity case was already demonstrated by Silva et al. 35

In Figure 8, we can observe a box plot of the instantaneous linear velocity error distribution during the sequence. PSET clearly performs better in terms of the mean, median and variance of the error. Figure 9 shows the same information discriminated by coordinate axis, where the same tendency is observed, especially for the

Error distribution ||

Error distribution of estimated linear velocities obtained by PSET and LIBVISO in all three axes (

Real image sequences

The evaluation of PSET in real images was performed using the KITTI data set, 39 which is composed of stereo image sequences. The KITTI data set was acquired with a car in different road scenarios (urban streets, countryside and highways), providing stereo image sequences in colour or greyscale format at 10 fps, 1.4-MP image resolution (1334 × 391) with IMU/GPS (OXTS RT 3003) information to act as external validation. In the study by Silva et al., 35 PSET and LIBVISO were already compared using that data set, and the results showed that PSET outperforms LIBVISO in both linear and angular velocity estimation. In this work, we perform novel experiments with added Gaussian noise and image blur. We want to evaluate the egomotion estimation accuracy not only in normal conditions but also with unfavourable image characteristics, typical of outdoor scenarios. The main argument we want to validate is that probabilistic methods, although requiring additional computations, can be more effective in robotic scenarios where image conditions are far from ideal and deterministic egomotion estimation methods tend to fail.

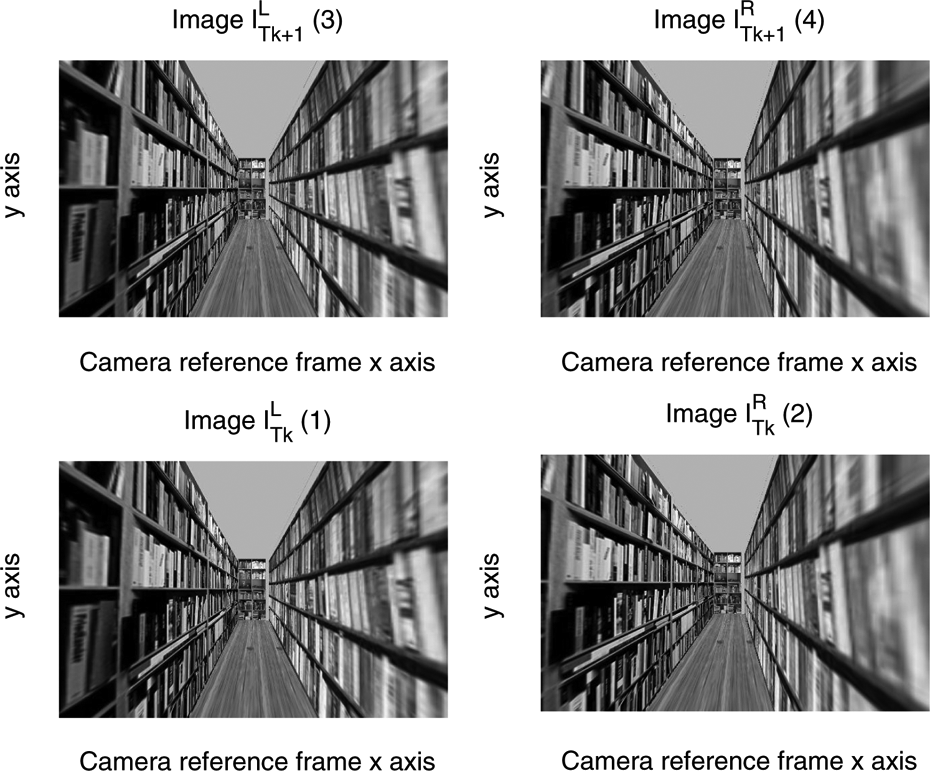

In Figure 10, we show an example of an image of the KITTI data set and the corresponding corrupted images with different values of Gaussian noise. Table 2 shows results for PSET and LIBVISO with three different noise powers: 0.001, 0.002 and 0.005 variance in grey level units in the 0–255 range.
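A corruption of this kind can be reproduced along the following lines. The sketch interprets the variance relative to intensities normalised to [0, 1] before converting back to 8-bit grey levels; this interpretation is an assumption, as are the function name and the clipping strategy.

```python
import numpy as np

def add_gaussian_noise(img_u8, variance, rng=None):
    """Add zero-mean white Gaussian noise to an 8-bit greyscale image.

    The variance is assumed to refer to intensities normalised to [0, 1].
    """
    rng = np.random.default_rng() if rng is None else rng
    x = img_u8.astype(np.float64) / 255.0
    noisy = x + rng.normal(0.0, np.sqrt(variance), size=x.shape)
    return np.round(np.clip(noisy, 0.0, 1.0) * 255.0).astype(np.uint8)
```

Passing a seeded generator, for example `np.random.default_rng(0)`, makes the corrupted sequence reproducible so that both methods are evaluated on exactly the same images.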

Original image from KITTI data set drive 2011-09-26-0091, and corrupted versions with white Gaussian noise of variance 0.001, 0.002 and 0.005 in an image grey level range between 0 and 255.

RMS for PSET and LIBVISO under different values of image Gaussian noise.

RMS: root mean square; PSET: probabilistic stereo egomotion transform.

We measure accuracy as the RMS error between the estimates and the IMU/GPS information. Quantitative results are shown in Table 2 and Figures 11 and 12. The results show that the PSET method is more accurate than LIBVISO under all values of added Gaussian noise. Furthermore, as the noise power grows, the PSET method shows bigger improvements. For the largest noise power tested, PSET reduces the error by 23% for the linear velocities and 26% for the angular velocities.
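The error metric and the relative improvement can be computed as sketched below. The function names are hypothetical, and the per-sample error is assumed here to be the Euclidean norm of the velocity difference.

```python
import numpy as np

def rms_error(estimates, reference):
    """RMS of the per-sample Euclidean error between (N, 3) velocity arrays."""
    diff = np.asarray(estimates, dtype=float) - np.asarray(reference, dtype=float)
    per_sample = np.linalg.norm(diff, axis=1)
    return float(np.sqrt(np.mean(per_sample ** 2)))

def relative_reduction(rms_a, rms_b):
    """Percentage by which method A reduces the RMS error of method B."""
    return 100.0 * (rms_b - rms_a) / rms_b
```

A positive value of `relative_reduction` means that method A is more accurate than method B with respect to the same reference.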

Error distribution of the magnitude of linear velocity computed by PSET and LIBVISO, images corrupted with Gaussian noise with variance 0.001, 0.002, 0.005, denoted, respectively, 1×, 2×, 5×. PSET: probabilistic stereo egomotion transform.

Error distribution of the magnitude of angular velocities computed by PSET and LIBVISO images corrupted with Gaussian noise with variance 0.001, 0.002, 0.005, denoted, respectively, 1×, 2×, 5×. PSET: probabilistic stereo egomotion transform.

In Figures 11 and 12, we show the error distribution of the linear and angular velocity magnitude computed by PSET and LIBVISO, for all tested noise powers. The accuracy of the egomotion estimation obtained by PSET is higher, since it displays a lower median error than LIBVISO in all cases.

Original image from KITTI data set drive 2011-09-26-0091, and corrupted versions with blur 1, 3, 5 pixels standard deviation denoted as 1×, 2×, 5×.

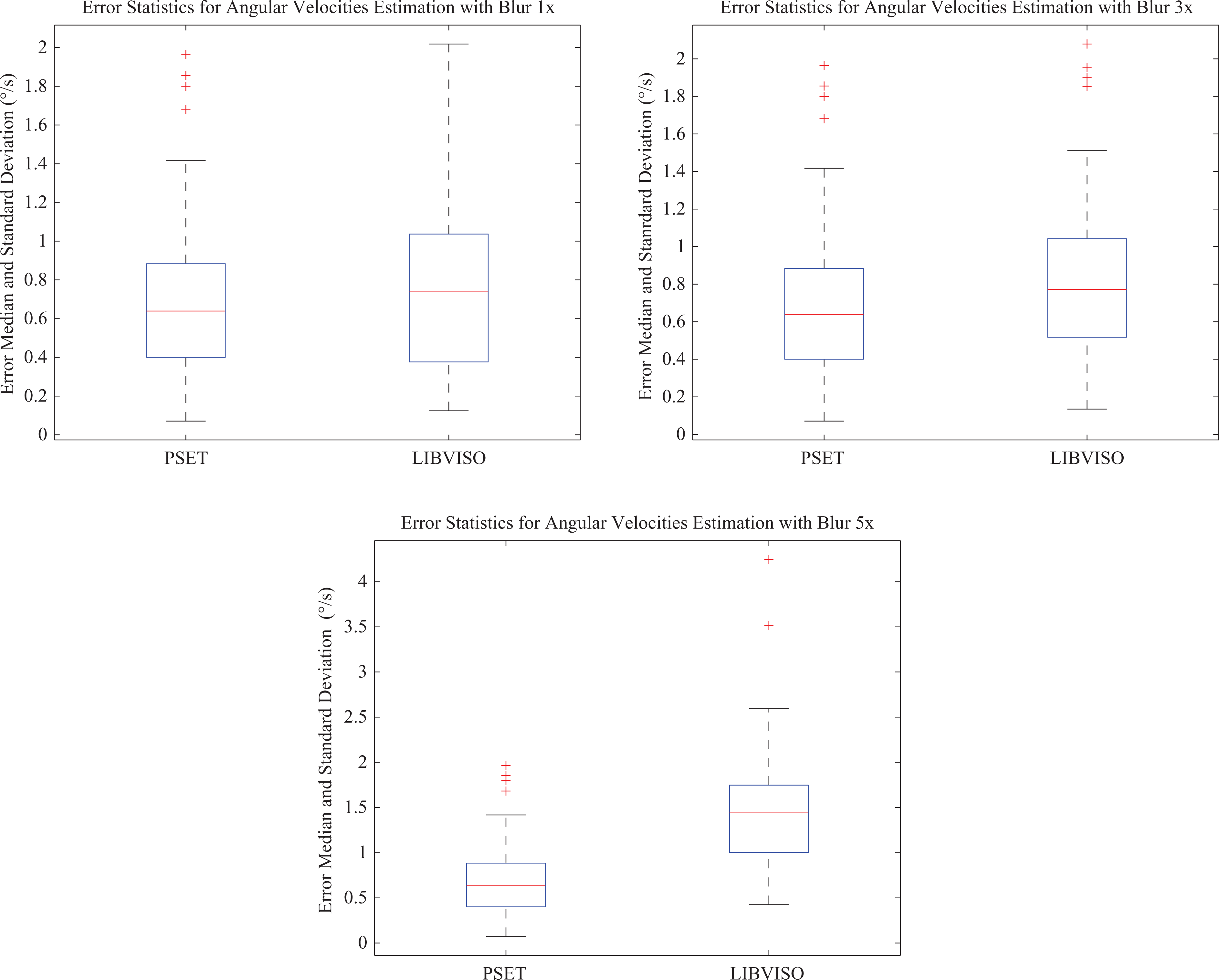

In Table 3, we show the RMS error of PSET and LIBVISO with respect to the IMU/GPS information for different levels of blur (1.0). The corrupted images were created by applying a low-pass Gaussian filter of the image size with 1×, 3×, 5× standard deviation.
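Gaussian blurring can be sketched with a separable filter in plain NumPy. Unlike the image-sized filter mentioned above, this sketch truncates the kernel at three standard deviations and replicates the border pixels; both choices are assumptions made to keep the example short.

```python
import numpy as np

def gaussian_kernel(sigma):
    """Normalised 1D Gaussian kernel truncated at 3 standard deviations."""
    radius = int(np.ceil(3.0 * sigma))
    x = np.arange(-radius, radius + 1, dtype=float)
    k = np.exp(-x ** 2 / (2.0 * sigma ** 2))
    return k / k.sum()

def gaussian_blur(img, sigma):
    """Blur a 2D greyscale image with a separable Gaussian low-pass filter."""
    k = gaussian_kernel(sigma)
    r = len(k) // 2
    padded = np.pad(np.asarray(img, dtype=float), r, mode="edge")
    # Filter along rows, then along columns; "valid" removes the padding again.
    rows = np.apply_along_axis(lambda v: np.convolve(v, k, mode="valid"), 1, padded)
    return np.apply_along_axis(lambda v: np.convolve(v, k, mode="valid"), 0, rows)
```

Larger values of `sigma` remove more high-frequency texture, which flattens correlation peaks and makes a committed one-to-one match less reliable.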

RMS error for PSET and LIBVISO under different values of blur.

RMS: root mean square; PSET: probabilistic stereo egomotion transform.

Again results show that PSET is more accurate than LIBVISO in the presence of higher quantities of blur. The error difference between PSET and LIBVISO increases from 7% for low values of blur (1×) to 48% and 32% in the linear and angular velocity estimation for high values of blur (5×).

In Figures 14 and 15, we can see the error distributions of linear and angular velocities both for PSET and LIBVISO. Again, PSET exhibits lower median error when compared to LIBVISO for all values of image blur.

Error distribution of the magnitude of the linear velocities PSET and LIBVISO, images corrupted with a Gaussian blur filter with 1×, 2×, 5× pixels standard deviation. PSET: probabilistic stereo egomotion transform.

Error distribution of the magnitude of the angular velocities PSET and LIBVISO, images corrupted with a Gaussian blur filter with 1×, 2×, 5× pixels standard deviation. PSET: probabilistic stereo egomotion transform.

The difference in accuracy between PSET and LIBVISO is bigger for the synthetic image scenario than for the real image data sets. This may be due to two causes. First, the ground-truth information used in the two experiments is different. In the synthetic image scenario, we generate the VRML simulation and therefore the world points have a precise position that provides a reliable egomotion trajectory verification. For the real image data set, on the contrary, IMU/GPS information is used. The IMU/GPS information is subject to bias and noise, and therefore, it can only be considered as weak ground-truth information. Second, the KITTI sequence does not contain such repetitive image structure, and therefore, point correspondence ambiguity is lower.

Conclusions and future work

The probabilistic approach for stereo visual egomotion estimation described in this work has proven to be an accurate method of computing stereo egomotion. The proposed approach is very robust because no explicit matching or feature tracking is necessary to compute the vehicle motion. To the best of our knowledge, this is the first implementation of a fully dense probabilistic method to compute stereo egomotion. The results demonstrate that PSET is more accurate than other state-of-the-art 3D egomotion estimation methods, significantly improving the overall accuracy in linear and angular velocity estimation. We have shown improvements of up to 50% in a synthetic image scenario with highly repetitive texture and ground-truth information, and above 20% in real images with large amounts of blur and noise with respect to the IMU/GPS reference. One of the main advantages of probabilistic egomotion estimation methods is their higher robustness in difficult imaging scenarios, for example, in the presence of image noise or blur. In the experiments conducted, PSET achieved a better performance than LIBVISO, and the improvement (error difference) between both methods increased in the presence of higher values of image noise and blur. Despite the clear advantages over other state-of-the-art methods, its effectiveness and usefulness in mobile robotics scenarios require further improvements in the computational implementation in order to achieve real-time functionality. Given the highly parallel nature of the algorithm, composed of many independent operations, in future work, we plan to develop a PSET GPU implementation to achieve real-time performance. Another objective is to pursue further validation of the PSET algorithm in other heterogeneous mobile robotics scenarios, especially in aerial and underwater robotics, where the lack of image texture combined with high matching ambiguity provides an ideal scenario for further assessing the robustness of the proposed methodology.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work is financed by the Coral project NORTE-01-0145-FEDER-000036, and by National Funds through the FCT - Fundação para a Ciência e a Tecnologia (Portuguese Foundation for Science and Technology) as part of project UID/EEA/50014/2013 and project UID/EEA/50009/2013.