Abstract

This study analyzes recipient design in handover conversations involving customers, customer service chatbots, and human agents, and examines how repair strategies initiated by chatbots and human agents affect conversational progressivity. These text-based conversations were analyzed using Conversation Analysis. Findings show that customers formulate their requests and subsequent messages concisely when communicating with a chatbot (pre-handover), but use details, full sentences, and politeness markers when communicating with a human agent (post-handover). Moreover, both chatbots and human agents frequently initiate repair to address troubles of understanding promptly, but their approaches differ: chatbots generalize inquiries broadly, often resulting in multiple repair sequences, whereas human agents use follow-up questions to specify and guide responses, thereby ensuring progressivity.

Keywords

Introduction

Nowadays, chatbots are used in many sectors, such as travel, entertainment, news, shopping, and customer service (Grudin and Jacques, 2019). In customer service, intent-based chatbots have become a fundamental tool, assisting customers with their requests and supporting human agents (Martijn et al., 2024). However, their reliance on pre-programmed dialogues presents a limitation: they have difficulty understanding natural language (Ashktorab et al., 2019; Reinkemeier and Gnewuch, 2022). When customers phrase their queries in ways that do not align with the chatbot’s intent recognition, they do not receive an appropriate response (Avgustis et al., 2021). Consequently, troubles of understanding arise and the progressivity of the conversation is hindered. This problem in human-chatbot communication underscores the need for “handovers”, in which the conversation is transferred from the chatbot to a human agent to ensure an accurate and personalized response (Martijn et al., 2024; Poser et al., 2022; Waizenegger et al., 2020).

Previous research on human-chatbot communication has predominantly focused on dyadic human-machine interaction (Ashktorab et al., 2019; Dombi et al., 2022; Følstad and Taylor, 2019) but has not specifically examined handover conversations, in which a triadic interaction unfolds between chatbot, customer, and human agent. Although these three parties do not communicate simultaneously, each part of the interaction shapes the next: the interaction between customer and chatbot influences how the customer engages with the human agent. How the communicative dynamics of these triadic handover conversations affect conversational progressivity, however, remains poorly understood. This paper addresses this gap by applying Conversation Analysis (CA) to real-life handover interactions, offering a novel focus on the triadic communication dynamics among these interlocutors. The widespread adoption of AI underscores the growing need to understand the practical organization of human-computer interaction, for which CA offers a valuable methodological approach (Stokoe et al., 2024). This understanding is essential for evaluating the broader societal implications of artificial intelligence, which are becoming increasingly important as chatbots and other AI technologies are more deeply integrated into daily interactions. The current work contributes to this understanding by examining how recipient design (Sacks et al., 1978) in human-chatbot interaction shapes conversational progressivity. Beyond its theoretical contributions, this study has practical relevance: by revealing distinctive communication patterns in these interactions, it offers actionable insights for enhancing chatbot communication strategies and improving chatbot training for more effective customer service.

The paper is structured as follows. First, the conversation-analytic concepts of progressivity, repair, and recipient design are discussed for both human-human and human-chatbot communication. Next, the characteristics of the collected handover conversation data are explained, followed by a detailed analysis of the patterns identified in the data.

Intersubjectivity, progressivity, and repair in human-to-human and human-chatbot communication

For effective human-to-human interaction to take place, there must be some level of intersubjectivity (Heritage, 1984a, 1984b; Schegloff, 1992) or common ground (Clark, 2009). In such interactions, responding to prior speakers’ turns allows recipients to demonstrate their understanding of what was said, thereby providing speakers with evidence of whether, and how, their turns have been understood (Sacks et al., 1974). The turn-by-turn organization of conversation therefore provides a routine display of intersubjectivity by requiring participants to “continually, there and then [. . .] demonstrate to one another that they understood or failed to understand the talk that they are party to” (Moerman and Sacks, 1971: 185). Alongside this, there is a preference for progressivity in human conversation: the normative expectation that the interaction will move forward through the timely production of next relevant actions, without interruption (Schegloff, 2007; see also Heritage, 2007). For instance, asking a question projects an immediate response as the next relevant action. The preference for progressivity becomes particularly apparent when a participant is selected as next speaker but fails to respond. In such cases, other participants may step in to provide a response, treating it as preferable to advance the sequence rather than to delay its progress while waiting for the selected speaker to respond, or to risk further non-response (Stivers and Robinson, 2006).

However, maintaining (an adequate level of) intersubjectivity may temporarily interrupt progressivity through the initiation of “repair”. The conversation-analytic notion of repair refers to “an organized set of practices through which participants in conversation are able to address and potentially resolve [. . .] problems of speaking, hearing or understanding” (Sidnell, 2011: 110; see also Schegloff, 2007). Troubles of understanding arise, for instance, when recipients fail to grasp a specific word or the broader context of the conversation (Drew, 1977). In such cases, repair practices such as clarification requests (e.g., “What did you say?”) can be used to resolve the trouble. Thus, while repair may momentarily disrupt the progressivity of an ongoing interaction (Schegloff, 2007), it remains an essential resource for maintaining intersubjectivity (Heritage, 2007) and, by extension, for securing progressivity itself, enabling participants to resume the interaction without significant disruption (Schegloff, 1979).

In human-chatbot communication, however, human interlocutors cannot rely on intersubjectivity or common ground in the traditional sense, owing to chatbots’ limited “intention recognition” (Dombi et al., 2022). Intention recognition refers to the chatbot’s classification of a customer’s utterance to determine the appropriate pre-programmed context for responding to the customer’s request (Pearce et al., 2023). This process constrains human-chatbot communication: if customers do not formulate their queries in a way that aligns with the chatbot’s intent recognition capabilities, they may not receive the desired response (Avgustis et al., 2021). Accurate identification of customer intent is crucial for generating contextually relevant responses. However, chatbots have a limited ability to understand natural language (Reinkemeier and Gnewuch, 2022), which hinders the achievement of intersubjectivity and, consequently, progressivity. Disruptions often emerge when the chatbot attempts to address troubles of understanding through conversational repair, because humans and chatbots approach repair in fundamentally different ways. In human-human interaction, both interlocutors take on accountability in repair, jointly working on the interactional project of re-establishing intersubjectivity. In human-chatbot interaction, by contrast, the chatbot’s repair practices are oriented toward the more limited interactional project of intent recognition, which differs from the customer’s project of reaching resolution through intersubjectivity. As a result, the chatbot merely signals troubles of understanding without specifying the trouble source; the responsibility for identifying the trouble source and achieving resolution is thus placed on the customer (Dingemanse and Enfield, 2024).

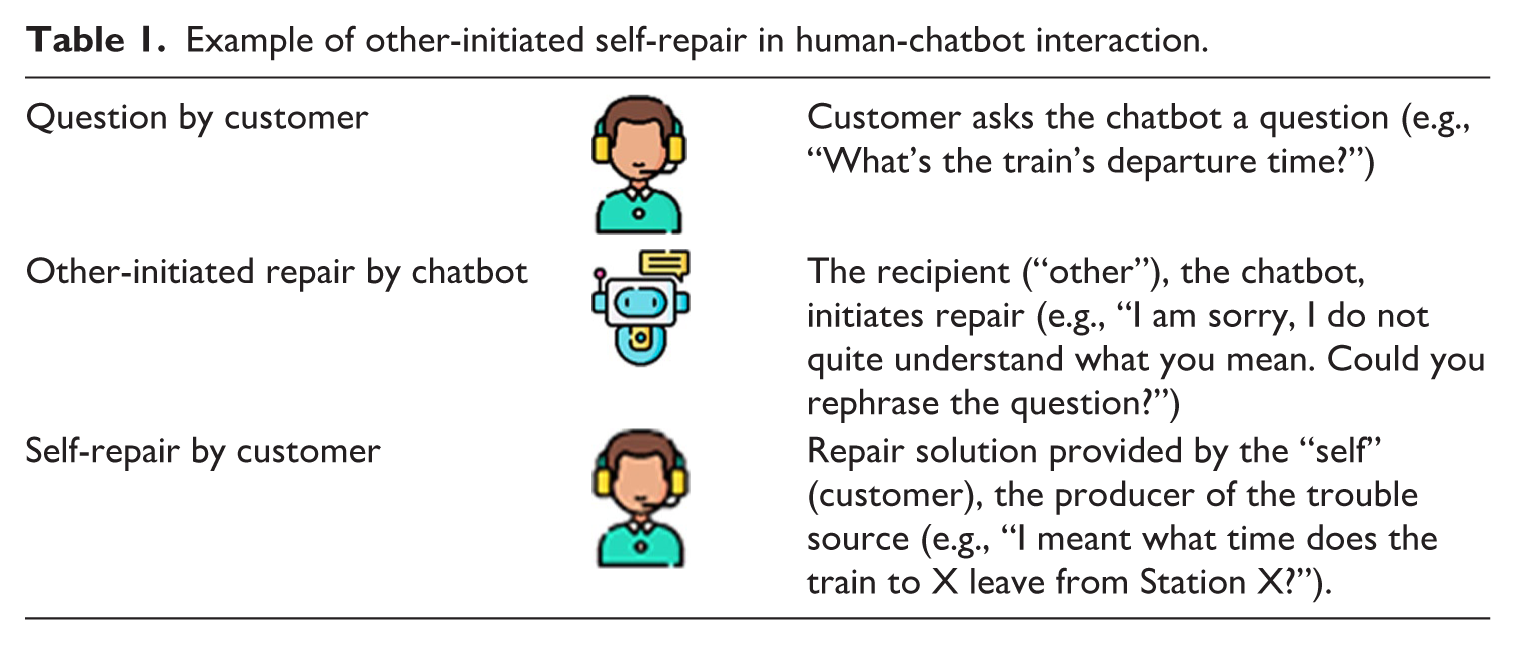

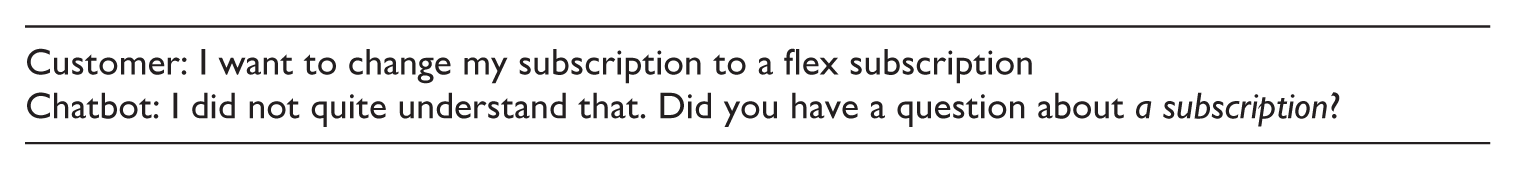

Repair can be initiated either by the speaker of the utterance that needs to be repaired (“self-initiated repair”) or by the recipient (“other-initiated repair”) (Sidnell, 2011). Similarly, the repair solution can be provided by either the speaker or the recipient. In human-to-human interactions, speakers typically prefer self-initiated repair over other-initiated repair (Schegloff et al., 1977). In human-chatbot interactions, however, troubles of understanding often arise from chatbots’ limited intent recognition. Repair initiated by the recipient, that is, the chatbot, is therefore more common, followed by the provision of the repair solution by the customer (Ashktorab et al., 2019). Table 1 exemplifies this other-initiated self-repair, which is frequently found in human-chatbot interactions.

Table 1. Example of other-initiated self-repair in human-chatbot interaction.

When a chatbot displays troubles of understanding, most customers abandon the conversation after the chatbot fails to answer their request (Akhtar et al., 2019), whereas in human-to-human conversations people are more inclined to (try to) resolve the trouble. Nevertheless, in human-chatbot conversations, both the chatbot and the customer have ways to resolve troubles of understanding: the chatbot can suggest continuing the conversation with a human agent, or the customer can request to be transferred to one. When customers are transferred to a human agent, they are introduced to a new interlocutor and must adapt their communication style from interacting with a chatbot to engaging with a human. This transition highlights the importance of recipient design, as customers adjust their communication to the perceived understanding and capabilities of the new interlocutor. The following section focuses on how recipient design is enacted during this switch between interlocutors.

Recipient design and progressivity in human-chatbot interaction

Studies on recipient design (Sacks et al., 1978) have examined how speakers tailor their messages to their assumptions or expectations about the intended recipient(s). This adaptation involves structuring utterances linguistically and reformulating phrases to ensure immediate comprehension by the recipient (An et al., 2021; Sacks et al., 1978). For example, when talking to someone you do not know, you might refer to “my brother” instead of using his name, “Jonas”. When speaking with friends, by contrast, referring to him as “Jonas” is appropriate, given their background knowledge of your family situation. Recipient design is closely linked to progressivity in conversation: by tailoring their speech so that it is easily understood by the recipient, speakers facilitate a smooth and uninterrupted flow of conversation.

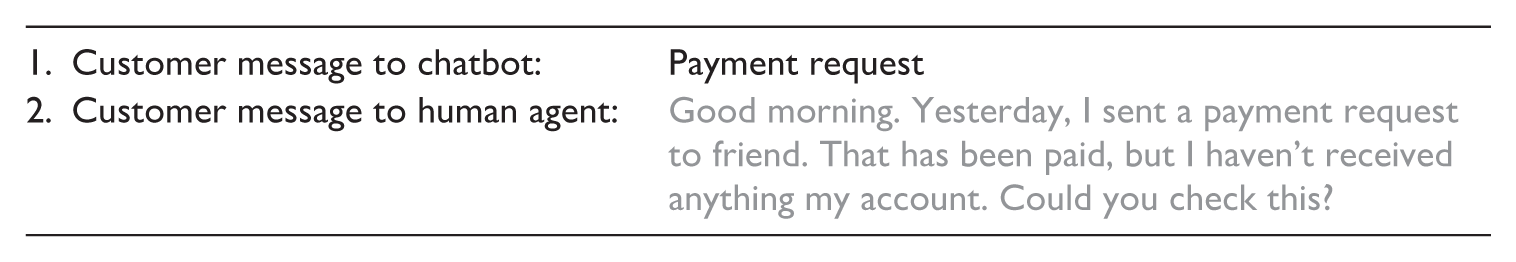

Prior work on recipient design in chatbot communication has shown that humans adjust their communication to suit non-human recipients, which leads to more successful outcomes than when they treat the chatbot as a human (Dippold, 2024). A customer’s failure to tailor their communication to the chatbot significantly hinders the conversation’s progressivity. Consider an example from the data used for this paper, which shows two ways in which the same customer opens conversations with either a chatbot (1) or a human agent (2). The customer uses only a noun phrase (“payment request”, line 1) when addressing the chatbot, but formulates a complete message when addressing the human agent (line 2), thus adjusting their communicative behavior to their perception of the chatbot’s limited capabilities.

Consequently, by employing recipient design and making their utterances understandable to the chatbot, human customers ensure the progressivity of the conversation (cf. Avgustis et al., 2021).

This study

The present study investigates progressivity, repair, and recipient design in human-chatbot conversations, focusing specifically on transitions from a chatbot to a human agent, referred to as “handovers”. During handovers, the recipient changes: customers initially interact with the chatbot, but when the chatbot is unable to address a particular issue or fails to understand the customer’s request, the interaction is transferred to a human agent. While chatbot-initiated repair could secure progressivity by addressing problems in user input, our analysis reveals that such repair initiations often escalate interactional trouble instead of resolving it. Rather than clarifying the issue and enabling the user to complete their intended action, these repair sequences often lead to breakdowns that prompt the chatbot to offer a handover to a human agent as a solution. In handover conversations, customers need to adjust their communicative behavior to the characteristics and expectations of the new recipient. By analyzing both the chatbot’s uptake of the customer’s turn before the handover and the human agent’s uptake afterward, we illustrate how the repair strategies used by the chatbot hinder the conversation’s progressivity, while the human agent’s strategies facilitate it. Furthermore, by comparing customer-chatbot and customer-human agent interactions before and after the handover, we illustrate how recipient design unfolds in handover conversations: customers adapt their messages to their apparent understanding of the chatbot and of the human agent as recipients, but in markedly different ways.

Data and analytical procedure

We analyzed the data using Conversation Analysis (CA). Following this methodology, analyses are grounded in the turn-by-turn development of the chat conversations, addressing the actions achieved by participants, the design of their turns, and how these turns are responded to by recipients (Sidnell and Stivers, 2012). Specifically, we focused on customers’ initial inquiries, distinguishing between those directed at the chatbot (pre-handover) and those directed at the human agent (post-handover). Our approach involved an iterative analytical process with multiple rounds of analysis, including data sessions in which the authors discussed patterns, examined individual instances, and considered both their unique characteristics and their common features.

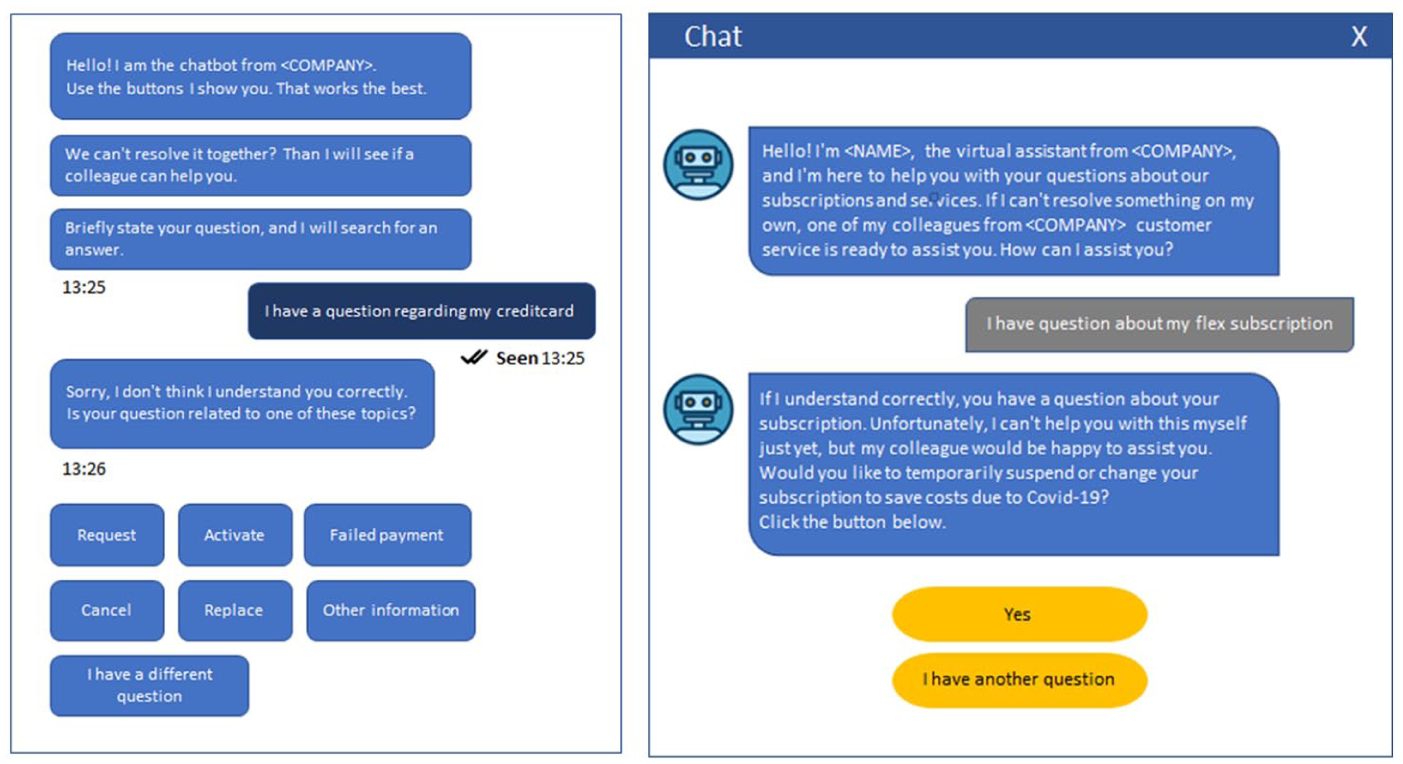

The data for this study were provided by a Dutch financial organization and a Dutch public transport company. The chatbots serve as the initial point of customer contact in the chat channel, where they assist customers with their inquiries. Both are task-based chatbots with pre-programmed dialogues, and customers can communicate with them via text as well as via buttons (Figure 1). We received the data in Excel format, including metadata indicating when buttons were presented to the user. While the actual button texts were not always visible in the data, the metadata did show which button the customer chose. Both chatbots introduced the possibility of a handover in their welcome messages (see, for example, Figure 1). Both organizations took measures to remove personal data and other privacy-sensitive information, such as account numbers or client numbers, before providing us with the data. Anonymized data appear in the corpora as <FILTERED>, <IBAN> and <DIGIT_CODE>. After receiving the data, we filtered the corpora, retaining only conversations in which both the chatbot and the customer produced at least two conversational turns.
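The selection criterion above can be made concrete as a small filter. The sketch below is illustrative only and is not the authors’ procedure: the paper does not describe how the filtering was carried out, and the record format (an ordered list of `(speaker, message)` pairs), the speaker labels `"chatbot"` and `"customer"`, and the helper names `count_turns`, `meets_selection_criterion` and `filter_corpus` are all hypothetical. It also assumes that consecutive messages by the same speaker count as a single turn, so a multi-message welcome sequence counts once.

```python
from itertools import groupby

# Hypothetical record format: an ordered list of (speaker, message) pairs.
# The speaker labels "chatbot" and "customer" are assumptions, not the export's columns.
Conversation = list[tuple[str, str]]


def count_turns(conversation: Conversation) -> dict[str, int]:
    """Count turns per speaker.

    Assumption: a turn is a maximal run of consecutive messages by the same
    speaker, so a three-message welcome sequence counts as one chatbot turn.
    """
    counts: dict[str, int] = {}
    for speaker, _messages in groupby(conversation, key=lambda pair: pair[0]):
        counts[speaker] = counts.get(speaker, 0) + 1
    return counts


def meets_selection_criterion(conversation: Conversation, min_turns: int = 2) -> bool:
    """True if both the chatbot and the customer have at least `min_turns` turns."""
    counts = count_turns(conversation)
    return (counts.get("chatbot", 0) >= min_turns
            and counts.get("customer", 0) >= min_turns)


def filter_corpus(corpus: list[Conversation]) -> list[Conversation]:
    """Keep only the conversations that satisfy the selection criterion."""
    return [conv for conv in corpus if meets_selection_criterion(conv)]
```

Under these assumptions, a conversation shaped like Excerpt 4 (three welcome messages, the initial request, a repair initiation, the repair solution, and a second repair initiation) yields three chatbot turns and two customer turns and would be retained. Counting every message as a separate turn instead would let the welcome sequence alone satisfy the chatbot’s half of the criterion, which is why the turn definition matters.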

Figure 1. Mock-ups of the financial organization chatbot (left) and the public transport chatbot (right).

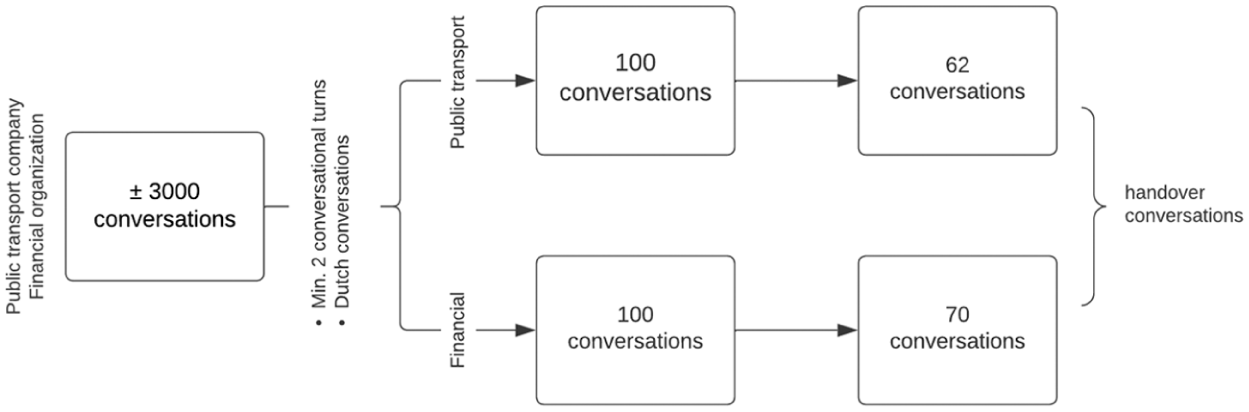

Since the chatbots’ responses were limited by their pre-programmed nature and therefore recurred across conversations, the first author drew a random sample of 200 conversations (100 per company) from the original data. We consider this sample a good reflection of the data, as it covers a range of recurring topics and similar customer requests. For the analysis, we included only conversations that involved a handover from the chatbot to a human agent: 70 conversations from the financial organization and 62 from the public transport company (Figure 2).
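The sampling step can likewise be sketched as a stratified random draw (a fixed number of conversations per company) followed by a filter that keeps only handover conversations. This is a minimal illustration under stated assumptions, not the procedure the authors used: the `ChatRecord` fields, the `has_handover` flag (assumed to be derivable from transfer metadata), the seed, and the function names are hypothetical.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class ChatRecord:
    # Hypothetical fields; the real export's column names are not given in the paper.
    conversation_id: str
    company: str        # e.g. "financial" or "transport"
    has_handover: bool  # assumed to be derivable from the transfer metadata


def stratified_sample(records: list[ChatRecord], per_company: int = 100,
                      seed: int = 0) -> list[ChatRecord]:
    """Draw up to `per_company` records per company, uniformly and without replacement."""
    rng = random.Random(seed)  # fixed seed so the draw can be reproduced
    sample: list[ChatRecord] = []
    for company in sorted({r.company for r in records}):
        pool = [r for r in records if r.company == company]
        sample.extend(rng.sample(pool, min(per_company, len(pool))))
    return sample


def handover_subset(sample: list[ChatRecord]) -> list[ChatRecord]:
    """Keep only conversations in which the chatbot handed over to a human agent."""
    return [r for r in sample if r.has_handover]
```

Drawing without replacement within each company guarantees 100 distinct conversations per organization. The handover counts reported above (70 and 62) come from the authors’ data; toy records passed through this sketch would of course yield different numbers.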

Figure 2. Corpora selection process.

Analysis

Sections “Absence of greeting and concise request” and “Detailed and lengthier inquiries” focus on how customers design their initial inquiries, in terms of recipient design, during handover conversations between the chatbot, customer, and human agent. These sections also show how the chatbot’s and human agent’s repair strategies affect the conversation’s progressivity. Section “Absence of greeting and concise request” shows a prototypical example of recipient design in human-chatbot conversations: when communicating with the chatbot, customers often do not greet it and formulate their request using keywords. In most cases, keyword-based formulations observably lead to understanding on the chatbot’s side. In section “Detailed and lengthier inquiries,” we focus on situations where customers formulate lengthier requests instead of using keywords, and show how this leads to troubles of understanding on the chatbot’s part. Section “Talking to a chatbot versus human agent: Reformulations in handover conversations” compares how customers formulate their inquiries to the chatbot (pre-handover) with how they formulate them to a human agent (post-handover). Finally, the analysis in section “Chatbot versus human agent repair strategies: Impact on progressivity” addresses the uptake of chatbot and human agent repair strategies in response to customer queries. We show how the chatbot’s strategy impedes conversational progressivity, while the human agent’s strategy enables it.

Talking to a non-human agent: Recipient design in customers’ initial inquiries

Absence of greeting and concise request

Excerpts 1 and 2 illustrate how recipient design becomes particularly relevant in customers’ responses to the chatbots’ welcome messages. In all instances within the dataset, the chatbot greeted customers, provided chat guidelines, stated the possibility of a handover, and offered additional communication guidance. Although greetings form an adjacency pair, in which one speaker greets the other (first pair part) and the other responds with a greeting (second pair part) (Schegloff, 1986), in nearly all cases within our dataset customers do not reciprocate the chatbot’s greeting. Instead, they typically formulate their inquiries using keywords or short phrases rather than full sentences, thus demonstrating their awareness of interacting with an artificial agent. Examining the chatbot’s uptake of the customer’s request shows how the formulation of the request (a brief, keyword-based structure) influences the conversation’s progressivity. Excerpt 1 shows the welcome message of the financial organization’s chatbot and how customers typically respond to it.

Excerpt 1. Welcome messages of the chatbot of the financial organization and typical uptake of the customer.

1

Chatbot

Greeting/chat guidelines

Hallo! Ik ben de chatbot van <BEDRIJF>.

Maak gebruik van de knoppen die ik je laat zien. Dat werkt het beste.

Hello! I am the chatbot from <COMPANY>.

Use the buttons I show you. That works the best.

2

Chatbot

Handover statement

Komen we er samen niet uit?

Dan kijk ik of een collega je kan helpen.

We can’t resolve it together?

Then I will see if a colleague can help you.

3

Chatbot

Chat guidelines

Stel kort je vraag, dan ga ik voor je op zoek.

Briefly state your question, and I will search for an answer.

4

Customer

Initial request

pinverzoek afgewezen door de bank

pin request rejected by the bank.

5

Chatbot

Handover

Ik ga kijken of er een collega beschikbaar is die je verder kan helpen. Moment, ik verbind je door.

I will check if a colleague is available to assist you further.

One moment, I’ll connect you.

The moment the customer enters the chat, the chatbot greets them with a standard, pre-defined welcome message (lines 1–3). When introducing the handover possibility, the chatbot adopts a more human-like persona by referring to the customer service representative as a “colleague” (line 2). Additionally, the chatbot provides the customer with interaction guidelines, suggesting they should “briefly state [their] question” (line 3). By asking for a brief request, the chatbot gives the customer clear guidelines. This facilitates progressivity: the chatbot gives explicit instructions on how the customer should interact with it, reducing the chance of troubles of understanding.

As in most conversations within our data, the customer in Excerpt 1 responds with their request rather than greeting the chatbot. The customer formulates the request in a very brief form: “pin request rejected by the bank” (line 4). This phrase lacks articles and a finite verb that would make it a syntactically complete sentence. In response to the customer’s initial request, the chatbot indicates the necessity of a handover and initiates it (line 5).

Unlike the chatbot of the financial organization, the public transport company’s chatbot does not provide any interaction guidelines. Despite this absence, however, the data still reveal patterns of customers formulating brief, keyword-based inquiries, as Excerpt 2 demonstrates. This shows that customers design their messages not (only) on the basis of the instructions they have received, but also on the basis of their expectations of the recipient:

Excerpt 2. Welcome messages of the chatbot of the public transport organization and typical uptake of the customer.

1

Chatbot

Greeting/purpose/handover statement

Hallo! Ik ben <NAAM>, de virtuele assistent van <BEDRIJF> en ik help je graag met je vragen over onze abonnementen en diensten. Mocht ik er zelf niet uitkomen dan staat één van mijn collega’s van <BEDRIJF> klantenservice voor je klaar. Waar kan ik je mee helpen?

Hello! I’m <NAME>, the virtual assistant from <COMPANY>, and I’m here to help you with your questions about our subscriptions and services. If I can’t resolve something on my own, one of my colleagues from <COMPANY> customer service is ready to assist you. How can I assist you?

2

Customer

Initial request

Afgestudeerd en <FILTERED> flex

Graduated and <FILTERED> flex

3

Chatbot

Handover initiation

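Als ik het goed begrijp heb je een vraag over het studentenreisproduct of korting na je afstuderen. Hier kan ik je zelf nog niet verder mee helpen, maar mijn collega kijkt graag met je mee. Klik op onderstaande knop.

If I understand correctly, you have a question about the student travel product or discounts after graduating. I can’t help you with this myself yet, but my colleague is happy to look into it with you. Click the button below.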

Excerpt 2 shows how the customer phrases the request using keywords (“graduated” and “<FILTERED> flex”, line 2) to reference the situation at hand and the specific topic of the request, namely “flex”, a particular type of subscription (“flex subscription”). In line 3, the chatbot displays an understanding of the topic by means of a confirmation sentence: “If I understand correctly, you have a question about the student travel product or discounts after graduating”. The chatbot also signals the need to redirect the customer to a human agent. As in Excerpt 1, the chatbot initiates a handover (line 3), indicating that this is the most suitable option for the conversation’s progressivity.

Detailed and lengthier inquiries

Employing a single-sentence structure in response to the chatbots’ welcome messages, as revealed in Excerpts 1 and 2, seems to contribute to the conversation’s progressivity. This becomes evident when we analyze the detailed and lengthier customer requests in our dataset, which contain multiple sentences, details, and sub-questions rather than a single, concise query. As Excerpts 3 and 4 below show, such requests typically result in chatbot-initiated repairs indicating a problem of understanding (Schegloff et al., 1977; Svennevig, 2008), thereby hindering the conversation’s progressivity.

Excerpt 3 demonstrates how a customer who addresses the chatbot as they would a human interlocutor (line 2) causes trouble in understanding on the chatbot’s part (line 3):

Excerpt 3. Lengthy initial customer request resulting in non-understanding of the public transport chatbot.

1

Chatbot

Greeting/purpose/handover statement

Hallo! Ik ben <NAAM>, de virtuele assistent van <BEDRIJF> en ik help je graag met je vragen over onze abonnementen en diensten. Mocht ik er zelf niet uitkomen dan staat één van mijn collega’s van <BEDRIJF> klantenservice voor je klaar. Waar kan ik je mee helpen?

Hello! I’m <NAME>, the virtual assistant from <COMPANY>, and I’m here to help you with your questions about our subscriptions and services. If I can’t resolve something on my own, one of my colleagues from <COMPANY> customer service is ready to assist you. How can I assist you?

2

Customer

Initial request

Goedemiddag! Ik heb een studentenweekend abonnement, alleen nu krijg ik net een mailtje dat ik ook een dalabonnement heb en dat ik <BEDRAG> moet betalen. Dit is toch dubbelop? <FILTERED> dit stopgezet worden? Met vriendelijke groeten, <FILTERED>

Good afternoon! I have a student weekend subscription, but now I just received an email that I also have an off-peak subscription and that I have to pay <AMOUNT>. Isn’t this double? <FILTERED> can this be stopped? Kind regards, <FILTERED>

3

Chatbot

Repair initiation

Ik begrijp helaas niet helemaal wat je bedoelt. Wil je de vraag nog een keer stellen in andere woorden? Tip: Korte en bondige vragen begrijp ik het beste.

Unfortunately, I don’t completely understand what you mean. Could you please rephrase the question in different words? Tip: I understand short and concise questions best.

The conversation shows how the customer orients to their recipient as if it were a human interlocutor (line 2): the request includes a greeting (“Good afternoon!”), full sentences, a description of the situation and an outline of the problem (“I have a student weekend subscription, but now I just received an email that I also have an off-peak subscription and that I have to pay”), a specific question regarding that context (“Isn’t this double?”), and a request for assistance (“Can this be stopped?”), followed by a polite closing (“Kind regards”). However, this formulation leads the chatbot to display trouble understanding and to initiate repair (line 3), specifically a metacommunicatively explicit repair initiation (e.g., Mostovaia and Pfeiffer, 2023; Raymond and Sidnell, 2019). While the chatbot thereby shifts the responsibility for resolving the trouble source onto the user, the request to “rephrase the question in different words” and the turn-final “tip” (“I understand short and concise questions best”) assist the customer by offering a candidate solution (Svennevig, 2008), signaling that the customer should present their request as a brief, focused question. This type of repair initiation is atypical for human-human interaction (see Mostovaia and Pfeiffer, 2023; Svennevig, 2008), but typical for human-chatbot interaction. In this case, recipient design is based on an apparently “wrong” expectation of the interlocutor: the customer interacts as if they were speaking to a human agent. In line with this, our analysis shows that when customers formulate their inquiries with politeness markers, full sentences, and additional context, as in Excerpt 3, progressivity is hindered, often prompting the chatbot to express troubles of understanding.

We see a similar pattern in Excerpt 4: the customer greets the chatbot and presents a lengthy request (line 4), formulated in full sentences with an elaborate problem presentation (“can you check for me where the bank transfer . . . from yesterday is?”). Next, the chatbot initiates repair, indicating a problem of understanding (Schegloff et al., 1977; Svennevig, 2008) with the preceding “long question” (line 5).

Excerpt 4. Lengthy initial customer request resulting in non-understanding of the financial organization chatbot.

1

Chatbot

Greeting/interaction guideline

Hallo! Ik ben de chatbot van <BEDRIJF>.

Hello! I am the chatbot from <COMPANY>.

2

Chatbot

Handover statement

Komen we er samen niet uit? Dan kijk ik of een collega je kan helpen.

We can’t resolve it together? Then I will see if a colleague can help you.

3

Chatbot

Interaction guideline

Stel kort je vraag, dan ga ik voor je op zoek.

Briefly state your question, and I will search for an answer.

4

Customer

Initial request

goedemorgen. kunt u voor mij nakijken waar de overschrijving van <AMOUNT> <AMOUNT> gisteren is gebleven. ik heb dit geld over gemaakt naar een ASN rekening maar daar is het tot nu toe niet bijgeschreven. ik heb het ook met <AMOUNT> <AMOUNT> geprobeerd en deze is wel bijgeschreven

good morning. can you check for me where the bank transfer of <AMOUNT> <AMOUNT> from yesterday is. I transferred this money to an ASN account but up until now it has not been credited. I have also tried it with <AMOUNT> <AMOUNT> and this has been credited

5

Chatbot

Repair initiation

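Dat is een lange vraag. Sorry, kun je ‘m iets korter maken? Bijvoorbeeld: hoe activeer ik mijn betaalpas

That is a long question. Sorry, can you shorten it a little? For example: how do I activate my debit card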

6

Customer

Repair solution

Overschrijving

Bank transfer

7

Chatbot

Repair initiation

Wat is precies je vraag met betrekking tot overboeken?

What exactly is your question regarding transferring money?

In line 5, the chatbot initiates repair that implicitly conveys a problem of understanding (“That is a long question”) and offers a candidate solution by instructing the customer to shorten the request (“Sorry, can you shorten it a little? For example: how do I activate my debit card”), a form of repair initiation that is atypical for human-to-human interaction (see Mostovaia and Pfeiffer, 2023; Svennevig, 2008). While this repair hinders the conversation’s progressivity, it also serves it by determining whether, and how, the chatbot can help the customer (cf. Heritage, 2007; Schegloff, 2007). The customer responds by reducing their original, longer request to a keyword-based formulation (line 6). The chatbot then produces another repair initiation in the form of an interrogative that partially repeats the trouble-source turn (line 7; Golato and Golato, 2015). In doing so, the chatbot highlights the importance of accurately identifying customer intent to generate a relevant response (Dombi et al., 2022).

Overall, our analyses have revealed that lengthy requests (i.e., requests containing multiple sentences, details, and sub-questions rather than a single, concise query) impede the conversation’s progressivity, as the chatbot indicates that it has trouble understanding the customer’s intent. However, when customers rephrase their original, lengthy request into a keyword-based formulation, typically prompted by the chatbot, the chatbot displays intent recognition, facilitating the conversation’s progressivity.

Talking to a chatbot versus human agent: Reformulations in handover conversations

In this section, we illustrate how customers reformulate their requests when the conversation is transferred from a chatbot to a human agent. By juxtaposing the customers’ initial message to the chatbot with their message to the human agent, we observe a difference in customers’ communicative behavior towards the chatbot and the human agent.

The analysis shows that customers tend to formulate brief, simple one-sentence requests when interacting with the chatbot (pre-handover). When addressing a human agent (post-handover), they reformulate their original requests into more contextually specific and elaborate sentences. Comparing the wording addressed to these two interlocutors suggests that formulating a clear problem presentation is a challenge for customers. Recipient design plays an important role here: while customers provide elaborate explanations to the human agent, they orient to the chatbot’s limited capabilities in understanding natural language by reducing a complete message to keywords. When customers do give the chatbot the same elaborate explanation they would give the human agent, however, this results in multiple repair sequences, in which the conversation repeatedly returns to the same topic or request, impeding the conversation’s progressivity.

Excerpt 5 illustrates how a customer reformulates their request. Line 2 shows the customer’s request as originally formulated towards the chatbot: “question regarding ticket”. The customer directly states the request without greeting the chatbot and phrases it in a very general, unspecified manner, leaving it unclear what the customer specifically wants to know about the ticket. Line 8, by contrast, shows the opening of the conversation post-handover, after the customer has been transferred to a human agent. Here, the customer formulates the request in a very different way.

Excerpt 5. Customer’s formulations of the request to the public transport chatbot (pre-handover) and to a human agent (post-handover).

| Line | Phase | Speaker | Original (Dutch) | English translation |
|---|---|---|---|---|
| 2 | Pre-handover | Customer | Vraag over <TICKET> | Question regarding <TICKET> |
| 8 | Post-handover | Customer | Goedemiddag, ik wil samen met mijn <FILTERED> een reis plannen naar <FILTERED>. Klopt mijn aanname dat ik <FILTERED> een daluur kaartje <FILTERED> 0 <FILTERED> aanschaffen en voor mijn <FILTERED> een <TICKET> kaartje <FILTERED> <FILTERED> bijkopen? Dus samen een retour <FILTERED> een totaalbedrag <FILTERED> | Good afternoon, I want to plan a trip together with my <FILTERED> to <FILTERED>. Is my assumption correct that I <FILTERED> purchase an off-peak hour ticket <FILTERED> <FILTERED> and purchase a <TICKET> ticket <FILTERED> 0 <FILTERED> additionally for my <FILTERED>? So together a return <FILTERED> a total <FILTERED> |

Post-handover, the customer provides much more information regarding the request, specifying that it concerns an off-peak hour return ticket for two persons (line 8). Additionally, line 8 shows how the customer reformulates the initial keyword-based request into a full question (“Is my assumption correct that I . . . purchase an off-peak hour ticket . . . and purchase a . . . ticket . . . additionally for my . . .?”).

Excerpt 6 shows a similar pattern of reformulation and recipient design for a customer request posed to the financial organization chatbot and the human agent. Line 4 shows how the customer had phrased their initial keyword-based request towards the chatbot, which lacks specification. However, the question to the human agent after the handover is very different (line 7).

Excerpt 6. Customer’s formulations of the request to the financial chatbot (pre-handover) and to a human agent (post-handover).

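| Line | Phase | Speaker | Original (Dutch) | English translation |
|---|---|---|---|---|
| 4 | Pre-handover | Customer | betaalverzoek | payment request |
| 7 | Post-handover | Customer | goedemorgen. gister heb ik een betaalverzoek gestuurd naar een vriend. die is betaald alleen heb ik niks ontvangen op mijn rekening. zou je dit na kunnen kijken? | good morning. yesterday I sent a payment request to a friend. it has been paid, but I haven’t received anything in my account. could you check this? |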

Post-handover, the customer greets the human agent and reformulates their original request (“payment request”, line 4) into a question (“could you check this?”, line 7). In doing so, they provide additional context by specifying the time (“yesterday”), the situation (“I sent a payment request to a friend”), and the problem (“I haven’t received anything in my account”). In line with the pattern shown in Excerpt 5, the customer’s original concise request is reformulated into an elaborate request that adds context and specificity to the problem presentation. This adjustment in recipient design suggests a strategic adaptation to engage effectively with a human agent. The transition from the initially brief request to a more detailed one indicates a recognition of the need for clarity and specificity when communicating with a human agent, compared to the earlier interaction with the chatbot.

Chatbot versus human agent repair strategies: Impact on progressivity

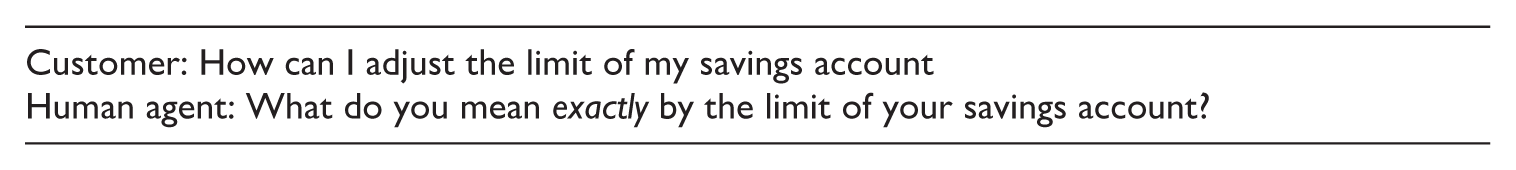

This section highlights a pattern in our data: the chatbot’s repair strategies differ from those of the human agent. Our analysis revealed that the chatbot displays troubles of understanding but does not specify the trouble source. Instead, it attempts to achieve intent recognition through a generalizing strategy, clarifying the overarching topic of the request even though the customer has already introduced that topic in their initial utterance. The resulting back-and-forth revolves more around identifying the topic of the customer’s request than around resolving it. In this way, the chatbot determines the general subject of the query but struggles to identify the specific problem. This generalizing approach reduces the conversation’s progressivity and ultimately leads to a form of intent recognition that confirms the chatbot’s inability to assist the customer effectively. The exchange in lines 2–3 of Excerpt 8 below demonstrates how the chatbot’s uptake reveals difficulties in understanding customer intent: the chatbot initiates an understanding check (Svennevig, 2008) by posing a confirmatory question that generalizes the customer’s request, altering the request about a “flex subscription” to the broader term “a subscription”.

In contrast, when the human agent encounters troubles of understanding, they immediately specify the trouble source by asking clarifying questions, such as “What exactly do you mean by. . .?”. By explicitly specifying the trouble source, the human agent signals the need for clarification and prompts the customer to elaborate. This mutual effort to resolve the trouble of understanding helps establish common ground. The human agent’s detailed follow-up questions enable them to gather background information, which allows them to provide the customer with relevant, tailored information. The exchange in lines 18–19 of Excerpt 7 below illustrates how the human agent initiates repair by offering an interrogative with a partial repeat (Golato and Golato, 2015). However, instead of broadening the customer’s request, the human agent narrows it by asking what the customer means exactly by “the limit of your savings account”.

The repair strategies in Excerpts 7 and 8 below highlight two distinct interactional projects (cf. Raymond and Zimmerman, 2016). The chatbot aims to confirm whether it has identified the topic correctly, determining whether it can assist the customer further or whether a human agent should take over, while the human agent aims to answer the customer’s request. Figure 3 visualizes this distinction between the strategies used by chatbots and human agents.

Figure 3. Chatbot’s generalizing versus human agent’s specifying repair strategy.

Excerpt 7 shows a conversation between the financial organization’s customer service chatbot, the customer, and the human agent. In line 4, the customer makes their initial request, which is very specific: it concerns the “increase” of their savings account.

Excerpt 7. Different repair strategies employed by financial chatbot and human agent in a handover conversation.

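| Line | Speaker | Action | Original (Dutch) | English translation |
|---|---|---|---|---|
| 1 | Chatbot | Greeting | Hallo! Ik ben de chatbot van <BEDRIJF>. | Hello! I am the chatbot of <COMPANY>. |
| 2 | Chatbot | Handover statement | Komen we er samen niet uit? Dan kijk ik of een collega je kan helpen. | If we can’t work it out together, I’ll see whether a colleague can help you. |
| 3 | Chatbot | Instruction | Stel kort je vraag, dan ga ik voor je op zoek. | Briefly state your question, and I will search for an answer. |
| 4 | Customer | Initial request | Ik wil mijn spaar rekening verhogen | I want to increase my savings account |
| 5 | Chatbot | Repair initiation: presenting options | Sorry, volgens mij begrijp ik je niet goed. | Sorry, I don’t think I understand you correctly. |
| 7 | Customer | | [KLIKT OP KNOP] {“Spaarrekeningen”} | [CLICKS ON BUTTON] {“Savings accounts”} |
| 8 | Chatbot | Follow-up repair initiation | Wat is je vraag precies met betrekking tot de Spaarrekening? | What is your question specifically regarding the savings account? |
| 9 | Chatbot | Options | [TOONT KNOPPEN] | [SHOWS BUTTONS] |
| 10 | Customer | | [KLIKT BEPAALDE KNOP AAN] | [CLICKS A SPECIFIC BUTTON] |
| 11 | Chatbot | Repair initiation | Sorry! Ik kan je nog niet helpen hiermee. | Sorry! I can’t help you with this yet. |
| 12 | Chatbot | Handover offer | Wil je een collega spreken? | Would you like to speak to a colleague? |
| 13 | Chatbot | | [KNOP] | [BUTTON] |
| 14 | Customer | | [KLIKT KNOP] | [CLICKS BUTTON] |
| 15 | Chatbot | Handover initiation | Moment, ik verbind je door. | One moment, I’ll connect you. |
| 16 | Human agent | Greeting | Welkom! | Welcome! |
| 17 | Human agent | Requesting information | Waar kan ik je mee helpen? | How can I help you? |
| 18 | Customer | Customer rephrase | Hoe kan ik mijn limiet van mijn spaar rekening aan passen | How can I adjust the limit of my savings account |
| 19 | Human agent | Repair initiation | Wat bedoel je precies met de limiet van je spaarrekeningen? | What exactly do you mean by the limit of your savings accounts? |
| 20 | Customer | Repair solution | Het woord per <DIGIT_CODE> manden x bedrag van mijn rekening afgeschreven | Every <DIGIT_CODE> months, x amount is debited from my account |
| 21 | Human agent | Answer | Deze kun je aanpassen in <Website>. Log in op <Website> klik op opdrachten > Klik op een betaling een vervolgens op de knop wijzigen. Hier kun je het bedrag wijzigen. | You can adjust this in <Website>. Log in to <Website>, click on orders > click on a payment and then on the change button. Here you can change the amount. |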

The excerpt shows that the chatbot has difficulty understanding customer intent and initiates repair by generalizing the topic. It does so by posing confirmatory questions about the topic (lines 5 and 8) and by offering a button-based menu with options, including one that repeats the keyword “savings account” from the customer’s request (line 5: “Is your question related to one of these topics?”). Something similar happens in line 8, when the chatbot asks: “What is your question specifically regarding the savings account?”. Both repair strategies employed by the chatbot reflect a pattern in the data whereby the customer’s specific request is generalized into a broader topic.

Examining the conversation after the handover to a human agent (line 15) shows that the customer’s reformulation (line 18: “How can I adjust the limit of my savings account?”) still leads to troubles of understanding (line 19). In contrast to the chatbot, however, the human agent’s repair strategy explicitly identifies the trouble source, that is, the specific part of the customer’s request causing troubles of understanding (line 19). By partially repeating this trouble-source turn (Golato and Golato, 2015) and adding the adverb “exactly”, the agent moves beyond merely signaling a trouble of understanding. Instead, they specify the issue (line 19: “limit of your savings account”) and invite the customer to elaborate, facilitating a more precise and collaborative resolution. Once the customer clarifies (line 20), the parties visibly establish mutual understanding, allowing the human agent to give advice and, in turn, the conversation to move forward effectively (line 21).

The excerpt shows how the chatbot attempts to clarify the subject of the customer’s request by requiring the customer to formulate it in a way that allows the chatbot to recognize an intent. Its strategy involves signaling that it does not understand, or only partially understands, the query and then generalizing, shifting from the specific customer request to a broader topic under which the request might fall. As a result, multiple conversational turns are often needed before the chatbot identifies the subject of the query. However, even successful intent recognition does not guarantee that the chatbot can assist with the customer’s request.

In contrast, in conversations with the human agent, displays of troubles of understanding are more targeted. The agent explicitly focuses on the part of the request they do not understand by partially repeating it (Golato and Golato, 2015), providing the customer with insight into the trouble source (line 19). This approach fosters common ground between the agent and the customer. With the chatbot, however, the customer rarely gains any insight into what the chatbot does not understand, limiting the opportunity to resolve the issue collaboratively.

The conversation in Excerpt 8 also demonstrates how, when troubles of understanding arise, the chatbot and human agent use different repair strategies, with the chatbot generalizing the customer’s request, and the human agent specifying the request. Line 2 depicts the customer’s initial request about the starting time of their flex subscription. Despite the specific nature of the request regarding the flex subscription, the chatbot responds by generalizing: “If I understand correctly, you have a question about your subscription” (line 3). It also indicates its inability to assist further with the subscription topic and suggests a handover (line 3).

Excerpt 8. Different repair strategies employed by public transport chatbot and human agent in a handover conversation.

| Line | Speaker | Action | Original (Dutch) | English translation |
|---|---|---|---|---|
| 1 | Chatbot | Greeting/goal/handover statement | Hallo! Ik ben <NAAM>, de virtuele assistent van <BEDRIJF> en ik help je graag met je vragen over onze abonnementen en diensten. Mocht ik er zelf niet uitkomen dan staat één van mijn collega’s van <BEDRIJF> klantenservice voor je klaar. Waar kan ik je mee helpen? | Hello! I’m <NAME>, the virtual assistant from <COMPANY>, and I’m here to help you with your questions about our subscriptions and services. If I can’t resolve something on my own, one of my colleagues from <COMPANY> customer service is ready to assist you. How can I assist you? |
| 2 | Customer | Initial request | hoelaat gaat het flex abbonement in? | what time does the flex subscription start? |
| 3 | Chatbot | Repair/handover initiation | Als ik het goed begrijp heb je een vraag over je abonnement. Hier kan ik je zelf nog niet mee helpen, maar mijn collega kijkt graag met je mee. Wil je je abonnement tijdelijk stopzetten of wijzigen om kosten te besparen in verband met COVID-19? Klik op onderstaande knop. | If I understand correctly, you have a question about your subscription. Unfortunately, I can’t help you with this myself just yet, but my colleague would be happy to assist you. Would you like to temporarily suspend or change your subscription to save costs due to COVID-19? Click the button below. |
| 4 | Customer | | Ik heb een andere vraag | I have another question |
| 5 | Chatbot | Handover | Mijn collega van <BEDRIJF> Klantenservice helpt je graag verder! Klik op onderstaande knop. | My colleague from <COMPANY> Customer Service will be happy to assist you further! Click the button below. |
| 6 | Customer | | <KLIKT KNOP> Chat met een medewerker | <CLICKS BUTTON> Chat with an employee |
| 7 | Chatbot | Confirmation | Ik verbind je zo snel mogelijk door. Het kan even duren voor er een collega beschikbaar is. | I will connect you as soon as possible. It may take a while before a colleague is available. |
| 8 | Customer | Greeting | Goedemiddag | good afternoon |
| 9 | Human agent | Repair/follow-up question | Goedemiddag, ik snap niet precies wat je bedoelt. Heb je op dit moment al een Flex abonnement? | Good afternoon, I don’t quite understand what you mean. Do you currently have a Flex subscription? |
| 10 | Customer | Rephrase | op dit moment nog niet, morgen gaat m’n flex abbonement in, alleen mijn vraag is vanaf hoelaat ik het product <FILTERED> ophalen? | at the moment, not yet, my flex subscription will start tomorrow, but my question is, from what time can I collect the <FILTERED> product? |
| 11 | Human agent | Information request | Zou je de volgende gegevens willen sturen: voorletters, achternaam, postcode, huisnummer, geboortedatum, OV-chipkaartnummer en e-mailadres? | Would you please provide the following details: initials, last name, postal code, house number, date of birth, OV-chipkaart number, and email address? |
| 12 | Customer | Information | <FILTERED> | <FILTERED> |
| 13 | Human agent | Answer | Dit product is als het goed is ’s nachts al beschikbaar. Dus als je morgenochtend wil reizen is het zeker al beschikbaar. | This product should already be available during the night. So if you want to travel tomorrow morning, it will definitely be available. |

Rather than immediately offering a handover, the chatbot suggests options, such as suspending or changing the subscription due to COVID-19. This prompts the customer to indicate that they have a different question (line 4). The chatbot’s project involves distinguishing between topics it can address and those it cannot, which must be determined before transferring the customer. Once the customer responds, the chatbot evidently determines that it cannot help the customer and initiates the handover (line 5). Post-handover, the human agent responds to the customer’s initial request by displaying a trouble of understanding, which gives the customer an opportunity to reformulate their request (line 9). Specifically, the agent’s understanding check (Svennevig, 2008) is aimed at specifying the issue: “Do you currently have a Flex subscription?”. In contrast to the chatbot’s repair strategy, the human agent’s repair strategy explicitly identifies the aspect of the customer’s request that was not understood (cf. Golato and Golato, 2015; Svennevig, 2008). This approach aims to establish a mutual understanding of the customer’s request and possible solutions. In response, the customer, in a single turn, not only answers the human agent’s question but also reformulates their original request (line 10).

Discussion

Summary and discussion of the results

Intent-based customer service chatbots rely on pre-programmed dialogues, which limits their ability to understand natural language (Ashktorab et al., 2019; Reinkemeier and Gnewuch, 2022). When customers phrase their requests in ways that do not align with the chatbot’s intent recognition, the chatbot displays troubles of understanding. By initiating repair in such cases, the chatbot treats accurate recognition of customer intent as a prerequisite for generating a relevant response. However, such repair inevitably hampers the progressivity of the conversation.

This study also examined how handovers affect customers’ recipient design and compared the repair strategies of chatbots and human agents in these conversations. By analyzing these patterns, we aimed to enhance our understanding of customer interaction and to provide insights that can inform the training of customer service chatbots to improve conversational progressivity. The analysis offers distinctive insights because it examines service interactions in which a single customer engages with two distinct conversational partners: a chatbot and a human agent. This allows for a direct comparison and yields observations on recipient design, that is, how customers tailor their communication based on their assumptions about their interlocutor’s capabilities. Our analysis shows that these orientations directly affect the conversation’s progressivity.

Customers’ expectations become visible through recipient design both before and after the handover. Prior to the handover, customers often formulate concise, keyword-based requests, which reflects an orientation to the chatbot’s limited capabilities. This strategy generally aids progressivity, as the chatbot quickly identifies a topic and either confirms it or initiates a handover. A particularly interesting, and paradoxical, finding is that some customers adopt a more elaborate communication style with the chatbot, similar to how they would communicate with a human. They use longer, more detailed sentences and politeness markers, which often leads to troubles of understanding, impeding the conversation’s progressivity. This suggests that the chatbot’s system, likely optimized for concise input, interprets these natural language elements as “noise,” hindering its ability to process the request accurately.

Throughout our analysis, we observed that intersubjectivity, the process through which interlocutors achieve a shared understanding, and the establishment of common ground are treated differently by chatbots and human agents, with implications for progressivity. In human–human service interactions, intersubjectivity is achieved through collaborative repair sequences in which parties actively work to pinpoint and resolve troubles of understanding, often using clarification questions and elaborations that build on shared assumptions and context (Clark, 2015). Our data reflect this pattern: when troubles of understanding occur, human agents initiate repair and actively contribute to grounding by naming the specific trouble source and working collaboratively with the customer to resolve it.

In contrast, the chatbot’s repair strategies are less collaborative and do not engage in grounding in the same way (Dingemanse and Enfield, 2024). Instead of negotiating meaning, the chatbot relies on generic repair strategies, prompting customers to “rephrase” or select from fixed options without identifying which aspect of the prior utterance led to the trouble of understanding. The key challenge in chatbot interactions is that, although the chatbot initiates repair, it fails to explicitly identify the trouble source, placing the burden of resolution entirely on the customer. This reflects a fundamental challenge concerning the nature of understanding in conversational interfaces, as addressed by Dingemanse and Enfield (2024): when troubles of understanding arise, the division of labor becomes skewed, and the interpretive and interactive work falls disproportionately on the customer. This is a critical point, as rephrasing directed at chatbots has been shown to be an ineffective strategy: users often struggle to determine how exactly to reformulate their input so that the chatbot will understand it the second time (Dippold, 2023). Thus, while understanding is central to the interaction, the chatbot does not engage in building common ground in the conversational sense. Instead, it appears to focus on categorizing user input to facilitate handover rather than on resolving the trouble of understanding itself. Our findings suggest that the problem is not the repair process itself, as temporary disruptions to progressivity are a natural part of restoring common ground or intersubjectivity, but rather the design of the repair strategy. The chatbot’s repair practices do not contribute to progressivity because they fail to isolate the source of trouble.

This limitation also highlights a design trade-off: given the chatbot’s restricted understanding and action capabilities, more specific repair initiations might place an unrealistic burden on users by prompting responses the system cannot process. In this sense, increased specificity may actually reduce the overall efficiency of the interaction, particularly if handover is the inevitable next step.

These differences raise important questions about what constitutes “understanding” in human–machine interaction. If common ground is not co-constructed but imposed through system limitations, then intersubjectivity as understood in human–human conversation may not be achievable in chatbot dialogues. This has design implications: if we accept that current chatbots are limited in their ability to participate in grounding activities, then repair strategies should not aim to mimic human strategies superficially, but rather be designed in line with the system’s actual capacities and purpose (e.g., efficient triage and handover).

Implications, limitations, and future research

Our study results can be used to inform the design of customer service chatbots. To foster the conversation’s progressivity, the chatbot can instruct customers on how to reformulate their requests. Additionally, chatbots could improve their intent recognition by being trained on how customers formulate their requests when interacting with human agents.

Both intent-based and generative chatbots are being used for customer service, and the two types differ in how they address troubles of understanding. Intent-based chatbots typically initiate repair with responses like “I’m sorry, I didn’t understand that”. Generative chatbots, however, tend to generate an answer regardless and rarely display troubles of understanding. In both cases, the customer remains responsible for repair and conversational progress. Future research should examine how generative chatbots can be designed to recognize troubles of understanding and initiate repair in a more cooperative way.

The current study also has some limitations. Since both chatbot welcome messages in our study disclosed the possibility of a handover, we could not compare customers’ communicative behavior in scenarios where this option was not disclosed. Future research could explore whether customers interact differently with chatbots that do not disclose the possibility of a handover in their welcome messages. Despite this limitation, our results show that, even when a handover was disclosed, customers formulated their queries using both keyword-based and human-like approaches.

Conclusion

Our study has contributed to the literature on human-computer interaction, specifically focusing on recipient design, progressivity, and repair in handover conversations between humans and chatbots. Our findings underscore the importance of understanding how customers tailor their messages to both human and non-human interlocutors.

This understanding is critical given the increasing adoption of generative AI (GenAI) chatbots, which are capable of handling diverse user inputs and offering varied responses. However, the use of GenAI for frontline customer service remains limited due to its potential to produce misinformation or content misaligned with company policies (Bamberger et al., 2023). Our findings can inform the development of training protocols that help AI models handle the nuances of human communication effectively while mitigating these risks. Future research should continue to explore how recipient design influences conversational progressivity and how conversational AI can be trained to better interpret and respond to human communication in customer service settings.

Despite the increasing use of GenAI, the academic and practical significance of this study lies in its focus on how customers interact with an intent-based chatbot. For the foreseeable future, intent-based and generative chatbots are expected to coexist rather than replace one another. Regardless of the technology used, one factor remains constant: human oversight and intervention will still be required for certain topics, as well as to guide, train, and improve chatbots in line with company policies (Bamberger et al., 2023). The findings of this study offer valuable insights for developers to improve human-chatbot communication.

Footnotes

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was funded by the Nederlandse Organisatie voor Wetenschappelijk Onderzoek (Grant Number KIVI.2019.009, 2020).

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data availability statement

The authors do not have permission to share data.