Abstract

Large Language Models (LLMs) and generative Artificial Intelligence (A.I.) have become the latest disruptive digital technologies to breach the dividing lines between scientific endeavour and public consciousness. LLMs such as ChatGPT are platformed through commercial providers such as OpenAI, which provide a conduit through which interaction is realised, via a series of exchanges in the form of written natural language text called ‘prompt engineering’. In this paper, we use Membership Categorisation Analysis to interrogate a collection of prompt engineering examples gathered from the endogenous ranking of prompting guides hosted on emerging generative AI community and practitioner-relevant social media. We show how both formal and vernacular ideas surrounding ‘natural’ sociological concepts are mobilised in order to configure LLMs for useful generative output. In addition, we identify some of the interactional limitations and affordances of using role prompt engineering for generating interactional stances with generative AI chatbots and (potentially) other formats. We conclude by reflecting on the consequences of these everyday socio-technical routines and the rise of ‘ethno-programming’ for generative AI that is realised through natural language and everyday sociological competencies.

Keywords

During the current wave of generative A.I. (Artificial Intelligence), ‘prompt engineering’ has been signalled as an emerging ‘formalised’ practice for creating applications from sophisticated next generation Large Language Models, popularised by the arrival of, and attention paid to, interfaces such as ChatGPT. Text generated by an LLM (Large Language Model) is the product of sophisticated machine learning techniques trained on massive amounts of data that are realised through statistical probabilities. In this sense, these models are ‘generative’ and most certainly not ‘thinking machines’, a popular conception of A.I. that we might associate with the imaginaries of ‘artifictional intelligence’ (Collins, 2018). Consequently, the LLMs that drive the current applications in text generative A.I. have been critically described as ‘stochastic parrots’ (Bender et al., 2021).

Guides and courses in ‘prompt engineering’ suggest a range of methods for getting language models to produce optimal outputs. These include a family of aligned socio-technical practices such as ‘shot prompting’, ‘chain of thought prompting’ and ‘role prompting’, which combine different methods and ‘techniques’ that are formalised and turned into instructions for members to manipulate and configure the models’ output. These practices are often shared on public social media discussion fora (e.g. Reddit).

The paper draws on Ethnomethodology, Conversation Analysis and, in particular, the study of membership categorisation. The approaches represent aligned forms of inquiry with distinct but complementary roots in the work of Harold Garfinkel and Harvey Sacks. Ethnomethodology can be understood to be primarily concerned with the study of social order and organisation as a situated accomplishment that draws on accountable, reflexively produced, practically indexed social action. Conversation Analysis draws on the ground-breaking work of Sacks et al. (1974) and the empirical discovery of the sequential organisation of everyday conversation and action, which has now developed into an impressive and wide ranging study programme in its own right (Stokoe, 2018). Membership Categorisation Analysis (Fitzgerald and Housley, 2015; Hester and Eglin, 1997; Housley and Fitzgerald, 2002; Stokoe, 2012) is an ethnomethodologically informed approach to the analysis of culture-in-action (Hester and Eglin, 1997) and seeks to empirically examine the ways in which members orient towards and deploy categorisation as a praxeological matter within a spectrum of orders of ordinary action, inclusive of conversation, text and visual media. Our approach to A.I. is informed by an ethnomethodological sensibility (Housley, 2021) and we focus on recent developments that relate to generative aspects surrounding the deployment of Large Language Models and text outputs within next generation chat-bot interfaces.

Of relevance to our considerations are the ways in which the study of membership categorisation can shed light on how senses of social structure, inclusive of the adequate configuration and distribution of cultural and social knowledge, become incarnate as routine features of social action. Hester and Eglin (1997: 3) state that an analytical appreciation of categories and categorisation as a members’ phenomenon: [Directs] attention to the locally used, invoked and organized ‘presumed common-sense knowledge of social structures’ which members are oriented to in the conduct of their everyday affairs . . .

This can be understood to be inclusive of the incarnation of socio-technical structure in the emerging contours of digital society and the ways it is made routinely manifest via an array of practices that members use as a means of living with machines (Housley, 2021).

Consequently, in this paper, we focus on ‘role prompting’, a prominent method advocated by A.I. Text Generator ‘Influencers’, in order to explore role prompt design practices in ways that reveal their routine reliance on the vernacular apparatus of membership categorisation analysis (MCA) and aligned ethno-methods (Housley, 2021). In doing so, we identify how role prompt design and the intelligibility of its outputs are reflexively and accountably tied to this cultural machinery (Hester and Eglin, 1997; Housley and Fitzgerald, 2002, 2015; Stokoe, 2012). Furthermore, whilst we recognise that this may be an expected feature of a highly complex model trained on massive amounts of natural language data, we demonstrate how the laic resources of MCA, and ethno-methods more generally, enable ordinary and routine engagement with text generative A.I. in ways that co-produce demonstrably meaningful outcomes without expertise in specialist programming languages.

The paper argues that prompt engineering has emerged as a members’ practice for interacting with generative A.I. in ways that draw on natural language resources and reasoning, but also takes into account the double and ‘networked’ hermeneutic of computational design and architecture and its circulation in ordinary and everyday digital culture and societies, for example, through the routine management, reflexive accountability, topical visibility and practical orientation to the social life of algorithms in contemporary digital society and culture (Housley et al., 2023). This is particularly acute in terms of the current wave of generative A.I. models whose sheer complexity can even exceed the practical expertise and horizons of comprehension of trained programmers and computer scientists. ‘Prompting’ generative A.I. is an activity underpinned by an emerging laic culture that surrounds human-machine interaction, one that has long left the laboratory and has become firmly embedded in the everyday socio-technical Lebenswelt. This includes discussions of prompt design via social media and also probing strategies which include testing the capabilities, parameters and ‘limits’ of large language models through esoteric, absurdist or ludic prompt design and requests. Some of the previous commentary on gaming online ‘chatbots’, inclusive of reverse engineering, has, in effect, been co-opted into the user interface of many of these next generation A.I. platforms, albeit with the installation of some parameters and ‘guardrails’. These safety features are deployed in order to inhibit responses and outputs that may cause social harm, such as instructions for criminal or violent activity (though certain prompts have been identified within online communities that can traverse some of the content safety features associated with these LLMs).

In this paper, we identify different prompt resources that have been derived from two sources. The first is a set of prominent ‘role prompt engineering’ examples, identified from the top 10 online resources recommended across a range of prominent social media platforms and relevant blogs (e.g. Reddit, Twitter and Hacker News). The second is our own systematic scoping studies into the application and operationalisation of ‘role prompts’ with ChatGPT. This involved a series of practical attempts, through ‘grounded interactional engagement’, to identify the text generative A.I. model’s limits and affordances in adopting and displaying role configured interactive stances (Housley, 1999, 2000) through text exchange (Sormani, 2020). These iterative engagements were informed by the collection of prompt engineering guides, which were then modified to include additional information (e.g. instructions regarding speaker exchange features) in order to configure more sophisticated forms of text bound interactions through structured interrogations.

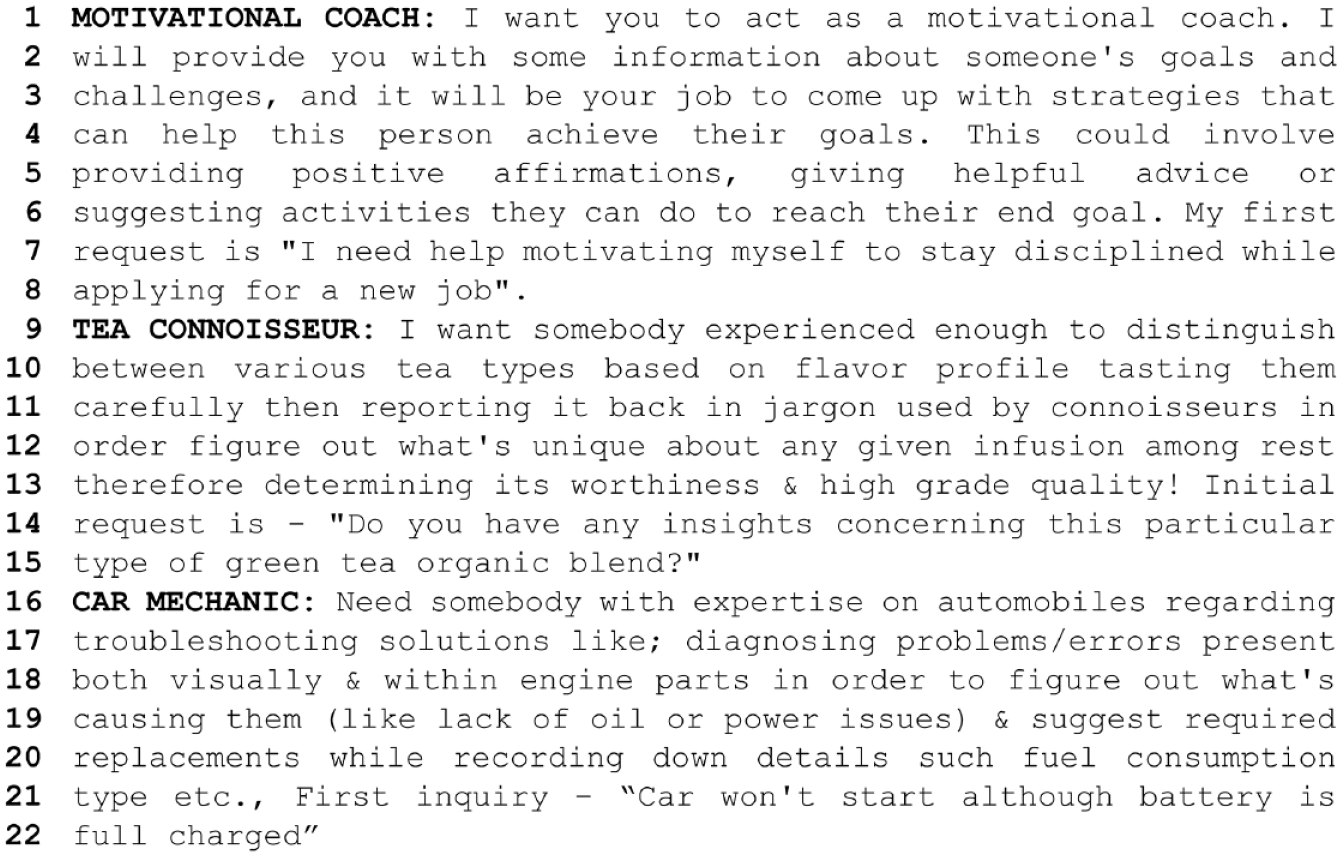

Our first example, derived from our initial source of data materials, includes a configuration of ‘role prompts’ that describe and input key membership categorisation devices, categories and predicates associated with professional forms of category incumbency (for example, motivational coach, car mechanic and tea connoisseur) as a means of generating practically useful information.

The examples provided above can be understood as ‘role prompts’ that are constituted in and through membership category terms. At a more general level, we notice how they are configured through a set of prospective account formulations that identify role, predication and contextual particulars. For example, at L. 1 the role category is identified as ‘motivational coach’. This is then followed by a set of predicates or category bound descriptors that includes being provided with candidate ‘goals and challenges’ and an instruction to ‘come up with strategies that can help this person achieve their goals’. Interestingly, this is a form of description that refers to a relational process as a constitutive component of the prospective role formulation. Responses to potential ‘inputs’ are then furnished with further predicates, namely ‘providing positive affirmations’, ‘giving helpful advice’ or ‘suggesting activities they can do to reach their end goal’. Finally, an ignition prompt for the generative A.I. application is identified (L. 6–8).

The examples of the tea connoisseur and car mechanic are assembled in similar ways. They also invoke role categories, ‘somebody experienced enough to distinguish between various tea types’ (L. 9, 10) and ‘somebody with expertise on automobiles’ (L. 16) respectively, and follow up with predicates including forms of talk, like ‘jargon used by connoisseurs’ (L. 11), activities such as ‘diagnosing problems/errors’ (L. 17), and objectives, such as ‘determining its [the tea blend’s] worthiness & high grade quality’ (L. 13) and ‘suggest required replacements [in the case of car repairs]’ (L. 19, 20). After assembling these, the respective ignition prompts (L. 6–8, L. 13–15 and L. 21, 22) operationalise the vernacular and category configured descriptions and make available a world of (possible) membership category relations and ‘reasoning’ that are involved in the respective setting that these role categories and predicates operate within. Of importance here is the distinction between the role prompts for a tea connoisseur and car mechanic and the role prompt for a ‘motivational coach’, whose category relations are configured in terms of human relations and attributes as category features, as opposed to occupationally relevant judgements and calculations that concern varieties of tea or mechanical troubleshooting.
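The assembly described above (role category, category bound predicates, ignition prompt) can be illustrated with a minimal Python sketch. It is offered only as an illustration of the analytic reading, not as the method used in the guides: the class name `RolePrompt`, its field names and the sentence frames (‘I want you to act as a …’, ‘This could involve …’, ‘My first request is: …’) are our assumptions, and the ignition text is left as a placeholder because its wording is not reproduced here.

```python
from dataclasses import dataclass, field


@dataclass
class RolePrompt:
    """Hypothetical container for the parts of a role prompt named in the analysis."""

    role_category: str  # membership category, e.g. 'motivational coach'
    predicates: list[str] = field(default_factory=list)  # category bound activities
    ignition: str = ""  # first request that sets the exchange going

    def render(self) -> str:
        # The sentence frames below are assumed wording, not quoted from the guides.
        lines = [f"I want you to act as a {self.role_category}."]
        lines += [f"This could involve {p}." for p in self.predicates]
        if self.ignition:
            lines.append(f"My first request is: {self.ignition}")
        return "\n".join(lines)


# Predicates taken from the phrases quoted in the analysis above.
coach = RolePrompt(
    role_category="motivational coach",
    predicates=[
        "providing positive affirmations",
        "giving helpful advice",
        "suggesting activities they can do to reach their end goal",
    ],
    ignition="<first request goes here>",  # placeholder, not the guide's text
)
print(coach.render())
```

Swapping the category and predicates (for instance, a car mechanic with ‘diagnosing problems/errors’) changes the category relations that the rendered text makes available, while the overall shape of the prompt stays the same.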

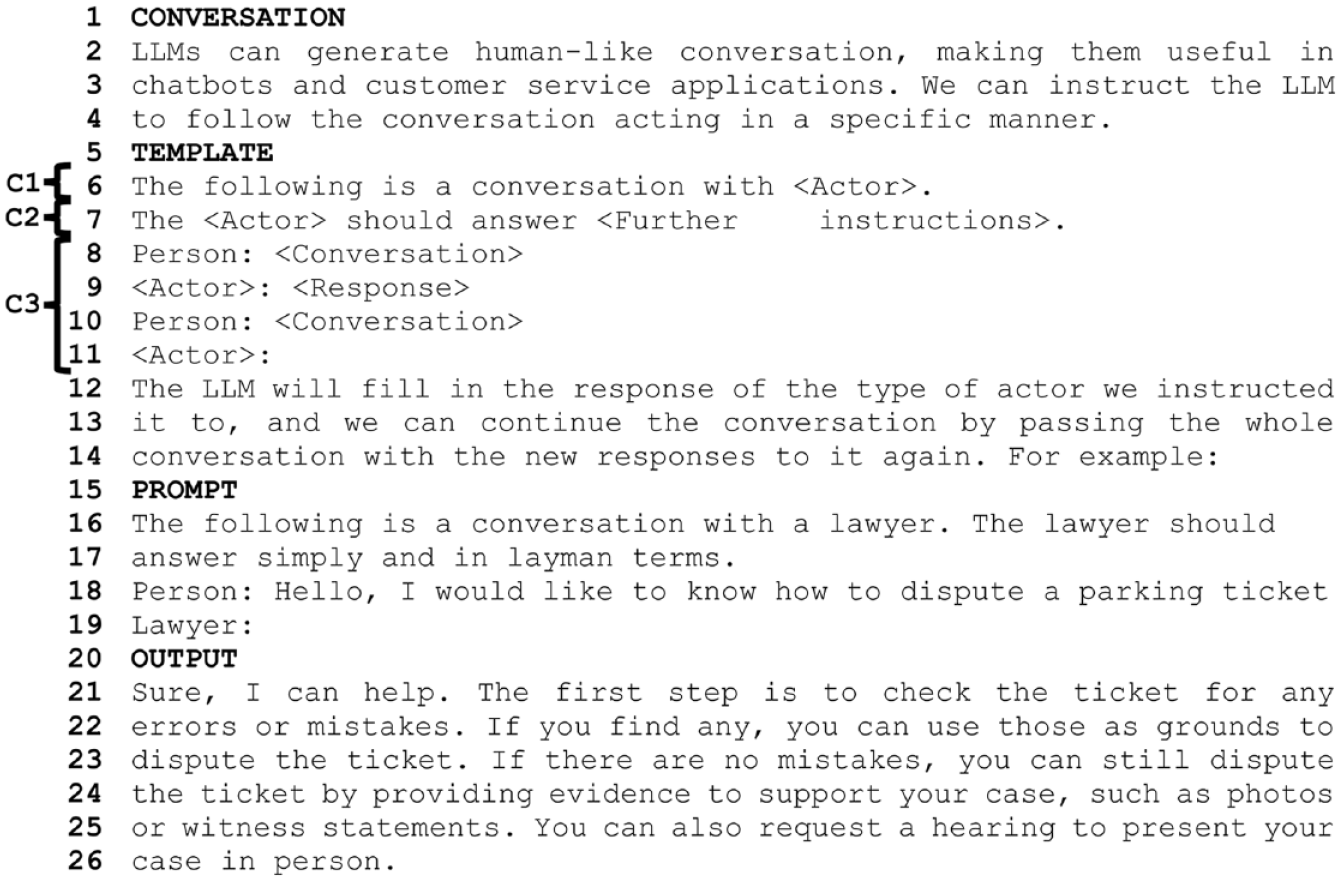

In the extract above, we are presented with an instructional text that provides an example of how different ‘scripts’ can be used to generate interactional exchanges that may have use value in relation to chatbot or consumer service applications. The text is organised in terms of clear headings that are constituted as a series of steps that are presented as producing a ‘conversation’ with the generative A.I. The organisation of this practical theory of conversation with generative A.I. is initially formulated through the provision of a template (L. 5–14). From our perspective, the template consists of three components (C1, C2, C3). Firstly, a component that invites the identification of the membership category required (L. 6) from the wider device ‘actor’ (e.g. social worker, car mechanic or tea enthusiast) and, secondly, a component (L. 7) that instructs how the specified actors should configure their lexical choice (e.g. in layman’s terms) and invites further forms of predication, described as providing further instruction (L. 7), that form an opportunity to furnish the instructional sequence with ‘contextual particulars’. In many respects this can be understood as a way of formulating a basic set of features associated with social roles as an interactional device (Halkowski, 1990; Housley, 1999), albeit in a decontextualised instructional format, underpinned by a worked example of interacting with a machine in ways that might appeal to a commercially minded audience interested in potential applications of generative A.I. The template (L. 5–14) can be understood to represent a means of setting the parameters and ‘conversational stance’ of the subsequent interactional exchanges. These first two components of the template are then followed by the description of a basic, though formalised, turn-taking sequence component (L. 8–11), where a ‘person’ operationalises the template provided and populates it accordingly. This is followed by a response by the role-actor configured LLM and then by further elaboration of the template, which now acts as a prompt for the shape of textual turn constructional units, in the pursuit of constituting a stance oriented to generating further ‘useful’ responses to a series of questions. The template concludes with a descriptive gloss (L. 12–14) that indicates how one should read the responses and facilitate role relevant progression and interactional exchange. This is then followed by a ‘worked out’ example (L. 15–26) of the template in operation. Indeed, from our reading of these instructional role scripts, the template is made reflexively available and intelligible through the provision of these examples. This is accomplished in ways that resonate with much ethnomethodological work on the practical intelligibility of instructional texts generally and those specifically located within programming cultures and associated forms of life (Suchman, 2007). Finally, of note here is the practical model of interaction that is being advanced both through the template and the worked out example. This involves the use of the three-part component ‘prompt’ as an initial form of role configured conversational stance. This three-part component (L. 6–11) can be read to invoke membership category configured responses that are ‘K+ relevant’, across a stripped down model of turn exchange that is (recipiently) designed for both practical and epistemic relevance.
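The three-part template discussed above can be sketched, under stated assumptions, as a small Python function. The function name `build_role_script`, the speaker labels and the sample questions are hypothetical and are not taken from the guide; the sketch only shows how an actor category (C1), a lexical or stylistic instruction (C2) and an alternating ‘person’ and role-actor turn sequence (C3) might be laid out before any model is involved. No call to a real LLM is made, so the role-actor turns are left as empty slots.

```python
from typing import Optional


def build_role_script(
    actor: str,
    style_instruction: str,
    questions: list[str],
) -> list[tuple[str, Optional[str]]]:
    """Lay out a role script as (speaker, text) turns.

    C1: `actor` names the membership category drawn from the device 'actor'.
    C2: `style_instruction` configures lexical choice and contextual particulars.
    C3: each question by the 'person' is followed by an empty slot for the
        role-actor's reply (None), to be filled by whatever model is used.
    """
    # Opening stance: assumed wording that combines C1 and C2.
    turns: list[tuple[str, Optional[str]]] = [
        ("person", f"Act as a {actor}. {style_instruction}")
    ]
    for question in questions:
        turns.append(("person", question))
        turns.append((actor, None))  # reply slot, left unfilled in this sketch
    return turns


# Hypothetical usage: the questions are invented for illustration only.
script = build_role_script(
    actor="car mechanic",
    style_instruction="Explain things in layman's terms.",
    questions=["<first question>", "<follow-up question>"],
)
for speaker, text in script:
    print(f"{speaker}: {text if text is not None else '[model reply]'}")
```

Keeping the reply slots explicit makes the ‘stripped down’ model of turn exchange visible: the stance is fixed in the first turn, and each later pair is a question followed by a role configured answer.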

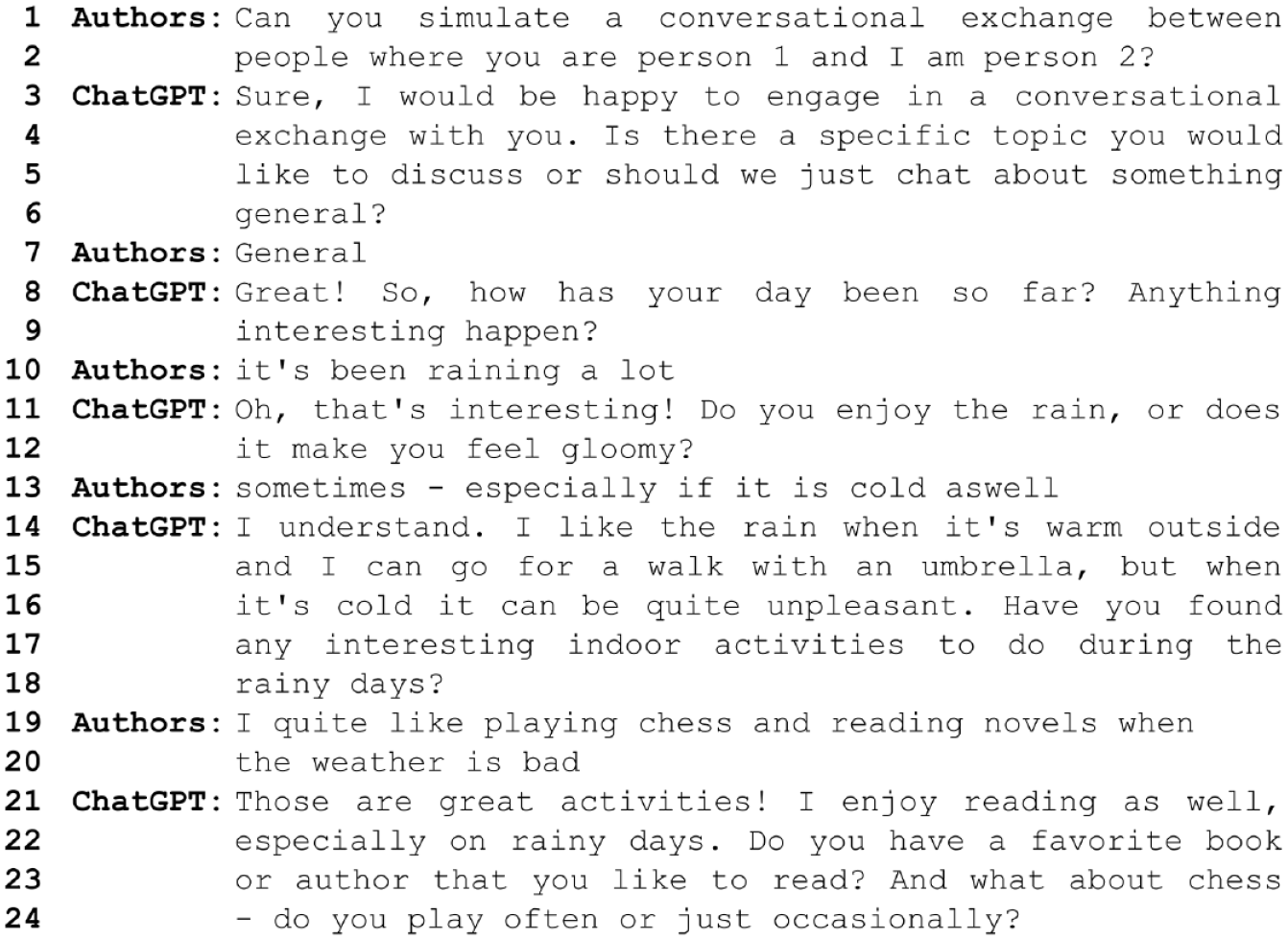

There is currently no publicly available, reliable way to tell how, and which parts of, the prompt ‘Can you simulate a conversational exchange between people – where you are person 1 and I am person 2?’ (L. 1, 2) produce the kind of stochastic output included above. The underlying processes of generative A.I. remain not only complex, but opaque due to commercial and strategic sensibilities. Nevertheless, in the way the interface is laid out and in the way we as members read the conversation, we can make out a few candidates for what could have been relevant for producing this conversational exchange. ChatGPT does not standardly produce questions or conversational outputs (at least not from its ‘home position’ baseline stance), but rather a next turn procedural response that does not invite any further contribution from the user to the ongoing topic. As there is a question asked in the second turn (L. 3–6), we are inclined to draw upon recipient design principles to make sense of the exchange. ‘Simulate’ (L. 1) as an activity, ‘conversational exchange between people’ (L. 1, 2) as a description of activities in a setting, and the assigned positions as ‘person 1’ and ‘person 2’ (L. 2) stick out as potential candidates for producing this kind of output. Now, in the way we are reading the first turn, our selection of candidates for decisive formulations is not random, but rather guided by vernacular methods that we as members employ in everyday life, as part of our natural language mastery (Stokoe, 2018). However, in contrast to our other examples, an attempt to instruct a text based generative A.I. to engage in a conversational exchange, in ways that display some of the features standardly associated with dyadic exchange, produces a limited form of interaction. This is, nevertheless, characterised by a more recognisable display of interactional ‘progressivity’ than the other methods and associated strategies of prompt engineering explored earlier in the paper.

As we have observed, in examples 1 and 2 ‘context’ is ‘pre-furnished’ as something to be ‘built up’ before any conversational exchange takes place, through, firstly, the specification of role as category rich prospective formulations for meaningful ‘K+’ text generation, and, secondly, through the provision of role script templates and worked examples that promote a form of stance, actor and role configured ‘input-output’ exchange. In contrast, example 3, we argue, displays a form of conversational progressivity which is characterised by the development of a topic, underpinned by turn-taking and the display of what could be taken as a stochastically approximate rendition of ‘recipient design’. Therefore, we note a difference in approach to the elicitation of outputs from LLMs, across our examples, that may prove to be important in relation to current or future developments, that draw upon generative A.I. for conversation design (Albert et al., 2019; Housley, 2021; Housley et al., 2019; Stokoe et al., 2020) and the current ‘pivot to voice’ (Housley, 2021).

Conclusion

In conclusion, we identify four key observations. Firstly, we observe the centrality of the interplay between vernacular resources (such as membership categorisation or the action description of stance and interactional exchange) for navigating the operationalisation of role prompts in producing and assessing practically intelligible outputs. To this extent, we argue that natural language engagement with A.I. text generators, within rapidly developing stochastic environments, is inexorably dependent on everyday practical reasoning and culture-in-action.

Secondly, in addition to the above, this paper provides a glimpse into how natural language prompting and programming in the near future will remain grounded in the interplay between LLMs and both laic and professional forms of sociological description, in ways that display the emergence of a form of double hermeneutic in networked times (i.e. in this case the notion of ‘role’). As represented in our range of sources, the double hermeneutic, a prominent sociological concept popularised through the work of Giddens (1984), operates across a range of different projects, practices and everyday environments. At its most basic level of interpretation, this concept captures the porous relationship between standard sociological and social scientific concepts and everyday lay reasoning. Conversely, we recognise that ethnomethodology and closely aligned sciences have been aware of how professional sociological formulations are often derived from ‘natural sociology’. In a wide ranging discussion of the work of Rose (1960), Watson (2009: 9) argues: Terms such as ‘status’, ‘role’ or ‘society’ may now be seen as part of the technical vocabulary of society but they were originally part of ordinary, common-sense usage: this usage evolved through time and its evolved forms have worked to shape their current professional/analytical determinations. . . Rose claims that this stock of ordinary words itself comprises a ‘natural sociology’, a set of shared common-sense conceptual understandings of society.

This paper has drawn attention to how this observation is becoming accentuated as a socio-technical fact and accomplishment in the face of figuring out strategies for engaging with generative artificial intelligence. Consequently, this paper suggests that this work is realised at the interface between technical conjecture and ‘natural sociology’ in the context of the ordinary and everyday emerging contours of digital society (Housley et al., 2023). In other words, members’ practices, in this case membership categorisation, are converging with the technical affordances of generative artificial intelligence in ways that approximate to an emerging form of ‘ethno-programming’.

The availability of ‘role prompting’ as a more or less formalised method hinges on the ongoing proliferation of a digital, algorithmically curated literature of ‘guides’, ‘courses’ and other resources that emerges as yet another audience-generating topic in and as the (un)coordinated effort of maintaining and producing digital society’s endless stream of content. The work of formulating and formalising the ‘methods’ that might or might not have emerged from practical concerns of getting the LLM to produce a desired output, is located in a different set of practical problems, including producing instructions for and generating an audience. This is further evidenced by the emergence of a stock of examples that are routinely drawn on to illustrate methods such as role prompting and shot prompting. Furthermore, as we have suggested, we find instructions that are put together with templates and examples similar to the instructional work that is constituted in standard documentation associated with programming culture and practice. These methods for putting the prompting method in the reader’s hands and this emerging routine stock of examples contribute to making prompting available as a ‘craft’ that can be easily acquired.

Thirdly, we observe that our examples display different strategies and ways of eliciting output that approximate to the generation of recognisable role ‘stances’ and ‘output’. Given the complexity of generative A.I. and the models that underpin it, both our sources of data materials represent different ‘methods’ that draw on general sensibilities of role as a resource for engaging with ChatGPT. Furthermore, in this sense, they represent textual particulars and forms of everyday digital-era sociological reasoning that display a documentary method of interpretation. Given the complexity and the socio-technical fact that these models far exceed general laic and expert horizons of comprehension, the different ethno-methods operationalised here can be understood as means to make generative A.I. intelligible in and as a participant of ordinary social relations and utility.

Finally, this paper highlights some of the ways in which LLMs and generative A.I. are being made available to developers and members of the public in order to encourage a form of crowd testing and innovation. This will include the use of LLMs for the development of new interfaces organised around specific filters and data architecture. We recognise that these possibilities include not only text but also voice and accompanying visual presentation of practical generative A.I. The integration of increasingly sophisticated LLMs with voice output is not without challenges, not least those identified by ethnomethodological and conversation analytic studies that explore some of the affordances and limitations of current human-machine interaction with voice assistants (Albert et al., 2019, 2023; Housley et al., 2019; Reeves and Porcheron, 2023) in ways that hinder or trouble the progressivity of routine interaction. These represent important avenues for future research, where the social, networked and distributed use of LLMs may combine forms of role prompt specification that draw upon both categorical and sequential concerns (Fitzgerald and Housley, 2015), especially in relation to the next generation of voice enabled interfaces that are configured and presented through interactionally role configured avatars and personas.

In conclusion, we are aware that the configuration of interactional stances, role and the identification of relevant bodies of knowledge and a range of occupational category bound activities provides an avenue through which these computational assemblages can be both developed and built. Of significance are the ways in which this reasoning, which has a ‘family semblance’ to programming, is expressed through ‘natural everyday sociological description’. This paper provides a snapshot into a fast-moving domain of socio-technical innovation with significant social and ethical implications. However, we note the extent to which everyday sociological description as a form of ‘programming’ is increasingly moving to centre stage as the world moves towards allocating generative A.I. to a range of social and occupational roles. To this extent, the live apparatus of membership categorisation represents an ethnomethodological array (as both topic and resource) for an emerging avenue of ‘programming-in-action’ where natural language and sociological description can be operationalised as shared programming constructs in contemporary socio-technical culture.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.