Abstract

The agent of action in Human-Computer Interaction is, as the hyphenated name of the field suggests, usually conceptualized as a contrastive binary of either human or computer. This study, informed by ethnomethodology and conversation analysis, instead describes the interactional achievement of distributed agency in a ‘smart homecare’ setting where a homecare worker and a disabled person coordinate shared activities using a virtual assistant. We focus on the tacit criteria, attributions, and discourses of agency embedded in the interactional details of their everyday homecare routine. The analyses reveal how collaboration in everyday care tasks involves the distributed agency of all participants, irrespective of their ostensible ‘humanness’. Our findings (a) provide a critical perspective on the technological imaginary of expensive, high-tech robotic replacements for human care work; (b) advocate low-tech strategies for adapting consumer technology for smart homecare systems; and (c) suggest alternative approaches to agency in assistive technology design, grounded in detailed observation of the interactional infrastructure of real homecare settings.

Introduction

The issue of agency in non-humans is often conceptualized as a binary ontological category: whether or not chat-bots, robots, or animals can be said to have psychological characteristics like ‘selfhood, motivation, will, purposiveness, intentionality, choice, freedom and creativity’ (Emirbayer and Mische, 1998: 962). Here we follow Edwards (1994) in focusing on how tacit criteria, attributions, and discourses of agency are embedded in the organization of everyday social actions such as offers, requests, and accounts. The perspicuous setting of a ‘smart homecare’ routine invites us to examine the practical ‘implication of powers, abilities and will’ (Antaki and Crompton, 2015) in interactions involving a disabled man and his care worker. It also reveals how transient attributions of technological agency (Alač, 2016; Pelikan et al., 2022) are built into the participation framework that coordinates routine care activities. First, we outline medicalized concepts of agency that underpin assistive technology and social care service design, and how they are informing the development of ‘smart homecare’ systems. Our analyses then highlight the interactional co-construction of smart homecare as a socio-technical infrastructure. Finally, we argue that concepts of distributed agency offer a radically different starting point for assistive technology, integrating heterogeneous methods, devices, and components into social action through the universal technology of conversation (Sacks, 1984).

Background: The technological imaginary of the ‘care crisis’

Elderly and disabled people are increasingly receiving care at home rather than in residential care facilities that are often associated with a loss of choice and autonomy (Rozanova, 2010). The Health Foundation (2021) estimates that over the next decade, the social care workforce will need to more than double to meet the growing demand in the UK and in other countries where demographic aging is advancing (Ris et al., 2019). As social care budgets come under increasing pressure (Schlepper and Dodsworth, 2023), technologists and policymakers are turning to high-tech ‘solutions’ such as care robots, virtual assistants, and ‘smart home’ systems (Abou Allaban et al., 2020; Beaney et al., 2020; Callejas and López-Cózar, 2009; Do et al., 2018; Kim et al., 2019) to address a looming crisis in care provision. These developments are often driven by a logic of efficiency savings and the ongoing drive to replace forms of human care work with technology (Hester and Srnicek, 2023; Wright, 2021). Even consumer technologies such as the Amazon Echo are marketed as assistive ‘smart agents’ that can support the agency and autonomy of elderly and disabled people (Amazon, 2019). But how are these technologies used in a real homecare setting? How do they conceptualize agency – both in relation to the user and the virtual assistant? And how, if at all, might they support the agency of the care service user? In the following section we briefly review conceptualizations of agency in homecare work and as embedded in so-called ‘smart’ technologies. We then draw on the concept of distributed agency as it is empiricized in ethnomethodology and conversation analysis (EM/CA; Dingemanse et al., 2023; Enfield and Kockelman, 2017) to explore the distribution of work in participants’ talk and other coordinated activities within a smart homecare setting.

Conceptualizations of agency in smart homecare

Discourses of agency in health and social care often center on individualistic notions of autonomy and empowerment that prioritize personal choice and independence (Wray, 2004). Disability and care needs are, by extension, associated with loss of agency (Rozanova, 2010), identity, and a ‘sense of “being”’ (Pirhonen and Pietilä, 2018: 16). When care professionals ‘do for’ instead of ‘doing with’, this can undermine a service user’s confidence about living independently at home (Rooijackers et al., 2021). In this context, care professionals must manage conflicting priorities, as Pilnick (2022) puts it, ‘between autonomy and abandonment’: providing much-needed support, but without limiting service users’ agency, decision-making, and choice (Holmberg et al., 2012). Similarly individualistic discourses of agency tend to underpin technical understandings of the user in the design of conversational user interfaces and assistive technology (Albert et al., 2023). Human-computer interaction (HCI) design usually involves constructing a necessarily simplified model of the user that articulates their needs, choices, and capabilities (Fischer, 2001). Hersh and Johnson (2008) trace the ascendence of a social model of disability in user modeling: part of a broader critique of a ‘medical model’ that reproduces ableist understandings of disabled people in terms of individual impairments requiring a technical/medical ‘fix’ to achieve independence. Bennett et al. (2018) build on a social model of disability to suggest an alternative frame for assistive technology that prioritizes shared agency and interdependence. These conceptual dichotomies between social and medical models in assistive technology design and between discourses of autonomy and abandonment in health and social care (Cluley et al., 2020) mirror long-standing dichotomies between structure and agency in social theory (Pirhonen and Pietilä, 2018). 
While this theoretical discourse of agency has been vital for advances in social and research justice (Harrison, 2014), as an analytic framework it can shift focus away from participants’ practices and discourses of agency in social interaction (Dewsbury et al., 2004).

Data and methods

We used applied conversation analysis (Antaki, 2011) and multimodal transcriptions of video data (Mondada, 2018) to study the moment-by-moment unfolding of actions through which questions of agency become relevant for the participants in the smart homecare environment. This approach draws on related empirical studies of interactions with smart speakers and social robots (Alač, 2016; Due, 2021; Krummheuer, 2015; Pitsch, 2016) in which agency is understood to be ‘distributed across human bodies and technology’ (Alač et al., 2011: 905). These methods allow us to examine the distribution and ascription of agency in these interactions ranging from what Pelikan et al. (2022: 20) call ‘autonomous agency’, where a robot’s actions are treated as interactionally relevant by coparticipants, to ‘non-agency’ where a robot’s actions are not treated as accountable, nor as interactionally relevant for whatever happens next. Our data are selected from around 100 hours of video collected by a disabled man (Ted) and his care assistant (Ann) using a smart speaker (Alexa) connected to a variety of voice-controlled lights, plugs, and two voice-controlled cameras that they could turn on or off at any time. The participants helped develop a data collection protocol, approved by Loughborough University ethics review (8-8-2019), and gave permission for all data to be used, shared, and published using open content licenses.

Analysis

In the analyses below, we focus on three variations in the organization of a common task within Ted and Ann’s care routine: turning a voice-controlled electric fan heater on or off. Ted initiates this action many times during his daily care activities such as getting out of bed, eating a meal, or getting dressed, usually before taking off blankets or opening a window.

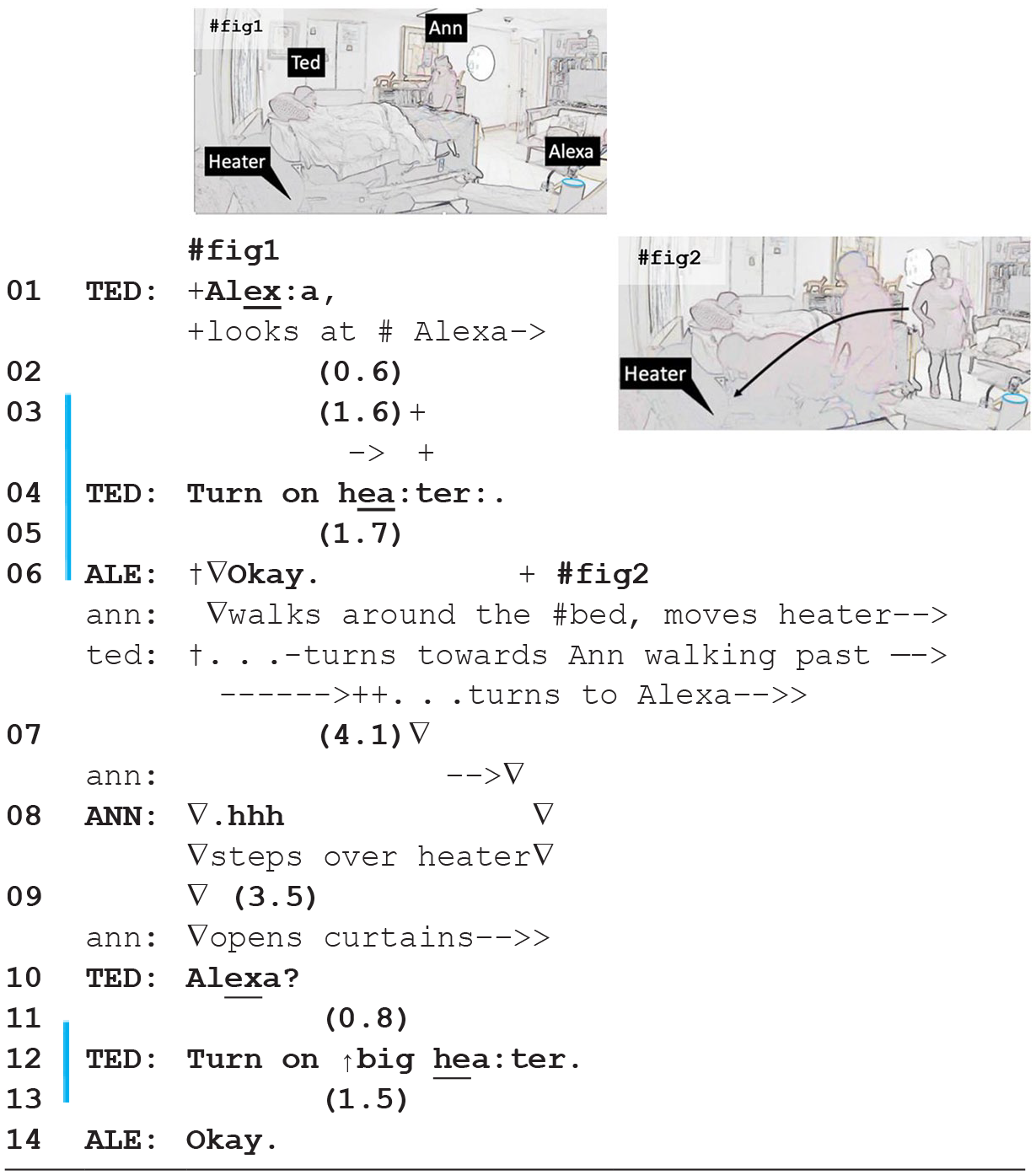

In Extract 1, for example, Ann has just arrived, and Ted begins their morning routine (opening the curtains and getting him out of bed) by asking Alexa to ‘turn on heater’.

Here we see how Ted plays his part in the ‘getting out of bed’ routine by turning heaters on while Ann is busy with her own tasks. In line 1 Ted initiates a ‘summons-response-request-response’ (SR3) sequence (Hall and Albert, 2024) with the smart speaker. This standardized sequence – common to many smart speaker interactions – starts with a wake word (here, ‘Alexa’) as a summons. The ‘wake light’ comes on as a visible response (transcribed as a blue line next to line numbers) showing the device is activated. This is usually followed by a request or command, and a response from the smart speaker. In this case, Ted just finishes the request ‘turn on heater’ at line 3 as Ann walks toward the heater. While she moves the heater out from under the bed, Alexa’s response ‘Okay’ and the heater turning on closes the sequence. Ted then does another SR3 sequence, this time turning on the ‘big heater’ in lines 10–14 as Ann continues walking around the bed opening the curtains. This illustrates the distribution of labor in Ann and Ted’s care routine and shows how Ted uses the virtual assistant to accomplish ‘his’ tasks and take an active role in the joint care activity.

Ted’s initiation of a sequence that is completed by Alexa turning on the heater is an example of what Pelikan et al. (2022) call ‘hybrid agency’, where the action is ‘treated as partly instructed by a human operator’. In this case, hybrid agency is accomplished by Ann timing her move of the electric heater to fit Ted and Alexa’s sequence closing and the heater coming on. Ted achieves agency as a participant in the care routine as he initiates the SR3 sequence that implicates Alexa first as a recipient, then as a participant in the task of turning on the heater, which, in turn involves Ann in the pragmatics and temporality of completing the task. Our next clip shows how turning the heater on and off remains Ted’s responsibility, even when he is clearly struggling with Alexa.

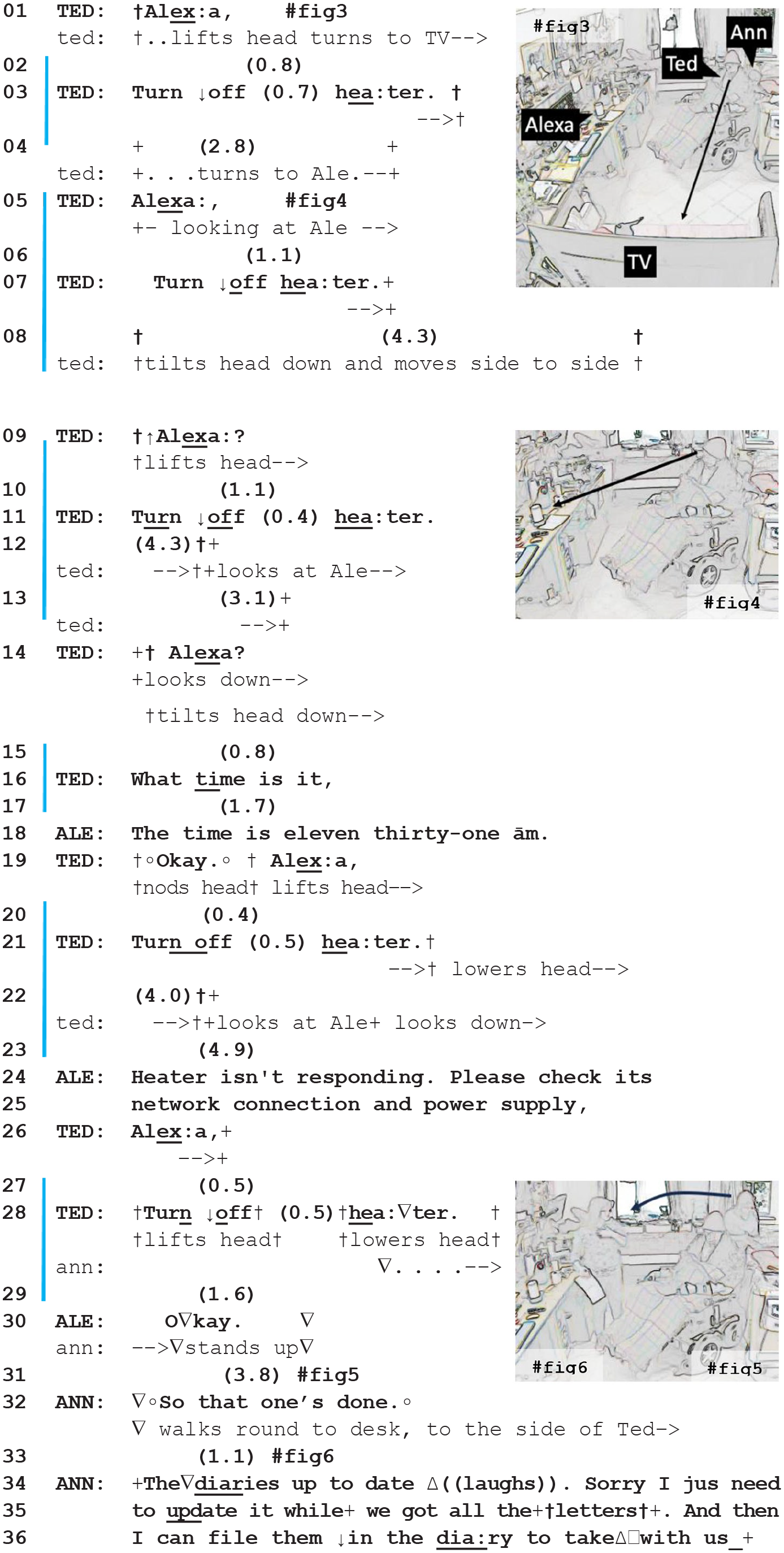

In Extract 2, the heater is already on. Ann is kneeling behind Ted, leaning on the bed, writing in a medication diary. Ted repeatedly struggles to get Alexa to turn the heater off, to no avail. Notice how Ann accounts for not intervening to help him afterward.

We see Ted struggling by trying new ways to ‘upgrade’ the design of his request in pursuit of a ‘heater-turning-off’ response from Alexa using methods that Stivers and Rossano (2010) identify as increasing the pressure on a recipient to produce a response. For example, his first unsuccessful SR3 sequence in lines 1–3 is produced without looking toward Alexa. When redoing this request in lines 5–7, Ted does recipient-directed speaker gaze toward Alexa and can monitor the ‘wake-light’ for a visible response to his summons. In the third SR3 sequence in lines 9–11, Ted raises the pitch and turn-final intonation of his summons. After trying all these ‘upgrades’, Ted switches the request component of his next SR3 sequence to ‘what time is it’ in line 16. This works as a ‘check if Alexa is working’, since as soon as Alexa responds ‘the time is…’ in line 18, Ted responds with ‘Okay’, and immediately follows up by redoing his earlier ‘turn heater off’ SR3 sequence in lines 19–21. This time, Alexa reads an error message ‘heater isn’t responding’ with instructions to ‘please check its network connection and power supply’, which Ted would not be able to do from his chair. As Ted initiates the next summons, Ann gets up just as Alexa finally responds and turns off the heater. This episode presents some evidence that Ann was intervening to help Ted at this point, since at lines 32–36 Ann announces the ‘close’ of her activity ‘So that one’s done’, laughs, then gives a sorry-prefaced account in lines 34–37 for not helping earlier while Ted was struggling with Alexa.

The changes in the design of Ted’s six SR3 sequences in this episode probe the availability of the device in different ways, treating it – at least potentially – as responsive to variations in ‘recipient design’ (Tuncer et al., 2023). While this practice, as a way of orienting to Alexa, does not explicitly attribute fully-fledged, agentic recipiency to the device, it clearly demonstrates the relevance and expectation of at least establishing addresseeship. As Dingemanse (2020) points out, participants in human interaction may use gaze, address terms, summonses, and interjections to establish this fundamental prerequisite of other-availability for the interactional achievement of distributed agency. Ann’s apology for not having helped also achieves a form of ‘hybrid agency’ in that it treats her involvement in and coordination with Ted and Alexa’s actions as an accountable matter. In this sense, we also see how Ann attributes agency to Ted by not helping him. Here she is managing the trade-off mentioned earlier: between autonomy (letting Ted do things and make choices for himself), and abandonment (leaving him to struggle).

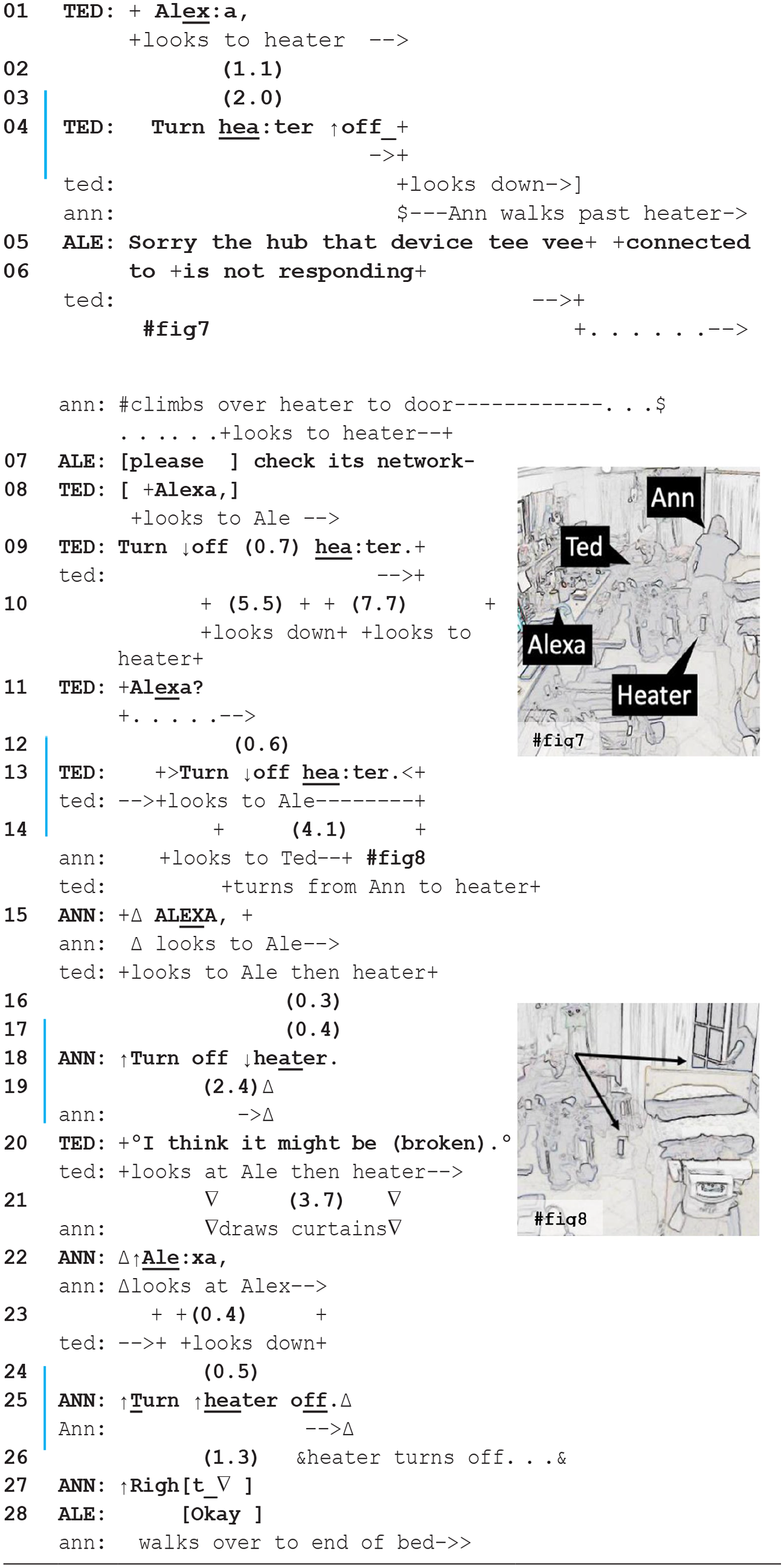

In Extract 3, Ted struggles to turn off the heater again, while Ann is moving about the room, busy with other tasks. This time, however, after Ted’s third attempt, Ann does intervene to help.

At first, Ann continues with her own tasks while leaving Ted to turn the heater on and off. After Ted completes his initial SR3 request to ‘turn heater off’ in line 4, Ann moves to close the curtains behind the heater. Just as Alexa responds with a ‘device not responding’ error message, Ann climbs over the heater in lines 5–6, not even glancing at it as she moves past. In this sense, Ann remains committed to her task while Ted again begins to ‘upgrade’ the design of his request in pursuit of a response from Alexa: first he interjects during Alexa’s error message in line 7 by re-using the wake word ‘Alexa’, which interrupts Alexa’s turn in progress as the device responds to the summons by ‘listening’ for a command. Ted then changes the syntax of his request from ‘turn heater off’ in line 4 to ‘turn off heater’ in line 9, while raising the intonation of the wake-word ‘Alexa?’ at line 11. Again, these incremental modifications to the recipient design of Ted’s requests suggest he ascribes Alexa a degree of agency in recipiency.

When Ann steps in to help Ted, we get stronger evidence for the accountability of Alexa’s conduct and agency in the way Ann shouts at and appears to ‘blame’ Alexa for Ted’s difficulties. When Ann looks up from her task, she finds Ted looking from her to the heater then back to Alexa. Ann quickly treats this as an opportunity to assist Ted with his task. She immediately suspends her current task and shouts the wake-word ‘AL

In our previous examples, we have seen more tacit forms of ‘hybrid’ or ‘ascribed’ agency emerge in the coordination and temporality, and in the recipient design of joint activities in a smart homecare setting. Here, however, we see a more clear-cut attribution of intentionality and agency in the way that Ann seems to blame and reprimand Alexa while stepping in and assisting Ted. Furthermore, by blaming Alexa, here, Ann protects Ted’s sense of agency and competence: if Alexa is to blame, then Ted is not at fault for being unable to get Alexa to turn off the heater. In Extract 2, and in many other instances in these data (see e.g. Albert et al., 2023), Ann only intervenes to help Ted after either asking or being explicitly granted permission to do so. In this way, Ann maintains Ted’s prerogative to animate Alexa to do ‘his’ tasks, upholding Ted’s agency as an active participant in the care routine. In Extract 3, we see that Ann carefully monitors Ted’s activities, and can intervene to offer assistance where needed. By blaming Alexa, Ann manages the accountability of her intervention as well as its implications for Ted’s agency and competence as a participant.

Discussion and conclusion

Our analysis showcases a powerful example of how participants in a smart homecare setting can distribute their labor in ways that prioritize and protect the agency of the service user. Firstly, we showed how, in this setting, the agency of all participants, human or machine, is constructed through the joint management of routine tasks. When a participant initiates an action, pursues a response, or responds to an initiation in this interactional environment, they implicate their own agency and the agentic involvement of others. These pragmatic understandings of agency are similar to the ‘hybrid’ and ‘ascribed’ forms of robotic agency identified by Pelikan et al. (2022). Secondly, we found that in moments where the interaction ran into trouble, i.e., when Alexa did not respond as requested, this presented participants with an opportunity to renegotiate their contingent degrees of agency by withholding assistance, by intervening to help, or by ascribing blame to Alexa as a ‘scapegoat’. Where others (including a technical device) are available for the attribution of blame and the ascription of interactional incompetence (see Tuncer et al., 2023), this can militate against such ascriptions and attributions to the service user (Ekberg et al., 2020). Blaming the virtual assistant here also manages conflicting priorities in discourses of agency in care work: providing ways of doing assistance without undermining the agency of the care service user.

These findings also have some critical implications for the technical and conceptual design of homecare systems and services.

Firstly, it is striking that the participants in our data use the participation framework of their care routine to combine cheap consumer technologies into a highly functional smart homecare system. The functionality here depends on the realization of distributed, hybrid agency with humans and machines, rather than reliance on the kinds of expensive, high-tech, and often dysfunctional care robotics systems that still dominate the technological imaginary of this research area (Wright, 2023).

Secondly, the smart homecare setting described here demonstrates how participants can harness forms of hybrid and attributed agency involving technology – in the broadest sense – including what Sacks (1984) calls ‘the technology of conversation’. Like the frame of ‘interdependence’ for assistive technology proposed by Bennett et al. (2018), our analysis suggests an alternative to what Jackson et al. (2022) describe as the high-tech ‘disability dongle’ design paradigm. Rather than starting by building mediagenic gadgets that target an individual’s impairments for a medical or technical ‘quick fix’, we might initiate a design process by observing the distribution of agency and shifts between interactional roles and tasks in this kind of naturalistic setting.

Finally, we see how all participants are involved, in different ways, in the co-production and maintenance of the technical and interactional infrastructures of this smart homecare setting. This perspective highlights the inadequacy of widespread utilitarian concepts and rhetoric about the potential ‘efficiencies’ of replacing human care support that often motivate assistive technology and care robotics research (e.g. Bedaf et al., 2013; García-Soler et al., 2018).

Our findings also carry some broader methodological implications for our analytic approach to distributed agency and issues of interactional competence in computers, animals, and other ‘analytic objects’ (Edwards, 1994) which do not carry unquestioned assumptions of agency. For example, we could be inspired, in our analytic approach, by emulating the unproblematic and unmarked integration of the virtual assistant into the coordination of the homecare routine. As Jefferson (1989: 429) says of ‘the alien character of conversation analysis’: when examining smart homecare interactions, we might adopt an analytic approach that eschews the normative assumptions about agency built into lay characterizations of categories such as ‘human’ and ‘machine’.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported by an ESRC PhD studentship funded through the Midlands Graduate School DTP [ES/P000711/1] and Loughborough University. The data on which this paper draws were collected for a British Academy/Leverhulme Small Research Grant [SRG19\191529] awarded to Saul Albert.