Yeah, I’m trying to... I need this picture that makes it work. You know?

Uhh... oh, I see! You mean a desktop shortcut to start your database application. Let me show you.

As novice users learn an application, their mental models start from familiar concepts that can be far removed from the application’s actual features. Over time, these models expand into a more accurate representation that allows successful task completion.

This gap between representation and reality is hard to bridge with traditional forms of user support, such as manuals or training. As Klowait et al. (2024) point out, LLMs and the conversational interfaces built on top of them open up new and exciting possibilities for doing so.

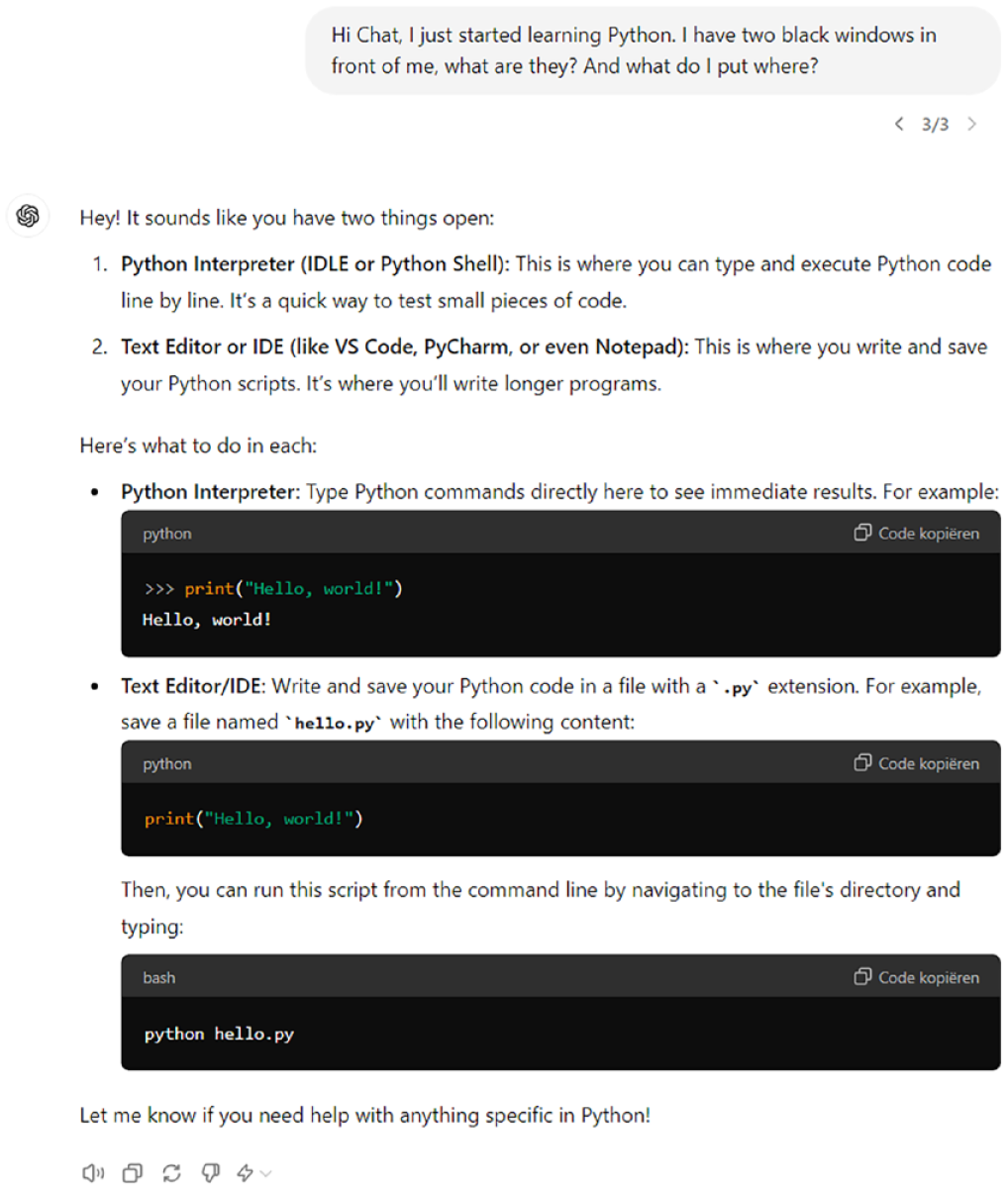

To illustrate this, let’s look at how ChatGPT can help a novice software developer, out-of-the-box (see Figure 1).

How can ChatGPT help a novice software developer?

What happens here in terms of disambiguation and alignment is quite remarkable. ChatGPT meets the user at her level of understanding, makes sense of her mental imagery and takes her on a guided exploration of some key concepts. It does so not by providing expert-level systemic knowledge, but by offering a task that’s meaningful to the user, with enough detail to ensure successful task completion.

Can we use this inherent capability to deliberately design LLM-based apps that bridge knowledge gaps? The place to do that is the so-called system prompt: the core set of instructions we give an LLM to scope not only what it can do, but also how it interacts with the user.

A classic system prompt runs along the lines of ‘You’re a helpful assistant that can answer questions’. By adding a role, context and instructions, we can prompt for richer behavior.
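As a minimal sketch of what adding a role, context and instructions can look like in code, the Python function below assembles those three parts into a single system-prompt string. The function name `build_system_prompt`, the `## Heading` layout and the spreadsheet-tutor values are illustrative assumptions, not a required format; LLMs accept plain text in any layout.

```python
def build_system_prompt(role: str, context: str, instructions: list[str]) -> str:
    """Assemble a system prompt from role, context and instruction sections.

    Hypothetical helper: the '## Heading' layout is only one convenient
    convention; LLMs do not require any particular markup.
    """
    sections = [
        "## Role\n" + role.strip(),
        "## Context\n" + context.strip(),
        # One instruction per line keeps each rule easy to pick out.
        "## Instructions\n" + "\n".join(rule.strip() for rule in instructions),
    ]
    # Blank lines separate the three sections.
    return "\n\n".join(sections)


# A classic, minimal prompt for comparison.
CLASSIC_PROMPT = "You're a helpful assistant that can answer questions."

# Hypothetical example values: a tutor for a spreadsheet application.
tutor_prompt = build_system_prompt(
    role="You are a patient tutor for first-time spreadsheet users.",
    context="The user is learning to build a monthly budget in a spreadsheet app.",
    instructions=[
        "Use the user's own words for features before introducing official names.",
        "Suggest one small, concrete task at a time.",
    ],
)
print(tutor_prompt)
```

Keeping role, context and instructions as separate arguments makes it easy to vary one part (for example, swapping the instructions) while holding the others fixed.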

Here’s an example based on the explainer AI in Klowait et al. (2024).

## Role

You are an expert algorithm coach for novice users. Your goal is to facilitate exploratory learning and mental model building.

## Context

The user interacts with a game that has an algorithm running in the background. Their goal is to learn about the algorithm by manipulating the game’s interface.

## Instructions

Never give direct answers to the user’s questions.

Instead, ask a probing question.

In the resulting interaction model, the dynamics shift from answering questions to actively engaging users in driving their own learning:

How does changing the slider value affect the game?

That’s a great question! What do you notice when you change the slider value? Can you observe any patterns or changes in the game?

I’m not sure. Can you give me some hints?

Sure! What other components of the game do you think might be affected by changing the first slider?
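For readers who want to build such an app, here is a minimal Python sketch of how an exchange like the one above is commonly represented: a list of role/content messages, the format used by OpenAI's Chat Completions API and several similar APIs. The `Conversation` class and its methods are hypothetical, and no model is called here. With a stateless chat API, the whole list, system prompt first, is sent on every turn, which is what keeps the coaching instructions in force throughout the dialogue.

```python
from dataclasses import dataclass, field


@dataclass
class Conversation:
    """Chat history in the role/content message format used by many
    chat-completion APIs. Hypothetical helper for illustration only."""

    system_prompt: str
    turns: list[dict[str, str]] = field(default_factory=list)

    def add_user(self, text: str) -> None:
        self.turns.append({"role": "user", "content": text})

    def add_assistant(self, text: str) -> None:
        self.turns.append({"role": "assistant", "content": text})

    def messages(self) -> list[dict[str, str]]:
        # The system prompt is always placed first, so it is re-sent with
        # every request and governs each new turn, not just the first one.
        return [{"role": "system", "content": self.system_prompt}, *self.turns]


coach = Conversation(
    system_prompt=(
        "You are an expert algorithm coach for novice users. "
        "Never give direct answers to the user's questions. "
        "Instead, ask a probing question."
    )
)
coach.add_user("How does changing the slider value affect the game?")
coach.add_assistant(
    "That's a great question! What do you notice when you change the slider value?"
)
coach.add_user("I'm not sure. Can you give me some hints?")

for message in coach.messages():
    print(f"{message['role']}: {message['content']}")
```

The next model reply would be generated from `coach.messages()`, which is why the "never give direct answers" rule still shapes the response to the request for hints.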

In sum, and in my experience, carefully designed system prompts let us tap into a relatively unexplored characteristic of LLMs: their inherent capability to align with users’ mental models. They let us move away from traditional Q&A conversations toward interaction models that facilitate the exploratory learning novices need.

Footnotes

Declaration of conflicting interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author received no financial support for the research, authorship, and/or publication of this article.