Abstract

Low-rank representation (LRR) has attracted wide attention of researchers in recent years due to its excellent performance in the exploration of high-dimensional subspace structures. However, in the existing semi-supervised learning problem based on the LRR method, graph construction and semi-supervised learning are two separate steps. Therefore, the existing label information in the data set is not well used to guide the construction of the affinity graph. Therefore, these methods do not guarantee that the final result is a global optimal solution. This paper proposes a graph regularized low-rank representation for semi-supervised learning, called GLR2S2. This method combines the construction of affinity graph with semi supervised learning and unifies them into an optimization framework. By solving the joint optimization problem, the global optimal solution can be obtained. Experimental results on several standard data sets show that the GLR2S2 method proposed in this paper is effective.

Introduction

In most of the problems of pattern recognition, artificial intelligence and computer vision, we often face the problem of insufficient data labeling, and the acquisition of label information is very difficult and expensive. In practice, the data we can get widely is often unlabeled. This brings great difficulties to machine learning problem. How to make full use of limited data labels to improve the performance of learning algorithms is a key concern of many researchers. In this case, semi-supervised learning (SSL) can make full use of limited labeled data and a large amount of unlabeled data, 1 and has been deeply studied. In recent years, semi-supervised learning has attracted widespread attention in the fields of machine learning, computer vision, and pattern recognition. Among the current semi-supervised learning methods, graph-based SSL (G-SSL) is particularly compelling, mainly because it has achieved great success in practical applications.

The graph-based semi-supervised learning approach relies heavily on the construction of a graph

Although we can find millions of such relationships from the data, recent research on sparse representations and low-rank representations suggests the importance of choosing pairwise relationships. Yan et al.6,7 proposed a

Although the methods based on sparse representation and low-rank representation have achieved great success, there are still some obvious shortcomings in these methods. In most graph-based semi-supervised learning methods, the structure of the graph is usually predefined. Therefore, graph construction and semi-supervised learning are often two independent steps, so that these algorithms cannot get the global optimal solution. Since the semi-supervised learning algorithm relies heavily on the construction of graphs, it is necessary to combine semi-supervised learning with graph construction to solve jointly. Fang et al. 15 proposed a robust semi-supervised subspace clustering algorithm via non-negative low-rank representation (NNLRS). Yu et al.16 proposed a nonlinear learning method using local coordinate coding for subspace learning. By using weak label regularization local coordinate coding (LCC), Wang et al. 17 proposed a face annotation method, which simultaneously uses weak label regularization and sparse features based on graphs to improve the labels of similar face images. Peng et al. 18 proposed an enhanced LRR via sparse manifold adaption. By using the label information for semi-supervised learning, Yang et al. 19 proposed the label constrained sparse low-rank representation algorithm

Based on the above analysis, we propose a new graph regularized low-rank representation method for semi-supervised learning (GLR2S2). In this algorithm, the construction of the graph and semi-supervised learning are combined in a unified optimization framework, which ensures that the final result is globally optimal. Through joint optimization of graph construction and semi-supervised learning, the label information of data samples can be accurately propagated in the algorithm learning process. The main contributions of this paper are summarized as follows:

Previously, most G-SSL algorithms usually used graph construction and semi-supervised learning as two independent steps, and the GLR2S2 algorithm proposed in this paper integrates these two tasks into a unified optimization framework, which can guarantee the final result as the overall optimum. By introducing graph regularization and sparse constraints into the LRR algorithm framework, the proposed GLR2S2 method considers the intrinsic geometric features of the recovered data. In this way, the GLR2S2 algorithm can simultaneously capture the intrinsic local structure information and global structure information of high-dimensional data. Aiming at the optimization problem of GLR2S2 algorithm, this paper proposes an effective optimization strategy.

The rest of this paper is arranged as follows: Section 2 briefly reviews the relevant work. Section 3 introduces the proposed GLR2S2 and its optimization. A large number of experiments have been carried out to illustrate the effectiveness of GLR2S2 in Section 4. Finally, Section 5 gives the conclusion.

Related works

In this section, we will briefly introduce the algorithms of LRR and GLRR, 20 as well as the semi-supervised classification framework used in this paper.

LRR and GLRR

Assume that data

Among them, low-rank constraints have good performance in capturing the global structure of data.

9

The optimal solution of problem (1) is the lowest rank representation of data matrix

If the data in the high-dimensional space is located in a union of linear subspaces, then LRR algorithm can extract the low-dimensional structures embedded in the high-dimensional space very well. But in practice, this assumption cannot be satisfied. For example, the face images are sampled from nonlinear low-dimensional manifolds embedded in high-dimensional space. In this case, LRR method cannot find the intrinsic geometry structure and discriminative information of data, which is very unfavorable for practical application.

In order to maintain the intrinsic manifold structure of the data sets,

20

introduced the graph regularization term into the objective function of LRR, and proposed the GLRR method. The objective of GLRR is defined as follows:

Semi-supervised classification

In this section, we briefly introduce a very popular semi-supervised learning framework Gauss Fields and Harmonic Functions (GFHF).

21

Suppose

Graph regularized low-rank representation for semi-supervised learning

In this section, the graph regularized low-rank representation for semi-supervised learning (GLR2S2) is introduced. The goal of the proposed GLR2S2 method is, under a unified optimization framework, to perform the construction of the graph and the semi-supervised learning at the same time, thus, we can get an overall optimal solution.

Objective function of GLR2S2

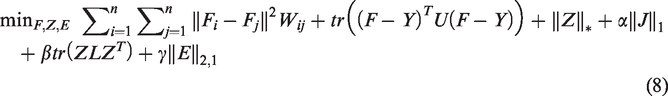

In GLR2S2, the graph learning and semi-supervised learning are simultaneously completed within one step. Based on low-rank representation theory and GFHF, we propose the following objective function of GLR2S2

s.t.

where

LADMAP for solving GLR2S2

There are several variables in the optimization objective function, in order to effectively solve the problem (7), in this paper, we use linearized alternating direction method and adaptive penalty method (LADMAP).

22

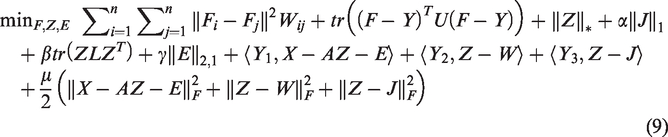

In order to make the objective function separable, we introduce two auxiliary variables

s.t.

In order to eliminate the three linear constraints in (8), we introduced three Lagrange multipliers

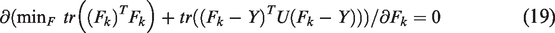

Computation of

Solving (9) w.r.t.

Computation of

Similarly, solving (9) w.r.t.

The sub-problem (12) has the following objective function:

Computation of

The sub-problem for updating

The solution is defined by

Computation of

Solving (9) w.r.t.

s.t.

where

Computation of

The sub-problem for updating

Then, we have

In Algorithm 1, a detailed procedure for solving the proposed method is described. LADMAP for solving GLR2S2 Input: Data matrix Initialization: while not converged do 1. Fixed the others and update 2. Fixed the others and update 3. Fixed the others and update 4. Fixed the others and update 5. Fixed the others and update 6. Update the multipliers as follows

7. Update the parameter

where 8. Check the convergence conditions where

9. Update

end while Output:

Convergence and complexity analysis

The algorithm described above converges to a globally optimal solution of (7) as it is a direct application of LADMAP.

When updating Z by singular value thresholding, see (11), we may predict the rank

Experiments

Experiment setup

-

-

Figure 1 shows some sample images in these four image datasets.

Sample images from ORL, extended Yale B, CMU PIE and USPS datasets. (a) Extended Yale B, (b) CMU PIE and (c) USPS.

Experimental studies

The purpose of semi-supervised learning task is to reveal more unlabeled information with limited labeled data. Therefore, in this paper, the percentage of labeled samples ranges from 10% to 60%, and the rest are unlabeled samples. The parameters of the GLR2S2 method are set to

Classification accuracy rates (%) on extended Yale B dataset.

Classification accuracy rates (%) on CMU PIE dataset.

Classification accuracy rates (%) on USPS dataset.

From the experimental results, we can observe that:

In most cases, compared to other graph based semi-supervised learning algorithms, the proposed GLR2S2 method can consistently get the highest classification accuracy, even with low labeled samples rate. Compared with NNLRS method which also use the sparse and low-rank constraints to construct affinity graph, the proposed GLR2S2 method is able to use the label information to construct affinity matrix effectively. In most cases, it can be seen that the classification accuracy has been significantly improved. In comparison methods,

There are three parameters that affect the performance of our proposed GLR2S2 method.

Classification accuracy with varied parameters (a) , (b) - and (c) ○.

As can be seen from Figure 1, the performance of GLR2S2 varies steadily over a relatively large range of parameters

Conclusion

This paper proposes a new semi-supervised subspace clustering method GLR2S2, which uses label information to guide the construction of affinity graph. In addition, GLR2S2 integrates affinity construction and semi-supervised subspace clustering into a unified framework to ensure overall optimality. Aiming at the optimization problem of less auxiliary variables and less matrix inversion, an efficient iterative linearized ADM algorithm with adaptive penalty (LADMAP) is adopted in this paper. Experimental results on three data sets show that our new method is more efficient than the most advanced methods through a set of evaluations on classification and recognition. However, a theoretical analysis the benefits of using graph regularization will be further investigated as the future work and the application of the proposed model to a broader range of problems is to be explored.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work is supported by the National Natural Science Foundation of China (Grant No. 61902160, 61806088), the Natural Science Foundation of the Jiangsu Higher Education Institutions of China (Grant No.19KJB520006) and the foundation of Changzhou Science and Technology Plan (Applied Basic Research) (Grant No. CJ20190076).