Abstract

How to obtain the maximal return within a given range of risk in the securities market is an interesting and widely studied problem. Because many complex factors affect securities, such as risk and the uncertainty of returns, it is difficult to establish an appropriate investment model. To address the curse of dimensionality and the curse of modeling that arise in this problem, we use approximate dynamic programming to set up a Markov decision model for the multi-period portfolio with transaction costs. We propose a model-based actor-critic algorithm for uncertain environments, in which the optimal value function is obtained by iteration subject to a constrained risk range and a limited amount of funds, and the optimal investment in each period is solved by dynamic programming over the allocation ratios of the limited funds. Experiments indicate that the algorithm achieves stable investment with steadily growing income.

Introduction

The portfolio problem studies how to obtain the maximal expected profit within a predefined risk range, or how to minimize the expected risk within a range of expected profit.1,2 In the general portfolio problem, an individual or a business invests on the basis of prices, expenses, and the resulting changes in the amount of money, so that limited funds are used in an optimal way. However, many uncertain factors affect the portfolio. Moreover, the utility function is generally non-convex.3 Over the long term, many conventional methods struggle to find the best allocation strategy, and most attain only a locally optimal solution rather than the global optimum. How to establish a reasonable model for the general investment problem and find a globally optimal method has attracted much attention and has become a hot topic in recent years.

Portfolio investment is an effective way to control investment risk and attain profit. Mean-variance analysis showed that portfolio investment can effectively reduce risk,4 and with the efficient frontier model, stock investors can identify the stocks with the lowest level of risk for a given rate of profit.5 However, investment is affected by many sophisticated factors, such as economic, social, and subjective investor attributes, which change constantly over time. As a result, the so-called optimal solution produced by a fixed, learned investment model, which spreads funds over multiple investments to reduce risk and obtain as much revenue as possible based on document analysis, is usually not the one that best fits the situation at the time.

Most portfolio models assume that the profit on securities follows a normal distribution and that all investors act within a single investment period. Variance is used to assess investment risk, and investors choose investments according to the expected value and variance of the portfolio yield. However, in real-world securities markets, the rate of profit does not necessarily follow a normal distribution, and investors differ in their risk aversion and risk appetite. Moreover, investment is often influenced by uncertain factors, which makes investing more difficult. Newer models, classified as modern portfolio theory, handle the problem better, with the goal of finding the optimal portfolio size and investment ratios. Within a portfolio, holding more assets decreases the risk of the entire portfolio but increases investment fees, and the risk no longer falls once the number of assets reaches a certain level. The risk of a portfolio is characterized by the covariance between its assets. Under specified conditions, there is a set of investment ratios that minimizes the portfolio risk.
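The last two statements can be illustrated with a small sketch. It assumes the standard mean-variance setting, where portfolio risk is the variance w^T Σ w, and uses the well-known closed form for the minimum-variance weight of a two-asset portfolio. The function names and the numbers are illustrative assumptions, not values from this paper.

```python
def portfolio_variance(weights, cov):
    """Return w^T * Sigma * w for a list of weights and a covariance matrix."""
    n = len(weights)
    return sum(weights[i] * cov[i][j] * weights[j]
               for i in range(n) for j in range(n))


def min_variance_weight_two_assets(var1, var2, cov12):
    """Weight of asset 1 that minimizes the variance of a two-asset portfolio.

    Closed form: w1 = (var2 - cov12) / (var1 + var2 - 2 * cov12).
    Short selling is not excluded, so the weight may fall outside [0, 1].
    """
    denom = var1 + var2 - 2.0 * cov12
    if denom == 0:
        raise ValueError("assets are perfectly correlated with equal variance")
    return (var2 - cov12) / denom


# Made-up inputs: variances 0.04 and 0.09, covariance 0.006.
cov = [[0.04, 0.006], [0.006, 0.09]]
w1 = min_variance_weight_two_assets(0.04, 0.09, 0.006)
weights = [w1, 1.0 - w1]
min_var = portfolio_variance(weights, cov)
```

With these inputs the combined variance falls below the variance of either asset held alone, which is the diversification effect described above.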

Most conventional portfolio investment models first divide a single-stage problem into a multi-stage one, and then solve it with dynamic programming. As modern financial markets develop, the number of investment types keeps growing, and the data generated by the multi-stage problem, together with the transaction costs, give rise to the curse of dimensionality. Moreover, multi-stage portfolio investment is in fact a nonlinear dynamic stochastic process, in which the uncertain factors of each period must be taken into account.

In practice, dynamic programming has numerous limitations in dealing with portfolio investment problems. First, the fundamental inputs required by the model include the variance of the current state and the covariance between pairs of assets. The large number of stock combinations produces too many states and thus causes the curse of dimensionality. Second, the data often contain errors, which make the optimal results unreliable and uncertain. The inputs to the model are usually estimated from past data. If the estimates were free of error, the conventional model would guarantee a valid portfolio; but because the expected values are normally unknown and require statistical estimation, the estimated inputs are likely to contain bias or even errors, which often result in incorrect investment ratios. Third, in the presence of transaction costs, uncertain random information may affect the optimal solution and make it unstable. Moreover, in a long-term investment process with a fixed investment cycle, adjusting the proportions of assets in the portfolio increases transaction costs.

Many models, such as the mean-variance model,6 the capital asset pricing model,7 and the Black–Scholes option pricing model,8 assume that the market is frictionless, that is, there are no taxes or transaction costs. However, most securities markets are very complex, not only because of transaction costs, but also because of liquidity and turnover, along with the correlation between stocks. All these factors make the problem harder to solve.

In this paper, to address the problems stated above and the model defects that arise with dynamic programming, we use approximate dynamic programming to establish a reasonable Markov decision process (MDP) model9 for the portfolio investment problem. Under various constraints, we design decision-making objectives for different targets and combine an actor-critic algorithm with piecewise linear function approximation to solve for the optimal investment policy, so as to achieve stable investment within a given level of risk.

MDP

MDP model

An MDP model can be denoted by a quadruple 〈

According to the policy π, the state

The reward function is

Given an infinite state sequence {

However, in practice, the number of steps is finite, and there is generally a termination step that only marks termination and is not involved in computing the reward. The state sequence {
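As a minimal sketch of the finite-horizon case, the discounted return of an episode can be accumulated backwards over the rewards collected before the termination step. The discount factor name `gamma` and the reward values are assumptions chosen for illustration.

```python
def discounted_return(rewards, gamma):
    """Sum of gamma**k * r_k over a finite reward sequence.

    The termination step contributes no reward, so it is simply not
    included in `rewards`.
    """
    total = 0.0
    for reward in reversed(rewards):
        total = reward + gamma * total
    return total


# Made-up three-step episode.
episode_rewards = [1.0, 0.0, 2.0]
g = discounted_return(episode_rewards, 0.9)
```

Accumulating from the last reward to the first avoids computing powers of `gamma` explicitly.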

Reinforcement learning

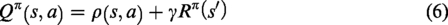

Reinforcement learning algorithms usually evaluate the policy π using the action value function

According to the definition,15 the action value function is calculated as

According to the policy π that is learned by the algorithm, taking the action

We can get

Given a state action sequence {(

Then

Therefore, we can get the general form of

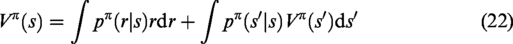

The state value function is

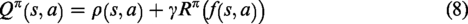

Similarly, we can get the discounted reward of state function Vπ(

In a nondeterministic environment, two sources of uncertainty should be considered. First, the successor state can be random and is not fully determined by the current state and the selected action; second, the reward can also be uncertain. The corresponding action value function

In the MDP model of the nondeterministic environment, the state transition function

First, consider uncertainty in the state transition. After taking the action

In a nondeterministic environment, the state

Therefore, the general form of

Second, consider uncertainty in the reward. Accounting for uncertainty in the action taken means that the action chosen under the policy π can be any action in the action set. In a nondeterministic environment, the reward is also unstable, so the reward value is likewise weighted by a probability. In this way, the reward term of the unstable environment, represented by the left part of equation (18), becomes

Correspondingly, the right part of equation (18), the

Therefore,

Similarly,

In reinforcement learning, the policy that maximizes the expected cumulative reward (the return) is called the optimal policy π*, and the corresponding optimal action value function is
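The effect of the two kinds of uncertainty can be sketched as a one-step expected backup: each possible outcome of a state-action pair contributes its reward plus the discounted value of its successor state, weighted by its probability. This is the standard expectation form for a nondeterministic MDP, not a reconstruction of the numbered equations of this paper; the outcome tuples, state names, and values are hypothetical.

```python
def expected_backup(transitions, next_values, gamma):
    """One-step expected value of an action in a nondeterministic MDP.

    transitions: list of (probability, reward, next_state) tuples for one
                 (state, action) pair; probabilities should sum to 1.
    next_values: dict mapping next_state to the estimated value of that state.
    """
    return sum(p * (r + gamma * next_values[s_next])
               for p, r, s_next in transitions)


# Made-up outcomes: the price goes up with probability 0.7, down with 0.3.
transitions = [(0.7, 1.0, "up"), (0.3, -0.5, "down")]
next_values = {"up": 2.0, "down": 0.0}
q = expected_backup(transitions, next_values, 0.9)
```

Using the maximum action value of each successor state in `next_values` gives the backup for the optimal action value function; using the policy-weighted average gives the backup for a fixed policy π.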

Actor-critic model

Unlike value-function-based reinforcement learning methods, the actor-critic algorithm has two separate structures, one for storing and updating the value function and the other for storing and updating the policy.16,17 The agent selects actions according to the policy rather than the value function. The policy part is called the actor, because it performs the action; the value function part is called the critic, because it uses the value function to evaluate the action taken. The critic can use the temporal difference (TD) error computed by a TD learning method, e.g. Q-learning, which learns directly from raw experience without a model of the system and is thus suitable for decision making in dynamic and uncertain systems. The framework of the actor-critic algorithm is shown in Figure 1.

Figure 1. Framework of the actor-critic algorithm.

The advantage of the actor-critic algorithm is that it separates the policy from the value function and uses linear approximation to learn both. The critic is the value function approximator:18 it learns the value estimate and passes it to the actor. The actor is a policy approximator, which learns a stochastic policy and selects actions using a gradient-based policy update. During the actor's policy iteration, the critic uses the temporal difference algorithm to estimate the value of each state reached by the actor's actions, and uses this estimate to evaluate the selected actions, searching for the maximum of the local or overall cumulative reward. This gives the actor a more effective reinforcement signal, and the actor's policy is updated along the stochastic gradient. The actor-critic algorithm is computationally much simpler than Q-learning,19 can determine the optimal policy, and has been applied effectively to control tasks.20,21
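The interaction between the two parts can be sketched as a single update step. For brevity this sketch uses a tabular critic and tabular softmax action preferences instead of the linear approximators described above; the step sizes, the discount factor, and all names are illustrative assumptions rather than the settings used in this paper.

```python
import math


def softmax(prefs):
    """Turn a list of action preferences into selection probabilities."""
    m = max(prefs)
    exps = [math.exp(p - m) for p in prefs]
    z = sum(exps)
    return [e / z for e in exps]


def actor_critic_step(values, prefs, state, action, reward, next_state,
                      gamma=0.9, alpha_critic=0.1, alpha_actor=0.1,
                      terminal=False):
    """Apply one TD(0) actor-critic update in place and return the TD error."""
    # Critic: the TD error measures how much better or worse the outcome
    # was than the current value estimate of `state`.
    target = reward if terminal else reward + gamma * values[next_state]
    td_error = target - values[state]
    values[state] += alpha_critic * td_error
    # Actor: move the preferences along the gradient of log pi(action | state),
    # scaled by the critic's TD error.
    probs = softmax(prefs[state])
    for a in range(len(probs)):
        grad = (1.0 if a == action else 0.0) - probs[a]
        prefs[state][a] += alpha_actor * td_error * grad
    return td_error


# Two states, two actions, everything initialized to zero.
values = {0: 0.0, 1: 0.0}
prefs = {0: [0.0, 0.0], 1: [0.0, 0.0]}
delta = actor_critic_step(values, prefs, state=0, action=1,
                          reward=1.0, next_state=1)
```

A positive TD error raises the preference for the action just taken and lowers the others, so that action becomes more likely the next time the state is visited.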

Markov chain model for investment

Prerequisites for the model

Given an investor with available cash

Given the investment transfer function

Given a stock, if the agent decides to increase investment holdings,

Considering the transaction costs, we define the cost of holding financial products to be 0, denoted by

Therefore, the transaction cost during the investment period is

When the value of the asset changes, the relative return of the market is defined as

From state

The initial stage of

Expected returns

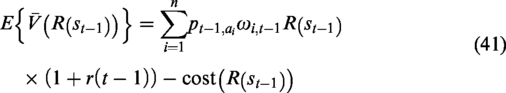

Given a stock pool

According to equation (30), the expected return of the

The update of the weight of the

Risk factor

The risk factor of a stock is

And the risk factor of a portfolio is

In a portfolio, the smaller the correlation between the selected stocks, the smaller their impact on each other and the smaller the risk factor. The correlation is reflected by the correlation coefficient between two stocks, denoted by

Value function

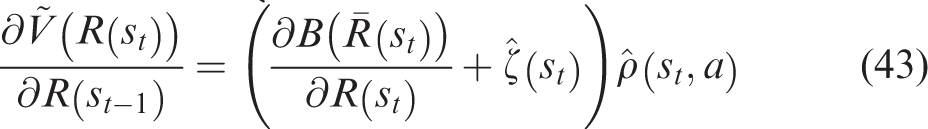

We use

From the above analysis, we can see that in a portfolio, a stock with a greater risk factor has a smaller turnover rate, a stock with a higher yield has a greater weight, and a smaller correlation between any two stocks gives a smaller risk factor. Since the investor chooses randomly among investments of the same type, it is hard to obtain the exact value function at each stage. It is therefore necessary to obtain an approximate solution by assigning different weights to different financial products. The approximate value function is denoted by

Here we use a utility function to measure the benefit by

Investors can obtain a number of possible portfolios for a given range of risk and rate of return; the portfolio with the maximum expected utility is called the optimal portfolio. The convexity of the indifference curve and the convexity of the efficient set determine that the optimal portfolio is unique. The objective function can be written as

Let

Then the optimal objective function is

Algorithm description

We use softmax as the policy

The stocks are sorted in descending order of investment rate of return. Taking the risk factor into account, a stock is selected if its return is greater than the current stock's maximum profit with the least risk. The available policies lie within a certain range, and policies that exceed the range of risk factors are discarded.
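The filtering and ordering described above can be sketched as follows. The tuple layout, the single upper bound `max_risk`, and the sample numbers are assumptions made for illustration; the paper's exact selection rule may differ.

```python
def select_candidates(stocks, max_risk):
    """Keep stocks within the risk range, ordered by descending return.

    stocks: list of (name, expected_return, risk_factor) tuples.
    max_risk: upper bound of the admissible risk range (hypothetical).
    """
    # Discard candidates whose risk factor exceeds the admissible range.
    admissible = [s for s in stocks if s[2] <= max_risk]
    # Sort the remaining candidates by expected return, highest first.
    return sorted(admissible, key=lambda s: s[1], reverse=True)


# Made-up stock pool.
pool = [("A", 0.05, 0.20), ("B", 0.08, 0.50), ("C", 0.07, 0.30)]
candidates = select_candidates(pool, max_risk=0.35)
```

Here stock B has the highest return but is dropped because its risk factor exceeds the bound, leaving C ahead of A.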

The actor-critic based algorithm for optimal portfolio investment under an uncertain environment is as follows:
(1)
(2) set threshold for deviation
(4) initialize time step
(5) initialize expected benefit rate
(6) initialize risk factor
(7)
(8) compute expected benefits as investment times expected benefit rate at time step t:
(9) compute value function by equation (44)
(10) get current deviation at time step
(11)
(12)
(13)
(14) update
(15) go to step (7)
(16)
(17)
(18) transfer state
(19)
(20) get optimal investment:
(21)

Experiment

The problem assumes that the risk matrix is based on historical data and that, for a single stock, income and risk are positively related.22 Three principles guide the investor in selecting stocks. First, the net income matrix has three important elements: the type of stock, the net income, and the amount of money invested. Second, the choices among stocks are independent of one another, and it is up to the investor to decide the types and numbers of stocks held. Third, total earnings comprise interest from the bank, whose interest rate is predetermined, transaction costs, and profits. The investment funds, the interest, and the transaction costs are known in advance, while the other quantities are undetermined.

Given the total amount of funds

Initial settings of the expected return, risk factor, weight, turnover, and selection probability of all stocks.

The portfolio at stage

Take stock #1 as an example. The monthly difference in the rate of return is shown in Figure 2.

The monthly difference in the rate of return.

The total reward for 2015 and 2016 is shown in Figure 3. The total reward kept increasing in every month of both years, indicating that the proposed investment method worked well.

The total reward of 2015–2016.

Conclusion

Maximizing return within given limits on risk and cash is a widely studied problem. We used approximate dynamic programming (ADP) to establish a sound Markov decision model for portfolio investment. In the proposed model-based actor-critic algorithm, the optimal value function is obtained by iteration subject to the limited risk range and funds, and the optimal investment in each period is then solved by dynamic programming. The algorithm achieves stable investment, with income increasing in both the long term and the short term.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.