Abstract

Bottom-up and top-down are two main computing models in granular computing by which the granule set including granules with different granularities. The top-down hyperbox granular computing classification algorithm based on isolation, or IHBGrC for short, is proposed in the framework of top-down computing model. Algorithm IHBGrC defines a novel function to measure the distance between two hyperbox hgranules, which is used to judge the inclusion relation between two hyperbox granules, the meet operation is used to isolate the

Introduction

There have been many researchers working in the granular computing field. Zadeh1,2 has identified three basic concepts, namely granulation, organization, and causation, that underlie the process of human cognition. More specifically, granulation is a process that decomposes a universe into parts. Conversely, organization is the way in which parts are integrated into the universe by the operation between two granules. Causation involves the association of causes and effects.

In general, organization process of objectives, granules, information granules are easily used to form granular computing algorithms, such as Jamshidi and Kaburlasos, 3 Kaburlasos and Pachidis, 4 Papadakis et al., 5 and Kaburlasos and Kehagias 6 represent a granule by a vector, obtain the granule set including the granules with different granularaties by the partial ordered relation between the two granules.

An associative memory is a system such that given input

Sossa and Guevara

8

introduce an efficient training algorithm for a dendrite morphological neural network (DMNN), for a training set, they regard a set as a hypercube, and partition the hypercube into 2

In this paper, isolation-based hyperbox granular computing classification algorithms are presented. Firstly, a granule is a hyperbox induced by the beginning point and the end point. Secondly, the

The layout of the remainder of this paper is as follows. The second section introduces motivation and related work. The third section describes algorithm IHBGrC. The fourth section presents comparative experimental results. The final section summarizes our contribution and describes future work.

Motivation and related work

In this section, the motivation for this proposed research work is presented, and some related works are discussed.

Motivation

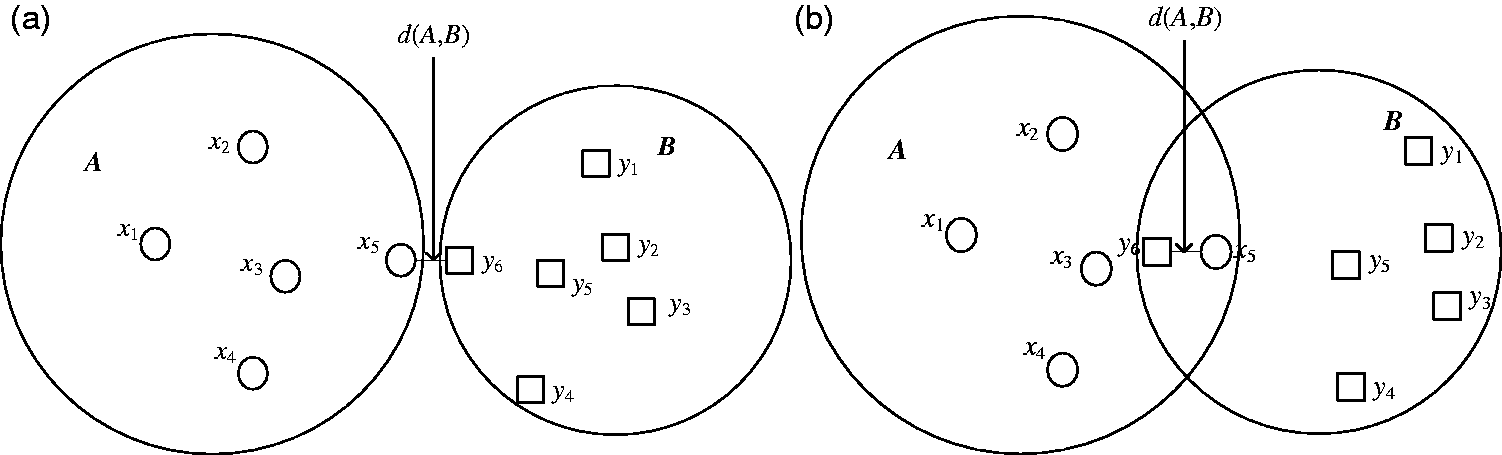

For granular computing classification (GrC) in view of set theory, a granule is represented as the subset for the training set Distances defined by formula (1) between two sets.

On the other hand, the operations that join and meet are used to generate the another granule. In most cases, the join operation is used by granular computing algorithms, and meet operation is used to measure the fuzzy inclusion relation, such as the fuzzy inclusion measure σ(

Decomposition method is the main strategy for divide and conquer methods,10,11 which are used in the transformation between complex task and simple task. In view of the limitation of traditional distance formula (1) and the advantage of decomposition method, we form the granular classification algorithms by the novel distance between two hyperbox granules and the meet operation between two hyperbox granules, which utilize the decomposition methods to divide the data set into some subsets, these subsets are regarded as granules. The novel distance formula is used to determine whether a datum is located inside the meet hyperbox. The labels of data lying inside the meet hyperbox determine the isolation process.

Related work

GrC has been proposed and studied in many fields, including machine learning and data analysis.3–6,12–18 In general, GrC is an emerging computing paradigm of information processing based on lattice computing theory.

GrC includes two kinds of computing models according to lattice computing theory, one is granular structure in terms of the relation between object and attribute. The other is fuzzy lattice reasoning induced by the relation between two objects based on lattice theory, such as fuzzy lattice, which defines the fuzzy inclusion measure between two granules, and the granule is related to the object. Pedrycz and his colleagues set up a certain conceptual framework composed of some generic and conceptually meaningful entities-information granules, which is granular structure and relates to the problem formulation and problem solving. This becomes a framework in which they formulate generic concepts by adopting a certain level of abstraction, carry out further processing, and communicate the results to the external environment. A few examples offer compelling evidence with image processing, processing, and interpretation of time series.12–15 A triarchic structure of granular computing integrates three important perspectives, namely, philosophy of structured thinking, methodology of structured problem solving, and mechanism of structured information processing.16,17 Fuzzy lattice reasoning is proposed by Kaburlasos and his colleagues, and generate granules with different granularity by the meet operation and the join operation between the two granules.3–6,18

The difference between the granular structure and fuzzy lattice reasoning is the different fuzzy relations. The granular structure mainly discusses the relations between object and attribute, and fuzzy lattice reasoning mainly discusses the partial order relations between two objects. In the literature of granular computing, the distance between two granules is seldom discussed. So we discuss the distance between two granules and isolate the

Granular computing classification algorithms based on isolation

For

Representation of the hyperbox granule

For the training set

≤ is the less than or equal relation between two scalars. Here, point

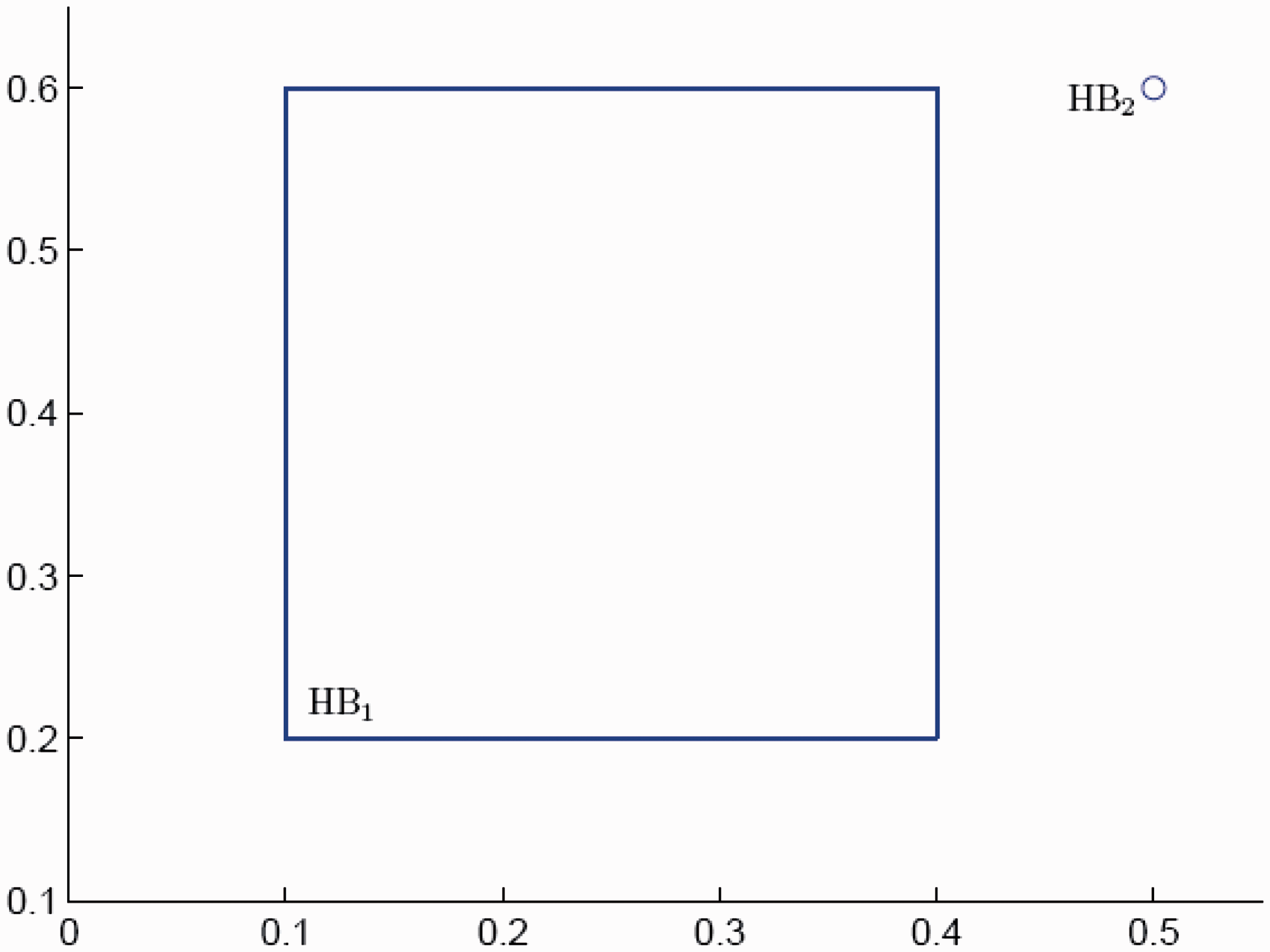

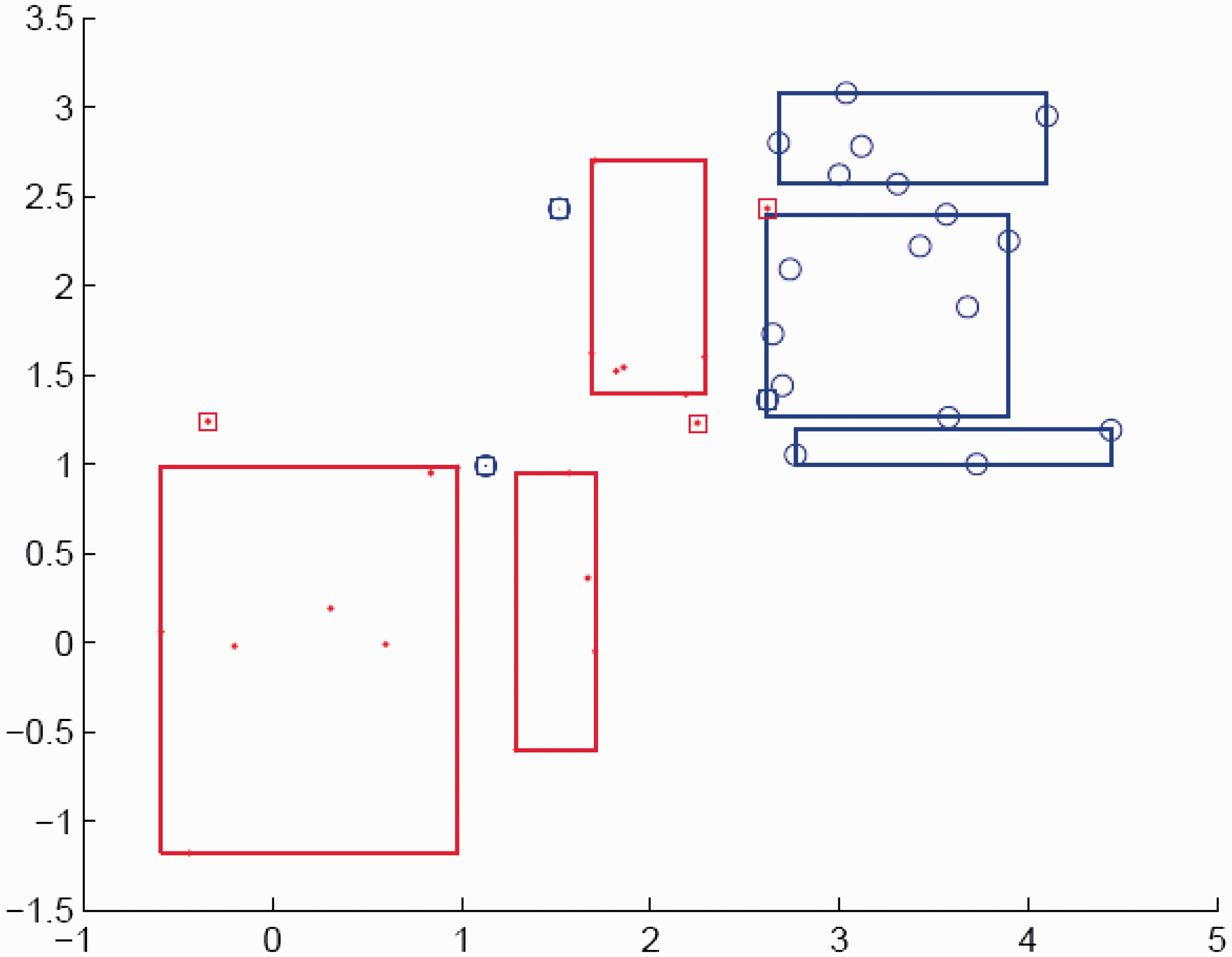

For example, in two-dimensional space, HB1 = [0.1, 0.2, 0.4, 0.6] represents the hyperbox granule shown in Figure 2, which has the beginning point (0.1, 0.2) and the end point (0.4, 0.6). The length of hyperbox granule equals 0.4, and its width equals 0.3. The granularity of hyperbox granule is 0.5, which is determined by the beginning point and the end point. The another example is the atomic hyperbox granule HB2 = [0.5, 0.6, 0.5, 0.6] shown in Figure 2 with the granularity 0, which represents the single point (0.5, 0.6).

Hypergranules in two-dimensional space.

Distance measure

Distance is a numerical description of how far apart objects are. Distance between two hyperbox granules is the measure of farness between two objects, such as hyperbox granules. In analytic geometry, the distance between two points of the xy-plane can be found using the distance formula. In the Euclidean space

Firstly, the distance between point P and hyperbox granule HB is defined as

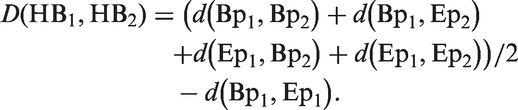

Secondly, the distance between two hyperbox granules HB1 = (Bp1, Ep1, g1) and HB2 = (Bp2, Ep2, g2) is defined as

The distance between two hyperbox granule has the following properties.

Because Theorem 3.1

Proof

If

If HB1 ⊆ HB2, both Bp1 and Ep1 are included in hyperbox granule HB2. According to theorem 1,

Especially, if HB1 is an atomic hyperbox granule induced by a point The distance (equation (3)) is not a metric distance and does not satisfy symmetry, namely

Theorem 3.2

Proof

Similarly,

Generally,

For two-dimensional space, two hyperbox granules HB1 = [0.2 0.1 0.3 0.4] and HB2 = [0.25 0.15 0.4 0.5], the distance between HB1 and HB2 are computed as follows.

Operation between two granules

In

To avoid the points lying in the line or hyperplane of hyperbox, the relaxation factor ξ is used to form hyperbox for the subset

The join operator ∨ between two hyperbox granules is designed to achieve the hyperbox granule with larger granularity compared with the original hyperbox granules. For two hyperbox granules HB1 = (Bp1, Ep1) and HB2 = (Bp2, Ep2), the join operation ∨ is designed as follows.

Conversely, the meet operation ∧ between two hyperbox granules is designed to obtain the hyperbox granule with the smaller granularity compared with the original hyperbox granules. The meet operation ∧ is designed as follows.

From formula (3), we can see Bp1 ∧ Bp2 ≼ Bp1, Bp1 ∧ Bp2 ≼ Bp2, Bp1 ≼ Ep1 ∨ Ep2, Bp2 ≼ Ep1 ∨ Ep2, ||Bp1 ∧ Bp2 − Ep1 ∨ Ep2||2 ≥ ||Bp1 − Ep1||2, ||Bp1 ∧ Bp2 − Ep1 ∨ Ep2||2 ≥ ||Bp2 − Ep2||2, namely the granularity of HB1 ∨ HB2 is greater than or equal to the granularities of HB1 and HB2, and the operation ∨ induces the hyperbox granule with larger granularity compared with original granules. From formula (5), we draw the opposite conclusion that the meet operation induces the hyperbox granule with the smaller granularity compared with original granules.

Isolation algorithm

For the training set

Input: the training set Output: the hyperbox granule set S1. S2. Extracting the S3. Compute the hyperbox HB1 = [a1 b1] by the S4. If HB1 is empty, the procedure is terminated, return S5. If the meet hyperbox HB of HB1 and HB2 is empty, S6. If HB = HB1, then HBt = HB1, else S7. Computing the vertex set S8. If the beginning point S9. The HBt is induced by S10. Find labels of data included in hyperbox granule in HBt S11. If all the labels are equal to

Algorithm1: the

In S3, the hyperbox can be formed by formula (5).

In S4, there are 2

In S5, suppose HB1 = [0.1 0.2 0.3 0.2 0.4 0.7] and HB2 = [0.15 0.35 0.55 0.3 0.5 0.9], the HB1 has the positive class and HB2 has the negative class, the meet hyperbox is HB = [0.15 0.35 0.55 0.2 0.4 0.7], which is obtained by formula (4) and nonempty, the beginning point of HB1 is

In S7, we computed eight hyperboxes, they are [0.1 0.2 0.3 0.15 0.35 0.55], [0.1 0.2 0.55 0.15 0.35 0.7], [0.1 0.35 0.3 0.15 0.4 0.55], [0.1 0.35 0.55 0.15 0.4 0.7], [0.15 0.2 0.3 0.2 0.35 0.55], [0.15 0.2 0.55 0.2 0.35 0.7], [0.15 0.35 0.3 0.2 0.4 0.55], [0.15 0.35 0.55 0.2 0.4 0.7], where [0.15 0.35 0.55 0.2 0.4 0.7] may be include other class data, and the other seven formed hyperboxes are added to the hyperbox granule set GS.

Finally, the data included into hyperbox [0.15 0.35 0.55 0.2 0.4 0.7] are composed of the updated training set S, and re-perform the procedure from S2.

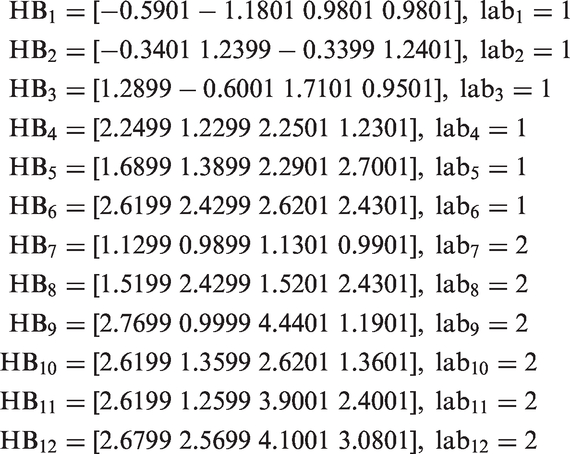

A key issue in the design of GrC is its training. For purposes of explaining the algorithm, a simple example of two-class problem with two attributes is used. Figure 4 shows the example patterns, each input includes two attributes, each output includes 1 or 2.

Training data in two-dimensional space.

Given IHBGrC algorithm Input: the training set S Output: the hyperbox granule set S1. S2. S3. Obtain the hyperbox granule set S4. S5. if S6. Algorithm 2

For the training data shown in Figure 4, if set ξ = 0.0001, 12 hyperboxes and the corresponding class labels are obtained by performing algorithm 2. They are

If class label is 1, all the data with class label 1 are included in one of the hyperbox granule set GS1 shown in Figure 5 with class lab1 by performing S3. If class label is 2, all the data with class label 2 are included in one of the hyperbox granule set GS2 shown in Figure 6 with class labs by performing S3. The final hyperbox granule set GS = GS1 ∪ GS2, and all the training data lie inside one of the hyperbox granule set GS with the corresponding class labels shown in Figure 7.

The firsth class data and the formed hyperboxes. The second class data and the formed hyperboxes. The training data and their formed hyperboxes.

For testing, the input unknown datum

Taking

Similarly,

So the class label of datum

For the achieved granule set, there are two significant properties, (1) there are not intersection between any two hyperbox granules and (2) each training sample is included in the only one hyperbox granule.

Experiments

In this section, validation experimental results are performed for two-class problems and

Classification problems in two-dimensional space

The first data set is the Ripley’s synthetic data set that consists of two classes in two-dimensional space.

20

The data are divided into a training data set and a test set consisting of 250 and 1000 samples, respectively, with the same number of samples belonging to each of the two classes. The training data set appears in Figure 8.

Ripley’s synthetic training data set.

For IHBGrC, the GS including 24 hyperbox granules with class label 1 and 26 hyperbox granules with class label 2 are obtained and shown in Figure 9 by IHBGrC. The testing data and hyperbox are shown in Figure 10. For Ripley’s data set, the training accuracy by IHBGrC is 100%, and the testing accuracy by IHBGrC is 88.6% when the parameter epsilon is set to 0.00001, testing accuracy by SLMP-R is 81.2%, and testing accuracy by SLMP-P is 87.8%.

21

For Ripley’s data set, IHBGrC testing accuracy is greater than SLMP-R and SLMP-P.

GS by IHBGrC and 250 training data for Ripley. GS by IHBGrC and 1000 testing samples for Ripley.

For SVMs, the best testing accuracy is the index of optimal SVMs, the best testing accuracy is 90.3% when C = 5000, the kernel function is polynomial kernel with order 5. The corresponding training accuracy is 89.6%. The training data and the classification boundary are shown in Figure 11.

250 Training data and the boundary by SVMs for Ripley, the training accuracy is 89.6%.

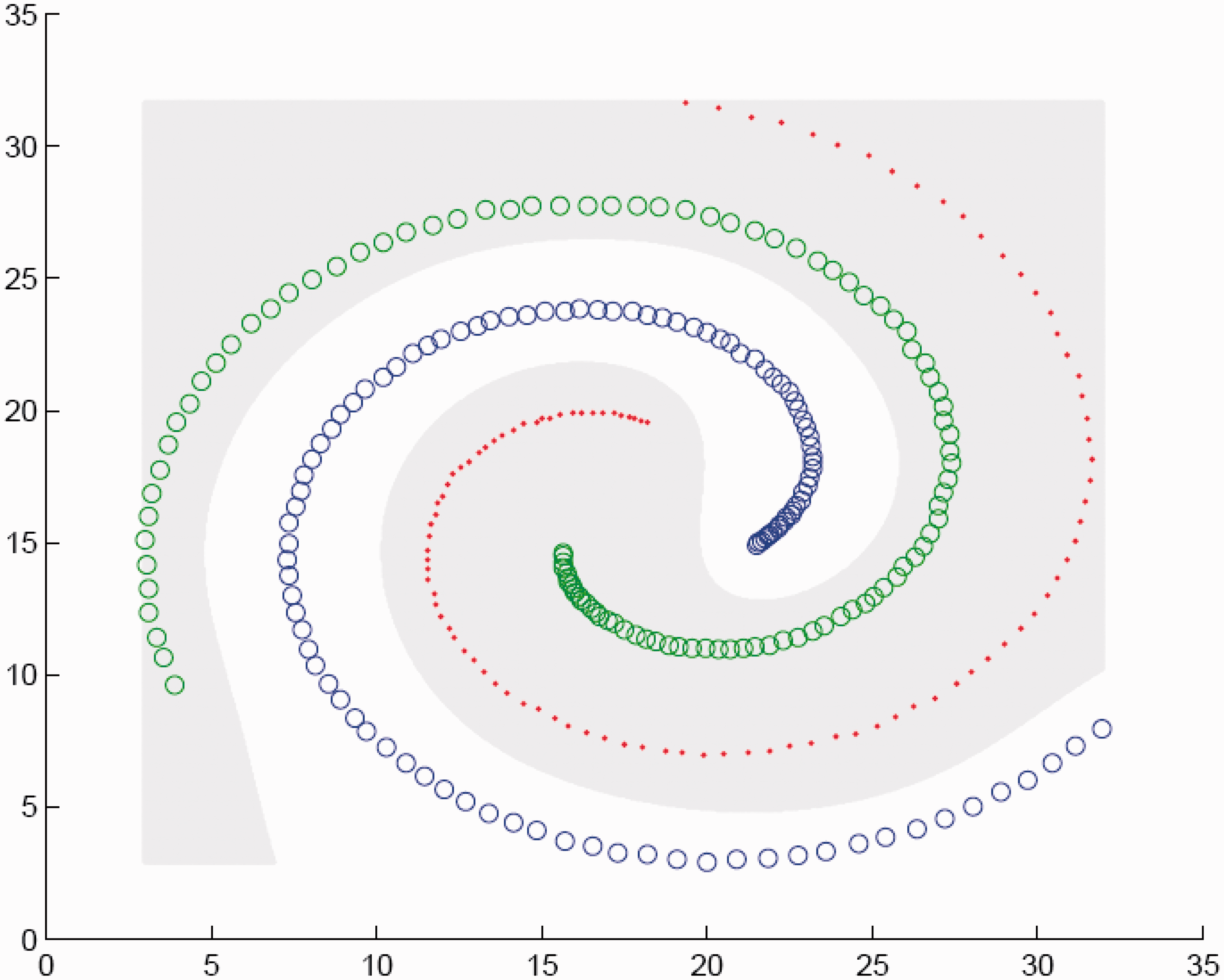

The second data set was the spiral synthetic data set that consists of three classes, which is used to verify the feasibility of IHBGrC for multi-class classification prolems in two-dimensional space.

22

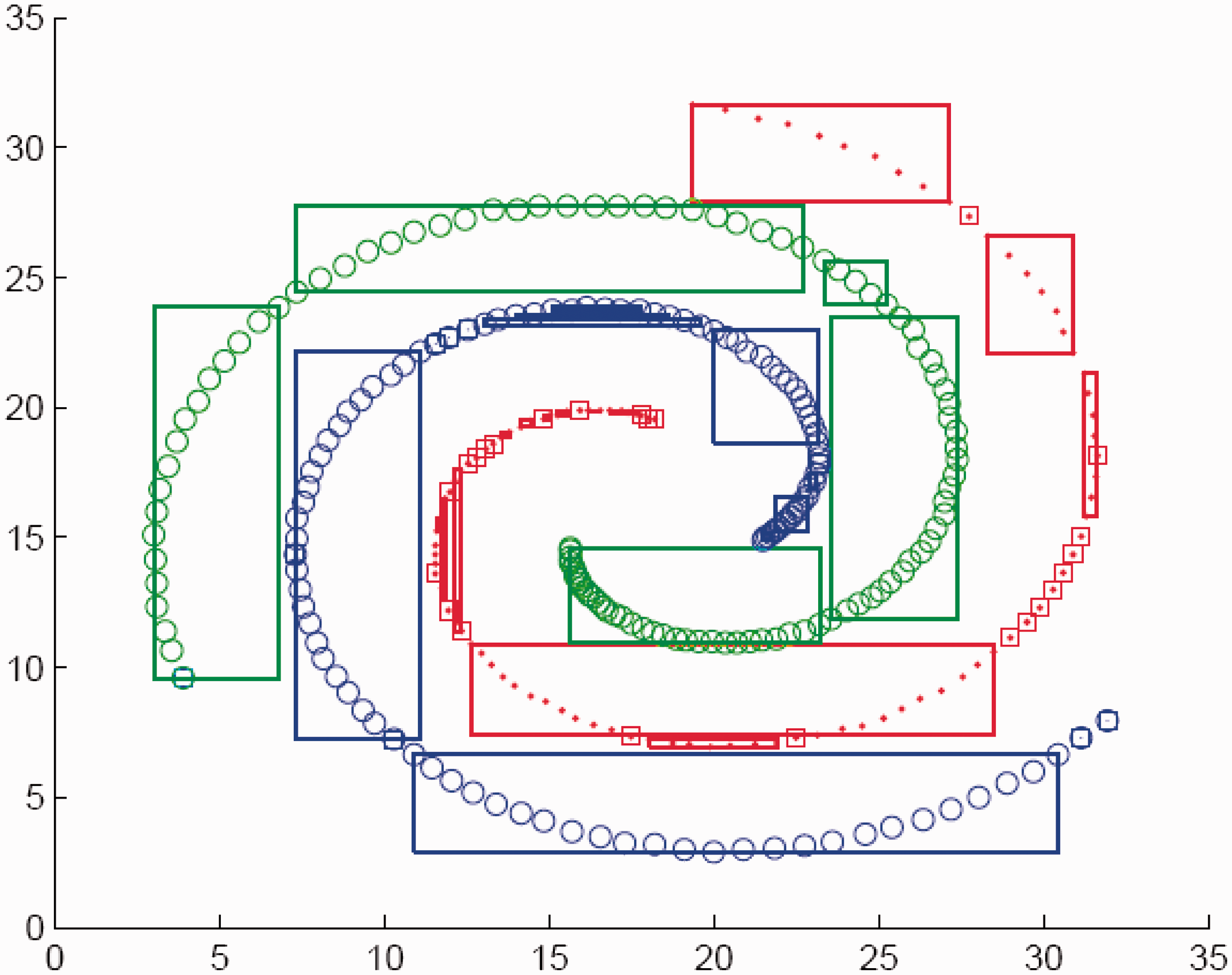

All the training data are shown in Figure 12, and the achieved hyperbox granules are shown in Figure 13. The achieved hyperbox granule set includes 61 hyperbox granules, where 31 hyperbox granules have the class labels 1, which are marked by the red rectangles in Figure 13, 6 hyperbox granules have the class labels 2 and are marked by the green rectangles in Figure 13, and 17 hyperbox granules have the class labels 3 and are marked by the blue rectangles in Figure 13.

Training data of spiral classification problem. The achieved hyperboxes of IHBGrC for the spiral classification problem.

For SVMs, the one-vs-rest strategy is adopted to the multi-class classification problems. The training accuracy is 100% when the radial basis function (RBF) kernel function with the width 5 is selected to perform the SVMs. Figure 14 shows the boundary of 1-vs-rest SVMs, Figure 15 shows the boundary of 2-vs-rest SVMs, and Figure 16 shows the boundary of 3-vs-rest SVMs.

Boundary of 1-vs-rest SVMs for the spiral classification problem. Boundary of 2-vs-rest SVMs for the spiral classification problem. Boundary of 3-vs-rest SVMs for the spiral classification problem.

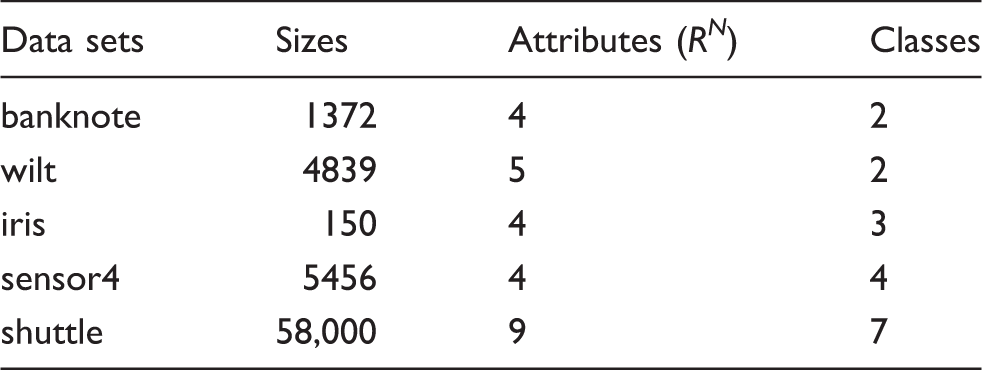

Classification problems in N-dimensional space

Classification problems in space

The data set

The data set

The data set

For data set

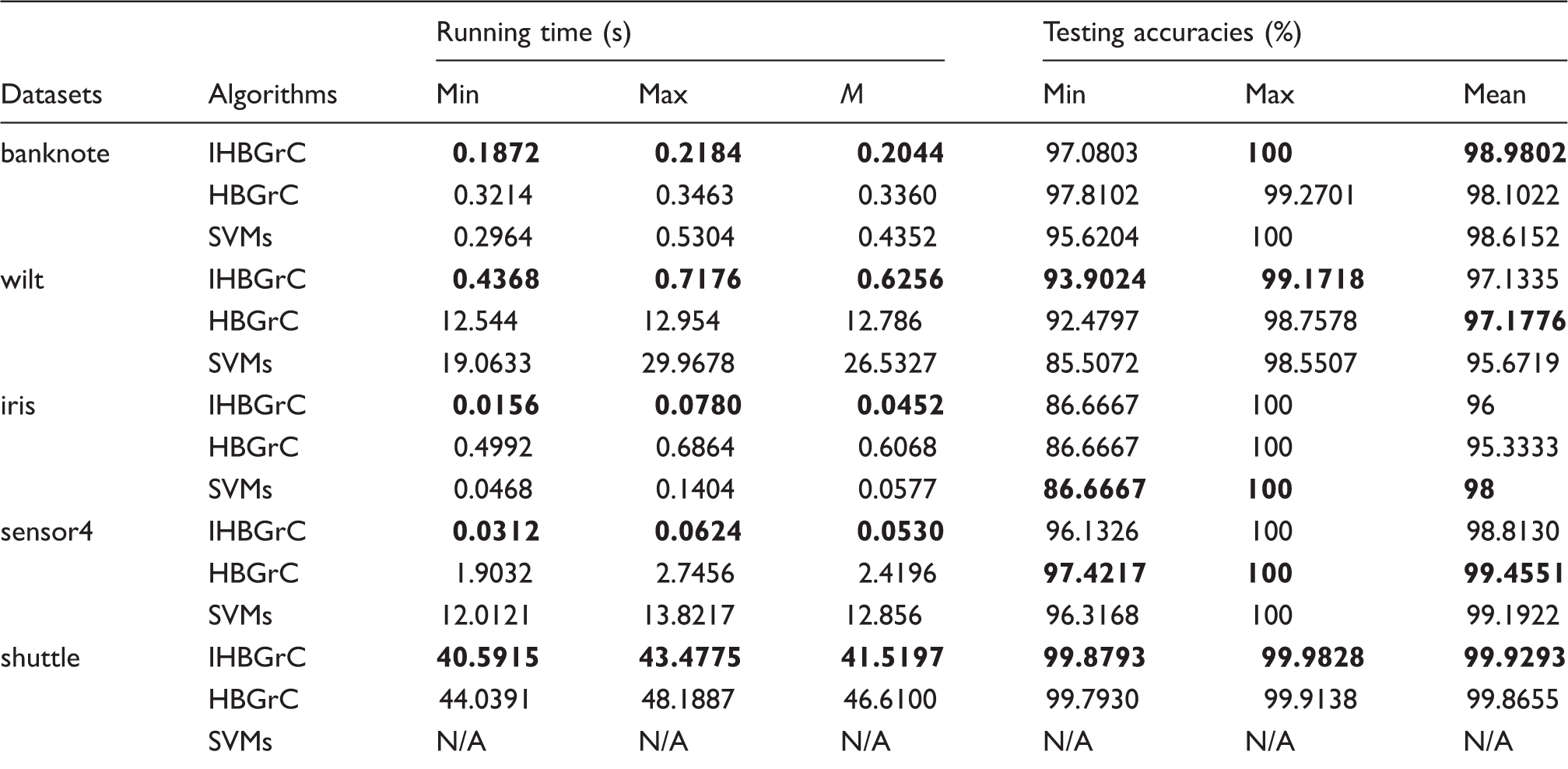

Performance of IHBGrC for classification problems in

The best performances are marked by the boldface ones.

For data set banknote, the minimal testing accuracy, the maximal testing accuracy, and the mean of testing accuracies by IHBGrC are greater than or equal to those of SVMs. For data set wilt, the testing accuracies of IHBGrC are better than those of SVMs, such as the minimal, maximal, and mean testing accuracies. For data set iris, the mean of IHBGrC is less than SVMs.

The experiments reveal that IHBGrC algorithm has the following features:

Convergence in a finite number of steps. Perfect classification of the training data. No overlap between hyperboxes with distinct class labels. Once the value of ξ is set, if there are the same training patterns, the hyperboxes generated are always the same. No dependency of class presentation order. IHBGrC can be applied to classification problems of

IHBGrC is presented by the meet operation between two hyperboxes with different class labels, and achieves the comparable classification accuracies compared with HBGrC, which is bottom-up granular computing classification algorithm designed by the join operation between two hyperboxes with the same class label. Two main drawbacks of the proposed algorithm are (1) the number of output hyperbox granule grows exponentially as the number of describing dimensions increases and (2) testing accuracy for the classification problems with the numerical attributes is better than the testing accuracy for the classification problems with the symbol attributes. For the symbol attributes, we must transform them into numerical attributes to perform IHBGrC, for example, symbol

For the computational complexity, IHBGrC and HBGrC can achieve the hyperbox granules through only one time scan of the training set, so their time complexities are less than SVMs. The aforementioned property (Theorem 1) of the distance between two hyperbox granules is mainly used to judge the inclusion relation between two hyperbox granules and compute the distance between two hyperbox granule, especially compute the distance between an atomic hyperbox granule and a hyperbox granule during the testing process. The non-symmetry of distance formula (3) results in the higher storage space compared with the symmetry distance, for hyperbox granule set including

Conclusions

The top-down hyperbox granular computing classification algorithm based on isolation is proposed in the paper. Firstly, a granule was represented as a hyperbox with the beginning point and the end point. Secondly, the meet operation was used to isolate the

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported in part by the Natural Science Foundation of China (Grant no. 61170202, 61402393) and Natural Science Foundation of Henan (nos. 132300410421, 132300410422).