Abstract

Regularization is a key element of variational models in image processing. To overcome the weakness of models based on total variation, various high order (typically second order) regularization models have been proposed and studied recently. Among these, Euler's elastica energy based regularizer is perhaps the most interesting in terms of both mathematical and physical justifications. More importantly its success has been proven in applications; however it has been a major challenge to develop fast and effective algorithms.

In this paper we propose a new idea for deriving a primal dual algorithm, based on Legendre–Fenchel transformations, for representing the elastica regularizer. Combined with an augmented Lagrangian for-mulation, we are able to derive an equivalent unconstrained optimization that has fewer variables to work with than previous works based on splitting methods. We shall present our algorithms for both the image restoration problem and the image segmentation model. The idea applies to other models where the elastica regularizer is required. Numerical experiments show that the proposed method can produce highly competitive results with better efficiency.

Keywords

Introduction

We are primarily concerned with the challenging problem of developing effective algorithms for the Euler elastica regularizer. The latter is often used in imaging applications including image restoration, which is briefly reviewed below to motivate the new work.

Image restoration is about acquiring and recovering unknown u = u(x) (without any restrictions) from an observed image by z = z(x), x ∈ Ω ⊂ ℝ

d

where Ω is the bounded domain and has a Lipschitz boundary. Here we consider planar images, i.e. d = 2 and x = (x1, x2). All results and discussions will be applicable to d > 2 and other models. An additive image restoration model assumes

The classical regularization technique by Tikhonov et. al 8 is to add a smoothing regularization term into the energy functional to derive the following minimization problem. The resulting well-posed model admits an unique solution. This classical model cannot preserve image edges, though it is simple to use. Based on the total variation (TV) regularizer, the Rudin-Osher-Fatemi (ROF) 4 model preserves the image edges by seeking solutions of piecewise constant functions in the space of bounded variation functions (BV) and, hence, is widely used. A variety of methods based on TV regularization have been developed to deal with imaging problems such as image restoration,9–12 image registration,13–15 image decomposition,3,16,17 image inpainting18–21 and image segmentation.22, 23

One disadvantage of the ROF model is that it yields so-called blocky (staircase) effects, in restoring smooth images in applications where edges are not the main features.24–27 Another disadvantage of the model is the loss of image contrast. 28 It should be remarked that the recently popular method by the iterative regularization technique 29 can reduce the staircasing effect and improve on the image contrast to some extent; it also provides fast implementation.

The elastica was discovered by Euler in 1744.30 Euler’s elastica energy function defined by

The rest of this paper is organized as follows. In the next section, we briefly review two recent models usingl Euler’s elastica for image processing and their associated numerical algorithms. In the section “The proposed algorithm for Eulers elastica regularization” we present our new algorithm for the models reviewed in the previous section. These are: image restoration and segmentation, respectively. The next section provides some numerical results to illustrate the effectiveness of our new algorithm. The final section draws some conclusions.

Previous works based on Euler’s elastica

As a regularizer, Euler’s elastica can be found in and potentially applied to many fields of imaging models. Below we review two models that interest us most.

Euler’s elastica denoising model33

Shen et al.

32

proposed to interpolate a gray-valued image by extending its lines of constant intensity (isophotes) in the inpainting domain. Their model for image denoising takes the form

Instead of solving it directly, Tai et al.

33

developed an augmented Lagrangian method that avoids the complicated higher-order derivatives. First rewrite (3) as

A Euler’s elastica-based segmentation model

The widely used Chan-Vese segmentation model

35

minimizes the length (via TV) of detected objects. Minimizing Euler’s elastica

34

instead of TV leads to a new segmentation model capable of integrating missing or broken parts to form complete meaningful objects and capturing objects with tiny but elongated structures. Their Euler’s elastica-based segmentation model takes the following form

This regularization was originally proposed and used in the famous work of segmentation with depth by Nitzberg et al. 36

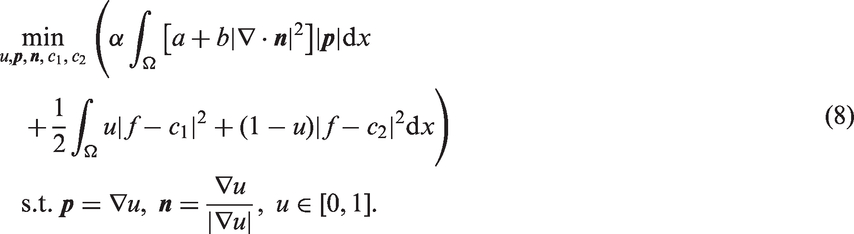

Letting u = H(φ) ∈{0, 1} and relaxing it to u ∈ [0, 1], the model (6) can be written as

The proposed algorithm for Euler’s elastica regularization

We shall take a different approach to develop algorithms for the above models. Our idea is to reformulate Euler’s elastica regularization term TeeV(u) first. This is achieved by a transformation. Let Definition 1 (Legendre–Fenchel transformation)

The Legendre–Fenchel transformation

In this work, we shall use the Legendre–Fenchel transformation to study TeeV(u) from (2).

Based the Legendre–Fenchel transformation of

Take

For any vector

The formula for the normal vector

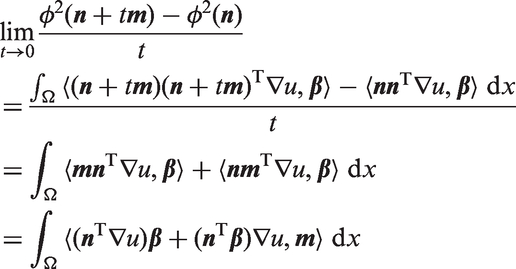

Lemma 1

Proof

Lemma 2

Lemma 3

Finally, the Euler elastica functional TeeV(u) can be split into the following scheme

in which all terms are differentiable.

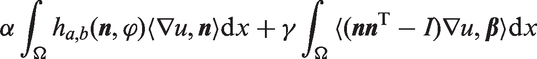

A new algorithm for image denoising

Now we are ready to present our augmented Lagrangian-based primal dual approach based on the Legendre–Fenchel transformation, which serves as an alternative algorithm to (5) for the elastica based model (3).

The approach considered here differs from existing augmented Lagrangian approaches for the solution of the same problem; indeed, the augmented Lagrangian functional we use here contains only one Lagrange multiplier (instead of three), and three associated augmentation (dual) terms (instead of four).

Following equation (13), the denoising problem (3) takes the equivalent form

Our goal is to develop a fast algorithm to seek the saddle-point of the energy functional

The corresponding subproblems are given by

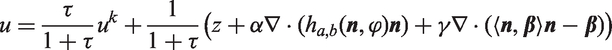

The remainder of this subsection describes in detail each step of the process. In u-subproblem, the energy functional

We first consider the first-order variation of the functional

Assume that

Firstly, let A be u-subproblem

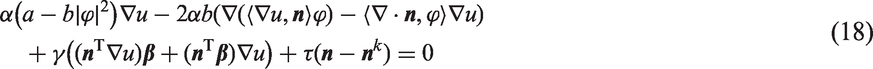

n-subproblem

Lemma 4

Proof

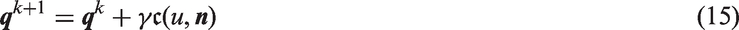

We address the optimization problem in (16) using an alternating iterative scheme with respect to u, ϕ, Step 1. Input an observed image z. Set the initial multiplier

Step 2: for k ≥ 0

Step 3. Stop if a given criterion is valid, otherwise go to Step 2.

Algoritham 1 (Augmented Lagrangian Primal-Dual method (ALPD)-elastica denoising)

Image segmentation

Euler’s elastica as a new regularization of segmentation contour was originally proposed and used in the famous in-depth work by Nitzberg et al 36 Recently, Zhu et al. 34 have developed a numerical method to minimize Euler’s elastica dependent functionals by using the augmented Lagrangian method. In their work, they also considered Chan-Vese’s model with the substitution of Euler’s elastica for the length term. As discussed in the introduction, the purpose of our present work is to present some new properties and implementation of this modified Chan-Vese’s model through numerical study with the aid of the Legendre–Fenchel transformation. 34

We know that the almost theoretical results for the segmentation case can be obtained in the same way as the denoising case of (16). Further, Euler’s elastica-dependent segmentation problem (8) can be rewritten as the following constraint optimization minimization in the equivalent form

Step 1. Given an initial image f, the initial multiplier

Step 2. for k ≥ 0

Step 3. Stop if a given criterion is valid, otherwise go to Step 2.

Algorithm 2 (ALPD-elastica segmentation)

Numerical results

In this section, we present some numerical results from applying our proposed algorithms. The experimental results include two parts: surface denoising and image segmentation. Let us first introduce the discrete setting which we will use in the rest of this section.

Discrete setting

We consider a regular Cartesian grid of size

Image denoising

We now use numerical simulations to illustrate the effectiveness of our denoising Algorithm 1 just developed. The numerical techniques are based on augmented Lagrangian and Legendre–Fenchel transformation. We follow the previous subsection and discretize the gradient and diffusion operators.

In order to demonstrate the functionality of the variant of our proposing algorithm, we applied it against a large variety of test problems. These problems include synthetic and natural images. For all these test problems (here all images are grayscale, and the original images are scaled to the range [0, 1]), the values of the noise function are uniformly distributed with mean variation σ > 0. The main compared approach is naturally the Tai-Hahn-Chung ( The denoising results for a synthetic image and a real image. The true and noisy images for three examples are listed in the first row; denoised and residual images by the

Performance comparison of different examples and different image sizes by using our algorithm and

THC: Tai-Hahn-Chung; ALPD: Augmented Lagrangian Primal-Dual method.

In Figure 2, plots of objective function versus iteration numbers (Iters), CPU times versus image sizes and noise variations versus psnr are shown for the “Smooth” example with the size 256 × 256 in Table 1. From the third plot (see Figure 2(c)), we can see that both algorithms produce the restored results of good quality with similar psnr, but the objective function in (3) of the proposed algorithm reduce faster and more stably compared to the The first plot (a) lists the plots of objective function values versus iterations for the example “Smooth” using our algorithm and the

It is well known that noise exists in computed tomography (CT) images. Figure 3 shows the results obtained while applying the implementation against CT images with noise. We conduct two experiments of Euler’s elastica model on real liver CT data. Three slices of liver and bone CT images are selected from two data sets, which are displayed at the top of Figure 3. The restored images are shown at the bottom of Figure 3. As illustrated by these two experiments, our proposed scheme can successfully remove the noise in CT images.

The performance of our method removing the noise contained in CT medical images. (a) CT with noise. (b) Bone I with noise. (c) Bone II with noise. (d) CT denoising. (e) Bone I denoising. (f) Bone II denoising.

Image segmentation

In this subsection, we describe the application of the proposed algorithm solving Euler’s elastica regularized Mumford–Shah model with two-phase clustering on real camera and medical images.

The first experiment consists of a two-phase segmentation in a natural image shown in Figure 4(a). The objective is to distinguish the cameraman and the background. Figure 4(b) and 4(d) show the segmentation contour and the sampled regions by our proposed algorithm. Figure 4(c) and 4(e) show the segmentation contour and the sampled regions by Zhu-Tai-Chan ( Iterations (Iters) and CPU times (CPU(s)) of different examples and different image sizes by using our Algorithm 2 and the ZTC: Zhu-Tai-Chan.. Euler’s elastica-based image segmentation: bone micro CT image. (a) Original image. (b) The contour {x : u(x) = 0.5} by our algorithm. (c) The contour {x : u(x) = 0.5} by

In some cases, one might want to do simulations on a sample containing trabecular bone. However, it is notable that some of the connectivity in the trabeculae bone is lost, which is quite important for some applications and affects the mechanical stiffness of this part. The problem is that preserving the small feature of connected complete trabeculae bone (the small feature being the thin trabeculae) is not possible when using a popular CV model based on total variation regularization. To illustrate the advantage of Euler’s elastica in integrating missing or broken parts, 34 we apply our algorithm to an image with incomplete trabeculae, as shown in Figure 6(a). Our method gives more satisfactory results in Figure 6 since our method works well at connecting the missing information and preserving more details in the bone image. It clearly shows that the proposed method is suitable for segmentation of trabeculae bone.

Conclusions

A novel implementation formula solving Euler elastica regularizer for image denoising and segmentation is proposed in this paper. There are two novelties. One is that a new constraint

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The research of the authors was supported by the UK EPSRC grant (EP/K036939/1) and the National Natural Science Foundation of China (NSFC project 11301447).