Abstract

Multi-objective optimization and gray association are classical techniques in intelligent systems. In this paper, a novel multi-focus image fusion method based on these techniques is proposed. First, the input images are decomposed into a low-frequency subband and high-frequency subbands using the shift-invariant shearlet transform (SIST). Second, the low-frequency subbands are fused by a weighted average fusion rule, in which the weight is obtained adaptively by vector-evaluated quantum-behaved particle swarm optimization and gray relation analysis. Third, a fusion rule based on the similarity of corresponding regions is proposed and used to integrate the high-frequency subbands. Finally, the fused image is obtained by the inverse SIST. Visual and statistical analyses demonstrate that the proposed method significantly improves fusion quality.

Introduction

Multi-sensor image fusion has become an active topic in information fusion in the past few years. The contents of multiple images tend to be complementary. Image fusion is an effective technique for combining complementary information from multiple images into a single fused image, which is very useful for human visual perception or machine perception. 1 For example, optical imaging cameras cannot capture objects at various distances all in focus. To resolve this problem, image fusion can combine several images with different sharp parts into one image in which all the objects are in focus. The basic idea of multi-focus image fusion is to construct the fused image either by choosing the clearer pixels (or blocks/regions) from the source images or by adaptively averaging the observed images according to their respective sharpness.

Basically, there are two main classes of methods for multi-focus image fusion. One is the spatial domain-based method, which selects pixels or regions from the clear parts in the spatial domain to compose the fused image. 2 The simplest fusion method in the spatial domain is to take the average of the source images pixel by pixel. 3 Its main drawback is that all information within the images is treated in the same way. To overcome this drawback, a normalized weighted aggregation approach has been proposed for image fusion. 4 However, pixel-based fusion methods come with several undesired side effects, including reduced contrast and sensitivity to noise or mis-registration. To improve the quality of the fused image, some researchers proposed fusing images based on blocks or segmented regions instead of single pixels.5–7 The key point of this kind of method is how to design reasonable focus measures for choosing the clearer parts of the source images to construct the fused image. 8 Li et al. 5 used spatial frequency (SF) to measure the clarity of image blocks. Miao and Wang 9 and Eltoukhy and Kavusi 10 used the energy of image gradients to measure the activity. In addition, variance, energy of image gradient (EOG), Tenenbaum’s algorithm and energy of Laplacian (EOL) 8 of the image are often used. However, the above-mentioned methods are prone to introducing blocking effects, and the boundaries of focused regions cannot be accurately discriminated.
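To make the notion of a focus measure concrete, the following is a minimal sketch of two of the measures named above, spatial frequency (SF) and energy of image gradient (EOG), in their commonly stated forms. The function names and the use of plain nested lists for image blocks are illustrative assumptions, not part of the cited methods.

```python
import math

def spatial_frequency(img):
    """Spatial frequency SF = sqrt(RF^2 + CF^2) of a 2-D grayscale block.

    img is a list of equal-length rows (an illustrative representation).
    """
    rows, cols = len(img), len(img[0])
    # Row frequency term: squared differences between horizontal neighbours.
    rf = sum((img[i][j] - img[i][j - 1]) ** 2
             for i in range(rows) for j in range(1, cols))
    # Column frequency term: squared differences between vertical neighbours.
    cf = sum((img[i][j] - img[i - 1][j]) ** 2
             for i in range(1, rows) for j in range(cols))
    return math.sqrt((rf + cf) / (rows * cols))

def energy_of_gradient(img):
    """EOG: sum of squared forward differences in both directions."""
    rows, cols = len(img), len(img[0])
    return sum((img[i + 1][j] - img[i][j]) ** 2
               + (img[i][j + 1] - img[i][j]) ** 2
               for i in range(rows - 1) for j in range(cols - 1))

# A high-contrast block and a low-contrast block with the same pattern.
sharp = [[0, 255, 0, 255], [255, 0, 255, 0],
         [0, 255, 0, 255], [255, 0, 255, 0]]
blurred = [[120, 130, 120, 130], [130, 120, 130, 120],
           [120, 130, 120, 130], [130, 120, 130, 120]]

# A block-based rule would keep the block with the larger focus measure.
sharper_is_first = spatial_frequency(sharp) > spatial_frequency(blurred)
```

Both measures grow with local intensity variation, which is why a block-wise comparison favours the in-focus source, and also why hard block decisions can leave visible block boundaries.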

The other is the transform domain-based fusion method, which fuses images using a certain frequency or time-frequency transform. 2 In this kind of method, the source images are projected onto localized bases and transformed coefficients are obtained. 3 The salient features provided by the transformed coefficients are used to construct the fused image. The multi-scale transform (MST) is often used in image fusion; it can efficiently avoid blocking effects because the fusion is performed in the frequency domain. Commonly used MST tools include the Laplacian pyramid, 11 wavelet,12,13 Curvelet, 14 Contourlet, 15 nonsubsampled Contourlet transform (NSCT) 2 and Shearlet.16,17 One of the best-known MST methods for image fusion is the wavelet transform. Wavelet-based statistical features can be used as a sharpness measure. 18 The wavelet transform is good at isolating discontinuities at object edges, but cannot effectively represent ‘line’ and ‘curve’ discontinuities. In addition, it decomposes an image into only three directional subbands and thus cannot represent the directions of edges accurately. Wavelet-based fusion methods therefore cannot preserve the salient features of the source images very well and may introduce artifacts into the fused image. The curvelet transform, however, is not built directly in the discrete domain and does not provide a multi-resolution representation of the geometry. As for the contourlet transform, shift-invariance is lost, while the NSCT has a high time cost. The shift-invariant shearlet transform (SIST) is one of the state-of-the-art MST tools, 17 which has a rich mathematical structure similar to that of wavelets and provides a true 2-dimensional (2D) sparse representation for images with edges. It is more efficient for mathematical analysis and has been applied in image fusion.

Besides the choice of MST tool, the other important issue is the design of the fusion rule. Considering the characteristics of human visual perception and the relationship among pixels, novel fusion rules combining an intelligent algorithm and the similarity of regions are developed in this paper. First, the source images are decomposed by using SIST. Second, fusion rules are designed separately for the low-frequency subband and the high-frequency subbands. The fusion rule for the low-frequency subbands is based on multi-objective optimization and gray association. The fusion rule for the high-frequency subbands is based on the similarity of corresponding regions: if the similarity of the corresponding regions is greater than a threshold value, a window-based fusion rule is employed; otherwise, a region-based fusion rule is applied. Finally, the fused image is obtained by taking the inverse SIST. The proposed method takes the salient features of the image into account and exploits the characteristics of regions to design the fusion strategy. According to the purpose of fusion, the fusion evaluation metrics are selected as the objective functions of vector-evaluated quantum-behaved particle swarm optimization (VEQPSO) to realize the fusion of the low-frequency subbands. Experimental results show that the approach is capable of producing fused images of higher quality.

The remaining sections of this paper are organized as follows. The section ‘Partition the source images’ presents how the image regions are obtained. The next section describes the proposed image fusion method. Experimental results and discussion are given in the following section. Conclusions are given in the final section.

Partition the source images

The source images are partitioned into regions because regions, rather than arbitrary pixels, are more meaningful in image fusion. To obtain the region map of the source images, their average image is calculated.7,19 It is divided into

Texture features

Four of

The other three features are computed similarly.

2D entropy principal component

In this paper, the 2D entropy principal component analysis (2DECA) method is applied to extract the features of a window. Instead of selecting features based on the cumulative variance contribution, the features are extracted from the 2DECA subspace based on their Renyi entropy contribution.21,22 2DECA makes full use of the 2D information of a window and avoids reshaping the window matrix into a vector. The principal axes that contribute most to the Renyi entropy estimate carry most of the information of the input data set. The 2DECA algorithm is given as follows.

(1) Input the dataset

(2) Calculate the average image of all training samples:

(3) Calculate the covariance matrix:

(4) Calculate the eigenvalues and eigenvectors of C, which are denoted as

(5) Calculate the estimate value of entropy:

(6) The window sample Projection:

(7) Output:

v1 is the first entropy principal component; the size of v1 is

Image segmentation by FCM

Image segmentation is an essential step in the proposed method. Fuzzy clustering techniques have been used effectively in image processing, pattern recognition and fuzzy modeling. Fuzzy c-means (FCM) uses an iterative algorithm to optimize its objective function. 23
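As an illustration of the iterative optimization, the following is a minimal sketch of the textbook fuzzy c-means updates (membership-weighted cluster centers, followed by distance-based membership updates). It is not the exact procedure used in this paper; the function name `fcm`, the fuzzifier `m = 2`, the stopping tolerance and the toy feature vectors are all assumptions made for the example.

```python
import random

def fcm(points, n_clusters, m=2.0, n_iter=100, tol=1e-6, seed=0):
    """Textbook fuzzy c-means on a list of equal-length feature vectors.

    Returns (centers, u) where u[k][i] is the membership of point k
    in cluster i and every row of u sums to 1.
    """
    rng = random.Random(seed)
    n, dim = len(points), len(points[0])
    # Random initial memberships, normalised per point.
    u = []
    for _ in range(n):
        row = [rng.random() + 1e-9 for _ in range(n_clusters)]
        total = sum(row)
        u.append([v / total for v in row])
    centers = []
    for _ in range(n_iter):
        # Center update: mean of the points weighted by u^m.
        centers = []
        for i in range(n_clusters):
            w = [u[k][i] ** m for k in range(n)]
            w_sum = sum(w)
            centers.append([sum(w[k] * points[k][d] for k in range(n)) / w_sum
                            for d in range(dim)])
        # Membership update from Euclidean distances to the centers.
        max_change = 0.0
        for k, x in enumerate(points):
            dist = [max(sum((x[d] - c[d]) ** 2 for d in range(dim)) ** 0.5, 1e-12)
                    for c in centers]
            new_row = [1.0 / sum((dist[i] / dist[j]) ** (2.0 / (m - 1.0))
                                 for j in range(n_clusters))
                       for i in range(n_clusters)]
            max_change = max(max_change,
                             max(abs(a - b) for a, b in zip(new_row, u[k])))
            u[k] = new_row
        if max_change < tol:
            break
    return centers, u

# Toy window features forming two well-separated groups.
features = [[0.0, 0.0], [0.1, 0.0], [0.0, 0.1],
            [5.0, 5.0], [5.1, 5.0], [5.0, 5.1]]
centers, memberships = fcm(features, n_clusters=2)
# Hard labels: the cluster with the largest membership for each window.
labels = [row.index(max(row)) for row in memberships]
```

Taking the largest membership per window gives a hard region label, which is one simple way to turn the fuzzy partition into a region map.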

The clusters can be obtained by FCM as follows:

If

The proposed fusion algorithm

To improve the quality of the fused image, multi-objective optimization and gray association are used to fuse the low-frequency subband coefficients, and region similarity is employed to guide the fusion of the high-frequency subband coefficients.

The fusion rule of low-frequency subband coefficients

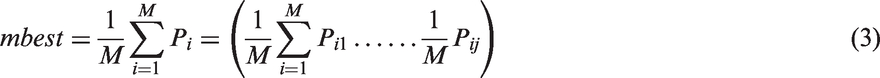

The coefficients in the coarsest-scale subband represent the approximation component of the source image. The weighted average fusion rule is employed to fuse the low-frequency subband coefficients
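A minimal sketch of a weighted average rule is given here for orientation. It assumes a single scalar weight `w` in [0, 1] with the complementary weight `1 - w` on the second image; this is an assumption for illustration and may differ from the exact weighting used in this paper, where the weight is supplied by VEQPSO and gray relation analysis. The function name is hypothetical.

```python
def fuse_low_frequency(low_a, low_b, w):
    """Weighted average of two low-frequency subbands: F = w*A + (1 - w)*B.

    low_a and low_b are 2-D coefficient arrays of the same shape, given as
    lists of rows. w is assumed to be a scalar weight in [0, 1].
    """
    if not 0.0 <= w <= 1.0:
        raise ValueError("w must lie in [0, 1]")
    return [[w * a + (1.0 - w) * b for a, b in zip(row_a, row_b)]
            for row_a, row_b in zip(low_a, low_b)]

fused = fuse_low_frequency([[10, 20]], [[30, 40]], w=0.25)
```

With `w = 0.5` the rule reduces to plain averaging, so the optimizer only has to search a one-dimensional weight space under this assumption.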

In VEQPSO,

Since QPSO is the basis of VEQPSO, it is introduced first.

Quantum-behaved particle swarm optimization (QPSO) is an effective evolutionary search technique. The movement of the particles is described as follows
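For orientation, the following is a minimal sketch of the QPSO position update as it is commonly stated in the literature: each coordinate is resampled around a local attractor between the personal best and the global best, with a step proportional to the distance from the mean best position (mbest). The fixed contraction-expansion coefficient `beta`, the clamping to bounds, the single-objective minimization setting and all names are assumptions of the sketch and may differ from the formulation used in this paper.

```python
import math
import random

def qpso_minimize(f, dim, bounds, n_particles=20, n_iter=200, beta=0.75, seed=0):
    """Commonly stated single-objective QPSO, minimising f over a box."""
    rng = random.Random(seed)
    lo, hi = bounds
    x = [[rng.uniform(lo, hi) for _ in range(dim)] for _ in range(n_particles)]
    pbest = [p[:] for p in x]
    pbest_val = [f(p) for p in x]
    g = min(range(n_particles), key=lambda i: pbest_val[i])
    gbest, gbest_val = pbest[g][:], pbest_val[g]
    for _ in range(n_iter):
        # mbest: mean of all personal best positions.
        mbest = [sum(p[d] for p in pbest) / n_particles for d in range(dim)]
        for i in range(n_particles):
            for d in range(dim):
                phi = rng.random()
                u = 1.0 - rng.random()  # in (0, 1], so log(1/u) is finite
                # Local attractor between personal best and global best.
                attractor = phi * pbest[i][d] + (1.0 - phi) * gbest[d]
                step = beta * abs(mbest[d] - x[i][d]) * math.log(1.0 / u)
                if rng.random() < 0.5:
                    x[i][d] = attractor + step
                else:
                    x[i][d] = attractor - step
                x[i][d] = min(max(x[i][d], lo), hi)  # keep inside the box
            val = f(x[i])
            if val < pbest_val[i]:
                pbest[i], pbest_val[i] = x[i][:], val
                if val < gbest_val:
                    gbest, gbest_val = x[i][:], val
    return gbest, gbest_val

# Usage on a simple test function (the sphere function).
best, best_val = qpso_minimize(lambda v: sum(t * t for t in v),
                               dim=2, bounds=(-5.0, 5.0))
```

Unlike standard PSO, there is no velocity vector. The only tuning parameter in this form is `beta`, which is one reason QPSO is attractive as the base of a multi-swarm scheme.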

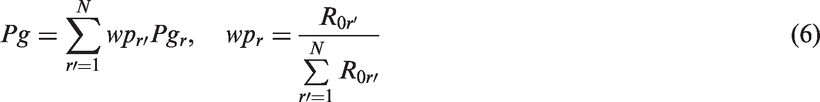

Next, the VEQPSO based on gray relation analysis is given as follows.

(1) Initialize swarms. Suppose the number of objectives is N, then randomly generate N swarms

(2) Calculate the global best particle

(3) After getting

(4) Calculate the mbest of each particle swarm. Then, PGij can be obtained.

(5) Use the global best particle (

(6) Steps 2–5 are repeated until the maximum number of iterations is reached.

(7) Finally, a group of Pareto optimal solutions selected from each swarm is output as the optimization result.
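The way gray relation analysis enters the steps above is not fully recoverable from the text, so the following is only a generic sketch of the gray relational grade in its usual form, with distinguishing coefficient `rho = 0.5`. It assumes that every objective is to be maximized, that each objective is min-max normalized across the candidates, and that the reference sequence is the ideal value 1 for each objective. The function name and the toy candidates are hypothetical.

```python
def gray_relational_grades(candidates, rho=0.5):
    """Gray relational grade of each candidate against the ideal reference.

    candidates[i][k] is the value of objective k for candidate i
    (larger is assumed to be better). Returns one grade per candidate.
    """
    n_obj = len(candidates[0])
    cols = list(zip(*candidates))
    # Min-max normalise every objective to [0, 1].
    norm = []
    for row in candidates:
        norm.append([(row[k] - min(cols[k])) / (max(cols[k]) - min(cols[k]))
                     if max(cols[k]) > min(cols[k]) else 1.0
                     for k in range(n_obj)])
    # Absolute deviation from the reference sequence (all ones).
    deltas = [[abs(1.0 - v) for v in row] for row in norm]
    d_min = min(min(row) for row in deltas)
    d_max = max(max(row) for row in deltas)
    if d_max == 0.0:
        return [1.0] * len(candidates)
    # Grade = mean gray relational coefficient over the objectives.
    return [sum((d_min + rho * d_max) / (d + rho * d_max) for d in row) / n_obj
            for row in deltas]

# Three candidate solutions evaluated on two objectives.
grades = gray_relational_grades([[1.0, 1.0], [0.0, 0.0], [0.5, 0.5]])
best_index = grades.index(max(grades))
```

A grade close to 1 means the candidate is close to the ideal on all objectives at once, which gives a scalar criterion for choosing one compromise solution among several non-dominated ones.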

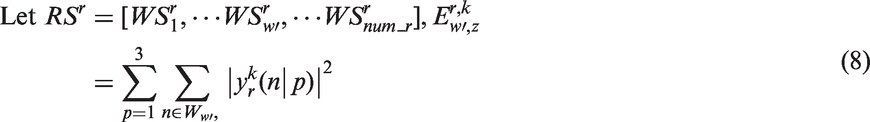

The fusion rule of high-frequency subband coefficients

Suppose that the feature matrix of the rth region can be denoted as

The similarity of the corresponding windows in the corresponding regions is given as follows: 26

The fusion rule of high-frequency subband coefficients can be given by

Else

The procedure of the proposed fusion method

The scheme of the proposed method is depicted in Figure 1, and the fusion process is given in detail below.

(1) Decompose the source images A and B by using SIST.

(2) The weighted average fusion rule is employed to fuse the low-frequency subbands. The weight is obtained adaptively by VEQPSO and gray relation analysis.

(3) The average image of A and B is partitioned into windows, and feature vectors are extracted from each window.

(4) The FCM algorithm is used to cluster the feature vectors. The segmentation results are mapped onto A and B.

(5) The fusion rule of the high-frequency subbands is designed based on the similarity of corresponding regions.

(6) The fused image F is obtained by the inverse SIST.

Figure 1. The scheme of the proposed method.

Experimental results and analysis

In this section, the ‘goldhill’ and ‘book’ images are employed to demonstrate the effectiveness of the proposed method. The source images are obtained from the website http://www.imagefusion.org/. The size of images are

Figure 2. The fusion results of goldhill images. (a) Source image A; (b) source image B; (c) the average weighted fusion method; (d) PCA-based method; (e) DWT-based method; (f) morphological wavelets-based method; (g) the normalized weighted aggregation-based method; (h) the proposed fusion method with 2DPCA; (i) the proposed method.

Figure 3. The fusion results of book images. (a) Source image A; (b) source image B; (c) the average weighted fusion method; (d) PCA-based method; (e) DWT-based method; (f) morphological wavelets-based method; (g) the normalized weighted aggregation-based method; (h) the proposed fusion method with 2DPCA; (i) the proposed method.

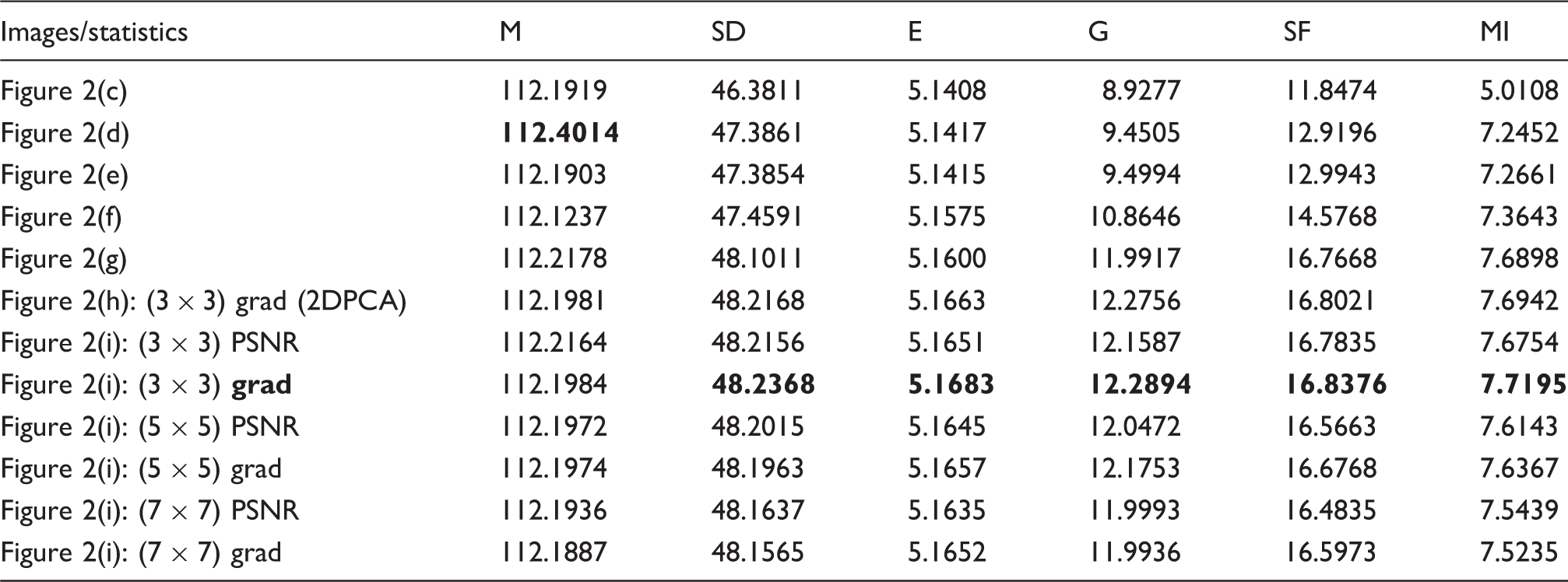

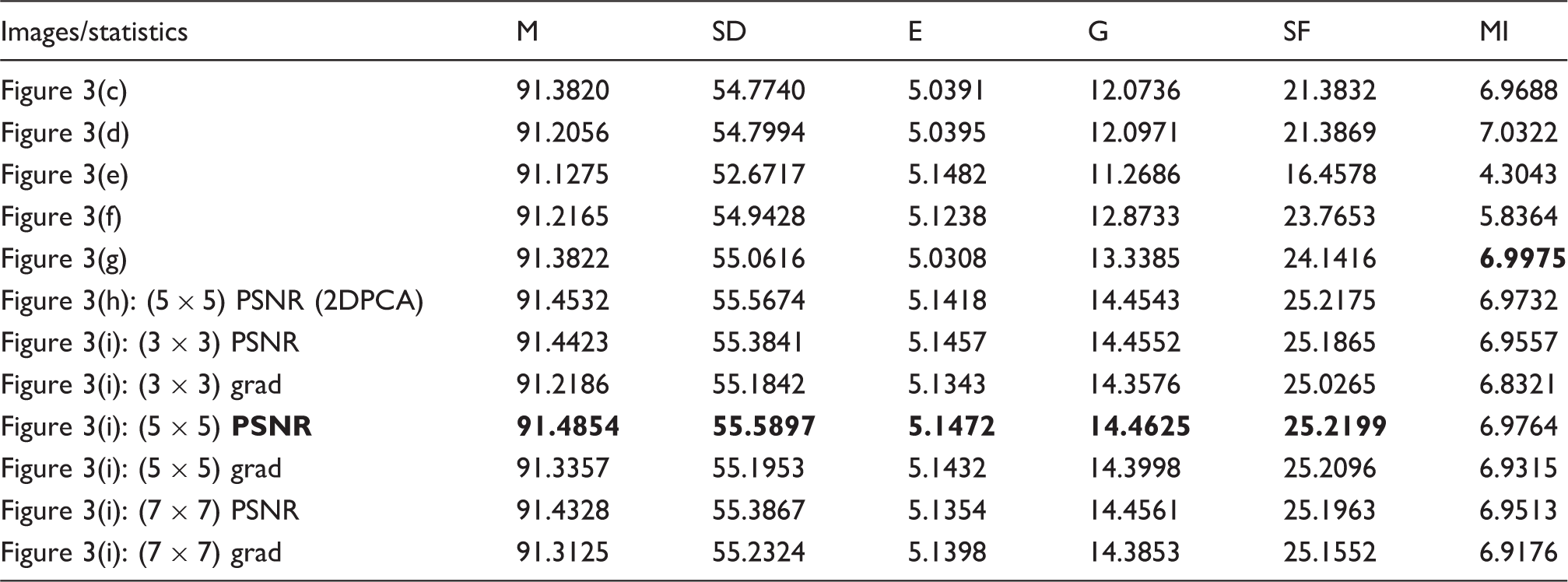

The proposed fusion algorithm is compared with other typical fusion methods, including the average weighted-based method, PCA-based method, 27 DWT-based method, 28 morphological wavelets-based method (Ishita De’s method 29 ), the normalized weighted aggregation-based method (Tian’s method 18 ) and the proposed fusion method using 2DPCA. Several objective evaluation indices are employed to evaluate the quality of the fused image: mean (M), standard deviation (SD), entropy (E), average gradient (G), spatial frequency (SF) and mutual information (MI). 19 Higher values of these indices indicate better fusion quality.
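As a rough guide to what these indices measure, the following sketch computes the mean, standard deviation, entropy and average gradient of a grayscale image in commonly used forms. The exact definitions behind the reported values may differ (the average gradient in particular has several variants), and the function names and the nested-list image representation are assumptions of the example.

```python
import math
from collections import Counter

def mean_and_sd(img):
    """Mean and (population) standard deviation of the gray levels."""
    pixels = [p for row in img for p in row]
    mu = sum(pixels) / len(pixels)
    var = sum((p - mu) ** 2 for p in pixels) / len(pixels)
    return mu, math.sqrt(var)

def entropy(img):
    """Shannon entropy (in bits) of the gray-level histogram."""
    pixels = [p for row in img for p in row]
    n = len(pixels)
    counts = Counter(pixels)
    return -sum((c / n) * math.log2(c / n) for c in counts.values())

def average_gradient(img):
    """Mean of sqrt((dx^2 + dy^2) / 2) over forward differences."""
    rows, cols = len(img), len(img[0])
    total = sum(math.sqrt(((img[i + 1][j] - img[i][j]) ** 2
                           + (img[i][j + 1] - img[i][j]) ** 2) / 2.0)
                for i in range(rows - 1) for j in range(cols - 1))
    return total / ((rows - 1) * (cols - 1))

# Tiny example image with two equally frequent gray levels.
example = [[0, 0], [255, 255]]
mu, sd = mean_and_sd(example)
e = entropy(example)
g = average_gradient(example)
```

Entropy and standard deviation reflect how much information and contrast the fused image carries, while the average gradient responds to edge sharpness, which is the property most directly related to focus.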

Values of the objective evaluation indices for the fused images in Figure 2 (the best values are in bold).

Values of the objective evaluation indices for the fused images in Figure 3 (the best values are in bold).

Conclusions

In this paper, a new multi-focus image fusion method based on multi-objective optimization and gray association is presented. Its main advantages are as follows: (1) A relationship between image fusion and multi-objective optimization is established. (2) The weights of the objective functions are determined by gray association during the optimization process. (3) 2DECA has an advantage in information preservation; it is used to extract the features of each window, which helps to obtain reasonable regions. (4) A region-based fusion rule is designed, which increases the reliability and robustness of the fusion method. The experimental results on several pairs of multi-focus images demonstrate the superior performance of the proposed fusion method.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the National Natural Science Foundation of P.R. China under grant no. 61103128, the China Postdoctoral Science Foundation under grant no. 2013M541601, the Postdoctoral Research Funds of Jiangsu Province under grant no. 1301079C, the Natural Science Foundation of Jiangsu Province of China under grant nos. BK20151358 and BK20151202, the Fundamental Research Funds for the Central Universities under grant no. JUSRP51618B, and the Ministry of Housing and Urban-Rural Development of the People's Republic of China under grant no. 2015-K8-035.