Abstract

Enhanced rationality has been linked to higher levels of autistic traits, characterised by increased deliberation and decreased intuition, alongside reduced susceptibility to common reasoning biases. However, it is unclear whether this is domain-specific or domain-general. We aimed to explore whether reasoning tendencies differ across social and non-social domains in relation to autistic traits. We conducted two experiments (

Reasoning and decision-making are crucial in both the social and non-social domains. As human beings, for instance, we must weigh up detailed information when we decide on whether to make a financial investment and consider the pros and cons of a romantic life partner before proposing marriage. How we do so across different scenarios and domains remains a crucial question for decision-making research. Recent years have seen a rapid expansion in research in this area, with many findings in the social or non-social domains. Perhaps surprisingly, few studies have examined whether we make decisions in equivalent ways across these domains. Such decision-making seems to differ across individuals. Carefully characterising these individual differences allows for understanding behavioural differences in both the social and non-social domains. Recently, it has been proposed that autistic people and people with a high degree of autistic traits show different preferences and performance patterns to non-autistic people and people with a low degree of autistic traits, but studies have not examined such findings across domains. Here, we compare how adults in the general population use information to reason in the social versus non-social domains and examine how it relates to autistic traits.

Reasoning

Reasoning, allowing us to make decisions and draw judgments, is the capacity to move from multiple inputs to a single output with the goal of achieving a conclusion (Krawczyk, 2017). Dual Process Theories, among the popular accounts of cognition, propose that reasoning is achieved by two types of processing: slow “deliberation” and fast “intuition” (De Neys, 2018; Evans, 2008, 2011; Evans & Frankish, 2009; Kahneman, 2011; but see, Grayot, 2020; Osman, 2004). Broadly, deliberation is a slow and effortful process, one that is associated with fewer cognitive biases. Intuition is a quicker and low-effort process that shows more features of automaticity. Intuition enjoys a sort of default status in the reasoning process and often leads the reasoner to cognitive biases (Kahneman & Frederick, 2002). Tendencies towards deliberation and intuition in reasoning have been established as meaningful ways of distinguishing reasoning preferences and performance patterns across individuals. From this point of view, such patterns could go some way to explain the differences we see between people when they reason in the real world.

Autism and reasoning

Autism is characterised by persistent difficulties in social communication and interaction, alongside stereotyped behaviours, activities, and interests in

Autistic people also report reasoning and decision-making difficulties (Komeda, 2021; Levin et al., 2021; Luke et al., 2011; van der Plas et al., 2023). These difficulties can interfere with everyday decisions, such as deciding when to go to bed or what to wear, and can also interfere with more important decisions, such as those involved in successfully navigating a job interview or pursuing a goal of marriage (Gaeth et al., 2016; Robic et al., 2015; Vella et al., 2018). Logical reasoning may be particularly useful for many non-social decisions, but over-reliance on logical reasoning might require longer and more in-depth consideration, likely resulting in subtle but important cues of rapidly changing social situations being missed. Therefore, more exploration of enhanced rationality in autism would be beneficial to understanding differences in the social domain faced by autistic people and people with higher levels of autistic traits.

Reasoning in the social vs. non-social domains

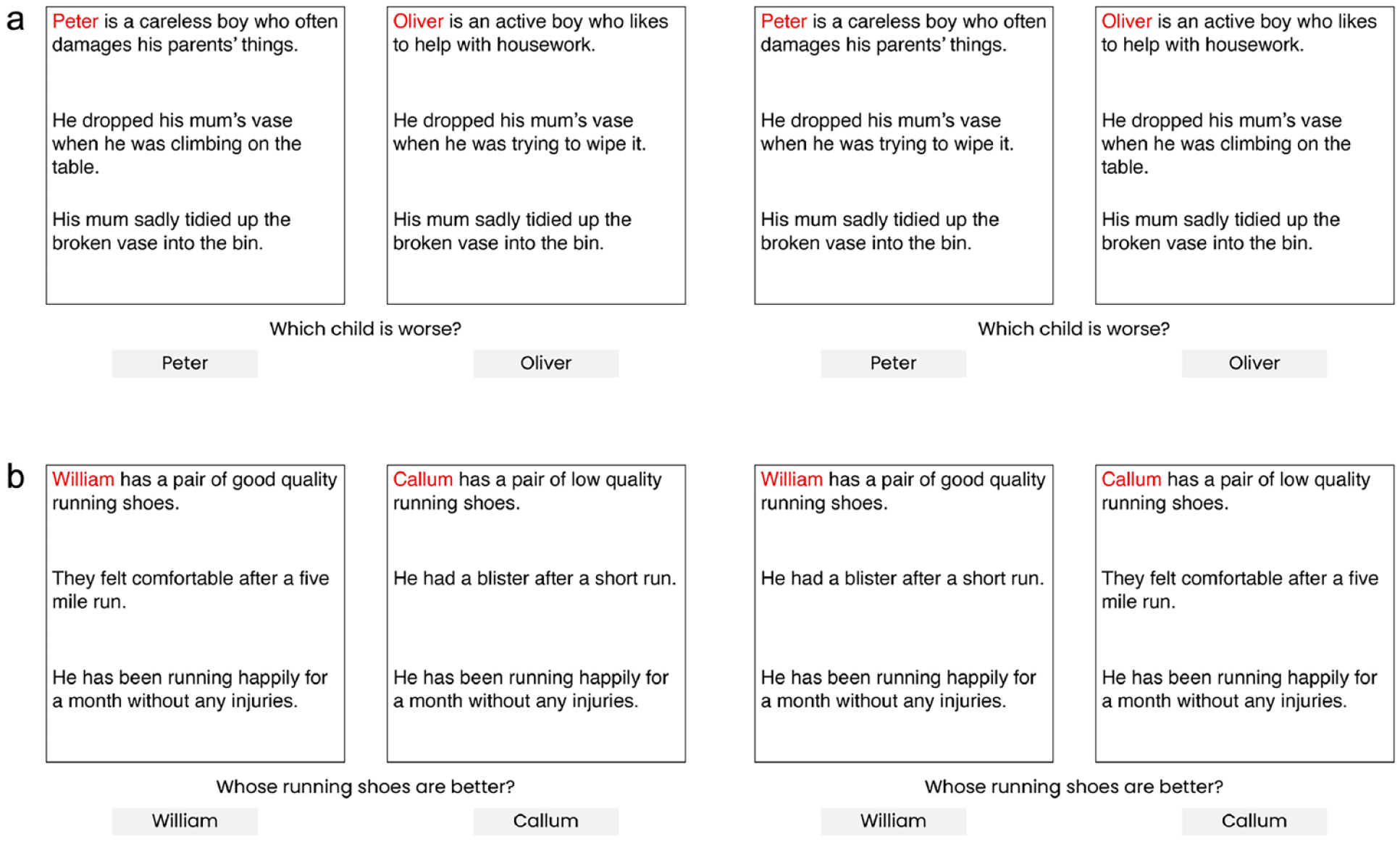

Reasoning in the social domain is considered here as reasoning about characters, behaviours, and intentions of others (Levesque, 2011). An example is determining whether a child, who is generally well-behaved yet has purposefully broken her mother’s favourite vase, is good or bad (Komeda et al., 2016). Reasoning in the non-social domain is reasoning about features, properties, and performance of inanimate objects. An example is determining whether a pair of otherwise good-quality running shoes, which have given you blisters after a quick run, is good or bad. Since properties of social behaviour are different from properties of objects, social reasoning and non-social reasoning can be considered to be different from each other (Shultz, 1982). There are few experimental tasks that provide structurally equivalent, and thus comparable, measures of reasoning in social versus non-social domains. One way to create an analogous comparison between the two is to compare people’s judgments of objects based on their features and performance (a non-social reasoning task), to their judgments of people based on their characteristics and behaviour (a social reasoning task, typically labelled

Moral reasoning

Moral reasoning, which is central to the evaluation of moral issues, is reasoning about what is right or wrong, or good or bad. Autistic people, compared to non-autistic people, are more likely to provide more concrete, yet less abstract, flexible, and detailed moral justifications (Dempsey et al., 2020; Shulman et al., 2012), more likely to depend on rules and authority, and more focused on behaviours and outcomes rather than underlying characteristics or intentions of social agents (Fadda et al., 2016; Komeda et al., 2016). Komeda et al. (2016) asked autistic and non-autistic adolescents to judge social scenarios’ protagonists as being good or bad. Each scenario in their task presented a social interaction between a child and the child’s parent with three lines of information: a line describing the character of the child, a line describing the behaviour of the child, and a line describing the outcome of the scenario. Komeda et al. (2016) found that, in forming moral judgments, autistic adolescents ignored character-based information of the protagonists, but considered behaviour-based information and the outcome of the scenarios; whereas their non-autistic counterparts considered character-based information too.

Rationale and aims

Studies exploring reasoning in autism have predominantly focused either on the social domain, as in Komeda et al.’s (2016) study, or on the non-social domain, as in Brosnan and Ashwin’s (2022) study. To our knowledge, the two domains have not been systematically compared. However, a non-social condition is needed to be able to warrant a conclusion related to exclusively social behaviour (Lockwood et al., 2020). Enhanced rationality in autism is proposed to contribute to core differences because intuition is suggested to play a particularly strong role in the social domain (Robic et al., 2015; South et al., 2014). Therefore, it is important to investigate whether this claim is consistent across domains. Moreover, researchers studying moral reasoning in autism have relied heavily on tasks involving negative scenarios like intense harm, such as the trolley problem in Moral Dilemmas (for a review, see Dempsey et al., 2020), or scenarios involving risk-taking behaviour, such as the Ultimatum Game (for a review, see Hinterbuchinger et al., 2018). Such scenarios are rare in life and are therefore not representative of real-life interactions.

We ran two experiments to examine how reasoning differs across social and non-social domains and how it relates to autistic traits. For this, we adapted the scenario-based comparison task of Komeda et al. (2016) and created structurally equivalent non-social scenario comparisons. With this task, we measured the proportion of reliance on behaviour-based information in social and non-social domains. We interpreted a greater consistency in reliance on behaviour-based responses to be more indicative of deliberative reasoning and switching between behaviour- and character-based information for different scenarios to be more indicative of intuitive reasoning. We predicted that the social domain would elicit more intuitive reasoning, particularly for those who self-report lower levels of autistic traits. We predicted that (1) all participants would reason more deliberatively in the non-social domain by providing higher percentages of behaviour-based responses, compared to the social domain. With the literature on reasoning differences between autistic and non-autistic people in mind, we also predicted (2) a significant positive relationship between higher levels of autistic traits and a higher percentage of behaviour-based information in the social domain, and (3) no significant relationship between the levels of autistic traits and percentages of behaviour-based information in the non-social domain.

Experiment 1

Method

This project was conducted in accordance with the British Psychological Society ethical guidelines with approval by the Science, Technology, Engineering, and Mathematics Ethical Review Committee at the University of Birmingham (ERN_09-719AP19). Pre-registration, material, and anonymised data can be found at https://osf.io/r8waf.

Participants

Sample size and effect size calculations

Sample

Seventy-two university students (female = 60, male = 12,

Materials

The Adult Autism-Spectrum Quotient (AQ)

The AQ is a 50-item self-report measure of autistic traits for adults (≥16 years of age) with average or higher intelligence levels (Baron-Cohen et al., 2001). The AQ is recorded on a 4-point Likert-type scale presenting “definitely agree,” “slightly agree,” “slightly disagree,” and “definitely disagree” as options. A response in the direction of autistic traits is scored as 1 and a response in the opposite direction is scored as 0. There is no difference between “definitely agree” and “slightly agree” or “definitely disagree” and “slightly disagree” in terms of scoring values. The highest score that can be obtained is 50, while the lowest is 0, with higher scores indicating higher levels of autistic traits. The AQ shows good test–retest reliability (

The Scenario-based Comparison Task

This task involves pairs of scenarios representing social or non-social interactions (adapted from Komeda et al., 2016). This task is used to measure whether participants rely on a specific kind of information when judging a scenario’s subject as good or bad. In this task, each scenario provides three lines of information (first line, characteristics; second, behaviours; and third, outcomes), each with a binary valence (positive or negative). As in Figure 1a, social scenarios, which were translated from Komeda et al. (2016), presented an interaction between a child and the child’s parent. After reading each pair of scenarios, participants were asked to judge which child was better or worse. This domain measured whether people draw on the child’s behaviour or underlying characteristics to decide which child is better or worse. In addition, we created structurally equivalent non-social scenario comparisons. As in Figure 1b, non-social scenarios presented an interaction between a person and an inanimate object. This domain measured whether people draw on the performance of an object or its underlying qualities to decide which object is better or worse.

Example scenario comparisons in (a) Social Domain with a bad outcome and (b) Non-social Domain with a good outcome.

Scenario comparisons varied in the consistency of behaviour- and character-based information. For consistent scenario comparisons, the comparison was “good characteristics/good behaviour versus bad characteristics/bad behaviour.” For inconsistent scenario comparisons, the comparison was “bad characteristics/good behaviour versus good characteristics/bad behaviour.” The consistent scenarios allowed for estimating a proportion of reading errors, motor responses, and spurious responding. The inconsistent scenarios, which did not necessarily have a right or wrong answer, allowed for testing which kind of information participants drew on when making judgments on scenario subjects. The outcome line was the same for each scenario in a comparison. The number of letters in each outcome line across each comparison was the same.

In summary, the Scenario-based Comparison Task included two key variables of interest: domain type (whether scenarios were social or non-social) and consistency (whether behaviour- and character-based information were presented in consistent or inconsistent ways). It is worth noting that, for consistent trials, behaviour-based responses were also characteristics-based responses, so they did not necessarily provide evidence that the participant used behaviour as the determining factor in making their judgment.

Characters’ genders and positions (presented on the left or right) and the position of the positive behaviour (left or right) were counterbalanced. Domain order (presentation of Social or Non-social Domain First) was controlled across participants. For each domain, participants responded to 24 comparisons: 3 for each combination of 2 characteristics (good × bad), 2 behaviours (good × bad), and 2 outcomes (good × bad). After counterbalancing, participants completed 96 trials in total.

Procedure

Data collection began on 28 November 2020 and ended on 4 March 2021. Participants first completed the AQ (Baron-Cohen et al., 2001) via an online survey platform, Qualtrics (https://www.qualtrics.com/uk/). Then, they received a link for a video call over Zoom (https://zoom.us/) to complete the Scenario-based Comparison Task with the lead researcher (EB; female). The Scenario-based Comparison Task was built on Microsoft PowerPoint and presented to each participant by using the screen-sharing option on Zoom. Participants completed two practice trials before the main task. The scenario comparisons were presented one at a time. The pairs of scenarios, questions, and options appeared at the same time. Participants responded verbally with their choice. The researcher stayed muted and turned their video off during the main task to eliminate distraction. The participant was free to keep their video on or off, depending on their preference. The researcher moved to the next comparison once the participant provided an answer verbally. Participants could not return to the instructions or previous comparisons. The responses were manually recorded by the researcher and transferred to Excel sheets after each session. The sessions were not video- or audio-recorded. There was no time limit when responding, and the sessions were not timed because response time was not a targeted variable. Participants were rewarded with course credits through RPS.

Data analysis

The significance level was set at α = 0.05. The key Dependent Variable (DV) was the percentage of participants’ behaviour-based responses from the Scenario-based Comparison Task. If the participant’s response was behaviour-consistent, this was coded as 1, and if behaviour-inconsistent, this was coded as 0. This coding was not used to represent accuracy, but rather the tendency to rely on behaviour-based information, indicating higher logical consistency across scenario comparisons. We ran a mixed 2 × 2 × 2 × 2 ANOVA with within-subjects factors domain type (Social, Non-social), consistency (Consistent, Inconsistent), and outcome type (Good Outcome, Bad Outcome), and between-subjects factor domain order (Social Domain First, Non-social Domain First). Follow-up tests were conducted on significant interactions. A two-tailed Pearson correlation test was conducted to investigate the relationship between the total scores on the AQ and the proportion of behaviour-based responses for the Social Domain. To investigate the relationship between the total scores on the AQ and the proportion of behaviour-based responses for the Non-social Domain, a two-tailed Spearman’s rank order correlation test was conducted, because the proportion of behaviour-based responses for the Non-social Domain was not normally distributed. Finally, a follow-up test was run to explore the differences between dependent correlations.
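The response coding and the two correlation coefficients described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' analysis script: the toy responses, the AQ totals, the field names (`participant`, `domain`, `behaviour_based`), and the helper functions are all hypothetical. Spearman's coefficient is computed here as Pearson's coefficient on average ranks, and significance testing is omitted.

```python
from collections import defaultdict
from math import sqrt


def proportion_behaviour_based(trials):
    """Return {(participant, domain): proportion of trials coded 1}."""
    counts = defaultdict(lambda: [0, 0])  # [sum of codes, number of trials]
    for t in trials:
        key = (t["participant"], t["domain"])
        counts[key][0] += t["behaviour_based"]
        counts[key][1] += 1
    return {key: total / n for key, (total, n) in counts.items()}


def pearson(x, y):
    """Pearson product-moment correlation coefficient."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


def average_ranks(values):
    """Rank values from 1..n, giving tied values their mean rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def spearman(x, y):
    """Spearman's rank-order correlation: Pearson on average ranks."""
    return pearson(average_ranks(x), average_ranks(y))


# Hypothetical toy data: 1 = behaviour-based response, 0 = not behaviour-based.
toy_codes = {
    "p1": {"social": [1, 0, 0, 0], "non_social": [1, 1, 1, 1]},
    "p2": {"social": [1, 1, 0, 0], "non_social": [1, 1, 1, 0]},
    "p3": {"social": [1, 1, 1, 0], "non_social": [1, 1, 1, 1]},
    "p4": {"social": [1, 1, 1, 1], "non_social": [1, 1, 1, 0]},
}
aq_totals = {"p1": 12, "p2": 18, "p3": 25, "p4": 31}  # invented AQ totals

trials = [
    {"participant": p, "domain": d, "behaviour_based": code}
    for p, domains in toy_codes.items()
    for d, codes in domains.items()
    for code in codes
]

props = proportion_behaviour_based(trials)
participants = sorted(aq_totals)
aq = [aq_totals[p] for p in participants]
social = [props[(p, "social")] for p in participants]
non_social = [props[(p, "non_social")] for p in participants]

r_social = pearson(aq, social)             # Pearson, as for the Social Domain
rho_non_social = spearman(aq, non_social)  # Spearman, as for the Non-social Domain
print(round(r_social, 3), round(rho_non_social, 3))
```

In practice a statistics package would also supply the p-values; the sketch only shows how the 1/0 coding turns into per-domain proportions and how the two coefficients differ in their treatment of the data.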

Results

Omnibus ANOVA

Results from the 2 × 2 × 2 × 2 ANOVA indicated a significant effect of domain type, with participants providing more behaviour-based responses for the Non-social Domain (

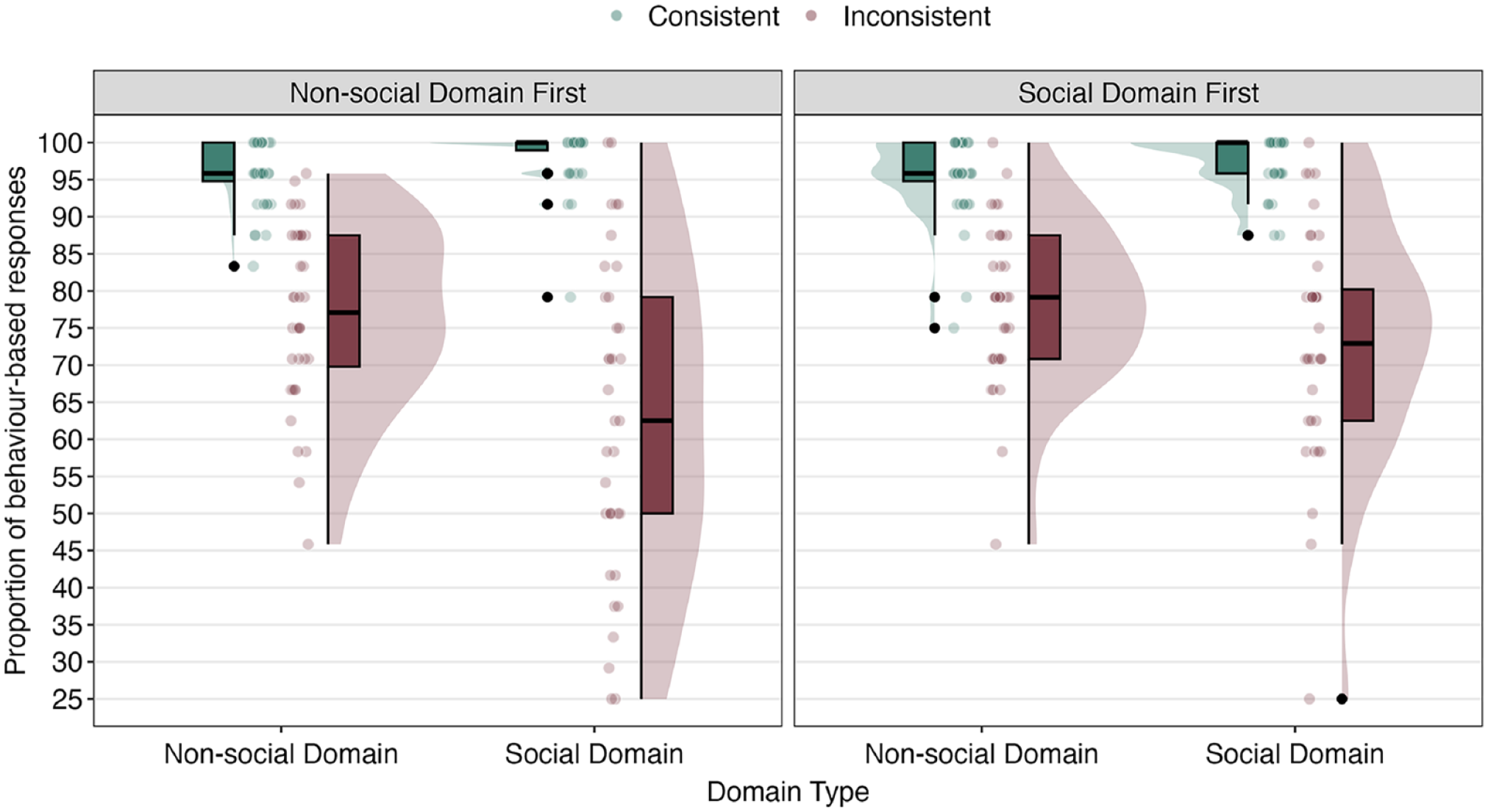

See Supplementary Appendix 2 for a summary of interaction effects. There was a significant domain type × consistency × domain order interaction,

Medians and quantiles of the proportion of behaviour-based responses for domain type, consistency, and domain order interaction, Experiment 1 (

We identified additional significant interactions, domain type × consistency,

Correlations between the total scores on the AQ and the proportions of behaviour-based responses

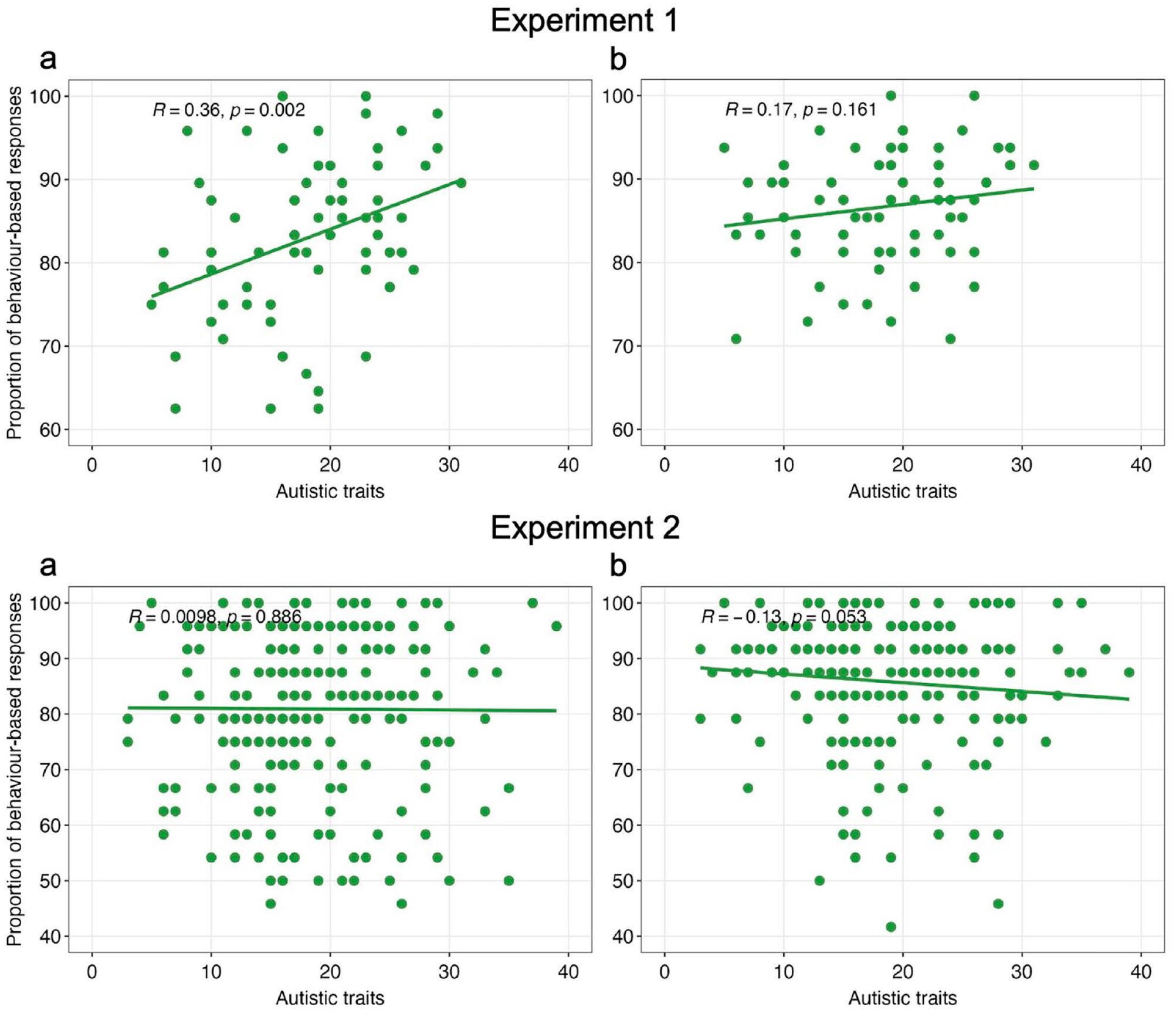

A Pearson correlation test revealed a significant positive correlation between the total scores on the AQ and the proportion of behaviour-based responses in the Social Domain,

Simple scatter plots of the proportions of behaviour-based responses in (a) Social and (b) Non-social Domain by the total scores on the AQ for Experiments 1 and 2.

Discussion

We investigated whether the information relied on by participants while making judgments on people and objects is affected by domain type, outcome type, consistency, and domain order. In line with our hypothesis, we found a significant effect of domain type, showing that participants relied on behaviour-based information more for non-social scenario comparisons, suggesting a more consistent and rational reasoning approach for this domain. What we had not predicted was the impact of domain order on response patterns. Participants who completed the non-social scenarios first showed the expected pattern of being influenced more by characteristics for social scenarios. Those who completed the social domain first, however, showed similar patterns across domains. The outcome type also influenced participants’ judgments. When the scenario comparison ended with a good outcome, they relied more on the behaviour-based information. This is in line with Komeda et al.’s (2016) finding for the control group but not for the autism group.

We also investigated whether there was a significant positive relationship between the levels of autistic traits and the proportion of behaviour-based responses in the social domain. We found that this correlation was significant, suggesting that the higher a person scores on autistic traits, the more likely they are to rely on the behaviour-based information about the child. This result was also in line with Komeda et al. (2016), who found that the autism group relied on behaviour-based responses more than the control group.

After confirming our hypotheses, we conducted a follow-up study to (1) test our task with a bigger sample, and (2) explore forced-choice judgments in depth with justifications. Our main hypotheses for forced-choice judgment responses were the same as in Experiment 1. For justifications, we predicted that participants would provide (1) more exclusively character-based justifications for the social domain, and (2) more exclusively behaviour-based justifications for the non-social domain. We also predicted that the levels of autistic traits would be (3) positively correlated with more exclusively behaviour-based justifications in the social domain and negatively correlated with more exclusively character-based justifications in the social domain, and (4) significantly correlated with neither the behaviour- nor character-based justifications in the non-social domain.

Experiment 2

Method

This project was approved by the Science, Technology, Engineering, and Mathematics Ethical Review Committee at the University of Birmingham (ERN_09-719AP26). Pre-registration, material, and anonymised data can be found at https://osf.io/2rtp8.

Participants

Sample size and effect size calculations

We considered ANOVA results from Experiment 1 for power analysis. To find a small effect size (0.01) with a 95% power at

Sample

A total of 219 university students (female = 191, male = 23, other/non-binary = 3,

Materials and procedure

Data collection began on 12 October 2021 and ended on 5 November 2021. The same materials as Experiment 1 were used on Qualtrics with the following exceptions: (1) We shortened the Scenario-based Comparison Task by selecting scenario comparisons that were most representative of each domain. We used half of the comparisons from the first experiment after running an item validity check on the forced-choice data from Experiment 1. To do this, we calculated the proportion of behaviour-based responses for each scenario comparison across participants and selected the ones closest to the median score for that condition. In this way, we had 12 scenario comparisons for each domain. Participants completed 48 trials in total. (2) We asked participants for written justifications of their forced-choice responses. A blank section asking “Why?” was presented after each comparison for this purpose. Participants were given no instructions about the length of their justifications or the time to spend on them because neither time pressure nor response time was targeted. The comparisons were presented in a randomised order with the same counterbalancing technique as in Experiment 1. (3) Alongside the addition of justifications, the key change from Experiment 1 was that participants made all responses on an online form, rather than reporting their responses to an experimenter over a video call. Participants self-enrolled in the study through RPS and followed the study link to Qualtrics to complete the study in their own time.
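The item selection step in (1) can be illustrated with a short sketch. It assumes hypothetical item labels and proportions for a single condition, and it breaks ties by item label, which the text does not specify; it is not the authors' selection script.

```python
from statistics import median


def select_closest_to_median(item_proportions, n_keep):
    """Keep the n_keep items whose proportion lies nearest the condition median.

    item_proportions maps an item label to the proportion of behaviour-based
    responses that item received across participants.
    Ties are broken by item label (an assumption made for this sketch).
    """
    med = median(item_proportions.values())
    ranked = sorted(
        item_proportions,
        key=lambda item: (abs(item_proportions[item] - med), item),
    )
    return sorted(ranked[:n_keep])


# Hypothetical proportions for six comparisons in one condition.
condition_items = {
    "s01": 0.40,
    "s02": 0.55,
    "s03": 0.60,
    "s04": 0.65,
    "s05": 0.90,
    "s06": 0.20,
}

# Keep half of the comparisons, mirroring the halving described in the text.
kept = select_closest_to_median(condition_items, n_keep=3)
print(kept)
```

Selecting around the median, rather than the mean, keeps the retained items from being pulled towards comparisons with unusually high or low proportions.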

Data analysis

Data were recorded online and analysed as in Experiment 1 for forced-choice responses. For the written justifications, a blinded Research Assistant (RM; female) coded the responses into 4 categories to indicate which kind of information the participants mentioned: (1) exclusively character-based, (2) exclusively behaviour-based, (3) both character- and behaviour-based, and (4) neither character- nor behaviour-based. The lead researcher coded 10% of the responses to check inter-rater reliability; agreement was 87% (Cohen’s kappa = 0.71), suggesting a good level of agreement between coders. For data analysis on the written justifications, we split the data by consistency. Then, we ran six 2 × 2 × 2 mixed ANOVAs with within-subjects factors domain type (Social, Non-social) and outcome type (Good Outcome, Bad Outcome), and between-subjects factor domain order (Social Domain First, Non-social Domain First) to explore the main and interaction effects of the manipulated variables on our DV for the categories of (1) exclusively character-based, (2) exclusively behaviour-based, and (3) both character- and behaviour-based separately. These analyses were run separately for consistent and inconsistent scenario comparisons. Here, unlike Experiment 1, both consistent and inconsistent trials provide meaningful data: for instance, even when characteristic- and behaviour-based information agree, one or the other or both can be used for justification. Follow-up tests were run on significant interactions. Finally, we ran two-tailed Spearman’s correlation tests between the total scores on the AQ and the percentages of responses in these categories.
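For readers unfamiliar with the two reliability figures, the sketch below shows how raw percentage agreement and Cohen's kappa are computed from two coders' category labels. The labels are invented toy data and the function names are hypothetical; the values it produces are not the study's.

```python
from collections import Counter


def percent_agreement(coder_a, coder_b):
    """Proportion of items given the same category by both coders."""
    return sum(a == b for a, b in zip(coder_a, coder_b)) / len(coder_a)


def cohens_kappa(coder_a, coder_b):
    """Cohen's kappa: observed agreement corrected for chance agreement."""
    n = len(coder_a)
    p_observed = percent_agreement(coder_a, coder_b)
    counts_a, counts_b = Counter(coder_a), Counter(coder_b)
    categories = set(counts_a) | set(counts_b)
    # Chance agreement: product of each coder's marginal proportions, summed.
    p_expected = sum(counts_a[c] * counts_b[c] for c in categories) / (n * n)
    return (p_observed - p_expected) / (1 - p_expected)


# Invented labels for ten justifications, using the four coding categories.
coder_1 = ["character", "character", "behaviour", "behaviour", "both",
           "both", "neither", "character", "behaviour", "both"]
coder_2 = ["character", "character", "behaviour", "both", "both",
           "both", "neither", "behaviour", "behaviour", "both"]

agreement = percent_agreement(coder_1, coder_2)  # 8 of 10 labels match
kappa = cohens_kappa(coder_1, coder_2)
print(agreement, round(kappa, 2))
```

Kappa is lower than raw agreement because some matches would be expected by chance alone, given how often each coder uses each category.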

Results

Omnibus ANOVA

We found the same main effects with the same directions as in Experiment 1. The results of the 2 × 2 × 2 × 2 ANOVA indicated significant effects of domain type,

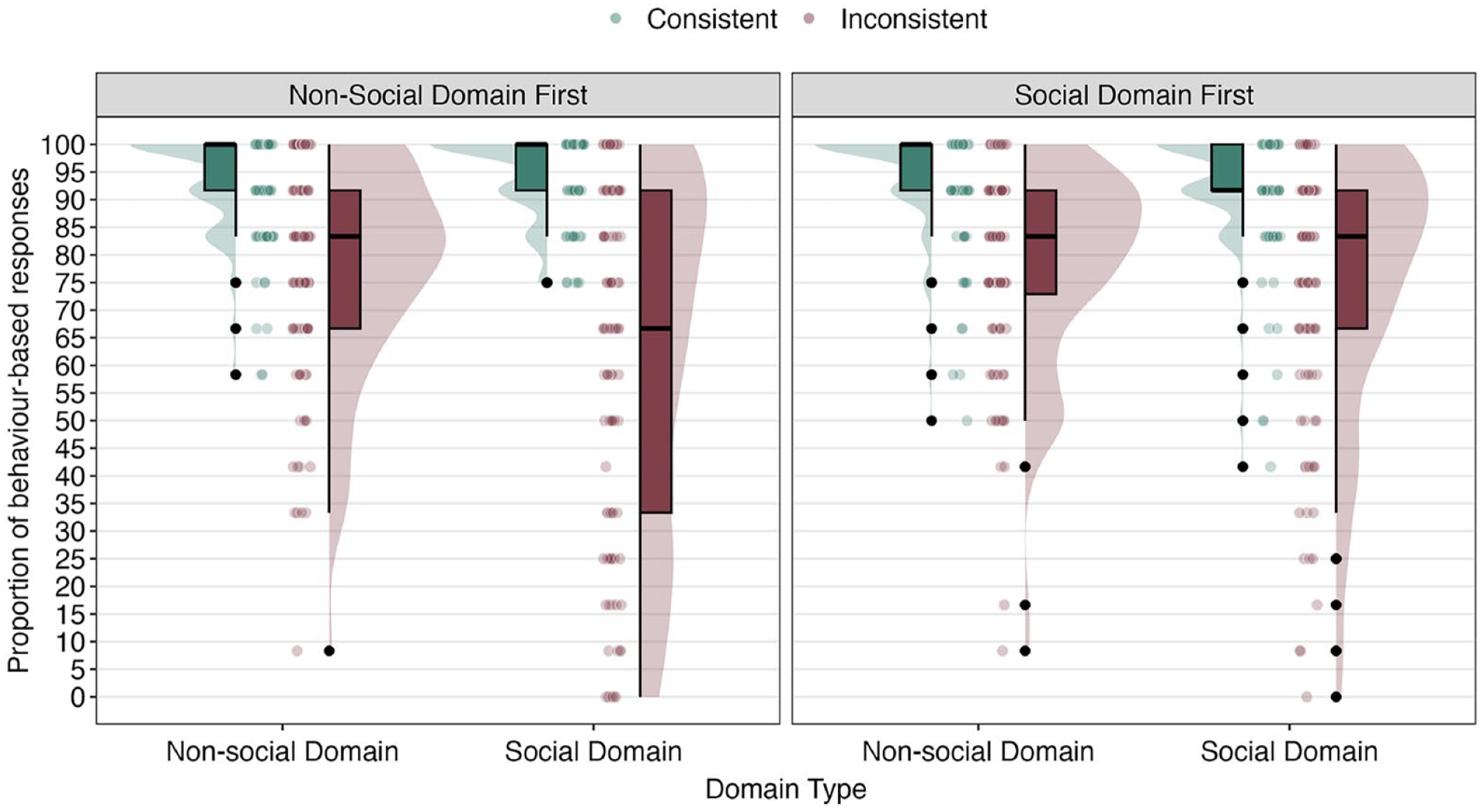

See Supplementary Appendix 4 for interaction effects. There was a significant domain type × consistency × domain order interaction,

Medians and quantiles of the proportion of behaviour-based responses for domain type, consistency, and domain order interaction, Experiment 2 (

We identified other significant interactions, which were domain type × consistency,

Correlations between the total scores on the AQ and the proportions of behaviour-based forced-choice responses

Spearman’s rank order correlation tests were run to investigate the relationship between the total scores on the AQ and the proportions of behaviour-based responses in Social and Non-social Domains. Contrary to our hypothesis and unlike the results from Experiment 1, no significant correlation was found between the total scores on the AQ and the proportion of behaviour-based responses in the Social Domain,

Omnibus ANOVA on written justifications

The significant results from 2 × 2 × 2 ANOVAs are described below for inconsistent and consistent scenario comparisons. See Supplementary Appendix 5 for descriptive details and paired-samples

Inconsistent scenario comparisons

For exclusively character-based justifications, we found a significant effect of domain type,

Consistent scenario comparisons

For exclusively character-based justifications, we found significant effects of domain type,

Correlations between the total scores on the AQ and the proportions of behaviour-based justifications for inconsistent scenarios

For Social Domain, we did not find significant correlations between the total scores on the AQ and the proportion of exclusively behaviour-based,

General discussion

We examined the tendency of young adults to rely on behaviour-based information or on a combination of behaviour- and character-based information for making judgments across social and non-social domains. We ran correlations between participants’ responses and their self-reported levels of autistic traits. We interpreted a greater consistency in reliance on behaviour-based responses to be more indicative of deliberative reasoning and switching between behaviour- and character-based information for different scenarios to be more indicative of intuitive reasoning. We predicted that the social domain would elicit more intuitive reasoning, particularly for those who self-report lower levels of autistic traits. Both experiments showed that participants reasoned more deliberatively in the non-social domain, consistently relying on the behaviour-based information of objects to determine their judgments regarding how good or bad the objects were. In the social domain, participants were more likely to alternate between relying on children’s behaviour-based information and relying on their underlying character-based information in making judgments. Written justifications provided in Experiment 2 to explain forced-choice judgments were broadly consistent with the forced-choice responses, though an interesting pattern emerged: although participants were much more likely to rely on character-based information in justifying their responses in the social domain, in the non-social domain they were more likely to use both behaviour- and character-based information for their justifications. In addition, a mixed pattern emerged in the relation between reasoning biases and the levels of autistic traits. Experiment 1 found a significant association between autistic traits and a more deliberative reasoning pattern for the social domain. However, Experiment 2 found no meaningful association between autistic traits and reasoning style for either domain.

Participants reasoned more intuitively in the social domain

We consistently found that participants treated the social domain differently from the non-social domain, providing more behaviour-based forced-choice judgments for the non-social domain than for the social domain. This pattern continued in Experiment 2, where participants’ written justifications mentioned character-based information more for the social domain but both character- and behaviour-based information for the non-social domain. Glick (1978; reported in Shultz, 1982) suggested that non-social knowledge requires logical strategies, whereas social knowledge requires intuitive strategies, for the following reasons. First, the behaviour of objects is more stable than the behaviour of people because people do not only react but also act. Second, object behaviour is strictly deterministic whereas the behaviour of people is probabilistic. Finally, social knowledge (given, probably, the indeterministic nature of human behaviour) necessarily falls short of certainty and is sensitive to context, in contrast with knowledge of objects, which sometimes allows for certainty. Shultz (1982) also suggested that people attribute moral responsibility to people and not to objects. People are therefore expected to reason in a more intuitive way in the social domain than in the non-social domain. Accordingly, mentioning both character- and behaviour-based information when justifying judgments of objects can be seen as a more logical way of reasoning, which is in line with the properties of the non-social domain.

Understanding the relation between autistic traits and social reasoning

Findings from Experiment 1 were in line with Komeda et al. (2016), who showed that autistic young people relied more on behaviour-based information and less on character-based information, compared to non-autistic young people who relied on both character- and behaviour-based information, when making judgments about social scenarios’ main characters. However, Experiment 2 found no meaningful association between levels of autistic traits and reliance on the behaviour-based information for either domain. This might be due to minor methodological changes, since the second experiment differed from the first in the following ways: the experiment (1) was run with a bigger sample, (2) was run online and without the presence of a researcher, (3) was updated with a shorter version of the Scenario-based Comparison Task, and (4) asked participants to provide justifications for their forced-choice judgments.

A reasonable hypothesis is that autistic people and people who report higher levels of autistic traits would both reason more deliberatively across domains than non-autistic people and people who report lower levels of autistic traits, for the following reasons: they prefer and engage in a more deliberative thinking style, rather than an intuitive one (Brosnan et al., 2016, 2017), they are less susceptible to common reasoning biases (Lewton et al., 2019), and they do not typically adapt their cognitive style according to their environment, but rather follow a logically consistent reasoning style (De Martino et al., 2008). Our results from the first experiment are consistent with this possibility (but see Morsanyi & Hamilton, 2023; Taylor et al., 2022). The results suggested that people who self-report higher levels of autistic traits might tend to reason more deliberatively across domains, by responding in a more consistent and yet inflexible way rather than adapting their reasoning strategy to the domain, in the way people who self-report lower levels of autistic traits do. Autistic people report experiencing difficulties in decision-making (Luke et al., 2011). These difficulties have not been considered across domains. However, there remains a question of whether autistic people might find reasoning in non-social contexts easier than in social contexts. Therefore, there is a need to consider such decision-making difficulties across domains.

There were minor methodological differences between the two experiments. There may therefore be several reasons why Experiment 2 produced a different pattern of results. The researcher being present through a video call during the first experiment might have created social anxiety or social acceptance bias among participants, or increased their motivation. Moreover, the interaction between the participant and the researcher during the first experiment, which made the task more socially interactive, might have differentially influenced participants’ responses. For future studies, checking the levels of social anxiety before and after the experiment could be useful, since social anxiety is highly common among autistic people and people who self-report higher levels of autistic traits (Freeth et al., 2013; Simonoff et al., 2008). It would be particularly interesting and have practical implications if social anxiety influenced autistic people’s social reasoning.

Other factors influencing reasoning style

The broad pattern of results from the social domain in both experiments fits well with the results of Komeda et al. (2016), who employed a social task only. Both experiments found that participants’ forced-choice judgments were influenced by the scenarios’ outcome type: participants provided more behaviour-based judgments for scenarios ending with a good outcome than for those ending with a bad outcome. Komeda et al. (2016) found a similar result in their non-autistic group, who provided more behaviour-based judgments for scenarios ending with a good outcome than for those with a bad outcome, whereas their autism group did not vary their strategy based on the outcome type.

We also unexpectedly found that forced-choice responses were affected by domain order. Participants who completed the social domain first and participants who completed the non-social domain first followed different patterns of reasoning across domains. Participants who completed the non-social domain first relied more on character-based information in the social domain. However, participants who completed the social domain first did not change their strategy across domains. This could be explained by a carry-over effect that occurs when a participant is presented with a social task first. Previous studies have suggested a carry-over effect when social and non-social tasks were presented to participants as separate blocks (for instance, Surtees et al., 2012).

Limitations and future research

Komeda et al. (2016) found no significant interaction effects of working memory or language comprehension on participants’ forced-choice judgments; however, Taylor et al. (2022) showed that general cognitive ability, rather than autism or the presence of autistic traits, predicted reasoning performance and reasoning style. That we did not measure any sort of cognitive ability, then, is one of the limitations of our study. Future studies should employ measures of cognitive ability to control for this variable and, in between-group designs, try to match groups on cognitive ability. Even though there was a wide range of self-reported levels of autistic traits among our participants in both studies, samples that are dominated by specific groups limit generalisation. Although studies that employ measures of autistic traits in the general population have the potential to inform our understanding of populations who have received a clinical diagnosis (Happé & Frith, 2020), the findings from our experiments need to be replicated and extended with autistic samples before they can be extrapolated to autistic people (Sasson & Bottema-Beutel, 2021). Moreover, there might be interference from co-existing diagnoses and/or difficulties that have been found to be associated with atypicality in reasoning and cognitive biases (for ADHD, Persson et al., 2020; for anxiety, Remmers & Zander, 2018; for alexithymia, Rinaldi et al., 2017). Therefore, future studies should screen their samples for co-existing conditions. Finally, though we consistently found significant effects of domain type, it might be useful to label the stimuli as “social” and “non-social” or to check participants’ perception of the socialness of the stimuli (Lockwood et al., 2020; Varrier & Finn, 2022).

Implications

The question of whether autistic people or people who self-report high levels of autistic traits exhibit a higher level of deliberation, leading to a more deliberative and less intuitive reasoning style, is still open. Given the inconsistencies in the literature, potential publication bias might be damaging for the autistic community, which is highly heterogeneous in terms of strengths and weaknesses. If not well supported, the growing literature in this area might create pressure on the autistic community regarding a more logical thinking style. Given the inconsistent results from our studies, there is a need to be cautious before emphasising enhanced deliberation and a lack of intuition in association with autism and high autistic traits. Moreover, we should focus more on the ability to switch reasoning strategy automatically between intuitive and deliberative based on the context. Flexibility in reasoning strategies is likely to be very beneficial in everyday life. For instance, when the context is non-social, as in economics or meteorology, a deliberative reasoning style might be the more helpful strategy. However, when the context is social, we might want to switch to a more intuitive approach for a better fit in the social community.

Conclusion

Here, we introduce a novel scenario-based comparison task that systematically compares social and non-social domains. Both experiments consistently showed that people treat social and non-social contexts differently, relying more on behaviour-based forced-choice responses in the non-social domain than in the social domain. This difference was also evident in how people justified their reasoning: they mentioned both character- and behaviour-based information in the non-social domain. The first experiment showed that levels of autistic traits correlate with a more deliberative and logical reasoning style in the social domain but not in the non-social domain. This is in line with the research on enhanced rationality in autism. However, presumably due to relatively minor methodological changes, our second experiment did not find a meaningful association. Future studies should further explore reasoning tendencies in relation to autism across domains by addressing the limitations mentioned above.

Supplemental Material

sj-docx-1-qjp-10.1177_17470218241296090 – Supplemental material for Reasoning in social versus non-social domains and its relation to autistic traits

Supplemental material, sj-docx-1-qjp-10.1177_17470218241296090 for Reasoning in social versus non-social domains and its relation to autistic traits by Elif Bastan, Roberta McGuinness, Sarah R Beck and Andrew DR Surtees in Quarterly Journal of Experimental Psychology

Supplemental Material

sj-docx-2-qjp-10.1177_17470218241296090 – Supplemental material for Reasoning in social versus non-social domains and its relation to autistic traits

Supplemental material, sj-docx-2-qjp-10.1177_17470218241296090 for Reasoning in social versus non-social domains and its relation to autistic traits by Elif Bastan, Roberta McGuinness, Sarah R Beck and Andrew DR Surtees in Quarterly Journal of Experimental Psychology

Footnotes

Author contributions

E.B. and A.D.R.S. designed the project and prepared the main task. E.B. collected and analysed the data, completed the first draft of the manuscript, and led the revision. R.M. coded the written data, analysed the data, and provided feedback on the drafts. A.D.R.S. and S.R.B. co-supervised this project and provided critical feedback on the drafts. All authors contributed to the final manuscript.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: E.B. was funded by the Ministry of National Education of Republic of Türkiye. R.M. received financial research support from University of Birmingham.

Data accessibility statement

The data and materials from the present experiment are publicly available at the Open Science Framework website: https://osf.io/r8waf.

Supplementary material

The supplementary material is available at: qjep.sagepub.com.
