Abstract

Infants experience the world through their actions with objects and their interactions with other people, especially their parents. Prior research has shown that school-age children with hearing loss experience poorer quality interactions with typically hearing parents, yet little is known about parent–child interactions between toddlers with hearing loss and their parents early in life. In the current study, we used mobile eye-tracking to investigate parent–child interactions in toddlers with and without hearing loss (mean ages: 19.42 months, SD = 3.41 months). Parents and toddlers engaged in a goal-directed, interactive task that involved inserting coins into a slot and required joint coordination between the parent and the child. Overall, findings revealed that deaf toddlers demonstrate typical action skills in line with their hearing peers and engage in similar interactions with their parents during social interactions. Findings also revealed that deaf toddlers explored objects more and showed more temporal stability in their motor movements (i.e. less variation in their timing across trials) than hearing peers, suggesting further adaptability of the deaf group to their atypical sensory environment rather than poorer coordination. In contrast to previous research, findings suggest an intact ability of deaf toddlers to coordinate their actions with their parents and highlight the adaptability within dyads who have atypical sensory experiences.


Introduction

Prelingual hearing loss can have a profound impact on social, cognitive, and linguistic development when the hearing loss results in a lack of early access to language. Most deaf children are born to hearing parents who do not use sign language, limiting access to both spoken and sign language. As a result, many children with hearing loss demonstrate delays in language skills (Davidson et al., 2014; Houston et al., 2012; Houston & Miyamoto, 2010; Kirk et al., 2002). In addition to language delays, deaf toddlers and children also demonstrate differences in other developmental domains, including general cognition. Fundamental cognitive skills such as visual working memory (Harris et al., 2013), visual habituation (Monroy et al., 2019), and visual statistical learning (Gremp et al., 2019; Monroy et al., 2022; Terhune-Cotter et al., 2021) have been found to differ among deaf infants and children compared with their hearing peers. These findings, although mixed, suggest that hearing loss has general effects on cognitive development, above and beyond hearing and language. However, despite significant interest in identifying behaviours and cognitive skills that may predict language outcomes in children with hearing loss (Fagan et al., 2020), few studies have focused on the nonverbal skills and capacities during early development that play a role in later communicative development. The current study addresses this gap by investigating joint action in young toddlers with hearing loss, an important developmental domain that has not yet been studied in this population.

Joint action coordination in hearing toddlers

Infants’ earliest experiences with the world are through motor actions and visual observation (Hunnius & Bekkering, 2014). Early motor experiences support the development of action understanding, which refers to the ability to understand the overall goal or intention of an observed or performed motor action (Gerson & Woodward, 2014). Action understanding is thought to be a precursor to advanced social-cognitive milestones such as theory of mind (Von Hofsten & Rosander, 2018) and is also necessary for engaging in successful interactions with other people. Action understanding and the basic skills required for smooth, coordinated joint action—such as hand-eye coordination and anticipatory gaze—emerge early in infancy (Abney et al., 2018; Falck-Ytter et al., 2006). Nevertheless, though infants are eager to interact with others from early in life, research on the development of joint action in toddlers has shown that action coordination is difficult for young children (see Brownell, 2011 for a review). In the current study, we asked whether variation in action coordination development would be influenced by hearing loss, which seems to affect at least some non-auditory cognitive domains (Pisoni, 2000).

First, hand-eye coordination relies on the integration of eye movements and manual actions. Research has shown that infants, like adults, gather visual information and use it to guide their goal-directed movements from the earliest months of life (von Hofsten, 1982). However, this perception-action link develops incrementally and is complicated by the fact that infants’ musculoskeletal system is growing and changing quickly (Thelen et al., 1993). Although newborns appear so uncoordinated that for decades developmentalists assumed that their movements were nothing but “excited thrashing” (von Hofsten, 1982, p. 450), in the second year of life infants become increasingly able to coordinate their hands and eyes to perform fine motor skills (Claxton et al., 2003; Corbetta & Thelen, 1996; Von Hofsten, 1991). Critically for the current study, contingent auditory feedback facilitates goal-directed reaching actions in infants as young as 10 weeks of age (Lee & Newell, 2013), supporting the idea that auditory action-effects support the development of the perception-action loop during normal development (Monroy et al., 2017).

For joint action, however, infants must also attend to and monitor the behaviour of their co-actor and then integrate these observed movements with their own action plans. Between 6 and 12 months of age, typically developing infants can successfully anticipate the goals of observed actions (Falck-Ytter et al., 2006). By 12 months of age, infants and parents can coordinate their visual attention during parent–child play (Yu & Smith, 2013). Initially, however, this is achieved largely from parents attending to the manual actions of their infants and aligning their attention with their child’s (Yu & Smith, 2017). By 2 years of age, toddlers can complete simple cooperation tasks like pulling a handle with a peer (Brownell et al., 2006), and this improves dramatically by three years of age (Ashley & Tomasello, 1998; Meyer et al., 2010). These and a number of other studies have roughly outlined the trajectory of children’s cooperation skills, though there is still much to learn about the underlying cognitive and motor skills that drive these developmental changes in joint action. In the current study, we expect that hand-eye coordination, motor proficiency (i.e. fine motor skill) and anticipatory looking are key skills that guide smooth joint action—and that may be influenced by auditory development.

Joint action in toddlers with hearing loss

Two lines of evidence support the possibility that hearing loss early in life may affect motor experiences and joint action. One line of research has examined motor development in deaf children and found general differences in gross and fine motor skills between deaf and hearing children. Wiegersma and Vander Velde (1983) conducted two studies with children aged 6–10 years old using a range of motor assessments that included gross motor tasks like walking on a balance beam and skipping, and fine motor tasks like cutting out shapes and lacing shoelaces. Their findings showed poorer overall physical fitness and dynamic whole-body coordination in the deaf children, and poorer performance in some of the fine motor tasks. These authors concluded that the poorer motor performance of deaf children was due to slower movements, which could reflect the slower underlying processing required to execute the movement. The underlying reason for these slower movements could be vestibular deficits, as the hearing and vestibular systems are closely linked. Other studies have also demonstrated poorer gross motor performance in deaf school-age children (Gheysen et al., 2008; Savelsbergh et al., 1991; Siegel et al., 1991). However, these previous studies rely on standardised assessments of gross and fine motor skills. These assessments provide performance measures of (older) children’s ability to successfully complete motor tasks, but they provide no information about how children complete the task or in what ways they might struggle. For instance, as discussed earlier, successfully throwing a ball to a social partner requires multiple motor and cognitive abilities: hand-eye coordination, anticipatory looking, motor ability, and social skills like compliance. 
In the current study, we took advantage of mobile eye-tracking technology to analyse toddlers’ hand-eye coordination in real time, yielding rich information about how young toddlers use action and motor skills within the context of a natural social interaction with their parent.

Another factor that could affect joint coordination development is that auditory experiences encourage infants to rehearse movements and actions, imparting knowledge about and experience with certain motor tasks. For instance, hearing infants are reinforced by the sound effects of their own and others’ actions, and therefore are more likely to practice those actions (e.g. banging and clapping) that produce sounds (Iverson, 2010; Monroy et al., 2017). Infants with hearing loss may experience fewer such opportunities from early in life, with a cascading effect on their emerging motor abilities throughout childhood. Evidence for this hypothesis comes from a recent study on object exploration in young infants. In a simple and elegant study, Fagan (2019) compared deaf and hearing infants’ exploratory behaviours with a range of objects. She observed the frequency with which infants banged, mouthed, or manipulated objects that had varying affordances. Interestingly, deaf infants were found to be more likely to mouth objects, while they were significantly less likely (than hearing infants) to perform actions with sound effects, like banging objects together or against a table. These findings provide the first direct evidence that deaf infants seek different kinds of action experiences, likely because of the differences in sensory feedback that they receive from their own actions. Such differences early in life could have a cascading effect on motor and action experience skills later in childhood.

A second line of research on early action experiences shows that, in general, parent–child interactions differ between hearing parents and their deaf children (H-d dyads) compared with hearing parents and their hearing children (H-h dyads). For instance, Cejas and colleagues (2014) observed that deaf toddlers engaged in different kinds of interactions with their hearing caregivers compared with hearing toddlers: specifically, they spent less time in “symbolic-infused” joint engagement with their parents, which referred to moments of joint attention that involved symbols or language (e.g. taking turns pretending to feed a doll). Unsurprisingly, joint engagement in this study strongly related to children’s language age, indicating that developments in language influenced developments in interactions with their caregivers. Children with hearing loss, who experience delays in access to language and language development, therefore, also experience different kinds of joint interactions with caregivers. These early differences likely have bidirectional effects on the general development of joint action experiences and skills.

However, much of the existing research on parent–child interactions in children with hearing loss has focused on joint attention or vocal interactions (Kondaurova et al., 2020). Less is known about the joint action behaviours of parents during parent–child play. It remains an open question whether parents respond differently to motor actions while interacting with their deaf toddlers. This question has implications beyond motor coordination: for instance, as mentioned earlier, recent research has established that parents’ manual actions are an important facilitator for achieving joint attention, an important context for language learning (Yu & Smith, 2017). Thus, the lack of research on the action and motor skills of deaf toddlers is a crucial gap that relates not only to social cognition but also to an indirect pathway that could affect language development.

The current study

In sum, previous research has demonstrated consistent differences in the motor abilities of deaf children and in the parent–child interactions between H-d and H-h dyads. As highlighted earlier, little is known about whether and how motor actions during parent–child play are affected by hearing loss. The current study addresses this gap by investigating parent–child sensorimotor coordination in H-d and H-h dyads. Deaf toddlers who wear cochlear implants and their parents engaged in a joint, goal-directed task that required them to coordinate their actions to successfully drop coins into a toy piggybank. Inserting coins into a narrow slot demands both hand-eye coordination and fine motor skill. This task was chosen for two reasons. First, it is an item on the Mullen Scales of Early Learning developmental assessment, providing confidence in the validity of this task in capturing fine motor skill. Second, the piggybank toy is one of several popular toys used at home by many families that involve fitting objects into another object (e.g. shape blocks and wooden puzzles), supporting the ecological validity of this task in capturing a motor skill used in everyday play activities. We modified the task to encourage toddlers to jointly coordinate their actions with their parents while inserting coins into the piggybank (Meyer et al., 2010). This naturalistic task allows us to access a suite of sensorimotor skills, including action anticipation, hand-eye coordination in action execution, and fine motor capabilities.

Our first question was whether fine motor abilities differ between deaf and hearing toddlers. The evidence described above for poorer motor skills in deaf school-aged children would suggest that deaf toddlers may demonstrate more variable fine motor skill and less efficient motor proficiency than hearing toddlers (Gheysen et al., 2008; Savelsbergh et al., 1991; Siegel et al., 1991). Second, we analysed toddlers’ anticipatory looking towards their parents’ goal-directed actions as a fine-grained measure of coordinated action skill (Hunnius & Bekkering, 2014). We had no strong hypothesis regarding anticipatory looking, as there is little research that has examined anticipatory looking in an action context in this population. Finally, we examined joint action between parents and toddlers by measuring the timing coordination between dyad members as they passed coins to one another (Meyer et al., 2010). If H-d dyads are less coordinated than H-h dyads, we predict more timing delays (i.e., longer latencies) between their parent and child actions during this task. The current study represents the first, to our knowledge, that uses head-mounted eye-tracking to examine the action skills of children with hearing loss in a dynamic, interactive context.

Method

The coded data and analysis files are openly available on the Open Science Framework: https://osf.io/kbprz/

Participants

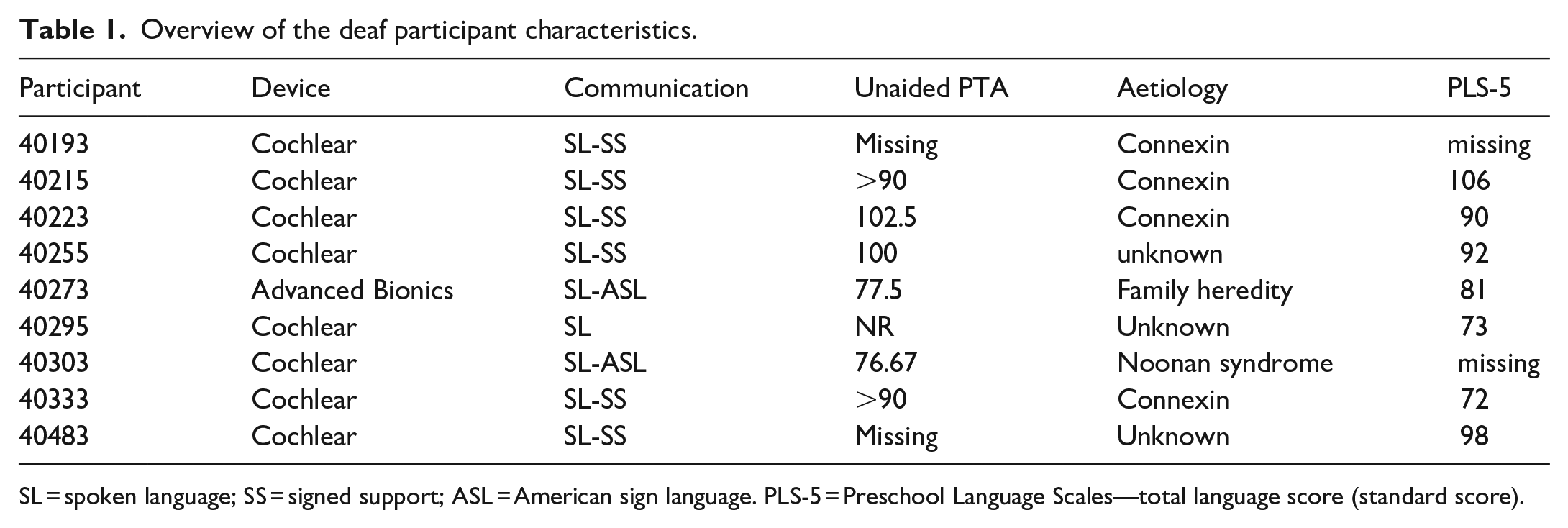

The sample consisted of 18 parent-toddler dyads that included 9 toddlers with hearing loss (mean age = 20.0 months, SD = 3.68, males = 5) and 9 with normal hearing (mean age = 19.44 months, SD = 3.45, males = 6). Deaf toddlers were diagnosed at birth with severe-to-profound bilateral sensorineural hearing loss, and all had received cochlear implants before 18 months of age (mean age at activation = 11.78 months, SD = 2.2; see Table 1 for participant characteristics).

Table 1. Overview of the deaf participant characteristics.

SL = spoken language; SS = signed support; ASL = American sign language. PLS-5 = Preschool Language Scales—total language score (standard score).

At the time of testing, deaf toddlers had received 6–12 months of useable hearing experience through their implant, and all were enrolled in speech-language therapy with the goal of attaining spoken language. Each hearing toddler was matched to a deaf toddler in gestational age (±1 week) and, with one exception, gender. Hearing toddlers were born full-term and had no developmental diagnoses or history of chronic ear infections. The sample was broadly representative of the midwestern United States, consisting mostly of working- and middle-class families. All research and consent procedures were approved by The Ohio State University Social and Behavioural Sciences Institutional Review Board (#2016B0416) and were conducted in accordance with the Declaration of Helsinki.

Procedure

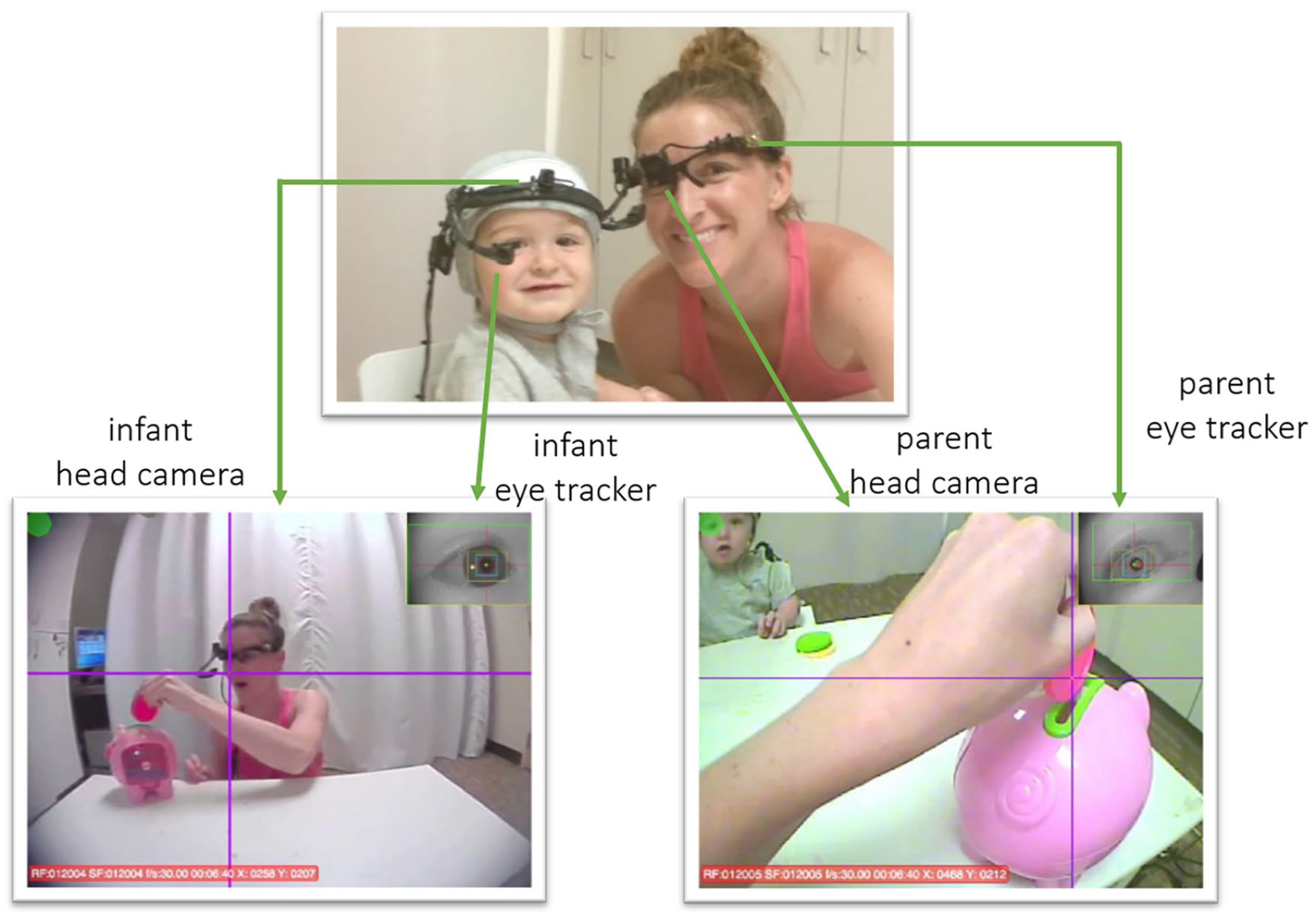

Toddlers and parents were seated at a child-sized table across from one another. Both dyad members wore head-mounted eye trackers (Positive Science, Inc; Figure 1), which feature an infrared camera that records the right eye and a head camera that records the visual field. Two additional cameras recorded third-person views of the toddler and parent behaviour. All cameras recorded at 30 Hz and were synchronised offline using ffmpeg (ffmpeg.org). To calibrate the eye trackers, a laser pointer was directed at nine unique locations on the tabletop to draw the toddler’s attention. This phase was used for offline calibration using Yarbus software (Positive Science, Inc.) by marking the locations on the corresponding video frames when the eye was directed at the laser pointer. Yarbus uses an algorithm to map each position of the pupil and corneal reflection from the eye-tracker recording to corresponding locations in the head camera recording. This yields a calibrated video with the estimated direction of gaze indicated by a crosshair and superimposed on the head camera recording (Figure 1).

Figure 1. Eye-tracking equipment and setup, showing example frames where the child is passing coins to his parent, who places them into the piggybank slot. The crosshair indicates estimated gaze direction. Photo printed with permission from the parent.

Following calibration, dyads were presented with a toy piggybank that comes with ten colourful coins (Figure 1). First, the piggybank was placed in front of the child, and the coins were placed before the parent. Parents were instructed to hand the coins to the toddler one by one, so the toddler could then insert them into the piggybank (“Child goal trials”). In a second round (“Parent goal trials”), the items were switched so that the piggybank was placed before the parent and the coins before the toddler; it was then the child’s turn to pass coins to their parent, who would insert them into the piggybank. The only task constraint was that the objects were arranged such that the child could not complete the task alone; they needed to cooperate and coordinate their actions with their parents’ to successfully insert the coins into the piggybank. Parents were otherwise instructed to interact with their children as they naturally would at home. There were 10 coins and therefore 10 trials per round, for 20 total trials per dyad. In subsequent data analyses, a trial was defined as the moment the first dyad member began reaching for a coin until the moment that coin (hereafter labelled the “target” coin) was fully inserted into the piggybank.

Data coding

Action annotation

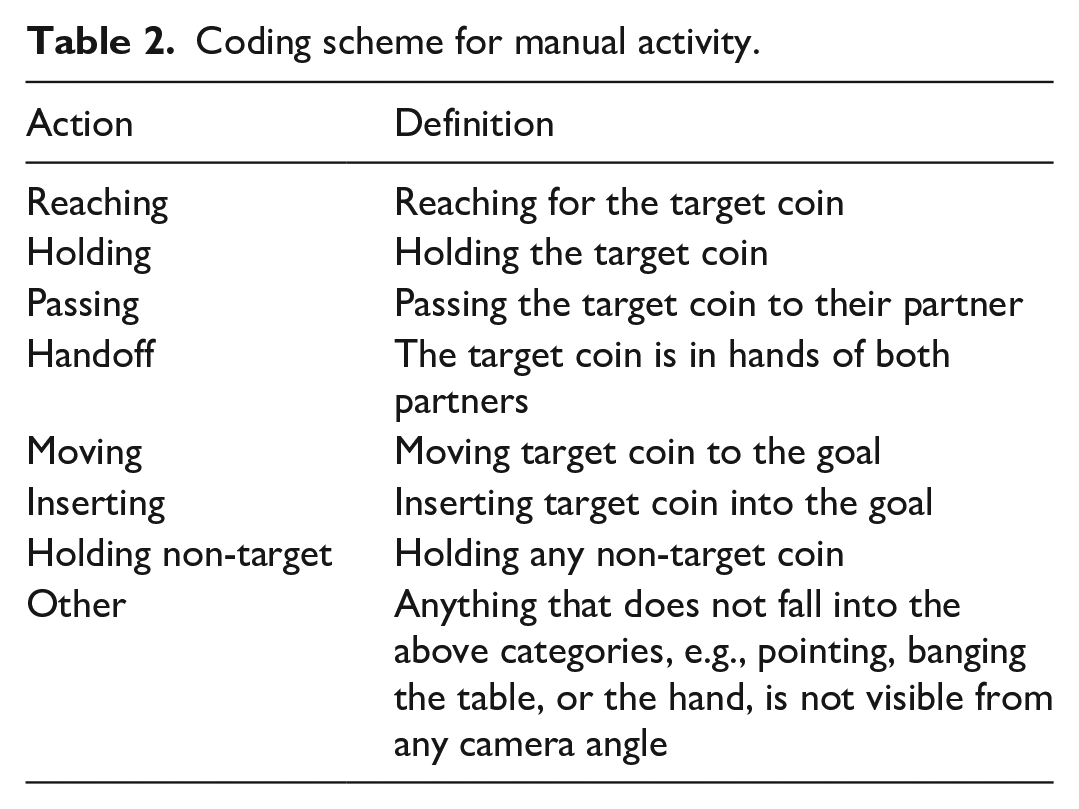

A trained researcher annotated the manual actions of the parent and child frame-by-frame, using custom in-house software and the images from the eye-tracking and scene video recordings (Monroy, Chen, et al., 2021). Left and right hands were annotated separately and then merged during data analysis. Table 2 defines the coding scheme for manual actions (see also Figure 2). A second researcher additionally annotated 50% of participants; interrater reliability ranged from 89% to 99%.

Table 2. Coding scheme for manual activity.

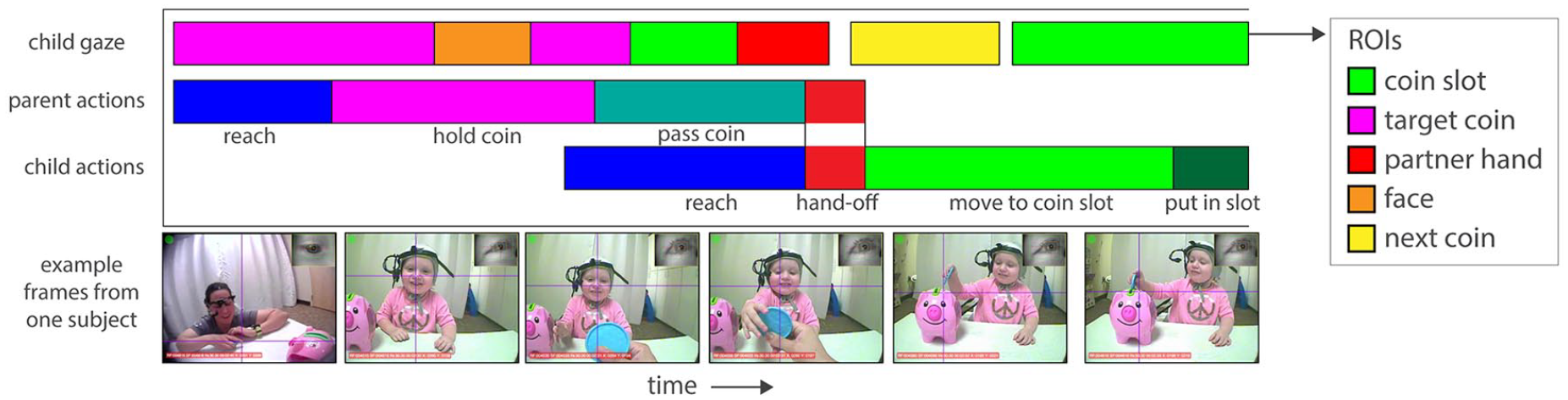

Figure 2. Example data streams representing the child gaze and the manual actions of both dyad members during the child goal trials of the task. For gaze, colours represent the different objects in the scene (regions of interest, ROIs). For actions, colours represent the separate phases of the joint action performed by both dyad members. During the parent goal trials, the parent and child actions would be reversed.

Gaze coding

After offline calibration, gaze direction was superimposed onto the head camera recording with a crosshair, yielding an additional recording of the calibrated gaze. All camera recordings were then exported into a series of single frames. A trained coder used frames from the calibrated recording to determine, on every frame, whether the crosshair fell within one of four regions of interest (ROIs): the goal (the piggybank slot), the target coin, the parent’s face, and the nontarget coins. During each trial, the target coin was defined as the coin currently being moved to the piggybank and inserted into the slot; all other coins were considered the “nontarget” coins. Frames were excluded if the eye-tracker failed to capture the eye or if the child was off-task (e.g., looking at the floor). Across groups, toddlers generated 1,611 looks to the ROIs in total. A second researcher additionally coded all participants. Disagreements between coders that were longer than 10 frames (0.33s) were resolved via discussion with the first author. Interrater reliability was therefore close to 100%.
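The reliability check described above, flagging coder disagreements longer than 10 frames, can be illustrated with a short Python sketch. This is not the authors' coding software: the function name `long_disagreements`, the per-frame label lists, and the example labels are assumptions introduced only to make the rule concrete.

```python
def long_disagreements(coder_a, coder_b, min_frames=10):
    """Return (start, end) frame ranges where two coders' ROI labels differ
    for MORE than `min_frames` consecutive frames (end is exclusive).

    Assumption: each coder's output is a list with one ROI label per frame.
    """
    if len(coder_a) != len(coder_b):
        raise ValueError("both coders must label the same number of frames")

    runs = []
    start = None  # index where the current disagreement run began
    for i, (a, b) in enumerate(zip(coder_a, coder_b)):
        if a != b:
            if start is None:
                start = i
        elif start is not None:
            if i - start > min_frames:
                runs.append((start, i))
            start = None
    # Close a run that extends to the final frame.
    if start is not None and len(coder_a) - start > min_frames:
        runs.append((start, len(coder_a)))
    return runs


# Hypothetical labels: the coders disagree on frames 5-16 (12 frames).
first = ["goal"] * 30
second = ["goal"] * 5 + ["face"] * 12 + ["goal"] * 13
print(long_disagreements(first, second))
```

At the 30 Hz recording rate, the 10-frame threshold corresponds to about 0.33 s, so shorter mismatches (for example, coders placing a look boundary a few frames apart) are not flagged.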

Data analysis

Data processing was done in Matlab 2021a (Mathworks, Inc). Statistical analyses were done in R (R Core Team, 2021). All dependent measures were tested for normality using the Shapiro-Wilk test. Mann–Whitney U-tests were used to test for group differences whenever a measure differed significantly from a normal distribution; independent samples t-tests were used otherwise.

Motor proficiency

In the child goal trials, three measures of motor proficiency were calculated to assess toddlers’ ability to insert the coin smoothly and efficiently into the piggybank slot. First, trials during which the parents provided physical help were removed (i.e. N = 25/155 trials, or 16.13% of trials) because these trials would not be a fair indicator of independent motor skill. For independent trials, total insertion time on each trial was summed and averaged across trials to yield mean goal duration per toddler. This included the time spent across multiple attempts if toddlers needed to try more than once to insert the coin. Mean insertion time across all trials was compared between toddler groups.

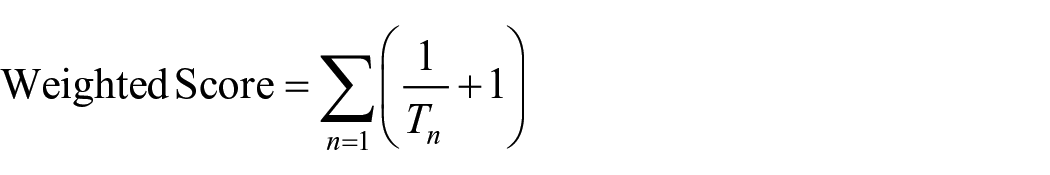

Second, motor skill in this task is reflected by both the ability to complete the task independently without help from the parent and how efficiently (i.e., quickly) toddlers could insert the coin into the slot once having reached it. Therefore, to account for both the duration of time it took to insert the coin into the goal as well as the number of successful, independent trials that were achieved, a weighted proficiency score was calculated as

where T = total insertion time and n = each successful, independent trial. Trials that were unsuccessful (i.e., the toddler failed to place the coin into the goal) or not completed independently were scored as zero. For instance, a toddler who completed 10 trials with very fast insertion time but with parent intervention would receive a lower score than a toddler who completed 10 trials more slowly but did so independently. A higher weighted score reflects overall better motor proficiency than a lower score. Scores on each trial were summed to yield a net weighted score per toddler.

Third, we assessed how variable/stable toddlers were in using their fine motor skills (Fulceri et al., 2018; Meyer et al., 2010). Prior research has demonstrated that a decrease in variability in motor tasks is associated with increasing age and improvement in performance (Meyer et al., 2010). To assess variability in performance, we calculated the coefficient of variation (COV) for goal insertion time, defined as the ratio between the standard deviation and the mean insertion time (SD/M). The COV accounts for any bias caused by differences in toddlers’ average movement time and allows for comparison of standard deviations between groups with different means. We focused first on the COV for the passing phase because this phase is most relevant to the joint coordination aspect of the task, as parents need to plan their own action to efficiently grasp the coin. Therefore, less variable timing of passing movements should make it easier for parents to coordinate their actions in response. We also selected the goal insertion phase for calculating COV because this phase represents the key motor aspect of the task; more stable timing for goal insertion represents better action control.
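As an illustration of the COV measure, the following Python sketch computes SD/M for a set of movement durations. The original analyses were run in Matlab and R, so this is only a minimal sketch; the function name, the use of the sample standard deviation (n − 1 denominator), and the example durations are assumptions rather than details reported in the study.

```python
from statistics import mean, stdev


def coefficient_of_variation(durations):
    """Return SD / M for a list of movement durations in seconds.

    Assumption: SD is the sample standard deviation (n - 1 denominator).
    """
    if len(durations) < 2:
        raise ValueError("need at least two trials to estimate the SD")
    m = mean(durations)
    if m == 0:
        raise ValueError("mean duration is zero; COV is undefined")
    return stdev(durations) / m


# Hypothetical insertion times (s): same mean, different trial-to-trial spread.
steady = [1.0, 1.1, 0.9, 1.0]
erratic = [0.4, 1.6, 0.5, 1.5]
print(round(coefficient_of_variation(steady), 3))
print(round(coefficient_of_variation(erratic), 3))
```

Because the standard deviation is divided by the mean, multiplying every duration by a constant leaves the COV unchanged, which is why the measure permits comparison between toddlers who differ in average movement time.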

Action anticipation

Toddlers could also demonstrate coordinated action abilities by anticipating the course of their parents’ goal-directed actions. To measure action anticipation, we subtracted the moment the parent brought a coin to the goal location from the moment the child shifted their gaze to the goal (during the parent goal trials). We then compared anticipation of others’ actions to planning one’s own actions (Flanagan & Johansson, 2003), by comparing this anticipatory gaze activity to toddlers’ self-anticipation of their own actions in the child goal trials (the difference between when the child first looked to the goal and when they began to insert the coin).
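The sign convention of this latency measure can be made explicit with a small Python sketch. The function names and the example timestamps are hypothetical; only the direction of the subtraction (gaze time minus action time) follows the definition given here.

```python
from statistics import median


def anticipation_latency(gaze_to_goal_s, action_at_goal_s):
    """Gaze arrival time minus action arrival time, in seconds.

    Negative values mean the toddler looked at the goal BEFORE the coin
    arrived there (anticipatory gaze); positive values mean the gaze lagged.
    """
    return gaze_to_goal_s - action_at_goal_s


def median_latency(trials):
    """Median latency over (gaze_time, action_time) pairs for one toddler."""
    return median([anticipation_latency(g, a) for g, a in trials])


# Hypothetical trials: (time gaze reached goal, time coin reached goal), in s.
trials = [(4.9, 5.0), (10.2, 10.0), (14.5, 15.0)]
print(median_latency(trials))
```

The same function applies to the self-anticipation measure in the child goal trials, with the action time taken as the moment the child began to insert the coin.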

Parent–child coordination

Following the procedure of Fulceri et al. (2018), parent–child coordination was defined as the difference in time between the initiation of a reach for the target coin between the two dyad members, calculated during the passing phase of the task (Figure 2). In the child goal trials, coordination was defined as the absolute difference between the moment the parent begins to pass the coin to their child and the moment the child reaches out to receive it. In the parent goal trials, when toddlers passed coins to their parents, coordination was defined as the absolute difference in time between when the toddler begins to pass the coin and when the parent reaches to receive it. This measure reflects each dyad member’s understanding of their role in the joint action context, and the extent to which dyad members anticipate their partner’s movements and plan an appropriate motor response at the right time to efficiently complete the task.
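A minimal Python sketch of the coordination measure follows, assuming that action onsets are available as frame indices from the 30 Hz annotations. The function names and the example frame numbers are illustrative assumptions, not part of the published analysis code.

```python
from statistics import median

FRAME_RATE_HZ = 30  # all cameras recorded at 30 Hz (see Procedure)


def coordination_delay_s(pass_onset_frame, reach_onset_frame, fps=FRAME_RATE_HZ):
    """Absolute time difference (s) between the passer starting to pass
    the coin and the receiver starting to reach for it."""
    return abs(pass_onset_frame - reach_onset_frame) / fps


def median_coordination_s(onset_pairs, fps=FRAME_RATE_HZ):
    """Median delay over (pass_onset_frame, reach_onset_frame) pairs."""
    return median([coordination_delay_s(p, r, fps) for p, r in onset_pairs])


# Hypothetical onsets (frames) for three passing events in one dyad.
pairs = [(120, 150), (400, 385), (910, 970)]
print(median_coordination_s(pairs))
```

Taking the absolute value means the measure does not distinguish which partner moved first; smaller values indicate tighter temporal coupling between the two reaches.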

Results

Visual attention

Gaze fixations to each ROI were converted into proportions by summing the total amount of time spent looking at each ROI (goal, target, face, and nontarget) per trial and dividing by the total length of the trial. On average, across the entire task, both groups spent over 67% of their total time attending to one of the four task-relevant ROIs. There were no significant group differences in the overall proportion of looking time to the four ROIs out of total interaction time, for either round (ps > .30). There were also no differences in the mean frequency or duration of looks across ROIs between groups (ps > .64), revealing that toddlers in both groups displayed similar patterns of visual attention during the task.
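The proportion computation can be sketched in Python as follows. The data layout (a list of `(roi, duration)` looks per trial), the function name, and the example values are assumptions made for illustration.

```python
ROIS = ("goal", "target", "face", "nontarget")


def gaze_proportions(looks, trial_duration_s):
    """Sum looking time per ROI within one trial and divide by trial length.

    `looks` is a list of (roi, duration_s) tuples; looks to anything other
    than the four ROIs are ignored.
    """
    if trial_duration_s <= 0:
        raise ValueError("trial duration must be positive")
    totals = {roi: 0.0 for roi in ROIS}
    for roi, duration_s in looks:
        if roi in totals:
            totals[roi] += duration_s
    return {roi: total / trial_duration_s for roi, total in totals.items()}


# Hypothetical 8-second trial with four looks.
looks = [("goal", 2.0), ("face", 0.5), ("goal", 1.0), ("target", 0.5)]
props = gaze_proportions(looks, trial_duration_s=8.0)
print(props)
print(sum(props.values()))  # share of the trial spent on any of the four ROIs
```

The proportions need not sum to 1, because time spent looking elsewhere counts towards the trial length but towards none of the ROIs.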

An analysis of variance (ANOVA) confirmed that gaze proportions differed significantly across ROIs, F(1,944) = 74.59, p < .0001. Post hoc comparisons using Tukey’s Honest Significant Difference test revealed significant differences between the goal ROI and all other ROIs (all ps < .0001); there were no other differences in gaze proportions between the remaining ROIs. These findings confirm that toddlers attended significantly more to the goal than to any other ROI and attended similarly to their parents’ face, the target coin, and the nontarget coins. An ANOVA with gaze proportions as the dependent variable, ROI as a within-subjects factor and group as a between-subjects factor revealed no main effect of group (p = .18) and no ROI*Group interaction (p = .82), indicating that hearing status did not affect overall gaze distribution across the ROIs.

Motor proficiency

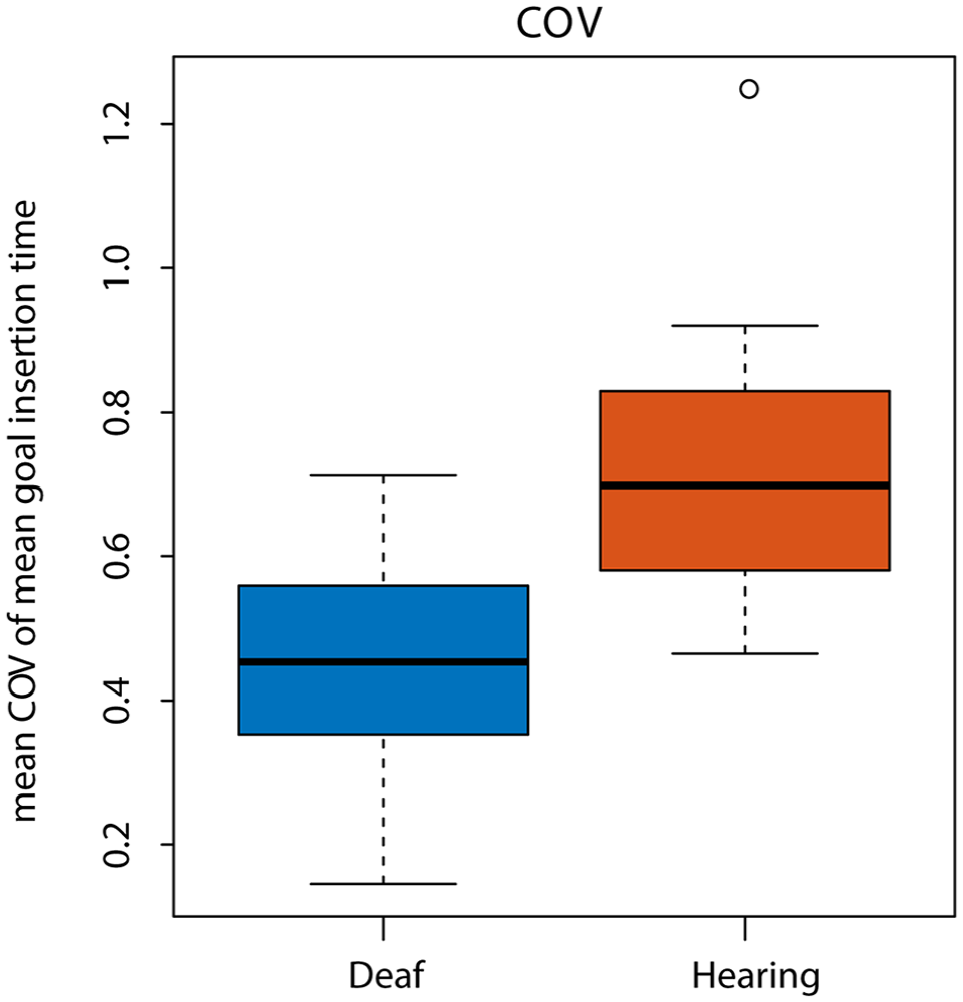

During child goal trials, there were no differences between toddlers in the mean duration for the handoff phase of the interaction (W = 2,763, p = .59), or the goal insertion phase (W = 3,068, p = .81). Weighted motor proficiency scores also did not differ between groups, t(14.38) = −0.3, p = .68. When accounting for both the number of trials completed independently and the length of time to insert the coin into the goal, deaf and hearing toddlers performed equivalently. COV for passing movements did not differ between groups, t(12.87) = 0.11, p = .91. However, COV of goal insertion time was significantly lower for the deaf group than the hearing group, indicating that deaf toddlers were more stable in their goal insertion timings compared with the hearing toddlers, t(10) = −2.52, p = .031; Figure 3. This difference remained significant even when removing one outlier in the hearing group, t(10) = −2.52, p = .039.

Figure 3. Coefficient of variation of goal insertion time.

Action anticipation

There were no significant differences between deaf and hearing toddlers for action anticipation of their parents’ actions (W = 727.5, p = .61). Median anticipation latency across groups was −0.067 s, indicating that overall toddlers looked to the goal location closely in time to the moment their parent brought the coin to the goal. There were no differences between groups for the latency to predict their own goal-directed actions (W = 3,039, p = .68). Median anticipation latency for their own actions was −0.50 s, indicating that both groups used their vision to guide their actions and did so using similar predictive processes.

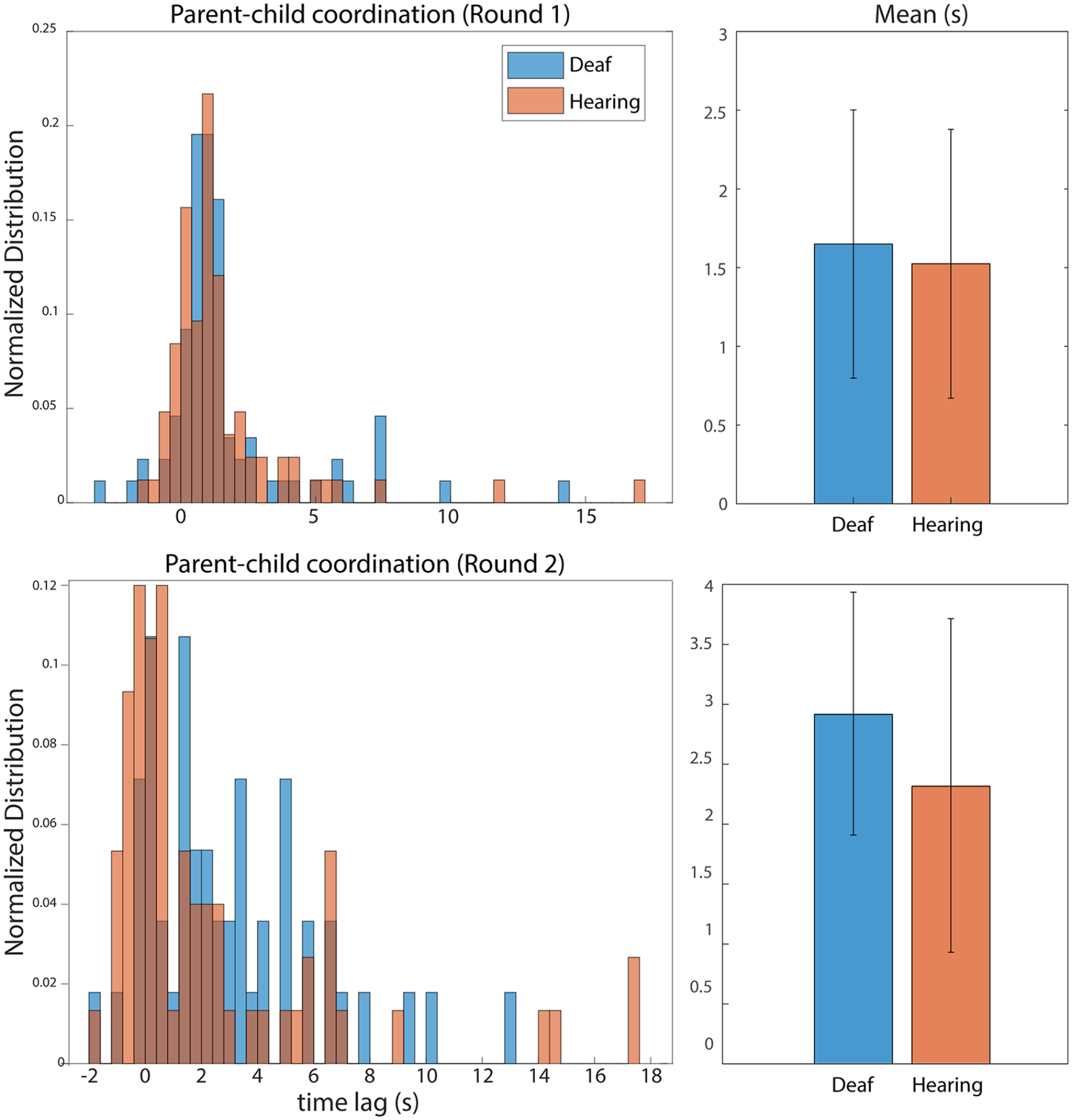

Parent–child coordination

Descriptive statistics for all dependent measures are listed in Table 3. For parent–child coordination during the child goal trials, there were no differences between groups (W = 3,308, p = .51; Figure 4 top). However, during the parent goal trials, a Mann–Whitney U-test revealed a significant difference between the deaf group compared with the hearing group (W = 2,092, p = .033). Median coordination was 2.23 s for the deaf group compared with 0.67 s for the hearing group, revealing tighter coordination within the hearing group when toddlers passed coins to their parents.

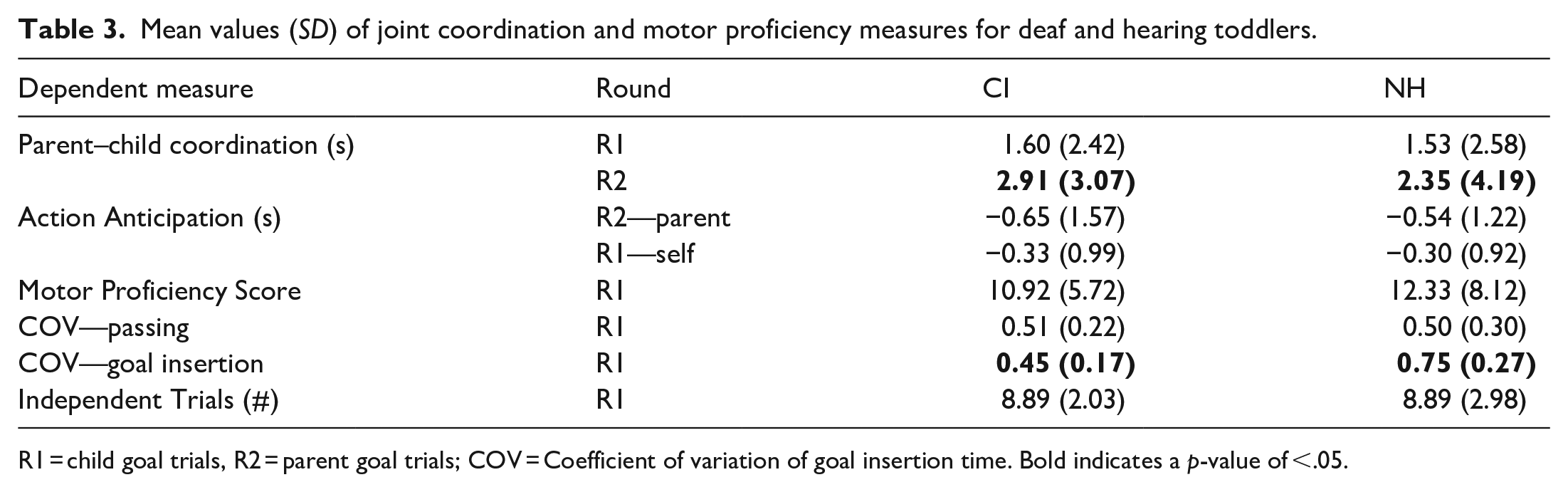

Table 3. Mean values (SD) of joint coordination and motor proficiency measures for deaf and hearing toddlers.

R1 = child goal trials, R2 = parent goal trials; COV = Coefficient of variation of goal insertion time. Bold indicates a p-value of <.05.

Figure 4. Left: histograms showing the normalised distribution of parent–child coordination; that is, the delay between the dyad member passing the coin to their action partner and the partner reaching out for the coin.

To examine possible reasons for poorer coordination in the deaf group, we first compared the number of trials during which toddlers passed coins to their parents, as not all toddlers were cooperative and willing to pass the coins. For the deaf group, three of the nine toddlers never passed a coin to their parents, preferring instead to throw the coins across the table (n = 1), or simply play with the coins (n = 1). One deaf toddler did not complete the parent goal trials due to fussiness. However, there were no differences in the mean number of trials in which toddlers passed coins to their parents (W = 27, p = .24), and the pattern of results remained the same even when excluding these three toddlers and their age matches (W = 1,279.5, p = .023, n = 12).

To explore this finding further, we examined toddler hand and gaze behaviours prior to the passing coin action phase. One possible reason for less coordination during the parent goal trials is that deaf toddlers were more interested in holding and exploring objects for a longer period before passing them. Therefore, we examined the duration of holding actions that occurred immediately prior to each passing action. Findings reveal that, on average, deaf toddlers held coins longer prior to passing them compared with hearing toddlers (deaf: median = 1.63 s, SD = 1.15; hearing: median = 0.98 s, SD = 1.08). A Mann–Whitney U-test revealed that this difference was statistically significant (W = 1,628.5, p = .024). Deaf toddlers also demonstrated longer durations of looking at the target coin during holding events in the parent goal trials (deaf: median = 1.33 s, SD = 1.40; hearing: median = 0.93 s, SD = 1.05) and this difference trended towards statistical significance (W = 1,163.5, p = .07). These findings suggest that deaf toddlers held and looked at target coins for a longer duration prior to handing the coins over, resulting in a longer lag time between the moment parents reached out for the coin and when the child passed it to them. Finally, chronological age (ps > .53) and hearing age (ps > .21) did not correlate with the primary dependent variables in our study.

Discussion

The current study used head-mounted eye-tracking to compare action skills and sensorimotor coordination between deaf and hearing toddlers and their hearing parents. First, we examined fine motor kinematics in the toddlers by measuring the latency and duration of movements as toddlers reached for, grasped, and inserted coins into a piggybank. Second, we assessed sensorimotor coordination by analysing the latency between parent and child hand movements as they completed this joint task, and by analysing toddlers’ anticipatory eye movements to their parents’ actions. Our results suggest that deaf (Dh) toddlers and their hearing parents achieve smooth, coordinated joint action to the same degree as their hearing (Hh) counterparts, a finding that differs considerably from past work (Cejas et al., 2014; Fagan et al., 2014; Meadow-Orlans et al., 2004). In a simple play activity involving joint coordination, toddlers with and without hearing loss demonstrated similar joint action capabilities: deaf toddlers anticipated their parents’ movements and coordinated their own movements with their parents’ in much the same way as their hearing counterparts, with a few key exceptions that we discuss below. Unlike previous research, these findings do not paint a picture of poorly coordinated interactions between parents and their children with hearing loss. On the contrary, they suggest that deaf toddlers demonstrate typical action skills in line with their hearing peers and experience similar kinds of joint actions with their parents when playing together. Below, we discuss the implications of these novel findings and outline new questions for future research.

Our study diverges from past research in that the current experimental context allowed toddlers and their parents to interact freely, while still yielding fine-grained measures of eye movements and manual actions. Previous studies of motor skill in deaf children have focused on outcome measures from standard assessments of gross and fine motor skill, such as the ability to string beads or balance on a beam (Gheysen et al., 2008; Horn et al., 2006, 2007; Wiegersma & Vander Velde, 1983). These studies focus on children’s ability to successfully complete the tasks in an assessment context, without providing detailed information about how children complete the task. Here, we were able to examine the kinematics of toddlers’ movements and their hand-eye coordination in real time, yielding richer information about their motor proficiency than rates of successful task completion. Our findings show that deaf toddlers who wear CIs can anticipate their parents’ actions and then plan and execute their own actions in response no differently than their hearing counterparts. They also demonstrate comparable visuomotor skills, as measured by their ability to reach for, grasp, and manipulate objects.

Deaf toddlers did differ from hearing toddlers in two measures. First, they showed more stability in their action timing during coin insertion. More stability (i.e., a decrease in variability) has been associated with better performance in previous research (Meyer et al., 2010), suggesting that the deaf toddlers demonstrated superior performance in inserting the coins into the slot. Although future research is needed to clarify why this particular skill would be better in the deaf group, one speculation is that differences in early motor exploration (Fagan, 2019) lead to some action skills being more advanced, rather than delayed, in infants with hearing loss. Another possibility is that differences in exposure to sound cause deaf toddlers to concentrate on motor tasks with fewer distractions from other sounds in their environment. A closer look at these motor skills, including longitudinal tracking, is needed to establish whether these findings replicate and what mechanism drives this potential advantage in the deaf group.

Second, deaf toddlers and their parents showed slower coordination (i.e., longer lag times) during the child-to-parent round of the task. Upon closer examination, the deaf toddlers looked at the coins and held them for a longer period before passing them over, which could explain the longer lag times. This finding could reflect heightened interest in the coins compared with the hearing toddlers, or an increase in exploratory behaviours. It is in line with the study by Fagan (2019), which also revealed differences in early motor exploration in deaf infants. Another possibility is that deaf toddlers are less cooperative with their parents, although we also assessed their overall willingness to pass the coins and did not find evidence to support this explanation. It is an intriguing possibility that previous findings of poorer coordination between deaf children and their parents could simply reflect a difference in how the deaf children physically explore their environment and the objects in it.

Throughout the current manuscript, we have referred to our participants with hearing loss as deaf toddlers, to reflect the fact that these children were born with severe-to-profound hearing loss, and all experienced a period early in life with no or limited access to sound. It is important to consider, however, that these toddlers all had several months of experience with sound through their CIs at the time of testing. It is possible that the current findings reflect the rapid adaptation of deaf toddlers to a world of sounds; in other words, any gaps in motor skills or in joint coordination that existed may have closed following implantation. A crucial next step for this area of research is to compare pre- and post-implant measures of sensorimotor coordination as well as to compare pre-implant infants with hearing controls.

Another possible source of differences in motor development, proposed by Wiegersma and Vander Velde (1983), is the parents: parents of children with hearing loss may provide fewer opportunities to develop motor skills or build confidence in motor ability. These authors suggest that this could be in part because of frustration with or over-protectiveness towards their child with a hearing loss, though they present no evidence to support this. In the current study, we did not observe any differences coming from the parents of deaf toddlers. We have also found in prior work that parents of deaf toddlers did not differ in the extent to which they scaffold their child’s motor skills (Monroy, Houston, & Yu, 2021). However, our current analysis primarily focused on the child’s motor skill and hand-eye coordination; future work could focus on parents’ actions and how they support motor skill development. This work could include a broader examination of whether and how parents of deaf toddlers and children encourage general physical activity and ability.

Future work

Our sample size in the current article was small (9 dyads per group) compared with many studies involving typically developing infants or studies using traditional screen-based eye-tracking methods. However, using mobile eye-tracking during natural interactions yields high-density data that have been shown to be reliable and generalisable even with small sample sizes (Yu & Smith, 2012). Our sample size is also consistent with other studies using this approach with infants and children who have hearing loss (Chen et al., 2019, 2020, 2021; Gabouer et al., 2020). Nevertheless, given the small sample size and the novelty of our experimental paradigm, future research could strengthen these findings with converging results from other similar motor tasks and additional participants.

Conclusions

In sum, action planning, coordination, and control are important skills that have been shown to predict later cognitive, language and more advanced motor outcomes (Von Hofsten & Rosander, 1996, 2018). Investigating the early motor development of infants and toddlers with hearing loss is an important domain that may shed light on the ways in which their developmental trajectories may differ from hearing peers. Previous research has indicated that deaf children struggle in the domain of motor development compared with hearing children, in part due to the effects of hearing loss on the vestibular system and the lack of auditory feedback from the actions of themselves and others (Gheysen et al., 2008; Horn et al., 2006; Wiegersma & Vander Velde, 1983). However, we found no consistent evidence for delays or deficits in the action or interaction skills of deaf toddlers with cochlear implants. Our findings suggest, instead, that these toddlers may engage in action exploration differently to a small extent but nevertheless demonstrate robust motor skills and the ability to successfully coordinate their actions with their hearing parents. The current study represents a first step in the effort to characterise and understand the fine motor development and emerging joint coordination skills of infants and toddlers with hearing loss, in the service of a better understanding of their social, cognitive and language outcomes.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This project was funded by NIDCD grant number F32DC017076 to CM. We are especially grateful to the families who generously sacrificed their time to participate in our study.