Abstract

Even with the use of hearing aids (HAs), speech in noise perception remains challenging for older adults, impacting communication and quality of life outcomes. The association between music perception and speech-in-noise (SIN) outcomes is of interest, as there is evidence that professionally trained musicians are adept listeners in noisy environments. Thus, this study explored the association between music processing, cognitive factors, and the outcome variable of SIN perception, in older adults with hearing loss. Forty-two HA users aged between 57 and 90 years with a symmetrical, moderate-to-moderately severe hearing loss participated in this study. Our findings suggest that on-beat rhythm accuracy, pitch perception, and working memory all positively contribute to SIN perception for older adults with hearing loss. These findings provide key insights into the relationship between music, cognitive factors, and SIN perception, which may inform future interventions, rehabilitation, and the mechanisms that support better SIN perception.

Introduction

Hearing aids (HAs) are generally considered the primary solution for mild to moderate sensorineural hearing loss and are effective at improving listening and quality of life outcomes (Ferguson et al., 2017). However, HAs are only a partial solution, and they provide limited improvement when a user is in a noisy environment (Plomp, 1986). Better speech-in-noise (SIN) perception remains the most sought-after outcome for HA users (Kochkin, 2002), and is strongly associated with their satisfaction with an HA (Davidson et al., 2021). However, pure tone assessment (the standard measure for assessing hearing loss) is a relatively poor predictor of SIN perception (Moore et al., 2014). Age is also a significant factor, with older adults requiring a more positive signal-to-noise ratio (SNR) than their younger adult counterparts, even after accounting for hearing threshold (Tremblay et al., 2021).

Busy social spaces such as cafes, restaurants, and recreational centres are inherently noisy environments. Difficulty with SIN makes everyday conversations more challenging, impacting an individual’s motivation to actively engage with others (Bennett et al., 2022). This disconnect may lead to smaller social networks and less frequent social interactions (Timmer et al., 2023), with older adults at a higher risk of social disconnection (Smith et al., 2023). Numerous factors have been associated with diminished SIN perception in older adults including declining cognitive processing such as working memory (WM) and auditory–motor functions (Moore et al., 2014; Panouillères & Möttönen, 2018); poorer pitch perception and access to harmonics (Oxenham, 2008); and poorer temporal resolution (Presacco et al., 2019). Taken together, effective SIN perception relies on a complex and dynamic interplay between cognition, central auditory processing, and peripheral hearing (Anderson, White-Schwoch, et al., 2013; Humes et al., 2012; Wong et al., 2010). Thus, there is an important need to explore and understand the factors that are related to older adults with hearing loss and SIN perception, which ultimately contribute to broader communication, psychosocial health, and quality of life outcomes.

The association between music perception and enhanced auditory perception is of interest to understand potential mechanisms for rehabilitation. Much of the interest stems from evidence that musicians with long-term experience have an advantage for SIN perception, which has been supported by recent reviews and a meta-analysis (Coffey et al., 2017; Maillard et al., 2023). Importantly, this musician advantage has been observed over a wide range of contexts, methodologies, and populations, such as longitudinal designs for older adults with hearing loss (Dubinsky et al., 2019; Zendel et al., 2019), and without hearing loss (Worschech et al., 2021); longitudinal designs for children with hearing loss (Lo et al., 2020), and without hearing loss (Slater et al., 2015); and cross-sectional designs (musicians vs non-musicians; Parbery-Clark et al., 2009). Continual music practice is likely an important factor for the maintenance of any SIN benefit. For example, although Ruggles et al. (2014) found no significant difference between musicians and non-musicians, they nonetheless found an association between years of music training and SIN perception. Finally, a recent review by Schellenberg and Lima (2024) also suggested that the musician advantage may be heavily influenced by genetic and non-musical environmental factors.

Music activities are highly variable multisensory interactions that involve domains such as audition, vision, and cognition, and often leverage social interactions. Below, we provide a brief overview of two key frameworks that may support SIN perception: Dynamic Attending Theory (DAT; Jones, 1976) and the Processing Rhythm in Speech and Music (PRISM) framework (Fiveash et al., 2021).

The DAT is a seminal framework of fundamental temporal patterns and structures. We will focus on one aspect of DAT, emphasising the importance of auditory temporal relationships in the context of speech and music perception. The framework that DAT posits is that there is entrainment between internal rhythms (e.g., neural oscillations) and external rhythms (e.g., the rhythmic structure of music). Speech rhythms are typically contextualised as quasi-rhythmic (Gross et al., 2013), and recent evidence suggests our auditory systems benefit from its coupling with speech motor rhythms that are best modelled as an oscillating system (Poeppel & Assaneo, 2020). DAT suggests the benefit for speech understanding is that attentional cognitive resources can be flexibly directed towards the expected points in time, ultimately leading to an enhanced prediction of the rhythmic auditory stimuli.

The PRISM framework consolidates and builds upon a number of theories (such as DAT) and suggests that three rhythm processes in both speech and music may lead to improved speech perception. (1) “Precise auditory processing”: both speech and music perception require accurate processing of small deviations in temporal sequencing. Supporting evidence for this is derived from music training studies that suggest the demand characteristics of music may fine-tune the auditory system, leading to enhancements in speech processes such as temporal envelope processing (Kraus & Chandrasekaran, 2010) or formant tracking (e.g., in plosives such as /ba/ and /da/; Lo et al., 2015). (2) “Synchronisation/entrainment of neural oscillations to external rhythmic stimuli”: because musical rhythm typically features temporal regularities, music is a useful stimulus to entrain neural oscillations. Entrainment optimises processes associated with attention and prediction (Large & Jones, 1999), and hierarchical processing (i.e., phonemic, syllabic, phrase level speech perception; Jones, 1976; Poeppel & Assaneo, 2020), playing a critical role in speech and music perception. (3) “Sensorimotor coupling”: auditory and motor networks are linked and implicated in effective processing of both speech and music domains (Hickok et al., 2011; Ross & Balasubramaniam, 2022).

Cognitive contributions also play a significant role in the perception of music and speech. Cognitive reserve is an active process that is defined as “the ability to optimize or maximize performance through differential recruitment of brain networks, which perhaps reflect the use of alternate cognitive strategies” (Stern, 2002). Greater cognitive reserve is typically conceptualised as more efficient use of brain networks, the ability to recruit from alternate networks (i.e., cross-modal plasticity), or the use of compensatory behaviours (Heald & Nusbaum, 2014; Stern, 2002). Recently, a narrative review found that music engagement may support cognitive reserve across the lifespan as well as moderate age-related decline (Wolff et al., 2023). In the context of hearing loss, more cognitive resources may be required to offset the difficulties associated with deafness. Thus, greater cognitive reserve is advantageous, with WM found to be strongly correlated with better SIN perception (Lunner, 2003; Rudner et al., 2011), noting that this effect appears to apply specifically to older adults (Füllgrabe & Rosen, 2016; Vermeire et al., 2019).

The frequency-following response (FFR) is a neurophysiological response to periodic acoustic stimuli that is broadly associated with pitch perception and the fidelity of central auditory processing (Krizman & Kraus, 2019). Although FFR responses are quite variable and heterogeneous, findings suggest that musicians show more robust encoding compared with non-musicians (Coffey et al., 2016). Thus, one interpretation is that FFR may reflect experience-dependent plasticity (Coffey et al., 2016). Alternatively, a study by Mankel and Bidelman (2018) found FFR was also reflective of inherent auditory abilities (i.e., both nature and nurture contribute to the auditory perception). Although the FFR was previously associated with subcortical processing, it has become clear in recent years that it is influenced by cortical processing (Coffey et al., 2019; Lai et al., 2023). In addition, our measure of phase synchrony was derived from the FFR by using inter-trial phase coherence (ITPC). Musicians show higher ITPC compared with non-musicians (Doelling & Poeppel, 2015), which is thought to reflect cortical entrainment, perceptual accuracy, as well as musical background and training (Sorati & Behne, 2019).

Our understanding of the musician advantage in the context of hearing loss and older adults is limited. Most research is focused on younger adults, professionally trained musicians, and normal hearing. The recent systematic review and meta-analysis by Maillard et al. (2023) indicates there is insufficient evidence to support a robust association between music and SIN outcomes for older adults. A review by McKay (2021) also found insufficient evidence that music training improved SIN outcomes for individuals with hearing loss, primarily due to methodological concerns such as inappropriate study designs, small sample sizes, incorrect interpretation of statistical findings, and a lack of suitable control groups.

Therefore, the aim of this study was to explore how music perception and cognitive factors were associated with SIN perception in older adults with hearing loss, utilising both behavioural and neurophysiological measures. This may enhance our understanding of the mechanisms by which music enhances speech perception outcomes, and lead to more effective targeted interventions and rehabilitation. Although this study is neither an intervention study nor a cross-sectional design, based on the available evidence (Coffey et al., 2017; Fiveash et al., 2021; Lunner, 2003; Maillard et al., 2023; Rudner et al., 2011), we hypothesised that in a normative sample of older adults who use HAs, better music perception and cognitive abilities would be associated with better SIN outcomes.

Methods

Ethics

Ethical approval to conduct this study was obtained from the Toronto Metropolitan Research Ethics Board (ID: REB 2018-089-1).

Study design

This study is part of a broader investigation into the benefits of choir singing for older adults with HAs, which was registered as a clinical trial (clinicaltrials.gov, U.S. National Library of Medicine, ID: NCT03604185). This broader investigation used a randomised controlled trial design with three intervention arms. This study reports the outcomes from the baseline time point (i.e., before the participants were randomly assigned to an intervention arm). As all the measures were tested in unaided conditions, and only the SIN measures were tested in both unaided and aided conditions, this study reports the baseline outcomes and correlations in unaided conditions only.

Participants

Inclusion criteria were: (1) participants aged over 50 years; (2) HA users—individuals that currently use HAs on a regular basis; (3) a symmetrical hearing loss (≤ 15 dB difference between the ears at any given frequency); (4) an unaided hearing loss that was moderate-to-moderately severe as measured by their four frequency pure tone average across both ears (4FPTA); (5) proficiency with English; and (6) no significant cognitive impairment (methodological details to follow).

A total of 54 participants were recruited for the broader study of a 14-week choir-based training programme and were asked not to participate in any music-based learning activity (such as playing an instrument) during this period. However, nine participants subsequently withdrew from the study, and three did not pass the inclusion criteria. Thus, in total, 42 (28 female, 14 male) older adult HA users participated in this study. They were aged between 57 and 90 years (Mage = 73.5 years, SD = 8) with a symmetrical, moderate-to-moderately severe hearing loss (M4FPTA = 47 dB, SD = 7.8).

The recruitment of participants was multifaceted, designed to target a normative sample of older adult HA users. This strategy included: phone calls and email invitations targeting participants that fit our inclusion criteria on the Toronto Metropolitan Auditory Participant Information Database and the Toronto Metropolitan Senior Participant Pool; the facilitation of face-to-face seminars by the authors E.D., G.S., and F.A.R. describing the research for a lay audience and potential participants; phone calls to participants that fit our inclusion criteria through a collaboration with Hearing Solutions—the largest independently operated HA clinic in Toronto, ON, Canada; and the dissemination of flyers through social media platforms and local newspapers.

Materials

Well-validated and widely used test materials were selected to ensure alignment with the field and to allow for greater generalisability.

Cognitive screening

The Montreal Cognitive Assessment (MoCA) is a validated and widely used brief screening tool designed to detect mild cognitive impairment (Nasreddine et al., 2005; Utoomprurkporn et al., 2020). MoCA tests short-term memory recall, visuospatial abilities, executive functioning, phonemic fluency, verbal abstraction, attention, concentration, and orientation. MoCA was administered aurally and on paper. As findings from a recent systematic review and meta-analysis indicated that adults with hearing loss score lower than their normal-hearing peers as a result of hearing-based task instructions (Utoomprurkporn et al., 2020), our MoCA inclusion criterion was set to a score ≥ 21 (out of 30), rather than the standard cut-off of 26.

Speech-in-noise

SIN perception was measured using the Quick Speech-in-Noise (QuickSIN) and the Revised Speech Perception in Noise (R-SPIN) tests (Bilger, 1984; Killion et al., 2004).

For the QuickSIN test, participants were presented with four lists of six recorded sentences. The first list was a practice trial. Each sentence consisted of five keywords spoken by a woman in the presence of four-talker babble noise. The test was presented through insert headphones and the first sentence of each list was presented with a SNR set at 25 dB. Each consecutive sentence was made more difficult by decreasing the SNR by 5 dB, with the final sentence set at 0 dB SNR. Participants were asked to repeat back the target sentences as best they could and were awarded one point for each correctly repeated target word. SNR loss was calculated as an average of the three lists. A greater SNR loss is associated with poorer performance. QuickSIN has a test–retest reliability of 1.4 dB within a single list.
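The scoring described above can be sketched in a few lines. This is a minimal illustration, not the authors' scoring script: the rule "SNR loss = 25.5 minus the number of keywords repeated correctly" is the conventional QuickSIN scoring rule and is assumed here because the text does not spell it out. The function names and the example scores are hypothetical.

```python
from statistics import mean

WORDS_PER_SENTENCE = 5
SENTENCES_PER_LIST = 6  # presented at 25, 20, 15, 10, 5 and 0 dB SNR


def list_snr_loss(correct_per_sentence):
    """SNR loss (dB) for one QuickSIN list.

    Assumes the conventional scoring rule: 25.5 minus the total number
    of keywords repeated correctly across the six sentences.
    """
    if len(correct_per_sentence) != SENTENCES_PER_LIST:
        raise ValueError("expected six sentence scores")
    if any(not 0 <= c <= WORDS_PER_SENTENCE for c in correct_per_sentence):
        raise ValueError("each sentence score must be between 0 and 5")
    return 25.5 - sum(correct_per_sentence)


def quicksin_snr_loss(scored_lists):
    """Average SNR loss over the scored lists (practice list excluded)."""
    return mean(list_snr_loss(scores) for scores in scored_lists)


# Hypothetical keyword counts for the three scored lists of one participant.
example = [
    [5, 5, 4, 3, 1, 0],  # 18 correct -> 7.5 dB
    [5, 5, 5, 3, 2, 0],  # 20 correct -> 5.5 dB
    [5, 4, 4, 3, 1, 0],  # 17 correct -> 8.5 dB
]
print(quicksin_snr_loss(example))
```

Because each keyword is worth 1 dB under this rule, a higher SNR loss directly reflects fewer keywords repeated correctly, which is why greater SNR loss indicates poorer performance.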

For the R-SPIN test, participants were presented with one list of 50 sentences, with 25 high-predictability and 25 low-predictability items randomly allocated within each list. Two sentences were presented as practice trials. Each sentence consisted of five to eight words spoken by a man in the presence of multi-babble noise and presented at 8 dB SNR. Participants were asked to repeat the last word of each sentence and were awarded a point for each correct response. The total score summed both high and low-predictability items.

Working memory

WM was assessed using the digit span subtest from the Wechsler Adult Intelligence Scale, Fourth Edition (WAIS-IV), a widely used measure of intelligence (Wechsler, 2008). This subtest consisted of a “Forward,” “Backward,” and “Sequencing” digit span task, which required participants to recall the digits in the same order, reversed order, and sorted order (low to high), respectively. The maximum sequence of digits presented was 9. All three subtests were summed for a total score.

Rhythm perception

The Beat Alignment Test (BAT) was used to measure musical beat perception (Iversen & Patel, 2008). Thirty-six musical excerpts were played with a superimposed click track that was either: (1) “On beat”—on the beat; (2) “Tempo error”—off the beat with the wrong tempo; and (3) “Phase error”—out of phase. Participants were provided three practice trials. Participants had to identify if the excerpt was on the beat by responding on the keyboard with YES (“Y” key) or NO (“N” key). Participants were also instructed not to tap or move along to the music. Scores were calculated as a percentage of correct responses. The BAT was utilised as our single rhythm-based measure as per recent recommendations by Fiveash et al. (2022).

Pitch perception

A Frequency Difference Limen (FDL) task was used to evaluate pitch perception. Participants were presented with a three-alternative forced choice paradigm containing two pure tones at 500 Hz and a target stimulus presented at an adaptively selected higher frequency. The pure tones were 200 ms in duration, with 20 ms envelope rise and fall times. The participant identified which tone was higher using a computer keyboard (i.e., “1” = first tone; “2” = second tone; “3” = third tone). A pitch discrimination threshold was calculated with an adaptive staircase procedure in which the frequency difference between the target and 500 Hz tones was divided by 2 after three correct responses or multiplied by 2 after one incorrect response. After five reversals, the step size changed, with the frequency difference divided by 1.414 after three correct responses or multiplied by 1.414 after one incorrect response. Participants completed two blocks, with each block ending after 12 reversals. The FDL threshold was determined from the arithmetic mean of the last 10 reversals of each block, which were then averaged between the two blocks.
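The staircase rule described above can be sketched as follows. This is an illustrative simulation under stated assumptions rather than the software used in the study: the starting frequency difference (50 Hz), the convention of recording a reversal at the difference where the track changes direction, and the simulated listener's psychometric function are all hypothetical choices made for the example.

```python
import math
import random
from statistics import mean


def run_fdl_block(respond, start_delta_hz=50.0, n_reversals=12,
                  n_averaged=10, coarse_reversals=5):
    """Run one three-down/one-up block and return its threshold in Hz.

    `respond(delta_hz)` returns True when the listener picks the target.
    The starting difference of 50 Hz is an assumption for illustration.
    """
    delta = start_delta_hz
    correct_run = 0
    last_direction = None  # "down" (harder) or "up" (easier)
    reversals = []

    while len(reversals) < n_reversals:
        if respond(delta):
            correct_run += 1
            if correct_run < 3:
                continue  # no change until three correct in a row
            correct_run = 0
            direction = "down"
        else:
            correct_run = 0
            direction = "up"
        if last_direction is not None and direction != last_direction:
            reversals.append(delta)  # difference at which the track turned
        last_direction = direction
        # Factor of 2 until five reversals have occurred, then 1.414.
        factor = 2.0 if len(reversals) < coarse_reversals else 1.414
        delta = delta / factor if direction == "down" else delta * factor

    return mean(reversals[-n_averaged:])


def simulated_listener(true_threshold_hz=4.0, seed=0):
    """Hypothetical listener for a three-alternative task (chance = 1/3)."""
    rng = random.Random(seed)

    def respond(delta_hz):
        p_detect = 1.0 - math.exp(-((delta_hz / true_threshold_hz) ** 2))
        return rng.random() < 1 / 3 + (2 / 3) * p_detect

    return respond


listener = simulated_listener()
fdl_threshold = mean(run_fdl_block(listener) for _ in range(2))  # two blocks
print(f"Estimated FDL: {fdl_threshold:.2f} Hz")
```

A three-down/one-up rule of this kind converges on the frequency difference at which roughly 79% of responses are correct, and shrinking the step factor after the first five reversals trades a fast initial descent for finer resolution near threshold.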

Timbre perception

The spectrotemporal modulation (STM) sensitivity task is a subtest from the Portable Automated Rapid Testing (PART; Lelo de Larrea-Mancera et al., 2020) battery that was used as a proxy measure of timbre perception. The STM paradigm utilised a two-cue two-alternative forced choice (two-cue two-AFC) design. The standard/reference stimuli consisted of unmodulated broadband noise from 400 to 8,000 Hz, whereas the target stimuli were spectrally modulated at two cycles per octave and amplitude modulated at 4 Hz, relative to the standard stimuli. The stimuli were 500 ms in duration. Difficulty was adjusted by adapting the modulation depth across two stages. The first stage used larger steps (0.5 dB) for three reversals, and the second stage used smaller steps (0.1 dB), with the task ending after six reversals. Thresholds were estimated from the mean of the second stage reversals. Participants were presented with a total of four intervals on each trial. The first and fourth intervals used standard stimuli, and the target stimulus was presented in the second or third interval. Participants received correct/incorrect feedback after each selection. Using standard cues for the first and fourth stimuli allows the task to be performed by comparing information either forward or backward in time, reducing the impact of age-related differences associated with attention and memory (Gallun et al., 2012).

Frequency-following response

The FFR stimulus used was the speech syllable /da/, downloaded from the Auditory Neuroscience Laboratory (Brainvolts) website (https://brainvolts.northwestern.edu/). Participants were presented with 6,000 sweeps (3,000 sweeps per polarity). The /da/ is a voiced stop/plosive with a 170 ms total duration, 5 ms voice onset time, 50 ms transition from /d/ to /a/, and 120 ms steady state duration consisting of the vowel (/a/). Importantly, the fundamental frequency of the vowel was set to 100 Hz.

The ITPC, also referred to as the “phase-locking factor,” was calculated across all epochs per participant. The ITPC is a frequency-domain feature that measures synchronisation of neural responses to periodic auditory inputs (Delorme & Makeig, 2004). In other words, the ITPC represents how consistent the phase alignment of oscillatory responses is across trials, and it may have utility as a measure of perception in noise (Koerner & Zhang, 2015).
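To make the idea of phase consistency concrete, the sketch below uses the standard definition of ITPC as the length of the mean unit phasor across trials. It is not the authors' analysis code, and the text does not specify how the phase was extracted; the single-frequency DFT in `phase_at`, the reduced sampling rate, and the simulated epochs are assumptions made for illustration only.

```python
import cmath
import math
import random


def itpc(phases):
    """Inter-trial phase coherence from one phase angle (radians) per trial.

    Returns the length of the mean unit phasor: values near 0 mean the
    phases are scattered, and 1 means identical phase on every trial.
    """
    if not phases:
        raise ValueError("need at least one trial")
    mean_vector = sum(cmath.exp(1j * p) for p in phases) / len(phases)
    return abs(mean_vector)


def phase_at(epoch, freq_hz, fs_hz):
    """Phase of a single-frequency DFT component of one epoch."""
    coeff = sum(x * cmath.exp(-2j * math.pi * freq_hz * n / fs_hz)
                for n, x in enumerate(epoch))
    return cmath.phase(coeff)


# Simulated epochs: a 100 Hz component with small phase jitter plus noise.
rng = random.Random(1)
fs_hz, f0_hz, n_samples = 2000, 100.0, 200  # hypothetical, kept small for speed
epochs = []
for _ in range(50):
    jitter = rng.gauss(0.0, 0.3)
    epochs.append([math.sin(2 * math.pi * f0_hz * n / fs_hz + jitter)
                   + rng.gauss(0.0, 1.0) for n in range(n_samples)])

phases = [phase_at(epoch, f0_hz, fs_hz) for epoch in epochs]
print(f"ITPC at {f0_hz:.0f} Hz: {itpc(phases):.2f}")
```

Because only the phase of each trial enters the calculation, ITPC is insensitive to trial-by-trial differences in response amplitude, which distinguishes it from the spectral magnitude of the averaged FFR.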

Procedure

Initial eligibility visit

The initial visit confirmed participants’ eligibility through the signing of a participant information and consent form, completion of an audiological assessment to establish their level of unaided hearing loss (pure tone average testing at 0.25, 0.5, 1, 2, 3, 4, 6, 8 kHz), otoscopy to ensure the ears were not occluded, passing the MoCA screener with a criterion score ≥ 21, and the collection of demographic information. The initial eligibility visit took approximately 45 min to complete, and participants were reimbursed 10 CAD (Canadian Dollar) for their time and effort.

Test session

Otoscopy was once again completed to ensure the ears were not occluded prior to testing. Participants were seated in a double-walled sound-attenuated chamber (Industrial Acoustics Corp., Bronx, NY). The questionnaires (MoCA and WAIS-IV) were completed with the use of HAs, but all behavioural measures were completed without HAs, i.e., participants were unaided and used Etymotic Insert Earphones (Etymotic Research Inc., Elk Grove Village, IL). This was to limit the extraneous effects and variability associated with the HA itself. Stimuli were initially presented at 65 dB and adjusted according to the participants’ preference for a comfortable and audible listening level (either lower or higher) in 5 dB increments. The experimenter indicated that if anything was too loud or too soft at any point, the volume could be adjusted. Only one participant requested a 10 dB increase for QuickSIN and R-SPIN.

In preparation for the FFR recording, the participant’s forehead and right earlobe were thoroughly cleaned using alcohol wipes. Two EL504 Ag/AgCl disposable cloth electrodes (BIOPAC Systems Inc., Goleta, CA) were firmly affixed to the forehead. The centre of the right-sided electrode was positioned approximately 2 cm above the right eyebrow, whereas the centre of the left-sided electrode was positioned approximately 4 cm above the left eyebrow. A third electrode was placed on the right earlobe. Each electrode was connected to an MP150 data acquisition system via a MEC110C Biopotential Amplifier and an ERS100C Evoked Response Amplifier Module (BIOPAC Systems Inc., Goleta, CA). The FFR data were sampled at 20 kHz during data acquisition. A visual inspection of the signal’s amplitude was performed through BIOPAC’s AcqKnowledge4 software (BIOPAC Systems Inc., Goleta, CA) to confirm that the range was within ±0.35 μV. When an optimal signal was achieved, participants were instructed to sit as still as possible and to concentrate on a silent movie while the auditory /da/ stimulus (F0 = 100 Hz) was presented at alternating polarities continuously for 25 min and recorded through the AcqKnowledge4 software.

The test session took approximately 2 hr to complete, and participants were reimbursed 30 CAD for their time and effort.

FFR data processing

FFR data were pre-processed using PHZLAB (Nespoli, 2016) and custom MATLAB scripts. A notch filter was first applied to the FFR signals to remove the 60 Hz powerline interference. A high-pass filter with a cut-off frequency of 75 Hz was then applied to attenuate low-frequency components in the signal (e.g., motion artefacts). The FFR was split into 253 ms epochs using a window of –40 to 213 ms relative to the onset of the stimulus. Trials containing myogenic artefacts were identified and discarded if amplitudes exceeded a threshold of 50 µV. The epochs were equalised so that the number of trials associated with positive and negative polarities was equal. The remaining epochs were averaged over both polarities, and a fast Fourier transform (FFT) with a size of 8,192 samples was applied to generate the frequency spectrum. The magnitude of the spectrum at the fundamental frequency (i.e., 100 Hz) was calculated from the averaged signal.
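The epoch-level steps above (artefact rejection, polarity equalisation, averaging, and reading the spectral magnitude at the fundamental) can be sketched as follows. This is a simplified illustration and not the PHZLAB or MATLAB pipeline used in the study: the notch and high-pass filtering stages are omitted, the function names are hypothetical, and taking the zero-padded DFT bin nearest to 100 Hz is an assumption about how the magnitude was read off.

```python
import cmath
import math

FS_HZ = 20_000    # sampling rate reported in the text
N_FFT = 8_192     # FFT size reported in the text
F0_HZ = 100.0     # fundamental frequency of the /da/ vowel
REJECT_UV = 50.0  # artefact rejection threshold in microvolts


def average_ffr(epochs, polarities):
    """Reject artefacts, equalise polarities, and average the epochs.

    `epochs` is a list of equal-length sample lists in microvolts;
    `polarities` holds +1 or -1 for each epoch.
    """
    kept = {+1: [], -1: []}
    for epoch, polarity in zip(epochs, polarities):
        if max(abs(x) for x in epoch) <= REJECT_UV:
            kept[polarity].append(epoch)
    n = min(len(kept[+1]), len(kept[-1]))  # same count for each polarity
    used = kept[+1][:n] + kept[-1][:n]
    if not used:
        raise ValueError("no usable epochs after rejection")
    return [sum(samples) / len(used) for samples in zip(*used)]


def magnitude_at(signal, freq_hz=F0_HZ, fs_hz=FS_HZ, n_fft=N_FFT):
    """Magnitude of the zero-padded DFT bin nearest to `freq_hz`.

    Signals longer than `n_fft` samples are truncated; shorter signals
    are implicitly zero-padded, as in an FFT of size `n_fft`.
    """
    k = round(freq_hz * n_fft / fs_hz)
    coeff = sum(x * cmath.exp(-2j * math.pi * k * i / n_fft)
                for i, x in enumerate(signal[:n_fft]))
    return abs(coeff)


# Tiny hypothetical example: the third epoch exceeds 50 microvolts.
averaged = average_ffr([[1, 2], [3, 4], [100, 0]], [+1, -1, +1])
print(averaged)
```

With a 20 kHz sampling rate and an 8,192-point transform, the bins are about 2.44 Hz apart, so the bin nearest the fundamental sits at roughly 100.1 Hz rather than exactly 100 Hz.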

Statistical analyses

A two-step statistical analysis was used to explore how music and cognitive factors were associated with SIN perception. First, a correlational analysis was used to identify the independent variables associated with SIN perception. Second, we conducted multiple linear regression modelling to explore the relative contribution of the identified independent variables. IBM SPSS Statistics Version 28 was used to conduct statistical analyses. QQ plots and histograms confirmed that most variables were normally distributed, with the exceptions of the QuickSIN, FDL, and STM measures, which had positive (right) skew values of 1.7, 2.8, and 2.9, respectively; as a rule of thumb, these are interpreted as highly skewed. Hence, correlations associated with QuickSIN were analysed with Kendall (non-parametric) correlation coefficients, whereas correlations associated with R-SPIN were analysed with Pearson (parametric) correlation coefficients. Significance levels were set to .05.
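For readers unfamiliar with the rank-based coefficient used for the skewed QuickSIN data, the sketch below computes Kendall's τb from pairwise comparisons. The analyses in this study were run in SPSS; this pure-Python function is only an illustration of the statistic, and the example scores are hypothetical.

```python
import math


def kendall_tau_b(x, y):
    """Kendall's tau-b, which corrects for ties in either variable."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("x and y must have the same length (at least 2)")
    concordant = discordant = ties_x_only = ties_y_only = 0
    for i in range(len(x) - 1):
        for j in range(i + 1, len(x)):
            dx, dy = x[i] - x[j], y[i] - y[j]
            if dx == 0 and dy == 0:
                continue  # tied on both variables: excluded from both terms
            if dx == 0:
                ties_x_only += 1
            elif dy == 0:
                ties_y_only += 1
            elif dx * dy > 0:
                concordant += 1
            else:
                discordant += 1
    denom = math.sqrt((concordant + discordant + ties_x_only)
                      * (concordant + discordant + ties_y_only))
    if denom == 0:
        raise ValueError("tau-b is undefined when a variable is constant")
    return (concordant - discordant) / denom


# Hypothetical scores: higher working memory paired with lower SNR loss.
wm_scores = [18, 22, 25, 25, 30, 33]
snr_loss_db = [9.5, 8.0, 8.0, 6.5, 4.0, 5.5]
print(round(kendall_tau_b(wm_scores, snr_loss_db), 3))
```

Because the coefficient depends only on the ordering of pairs, a single extreme value shifts it far less than it would shift a Pearson correlation, which is the motivation for using it with highly skewed measures.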

Based on previous findings, our expectation was that better WM and music perception measures would be associated with better SIN perception. Both behavioural (FDL, STM, and BAT) and physiological measures (FFR and ITPC) were included. Hence, correlations were analysed with one-tailed tests. Outliers were removed if they were deemed to be due to obvious measurement error. This was the case for two FFR measurements, as their results were off by a factor of 10, relative to the expected range.

Results

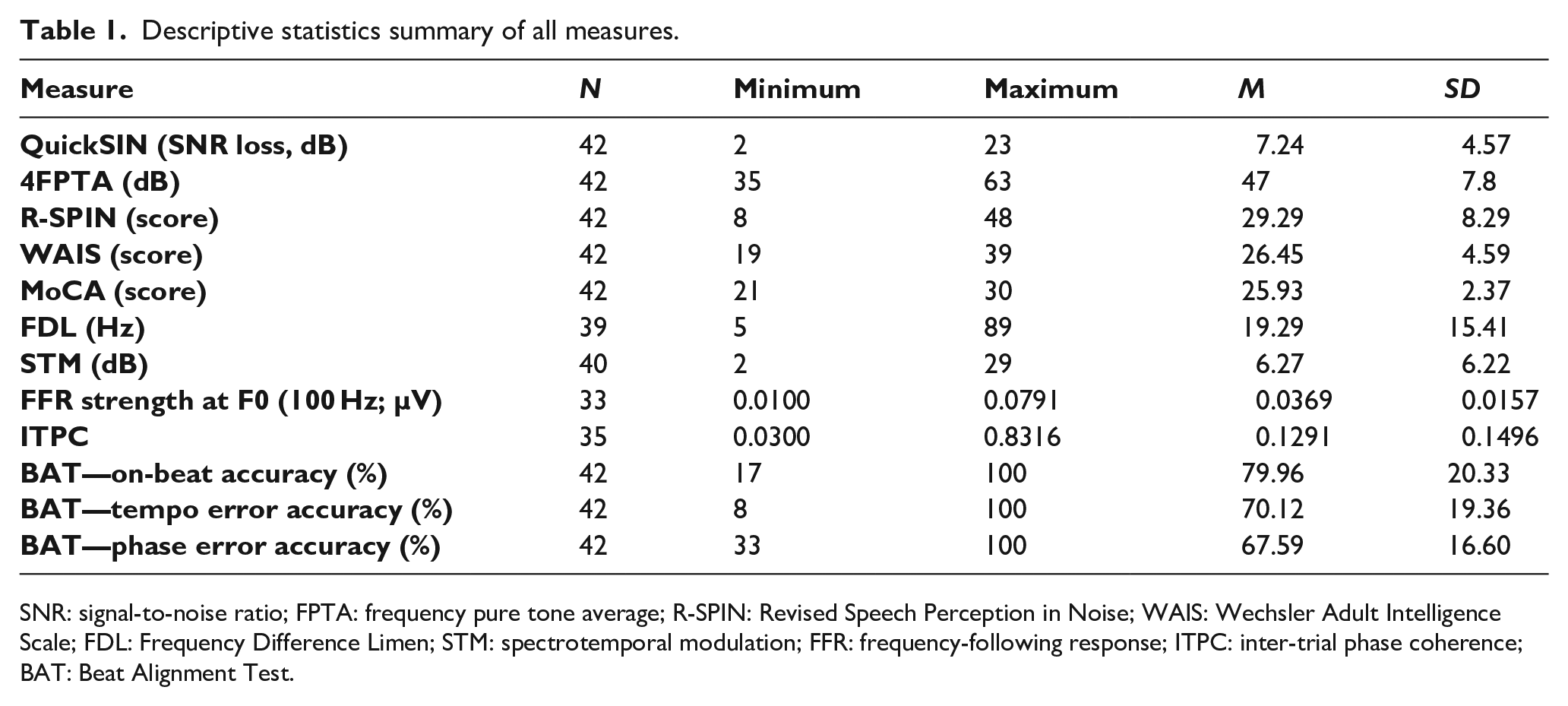

Descriptive statistics summarising all measures are available in Table 1.

Descriptive statistics summary of all measures.

SNR: signal-to-noise ratio; FPTA: frequency pure tone average; R-SPIN: Revised Speech Perception in Noise; WAIS: Wechsler Adult Intelligence Scale; FDL: Frequency Difference Limen; STM: spectrotemporal modulation; FFR: frequency-following response; ITPC: inter-trial phase coherence; BAT: Beat Alignment Test.

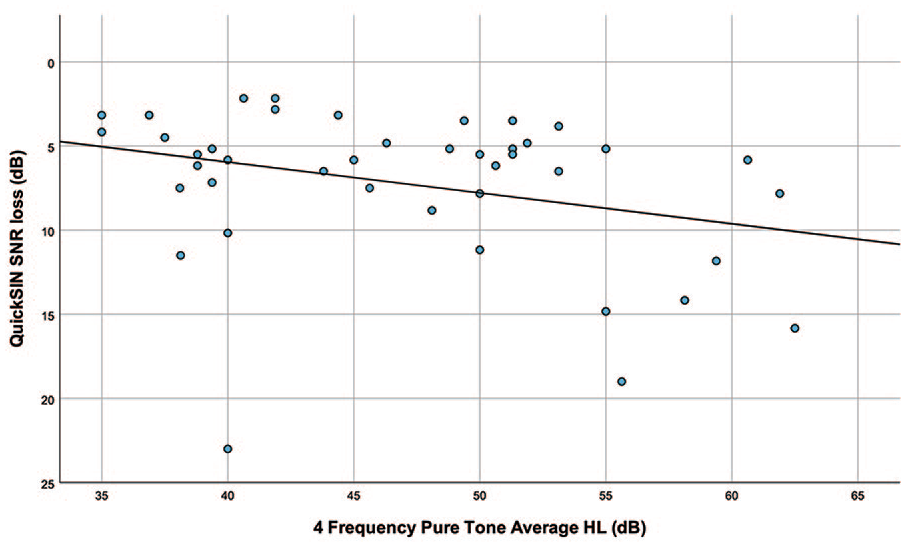

Correlations between SIN and hearing loss

Statistically significant correlations were found between QuickSIN and 4FPTA (Kendall τb = .237, p = .015), but not between R-SPIN and 4FPTA (Pearson r = –.084, p = .220). Figure 1 shows a scatterplot of 4FPTA and QuickSIN SNR loss. As revealed in the plot, lower levels of hearing loss were associated with better SIN performance in QuickSIN.

Scatterplot of 4 frequency pure tone average (4FPTA) and QuickSIN SNR loss.

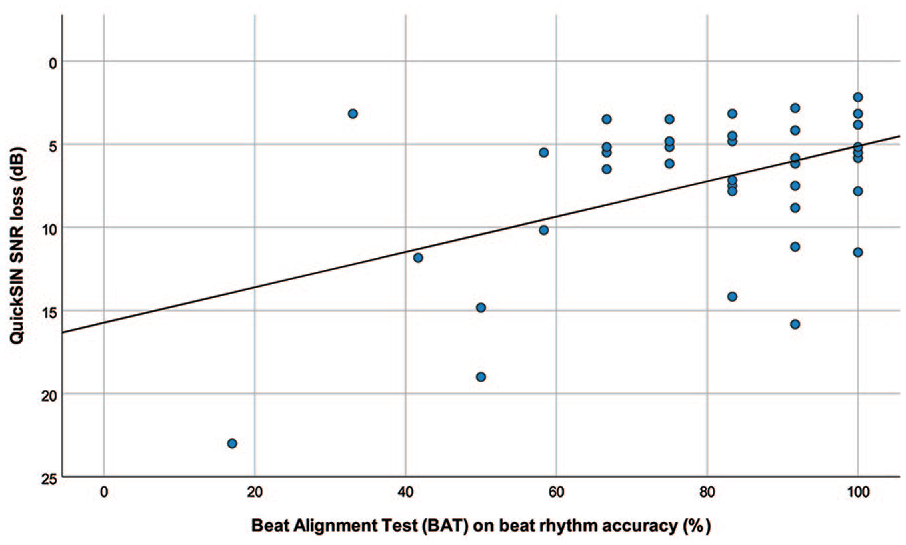

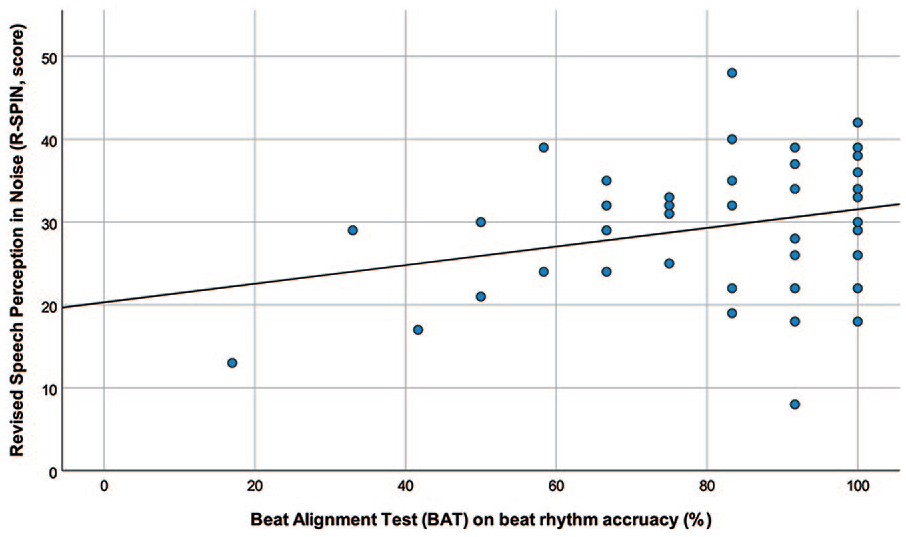

Correlations between SIN and rhythm perception

Statistically significant correlations were found between QuickSIN and on-beat rhythm measures (Kendall τb = –.204, p = .038), but not tempo error or off-tempo perception. Statistically significant correlations were also found between R-SPIN and on-beat rhythm (Pearson r = .275, p = .039). Figure 2 shows a scatterplot of on-beat rhythm accuracy and QuickSIN SNR loss, and Figure 3 shows a scatterplot of on-beat rhythm accuracy and R-SPIN scores. As revealed in the plots, on-beat rhythm accuracy (but not tempo error or off-tempo rhythm) was associated with better SIN performance in both QuickSIN and R-SPIN tasks.

Scatterplot of Beat Alignment Test (BAT) for on-beat rhythm accuracy and QuickSIN SNR loss.

Scatterplot of Beat Alignment Test (BAT) for on-beat rhythm accuracy and Revised Speech Perception in Noise (R-SPIN) scores.

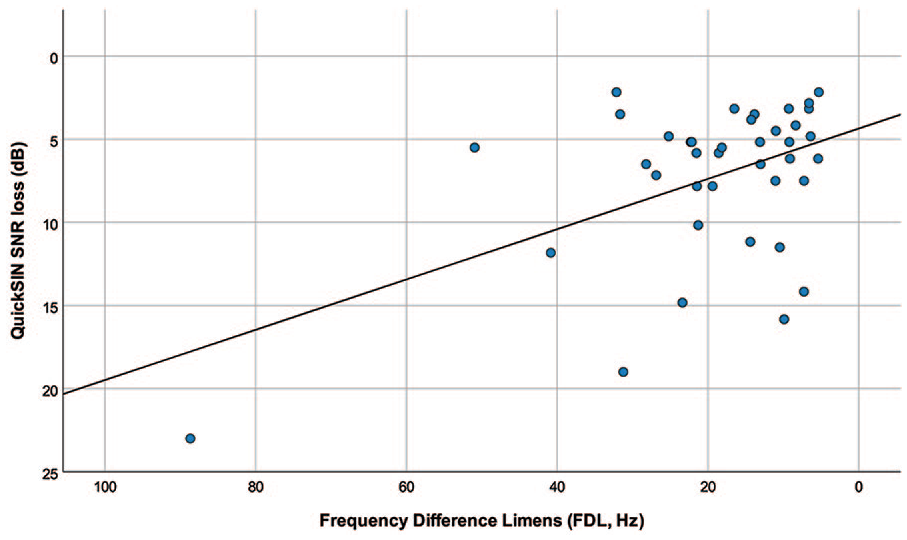

Correlations between SIN and pitch perception

Statistically significant correlations were found between QuickSIN and FDL measures (Kendall τb = .187, p = .048), but not between R-SPIN and FDL (Pearson r = –.258, p = .056). Figure 4 shows a scatterplot of FDL thresholds and QuickSIN scores. Hence, better pitch perception was associated with better SIN perception (when measured by QuickSIN). However, it should be noted that this correlation between pitch perception and SIN is no longer significant if we remove the outlier (defined as any observation exceeding 1.5 times the interquartile range).

Scatterplot of Frequency Difference Limen (FDL) thresholds and QuickSIN scores.

Correlations between SIN and timbre perception

Timbre perception (measured by STM) was not associated with better SIN perception.

Correlations between SIN and FFR

Neither FFR nor ITPC were associated with better SIN perception.

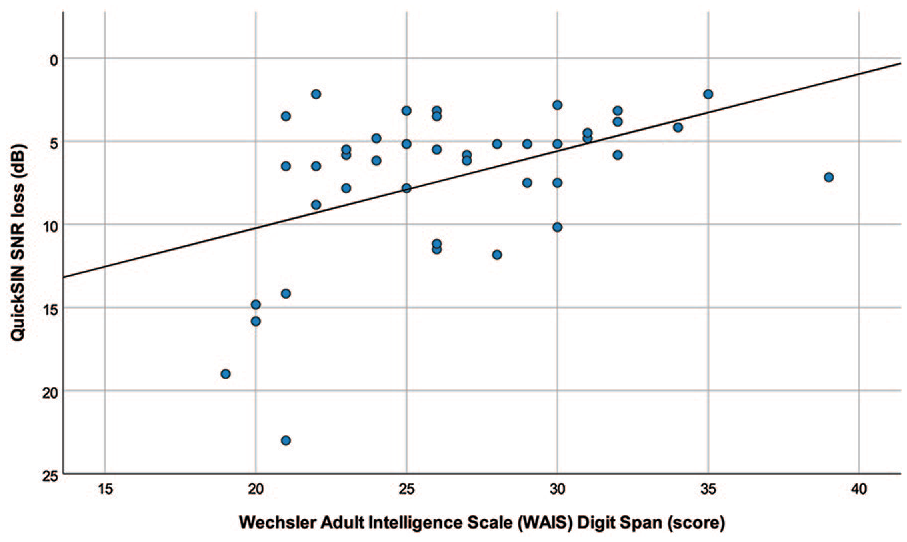

Correlations between SIN and WM

Statistically significant correlations were found between WM (as measured by the WAIS WM subtests) and QuickSIN (Kendall’s τb = −.332, p = .001), but not WM and R-SPIN. Figure 5 shows a scatterplot of WM and QuickSIN scores. The positive linear trendline reveals that higher WM was associated with better QuickSIN scores. However, better WM was not associated with improved R-SPIN performance.

Scatterplot of Wechsler Adult Intelligence Scale (WAIS-IV) digit span and QuickSIN scores.

Multiple linear regression modelling

From the correlational analyses, four independent variables were identified as being associated with QuickSIN perception: 4FPTA, on-beat accuracy, FDL, and WM. Hence, a stepwise multiple linear regression was conducted to identify the most influential predictors of QuickSIN perception from these candidate variables. The criteria were a probability of F-to-enter ≤ .05 and a probability of F-to-remove ≥ .10. In the first step, on-beat accuracy was entered into the model, resulting in a significant improvement, F(1, 37) = 12.068, p = .001, adjusted R² = .226. In the second step, WM was entered into the model, leading to another significant improvement, F(2, 36) = 10.035, p < .001, adjusted R² = .322. For every 10-percentage-point increase in on-beat accuracy there was a 0.9 dB improvement in QuickSIN, and for every 2-point increase in WM there was a 0.7 dB improvement in QuickSIN. The final model suggests both on-beat accuracy and WM contribute to the prediction of QuickSIN perception.
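As a worked illustration of the reported effect sizes, the sketch below converts them into per-unit slopes and combines them for a pair of hypothetical listeners. It assumes the final model is linear and additive with no interaction term; because the intercept is not reported here, only differences between listeners can be computed, not absolute QuickSIN scores. The function and constant names are hypothetical.

```python
# Slopes implied by the reported effects (dB of QuickSIN improvement per unit).
DB_PER_ONBEAT_PERCENT = 0.9 / 10  # 0.9 dB per 10 percentage points
DB_PER_WM_POINT = 0.7 / 2         # 0.7 dB per 2 digit-span points


def predicted_improvement_db(delta_onbeat_percent, delta_wm_points):
    """Predicted QuickSIN improvement (reduction in SNR loss, in dB)
    for given differences in on-beat accuracy and WM score."""
    return (DB_PER_ONBEAT_PERCENT * delta_onbeat_percent
            + DB_PER_WM_POINT * delta_wm_points)


# A listener scoring 20 percentage points higher on on-beat accuracy and
# 4 points higher on WM than a comparison listener: 1.8 dB + 1.4 dB.
print(round(predicted_improvement_db(20, 4), 2))
```

Under these assumptions, the combined difference is a little over 3 dB, which is more than twice the 1.4 dB single-list test–retest reliability quoted for QuickSIN in the Materials section.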

Discussion

The purpose of this study was to explore how music and cognitive factors were associated with SIN perception in older adults with moderate-to-moderately severe hearing loss. Our findings provide evidence that better on-beat rhythm accuracy and WM are associated with better SIN perception. To the best of the authors’ knowledge, an association between on-beat rhythm and SIN perception in older adults with hearing loss and HAs has not been previously reported.

We hypothesised that better general rhythm abilities (i.e., all BAT subtests) would be associated with better SIN. However, our results indicated that only the on-beat rhythm condition (and not tempo error or off-tempo accuracy) was associated with better SIN performance in both QuickSIN and R-SPIN tasks. The ability to attend to the on-beat has been tied to enhanced attention that extends beyond the auditory domain (i.e., cross-modal activation and facilitation; Escoffier et al., 2010). In contrast, a tempo error (off-beat) advantage appears to be associated with enhanced speech prosody perception (Hausen et al., 2013).

The association between on-beat rhythm and SIN perception is likely to arise from enhanced accuracy of temporally guided attentional predictions consistent with DAT (Jones, 1976), which suggests a benefit of beat perception is to focus processing resources and attentional energy at these temporal points of interest. This is extended within the PRISM framework, as rhythmic abilities may also confer specific speech perception advantages such as temporal envelope processing (particularly if the phonemic vowel nucleus at the word level is conceptualised as a “beat”) as well as enhanced formant tracking (i.e., sensitivity to small and rapid acoustic fluctuations), all of which are relevant for effective SIN perception.

The novel finding that on-beat accuracy is associated with SIN perception is also convergent with findings by Yates et al. (2019). In that study, on-beat accuracy (measured with the BAT) was associated with better SIN outcomes in young adults aged 19–40. In addition, our findings are complementary to those of Slater and Kraus (2016). In that study, participants were young adult males with normal hearing aged 18–35, stratified as musicians or non-musicians. Although the rhythm accuracy task was different (on-beat accuracy from the BAT vs a rhythm discrimination subtest from the Musical Ear Test [MET] using a same/different paradigm), QuickSIN was a shared primary outcome measure, and better rhythm discrimination was associated with better QuickSIN perception for all participants (musicians and non-musicians). Thus, despite the differences between the cohort in this study and that of Slater and Kraus (2016; i.e., older adults with hearing loss who were majority female compared with young adult males with normal hearing who were musicians or non-musicians), the findings are complementary and indicative of a robust effect irrespective of age, sex, music training experience, or hearing status.

Pitch perception, timbre perception, and FFR measures were not associated with SIN perception. Although the correlational analysis indicated better pitch perception was associated with better SIN perception, this finding was driven by one outlier. When this observation was removed, the association between pitch perception and SIN was no longer significant. In addition, pitch perception was not retained as a significant predictor in the multiple regression analysis. STM was utilised as a proxy measure of timbre perception, which has been noted as being predictive of speech reception in adults under 65 years of age (Bernstein et al., 2013). However, the average age of our participants was 73.5 years, which may explain why timbre perception was not associated with SIN perception. It was surprising that FFR was not correlated with SIN perception, given it is thought to provide a global assessment of the brain’s ability to follow and encode the frequency components of an auditory stimulus, primarily derived from the brainstem with lesser cortical contributions (Coffey et al., 2019; Krizman & Kraus, 2019).

However, there are several possible explanations for this lack of correlation. First, FFR responses were derived from a passive listening state, whereas QuickSIN requires active listening. Presacco et al. (2019) also found no correlation between FFR and QuickSIN in older listeners with or without hearing loss, suggesting that the four-talker babble provides more energetic masking than informational masking. This is debatable, with Yoo and Bidelman (2019) suggesting QuickSIN features high levels of informational masking. Alternatively, in a study with older adults with mild-to-moderate hearing loss, Anderson, Parbery-Clark, et al. (2013) reported a relative cue weighting deficit for temporal fine structure, relative to envelope cues. That is, older participants with hearing loss were more reliant on temporal envelope cues compared with temporal fine structure. FFRs are also modulated by arousal states in normal-hearing listeners, but this process is disrupted by hearing loss (Lai et al., 2023). Taken together, our findings are in alignment with these previous studies that indicate FFRs and SIN are not consistently correlated, as older age and hearing loss generate a bias towards temporal envelope processing and disrupt the functional benefit of arousal states.

Greater WM was associated with better QuickSIN scores, in line with previous findings (Parbery-Clark et al., 2011; Strori & Souza, 2022). However, this association was unique to QuickSIN, as better WM was not associated with improved R-SPIN performance. QuickSIN has been shown to rely on WM more than other SIN tasks such as the Hearing-In-Noise-Test (HINT; Nilsson et al., 1994; Parbery-Clark et al., 2009). QuickSIN likely relies more on WM than the R-SPIN because the R-SPIN requires the participant to recall only the last word of the target sentence, rather than the whole sentence.

There are several limitations to consider. First, the statistical methodology was underpinned by correlation analyses. Thus, it is important to consider that our findings are based on associations and not causal inference. Second, the experience of hearing loss is heterogeneous, and we presume our sample is representative of this, noting there were no inclusion/exclusion criteria based on participants’ prelingual or postlingual HA use. In addition, although the use of unaided hearing allowed us to remove the potential confounds of the actual HA device itself, and although we allowed participants to adjust the hearing level of materials to a comfortable listening level (which was only requested by one participant), it is plausible that some participants may have been differentially affected, given the heterogeneous and/or frequency-specific nature of their hearing loss. Finally, our cognitive screening was administered using the standard MoCA test with a lower inclusion criterion to compensate for any hearing-related disadvantage. However, moving forward, we recommend the use of the recently developed MoCA for people with hearing impairment (MoCA-H; Dawes et al., 2023).

In conclusion, on-beat rhythm accuracy and WM were found to be positively associated with SIN perception for older adults with hearing loss. These findings are in alignment with the DAT and PRISM framework, and support an association of cognitive factors and music perception with SIN perception in older adults with hearing loss, noting that this cohort remains underexplored (Maillard et al., 2023). The finding that more accurate on-beat rhythm is associated with better SIN outcomes is novel, particularly in the context of hearing loss, whereby access to frequency-related cues is typically diminished, but temporal cues (such as beat perception) are relatively intact. Although the benefits of rhythm may be smaller than those experienced by younger adults (Pearson et al., 2023), they are still meaningful, and importantly, rhythm is a trainable skill (Matthews et al., 2016). Thus, auditory training and rehabilitation that focuses on and/or incorporates aspects of rhythm may be particularly beneficial for older adults with hearing loss.

Footnotes

Acknowledgements

We are grateful to the participants for their time and effort; our choir facilitators Sina Fallah and Christina Wynans; the veritable army of research assistants and contributors in the Science of Music, Auditory Research, and Technology (SMART) Lab including Francesca Copelli, Liz Earle, Karla Kovacek, Phuong-Nghi Pham, Amanda Raposo, Joseph Rovetti, Scott Ryan, Carley Toderick, Rea Tsigas, Irene Valentiner, Linda Dang, Joey Florence, Yuli Jadov, Wishah Khan, Arnon Weinberg, and Emily Wood; and Sean Gilmore for statistical advice.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by a Research Chair sponsored by Sonova Holding AG awarded to F.A.R., as well as a Mitacs grant awarded to E.D. and C.Y.L.