Abstract

A redundancy gain occurs when perceptually identical stimuli are presented together, resulting in quicker categorization of these paired stimuli than of lone stimuli. Similar effects have been reported for paired stimuli within the same conceptual category, particularly if the category is self-related. We recruited 528 individuals across three related studies to investigate whether, during perceptual and conceptual redundancy, such self-bias effects on foreground stimuli are modulated by natural versus urban backgrounds. Here, we report our observations on the perceptual and conceptual redundancy effects of the foreground stimuli. In our first experiment, response options were randomised per trial. Results showed reaction time gains for perceptually identical stimuli, but this advantage was not modulated by self/other categorization. However, slower reaction times were observed for conceptually related stimulus pairs, and these were influenced by self/other categorization. The second experiment replicated the methods of earlier studies of redundancy and observed results comparable to Experiment 1: a perceptual redundancy gain unmodulated by self/other categorization and, for conceptual redundancy, no gain or cost but an effect of self/other categorization. In the third experiment, self/other categories were substituted with arbitrary A/B categories: once more, there was a perceptual redundancy gain and no conceptual redundancy gain. Notably, A/B categorisation produced effects equivalent to self/other categorisation. Overall, these findings challenge previous research on the facilitated early processing of conceptually related stimuli and suggest that self-relatedness may not exert a unique effect on stimulus processing beyond attentional and response preferences during categorization. Our study motivates further research to understand conceptual categorization and redundancy gain effects.

Keywords

Introduction

Redundancy “gains” in reaction time

The perceptual redundancy effect refers to the observation that two identical stimuli are categorised faster than a single exemplar, even though the second (“redundant”) stimulus confers no additional information (Miller, 1982). The reduction in reaction time (RT) when two stimuli are presented rather than one is referred to as a “redundancy gain” (Mordkoff & Danek, 2011; Sui et al., 2015; Sui & Humphreys, 2015; Töllner et al., 2011; Yankouskaya et al., 2012).

The typical explanation of this effect is that the two exemplars are processed in independent channels that “race” against each other. Although the stimuli are identical, stochastic variation means that on each trial one channel “wins,” so on average processing (and therefore the RT) is faster with two stimuli than with one (independent race model; Raab, 1962). Alternatively, co-activation models propose that the two stimuli are processed in an integrative fashion, “co-activating” the same nodes additively and thus speeding processing (Miller, 1982). Whereas the former model asserts that the two stimuli are processed independently, the latter proposes that processing is non-independent. For a given paradigm and stimulus set, one can arbitrate between these two models by testing the race-model inequality (RMI; Miller, 1982). If the inequality holds, race models cannot be rejected and independent processing is usually assumed; if it is violated, a co-activation account is (usually) adopted (Miller, 1982). This approach allows us to test for qualitative differences in processing across stimulus or task manipulations: if data from one condition satisfy the RMI but data from another violate it, we can infer qualitatively different processing across the two conditions.
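Miller’s (1982) race-model inequality states that, at every time t, the cumulative RT distribution on redundant trials cannot exceed the sum of the two single-stimulus distributions: F_AB(t) ≤ F_A(t) + F_B(t). The Python sketch below is a minimal, illustrative version of that check for one participant, assuming NumPy and evaluating the inequality at a fixed set of quantiles of the redundant-trial RTs. The function names are hypothetical, treating left- and right-field single-shape trials as the two channels is an assumption, and published procedures differ in how time points are chosen and how participants are combined.

```python
import numpy as np


def ecdf(rts, t_grid):
    """Empirical CDF of a sample of RTs, evaluated at each time in t_grid."""
    rts = np.sort(np.asarray(rts, dtype=float))
    return np.searchsorted(rts, t_grid, side="right") / rts.size


def race_model_violation(rt_a, rt_b, rt_redundant,
                         quantiles=np.arange(0.05, 1.0, 0.10)):
    """Check Miller's (1982) race-model inequality for one participant.

    rt_a, rt_b: RTs on single-stimulus trials for each channel.
    rt_redundant: RTs on redundant (two-stimulus) trials.
    Returns the evaluation times and F_AB(t) - min(1, F_A(t) + F_B(t));
    positive values mean the inequality is violated at that time.
    """
    t_grid = np.quantile(np.asarray(rt_redundant, dtype=float), quantiles)
    bound = np.minimum(1.0, ecdf(rt_a, t_grid) + ecdf(rt_b, t_grid))
    return t_grid, ecdf(rt_redundant, t_grid) - bound
```

Because a race between independent channels cannot push F_AB(t) above the bound, a positive value at any quantile is what would be read as a violation; in practice the violation is usually tested statistically across participants rather than read off a single sample.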

Conceptual redundancy and self-bias

Recent work has used this approach to test for qualitative differences in processing for stimuli associated with “self” versus “other” (Sui et al., 2012, 2015; Sui & Humphreys, 2015). Self-prioritisation (self-bias), in which self-related exemplars are processed faster than other-related exemplars, is highlighted by Humphreys and Sui (2016) in their proposal of a Self-Attention Network (SAN). The SAN is thought to comprise neural circuits linking regions involved in self-representation (ventromedial prefrontal cortex), attentional control (dorsolateral prefrontal cortex; intraparietal sulcus), and bottom-up orienting responses (posterior superior temporal sulcus), which interact so that self-related stimuli are preferentially treated in terms of salience and processing speed. Although classic redundancy paradigms have used perceptually redundant (i.e., perceptually identical) stimuli to investigate self-bias, some recent work, such as Sui et al. (2015), has also demonstrated self-bias at the conceptual level of redundancy. Under conceptual redundancy, a participant sees two exemplars from the same category that differ perceptually. Sui and Humphreys found that self-related gains in visual processing speed were at least partially independent of reward, with reward affecting processing speed only during conceptual redundancy. These results were replicated in another experiment by the same authors (Sui et al., 2015), in which self-related biases produced faster overall RTs at both perceptual and conceptual levels, whereas high-reward bias affected RTs only at the conceptual level.

Scope of this study

There is conflicting evidence on the impact of natural environments on processing speed (Vella-Brodrick & Gilowska, 2022); nonetheless, evidence remains for a positive effect of nature on cognition (Mason et al., 2021). For example, in a series of experiments on Dutch school children using the Digit Letter Substitution test, long-term exposure to nature significantly increased processing speed for children in the natural environment condition (van Dijk-Wesselius et al., 2018), whereas an earlier study found no significant increase (van den Berg, 2016). More recently, Daniels and colleagues (2022) found that a nature-based workplace intervention increased processing speed and improved selective attention compared with control groups. This positive impact of nature on cognition is theorised to be driven by recovery from either stress or mental fatigue: Stress Recovery Theory identifies natural environments as stimuli that facilitate short-term stress reduction (Ulrich et al., 1991), and Attention Restoration Theory proposes that natural stimuli increase attentional capacity by reducing the fatigue produced by mental effort (Kaplan, 1995). Such restorative properties of nature have been linked to processes associated with identity and the self (Morton et al., 2017). Conceptual links between natural environments and the self have also been reported, with “self-nature” associations being more prevalent than “self-built-up area” associations (Schultz & Tabanico, 2007).

The potential for natural environments to affect processing speed, together with their association with the self, may be better understood using an adapted redundancy paradigm. To this end, we investigated whether natural versus urban environments modulate the well-documented influence of self-bias on RTs within the redundancy paradigm.

The paradigm allows both the stimuli (shapes, faces, pictures, and so on) and the categorisation context (self/other; high reward/low reward) to be varied, and its effects have been well documented. We sought to extend it with a further manipulation: background images depicting either natural or urban environments.

The aim of the present study was to build on previous work showing a “self-boost” to perceptual processing by testing whether this effect is greater in natural than in urban environments. Using the redundancy gains paradigm described in the “Redundancy ‘gains’ in reaction time” section, adapted to display natural/urban backgrounds, we predicted that:

1 trials in which shapes are correctly categorised as relating to “self” would be processed more quickly than trials in which shapes are correctly categorised as relating to “other”;

2 both perceptual and conceptual redundancy gains would be greater for stimuli trained to be associated with “self” than with “other”;

3 the self-boost would be greater for stimuli presented in natural than in urban environments.

To do this, we used four perceptually distinct shapes (square, triangle, circle, and diamond) and trained participants to associate two with “self” and two with “other.” On each trial, one shape was presented on its own (no redundancy, i.e., the baseline condition), two identical shapes were presented (perceptual redundancy), or two different shapes from the same category were presented (conceptual redundancy). The shape(s) were presented over either a natural or an urban background. Participants were instructed to categorise the shape(s) as “self” or “other” (or, in control Experiment 3, as “A” or “B”) as quickly and as accurately as possible. Accuracy (% correct) and RT were recorded.

Experiment 1

Methodology

Participants

We recruited a total of 261 participants, 196 (116 male; 18–66 years of age

Stimuli and task

Four black-filled geometric shapes (circle, square, triangle, diamond) were assigned to one of two categories (counterbalanced across participants), described as either “self-related” or “other-related.” Participants performed a simple categorisation task in which they reported, as quickly and accurately as possible, the category membership of the presented shape(s). On each trial, participants saw either one or two exemplars from the same category. If only one shape was presented (non-redundant condition), it appeared in either the left or the right visual field. If two shapes were presented as a pair (redundant condition), they appeared in both the left and right visual fields.

The geometric shapes were presented over photographs depicting either a natural or an urban scene. Scene type (urban vs. natural) was randomly determined on each trial (see https://osf.io/fjkty/ for the images used). All photographs were of identical size and mean luminance. Background images were manually selected from a dataset to exclude salient features (e.g., legible text, unusually bright colours, or features that immediately capture attention) and blurry elements. Stimuli were presented via the Gorilla Experiment Builder on participants’ own laptops or PCs.

Procedure

Each experiment had two stages: a training stage and an experimental stage. After giving informed consent (via an online form on the Gorilla platform), participants were shown the geometric shapes, asked to familiarise themselves with them, and told which shapes belonged to which category (“Self” or “Other”). Once ready, participants completed a 48-trial training block. After a 500-ms central fixation cross, either one or two shapes appeared in the left and/or right visual field for 250 ms. If two shapes were presented, they belonged to the same category. Shapes were presented over either a natural or an urban background. Participants had 3000 ms to categorise the shape(s) into one of the two categories given at the start (self/other) by pressing the left key “d” or the right key “k.” Accuracy feedback (a green tick or a red cross) was given after each trial.

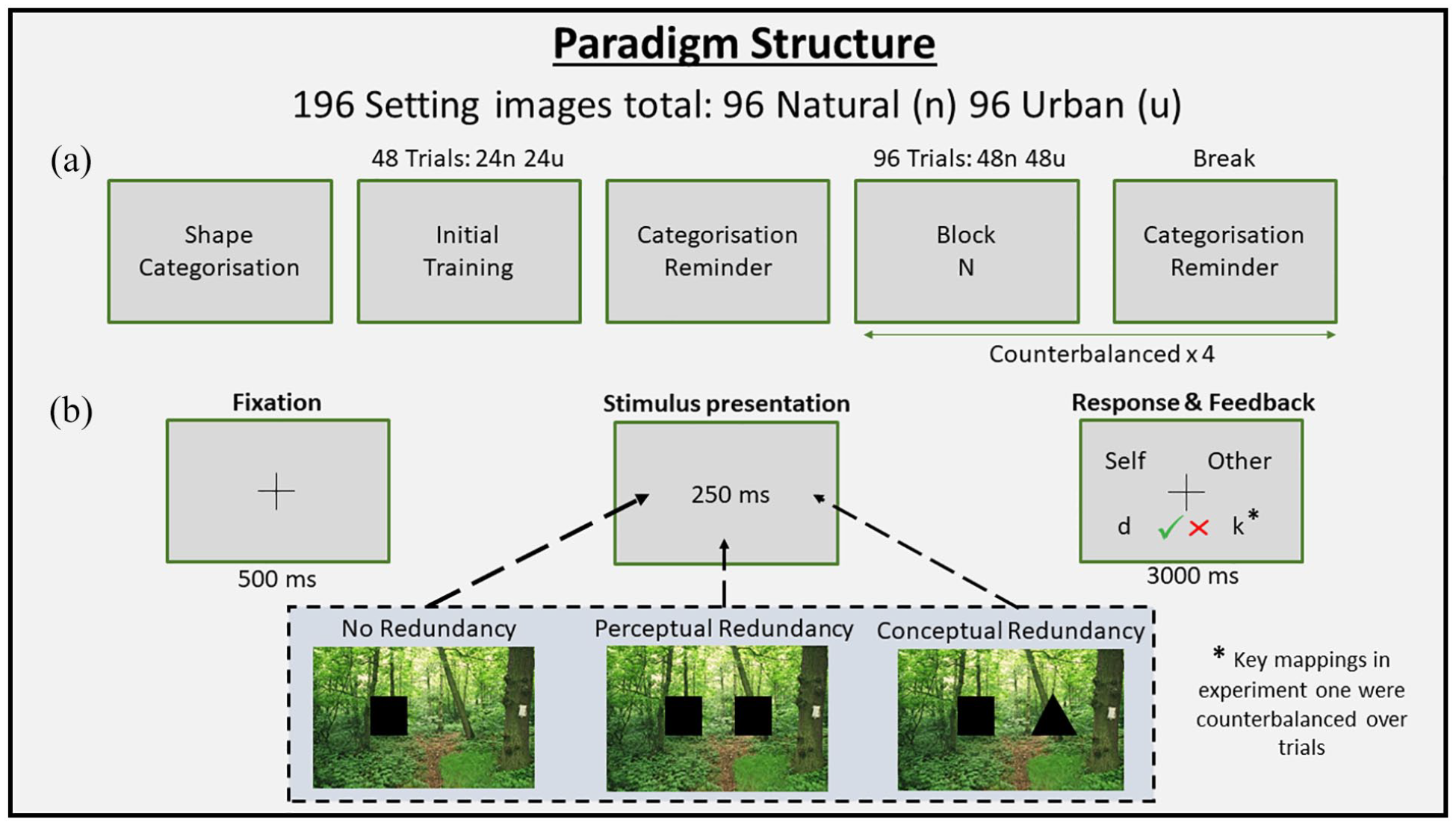

After training, participants were again shown the geometric shapes and their categories and reminded of the task. They then began the experimental portion, which consisted of four blocks of 96 counterbalanced shape trials using our set of 192 background images (96 natural, 96 urban; see link above). Stimuli were presented in the same manner as in the training block, with breaks between blocks that reminded participants of the shape categories and task instructions (see Figure 1).

Procedure of the paradigm in Experiments 1, 2, and 3; the only difference is that in Experiment 1, key mappings varied between trials (i.e., the Self and Other response keys were pseudo-randomised between “d” and “k” throughout the experiment). (a) Overall procedure: first exposure to the randomly assigned shape categories, then the training block, a reminder of the shape categories, and four counterbalanced blocks of shapes and images with categorisation reminders between blocks. (b) Breakdown of a single trial: a 500-ms fixation cross, a 250-ms shape/background stimulus, and a 3000-ms response screen showing that trial’s response mapping, followed by correct/incorrect feedback once a response has been given.

Unlike the previous experiments we built upon (Sui et al., 2015; Sui & Humphreys, 2015), key-response mappings varied across trials: on each trial, the category labels (Self/Other) appeared on either side of the screen, corresponding to the left (“d”) and right (“k”) keys. The response mapping for a given trial was shown to the participant only on that trial’s response screen. In this way, during stimulus presentation, participants could prepare their categorisation decision but not their motor response. More importantly, this ensured that participants could not bypass categorising the shapes by simply associating shapes with keys (i.e., “circle = press k” rather than “circle = self”). For this reason, the response window was extended to 3000 ms to allow the extra time needed to respond without a fixed mapping.
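To make the difference between the two response schemes concrete, the following Python sketch shows one way a per-trial label-to-key assignment (as in Experiment 1) and a fixed, participant-level assignment (as in Experiments 2 and 3) could be generated and scored. It is an illustration under stated assumptions, not the Gorilla implementation: a plain random shuffle stands in for the pseudo-randomisation described above, and all names are hypothetical.

```python
import random

CATEGORIES = ("Self", "Other")
KEYS = ("d", "k")  # left key, right key


def trial_mapping(rng, randomise_per_trial, fixed_mapping=None):
    """Return {key: category label} for one trial.

    randomise_per_trial=True mimics Experiment 1: the labels are shuffled
    across the two keys independently on every trial.
    randomise_per_trial=False mimics Experiments 2 and 3: the same
    participant-level mapping (fixed_mapping) is reused on every trial.
    """
    if randomise_per_trial:
        labels = list(CATEGORIES)
        rng.shuffle(labels)
        return dict(zip(KEYS, labels))
    if fixed_mapping is None:
        raise ValueError("a fixed mapping is required when not randomising")
    return dict(fixed_mapping)


def score_response(mapping, key_pressed, true_category):
    """A response is correct if the pressed key's label matches the shape's category."""
    return mapping.get(key_pressed) == true_category


rng = random.Random(2024)
mapping = trial_mapping(rng, randomise_per_trial=True)
print(mapping, score_response(mapping, "d", "Self"))
```

Under the randomised scheme, the same correct categorisation maps to different keys across trials, which is why a shape-to-key shortcut cannot be learned.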

Condition descriptions

The (within-subjects) conditions present in the following analyses are Shape (Singular, 2 Same, 2 Different), Category (Self, Other), and Scene (Urban, Natural). When computing Redundancy Type: Perceptual Redundancy = Singular – 2 Same, Conceptual Redundancy = Singular – 2 Different.
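Written out with the sign convention above (the notation is ours), where \widetilde{RT} denotes a participant’s median RT on correctly categorised trials in a given condition, positive values are gains and negative values are losses (costs):

```latex
\begin{aligned}
G_{\text{perceptual}} &= \widetilde{RT}_{\text{Singular}} - \widetilde{RT}_{\text{2 Same}} \\
G_{\text{conceptual}} &= \widetilde{RT}_{\text{Singular}} - \widetilde{RT}_{\text{2 Different}}
\end{aligned}
```

For example, hypothetical medians of 500 ms (Singular), 470 ms (2 Same), and 520 ms (2 Different) give a perceptual gain of 30 ms and a conceptual value of -20 ms, that is, a conceptual loss.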

Data pre-processing

Data were extracted from Gorilla and run through a quality-checking protocol in which participants were excluded for categorisation accuracy below 90% or for connection problems that resulted in more than 50 trials with a >1-ms lag in stimulus presentation; checks were also made for fast guesses (RT < 150 ms). Because the natural/urban background images likely violate the assumption that RTs are drawn from the same underlying distribution, we did not use the RTs to fit the independent race model, as was done by Sui and Humphreys (2015) and Sui et al. (2015). Instead, median RTs were calculated and carried forward to the analyses, as in Yankouskaya et al. (2012), Sui et al. (2015), and Yankouskaya et al. (2017).
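The exclusion rules and median-RT step above can be sketched in Python with pandas as follows. This is a hypothetical reconstruction: the column names are assumptions, and because the text does not say how fast guesses were handled, the sketch assumes they are dropped trial by trial after the participant-level exclusions.

```python
import pandas as pd


def preprocess(trials, min_accuracy=0.90, max_lagged_trials=50,
               lag_threshold_ms=1.0, fast_guess_ms=150.0):
    """Apply the exclusion rules and return per-participant median RTs
    (correct trials only) for each Shape x Category x Scene cell.

    Assumed (hypothetical) columns: participant, shape, category, scene,
    rt (ms), correct (bool), lag_ms (presentation lag in ms).
    """
    per_participant = trials.groupby("participant").agg(
        accuracy=("correct", "mean"),
        n_lagged=("lag_ms", lambda lag: int((lag > lag_threshold_ms).sum())),
    )
    keep = per_participant.index[
        (per_participant["accuracy"] >= min_accuracy)
        & (per_participant["n_lagged"] <= max_lagged_trials)
    ]
    clean = trials[trials["participant"].isin(keep)]
    # Assumption: fast guesses are removed trial-wise, not per participant.
    clean = clean[clean["correct"] & (clean["rt"] >= fast_guess_ms)]
    return (clean.groupby(["participant", "shape", "category", "scene"])["rt"]
                 .median()
                 .reset_index(name="median_rt"))
```

Accuracy is computed on all trials before any trial-level filtering, so a participant is not rescued from exclusion by the removal of fast guesses.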

Results

Basic redundancy effects

The first step of our analysis was to determine whether our data showed redundancy gains. To this end, collapsing over levels of Category and Scene and using only correctly categorised trials, we subtracted median RTs in the “2 Same” and “2 Different” shape trials from median RTs in the “Singular” shape trials. One-sample t-tests against 0 found significant perceptual redundancy gains,

Effects of scene and self/other on perceptual redundancy gains

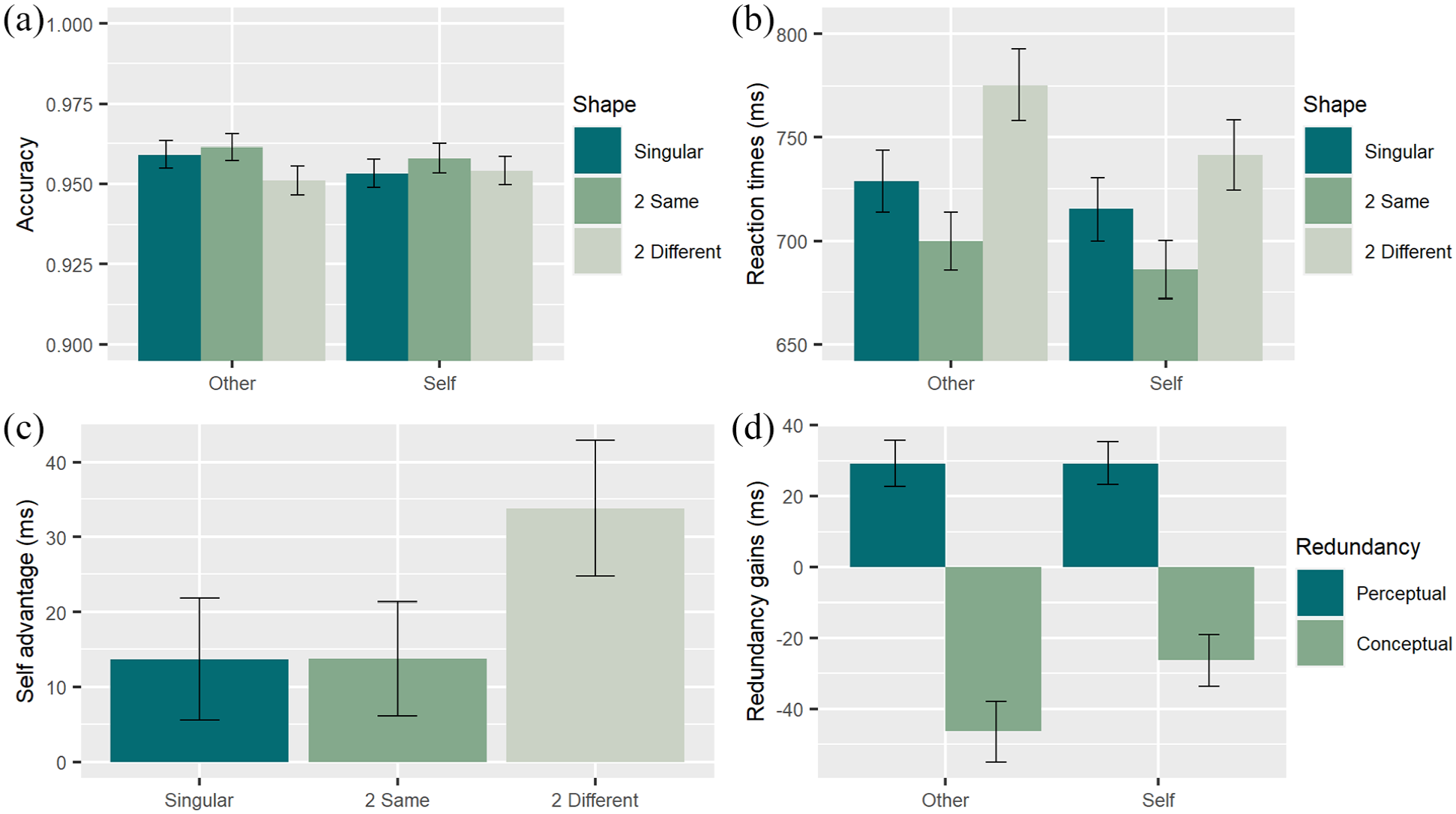

To determine whether the “self-boost” to perceptual redundancy gains (the RT benefit of seeing two identical shapes) was replicated, and to test whether that self-boost is greater in natural than urban contexts, we ran a Scene × Category repeated-measures analysis of variance (ANOVA) on perceptual redundancy gains (see Figure 2 for a visual representation of these effects). To control for the shapes that were allocated to each condition, we also had a nuisance regressor (covariate) representing the six between-subjects shape combinations.
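In a 2 × 2 within-subjects design, each effect in a repeated-measures ANOVA has one numerator degree of freedom, so its F statistic equals the squared one-sample t statistic of the corresponding participant-level contrast. The Python sketch below uses that identity to illustrate the Scene × Category analysis. It deliberately omits the between-subjects shape-combination covariate described above, so its output would not reproduce the reported statistics; the array layout and function name are assumptions.

```python
import numpy as np
from scipy import stats


def rm_anova_2x2(y):
    """2 x 2 repeated-measures ANOVA computed from paired contrasts.

    y: array of shape (n_participants, 2, 2), one score per participant per
       cell, indexed [participant, scene, category] (an assumed layout).
    Returns {effect: (F, p)} with df = (1, n_participants - 1).
    No covariates are modelled.
    """
    y = np.asarray(y, dtype=float)
    contrasts = {
        # difference between the two Scene levels, averaged over Category
        "Scene": y[:, 0, :].mean(axis=1) - y[:, 1, :].mean(axis=1),
        # difference between the two Category levels, averaged over Scene
        "Category": y[:, :, 0].mean(axis=1) - y[:, :, 1].mean(axis=1),
        # difference of differences: Category effect in scene 0 minus scene 1
        "Scene x Category": (y[:, 0, 0] - y[:, 0, 1]) - (y[:, 1, 0] - y[:, 1, 1]),
    }
    results = {}
    for effect, c in contrasts.items():
        t, p = stats.ttest_1samp(c, 0.0)
        results[effect] = (float(t) ** 2, float(p))  # F(1, n-1) = t^2, same p
    return results
```

Adding the shape-combination covariate turns this into an ANCOVA, which needs a general linear model rather than the contrast shortcut shown here.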

Experiment 1 results. (a) Accuracy of participants in correctly categorising shapes, expressed as a percentage. (b) Reaction times in milliseconds for each redundancy type, split by category. (c) Gains in milliseconds, computed as Other trials minus Self trials, split by redundancy type. (d) Redundancy gains in milliseconds, computed by subtracting perceptually redundant trials (two Same) and conceptually redundant trials (two Different) from lone-stimulus trials sample by sample (equal samples). Error bars represent 95% CI.

No significant main effects were found for Scene

So that we could determine whether we found evidence for

Effects of scene and self/other on conceptual redundancy losses

We repeated the aforementioned analyses but on conceptual redundancy gains (see Figure 2 for a visual representation of these effects). Here, we were looking at the RT benefit of seeing two different shapes from the same category. We found no significant main effect of Scene

To determine whether we found evidence for

Experiment 1 observations

In Experiment 1, we attempted to build on previous work finding a “self-boost” to both perceptual and conceptual redundancy gains (Miller, 1982; Sui et al., 2015; Sui & Humphreys, 2015), predicting that these self-boosts would be greater in natural than in urban settings.

However, we failed to replicate the effects seen in the original redundancy paradigm. Significant perceptual redundancy gains were found but were unchanged by self/other categorisation (or by scene type). Furthermore, while we did find conceptual redundancy effects, they were in the opposite direction to those previously reported: We found conceptual redundancy

Our failure to replicate could potentially be explained by the changes that were made to the response paradigm. In Experiment 1, we randomised the response-key mappings trial by trial to ensure that participants had to specifically categorise the shapes as self/other, and not as “respond k”/“respond d.” It could be argued that the increased cognitive load associated with randomising response-key mappings in this way influenced the results. To this end, the focus of Experiment 2 was to replicate Experiment 1 using a paradigm closer to those in the literature while retaining the background manipulation.

Experiment 2: Category self/other

Methodology

Participants

There was a total of 125 participants who took part, 99 (75 male; 18–65 years of age

Stimuli

The stimuli were identical to those in Experiment 1.

Procedure

The procedure was identical to that in Experiment 1, except that (in line with previous literature) response keys were kept the same throughout the experiment and counterbalanced across participants, rather than changing randomly from trial to trial. Although typical response times for this procedure are much shorter than the 3000 ms permitted here, the response window was kept identical to that of Experiment 1 for consistency.

Data pre-processing

The data pre-processing was identical to Experiment 1.

Results

Basic redundancy effects

We tested for redundancy gains/losses in the same manner as in Experiment 1: collapsing over levels of Category and Scene and using only correctly categorised trials, we subtracted median RTs in the “2 Same” and “2 Different” shape trials from median RTs in the “Singular” shape trials. As before, significant perceptual redundancy gains were found,

So that we could determine whether we found evidence for

Effects of scene and self/other on perceptual redundancy gains

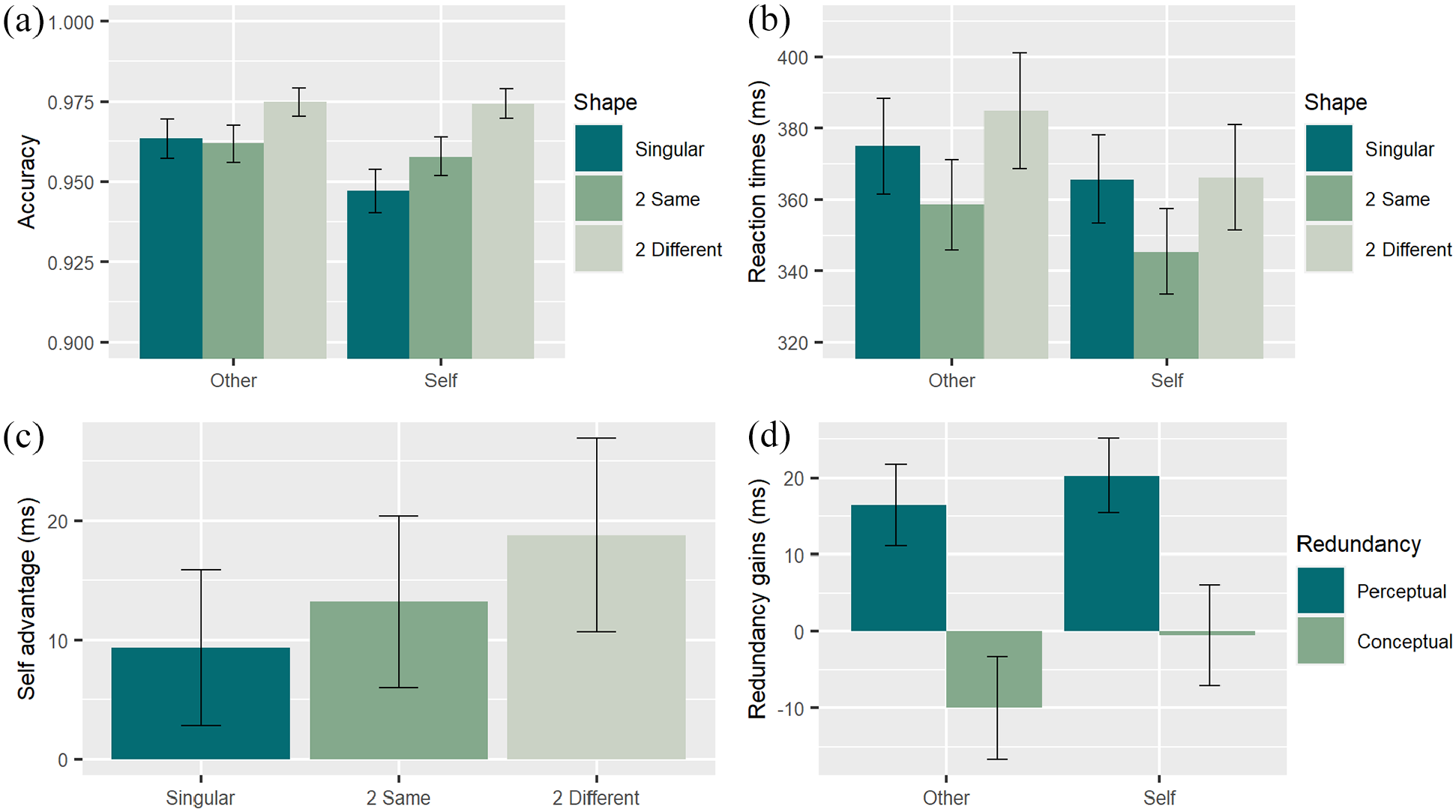

To determine whether the “self-boost” to perceptual redundancy gains (the RT benefit of seeing two identical shapes) was replicated, and to test whether that self-boost is greater in natural than urban contexts, we ran a Scene × Category repeated-measures ANOVA on perceptual redundancy gains (see Figure 3 for a visual representation of these effects). To control for the shapes that were allocated to each condition, we also had a nuisance regressor representing the six between-subjects shape combinations.

Experiment 2 results. (a) Accuracy of participants in correctly categorising shapes, expressed as a percentage. (b) Reaction times in milliseconds for each redundancy type, split by category. (c) Gains in milliseconds, computed as Other trials minus Self trials, split by redundancy type. (d) Redundancy gains in milliseconds, computed by subtracting perceptually redundant trials (two Same) and conceptually redundant trials (two Different) from lone-stimulus trials sample by sample (equal samples). Error bars represent 95% CI.

A significant main effect of Scene was found

To determine whether we found evidence for

Effects of scene and self/other on conceptual redundancy losses

We repeated the aforementioned analyses but on conceptual redundancy gains (see Figure 3 for a visual representation of these effects). Here, we were looking at the RT benefit of seeing two different shapes from the same category. We found no significant interaction between Scene and Category

Significant main effects were found for Scene,

To determine whether we found evidence for

Differences in conceptual losses between Experiment 1 and Experiment 2

Visual comparison of conceptual redundancy losses in Experiment 1 (Figure 2d) and Experiment 2 (Figure 3d) indicates an overall reduction in conceptual redundancy losses for Experiment 2. To probe this attenuation in losses across experiments, we subtracted median RTs in the “Singular” trials from median RTs in the “2 Different” trials at each level of Category and Scene using only correctly categorised trials. Collapsing across Category and Scene, an independent samples t-test using Welch’s method on median RTs showed significant differences between conceptual redundancy losses in Experiment 1,
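Welch’s test drops the equal-variance assumption of Student’s t-test and adjusts the degrees of freedom with the Welch–Satterthwaite formula. The Python sketch below computes the statistic and degrees of freedom by hand and checks them against SciPy’s ttest_ind with equal_var=False; the inputs are assumed to hold one conceptual-loss score per participant, and the function names are hypothetical.

```python
import numpy as np
from scipy import stats


def welch_t_and_df(a, b):
    """Welch's t statistic and Welch-Satterthwaite degrees of freedom."""
    a, b = np.asarray(a, dtype=float), np.asarray(b, dtype=float)
    va = a.var(ddof=1) / a.size  # squared standard error of mean(a)
    vb = b.var(ddof=1) / b.size  # squared standard error of mean(b)
    t = (a.mean() - b.mean()) / np.sqrt(va + vb)
    df = (va + vb) ** 2 / (va ** 2 / (a.size - 1) + vb ** 2 / (b.size - 1))
    return t, df


def welch_test(losses_exp1, losses_exp2):
    """Two-sided Welch test via SciPy (equal_var=False selects Welch's method)."""
    return stats.ttest_ind(losses_exp1, losses_exp2, equal_var=False)
```

The hand-computed degrees of freedom are usually non-integer and smaller than n1 + n2 - 2, which is the price of not pooling variances across two differently sized samples.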

Combined Bayesian analysis across Experiments 1 and 2

To probe the effects of Category across Experiments 1 and 2 under different levels of redundancy, we conducted two Bayesian ANOVAs, with Category and Scene as within-subjects factors and Study as a between-subjects factor, separately for perceptual and conceptual redundancy trials. During perceptual redundancy, very strong evidence was found against a main effect of Category (BF = .016) and against an interaction between Category and Study (BF = .011), suggesting that self/other categorisation made no difference during perceptual redundancy and that this absence of an effect was consistent across both experiments. During conceptual redundancy, extreme evidence was found for a main effect of Category (BF > 100), with anecdotal evidence against a Category × Study interaction (BF = .526), suggesting that self-related categorisations were processed faster than other-related categorisations in both experiments and that this self-bias may be consistent across experiments.
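The verbal labels used here (anecdotal, very strong, extreme) follow a Jeffreys-style classification of Bayes factors. The Python helper below shows one common version of those cut-offs, assuming BF10 is reported (values below 1 favour the null and are read via 1/BF). The text does not state which scheme the analysis software used, and conventions differ at the exact boundaries.

```python
def bf_label(bf10):
    """Jeffreys-style verbal label for a Bayes factor BF10.

    Values below 1 are inverted and read as evidence against the effect.
    Cut-offs: >1 anecdotal, >3 moderate, >10 strong, >30 very strong,
    >100 extreme.
    """
    if bf10 <= 0:
        raise ValueError("Bayes factors must be positive")
    direction = "for" if bf10 >= 1 else "against"
    strength = bf10 if bf10 >= 1 else 1.0 / bf10
    if strength > 100:
        level = "extreme"
    elif strength > 30:
        level = "very strong"
    elif strength > 10:
        level = "strong"
    elif strength > 3:
        level = "moderate"
    elif strength > 1:
        level = "anecdotal"
    else:
        return "no evidence"
    return f"{level} evidence {direction}"
```

For instance, BF = .016 corresponds to 1/BF = 62.5, which falls in the “very strong” band, matching the wording above.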

Experiment 2 observations

In Experiment 2, we attempted to replicate the experiment described by Sui et al. (2015) by removing the changes made to the response paradigm in Experiment 1, while retaining the manipulation of natural/urban backgrounds. However, in line with Experiment 1, we failed to replicate the effects of the original paradigm. Experiment 2 produced significant perceptual redundancy gains, but these gains were affected only by Scene, the implications of which are beyond the scope of this article. To reiterate, categorising shapes as self-related or other-related had no effect on the magnitude of perceptual redundancy gains, consistent with Experiment 1 and contrary to previous literature (Cunningham et al., 2008; Ma & Han, 2010; Sui et al., 2012). Furthermore, reducing the difficulty of the paradigm does not generate the conceptual redundancy gains reported in previous literature, but rather reduces the

Experiments 1 and 2 thus consistently failed to show either conceptual redundancy gains or self-bias in perceptual redundancy gains. These results also raise the question of whether the effects of categorisation on conceptual decisions remain when self-bias is removed from the paradigm, in which case the differences would instead be driven by other cognitive mechanisms, such as the preferential effect of attentional priority (Sali et al., 2016). Using the letters A and B in lieu of self/other categories, we hypothesised that the main effect of categorisation during conceptual redundancy would remain significant, with Category A processed faster than Category B, as evidence of an alternative mechanism behind the observed self-related increase in processing speed.

Experiment 3: Category A/B

Methodology

Participants

There was a total of 142 participants who took part, 100 (66 male; 18–57 years of age

Stimuli

In this experiment, the task involved reporting, as quickly and accurately as possible, whether the shapes belonged to Category “A” or “B.” Two of the four basic shapes were assigned to each category (counterbalanced across participants). At the beginning of the experiment, participants were shown on screen the category membership of each shape, described as “A” or “B.” They also completed a training session identical to those in Experiments 1 and 2. This A/B categorisation differs from the previous two experiments and probes the self/other phenomenon further by asking whether RT gains are found with neutral categories. It is also the only difference between this paradigm and that of Experiment 2.

Procedure

Geometric shapes were presented over counterbalanced background images (natural/urban), and participants were asked to identify the category of the geometric shapes. In line with previous literature, rather than changing the response buttons randomly from trial to trial, response keys were kept the same throughout the experiment and counterbalanced across participants. This response paradigm is identical to that of Experiment 2.

Results

Basic redundancy effects

We tested for redundancy gains in the same manner as in Experiments 1 and 2: collapsing over levels of Category and Scene and using only correctly categorised trials, we subtracted median RTs in the “two Same” and “two Different” shape trials from median RTs in the “Singular” shape trials. Significant perceptual redundancy gains were found,

Effects of scene and A/B on perceptual redundancy gains

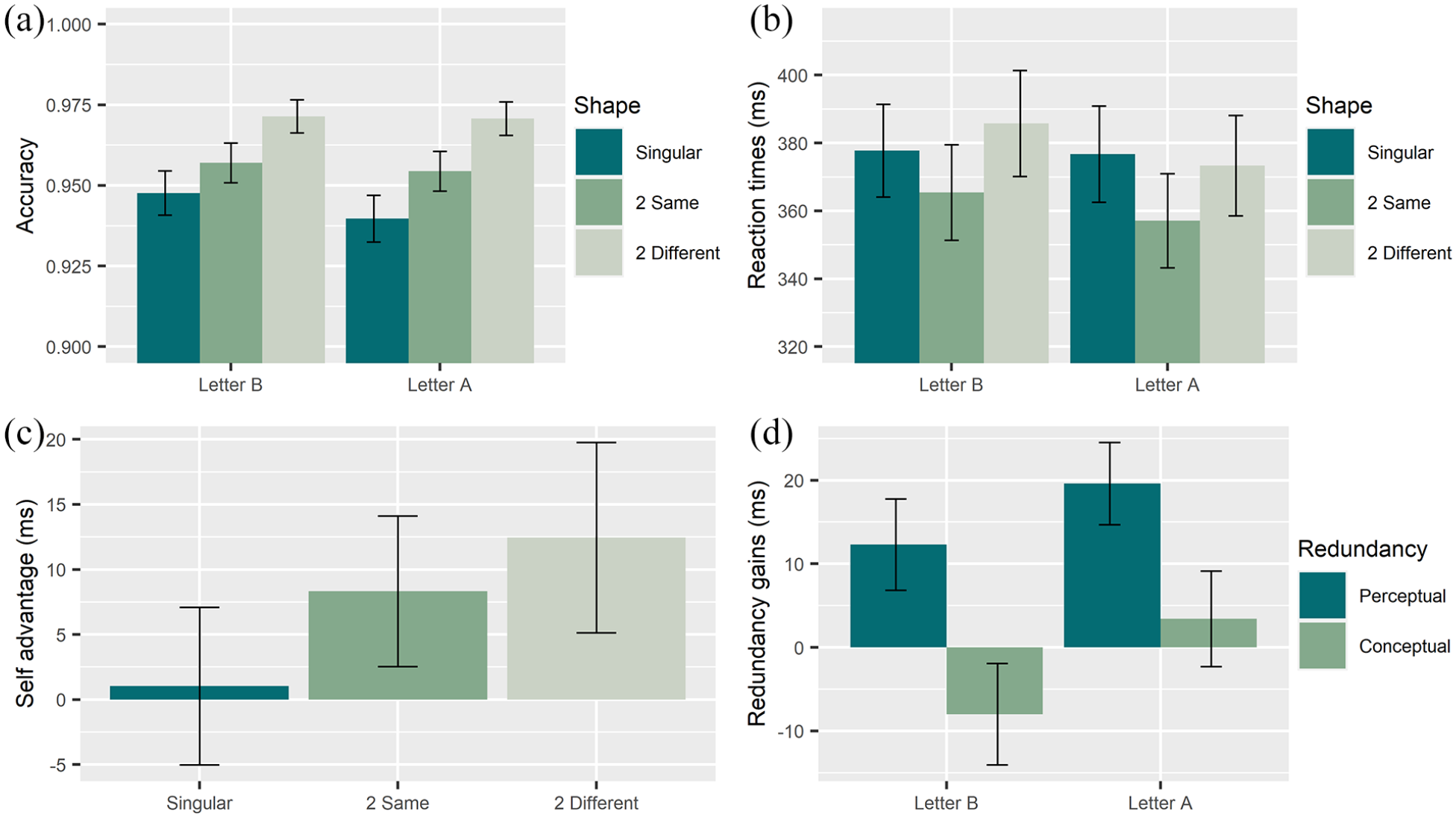

To determine whether an “A over B boost” to perceptual redundancy gains (the RT benefit of seeing two identical shapes) was present and to test whether that boost is greater in natural than in urban contexts, we ran a Scene × Category repeated-measures ANOVA on perceptual redundancy gains (see Figure 4 for a visual representation of these effects). To control for the shapes that were allocated to each condition, we also had a nuisance regressor representing the six between-subjects shape combinations.

Experiment 3 results. (a) Accuracy of participants in correctly categorising shapes, expressed as a percentage. (b) Reaction times in milliseconds for each redundancy type, split by category. (c) Gains in milliseconds, computed as Letter B trials minus Letter A trials, split by redundancy type. (d) Redundancy gains in milliseconds, computed by subtracting perceptually redundant trials (two Same) and conceptually redundant trials (two Different) from lone-stimulus trials sample by sample (equal samples). Error bars represent 95% CI.

Significant main effects were found for Scene

Because the main effect of Category and its interaction with Scene were only marginally significant, we ran a Bayesian repeated-measures ANOVA with the same design to further understand their effects on the data. We found anecdotal evidence for an interaction between Scene and Category (BF = 1.457) and anecdotal evidence against an effect of Category (BF = .772). These results suggest that the data are insensitive to the hypothesis that Category, and its interaction with Scene, affects RTs during perceptual redundancy.

Effects of scene and A/B on conceptual redundancy gains

We repeated the aforementioned analyses but on conceptual redundancy gains (see Figure 4 for a visual representation of these effects). Here, we were looking at the RT benefit of seeing two different shapes from the same category. We found no significant main effect of Scene

So that we could determine whether we found evidence for

Differences in redundancy × category interaction between Experiment 2 and Experiment 3

Finally, we explicitly tested the difference in both perceptual and conceptual redundancy between self/other and A/B categorisations. To this end, and for the Experiment 2 and 3 data separately, we subtracted median RTs in the “two Same” trials from median RTs in the “Singular” trials at each level of Category and Scene using only correctly categorised trials. With these raw redundancy gains/losses in milliseconds, we further subtracted “Gains: Perceptual, Category: Self” from “Gains: Perceptual, Category: Other” for both natural and urban scene trials. The same calculations were made separately for conceptually redundant trials. Then, collapsing over Scene, an independent-samples t-test using Welch’s method was conducted on these median-RT difference scores. No significant difference between Experiment 2,
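The difference-score construction above can be sketched in pandas. The long-format column names are assumptions, and first/second default to Other minus Self (for Experiment 3 they would be B minus A, matching the figure panels). The hypothetical compare_experiments helper then applies Welch’s test to one redundancy type across two experiments.

```python
import pandas as pd
from scipy import stats


def category_effect(gains, first="Other", second="Self"):
    """Per-participant category effect on redundancy gains, averaged over Scene.

    gains: long table with hypothetical columns participant, redundancy,
    category, scene, gain_ms (redundancy gain in ms for that cell).
    Returns one row per participant and one column per redundancy type,
    holding the mean over scenes of gain[first] - gain[second].
    """
    wide = gains.pivot_table(index=["participant", "redundancy", "scene"],
                             columns="category", values="gain_ms")
    diff = wide[first] - wide[second]
    return diff.groupby(level=["participant", "redundancy"]).mean().unstack("redundancy")


def compare_experiments(effect_a, effect_b, redundancy):
    """Welch's t-test on one redundancy type's category effect across experiments."""
    return stats.ttest_ind(effect_a[redundancy].dropna(),
                           effect_b[redundancy].dropna(), equal_var=False)
```

With Experiment 2 and Experiment 3 tables, compare_experiments(category_effect(exp2), category_effect(exp3, "B", "A"), "perceptual") would give the perceptual comparison; the conceptual one only changes the last argument.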

We followed up these null results with two Bayesian t-tests. A uniform prior was used, with 0 as the lower bound on the difference and 3.835 (perceptual) or 9.417 (conceptual) as the upper bound (taken as the mean self/other difference during perceptual and conceptual redundancy trials, respectively, in Experiment 2). Evidence for a difference between Experiments 2 and 3 in self-related gains was insensitive for both perceptual (BF = 1.252) and conceptual (BF = .853) redundancy.
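One common way to compute a Bayes factor with a bounded uniform prior under H1 and a point null at 0 is to summarise the data as a normal likelihood (observed mean difference and its standard error) and integrate that likelihood over the prior. The Python sketch below does this numerically with SciPy; the text does not report which software was used, so this is an illustration under those assumptions rather than the authors’ computation.

```python
from scipy import integrate, stats


def bf_uniform_prior(observed_diff, se, lower, upper):
    """BF10 for a mean difference, assuming a normal likelihood with mean
    observed_diff and standard error se. Under H1 the true difference is
    uniform on [lower, upper]; under H0 it is exactly zero."""
    if upper <= lower:
        raise ValueError("upper must exceed lower")
    density = 1.0 / (upper - lower)  # height of the uniform prior
    marginal_h1, _ = integrate.quad(
        lambda theta: stats.norm.pdf(observed_diff, loc=theta, scale=se) * density,
        lower, upper)
    likelihood_h0 = stats.norm.pdf(observed_diff, loc=0.0, scale=se)
    return marginal_h1 / likelihood_h0
```

For the perceptual comparison the call would take the form bf_uniform_prior(observed_diff, se, 0.0, 3.835), with the observed difference and its standard error taken from the Welch comparison. When the standard error is large relative to the prior’s range, the result stays near 1, which is what “insensitive” evidence looks like.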

Experiment 3 observations

In the absence of any observable effect of self-bias on redundancy gains using the paradigms in Experiments 1 and 2, Experiment 3 set out to probe the effects of category during conceptual decisions. Because it stayed close to Experiment 2, and thus to previous redundancy paradigms (Sui et al., 2015), no statistical deviations from Experiment 2 were hypothesised, with the focus on conceptual redundancy and category.

Results from Experiment 3 demonstrate that categorising stimuli as A/B or as Self/Other has strikingly similar effects on RTs. These effects emerged alongside a replication of the basic perceptual and conceptual redundancy effects observed in Experiment 2. Because the categorisation task in Experiment 3 was neutral (unrelated to self, reward, and so on), it serves as a control condition that calls into question the interpretation of previous findings as a “self-boost” to perceptual processing.

RTs during conceptual redundancy were not significantly different from those for single-shape exemplars but, replicating Experiment 2, were significantly faster on Category A trials than on Category B trials, showing a clear effect of category during conceptual redundancy.

Consistent with these similarities between Experiments 2 and 3, the difference between experiments in the interaction between category and conceptual redundancy was non-significant, and follow-up Bayesian analyses showed that the evidence for a difference was insensitive. This leaves open the possibility that the observed effect of “self-related” categorisation on RT during conceptual redundancy in Experiments 1 and 2 is driven by perceptual phenomena separate from the hypothesised “self-boost” proposed in previous literature (Cunningham et al., 2008; Ma & Han, 2010; Sui et al., 2012).

General discussion

Overview

This study aimed to build on the redundancy paradigm, which probes perceptual/conceptual processing and its relation to self-bias, by superimposing the paradigm over different environmental scenes. Perceptually redundant (two same shape) stimuli were indeed shown to effect a

Based on these findings, our interpretation of the results focuses on two issues: the viability of the redundancy paradigm in its current form and the validity of the self-bias manipulation. We also note methodological details that may have influenced the data.

Viability of the redundancy paradigm

Our current findings suggest that stimuli presented under perceptual redundancy are processed faster but are unaffected by self-/other-relatedness. The perceptual redundancy gains observed in these experiments are in line with previous literature (Miller, 1982; Sui et al., 2015; Sui & Humphreys, 2015).

An alternative explanation for these gains could lie in saccadic eye movements. Lone and conceptually redundant trials may prompt saccades that perceptually redundant trials do not require. In manual reaction paradigms, RTs are indeed faster under “resting eye” conditions than after a saccade (Baedeker & Wolf, 1987). However, saccadic RTs are also shorter for redundant signals, possibly because of increased saccadic peak velocity under redundancy (Turatto & Betta, 2006). Because saccadic movements were not controlled in these experiments, no firm claims can be made. Participants were instructed to keep their gaze on a central fixation cross and use their peripheral vision, but adherence to this instruction could not be verified in the current experiments.
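As a concrete illustration of a median-based measure, a redundancy gain can be computed as the median RT for lone stimuli minus the median RT for redundant pairs. The sketch below assumes per-participant lists of RTs in milliseconds. The function name and the RT values are hypothetical and are not taken from the present data.

```python
from statistics import median


def redundancy_gain(lone_rts, redundant_rts):
    """Redundancy gain (ms) = median lone-stimulus RT - median redundant-pair RT.

    A positive value is a gain (redundant pairs categorised faster);
    a negative value is a cost (redundant pairs categorised slower).
    """
    return median(lone_rts) - median(redundant_rts)


# Hypothetical RTs (ms) for one participant, for illustration only.
lone = [512, 530, 547, 561, 590]         # one shape
perceptual = [489, 501, 515, 528, 560]   # two identical shapes
conceptual = [540, 556, 571, 585, 610]   # two different shapes, same category

print(redundancy_gain(lone, perceptual))  # 547 - 515 = 32 (a gain)
print(redundancy_gain(lone, conceptual))  # 547 - 571 = -24 (a cost)
```

With this sign convention, gains and costs are directly comparable. A negative value corresponds to the kind of conceptual redundancy cost reported for Experiment 1. Per-participant values of this kind could then be entered into group-level comparisons.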

Conceptual redundancy gains were not seen in any of the three experiments, contrary to previous studies using this paradigm (Sui et al., 2015; Sui & Humphreys, 2015). Instead, a significant increase in RTs relative to lone-stimulus trials was observed in Experiment 1. As such, considerations outside the effects of

On these interpretations of the current data, the attempt to replicate the redundancy paradigm with added background images was unsuccessful. To address the hypotheses generated by this discussion, more research is required into:

1 The effect of saccadic movements during lone-stimulus trials and its relationship to RTs during perceptually redundant trials.

2 The replicability of

Validity of the “self-bias” manipulation

While we found no evidence for effects of “self-relatedness” on perceptual redundancy gains, effects under conceptual redundancy

More problematic are the results of Experiment 3 (A/B). If the category effects reflected self-relatedness, replacing self/other categorisations with A/B should have eliminated any effect of categorisation bias on raw processing speed in conceptually redundant trials. Instead, the main effect of category on processing speed closely resembled that seen in Experiment 2 (“self/other”). The Bayesian evidence regarding a difference between the two experiments was, however, insensitive.

The notion that “self-prioritisation effects” (SPEs) influence processing speed is contested within the literature. There is concern that the evidence for self-prioritisation of arbitrary stimuli is “limited”. There is also concern that a self-related bias in perceptual processing, driven by a specific neural network, is not well established at present (see Golubickis & Macrae, 2022, for a review). For example, Navon and Makovski (2021) found that self-prioritisation effects in a memory task were elicited not by the representation of self but by the order in which the concepts of “Self” and “Other” were presented.

Notably, each experiment in this article presented the “Self”/“A” category and its shape allocations before the “Other”/“B” allocations. From this perspective, a potential driver of the observed categorisation effect is attentional priority. In this scenario, the letter A, presented before the letter B, is treated as a preferential target, just as the notion of self would be favoured over the notion of other. These implicit preferences would bias the goal-driven behaviour of the participant during a categorisation exercise.

Changes in behavioural goals have been shown to shape the way different stimuli involuntarily capture attention (Serences et al., 2005; Wolfe et al., 1989). For example, Caughey et al. (2021) changed task instructions from target-shape associations (self/other) to shape identity (square/circle) or location (above/below fixation), and SPEs disappeared. Similarly, manipulating the perceived relevance of self/other associations in shape label-matching tasks determines whether self-prioritisation exerts its effect (Golubickis et al., 2020; Woźniak & Knoblich, 2022). The influence of response bias should not be underestimated in the context of the present experiments (Constable et al., 2019; Golubickis et al., 2018).
The extent of categorisation effects differed between Experiment 1 and Experiments 2 and 3. Experiments 2 and 3 allowed button responses to be memorised without the need to remember category associations (i.e., square/circle = left, triangle/diamond = right). The results in this article should be viewed with this in mind. Self/other and A/B differences appeared during conceptual redundancy but not during perceptual redundancy. This suggests that attentional priority towards preferential stimuli is unlikely to be driven by purely bottom-up processes. Indeed, evidence from this article suggests that prioritisation of arbitrary stimuli is not an obligatory bottom-up process. Rather, it is a task-dependent phenomenon driven by existing attentional processes (Golubickis & Macrae, 2022).
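The difference between the two response schemes can be sketched as follows:

- **Fixed mapping** (described for Experiments 2 and 3): the correct key follows from the shape alone.
- **Per-trial randomised mapping** (as in Experiment 1): the shape's category must be retrieved before the response can be selected.

The shape-to-category assignment, the on-screen label layout and all names below are hypothetical assumptions for illustration. They are not the article's implementation.

```python
import random

# Hypothetical shape-to-category assignment (illustration only; which pair
# belonged to which category, and any counterbalancing, is assumed here).
CATEGORY_OF_SHAPE = {
    "square": "Self", "circle": "Self",
    "triangle": "Other", "diamond": "Other",
}

# Fixed mapping as described for Experiments 2 and 3:
# square/circle = left, triangle/diamond = right.
FIXED_KEY = {"square": "left", "circle": "left",
             "triangle": "right", "diamond": "right"}


def fixed_mapping_response(shape):
    """Correct key under a fixed mapping: a direct shape-to-key lookup.

    The category is never consulted, so it need not be retrieved.
    """
    return FIXED_KEY[shape]


def randomised_mapping_response(shape, rng):
    """Correct key when category labels swap sides at random on each trial.

    Assumption: each trial shows the two category labels on the left and
    right in random order, so the participant must retrieve the shape's
    category and then locate that label.
    """
    category = CATEGORY_OF_SHAPE[shape]          # step 1: retrieve category
    left_label = rng.choice(["Self", "Other"])   # this trial's label layout
    key = "left" if category == left_label else "right"  # step 2: locate label
    return key, left_label
```

Only the second function has to consult `CATEGORY_OF_SHAPE`. That lookup is the extra retrieval step that a fixed mapping lets participants bypass.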

In their review, Sali and colleagues (2016) propose the existence of mutually inhibitory brain regions that represent information of a similar type. This competition between sets of neurons leads to a bias in which the “winner” imposes its representation of a concept on the mind and takes attentional priority (Sali et al., 2016). Further work on the “Self/Other” label-matching method used in the redundancy paradigm has produced results similar to Experiment 3. That work used non-self-relevant labels such as “SNAKE” in place of “SELF”. Its results suggest that as-yet-unidentified arbitrary associations have a significant impact on processing speed and confound the effect of self-relevance (Wade & Vickery, 2017).

The significant shape allocation effects seen throughout all three experiments point to the driver of the observed category effects. In Experiment 1, the significant main effect of categorisation on RTs during conceptual redundancy was driven by shape allocation, with an effect size close to 1 (.977). This suggests that responses may have relied on preferential targets in the high-load response paradigm.

The main effect of category remained in Experiment 2, even though the data were insensitive to an effect of conceptual redundancy on RTs. This is likely because the only change to the experiment was the level of difficulty, or load, that participants experienced during the response paradigm. Interestingly, the effect size of shape allocation dropped sharply (.612). Likewise, shape allocation remained significant in Experiment 3, with effect sizes similar to those in Experiment 2 (.606 and .581).

Both response paradigm type (enhanced/simple) and categorisation type (self-related/arbitrary letters) could then be treated as indicators of difficulty. On this view, reliance on preferential effects/attentional priority of shapes decreases as perceptual load and difficulty decrease. Numerous theories address inattentional blindness to low-priority stimuli under high perceptual load (see Lavie et al., 2014, for a review). These theories can account for the categorisation effect without the need for a perceptual advantage driven by self-bias. We propose that effects of shape allocation/attentional priority are only significant when processing speed is reduced relative to stimuli presented alone. More succinctly, during trials of high perceptual load, the need to rely on alternative perceptual mechanisms is more pronounced.
Taken together, attentional priority and perceptual load paint a picture that neatly describes the interaction between conceptual redundancy deficits and categorisation preferences within a purely attentional framework.

Methodological considerations

The experiments in this article found no evidence of an effect of self-relevance on response times during redundancy gains, but they do not conclusively establish that such effects are absent. The three experiments do, however, use substantially larger samples than are typical in the field. They therefore raise concern over the replicability of “self-bias” and redundancy gains.

These experiments were not direct replications of the redundancy paradigm used in previous research. We therefore cannot conclusively rule out that the failure to replicate was caused by the natural and urban background images. These images were originally intended to serve as a unique contribution to the understanding of self-relevance and redundancy gains.

Several observations nonetheless argue against this possibility:

- Perceptual redundancy gains were successfully replicated across all three experiments, which suggests that the background had no effect on the redundancy effect itself.
- The background is unlikely to have interfered with the self-prioritisation manipulation or with the learned associations, because participants had no trouble learning the shape associations (accuracy >90% in all three studies).
- Category effects did not differ between Experiments 2 and 3, which calls into question the possibility that background images alter the way self-related stimuli are processed.

We therefore conclude that adding a background to the redundancy paradigm is unlikely to have driven the present results. To validate this claim, further work should directly compare background and no-background conditions using sample sizes comparable to those in the current work.

The backgrounds were intended to add an extra dimension to a paradigm whose effects have been widely replicated (Sui et al., 2015; Sui & Humphreys, 2015). The expectation was that any differences in Scene could be interpreted against a stable foundation of well-replicated results. Redundancy and self-bias, however, had inconsistent effects on processing speed here. Any effect of background is therefore difficult to interpret without considering that such effects may be sporadic. As such, no firm conclusions can be drawn regarding the effect of natural and urban environments on processing speed within the redundancy paradigm.

The backgrounds also have implications for any attempt to replicate analyses from previous investigations of redundancy effects, including fitting the independent race model (Sui et al., 2015; Sui & Humphreys, 2015). The different photographs on each trial likely violate that model's assumption that RTs are drawn from the same underlying distribution. We therefore took a more general approach using median RTs (Sui et al., 2015; Yankouskaya et al., 2012, 2017).
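For reference, the race model test that was set aside here is usually based on Miller's (1982) race model inequality. The inequality compares the cumulative RT distribution for redundant targets with the sum of the distributions for each target presented alone.

The sketch below is a simplified, per-participant version evaluated at hypothetical probe times, and it is illustrative only. As noted above, trial-unique photographs likely violate the assumption that RTs come from a common underlying distribution. Published procedures also commonly evaluate the inequality at RT percentiles and test violations across participants.

```python
from bisect import bisect_right


def make_ecdf(rts):
    """Return the empirical CDF F(t) = proportion of RTs <= t."""
    ordered = sorted(rts)
    n = len(ordered)
    return lambda t: bisect_right(ordered, t) / n


def race_model_violations(redundant, single_a, single_b, probe_times):
    """Probe times at which Miller's (1982) race model inequality fails.

    The inequality states F_AB(t) <= F_A(t) + F_B(t) for every t, where
    F_AB is the RT distribution for redundant targets and F_A, F_B are the
    distributions for each target presented alone. Values above this bound
    count as evidence against race models (commonly read as coactivation).
    """
    f_ab = make_ecdf(redundant)
    f_a = make_ecdf(single_a)
    f_b = make_ecdf(single_b)
    return [t for t in probe_times if f_ab(t) > f_a(t) + f_b(t)]
```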

In this light, our proposal that self-bias has a smaller effect than other drivers, such as those proposed in this article, requires further research. This research should use a more tightly controlled paradigm that places stricter emphasis on direct replication of previous paradigms (Sui et al., 2015).

Furthermore, the use of “other” rather than “friend” in the association paradigm may have affected the results, as a stranger represents an abstract concept rather than a concrete exemplar. Indeed, neural evidence points to differences in brain activation between self and stranger associations but not between self and friend associations. Perhaps the lack of categorisation differences during perceptual redundancy gains was driven by this methodological choice. That said, two findings are a strong cause for concern regarding the replicability of both redundancy gains and self-bias:

- the absence of significant conceptual redundancy “gains” across all three experiments;
- the interaction of conceptual redundancy with category.

Finally, the findings of perceptual redundancy gains, and our interpretation of them, are limited by the inability to track saccades during the experiment. An alternative to the online version of this paradigm would be one that includes eye-tracking equipment to ensure strict adherence to fixation on the central cross. This addition would address concerns that saccades drive perceptual redundancy gains. It would also allow conceptual redundancy costs to be probed further.

Conclusion

In conclusion, the pattern of results from these three experiments casts doubt on two theories surrounding redundancy “gains”:

(a) physically different but conceptually redundant stimuli are processed faster than lone stimuli presented on either side of the visual field;

(b) self-related stimuli are processed faster than other-related stimuli because of self-bias.

Instead, the data show that under high cognitive load, conceptual categorisations are made more slowly than low-load categorisations of identical stimuli. Furthermore, any conceptual bias on processing speed is less likely to reflect self-bias than shape preferences driven by attentional priority, which decreases with cognitive load. Finally, this article is a call for more research into self-bias in the redundancy paradigm. Preferential biases towards specific categories in this paradigm appear less likely to reflect self-related processes than other processes or preferences that remain to be clarified.


Acknowledgements

The authors thank Marketa Borkovcová and Isabella Santoro for assisting in the setup of the data-collection process.

Author contributions

Joel Patchitt: Data curation, Formal analysis, Investigation, Methodology, Project administration, Software, Validation, Visualisation, Writing—original draft, Writing—review & editing. Maxine Sherman: Conceptualisation, Data curation, Formal analysis, Software, Supervision, Validation, Writing—review & editing. Hugo Critchley: Conceptualisation, Funding acquisition, Resources, Supervision, Writing—review & editing.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Sussex Centre for Consciousness Science (JP, MTS, and HC).