Abstract

Online surveys are a popular method for collecting data in the social sciences. Despite their cost-effectiveness, concerns regarding the legitimacy of data from online surveys are increasing. One such concern is fraudulent responses or “spam” by malicious agents intentionally deceiving the survey process to gain monetary incentives or sway research results. The research costs of “spam” (their influence on research conclusions and their threat to scientific integrity) are not well understood. Here we show the differences in financial and research costs of spam using data from an online survey of transportation workers that was cleaned using a stringent battery of spam detection techniques that utilized commercially available features and a custom spam detection algorithm. We found that we would have wasted about 73% of our budget on incentivizing spammers if we had stopped data collection upon reaching the intended sample size. We also found significant differences in research conclusions related to the relationships between key organizational constructs, including affective commitment, job satisfaction, and turnover intention, between subsamples with and without spam. Our results demonstrate that researchers who are unaware of spam or do not adequately clean their data may spend substantially more monetary and human resources, as well as derive misleading conclusions. This study highlights the importance of survey researchers being cognizant of spam responses and employing robust spam detection techniques to ensure the scientific integrity of non-probability online survey research.

Introduction

Researchers are ethically responsible for conducting research and disseminating scientific findings that are supported by reliable and valid data and accurate interpretations of the results (American Sociological Association, 2018). The integrity of scientific research is in jeopardy given the proliferation of disinformation affecting data quality (Pozzar et al., 2020). Online survey research, where the distribution of survey instruments and collection of participant responses are conducted through the Internet, has grown into a dominant data collection approach for quantitative studies across the social sciences and various other disciplines (Chandler and Shapiro, 2016; Daikeler et al., 2020). The ubiquity of online surveys as a data collection tool has introduced a potential challenge to maintaining the integrity of scientific research and has increased the risk of disinformation via fraudulent responses (Griffin et al., 2022; Levi et al., 2022).

Many online surveys rely on non-probability sampling methods, where members of the target population have an unequal and unknown probability of being sampled (Crano et al., 2015; Vehovar et al., 2016). A common non-probability approach used in online surveys is convenience sampling, where the sample consists of individuals who are available and willing to participate in the study (Crano et al., 2015). Convenience samples in online survey research are often obtained using social media postings, emails, and online panels (Vehovar et al., 2016). Such non-probability sampling-based online surveys offer benefits of cost-effectiveness, convenience, and the ability to engage hard-to-reach populations (Das et al., 2018; Dillman et al., 2014). However, they suffer from a potential lack of generalizability of results to the target population (Vehovar et al., 2016) and other methodological challenges such as low response rates (though response rates for surveys regardless of mode of administration have declined over the past two decades) and concerns about data quality (Daikeler et al., 2020; Manfreda et al., 2008). Traditionally, data quality concerns in online survey research have focused on data compromised by careless responders (Arthur et al., 2021; Meade and Craig, 2012; Nichols and Edlund, 2020). In recent years, concerns have increased as malicious agents have spread fraudulent responses and misinformation through online surveys (Bybee et al., 2022; Levi et al., 2022). With researchers continuing to accumulate scientific knowledge through online surveys using the convenience sampling approach, it is imperative that researchers devote attention to understanding the impact of fraudulent survey responses on both research budgets and the integrity of science itself. This study presents an investigation of the financial costs (e.g. monetary participation incentives) and research costs (e.g. misleading research conclusions) of fraudulent responses in a large online survey utilizing a convenience sampling approach.

Fraud in online surveys

Human fraud and automated bots are the two main types of fraud in online surveys (Simone et al., 2024). Human fraud refers to an invalid human response where a participant intentionally deceives (or “scams”) the survey process by providing phony answers, assuming fictitious identities, or attempting to take the survey multiple times using different fake profiles (Zhang et al., 2022). Automated bots (or “survey bots”) refer to computer-generated responses that are algorithmically programmed to automatically respond to surveys, often multiple times, with fake data (Kennedy et al., 2021; Storozuk et al., 2020). Automated bots are relatively easy to create and deploy, exacerbating the threat to the integrity of online survey research (Dupuis et al., 2019). In this study, we refer to these fraudulent responses collectively as “spam” and the responders as “spammers.” Spammers attempt to deceive researchers through fabricated information or multiple completions to gain survey incentives (e.g. financial compensation) or to sway survey results (Griffin et al., 2022; Pozzar et al., 2020). Although research fraud is not unique to online surveys, as inauthentic participants have also been reported in qualitative research studies (Dougherty, 2021; Hoskins et al., 2025; Panicker et al., 2024), the rise of automated bots presents a much greater challenge in online survey research.

Fraudulent responses appear to be increasingly prevalent in online surveys, with several studies identifying most of their data as spam, especially those using non-probability sampling strategies (Bell and Gift, 2023; Buchanan and Scofield, 2018; Bybee et al., 2022; Griffin et al., 2022; Ruby et al., 2025). This increased prevalence results from technological advances, with modern survey bots able to circumvent many conventional data quality checks used in online surveys. For example, survey bots can effectively circumvent instructed response attention checks (e.g. “Please select ‘Strongly agree’ for this question”), which are commonly used to detect inattentive responses (Kennedy et al., 2021). Even complex CAPTCHA (Completely Automated Public Turing test to tell Computers and Humans Apart) tests can be bypassed by bots that utilize advanced techniques like deep learning models (Zhang et al., 2022). Additionally, inexpensive access to virtual private networks has enabled spammers to falsify their IP location and respond to surveys that are otherwise restricted to certain regions (Pozzar et al., 2020). Some researchers analyze responses to open-ended text questions to identify automated spam. However, modern survey bots can generate convincing human-like text responses to open-ended questions by applying natural language processing techniques (Li et al., 2021). This issue will likely be exacerbated by the emergence of bots powered by artificial intelligence (AI) text generators, such as OpenAI’s ChatGPT, which are built on large language models capable of producing contextually relevant and consistent text responses similar to those provided by humans (Sawhney et al., 2025).

Costs of survey spam

There are two primary types of costs associated with spam responses in online survey research. The first is financial costs, which include the resources spent on collecting and incentivizing spam responses. For example, federal funding agencies in the U.S. allocate large amounts of money to research efforts annually. In FY 2023, the National Science Foundation appropriated $7.86 billion for research activities (NSF, 2024), while the National Institutes of Health spent $34.9 billion on extramural research (Lauer, 2024). Researchers have an ethical responsibility to spend research funds responsibly on real participants; if they are not cognizant of spam responses in online surveys, funds will be wasted, and data will be compromised. Prior work has alluded to the financial costs associated with providing participation incentives to fraudulent respondents in online survey research (Levi et al., 2022). However, researchers have not explicitly examined the potential for research funds wasted on spam.

Second, and more importantly, are the research costs, which denote the influence of fraudulent responses on research conclusions and their threat to scientific integrity. Since fraudulent data do not represent the responses (e.g. attitudes, behavior, or opinions) of the target population in online survey research, inferences made from analyzing data contaminated with spam may be invalid and misleading. Griffin et al. (2022) noted that spam in online surveys may be even more harmful for research involving marginalized groups.

Spam responses can produce skewed results (Kennedy et al., 2021) and alter study findings (Bernerth et al., 2021; Chandler et al., 2020), thus contributing to the proliferation of disinformation. Even low rates of inaccurate responses can profoundly bias survey results (Arthur et al., 2021), suggesting that researchers who are not able to counter the more advanced forms of online survey spam risk jeopardizing the scientific integrity of their study results. Past studies have reported the impacts of fraudulent responses by analyzing the differences in frequency of responses (Chandler and Shapiro, 2016), correlations among focal variables (Chmielewski and Kucker, 2020; Pratt-Chapman et al., 2021), reliability and validity indicators of constructs (Chmielewski and Kucker, 2020), and treatment effects and their confidence intervals (Kennedy et al., 2020). However, these studies either did not explicitly examine the impacts of spam on overall research conclusions or relied on specific participant recruitment platforms (e.g. MTurk) for data collection that may be limited in their ability to recruit participants from certain population subgroups.

Hence, failing to adequately address spam responses in online survey research raises serious ethical concerns. For example, misallocation of research funds—particularly public funding—to incentivize spam responses reduces researchers’ public accountability, and misallocation of staff time to manage and analyze fraudulent data takes away valuable expert resources that could otherwise be spent on meaningful research. Publishing findings based on compromised data, even if unintentional, can drive subsequent ill-informed research, leading to further waste of time, effort, and funding by other researchers. Moreover, unreliable and invalid results from compromised data can have dire consequences if used to inform public policy and can significantly deteriorate public trust in the scientific community.

The current study

This study investigates the following research questions:

We answer these questions using a large, convenience sampling-based online survey of U.S. transportation workers. The survey was affected by fraudulent activity and went through a stringent battery of spam detection techniques to identify fraudulent responses. We analyzed the financial costs of spam associated with this survey by estimating the amount of funds that would have been spent on incentivizing spammers if the researchers were unaware of them. We also examined the research costs of spam by empirically comparing the results and inferences for valid responses and spam responses in the survey.

Methods

Online survey

To analyze the costs associated with spam responses, we examined data from an online survey as part of a project funded by the National Science Foundation to investigate the potential workforce impacts of automated vehicles in three transportation industries: trucking, taxi services, and gig driving. Workers in seven occupational groups were recruited to participate in the survey from these industries, including truck drivers, trucking supervisors/dispatchers, trucking owners/managers, gig drivers (ride-hailing or app-based delivery service), taxi drivers, taxi supervisors/dispatchers, and taxi owners/managers. In line with the primary purpose of our study, the original target sample size was 350 for each of the seven industry groups based on the available research budget and a priori power analysis to achieve adequate power to detect medium-to-small effects. The online survey was not originally designed to investigate fraudulent responses; instead, our experience with spam during data collection prompted the post-hoc analysis presented in this study. Details of the survey and a brief data collection timeline are provided below.

The 15–20-minute survey was administered online through Qualtrics, a web-based survey platform, in the U.S. between June 2022 and October 2022. The survey study was approved by the Institutional Review Board (IRB) at Clemson University and Michigan State University. Participants who completed the survey were redirected to a separate “incentive survey” where they were given an option to provide their name and email to receive a $10 Amazon e-gift card as a participation incentive. At the beginning of the survey, participants were told that the survey includes questions about their current job and their perceptions of how fully automated vehicles may affect their jobs and industry. They were also informed that they would be compensated only if they correctly answered the attention-check questions. Given the limited research budget and hard-to-access samples, quota sampling (Battaglia, 2008) was implemented with a target sample size of 350 participants for each of the seven targeted worker groups.

During the data collection period, we recruited survey participants through four approaches. First, we contacted several industry organizations, worker unions, local transportation agencies, taxi companies, and a national gig-driving company to distribute the survey among their networks or employees. Second, we disseminated the survey through the research team’s relevant industry and professional networks. Third, we posted flyers and advertisements on social media platforms (e.g. Reddit, Facebook, LinkedIn). Finally, we placed flyers at truck stops (targeting truck drivers) and on some university campuses in the states of South Carolina, Michigan, and Georgia (targeting students who drove for gig mobility services). Those interested in participating could access the online survey through an anonymous link or by scanning a QR code. The heavy reliance on online convenience sampling strategies led to an unexpected influx of spam responses.

Two universities were involved in this project. The Qualtrics survey was first managed by Clemson University and then switched to Michigan State University. Clemson University collected 3,393 complete responses between June 2022 and July 2022. During the first 10 days of data collection, we identified an influx of spam responses (e.g. bursts of similar responses in a short period of time) and paused data collection to develop techniques for detecting spam responses (see “Spam prevention and detection techniques” section below). Given the extent of spam we experienced, we sought and received additional approval from the IRB to link data from the incentive survey to the main survey responses. The data were linked by matching IP addresses and aligning the start timestamps of the incentive survey with the end timestamps of the main survey. The survey was moved to Michigan State University’s Qualtrics platform as it offered more advanced spam detection features (e.g. RelevantID fraud score and invisible reCAPTCHA). We also updated the information for participants at the beginning of the survey to include that suspicious or low-quality survey responses would not be compensated. We resumed data collection and collected 9,685 completed responses between July 2022 and October 2022 through Michigan State University. Once data collection was complete, all survey responses from both universities were evaluated with a custom spam detection algorithm and a series of manual checks for consistency across the entire sample.
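The linkage step described above (matching IP addresses and aligning the incentive survey's start time with the main survey's end time) can be illustrated with a minimal sketch. The field names (`ip`, `start`, `end`, `id`) and the 120-second window are hypothetical; the study does not report the tolerance it used.

```python
from datetime import datetime, timedelta

# Hypothetical tolerance: the study does not report the window it used.
MAX_GAP = timedelta(seconds=120)


def link_incentive_to_main(main_rows, incentive_rows, max_gap=MAX_GAP):
    """Pair each incentive-survey row with the main-survey row that shares
    its IP address and ended most recently before the incentive survey began."""
    links = {}
    for inc in incentive_rows:
        best_id, best_gap = None, None
        for main in main_rows:
            if main["ip"] != inc["ip"]:
                continue
            gap = inc["start"] - main["end"]
            # The incentive survey opens only after the main survey ends.
            if timedelta(0) <= gap <= max_gap and (best_gap is None or gap < best_gap):
                best_id, best_gap = main["id"], gap
        links[inc["id"]] = best_id  # None means no plausible match was found
    return links


main_rows = [
    {"id": "m1", "ip": "203.0.113.5", "end": datetime(2022, 7, 1, 10, 15, 0)},
    {"id": "m2", "ip": "203.0.113.5", "end": datetime(2022, 7, 1, 11, 0, 0)},
    {"id": "m3", "ip": "198.51.100.7", "end": datetime(2022, 7, 1, 10, 15, 5)},
]
incentive_rows = [
    {"id": "i1", "ip": "203.0.113.5", "start": datetime(2022, 7, 1, 10, 15, 8)},
    {"id": "i2", "ip": "192.0.2.9", "start": datetime(2022, 7, 1, 10, 16, 0)},
]
links = link_incentive_to_main(main_rows, incentive_rows)
```

Unmatched incentive entries (mapped to `None` here) would need manual review rather than automatic rejection, since shared or changing IP addresses can break the match for genuine respondents.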

Spam prevention and detection techniques

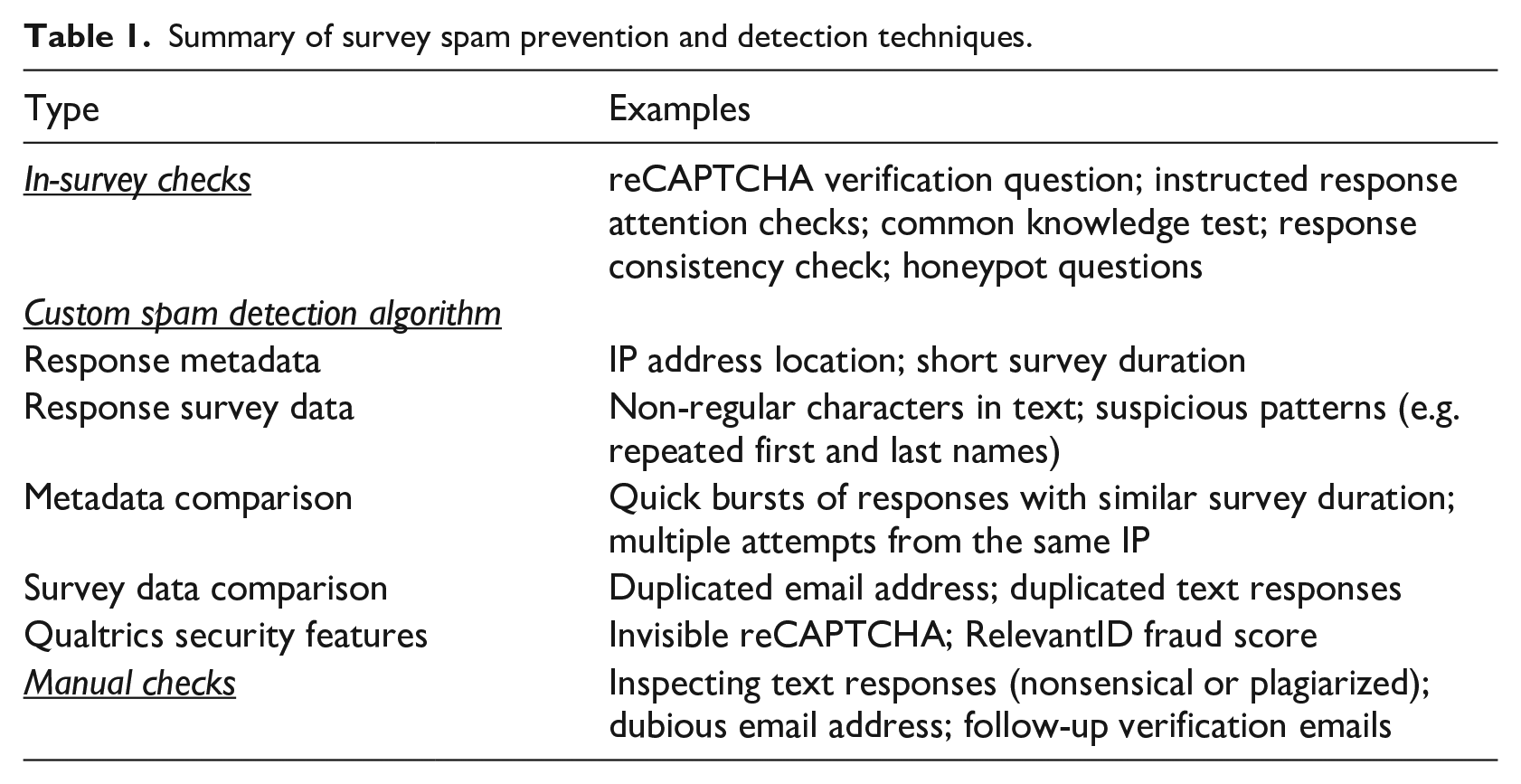

The existing literature on survey spam detection suggests that a combination of common data quality checks and preventative measures serves as the best approach for detecting survey spam, as no single technique appears to capture all types of online survey fraud (Griffin et al., 2022; Lawlor et al., 2021; Simone et al., 2024; Storozuk et al., 2020; Zhang et al., 2022). To prevent low-quality and fraudulent responses, our research team implemented in-survey checks when the survey was administered. We also employed strategies to detect fraudulent responses, including a custom spam detection algorithm and manual checks. Using these techniques, we classified the completed survey responses as “valid” or “spam.” Table 1 presents a summary of the implemented methods. An overview of the spam detection process is provided in Supplemental Figure 1.

Table 1. Summary of survey spam prevention and detection techniques.

Step 1: In-survey checks

The survey contained several types of questions to identify inattentive, careless, or fraudulent responses (Abbey and Meloy, 2017; Simone et al., 2024). First, participants had to successfully respond to a reCAPTCHA verification question to continue the survey. Within the survey, participants had to correctly respond to two Likert-style instructed response attention checks (e.g. “Please choose ‘Strongly agree’”), one of the two randomly selected instructed response items (e.g. “Please type ‘99’ in the text field below”), and one of the two randomly selected common knowledge test questions (e.g. “Please enter the result of 2 + 2”). Randomization was added to reduce bots’ ability to memorize the in-survey check questions. A response consistency check was included where participants reported the industry sector of their primary occupation toward the beginning and end of the survey. The survey included two “honeypot” questions, which were visually hidden from human participants but were detectable by bots (Simone, 2019). The survey immediately ended for participants who failed any of these checks, and their responses were considered incomplete and removed from further analysis.
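As an illustration of how these in-survey checks combine into a single pass/fail gate, consider the following minimal sketch. All field names and expected answers are hypothetical placeholders, not the study's actual Qualtrics configuration.

```python
def passes_in_survey_checks(resp):
    """Return True only if the response passes every in-survey check.

    All field names and expected answers are illustrative assumptions.
    """
    checks = [
        resp["recaptcha_passed"],                               # reCAPTCHA gate
        resp["attention_1"] == "Strongly agree",                # Likert attention check 1
        resp["attention_2"] == "Strongly disagree",             # Likert attention check 2
        resp["typed_item"].strip() == "99",                     # instructed text entry
        resp["knowledge_item"].strip() == "4",                  # "result of 2 + 2"
        resp["industry_start"] == resp["industry_end"],         # consistency check
        resp["honeypot_1"] == "" and resp["honeypot_2"] == "",  # hidden fields left blank
    ]
    return all(checks)


human = {
    "recaptcha_passed": True,
    "attention_1": "Strongly agree",
    "attention_2": "Strongly disagree",
    "typed_item": "99",
    "knowledge_item": "4",
    "industry_start": "Trucking",
    "industry_end": "Trucking",
    "honeypot_1": "",
    "honeypot_2": "",
}
# A bot that fills every field it finds, including a hidden honeypot field.
bot = dict(human, honeypot_1="n/a")
```

Here `passes_in_survey_checks(human)` succeeds while `passes_in_survey_checks(bot)` fails on the honeypot alone, mirroring the rule that failing any single check ends the survey.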

Step 2: Custom spam detection algorithm

Our research team developed a custom spam detection algorithm that classified each complete response as either “presumed-valid” (no apparent reason for suspicion of spam), “low-potential-spam” (indicating a mild level of suspicion), or “high-potential-spam” (indicating a strong basis for suspicion). The algorithm consisted of multiple filters that either checked metadata and survey data within individual responses or compared them between all survey responses. We included two Qualtrics fraud detection premium add-ons (Qualtrics, 2023): Google’s invisible reCAPTCHA technology (tracks user actions and behaviors) and Imperium’s RelevantID fraud score (comparison of user metadata to other online activity using digital fingerprinting) during the second phase of data collection. The details for filters used to flag “high-potential-spam” and “low-potential-spam” responses are presented in Supplemental Table 1.

Previous studies have used similar strategies to classify responses with an increasing level of suspicion of fraud (Goodrich et al., 2023; Miner et al., 2012). However, their filters were mostly limited to IP address, survey duration, or suspicious responses to knowledge-based questions. All “low-potential-spam” responses were manually checked, as detailed in the next section. The remaining “high-potential-spam” responses were grouped as “spam.”
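The tiered logic of such an algorithm can be sketched as follows. This is not the study's algorithm: the actual filters are listed in Supplemental Table 1, and the three filters, their severity tiers, and the thresholds below (`min_seconds`, `fraud_cutoff`) are hypothetical stand-ins chosen only to show one within-response check, one between-response check, and the precedence of strong over mild flags.

```python
from collections import Counter


def classify_responses(responses, min_seconds=300, fraud_cutoff=30):
    """Assign each response one of three suspicion tiers.

    Filters and thresholds are hypothetical, not the study's actual ones.
    """
    # Between-response filter: count how many responses share each IP address.
    ip_counts = Counter(r["ip"] for r in responses)
    labels = {}
    for r in responses:
        high_flags = [
            r["fraud_score"] >= fraud_cutoff,  # within-response metadata check
            ip_counts[r["ip"]] > 1,            # duplicate IP across responses
        ]
        low_flags = [
            r["duration_s"] < min_seconds,     # unusually fast completion
        ]
        if any(high_flags):
            labels[r["id"]] = "high-potential-spam"
        elif any(low_flags):
            labels[r["id"]] = "low-potential-spam"
        else:
            labels[r["id"]] = "presumed-valid"
    return labels


responses = [
    {"id": "a", "ip": "198.51.100.1", "duration_s": 900, "fraud_score": 5},
    {"id": "b", "ip": "198.51.100.2", "duration_s": 120, "fraud_score": 10},
    {"id": "c", "ip": "198.51.100.3", "duration_s": 800, "fraud_score": 60},
    {"id": "d", "ip": "198.51.100.4", "duration_s": 700, "fraud_score": 0},
    {"id": "e", "ip": "198.51.100.4", "duration_s": 650, "fraud_score": 0},
]
labels = classify_responses(responses)
```

Keeping a separate mild tier, rather than a binary flag, routes borderline cases to human review instead of discarding them outright.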

Step 3: Manual checks

As the final step for data that had not been removed during the first two steps, we performed a series of manual checks to classify “presumed-valid,” “low-potential-spam,” and certain “high-potential-spam” responses as either “valid,” “potential-spam,” or “spam.” A team member manually checked for suspicious patterns in responses to the three open-ended text response questions and email addresses across respondents. The survey included three text response items that inquired about participants’ day-to-day job responsibilities, factors they would consider if changing jobs, and their anticipated outcomes of autonomous vehicle adoption in the transportation industry. To assist the researcher in keeping track of suspiciously similar responses, we utilized a fuzzy string-matching algorithm implemented in the “fuzzywuzzy” Python package to flag similar text responses (Cohen, 2022). We also inspected for nonsensical (e.g. “ghsjehqb”) or inadequate (e.g. “There is no”) open-ended text responses, plagiarized text responses from the Internet, as well as suspicious email address patterns (e.g. “01email@mail.com” and “02email@mail.com”).
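To illustrate the kind of assistance such tooling provides, the sketch below flags near-duplicate text responses and numbered email patterns. It uses `difflib.SequenceMatcher` from the Python standard library as a stand-in for the “fuzzywuzzy” package, so the similarity scores will not match that package exactly; the 0.9 threshold and the digit-masking rule for emails are assumptions made for this example.

```python
import re
from collections import defaultdict
from difflib import SequenceMatcher
from itertools import combinations


def flag_similar_texts(texts, threshold=0.9):
    """Return pairs of response IDs whose normalized texts are near-duplicates.

    The threshold is an assumed value, not one reported by the study.
    """
    norm = {rid: " ".join(t.lower().split()) for rid, t in texts.items()}
    flagged = []
    for (a, ta), (b, tb) in combinations(norm.items(), 2):
        if SequenceMatcher(None, ta, tb).ratio() >= threshold:
            flagged.append((a, b))
    return flagged


def flag_patterned_emails(emails):
    """Group addresses that are identical once digit runs in the local part are masked."""
    groups = defaultdict(list)
    for e in emails:
        local, _, domain = e.lower().partition("@")
        groups[(re.sub(r"\d+", "#", local), domain)].append(e)
    return [g for g in groups.values() if len(g) > 1]


texts = {
    "r1": "I drive a truck and deliver freight across several states.",
    "r2": "I drive a truck and deliver freight across several states!",
    "r3": "Dispatching taxis and handling customer calls.",
}
similar = flag_similar_texts(texts)
patterned = flag_patterned_emails(
    ["01email@mail.com", "02email@mail.com", "jane.doe@example.org"]
)
```

Both helpers only surface candidates for the reviewer; the final judgment on each flagged pair or group remains manual, as in the procedure described above.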

A verification email was sent to (1) all respondents whose responses were deemed “potential-spam” and (2) any “high-potential-spam” respondents who contacted the research team inquiring about their survey incentive. The email asked respondents to confirm three pieces of information (i.e. age, educational attainment, industry), which were then compared to their original survey responses, allowing for a reasonable deviation. Small differences in age (e.g. 1 year older than in the original data) were ignored, as were minor wording differences in industry and education responses. If the information was verified, the survey response was classified as “valid”; otherwise, it was classified as “spam.” These manual checks were time-intensive but crucial for enhancing confidence in the data.
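The comparison rule can be sketched as below. The one-year age tolerance follows the example given in the text; the text normalization (case, hyphens, whitespace) is only a crude, assumed proxy for the human judgment applied to “minor wording differences,” and the field names are hypothetical.

```python
def verify_respondent(original, reply, age_tolerance=1):
    """Compare an emailed reply with the original survey answers.

    Returns "valid" when all three fields agree within tolerance, else "spam".
    """
    def norm(text):
        # Ignore case, hyphens, and extra whitespace (assumed rule).
        return " ".join(text.lower().replace("-", " ").split())

    age_ok = abs(int(reply["age"]) - int(original["age"])) <= age_tolerance
    edu_ok = norm(reply["education"]) == norm(original["education"])
    ind_ok = norm(reply["industry"]) == norm(original["industry"])
    return "valid" if (age_ok and edu_ok and ind_ok) else "spam"


original = {"age": 34, "education": "High school", "industry": "Trucking"}
consistent_reply = {"age": "35", "education": "high-school", "industry": "trucking"}
inconsistent_reply = {"age": "45", "education": "High school", "industry": "Trucking"}

result_ok = verify_respondent(original, consistent_reply)
result_bad = verify_respondent(original, inconsistent_reply)
```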

Analysis of financial costs of spam

To underscore the financial costs of online survey spam, we calculated the hypothetical amount of funds that would have erroneously been spent on spam responses. This calculation is based on the intended sample size of the study, assuming we had relied solely on conventional data quality checks (i.e. in-survey checks). Since our original intent was to incentivize the first 350 respondents in each of the seven industry groups who passed all typical survey methods to detect fraudulent responses, we stratified the data to capture (1) the first 350 responses in each group and (2) their final flag (valid or spam) after our battery of data quality checks. Stratifying the data in this way allowed us to calculate the cost of compensating respondents who would otherwise have been treated as valid based solely on traditional checks for fraudulent responses.
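A minimal sketch of this stratification follows. The record fields (`group`, `completed_at`, `final_flag`) are hypothetical names, and the toy example uses a quota of 2 per group instead of 350 so the result can be checked by hand.

```python
from collections import Counter, defaultdict


def first_n_per_group(responses, n=350):
    """Keep the earliest n completed responses within each worker group."""
    by_group = defaultdict(list)
    for r in sorted(responses, key=lambda r: r["completed_at"]):
        if len(by_group[r["group"]]) < n:
            by_group[r["group"]].append(r)
    return by_group


def hypothetical_spend(responses, n=350, incentive=10):
    """Incentive dollars that the first n responses per group would have received,
    split by the final flag assigned after full data cleaning."""
    selected = first_n_per_group(responses, n)
    flags = Counter(r["final_flag"] for rows in selected.values() for r in rows)
    return {
        "total": sum(flags.values()) * incentive,
        "spam": flags["spam"] * incentive,
        "valid": flags["valid"] * incentive,
    }


toy = [
    {"group": "truck driver", "completed_at": 1, "final_flag": "spam"},
    {"group": "truck driver", "completed_at": 2, "final_flag": "valid"},
    {"group": "truck driver", "completed_at": 3, "final_flag": "valid"},  # beyond quota
    {"group": "taxi driver", "completed_at": 1, "final_flag": "spam"},
    {"group": "taxi driver", "completed_at": 4, "final_flag": "spam"},
]
spend = hypothetical_spend(toy, n=2, incentive=10)
```

Note that the third truck-driver response is valid but arrives after the quota is filled, so it would never have been paid or analyzed; this is how early spam crowds out later genuine respondents.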

Analysis of research costs of spam

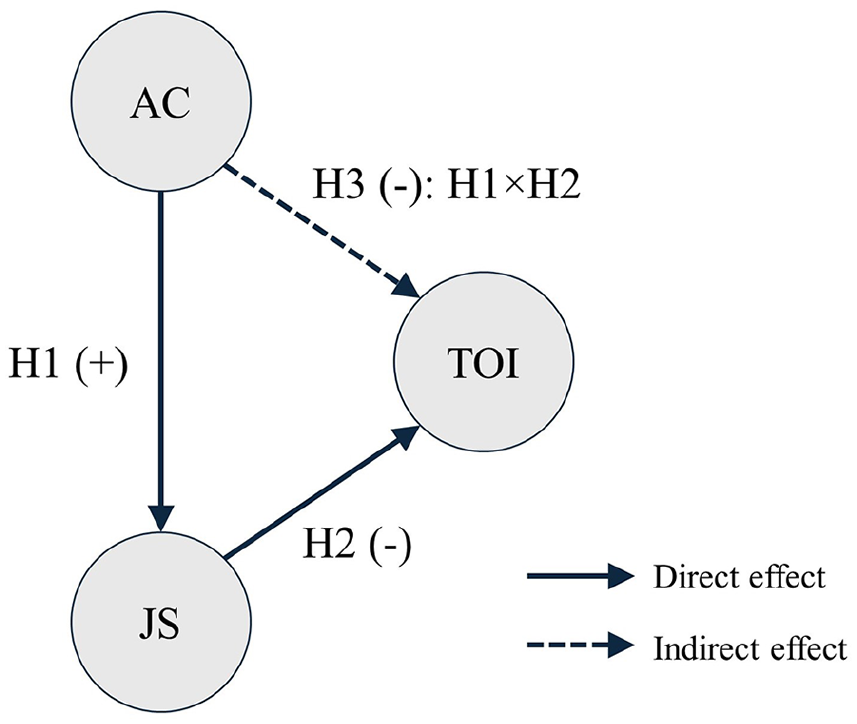

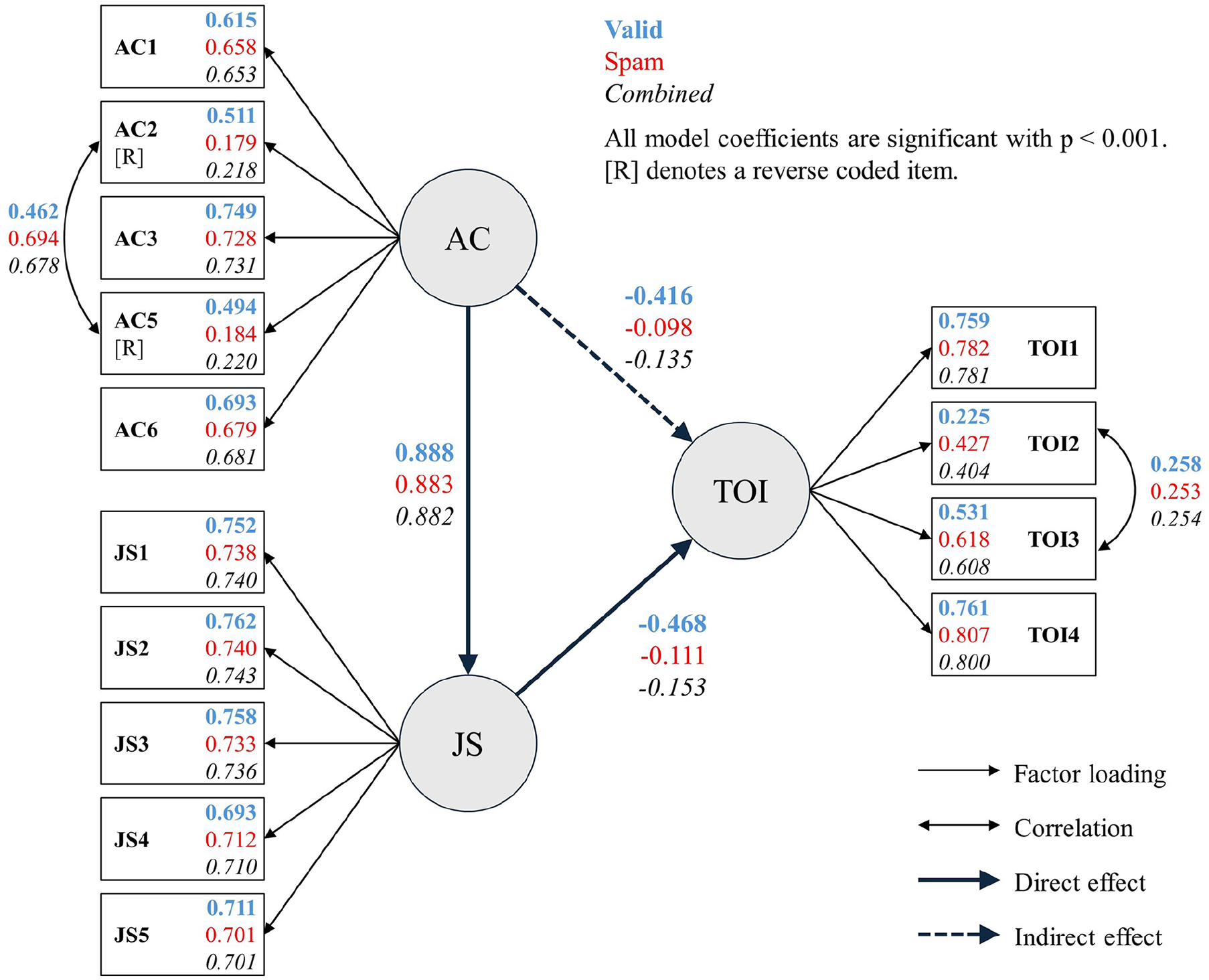

To investigate if there were differences in research findings due to spam responses, we tested the hypothesized relationships between affective occupational commitment (AC; i.e. the emotional attachment one has toward their occupation), job satisfaction (JS; i.e. one’s overall evaluation of the favorability of their job), and turnover intention (TOI; i.e. the desire one has to voluntarily leave their industry). These constructs have been extensively studied in the organizational science literature, which provides us with the expected strength and direction of their relationships (Judge et al., 2017; Tett and Meyer, 1993; Xu et al., 2023). Past literature has found AC to be strongly correlated with JS as feeling emotional attachment and pride for one’s occupation generates strong positive appraisals of their job (ρ = 0.63; Cooper-Hakim and Viswesvaran, 2005). JS and TOI have been found to have a strong negative relationship, as workers who are satisfied with their jobs are less likely to desire to leave (ρ = −0.55; Özkan et al., 2020). Given these expected relationships, we hypothesized the following (see Figure 1):

Figure 1. Hypothesized relationships between affective commitment (AC), job satisfaction (JS), and turnover intention (TOI).

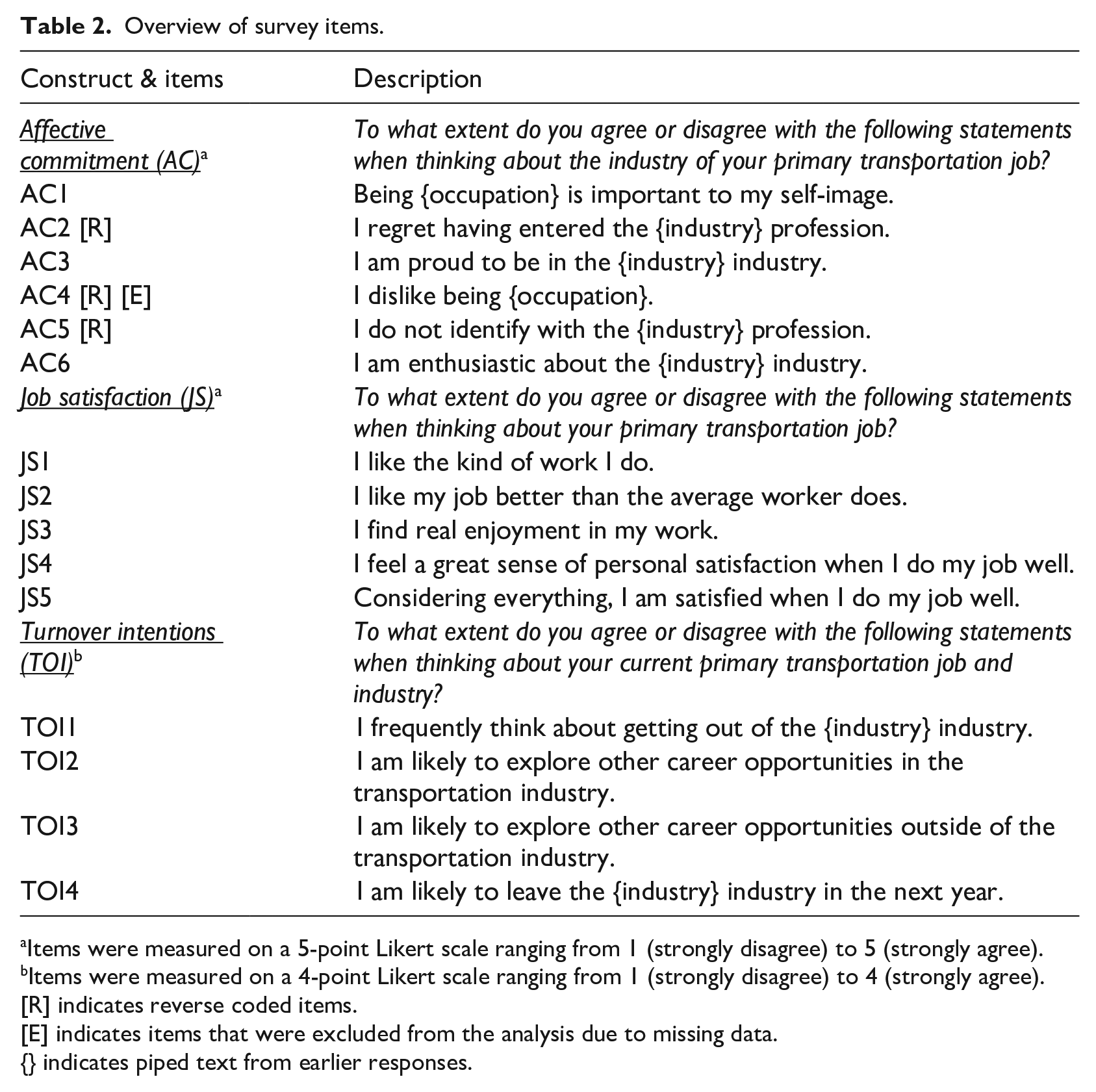

AC (Meyer et al., 1993), JS (Morgeson and Humphrey, 2006), and TOI (Meyer et al., 1993) were measured using established scales that were modified for the study context, as described in Table 2.

Table 2. Overview of survey items.

Items were measured on a 5-point Likert scale ranging from 1 (strongly disagree) to 5 (strongly agree).

Items were measured on a 4-point Likert scale ranging from 1 (strongly disagree) to 4 (strongly agree).

[R] indicates reverse coded items.

[E] indicates items that were excluded from the analysis due to missing data.

{} indicates piped text from earlier responses.

We tested these hypothesized relationships (see Figure 1) using structural equation modeling (SEM) on three subsamples independently: (1) valid responses only, (2) spam responses only, and (3) all completed responses (combined spam and valid responses). Before we estimated the SEM, we assessed the empirical equivalence of constructs between valid and spam groups by testing for measurement invariance of the three scales using confirmatory factor analysis (CFA; Kline, 2016).

The SEM and CFA model fits were assessed using the following fit indices: (1) Chi-square goodness-of-fit test, with p > 0.05 suggesting a good model fit, (2) Comparative Fit Index (CFI) and Tucker-Lewis Index (TLI), with CFI ⩾ 0.95 and TLI ⩾ 0.95 indicating a relatively good model fit, and (3) Root Mean Square Error of Approximation (RMSEA) and Standardized Root Mean Square Residual (SRMR), with RMSEA ⩽ 0.06 and SRMR ⩽ 0.08 indicating a relatively good model fit (Hu and Bentler, 1999).
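The cutoffs above can be applied mechanically, as in the following sketch. The function and dictionary names are illustrative; the input values are the SEM fit indices this study reports in the Results for the valid and spam subsamples. Such cutoffs are commonly read as guidelines, so a literal threshold check is a starting point for judgment, not a verdict.

```python
def check_fit(indices):
    """Compare fit indices against the Hu and Bentler (1999) cutoffs cited above."""
    return {
        "CFI": indices["CFI"] >= 0.95,
        "TLI": indices["TLI"] >= 0.95,
        "RMSEA": indices["RMSEA"] <= 0.06,
        "SRMR": indices["SRMR"] <= 0.08,
    }


# SEM fit indices as reported in the Results section of this study.
valid_fit = check_fit({"CFI": 0.950, "TLI": 0.938, "RMSEA": 0.060, "SRMR": 0.068})
spam_fit = check_fit({"CFI": 0.893, "TLI": 0.866, "RMSEA": 0.091, "SRMR": 0.125})
```

Under a literal reading, the valid subsample meets the CFI, RMSEA, and SRMR cutoffs and falls slightly short on TLI, whereas the spam subsample misses all four.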

Since the primary objective of this study is to compare the overall research inferences across subsamples, rather than directly comparing individual parameter estimates between subsamples, we used standardized parameter estimates to enhance the interpretability of the model results within each subsample. Typically, items with standardized factor loadings between 0.30 and 0.40 are considered to meet the minimum requirement, and loadings ⩾ 0.50 are considered acceptable (Hair, 2010). AC4 was excluded from the analyses due to missing data for over half of the responses, as it was measured only for participants whose primary occupation is driving. We also assumed two correlated error terms: (i) AC2 and AC5, as both are reverse-coded items, and (ii) TOI2 and TOI3, as they were created by decomposing the original item on the TOI scale “I am likely to explore other career opportunities” into “. . .opportunities in the transportation industry” (TOI2) and “. . .opportunities in a non-transportation industry” (TOI3). The CFA and SEM models were estimated using the maximum likelihood estimator implemented in the “lavaan” package (version 0.6-15) in the R programming language (Rosseel, 2012).

Results

We employed a stringent battery of spam detection techniques to classify completed responses as “valid” (n = 1,774) or “spam” (n = 11,304).

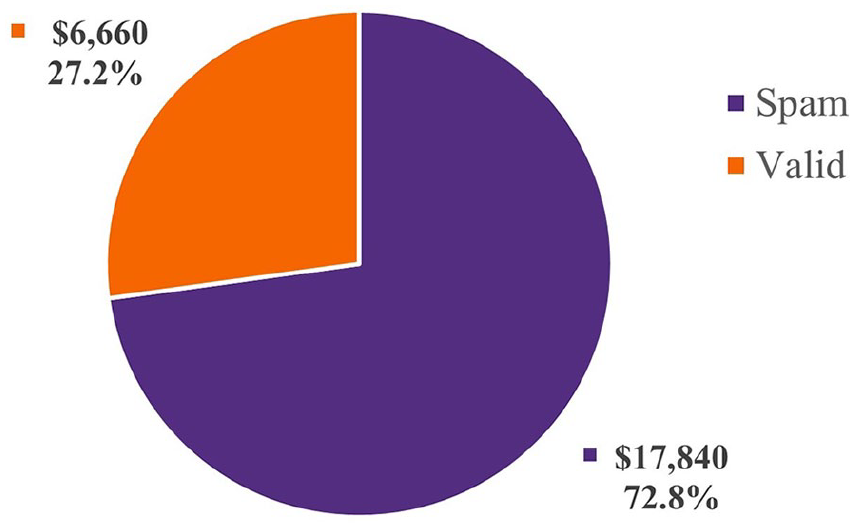

Financial costs of spam

We offered a $10 gift certificate as a participation incentive for each respondent, resulting in a budget of $24,500. Out of our target sample (N = 2,450), 1,784 were spam responses and 666 were valid responses. Therefore, about 73% of our incentive budget ($17,840) would have been wasted on survey spam if we had not implemented robust spam detection techniques, as illustrated in Figure 2.
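The arithmetic behind these figures can be reproduced directly from the numbers reported above; only the variable names are introduced here for illustration.

```python
GROUPS = 7               # targeted worker groups
TARGET_PER_GROUP = 350   # quota per group
INCENTIVE_USD = 10       # gift card value per completed response
SPAM_IN_TARGET = 1784    # spam responses among the first 350 per group
VALID_IN_TARGET = 666    # valid responses among the first 350 per group

target_n = GROUPS * TARGET_PER_GROUP      # intended sample size
budget = target_n * INCENTIVE_USD         # total incentive budget in USD
wasted = SPAM_IN_TARGET * INCENTIVE_USD   # incentives that would have gone to spam
wasted_share = wasted / budget            # fraction of the budget lost to spam

print(f"N = {target_n}, budget = ${budget:,}, wasted = ${wasted:,} ({wasted_share:.1%})")
```

The spam and valid counts sum to the target sample of 2,450, and the wasted share of roughly 72.8% corresponds to the “about 73%” reported.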

Figure 2. Hypothetical expenditure in our survey with conventional data quality checks.

In addition to the direct financial costs of participation incentives, there were several indirect costs related to personnel expenditure, including salaries and staff time diverted from other project components. In this study, multiple graduate research assistants, a postdoctoral researcher, and an assistant professor spent substantial time and effort developing spam detection techniques and cleaning the data over several months. Like many academic researchers, we did not keep a detailed log of time spent on this data cleaning effort, which makes it difficult to estimate these costs precisely.

Research costs of spam

Model results show that the configural invariance model did not have an acceptable fit based on the Chi-square test (χ²(144) = 7107.6, p < 0.001) as well as incremental and absolute model fit indices (CFI = 0.901, TLI = 0.875, RMSEA = 0.087, SRMR = 0.108; see Supplemental Table 2 for detailed results). Measurement invariance between the “valid” and “spam” groups was not supported at the configural level, indicating that the survey scales do not measure the same underlying focal constructs and should not be compared between the two groups.

Further, the results indicate that the estimated factor loading for TOI2 is less than the desired threshold of 0.50 in both subsamples (0.217 for valid and 0.426 for spam), which is not unexpected given that this item was not previously validated and is very similar to TOI3. The results also illustrate that while the estimated factor loadings for the two reverse-coded items (AC2 and AC5) are acceptable in the valid subsample (0.536 and 0.520), they are much lower than the desired threshold in the spam subsample (0.196 and 0.200), indicating a poor factor structure. The SEM results show similar factor loading patterns.

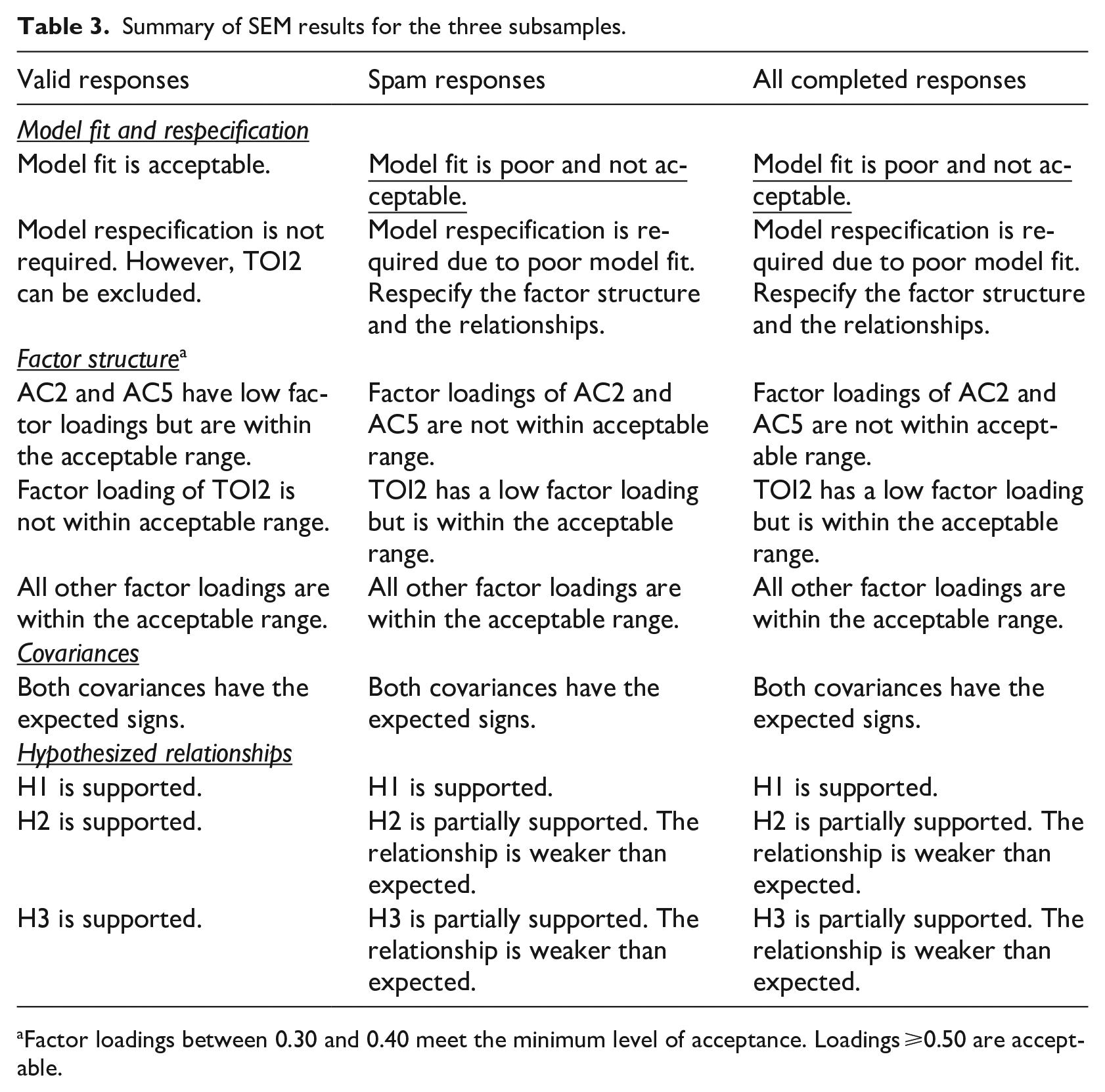

Importantly, the SEM results indicate a good model fit for the valid sample (χ²(73) = 507.7, p < 0.001, CFI = 0.950, TLI = 0.938, RMSEA = 0.060, SRMR = 0.068), while they indicate a poor model fit for the spam sample (χ²(73) = 6699.3, p < 0.001, CFI = 0.893, TLI = 0.866, RMSEA = 0.091, SRMR = 0.125) and the combined sample (χ²(73) = 7173.2, p < 0.001, CFI = 0.899, TLI = 0.874, RMSEA = 0.087, SRMR = 0.119; see Supplemental Table 3 for detailed model results). Although the Chi-square goodness-of-fit test indicates that none of the three models fits the data perfectly, this test is highly sensitive to sample size and is often significant in large samples (Kline, 2016). The estimated model parameters for the hypothesized relationships in the valid, spam, and combined subsamples are illustrated in Figure 3. A comparison of results between the valid, spam, and combined samples is summarized in Table 3.

Figure 3. Model results for the hypothesized relationships across the three subsamples: Valid, Spam, and Combined.

Table 3. Summary of SEM results for the three subsamples.

Factor loadings between 0.30 and 0.40 meet the minimum level of acceptance. Loadings ⩾0.50 are acceptable.

Discussion

To our knowledge, this is the first study to: (1) explicitly assess the potential financial costs of spam, and (2) examine the research costs of spam by empirically comparing the results for valid and spam responses in online surveys using non-probability sampling. Our results show that there are substantial direct financial costs of survey spam—nearly three-fourths of our incentive budget would have been unintentionally misused to compensate spammers—and considerable indirect costs (e.g. personnel time expenditure, including tuition and salaries) associated with spam detection and data cleaning processes. However, the financial costs are only a part of the more significant issue with online survey spam.

Our analysis shows that the inclusion of spam would have compromised the scientific integrity of this research. First, the lack of configural invariance typically indicates differences in contextual/cultural factors or item interpretation (Kline, 2016). However, for the U.S. transportation workers sample, the most plausible cause for the lack of configural invariance is differences in response styles between valid and spam responses. This suggests that failing to exclude spam responses can compromise the factor structure and yield spurious relationships. Further, spam responses performed particularly poorly on reverse-coded items, as indicated by their low factor loadings. These findings suggest that spam respondents tend to select answers at one extreme, which is consistent with existing literature that has found a tendency for insincere respondents to choose positive answer choices (Kennedy et al., 2021). Further research is needed to determine if reverse-coded items can help identify spam responses without increasing false positives, while balancing issues like participant confusion (Venta et al., 2022) and lower internal consistency (Weijters and Baumgartner, 2012).

Second, as in our study and others (Griffin et al., 2022; Webb and Tangney, 2024; Xu et al., 2022), spam responses in affected online survey studies are likely to outnumber valid responses because spam can be automated. Researchers who are unaware of spam in online surveys, particularly when using non-probability sampling methods, may draw false conclusions despite appropriate analysis. For example, our combined dataset would not have supported the hypothesized relationships due to poor model fit, unlike the valid subsample.

Furthermore, including spam responses would have produced inaccurate and potentially misleading results that could influence both theory and practice. For example, worker turnover is costly for organizations (Park and Shaw, 2013), stimulating longstanding interest in improving worker retention and identifying attrition factors (Lee et al., 2017; Shaw, 2011). However, if we had not detected and removed spam responses, we would have wrongly concluded that (1) job satisfaction has a significant but weak negative relationship with workers’ turnover intention, and (2) affective commitment has a significant but weak negative indirect effect on turnover intention through job satisfaction. Thus, including spam responses in the combined sample would underestimate the influence of affective commitment and job satisfaction on turnover intention in the transportation industry, leading researchers and practitioners to overlook these factors when addressing turnover among transportation workers.

Though our study offers important implications for promoting the responsible use of research funds and preserving the scientific integrity of findings, it has limitations. Without ground truth, we could not evaluate the spam detection techniques for false positives (valid response flagged as spam) and false negatives (spam response flagged as valid). Although such errors likely impact both research costs and financial costs, the practical constraints of non-probability sampling made this evaluation infeasible. Some studies have evaluated their spam detection strategies (Zhang et al., 2022); however, they did so by making strong assumptions that specific techniques (e.g. manually checking open-ended text responses) yield accurate results. More precise estimates of the human costs of identifying and resolving spam issues are also needed. In addition, we were unable to benchmark our fraud rate against the literature because reported rates vary widely (1.2%–99%) across studies (Ballard et al., 2019; Bell et al., 2020; Kennedy et al., 2021; Pozzar et al., 2020; Thomas and Clifford, 2017), likely due to inconsistencies in how fraud was defined (e.g. different levels of rigor in spam detection indicators), the recruitment strategies used (e.g. social media, crowdsourcing platforms, and opt-in panels), and other factors (e.g. whether the study was targeted by spammers or not). Next, our study focused on specific industries with hard-to-recruit participants. More research examining a broader range of participants is needed. In addition, identifying the most effective spam detection techniques across recruitment and sampling strategies could help researchers with limited knowledge and expertise in this area. Finally, although some studies compared spam incidence across different recruitment sources (e.g. crowdsourcing vs social media; Antoun et al., 2016) and participation incentive amounts (Simone et al., 2024), we were unable to do so due to a lack of tracking data.
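To make the false positive and false negative definitions above concrete, the following sketch shows how the two error rates could be computed if ground truth labels were available. No such labels existed in this study, so the function name and the example labels are purely hypothetical.

```python
from typing import Dict, Optional, Sequence


def detection_error_rates(
    truly_spam: Sequence[bool],
    flagged_spam: Sequence[bool],
) -> Dict[str, Optional[float]]:
    """False positive and false negative rates of a spam detection procedure.

    truly_spam   -- hypothetical ground truth (True = response is spam)
    flagged_spam -- decision of the detection procedure (True = flagged)
    """
    if len(truly_spam) != len(flagged_spam):
        raise ValueError("label sequences must have the same length")
    pairs = list(zip(truly_spam, flagged_spam))
    # Valid responses wrongly flagged as spam.
    false_positives = sum(1 for truth, flag in pairs if flag and not truth)
    # Spam responses wrongly retained as valid.
    false_negatives = sum(1 for truth, flag in pairs if truth and not flag)
    n_valid = sum(1 for truth in truly_spam if not truth)
    n_spam = sum(1 for truth in truly_spam if truth)
    return {
        "false_positive_rate": false_positives / n_valid if n_valid else None,
        "false_negative_rate": false_negatives / n_spam if n_spam else None,
    }


# Hypothetical labels for five responses (not data from this study).
rates = detection_error_rates(
    truly_spam=[True, True, False, False, False],
    flagged_spam=[True, False, True, False, False],
)
```

In this made-up example, one of three valid responses is wrongly flagged and one of two spam responses is missed. A false positive discards a valid participant, whereas a false negative pays a spammer and contaminates the analysis.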

Conclusion

The aim of this study is not to deter researchers from using online surveys with non-probability sampling methods but to highlight the importance of being cognizant of survey spammers and employing multiple survey spam prevention and detection techniques. A few studies have incorporated in-survey checks as part of their survey bot detection and spam prevention strategies (Goodrich et al., 2023; Moss et al., 2021), while some relied on in-survey checks alone to ensure their data did not contain fraudulent responses (Belliveau and Yakovenko, 2022; Kennedy et al., 2021). However, in-survey checks can potentially deter genuine participants or even elicit noncompliant behavior from them, especially when an explanation for the check (e.g. “bot test”) is not included within the question text (Silber et al., 2022). Some studies have also suggested using statistical methods for data screening (Arthur et al., 2021; DeSimone et al., 2015; Dupuis et al., 2019); however, techniques that rely on underlying data distributions might not work when spam responses make up a high proportion of the data. Hence, as the more recent literature on survey spam detection suggests, a combination of common data quality checks and preventative measures is the best approach for detecting survey spam, as no single technique appears to capture all types of online survey fraud (Griffin et al., 2022; Lawlor et al., 2021; Simone et al., 2024; Storozuk et al., 2020; Zhang et al., 2022). Spam behavior has also been found to differ depending on the recruitment strategy (Kennedy et al., 2021), yet extant work on online survey spam has primarily examined data collected via opt-in panels or crowdsourced platforms such as Amazon Mechanical Turk (Chandler et al., 2020; Kennedy et al., 2021) rather than the increasingly widely used social media-based recruiting approach.
Advertising online surveys through social media platforms may be more attractive and accessible to researchers because it provides a more cost-effective recruitment option compared to opt-in panels or crowdsourced platforms (Zindel, 2022), as researchers avoid paying platform fees. In conclusion, responsible science is reliant on valid data. Hence, it is imperative that researchers be cognizant of how spammers compromise online survey data, which can not only lead to unintentionally misused research funds but also lead to misleading or inaccurate results and conclusions, thereby reducing scientific integrity.
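As a rough illustration of combining several data quality checks rather than relying on any single one, the sketch below counts how many independent indicators a response fails and assigns a provisional status. The indicator names, the two-flag threshold, and the status labels are assumptions made for this example and do not describe the procedure used in this study.

```python
from typing import Mapping


def count_failed_checks(checks: Mapping[str, bool]) -> int:
    """Number of quality indicators that the response failed (True = failed)."""
    return sum(1 for failed in checks.values() if failed)


def provisional_status(checks: Mapping[str, bool], min_flags: int = 2) -> str:
    """Combine indicators: several failures suggest spam, a single failure
    is routed to manual review, and no failures means the response is kept."""
    failed = count_failed_checks(checks)
    if failed >= min_flags:  # assumed threshold, to be tuned per study
        return "likely spam"
    if failed > 0:
        return "manual review"
    return "retain"


# Hypothetical indicators for one response (names are illustrative only).
response_checks = {
    "failed_attention_check": True,
    "implausibly_fast_completion": True,
    "duplicate_open_ended_text": False,
}
status = provisional_status(response_checks)
```

Routing single-flag responses to manual review, rather than excluding them outright, reflects the concern that individual checks can misclassify genuine participants.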

Supplemental Material

Supplemental material, sj-docx-1-rea-10.1177_17470161251357454 for It’s more than money: The true costs of spam in online survey research by Shubham Agrawal, Gwendolyn Paige Watson, Amy M. Schuster, Shelia R. Cotten, Michael L. Tidwell, Nathan Baker and Sicheng Wang in Research Ethics

Footnotes

Ethical considerations

In accordance with federal regulations 45 CFR 46.104(d), this study was qualified as Exempt under category 2 by the Office of Research Compliance at Clemson University (#IRB2020-183: “FW-HTF-RL: Preparing the Future Workforce for the Era of Automated Vehicles”; Approval date: July 6, 2022; PI: Shelia R. Cotten).

Consent to participate

Respondents provided informed consent virtually at the beginning of the survey by choosing to continue with the survey. The survey informed consent page included the following statement: “To receive compensation, you must meet the eligibility criteria as determined by screening questions in the beginning of the survey, answer questions to check respondent’s attention correctly, and complete the entire survey. Any suspicious or low quality survey responses will not be compensated.”

Author contributions

Shubham Agrawal: Conceptualization, Methodology, Investigation, Software, Formal analysis, Data curation, Visualization, Writing—original draft, Writing—review & editing. Gwendolyn Paige Watson: Methodology, Investigation, Data curation, Writing—original draft, Writing—review & editing. Amy M. Schuster: Investigation, Visualization, Writing—review & editing. Shelia R. Cotten: Conceptualization, Investigation, Funding acquisition, Writing—review & editing. Michael L. Tidwell: Investigation, Data curation, Writing—original draft. Nathan Baker: Investigation, Data curation, Writing—original draft. Sicheng Wang: Investigation, Writing—original draft.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the National Science Foundation under the grant “Preparing the Future

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data availability statement

Data supporting the findings of this study are available upon request from the authors.

Supplemental material

Supplemental material for this article is available online.
