Abstract

The problem of dual use is characterized by a wide range of activities or types of research and technology utilization. In this article, I explore the phenomenon of dual use in several steps to make it accessible for ethical inquiries: first, I examine the phenomenon in more detail; is it a genuine property of technologies and methods, a fundamental problem for research ethics, or a specific precondition for trade-off situations? Second, I show that various factors contribute to a certain good becoming a real dual use good. Third, I propose to develop a three-dimensional classification and evaluation system for dual use risks.

Background from research ethics and global technology governance

In an era of rapid technological progress and numerous international crises threatening global prosperity, health, and peace, a new normative understanding of application areas dealing with security-relevant research (these are primarily bioethics, ethics of technology, and research ethics) seems to be making headway. We are witnessing far-reaching upheavals that affect the theoretical and practical foundations of applied ethics, especially of research ethics, on several levels:

a. the accelerating pace of change in the life sciences and related fields,

b. the ongoing convergence of biology and biomedicine with mathematics, engineering, chemistry, computer science, and information theory,

c. the uncontrolled spread of capabilities in biology and biomedicine around the world,

d. the irreversible expansion of science with new digital tools altering how information is gathered, handled, disseminated, and accessed,

e. and, in light of current global military conflicts, the increased willingness to develop, produce, test, and deploy weapons.

Therefore, it is increasingly necessary to understand research ethics and ethics of science as interdisciplinary, multifunctional, and particularly risk-aware areas of applied ethics. In conditions of epistemic uncertainty, shifting social dynamics, volatile political decision-making, and unpredictable economic limitations, ethical evaluations must be made by weighing costs and benefits, potentials and risks, well-being and harm, research freedom or progress, and the significance of public security. Consequently, the future of applied ethics, particularly in the normative assessment of security-relevant research, may be marked by an ethical ambivalence reflecting conflicting goals and intentions. It appears that we are still a considerable distance from the conclusion of technology diffusion, which is inextricably connected to the sharing of technologies, including dual use technologies (Meier, 2014: 9).

Are research results, knowledge, materials, and technologies inevitably susceptible to dual use risks?

Epistemic and material goods such as research outcomes and technical artifacts are indeed prone to dual use risks. Emerging technologies, while creating significant value, also pose substantial dangers. They offer new opportunities but also carry unforeseen potential threats. Artificial intelligence technologies, for instance, exhibit considerable ethical ambivalence: a pattern recognition algorithm could be a key component of a weapon if it is used to identify and select military targets. Yet, at least in principle, such an algorithm could also be adapted for use in medicine to identify cancer cells. Artificial intelligence thus creates a novel methodological-technical nexus between bioethical and non-bioethical areas of application, which should be given special consideration in the future. Nevertheless, bioethical assessment of dual use risks must still distinguish between biotechnologies with autopoietic systems and non-biological technologies (i.e. pure poietic systems), although they are becoming increasingly difficult to separate. We will see later in the article that concerning so-called higher-level dual use risks and their ethical evaluation, safety-relevant biotechnologies hardly differ from newer quasi-autopoietic AI technologies. 1

The term “dual use” encompasses phenomena with varying degrees of ethical double or hybrid effects in research and application. “Dual use” can be defined in different ways, as we will see, but generally (and colloquially) it refers to the possibility that the same technology or item of scientific research has the potential to be used for harm as well as for good. Of course, neither research results nor technologies can themselves be perpetrators of misuse (cf. Pitt, 2014), unless we as humans allow the technology to do so or deliberately use that technology for bad purposes. In a society strongly committed to technical and scientific progress, the dual use dilemma, its prevalence, and the responsibility to address it seem unavoidable.

Mapping the current dual use discourse

To date, it is hard to understand, and may even seem ironic, that few ethical discussions have focused on dual use research. 2 Although the dual use problem has been a recurring but marginal issue for decades, the increased use of and massive research into AI, 3 the “shifting sands” of gain-of-function research, and do-it-yourself science (e.g. DIYbio) mean that the time has come to ask more precisely for what purpose a person, a company, or a public institution is using or will use a particular research result, scientific method, or technology (cf. Schmid et al., 2022).

Rath et al. (2014) note a lack of a universal understanding of dual use research in the literature pertaining to ethical discourse (p. 770). 4 In particular, the current literature often fails to recognize that the dual use problem has broader ethical implications and may concern an incalculable range of cross-over activities and different types of research that at first glance appear to be independent of each other. Up to now, only one publication, which is not particularly recent, has endeavored to examine the issue of dual use in its full multidimensional context: Rappert and Selgelid (2013). Of course, the dual use problem usually begins in the laboratory or the test station, but it is not yet solved once a declaration of safety has been issued, since some research results only become dual use goods once they have been disseminated accordingly. Basic research or pure science is not self-sufficient but is usually carried out with industrial partners and other non-researching parties. Both empirically and conceptually, the intersection between research activities and trade controls is still neglected (Zhao, 2024).

Although that is not the central theme of this article, dual use items can be subject to export controls. Therefore, it is part of the responsibility of the individual researcher to consider whether their research activities could be subject to export controls. It is well known that some technologies and/or research results evade control in general and export control in particular. The higher the degree of technology diffusion in a society, the more difficult it becomes to establish effective control mechanisms. 5 Knowledge, especially “tacit knowledge” (Revill and Jefferson, 2014), can even be exported relatively barrier-free and thus easily escapes almost any form of control. This makes it more important to define and propagate very early on what makes a good a dual use good. The prevailing discourse on dual use items lacks focus on developing clear conceptual distinctions and definitions, an issue this article seeks to address.

Outline of the article

To conceptualize the dual use dilemma, I will begin by examining common and tentative definitions of dual use. Methodologically, this article will navigate the dual use issue through several stages to prepare it for an ethical evaluation fitting the complexity of the problem. First, the dual use phenomenon will be scrutinized: is it a genuine property of technologies, a fundamental problem for research ethics, a concrete ethical dilemma, or a particular precondition for trade-off situations? Second, it is important to understand in what way a technology or research process has the potential to be harmful and beneficial at the same time. Third, another aim is to identify those objects and technologies that are particularly susceptible to evoking the dual use problem. Fourth, it is necessary to review the current ethical evaluation methods to see whether and to what extent they can be applied to new research methods and emerging technologies.

To facilitate an overall assessment, I will present three possible perspectives on the evaluation of dual use scenarios: the determinist view, the intentionalist view, and the (social) contextualist view. Ultimately, I advocate for the social contextualist perspective, incorporating key elements from the other views to establish an ethical assessment approach that addresses the multidimensional nature of the inherent and extrinsic dual use potentials of “double-charged” research findings, scientific entities, and technologies. 6 This makes it possible to determine more precisely (1) the type of risk, (2) the identity of the abusing actor (who is pursuing a malicious interest here?), and (3) the identity of the potentially harmed actor (at whose expense is this malicious interest pursued?).

What is dual use all about?

In the recent literature, both the distinction between the civil and the military use of the same good in question (e.g. European Commission, 2022) and the occurrence of certain trade-offs when proliferating research results and technologies with high risks and high benefits (Korn et al., 2019) are called “dual use.” 7 Defining “dual use” remains a challenging task because the term describes a broad category with blurred boundaries, whose composition is determined by the context in which the items are used as well as by the intrinsic qualities of the good in question (e.g. research result, technology). Jonathan B. Tucker, in his seminal work on dual use technologies, labels proponents of the latter as “determinists” and the former as “social contextualists” (Tucker, 2012: 29). Nearly every dual use issue has a deterministic aspect based on the item’s intrinsic properties and a contextual aspect influenced by its social integration, even if not entirely defined. The complexity increases when considering a third perspective, that of the “intentionalists.” Intention-based approaches assume that virtually any object or good, depending solely on the intention of an actor (while ignoring the “double-chargedness” of the item in question), can be used for a good or a bad purpose. According to this view, even harmless objects can become lethal weapons if someone decides to use them as such. To avoid preempting the discussion, I refer the reader to my detailed explanations in the section “A property-based approach toward the specification of certain goods as dual use goods.”

The dual use knowledge matrix

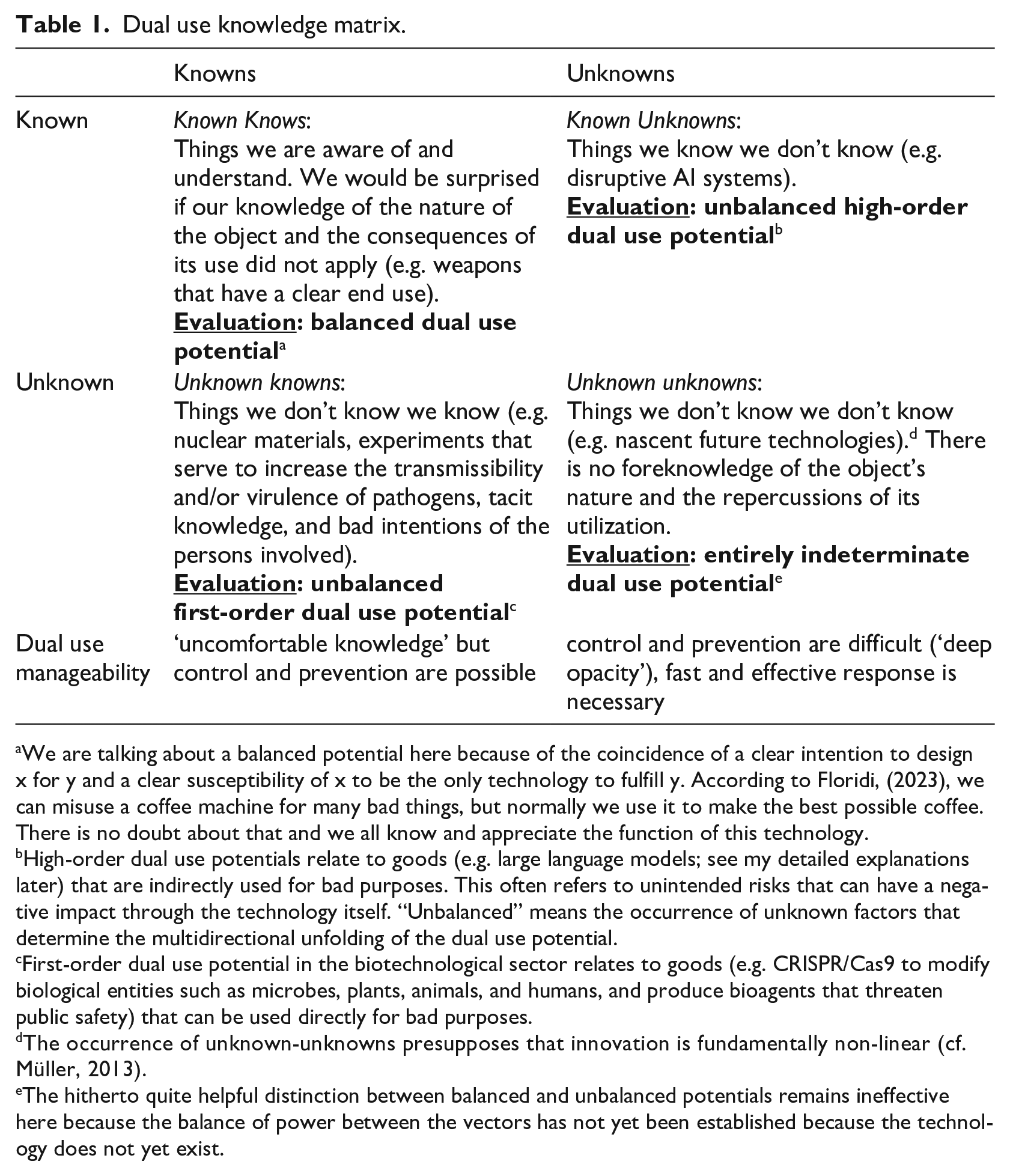

It is widely accepted that dual use is closely related to both explicit and tacit knowledge under conditions of epistemic uncertainty. In research, theoretical knowledge often merges with practical knowledge, yet the specific applicability of a research result may not always be clear. Particularly in the realm of transferring knowledge into practice, various factors can influence whether the application will be beneficial or detrimental. It is impossible to predict which element of theoretical knowledge will be utilized, for what specific purpose, at which time, and by whom. There are instances where the dual use dilemma may emerge without any human foresight, making it completely unpredictable. To cautiously tackle the issue of conceptualization, I suggest situating the dual use problem within the following knowledge matrix (Table 1).

Table 1. Dual use knowledge matrix.

We are talking about a balanced potential here because of the coincidence of a clear intention to design x for y and a clear susceptibility of x to be the only technology to fulfill y. According to Floridi (2023), we can misuse a coffee machine for many bad things, but normally we use it to make the best possible coffee. There is no doubt about that, and we all know and appreciate the function of this technology.

Higher-order dual use potentials relate to goods (e.g. large language models; see my detailed explanations later) that are indirectly used for bad purposes. This often refers to unintended risks that can have a negative impact through the technology itself. “Unbalanced” means the occurrence of unknown factors that determine the multidirectional unfolding of the dual use potential.

First-order dual use potential in the biotechnological sector relates to goods (e.g. CRISPR/Cas9 to modify biological entities such as microbes, plants, animals, and humans, and produce bioagents that threaten public safety) that can be used directly for bad purposes.

The occurrence of unknown-unknowns presupposes that innovation is fundamentally non-linear (cf. Müller, 2013).

The hitherto quite helpful distinction between balanced and unbalanced potentials remains ineffective here because the balance of power between the vectors has not yet been established because the technology does not yet exist.

As we can see here, the dual use problem consists of many knowns and unknowns (cf. Marris et al., 2014: 397f.) that have an intricate relationship with each other. In addition, there is the challenge that we do not speak of dual use uniformly. For example, “dual use” appears in the context of R&D, export control, foreign relations, and the IT sector. Historically, the term “dual use” did not originate in the field of science and research, but in export control (Nestler, 2023: 4). It referred to goods (including software and technology), commodities, or even modes of behavior that, although primarily researched, produced, or carried out for civilian use, were not necessarily confined to civilian use. Due to certain properties of the products or even the foreseen intentions of their owners, recipients, buyers, etc., a wide range of items can be used for military purposes or simply become significant under the aspects of safety and security. In legal terms, “dual use” is thus located in foreign trade (criminal) law. 8

The knowledge matrix shows that the phenomenon is multi-layered and that with an increasing degree of non-knowledge, the dual use potential also increases. This leads to methods of controlling and preventing harmful consequences being less and less effective.

Four tentative definitions of dual use

The preceding matrix serves to scrutinize existing or implicitly presupposed definitions of dual use to determine if they adequately address often overlooked aspects arising in the discourse like ignorance, (practical) non-knowledge, tacit knowledge, and epistemic uncertainty. Presently, one can identify four distinct definitions: a broad and value-laden definition with a popular-scientific slant (D1), a narrow and value-neutral definition (D2), a legal definition (D3), and a teleological definition employing the evaluative terms of moral philosophy (D4):

A broad and value-laden definition of dual use can be found in daily newspapers. For example, the German daily newspaper FAZ writes of “research conducted for the benefit of humanity, but which in the wrong hands can lead to disaster.” 9 This definition does not describe specific characteristics and features of dual use but is mainly aimed at increasing general awareness of dual use issues.

A narrow and value-neutral definition refers to research objects that, given the appropriate intention, are suitable for both civilian and military use. This very general descriptive definition can be found in most books and essays on the dual use problem that do not analyze the term in more detail (e.g. Alic et al., 1992).

A legal and far more precise definition of “dual use” is formulated in Article 2(1) of Regulation (EU) 2021/821 of 20 May 2021 setting up a Union regime for the control of exports, brokering, technical assistance, transit and transfer of dual use items. 10 It states that:

“dual-use items” means items, including software and technology, which can be used for both civil and military purposes, and includes items which can be used for the design, development, production or use of nuclear, chemical or biological weapons or their means of delivery, including all items which can be used for both non-explosive uses and assisting in any way in the manufacture of nuclear weapons or other nuclear explosive devices.

11

Although this definition is very precise, it is limited to the dual use context of export control.

The teleological definition is again much more general than the legal one insofar as it refers to the general possibility that a research object or technology can be used for a good but also for a bad purpose. 12 Unlike D2 and D3, it aligns more closely with an intentionalist approach. Similar to D1, this definition is also value-laden but offers more specificity and applicability because it enables a context-sensitive evaluation of the individual structure and integration of a specific dual use item. In the current literature on dual use, the teleological definition is either implicitly used or trivialized in the form of D1. 13

What is the significance of each definition? A general advantage of D1 is that it expresses the great potential for misuse that lies in current research and the negative effects this can have on the common good. Although the definition remains very unspecific, it can help to raise public awareness of dual use risks. D2 refers to the actual subject matter of dual use research, that is, research subjects that can be used not only for civilian but also for military purposes. D3 is the most meaningful because it addresses the different types of actual and potential dual use items and their “life cycles.” However, this definition only concerns which materials are considered dual use, not what dual use means. In other words, it is not clear what similarities characterize the materials mentioned and might justify their classification as dual use material. D4 introduces a teleological component: the focus is no longer on the inherent properties of the dual use goods but on the intentions and goals of those who aim at something good or bad with a good that is susceptible to dual use. Although D4 remains very unspecific and abstract, it does have one advantage over D3: whereas D3 is only about the construction of objective characteristics in the form of dual use goods, that is, there is no recourse to an intended use, in D4 it is precisely this recourse that is central. D4 shows that the classification of goods as dual use can never happen without reference to subjective and social behavior. 14

As indicated in the dual use knowledge matrix above, it seems evident that none of the four definitions can fully capture the unknown-unknowns that may arise with technological and societal advancements. For instance, the extent to which gain-of-function research (GOFR) or the advancement of large language models (LLMs), both subsets of dual use research, might lead to threats against individuals or communities remains uncertain. Therefore, it is crucial to examine the characteristics of potential dual use goods and their users more closely.

A property-based approach toward the specification of certain goods as dual use goods

It cannot be denied that certain goods can be made dual use goods if they have certain causally effective properties inherent or attributable to them. 15 In the following, I will distinguish between three classes of properties that make a good a dual use good:

Type I: Dual use as an inherent property of purposely specified objects (= determinist view)

This type attempts to specify the characteristics inherent to an identifiable good. Because we are not talking about natural objects here, this perspective assumes that no research object or technology is morally neutral because it was created with a specific method, technique, and intention. Nevertheless, a research object or technology has certain resultative characteristics that must be assessable independently of any blueprints and intentions of its creators. There are goods (for example, research objects such as technologies or scientific publications, or economic goods) that are security-relevant because they have an intrinsic potential for misuse, that is, they are particularly susceptible to misuse. The degree of this potential for misuse can be gauged across the following three dimensions:

(1) Does the good in question pose a direct or indirect danger? There are dual use goods, for example, nuclear materials, that already pose a direct danger without having to fall into the “wrong hands.” In contrast, scientific publications of sensitive research results pose an indirect danger, since some “conditions” (e.g. careless reviews, lack of outrage from the scientific community, other malevolent researchers and non-researchers using these research results for illicit purposes) must be met for the good in question to become dangerous.

(2) Has the good in question been designed or manufactured for proliferation? It is usually the case that sensitive research results or risky technologies can only be disseminated after a readiness check. Before this readiness check, one can rely solely on the responsibility of the researcher and their superiors, who should work according to the criteria of good scientific practice. Once a research result or a technology is in circulation as a dual use good, new control mechanisms take effect (which can go as far as export control). It must therefore be clear before any readiness check whether the good is to have an ethically unambiguous end-use or not. This is probably quite easy to determine in the case of lethal weapons but not in the case of research results, where further specification is needed.

(3) Is it a first-order or higher-order dual use good? This parameter poses a new challenge to the assessment of goods as dual use goods. For instance, gain-of-function research (GOFR) and novel AI technologies are almost by themselves capable of generating new dual use problems, 16 some of which we do not even know about today. 17

By applying these three dimensions (degree of immediacy of danger, level of dissemination, hierarchy of dual use goods), dual use goods can be further specified and sorted. This is important preliminary work for successful risk management, as it allows goods to be divided into certain hazard classes, which in turn is the basis for successful anticipatory governance (a proposal for such a decision framework can be found in Tucker, 2012: 69).
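The sorting step can be pictured as a small data structure. The following is only a minimal sketch under stated assumptions, not a decision framework proposed in this article or in Tucker (2012): the names `Immediacy`, `Dissemination`, `Order`, `Good`, and `sort_into_classes` are hypothetical, and the values assigned to the three example goods are illustrative placeholders rather than assessments. Each good receives a profile along the three dimensions, and goods sharing a profile fall into the same class.

```python
from collections import defaultdict
from dataclasses import dataclass
from enum import Enum


class Immediacy(Enum):
    """Dimension (1): does the good pose a direct or an indirect danger?"""
    DIRECT = "direct"
    INDIRECT = "indirect"


class Dissemination(Enum):
    """Dimension (2): was the good designed or manufactured for proliferation?"""
    NOT_FOR_PROLIFERATION = "not designed for proliferation"
    FOR_PROLIFERATION = "designed for proliferation"


class Order(Enum):
    """Dimension (3): first-order or higher-order dual use good?"""
    FIRST_ORDER = "first-order"
    HIGHER_ORDER = "higher-order"


@dataclass(frozen=True)
class Good:
    name: str
    immediacy: Immediacy
    dissemination: Dissemination
    order: Order

    def profile(self):
        # The three-dimensional profile used as the sorting key.
        return (self.immediacy, self.dissemination, self.order)


def sort_into_classes(goods):
    """Group goods that share the same three-dimensional profile."""
    classes = defaultdict(list)
    for good in goods:
        classes[good.profile()].append(good.name)
    return dict(classes)


# Illustrative placeholder values only (assumptions, not assessments).
goods = [
    Good("nuclear material", Immediacy.DIRECT,
         Dissemination.NOT_FOR_PROLIFERATION, Order.FIRST_ORDER),
    Good("sensitive publication", Immediacy.INDIRECT,
         Dissemination.FOR_PROLIFERATION, Order.FIRST_ORDER),
    Good("gain-of-function study", Immediacy.INDIRECT,
         Dissemination.FOR_PROLIFERATION, Order.HIGHER_ORDER),
]

classes = sort_into_classes(goods)
for profile, names in classes.items():
    print(" / ".join(dimension.value for dimension in profile), "->", names)
```

The sketch deliberately stops at grouping. How a given profile should translate into a hazard class is a normative question that the three dimensions prepare but do not settle.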

Type II: Dual use as a property of certain intentions of individual actors (= intentionalist view)

Type II-properties are more external to the good in question than type I-properties. Ultimately, such a property is a characteristic that is not intended to be limited to psychological states but allows different descriptions of the same action (cf. Anscombe, 2000). Research results and technologies as certain objects of actions are known to be achieved or produced by humans for a specific purpose. In dual use scenarios, why is it beneficial to categorize the same action, such as publishing research findings, under various descriptions?

In order to be able to make a normative judgment about a situation, for example, the publication of a research result, we must be clear in advance about how the act of “publishing a research result” can be identified. Modern action theory clarifies that intentions are neither internal psychological states nor externally observable behaviors but should be guided by practical reasons. Incidentally, what is ruled out here is the possibility that bad intentions can have good consequences. In the case of the moral evaluation of the dual use problem, we should bear in mind that bad intentions can be involved in the case of good use of X and good intentions in the case of misuse of X.

It is not surprising that the central role of intentions in the correct use of something cannot be eliminated from the European dual use regulation, which states that dual use goods are “specially designed,” that is, have an intended final use. What is designed or researched for a military purpose cannot be per se a dual use good. However, things that were originally developed for military purposes may also be used in everyday civilian life (so-called spin-offs or spin-outs). 18 Nevertheless, it always remains true that a hand grenade is not made or used to decorate the Christmas tree (pathological cases excluded). Certainly, subjective purposes and intentions are hidden, but they can be made visible under certain circumstances. 19 Subjective intentions are mostly implicit, and they can play a role in human actors and, in the future, also in AI systems, which could be said to have the ability to set purposes on their own. Tacit human and opaque artificial knowledge refer to skills, knowledge, and techniques that cannot be readily codified and are obtained through experience, by working in teams, and by participating in professional scientific networks through a process of “learning by doing” or “learning by example” (Marris et al., 2014: 397). Recognizing the importance of tacit or opaque knowledge is crucial for more elaborate assessments of threats caused by dual use goods.

Type III: Dual use as a property of relationships between collective actors with a double-binding nature (= social contextualist view)

A third type of property is neither objective nor subjective in nature. It pertains to a broader context, echoing the adage: “Guns don’t kill people, people do.” Rappert points out that “asking whether it is the user or the gun that is the ‘real’ agent missed how the weapon and the person are hybridized together in a locally accomplished assemblage” (Rappert, 2014: 215). Capturing this hybridization within an assemblage is challenging. However, we can assume that the consideration of the wider context must go hand in hand with the analysis of the nature of the relationships between two or more (user) groups entering into an exchange with a certain dual use good. The nature of this kind of exchange is very often reciprocal: just imagine mutual economic benefit (win-win), unilateral humanitarian aid, settlement of compensation claims, or friendly gestures in the form of gifts (e.g. circulating between representatives of a state or company). The character of the relations between the exchange partners determines the future properties and the intentional structure of the good in question.

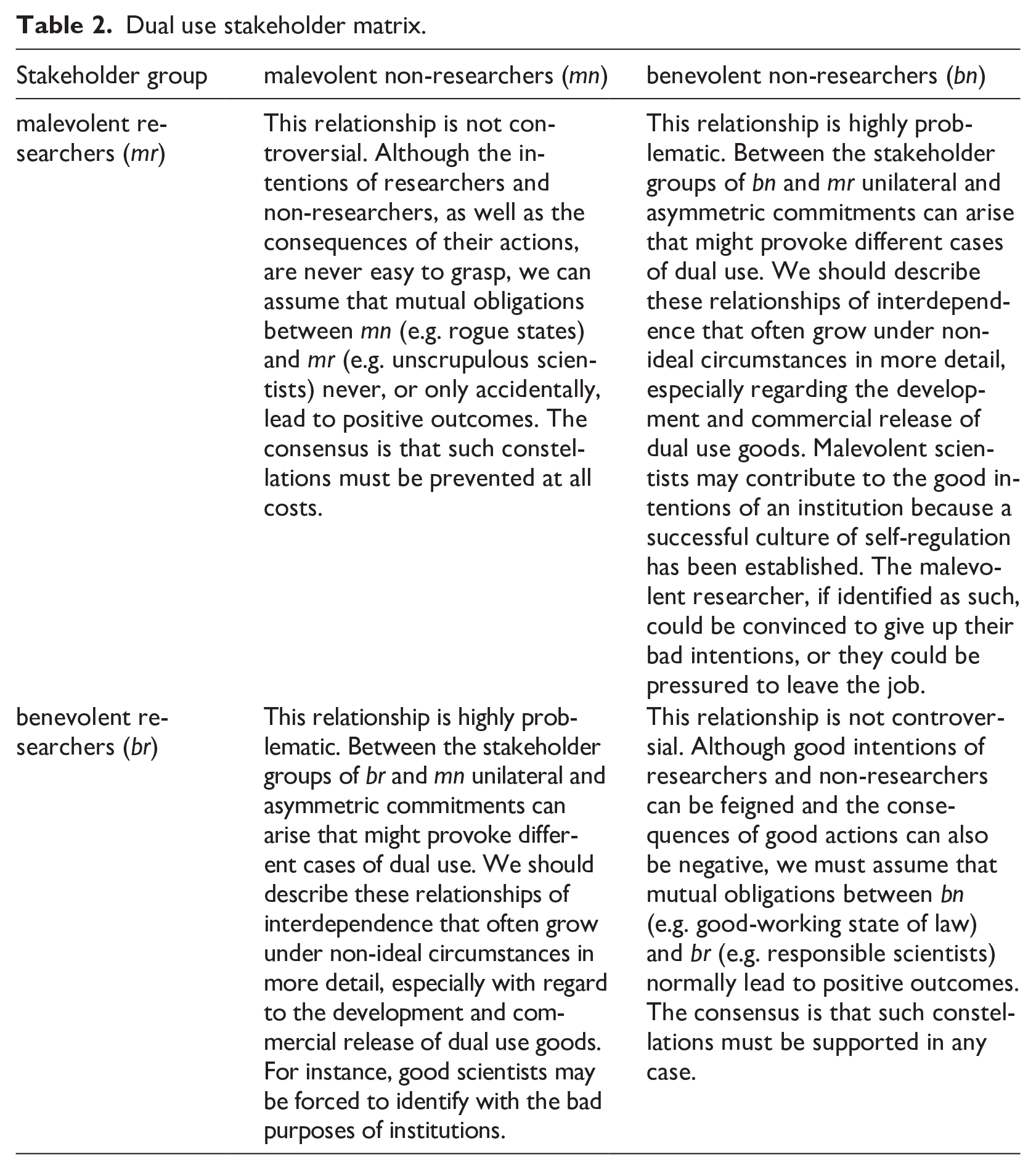

The second matrix tries to schematize the role of transactions and interactions between different stakeholders with respect to a specific dual use good. It can be assumed that (mostly reciprocal) transactions with dual use potential only take place between four groups: malevolent researchers (mr), benevolent researchers (br), malevolent non-researchers (mn), and benevolent non-researchers (bn). These interactions between the stakeholder groups can be symmetrical or asymmetrical, although the role played by groups working at the interface between research and non-research will have to be shown elsewhere. Irrespective of this, it is clear that intricate networks of relationships develop between these groups, which I would like to characterize briefly (Table 2):

Table 2. Dual use stakeholder matrix.

It is striking that these reciprocal relationships often have the character of a mutual obligation. 20 Potential dual use goods, including research results, can be exchanged, although the nature of the mutual obligation in a market situation is different from that in a non-market situation. Components of weapons can be exchanged commercially as dual use goods and are subject to export controls. However, goods whose exchange does not follow an economic interest, or only pretends to, can also be exchanged. This means that they are not subject to any export controls. Whether a good becomes dual use depends largely on the context in which it is exchanged. It matters whether a good, for example, an epistemic technology, escapes export control or not. If it can escape export control, then additional, non-economic interests play a decisive role in the qualification of a good as a dual use good, for example, political (and even ideological) interests such as secret declarations of solidarity, evading sanctions, spreading disinformation, etc. 21

To sum up: dual use goods can thus also be hybrid in a way other than the usual, insofar as they can be exchanged on the market as “normal” (= not safety-relevant) economic goods, but their potential purpose is to function as safety-relevant goods for non-market interests. From that it follows that type III-properties automatically incorporate aspects of type I and II, that is, the goods in question have the potential to become dual use if and only if (a) the good itself is predisposed to produce good as well as bad, and (b) the user (an individual or a collective), who is not designated for the end use, intends to misuse the good. Above all, the assessment of the relationship between the stakeholder groups of bn and mr as well as of br and mn is quite complex, precisely because numerous “dilemmas for cooperation” (Vaynman and Volpe, 2023: 599) can arise here. To unravel these complex relationships, targeted monitoring is necessary, including the self-monitoring of researchers and engineers, at both the micro-level of institutions through ethics committees and the macro-level of global governance via international cooperation.
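The pairings between the four stakeholder groups can be enumerated mechanically. The sketch below is illustrative only and rests on several assumptions: the abbreviations are read as mr, br, mn, and bn for malevolent or benevolent researchers and non-researchers, exchanges within a single group are counted as pairings, and the names `GROUPS`, `pairings`, and `is_cross_pair` are hypothetical. It singles out the pairings in which the partners differ in both disposition and research status, which are the two constellations described above as especially complex.

```python
from itertools import combinations_with_replacement

# Assumed reading of the abbreviations: (disposition, research status).
GROUPS = {
    "mr": ("malevolent", "researcher"),
    "br": ("benevolent", "researcher"),
    "mn": ("malevolent", "non-researcher"),
    "bn": ("benevolent", "non-researcher"),
}


def pairings():
    """All unordered pairings of stakeholder groups, same-group pairs included."""
    return list(combinations_with_replacement(GROUPS, 2))


def is_cross_pair(first, second):
    """True when the two groups differ in both disposition and research status."""
    disposition_a, status_a = GROUPS[first]
    disposition_b, status_b = GROUPS[second]
    return disposition_a != disposition_b and status_a != status_b


all_pairs = pairings()
cross_pairs = [pair for pair in all_pairs if is_cross_pair(*pair)]

print(len(all_pairs), "pairings in total")
print("cross pairings:", cross_pairs)
```

Under these assumptions the enumeration yields ten pairings, of which only mr with bn and br with mn cross both lines at once. The sketch says nothing about which of these exchanges are symmetrical or asymmetrical, which remains a matter of contextual assessment.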

From the characterization of dual use properties and the stakeholder matrix, we can infer that limiting dual use to type I-properties overlooks significant aspects of “dual usability,” as list definitions fail to consider subjective intentions, and pure mitigation strategies are confined to export control. For types II and III, a new classification scheme is imperative to lay the groundwork for an ethical framework. Given the diversity and context-sensitivity of double-charged items, the issue of dual use becomes inherently multidimensional. These insights prompt the question: what would an ethical framework for dual use research entail?

What could research ethics on dual use look like?

This question is anything but easy to answer due to the internal and external complexity of the dual use issue. First, it is difficult to link the three classes of dual use properties from the perspective of a unified account of dual use. Second, it is a great challenge to derive ethical assessment tools or guidelines from this tripartite scheme, which is, of course, not complete. Third, we should ask the question of how to deal with the chronic problem of epistemic uncertainty from an ethical perspective.

In the final section, I would like to present different modes, tools, and methods for ethical evaluation. Referring to the property types of dual use I introduced, we will see that the instruments differ from type to type, but there are also important conceptual and methodological entanglements. It will be a task in the future to identify these interfaces more precisely to provide a basis for the development of a comprehensive ethical framework for dual use.

Ethical tools for evaluating type I-properties

Dual use properties according to the determinist view necessarily lead to list definitions of dual use, as we can see from the export control problem (see also D3). If a good in question matches an entry on the list, then it is identifiable as a dual use good. This approach is fraught with all sorts of problems:

(1) No list can ever be complete. We therefore must assume that several dual use goods are not listed. Although the legislator has introduced regulations concerning non-listed dual use goods, the criteria for a licensing requirement are vague and always susceptible to manipulation.

(2) It is not always clear which criteria lead to a certain group of goods being labeled as dual use goods.

(3) List definitions do not differentiate between items based on their ethical significance. Without a clear hierarchy, the selection seems arbitrary. Risk potential alone cannot suffice as a criterion for inclusion, since it would extend the list to items like knives or computers.

(4) Item lists are not well suited for operational purposes due to their enormous size and lack of clarity. As every list is expected to grow (e.g. the current EU dual use item list does not yet include AI technologies), the degree of impracticality will continue to increase.

In general, presumably objective list definitions are known to be combinatorically vague because they are incomplete and always subject to revision. Moreover, there are many demarcation problems here (with respect to non-listed and non-dual use goods), and the link to subjective intentions that can make a good a dual use good is not addressed. Nevertheless, the list seems to be indispensable for issues of export control; for other areas of dual use such as R&D, the recourse to lists is less relevant or meaningful. It would perhaps be more informative if each list definition or classificatory scheme were supplemented by a teleological factor, that is, an ethical benchmark that makes it possible to qualify a good as being in accordance or discordance with good subjective goals and positive objective values. According to D4, dual use items listed under the category “sensors and lasers” should be qualified more precisely insofar as one also specifies what must be given for a good on this list to fulfill or fail to fulfill its original purpose. This also includes checking whether and to what extent product specifications can be unlawfully changed (e.g. amplification of laser devices by improving the radiation concentration), intentions in dealing with the good can be misguided (e.g. repurposing of medical lasers for security monitoring), or properties that are external to the good can be created by changing the social-political context (e.g. lasers that are used to detect toxic industrial hazards in times of peace and to detect chemical weapons in wartime).

Ethical tools for evaluating type II-properties

For the ethical evaluation of dual use, as just noted, linking the good in question with a good or bad subjective intention plays an important role. This brings us to type II of dual use properties (intentionalist view). In this respect, we must primarily ask whether and to what extent an intention can turn a good in question into a dual use good, and how to ethically classify different determinations of the intention to act.

There is much to suggest that the dual use problem could be a particular instantiation of the classical Doctrine of Double Effect (DDE). According to Warren Quinn, there are four essential criteria to describe the double effect: (a) the intended end must be good; (b) the intended means to it must be morally acceptable; (c) the foreseen bad upshot must not itself be willed (i.e. must not be, in some sense, intended); (d) the good end must be proportionate to the bad upshot (i.e. must be important enough to justify the bad upshot). As can be seen here, “DDE exploits the distinction between intentional production of evil and foreseen but unintentional production of evil” (Woodward, 2001: 2). That seems to be important for evaluating dual use issues. If we do not want to be ethical alarmists, but just want to take a precautionary attitude toward dual use, then DDE could be evaluatively helpful because it is a doctrine that can put consequentialist reasoning in its place: “The DDE thus gives each person some veto power over a certain kind of attempt to make the world a better place at his expense” (Quinn, 2001: 37). It follows that DDE prioritizes not the consequences of an action, but the assessment of the quality or species of an action. This move prevents bad intentions from being legitimized by a good research purpose and a bad research purpose by good intentions. But does this also apply if no intentions are involved in dual use cases, because the dual use potential is inherent in the technologies, and we do not know if and when it will unfold?

Initially, I think it makes sense to work out the similarities and differences between DDE and the assessment of dual use cases (cf. Briggle, 2005). There is a narrow semantic relationship between “dual use” and “double effect.” DDE, like the dual use approach, is primarily located in the field of applied ethics, and with the help of DDE, the good and bad use of the same good is brought into an action-theoretical and ethical context: “My positive action plays a specific causal role in bringing about the bad effect—namely, it enables or aids another person to do something wrongful (to jostle the pedestrian into the path of my car/to drive while intoxicated/to create a bioweapon)” (Uniacke, 2013: 160).

Of course, there are also obvious incompatibilities between these two approaches. Unlike DDE, dual use problems in applied ethics cannot be based on prima facie duties because the stakes are too high. For example, in unknown-unknown scenarios, one cannot be committed to anything except the obligation not to participate in a research activity with an undetermined outcome. No one must ever be prevented from following their conscience, especially when it comes to researching goods that present a higher level of dual use risk. However, it is also a genuine characteristic of research to work on things whose outcome is uncertain, also from an ethical point of view. Other features of dual use make it difficult to combine it with DDE. Dual use approaches focus, for instance, more on collective than individual intentions. Very often, they are subject to legal-political considerations: “The basic question posed by a “dual use dilemma” is, I take it, whether it would be morally permissible to engage in the activity in question given the risks of its misuse, and not whether it would be morally right or morally obligatory to do so” (Uniacke, 2013: 157f.). From that, it follows that dual use approaches operate more strongly with probabilities of occurrence and trade-offs (cost/benefit) than DDE. Under the perspective of DDE, actors have little time for deliberation and extremely limited options for action. Dual use cases are less often about choosing among options than about avoiding the enabling of bad options. Moreover, some important conditions for a case of DDE fall away: in the case of a dual use dilemma, the foreseen bad use is not intended by the agent who faces the dilemma (cf. Uniacke, 2013: 162).

However, the disadvantages of existing differences between dual use and DDE do not outweigh the advantages of existing similarities. Without an action-theoretical assessment of the dual use problem, neither the goals nor the intentions of the stakeholders who have an interest in the dual use good can come into view. I do not fully agree with Luciano Floridi, who states that “dual-use [. . .] is an empirical, not an ethical assessment” (Floridi, 2023), because I am convinced that the dual use problem can directly lead—under the guidance of DDE—to an ethical evaluation that helps to distinguish between intended, foreseeable, and unforeseeable consequences.

Ethical tools for evaluating type III-properties

The specification of type I and type II-properties is not sufficient to determine the complete dual use potential of any object in question. It therefore makes sense to integrate these two perspectives into the third perspective of social contextuality. This makes it possible to determine more precisely (1) the type and order of risk, (2) the identity of the misusing individual or collective actor (“Who is pursuing a malicious interest?”), and (3) the identity of the potentially harmed individual or collective actor (“At whose expense is this malicious interest?”). Disruptive technologies or security-relevant research results can fully develop their dual use potential if they are embedded in a social context that favors the realization of that potential. By contrast, they can completely fail to realize it if they are embedded in a context that disfavors it (this would be the case, e.g. if researchers, ethics committees, politicians, technology developers, and others act responsibly within organizational structures designed for that purpose). Without taking the social context into account, it is not possible to determine responsibilities and accountabilities in the event of misuse, which means that systemic weaknesses cannot be identified, and personal mistakes cannot be rectified. This move also allows us to answer the question of the moral neutrality of a research result or a technology whose consequences we cannot yet assess. If we are aware of the significance of social contexts, we can recognize the extent to which a research object or technology is not neutral, because the intentions of those who have achieved a research result or designed a technology come to light. But how can the conditions for responsible dual use be created?

According to our enriched social contextualist view, mutual obligations mainly generate relationships of shared responsibility, especially when they are supported by the good intentions of individuals or entire groups. In this sense, we need to talk about the “role responsibility” (Forges, 2013) of each stakeholder (researcher, politician, scientific publisher, etc.) as well as the collective responsibility of universities, companies, or states in relation to potential and actual dual use goods. But what does responsibility mean in this respect? To put it quite formally, responsibility means: “X is responsible for Y because of Z”. Since we advocate a goods-based approach to dual use, we are primarily interested in the “because of Z”. This expression could especially refer to the good in question and can change the way X and Y act and value. A contemporary notion of responsibility that has been informed by dual use issues also transcends the network of mutual obligations between stakeholders, as another relatively uncertain factor is added: the future. Accordingly, retrospective and prospective responsibility must be rethought in relation to a potential or actual dual use good. In terms of self-regulation, the role responsibility of the scientist needs to be emphasized more in relation to dual use. I think that, based on what has been said, we should develop a dual use-specific ethics of responsibility that should not be limited to risk-ethical considerations (cf. Placani and Broadhead, 2023) or mere compliance issues. The intentions and virtues of researchers and non-researchers (cf. Grinbaum and Adomaitis, 2024), who owe each other something, need to be considered. A description of the nature of the political, social, and economic relationships between partners in the exchange of dual use goods helps to better assign responsibilities. The exchange of dual use goods conceals certain intentions on the part of the exchange partners, the elucidation of which helps to weave the “web of prevention” (Rappert and McLeish, 2007).

Conclusion and outlook

To identify a good as dual use, it is insufficient to only consider its technical and functional characteristics. Indeed, most items can serve both beneficial and harmful purposes. A good is designated as dual use or further categorized based on the intentions of the possessor, whether they are benevolent or malevolent. The Doctrine of Double Effect (DDE) helps establish that certain constraints make it morally indefensible to achieve a negative outcome or to employ harmful means to attain a positive one. Instead, dual use goods are distinguished when they become part of trading activities, leading to the development of social or economic relationships grounded in mutual adherence to moral obligations. Various stakeholders assume responsibility for a good that yields significant scientific and economic interest, earning credit for benefits and incurring liability for damages related to the dual use dilemma. The level of liability for harm is contingent on the degree of participation in the unequal trade of goods and the predictability of potential damages.

Despite the promising specification work, one is confronted with numerous limitations. A key limitation of my analysis is that higher-level dual use goods such as AI technologies or security-sensitive research material can cause unintended short-term and long-term harms, the likelihood of which is impossible or almost impossible to measure. Making realistic dual use risk evaluations long into the future is not feasible due to epistemic uncertainty. It follows that long-term effects can hardly, if ever, be addressed via governance or decisional frameworks (which, of course, can ensure that ethics-by-design approaches become increasingly important). This is also related to the question of who is to blame for damage that occurred because a dual use risk was disregarded. Ways must be found that neither pursue at all costs those responsible for collectively caused damage in order to hold them liable, nor attempt to communitize unintended dual use risks and thus make it impossible to assign individual responsibility. To address these and other issues, what steps should be taken in the future to enhance the conceptualization and ethical operationalization of dual use dilemmas?

First, much work needs to be done on a relational or interpersonal theory of dual use goods. Dual use goods are always embedded in social, political, and economic contexts and are usually part of mutual obligations that exist between individuals, collectives, and institutional bodies and which are often conceivable as non-monetary and non-contractual relations of debt. Illuminating these personal and impersonal relationships helps in the attribution of responsibilities and can also close possible gaps in this regard.

Second, the creation and distribution of dual use goods should be articulated through detailed action theory terms. Our initial approach using the Doctrine of Double Effect (DDE) was preliminary. Recognizing dual use potentials will be unattainable unless we assess the intentions behind individual or collective actions.

Third, the notion of ethical responsibility must be more precisely aligned with the issue of dual use. Given that the concept of dual use is intrinsically linked to notions such as “risk,” “probability,” and “uncertainty,” additional clarifications are necessary. What steps should be taken to advance dual use research further?

I have therefore proposed to develop a three-dimensional classification system for dual use risks that is open to fulfilling some requirements of an ethics-by-design approach. This system could classify misuse scenarios based on (1) whether they involve a direct (first-order) or indirect (higher-order) dual use risk, (2) the identity of the misusing actor, and (3) the identity of the potentially harmed party. Continuous monitoring is essential to enable the immediate implementation of adaptive strategies for preventing or mitigating unforeseen harms and dangers. With the help of a future classification system and some reflections made in this article, it could be possible to develop an ethical framework that contributes to finding more appropriate mitigation strategies and to identifying regulatory gaps and filling them where necessary. Such a framework could also help researchers to identify potential “dual use research of concern” (DURC). Of course, the researchers should not be left to their own devices. Someone who is acting with good intentions does not always realize that their research result may be misused by third parties. It is important to bear in mind that a balance must always be struck between raising general dual use awareness, for example, by applying the precautionary principle, and avoiding unnecessary administrative burdens when conducting and translating safety-related research, while at the same time preventing public alarmism. The more precisely one identifies a good as a dual use good, the easier it is to exploit its opportunities and minimize its risks.
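
To make the proposed three dimensions more tangible, the following minimal sketch shows how misuse scenarios might be recorded and grouped along them. It is an illustration only and not an implementation of any existing framework: the names `RiskOrder`, `Party`, `MisuseScenario`, and `group_by_coordinates` are hypothetical, the restriction of actors and harmed parties to "individual" or "collective" is a simplifying assumption taken from the wording of the type III discussion, and the example scenarios are invented for demonstration.

```python
from collections import defaultdict
from dataclasses import dataclass
from enum import Enum


class RiskOrder(Enum):
    """Dimension (1): direct (first-order) or indirect (higher-order) risk."""
    FIRST_ORDER = "direct"
    HIGHER_ORDER = "indirect"


class Party(Enum):
    """Simplifying assumption: actors and harmed parties are individual or collective."""
    INDIVIDUAL = "individual"
    COLLECTIVE = "collective"


@dataclass(frozen=True)
class MisuseScenario:
    """One hypothetical misuse scenario located on the three dimensions."""
    description: str
    risk_order: RiskOrder      # dimension (1)
    misusing_actor: Party      # dimension (2)
    harmed_party: Party        # dimension (3)

    def coordinates(self):
        """Return the scenario's position in the three-dimensional scheme."""
        return (self.risk_order, self.misusing_actor, self.harmed_party)


def group_by_coordinates(scenarios):
    """Group scenarios that share the same position on all three dimensions."""
    groups = defaultdict(list)
    for scenario in scenarios:
        groups[scenario.coordinates()].append(scenario)
    return dict(groups)


# Invented example scenarios, for illustration only.
examples = [
    MisuseScenario("medical laser repurposed for monitoring",
                   RiskOrder.FIRST_ORDER, Party.COLLECTIVE, Party.INDIVIDUAL),
    MisuseScenario("published method reused by third parties",
                   RiskOrder.HIGHER_ORDER, Party.INDIVIDUAL, Party.COLLECTIVE),
]

for coords, members in group_by_coordinates(examples).items():
    labels = [dimension.value for dimension in coords]
    print(labels, [m.description for m in members])
```

Such a representation only locates scenarios within the scheme; it deliberately says nothing about probabilities or severity, which, as argued above, are hard to estimate under epistemic uncertainty.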

Declaration of conflicting interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

All articles in Research Ethics are published as open access. There are no submission charges and no Article Processing Charges as these are fully funded by institutions through Knowledge Unlatched, resulting in no direct charge to authors.

Ethical approval

Our institution does not require ethics approval.