Abstract

Peer review facilitates quality control and integrity of scientific research. Although publishing policies have adapted to include the use of Artificial Intelligence (AI) tools, such as Chat Generative Pre-trained Transformer (ChatGPT), in the preparation of manuscripts by authors, there is a lack of guidelines or policies on whether peer reviewers can use such tools. The present article highlights the lack of policies on the use of AI tools in the peer review process (PRP) and argues that we need to go beyond policies by creating transparent procedures that will enable journals to investigate allegations of non-compliance and take decisions that will protect the integrity of the peer review system. Reviewers found to violate relevant policies must be excluded from the process to safeguard the integrity of the peer review system.

Introduction

Peer review not only plays a central role in scholarly communication; it also facilitates quality control and integrity of scientific research (Guston, 2007; Rennie, 2003), even in the digital age (Nicholas et al., 2015). Submitting academic research to the judgment of peers who are experts in the field aims to critically assess the novelty, quality, impact, and ethicality of that research (Ware, 2008). To enhance peer review systems, publishers have incorporated automated screening tools in the peer review process (PRP) that enable editors to accelerate manuscript screening, to assess compliance with journal policies and to identify suitable reviewers based on expertise and past performance. These tools, however, have raised concerns about responsibility and transparency among scholars (Schulz et al., 2022). On the other side of the publishing process, we are witnessing a turning point where academic authors increasingly use Artificial Intelligence (AI)-assisted technologies, particularly generative conversational AI such as Chat Generative Pre-trained Transformer (ChatGPT) and Large Language Models (LLMs) in general, in the manuscript preparation phase (Dergaa et al., 2023; Lee et al., 2023). Generative AI and LLMs in general are the focus of this manuscript because of their ability to produce content, which is not always disclosed as such. AI-assisted tools frequently used by scholars, such as search engines, formatting tools and thesauri, are outside the scope of the arguments presented herein. Although generative AI tools have the potential to enhance and speed up academic writing, their use raises ethical issues regarding authorship, authenticity, credibility and accountability of academic work (Dergaa et al., 2023), with subsequent legal implications for copyright.

However, an issue that has not received comparable attention so far is what happens when a reviewer uses AI-based tools to produce a peer review report without disclosing it. In other words, what would be an appropriate policy for editors to follow when authors express concerns, or editors themselves suspect, that a peer review report is the result of, or includes parts produced by, generative AI tools? How should these allegations be investigated? Should the reviewer be excluded from peer reviewing? If so, at which level? Should the exclusion apply to the journal alone or to all journals the publisher is responsible for? What are the responsibilities of different parties in this process to ensure compliance with ethics standards and integrity? Are these sufficiently described in journal publishing policies?

The triggering event for this article is the author’s own experience as an editor, during the peer review of a manuscript, where ethical dilemmas emerged and the lack of effective procedures was exposed. The aim of this article is to illustrate the lack of specific policies on the use of AI-based tools in the PRP, to analyze the potential consequences for the integrity of the PRP, and to propose practical ways to mitigate the risks and safeguard the integrity of peer review.

Materials and methods

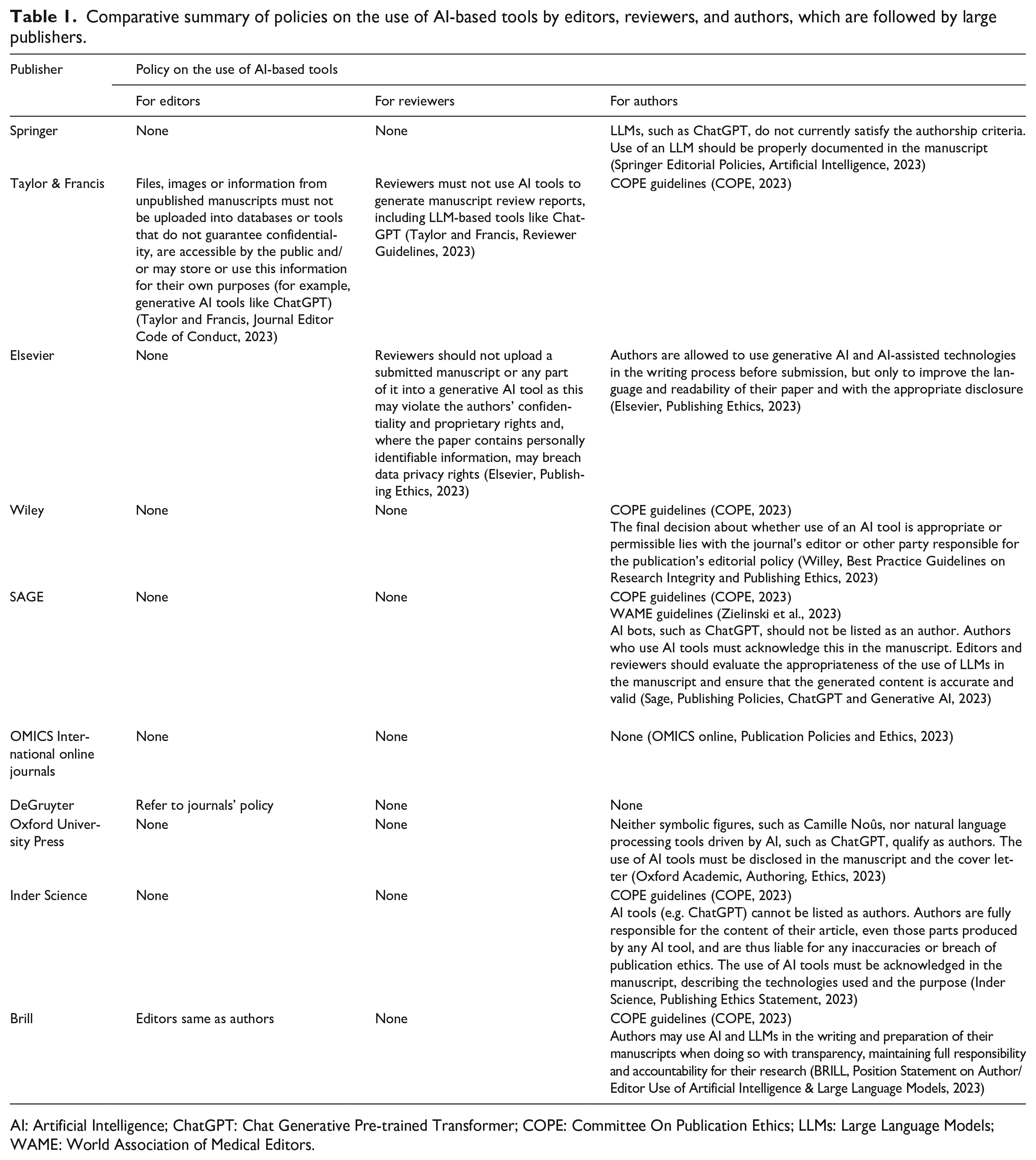

An ethics analysis approach was followed and critical reasoning was applied to explore whether the absence of policies exposes peer review to risks. To substantiate the absence of policies, the publishing policies of the 10 largest scientific publishers, as classified by journal count (Nishikawa-Pacher, 2022), were reviewed based on the information provided on their websites. It is acknowledged that this is only an indicative list of publishers, suggestive of the range of policies, which serves the aim of this manuscript. Individual journal publishing policies were not reviewed.

Results

Large publishers have adapted their publishing policies to the potential use of AI-assisted technologies by authors during manuscript preparation (Table 1). Eight out of the 10 largest publishers have established policies on the use of generative AI and AI-assisted technologies by authors, five of which are in line with the Committee On Publication Ethics (COPE) position statement on “Authorship and AI tools,” according to which: AI tools, such as ChatGPT or Large Language Models (LLMs), cannot be listed as an author of a paper and authors who use AI tools in the writing of a manuscript, production of images or graphical elements of the paper, or in the collection and analysis of data, must be transparent in disclosing how the AI tool was used and which tool was used. (Committee on Publication Ethics, 2023)

Table 1. Comparative summary of policies on the use of AI-based tools by editors, reviewers, and authors, which are followed by large publishers.

AI: Artificial Intelligence; ChatGPT: Chat Generative Pre-trained Transformer; COPE: Committee On Publication Ethics; LLMs: Large Language Models; WAME: World Association of Medical Editors.

Only 1 of the 10 large publishers also refers to the World Association of Medical Editors (WAME) recommendations, according to which: [. . .] chatbots cannot be authors, authors should be transparent when chatbots are used and provide information about how they were used, authors are responsible for material provided by a chatbot in their paper and for appropriate attribution of all sources. (Zielinski et al., 2023)

Nonetheless, neither COPE nor WAME has published guidelines on the use of AI tools by peer reviewers, and most large publishers do not cover the use of generative AI by reviewers in their policies. Notably, only 2 out of the 10 large publishers mention the use of AI tools by reviewers. However, the policies of these two publishers have a distinct focus. Elsevier focuses on data security, the potential breach of the authors’ confidentiality and rights, as well as on the potential breach of data privacy rights when personal information is included in the manuscript. Thus, this policy does not explicitly prohibit the use of generative AI to produce the content of the peer review report. For instance, if generative AI was used offline or as part of a private generative AI tool, then data confidentiality would not be an issue, and the reviewer would be allowed to use it.

Taylor & Francis is the only publisher that explicitly mentions that reviewers “must not use AI tools to generate manuscript review reports, including LLM-based tools like ChatGPT,” although the rationale is not clarified. Regarding the use of generative AI tools by editors, the policy by Taylor & Francis prohibits the use of generative AI tools like ChatGPT to protect confidentiality of the information in the manuscript. Thus, one could assume that the prohibition for reviewers is also based on confidentiality reasons.

None of the policies of large publishers, as described on their websites, indicates the procedure to be followed in handling potential violations. In other words, there is a lack of transparency regarding the investigation of cases where a reviewer (or an editor) has used or is suspected to have used generative AI. It remains obscure if and how these cases are handled by the journal’s Ethics Committee (if one exists at all) or research integrity team. It is unclear whether publishers have any means available to provide evidence for the detection of content generated by AI tools. It is also unclear whether any kind of sanctions will be imposed on reviewers using generative AI tools in the PRP.

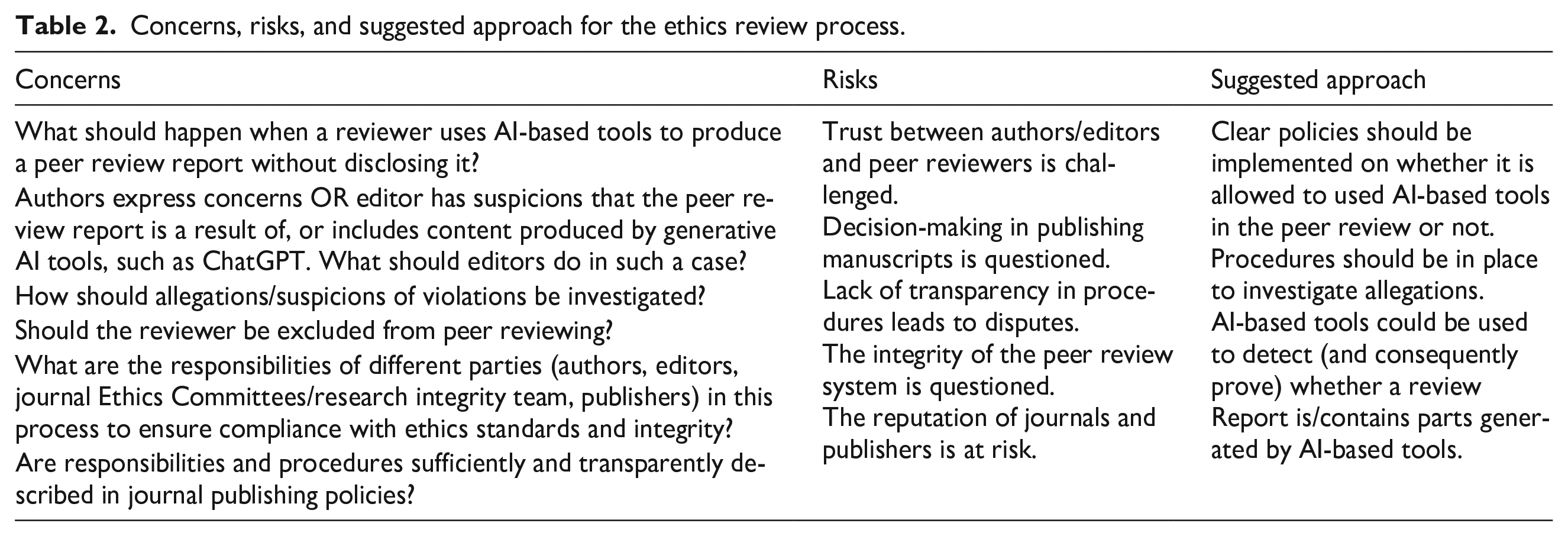

The lack of relevant policies entails risks for the PRP, which are described in Table 2. Unless transparent policies are established by publishers, the trust between different parties involved in the PRP, including authors, editors, peer reviewers, and publishers, is at stake. Decision-making on which manuscripts will be published is challenged, and disputes between authors and journals may arise. A consequent critical risk is that the integrity of the peer review system overall is called into question.

Table 2. Concerns, risks, and suggested approach for the ethics review process.

Discussion

Clear guidelines on the use of AI-based tools by academic authors, like the position statements published by COPE and WAME, are essential to promote transparency and integrity in research and publishing. Therefore, it is not surprising that several large publishers follow these guidelines. However, this is not the case for reviewers, who play an important role in the PRP. There is clearly a lack of guidelines from appropriate bodies, such as COPE and WAME, on whether AI-based tools should be used by reviewers and, if so, under which conditions. This issue is also insufficiently addressed by the existing publishing policies of large scientific publishers.

Such an issue should be examined by taking into consideration the advantages and disadvantages of using AI tools in the PRP. For instance, AI tools can assist editors in the initial screening phase of manuscripts to assess their quality, detect plagiarism, check formatting, and determine whether they fit the journal scope in a time-efficient manner; they can be used in identifying suitable reviewers; they can even be used in summarizing individual review reports and in writing final decision letters. Generative AI tools can also help peer reviewers produce more concise, informative and well-written reviews, for native but particularly for non-native speakers, empowering them to use the English language confidently and improve their writing style; they can also save reviewers the time and effort of correcting grammar and spelling mistakes.

However, recent studies investigating the potential of AI tools to serve as peer reviewers in the evaluation of manuscripts highlight the limitations of AI-generated review reports. Donker (2023) found that when ChatGPT was used to produce a peer review report, it was able to produce a good summary of the manuscript, clearly describing its main goal and its conclusions, but it was unable to suggest specific improvements and was mainly limited to general comments, with no critical content about the described study. Of note, the AI-generated peer review provided a list of specific-looking general comments with no bearing on the text and included specific comments unrelated to the study content, which could be perceived as reasons for rejection. When additional references were requested, ChatGPT fabricated non-existent articles attributed to real persons working on similar topics. The author concluded that because ChatGPT summarizes the paper and its methodology remarkably well, its output could easily be mistaken for an actual review report by persons who have not fully read the manuscript, and that authors should be prepared to challenge reviewer comments that seem unrelated and non-specific (Donker, 2023).

A study by Checco et al. (2021) aimed to illustrate and discuss the potential, pitfalls, and uncertainties of the use of AI to approximate or assist human decisions in the quality assurance and peer-review process associated with research outputs. For this reason, they developed an AI tool to assess, amongst others, whether AI can approximate human decisions in the peer-review process, whether it can uncover common biases in the review process or preserve such biases. It was found that even when rather superficial metrics such as word distribution, readability and formatting scores were used to perform the training, the AI tool was often able to successfully predict the peer review outcome reached as a result of human reviewers’ recommendations. This may indicate that the “first-impression bias” found in reviewers can be present in a training dataset and thus, the trained AI model can propagate such biases (Checco et al., 2021).

In addition to the limitations of AI tools in the PRP, their use raises ethical concerns in various other respects. Data confidentiality is a key issue in the PRP, highlighted in all publishing policies as an obligation for the editors and reviewers involved. One of the possible risks when using AI tools, such as ChatGPT, in the PRP is that of data breach. Confidential manuscripts that are uploaded contain original and novel ideas that are the authors’ intellectual property, original research data, or even personal data of research subjects. It is unknown whether these can be used by LLMs for self-training and subsequent tasks. Therefore, data security is a prerequisite that is not always met when using online generative AI tools.

Algorithmic bias of the LLMs that are used can also be introduced into AI-produced review reports. Existing peer reviews are used as training datasets for the AI algorithms, and these datasets may contain biases that are then propagated by the AI model. Such biases include first impression bias (Checco et al., 2021), cultural and organizational biases (Diakopoulos, 2016) including language skills, and biases towards highly reputable research institutes or wealthy countries with high research and development expenditure. Biased AI-generated review reports can have a significant impact on a researcher’s career or on a research team’s reputation, which underlines that human oversight is necessary in the process. There are also concerns that AI-based tools could exacerbate existing challenges of the peer review system by allowing fake peer reviewers to create more unique and well-written reviews (Hosseini and Horbach, 2023).

Most importantly, one should not overlook the risk of editors and reviewers over-relying on AI tools to assess the originality, quality and impact of a manuscript, overlooking their own experience, scientific and expert judgment. Dependency on AI tools – even to a certain extent – in order to manage the PRP can jeopardize the way that editors and reviewers exercise their autonomy, which becomes even more problematic when biases exist in the algorithms, as discussed earlier. Consequently, this leads authors to distrust the PRP due to a lack of transparency on the rationale of decision-making.

Ultimately, the potential negative consequences of AI tools used in the peer review may undermine the integrity and the purpose of the process, and challenge academic communication and even trust in science at large. Thus, the need to have clear policies on the use of AI tools by reviewers is evident. Indeed, this issue has been very recently raised by scholars who argue that there is a need to apply a consistent, end-to-end policy on the ethics and integrity of AI-based tools in publishing, including the editorial process and the PRP, to avoid the risk of compromising the integrity of the PRP and of undermining the credibility of academic publications (Garcia, 2023; Ling and Yan, 2023).

Judging from existing policies on the use of AI-based tools by authors, the policies on whether reviewers can use AI tools will be either restrictive or permissive on the condition that the tools and the way they are used are disclosed in the review report. But the next question that arises is: are policies enough and what are the steps that need to be taken further down to ensure that policies are followed and reviewers who violate them are being identified? WAME guidelines for the use of AI tools by authors recommend that: [. . .] editors need appropriate tools to help them detect content generated or altered by AI for the good of science and the public, and to help ensure the integrity of healthcare information and reducing the risk of adverse health outcomes. (Zielinski et al., 2023)

Should we use similar tools to detect AI-generated/altered review reports or will this undermine the altruism on which peer review is based? Reviewers voluntarily spend time and effort on manuscripts, aiming to help authors improve their papers and to play an active role in the scientific community (Mulligan and Raphael, 2010). Checking for AI content in review reports challenges the trust between reviewers and editors as integral parts of the PRP, as well as the reviewers’ willingness to continue offering their expertise in the peer review system.

But then again, without tools to detect content generated or altered by AI in peer review reports, how can we prove whether allegations are true or not? How can we fill the evidence gap in cases where there are suspicions that a reviewer has used AI tools, such as ChatGPT, to produce a review report? What are the procedures to be followed by editors in such cases? Publishers urgently need to consider forming appropriate policies for such cases, which are expected to increase along with the use of generative AI and LLMs. Policies and procedures have to be transparent, detailed and solid, facilitating decision-making when a reviewer is found to have used AI tools without acknowledging it. If authors suspect that a reviewer has used AI-assisted technologies to produce a review report, they need to alert the editors, who subsequently have an obligation to raise such matters with the journal’s Ethics Committee (again, assuming one exists) or research integrity team. But in the absence of evidence, how could such allegations be investigated by Ethics Committees? If the use of AI tools in review reports cannot be proven, for example, through tools that detect content generated or altered by AI, I am afraid that this will lead to the death of ethics in publishing. Unless measures are taken, this weakness will be added on top of the existing criticism of the peer review system related to the long delays in publishing new findings, to the efficacy of the whole process, to its susceptibility to perceived bias by editors and reviewers, and to its inability to detect fraudulent research and scientific misconduct (Castelo-Branco, 2023; Manchikanti et al., 2015; Tennant et al., 2017).

Thus, in the dilemma “death of a reviewer or death of peer review integrity?,” I choose the death of a reviewer, after acquiring evidence of course. Reviewers who are found not to comply with publishing policies must be flagged and excluded from the PRP, not only for the specific journal but also for all journals under the same publisher. Publishing policies are essential, but they need to enable certain solid steps towards protecting peer review ethics. Reluctance to do so can put the journal’s and/or the publisher’s reputation at risk. In fact, it is more than reputation which is at stake here: the integrity and ethics of the peer review system can be compromised. Peer review misconduct is not just about attempts to make fake reviews or abusing the recommended reviewer functionality of journals. It is also about trying to verify the originality and accuracy of the peer review report, for which reviewers are solely accountable.

Footnotes

Declaration of conflicting interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

All articles in Research Ethics are published as open access. There are no submission charges and no Article Processing Charges as these are fully funded by institutions through Knowledge Unlatched, resulting in no direct charge to authors.


Ethics approval

Not applicable.