Abstract

When scientists act unethically, their actions can harm research participants, undermine knowledge creation, and discredit the scientific community. Responsible Conduct of Research (RCR) training is one of the main ways institutions try to prevent scientists from acting unethically. However, such training addresses the problem only if scientists value it and if the problem stems from ignorance. This study examines what scientists (n = 14) think causes unethical behavior in science, with the aim of improving RCR training by shaping it around the views of its target audience. Previous studies have surveyed scientists about what they believe causes unethical behavior using predefined response items. This study instead uses a qualitative research methodology to elicit scientists’ beliefs without predefining the range of responses. The data for this phenomenographic study were collected from interviews that presented ethical case studies and asked participants how they would respond to those situations. Categories and subcategories were created to organize their reasoning. This work will inform the development of future methods for preventing unethical behavior in research.

Keywords

Introduction

Responsible conduct of research (RCR) is important to the safety of participants, the credibility of scientists, and the pursuit of knowledge itself. Without strict oversight, research with human subjects can inflict terrible harms (National Commission for the Protection of Human Subjects of Biomedical and Behavioral Research, 1979). Likewise, conflicts of interest, cronyism, and misuse of public funds can undermine the credibility of scientists and their results. Perhaps most fundamentally, fabricated or doctored results can lead scientists and the general public to endorse false hypotheses for many years, even after the false data have been refuted. Training scientists to understand and enact RCR has thus been standard practice in the United States for more than 30 years.

Despite the long history of research ethics training in the US and elsewhere, the standard approaches to this training have been repeatedly criticized as ineffective (Anderson et al., 2007; Antes et al., 2009; Kalichman, 2014; Kornfeld, 2012; Phillips et al., 2018). Scientists do not value RCR training and largely view it as irrelevant to the actual causes of misconduct (Kalichman, 2014). Consequently, RCR training is not taken seriously. People engage with learning when it is relevant (Albrecht and Karabenick, 2018), so we hypothesize that one way to improve effectiveness would be to make ethics training more relevant to scientists. To this end, this study examines what scientists think causes unethical behavior in science and what this implies for the form and content of research ethics education. In particular, we argue that scientists’ beliefs about what causes unethical behavior in research could be used to make research ethics training a more valuable and relevant experience for them.

Some researchers have studied ways to improve ethics training, and others have studied what causes unethical behavior, but there has been little research on what scientists believe causes unethical behavior in research. One notable exception (Buljan et al., 2018) focuses on the biomedical community and asks scientists directly what they think causes unethical behavior. Another large survey (Holtfreter et al., 2020) is limited in that it prompted scientists to rate the importance of a predefined list of causes, potentially excluding causes that are salient to scientists. Our study includes scientists from a wider range of basic sciences and investigates what they believe causes misconduct using both direct and indirect methods.

In the next section, we discuss the history, purpose, and effectiveness of RCR training and review studies that have investigated the causes of unethical behavior. In the Methods section, we provide a theoretical grounding for making RCR training relevant, explain why identifying scientists’ beliefs about the causes of misconduct matters to that project, and argue for the suitability of a phenomenographic approach to this question. We then describe our sample of scientists, the interview process and the associated fellowship, and the phenomenographic analysis that led us to categorize the causes of research misconduct articulated by scientists. The Results section includes a table and descriptions of the categories identified. In the final sections, we describe how future ethics training initiatives may benefit from taking heed of scientists’ beliefs about the causes of unethical behavior.

Literature review

Federal requirements for RCR training were established in the late 1980s in the United States, when it became apparent that the apprenticeship model of teaching research ethics was no longer sufficient (Kalichman, 2013: 282). Unfortunately, while federal regulations have mandated RCR training for federally funded researchers ever since, they “provide no guidelines or requirements for the format, scope, content, duration, or frequency of the training” (Phillips et al., 2018: 228). Universities and research institutes have thus been free to devise their own understanding of RCR and models of RCR training.

Despite this lack of uniformity, some broad trends have emerged regarding the content and modality of training. Training under the federal requirement has consistently covered five topics: “conflict of interest, data management, authorship and publication, research misconduct, and human and animal subjects” (Kalichman, 2013: 385). The popular CITI training covers eight topics: authorship, collaborative research, conflicts of interest, data management, mentoring, peer review, research misconduct, and plagiarism (The CITI Program, 2021). While the concept of responsible conduct of research has started to expand to include science communication, diversity, and the social implications of science, training programs have largely not incorporated these topics.

This content is delivered largely in two modalities: online or in traditional face-to-face lecture formats. By far the dominant mode is the online CITI training system, which centers on trainees completing self-guided online modules followed by a quiz (The CITI Program, 2021). Face-to-face RCR training, while less prevalent, tends to take a more holistic approach focused on “cases, discussion, and student engagement” (Kalichman, 2013: 386). Almost all research institutions opt either for an online program similar to CITI or for a lecture course involving case studies (Phillips et al., 2018: 238).

The focus on online instruction (or self-contained coursework) has contributed to the feeling that “research institutions tend to participate in a ‘race to the bottom’ seeking the least costly, rather than most useful, approach to meet federal requirements” (Kalichman, 2014: 68). The general sense is that institutions have adopted the most convenient methods of satisfying RCR requirements, with little regard for their ethical impact.

RCR training effectiveness

It is perhaps unsurprising, then, that there is little evidence that these standard models of RCR training are effective. Kornfeld (2012), summarizing the prevailing view, “could find no evidence to suggest that this effort has been effective” and noted that, despite increasing participants’ ethical knowledge, these programs did little to change behavior. There are several dimensions along which to assess the effectiveness of RCR training. Mumford (2017) highlights four possible evaluation measures: a knowledge measure (a check of what students remembered from their ethics training), a transfer task (in which students respond to scenarios involving ethical questions), participants’ reactions to the instruction received, and taking a test. The most frequently studied indicators of success, however, are ethical decision-making and reductions in RCR non-compliance. Several studies have indicated that the standard model of RCR training is ineffective or even undermines ethical reasoning (Anderson et al., 2007; Antes et al., 2009; Kalichman, 2014; Kornfeld, 2012; Phillips et al., 2018). We discuss each indicator in turn.

In a 2017 review of the literature, Mumford found little evidence of changes in ethical decision-making after standard RCR training (Mumford, 2017). This comports with earlier systematic work such as Antes et al. (2009), who found it difficult to judge the effectiveness of training programs given the lack of set standards. Using the same measure as Mumford, however, they found that training did little to improve ethical decision-making and in some cases decreased the ethicality of participants’ decisions, concluding that “ethics instruction is at best moderately effective as it is currently conducted” (Antes et al., 2009: 379).

Antes et al.’s (2010) later study of the effects of research ethics training on biomedical students suggested that RCR training may even increase unethical behavior. In particular, “harmful effects might result if instruction leads students to overstress avoidance of ethical problems, be overconfident in their ability to handle ethical problems, or overemphasize their ethical nature” (Antes et al., 2010: 519). Notably, RCR training seemed to increase participants’ “self-protective behavior,” including “failure to take personal responsibility, deception, and retaliation” (Antes et al., 2010: 524). Likewise, Artino and Brown (2009) found that graduate students (who were less likely to have completed CITI training) were more likely than faculty (who were required to have completed training) to identify behaviors as unethical.

Notably, while the effectiveness of standard RCR training is generally low, there is evidence that some kinds of RCR training are more effective than others. Mumford, for instance, notes that courses involving active student engagement and real-world ethics cases are more likely to be effective. An analysis of critical incidents of ethical misconduct following ethics education programs found fewer false complaints and more quickly reported serious complaints among those who had completed these “active” ethics training programs (Mumford, 2017: 260). In 2018, an NSF workshop reached the following conclusions about RCR training: “(1) noninstructor-led, online-only programs do not provide adequate instruction; (2) multiple formats of instruction are needed; (3) programs should be wide-ranging and cross-institutional, with content that varies by disciplinary areas and career stage; (4) ethics education cannot be administered in a single ‘dose’; and (5) principal investigators (PIs) should be positively involved in teaching RCR to their trainees” (Phillips et al., 2018). The generic research training used by most universities is online, self-paced, administered in a single dose, and does not involve PIs in the teaching. This may explain why studies have found RCR training to be ineffective, and it suggests changes to be made to training (Antes et al., 2009, 2010; Kalichman, 2014).

Why people fail to act ethically

The existing research on why people fail to act ethically identifies a range of contributors, including: pressure (Belle and Cantarelli, 2017; Davis et al., 2007: 200; Kornfeld, 2012; Sovacool, 2008), gain (Belle and Cantarelli, 2017; Boes et al., 2017), mental/emotional state (Davis et al., 2007), character (Belle and Cantarelli, 2017; DuBois and Antes, 2018; Kornfeld, 2012; Ruiz-Palomino and Linuesa-Langreo, 2018; Sovacool, 2008), loss aversion (Belle and Cantarelli, 2017), health/family trouble (Davis et al., 2007), relationships (Davis et al., 2007), competition (Boes et al., 2017), opportunity (Adams and Pimple, 2005), and cultural factors (Davis et al., 2007). These studies do not indicate which factors are most influential, only that they contribute to unethical behavior.

The study of ethical wrongdoing in general has been approached through psychological models of ethical decision-making. The dominant “cognitive” approach to moral psychology views ethical behavior as the product of good reasoning about ethical decisions (Rest et al., 1999). This cognitive model holds that failures to act ethically result from a failure to develop the right kind of ethical reasoning. For example, wrongdoers may see ethical behavior merely as a way to avoid punishment rather than as driven by universal principles. Moreover, on this model, moral behavior is made possible by four psychological components: (i) moral sensitivity (i.e. the capacity to correctly identify morally relevant circumstances), (ii) moral judgment (i.e. the capacity to make decisions using appropriately moral principles), (iii) moral motivation (e.g. the capacity to see moral behavior as part of one’s responsibility), and (iv) moral action (i.e. roughly, not being weak-willed, tired, or overburdened). Others reject the cognitive approach and instead focus on non-cognitive psychological factors such as greed, egocentrism, self-control depletion, the slippery slope effect, loss aversion, and time pressure (Belle and Cantarelli, 2017). Still others reject the psychological model altogether and view ethical decision-making as a “multidimensional construct” in which non-cognitive characteristics play a dominant role (Beu et al., 2003). These social approaches point to an individual’s identity (Ruiz-Palomino and Linuesa-Langreo, 2018) and to socioeconomic structures as explanations for unethical behavior.

The social model has been the dominant mode of study for research misconduct in particular. Sovacool (2008) states that unethical behavior can be understood through three possible narratives: a few bad individuals, the pressures on researchers to perform, or a failure of scientific institutions to promote ethical values. Indeed, different studies have investigated whether the problem is individual, institutional, or ethical. For example, DuBois et al. (2013) concluded that individual factors, such as narcissism, play a significant role in misconduct, and that the remedy lies in focusing on sense-making strategies, mental models, and bias reduction. Kornfeld (2012) discusses how unethical actions in research arise from both individual traits and the situations scientists are placed in (e.g. the constant pressure to publish, or potential whistleblowers’ fear of retaliation). Kornfeld proposes better mentoring programs (e.g. better mentor-to-mentee ratios, fostering close mentor relationships) and argues that whistleblowers should be acknowledged and protected (Kornfeld, 2012). Adams and Pimple (2005) discuss how most RCR programs aim to prevent individual failings and few address the opportunities and situations that lead to unethical behavior. They argue that while individual responsibility is relevant, it is often easier to change situations to reduce opportunities for misconduct than to use RCR training to teach the definitions of unethical behavior (Adams and Pimple, 2005). Finally, a small strand of research has approached the problem through a criminological lens (Faria, 2018; Walker and Holtfreter, 2015).

What scientists believe about why people act unethically

Two prior studies have sought to identify scientists’ beliefs about why their peers engage in unethical behavior. Buljan et al. (2018) collected data from three focus groups of biomedical researchers: two of doctoral students and one of research experts. Each focus group met for a discussion in which participants were asked about research ethics and what they believe causes unethical behavior. Buljan et al. identified several forces that the focus groups believed shape ethical behavior. The pressure to publish was seen as a stressor that could lead to unethical behavior. A scientist’s ego could encourage them to sabotage others’ work. A scientist lacking experience might not know what constitutes unethical behavior. The focus groups also discussed the frustration one might feel on seeing other researchers succeed through unethical behavior. Researchers saw forces such as these, coupled with a lack of punishment in many cases of misconduct, as leading to unethical behavior (Buljan et al., 2018). Buljan et al. focus on the biomedical community and ask scientists directly what they think causes unethical behavior. We extend the study of beliefs about the causes of research misconduct beyond biomedical research.

In another study, Holtfreter et al. (2020) sent mail and online surveys to faculty at top research universities in the United States. Respondents were given a list of reasons why people might act unethically and rated each item on a scale from one (“not at all”) to three (“very well”). The items fell into four categories, each with several subcategories. In order from highest to lowest score, the categories were high strain (2.01), low deterrence (1.74), low self-control (1.61), and social learning (1.51). Our study differs from and expands upon Holtfreter et al.’s work by not giving interviewees a list of possible causes of research misconduct and instead phenomenographically analyzing conversational interviews with scientists. Investigating the beliefs of basic scientists within a phenomenographic framework may help identify a broader set of beliefs that could be useful for designing future ethics training programs.

Unethical behavior in non-STEM fields

Unethical behavior also occurs in research fields outside STEM. In a study of unethical behavior in business, Boes et al. (2017) concluded that competition and monetary gain are the main influences on unethical behavior in business transactions, and that having a business frame of mind rather than an ethical frame of mind makes a significant difference in ethical behavior. Another study of unethical behavior in business developed a taxonomy of why unethical acts occur in business school research (Hall and Martin, 2019). This taxonomy classifies behavior as appropriate, questionable, inappropriate, or blatant misconduct. It then describes the sources of unethical behavior, the severity of punishment typically received, and how different stakeholders are affected by the unethical behavior. In their study of accounting research misconduct, Bailey et al. (2001) noted that researchers had motivations similar to those of scientists when acting unethically (pressure and professional advancement), but that because their work did not involve life-and-death issues, there was less motivation to act ethically. Accordingly, respondents “believe that about 21 per cent of the literature is tainted” (Bailey et al., 2001). The humanities have not previously been shown to have a high prevalence of research misconduct, but in a 2021 survey of researchers in ethics and philosophy, “91.5% of the respondents considered research misconduct to be on the rise; 63.2% considered at least three of the fraudulent practices referred to in the study to be commonplace” (Feenstra et al., 2021).

Methods

This work was done with a pedagogical design perspective in mind. We take the position that the responsible conduct of science is learned, and therefore that improved ethics education can lead to improved decision-making and outcomes. To improve ethics education, we argue, scientists must see it as relevant to themselves and their jobs (Albrecht and Karabenick, 2018). If scientists see ethics training addressing what they believe to be the causes of unethical behavior, they will be more likely to care about it. The goal of this study is therefore to identify what scientists believe are the reasons other scientists act unethically.

To elucidate the beliefs of scientists, we adopted a phenomenographic approach (Marton, 1986). Phenomenography is a qualitative research methodology that seeks to describe the ways people experience the world and to identify the themes and variations within that experience; it does not seek to make statements about the world, but about people’s conceptions of the world. Phenomenographic approaches are therefore particularly good at identifying the ways in which people experience or make sense of a particular phenomenon. They rest on a fundamental assumption: while everyone experiences a particular phenomenon differently, there is a limited set of ways to perceive that phenomenon (Marton, 1986). In this study, we focus on scientists’ perceptions of the phenomenon of research misconduct.

This study identifies those perceptions and organizes them into categories that describe them. We wanted to know how scientists think about the causes of unethical behavior, so that future ethics training programs can be designed with the learners in mind. By understanding the experiences of scientists and what they believe causes unethical behavior in their research, we can make the training more relevant to them.

Context

The data were collected as part of a larger study investigating whether conversations about the goals and values of science can improve scientists’ attitudes toward RCR. This study received IRB approval (a copy of which is on file with the authors and journal editors). As part of this project, we recruited 15 practicing scientists from a university in the US to join a fellowship that met regularly to discuss case studies about scientific decision-making and philosophical work on the goals and values of science. The number of participants was capped at 20 to keep the small-group discussion sessions feasible; the cap was not based on considerations of data saturation. Individuals were recruited through email, word of mouth, and explicit invitations by the fellowship organizer using a snowball methodology, in which participants help identify further recruits. Fellows were intentionally solicited to increase diversity along the dimensions of gender and academic status. The group included 3 women and 12 men, and 4 pre-tenure and 11 post-tenure faculty members. Three were from chemistry, six from biology, two from biochemistry, three from physics, and one from geology. Members of this fellowship were interviewed both before and after the fellowship; in each interview, they were asked about their views on ethics and asked to respond to several case studies. The data used in this study come from interviews conducted before the fellowship started, so the views expressed are not affected by the intervention, though they may be affected by the selection process.

Data Collection

The data for this paper were collected in Summer 2019 using pre-fellowship, semi-structured, one-on-one interviews of 15 STEM research faculty. The purpose of these pre-fellowship interviews was to establish their baseline understanding of research ethics prior to the fellowship. Each subject was asked general questions about research ethics, what ethical issues arise in their own labs, and what they would do in a series of vignettes describing potential research misconduct. These vignettes described hypothetical scenarios involving safety of human research subjects, “p-hacking,” authorship disputes, non-financial conflicts of interest, appropriation of unpublished data, and gender diversity in faculty hiring. The text of the first five scenarios was adapted from vignettes presented in Mumford et al.’s (2006) Ethical Decision-Making (EDM) test. The sixth, reproduced below, was developed by the authors. The full script for these interviews can be requested from the corresponding author.

Each participant was interviewed once, for approximately 1 hour. The interviews were video recorded, and the interviewer wrote supplementary notes after each interview concluded. Author Scott Tanona (a male, tenured faculty member in Philosophy) conducted the interviews on the university campus with only himself and the interviewee present. The interviewer is an experienced philosopher of science with a long-standing interest in the goals of science. Prior to participant interviews, a practice session was conducted with a scientist who is not in the data set and who had no knowledge of the research design. The interviewer’s familiarity with the interviewees varied, ranging from well-known to unknown. Subjects were aware that the interviews were part of a project investigating the effect of conversations about the goals and values of science on RCR competency.

Data analysis

Of the 15 interviews, 14 were analyzed. The remaining interview was excluded because the coder noted that they might become a future student of that interviewee. Each interview was transcribed in full by an automated transcription service (otter.ai). Transcripts and quotes were not returned to participants for correction, but the authors corrected the transcriptions as needed. The data were analyzed phenomenographically following the suggestions of Marton (1986). A single primary coder watched each interview in its entirety alongside the transcript and identified quotations in which the interviewee explicitly or implicitly indicated motivations for, explanations of, or causes of researcher misconduct. The beginning and end of each quote were determined by the contextual information required to understand what the scientist was describing as a cause of unethical behavior, so that the quotes could be confidently categorized later. The quotes collected were organized by question for additional context. Data were coded in an Excel spreadsheet organized by interviewee and question. Dedicated coding software (NVivo) was considered, but a simple spreadsheet was chosen for its low cost, flexibility, and the coder’s existing familiarity with Excel.

As the quotes were collected, commonalities among them and the motivations they referenced led to the development of categories of causes. We looked for common themes among quotes, grouped them, and gave those groups initial names. As the groupings continued to develop, we focused on the central meaning of each group of quotes and adjusted the group names accordingly. These groups evolved in name and number as we discussed and made sense of the core factors each grouping represented. Finally, every collected quote was sorted into at least one of these groupings. Note that counts within these categories are not mutually exclusive, as participants sometimes identified overlapping causes. For example, the following quote was coded as both “moral deficiency” and “pressure”: (P7) “It’s people with slightly dodgy, dodgy compasses, I think being put under a lot of pressure, not necessarily unconsciously becoming less aware.” These groupings were listed and called “subcategories.” The subcategories were then grouped under three larger categories to better organize them and to connect related overarching reasons for unethical behavior.

After all the quotes were sorted into categories, we performed an inter-rater reliability (IRR) check. Three authors, who were neither the interviewer nor the primary coder, were asked to categorize a random 10% of the quotations using a chart listing the subcategories and their definitions. This IRR check yielded a Fleiss’ kappa of 0.88, which indicates “almost perfect agreement” (Landis and Koch, 1977).
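For readers unfamiliar with the statistic, the sketch below shows how Fleiss’ kappa is computed from per-quote category assignments, together with the Landis and Koch (1977) interpretation bands. It is an illustrative sketch, not the authors’ analysis script: it assumes that every quote is rated by the same number of raters and that each rater assigns exactly one subcategory per quote (a simplification, since quotes in this study could carry more than one code). The function names `fleiss_kappa` and `landis_koch` and the toy ratings are hypothetical and are not the study’s data.

```python
from collections import Counter


def fleiss_kappa(ratings: list[list[str]]) -> float:
    """Fleiss' kappa for items that are each rated by the same number of raters.

    ratings[i] holds the category labels the raters assigned to item i
    (assumption: exactly one label per rater per item).
    """
    n_items = len(ratings)
    n_raters = len(ratings[0]) if ratings else 0
    if n_raters < 2 or any(len(r) != n_raters for r in ratings):
        raise ValueError("every item needs the same number (>= 2) of ratings")

    counts = [Counter(r) for r in ratings]  # n_ij: raters placing item i in category j

    # Observed agreement: for each item, the share of rater pairs that agree.
    per_item = [
        (sum(c * c for c in cnt.values()) - n_raters) / (n_raters * (n_raters - 1))
        for cnt in counts
    ]
    p_bar = sum(per_item) / n_items

    # Chance agreement: sum of squared overall category proportions p_j.
    totals: Counter = Counter()
    for cnt in counts:
        totals.update(cnt)
    total_ratings = n_items * n_raters
    p_e = sum((t / total_ratings) ** 2 for t in totals.values())
    if p_e == 1:
        raise ValueError("kappa is undefined when every rating uses one category")
    return (p_bar - p_e) / (1 - p_e)


def landis_koch(kappa: float) -> str:
    """Map a kappa value to the Landis and Koch (1977) agreement labels."""
    if kappa < 0:
        return "poor"
    for upper, label in [(0.20, "slight"), (0.40, "fair"),
                         (0.60, "moderate"), (0.80, "substantial")]:
        if kappa <= upper:
            return label
    return "almost perfect"


# Hypothetical toy ratings: 4 quotes, 3 raters each (not the study's data).
toy = [
    ["gain", "gain", "gain"],
    ["pressure", "pressure", "pressure"],
    ["unconscious bias", "unconscious bias", "unconscious bias"],
    ["gain", "gain", "pressure"],
]
k = fleiss_kappa(toy)
print(round(k, 3), landis_koch(k))
```

In this toy case the raters disagree on only one of four quotes, giving kappa = 35/47 (about 0.745), which falls in the “substantial” band; a value of 0.88, as reported above, falls in the “almost perfect” band.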

Finally, as a check on whether our vignettes were prompting scientists to identify causes they would not otherwise have considered salient, we analyzed the distribution of categories by the question that prompted each quotation. The vignettes did not add any new categories: every cause that a participant expressed in response to a vignette was also expressed in response to the open-ended questions about research ethics.
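The vignette check described above amounts to a set comparison. A minimal sketch, assuming each coded quote is stored as a (prompt, subcategory) pair in which the hypothetical label "open" marks the open-ended research-ethics questions and any other label names a vignette; `vignette_only_subcategories` is an illustrative name, not part of the study’s materials. An empty result corresponds to the finding reported above that the vignettes added no new categories.

```python
def vignette_only_subcategories(coded: list[tuple[str, str]]) -> set[str]:
    """Return subcategories that appear only in responses to vignettes.

    coded: (prompt, subcategory) pairs; prompt == "open" marks the
    open-ended questions (hypothetical labeling convention).
    """
    from_open = {sub for prompt, sub in coded if prompt == "open"}
    from_vignettes = {sub for prompt, sub in coded if prompt != "open"}
    return from_vignettes - from_open


# Hypothetical coded quotes, for illustration only.
example = [
    ("open", "gain"),
    ("open", "pressure"),
    ("p-hacking", "pressure"),
    ("authorship", "gain"),
]
print(vignette_only_subcategories(example))  # empty set: no vignette-only causes
```

A non-empty result would flag subcategories that surfaced only when a vignette prompted them.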

Results

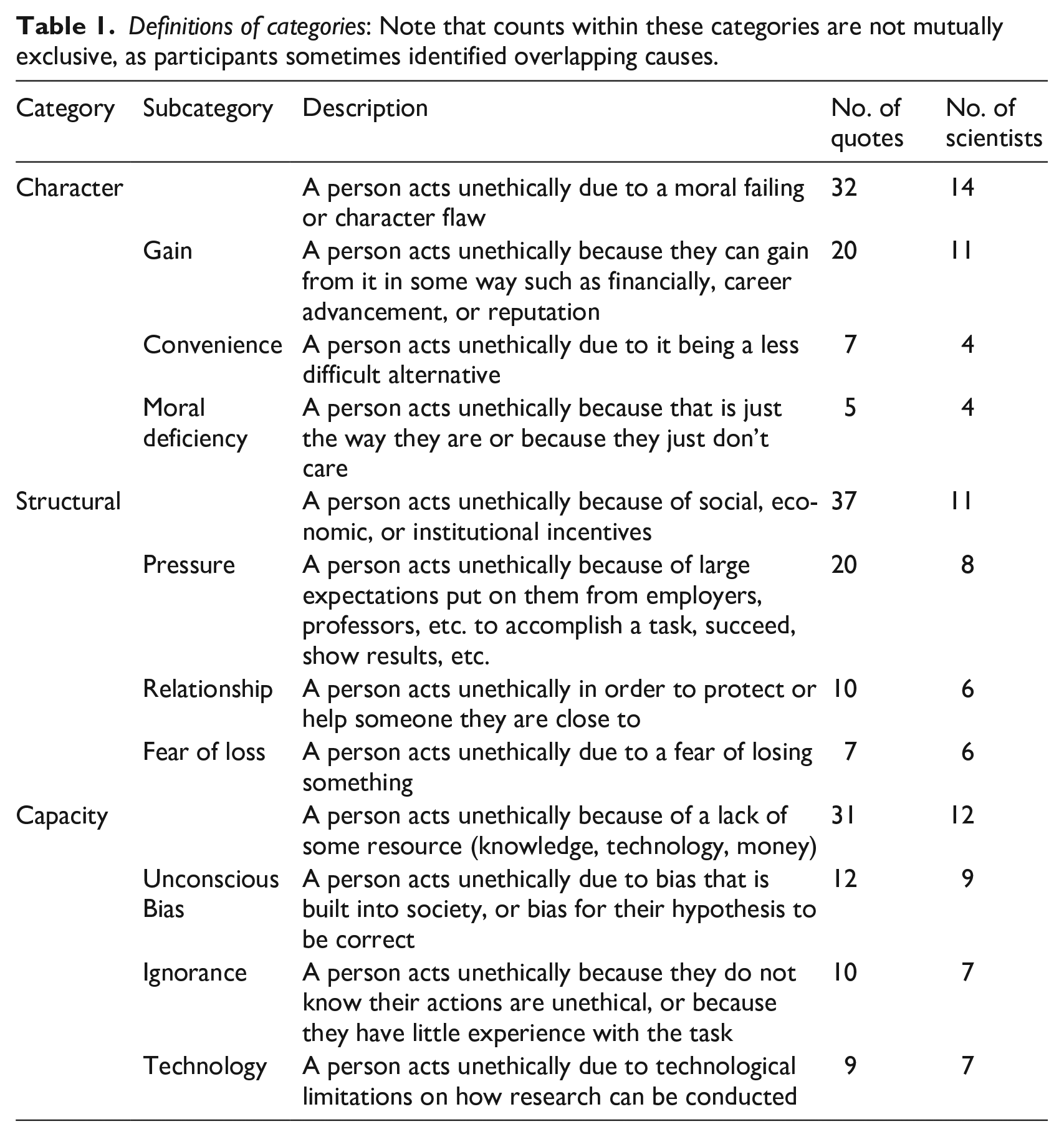

The phenomenographic analysis of these interviews produced nine subcategories of statements about the causes of research misconduct. These subcategories were then grouped into three larger categories based on where the causes of unethical behavior stem from: character, structural incentives, and capacity. This section defines each category and subcategory and provides illustrative quotes for each subcategory. Each quote is labeled (P#) with the number of the participant who said it. The categories are summarized in Table 1, along with the total number of statements in each category across all interviewees and the number of interviewees who made at least one statement in that category.
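Because a single quote can carry several subcategory codes, the two counts in Table 1 (statements and interviewees) are computed differently. The sketch below illustrates one way to tally them from a coding spreadsheet; it is a hypothetical reconstruction, not the authors’ procedure. It assumes each spreadsheet row has been reduced to a participant ID and a set of subcategory codes, and the `PARENT` mapping lists only the subcategories named in this section. The example rows pair participants with subcategories under which their quotes appear here (including the double-coded P7 quote), but they are a small illustrative subset and do not reproduce the counts in Table 1.

```python
from collections import defaultdict

# Subcategory -> broad category, for the subcategories named in this section only.
PARENT = {
    "gain": "Character", "convenience": "Character", "moral deficiency": "Character",
    "pressure": "Structural", "relationship": "Structural", "fear of loss": "Structural",
    "unconscious bias": "Capacity",
}


def tally(coded_quotes: list[tuple[str, set[str]]]):
    """Count statements and distinct interviewees per subcategory and category.

    coded_quotes: (participant_id, set_of_subcategory_codes) per quote.
    A quote with several codes counts once toward each of them.
    """
    statements = defaultdict(int)         # quotes per subcategory
    speakers = defaultdict(set)           # distinct participants per subcategory
    category_speakers = defaultdict(set)  # distinct participants per broad category
    for participant, codes in coded_quotes:
        for code in codes:
            statements[code] += 1
            speakers[code].add(participant)
            category_speakers[PARENT[code]].add(participant)
    return (dict(statements),
            {code: len(ids) for code, ids in speakers.items()},
            {cat: len(ids) for cat, ids in category_speakers.items()})


# Illustrative subset of coded quotes (not the full data set).
rows = [
    ("P7", {"moral deficiency", "pressure"}),
    ("P2", {"gain"}),
    ("P2", {"convenience"}),
    ("P13", {"gain"}),
    ("P3", {"pressure"}),
]
statements, interviewees, by_category = tally(rows)
print(statements["pressure"], interviewees["pressure"], by_category["Character"])
```

Here the P7 quote counts toward both “moral deficiency” and “pressure,” while P2, who contributes two Character quotes, is counted only once among the interviewees for that category.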

Definitions of categories: Note that counts within these categories are not mutually exclusive, as participants sometimes identified overlapping causes.

Character

All interviewees expressed the belief that scientists sometimes act unethically because of what can broadly be characterized as character flaws. These statements identify personal vices, such as greed, laziness, and amorality, as the cause of unethical decisions.

Gain

Most participants identified personal gain as a cause of research misconduct (Table 1). On this view, misconduct is motivated by a desire for more: whether that be money, promotions, or prestige.

(P2) “. . .they kind of do this as a job and money or prestige and that’s more important to them.”

(P13) “. . .I think, though, that the bigger reason is for personal benefit, personal or group or, but mainly personal benefit.”

(P5) “If you’re getting paid to, you know, to, to do something by somebody who has a vested financial stake in what comes out, I mean, it just, just seems wrong.”

Convenience

Some participants also suggested that a researcher might act unethically to save time, effort, or mental or physical exertion, even though doing so can produce false data or cause harm.

(P2) “Sometimes, it’s just easier to do things that are simpler, and you don’t really think it through.”

(P3) “. . .we wrote down all of our answers, before Quizlet was the thing, and sold them to the postdocs. . .because, you know, they were already working these 60 hour weeks, and then they’re saying like, ‘Take this ethical training that doesn’t help you in any way. It doesn’t actually solve any problems with ethics and in science.’”

Moral deficiency

Some participants also suggested that some people inherently lack morals and that this deficiency causes unethical behavior.

(P3) “. . .I don’t know anybody who started out with fundamental ethical problems, that is going to be changed by clicking on multiple choice.”

(P4) “Humans are flawed.”

Structural

Most interviewees also noted that misconduct can occur even if scientists generally want to act ethically, because of social or institutional pressures that make them feel that they have limited options. Perhaps paradoxically, research misconduct can sometimes be explained by a desire to protect careers, livelihoods, and personal relationships.

Pressure

Over half of the participants identified the pressure to publish in high-impact journals, as well as the generally stressful nature of research, as a possible cause of unethical behavior. This cause was referenced 20 times (Table 1), the same number of references as the “gain” subcategory received.

(P15) “In a lot of cases, I think it’s pride or trying to get, trying to get to a finish line that their information, or in this case of research, trying to get a paper out that their data doesn’t necessarily support, because as we all know, it’s publish or perish. . .”

(P3) “Because it’s an incredibly competitive world, and it’s an intense amount of pressure on everyone.”

Relationship

Some participants noted that a researcher’s relationships could lead them to act unethically, whether by protecting those relationships or by benefiting those close to them.

(P4) “Now I recognize that if [the research advisor in the case study] doesn’t get tenure and gets kicked out of the university, that’s also going to be bad for the students”

(P3) “. . .and like it’s difficult when I know that this person just moved to this location, and maybe they are having financial problems and getting this paper published would help them get a grant. . .”

(P11) “If I could find a way of advancing someone else’s career by stretching a little bit their contributions to the work and sticking them on as an author. I would do it. . . . it would be out of generosity and in an effort to help out.”

Fear of loss

Similarly, some participants said that fear could motivate unethical behavior. Faculty members noted that a career or a project’s funding can sometimes be on the line, and that this can be stressful enough to lead some people to make unethical decisions.

(P12) “. . .trying to protect something, whether that’s a person or an idea, or a position.”

(P5) “Why did you like submit you know, like 10 [. . .] grants with like completely like fabricated data? And it’s like, ‘You know, I’ve got my career dependent upon it.’”

Capacity

Finally, most participants identified some ethical failures that occur even when people are trying to act ethically and do not feel compelled by structural pressures. Unrecognized biases, actions people do not realize are unethical, and technological limitations can all contribute to such failures.

Unconscious bias

Many participants suggested that unconscious bias could lead to unethical behavior, whether the bias concerns people’s identities or what one expects a study to find.

(P11) “I like to tell people, and I like to tell myself, ‘Don’t say what you expect to get. Don’t do an experiment when you, that you say what you expect to get. This is incredibly hard to resist. Don’t say “Here is what I expect to find.”. You’ll find it! You’ll find it! You’ll manipulate the data in such a way that you’ll find it.’”

(P7) “I think the issues I’ve observed. . .have largely had to do with self-delusion, like they haven’t been objective enough about their own work. . .”

(P3) “I don’t think that people realize that they’re behaving unethically when they behave unethically. I mean, at least most of the time, I don’t think people are making a conscious decision of ‘Oh man, I am going to cheat the system, and I am going to benefit. Women don’t have a place here. I’m not gonna hire her’ or ‘What would happen if we had minorities here?’. . .because they don’t see the inherent bias in themselves.”

Ignorance

Half of the participants referenced a lack of understanding of ethics as a cause of unethical behavior.

(P13) “I think there are two reasons. One is ignorance: not knowing that you’re not being ethical. . .”

(P7) “. . .and maybe you think that that’s actually an accurate readout of [. . .], but you’re actually confounded by some, by some effort from a phenomenon that you don’t understand. I think this happens quite often.”

Technology

Half of the participants also discussed technological limits to acting fully ethically in research.

(P13) “Sometimes you have to deceive the subject, otherwise the test won’t be valid.”

(P4) “Well that’s kind of, kind of a complicated one, because I imagine if you actually provide the context to the patient, you are negating the ability to actually test the question at hand.”

Discussion

Our interviews with researchers in the basic sciences identified a range of considerations that scientists believe cause unethical behavior. Our study suggests that structural issues (e.g. publication pressure) and individual character flaws (e.g. pursuit of personal gain) are widely regarded as causes of research misconduct. Moreover, ignorance of RCR rules appears to be a less salient cause than positive or negative incentives such as personal gain and pressure to produce results (publications, grant funding, etc.).

Placing these results in context, our study suggests that scientists’ beliefs about the causes of research misconduct largely align with existing research on its actual causes (see also Buljan et al., 2018). Consider the importance our participants placed on individual character traits as a cause of unethical behavior. Most studies on the causes of unethical behavior cite individual factors (Belle and Cantarelli, 2017; Boes et al., 2017; DuBois et al., 2013; Kornfeld, 2012; Sovacool, 2008). Often, these are described as classical character flaws: “greed, egocentrism, self-justification” (Belle and Cantarelli, 2017), or “a self-centered personality” (DuBois et al., 2013). Sometimes, these individual factors are described as a kind of amorality or nihilism, that is, misconduct committed by “sociopaths” or “the ethically challenged” (Kornfeld, 2012). Regardless of their precise presentation, however, our participants’ identification of personal gain, convenience, and amoral thinking as causes of research misconduct aligns with a key set of causes identified in the literature.

Likewise, participants cited structural incentives most often, with “pressure” and “relationship” factors raised most frequently. Several previous studies have identified pressure to publish as one of the main causes of unethical behavior (Davis et al., 2007; DuBois et al., 2013; Kornfeld, 2012). Other studies emphasize the importance of creating research environments where it is easier to act ethically than not (Adams and Pimple, 2005). While the precise contours of such environments are still being studied, scientists are clearly aware of the ways in which workplace structure and academic incentives contribute to research misconduct.

It is notable, however, that scientists identified some causes that are not extensively discussed in previous research on unethical behavior. For instance, unconscious biases are rarely discussed as a direct cause of misconduct, though some have argued that bias reduction should be a component of RCR education (Bouter, 2015; DuBois et al., 2013). Yet unconscious bias, including confirmation bias toward hypotheses and implicit socio-cultural biases in structuring experiments, was one of the three categories our participants mentioned most. The wide range of cognitive biases makes this category difficult to interpret, but it does suggest that further research on how different cognitive biases contribute to misconduct might be fruitful.

Of course, our study is limited in scope, having sampled only a small group of scientists at a single university. Larger surveys of scientists have recently been conducted (Holtfreter et al., 2020), and these arguably provide more robust insights into scientists’ beliefs about the causes of research misconduct. Nonetheless, these prior surveys relied upon expert pre-specification of causes to develop survey items, and our phenomenographic approach adds value because it elicits rich feedback from participants and allows their own experiences to guide the formation of categories without prompting from survey items. Indeed, our study supports the unprompted salience of some of these categories (viz. pressure and unconscious biases), but also detects some that were not included in prior surveys (e.g. moral deficiency). Our participants did not identify a lack of deterrence or low odds of detection as causes, suggesting that some previously reported results on the high salience of “low odds of detection” may be partially due to survey response biases (Holtfreter et al., 2020: 2170). Furthermore, while prior studies have used a qualitative, “bottom-up” methodology to code perceived causes (Buljan et al., 2018), our approach extends this effort beyond the biomedical sciences. Further work, using more scalable methodologies to assess the frequency of the perceived causes we have identified, is therefore needed to establish the breadth and depth of these beliefs across different institutions, disciplines, and career stages (e.g. graduate students).

Our findings suggest several avenues for future studies on the promotion of responsible conduct of research. Recall that many scientists do not regard RCR training as relevant or effective. There are many possible explanations for this lack of motivation to engage seriously with RCR training, but we proposed that if we want scientists to take RCR training seriously, the training must both (i) address what scientists believe causes unethical behavior and (ii) align with what actually causes unethical behavior. If this conjecture is true, then our study offers three preliminary lessons for RCR training.

First, scientists are unlikely to believe that existing RCR training is relevant to their own behavior. Consider the dominance of character traits as an explanation for misconduct. Few people regard themselves as intrinsically bad or selfish, so if one believes that people who act unethically are intrinsically bad, there is little motivation to interrogate or scrutinize one’s own behavior. This is compatible with the finding that students are often overconfident in their ability to handle ethical dilemmas (Antes et al., 2010). If RCR training is presented as “don’t do these bad things” and scientists think that bad things are done by bad people, then we risk scientists distancing themselves from the idea that they could act unethically. Thus, if RCR training is to have an impact, it needs to tackle head-on the assumption that misconduct results from intrinsic character flaws.

Second, scientists may not regard current models of RCR education as effective at changing anyone’s behavior. In particular, the didactic, deficit model of RCR education (e.g. the CITI program) does not appear well equipped to address the structural and character-based causes that scientists believe are most relevant. Structural causes like pressure and personal relationships are not failures to know or understand what proper conduct would have been. Moreover, character traits are typically thought to be immutable or resistant to change. In this respect, if scientists think that character causes RCR failures, they are unlikely to think that RCR training limited to an online multiple-choice quiz will be effective. If scientists do not believe RCR education can change behavior, then they are unlikely to engage with (or encourage engagement with) RCR training. It is no surprise, then, that scientists appear not to take RCR training seriously, and perhaps even actively avoid its requirements. As one of our interviewees explained: (P3) “. . .we wrote down all of our answers, before Quizlet was the thing, and sold them to the postdocs. . .because, you know, they were already working these 60 hour weeks, and then they’re saying like, ‘Take this ethical training that doesn’t help you in any way. It doesn’t actually solve any problems with ethics and in science.’”

Finally, the emphasis placed on structural incentives suggests that scientists believe even a vastly improved version of RCR education will only go so far in eradicating misconduct. There are limits to what training alone can achieve. If scientists believe there are structural problems with the way research works, then they are likely (perhaps correctly) to be skeptical of training that is not accompanied by structural interventions that make the right choice the easy and obvious one. If scientists identify pressure to publish as a major cause of research misconduct, then they are likely to believe that even the best individual training will not remove the incentive to engage in fabrication or data manipulation. Similarly, if conflicts of interest arising from personal relationships (between colleagues, or between student and mentor) are salient to researchers, then they may be supportive of structural efforts to control and eliminate such conflicts. More positively, scientists’ recognition that structural incentives are a significant cause of misconduct means that they are likely to support efforts to lower the pressure experienced by working scientists.

Ultimately, if RCR training is to be effective, we argue that scientists must see ethics education as being relevant to themselves and their everyday practices. If ethics training is designed around what they believe to be the causes of unethical behavior, we might find higher engagement, better understanding and less misconduct amongst those subject to RCR training requirements.

Conclusion

We interviewed scientists at a university in the United States and analyzed those interviews phenomenographically to determine what they believe causes unethical behavior in research. We found that their beliefs about the causes of unethical behavior match what other studies have found to be the actual causes, with character traits and pressure being the top two causes. Scientists believe unethical research behavior has a variety of possible causes: gain, convenience, moral deficiency, pressure, relationship, fear of loss, unconscious bias, ignorance, and technology. Gain, pressure, and unconscious bias were the reasons mentioned most widely and referenced most often.

Footnotes

Funding

This material is based upon work supported by the National Science Foundation under Grant No. 1835366. All articles in Research Ethics are published as open access. There are no submission charges and no Article Processing Charges as these are fully funded by institutions through Knowledge Unlatched, resulting in no direct charge to authors.