Abstract

One of the essential insights from psychological research is that people’s information processing is often biased. By now, a number of different biases have been identified and empirically demonstrated. Unfortunately, however, these biases have often been examined in separate lines of research, thereby precluding the recognition of shared principles. Here we argue that several—so far mostly unrelated—biases (e.g., bias blind spot, hostile media bias, egocentric/ethnocentric bias, outcome bias) can be traced back to the combination of a fundamental prior belief and humans’ tendency toward belief-consistent information processing. What varies between different biases is essentially the specific belief that guides information processing. More importantly, we propose that different biases even share the same underlying belief and differ only in the specific outcome of information processing that is assessed (i.e., the dependent variable), thus tapping into different manifestations of the same latent information processing. In other words, we propose for discussion a model that suffices to explain several different biases. We thereby suggest a more parsimonious approach compared with current theoretical explanations of these biases. We also generate novel hypotheses that follow directly from the integrative nature of our perspective.

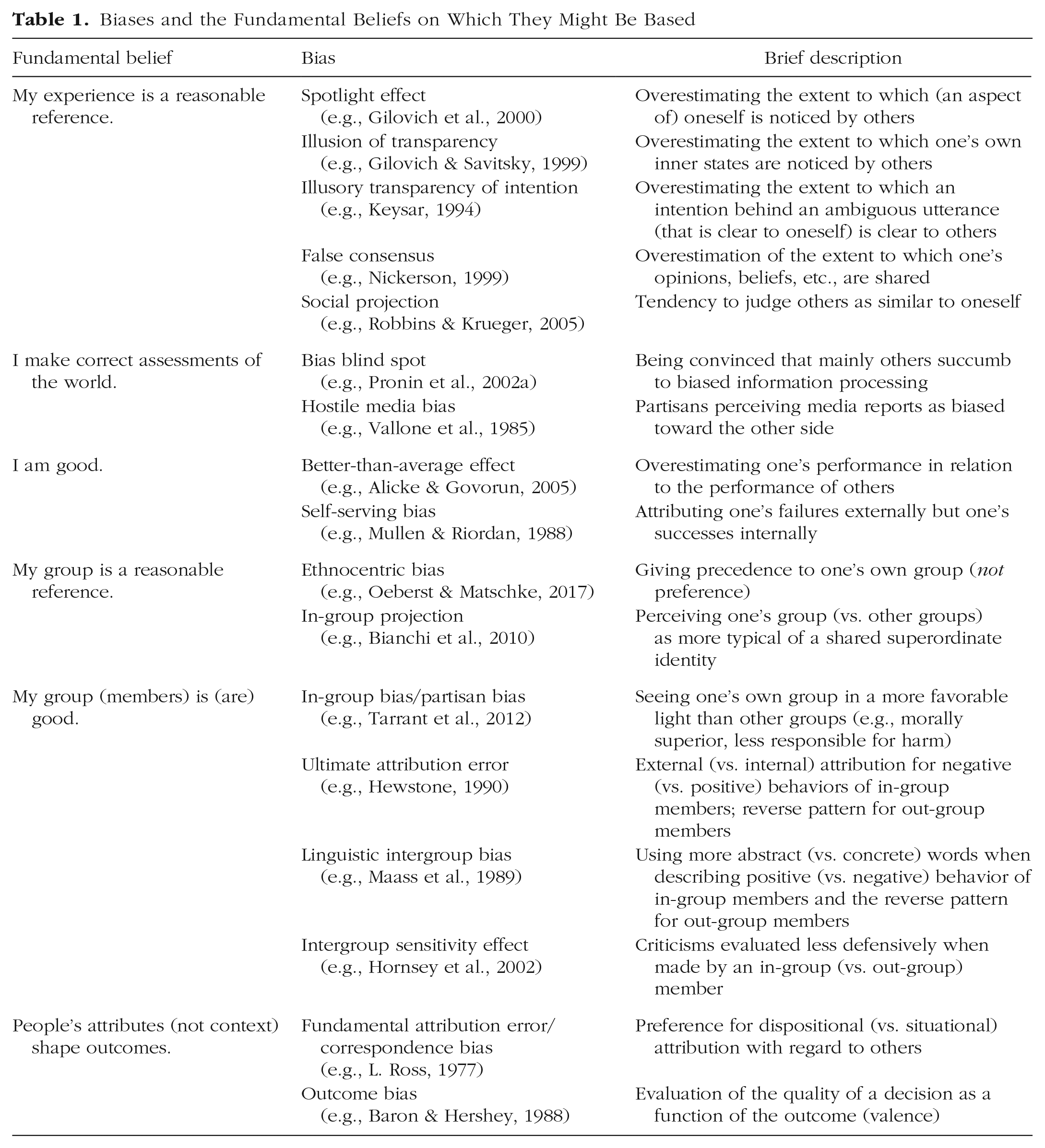

Thought creates the world and then says, “I didn’t do it.”

One of the essential insights from psychological research is that human information processing is often biased. For instance, people overestimate the extent to which their opinions and beliefs are shared (e.g., Nickerson, 1999), and they apply differential standards in the evaluation of behavior depending on whether it is about a member of their own or another group (e.g., Hewstone et al., 2002), to name just two examples. For many such biases there are prolific strands of research, and for the most part these strands do not refer to one another. Because such parallel research endeavors may prevent us from detecting common principles, the current article seeks to bring a set of biases together by suggesting that they might actually share the same “recipe.” Specifically, we suggest that they are based on prior beliefs plus belief-consistent information processing. Put differently, we raise the question of whether a finite number of different biases, at the process level, represent variants of “confirmation bias,” or people’s tendency to process information in a way that is consistent with their prior beliefs (Nickerson, 1998). Even more importantly, we argue that different biases could be traced back to the same underlying fundamental beliefs and outline why at least some of these fundamental beliefs are likely held widely among humans. In other words, we propose for discussion a unifying framework that might provide a more parsimonious account of the previously researched biases presented in Table 1. We also argue that research on the respective biases should elaborate on whether and how those biases truly exceed confirmation bias. The proposed framework further implies several novel testable hypotheses, thus providing generative potential beyond its integrative function.

Biases and the Fundamental Beliefs on Which They Might Be Based

We begin by outlining the foundations of our reasoning. First, we define “beliefs” and provide evidence for their ubiquity. Second, we outline the many facets of belief-consistent information processing and elaborate on its pervasiveness. In the third part of the article, we discuss a nonexhaustive collection of hitherto independently treated biases (e.g., spotlight effect, false consensus effect, bias blind spot, hostile media effect) and how they could be traced back to one of two fundamental beliefs plus belief-consistent information processing. We then broaden the scope of our focus and discuss several other phenomena to which the same reasoning might apply. Finally, we provide an integrative discussion of this framework, its broader applicability, and potential limitations.

The Ubiquity of Beliefs and Belief-Consistent Information Processing

Because we claim a set of biases to be essentially based on a prior belief plus belief-consistent information processing, we first elaborate on these two parts of the recipe. First, we outline how we conceptualize beliefs and argue that they are an indispensable part of human cognition. Second, we introduce the many routes by which belief-consistent information processing may unfold and present research speaking to its pervasiveness.

Beliefs

We consider beliefs as hypotheses about some aspect of the world that come along with the notion of accuracy—either because people examine beliefs’ truth status or because they already have an opinion about the accuracy of the beliefs. Beliefs in the philosophical sense (i.e., “what we take to be the case or regard as true”; Schwitzgebel, 2019) fall into this category (e.g., “This was the biggest inauguration audience ever”; “Homeopathy is effective”; “Rising temperatures are human-made”), as does knowledge, a special case of belief (i.e., a justified true belief; Ichikawa & Steup, 2018).

Following from this conceptualization, there are certain characteristics that are relevant for the current purpose. First, beliefs may or may not be actually true. Second, beliefs may result from any amount of deliberate processing or reflection. Third, beliefs may be held with any amount of certainty. Fourth, beliefs may be easily testable (e.g., “Canada is larger than the United States”), testable after some specifications (e.g., “I am rational”), partly testable (i.e., not falsifiable; e.g., “Traumatic experiences are repressed”), or not testable at all (e.g., “Freedom is more important than security”). It is irrelevant for the current purpose whether a belief is false, entirely lacks foundation, or is untestable. All that matters is that the person holding this belief either has an opinion about its truth status or examines its truth status.

The ubiquity of beliefs

There is an abundance of research suggesting that the human cognitive system is tuned to generating beliefs about the world: An incredible plethora of psychological research on schemata, scripts, stereotypes, attitudes (even about unknown entities; Lord & Taylor, 2009), top-down processing, but also learned helplessness and a multitude of other phenomena demonstrates that we readily form beliefs by generalizing across objects and situations (e.g., W. F. Brewer & Nakamura, 1984; Brosch et al., 2010; J. S. Bruner & Potter, 1964; Darley & Fazio, 1980; C. D. Gilbert & Li, 2013; Greenwald & Banaji, 1995; Hilton & von Hippel, 1996; Kveraga et al., 2007; Maier & Seligman, 1976; Mervis & Rosch, 1981; Roese & Sherman, 2007). Furthermore, people (as well as some animals) generate beliefs about the world even when it is inappropriate because there is actually no systematic pattern that would allow for expectations (e.g., A. Bruner & Revusky, 1961; Fiedler et al., 2009; Hartley, 1946; Keinan, 2002; Langer, 1975; Riedl, 1981; Skinner, 1948; Weber et al., 2001; Whitson & Galinsky, 2008).

Explanations for such superstitions, but also for a variety of untestable or unwarranted beliefs, repeatedly refer to the benefits arising from even illusory beliefs. Believing in some kind of higher force (e.g., God), for instance, may provide explanations for relevant phenomena in the world (e.g., thunder, pervasive suffering in the world) and may thereby increase perceptions of predictability, control, self-efficacy, and even justice, all of which have been shown to be beneficial for individuals, even if they are illusory (e.g., Alloy & Abramson, 1979; Alloy & Clements, 1992; Day & Maltby, 2003; Green & Elliott, 2010; Kay, Gaucher, et al., 2010; Kay, Moscovitch, & Laurin, 2010; Langer, 1975; Taylor & Brown, 1988, 1994; Taylor et al., 2000; Witter et al., 1985). Religious ideas, in particular, have furthermore fostered communion, orderly coexistence, and even cooperation among individuals, benefiting both individuals as well as entire groups (e.g., Bloom, 2012; Dow, 2006; Graham & Haidt, 2010; Johnson & Fowler, 2011; Koenig et al., 1999; MacIntyre, 2004; Peoples & Marlowe, 2012). Indeed, there are numerous unwarranted—or even blatantly false—beliefs that either have no (immediate and thus likely detectable) detrimental consequences or even lead to positive consequences (for placebo effects, see Kaptchuk et al., 2010; Kennedy & Taddonio, 1976; Price et al., 2008; for magical thinking, see Subbotsky, 2004; for belief in a just world, see Dalbert, 2009; Furnham, 2003), which fosters the survival of such beliefs.

Beyond demonstrations of people’s readiness to form beliefs, research has repeatedly affirmed people’s tendency to be intolerant of ambiguity and uncertainty and found a preference for “cognitive closure” (i.e., a made-up mind) instead (Dijksterhuis et al., 1996; Furnham & Marks, 2013; Furnham & Ribchester, 1995; Kruglanski & Freund, 1983; Ladouceur et al., 2000; Webster & Kruglanski, 1997). And last but not least, D. T. Gilbert (1991) made a strong case for the Spinozan view that comprehending something is so tightly connected to believing it that beliefs may be unaccepted only after deliberate reflection, and yet may still affect our behavior (Risen, 2016). In other words, beliefs emerge the very moment we understand something about the world. Children understand (and thus believe) something takes place long before they have developed the cognitive capabilities that are needed to deny propositions (Pea, 1980). After all, children are continuously and thoroughly exposed to an environment (e.g., experience, language, culture, social context) that provides an incredibly rich source of beliefs that are transmitted subtly as well as blatantly and thereby effectively shapes humans’ worldviews and beliefs from the very beginning. Taken together, the research has indicated that people readily generate beliefs about the world (D. T. Gilbert, 1991; see also Popper, 1963). Consequently, beliefs are an indispensable part of human cognition.

Belief-consistent information processing—facets and ubiquity

To date, researchers have accumulated much evidence for the notion that beliefs serve as a starting point of how people perceive the world and process information about it. For instance, individuals tend to scan the environment for features more likely under the hypothesis (i.e., belief) than under the alternative (“positive testing”; Zuckerman et al., 1995). People also choose belief-consistent information over belief-inconsistent information (“selective exposure” or “congeniality bias”; for a meta-analysis, see Hart et al., 2009). They tend to erroneously perceive new information as confirming their own prior beliefs (“biased assimilation”; for an overview, see Lord & Taylor, 2009; “evaluation bias”; e.g., Sassenberg et al., 2014) and to discredit information that is inconsistent with prior beliefs (“motivated skepticism”; Ditto & Lopez, 1992; Taber & Lodge, 2006; “disconfirmation bias”; Edwards & Smith, 1996; “partisan bias”; Ditto et al., 2019). At the same time, people tend to stick to their beliefs despite contrary evidence (“belief perseverance”; C. A. Anderson et al., 1980; C. A. Anderson & Lindsay, 1998; Davies, 1997; Jelalian & Miller, 1984), which, in turn, may be explained and complemented by other lines of research. “Subtyping,” for instance, allows for holding on to a belief by categorizing belief-inconsistent information into an extra category (e.g., “exceptions”; for an overview, see Richards & Hewstone, 2001). Likewise, the application of differential evaluation criteria to belief-consistent and belief-inconsistent information systematically fosters “belief perseverance” (e.g., Sanbonmatsu et al., 1998; Trope & Liberman, 1996; see also Koval et al., 2012; Noor et al., 2019; Tarrant et al., 2012). In some cases, people hold even stronger beliefs after facing disconfirming evidence (“belief-disconfirmation effect”; Bateson, 1975; see also “cognitive dissonance theory”; Festinger, 1957; Festinger et al., 1955/2011).

All of the phenomena mentioned above are expressions of the principle of belief-consistent information processing (see also Klayman, 1995). That is, although specifics in the task, the stage of information processing, and the dependent measure may vary, all of these phenomena demonstrate the systematic tendency toward belief-consistent information processing. Put differently, belief-consistent information processing emerges at all stages of information processing such as attention (e.g., Rajsic et al., 2015), perception (e.g., Cohen, 1981), evaluation of information (e.g., Ask & Granhag, 2007; Lord et al., 1979; Richards & Hewstone, 2001; Taber & Lodge, 2006), reconstruction of information (e.g., Allport & Postman, 1947; Bartlett, 1932; Kleider et al., 2008; M. Ross & Sicoly, 1979; Sahdra & Ross, 2007; Snyder & Uranowitz, 1978), and the search for new information (e.g., Hill et al., 2008; Kunda, 1987; Liberman & Chaiken, 1992; Pyszczynski et al., 1985; Wyer & Frey, 1983), including one’s own elicitation of what is searched for (“self-fulfilling prophecy”; Jussim, 1986; Merton, 1948; Rosenthal & Jacobson, 1968; Rosenthal & Rubin, 1978; Sheldrake, 1998; Snyder & Swann, 1978; Watzlawick, 1981). Moreover, many stages (e.g., evaluation) allow for applying various strategies (e.g., ignoring, underweighting, discrediting, reframing). Consequently, individuals have a great number of options at their disposal (think of the combinations), so that the degrees of freedom in their processing of information allow for countless possibilities for belief-consistent information processing, which may explain how belief-consistent conclusions arise even under the least likely circumstances (e.g., Festinger et al., 1955/2011).

In sum, belief-consistent information processing seems to be a fundamental principle in human information processing that is not only ubiquitous (e.g., Gawronski & Strack, 2012; Nickerson, 1998; see also Abelson et al., 1968; Feldman, 1966) but also a conditio humana. This notion is also reflected in the fact that motivation is not a necessary prerequisite for engaging in belief-consistent information processing: Several studies have shown that belief-consistent information processing arises for hypotheses for which people have no stakes in the specific outcome and thus no interest in particular conclusions (i.e., in the absence of motivated reasoning; Kunda, 1990; e.g., Crocker, 1982; Doherty et al., 1979; Evans, 1972; Klayman & Ha, 1987, 1989; Mynatt et al., 1978; Sanbonmatsu et al., 1998; Skov & Sherman, 1986; Snyder & Swann, 1978; Snyder & Uranowitz, 1978; Wason, 1960). In addition, research under the label “contextual bias” can be classified as unmotivated confirmation bias because it demonstrates how contextual features (e.g., prior information about the credibility of a person) may bias information processing (e.g., the evaluation of the quality of a statement from that person; e.g., Bogaard et al., 2014; see also Dror et al., 2006; Elaad et al., 1994; Kellaris et al., 1996; Risinger et al., 2002). In other words, the same mechanisms apply, regardless of people’s interest in the outcome (Trope & Liberman, 1996). Hence, belief-consistent information processing takes place even when people are not motivated to confirm their belief. Furthermore, belief-consistent information processing has been shown even when people are motivated to be unbiased (e.g., Lord et al., 1984), or at least want to appear unbiased. This is frequently the case in the lab, where participants are motivated to hide their beliefs (for an overview of subtle discrimination, see Bertrand et al., 2005). But it is even more true in scientific research (Greenwald et al., 1986), forensic investigations (Dror et al., 2006; Murrie et al., 2013; Rassin et al., 2010), and in the courtroom (or legal decision-making, more generally), in which an unbiased judgment is the ultimate goal that is rarely reached (Devine et al., 2001; Hagan & Parker, 1985; Mustard, 2001; Pruitt & Wilson, 1983; Sommers & Ellsworth, 2001; Steblay et al., 1999; for overviews, see Faigman et al., 2012; Kang & Lane, 2010). Taken together, abundant research demonstrates that belief-consistent information processing is a pervasive phenomenon for which motivation is not a necessary ingredient.

Biases Reexplained as Confirmation Bias

Having pointed to the ubiquity of beliefs and belief-consistent information processing, let us now return to the nonexhaustive list of biases in Table 1, for which we propose to entertain the notion that they may arise from shared beliefs plus belief-consistent information processing. As can be seen at first glance, we bring together biases that have been investigated in separate lines of research (e.g., bias blind spot, hostile media bias). We argue that all of the biases mentioned in Table 1 could, in principle, be understood as a result of belief-consistent information processing because they might all be based on a fundamental underlying belief. Furthermore, in specifying the fundamental beliefs, we suggest that several biases actually share the same belief (e.g., “I make correct assessments”; see Table 1), thereby representing only variations in which the underlying belief is expressed.

To be sure, the current approach does not preclude contributions from other factors to the biases at hand. We merely raise the question of whether the parsimonious combination of belief plus belief-consistent information processing alone might provide an explanation that suffices to predict the existence of the biases listed in Table 1. That is, other factors could contribute to, attenuate, or exacerbate these biases, but our recipe alone would already allow their prediction. Let us now see how some of the biases mentioned in Table 1 could be traced back to the (same) fundamental beliefs and thereby be explained by them once the principle of belief-consistent information processing is acknowledged. We do so by spelling this out for the biases based on two fundamental beliefs (“My experience is a reasonable reference” and “I make correct assessments”).

“My experience is a reasonable reference”

A number of biases seem to imply that people take both their own (current) phenomenology and themselves as starting points for information processing. That is, even when a judgment or task is about another person, people start from their own lived experience and project it, at least partly, onto others as well (e.g., Epley et al., 2004). For instance, research on phenomena falling under the umbrella of the “curse of knowledge” or “epistemic egocentrism” speaks to this issue because people are bad at taking a perspective that is more ignorant than their own (Birch & Bloom, 2004; for an overview, see Royzman et al., 2003). People overestimate, for instance, the extent to which their appearance and actions are noticed by others (“spotlight effect”; e.g., Gilovich et al., 2000), the extent to which their inner states can be perceived by others (“illusion of transparency”; e.g., Gilovich et al., 1998; Gilovich & Savitsky, 1999), and the extent to which others will grasp the intention behind an ambiguous utterance when its meaning is clear to the evaluators themselves (“illusory transparency of intention”; Keysar, 1994; Keysar et al., 1998). Likewise, people overestimate similarities between themselves and others (“self-anchoring” and “social projection”; Bianchi et al., 2009; Otten, 2004; Otten & Epstude, 2006; Otten & Wentura, 2001; A. R. Todd & Burgmer, 2013; van Veelen et al., 2011; for a meta-analysis, see Robbins & Krueger, 2005), as well as the extent to which others share their own perspective (“false consensus effect”; Nickerson, 1999; for a meta-analysis, see Mullen et al., 1985).

Taken together, a number of biases seem to result from people taking—by default—their own phenomenology as a reference in information processing (see also Nickerson, 1999; Royzman et al., 2003). Put differently, people seem to—implicitly or explicitly—regard their own experience as a reasonable starting point when it comes to judgments about others and fail to adjust sufficiently. Instead of disregarding—or even discrediting—their own experience as an appropriate starting point, people rely on it. When judging, for instance, the extent to which others may perceive their own inner state, people act on the belief that their own experience of their inner state (e.g., their nervousness) is a reasonable starting point, which, in turn, guides their information processing. They might start out with a specific and directed question (e.g., “To what extent do others notice my nervousness?”) instead of an open and global question (e.g., “How do others see me?”). People might also focus on information that is consistent with their own phenomenology (e.g., their increased speech rate) as potential cues that others could draw on. Finally, people may ignore or underweight information that is inconsistent with their phenomenology (e.g., their limbs being completely calm) or discredit such observations as potentially valid cues for others. In the same vein, people assume that others draw on the same information (e.g., their increased speech rate) and that they draw the same conclusions from it (i.e., their nervousness). All of this—as well as the empirical evidence for the biases outlined within this section—suggests that people do take their own experience as a reference when processing information to arrive at judgments regarding others and how others see them.

Now let us entertain the notion that people do, by default, regard their own experience as a reasonable reference for their judgments about others. Would biases such as the spotlight effect, the illusion of transparency (of intention), false consensus, and social projection not (by default) follow from the default belief when taking the general human tendency of belief-consistent information processing into account? From our point of view, the answer is an emphatic “yes.” If people judge the extent to which others notice (something about) them or their inner states or intentions, hold the fundamental belief that their own experience is a reasonable reference, and engage in belief-consistent information processing, we should—by default and on average—observe an overestimation of the extent to which an aspect of oneself or one’s own inner states are noticed by others as suggested by the spotlight effect and the illusion of transparency (of intention). Likewise, people should overestimate the extent to which others are similar to themselves (social projection) and share their own opinions and beliefs (false consensus).

This reasoning might be reminiscent of anchoring-and-(insufficient)-adjustment accounts (Tversky & Kahneman, 1974), and there are certainly parallels, so that one could speak of a mere reformulation. A crucial difference is, however, that we explicate a fundamental belief that explains why people anchor on their own phenomenology when making judgments about others: They (implicitly or explicitly) believe that their own experience is a reasonable reference, even for others. Yet another advantage of our proposed framework is that it acknowledges even more parallels to other biases and provides a more parsimonious account. After all, we argue that these biases, at their core, could be understood as a variation of confirmation bias (based on a shared belief). That is, we propose an explanation that suffices to predict the existence of these biases while clearly acknowledging that other factors may and do contribute to, attenuate, or exacerbate these biases.

“I make correct assessments”

Let us turn our attention to a second group of biases and entertain the notion that they stem from the default belief of making correct assessments, which people hold for themselves but not for others. As we argue below, biases such as the bias blind spot and the hostile media bias are almost logical consequences of people’s simple assumption that their assessments are correct.

Believing that one makes correct assessments also implies believing that one does not fall prey to biases. Precisely such a meta-bias of expecting others to be more prone (compared to oneself) to such biases has been subsumed under the phenomenon of the bias blind spot. The bias blind spot describes humans’ tendency to “see the existence and operation of cognitive and motivational biases much more in others than in themselves” (Pronin et al., 2002a, p. 369; for reviews, see Pronin, 2007; Pronin et al., 2004). If people start out from the default assumption that they make correct assessments, as suggested by our framework, one part of the bias blind spot is explained right away: the conviction that one’s own assessments are unbiased (see also Frantz, 2006). After all, trust in one’s assessments may effectively prevent the identification of one’s own biases and errors, either by failing to see the necessity to rethink judgments or by failing to identify biases therein. The other part, however, is implied in the fact that people do not hold the same belief for others (for a somewhat similar notion, see Pronin et al., 2004, 2006). Importantly, we propose that people do not generate the same fundamental beliefs about others, particularly not about a broad or vague group of others that is usually assessed in studies (e.g., the “average American” or the “average fellow classmate”; Pronin et al., 2002a, 2006; see also the section on fundamental beliefs and motivation). The logical consequence of people’s believing in the accuracy of their own assessments while simultaneously not holding the same conviction in the accuracy of others’ assessments is that people expect others to succumb to biases more often than they themselves do (e.g., Kruger & Gilovich, 1999; Miller & Ratner, 1998; van Boven et al., 1999). Another consequence is to assume errors on the part of others if discrepancies between their and one’s own judgments are observed (Pronin, 2007; Pronin et al., 2004; Ross et al., 2004, as cited in Pronin et al., 2004).

The hostile media bias describes the phenomenon by which, for instance, partisans of conflicting groups view the same media reports about an intergroup conflict as biased against their own side (Vallone et al., 1985; see also Christen et al., 2002; Dalton et al., 1998; Matheson & Dursun, 2001; Richardson et al., 2008; for a meta-analysis, see Hansen & Kim, 2011). The reasoning of our framework here is similar to the one applied to the bias blind spot (see also Lord & Taylor, 2009): If people assume that their own assessments are correct and that, by nature of being correct, their views are also unbiased, it is almost necessary to assume that others (people/media reports) are biased if their views differ. People starting from the belief that they make correct assessments process the available information (e.g., a discrepancy between their own view and media reports) in a way that is consistent with this basic belief (e.g., by attributing the discrepancy to a bias in others, not themselves). In addition, in line with our argument of rather general mechanisms being at play, the hostile media effect was found in representative samples (e.g., Gunther & Christen, 2002) and even for people who were less connected with the issue at hand (Hansen & Kim, 2011), that is, who were not strongly involved with the issue, which Vallone et al. (1985) initially regarded as a prerequisite.

To summarize, we argue that the bias blind spot and the hostile media bias can essentially be explained by one fundamental underlying belief: People generally trust their assessments but do not hold the same trust for others’ assessments. As a consequence, they are overconfident and do not question their own judgment as systematically as they question the judgment of others (e.g., when confronted with a different view). Hence, we suggest that these biases are based on the same recipe (belief plus belief-consistent information processing). Even more, we suggest that these biases are based on the same fundamental belief: people’s belief that they themselves make correct assessments. By doing so, we not only provide a more parsimonious account for different biases but also bring together biases that have heretofore been treated as unrelated because they have been researched in very different areas within psychology (e.g., whereas hostile media bias is mainly addressed in the intergroup context, bias blind spot is not).

Further Clarifications and Distinctions

So far, we have attempted to show that the biases listed in Table 1 can be understood as a combination of beliefs plus belief-consistent information processing. This is not to say that no other factors or mechanisms are at play but rather to put forth the idea that belief plus belief-consistent information processing suffices as an explanation (with the corollary that the fundamental beliefs are not held for other people as well). In the next section, we add some clarifications to our approach regarding the role of “innocent” processes, motivation, and deliberation, which also differentiate our approach from others. We also contrast our reasoning with a Bayesian perspective.

The role of innocent processes

We have repeatedly emphasized the parsimony of our account, but several explanations have been put forward that are even more parsimonious in the sense that they outline how biases can emerge from innocent processes without any prior beliefs that lead participants to draw biased conclusions (e.g., Alves et al., 2018; Chapman, 1967; Fiedler, 2000; Hamilton & Gifford, 1976; Meiser & Hewstone, 2006). Instead, characteristics of the environment (e.g., information ecologies) and basic principles of information processing can lead to profoundly biased conclusions, according to these authors (e.g., evaluating members of novel groups or minorities more negatively; Alves et al., 2018). Within these frameworks, individuals’ only contribution to biased conclusions lies in their lack of metacognitive abilities that would enable them to detect (and control for) such biases (e.g., Fiedler, 2000; Fiedler et al., 2018). Obviously, a crucial difference between these accounts and our current perspective is that they start out from the notion of perfectly open-minded individuals who do not hold any relevant beliefs (i.e., a tabula rasa), whereas our main argument rests on the assumption that many biases actually result from already having beliefs. This difference already makes clear that the two perspectives do not necessarily compete with one another but could, in principle, both contribute to biases (at different stages). Nevertheless, we are very skeptical about the prevalence of open-mindedness in the sense of not holding any prior belief (see also Fiedler, 2000, p. 662).

As outlined above, we regard beliefs as an indispensable part of human cognition because people are extremely ready to generate beliefs about the world. Therefore, we are skeptical that a truly open mind (in the sense of having literally no prior beliefs or convictions) is a prevalent case. Nevertheless, innocent circumstances (such as the information ecology) might explain a possible origin of (biased) expectations and beliefs where there were none before (see also Nisbett & Ross, 1980; Sanbonmatsu et al., 1998).

The role of motivation

One recurrent theme in the explanations of several biases is the notion of motivation (e.g., Kruglanski et al., 2020). The bias blind spot, for instance, is sometimes interpreted as an expression of individuals’ motives for superiority (see Pronin, 2007). More generally, for biases based on the beliefs “I am good” and “My group is good,” a number of explanations are based on presumed motives for a positive self-concept or even for self-enhancement (e.g., J. D. Brown, 1986; Campbell & Sedikides, 1999; Hoorens, 1993; John & Robins, 1994; Kwan et al., 2008; Sedikides & Alicke, 2012; Sedikides et al., 2005; Shepperd et al., 2008; Tajfel & Turner, 1986). Following from our account, however, such motivational antecedents are not necessary to explain biases. To be clear, we do not claim that motivation is per se irrelevant. Rather, we can well imagine that motivation may amplify each and every bias. We argue here, however, that motivation is not a necessary precondition to arrive at any of the biases listed.

Fundamental beliefs and motivation

Are people motivated to make correct assessments of the world? Probably yes. Do people need a motive to arrive at the belief that they make correct assessments? Certainly not. Instead, people might simply overgeneralize from their everyday experiences (Riedl, 1981). People almost always correctly expect darkness to follow the light, the downfall after a jump, thirst and hunger after some period without water and food, fatigue subsequent to an extended period of intensive activity, the keys where they left them, electricity from the sockets, a hangover after a lot of alcohol, newspapers to change contents each day, doctors trying to make things better, and salary being paid regularly—just to mention a tiny fraction of the abundance of correct assessments in everyday life (D. T. Gilbert, 1991).

Not all assessments or beliefs about the world are correct, however. Crucially, various mechanisms preclude the realization of making incorrect assessments. First, we have already pointed out that some beliefs may be untestable or unfalsifiable—which has its own psychological advantages as one cannot be proven wrong (Friesen et al., 2015). Second, people usually do not attempt to falsify their beliefs, even if that would be possible and desirable (Popper, 1963). Instead, they engage in the many ways of belief-consistent information processing as we have outlined above. This, of course, also contributes to the existence and maintenance of beliefs—and first and foremost to the belief that one makes correct assessments (see also Swann & Buhrmester, 2012). After all, processing information in a belief-consistent way and “confirming” one’s beliefs entails the experience of making correct assessments. Third, even if we set aside the human tendency toward belief-consistent information processing, it is often not possible for people to realize their incorrect assessments. Be it because they lack direct access to the processes that influence their perceptions and evaluations (Nisbett & Wilson, 1977; Wilson & Brekke, 1994; Wilson et al., 2002; see also Frantz, 2006; Pronin et al., 2004, 2006) or because they lack a reference for comparison that would be necessary to identify biases. In the real world, for instance, people often have no access to others’ perceptions and thoughts, which generally precludes the recognition of overestimations (e.g., of the extent to which one’s own inner states are noticed by others, i.e., illusion of transparency). Similarly, once a society has decided to hold a person captive because of the potential danger that emanates from the person, there is no chance to realize that the person was not dangerous.
Likewise, people cannot systematically trace the effects of a placebo to their own expectations (e.g., Kennedy & Taddonio, 1976; Price et al., 2008), just to mention a few instances. In other words, people cannot exert the systematic examination that characterizes scientific scrutiny (which, however, also does not preclude being biased, e.g., Greenwald et al., 1986) and thereby do not detect their incorrect assessments. Taken together, due to a number of reasons people overwhelmingly perceive themselves as making correct assessments. Be it because they are correct, or because they are simply not corrected. Such an overgeneralization to a fundamental belief of making correct assessments could, thus, actually be regarded as a reasonable extrapolation. Consequently, no motivation is needed to arrive at this fundamental belief. Rather, we expect healthy individuals to be—by default—naive realists (see also Griffin & Ross, 1991; Ichheiser, 1949; Pronin et al., 2002b; L. Ross & Ward, 1996). In other words, we propose people generally start from the default assumption that their assessments are accurate.

Since people do not have immediate access to the experiences and phenomenology of others, however, they do not hold the same default belief for other people. This is crucial with regard to biases. After all, if people did not only believe that they make correct assessments of the world, but at the same time and with the same verve also believed that other people make correct assessments of the world, we would not expect biases such as the “bias blind spot” to occur. The fact that people do not hold such a conviction for others, however, does not necessarily involve motivation either—the belief may be lacking for the simple reason that people do not have immediate access to others’ experiences. Consequently, motivation is not necessary to arrive at self-other differences in this regard. Let us illustrate this with regard to ingroup bias: If people merely held the belief that their own group is good (see also Cvencek et al., 2012; Mullen et al., 1992), but did not hold the same belief for other groups, ingroup favoritism could result—without assuming people to believe that their group was better than other groups. Indeed, a lot of research suggests that people have an automatic ingroup favoritism, but not a parallel automatic outgroup derogation (see Fiske, 1998, for an overview).

As we postulate that motivation is not necessary for the fundamental beliefs to arise, we also propose that motivation is not a necessary ingredient for self- or group-favoring outcomes. Crucially, this is at odds with the most commonly accepted theoretical explanation for in-group bias—the social-identity approach (Tajfel & Turner, 1979; Turner et al., 1987), which posits that (a) memberships in social groups are an essential part of individuals’ self-concepts (see also R. Brown, 2000) and (b) individuals strive to see themselves in a positive light. Following from these two postulates, there is a fundamental tendency to favor the social group they identify with (i.e., in-group bias; e.g., M. B. Brewer, 2007; Hewstone et al., 2002). Contrary to this approach, we argue that in-group bias does not require a motivational component (i.e., the striving for a positive self-concept). To be sure, motivation may add to and thus likely accentuate in-group bias, but we do not expect it to be a necessary precondition. In fact, our reasoning is in line with the observation that people do not show heightened self-esteem after engaging in in-group bias (for an overview, see Rubin & Hewstone, 1998), as would be expected from original social-identity theorizing.

Belief-consistent information processing and motivation

Quite frequently, belief-consistent information processing—such as in the context of confirmation bias—is equated with motivated information processing (Kunda, 1990), in which people are motivated to defend, maintain, or confirm their prior beliefs. Some authors have even suggested speaking of “my-side bias” rather than confirmation bias (e.g., Mercier, 2017). In fact, belief and motivation often come together: Some beliefs just feel better than others, and “people find it easier to believe propositions they would like to be true than propositions they would prefer to be false” (Nickerson, 1998, p. 197; see also Kruglanski et al., 2018; on the “Pollyanna principle,” see Matlin, 2017). In addition, people may have already invested a lot in some beliefs (e.g., one’s beliefs about the optimal parenting style or about God/paradise; e.g., Festinger et al., 1955/2011; see also McNulty & Karney, 2002) so that the beliefs are psychologically extremely costly to give up (e.g., ideologies/political systems one has supported for a long time; Lord & Taylor, 2009). Hence, wanting a belief to be true likely accentuates belief-consistent information processing (Kunda, 1990; see also Tesser, 1978) and may even include strategic components (e.g., the deliberate search for belief-consistent information; Festinger et al., 1955/2011; Yong et al., 2021). But despite this prevalent association of confirmation bias and motivated information processing, the latter is not a necessary precondition of the former. On the contrary, as already outlined above, belief-consistent information processing takes place when people are not motivated to confirm their belief as well as when people are motivated to be unbiased or at least want to appear unbiased. Consequently, belief-consistent information processing is a fundamental principle that is not contingent on motivation.

The role of deliberation

Although deliberation is not entirely independent of motivation, it deserves extra discussion because it can be and has been viewed as a remedy for biases. Specifically, knowledge about the specific bias, the availability of resources (e.g., time), as well as the motivation to deliberate are considered to be necessary and sufficient preconditions to effectively counter bias according to some models (e.g., Oswald, 2014; for a similar case, see the potential solutions proposed by Nickerson, 1999). Although this might be true for logical problems that suggest an immediate (but wrong) solution to participants (e.g., “strategy-based” errors in the sense of Arkes, 1991), much research attests to people’s failure to correct for biases even if they are aware of the problem, urged or motivated to avoid them, and are provided with the necessary opportunity (e.g., Harley, 2007; Lieberman & Arndt, 2000; for a meta-analysis about ignoring inadmissible evidence, see Steblay et al., 2006).

A very plausible reason for this is that people fail to take effective countermeasures spontaneously (Giroux et al., 2016; Kelman et al., 1998). Recall the biases that we suggested might follow from an overgeneralizing of one’s own phenomenology. Overgeneralizing one’s own phenomenology, in turn, effectively boils down to ignoring information one has. This may be quite easy if the nature of the information to be ignored and the judgment to be made are clear-cut, as is the case in most theory-of-mind paradigms (for an overview, see Flavell, 2004). In a typical false-belief study, for instance, people are required to set aside their knowledge that an item was removed from its place in the absence of a person who had previously observed its placement, and the people have to indicate where the other person would believe the item to be. Essentially, the information in this task is binary (present vs. not present; i.e., people have to ignore the knowledge that the item was removed and therefore is not in its original place anymore). In addition, the information to be ignored refers to an aspect of physical reality that is (a) objective in that interindividual agreement should be perfect in this regard and (b) readily accessible (see also Clark & Marshall, 1981). Consequently, not only the information to be ignored but also its impact on the required judgment may be unequivocally and exhaustively identified—and therefore effectively controlled for.

However, the situation is substantially different in the tasks underlying the spotlight effect, the illusion of transparency (of intention), as well as the false consensus effect (and egocentrism in similar tasks; e.g., Chambers & De Dreu, 2014; for other association-based errors, see Arkes, 1991). Here, the information to be ignored is often not binary (e.g., one’s emotions, one’s attitudes) and therefore also not necessarily entirely and unequivocally identifiable to people themselves (i.e., the specific extent or intensity). Furthermore, even without the requirement to ignore some information, the task is much fuzzier in itself (i.e., determining how others view me, the extent to which others may determine what’s going on in my head, how others feel about certain topics). These tasks lack the objectivity and knowledge required to undo the influence of the information to be ignored (for an elimination of the false consensus effect if representative information is readily available, see Engelmann & Strobel, 2012; see also Bosveld et al., 1994).

Under these circumstances, the simple attempt to ignore information likely fails (e.g., Fischhoff, 1975, 1977; Pohl & Hell, 1996; Steblay et al., 2006; see also Dror et al., 2015; Servick, 2015). After all, the very information to ignore is not even clearly identifiable, nor is its impact on the task—which needs to be determined to be able to correct for it. Consequently, the very obvious strategy people likely choose—to somehow inhibit or ignore information they have—is ineffective. Thus, exhibiting a bias may not be due to a lack of deliberation. In addition, (unspecific) deliberation alone might not help in avoiding biases (in fact, more deliberation may even entail more belief-consistent information processing and, thus, more bias; Nestler et al., 2008). Rather, avoidance of biases might need a specific form of deliberation. Interestingly, much research shows that there is an effective strategy for reducing many biases: to challenge one’s current perspective by actively searching for and generating arguments against it (“consider the opposite”; Lord et al., 1984; see also Arkes, 1991; Koehler, 1994). This strategy has proven effective for a number of different biases such as confirmation bias (e.g., Lord et al., 1984; O’Brien, 2009), the “anchoring effect” (e.g., Mussweiler et al., 2000), and the hindsight bias (e.g., Arkes et al., 1988). At least in part, it even seems to be the only effective countermeasure (e.g., for the hindsight bias, see Roese & Vohs, 2012). Essentially, this is another argument for the general reasoning of this article, namely that biases are based on the same general process—belief-consistent information processing. Consequently, it is not the amount of deliberation that should matter but rather its direction. 
Only if people tackle the beliefs that guide—and bias—their information processing and systematically challenge them by deliberately searching for belief-inconsistent information should we observe a significant reduction in biases—or possibly even an unbiased perspective. From the perspective of our framework, we would thus derive the hypothesis that the listed biases could be reduced (or even eliminated) when people deliberately considered the opposite of the proposed underlying fundamental belief by explicitly searching for information that is inconsistent with the proposed underlying belief. That is, we would expect a significant reduction of the spotlight effect, the illusion of transparency (of intention), the false consensus effect, and social projection if people were led to deliberately consider the notion and search for information suggesting that their own experience might not be an adequate reference for the respective judgments about others. Likewise, we would expect a debiasing effect on the bias blind spot and the hostile media bias if people deliberately considered the notion that they do not make correct assessments. Put differently, if our framework is correct, the parsimony on the level of explanation would also translate to parsimony on the level of debiasing: The very same strategy could be effective for various biases.

Bayesian belief updating

Our framework suggests a unifying look at how people with existing beliefs process information. As such, it contains two ingredients that also feature prominently in Bayesian belief updating (e.g., Chater et al., 2010; Jones & Love, 2011). In an idealized Bayesian world, people hold beliefs (i.e., priors), and any new information will either solidify these beliefs or attenuate them depending on its consistency with the prior. Importantly, however, strong prior beliefs will not be changed dramatically by just one weak additional bit of information. Instead, to meaningfully change firmly held beliefs requires extremely strong or a lot of contradictory evidence. Cumulative and consistent experience with the world will thus often lead to a situation in which a new bit of information seems negligible and will not evoke a great deal of belief updating. This may sound reminiscent of our approach of fundamental (i.e., strong prior) beliefs plus belief-consistent information processing, but there are marked differences, as we briefly elaborate.
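As a worked illustration of the idealized updating described above, Bayes' rule can be written in odds form. The symbols (H for the belief, D for the new piece of information) and all numbers below are assumptions chosen purely for illustration; they are not taken from the cited work.

```latex
\[
\underbrace{\frac{P(H \mid D)}{P(\neg H \mid D)}}_{\text{posterior odds}}
=
\underbrace{\frac{P(D \mid H)}{P(D \mid \neg H)}}_{\text{likelihood ratio}}
\times
\underbrace{\frac{P(H)}{P(\neg H)}}_{\text{prior odds}}
\]

% Strong prior, one weak piece of contradictory evidence (likelihood ratio 1/2):
\[
P(H) = 0.99:\quad
\frac{0.99}{0.01} \times \frac{1}{2} = 49.5
\;\Rightarrow\;
P(H \mid D) = \frac{49.5}{50.5} \approx 0.98
\]

% Weak prior, same piece of evidence:
\[
P(H) = 0.60:\quad
\frac{0.60}{0.40} \times \frac{1}{2} = 0.75
\;\Rightarrow\;
P(H \mid D) = \frac{0.75}{1.75} \approx 0.43
\]
```

The same evidence thus barely moves the strong prior (0.99 to about 0.98) but shifts the weak prior across the midpoint (0.60 to about 0.43), even though the evidence itself is read identically in both cases.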

First, information processing in the classical Bayesian framework is not biased. Although the possibility of biased prior beliefs is well acknowledged (Jones & Love, 2011), rational processing of novel information is a core assumption. That is, the same bit of information means the exact same thing for each recipient; it will just affect their beliefs to differing degrees because they have different and differently strong priors. This is dramatically different from our perspective with its focus on how the same bit of information is attributed, remembered, processed, interpreted, and attended to differently as a function of one’s prior beliefs (see also Mandelbaum, 2019). This notion of biased information processing is utterly absent from the Bayesian world (see also next section). Take, for instance, the finding that the same behavior (e.g., torture) is evaluated differently depending on whether the actor is a member of one’s own group or of another group (e.g., Noor et al., 2019; Tarrant et al., 2012). Or likewise, take the differential evaluation of the same scientific method depending on whether its result is consistent or inconsistent with one’s prior belief (e.g., Lord et al., 1979). Both are incompatible with the fundamental idea of Bayesian belief updating. And more generally, our approach is about the impact of prior beliefs on the processing of information rather than the impact of (novel) information on prior beliefs. Given these stark differences, it is not surprising that many predictions we derive from our understanding are not derivable from a Bayesian belief-updating perspective.
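The contrast drawn above can be made concrete with a small simulation. This is a minimal sketch under stated assumptions, not a model proposed in this article: the function names (`update`, `biased_ratio`, `run`), the `discount` parameter, and the likelihood ratios are all hypothetical. The unbiased updater applies every piece of evidence at face value; the biased updater shrinks belief-inconsistent evidence toward neutrality before updating, which is one simple way to represent evaluating the same information differently as a function of one's prior belief.

```python
def update(prior, likelihood_ratio):
    """Bayes' rule in odds form: posterior odds = likelihood ratio * prior odds."""
    odds = prior / (1.0 - prior) * likelihood_ratio
    return odds / (1.0 + odds)


def biased_ratio(belief, likelihood_ratio, discount=0.5):
    """Hypothetical biased reading of evidence (an assumption of this sketch).

    Evidence that agrees with the currently favored hypothesis is taken at
    face value; evidence that disagrees is shrunk toward a neutral ratio of 1
    by raising it to a power below 1.
    """
    favors_h = likelihood_ratio > 1.0
    believes_h = belief > 0.5
    if favors_h == believes_h:
        return likelihood_ratio
    return likelihood_ratio ** discount


def run(prior, evidence, biased):
    """Process a sequence of likelihood ratios and return the final belief."""
    belief = prior
    for lr in evidence:
        effective = biased_ratio(belief, lr) if biased else lr
        belief = update(belief, effective)
    return belief


# Perfectly mixed evidence: ten items for H (ratio 2) and ten against (ratio 0.5).
evidence = [2.0, 0.5] * 10

for prior in (0.7, 0.3):
    unbiased = run(prior, evidence, biased=False)
    skewed = run(prior, evidence, biased=True)
    print(f"prior={prior:.1f}  unbiased={unbiased:.3f}  biased={skewed:.3f}")
```

In this sketch, the unbiased updater ends where it started because the mixed evidence cancels out, whereas the two biased updaters drift toward opposite extremes despite seeing identical evidence. The sketch only illustrates the distinction between the two perspectives; it makes no claim about the actual size or functional form of the bias.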

Second, by relying on the rich empirical evidence on belief-consistent information processing, we explicitly emphasize the many ways in which the novel information is already a result of biased information processing: When people selectively attend to or search for belief-consistent information (positive testing, selective exposure, congeniality bias), when they selectively reconstruct belief-consistent information from their memory, and when they behave in a way such that they elicit the phenomenon they searched for themselves (self-fulfilling prophecy), they already display bias (see also next section). People are biased in eliciting new data, and those data are then processed; people do not simply update their beliefs on the basis of information they (more or less arbitrarily) encounter in the world. As a result, people likely gather a biased subsample of information, which, in turn, will not only lead to biased prior beliefs but may also lead to strong beliefs that are actually based on rather little (and entirely homogeneous) information. But there are even more and more extreme ways in which prior beliefs may bias information processing: Prior beliefs may, for instance, affect whether or not a piece of information is regarded as informative at all for one’s beliefs (Fischhoff & Beyth-Marom, 1983). Categorizing belief-inconsistent information into an extra class of exceptions (that are implicitly uninformative to the hypothesis) is such an example (or subtyping; see also Kube & Rozenkrantz, 2021). Likewise, discrediting a source of information easily legitimates the neglect of information (see disconfirmation bias). In its most extreme form, however, prior beliefs may not be put to a test at all. Instead, people may treat them as facts or definite knowledge, which may lead people to ignore all further information or to classify belief-inconsistent information simply as false.

In sum then, our reasoning deviates from the Bayesian approach in that its core assumption is one of biased (vs. unbiased) information processing. Specifically, we propose prior beliefs to bias the processing of novel information as well as other stages of information processing, including those that elicit (novel) information. Furthermore, the hypotheses that follow from our framework cannot be likewise derived from the Bayesian perspective.

Bias, rationality, and adaptivity

Given that this article and its presented framework are about biases, it seems reasonable to add a few elaborations on this as well as related concepts. Although we are mainly proposing a framework to explain biases that have already been documented and defined by others, it is noteworthy that all of the biases listed in Table 1 rest on one of the following two conceptualizations of the term “bias”: On the one hand, some of these biases are defined as a systematic deviation from an objectively accurate reference. For instance, if people are convinced that their opinions and beliefs are shared to a larger extent than what is actually the case, their evaluation (about others) deviates from the objective reference (i.e., the evaluation of others) and thus indicates a false consensus. Essentially, all biases that refer to an overestimation or underestimation build on the comparison between people’s judgments and the actual (empirical) reference. This is possible because the judgment itself refers to some aspect in the world that can be directly assessed and, thus, compared.

On the other hand, for several judgments such references are lacking for comparison. For instance, with what could or should one compare a person’s evaluation of a scientific method or moral judgments to draw conclusions about potential biases? A typical approach is to examine whether the same target (e.g., scientific method, behavior of another person) is evaluated differently depending on factors that should actually be irrelevant. In other words, bias is here conceptualized (or rather demonstrated) as an impact of factors that should not play a role (i.e., the influence of unwarranted factors). For instance, if the identical scientific method is evaluated differently depending on whether or not it supports or challenges one’s prior beliefs (i.e., is discredited when yielding belief-inconsistent results; Lord et al., 1979), this denotes disconfirmation bias (Edwards & Smith, 1996) or partisan bias (Ditto et al., 2019). Likewise, when the very same behavior (e.g., torture, violent attacks) is evaluated differently depending on whether the actor is a member of one’s own group or of another group, one speaks of “in-group bias” (e.g., Noor et al., 2019; Tarrant et al., 2012). In other words, bias in this case is operationalized as a systematic difference in information processing and its outcome as a function of unwarranted factors. This notion of unwarranted factors also differentiates biases from other phenomena: For instance, we would not speak of bias in the case of experimental manipulations (e.g., mood inductions) affecting individuals’ retrieval of happy memories to regulate their mood (e.g., Josephson, 1996). However, if the same manipulation affected individuals’ perception of novel information (e.g., Forgas & Bower, 1987; Wright & Bower, 1992), we would subsume it under the umbrella term “bias.” This aligns well with our definition of beliefs as hypotheses about the world that come along with the notion of accuracy. 
In other words, it is about beliefs that state or claim something to be true, for example, “I make correct assessments” or “I am good,” regardless of whether or not it actually is true (e.g., “This was the biggest inauguration audience ever”; see also above).

Because biases have been conceptualized with a sense of accuracy in mind and a plethora of research has by now documented people’s biases, thus pointing to the frequent inaccuracy of their judgments, two secondary questions have been raised and vigorously debated in the past: the question of the (ir)rationality of biases and the question of the adaptivity of (certain) biases (e.g., Evans, 1991; Evans et al., 1993; Fiedler et al., 2021; Gigerenzer et al., 2011; Gigerenzer & Selten, 2001; Hahn & Harris, 2014; Haselton et al., 2009; Oaksford & Chater, 1992; Sedlmeier et al., 1998; Simon, 1990; Sturm, 2012; P. M. Todd & Gigerenzer, 2001, 2007). In particular, it has been argued that many biases and heuristics could be regarded as rational in the context of real-world settings, in which people lack complete knowledge and have an imperfect memory as well as limited capacities (e.g., Gigerenzer et al., 2011; Simon, 1990). In the same context, researchers have argued that some of the heuristics lead to biases mainly in specific lab tasks while resulting in rather accurate judgments in many real-world situations (e.g., Fiedler et al., 2021; Sedlmeier et al., 1998; P. M. Todd & Gigerenzer, 2001). In other words, they argued for the adaptivity of these heuristics, which are mostly correct, whereas research focuses on the few (artificial) situations in which they lead to incorrect results (i.e., biases). Apart from the fact that this debate mainly revolved around heuristics and biases that we do not deal with here (e.g., the set of heuristics introduced by Tversky & Kahneman, 1974), an adequate treatment of rationality and adaptivity is beyond the scope of this article for two reasons. First, consideration of the (ir)rationality as well as adaptivity of biases is a complex and rich topic that could fill an article on its own.
One factor that complicates the topic is that there is no single conceptualization of rationality but a variety of different perspectives on this topic (e.g., normative vs. descriptive, theoretical vs. practical, process vs. outcome; for an overview, see Knauff & Spohn, 2021), each of which comes along with different definitions of rationality or standards of comparison that allow for conclusions about rationality. The same holds for adaptivity because it would inevitably have to be clarified what adaptivity refers to (e.g., survival, success—in whatever sense, accurate representations). Second, and even more importantly from a research perspective, biases are first and foremost phenomena evidenced by data and empirical observations—whereas the question of the rationality of these observations is basically an evaluation of this observation and, thus, yet another issue. In presenting a framework of common underlying mechanisms, however, this article’s focus is on the explanation of the biases, not on their (normative) evaluation.

Broadening the Scope

Let us return to the application of our recipe to biases. Above we spelled out our reasoning in detail by taking two fundamental beliefs and discussing how they might explain a number of biases. Specifically, we put up for discussion that the general recipe of a belief plus belief-consistent information processing may suffice to produce the biases listed in Table 1. It is beyond the scope of the current article to do so for each of the other biases contained in Table 1. Instead, we would like to reexamine additional phenomena under this unifying lens.

Let us begin with hindsight bias, the tendency to overestimate what one could have known before the fact (Fischhoff, 1975; for an overview, see Roese & Vohs, 2012; for meta-analyses, see Christensen-Szalanski & Willham, 1991; Guilbault et al., 2004). That people overestimate the extent to which uninformed others may know about outcomes or an event that one has already learned about could likewise be understood as people taking their own experience as a reference when making judgments about others. When judgments were about themselves in a still ignorant prior state, however, our framework would at least need the extra specification that people took their current experience as a reference when asked about previous times, which is quite plausible (e.g., Levine & Safer, 2002; Markus, 1986; McFarland & Ross, 1987; Wolfe & Williams, 2018). In more general terms, people oftentimes hold the (erroneous) conviction that they have held their current beliefs forever (e.g., Greenwald, 1980; Swann & Buhrmester, 2012; von der Beck et al., 2019).

Several other phenomena that are usually not conceptualized as bias, or at least not linked to bias research, could essentially be understood as variations of confirmation bias as well. Stereotypes, for example, are basically beliefs people hold about others (“people of group X are Y”) and likewise elicit belief-consistent information processing and even behavior (e.g., discrimination). Belief in specific conspiracy theories might be understood as an expression of the rather general belief that “seemingly random events were intentionally brought about by a secret plan of powerful elites.” This basic belief as an underlying principle might provide a parsimonious explanation of why the endorsements of various conspiracy theories flock together (Bruder et al., 2013); of why such a “conspiracy mentality” is correlated with the general tendency to see agency (anthropomorphism; Imhoff & Bruder, 2014), negative intentions of others (Frenken & Imhoff, 2022), and patterns where there are none (van Prooijen et al., 2018); and also of other paranormal beliefs that play down the role of randomness (Pennycook et al., 2015).

When we consider the breadth of our conceptualization of beliefs, it becomes clear that the integrative potential of our account might be even larger: The beliefs we have elaborated on and presented in Table 1 are likely rather fundamental beliefs in that they are chronically accessible and central to people. Recall, however, that our conceptualization of beliefs also entails beliefs that are rather irrelevant to a person and only situationally induced. In consideration of this fact, a number of experimental manipulations may be subsumed under our reasoning as well. Across diverse research fields, scholars have provided participants with the task to test a given hypothesis. Although such experimenter-generated hypotheses are clearly different from long-held and widely shared beliefs, they follow a similar recipe if we regard them as situationally induced beliefs. For instance, the questions that Snyder and Swann (1978) had their participants examine—whether target person X is introverted/extraverted—can be regarded as a situationally induced belief that is examined by participants. Even if they did not generate the belief themselves and even if they were indifferent with regard to its truth, it guided their information processing and systematically led to the confirmation of the induced belief. So the question arises as to whether a number of experimental manipulations (e.g., assimilation vs. contrast; Mussweiler, 2003, 2007; promotion vs. prevention focus; Freitas & Higgins, 2002; Galinsky et al., 2005; mindset inductions; Burnette et al., 2013; Taylor & Gollwitzer, 1995) could also be treated as experimenter-induced beliefs that elicit belief-consistent information processing. In this regard, a plethora of psychological findings could be integrated into one overarching model.

Summary and Novel Hypotheses

Now that we have outlined our reasoning in detail and highlighted its integrative potential, let us turn to the hypotheses it generates. The main hypothesis (H1) we have repeatedly mentioned throughout is that several biases can be traced back to the same basic recipe of belief plus belief-consistent information processing. Undermining belief-consistent information processing (e.g., by successfully eliciting a search for belief-inconsistent information) should—according to this logic—attenuate biases. Thus, to the extent that an explicit instruction to “consider the opposite” (of the proposed underlying belief) is effective in undermining belief-consistent information processing, it should attenuate virtually any bias to which our recipe is applicable, even if this has not been documented in the literature so far. Thus, cumulative evidence that experimentally assigning such a strategy fails to reduce biases named here would speak against our model.

At the same time, we have proposed that several biases are actually based on the same beliefs, which leads to the assumption that biases sharing the same beliefs should show a positive correlation (or at least a stronger positive correlation than biases that are based on different beliefs, H2). Thus, collecting data from a whole battery of bias tasks would allow a confirmatory test of whether the underlying beliefs serve as organizing latent factors that can explain the correlations between the different bias manifestations.
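The logic of H2 can be illustrated with a small simulation. This is a sketch under stated assumptions rather than an analysis from this article: the names (`simulate`, `pearson`, `bias_a1`, `bias_a2`, `bias_b1`), the loading of 0.7, and the sample size are hypothetical, and the two simulated beliefs are assumed to be statistically independent purely for simplicity. Each simulated person has a strength for belief A and for belief B; two bias measures draw on belief A and one draws on belief B, each with added measurement noise.

```python
import random


def pearson(xs, ys):
    """Pearson correlation coefficient of two equally long lists."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    return cov / (vx * vy) ** 0.5


def simulate(n_people=5000, loading=0.7, seed=1):
    """Generate bias scores driven by two independent latent beliefs.

    Assumption of this sketch: each bias score is a weighted copy of one
    latent belief plus independent noise, scaled to unit variance.
    """
    rng = random.Random(seed)
    noise_sd = (1 - loading ** 2) ** 0.5
    scores = {"bias_a1": [], "bias_a2": [], "bias_b1": []}
    for _ in range(n_people):
        belief_a = rng.gauss(0, 1)  # strength of latent belief A
        belief_b = rng.gauss(0, 1)  # strength of latent belief B
        scores["bias_a1"].append(loading * belief_a + rng.gauss(0, noise_sd))
        scores["bias_a2"].append(loading * belief_a + rng.gauss(0, noise_sd))
        scores["bias_b1"].append(loading * belief_b + rng.gauss(0, noise_sd))
    return scores


scores = simulate()
same_belief = pearson(scores["bias_a1"], scores["bias_a2"])
different_belief = pearson(scores["bias_a1"], scores["bias_b1"])
print(f"same underlying belief:      r = {same_belief:.2f}")
print(f"different underlying belief: r = {different_belief:.2f}")
```

Under these assumptions, the two measures that share a belief correlate at roughly the squared loading, whereas measures based on different beliefs are close to uncorrelated. With real data from a battery of bias tasks, the corresponding confirmatory test would be a factor model in which the proposed beliefs serve as latent factors. If the beliefs themselves were correlated, the cross-belief correlation would no longer be near zero but should still be weaker than the within-belief correlation.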

Further hypotheses follow from the fact that there is a special case of a fundamental belief in that its content inherently relates to biases: the belief that one makes correct assessments. Essentially, it might be regarded as a kind of “g factor” of biases (for a similar idea, see Fiedler, 2000; Fiedler et al., 2018; Metcalfe, 1998). Following from this, we expect natural (e.g., interindividual) or experimentally induced differences in the belief of making correct assessments (e.g., undermining it; for discussions of the phenomenon of gaslighting, see, e.g., Gass & Nichols, 1988; Rietdijk, 2018; Tobias & Joseph, 2020) to be mirrored not only in biases based on this belief but also in biases based on other beliefs (H3). However, given that we essentially regard several biases as a tendency to confirm the underlying fundamental belief (via belief-consistent information processing), “successfully” biased information processing should nourish the belief in one’s making correct assessments, as one’s prior beliefs have been confirmed (H4). For example, people who believe their group to be good and engage in belief-consistent information processing that leads them to conclusions confirming this belief are at the same time confirmed in their conviction that they make correct assessments of the world. The same should work for other biases such as the “better-than-average effect” or “outcome bias.” If I believe myself to be better than average, for instance, and subsequently engage in confirmatory information processing by comparing myself with others who have lower abilities in the particular domain in question, this should strengthen my belief that I generally assess the world correctly. Likewise, if I believe that it is mainly people’s attributes that shape outcomes and, consistent with this belief, attribute a company’s failure to its CEO’s mismanagement, I get “confirmed” in my belief that I make correct assessments. Only if belief-consistent information processing failed would the belief that one makes correct assessments likewise not be nourished. This is, however, rather unlikely given the plethora of research showing that people may see confirmation of a prior belief even if there is actually none or only equivocal confirmation (e.g., Doherty et al., 1979; Friesen et al., 2015; Lord et al., 1979; Isenberg, 1986), let alone disconfirmation (Festinger et al., 1955/2011; Traut-Mattausch et al., 2004). If, however, engaging in any (other) form of bias expression attenuated biases following from the belief of making correct assessments, this would speak strongly against our rationale.

There is one exception, however. If one were aware that one is processing information in a biased way and were unable to rationalize this way of proceeding, biases should not be expressed because doing so would threaten one’s belief in making correct assessments. In other words, the belief in making correct assessments should constrain biases based on other beliefs because people are motivated to maintain an illusion of objectivity regarding the manner in which they derived their inferences (Pyszczynski & Greenberg, 1987; Sanbonmatsu et al., 1998). Thus, there is a constraint on motivated information processing: People need to be able to justify their conclusions (Kunda, 1990; see also C. A. Anderson et al., 1980; C. A. Anderson & Kellam, 1992). If people were stripped of this possibility, that is, if they were not able to justify their biased information processing (e.g., because they are made aware of their potential bias and fear that others could become aware of it as well), we should observe attempts to reduce that particular bias and, if people know how to correct for it, an effective reduction (H5).

Above and beyond these rather general hypotheses, further corollaries of our account unfold. For instance, we would expect the same group favoritism toward groups that people neither belong to nor identify with but that they believe to be good (H6). This hypothesis would not be predicted by the social-identity approach (Tajfel & Turner, 1979; Turner et al., 1987), which is most commonly referred to when explaining in-group favoritism.

Conclusion

There have been many prior attempts to synthesize and integrate research on (parts of) biased information processing (e.g., Birch & Bloom, 2004; Evans, 1989; Fiedler, 1996, 2000; Gawronski & Strack, 2012; Gilovich, 1991; Griffin & Ross, 1991; Hilbert, 2012; Klayman & Ha, 1987; Kruglanski et al., 2012; Kunda, 1990; Lord & Taylor, 2009; Pronin et al., 2004; Pyszczynski & Greenberg, 1987; Sanbonmatsu et al., 1998; Shermer, 1997; Skov & Sherman, 1986; Trope & Liberman, 1996). Some of them have made arguments that are similar to or overlap with the ones outlined here, or have implicitly made similar assumptions, and thus resonate with our reasoning. In none of them, however, have we found the same line of thought and its consequences explicated.

To put it briefly, theoretical advancements necessitate integration and parsimony (the integrative potential), as well as novel ideas and hypotheses (the generative potential). We believe that the framework for understanding bias proposed in this article has merit in both respects. We hope to instigate discussion as well as empirical scrutiny with the ultimate goal of identifying common principles across several disparate research strands that have heretofore sought to understand human biases.