Abstract

Ensuring comparability of Likert-style items across different countries is a widespread challenge for authors of large-scale international surveys. Using data from the EUROGRADUATE Pilot Survey, this study employs a series of latent class analyses to explore which response patterns emerge from self-assessment of acquired and required skills of higher education graduates and how these patterns vary between eight participating countries. Results show that countries differ in the number of classes which most accurately fit the data structure. The effort to overcome national specifics by combining the levels of acquired and required skills into a single measurement of skill surplus/deficit reduces heterogeneity of patterns across countries and slightly increases comparability, yet the notion that respondents understand the scale in the same way across countries (measurement invariance) is not supported.

Introduction

Measuring levels of attained competences is an important reflection on how well one of the objectives of tertiary education is being met. There are basically two strands of competence measurement methodology. One approach uses direct measurement—the person being measured carries out certain activities and tasks and their success rate then indicates the level of their competence. The second approach uses subjective self-assessment on a given scale. In most general large-scale surveys, the second approach is often used as it does not necessarily prolong the already extensive questionnaires.

A standard European survey of higher education graduates should become an important support for achieving European higher education policy objectives and for an effective increase of investment in education and skills. Such a survey is currently being set up and it is necessary to analyze the different parts of the project, its methodology, and its implementation in detail. The EUROGRADUATE pilot survey demonstrates that it was possible to collect comparable data on higher education graduates across eight European countries (Mühleck, 2020).

Through our research and this article, we aim to support this process and enhance the utility of the skills assessment part of the project. Self-assessment of skills enables us to obtain information on skills acquisition relatively easily, but this method also has certain pitfalls. By assessing the responses of graduates in different countries in relation to their own skills and furthermore in relation to skills needed in the workplace, we will try to identify common patterns in the responses of graduates in different countries. We will also assess whether combining the scales into a single measure may improve the research instrument for obtaining the data on skills.

To tackle the potential shortcomings which researchers face when designing and analyzing international skill surveys (see below), we explore the common patterns that emerge in the structure of higher education graduates’ answers when they assess current levels of their own skills and skills required in their work using standard Likert-style items. These are used to answer several research questions:

- Do the patterns change when the levels of own skills are evaluated in relation to the levels required at graduates’ current work?
- Do the participating countries show similar response patterns, or do the respondents differ in their approach to self-assessment across countries? In other words, can measurement invariance be assumed? If the countries do differ in this regard, how?

Issues with self-measurement

When faced with the task of assessing their own levels of skills in any domain in a survey using an ordinal scale with numbered levels (which is one of the most usual forms of skill self-evaluation), respondents necessarily need to form a subjective inner rating system. If we assume an ideal model respondent willing to devote their time and genuinely commit to the task, that is, if we put aside any potential effects of straightlining, intentional distortion due to social desirability, etc., the respondent will gauge their perceived abilities against an individually established set of criteria that are deemed relevant for the context in which the assessment is being held. For example, when assessing “communication skills including presenting and teaching” in a survey which is otherwise heavily focused on workforce and labor conditions, respondents may purposely omit less formal aspects of their communication skill set that are either not typically associated with such topics (e.g., the ability to entertain) or that do not correspond to contents of their current job (many workers never teach anyone else or do not present in front of audiences).

In terms of validity, concision is a costly trade-off in large-scale general surveys with target populations spanning a variety of scholarly fields. Defining every competence at the most concrete level of practical usage might be possible, but it raises feasibility challenges such as prolonging the evaluation process; evidence for the often-cited argument that questionnaire length has a detrimental effect on response rate is plausible, though not conclusive (Bogen, 1996; Galesic and Bosnjak, 2009). However, it is precisely the vaguely defined nature of competence which is regarded as one of the most fundamental reasons for people to have incomplete knowledge of their own levels of abilities (Dunning et al., 2004). Lack of clarity allows respondents to define criteria to their advantage. As a consequence, people tend to believe themselves to be above average on traits that are ill-defined, but not when the definition is more specific and constrained (ibid.). Results from the EUROGRADUATE study attest to the increased self-awareness when assessing skills where the content is comparatively easier to conceptualize and evaluate. Although it could take weeks or months of full-time involvement in a group project to evaluate a person’s team-working skills (with not everyone necessarily agreeing on the criteria or the result), some degree of basic foreign language skills or advanced ICT skills (e.g., programming, syntax in software) can be convincingly demonstrated in a matter of minutes. The data show that reported own levels of these last two skills were generally considerably lower with a lower degree of variability compared to the rest.

Moreover, as Ward et al. (2012: 69) point out, it would not be sufficient to show that all individuals are measuring themselves based on the same criteria, as even the best scale is subject to interpretation. Respondents need to make sense not only of the insufficiently labeled middle options but also of the exact meaning of the loosely defined extreme options such as “very low” and “very high.” We argue that there are several conceivable ways for respondents to internally construct scales based upon commonly held standards, other individuals, or groups which serve as reference points. These options include, but are not limited to:

- Assessment based on external standards. Respondents may compare themselves to generally known standards of good practice or even to sets of explicitly described requirements regarded as necessary for successful completion of a task if these are available, such as physician assistants performing a standardized patient interview. Such informed self-assessment yields the benefit of increased accuracy: “Learners aware of specific benchmarked standards with access to objective data (i.e., external data) demonstrate improved self-assessment abilities compared to those who rely solely upon their own internal judgments” (Cheng et al., 2021: 2). Conceived collectively in this way, it would be perfectly acceptable for the study to yield results with a vast majority of high-scoring individuals and close to no low-scoring individuals because graduating from a higher education institution ought to ensure at least rudimentary levels of most of the skills and the relative standings are not mutually exclusive.
- Relative assessment in comparison with peers. Respondents in this mode may contrast their skill levels with the perceived performance of other workers in their field of study who have passed the same requirements and thus should de jure possess comparable levels of competence. Why would anyone resort to judging their skills based on the hypothetical performance of others even though the wording of the question does not instruct them to do so? First, it has long been known that in a wide variety of dimensions and given the opportunity, people tend to use social comparison instead of objective criteria to judge their own performance unless the inter-personal benchmark would put them in an unfavorable position (Klein, 1997). Once again, the context of assessment is key here: respondents know that the shared reason they are undertaking the survey is their being graduates. Second, the current predominant framing of the purpose of both secondary and higher education in late capitalist economies is a highly competitive one. The commodification and marketization of higher education in the past decades brought about increased reliance of academic judgment on “...impersonal judgment and references based on assessments derived from algorithms, quantified indicators and standardized processes” (Musselin, 2018: 677). Students are perceptive of this environment. A great many official documents regarding higher education policy either implicitly or explicitly present skills acquired throughout the education process as means to gain leverage in a highly competitive labor market where the resources are scarce and one of the ways to obtain a work position is outperforming other applicants. Tracking students’ and graduates’ ability to promote and secure their ability to ensure further economic growth is thus stated as a widespread motivation for realizing the large-scale testing and surveying, as in the case of PISA (Glaesser, 2019: 74), the EUROGRADUATE project, New Skills Agenda for Europe (EC, 2016), etc.
- Relative assessment in comparison with general population. Most of the assessed skills are not field-specific, nor is the need for them restricted to work positions intended for highly educated individuals. One of the potential effects is that graduates who include the general adult population in their imagined pool of competitors may adhere to using only the uppermost part of the scale, reserving the rest for those without formal educational credentials.

Apart from differences in comprehension of the meaning of the task ahead of respondents (i.e., “What exactly am I supposed to evaluate?”), there is another layer of potential systematic distortion—response styles. As Dolnicar et al. (2011) point out, the existence of these pervasive response biases which respondents display independent of the content of the question is widely acknowledged and Likert-style items are especially prone to them.

International differences

So far, we have only looked at issues common to all investigations. However, international surveys face additional difficulties that stem from cultural differences in the ways people answer questions. These differences violate the assumption of measurement invariance, which is a property of a measurement instrument (in the case of survey research, a questionnaire), implying that the instrument measures the same concept in the same way across various subgroups (Davidov, 2014: 58). Cultural clusters reflecting prevalence of various response styles were identified covering European, North and Latin American, African, and Asian cases (e.g., Bachman and O’Malley, 1984; Chen, Lee, Stevenson, 1995; Takahashi, 2002; Lee and Green, 1991).

Different patterns were found in the European context as well. For example, Greek respondents showed the highest levels of both acquiescence and use of extreme values, while Spanish and Italian respondents scored consistently higher than British, German, and French respondents (Van Herk et al., 2004). Studies regarding differences in response styles between countries have shown fairly consistent results.

In a project covering 26 countries (Harzing, 2006), previous patterns were confirmed and shown to be stable over time; the same patterns were observed 40 years ago. This project also covers Lithuania, Greece, and Austria, which are part of the EUROGRADUATE project. The position of Norway can be approximated by substituting Nordic countries such as Sweden and Denmark, which have similar characteristics in surveys with self-assessment questions. Similar patterns were observed in the EUROGRADUATE results: Norwegian graduates assess their own skills lower than expected while Austrian graduates assess their skills higher (Meng et al., 2020: 13).

As noted in the EUROGRADUATE pilot survey comparative report, these differences are evident when comparing the results of countries in projects such as PISA, PIRLS, or PIAAC (actual measurement of skills) and REFLEX or EUROGRADUATE (self-assessment used) (ibid.: 246). When comparing the relative positioning of countries (here we select those that have participated in the EUROGRADUATE project) in both types of projects, some countries are similar, others differ partially, and others differ significantly. The results are similar (worse rankings) for Greece and Croatia, partly different (better to average) for Austria, Czech Republic, and Lithuania, but the biggest disproportion is for Norway, with the best ranking for projects with actual skills measurement, but one of the worst rankings for projects with self-assessment.

Relative anchors

To summarize so far: an individual respondent’s final decision about their own reported skill level is a result of their understanding of the criteria (which aspects of the skill are included), the perceived standards against which the assessment is being held (absolute or relative), and their response style (a mixture of individual and group-level traits).

Authors of the EUROGRADUATE survey have long been aware of its potential shortcomings. Striving to mitigate the aforementioned issue of “meaningless numbers,” they decided to use the same scale for two separate questions: EUROGRADUATE captures not only the current own level of competencies but also the level of competencies that is required at the respondents’ current work (in both cases, as perceived by the respondent). According to the authors, comparing the acquired and required competence levels then allows for conclusions on the matching and the relevance of certain skills (Meng et al., 2020: 249).

When used this way, acquired (or “own,” used interchangeably) and required skill levels form what might be called relative anchors for each other. The reasoning is as follows: a respondent’s response bias should remain roughly consistent across questions with an identical measuring scale. Whichever distortion (in absolute terms) is present in the own skill assessment towards one end or another, applying the same standards again to skill requirements will nullify, or at least mitigate, the bias, resulting in a measurement of skill surplus or skill deficit (see below) that is comparable across respondents with different response styles, respondents from different countries, etc.
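The cancellation argument can be illustrated with a minimal sketch. It assumes, purely for illustration, that a response style acts as a constant additive shift on the 1 to 5 scale and that answers are clamped at the scale ends; the function names `reported` and `difference` are hypothetical and not part of the EUROGRADUATE instrument.

```python
def reported(true_level: int, shift: int) -> int:
    """Rating actually given by a respondent whose response style shifts
    every answer by `shift` points (ASSUMPTION: additive bias), clamped
    to the 1-5 scale."""
    return max(1, min(5, true_level + shift))


def difference(own_true: int, required_true: int, shift: int) -> int:
    """Required minus own rating, both distorted by the same shift."""
    return reported(required_true, shift) - reported(own_true, shift)


# Away from the scale ends the shared shift cancels out of the difference.
unbiased = difference(own_true=2, required_true=3, shift=0)
shifted = difference(own_true=2, required_true=3, shift=1)

# At the scale ends clamping removes part of the information, so the
# bias is only mitigated rather than fully removed.
clamped = difference(own_true=1, required_true=2, shift=-1)
```

Under these assumptions the difference is identical for the unbiased and the shifted respondent, while a respondent pushed against the end of the scale loses the distinction between the two ratings. This is one way to read "nullify, or at least mitigate".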

This solution comes with the advantage of straightforward implementation and, from the point of view of education and training policy, it may help partially answer a broader question: when can it be said that there is enough of a given skill in the population (Allen and Van der Velden, 2005)? On the other hand, it narrows the topic of higher education graduates’ skills to the question of usefulness for the current work position, where any existing problems may be temporary and/or the result of a job mismatch. The question at hand is whether (and if so, how well) providing a mutual frame of reference in this way alleviates the discussed problems.

Data

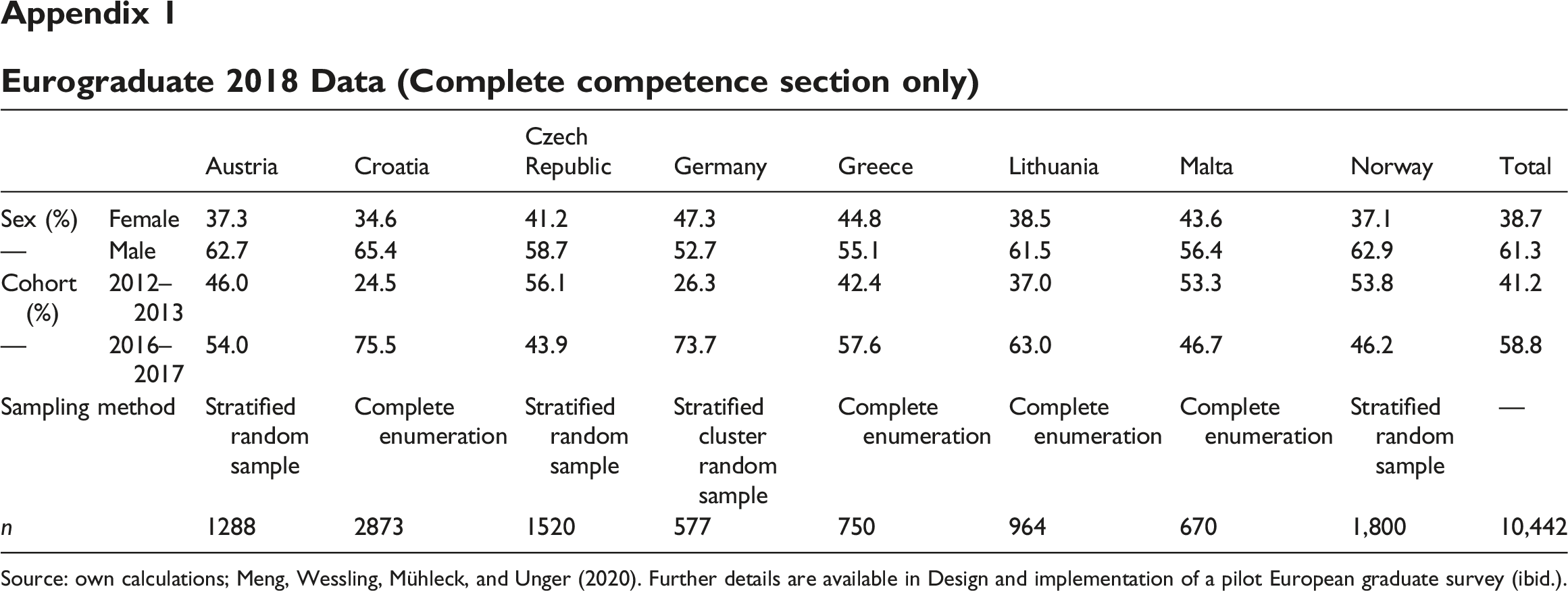

The data used for the analysis come from the EUROGRADUATE Pilot Survey which was a large international survey comprising eight countries (Austria, Croatia, the Czech Republic, Germany, Greece, Lithuania, Malta, and Norway) conducted on behalf of the European Commission in 2018–2019. It targeted two cohorts of higher education graduates (of the academic years 2012/2013 and 2016/2017) including both bachelor levels and master levels and covered topics of sustainable employment, personal skills development, active citizenship, mobility experience, and spatial relocation patterns. In total, 16,582 useable questionnaires were collected with a considerable variance in data quality—net response rate ranges from 5.4% in Lithuania to 34.8% in Germany in the older cohorts. Average response rate was 12.0% (Meng et al., 2020). Since our interest lies in skills self-assessment, we work with a total of N = 10,442 working graduates with fully completed skills sections. Data for individual countries are described in more detail in Appendix 1.

In this study, we focus mainly on two question batteries in which respondents were asked to rate a set of nine competencies: Own field-specific skills; Communication skills (incl. presenting and teaching); Team-working skills; Foreign language skills; Learning skills; Planning and organization skills; Customer handling skills (incl. counseling); Problem solving skills; Advanced ICT skills (e.g., programming, syntax in statistical software). These were rated in respect to a) the current own level and b) the required level in their current work. In both cases, levels were measured using Likert-style items ranging from 1 (very high) to 5 (very low) with no verbal labels of the levels in between the ends. In the subsequent steps, we use a new variable which we call “skill surplus/deficit” that is calculated by subtracting the numeric level of own skill from the level of skill required at work and collapsing the resulting nine categories (ranging from −4 to 4) into a five-point scale (large skill deficit, slight skill deficit, skill match, slight skill surplus, and large skill surplus).
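The construction of the skill surplus/deficit variable can be sketched as follows. The text specifies the subtraction (required minus own) and the five target categories but not the exact cut points, so the collapsing rule below (differences of two or more points count as "large") is an assumption, as are the names `surplus_deficit` and `LABELS`.

```python
# Labels of the five-point scale named in the text, keyed by collapsed score.
LABELS = {
    -2: "large skill deficit",
    -1: "slight skill deficit",
    0: "skill match",
    1: "slight skill surplus",
    2: "large skill surplus",
}


def surplus_deficit(own: int, required: int) -> int:
    """Collapse (required - own) from the range -4..4 into -2..2.

    Both inputs use the scale described in the text: 1 = very high,
    5 = very low. A positive difference therefore means that the own
    level is rated higher than the required level (a surplus).
    """
    for level in (own, required):
        if level not in range(1, 6):
            raise ValueError("ratings must be integers from 1 to 5")
    diff = required - own
    # ASSUMPTION: all differences of two or more points are merged into
    # the "large" categories; the source does not state the cut points.
    return max(-2, min(2, diff))
```

For example, `LABELS[surplus_deficit(own=1, required=3)]` gives "large skill surplus" under the assumed cut points, and equal ratings always map to "skill match".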

Methods

In the first step, we focus on how the calculated skill surplus/deficit battery performs in relation to a single secondary question dealing with skill requirements that potentially exceed graduates’ current levels: “To what extent does your current work demand more knowledge and skills than you can actually offer?” (henceforth called “declared skill surplus/deficit”). This question, measured on a 5-point scale, presents an opportunity for a preliminary assessment of convergent validity: as it is a generalized statement which relates to the same underlying concept, we should expect high levels of association. Additionally, relationships between all pairs of skills are analyzed in order to explore the average degree of association.

Ward et al. (2012) have previously expressed concerns about using association metrics to evaluate self-assessment accuracy. First, many such analyses are based on the questionable assumption of reliability of expert evaluations against which we gauge the subject’s actual performance. This issue clearly does not apply here. Similarly, the second discussed problem, differential use of scales among different participants, is not relevant because of the intra-personal relative anchoring. Third, using correlation coefficients carries a risk of a few highly incompetent individuals skewing the overall performance of otherwise competent assessors. This issue is addressed by analyzing the relationship between calculated and declared skill surplus/deficit using Kendall’s tau-b, a rank-based measure of strength of association which is highly resistant to outliers.
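For readers unfamiliar with the statistic, the following is a minimal, unoptimized sketch of Kendall’s tau-b for two ordinal variables with ties. It is an illustration in plain Python, not the routine used in the analysis (which was carried out in R); the function name `kendall_tau_b` is our own.

```python
from collections import Counter
from itertools import combinations
from math import sqrt


def kendall_tau_b(x, y):
    """Kendall's tau-b: (C - D) / sqrt((n0 - n1) * (n0 - n2)).

    C and D are the numbers of concordant and discordant pairs, n0 is
    the number of all pairs, and n1 and n2 are the numbers of pairs
    tied on x and on y, respectively.
    """
    if len(x) != len(y):
        raise ValueError("x and y must have the same length")
    concordant = discordant = 0
    for (x1, y1), (x2, y2) in combinations(zip(x, y), 2):
        sign = (x1 - x2) * (y1 - y2)
        if sign > 0:
            concordant += 1
        elif sign < 0:
            discordant += 1
    n = len(x)
    n0 = n * (n - 1) // 2
    ties_x = sum(t * (t - 1) // 2 for t in Counter(x).values())
    ties_y = sum(t * (t - 1) // 2 for t in Counter(y).values())
    denominator = sqrt((n0 - ties_x) * (n0 - ties_y))
    if denominator == 0:
        raise ValueError("tau-b is undefined when a variable is constant")
    return (concordant - discordant) / denominator
```

Because only the ordering of pairs enters the computation, a single extreme rating cannot change the result by more than the pairs it participates in. The pairwise loop is quadratic in the sample size, so this form is meant for reading rather than for a sample of thousands of respondents.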

In order to tackle the problems of international comparability of questionnaire measures and to unravel the latent traits underlying the response patterns in measured items, a number of methodological approaches (mainly survey design principles) and statistical methods have been proposed, such as the popular confirmatory factor analysis (Avvisati et al., 2019a). Since our interest lies in identifying potential subgroups of graduates with similar response patterns, we opted for exploratory latent class analysis (LCA). Latent class analysis applies a person-centered approach, which means that it attempts to identify unobserved subgroups of individuals characterized by particular combinations of values of variables (in our case, skills), in contrast with more common variable-centered approaches, which try to find similarities between variables. Latent class analysis is a mixture model which categorizes all units into disjoint and exhaustive sub-populations (classes). All classes are characterized by the response probabilities for the categories of the observed variables, and each respondent is assigned to one of the classes based on his or her response pattern (Eid in Van de Vijver et al., 2019: 71). This technique is appropriate because the observed (manifest) variables are measured on an ordinal level and the latent variables are expected to be categorical. The analyses are undertaken with an exploratory approach: we state no explicit hypotheses about the number, size, or meaning of the classes. Results are interpreted a posteriori.
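How a respondent is assigned to a class can be sketched with Bayes’ rule, assuming the usual local independence of items within a class. The names `posterior_class_probs` and `modal_class` and the parameter layout are hypothetical and chosen for readability; they do not mirror the interface of poLCA or glca.

```python
def posterior_class_probs(response, class_shares, item_probs):
    """Posterior probability of each latent class for one respondent.

    response      -- list of 0-based category indices, one per item
    class_shares  -- list with the prior share of each class
    item_probs    -- item_probs[c][j][k] is the probability of choosing
                     category k on item j given membership in class c
    """
    joint = []
    for share, class_probs in zip(class_shares, item_probs):
        likelihood = share
        for item, category in enumerate(response):
            # Local independence: item probabilities multiply within a class.
            likelihood *= class_probs[item][category]
        joint.append(likelihood)
    total = sum(joint)
    return [value / total for value in joint]


def modal_class(posterior):
    """Index of the class with the highest posterior probability."""
    return max(range(len(posterior)), key=posterior.__getitem__)
```

Modal assignment, as sketched here, places each respondent in exactly one class, which matches the description of disjoint and exhaustive sub-populations.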

Furthermore, we use a multigroup extension of LCA in which we divide the sample into a priori known subgroups, in our case, eight countries. By employing this division, it is possible to assess whether the countries differ in the number of latent classes and whether these classes have the same meaning and size in different countries. We follow the “bottom-up” approach as described in Eid in Van de Vijver et al. (2019): first, an LCA is conducted separately for each participating country with the appropriate number of classes chosen based on standard information criteria, the Akaike Information Criterion (AIC) and the Bayesian Information Criterion (BIC), with the latter being generally considered superior for larger data sets because it takes sample size into account (Kankaras et al., 2010: 375). An equal number of classes in each country would suggest potential validity of measurement invariance. However, a different number of classes does not necessarily eliminate the possibility of measurement invariance, as LCA could accommodate this situation by specifying a model with a higher number of classes, some of them having proportions of 0 (ibid.: 373–374). After interpreting the meaning of classes in these individual analyses, the sample is pooled and ‘[i]f the number of classes found in the first step does not differ across countries, the assumption of full measurement invariance is tested by comparing the fit of the model without measurement invariance (i.e. allowing observed responses to reflect both class and country membership) and the fit of the model with measurement invariance.’ (Avvisati et al., 2019b: 6).
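The two information criteria follow directly from a model’s maximized log-likelihood and its number of free parameters. The sketch below assumes an unrestricted LCA in which every item has the same number of response categories; the function names are hypothetical.

```python
from math import log


def lca_free_parameters(n_classes: int, n_items: int, n_categories: int) -> int:
    """Free parameters of an unrestricted latent class model:
    (classes - 1) class shares plus, for every class and item,
    (categories - 1) response probabilities."""
    return (n_classes - 1) + n_classes * n_items * (n_categories - 1)


def aic(loglik: float, n_params: int) -> float:
    """Akaike Information Criterion; lower values indicate better fit."""
    return -2.0 * loglik + 2.0 * n_params


def bic(loglik: float, n_params: int, n_obs: int) -> float:
    """Bayesian Information Criterion; the penalty grows with log(n_obs)."""
    return -2.0 * loglik + n_params * log(n_obs)
```

With nine items on a five-point scale, a three-class model has 110 free parameters and a four-class model 147 under these assumptions. Because the BIC penalty per parameter is log(n) rather than 2, it exceeds the AIC penalty once a sample has eight or more respondents, so BIC tends to favor solutions with fewer classes in large samples.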

Thus, LCA on the pooled sample is carried out in three different forms, that is, simple LCA without any explicit grouping and multigroup LCA with and without holding the assumption of measurement invariance. The entire described procedure is performed twice—first, using the original assessment of own level of nine skills; then, the multigroup LCA is applied to the calculated skill surplus battery.

All LCA models are estimated 10 times, each time with a different set of starting values for the estimation algorithm. This increases the chance of finding the global rather than just a local maximum of the log-likelihood function (Linzer and Lewis, 2011: 12). Each estimation is allowed a maximum of 3000 iterations of the algorithm. Classes are sorted by their population shares.
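The logic of repeated estimation can be sketched with a small expectation-maximization routine written from scratch. This is a didactic stand-in and not the poLCA or glca implementation; `e_step`, `fit_once`, and `fit_lca` are hypothetical names, and the convergence tolerance is an assumption.

```python
import math
import random


def e_step(data, shares, probs):
    """Posterior class probabilities per respondent and the log-likelihood."""
    posteriors, loglik = [], 0.0
    for row in data:
        joint = [
            share * math.prod(class_probs[j][k] for j, k in enumerate(row))
            for share, class_probs in zip(shares, probs)
        ]
        total = sum(joint)
        loglik += math.log(total)
        posteriors.append([value / total for value in joint])
    return posteriors, loglik


def fit_once(data, n_classes, n_categories, rng, max_iter=3000, tol=1e-8):
    """One EM run from random starting values; returns (loglik, shares, probs)."""
    n_items = len(data[0])
    shares = [1.0 / n_classes] * n_classes
    probs = []
    for _ in range(n_classes):
        class_probs = []
        for _ in range(n_items):
            raw = [rng.random() + 0.1 for _ in range(n_categories)]
            class_probs.append([r / sum(raw) for r in raw])
        probs.append(class_probs)
    posteriors, loglik = e_step(data, shares, probs)
    for _ in range(max_iter):
        # M-step: re-estimate shares and response probabilities.
        for c in range(n_classes):
            weight = sum(p[c] for p in posteriors)
            shares[c] = weight / len(data)
            for j in range(n_items):
                counts = [0.0] * n_categories
                for row, p in zip(data, posteriors):
                    counts[row[j]] += p[c]
                probs[c][j] = [count / weight for count in counts]
        posteriors, new_loglik = e_step(data, shares, probs)
        converged = new_loglik - loglik < tol
        loglik = new_loglik
        if converged:
            break
    return loglik, shares, probs


def fit_lca(data, n_classes, n_categories, n_starts=10, seed=0):
    """Keep the run with the highest log-likelihood across random starts."""
    rng = random.Random(seed)
    runs = [fit_once(data, n_classes, n_categories, rng) for _ in range(n_starts)]
    return max(runs, key=lambda run: run[0])
```

Each run can stop at a different local maximum, so keeping the best of several runs makes a poor solution less likely without guaranteeing the global maximum.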

All statistical computations and graphic outputs were produced using the R language and environment (R Core Team, 2021) and the packages poLCA (Linzer and Lewis, 2011) and glca (Kim and Chung, 2020a).

Analysis

Different skills may vary in their contribution to an individual’s overall impression that they lack the ability to properly execute the tasks they are given or that their skills are not being utilized to their full potential. In the whole dataset, 9% of respondents declare that their current work position demands more knowledge and (unspecified) skills than they can currently offer to a very high extent; another 21% chose the second most extreme option.

Relationship between declared overall deficit and calculated surplus/deficit.

Susceptibility of Likert-style items to response style bias necessarily results in amplification of inter-item associations, especially when the assessment is skewed towards extreme values. In the case of own skill assessment, measuring the strength of association of all pairs of skills (9 × 8/2 = 36 combinations) on the pooled sample yields an average Kendall’s tau-b of 0.32. Individually, the strongest relationships were found when pairing problem solving with learning (0.54) and planning (0.53), and between communication and team-working skills (0.51).

When the same process of inter-skill association measurement is applied to the surplus/deficit battery, the average value drops to 0.17. This is not surprising because a large proportion of graduates are content with the skill requirements at their work and declare a complete skill match, lowering the variance of the skill surplus/deficit battery in comparison to the own skill battery, which in turn reduces the strength of the analyzed relationships. However, the relative standing of these associations remains quite similar; again, the strongest relationships are found between problem solving and learning (0.24), problem solving and planning (0.30), and learning and planning (0.29).

Latent class analyses of own and required skills

In order to determine the solution for each country that most adequately summarizes the response patterns of both own and required skills, we construct a series of individual LCA models with the number of classes increasing from two to seven. The upper limit is chosen deliberately to rule out overly convoluted solutions with little added explanatory value.

Although there are no formulated hypotheses about the class properties, we may still state one general expectation: the abovementioned high levels of inter-skill associations indicate that most classes might be characterized by relatively homogeneous evaluations across all skills.

Individual LCAs of own skills—model comparison.

Individual LCAs of skills required at work—model comparison.

Although not decisive for the model selection, additional diagnostic criteria are included. Almost all models are above or near an entropy value of 0.8, which is generally considered an acceptable value for a good distinction between classes (Weller et al., 2020). Models for the Czech Republic contain extremely small classes (2% and 3% of cases, respectively); however, these classes are conceptually sound (as discussed below) and similar classes were always present in other solutions as well.
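The entropy diagnostic can be sketched as follows, assuming it is the commonly used relative (normalized) entropy computed from posterior class probabilities; whether the software used here reports exactly this variant is an assumption. The function name `relative_entropy` is hypothetical.

```python
from math import log


def relative_entropy(posteriors):
    """Relative entropy 1 - sum(-p * ln p) / (N * ln C).

    posteriors -- one list of class membership probabilities per
                  respondent (N respondents, C classes)
    Values near 1 mean that respondents are assigned to classes with
    little ambiguity; values near 0 mean the classes are hardly
    distinguishable.
    """
    n_obs = len(posteriors)
    n_classes = len(posteriors[0])
    if n_classes < 2:
        raise ValueError("entropy needs at least two classes")
    uncertainty = 0.0
    for row in posteriors:
        for p in row:
            if p > 0.0:  # the limit of p * ln p as p approaches 0 is 0
                uncertainty -= p * log(p)
    return 1.0 - uncertainty / (n_obs * log(n_classes))
```

Perfectly separated posteriors such as `[[1, 0], [0, 1]]` give a value of 1, while completely uninformative posteriors such as `[[0.5, 0.5]]` give 0, which puts the 0.8 threshold into perspective.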

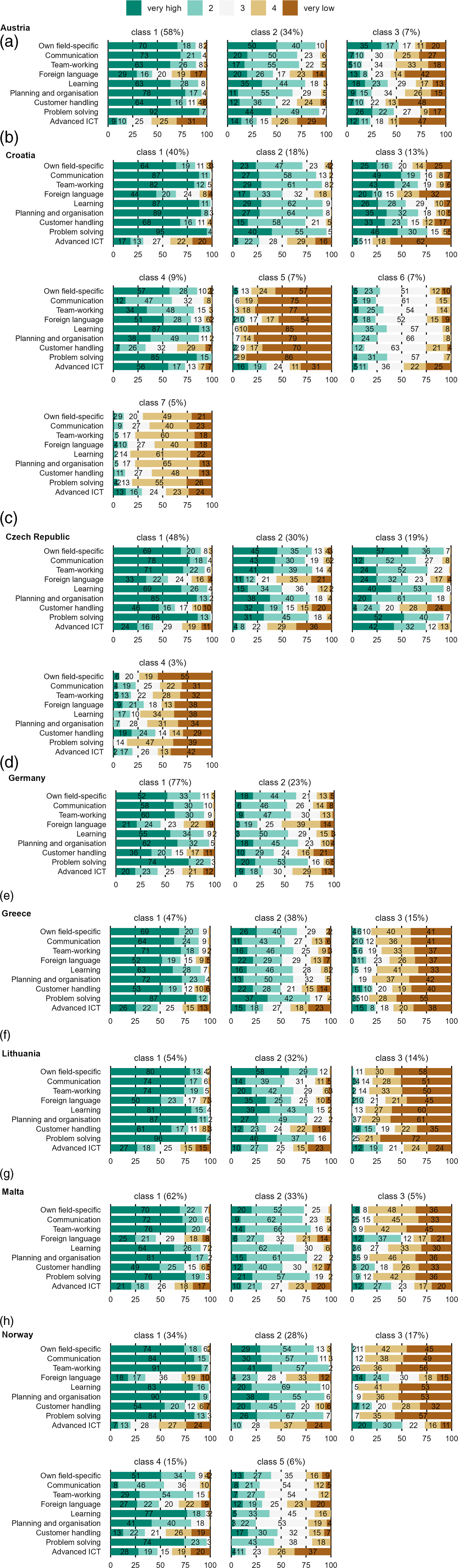

In the case of LCAs for own skills, the differing number of classes across countries is a first important indicator that does not support the assumption of measurement invariance. Setting the variety of solutions aside, the resulting models share certain similarities as shown in Figure 1. Most notably, in the vast majority of cases, classes are delimited by a common modal assessment value (1–5) across the skills with the exception of foreign language and ICT skills. This large inter-skill homogeneity is often found on either side of the five-point scale; there are very few if any graduates showing signs of middle response style (tendency to use middle response categories).

LCA: conditional probabilities of own skill assessment.

Most countries contain a class of graduates who, to a varying degree, consider themselves universally or almost universally competent in almost all domains, with the exception of foreign languages and ICT, although these are much less articulated in countries with three-class solutions. High scoring classes also vary greatly in size but reach as much as 51% in the case of Austria. Croatia and Malta contain non-negligible portions (20% and 22%, respectively) of extremely confident graduates—conditional probabilities of choosing the “very high” option for most skills often exceed 90%.

Another recognizable type of class consists of “universal pessimists” who tend to rate themselves rather poorly in all respects. This type always constitutes a small minority and is not present in its clearest form in all countries: Austria lacks this class completely, and Germany and the Czech Republic have heterogeneous classes leaning towards poor assessment with a considerable proportion of middle-scale responses. At the same time, only a few respondents cluster their responses overwhelmingly around the middle of the scale; such a class is found in Croatia and, to a lesser extent, in Greece.

In general, class distinctions of own skills do not seem to be predominantly driven by specialized combinations of skills (i.e., substantive content of the assessed skills) but rather by respondents’ overall approach to the measurement. We do not find groups of graduates with, for example, exceptionally developed interpersonal skills, highly creative people with poor planning skills, etc. If these do exist, their presence is overridden by a more predominant pattern of inter-skill agreement.

Moving on to the case of required skills (Figure 2), the most fitting models are similar in the number of classes to the proposed solutions of own skill LCAs. For three countries (Austria, Croatia, and Norway), the number of classes is identical to their respective own skill solutions. For the Czech Republic, the number is increased from three to four. For all remaining countries, the most fitting models contain one fewer class than the respective solutions of own skill models.

LCA: conditional probabilities of required skill assessment.

Similarly, the structures of required skill classes in individual countries bear a striking resemblance to their counterparts. Most countries contain a large class of respondents who perceive their work as extremely demanding in all respects except foreign languages and advanced ICT skills. This type is extremely prominent in Croatia and Malta where it accounts for approximately one fifth of all graduates. The previously recognized trend of language and advanced ICT skills being scored across the whole scale persists.

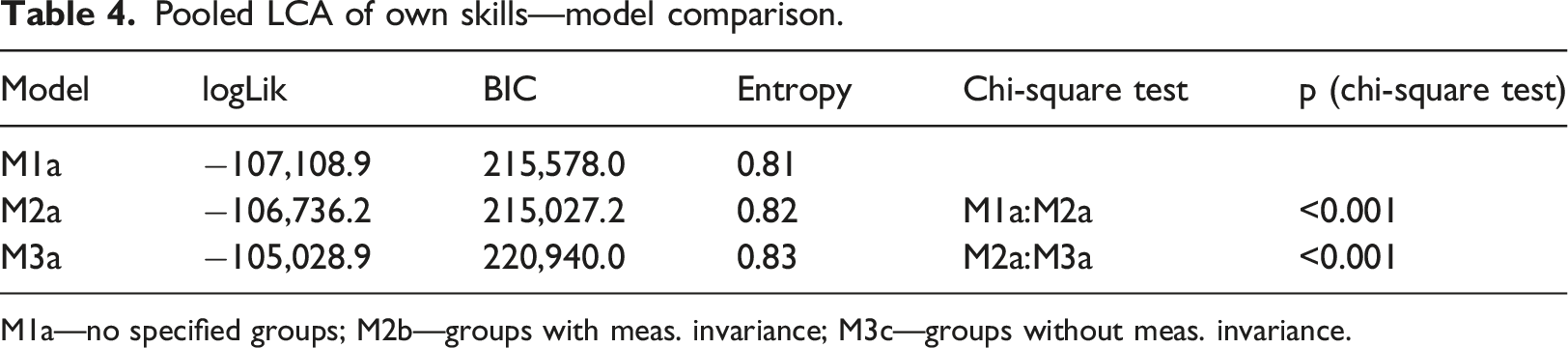

The issue of differential conception of scales across countries is further shown by pooling the data and creating basic LCA models with three classes (three and four are the most common numbers of classes in individual countries) without any explicit grouping (M1a for own skills, M1b for required skills). To account for the multilevel data structure, we then incorporate higher-level units (countries) into the models. When measurement invariance is assumed (M2a and M2b), item-response probabilities are constrained to be equal across groups (Kim and Chung, 2020b). The third pair of models (M3a and M3b) takes countries into account without presuming measurement invariance. Since model M1 is nested in model M2 and model M2 is nested in model M3, the comparison is conducted using a chi-square test.
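A comparison of two nested models can be sketched as a likelihood-ratio test, assuming that the chi-square test mentioned above is based on the difference in deviance between the restricted and the more general model. The helper names and the hand-written chi-square tail function are our own and use only the Python standard library.

```python
from math import erfc, exp, lgamma, log, sqrt


def chi2_sf(x: float, df: int) -> float:
    """Upper tail probability of the chi-square distribution.

    Uses Q(a + 1, z) = Q(a, z) + z**a * exp(-z) / Gamma(a + 1) with
    a = df / 2 and z = x / 2, starting from the closed forms for
    df = 1 (erfc) and df = 2 (exponential).
    """
    if df < 1 or x < 0:
        raise ValueError("df must be >= 1 and x must be >= 0")
    if x == 0:
        return 1.0
    z = x / 2.0
    if df % 2 == 1:
        tail, a = erfc(sqrt(z)), 0.5
    else:
        tail, a = exp(-z), 1.0
    while a < df / 2.0:
        tail += exp(a * log(z) - z - lgamma(a + 1.0))
        a += 1.0
    return min(tail, 1.0)


def likelihood_ratio_test(loglik_restricted, k_restricted, loglik_general, k_general):
    """Return (statistic, degrees of freedom, p-value) for nested models.

    The restricted model is the one with fewer free parameters, for
    example a model that assumes measurement invariance.
    """
    statistic = 2.0 * (loglik_general - loglik_restricted)
    df = k_general - k_restricted
    return statistic, df, chi2_sf(max(statistic, 0.0), df)
```

A small p-value indicates that the additional parameters of the more general model improve the fit by more than chance alone would suggest, which in the present setting speaks against the restriction being tested.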

The model comparisons are presented in Tables 4 and 5. Results further demonstrate how respondents tend to approach their own competencies and the levels required at their work using the same set of internal criteria:

- For both batteries, the class prevalences vary significantly across countries (M1a compared to M2a, M1b compared to M2b).
- Measurement invariance does not hold (M2a compared to M3a, M2b compared to M3b).
- In all cases, the entropy levels between competing models are almost identical and sufficiently high for clear delineation of classes.

Pooled LCA of own skills: model comparison. M1a: no specified groups; M2a: groups with meas. invariance; M3a: groups without meas. invariance.

Pooled LCA of required skills: model comparison. M1b: no specified groups; M2b: groups with meas. invariance; M3b: groups without meas. invariance.

Latent class analyses of skill surplus/deficit

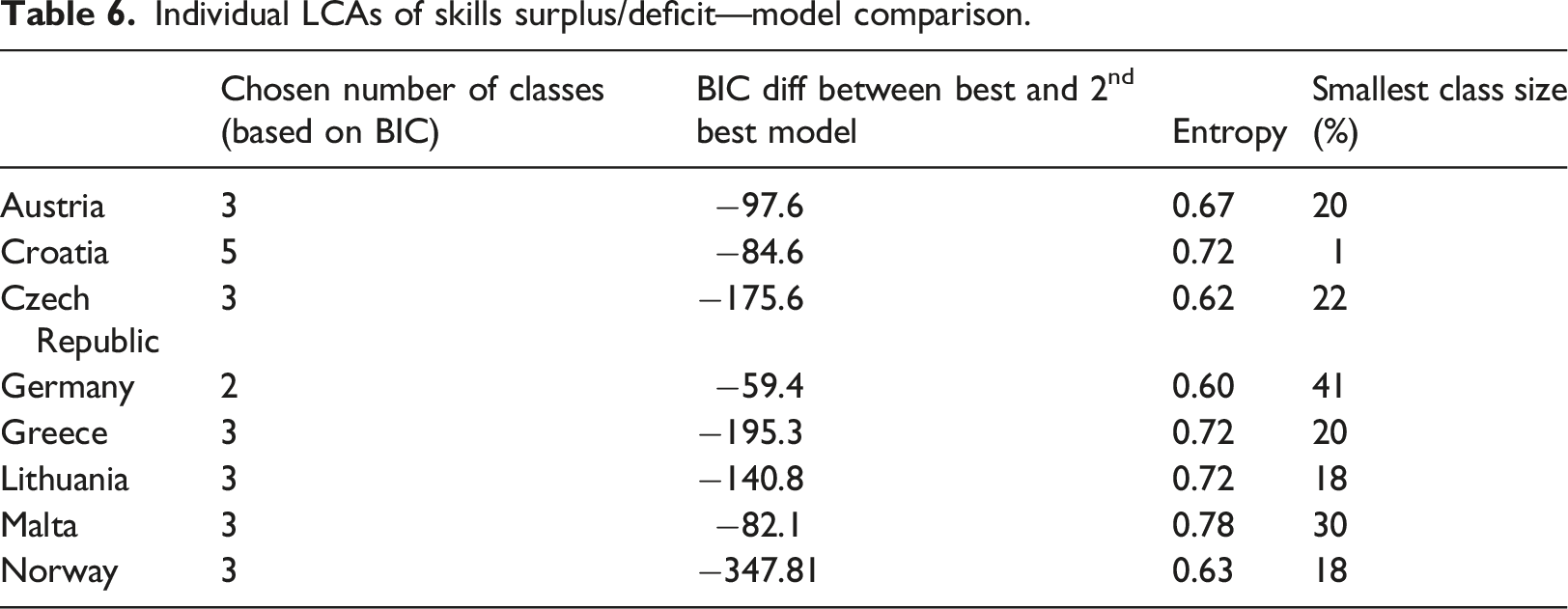

Individual LCAs of skills surplus/deficit—model comparison.

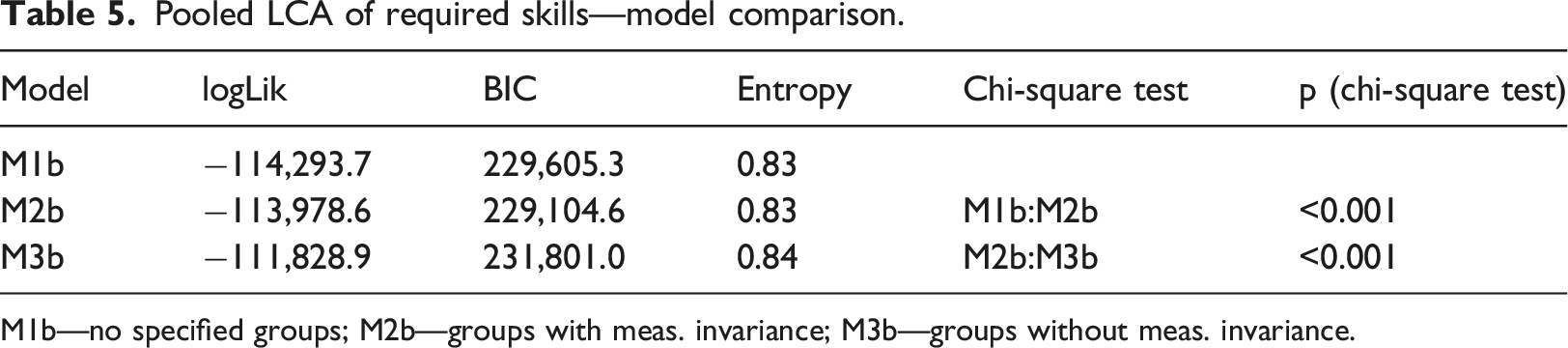

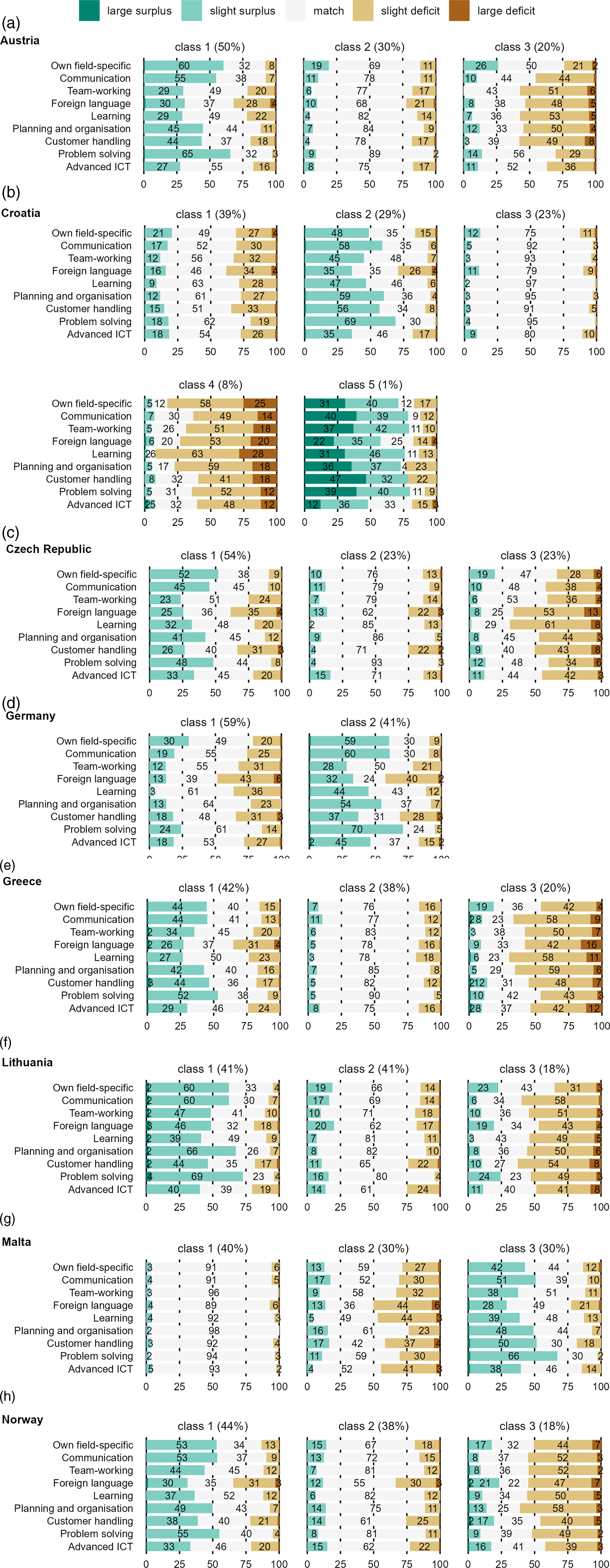

Class structures presented in Figure 3 show a decrease in class type variability compared to own skill and required skill assessment, most notably in three-class solutions. The first of the emerging types is a class of “fully matched” graduates who are generally content with all or almost all aspects of their work position requirements. This type is especially pronounced in Malta (with 40% of respondents) where almost all skills have more than 90% conditional probability of being perfectly matched.

LCA: Conditional probabilities of skill surplus/deficit assessment.

The second broad type of class is a mixed case: matched or slightly over-skilled graduates form most of the cases; however, the probability of a slight deficit is non-negligible. We find this type with varying degrees of prevalence in Austria (50%), Croatia (29%), the Czech Republic (54%), Germany (41%), Greece (42%), Lithuania (41%), Malta (30%), and Norway (44%).

The third type of class, comprising generally partially matched or slightly under-skilled graduates, is similar in both structure and prevalence across Austria (20%), the Czech Republic (23%), Greece (20%), Lithuania (18%), and Norway (18%).

Severe forms of skill mismatch are rare; particularly large surplus is almost non-existent. Foreign language skills and ICT skills do not stand out distinctly as their generally lower acquired and required levels partially even out.

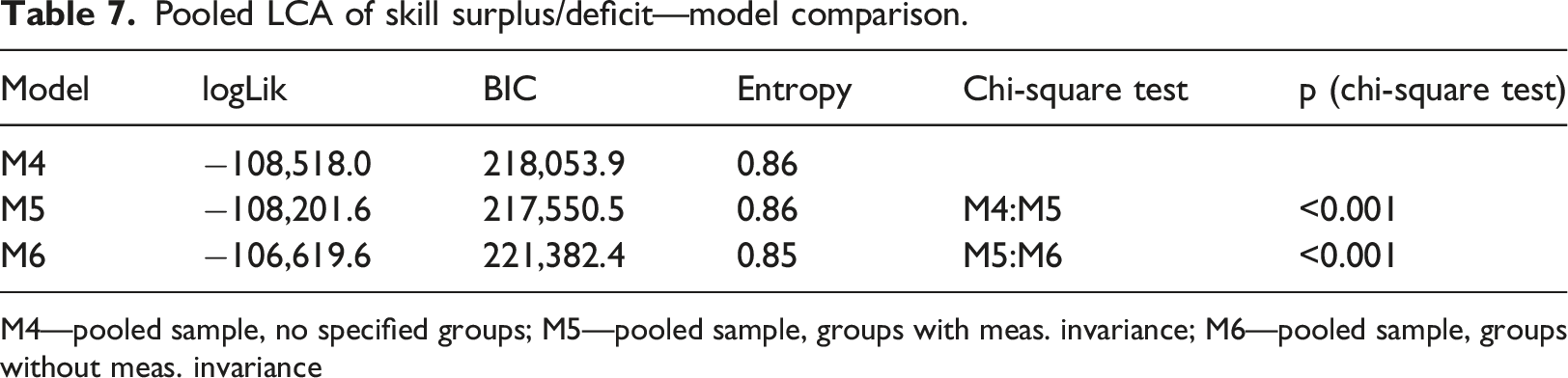

Pooled LCA of skill surplus/deficit—model comparison.

M4—pooled sample, no specified groups; M5—pooled sample, groups with meas. invariance; M6—pooled sample, groups without meas. invariance

The surplus/deficit battery thus offers an ambivalent solution. On the one hand, we see the intended reduction in the variability of country-specific response patterns. On the other hand, the resulting classes remain burdened by the overall straightlining tendencies of respondents. The widespread tendency for the assessment of one’s own skills to mirror the perceived difficulty of one’s work responsibilities results in clusters with little added information value, which are even more prominent after combining the scales than before.

Discussion

Our analysis of the skill scales yielded mixed results. Achieving formal measurement invariance is a notoriously difficult task. Although neither of the discussed scales reaches total invariance, calculating the skill surplus/deficit still at least results in lower inter-national heterogeneity regarding the number of classes (with three fourths of countries being partitioned into three classes) and intra-class structure. However, this can be to a large extent explained by the fact that there are almost no cases of large surplus or large deficit, which excludes a substantial number of possible response patterns. In other words, from a practical standpoint, comparing acquired and required skills effectively reduces the issue to a ternary question (whether there is skill deficit, match, or surplus), with less attention to the degree to which skills are matched.
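The ternary reduction described above can be sketched as follows. The sketch assumes that own and required skill levels are integer-coded on the same scale and that the surplus/deficit is their simple difference; the function names and the example ratings are hypothetical and are not survey data.

```python
from collections import Counter


def ternary_match(own: int, required: int) -> str:
    """Collapse an (own, required) rating pair into deficit, match, or surplus."""
    gap = own - required  # negative: deficit, zero: match, positive: surplus
    if gap < 0:
        return "deficit"
    if gap > 0:
        return "surplus"
    return "match"


def match_shares(pairs):
    """Share of each ternary category among (own, required) rating pairs."""
    counts = Counter(ternary_match(own, req) for own, req in pairs)
    total = sum(counts.values())
    if total == 0:
        raise ValueError("no rating pairs supplied")
    return {label: counts[label] / total
            for label in ("deficit", "match", "surplus")}


# Hypothetical ratings for one skill item (not survey data).
example_pairs = [(4, 4), (5, 4), (3, 4), (4, 4)]
print(match_shares(example_pairs))
```

Because the size of the gap is discarded, a respondent who is one scale point short and one who is three points short fall into the same category, which is the loss of information the paragraph above points to.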

Bearing in mind lower entropy of the individual skill surplus/deficit analyses (meaning more blurred distinctions), certain types of classes in these models, such as the “fully matched” class or the “slightly under-skilled” class, seem to be universally present, making them useable for future comparison. According to Eid et al. (2003: 207), this is one of the ways in which we could relax the assumptions (and thus facilitate comparability)—assuming that only certain classes are universal while others are country specific. The second way is to assume that some, but not all, items are invariant across countries (ibid.). Considering the large number of countries involved in our analysis, such inquiry is a topic for potential future research.

No single result of a latent class analysis in any given country can, by itself, answer the question of appropriateness of the current solution. For example, it is realistically conceivable that there is a non-negligible portion of higher education graduates who report very high levels of own skills simply because they actually do excel in most academic, vocational, and interpersonal domains. At the same time, distinguishing them from less attentive respondents with extreme response style is a difficult task. Similarly, while some workers and entrepreneurs with a higher education degree may be perfectly matched with every hard skill and soft skill requirement of their work, reaching as much as 40% of the population (in the case of Malta) might raise questions about the credibility of the results. It is only with gradually accumulating pieces of evidence (discrepancies between international rankings of countries based on performance tests of students and rankings of graduates based on self-assessment, extremely high levels of inter-skill correlations, low accordance between two measures of skill deficit, and to a certain extent varying numbers of latent classes across countries) that we see the importance of venturing into alternative measuring approaches. All these together further confirm the notion that has been known for well over half a century: that differences in Likert-style items primarily represent response sets, and only to a secondary degree actual differences in intensity (Peabody, 1962: 73; Dolnicar et al., 2011).

The present study also has substantive limits. As our efforts were targeted mainly towards demonstrating the existence and scope of (potential) inter-national differences in skill self-assessment, we did not inquire into causal explanations and did not examine peculiarities of individual countries in detail. Inclusion of all or even most of the factors responsible for these differences is beyond the scope of this paper. These might include language effects of varying intensity of the scale description, the level of cultural extraversion, collectivism or a country’s power distance (Harzing, 2006) as well as potential covariates including socio-demographic variables. Also, the global nature of tests for comparing models leaves us with little information as to which particular restrictions are responsible for rejecting the invariant models (van de Vijver, 2019: 93).

Since the possibility of comparing country results is one of the important reasons for conducting international surveys, we cannot stop at stating that care should be taken when interpreting the results. We should identify at least some ways to make the results of international comparisons more useful.

Here, we pay attention to those factors that are related to the focus of our article.

First, we discuss the design of the survey methodology: the way the survey instrument is prepared should reflect known biases that may occur, such as harmonization of the translation concerning the national context, but also the setting or specification of questions. Typical examples (which show up in our analyses) are language and ICT skills, where much higher numbers of respondents answer at the extreme ends of the response scale. Thus, it appears that for ICT skills in particular, when it is specified that advanced ICT skills include, for example, programming skills, then only a limited proportion of users may rate their skills as better than basic. It turns out that, according to the nature of the answers, respondents also tend to answer similarly when asked about language skills. In both cases it is, therefore, necessary to consider what is being asked and what is the distribution of these skills in the target population and what we actually want to learn from the answers before including these items in the questionnaire.

So, how do we deal with the factors we need to consider when designing a survey methodology? If we restrict ourselves to what we are concerned with in this paper, one of the main issues is self-assessment in the case of different skills in terms of understanding the content of the skill and the scale against which the respondent defines their response. One approach is to introduce a relative anchor to help better relativize respondents' statements. In the EUROGRADUATE survey, two sets of questions were used with this aim in mind: skills possessed and skills required at work.

Quite extensive experience exists in the US O*NET survey with a more precise definition of the scale on which self-assessment takes place (e.g., Rounds et al., 2018; Siekmann and Fowler, 2017; Handel, 2016). The respondent is assisted by brief statements at several levels of the scale, making their statement not entirely subjective in terms of understanding the content of the skill being assessed; the subjective assessment of one’s own level remains, of course. In designing the survey methodology, it is necessary to assess to what extent specifying the description of each skill level will help reduce subjectivity in determining the self-reported level. At the same time, it is necessary to find a tolerable threshold that does not yet cause a decrease in the respondents' willingness to answer.

Rating and ranking are two other approaches to reduce bias in international surveys that use Likert scales. It turns out that ranking the items in question compels the respondent to reflect better on the content and to express their own hierarchy for the items under consideration. In particular, ranking has proven to be an effective means of reducing the bias caused by response styles and language differences in international surveys that are intended for cross-country comparisons (Harzing et al., 2009). However, limitations then arise in the possibilities of using statistical tools in the subsequent analysis of the survey data.

Second, we look at the analytical phase. In analyses, it is necessary to be aware of the various dimensions of cultural differences that, already in the choice of analytical tools, can make comparisons between countries, or at least some of them, irrelevant in the interpretation of results. An example might be the use of statements at the extreme poles of the intervals, which is not appropriate in international surveys because there is a tendency in some countries to use statements at the extreme poles of the scale more and in others less (Harzing, 2006). When analyzing the EUROGRADUATE data, this is most noticeable when the results of the Norwegian respondents are included. There is a tendency for them to judge their skill level rather low (which does not correspond to the measurements of actual skill level). Thus, to use only the maximum (or minimum) levels in the analysis in this particular case means that an already systematic bias will be further amplified.

When we look at potential solutions for the factors that occur in the analytical phase, and specifically self-assessment in the case of acquired skills, caution needs to be exercised in the choice of analytical approaches. Consideration should be given to how the method of analysis, or the construction of the analytical model, may affect the presentation of results from a survey that includes self-assessment, as the response styles characteristic of some countries may significantly affect the distribution of results.

The difficulty of achieving equivalence in practice does not necessarily mean that we should abandon the possibility of cross-country comparisons. As Davidov (2014) shows, there are three ways to assess the degree of equivalence achieved. First, he suggests finding subsets of countries or concepts where measurement equivalence applies and then comparing these sub-groups. Second, there is a suggestion to determine how severe the violation of measurement equivalence is. Based on that assessment, we decide whether the comparison between countries can still be meaningful and, in case of doubt, drop non-invariant items. The third is the recommendation to try to explain the individual, social, or historical sources of measurement nonequivalence.

In our case, this could be applied to assess whether to keep the Croatia and Germany sets in the analysis or to create corresponding country subsets for this particular analysis in the case of skills. An assessment of social or behavioral aspects could then consider how to deal in the analyses with the different ways of responding of the Norwegian, Maltese, or Greek respondents. Although the dataset used covers a pilot number of eight countries, these assessments will be much more relevant when the EUROGRADUATE survey in its next round covers a larger number of countries.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Author biographies

Appendix

Source: own calculations; Meng, Wessling, Mühleck, and Unger (2020). Further details are available in Design and implementation of a pilot European graduate survey (ibid.).

| | Austria | Croatia | Czech Republic | Germany | Greece | Lithuania | Malta | Norway | Total |
|---|---|---|---|---|---|---|---|---|---|
| Sex (%) | | | | | | | | | |
| Female | 37.3 | 34.6 | 41.2 | 47.3 | 44.8 | 38.5 | 43.6 | 37.1 | 38.7 |
| Male | 62.7 | 65.4 | 58.7 | 52.7 | 55.1 | 61.5 | 56.4 | 62.9 | 61.3 |
| Cohort (%) | | | | | | | | | |
| 2012–2013 | 46.0 | 24.5 | 56.1 | 26.3 | 42.4 | 37.0 | 53.3 | 53.8 | 41.2 |
| 2016–2017 | 54.0 | 75.5 | 43.9 | 73.7 | 57.6 | 63.0 | 46.7 | 46.2 | 58.8 |
| Sampling method | Stratified random sample | Complete enumeration | Stratified random sample | Stratified cluster random sample | Complete enumeration | Complete enumeration | Complete enumeration | Stratified random sample | n/a |
| n | 1,288 | 2,873 | 1,520 | 577 | 750 | 964 | 670 | 1,800 | 10,442 |