Abstract

This paper investigates the fitness-for-purpose and soundness of bibliometric parameters for measuring and elucidating the performance of researchers in the field of education sciences in Germany and other European countries for the purpose of benchmarking. In order to take into account the specificities of the publication pattern of researchers in education sciences, bibliometric data are used as benchmarks. Specifically, the analysis is based on the data of Scopus and SCImago and exploits the contributions of educational researchers from Germany, Spain, the Netherlands, and Scandinavian countries to leading international journals within the period 2010 to 2015–2016. The results demonstrate that the visibility of German education in international journals is not as bad as is often assumed. Nevertheless, substantial shortcomings are revealed. After discussing some factors responsible for these shortcomings, the article concludes with considerations on possible consequences to be drawn from the status quo of international visibility of German educational science.

Introduction

In the area of education policy and analysis, the proverb “good decisions are informed decisions” commonly refers to evidence-based decision making as well as to far-reaching expectations concerning the quality of educational research (cf. Keiner, 2005). A couple of years ago, a former Dutch minister for education expressed the mantra of neoliberal educational policy with the words: “The high social importance of education, in combination with limited resources, demands that policy and practice are based on the best possible insights into ‘what works’” (Van der Hoeven, 2007: 151). Melting budgets for education, the demand for accountability of public budgets, and general aspirations to make education more efficient nurture enduring interests of politicians in benchmarking, the practice of assessing the quality of educational products, programs, or strategies and comparing them with peers working in the same fields. Since the 1990s, benchmarking has become a fad in education policy aimed at fabricating and securing the quality of educational systems at all levels (cf. Ozga et al., 2011; Resnick et al., 1995; Scheerens, 2004; Soguel and Jaccard, 2008). In consequence, the idea of benchmarking the quality of education has advanced to a cornerstone of the agenda of influential stakeholders, such as the European Union (2002) or the OECD (Scheerens and Hendriks, 2004).

From the perspective of strategic planning, as outlined, for example, by Kaufman (1988), benchmarking corresponds with needs assessment, which is focused on examining the expectation gaps between the current (“what is”) and desired (“what should be”) states of an educational system or a particular sector of it. Accordingly, the success and productivity of an educational system are seen as depending on the attainment of aspired outputs and outcomes (Scheerens, 2004). Thus, the main objectives of benchmarking and needs assessment are (a) to find out whether and which improvements are necessary to meet changing requirements; (b) to analyze how others achieve their performance levels; and (c) to use this information to improve one’s own performance. The end result of benchmarking consists of an inventory of prioritized objectives and quality standards. As a component of strategic planning, benchmarking tries to capture the opportunities for improvement in a given educational context and its constraints. Needs assessment usually addresses different clients: individuals, organizations, and society as a whole (cf. Kaufman et al., 1996). Hence, trade-offs may result from the clients’ divergent goal setting. In accordance with the flavor of the neoliberal zeitgeist, the idea of benchmarking actually addresses not only the quality of schooling but also educational research, which is requested to move toward a more useful, more influential, and better funded enterprise (cf. Burkhardt and Schoenfeld, 2003). Consequently, universities and academic institutions today use the number of publications and citations to measure the academic competence of their faculty. Thus, the phrase “publish or perish,” initially coined in the 1930s, is today a demanding challenge for most postgraduates (cf. Rawat and Meena, 2014).

Benchmarking from the “publish or perish” perspective

Widely accepted quality criteria of publications can serve as useful tools for providing comprehensible scientific information services (Thomson Reuters, 2008), but they can also produce strong biases in the politics of funding and staffing (Kornhaber et al., 2016). Australian universities, for example, receive extra funding based on their academic publication rates, and academic promotion is difficult without a good publication record (McGrail et al., 2006). Comparably, in the Netherlands academic promotion is predominantly linked to productivity measured by citation indexes and internationally refereed publications (e.g. Tijssen et al., 2002; Van den Brink, 2010). In the eyes of Van Raan (2005), this is a “fatal attraction,” because it is then not only funding that becomes increasingly dependent on the visibility of research in international journals but also staffing. At present, one can observe a global tendency across most academic disciplines to consider publications in “top-tier” journals as preconditions for hiring junior and senior scientists (e.g. Baneyx, 2008; Hasselback et al., 2012; McGrail et al., 2006; Müller and De Rijcke, 2017; Rawat and Meena, 2014; Schmidt-Hertha and Tippelt, 2014; Van Leeuwen et al., 2003). In consequence, postgraduates are experiencing high pressure to publish in scholarly journals earlier than at any previous time, frequent publication has become a powerful method to demonstrate academic achievement to peers, and successful publication of research in a top-tier journal brings attention to scholars and their institutions. Thus, the demand for scientific productivity is deeply rooted in a general agreement within scientific communities that quality standards of publications are immensely important to the scientific endeavor. As a consequence, the trend to publish in “top-tier journals” is also observable in the area of educational research (e.g. Fischman et al., 2018; Johnson et al., 2016; Schneider, 2009), and boosting the quality of publications has become an important goal of educational research in Germany and other European countries (cf. Backes-Gellner and Schlinghoff, 2010; Gogolin et al., 2014; Streitwieser et al., 2015).

When the first bibliometric analyses were published in the late 1990s, it became evident that education research in some countries in Europe (e.g. Germany, Scandinavia, France, Italy, Spain) was locked in its national language and peculiarities, and thus did not present an internationally recognized profile (cf. Burkhardt and Schoenfeld, 2003; Ingwersen, 2000). “As a reaction, education research became forced to internationalize, to meet the given theoretical and methodological standards of psychology and social sciences” (Knaupp et al., 2014: 84). Consequently, a substantial rethinking concerning the practices of publishing education research has gained ground in most European countries since the beginning of the 21st century.

The reverse side is the observation that scholars often are not comfortable about being put on performance scales and may even experience the publication pressure as a health threat (e.g. Tijdink et al., 2013). Aside from these personal aspects, there is also professional concern that the “publish or perish” pressure involves the potential to undermine the trustworthiness of publications due to the predisposition of top-tier journals towards positive findings of mainstream research (cf. Grimes et al., 2018; Young et al., 2008). According to Fanelli’s (2010) analysis of US states’ data (including a total of 1316 publications), papers across all disciplines are less likely to be published and to be cited if they do not report positive results. Competitive academic environments increase not only scientists’ productivity but also biases. As a result of a comprehensive review, Fanelli (2010) distinguishes different procedures to manage “negative” results by turning them into positive results. Amongst other ways, this can be done by “hypothesizing after the results are known” (Kerr, 1998), by selecting the results to be published, by tweaking data or analyses to “improve” the outcome, or by willingly and consciously falsifying them (cf. De Vries et al., 2016). Although Fanelli’s (2009) meta-analysis indicated that only a very small number of researchers (2%) in medicine and social sciences admitted falsification or fabrication of research data, Hartgerink et al. (2016) consider this result as the tip of the iceberg. When Bakker and Wicherts (2011) analyzed about 280 articles published in the psychological literature, they found that around 18% of statistical results were incorrectly reported. John et al. (2012) conducted an anonymous survey of 2000 psychologists and found questionable research practices which reduce the interpretability of the reported statistically claimed effects. Although the reported studies indicate that fraud is rare, the line between explicitly fraudulent behavior and merely “questionable” research practices is perilously thin. Inevitably, incorrect application of statistical tests, lack of transparency and disclosure about decisions, or exclusion of outliers undermine the confidence in science (cf. Simmons et al., 2011).

To uncover academic fraud and misconduct, scientific journals use a number of strategies. For instance, they regularly use iThenticate for detecting plagiarism, and sophisticated statistical techniques can be used to detect fabricated numerical data (e.g. Hartgerink et al., 2016), but first and foremost, peer reviews are the core of academic quality control. Although the peer-review process is not secure from biases (cf. Lee et al., 2013; Rigby et al., 2018; Stroebe et al., 2012), many scientific journals have policies in place to deal with fraud (Resnik et al., 2009), and new methods of peer review in scholarly journals are continuously being developed (Björk and Hedlund, 2015). Notably, top-tier journals are less susceptible to authors’ careless and fraudulent conduct than journals with a lower reputation (Fanelli, 2009; Grimes et al., 2018). This observation clearly intensifies the claim of academics to publish in top-tier journals as listed, for example, by the Web of Science or Scopus. For the individual researcher, citation analysis has important implications—for better or for worse: Citation is considered in grants, hiring, and tenure decisions, and for many reasons, researchers may want to demonstrate the impact of their work, and citation analysis is one widely accepted way of doing so.

Measures of scientific productivity

Measuring the productivity of scientific disciplines is the task of the two closely related approaches of bibliometrics and scientometrics, which commonly use the method of citation analysis to examine the impact of a published document on referring documents. Specifically, the number of citations to articles published in a journal determines the impact factor of the journal, and the number of citations to an article over time provides a measure of the productivity of authors (cf. Lowry et al., 2004).

The idea of a citation index for sciences was introduced by Garfield (1955), who then realized his vision a few years later with the production of the Science Citation Index® (Garfield, 1961), which led to the ISI Web of Knowledge (Garfield et al., 2000). At present, there are three main sources of bibliometric data: Web of Science®, Scopus®, and Google Scholar. They allow users to search forward in time from a particular article to more recent publications that cite this article.

The Web of Science® corresponds with the ISI Web of Knowledge and was provided by Thomson Reuters from 1992 to 2016, when Clarivate Analytics took over the Intellectual Property and Science part, including the Web of Science. Its databases cover the sciences, social sciences, and humanities, and have recently expanded to also include conference proceedings in addition to journal articles. The annual Journal Citation Reports specify the impact factor of a particular journal X by counting the frequency of cited documents over a period of two previous years divided by the number of citable articles (i.e. research articles and reviews) for the two-year period. The journal impact factor (JIF) is a very popular measure of scientific productivity because it is easy to obtain and offers convenience and a sense of objectivity. However, the citation analysis does not include the full text of the article and the references.
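The two-year calculation described above can be illustrated with a short worked example. This is a minimal sketch rather than the procedure of the Journal Citation Reports themselves: the function name, its parameters, and the figures for the hypothetical journal X are assumptions chosen for illustration only.

```python
def journal_impact_factor(citations_to_prev_two_years: int,
                          citable_items_prev_two_years: int) -> float:
    """Two-year impact factor for a given year.

    citations_to_prev_two_years: citations received in that year to items
        the journal published in the two preceding years.
    citable_items_prev_two_years: research articles and reviews the journal
        published in those two preceding years.
    """
    if citable_items_prev_two_years <= 0:
        raise ValueError("the journal needs at least one citable item")
    return citations_to_prev_two_years / citable_items_prev_two_years


# Hypothetical journal X (invented numbers): in 2015 it received 300 citations
# to items published in 2013 and 2014, and it published 120 citable items
# in 2013 and 2014 combined.
jif_2015 = journal_impact_factor(300, 120)
print(jif_2015)  # 2.5
```

Because the measure is a simple ratio, a handful of highly cited papers can raise it considerably, which is one reason why it says little about individual articles.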

In contrast, Scopus® (http://www.scopus.com/), supplied by Elsevier, exploits the text of the citations, including abstracts and references (cf. Guz and Rushchitsky, 2009). According to Elsevier, Scopus is the largest citation database of peer-reviewed literature and quality Web sources. Based on the citation network of Scopus®, SCImago (Scimagojr.com) has developed the Journal Rank Indicator (SJR indicator) as a new approach to measure scholarly journals’ scientific prestige (cf. González-Pereira et al., 2010). The SJR indicator is a measure of the scientific influence of scholarly journals that accounts for both the number of citations received by a journal and the “prestige” of the journals that the citations come from. Specifically, the SJR indicator is based on a variant of eigenvector centrality applied to complex networks. Measures of eigenvector centrality capture the impact of a node within a network on the basis of its relationships to other nodes in accordance with the principle that the rank of a node increases depending on the degree of relationships to higher-ordered nodes (cf. González-Pereira et al., 2010). The outcome is called the scientific “prestige” of a journal. SCImago also offers a country rank, which permits comparisons between particular countries with regard to the total number of publications, citations per document, and the importance or prestige of the journals. Within the area of social sciences, SCImago encompasses almost 1000 journals explicitly dedicated to education.
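The principle behind eigenvector centrality can be sketched with a small power iteration over a toy citation matrix. This is a deliberately simplified illustration and not the SJR algorithm as published by González-Pereira et al. (2010), which includes further normalization steps; the three journals A, B, and C and their citation counts are invented for the example.

```python
def eigenvector_centrality(citations, max_iterations=1000, tolerance=1e-12):
    """Approximate eigenvector centrality by power iteration.

    citations[i][j] is the number of citations from journal i to journal j.
    A journal's score grows with the citations it receives, weighted by the
    current score of the citing journal. Scores are normalized to sum to 1.
    """
    n = len(citations)
    scores = [1.0 / n] * n
    for _ in range(max_iterations):
        # New score of j: citations received, weighted by the citing journal's score.
        updated = [sum(scores[i] * citations[i][j] for i in range(n))
                   for j in range(n)]
        total = sum(updated)
        if total == 0:
            raise ValueError("the citation matrix contains no citations")
        updated = [value / total for value in updated]
        if max(abs(a - b) for a, b in zip(updated, scores)) < tolerance:
            return updated
        scores = updated
    return scores


# Hypothetical citation counts between three journals (rows cite columns).
names = ["A", "B", "C"]
toy_citations = [
    [0, 3, 1],  # A cites B three times and C once
    [4, 0, 1],  # B cites A four times and C once
    [1, 1, 0],  # C cites A once and B once
]
prestige = eigenvector_centrality(toy_citations)
for name, score in sorted(zip(names, prestige), key=lambda p: p[1], reverse=True):
    print(name, round(score, 3))
```

In this sketch a citation from a high-scoring journal contributes more than a citation from a low-scoring one, which is the sense in which the indicator reflects “prestige” and not only citation counts.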

For a while, Scopus and the Web of Science were the market leaders, but since 2004 they have competed with Google Scholar, which is recommended especially by scholars from social sciences and humanities (e.g. Martín-Martín et al., 2017; Prins et al., 2016). This freely accessible Web search engine connects the top 20 Anglophone journals per subject area with the cited articles that contribute to the rank position. However, this emphasis on citation counts in the ranking algorithm might strengthen the “Matthew effect of accumulated advantage” insofar as highly cited documents of top ranks gain more citations while new publications hardly achieve top ranks and therefore get fewer citations (Beel and Gipp, 2009). These authors also point out that Google Scholar is vulnerable to spam (Beel and Gipp, 2010). Nevertheless, Google Scholar provides a valuable resource for bibliometric analysis and for using citations in the evaluation of research. The use of Google Scholar as a citation source may involve some problems, but it has repeatedly been reported that more citations are found using Google Scholar than using the Web of Science and Scopus, and also that there is only a limited overlap between the citations found through Google Scholar and those found using the Web of Science (Larsen and Von Ins, 2010). According to Mingers and Meyer (2017), Google Scholar has clear advantages over Web of Science and Scopus in terms of its coverage of the social sciences and humanities but has less reliable data and fewer bibliometric tools. A particular problem of Google Scholar, however, is its lack of normalization to reduce redundancy and improve data integrity. Thus, Jacsó (2010) comes to the conclusion that the metadata created by Google Scholar are substandard, neither reliable nor reproducible, and distort the indicators at the individual, corporate, and journal levels. Due to these drawbacks, Google Scholar metadata are not included in our analysis.

To sum up, there are three main sources of citations: specialized databases such as the Web of Science or Scopus, and Google Scholar, which searches the Web to find citations from different sources such as articles and book chapters. Each database has its advantages and limitations (cf. Reale et al., 2017). Several comparisons have shown that both the Web of Science and Scopus generally provide robust and accurate data for the journals they cover, but have their limitations with regard to the coverage of social sciences (e.g. Adriaanse and Rensleigh, 2013; Crespo et al., 2014; Harzing and Alakangas, 2016; Meho and Yang, 2007; Mingers and Lipitakis, 2010; Prins et al., 2016). Several studies (e.g. Amara and Landry, 2012; Mingers and Lipitakis, 2010) have shown that in social sciences often less than 50% of the publications of a person or institution actually appear in the database of the Web of Science and the numbers of citations are correspondingly lower, and thus put researchers at an unjustified disadvantage (Diem and Wolter, 2013). A journal with a high impact factor can include considerable variability in the citation rates for individual papers (cf. Campbell, 2008). Hence, alternative indicators have been suggested as means of measuring the quality of output from an individual researcher. Despite their scientometric handicaps, Google Scholar and also Research Gate (www.researchgate.net) often provide individual authors with more appropriate information about their individual index of citations than the Web of Science or Scopus.

Method of analysis

Aiming at a comparison of the international visibility of several European scientific communities, we assess bibliometric data on Germany for a five-year period and compare them with data for the Netherlands, Spain, and Scandinavian countries (Denmark, Finland, Norway, Sweden). We have chosen the Netherlands because Dutch scientists started in the 1990s to link academic promotion to productivity based on citations in top-tier journals. We have chosen the Scandinavian countries because they had a similar point of departure in the 1990s as Germany (Ingwersen, 2000), and finally we have chosen Spain because its country ranking for citable documents has been among the top places for a couple of years.

In general, we apply the procedures of bibliometrics as described in the literature (e.g. Ball and Tunger, 2005; Malciené, 1989; Masic, 2016). In accordance with the particular aim of assessing the international visibility of German educational research, we adopt Botte’s (2007) criterion to analyze only contributions to journals that address an international audience and are therefore published in English. Furthermore, we focus on journals that apply the standards of peer review procedures (see above).

According to comparisons of the indices (e.g. Leydesdorff, 2009; Meho and Yang, 2007; Ramin and Shirazi, 2012), it can be concluded that the two databases of Scopus and Web of Science overlap and are to a great extent complementary. As part of the Web of Science, Clarivate Analytics offers the Social Science Citation Index (SSCI) as a commercial citation index that encompasses more than 3000 journals in the area of social sciences, but only 224 of them are assigned to education (http://ip-science.thomsonreuters.com). Alternatively, SCImago offers the SJR indicator and a country rank for more than 1000 journals in the field of education. SCImago provides valuable features such as weighting citations received based on the prestige of the citing journals, the exclusion of journal self-citations, and international collaboration (cf. Jacsó, 2010).

We have selected 130 top-ranked journals from SCImago’s comprehensive list of 1066 items, placing emphasis on journals related to central sectors of educational research. Aiming at recent trends, we focus especially on the period from 2010 to 2015–2016. Altogether we have inspected more than 50,000 documents for this period.

Results of the bibliometric analysis

In this section we distinguish between general trends of visibility of educational research in scholarly journals and patterns of visibility in specific areas of educational research. First, we analyze the trends over the period from 1996 to 2015. For the more detailed analysis, we focus on the period from 2010 to 2015–2016.

General trends of visibility—the “big picture”

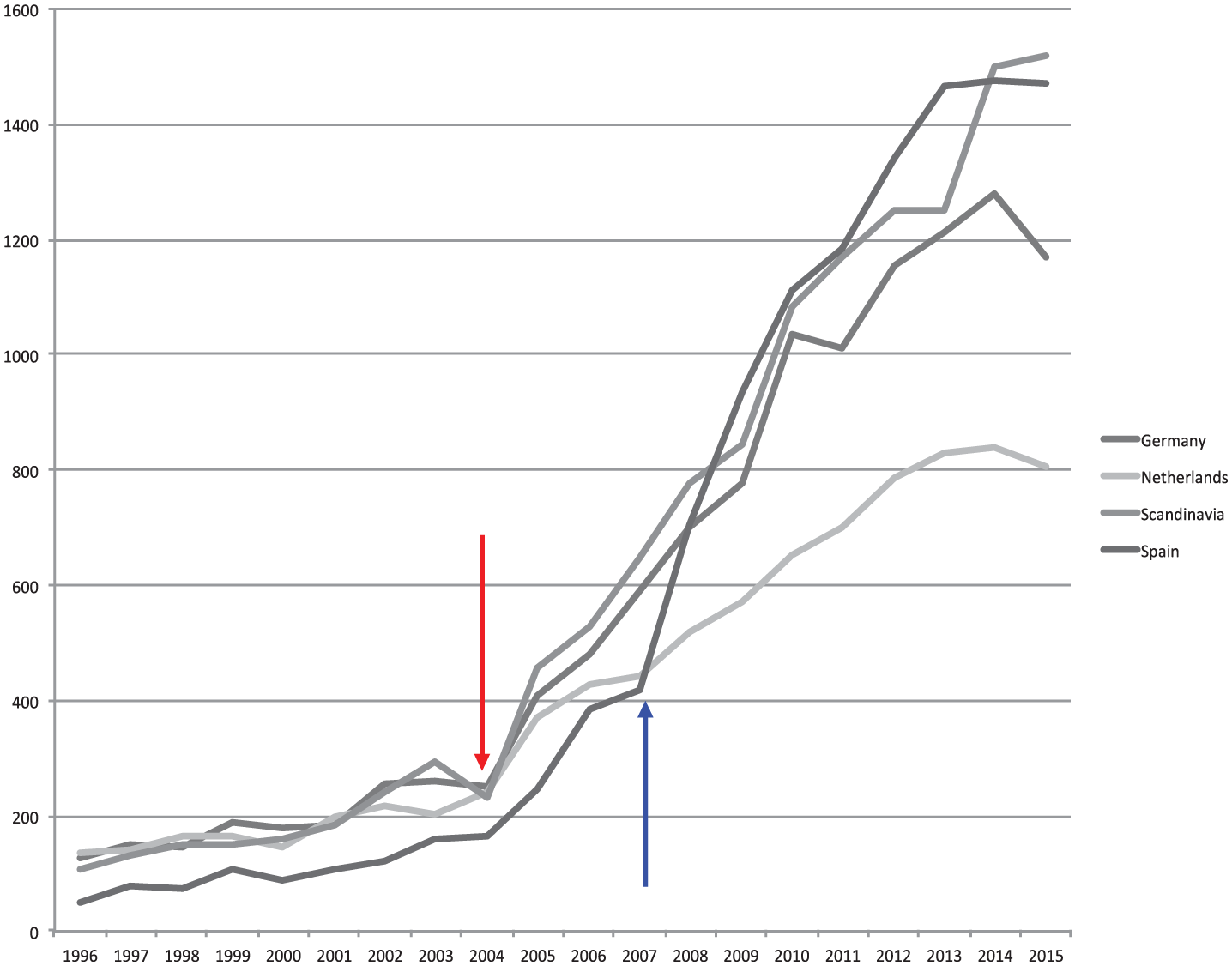

For the period from 1996 to 2015, the analysis of publishing productivity in education reveals a similar pattern for the Netherlands, Spain, Germany, and Scandinavian countries (Denmark, Sweden, Norway, Finland) (see Figure 1).

Figure 1. SCImago trends of publications (y-axis: number of citable documents = peer-reviewed articles) from 1996 to 2016 for Germany, Spain, the Netherlands, and Scandinavian countries.

At first glance, Figure 1 suggests that the year 2004 can be considered as a turning point in the overall trend of publication patterns in the selected countries. Whereas the period from 1996 to 2003 is characterized by a continuous but only small increase in publications, a significant increase in citable documents (i.e. peer-reviewed articles) can be observed from 2004 to 2015–2016.

This finding corresponds with observations by Plume and van Weijen (2014), who state that there has been a consistent growth in the number of articles published since 2004, and that at the same time, the number of authorships increased at a far greater rate until 2013. One common belief is that as a result of the “publish or perish” pressure, individual researchers tend to publish more and more articles every year. However, Fanelli and Larivière (2016, p. 1) point out a paradox: Although the total number of articles published per year has increased since 2004, “researchers’ individual publication rate has not increased in a century.” What has increased from 2004 to 2015 is the average number of authorships per article because authors are progressively collaborating and co-authoring more than in previous years. In the literature, this phenomenon is called “the rise of fractional authors” (McKercher and Tung, 2016). It refers to the allocation of credits to authors on multi-authored publications, which sometimes report the same research in various journals (Hagen, 2008).
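The paradox can be made concrete by contrasting whole counting with fractional counting of authorships. The sketch below assumes equal fractional credit (each of n co-authors receives 1/n of a paper), which is only one of several possible allocation schemes; the function name and the hypothetical researcher are illustrative assumptions.

```python
def publication_counts(authors_per_paper):
    """Return (whole_count, fractional_count) for one researcher.

    authors_per_paper: the number of authors on each paper the researcher
    contributed to in a given period. Whole counting credits every paper
    with 1; equal fractional counting credits a paper with 1 / n.
    """
    if any(n < 1 for n in authors_per_paper):
        raise ValueError("every paper needs at least one author")
    whole = len(authors_per_paper)
    fractional = sum(1.0 / n for n in authors_per_paper)
    return whole, fractional


# Hypothetical researcher (invented numbers): two single-authored papers
# in an early year, four papers with two authors each in a later year.
early = publication_counts([1, 1])
later = publication_counts([2, 2, 2, 2])
print(early)  # (2, 2.0)
print(later)  # (4, 2.0)
```

In this example the number of authorships doubles while the fractional output stays the same, which mirrors the observation that co-authoring, and not individual productivity, has grown.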

The growth of citable publications since 2004 has also been intensified by the transformation of the scholarly communication landscape associated with the emergence of digital media (e.g. Borgman, 2007; Tenopir and King, 2000). While Björk et al. (2009) stated that in 2006 fewer than 5% of articles became immediately available on the internet and an additional 3.5% after an embargo period of one year, electronic journals are now the standard for accessing and reading scholarly articles (Tenopir et al., 2015). However, “open access” at the journal level comprises a complex picture of availability. In most cases, open access journals are not necessarily new publications. In fact, many established journals make only a few recent years of content available online, while the majority of their content remains accessible only through traditional access paths. Accordingly, SCImago’s comprehensive list of journals contains 141 open access journals (i.e. 13%) in the area of education, with 80 of them available in English. Several reviews (e.g. Larivière et al., 2015; Larsen and Von Ins, 2010) indicate that the form of scholarly journals has not changed since the digital revolution, though it has improved access, searchability, and navigation within journals. Hence, traditional scientific publishing is still increasing, although there are big differences between fields and countries. According to Ellegaard and Wallin (2015), articles from the most productive countries are among the most cited.

Contrasting country ranks of productivity

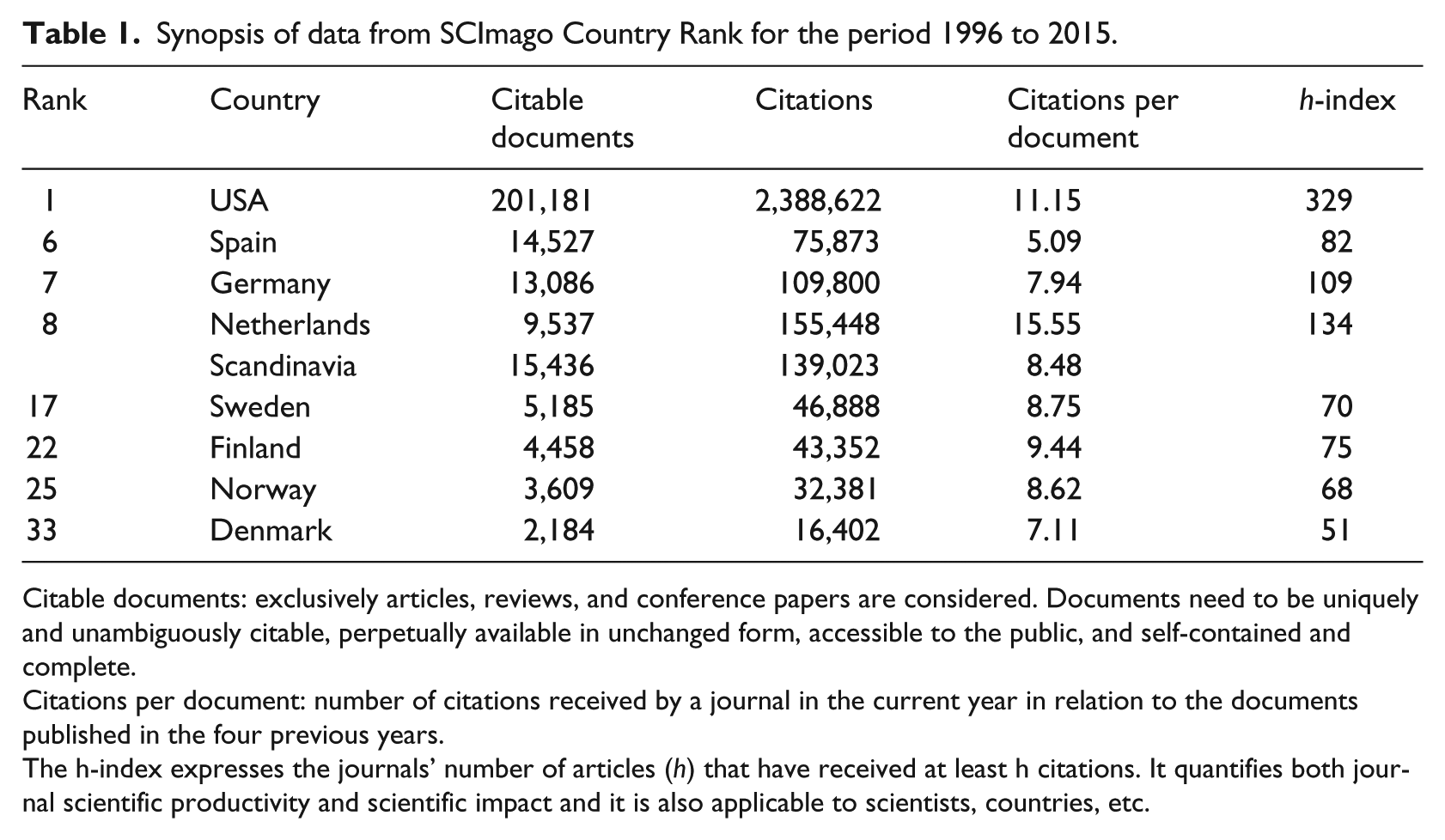

Since 2004, the overall quantity of publications for Scandinavian countries has been consistently higher than for Germany and the Netherlands. Remarkably, the number of citable documents from Spain has increased significantly since 2007, outperforming the other countries. In consequence, Spain takes position six in the SCImago Country Rank with regard to citable documents, followed by Germany and the Netherlands. In compliance with the idea of benchmarking, relevant data from the SCImago Country Rank, such as the number of citable documents, citations, and citations per document, are summarized in Table 1, which also includes, for comparison only, data for the United States of America.

Table 1. Synopsis of data from SCImago Country Rank for the period 1996 to 2015.

Citable documents: exclusively articles, reviews, and conference papers are considered. Documents need to be uniquely and unambiguously citable, perpetually available in unchanged form, accessible to the public, and self-contained and complete.

Citations per document: number of citations received by a journal in the current year in relation to the documents published in the four previous years.

The h-index expresses the journals’ number of articles (h) that have received at least h citations. It quantifies both journal scientific productivity and scientific impact and it is also applicable to scientists, countries, etc.
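The definition of the h-index given in the note above translates directly into a short routine. This is a minimal sketch; the function name and the citation counts are invented for illustration and do not stem from the SCImago data.

```python
def h_index(citation_counts):
    """Largest h such that h items have received at least h citations each."""
    ranked = sorted(citation_counts, reverse=True)
    h = 0
    for rank, cites in enumerate(ranked, start=1):
        if cites >= rank:
            h = rank  # the top `rank` items all have at least `rank` citations
        else:
            break
    return h


# Hypothetical journal with five articles and their citation counts.
print(h_index([10, 8, 5, 4, 3]))  # 4
```

Four articles have at least four citations each, but there are not five articles with at least five citations, so the index is 4. The same routine applies to the publications of a scientist or a country.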

Table 1 illustrates considerable differences in the publication patterns of the selected countries, but the predominance of the USA becomes immediately evident. However, SCImago ranks productivity in accordance with the quantity of “citable documents.” In consequence, Spanish educationalists reveal more productivity than their German and Dutch peers. This picture changes when we integrate the data for the Scandinavian countries, which outperform Spain overall with regard to citable documents. The picture changes again when we focus on the quantity of actual citations and the citations per document. Then the Netherlands turns out to be the most effective in the category “citations per document” (i.e. peer-reviewed articles), actually outperforming both the USA and the European countries.

It is often assumed that psychologists are more productive in publishing than educationalists (e.g. Knaupp et al., 2014). Data from SCImago Country Rank confirm this assumption. In Table 2, we have contrasted the productivity of education and psychology with regard to citations, citations per document, and the h-index.

Table 2. Synopsis of data from SCImago Country Rank for education and psychology from 1996 to 2015.

In all its particulars, Table 2 confirms a different publication pattern and tradition of psychologists in their publishing policy. Again, among European countries the Netherlands leads in the category “citations per document,” which indicates a high efficiency of publications. German psychologists published four times more and Dutch psychologists three times more citable documents than educationalists.

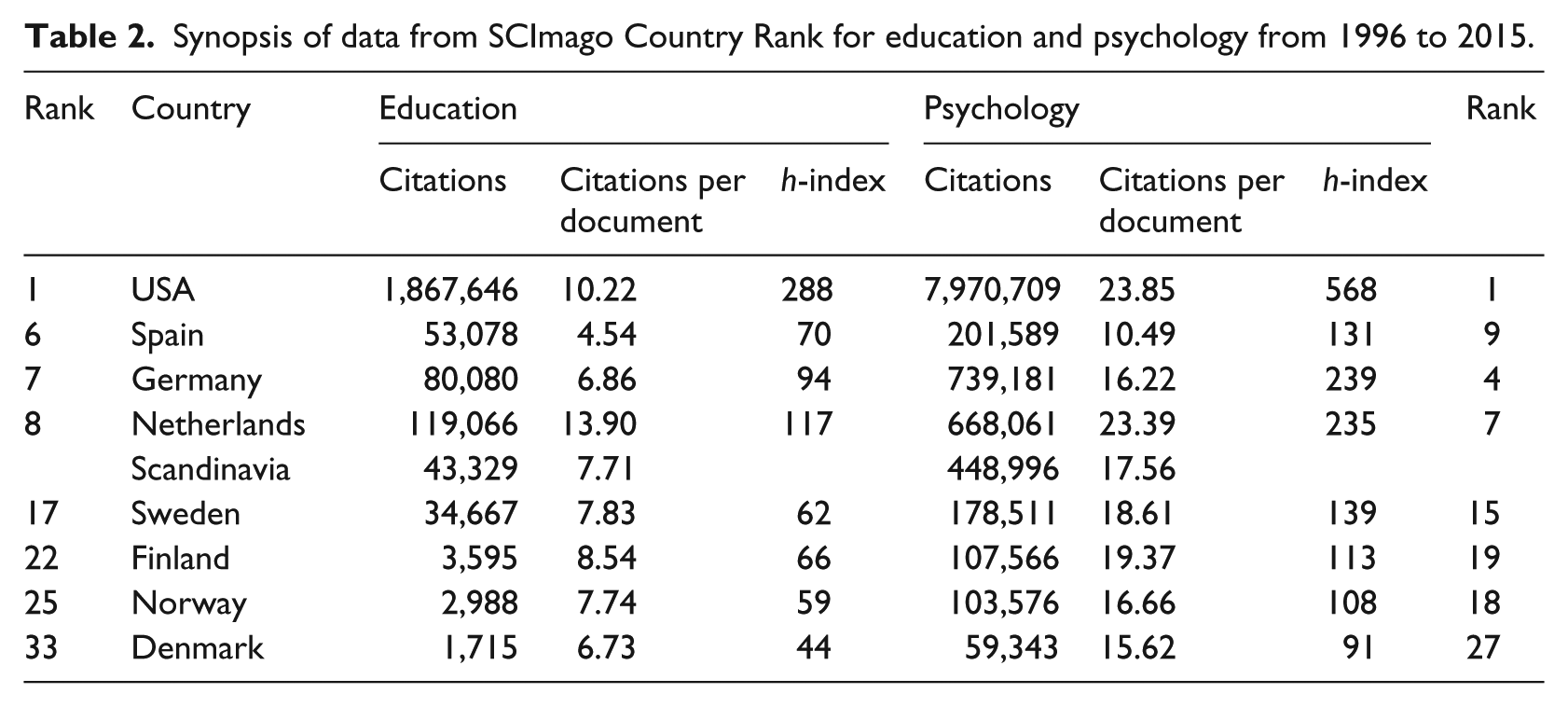

Although the reported data of the SCImago Country Ranks reveal evidence concerning the overall publishing productivity of different countries and disciplines, they provide no indications for international visibility. The quantity of publications in international journals is more significant for this factor. Therefore, we have analyzed the publication of articles from the Netherlands, Spain, Germany, and Scandinavia in international journals ranked in accordance with SCImago’s SJR indicator for the period 2010 to 2015. The results of this analysis are summarized in Table 3. The reported percentages refer to the relative share of all papers published in a journal that originated in one of the four countries. The origin of a paper is determined by the nationality of its authors. In the case of international teams of authors, the paper is counted as originating from each of the countries involved.
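The calculation of these shares can be sketched as follows. The sketch assumes whole counting, that is, a paper by an international team is counted once for every country represented among its authors, which is our reading of the counting rule described above; the function name, the country codes, and the ten papers of the hypothetical journal are invented for illustration.

```python
from collections import Counter


def country_shares(papers):
    """Percentage of a journal's papers originating in each country.

    papers: one collection of author countries per paper. A paper with
    authors from several countries counts once for each of them, so the
    shares may add up to more than 100%.
    """
    if not papers:
        raise ValueError("the journal needs at least one paper")
    counts = Counter()
    for countries in papers:
        for country in set(countries):  # count each country once per paper
            counts[country] += 1
    return {country: 100.0 * n / len(papers) for country, n in counts.items()}


# Hypothetical journal with ten papers in the period under review.
papers = [{"US"}] * 6 + [{"DE"}, {"DE", "NL"}, {"ES"}, {"SE", "US"}]
shares = country_shares(papers)
print(shares["DE"])  # 20.0
print(shares["NL"])  # 10.0
```

Because co-authored papers are counted for several countries, the percentages in such a table describe visibility per country and should not be summed across countries.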

Table 3. Visibility of Dutch, Scandinavian, Spanish, and German educational research in international journals from 2010 to 2015–2016.

As expected, Table 3 shows a heterogeneous picture of the visibility of educational research in the selected countries. At first glance, it becomes clear that German education is not well positioned in the first 10 top-tier journals. Indeed, German educationalists did not manage to publish many papers in the top-ranked journals such as American Journal of Educational Research and Review of Educational Research, but this observation also applies to authors from other countries. When we arbitrarily consider a 5% level as “critical mass,” 40 of the 70 analyzed journals meet this criterion. However, German authors are well represented in only two top-tier journals: Child Development and Sociology of Education. Moreover, a closer examination indicates that contributions of German authors to Child Development originate mainly from psychologists. Dutch and Scandinavian authors meet the 5% criterion in one top-tier journal.

The picture changes when we consider the publication patterns of the other top-tier journals because now one can find much bigger percentages that, however, are dependent on the particular thematic orientation of journals. It seems that German and Dutch authors are very well represented in educational journals with a psychological orientation, whereas the areas of technology, higher education, and adult education appear as particular domains of research in Spain and the Scandinavian countries. Actually, German authors meet the 5% criterion neither in technology-oriented journals nor in journals focusing on higher education and adult education.

Patterns of visibility in specific areas of educational research

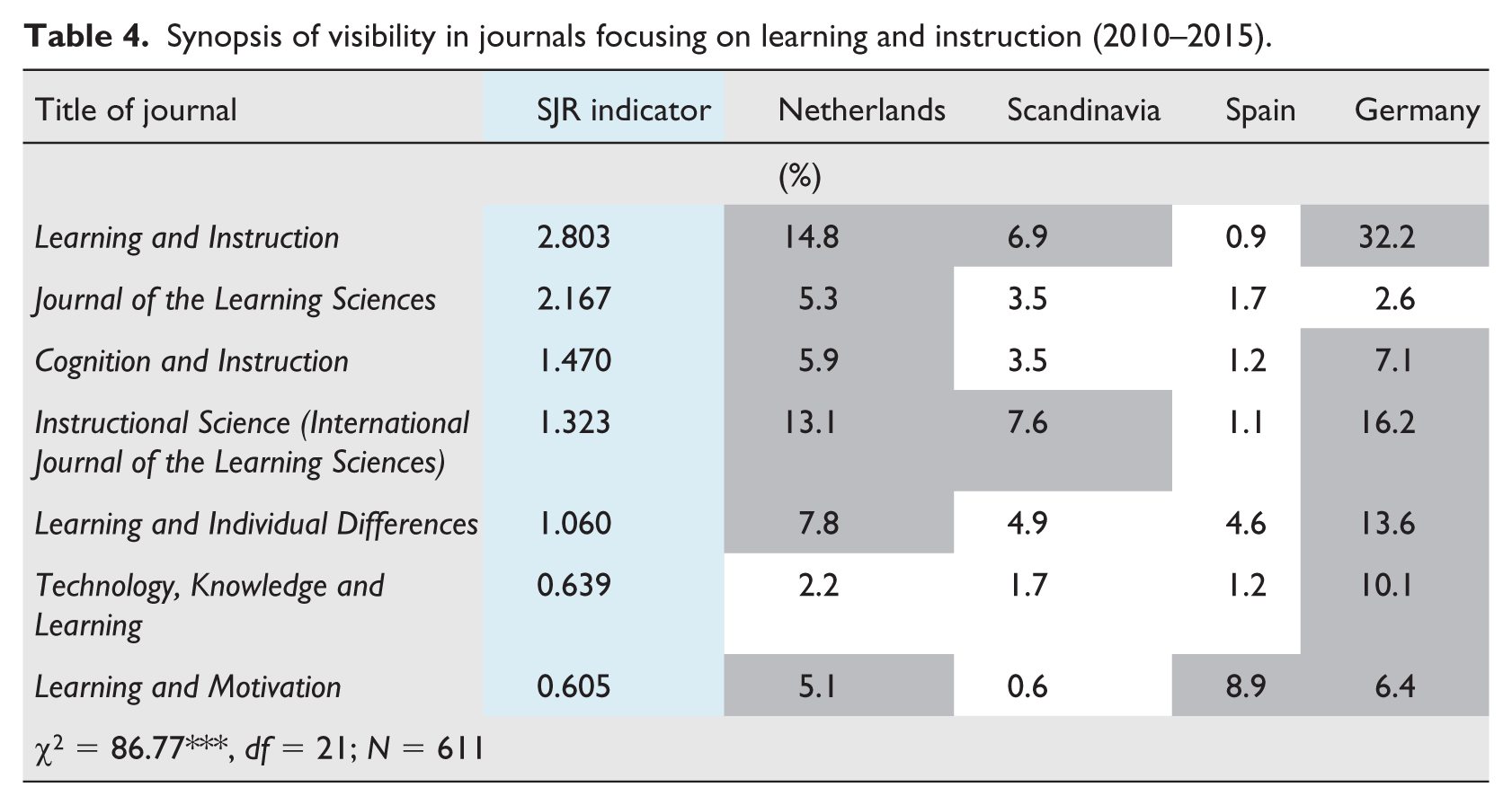

According to Table 3, authors from the included countries obviously differ in their concentration on particular fields of interest. For example, German authors contribute more often to the field of learning and instruction, whereas Dutch and Scandinavian authors focus on schooling, teaching, and related issues. Table 4 gives a survey of publication patterns in journals with the emphasis on learning and instruction.

Table 4. Synopsis of visibility in journals focusing on learning and instruction (2010–2015).

German authors contributed the most articles to the journal Learning and Instruction, with Dutch authors coming in at a distant second. Notably, this journal is edited by the European Association of Research on Learning and Instruction (EARLI), in which Germany and the Netherlands are traditionally strongly involved—much more than Spain and Scandinavian countries. Apart from this particular relation with EARLI, German and Dutch authors also contributed significantly to other journals with a particular psychological orientation, such as Instructional Science, Learning and Individual Differences, Learning and Motivation, and Cognition and Instruction. By comparison, Spanish educationalists contributed significantly to Learning and Motivation, but rarely to the other journals. The top-ranked Journal of the Learning Sciences records only a small but significant quantity of contributions from Dutch authors. The lack of contributions from German, Spanish, and Scandinavian authors in this journal is likely linked to its emphasis on a particular orientation to research on learning (cf. Seel and Zierer, 2016). Remarkably, German authors contributed significantly to the journal Technology, Knowledge and Learning, but it seems that this journal is not well known in the other countries.

On the whole, the data in Table 4 confirm the observation made by Botte (2007) that research on learning is highly visible in international journals because it is also a special field of interest in psychology. Actually, a more detailed analysis shows that more than one-third of the articles in the journals mentioned in Table 4 were authored by psychologists. This also holds true for journals focusing on issues of educational, developmental, and social psychology, which relate to both education and psychology. From 2010 to 2015, German authors accounted for more than 10% of articles in the top-tier journals Educational Psychologist and Journal of Educational Psychology, as well as more than 20% of articles in the journal Trends in Neuroscience and Education and in the third-tier journal Talent Development and Excellence. The latter covers a broad range of educational and psychological issues, whereas Trends in Neuroscience and Education should be considered an outlier of educational research. In principle, this applies also to Child Development, with its particular emphasis on neural processes.

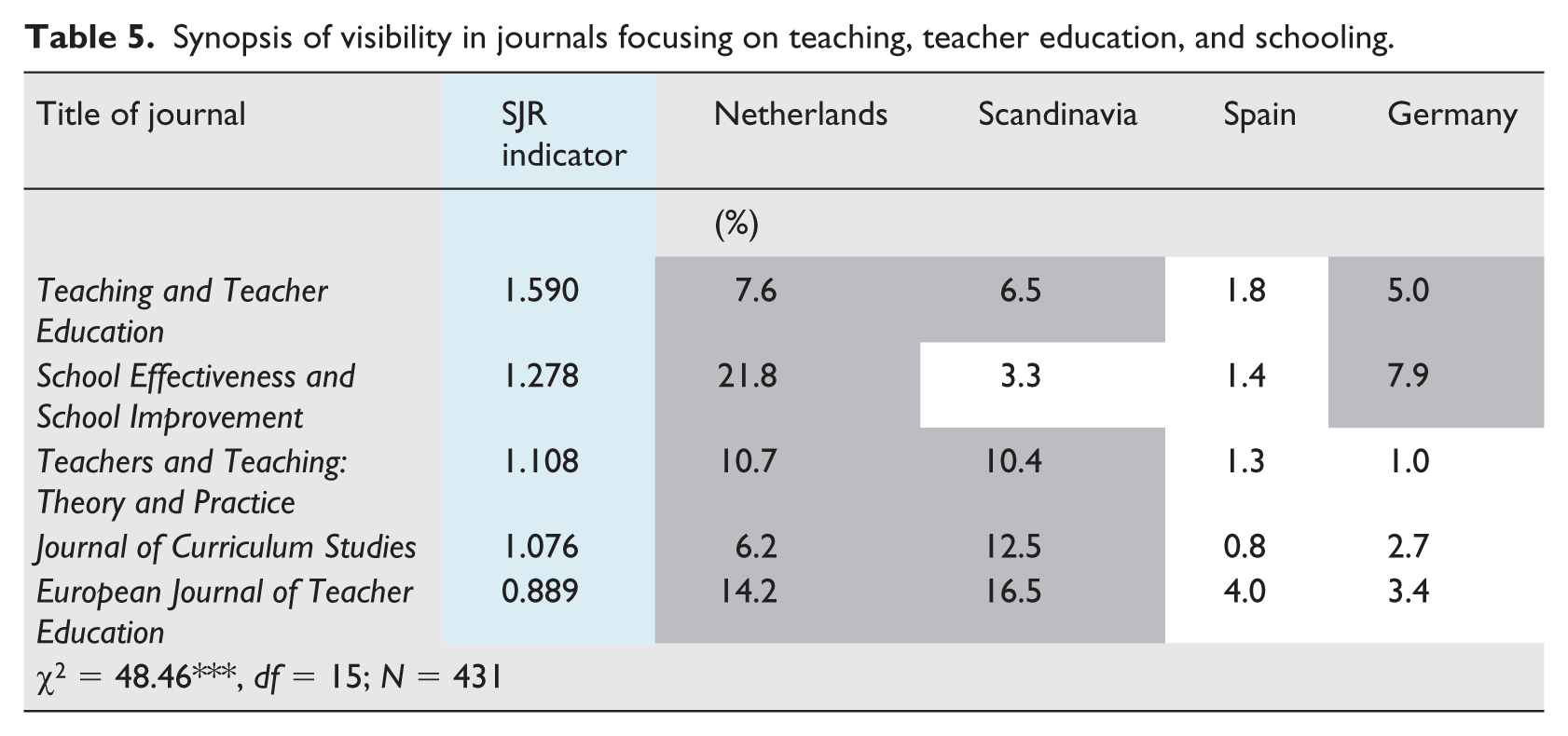

In comparison with the visibility of German pedagogy in international journals with a strong psychological orientation, the visibility in the domain of schooling, teaching, and teacher education decreases considerably (see Table 5).

Table 5. Synopsis of visibility in journals focusing on teaching, teacher education, and schooling.

This table provides a clear picture: issues of teaching and teacher education as well as curriculum studies constitute the main focus of Dutch and Scandinavian authors, whereas German authors reach an appreciable portion of articles only in the journal School Effectiveness and School Improvement, and even this portion is far behind that of Dutch contributions. Inasmuch as this journal also focuses on evaluation, this result corresponds to the top positions of Dutch authors in other journals, such as Studies in Educational Evaluation (11.3%) and Educational Research and Evaluation (13.6%), both published in the Netherlands.

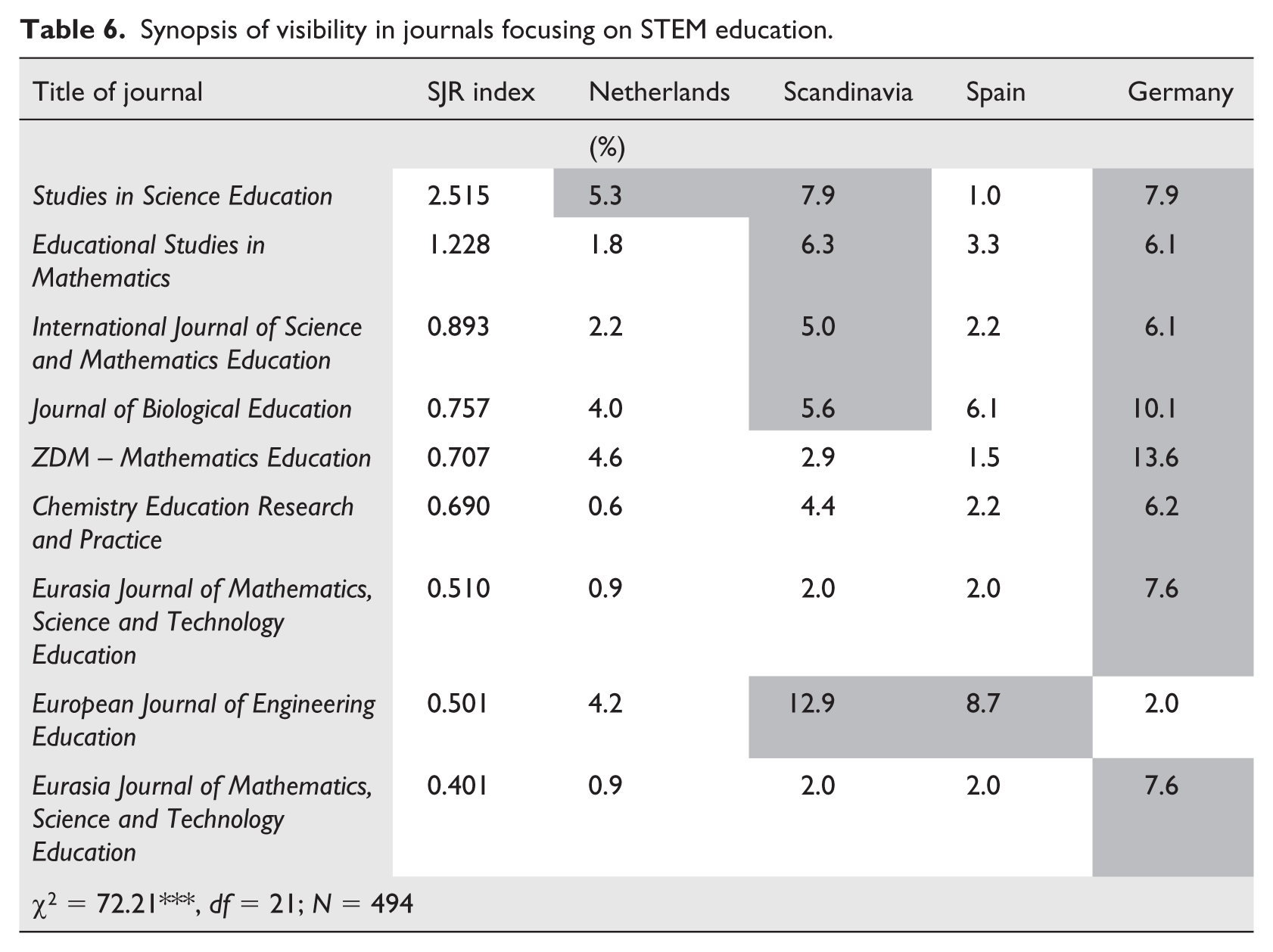

The picture with regard to teaching and schooling, however, changes considerably when we analyze the publication patterns for research on STEM education (i.e. science, technology, engineering, and mathematics).

Apart from the area of engineering education, which is significantly favored by Scandinavian and Spanish authors, German authors succeed especially in mathematics, science, biology, and chemistry education, whereas Dutch authors are represented only modestly in these areas. With one exception, the journals listed in Table 6 do not cover technology, a topic in which contributions of German authors are sparse, as already mentioned above. Apart from a notable presence of German authors, with a share of 10%, in the second-tier journal Technology, Knowledge and Learning, the visibility of Germany in technology-oriented journals is low, and even this result must be qualified because the majority of German contributions to this journal concern knowledge and learning. The overall result for German authors in information technology corresponds to a longstanding and traditional conflict between technology and pedagogy in Germany. Remarkably, Spanish authors published widely in the area of information technology, and Dutch and Scandinavian authors also hold good positions in this area. However, a large number of articles are contributed by Asian and North American authors. For example, more than two-thirds of the contributions to Computers & Education and Learning, Media and Technology are authored by Asian and North American educational technologists.

Table 6. Synopsis of visibility in journals focusing on STEM education.

The visibility of German educational research is weak not only in the area of educational technology but also in the areas of higher and adult education. With the exception of the European Journal for Research on the Education and Learning of Adults, which shows a significant quantity of German contributions, the participation of German authors in journals of adult and higher education is actually not pronounced. This observation also holds true for Dutch pedagogues, whereas in Scandinavian countries the emphasis on these special fields of education is much more intense.

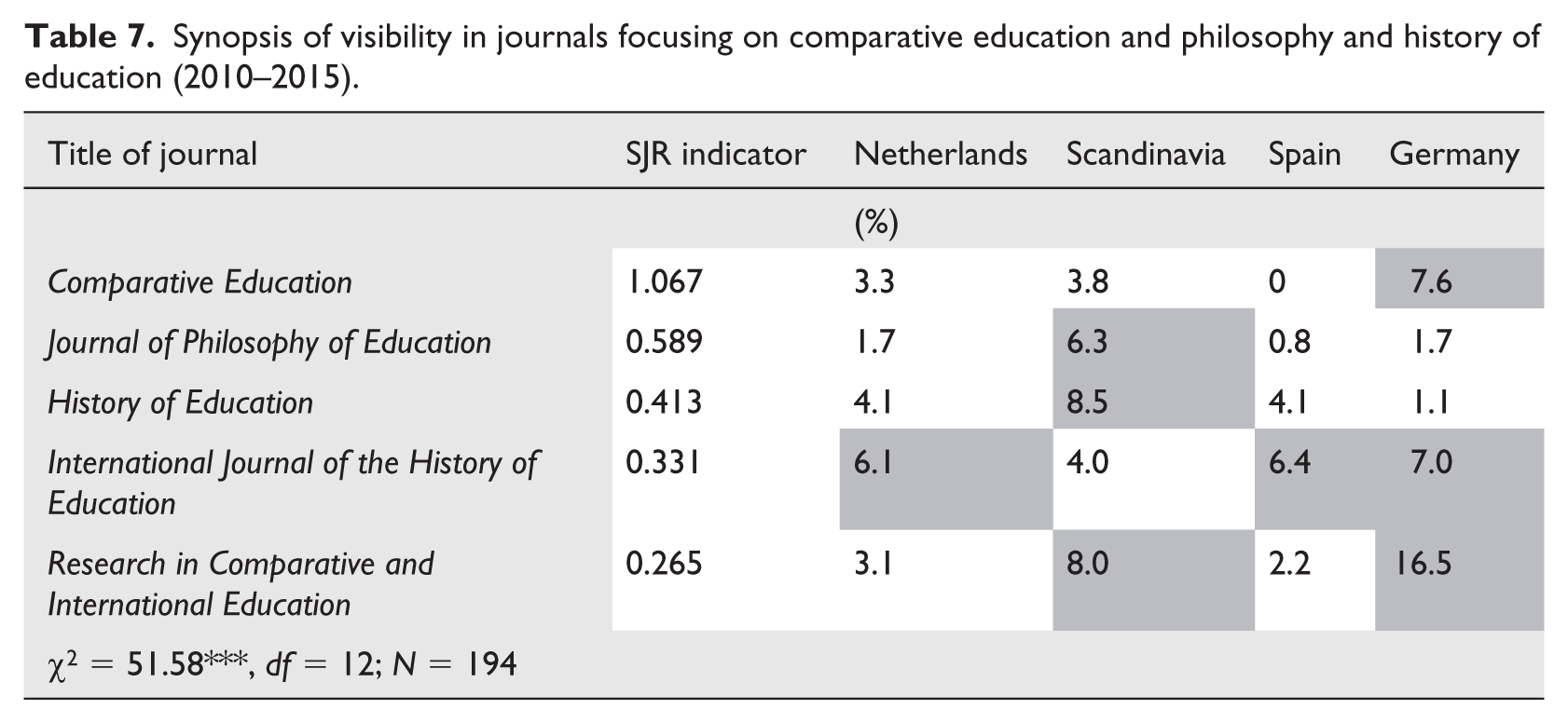

Interestingly, Scandinavian educationalists are also considerably represented in international journals focusing on comparative education and on philosophy and history of education (see Table 7).

Table 7. Synopsis of visibility in journals focusing on comparative education and philosophy and history of education (2010–2015).

With the exception of publications in history of education, Spanish authors lack visibility in journals focusing on theoretical, conceptual, and methodological debates in the field of comparative education as well as in the philosophy of education. However, Dutch and German educationalists have also published comparatively few articles in philosophy of education, whereas German authors have contributed extensively to the area of comparative and international education. In contrast to Dutch and Spanish educationalists, their Scandinavian and German peers also contributed significantly to journals focusing on economics and management of education (see Table 3).

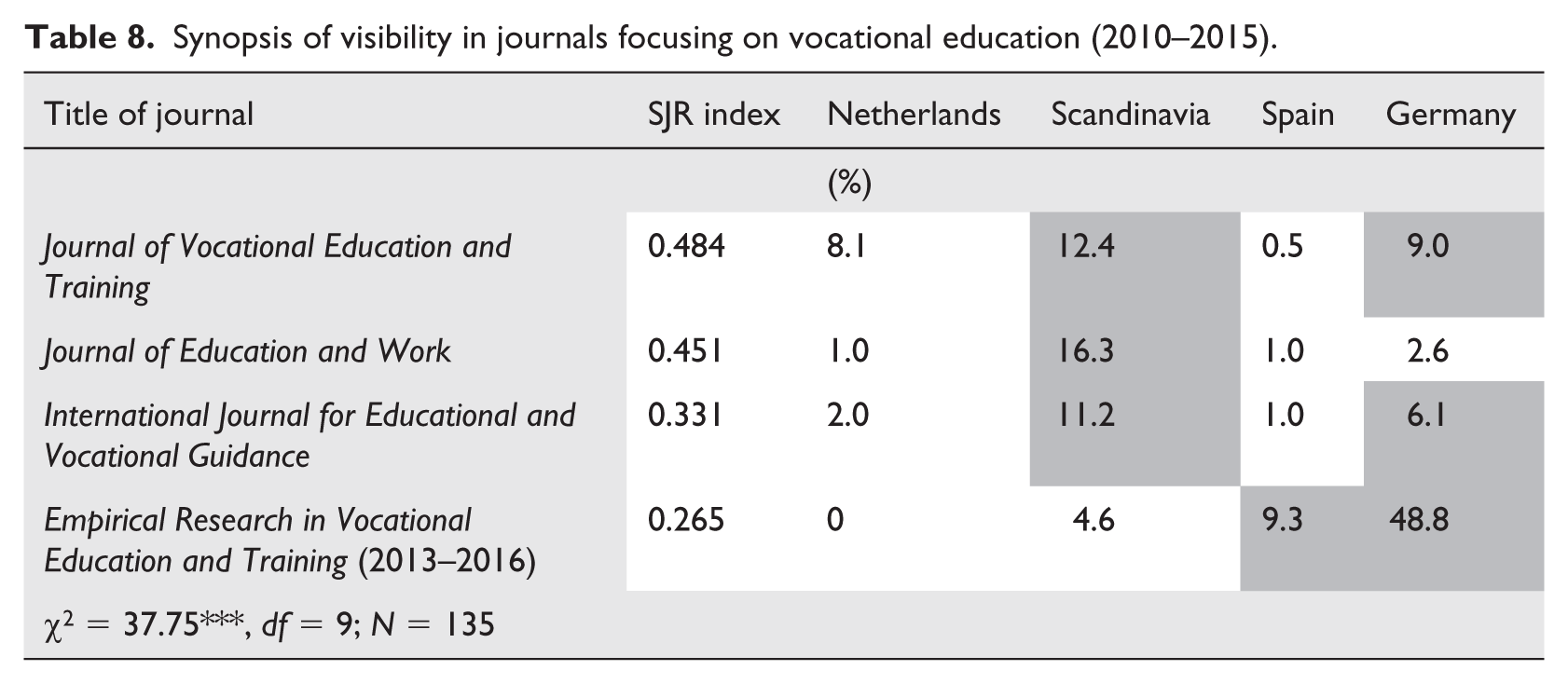

The results of the analysis with regard to vocational education are more balanced (see Table 8). Authors from the selected countries appear in different proportions in several journals, but on the whole it can be concluded that vocational education is of central interest across Europe.

Table 8. Synopsis of visibility in journals focusing on vocational education (2010–2015).

Clearly, the very large proportion of contributions from German authors to the journal Empirical Research in Vocational Education and Training can be ascribed to the fact that this journal is published in Germany with the aim of attracting German educationalists to publish in English. We will come back to this point in the next section of this paper. Here, we have to note that, compared with vocational education, the area of economic education is clearly underrepresented in the efforts of Dutch, German, Spanish, and Scandinavian authors to make their research visible in international journals.

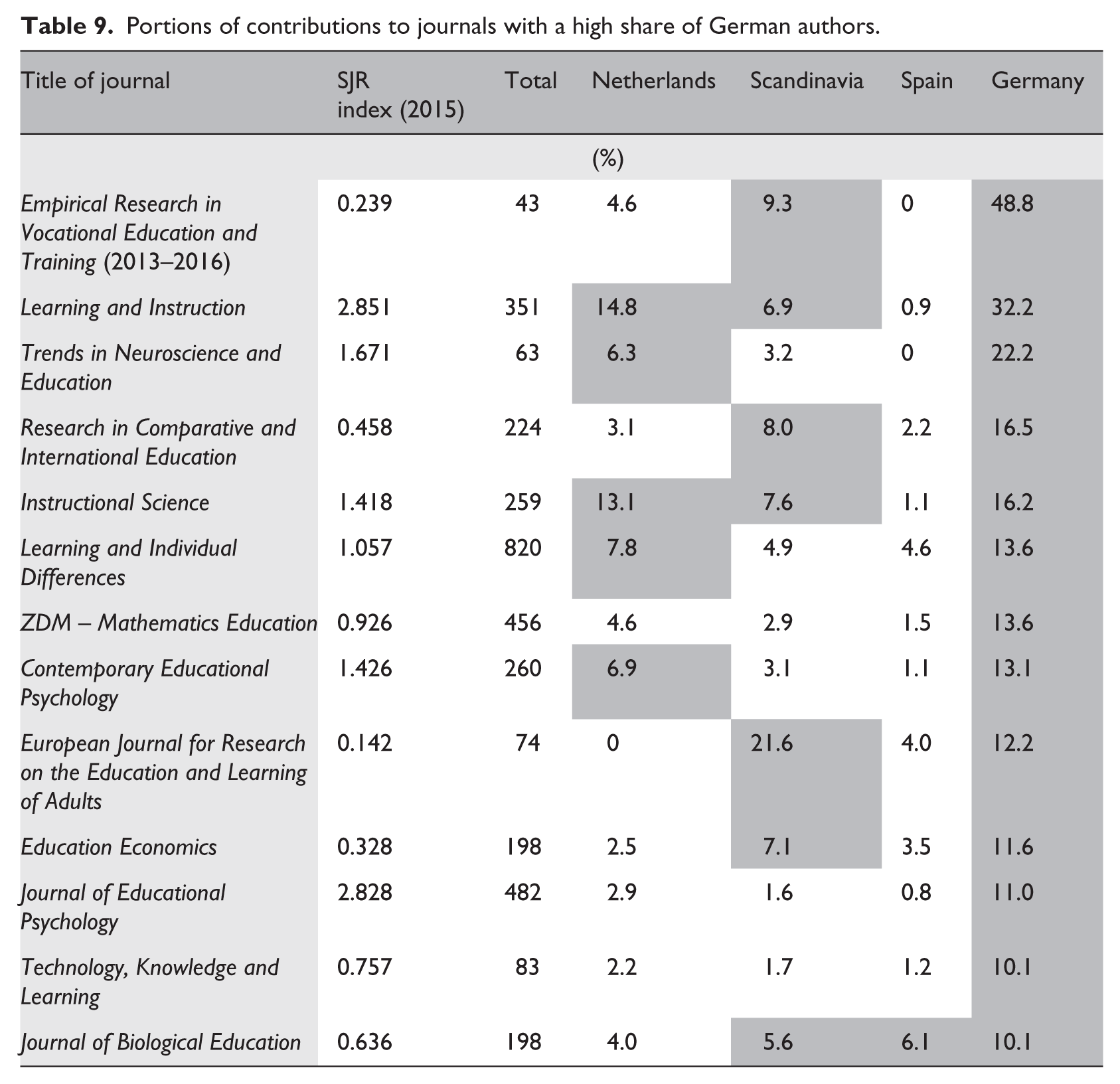

According to the bibliometric data from 2010 to 2015, the core areas of German educational research are learning and instruction, STEM education, and comparative and vocational education. To some extent, the large number of publications in the field of learning and instruction can be attributed to contributions from psychologists, but even so, learning and instruction is a favored area of educational research in Germany. Table 9 summarizes the relative portions of articles from German authors in the aforementioned areas.

Table 9. Portions of contributions to journals with a high share of German authors.

At first glance, the portions reported in Table 9 corroborate the belief that German educational research is highly visible in international journals, but this belief must be qualified because the percentage of German contributions is higher than 20% in three cases only, whereas in 10 cases the percentages vary from 10 to 16.5%.
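The kind of tally behind such a statement can be illustrated with a minimal Python sketch. It is not the procedure used in this study. It assumes whole counting (an article counts once for a country if at least one author is affiliated there), and the records, journal names, and function names are hypothetical placeholders. The band boundaries are parameters; the defaults of 10% and 20% are chosen only for illustration.

```python
from collections import Counter

def country_shares(articles, country):
    """Percentage of articles per journal with at least one author from `country`.

    `articles` is an iterable of (journal, set_of_author_countries) pairs.
    Whole counting is an assumption made for this sketch.
    """
    totals = Counter()
    hits = Counter()
    for journal, countries in articles:
        totals[journal] += 1
        if country in countries:
            hits[journal] += 1
    return {journal: 100.0 * hits[journal] / totals[journal] for journal in totals}

def count_in_bands(shares, low=10.0, high=20.0):
    """Count journals with a share above `high` and journals with low <= share <= high."""
    above = sum(1 for s in shares.values() if s > high)
    middle = sum(1 for s in shares.values() if low <= s <= high)
    return above, middle

# Hypothetical records, invented purely for illustration (not data from the study).
records = (
    [("Journal A", {"DE", "NL"}), ("Journal A", {"DE"}),
     ("Journal A", {"US"}), ("Journal A", {"ES"})]
    + [("Journal B", {"DE"})] + [("Journal B", {"US"})] * 7
    + [("Journal C", {"SE"})] * 5
)

shares = country_shares(records, "DE")
above, middle = count_in_bands(shares)
print(shares)
print(above, middle)
```

A different counting convention (for example, fractional counting by number of co-authors) would yield different percentages, so any comparison of shares should state which convention it uses.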

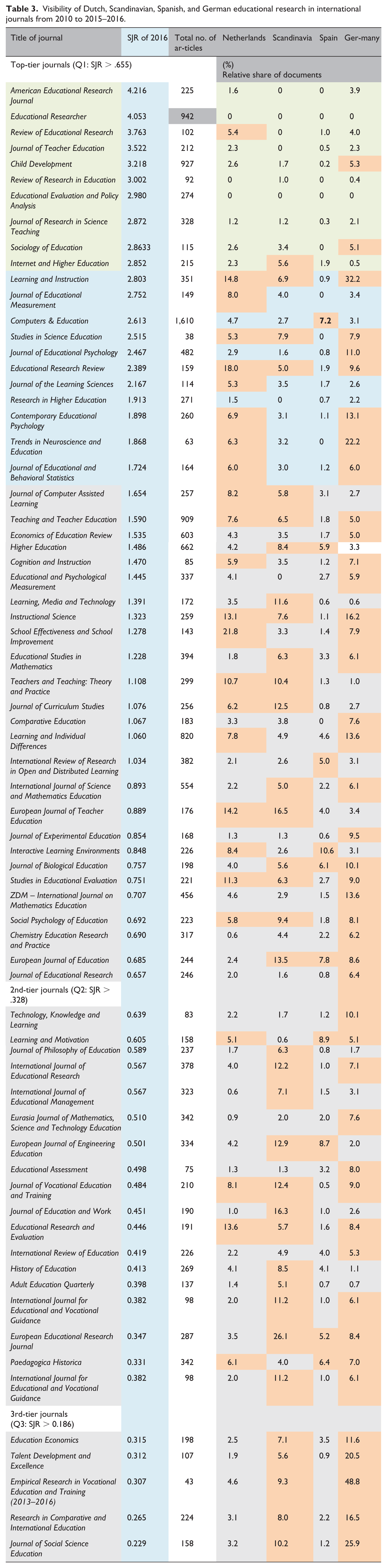

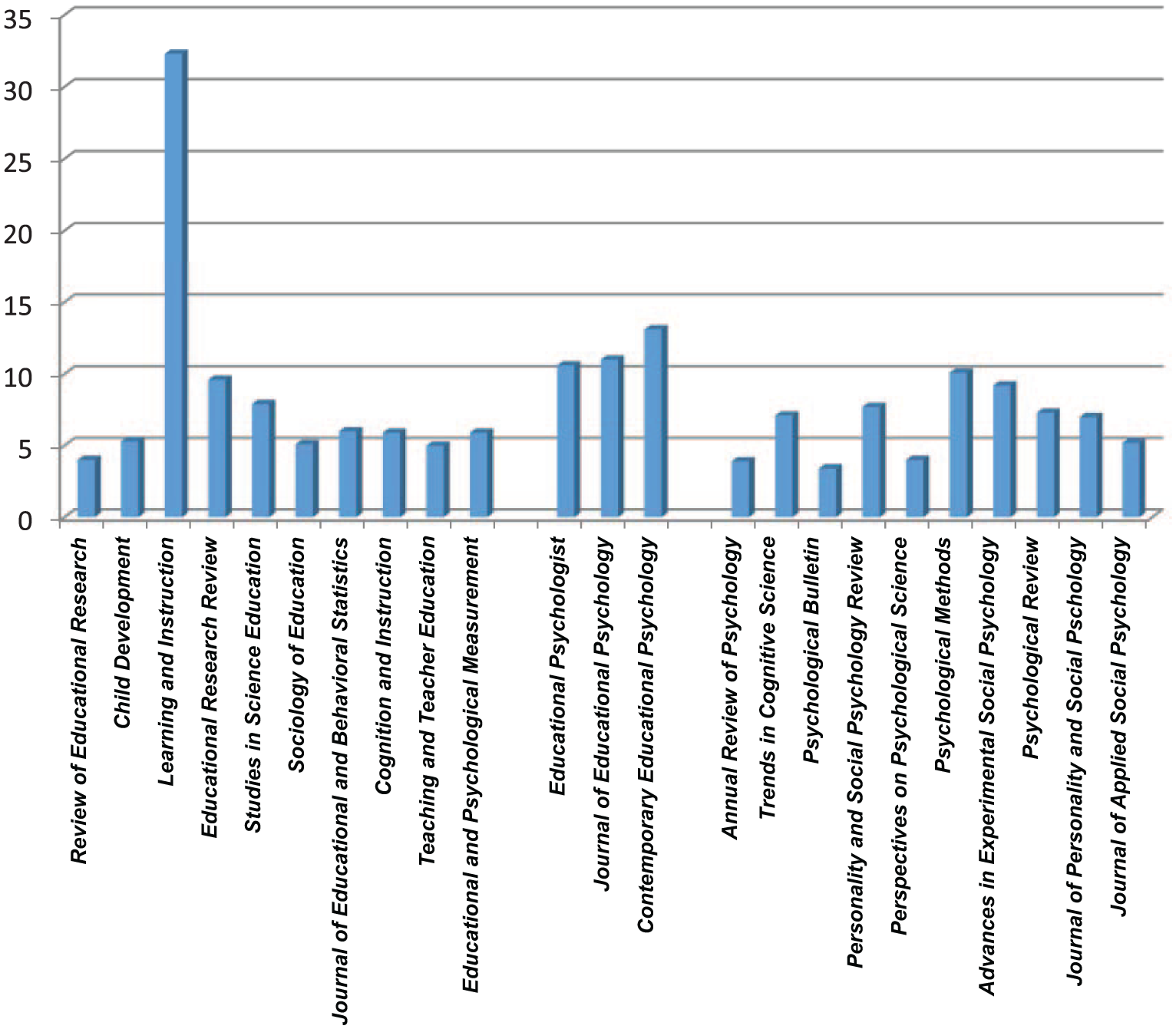

Contrasting the productivity of disciplines

Scientists’ activity and productivity are influenced by a wide range of factors, such as individual characteristics (e.g. age, educational background, gender) as well as the social and political context. Research cultures matter as well (Holosko and Barner, 2016). Accordingly, the productivity of educational researchers is often compared with that of psychologists (Knaupp et al., 2014). International visibility has been discussed as a goal in German psychological associations for decades, and German psychologists thus started publishing in international journals much earlier. Hence, we have compared the publication patterns of German pedagogues and psychologists in the top 10 journals of each discipline (see Figure 2).

Figure 2. Contributions of German authors to top-tier journals of education and psychology (2010–2015).

Due to the high percentage of contributions to Learning and Instruction, the average portion of German contributions to journals listed by SCImago in the field of education (8.7%) is higher than that in the field of psychology (6.4%). However, the list of educational journals contains some titles (e.g. Child Development) that belong topically to psychology, too. Additionally, Learning and Instruction, as well as other journals, such as Cognition and Instruction and Educational and Psychological Measurement, are targeted to some extent at psychologists as well. This is offset, however, by the fact that German educationalists have contributed a significant portion of articles to three psychological journals with an explicit concentration on educational issues. Notwithstanding these observations, the results of the bibliometric analysis depicted in Figure 2 are surprising with regard to the rather small share of contributions from German psychologists to leading international journals of their discipline. This result is also surprising in view of Fiedler’s (2009) conclusion that the portion of internationally published documents is more than 60% in German-speaking countries. This apparent contradiction dissipates when we take into account that most psychological journals published in Germany have since switched to the English language and to rigorous peer review. As a consequence, the qualitative difference between national and international journals no longer exists. Nevertheless, Fiedler (2009) asserts that German psychologists submit their best papers to the most internationally renowned journals first. Only if the submission is rejected do they turn to a national journal. This also fits with the observation that educational psychologists spread their articles over top-tier journals in the realm of education, such as Learning and Instruction, Journal of Educational Psychology, and Contemporary Educational Psychology.

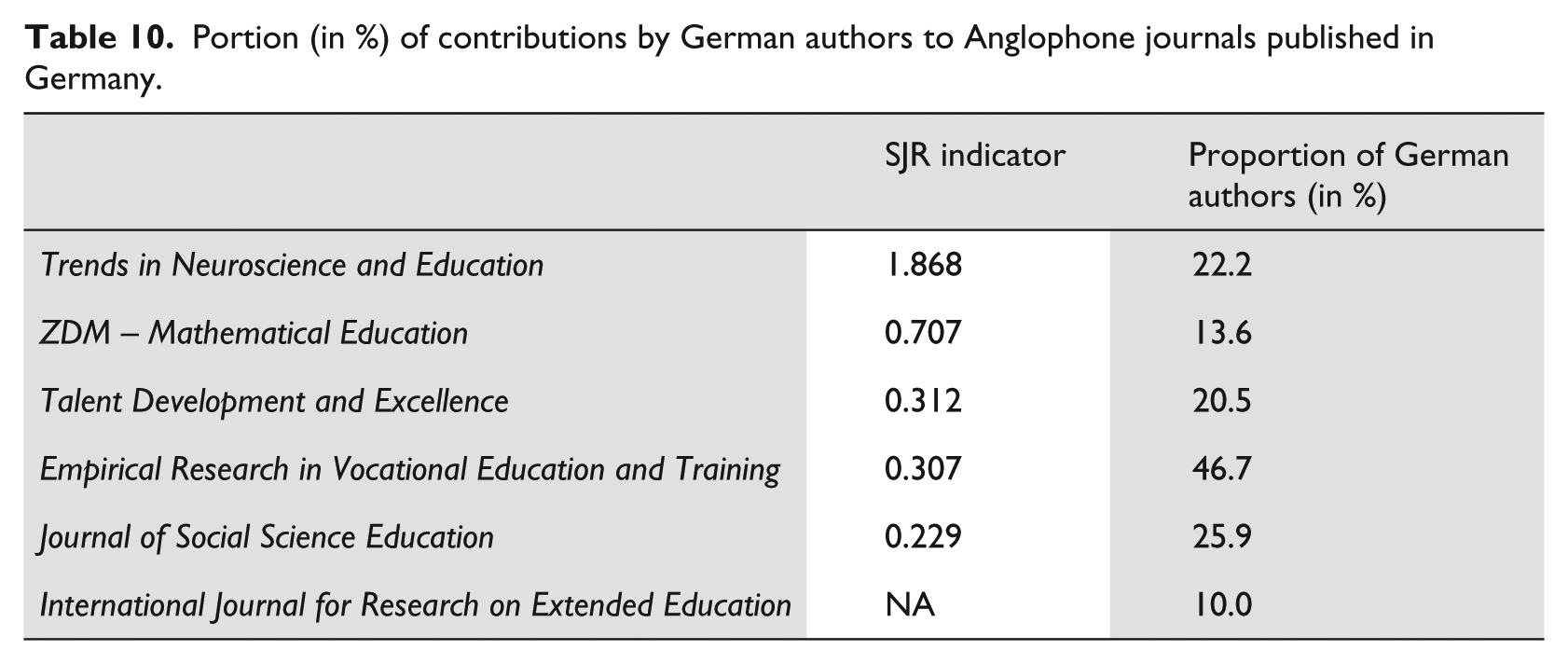

Altogether, it seems that the publication policy of German psychologists has set a precedent, because a similar policy in the area of education has become observable in the past few years. Several journals have switched to publishing exclusively in English, including not only ZDM Mathematics Education, one of the oldest mathematics education research journals, but also newer journals, such as Trends in Neuroscience and Education or the Journal of Social Science Education.

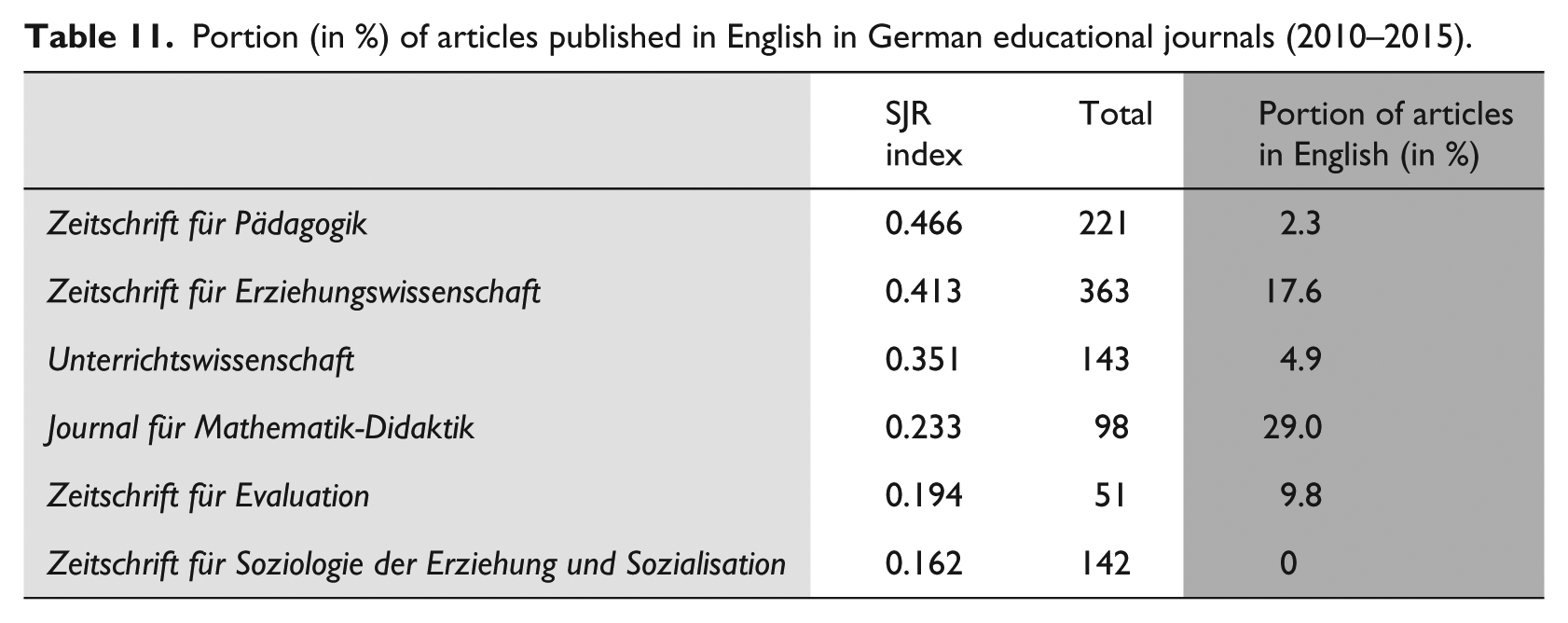

The relative portions reported in Table 10 point to the fact that—with the exception of the journal focusing on vocational education—the listed journals actually are international. In addition to Anglophone journals published in Germany, well-established journals like the Zeitschrift für Pädagogik have also started to publish articles in English. However, there are major differences between German journals listed by SCImago with regard to this publishing policy (see Table 11).

Table 10. Portion (in %) of contributions by German authors to Anglophone journals published in Germany.

Table 11. Portion (in %) of articles published in English in German educational journals (2010–2015).

Although two of the journals mentioned in Table 11 show a comparatively large share of articles published in English, the reported percentages are far from the portions of Anglophone articles in German psychology journals. This goes along with the observation that only a few German journals in the field of education possess an impact factor.

Discussion

In consideration of the reported bibliometric data, we can conclude that the visibility of German educational research in international journals is not as low as is often claimed. This conclusion applies to topics and issues of general interest as well as particular orientations. The data confirm an observation made by Zapp and Powell (2016) that educational research in Germany has undergone unprecedented changes over the past two decades. In contrast to the longstanding tradition of a philosophy-oriented and humanities-based pedagogy, a pronounced shift toward empirical educational research has emerged since the late 1990s. However, only a small number of German academics spread their contributions among journals with comparable topical orientations. We interpret this as an indication that authors who have once succeeded in transcending language barriers by publishing an article in an Anglophone journal tend to repeat that success. According to Fiedler (2009), this tendency corresponds to observations in psychology, where junior academics in particular rarely feel inhibited about publishing in English. To put it bluntly, in both psychology and pedagogy the names of the “usual suspects” regularly appear as authors in different journals, but this assumption needs further clarification through another study. Interestingly, our bibliometric analysis did not provide any evidence for a particular divergence between psychologists and educationalists with regard to publishing in international journals. Moreover, according to the SJR indicator, the Anglophone journals of German psychology are not more successful than the journals in the realm of education. Hence, the transition to the English language did not result in more international visibility of journals published in Germany.
This observation is remarkable because there has been a controversial debate for some years about the fact that the German language is becoming increasingly less important in scientific terminology and communication (e.g. Ammon, 1998; Kretzenbacher, 2010). However, scientific thinking and arguing is essentially language-dependent, and the scientific vocabulary is thus coined by the language used. As a consequence, if we allow international journals to determine the standards of research publications, we run the risk of leveling out differences between scientific cultures and only fostering mainstream research paradigms.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.