Abstract

The concept of ‘approaches to learning’ (Marton, 1976) has long held a position of central importance in the analysis of student learning outcomes. However, constructing effective measures of students’ approaches to learning is a complex task, and whether such measures transfer well across contexts is an empirical question. In this paper, we examine the functioning of a moderately modified version of one of the most commonly used assessments of approaches to learning – the revised two-factor Study Process Questionnaire (R-SPQ2F) – in three African contexts (Ghana, Kenya and Botswana). We first present a confirmatory factor analysis, which demonstrates that the modified R-SPQ2F functions in these contexts as intended by the developers of the instrument. We then consider the potential utility of the R-SPQ2F in these contexts by examining its relationship with student background characteristics, educational experiences at university and learning outcomes.

Introduction

‘Approaches to learning’ may be understood in very broad terms to include a wide range of affective and behavioural traits and orientations, most obviously concerning students’ strategies and motivations to learn but also encompassing a range of additional attitudes and behaviours considered conducive (or otherwise) to learning. These are characterized somewhat differently in the literature according to various disciplinary traditions; in this paper, we focus on one noteworthy conceptualization of approaches to learning derived from Marton and Saljo (1976), alongside one specific attempt to measure these approaches among students in higher education, derived from Biggs (1987). These are applied in three sub-Saharan African contexts (Botswana, Ghana and Kenya), where few studies of approaches to learning in higher education have been conducted to date.

In their seminal paper, Marton and Saljo (1976) distinguish between two fundamentally different ways of ‘processing’ academic texts: a ‘deep’ approach focused on ‘understanding’ and the ‘search for meaning’, and a ‘surface’ approach focused on reproduction and memorization. Marton and Saljo’s notion of ‘deep’ and ‘surface’ processing has since been extended – by Marton (1976) himself but also by others (e.g. Entwistle et al., 1979; Svensson, 1977) – to explain students’ more general approaches to learning. In this usage, students demonstrating a ‘surface’ approach are motivated simply by progressing to the next stage of their education and therefore apply only the minimal effort needed to progress, whereas students demonstrating a ‘deep’ approach are motivated by a desire to learn and understand and therefore engage meaningfully and appropriately with the task at hand. Although the distinction has since received robust theoretical criticism, it has also been validated empirically in a number of studies, which have shown that students who demonstrate the characteristics of ‘deep’ rather than ‘surface’ approaches to learning tend to achieve better learning outcomes (for further detail on specific studies, see the following section).

Although it is not entirely clear from the existing evidence whether the link between approaches to learning and learning outcomes is causal, it appears at least possible that promoting ‘deeper’ approaches to learning might help to improve students’ learning outcomes. Furthermore, research has shown that institutions can influence students’ approaches to learning, in both positive and negative ways (Biggs, 1993). As a result, those working to improve student learning within universities, including in many African contexts, often focus on students’ approaches to learning as an area for possible intervention and therefore seek ways to evaluate their students in this regard.

Nonetheless, measuring students’ approaches to learning is a complex task that attracts some debate. Furthermore, while questionnaires aimed at measuring approaches to learning often show high levels of reliability in the contexts for which they were validated, whether measures constructed for one context transfer well to another is an empirical question. With respect to higher education in sub-Saharan Africa outside South Africa, scant evidence is available on the reliability and validity of instruments measuring approaches to learning. In this paper, we help to address this gap by examining the functioning of a moderately modified version of one of the most commonly used assessments of approaches to learning – the revised two-factor Study Process Questionnaire (R-SPQ2F; Biggs et al., 2001), a revision of the Study Process Questionnaire originally designed by Biggs (1987). We do so using data from the ‘Pedagogies for Critical Thinking’ project, a longitudinal study of critical thinking in higher education in Botswana, Ghana and Kenya, established in 2015.

The paper proceeds by outlining the theoretical and empirical background to the study and its methodology, before examining the scale reliability (internal consistency) of the R-SPQ2F in the three study contexts. We conduct confirmatory factor analysis (CFA) to compare the functioning of the instrument with expectations based on studies conducted by its developers. We then examine the potential utility and predictive validity of the R-SPQ2F in the three contexts, based on its relationship with students’ demonstrated critical thinking ability at entry to university.

Literature review

In the 1970s, a series of studies conducted by researchers at the University of Goteborg (Gothenburg) demonstrated that students tend to utilize one of two qualitatively different levels of processing when confronted with academic texts: a ‘surface’ level, whereby students focus on the words in the text, and a ‘deep’ level, whereby students focus on the meaning behind the words (e.g. Marton and Saljo, 1976). Over time, the notion of ‘levels of processing’ expanded to that of approaches to learning (Richardson, 2015). Building on the Gothenburg studies, other researchers argued that ‘deep’ and ‘surface’ approaches could be identified more generally, with students tending to take either a ‘deep’ approach to learning, whereby they are motivated by interest in the task at hand, or a ‘surface’ approach, whereby they are motivated by something extrinsic to the purpose of the task, for example, by progressing to the next level in their education (Biggs, 1993). In contrast to the work of researchers at Gothenburg, which had focused on the processes adopted by students when confronted with particular tasks, the expanded field also considered students’ predispositions to adopt particular processes. Biggs’ work, for example, investigated students’ motivations towards learning and the strategies that they tended to employ, ultimately generating a two-factor ‘motive-strategy’ model akin to the ‘deep/surface’ model proposed by the Gothenburg studies (Biggs, 1993).

Taken as a whole, the approaches to learning literature has had a profound impact on educational research, including at the higher education level, because it has highlighted student motivation as a crucial factor affecting learning processes and outcomes. Accordingly, the concept has played an important predictive function in research, being employed to explain differences in learning outcomes between students in empirical studies (see, for example, Diseth, 2002). Importantly, unlike other individual differences that have been found to affect learning (e.g. IQ measures), approaches to learning appear to be less fixed and stable (Biggs, 1993) and are therefore seen as potentially more ‘malleable’. Indeed, there is strong evidence that elements of the teaching context have a direct impact on the likelihood of students adopting deep or surface approaches (Biggs, 1993). The concept has, therefore, attracted considerable attention as a potential area for intervention. However, it has also been subject to important criticism over the years. Most importantly, there has been significant debate about the fundamental notion that students have only one general ‘approach’ to learning, given strong evidence that students actually employ different approaches depending on the context and/or task (Laurillard, 1979; Ramsden, 1979). This concern has motivated further research into the conditions that affect both students’ motivation to learn and the strategies they are likely to employ when faced with different academic tasks and environments, which, in turn, has inspired a myriad of interventions within schools and universities aimed at encouraging students to adopt ‘deeper’ approaches to learning more consistently throughout their educational careers (e.g. Norton and Crowley, 1995). 1

As theoretical interest in the concept of learning approaches has grown, so have attempts to empirically assess it. To date, the majority of studies that seek to evaluate learning approaches have used one of a small handful of inventories of learning processes. Of these, the most frequently cited are Biggs’ Study Process Questionnaire (SPQ) and Entwistle and Ramsden’s Approaches to Study Inventory (ASI). Both instruments seek to capture students’ motivations towards learning and the processes/strategies that they tend to employ when confronted with academic tasks. Both do so by presenting a battery of (usually Likert-scaled) attitudinal statements with which students must agree or disagree; and both have been validated for use in a variety of cultural and institutional contexts (e.g. Akande, 1998; Entwistle et al., 2000; Mogre and Amalba, 2014; Sulaiman et al., 2013).

However, there are concerns about the validity of these instruments. One common critique concerns their self-reported nature. Students may report particular behaviours because of social desirability bias and/or ‘acquiescence’ (Watkins and Regmi, 1995). Furthermore, responses to self-report questions may reflect learners’ impressions of what their approach to learning should be, rather than their actual behaviour when confronted with academic tasks (Mitchell, 1994). An additional critique concerns the one-off nature of the assessment. As discussed above, students may take markedly different approaches to learning depending on the context. There are, therefore, concerns about taking any assessment of learning approaches as indicative of a general state of being, or as a valid description of the student as a learner (Haggis, 2003). These concerns are exacerbated when the instruments are used in cross-cultural contexts (Richardson, 2004). In their analysis of the use of the ASI in South Africa, for example, Mogashana et al. (2012) identified three problems likely to lead students to select ‘incorrect’ (or at least inauthentic or unintended) responses: (a) statements that confused students; (b) statements in which one word triggered a problematic response; and (c) statements generating answers that would vary depending on the particular learning context (p. 788). These results cast doubt on the appropriateness of using such inventories across cultural contexts, particularly without additional validation with the specific population in question.

Methodology

Research objectives

The ‘Pedagogies for Critical Thinking’ (PCT) project is a mixed-methods study which investigates the impact of pedagogical reforms aimed at improving critical thinking skills within a sample of universities in Kenya, Ghana and Botswana. It follows a sample of students longitudinally, assessing their gains in critical thinking skills over a two-year period, and complements these quantitative results with qualitative analysis of the teaching and learning environment within each of the participating universities. The quantitative component of the design of the PCT project follows a ‘difference-in-difference’ approach, in which student gains in critical thinking across the research sites are compared, taking account of both individual student-level and institutional-level factors. These factors are measured using a student-background questionnaire and a student-experience questionnaire, in addition to the approaches to learning inventory discussed in this paper.
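To illustrate the logic of a ‘difference-in-difference’ comparison, the sketch below computes the simplest two-group, two-period estimate from group means: the mean gain in ‘innovation’ sites minus the mean gain in ‘control’ sites. It is a minimal sketch under stated assumptions, not the PCT project’s estimation code: the function name and all scores are hypothetical, and it omits the student-level and institutional-level factors that the PCT design takes into account.

```python
from statistics import mean


def did_estimate(treat_pre, treat_post, control_pre, control_post):
    """Two-group, two-period difference-in-difference estimate from group means.

    Each argument is a list of critical thinking scores for one group of
    sites at one time point. No covariates are adjusted for here.
    """
    treat_gain = mean(treat_post) - mean(treat_pre)        # gain in 'innovation' sites
    control_gain = mean(control_post) - mean(control_pre)  # gain in 'control' sites
    return treat_gain - control_gain


# Hypothetical scores (not PCT data): innovation sites gain 5 points on
# average and control sites gain 2, so the estimate is 5 - 2 = 3.
estimate = did_estimate([20, 22, 24], [25, 27, 29], [21, 23, 25], [23, 25, 27])
print(estimate)
```

Subtracting the control-site gain removes change that would have occurred without the pedagogical innovation (for example, ordinary maturation over two years), under the usual assumption that both groups would otherwise have followed parallel trends.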

With respect to measurement of approaches to learning, we were conscious of the limitations of existing inventories of learning approaches, as discussed above, especially their inability to effectively capture the diversity of approaches that each individual employs when faced with different learning contexts. However, we felt it was important to include some measure of student approaches to learning in our difference-in-difference design for two reasons: (a) we assumed that incoming student attitudes/approaches to learning would likely affect both their incoming critical thinking ability and their gains over time (meaning that they were likely to be an important confounding variable in our longitudinal analysis); and (b) we hypothesized that the pedagogical reforms under investigation might have an unintended impact on student learning approaches, in addition to their (intended) effect on student critical thinking skills. We therefore opted to include an inventory of learning approaches in our quantitative data collection and selected the revised two-factor version of the SPQ (Biggs et al., 2001) as our chosen instrument, owing to its extensive use in diverse cross-cultural contexts (see, for example, Phan and Deo, 2007). However, given our concerns with cross-cultural validity, we elected to begin with validation of the tool for use in the three country contexts before proceeding to the main analysis. This paper focuses on the results of that initial validation phase.

The research questions addressed in this paper are, therefore:

To what extent can the R-SPQ2F be considered a reliable and valid measure of approaches to learning in Ghana, Kenya and Botswana?

To what extent do students’ surface/deep approaches to learning (R-SPQ2F results) when first arriving at university differ according to the faculties in which they study? (In other words, are approaches to learning related to selection into particular universities in these contexts?)

To what extent do the results of the R-SPQ2F predict students’ incoming skills in critical thinking, including when conditioning on key student background factors?

Study sample

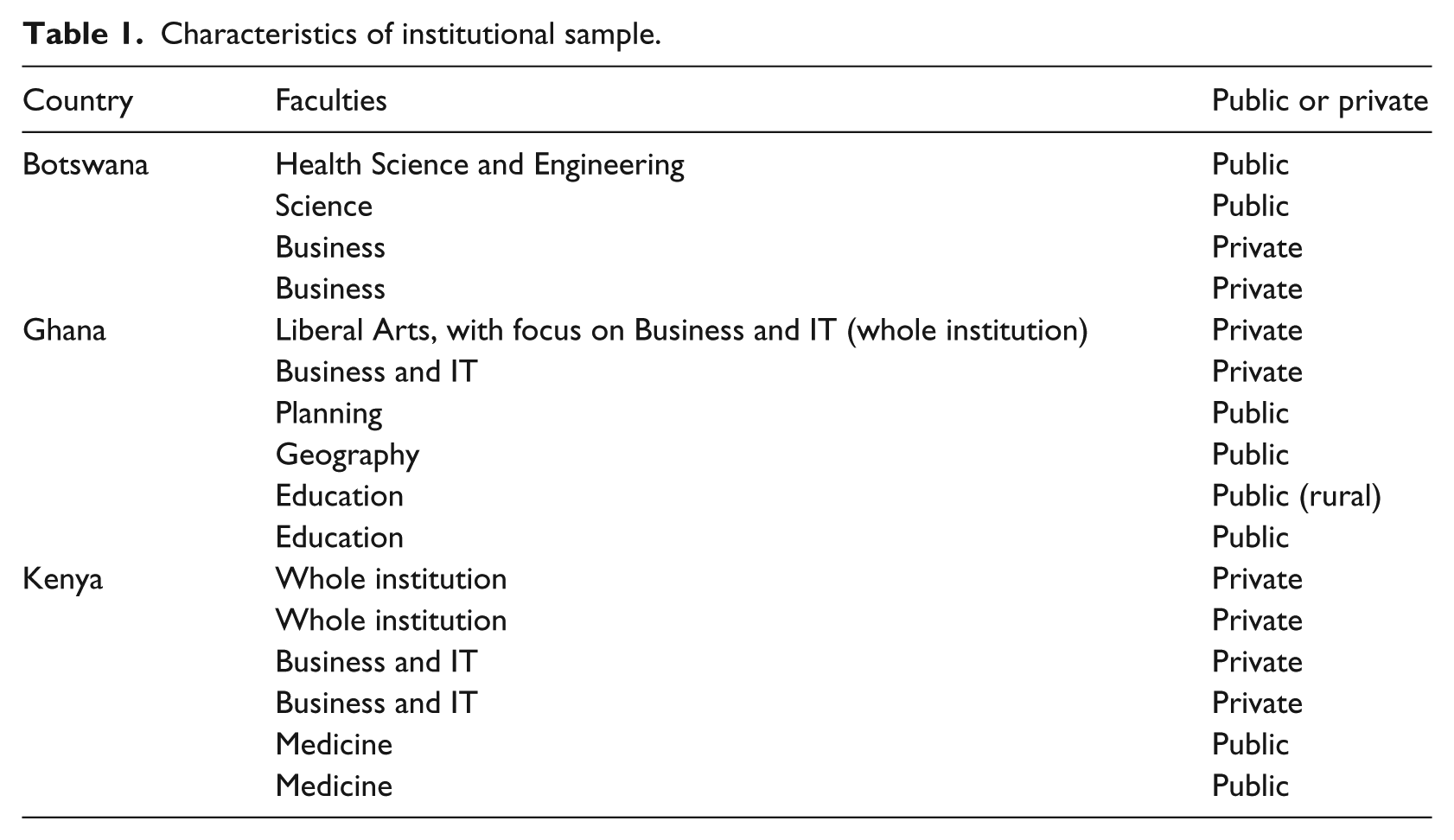

Students in the PCT study were randomly sampled from within 16 research sites. Most of these were university departments or faculties, but the institutional sample also included a few whole universities. Basic characteristics of the 16 research sites are outlined in Table 1.

Table 1. Characteristics of institutional sample.

As the PCT project overall aims to assess the effectiveness of eight pedagogical ‘innovations’, the institutional sample was purposively selected (with eight of the research sites being identified by stakeholders as sites in which ‘innovative’ pedagogies are in use and eight selected as appropriate ‘controls’, in which more traditional pedagogy is employed). As a result, the aggregated country-level samples in this paper are not representative of students nationally or sub-nationally and cannot be used for simple descriptive comparisons.

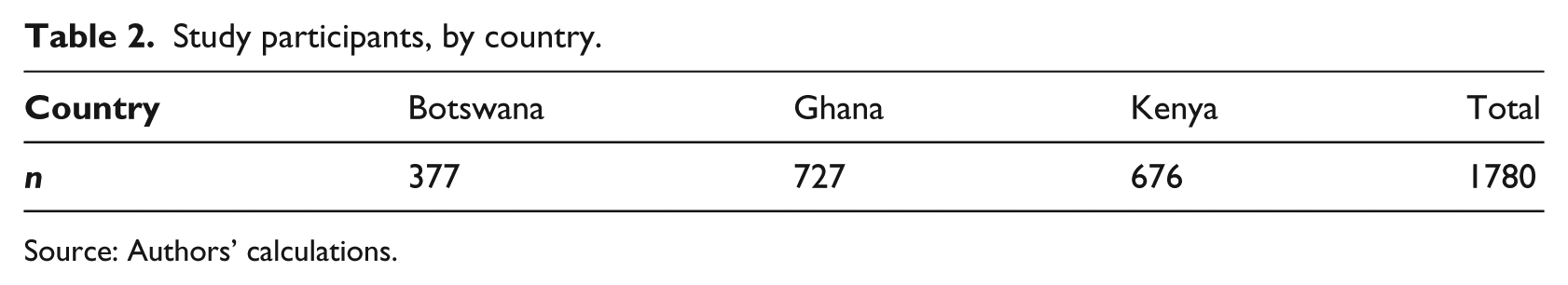

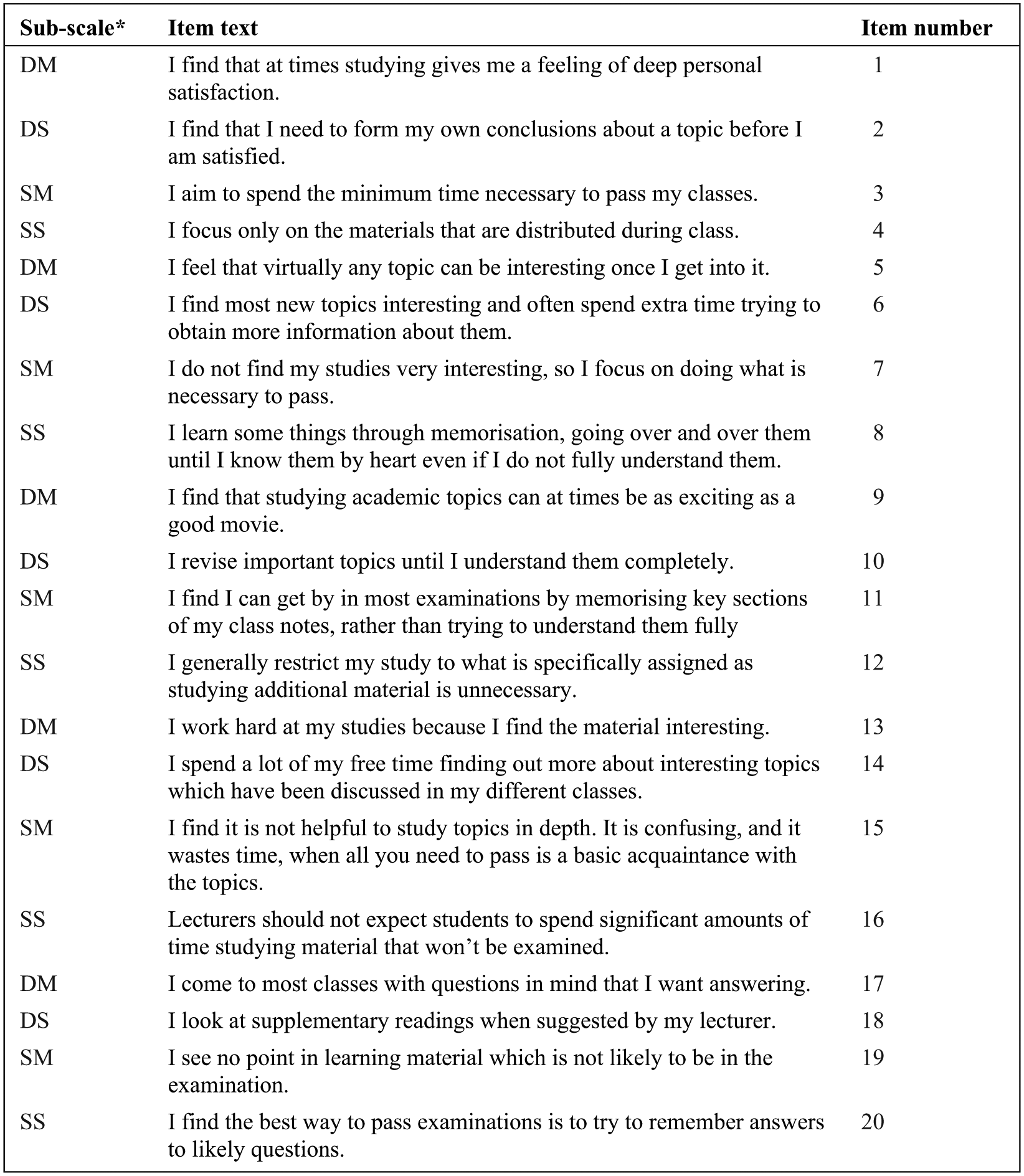

In terms of the student-level sample, a random sample of approximately 120 first-year undergraduate students was drawn from enrolment lists at each of the research sites. In this paper, we restrict our analysis to the baseline results, as this allows us to focus on the relationship between student approaches to learning and student critical thinking ability at the beginning of their time at university (and therefore to consider how approaches to learning may form part of the selection effect evident when comparing the teaching and learning environment in different universities). Focusing explicitly on the baseline results also allows us to avoid some of the problems associated with the one-off nature of the assessment, as we are interested in students’ general statements about how they learn at the time of entering university, rather than treating the results as indicative of all the strategies they might employ when faced with different kinds of academic tasks during their time at university.

At baseline, there were 1780 student participants; Table 2 disaggregates them by country. Table 3 reports some basic characteristics of the students in each of the three country samples. The sample is predominantly male in Ghana, while being gender-balanced in the other two countries. It is more advantaged in terms of educational background in Kenya, where more than four-fifths of students come from homes with at least one member with higher education. Even in Ghana, this figure is around three-fifths, indicating, as expected, that university students in all three countries are a somewhat advantaged group in this respect. Relatively few students had attended private schools, however, except in Kenya, where the proportion was slightly below one third. Incoming critical thinking scores are broadly similar across the three countries, though somewhat higher in Botswana.

Table 2. Study participants, by country.

Source: Authors’ calculations.

Table 3. Key characteristics of study participants, by country.

Including university or technical college.

Maximum score on the critical thinking assessment was 45. A detailed explanation of the critical thinking assessment used in the PCT study is available as PCT Technical Note 1 (Schendel and Rolleston, 2018).

Source: Authors’ calculations.

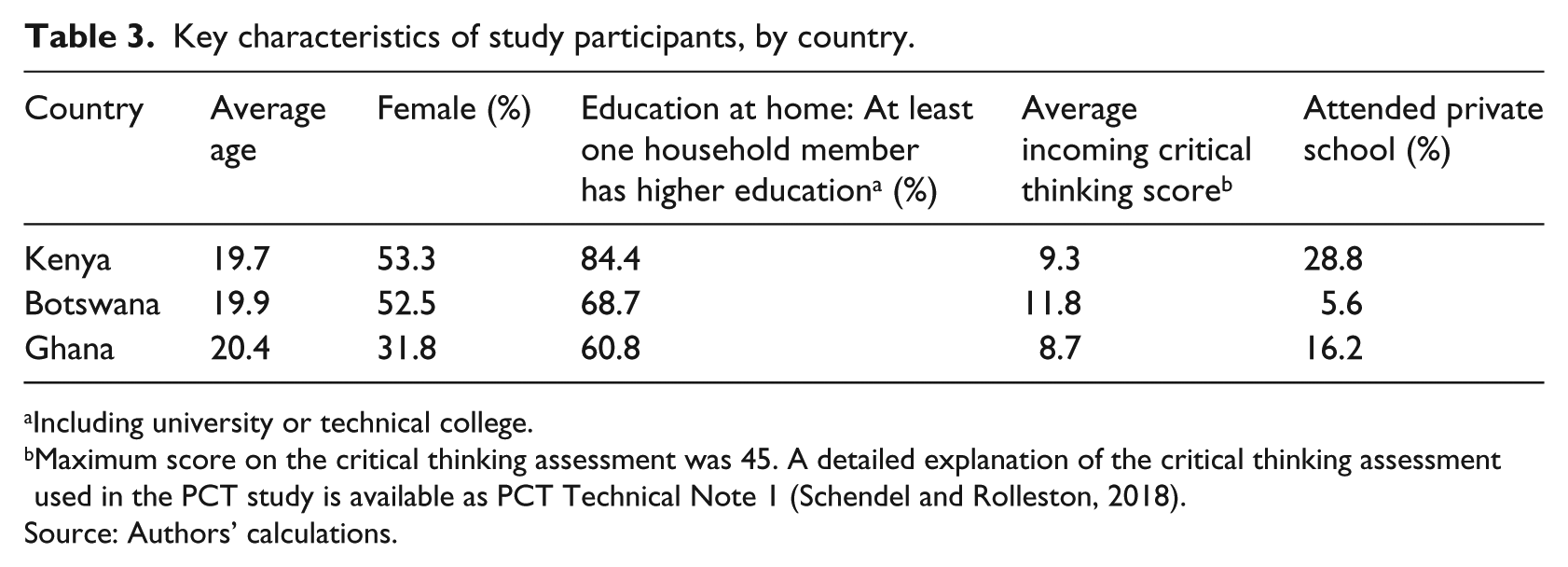

Adaptation, implementation and administration of R-SPQ2F

The R-SPQ2F (Biggs et al., 2001) is a self-administered 20-item questionnaire consisting of two main scales: (a) deep approach and (b) surface approach to learning. Each main scale has two subscales, relating to students’ attitudes to study (motive) and their ways of studying (strategy). The R-SPQ2F thus provides four subscale scores: (a) deep motive (DM), (b) deep strategy (DS), (c) surface motive (SM) and (d) surface strategy (SS). All items are scored on a four-point Likert scale, with students indicating whether each statement (see Figure 1) is ‘never or rarely’, ‘sometimes’, ‘often’ or ‘always or almost always’ true of them.
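The nesting of four five-item subscales within two ten-item main scales can be made concrete with a short scoring sketch. It assumes the item order of the original R-SPQ2F scoring key (Biggs et al., 2001), in which items cycle DM, DS, SM, SS; the modified PCT version may order items differently, and Figure 1 records the actual assignment. The `REVERSED` set is a hypothetical placeholder for any negatively phrased items and is empty here. Responses are coded 1–4, so each subscale ranges from 5 to 20 and each main scale from 10 to 40.

```python
# ASSUMPTION: item order follows the original R-SPQ2F key (Biggs et al., 2001);
# Figure 1 gives the sub-scale of each item in the modified PCT version.
SUBSCALES = {
    "DM": [1, 5, 9, 13, 17],   # deep motive
    "DS": [2, 6, 10, 14, 18],  # deep strategy
    "SM": [3, 7, 11, 15, 19],  # surface motive
    "SS": [4, 8, 12, 16, 20],  # surface strategy
}
# Hypothetical: items whose wording runs against their trait (none assumed).
REVERSED = set()


def item_score(item, response):
    """Map a 1-4 response so that 4 is always the highest on the trait."""
    if response not in (1, 2, 3, 4):
        raise ValueError(f"item {item}: response must be 1-4, got {response}")
    return 5 - response if item in REVERSED else response


def score_rspq2f(responses):
    """Simple additive scores from a dict {item_number: response 1-4}.

    Returns the four subscale sums (range 5-20) and the two main-scale
    sums (range 10-40).
    """
    scores = {
        name: sum(item_score(i, responses[i]) for i in items)
        for name, items in SUBSCALES.items()
    }
    scores["Deep"] = scores["DM"] + scores["DS"]
    scores["Surface"] = scores["SM"] + scores["SS"]
    return scores


# A hypothetical student answering 4 on every deep item and 1 on every
# surface item obtains the maximum deep score and the minimum surface score.
student = {i: 4 if i % 4 in (1, 2) else 1 for i in range(1, 21)}
print(score_rspq2f(student))
```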

Figure 1. Revised two-factor Study Process Questionnaire (R-SPQ2F) questions used in the PCT project.

Given the concerns raised by studies such as Mogashana et al. (2012), the validity of the instrument in the Kenyan, Ghanaian and Botswanan contexts needed to be examined prior to administering the R-SPQ2F. We therefore elected to conduct a qualitative pre-pilot of the instrument with a small sample of students in each of the study locations. During piloting, we met with two focus groups of approximately 10 undergraduate students in each of the study locations and asked them to share their understandings of, reactions to and interpretations of the question items on the R-SPQ2F. The focus groups confirmed that the statements did occasionally mean slightly different things to the students involved. Nonetheless, they demonstrated that the items were generally well understood and mostly culturally appropriate. However, following consultation with the pilot participants, we opted to slightly modify the wording of a small number of the statements, to improve clarity and reduce ambiguities that they had identified. We then piloted the instrument with a sample of 218 students across the three contexts to examine the likely reliability of the instrument before proceeding to include the R-SPQ2F in the full-scale study. Results from the pilot indicated relatively high levels of reliability given the small samples, with Cronbach’s alpha values of approximately 0.6. Figure 1 shows the full set of items administered in the final version of the questionnaire and records the sub-scale to which each item belongs – surface, deep, strategy and motivation.

Scoring the R-SPQ2F

Following Biggs, we then computed scores using a simple additive approach, providing a ‘deep’ and a ‘surface’ approach score for each student. Responses to each Likert-scale item were scored 1–4, with 4 being the highest with respect to the trait concerned (effectively reversing negatively phrased items). As each of the two approaches is addressed by half of the items (i.e. 10), the maximum score for each approach is 40. In addition, we employed a partial-credit model (PCM 2 ) based on item response theory (IRT) to estimate values for the latent traits ‘surface’ and ‘deep’. This second method has the potential advantage that, unlike the simple additive method, it does not automatically weight each item equally in the estimation of the trait values, allowing some items to carry greater weight in the resulting scale. For example, strong agreement that ‘studying provides a deep sense of personal satisfaction’ might be expected a priori to count for more in a measure of deep approaches to learning than ‘I come to most classes with questions in mind I want answering’, even though both are measures of ‘deep motivation’. The second method also provides estimates for students who failed to answer one or more questions, estimating a trait value from the questions for which responses are available. In effect, the model allows the thresholds between adjacent response categories (for example, between ‘never or rarely’ and ‘sometimes’) to differ across questions, as well as allowing different questions to carry different weight in the computation of scaled scores.
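The sketch below shows, under stated assumptions, how a partial credit model turns item-specific thresholds into category probabilities and how a trait estimate can still be formed when some items are unanswered. It uses the standard PCM category formula, in which the probability of category x is proportional to exp of the sum of (θ − δ_j) for j up to x, and an expected a posteriori (EAP) estimate with a standard normal prior, skipping missing items. The step parameters are hypothetical; in practice they are estimated from the data, and the paper does not state which software or estimator was used.

```python
import math


def pcm_probs(theta, steps):
    """Category probabilities for one item under the partial credit model.

    steps: step (threshold) parameters delta_1..delta_m for categories 0..m.
    Returns m + 1 probabilities summing to 1.
    """
    # Cumulative sums of (theta - delta_j); category 0 has an empty sum of 0.
    logits = [0.0]
    for delta in steps:
        logits.append(logits[-1] + (theta - delta))
    top = max(logits)  # subtract the maximum for numerical stability
    weights = [math.exp(v - top) for v in logits]
    total = sum(weights)
    return [w / total for w in weights]


def eap_theta(responses, item_steps, n_points=121, bound=4.0):
    """Expected a posteriori trait estimate with a standard normal prior.

    responses: dict item -> category (0..m), or None when unanswered.
    item_steps: dict item -> list of step parameters (hypothetical values).
    Unanswered items are skipped, so only observed responses are used.
    """
    grid = [-bound + 2 * bound * k / (n_points - 1) for k in range(n_points)]
    posterior = []
    for theta in grid:
        weight = math.exp(-0.5 * theta * theta)  # unnormalised N(0, 1) prior
        for item, category in responses.items():
            if category is not None:
                weight *= pcm_probs(theta, item_steps[item])[category]
        posterior.append(weight)
    total = sum(posterior)
    return sum(t * w for t, w in zip(grid, posterior)) / total


# Hypothetical step parameters for ten 'deep' items with four categories each.
steps = {item: [-1.0, 0.0, 1.0] for item in range(1, 11)}
# Responses 1-4 are recoded to categories 0-3; item 10 was left unanswered.
raw = {1: 4, 2: 3, 3: 4, 4: 3, 5: 4, 6: 3, 7: 4, 8: 3, 9: 4, 10: None}
coded = {i: (r - 1 if r is not None else None) for i, r in raw.items()}
theta_hat = eap_theta(coded, steps)
print(round(theta_hat, 2))
```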

Confirmatory factor analysis

In the literature on the R-SPQ2F in other contexts, both theory and empirical studies support the intended two-factor structure of the tool (Biggs et al., 2001). Although our pilot had suggested that the underlying construct (i.e. approaches to learning) could be considered valid in the study contexts prima facie, it was also necessary to validate the two-factor structure empirically before beginning the analysis, in order to confirm that the questionnaire would operate in a similar manner in our three study contexts. We opted to use confirmatory factor analysis (CFA) for this process, as outlined below.

Predictive validity and the relationship between approaches to learning and critical thinking

In addition, we sought to examine the extent to which a measure of approaches to learning might be associated with the learning outcome of interest in our study, namely critical thinking. Subject to adequate functioning of the inventory in context, we hypothesized that students whose approach to learning identified more closely with a ‘deep’ approach and less closely with a ‘surface’ approach would demonstrate higher levels of critical thinking skills. Any such association is difficult to interpret causally, because students whose approach may be described as ‘deep’ are likely to differ in important ways from those with a ‘surface’ approach, and many of these differences may also explain differences in incoming critical thinking ability. Factors that might be expected to correlate with both critical thinking and approaches to learning include home background, previous educational (school) experience, gender and area of residence. We elected to examine these relationships using a simple descriptive regression modelling approach, aiming to identify the extent to which an apparent influence of approaches to learning remains after controlling for some key possible confounders.
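Why controlling for confounders matters can be seen in a small simulation. The sketch below assumes ordinary least squares (the paper does not specify its estimator) and uses entirely simulated data in which a hypothetical ‘home background’ variable raises both deep-approach scores and critical thinking. Omitting it inflates the apparent ‘deep’ coefficient; including it recovers the simulated effect of 0.5.

```python
import numpy as np


def ols(y, predictors):
    """Ordinary least squares with an intercept; returns the coefficient array.

    predictors: 2-D array of shape (n_students, k) holding the regressors.
    """
    X = np.column_stack([np.ones(len(y)), predictors])
    coef, *_ = np.linalg.lstsq(X, y, rcond=None)
    return coef


# Simulated, hypothetical data (not PCT data).
rng = np.random.default_rng(0)
n = 2000
home = rng.normal(size=n)                      # confounder, e.g. home background
deep = 0.6 * home + rng.normal(size=n)         # deep score partly driven by home
surface = -0.3 * home + rng.normal(size=n)
critical = 2.0 * home + 0.5 * deep - 1.0 * surface + rng.normal(size=n)

raw = ols(critical, np.column_stack([deep, surface]))
adjusted = ols(critical, np.column_stack([deep, surface, home]))
print("deep coefficient, no controls:", round(raw[1], 2))
print("deep coefficient, controlling for home:", round(adjusted[1], 2))
```

In the study itself the controls would be the observed background factors listed above; unobserved confounders would still bias the estimates, which is why the paper treats the regressions as descriptive.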

Results and discussion

Exploring the validity of the R-SPQ2F in context

Distributions of measured approaches to learning

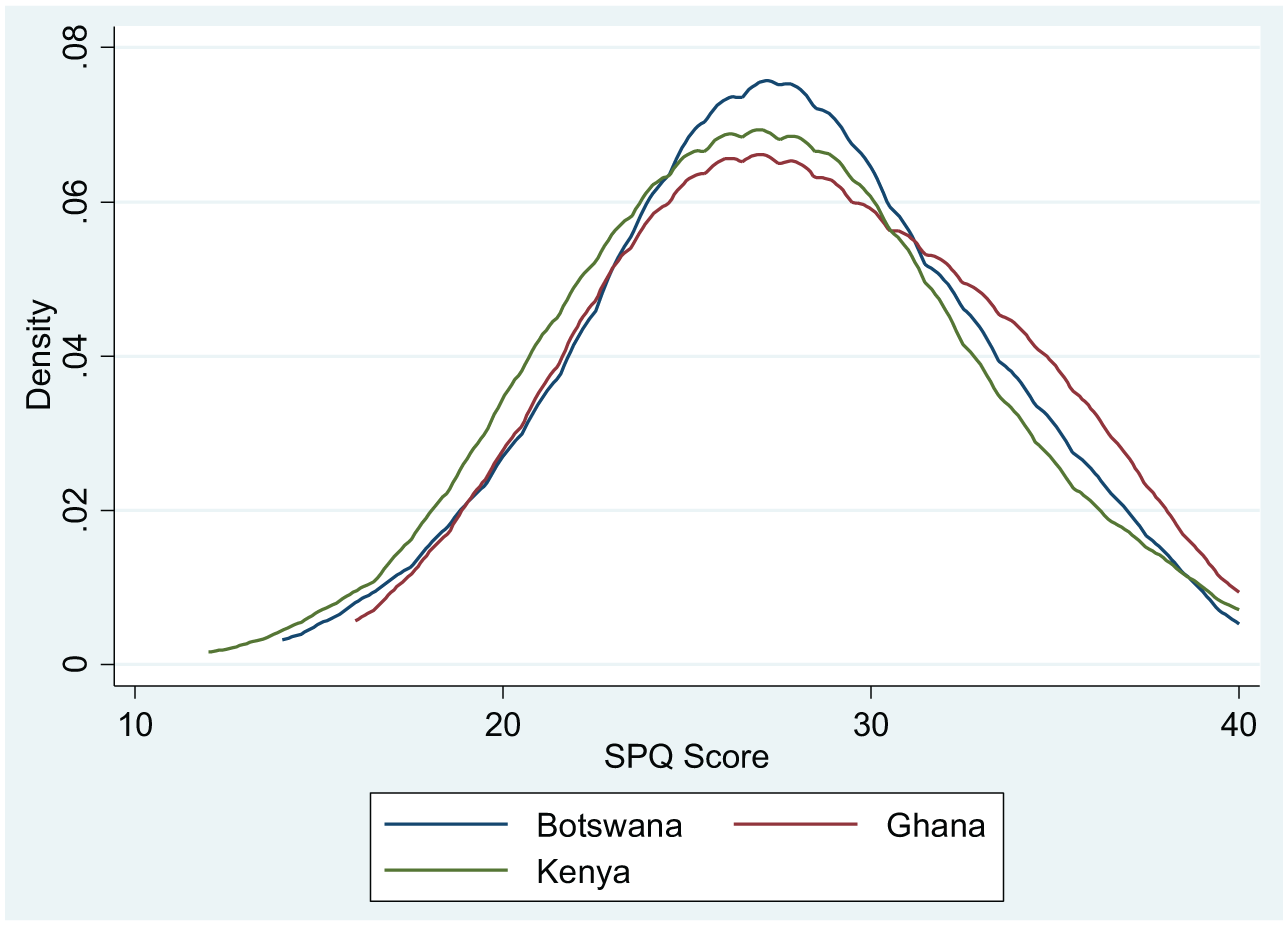

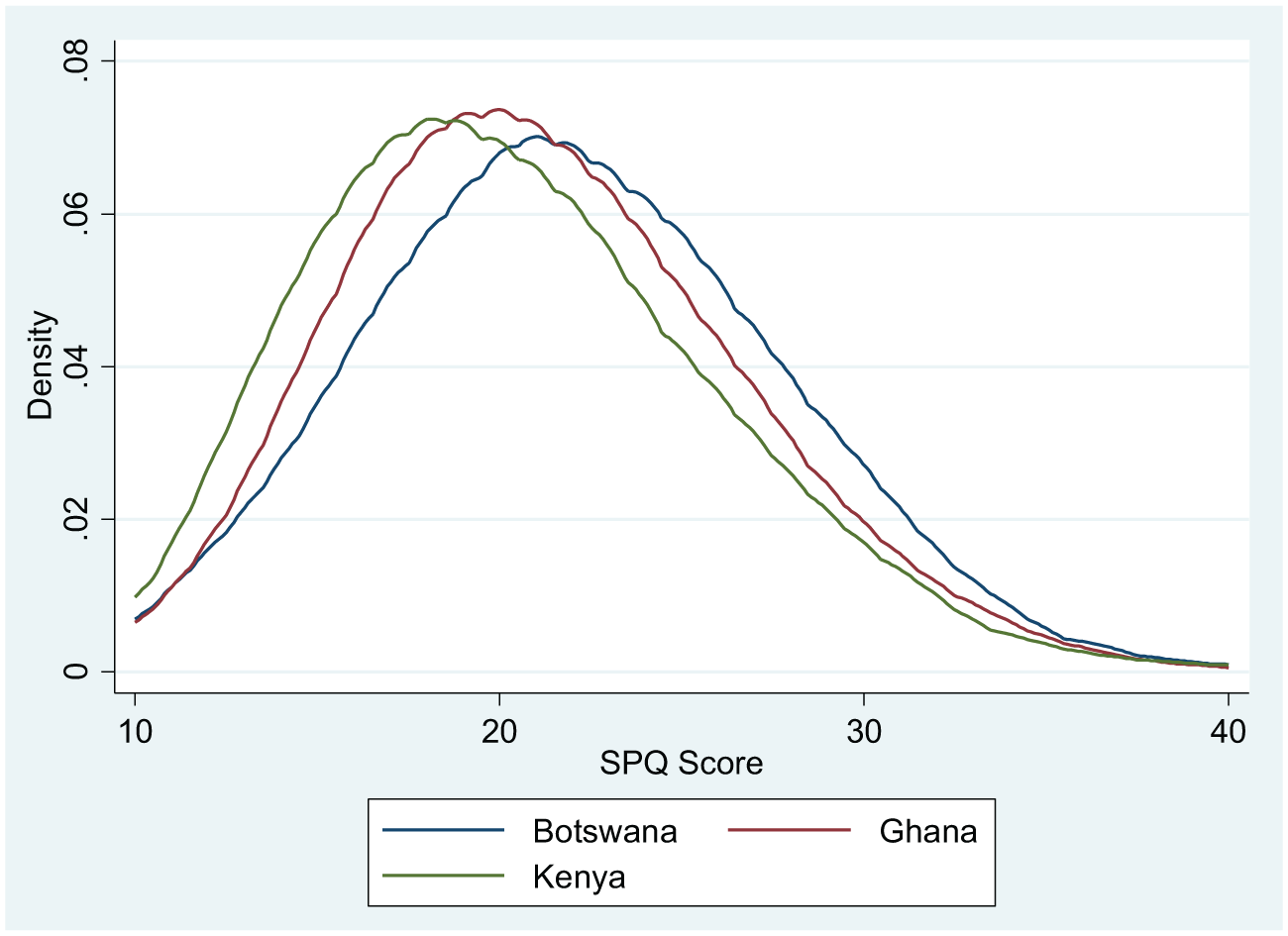

Figures 2 and 3 present the distributions of scale scores (computed using the simple additive approach described above), first for the ‘deep’ approach and second for the ‘surface’ approach, for each country sample. 3 For the ‘deep’ approach, the results approximate a normal distribution in each country and are very similar overall, with slightly greater central tendency in Botswana and slightly more positive (rightward) skew in Ghana. For the ‘surface’ approach, positive skew is apparent in all three countries, while responses in Botswana show a somewhat higher mean level of ‘surface’ approach than those in Ghana. Table 4 shows the full results. Cultural differences, as well as sampling issues, affect response patterns across these samples, so direct cross-country comparisons of descriptive results are informative only to a limited extent. These distributions nonetheless show that the R-SPQ2F detects a good (and notably similar) range of variation in responses among students in the three countries, potentially reflecting meaningful variation in the underlying traits of interest.

Figure 2. Distributions of ‘deep’ approach to learning in Ghana, Kenya and Botswana.

Figure 3. Distributions of ‘surface’ approach to learning in Ghana, Kenya and Botswana.

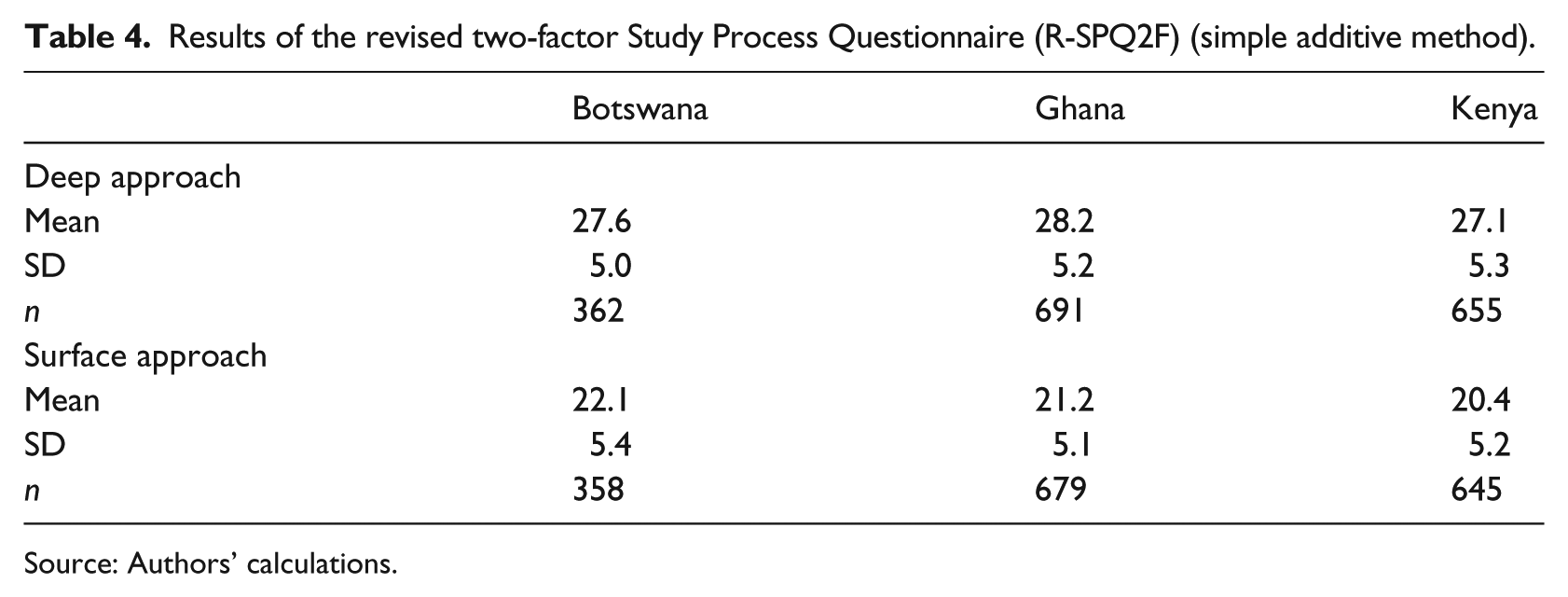

Table 4. Results of the revised two-factor Study Process Questionnaire (R-SPQ2F) (simple additive method).

Source: Authors’ calculations.

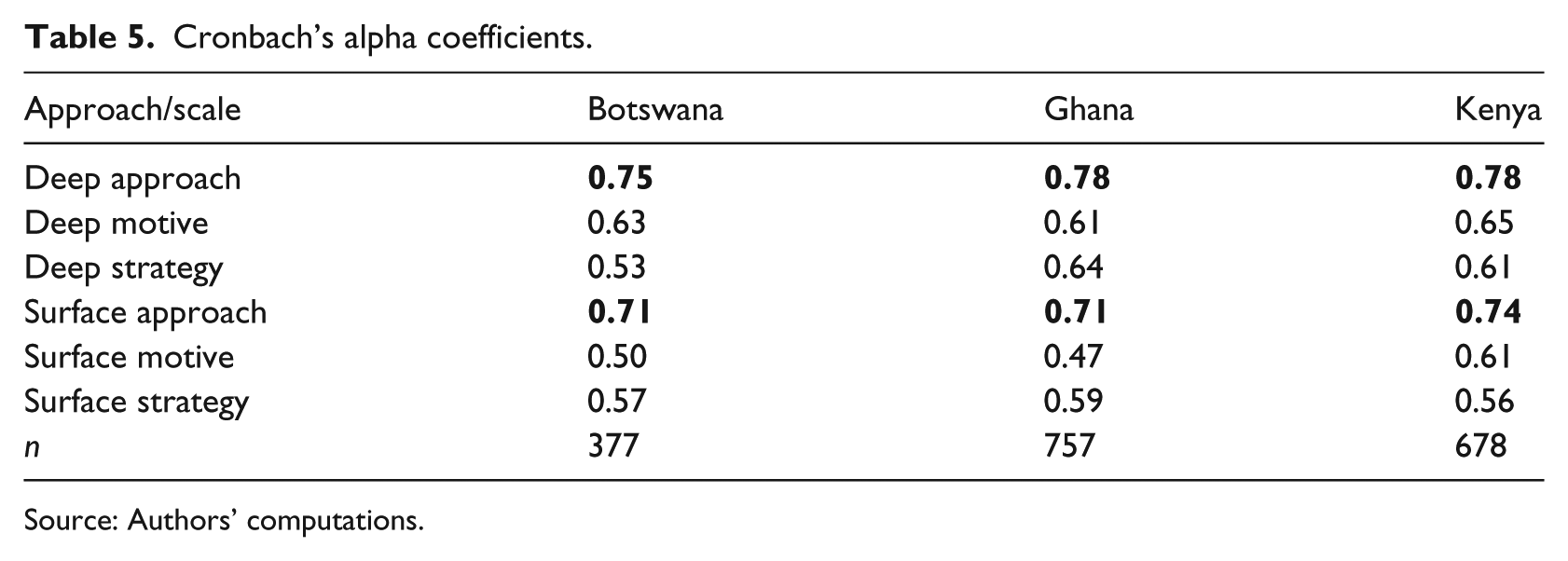

Scale reliability and internal consistency of the R-SPQ2F

Table 5 reports the internal consistency (reliability) statistics for the two main scales and four sub-scales in each of the three countries, using Cronbach’s alpha as a simple indicator of the coherence of responses on each scale. A value of 0.7 is commonly taken to represent acceptable scale reliability, that is, sufficient consistency of responses to consider that the items on an instrument measure a single construct. For the two main scales, this level of consistency is reached in all countries. The sub-scales contain fewer items, so high levels of consistency are more difficult to achieve; their values fall below the 0.7 threshold but are in many cases close to 0.6, which may be considered to indicate moderate reliability.
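A short sketch makes both the alpha statistic and the point about scale length concrete. `cronbach_alpha` implements the usual formula, alpha = k/(k − 1) × (1 − sum of item variances / variance of total scores). `standardized_alpha` uses the standardized form, k·r̄ / (1 + (k − 1)·r̄), to show how, for a fixed average inter-item correlation r̄, a 5-item sub-scale yields a lower alpha than a 10-item main scale. The correlation of 0.2 is a hypothetical value chosen for illustration, not an estimate from the PCT data.

```python
import numpy as np


def cronbach_alpha(items):
    """Cronbach's alpha for an (n_respondents, k_items) array of item scores.

    alpha = k / (k - 1) * (1 - sum(item variances) / variance(total score)).
    Respondents with missing answers should be dropped beforehand.
    """
    items = np.asarray(items, dtype=float)
    k = items.shape[1]
    item_vars = items.var(axis=0, ddof=1)
    total_var = items.sum(axis=1).var(ddof=1)
    return k / (k - 1) * (1.0 - item_vars.sum() / total_var)


def standardized_alpha(k, mean_r):
    """Alpha implied by k items with average inter-item correlation mean_r."""
    return k * mean_r / (1.0 + (k - 1) * mean_r)


# Same hypothetical average correlation (0.2), different numbers of items:
# 5 items (a sub-scale) versus 10 items (a main scale).
print(round(standardized_alpha(5, 0.2), 2), round(standardized_alpha(10, 0.2), 2))
```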

Table 5. Cronbach’s alpha coefficients.

Source: Authors’ computations.

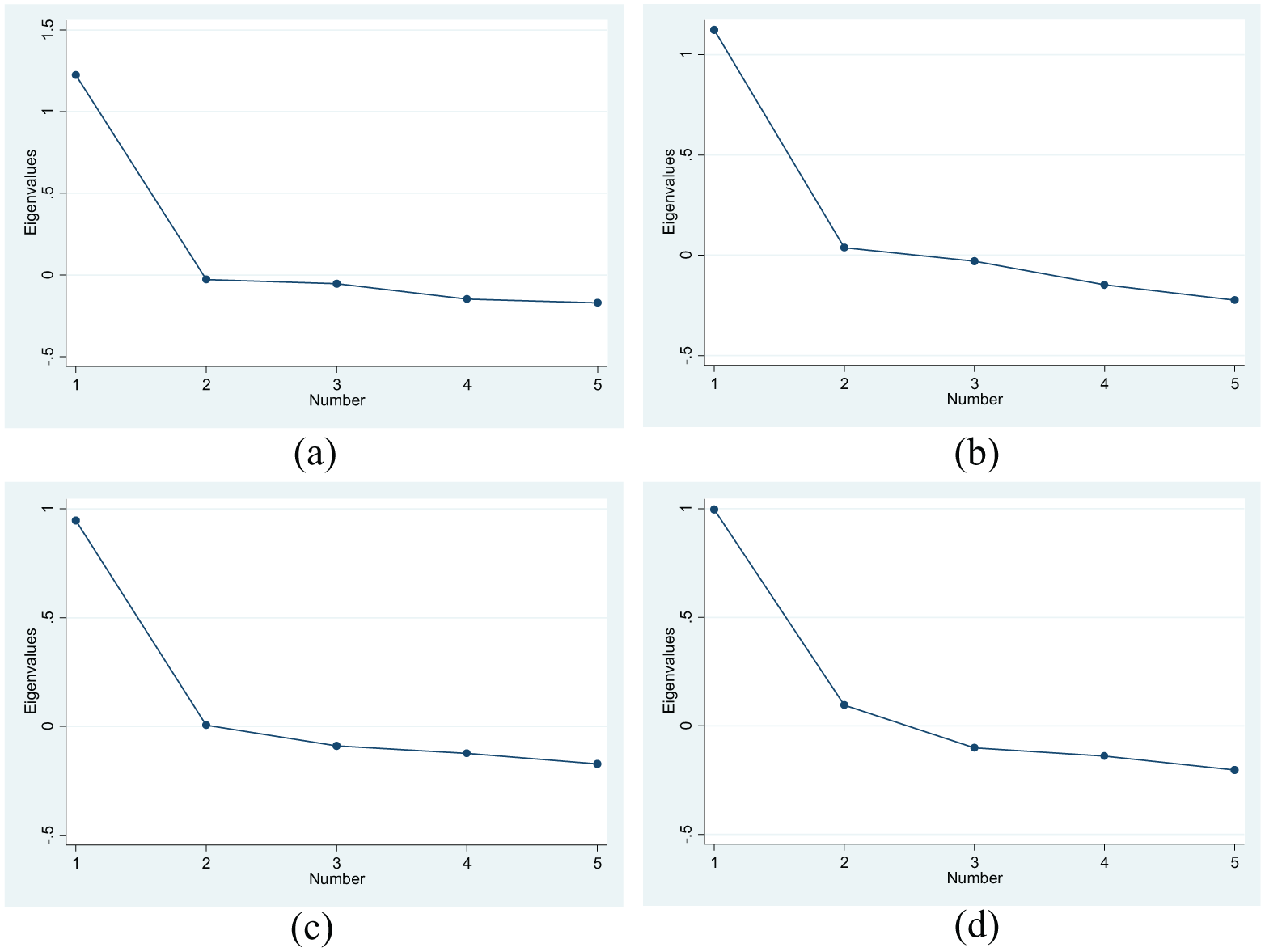

Given the similar values for reliability, means and standard deviations across countries, and the relatively small in-country samples, the data were pooled across countries to conduct factor analysis at the sub-scale level. The scree plots 4 presented in Figure 4 provide an overview of the results. For each sub-scale there is a pronounced ‘elbow’ after the first factor, indicating strong evidence for a single-factor explanation of the variation in responses to the set of questions designed for that sub-scale.
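A scree plot graphs eigenvalues against their rank, and a single dominant eigenvalue followed by a flat tail is the ‘elbow’ pattern that points to one factor. The sketch below computes eigenvalues of the item correlation matrix for simulated data in which five items share one common factor. This is an assumption about the computation: factor-analysis software may instead plot eigenvalues of a reduced correlation matrix (with communalities on the diagonal), which changes the values but usually not the shape.

```python
import numpy as np


def scree_eigenvalues(items):
    """Eigenvalues of the item correlation matrix, largest first."""
    corr = np.corrcoef(np.asarray(items, dtype=float), rowvar=False)
    return np.linalg.eigvalsh(corr)[::-1]  # eigvalsh returns ascending order


# Simulated, hypothetical data: five items driven by one common factor, so
# each pair of items has a population correlation of 0.5.
rng = np.random.default_rng(1)
factor = rng.normal(size=1000)
items = np.column_stack(
    [0.7 * factor + rng.normal(scale=0.7, size=1000) for _ in range(5)]
)
eigenvalues = scree_eigenvalues(items)
# Population eigenvalues here: 3 for the first, 0.5 for each of the others.
print(np.round(eigenvalues, 2))
```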

Figure 4. Scree plots after factor analysis of approaches to learning sub-scales: (a) deep motivation; (b) deep strategy; (c) surface motivation; and (d) surface strategy.

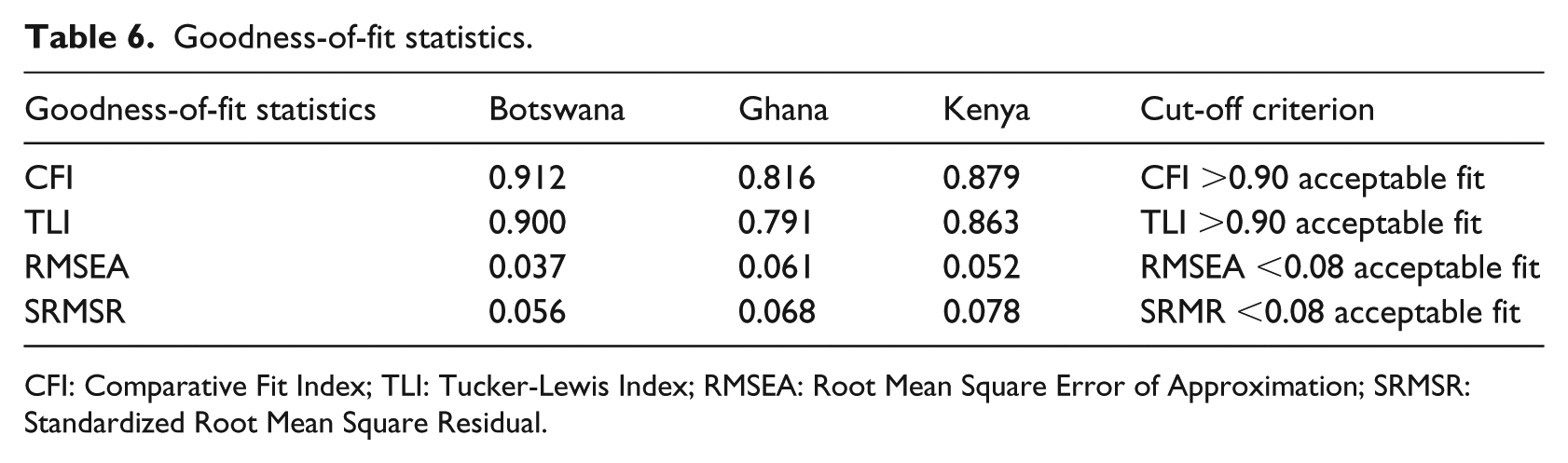

Factor structure

We reviewed the goodness-of-fit statistics (see Table 6) to verify whether the two-factor model described by Biggs et al. (2001) adequately fitted the data, according to the most commonly employed goodness-of-fit tests and their usual cut-off levels. For Botswana, the values of the CFI (Comparative Fit Index) and TLI (Tucker-Lewis Index) are above the cut-off criteria and the values of the RMSEA (Root Mean Square Error of Approximation) and SRMSR (Standardized Root Mean Square Residual) are below them, in each case indicating a good fit of the model. In Ghana and Kenya, all four goodness-of-fit values narrowly miss the cut-off criteria, slightly more so in Ghana, but may nonetheless be considered to indicate a reasonable or adequate fit of the model for these two countries.
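Three of the four indices can be computed from the chi-square statistics of the fitted model and of the baseline (independence) model, which makes clear why CFI and TLI must be high and RMSEA low. The sketch uses the standard formulas; the cut-offs (CFI and TLI of at least 0.95, RMSEA of at most 0.06) are an assumption based on commonly cited values, and Table 6 gives the criteria actually applied. SRMSR is omitted because it requires the residual correlation matrix, not just chi-square. All input statistics below are hypothetical.

```python
import math


def fit_indices(chi2_m, df_m, chi2_b, df_b, n):
    """CFI, TLI and RMSEA from model (m) and baseline (b) chi-square statistics.

    The baseline is the independence model; n is the sample size. Some
    software uses n rather than n - 1 in the RMSEA denominator.
    """
    d_m = max(chi2_m - df_m, 0.0)   # model non-centrality estimate
    d_b = max(chi2_b - df_b, d_m)   # baseline non-centrality (at least d_m)
    cfi = 1.0 - d_m / d_b if d_b > 0 else 1.0
    tli = (chi2_b / df_b - chi2_m / df_m) / (chi2_b / df_b - 1.0)
    rmsea = math.sqrt(d_m / (df_m * (n - 1)))
    return {"CFI": cfi, "TLI": tli, "RMSEA": rmsea}


# ASSUMED cut-offs; higher is better for CFI/TLI, lower is better for RMSEA.
CUTOFFS = {"CFI": (">=", 0.95), "TLI": (">=", 0.95), "RMSEA": ("<=", 0.06)}


def meets_cutoffs(indices):
    """Return {index_name: True/False} against the assumed cut-offs."""
    return {
        name: indices[name] >= c if op == ">=" else indices[name] <= c
        for name, (op, c) in CUTOFFS.items()
    }


# Hypothetical statistics for a 20-item, two-factor model (not Table 6 values).
idx = fit_indices(chi2_m=300.0, df_m=169, chi2_b=3000.0, df_b=190, n=600)
print({k: round(v, 3) for k, v in idx.items()}, meets_cutoffs(idx))
```

In this hypothetical case the model passes on CFI and RMSEA but narrowly misses on TLI, illustrating how a fit can be judged ‘reasonable or adequate’ without meeting every criterion.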

Table 6. Goodness-of-fit statistics.

CFI: Comparative Fit Index; TLI: Tucker-Lewis Index; RMSEA: Root Mean Square Error of Approximation; SRMSR: Standardized Root Mean Square Residual.

To examine the fit of the two-factor model further, we also examine the ‘factor loadings’ 5 for each country separately. For all three countries, the factor loadings on individual items are reasonably high, being close to 0.5 in many cases (see Appendix 1 for detailed results). This indicates that the constructs (factors) provide a reasonable account of the patterns of responses to the items identified as belonging to them. Further, the correlation between surface and deep motivation is negative in all three countries, as expected under Biggs’ conceptual framework. Deep and surface approaches are nonetheless by no means entirely antithetical, and, as discussed in the next section, response patterns in some faculties are somewhat unexpected in the light of Biggs’ framework.

Discussion

Taken together, the results of the qualitative pre-pilot and the full-study empirical validation exercise give no substantial reason to doubt that the R-SPQ2F can be used as a valid measure of student approaches to learning in the three study contexts (research question 1). Although there are minor variations in the patterns of response by country, which suggest some differences in the interpretation of certain items or in response propensities (e.g. the extent of social desirability bias in particular cultures), the two-factor structure proposed by Biggs appears to hold as expected, and the response patterns across the three countries are similar enough to render the data broadly comparable for the purposes of analysis. This implies that university students in Ghana, Kenya and Botswana do tend to describe their preferred approaches to learning in one of two qualitatively different ways, which suggests that approaches to learning may indeed help to explain differential learning outcomes within the three study contexts.

Exploring approaches to learning as a selection effect

Results

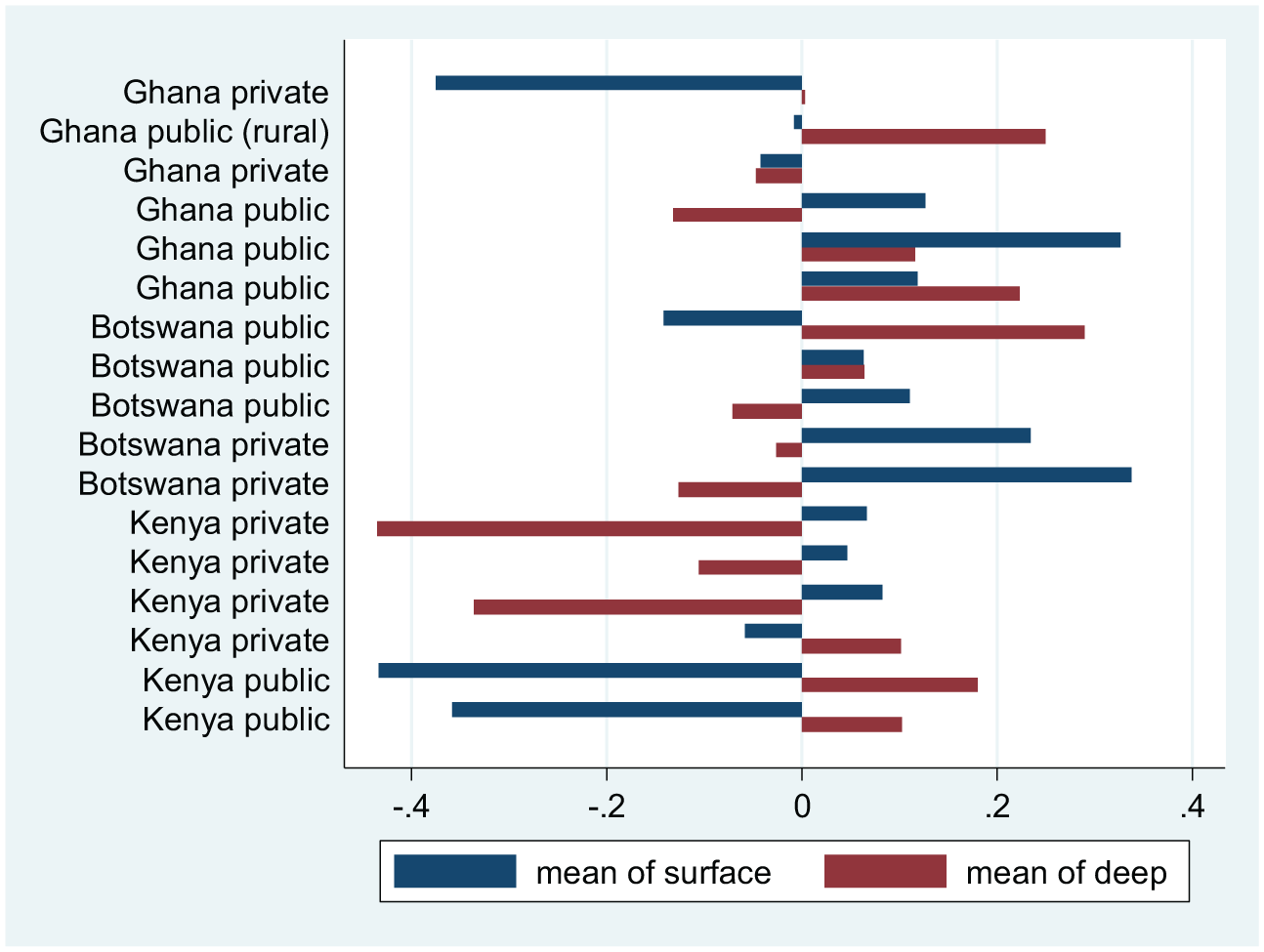

Figure 5 illustrates the patterns of deep and surface approaches to learning at baseline in each of the participating faculties (anonymized), using the IRT-PCM scaled scores (centred on zero). These vary widely, but in partly interpretable ways. In Kenya, for example, students from the two medical faculties in the sample (both based at prestigious public universities) showed relatively high scores on ‘deep’ approaches and very low scores on ‘surface’ approaches, whereas students enrolling in three of the four private universities in our Kenyan sample – and in both of the private universities in Botswana – demonstrated the opposite pattern (i.e. high ‘surface’ scores and low ‘deep’ scores). Other patterns, especially in Ghana, are more difficult to interpret, particularly where, as in two public university faculties in Ghana, students scored positively on both surface and deep approaches to learning (relative to the whole three-country sample, centred on zero). The less clear pattern in Ghana may be considered consistent with the weaker fit of the two-factor model in the Ghanaian data.

Figure 5. ‘Deep’ and ‘surface’ approaches to learning by faculty.

Discussion

The results outlined in this section demonstrate that there are significant differences between the participating faculties in terms of incoming students’ approaches to learning. Some of these differences clearly relate to ‘selection’, that is, differences in who attends the various faculties, but it is less clear whether ‘selection’ is primarily a matter of selection by universities during admissions or of students’ own choice of institution. It appears likely that both explanations are at work, given that, unlike in many other African contexts, students in Ghana, Kenya and Botswana can choose which university they attend (subject to their budgets and to meeting entry criteria). However, the data provide only limited scope for examining these selection issues in detail.

The results are further complicated by the fact that our faculty sample includes different academic subjects and includes both full institutions (smaller universities) and individual faculties within (larger) institutions. There are likely to be selection effects related to students’ choice of particular fields of study (for example, medicine) as well as particular institutions (e.g. for reputational reasons or because particular institutions prioritize particular things during admissions).

What the data do show clearly is that approaches to learning, as measured via the R-SPQ2F, are not randomly distributed across the faculty samples. Rather, some faculties within our sample (e.g. medical faculties) have clearly recruited more students with ‘deep’ approaches to learning than others. One might expect these ‘selection effects’ to be linked to qualitative differences in the learning environments of the institutions in the study, but the evidence on this is unclear. It is possible that prestigious universities (and courses) are better able to select students who tend to demonstrate a ‘deep’ approach, or that students more likely to demonstrate a ‘deep’ approach prefer these institutions and courses. For a study of pedagogy, these orientations are, at least initially, causally unrelated to teaching at the university, since no teaching has yet occurred. They nonetheless matter for a study that sets out to explain learning progress (gains over time) within higher education institutions: if unobserved, incoming approaches to learning are likely to be an important source of ‘omitted variable bias’, to the extent that they are linked both to learning progress and to the selection of courses and institutions.
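The omitted-variable concern can be stated with the textbook result for a ‘short’ regression. As an illustration only (this notation is ours, not the study’s), let $y_i$ be a student’s learning gain, $x_i$ a hypothetical measure of exposure to some teaching practice, and $z_i$ the student’s incoming approach to learning. Assuming the true model is linear, with an error term uncorrelated with both $x_i$ and $z_i$, regressing $y_i$ on $x_i$ alone gives a slope that converges to:

```latex
y_i = \alpha + \beta x_i + \gamma z_i + \varepsilon_i
\quad\Longrightarrow\quad
\operatorname{plim} \hat{\beta}_{\text{short}}
  = \beta + \gamma \, \frac{\operatorname{Cov}(x_i, z_i)}{\operatorname{Var}(x_i)}
```

The bias term vanishes only if incoming approaches do not affect gains ($\gamma = 0$) or are uncorrelated with exposure ($\operatorname{Cov}(x_i, z_i) = 0$); selection of students into courses and institutions on the basis of their approaches to learning is precisely what makes the covariance non-zero.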

Exploring the relationship between critical thinking and approaches to learning

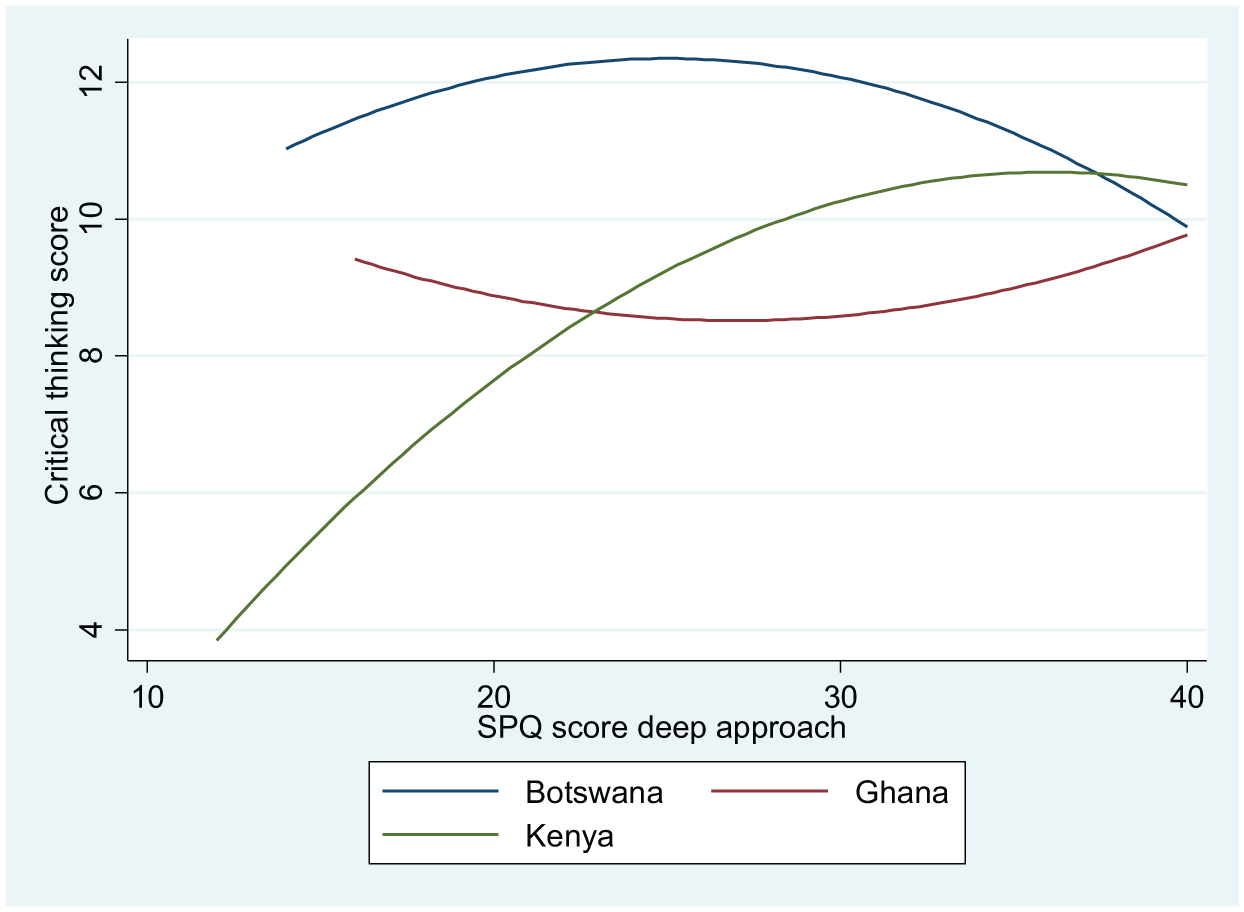

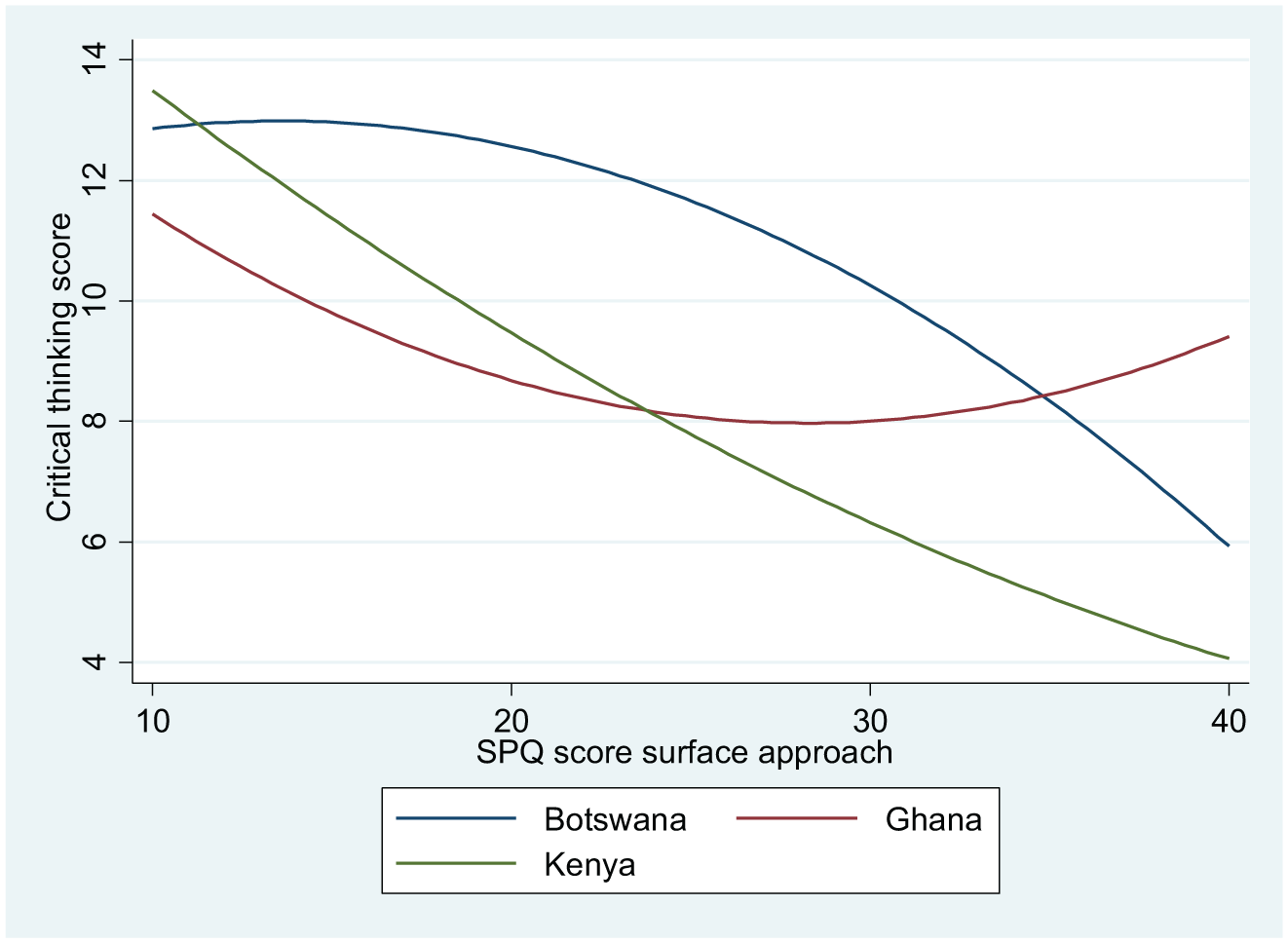

Figures 6 and 7 illustrate the relationships between students’ baseline critical thinking scores and, respectively, their incoming deep and surface approaches to learning trait estimates (using IRT-PCM). Each bivariate relationship is modelled as a quadratic curve. In Botswana and Ghana, there appears to be little relationship between deep approaches to learning and critical thinking, whereas in Kenya students with a stronger ‘deep’ approach show higher critical thinking scores. For the surface approach, however, a clearer pattern emerges across all three countries: a fairly strong negative bivariate relationship, again most pronounced in Kenya.
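A quadratic bivariate fit of the kind described above can be sketched with NumPy’s `polyfit`. This is an illustration under stated assumptions, not the authors’ code: the function names are hypothetical, and the data are simulated rather than drawn from the study.

```python
import numpy as np

def fit_quadratic(trait, score):
    """Fit score = b0 + b1*trait + b2*trait**2 by least squares.

    Returns the coefficients ordered (b0, b1, b2)."""
    trait = np.asarray(trait, dtype=float)
    score = np.asarray(score, dtype=float)
    # np.polyfit returns the highest-degree coefficient first: [b2, b1, b0]
    b2, b1, b0 = np.polyfit(trait, score, deg=2)
    return b0, b1, b2

def predict_quadratic(coefs, trait):
    """Evaluate the fitted quadratic curve at the given trait values."""
    b0, b1, b2 = coefs
    trait = np.asarray(trait, dtype=float)
    return b0 + b1 * trait + b2 * trait**2

# Hypothetical illustration: simulated trait scores centred on zero (NOT study data)
rng = np.random.default_rng(0)
surface = rng.normal(0.0, 1.0, size=500)
critical = 10.0 - 1.0 * surface - 0.1 * surface**2 + rng.normal(0.0, 2.0, size=500)

coefs = fit_quadratic(surface, critical)
grid = np.linspace(-2.0, 2.0, 9)        # trait values at which to draw the curve
curve = predict_quadratic(coefs, grid)  # fitted critical thinking scores
```

Including the squared term lets the fitted curve bend, so a relationship that flattens or steepens at the extremes of the trait distribution is not forced into a straight line.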

Relationship between critical thinking scores and ‘deep’ approach.

Relationship between critical thinking scores and ‘surface’ approach.

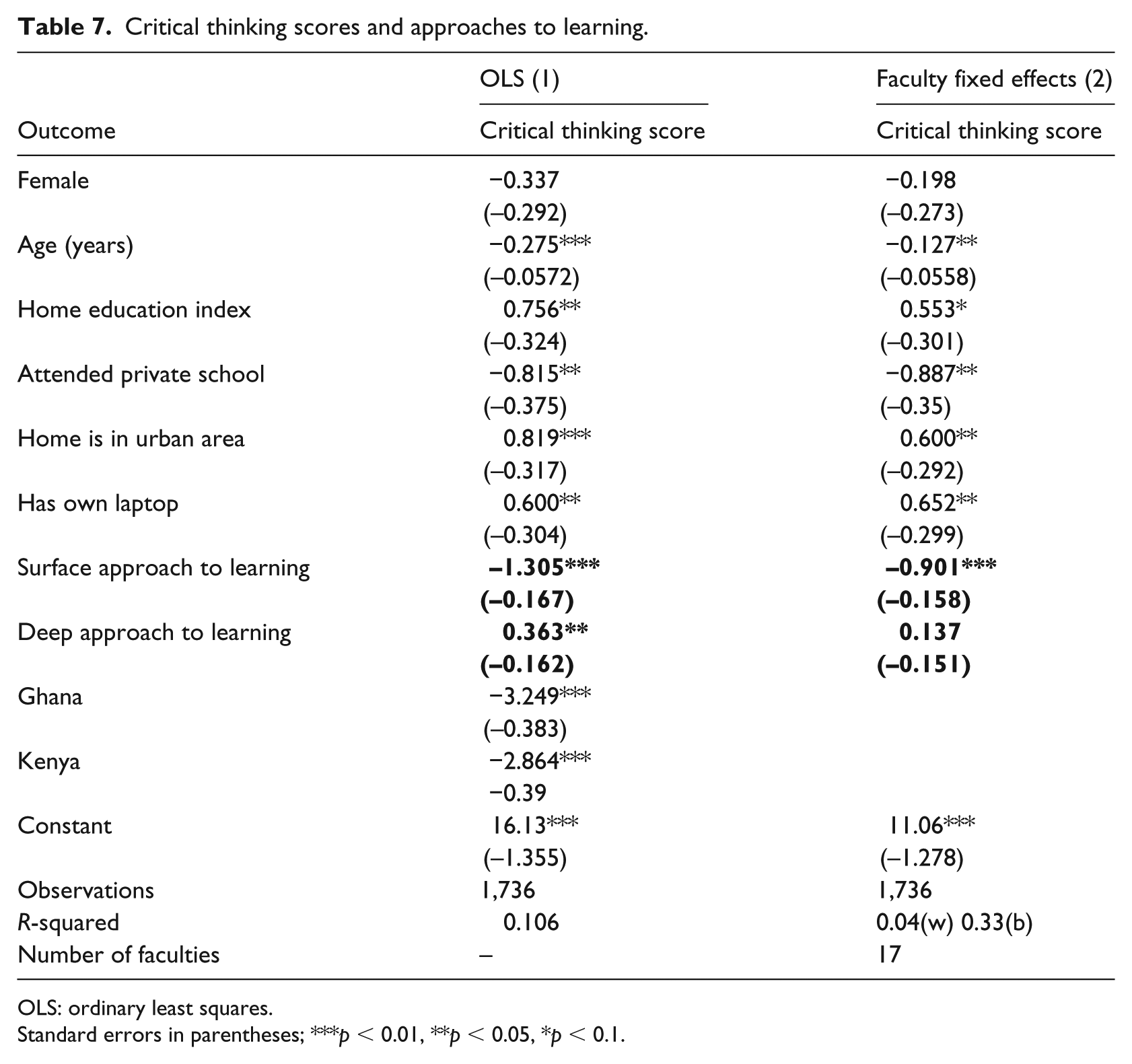

Table 7 reports the results of two regression models, each predicting students’ critical thinking scores at baseline from their approaches to learning (also at baseline) and a number of other explanatory variables taken as indicators of students’ backgrounds (i.e. gender, age, home education, attendance at private school, urban residence, laptop ownership). In the first model, estimated by ordinary least squares (OLS), dummy variables are included for each country, with Botswana as the reference category (i.e. the coefficients for the other countries are relative to Botswana). In the second model, faculty fixed effects are included, so that results for the other indicators may be interpreted as ‘within-faculty’ effects (i.e. the coefficients represent differences between students within an average faculty). This approach is intended to address the fact that, at faculty level, student samples are substantially affected by selection, so that comparisons which do not account for this may be misleading.

Critical thinking scores and approaches to learning.

OLS: ordinary least squares.

Standard errors in parentheses; ***p < 0.01, **p < 0.05, *p < 0.1.

The results indicate, unsurprisingly, that home and individual-level advantage are associated with higher critical thinking scores at baseline, other things being equal. The important finding, however, is that, net of these associations, there is a relationship between approaches to learning and the key learning outcome of interest in our study. In other words, students adopting a more ‘surface’ approach demonstrate lower scores on the critical thinking assessment, and those adopting a ‘deep’ approach demonstrate higher scores (with the surface ‘effect’ being considerably larger in magnitude). Indeed, an increase in surface approach of approximately one standard deviation (which equates roughly to a shift across one third of the distribution at the mean) is associated with a reduction in critical thinking score of 1.3 points. This represents a non-trivial reduction of around 10% relative to the mean critical thinking score (see Table 3).
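The ‘one third of the distribution’ gloss can be checked if one assumes the scaled surface-approach scores are approximately normally distributed (an assumption for illustration; the distribution is not reported in this section). Under normality, a one-standard-deviation move starting at the mean crosses about 34% of the distribution, while a one-standard-deviation band centred on the mean covers about 38%:

```python
from math import erf, sqrt

def normal_cdf(z):
    """Standard normal cumulative distribution function via the error function."""
    return 0.5 * (1.0 + erf(z / sqrt(2.0)))

# Share of a normal distribution crossed by a 1-SD move that starts at the mean
share_mean_to_plus1 = normal_cdf(1.0) - normal_cdf(0.0)

# Share covered by a 1-SD band centred on the mean (from -0.5 SD to +0.5 SD)
share_centred = normal_cdf(0.5) - normal_cdf(-0.5)

print(round(share_mean_to_plus1, 3), round(share_centred, 3))  # prints: 0.341 0.383
```

Either way the shift is close to one third of students, so the gloss holds under the normality assumption.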

When comparing only within faculties (i.e. removing the between-faculty effects), coefficient sizes are mostly smaller than in the OLS results, but most nonetheless remain statistically significant. For the surface approach, the effect falls to around 0.9 points but remains significant at the 1% level. The effect of the deep approach, however, becomes statistically non-significant. These results indicate that, while some of the predictive power of approaches to learning reflects selection into faculties, a strong relationship remains between surface approaches to learning and lower incoming critical thinking ability within individual faculties.
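The contrast between pooled and within-faculty estimates can be illustrated with a small simulation. The sketch is hypothetical throughout: the data are simulated (not the study’s), the model omits the country dummies and background controls used in Table 7, and faculty fixed effects are implemented with the standard ‘within’ transformation (demeaning each variable by faculty), which gives the same slope as including a dummy for every faculty. Because an unobserved faculty-level advantage is built to correlate with surface scores, the pooled slope overstates the within-faculty relationship, qualitatively mirroring the shrinkage described above.

```python
import numpy as np

def ols(X, y):
    """Least-squares coefficients for y = X @ beta (X must include any intercept column)."""
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    return beta

def demean_by_group(values, groups):
    """Subtract each group's mean: the 'within' transformation behind fixed effects."""
    values = np.asarray(values, dtype=float)
    out = values.copy()
    for g in np.unique(groups):
        mask = groups == g
        out[mask] -= values[mask].mean(axis=0)
    return out

# Simulated data (hypothetical, NOT the study's): 20 faculties of 100 students each
rng = np.random.default_rng(1)
n_fac, n_per = 20, 100
faculty = np.repeat(np.arange(n_fac), n_per)
fac_effect = rng.normal(0.0, 2.0, size=n_fac)  # unobserved faculty-level advantage
# Selection: more advantaged faculties recruit students with lower surface scores
surface = rng.normal(0.0, 1.0, size=faculty.size) - 0.3 * fac_effect[faculty]
true_within = -0.5  # assumed within-faculty slope used to generate the data
critical = (10.0 + true_within * surface + fac_effect[faculty]
            + rng.normal(0.0, 1.0, size=faculty.size))

# Pooled OLS without faculty controls mixes within- and between-faculty variation
X_pooled = np.column_stack([np.ones_like(surface), surface])
b_pooled = ols(X_pooled, critical)[1]

# Within-faculty (fixed-effects) slope: demean outcome and predictor by faculty
s_within = demean_by_group(surface, faculty)
c_within = demean_by_group(critical, faculty)
b_within = ols(s_within[:, None], c_within)[0]
```

Here the pooled slope is pushed further below zero by the faculty-level selection, while the demeaned regression recovers a slope close to the assumed within-faculty value.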

The regression results therefore suggest that approaches to learning, as measured via the R-SPQ2F, are predictive of students’ critical thinking skills in the three study contexts, including when conditioning on key student background factors (e.g. socioeconomic background; educational background) and when controlling for selection into faculties. This finding offers important evidence of the instrument’s predictive validity, given that one would expect to find a relationship between these constructs when they are properly measured. Indeed, critical thinking may be linked intuitively to a ‘deep approach’, since critical thinking itself may require an approach which involves ‘looking beyond appearances’. Specifically, the critical thinking assessments employed by the PCT project focus on identifying bias, separating fact and opinion and assessing the strength of arguments and evidence. Accordingly, there may be a sense in which a ‘deep’ approach to learning (or at least a weaker orientation towards a ‘surface’ approach) and critical thinking skills are ‘two sides of the same coin’. As a result, caution is required when interpreting this relationship in causal terms.

With respect to the potential implications for pedagogical intervention, interventions which successfully improve critical thinking, or which develop deeper approaches to learning, may be expected also to develop the other skill or orientation at the same time. Further research, including as part of the PCT project, is required to shed light on these relationships. Our descriptive findings do, however, point towards important overlapping relationships between students’ home advantage, their approaches to learning, their choice of higher education institutions and courses, and their critical thinking skills.

Conclusion

The revised SPQ instrument was found to demonstrate relatively high levels of reliability in the three African higher education contexts which formed the sample of this study. The study indicates that the construct and measurement approach may be considered valid in these contexts. These findings might be considered somewhat surprising given the Western origin of the instrument and the potentially important role played by culture and language in its interpretation. Similarities between the three countries’ higher education systems and those of other anglophone contexts may play a key role in rendering the instrument relevant (and informative) for these settings. The fact that students in Ghana, Kenya and Botswana all study in English throughout their educational careers is also likely to be an important factor with respect to the validity of the construct and instrument. Students who have used English for a smaller proportion of their education (as is the case in other countries in the region, such as Rwanda and Tanzania) might be expected to show different response patterns. Accordingly, we do not expect that the results of this study would necessarily transfer to other African contexts. Nonetheless, our results suggest that in these three contexts, the instrument effectively measures important differences in learning orientations along two dimensions, and that these dimensions predict, to some extent, both the universities in which students enrol and their critical thinking skills at entry to university.

While the relationships identified cannot be concluded to be causal, they may serve important predictive and diagnostic functions, especially for universities and faculties. The instrument is simple to administer, and its responses are straightforward to process, making it considerably less onerous for both students and faculty than cognitive assessments. It potentially provides indicative evidence for examining the ‘impacts’ of pedagogical change, as well as ‘benchmarking’ data on student attitudes which may inform the design of pedagogical interventions. While the measurement of critical thinking skills requires a relatively sophisticated cognitive assessment, the SPQ records only self-reported attitudes, which we consider to be conceptually distinct from critical thinking skills qua ‘cognitive skills’. Nonetheless, the close relationship between these two measures is significant for higher education policies and for pedagogical innovation and development.

Of course, an important caveat underpinning this entire discussion is that a ‘surface’ approach may actually lead to better examination results at university, depending on the dominant method and format of assessment and the balance of skills which is emphasized. Even if students who demonstrate ‘deeper’ approaches to learning make greater improvements in ‘higher-order’ skills such as critical thinking while studying at university, they will not necessarily show similar improvement on ‘traditional’ examinations. In turn, to the extent that labour markets tend to reward ‘credentials’, at least at the entry stage, ‘deeper’ approaches need not be expected to help students find ‘traditional’ formal employment. ‘Credentialism’ is clearly much less of an issue in the informal sector and in self-employment, however, and, at least intuitively, both ‘deep approaches’ and critical thinking might be expected to support transitions into these forms of work.

The relationship between approaches to learning and critical thinking is an important one for universities to explore, as they attempt to better support the development of a broader range of abilities in their students. It appears that there are important synergies between student attitudes and critical thinking development – and, possibly, between so-called ‘hard’ (cognitive) and ‘soft’ skills (attitudes and dispositions). These potential dynamic complementarities remain important topics for investigation in the context of sub-Saharan Africa and elsewhere.

Footnotes

Appendix 1

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported by the Economic and Social Research Council (ESRC) and Department for International Development (DFID) [grant number ES/M005496/1].