Abstract

Some students perceive that online assessment does not truly reflect their effort. This article reports on a collaborative international project between two higher education institutions that investigated engineering students’ perceptions of online assessment of mathematics. It compares students of similar educational standing in Finland and Ireland. The students completed questionnaires, and a sample of students was selected to take part in several group discussion interviews. The data suggest that many of the students have low levels of confidence, lack knowledge of continuous assessment processes and perceive many barriers when confronted with online assessment in their first semester. Alternative perspectives were sought from lecturers through individual interviews. The research indicates that students’ perceptions of effort and reward differ from those held by lecturers. The study offers a brief insight into the thinking of students in the first year of their engineering mathematics course, and suggests that alternative approaches to curriculum and pedagogical design may be needed to alleviate student concerns.

Introduction

This article presents empirical findings from a recent research project aimed at understanding the conceptions and expectations that first-year engineering mathematics students hold before online assessment. We also gathered students’ reflections immediately after online assessments. The research was prompted by an analysis of anecdotal observations gathered over several years from formal institutional channels for qualitative feedback and from informal feedback, including a reflective diary. These observations suggest that many students may inadvertently experience negative socio-emotional effects before or after an online assessment. Recent research (Gallimore and Stewart, 2014; Gill et al., 2010; Tempel and Newman, 2014) suggests that such negative effects may be deeply embedded, creating a need for additional mathematics support at third level.

A review of science, technology, engineering and mathematics (STEM) provision at primary and post-primary levels in Ireland (McCraith, 2015) raised the following issues: transition to third level; the use of information and communications technology (ICT); and international performance and comparison. Lecturers at third level are concerned about a mismatch between the skills developed at second level and those needed at third level. Of particular concern is the decline in basic mathematical skills (Treacy and Faulkner, 2015) among students with mid-range Higher Leaving Certificate qualifications, a concern heightened by the fact that a new mathematics curriculum was introduced to address this very issue. Research provides evidence that issues similar to those raised in the review of STEM provision are also pertinent in Finland (Kinnari, 2010; Rinneheimo, 2010), suggesting that Programme for International Student Assessment (PISA) results may differ from ‘teachers’ experiences of students’. The importance of assessment and ICT is well documented in the literature (Cox, 2012). An extensive review of e-assessment by Jordan (2013), which focused on online computer-marked quizzes, highlighted the increasing role of e-assessment technologies in the learning environment and how this environment may be optimized beyond simple quizzing (Johnson et al., 2015).

For this project, a joint Irish/Finnish study was developed to examine whether, within the boundaries of first-year engineering mathematics, the anecdotal concerns were justified. Samples of second-year engineering students in Ireland and Finland were invited to take part in group discussions to obtain the views of students who had progressed beyond first year. Before the research began, the mathematics curricula of the participating higher education institutions were analysed to determine their degree of similarity, and lecturers interacted under the Erasmus+ teacher exchange scheme to confirm the similarity of first-year engineering mathematics. Programme content, assessment methods and student cohorts were considered sufficiently similar to allow comparisons to be made. It is hoped that the outputs of this research will inform discussion of the design of new programmes as online provision expands. The data will help designers frame their understanding of the effects of assessment technology on the learning process and examine pedagogical barriers and supports, enabling them to understand how these relate to levels of interaction and engagement online.

The project was designed within a socio-cognitive theoretical framework of self-efficacy (Bandura, 1977, 1989) to help the researchers understand the experiences and perceptions of learners in their transition to third-level engineering mathematics. The main thrust of self-efficacy theory is that learners’ actions, and their subsequent reactions, are influenced by their observations and experiences. In considering the constructs of self-efficacy and expectancy values, Pajares (1996) supports Bandura’s theoretical perspective that outcome expectations may be separated from judgements of self-efficacy. Pajares suggests that students are able to assess their own academic capability, although most tend towards overconfidence, and that the relationship between expectation and the perceived importance of academic tasks is complex and context dependent. Students with low confidence are the most likely to give up quickly when confronted with difficult tasks. For low-performing students with low confidence, the social context is paramount: performance does not improve if the educational environment lacks the necessary equipment, effective teaching interventions or supporting resources (Alt, 2015; Pajares, 1996). Also of interest within the realm of self-efficacy is the valence (or worth) of actions to the student. Simon and Hastedt (1999) recognize the complex inter-relationships involved in attaching valence to the student’s self.

Within this framework, the research focused on students’ pre-existing attributes, perceived barriers, self-confidence and awareness of existing support mechanisms for learners. To address ethical considerations, the research plan, proposed questionnaires and interview questions were submitted for approval to the ethics committee of the School of Education at the University of Glasgow. Data gathering did not commence until approval was granted. Ethical approval was also sought and received at the participating institutions in Ireland and Finland.

Self-efficacy and transition to third level

The first year of study at third level is a transitional period in which students adjust to a new norm and are expected to take greater responsibility for their own learning. The fluidity of this period influences students, resulting in alterations in behaviour (Bandura, 1977) that may be perceived as positive or negative depending on the student’s experiences. Increased stress levels among students in the period leading up to the end of second-level education have been noted, as has the need for more shared learning objectives across the transition into the first year of study at third level (Higher Education Authority, 2015). Students who show greater curiosity and are engaged with the process are considered more likely to complete the transition to the first year at third level successfully. The degree of preparedness of students arriving from second level (Van Rooij et al., 2016) depends on many factors, such as study choice, academic interest, understanding, effort and social skills. In addition to preparedness, the student’s sense of belonging (Ni Shuilleabhain et al., 2016) is another important factor in determining whether the first year at third level is perceived as a success. It is suggested that a successful transition to third level is highly dependent on students’ sense of their own achievement. The perception and value placed on goal valence (Simon and Hastedt, 1999; Vroom and Deci, 1992) is an important factor in promoting self-efficacy (Huang, 2016), as is a sense of mastery. Success, measured in terms of academic achievement and student satisfaction at third level, correlates with how closely the realities of third level match the expectations students formed at second level (Maloshonok and Terentev, 2016). Self-efficacy encompasses these issues, and performance accomplishments are considered a principal source of information in determining whether a behavioural change has been positive.

Most of the literature examining the transition to third level concerns students entering university; literature on other third-level providers, such as institutes of technology, is scarce. It is important to note that, with a few exceptions, non-university third-level students, such as those attending institutes of technology, do not demonstrate high levels of academic achievement at second level. One area of underachievement is mathematics, which matters because of the domain specificity of self-efficacy and its implications for instruction (Artino, 2012; Bandura, 1977).

E-assessment of mathematics and third-level engineering curricula

Prior to the 1980s (Jordan, 2013), learning and assessment relied on face-to-face techniques such as handwritten assessments and private and public communication and observation. This approach was superseded by a new ‘standardized’ curriculum design philosophy (Goldberg, 2008), based on an industrial design methodology and leading to the performance-based approach described by Lodge (2002). Assessment and programme delivery underwent a sea change with the new millennium, when education authorities and professional bodies adapted their validation methods to include learning outcomes (Tremblay et al., 2012) in programmes of study. Assessment techniques altered accordingly to meet these requirements, embedding a performativist ethos in the belief that students would become self-governing. In addition, pressure on programme designers to adopt online learning methods has meant that many programmes of study now have an online presence. The drive for innovation, exploration and use of new techniques and technologies continues unabated at all levels of education, and is particularly evident in the global higher education sphere (Johnson et al., 2015). The discourse of self-governed learning within e-assessment supports students who are highly motivated and cognitively aware of the methods required to succeed in a performativist environment (Charteris et al., 2016). All subject areas in higher education are affected, none more so than the STEM subjects identified by McCraith (2015).

Summative assessment of mathematics at second level, whether of skills attained or of conceptual understanding, relies on traditional written examinations. E-assessment of mathematics does not currently feature in second-level examinations in Ireland (Leaving Certificate), the United Kingdom (GCSE and GCE) or Finland (Matriculation). In these examinations the assessment criteria are visible in the marking scheme, and marks may be awarded for partial or incomplete answers (Ashton et al., 2006). Students at second level are not exposed to e-assessment for summative purposes, and the use of e-assessment for formative purposes is not yet considered mainstream (Sangwin, 2012); students are therefore generally not exposed to e-assessment until they enter third-level studies, such as an engineering course.

The literature on e-assessment reports many positive outcomes of its application at third level, particularly in engineering. Computer algebra systems are increasingly used (Henderson et al., 2016; Rasila and Sangwin, 2016) to test mastery of mathematics in both formative and summative settings. Ivanova et al. (2016) explore the use of e-assessment for summative assessment of learning performance, with the aim of developing an adaptive assessment model suited to Bulgarian engineering students. Lohani (2014) describes the use of tablet computers for formative assessment for learning with freshman engineering mathematics students; in that programme, the need to provide formative feedback to large classes is addressed by sharing a sample of anonymised student responses with the whole group.

Most studies of e-assessment in mathematics education address theoretical and empirical questions (Martinez-Sierra et al., 2016), but students’ perceptions and beliefs (Schneider and Artelt, 2010) have not been addressed in the same way. The literature review by Struyven et al. (2005) of students’ perceptions of evaluation and assessment is the most significant, although Iannone and Simpson (2013) suggest that students’ voices often go unheard. Most studies at pre-third and early third-level education involve high-achieving students (Iannone and Simpson, 2013; Kelly and Hottkoff, 2016; Ni Shuilleabhain et al., 2016).

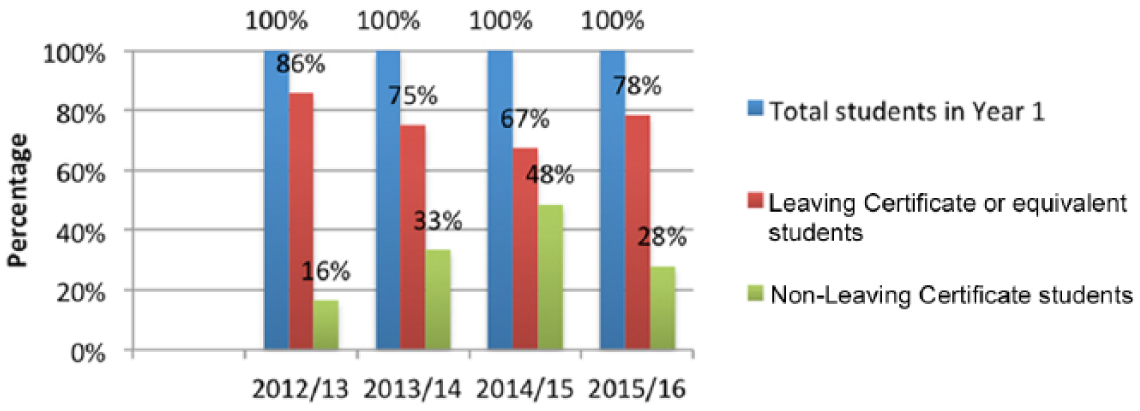

The profile of the students in this study is deemed to be representative of the student base in the participating institutions. Figure 1 shows an analysis of the Irish intake to engineering programmes since the academic year 2012–2013, expressed as the ratio of standard school-leaver entrants to non-standard entrants. The profile of the intake changed between the time anecdotal evidence began to be recorded and the start of this study. Before 2012–2013, the percentage of non-standard entrants was considered negligible. Government programmes were then introduced to address upskilling needs in the labour market (OECD, 2013), resulting in an increase in students returning to education in the tertiary sector. The ratio of standard to non-standard entrants fell sharply over three years, recovering partially in 2015–2016, and the average age of the student base has increased; the impact of this change is the subject of several research projects in Ireland (O’Sullivan et al., 2014).

Figure 1. Comparison of Leaving Certificate and non-Leaving Certificate students in engineering.

The students in this study are generally not considered to be from higher academic tracks; the Irish learner group falls within the top 67% of the student base. Approximately 16% of the Irish learner group entered third level by a non-standard route, such as mature access, in the academic year 2012–2013. The percentage increased steadily until 2014–2015, when it peaked at 48%, and dropped to 28% in 2015–2016. A comparable annual pattern cannot be given for the Finnish learner group, as figures are only available for 2015–2016. The range of neurodiversity within the learner group already presents challenges, even before additional stressors are introduced through activities that may result in negative experiences. The researchers are cognisant that a degree of subjectivity exists within the process and that not all learner activities result in negative experiences. The aim of the research is to establish a baseline from which to develop meaningful assessment processes.
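Figure 1 presents the intake as a ratio, whereas the paragraph above reports the non-standard share as a percentage. The short Python sketch below converts between the two, using only the percentages given in the text (the 2013–2014 value is not reported) and assuming that they refer to the whole Irish engineering intake. The names are illustrative only.

```python
# Non-standard share (%) of the Irish engineering intake, as stated in the text.
# 2013-2014 is omitted because the text does not report it.
NON_STANDARD_PCT = {"2012-2013": 16.0, "2014-2015": 48.0, "2015-2016": 28.0}


def standard_to_non_standard(pct_non_standard: float) -> float:
    """Convert a non-standard entry percentage into a standard : non-standard ratio."""
    if not 0.0 < pct_non_standard < 100.0:
        raise ValueError("percentage must lie strictly between 0 and 100")
    return (100.0 - pct_non_standard) / pct_non_standard


for year, pct in NON_STANDARD_PCT.items():
    # e.g. 16% non-standard means 84 standard entrants for every 16 non-standard ones
    print(f"{year}: {standard_to_non_standard(pct):.2f} : 1")
```

On these figures the ratio falls from about 5:1 in 2012–2013 to roughly 1:1 in 2014–2015, then rises to about 2.6:1 in 2015–2016.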

Comparing routes to third level at the University of Applied Sciences in Finland with those at Letterkenny Institute of Technology reveals an interesting difference. Non-standard entrants in the sample from the Institute of Technology account for 28% of the overall student entry, whereas non-Matriculation entrants to the University of Applied Sciences account for 65%. Most students in the sample from the University of Applied Sciences gained entry via the vocational route, having studied what is termed the ‘short mathematics course’ in Finland.

Research questions

Using technology to enhance learning is an area identified in the review of STEM provision in Ireland (McCraith, 2015). The review was limited to primary and post-primary education and considered the pedagogical issue of assessment for learning. It made special note of the gender imbalance, which is reflected in the statistics in this study. Of particular interest is that lecturers at tertiary level have concerns about first-year students on programmes where mathematics forms a significant element of the curriculum. Using anecdotal evidence as a starting point, a set of research questions was developed covering the issues of e-assessment considered important to students. These issues formed the backbone of the questioning and the main thematic areas for the coding schema. The research questions are:

Are students prepared for e-assessment of mathematics in the first year of study at tertiary level?

Do students perceive barriers that may form impediments to e-assessment of mathematics in the first year of study at tertiary level?

Does students’ self-efficacy affect their perceptions of e-assessment of mathematics?

What level of understanding of assessment and feedback do students have?

Is there any evidence of a digital divide?

The analysis explores these research questions, and their outcomes are addressed in the discussion.

Research methodology

Learner groups

The first-year study group was split between Ireland (n = 67) and Finland (n = 60), and the participants came from a variety of engineering disciplines in the first year of study at BEng ordinary degree level or equivalent. The study took place in the natural class environment to maintain a structured, contextual setting, leading to a case study with phenomenological output (Smith, 1996). The phenomenological output permits a dialogue from which a baseline can be constructed regarding the status of online assessment for the participants. A mixed-methods approach (Bryman, 2016) was taken to triangulate and guide the initial outputs, with qualitative and quantitative approaches operating simultaneously. Each participant took part with consent, without compulsion and without any effect on their participation in their programme of study. All participants completed an anonymised questionnaire containing open-ended and closed questions within a short timescale to ensure synchronic reliability. The questionnaires were tested to ensure that language did not cause problems in either country. A second questionnaire was given to the student group in Ireland (n = 59) at the beginning of the second semester, to determine whether the first-year students’ mindset had changed after a period of reflection on the first-semester programme. The number of participants had decreased by eight as a result of students moving to other programmes of study.

Sampling for the discussion groups was based on convenience, as determined by the availability of learners to the researchers. The first-year student sample group (n = 8) was not self-selecting and was drawn from the participants available in a standard timetabled session. The second-year student sample group (n = 7) was self-selecting, and the participants made themselves available during a lunchtime session. The discussion groups used a semi-structured, standardized open-ended question approach, so that all topics and issues to be covered were specified in advance and all interviewees were asked the same basic questions, ensuring comparability of responses. The first-year discussion took place shortly after the questionnaire had been completed, and immediately after the first online assessment exercise. The second-year discussion took place at the beginning of the second semester to allow the students time to reflect on their experiences within the second year. Student identities in the discussion groups were coded to ensure anonymity. The interviews were video recorded, but steps were taken to ensure that faces could not be identified.

Lecturer group

The study involved two groups of mathematics lecturers from Ireland: those who use e-assessment (n = 3) and those who do not (n = 2). Interviews were also conducted in Finland with mathematics lecturers who use e-assessment (n = 2). Each lecturer took part with consent in an anonymised, semi-structured video interview and was asked the same questions to allow comparisons to be made. Before the lecturer interviews, the student questionnaires were analysed to establish the main thematic areas for consideration, drawing on the combination of responses to open-ended and closed questions. The lecturer interview questions were built around the following thematic areas: training/preparation for online assessment; perceptions of student confidence for online assessment; and perceptions/knowledge of barriers to optimal online assessment.

Analysis

Quantitative instruments

Although this study focuses on e-assessment, the two anonymised questionnaires were distributed in class, because it was decided that this would make them easier to control and administer. A pilot questionnaire was tested to identify any areas of difficulty or misunderstanding. The questionnaires received ethical approval before delivery. The first questionnaire, delivered at the beginning of the first semester, contained seven questions, including multiple-choice, five-point Likert scale and open-ended questions. The second questionnaire, delivered at the beginning of the second semester, contained eight questions, including multiple-choice, six-point Likert scale and open-ended questions.

The open-ended responses were first read through to gain a feel for the emerging themes. They were then coded using an interpretative phenomenological analysis (IPA) approach (Smith et al., 2009), allowing themes to emerge from the data. This allowed the responses to generate the concepts and themes that would underpin the questions at the planned interviews. The complete data set was entered into SPSS for analysis. The open-ended responses were then revisited to confirm the validity of the codes generated from the analysis. Finally, the themes were compared with the research questions to determine their appropriateness and whether the questions needed to be re-evaluated.

As a result of the SPSS analysis it was decided to include a discussion group interview with second-year engineering students to determine their experiences of first-year mathematics assessment.

Qualitative instruments

The methodology applied in the qualitative analysis of the questionnaires and video interviews is IPA (Smith, 1996; Smith et al., 2009; Symeonides and Childs, 2015). IPA was used to examine how individuals make sense of life experiences by engaging with their reflections. This engagement took a hermeneutic approach, in which transcriptions were treated as textual representations of idiographic experiences. The resulting phenomenological output permitted a dialogue to establish a baseline of the status of online assessment for first-year learners in engineering mathematics.

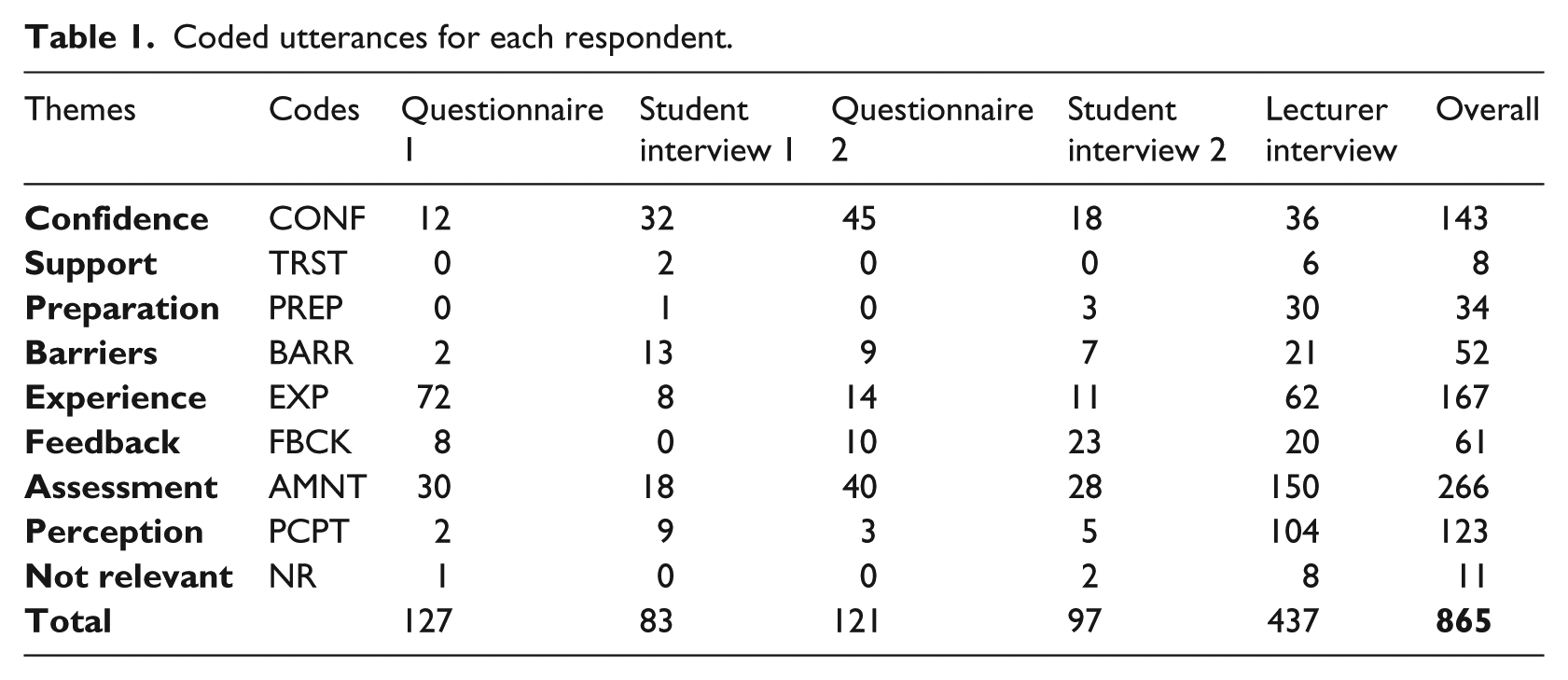

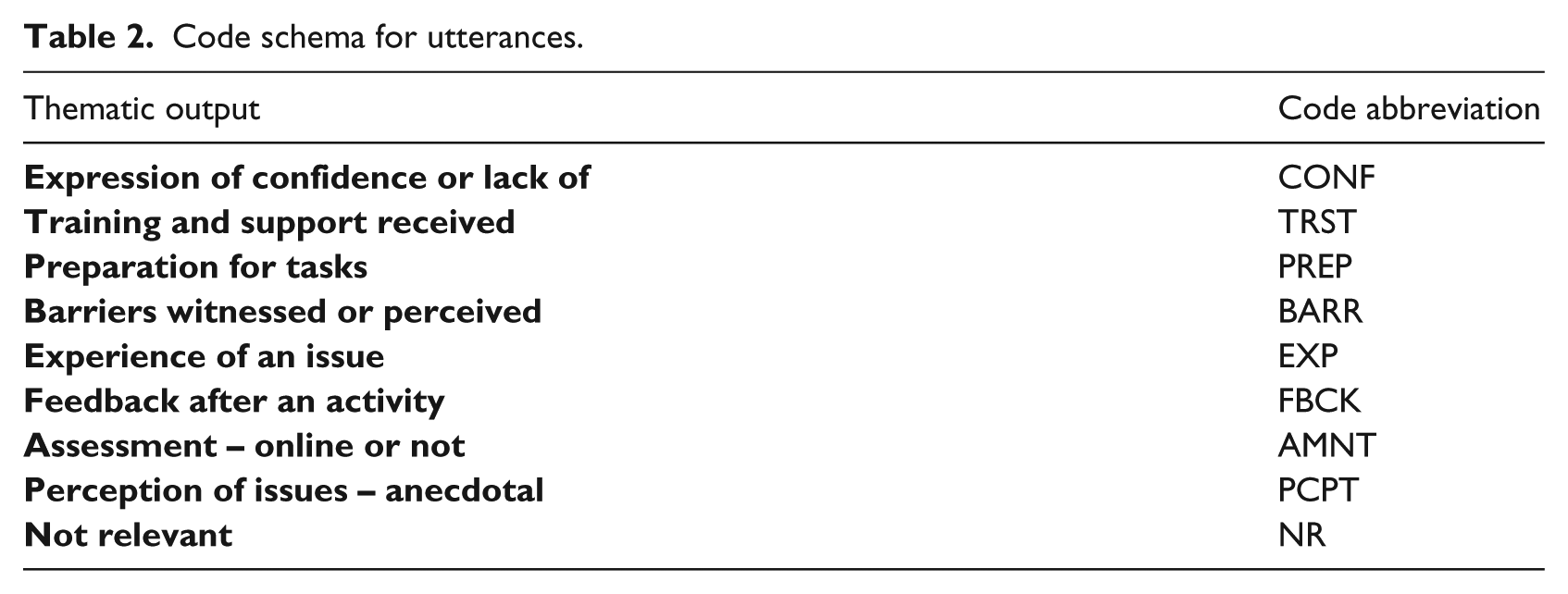

A coding schema (Ryan and Bernard, 2003) was developed from the questionnaires, discussion groups and video interviews. For responses to open-ended questions, the unit of analysis was an utterance rather than an individual word; an utterance could be part of a sentence or a complete sentence. To reduce complexity, an utterance could not receive more than one code, and all utterances were treated as unique within this research. The code assigned to an utterance was not changed until a succeeding utterance, response or phrase required a different code. Initial analysis produced 28 sub-themes, which determined the topology of the quote/sub-theme/main theme tree; the main themes (see Table 2 in Appendix 1) were selected to maintain the context of the research.
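The coding rules above (one code per utterance, utterances nested under sub-themes and main themes) can be sketched as a small data structure. This is a hypothetical Python illustration, not the instrument used in the study: the sub-theme and main-theme labels in `SUBTHEME_TO_THEME` are invented placeholders rather than the codes in Table 2, and the carry-forward rule (an utterance tagged `None` keeps the previous code) is one reading of the statement that a code was not changed until a succeeding utterance required a different one.

```python
from collections import Counter
from typing import List, Optional, Tuple

# Hypothetical sub-theme -> main-theme map; the study's actual Table 2 codes are not reproduced here.
SUBTHEME_TO_THEME = {
    "unsure of submission": "confidence",
    "new to education system": "preparation",
    "poor broadband": "barriers",
    "no computer at home": "barriers",
    "instant results": "feedback",
}


def code_response(utterances: List[Tuple[str, Optional[str]]]) -> List[Tuple[str, str, str]]:
    """Give every utterance in one response exactly one (sub-theme, main theme) pair.

    An utterance tagged None keeps the previous code, so the code only changes
    when a later utterance calls for a different one.
    """
    coded = []
    current: Optional[str] = None
    for text, subtheme in utterances:
        if subtheme is not None:
            current = subtheme
        if current is None:
            raise ValueError(f"no code available for utterance: {text!r}")
        coded.append((text, current, SUBTHEME_TO_THEME[current]))
    return coded


def theme_counts(responses: List[List[Tuple[str, Optional[str]]]]) -> Counter:
    """Count coded utterances per main theme across all responses."""
    counts: Counter = Counter()
    for response in responses:
        counts.update(theme for _, _, theme in code_response(response))
    return counts


if __name__ == "__main__":
    example = [
        [("I have poor broadband", "poor broadband"), ("and it keeps dropping.", None)],
        [("I am unconfident and unsure about my submission of my answers.", "unsure of submission")],
    ]
    print(theme_counts(example))
```

Keeping the sub-theme to main-theme mapping in one table means the quote/sub-theme/main theme tree can be revised (for example, when 28 sub-themes are regrouped) without recoding individual utterances.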

Results

Student group first-year engineering: Questionnaire 1

The first-year engineering student group in Ireland consisted of 66 male students and 1 female student aged 18 to 45. The first-year engineering group in Finland consisted of 57 male students and 3 female students aged 18 to 25. Questionnaire 1 was administered during a timetabled mathematics lecture in the fourth week of the first semester, after all students had been informed that the research was voluntary and would not affect their studies in any way. Five students were absent from this class and explicitly asked to participate by completing the questionnaire the following day. All questionnaires were numbered but not linked to any names, to ensure confidentiality. A student selected at random within the class distributed and collected the questionnaires. The study was designed to reveal any issues students face when engaging in online testing. The codes used to analyse utterances from the open-ended questions are shown in Table 2 in the Appendix. Table 1 shows the total utterances for Questionnaire 1. Utterances have not been separated by gender because the number of female students in the sample (n = 4) is too small to generate useful data. The profile of the sample displayed in Figure 1 is similar to the typical entry to engineering programmes in both institutions. Of note, however, is the increasing trend in non-standard entry students since 2012–2013, also displayed in Figure 1. In total, 73.2% of students stated that they had some prior experience of computer-based testing, though not necessarily in mathematics. Of the Irish students, 55.5% reported a positive experience, compared with 73% of the Finnish students. Among students who reported a poor experience of computer-based testing, the main themes were lack of confidence and lack of enjoyment.

I am unconfident and unsure about my submission of my answers. (S23, IRL, 2015)

Computer-based tests are highly over-rated, to take it as gospel can corrupt a person’s motivation for change. (S72, FI, 2015)

Coded utterances for each respondent.

Among students who stated that they had no prior experience of computer-based testing, a major factor was that all their tests had been paper based, with no choice (88.2% of the 34 students without prior experience). Issues relating to the digital divide appear in the replies, with students in each group stating that they did not have access to a computer at home. Of interest is the hostility to computer-based testing shown by two students in Finland.

I rarely or never come across them, and I definitely do not like and do not want to use them. (S70, FI, 2015)

I find it harder to understand when it is not written in front of me. (S13, IRL, 2015)

Regarding the training and support received in the use of online systems, more than 80% of students in both countries rated the support they received as moderate or greater. The levels of dissatisfaction nonetheless suggest that some weaker students need to be identified and supported at an earlier stage. Dissatisfaction rises to 38.8% among Irish students with respect to support and training for online quizzes. The Finnish students had not taken any online mathematics quizzes at this stage; however, 26.7% felt that they had not received sufficient support for the online quizzes they had taken.

Self-efficacy was explored by asking students how confident they felt. Notably, 96.7% of the Finnish students felt moderately confident or greater, compared with 80.6% of the Irish group. This issue is explored further in Questionnaire 2.

Not everyone is good at computers. They can sometimes get frustrating. (S120, FI, 2015)

I don’t think I learn much online. (S95, FI, 2015)

Students’ perceptions of their preparedness for online testing are quite similar across the two groups; 68% of the combined group felt moderately prepared or better. The Finnish students indicate a higher level of preparedness (76.7%), which may be related to their greater confidence. When asked how many tests they had taken online, 67% of the Irish students and 35% of the Finnish students indicated that they had taken only a few.

Been out of the education system for many years. I have not encountered this before in my life. (S25, IRL, 2015)
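Figures such as ‘moderately confident or greater’ collapse a Likert item into the share of responses at or above a chosen label, and a combined-group figure is a size-weighted average of the group shares. A minimal Python sketch of both steps follows. The scale labels are assumed (the questionnaire wording is not reproduced here), and the counts 54 of 67 and 58 of 60 are simply those implied by the reported confidence figures of 80.6% and 96.7%.

```python
from typing import Sequence, Tuple

# Assumed five-point labels, lowest to highest; the questionnaire wording is not given in the text.
SCALE = ["not at all", "slightly", "moderately", "very", "extremely"]


def share_at_or_above(responses: Sequence[str], threshold: str = "moderately") -> float:
    """Fraction of responses at or above the threshold label, i.e. 'moderate or greater'."""
    cut = SCALE.index(threshold)
    return sum(SCALE.index(r) >= cut for r in responses) / len(responses)


def pooled_share(groups: Sequence[Tuple[float, int]]) -> float:
    """Combine per-group (share, group size) pairs into one size-weighted share."""
    total = sum(n for _, n in groups)
    return sum(share * n for share, n in groups) / total


sample = ["slightly", "moderately", "very", "extremely"]  # hypothetical responses
print(share_at_or_above(sample))  # 0.75
# Counts implied by 80.6% of 67 (Ireland) and 96.7% of 60 (Finland) for confidence.
print(round(pooled_share([(54 / 67, 67), (58 / 60, 60)]), 3))  # 0.882
```

Because the two groups differ in size (67 and 60), a pooled figure such as the 68% reported for preparedness corresponds to a weighted average of the national figures rather than their simple mean.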

Of the combined groups, 92.1% felt that at least one barrier or issue hampered their engagement with online testing, and 19% felt that many barriers existed.

I have poor broadband and it keeps dropping. (S12, IRL, 2015)

Student group first-year engineering: Questionnaire 2

The first-year engineering student group in Ireland consisted of 58 male students and 1 female student aged 18 to 45. Questionnaire 2 was administered during a timetabled mathematics lecture at the beginning of the second semester, after all students had been informed that the research was voluntary and would not affect their studies in any way. The number of respondents (n = 59) is lower than for Questionnaire 1 because of dropout and absence at the beginning of the second semester.

Responses to an open-ended question asking what students found good about online assessment were analysed using the coding system shown in Table 2 in the Appendix, for consistency with Questionnaire 1. The main themes were a positive experience (10.2%), the usefulness of feedback/immediate results (16.9%), the ability to engage anywhere with an Internet connection (47.5%) and ease of use (23.7%). Of particular note is one student (1.7%) who indicated that the experience was not good. Another single response was an example of a sensible student (Sangwin, 2013: 3) using metacognition to solve the problem strategically.

You can also use the answers provided to work backwards if you don’t understand what formula to use. (OP27, IRL, 2016)

After the test is finished you get your result instantly. (OP7, IRL, 2016)

Responses to the open-ended question asking what students felt was bad about online testing showed that 10.2% had had a negative experience, 67.8% were unhappy that no marks were given for attempts (partial credit), 15.3% had had issues with computers and Internet access, 5.1% felt that the testing did not stretch them sufficiently and 1.7% had nothing bad to say.

I find that computer-based aren’t as effective as written tests because the test does not show fully what they are looking for. (OP1, IRL, 2016)

How accurate is it? Computer-based tests are highly over-rated to make it as gospel. (OP2, IRL, 2016)

Lack of computer knowledge, Internet and access to computer itself. (OP4, IRL, 2016)

A Likert scale question on perceived levels of confidence showed that 96.6% of the respondents rated their confidence as moderate or greater. This is a marked shift from the responses given in Questionnaire 1 and was identified as an issue to be explored further in the discussion interview with the second-year engineering students. Preparedness was also explored with a Likert scale question, which revealed that 98.3% of respondents now felt moderately prepared or better for online testing.
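Because all Questionnaire 2 figures refer to n = 59, the reported percentages can be cross-checked by converting them back into whole numbers of students. The Python sketch below is an illustration added here, not part of the original analysis; it assumes that every percentage is a share of all 59 respondents and that each open-ended response was coded to exactly one theme. The dictionary labels are shortened versions of the themes named in the text.

```python
# Hypothetical cross-check: convert the Questionnaire 2 percentages back into
# respondent counts, ASSUMING each percentage is a share of all n = 59
# respondents and each open-ended response was coded to exactly one theme.
N = 59

good_aspects = {
    "positive experience": 10.2,
    "feedback/immediate results": 16.9,
    "engage anywhere with Internet": 47.5,
    "ease of use": 23.7,
    "experience not good": 1.7,
}
bad_aspects = {
    "negative experience": 10.2,
    "no attempt marks (partial credit)": 67.8,
    "computer/Internet issues": 15.3,
    "not stretched sufficiently": 5.1,
    "no bad comments": 1.7,
}
likert = {
    "confidence moderate or greater": 96.6,
    "preparedness moderate or better": 98.3,
}


def implied_count(pct: float, n: int = N) -> int:
    """Nearest whole number of respondents corresponding to pct% of n."""
    return round(pct / 100 * n)


def reproduces(pct: float, n: int = N) -> bool:
    """True if the implied count gives back pct when rounded to one decimal place."""
    return round(implied_count(pct, n) / n * 100, 1) == pct


# For the open-ended questions, the implied counts should total n.
for label, table in [("good aspects", good_aspects), ("bad aspects", bad_aspects)]:
    counts = {theme: implied_count(p) for theme, p in table.items()}
    print(label, counts, "total =", sum(counts.values()))

# The Likert items are separate questions, so their counts are not summed.
print({item: implied_count(p) for item, p in likert.items()})
```

Under these assumptions, each open-ended question accounts for all 59 respondents (for example, 67.8% corresponds to 40 students), and the two Likert figures correspond to 57 and 58 students respectively. A total other than 59 would have indicated multiple coding or a different denominator.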

Of great concern in the responses to Questionnaire 1 was the perception that many barriers or hindrances to online engagement existed. The responses to Questionnaire 2 reveal a slight shift towards the feeling that fewer barriers exist. The shift is smaller than anticipated and is explored further in the discussion with the second-year students.

Student group first-year engineering: discussion interview

The Irish sample (n = 8) was selected, for practical reasons, from an existing class group during a timetabled class session, in order to gain a sense of the experiential phenomena identified in Questionnaire 1. The Finnish sample (n = 5) was self-selecting, given the need to speak English; independent support was available to aid translation if required. The coding schema (see Table 2 in the Appendix) was used to code the responses against the themes that emerged from Questionnaire 1. The interview was scheduled shortly after the questionnaire so that the students would still be reasonably familiar with the project. The themes for discussion were confidence, preparation, training/support, anxiety, barriers/negative aspects, feedback and perceptions. The interview was limited to 30 minutes. The following extracts from the discussion explore anxiety, fear, ease of use, experiential perceptions, confidence, barriers and preparation. The digital divide surfaces in one exchange, in which a student's assumption that everyone has equal access is strongly rebutted.

R: Think about before you did the very first online assessment with me. How confident would you have felt about tackling it before you actually did it? Did you feel confident opening it up and doing it or were there any feelings of trepidation and so on?

I was nervous, very anxious but that is me, it is what I am like with all exams. Especially with mathematics, it’s not my strongest point. I was incredibly frightened! (I4, 2015)

It is a lot easier than a normal test would be where you just sit down and see the questions and then you felt pressured but you can’t do anything and you have to keep going. At least with online test if you saw a question and you didn’t like it you could go off and take five minutes, have a cup of tea and research a bit more or do more studying. The timeframe we had to do it over a week where we could stop and start puts a lot less pressure on us than a normal exam would be. (I1, 2015)

For me it is fine! (F3, 2017)

R: After having now done one or maybe even two of these assessments, how confident would you feel now about doing other assessments online?

Very confident! (I1, 2015)

Along the same lines about time limits and stuff, I would feel confident but if it is time limited you feel more pressure because you need to break up the questions. That’s the first thing you have to do! Decide how many questions and how much time you put towards one. Otherwise there is no time limit and no pressure at all on you. (I3, 2015)

Oh God! Not again! Can I do it another way? (F3, 2017)

I prefer handwritten. I think with the computer it is too slow and frustrating. (F5, 2017)

The conversation revolved around the actual experience of two online quizzes conducted during the first semester.

R: It is interesting that you are talking about the distractions. These distractions, are they things you experience at home or in college or somewhere different?

It takes you far longer to do it online because you have so many distractions at home. Might start looking up something on YouTube on your laptop when you are doing it but forget time. Someone sends you a link to a video or something. It will just take you a bit longer. (I6, 2015)

When you are asked questions online and given tasks online that I can do sometime later. But when you have tasks on paper I feel you have to do them right now and I focus on the task. If I go to something else it would not be to study but like music or my motorbike. (F2, 2017)

R: Is there anything you found that got in the way of doing the test online? Things that made it harder!

You pick up either a full mark or a zero mark where there is no in between working out anything where you might get extra marks. (I3, 2015)

R: Okay, so a problem is the marking. Is there anything else such as difficulty actually accessing it, making it more difficult for you, for that experience?

Most people would have access to it at this stage. (I2, 2015)

I don’t have a computer at home so I don’t have Internet access. I only have access in college. (I8, 2015)

With mathematics it would be nice if we had a way to write online in the traditional way. With the writing tests sometimes they are done with the computer but the mathematics is hard. It is better with pen and paper. (F4, 2017)

Student group second-year engineering: discussion interview

The sample (n = 8) was drawn in response to a request made to second-year class groups; the students in the discussion were those able to attend at the scheduled time. The interview lasted 30 minutes to allow the students to make full use of their lunch break. The purpose of this discussion was to consider whether the responses of the first-year students were consistent with the second-year students' own experiences and to tease out any issues for further exploration. The following extracts from the discussion reveal the issues of interest as the students gain confidence (18.5%) and become more immersed in their engineering programme through greater metacognitive awareness of the need for feedback (23.7%) and of assessment requirements (28.8%).

R: I would like to hear your opinions on the way you have worked with maths this year and how this compares with the way you worked with maths in the first year.

I just think that any assignments in maths in year 1 we got good feedback. You knew where you were going wrong whereas, in year 2 there is no such feedback. (Y1, 2016)

R: In terms of online assessments compared to handwritten assessments, what are your feelings towards these?

I preferred the online because you could sit down and study them while taking your time. You learn it rather than rush it. (Y4, 2016)

R: Do you have any suggestions for improvement of online assessment? What might make things a bit better?

Be able to put your calculations into the computer so you get working out marks instead of zero. (Y3, 2016)

Sometimes putting in letters, etc. is a problem – syntax. (Y4, 2016)

Brief overview of lecturer interviews

Assessment is the dominant theme in the lecturers' utterances (approximately 30%).

Lecturer 1 devotes 46% of all utterances to assessment, followed by 15% describing perceptions of student beliefs or actions. When asked whether they engaged with the students online, the lecturer replied:

I will help the students that need help. I don’t have to sit down with students who are passing the quizzes because they have the knowledge.

Lecturer 2 devotes 28% of all utterances to assessment and 25% to the experiences of the students. When asked whether they engaged with the students online, the lecturer replied:

I give them a mark but they have to work out which questions they got wrong.

For Lecturer 3, student confidence (23% of utterances) is the main issue after assessment (36%). This lecturer engages with the students online and would like to use more open-ended questions, saying:

I used to use open-ended questions but the students didn’t like them. They want multiple choice questions (MCQ) and so I boost the number of options in each question to stop them guessing.

Lecturer 4 alludes to many perceptions (20%) without evidence and concentrates on the mechanics of assessment (36%). This lecturer does not use any online techniques, saying,

I can’t see how you could examine [matrices] on Blackboard … It is my perception that it is only MCQ … I only use Blackboard to administer notes and assignments.

Lecturer 5 devotes 35% of utterances to perceptions of student activity and 30% to assessment. This lecturer does not use any online techniques apart from storing course notes, and says:

In presenting them with questions from previous years, they realize that on first sitting down they are not really in a position to do anything … My own experience of e-learning is that once you take the pen as the means of input away you are inclined to stop working things out to the same extent as with pen and paper.

For Lecturers 6 and 7, 35% of utterances relate to perceptions of student activity and beliefs, followed by 24% on assessment. An example is:

I am able to display a solution using my document camera and students are able to watch and ask questions while I do this. Many listen in the background or send private messages because they may be less confident … I use PDF files because I am able to see the complete solution … I do not have good tools to allow me to test the mathematics using the Internet.

Of interest is the paucity of utterances relating to feedback (ranging from 0% to 13%). None of the lecturers explicitly referred to metacognition within the process (Veenman et al., 2006).

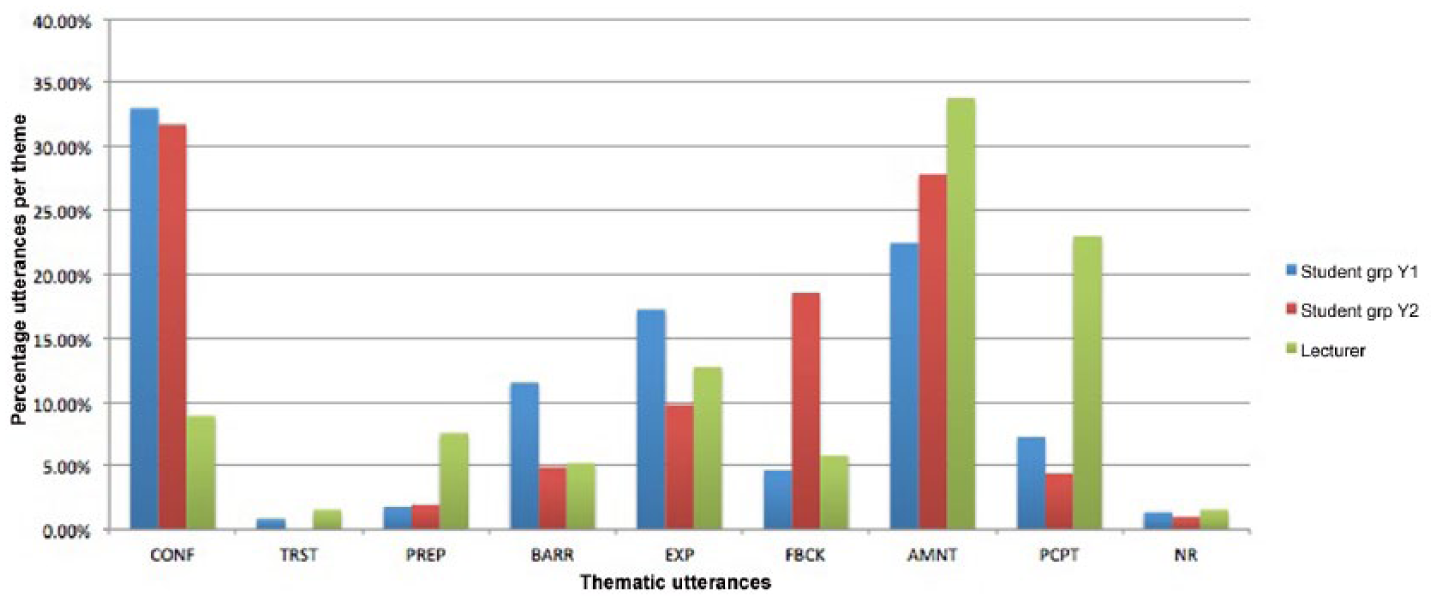

Overview of discussion interviews with lecturers and students

Analysis of all interviews with students and lecturers, as well as of responses to open-ended questions in Questionnaires 1 and 2, is provided in Table 1, with Figure 2 displaying the coded utterances for themes relating to confidence, assessment and feedback. The changing narrative of the engineering students from the beginning of the first semester to the beginning of the fourth semester suggests that their initial perceptions are overcome to some degree. With reference to Figure 2, these adjustments in thought processes can be viewed as the metacognitive engine taking stock and applying rational input to help the students cope with threats and barriers. In parallel, it is also possible to gain a sense of the viewpoints and actions of lecturers. Issues of confidence were of primary concern to the student groups when they embarked on their first semester and completed Questionnaire 1. The subsequent interview with a sample of students confirmed confidence as a major issue, as did Questionnaire 2 at the beginning of the second semester. Notably, the students appear to be gaining in confidence, although some fears remain regarding barriers and issues that hinder online engagement; these fears are expressed in comments that mention anxiety about mathematics.

Coded utterances per theme.

Training and support are discussed in the questionnaires but are considered minor in comparison with issues such as confidence. The students' experiences grow more positive as they move through the first semester, and the second-year students reflect this in their comments. The narrative evolves from first year to second year, with the emphasis moving from issues of confidence to those of feedback and assessment. The transitional period within the first year is dominated by students coming to terms with the metacognitive aspects of third-level education. The second-year students' reflections on their first-year experiences suggest that a growing awareness of assessment procedures, feedback practices and lecturers' policies comes to the fore.

Discussion

The role of technology is to facilitate teaching and to promote learning in a variety of situational contexts, and assessment is a central tenet of that learning (Robles and Braathen, 2002; William, 2011). Many engineering activities are based on ill-defined and complex tasks; learners are made aware of these issues to ensure authenticity, and formative and summative tasks are used to help judge the depth of learning. Many of the concepts taught in engineering are highly abstract, which is often problematic in the instructional context, particularly in a virtual learning environment. The assessment of learning of such abstract concepts may be facilitated in the classroom through observation, discussion and paper-based activities. Abstract concepts may be discussed online through whiteboards, discussion forums and email, but experience has shown that these discussions focus mainly on the written word; learners appear to be more reluctant to discuss abstract mathematical constructs. All lecturers in this study consider the online assessment of such abstract mathematical concepts problematic.

Within the STEM environment, a higher-level cognitive assessment result may be a calculation, the determination of an expression or an equation. It is suggested that the current mechanics of assessment are inadequate to fully address the needs of educators in their endeavours to provide prompt, accurate and objective feedback. The assessment is a holistic examination of the complete process, based on a learner’s submission. Key questions include: Is learning evident from the submission? What depth of learning can be determined? Is the learning appropriate? What type and level of feedback is appropriate for the learner? Alternate-choice, multiple-choice, matching and gap-fill questions allow lower-order recapitulation and knowledge to be assessed quickly. Deeper knowledge-based questioning is more difficult to assess automatically, and research has been conducted to explore this area (Ashton et al., 2006; Sangwin et al., 2013). Some lecturers ask students to submit scanned copies of their written work online to aid the assessment of deeper knowledge.

Males aged 18 to 46 years comprised 97% of the participants in this research, so gender-related differences or similarities could not be examined in depth and no conclusions could be drawn about them. Analysis of the questionnaires reveals that many learners struggle to engage online with abstract mathematical concepts and consider a loss of reward to be a negative attribute of the assessment process. Common responses from learners include:

I lost the marks because the final answer was wrong but my working was correct. (S36, IRL, 2015)

I made a mistake copying the answer into the quiz. My work wasn’t even considered. (S106, FI, 2015)

This is a consequence of applying standard automated quiz techniques, such as numerical-calculation or text-entry questions, which mark only the final answer that is entered.
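The mechanism behind these complaints can be illustrated with a short, hypothetical Python sketch of all-or-nothing marking for a numerical-entry question. It is not the marking code of Blackboard or of any other quiz system; the function name `mark_numeric`, the absolute tolerance `tol` and the single-mark scale are assumptions chosen for illustration.

```python
def mark_numeric(submitted: str, correct: float, tol: float = 0.01, full_mark: int = 1) -> int:
    """Hypothetical all-or-nothing marker for a numerical-entry quiz question.

    Only the final typed answer is examined; there is no input for working,
    so correct reasoning followed by a single slip earns nothing.
    """
    try:
        value = float(submitted.strip())
    except ValueError:
        # An entry that is not a plain number (e.g. "12,5" or "x = 12.5") scores zero.
        return 0
    # Full marks only if the value lies within an absolute tolerance of the key.
    return full_mark if abs(value - correct) <= tol else 0


# A student whose working was correct but who mistyped the answer when
# copying it into the quiz (as in the S106 comment) still scores zero.
print(mark_numeric("12.5", 12.5))  # 1
print(mark_numeric("15.2", 12.5))  # 0
print(mark_numeric("12,5", 12.5))  # 0
```

The third call also shows how an entry-syntax slip of the kind mentioned by second-year students yields zero, since the function has no way to inspect the reasoning that produced the answer.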

The major thematic outputs are in the areas of self-efficacy relating to self-esteem, confidence and self. Two very distinct student and lecturer groups, separated by language and geography and related only through their area of study, were analysed using a mixed methods approach. The depth of data obtained through IPA allows thematic concepts that would otherwise not emerge to be exposed through in-depth discussion. One student remarked afterwards:

No one ever asked me before how I really felt about studying. (I1, 2015)

The first-year experience of high achievers at third level is an area of much debate and interest in the higher education community, but this study involves low to medium achievers. A unique coding schema was developed specifically from the analysis of questionnaires completed by students in two countries. The educational experiences of the two groups prior to entry to third level differ considerably. The greater sense of self-efficacy and preparedness in the Finnish group (compared with the Irish students at the same stage in their studies) is illuminating. This raises several questions: How complex are the reasons behind it? Is it significant enough to warrant further study to determine those reasons? Is it desirable for these emotional and behavioural aspects to be inculcated within the Irish student group? The students in both countries allude to the digital divide in several responses. The divide is not just a matter of access to resources such as computers and off-campus Internet access; it also relates to the student’s ability and knowledge base. Some students displayed highly complex and contemporary skills, whereas others displayed a low level of ability in the use of what are considered de facto standard resources. There may be a relationship between these issues and the neurodiversity displayed within the respective student groups.

The lecturers expend a considerable amount of energy on the role of assessment within the programme, with a blurring of boundaries evident between summative and formative activities. Comments by students indicate that although assessment is recognized as a fundamental element of the programme, insufficient emphasis is given to feedback and its timeliness. The evolving narrative within the research suggests that students quickly become aware of the need for feedback. This metacognitive awareness is an encouraging sign and indicates the growing maturity of the students within the system.

Assessment of learners (including e-assessment) features in many programmes of study, and its outputs offer a myriad of mechanisms for exploring learning at individual and group level. However, the value of e-assessment to the learner depends on an accurate understanding of that learner. The outcomes of this research will guide a second stage in the application of e-assessment in both institutions. Evidence of a ‘gap’ between what lecturers expect and what students actually do is emerging from the thematic discussions. This conflict is evident to the students as they create their own learning spaces and manage their expectations. The lecturer therefore has an increased responsibility to the student to minimize this conflict and reduce the gap in any assessment exercise. This is particularly important when assessment takes place online, where face-to-face resolution is not always possible. One issue raised by the students is the absence of a natural interface for entering mathematics, yet this is not considered problematic in the literature (Sangwin, 2013).

Underpinning the complete process is the role of metacognition and the observation of the metacognitive journey made by the students. Veenman et al. (2006) found that ‘teachers lacked sufficient knowledge about metacognition’ in their interview responses. This finding is replicated in the responses of the lecturers interviewed in this research. A major element in the metacognitive process is feedback from the lecturer, but most discussion in this area came from the students.

The theoretical framework of self-efficacy, in association with IPA, permitted the research to develop from the initial open and freely composed comments of the students. The voice given to the students by this methodology and the strength of many of their comments are illuminating. The research generated a substantial amount of qualitative and quantitative data representing the views of the majority of first-year engineering mathematics student groups in the participating organizations. The involvement of the students was not problematic and was at times encouraging, as they displayed an eagerness to participate. This was not anticipated, because these students are normally considered reluctant to share emotions and feelings. The ethical considerations of the research were paramount throughout the study. Even though the groups were considered not to be at risk, one of the researchers has responsibility for assessment in four first-year programmes, not just mathematics, for the Irish group. The confidentiality of student responses was guaranteed by using anonymous questionnaires and by making audio recordings of discussions only when students used pseudonyms. A second researcher administered the questionnaires for the Finnish group and had no input into the analysis stage, to ensure objectivity as far as possible.

Conclusions

Endeavouring to ascertain the thoughts, reflections and emotions of engineering students in the first year of their journey in tertiary education has been challenging. Most of the students are males in their late teens, for whom not only exploring their reflections but also sharing them with a person in authority is a novel experience. Classroom experience prior to the study suggested that male students would be uneasy about sharing feelings and emotions in front of their peers.

The study was introduced in a non-threatening manner and discussed openly with all students before seeking their consent, in an attempt to attenuate any threats the students might perceive with regard to their full participation. The findings suggest that the anecdotal evidence was an accurate reflection of student feelings in the first year of their studies in engineering mathematics at tertiary level. Students bring their emotions and understandings with them into the new and sometimes alien tertiary environment, in which they are expected to engage in metacognitive learning and take greater responsibility for that learning. The narrative derived from the questionnaires and interviews with first-year students, when compared with the narrative of the second-year students, suggests an evolving series of thought processes that lead the students to accept and engage with the tertiary process. The analysis suggests that there may be a basis for lecturing staff to gain a greater understanding of the first-year learning process by narrowing the gap seen in the thematic outputs: what lecturers consider important is not what students consider important in their first year. The students’ voices remain unheard, particularly the voices of low to medium achievers.

The study is a temporal snapshot undertaken within a single year, and there is a danger that the data gathered are not an accurate reflection of all first-year experiences. The findings will form the basis for further longitudinal research aimed at achieving a greater understanding of first-year experiences and of how students develop skills such as reflection; dealing with threats; engaging with various types of assessment; understanding feedback; growing their membership of the student community; and planning, monitoring and evaluating. The longitudinal study will attempt to smooth out or highlight any anomalies detected and establish a baseline for programme design. The lecturers' responses are notable in that they make little significant mention of feedback. Further research to determine the reasons for this omission is planned as part of a more extensive study into this area of importance to students.

Appendix 1

Code schema for utterances.

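| Thematic output | Code abbreviation |
|---|---|
| Confidence | CONF |
| Training/support | TRST |
| Preparation | PREP |
| Barriers/negative aspects | BARR |
| Experience | EXP |
| Feedback | FBCK |
| Assessment | AMNT |
| Perceptions | PCPT |
| | NR |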

Acknowledgements

The authors would like to thank Mr Jarkko Hurme from the Oulu University of Applied Sciences for facilitating access to the students.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.