Abstract

This paper examines the counter-violent extremism and anti-terrorism measures in Australia, China, France, the United Kingdom and the United States by investigating how governments leveraged internet intermediaries as their surrogate censors. Particular attention is paid to how political rhetoric led to legislation passed or proposed in each of the countries studied, and their respective restrictive measures are compared against the recommendations specified by the United Nations Special Rapporteur on the Promotion and Protection of the Right to Freedom of Opinion and Expression. A typology for international comparison is proposed, which provides further insights into a country’s policy focus.

Introduction

On 15 March 2019, an Australian gunman live-streamed on Facebook his shooting attack on a mosque in Christchurch, New Zealand. This shooting and the subsequent attack on the nearby Islamic Centre left 51 people dead and 49 injured (Britton, 2019; Roose, 2019). The tragedy prompted calls for governments around the world to devise new approaches to countering violent extremism online. France subsequently led this discussion by joining hands with New Zealand to host the Christchurch Call to Action Summit in Paris two months later. Representatives from the European Commission, 17 countries and eight internet intermediaries pledged to cooperate in countering violent extremism through a non-binding agreement. By September 2019, the United Nations Educational, Scientific and Cultural Organization (UNESCO), the Council of Europe and another 14 countries had joined this pledge.

Despite this cross-national and cross-sector cooperative approach to countering violent extremism, some countries decided to go their separate ways. Scholars lamented that neither the US nor China was on board in promoting systemic change in countering violent extremism online (Burton, 2020; Veale, 2019). At the time of the summit, the Trump administration stated that the US government was ‘not currently in a position’ to back such measures (Browne, 2019). In addition, Australia and the UK, the other two important countries within the common law system, each devised their own strategy to deal with violent materials online.

In light of the above, this paper compares the respective policies of five countries (Australia, France, China, the UK and the US) in countering violent extremism online and explores the political rhetoric they used in turning internet intermediaries into their de facto surrogate or proxy censors (George, 2018; Kreimer, 2006; Kuper, 1975). A typology for international comparison is also proposed, with a view to advancing future policy and political communication analysis.

Literature review

Conceptualizing violent extremism

What constitutes violent extremist content? In the Plan of Action to Prevent Violent Extremism devised by the United Nations (UN, 2019), the UN Secretary-General says, ‘Violent extremism is a diverse phenomenon, without clear definition’ (Section I, para. 2). By contrast, the Joint Declaration on Freedom of Expression and Countering Violent Extremism (the Joint Declaration) adopted by the UN Special Rapporteur on the Promotion and Protection of the Right to Freedom of Opinion and Expression (Special Rapporteur on FOE) in 2016 stated that ‘all stakeholders, including public authorities and internet intermediaries, should not use or apply the concept of “violent extremism” as the basis for restricting freedom of expression unless it is clearly defined’ (para. 2c).

The question of who defines extremism and who delegitimizes violence is at the core of an ongoing ethical debate. Some scholars hold the view that violent extremism is a precursor to terrorism (Lowe, 2017; Morina, 2019), while others argue that the definition of terrorism should be broad and thus encompass violent extremism (Carr, 2007). The UN Security Council (2014) also makes an explicit connection between violent extremism and terrorism by promoting international efforts to counter ‘violent extremism that can be conducive to terrorism’. By contrast, recent studies found that violent extremism is frequently used as a ‘social label’ for both acts of terrorism and other forms of violence that are connected to religious or racial ideology (Southers, 2013; Striegher, 2015). This line of thinking tends to distinguish between violent acts committed by White nationalists and those committed by Islamic terrorists, and is often considered to be Western-centric.

Notwithstanding the lack of a common definition, a more pragmatic approach adopted by many governments is to avoid the debate on what constitutes violent extremism and focus on which types of threats they want to mitigate. In this context, violent extremism can be conceptualized through the lens of the UN Special Rapporteur on the Promotion and Protection of Human Rights and Fundamental Freedoms while Countering Terrorism (Special Rapporteur on Counter-Terrorism), who states that any definition of terrorism should include at least one of the following: (1) the intention of taking hostages; (2) actions aimed at causing death or serious bodily harm to the general population or segments of it; and (3) actions involving lethal or serious violence to the general population or segments of it (UN General Assembly, 2015; UN Human Rights Council, 2010). With the objective of mitigating national security threats, ‘violent extremism content online’ in this study is defined as materials that are digitally stored or displayed to users (e.g. through streaming) and that either depict or contribute to one or more of the three factors stated by the Special Rapporteur on Counter-Terrorism (Birnbaum and Rodrigo, 2019).

Theoretical framework

Internet intermediaries such as social media (Facebook, YouTube, Twitter, etc.), search engines (e.g. Google) and internet service providers (ISPs) have been enjoying a long period of self-regulation, acting as gatekeepers of third-party content and carrying out quasi-adjudicative and quasi-enforcement functions without sufficient independent or democratic oversight (Huszti-Orban, 2018; MacKinnon et al., 2014). However, stemming from a concern over protection of intellectual property, European policymakers began to challenge the conflicting roles of internet intermediaries and the appropriateness of the self-regulatory regime (Stalla-Bourdillon, 2012). With the rise of the ‘Islamic State’ (ISIS, ISIL or Daesh) in 2013 and other extremists continuing to leverage social media in garnering support (Benigni et al., 2017) from 2013 onwards, governments began to join hands in pressurizing internet intermediaries to play a more proactive role in censoring third-party content online (Elgot, 2017; Toor, 2017). Such efforts instigated debates about the role of internet companies in countering violent extremism, from the EU to the UK and then Australia (Gibbs, 2017; McCann, 2017; Sparrow and Hern, 2017) as well as the US (Romm and Harwell, 2019).

Against this backdrop, the Joint Declaration stipulates that any initiatives undertaken by private companies in relation to countering violent extremism online should be sufficiently transparent, in the sense that users are able to anticipate that the materials uploaded would be moderated or even deleted. Efforts should also be made to allow users to know beforehand that personal information might be collected and could be sent to relevant public agencies (para. 2i). The Special Rapporteur on FOE, in his 2011 report to the UN Human Rights Council, also stressed that censorship must not be delegated to the private sector on behalf of any government (para. 43). Specifically, the Special Rapporteur has five recommendations for intermediaries (para. 47), which formed the analytical framework when dissecting the policies and legislation created by each of the countries in this study:

Recommendation 1: Restrictions to users’ right to privacy and the right to freedom of speech or expression should be carried out only after judicial intervention.

Recommendation 2: Be transparent to the users and where applicable, the wider public, regarding the restrictive measures taken.

Recommendation 3: Serve alerts to users prior to executing any restrictive procedures.

Recommendation 4: Minimize the restrictive effect by addressing only the specific materials involved.

Recommendation 5: Provide effective remedies for affected users, including an appeal system provided by the intermediary and/or by the relevant authorities.

From a conceptual standpoint, the above recommendations are also guidelines for governments to consider before they delegate any censorship responsibility to internet intermediaries. Recommendations 1, 2 and 5 require any online restrictions to be clearly stated in law, whereas Recommendations 3 and 4 concern the restrictive effects of content moderation technology. Moreover, the level of restrictive effects depends on whether the primary policy objective is prevention or containment (i.e. minimizing the negative effects of specific content). As such, these guidelines can be translated into a typology of two dimensions: (1) ‘legislation- / technology-oriented’; and (2) ‘prevention / post-incident management’ (Figure 1).

Figure 1. Typology for comparing restrictive measures for countering violent extremism online.

Using this typological model, this study contributes to the existing literature by providing an integrated framework for comparing legislative practices adopted by common law and civil law jurisdictions when countering violent extremism online. How the respective policies and political rhetoric in Australia, France, China, the UK and the US fit into this model will be examined in the latter part of this paper.

Method

This study first compares the legal frameworks for countering violent extremism in the US, the UK and Australia, arguably the three most influential common law jurisdictions. Existing and proposed restrictive measures, including those proposed in draft bills and public consultation papers, are compared against the five recommendations set out by the Special Rapporteur in order to determine whether the self-regulation tradition has morphed into a more direct and invasive legislative regime (i.e. turning internet intermediaries into surrogate censors). The political rhetoric of the relevant documents in each country is then analysed to critically examine governments’ justifications for promoting such changes.

For comparative purposes, related legislative measures in two representative civil law jurisdictions, France and China, are also analysed using the same method. France was chosen due to its pioneering role in countering violent extremism online after the Christchurch shooting, and because its proposed new law on online censorship has spurred other European nations to follow suit. China was also selected because of its global influence. It is the primary target of Western critics for instigating the world’s most stringent online censorship measures, which violate democratic values. At the same time, the Chinese online surveillance system is considered a ‘role model’ by other authoritarian regimes (e.g. Russia, Turkey and several African states); hence it is essential for this cross-country comparison.

Finally, similarities and differences in political rhetoric in these common law and civil law jurisdictions were examined using the proposed typology. Recent examples were also drawn from Germany, Russia and Japan to test the appropriateness of the model.

Findings

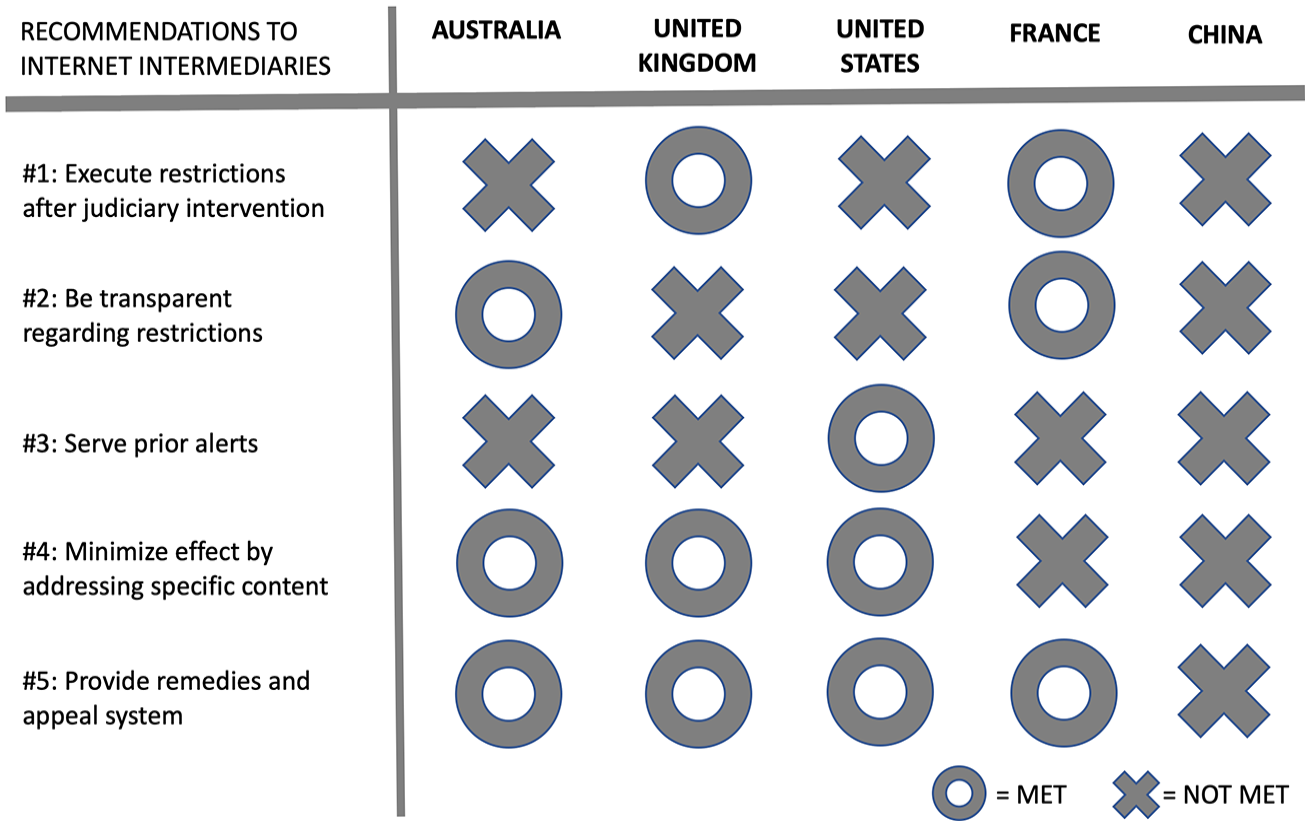

The degree to which the legislative practices of individual countries met the five recommendations set out by the Special Rapporteur on FOE is summarized in Figure 2.

Figure 2. Cross-country comparison of restrictive measures against recommendations by the Special Rapporteur on FOE.

To elaborate on the above finding, issues pertaining to the respective legislative measures and the accompanying political processes of each of these five countries are detailed below.

Australia

Two weeks after the Christchurch shooting, the attorney-general for Australia announced that the government had already prepared a draft bill for censoring live-streaming of ‘abhorrent violent material’. By the following week, the Criminal Code Amendment (Sharing of Abhorrent Violent Material) bill was rushed through both the House of Representatives and the Senate in less than 48 hours and passed into law on the last day of the legislative calendar. In essence, the Act required internet intermediaries to report to federal authorities any online content that streamed or recorded a person or persons engaging in terrorism or violent crimes, such as inflicting grievous bodily harm, abduction, sexual assault and so on. In addition, intermediaries would be committing an offence if they failed to remove such content within a reasonable time frame. Penalties included fines and/or imprisonment for an individual, or a fine of up to one-tenth of the corporation’s annual turnover.

Policymakers across Australia’s political spectrum unanimously supported the Act because they wanted a quick fix to drum up support ahead of the federal election, then only a month away (Karp, 2019). Even the opposition Labor Party voted in favour of the bill, though it promised to review the legislation after the election if it won (it ultimately lost). This was a tactical move, showing that tightening control over social media was an election issue that all political parties had to embrace during the campaign period.

By contrast, scholars, legal experts and technology companies across the world criticized this bill as having been passed recklessly in an extremely short time frame without proper public consultation, which would likely lead to grave consequences in restricting freedom of expression online (Leung, 2019; Scott, 2019). Upon closer investigation, a major issue pertaining to the execution of this Act is the lack of a clear definition of the phrase ‘expeditious removal’. When an intermediary is accused of removing content too slowly, it is unclear whether the clock starts at the moment the user uploads the content or on receipt of a removal notice from the authorities. Also, it might take days to evaluate whether a recording captures genuine violent extremism or a fake, staged act. Paradoxically, the time required for careful evaluation of context is at odds with the legal expectation that internet intermediaries should alert the authorities immediately.

Most notably, the Act would make it difficult for internet intermediaries to adhere to the recommendations of the Special Rapporteur on FOE mentioned above. First, requiring private companies to remove content on behalf of the government before judicial intervention would restrict the right to freedom of expression (cf. Recommendation 1). Second, users would not be forewarned (cf. Recommendation 3). However, given that the Act explicitly defines what is abhorrent violent material and judicial review is allowed, the other three recommendations are arguably met.

United Kingdom

The ‘Online Harms White Paper’ was published on 8 April 2019, 4 days after the enactment of Australia’s Criminal Code Amendment (Sharing of Abhorrent Violent Material) Act. In the foreword of the white paper, the Christchurch shooting was cited as one of the reasons for the UK government to tighten online security, holding intermediaries accountable for dealing with actions that were marginally legal at the time but should be considered harmful (Government of the UK, 2019a). The ministers boasted that the proposed bill made the UK a global pioneer in tackling harmful materials online through a single comprehensive framework, instead of introducing various regulations to target specific types of harm.

The overall direction that the British Parliament agreed upon is clear: sufficient measures in ensuring the safety of internet users were absent (Government of the UK, 2019a: Summary, para. 7) and a regulatory framework would be drafted and passed into law to give internet intermediaries a ‘duty of care’ (Smith, 2019). A public regulator with statutory powers would be established to take action against companies that did not fulfil their duties. Specifically, online platforms would be subject to more stringent requirements in reporting and drafting their terms and conditions documents.

The main controversy surrounding the white paper is how to define ‘harmful but not illegal’ content (Government of the UK, 2019c: Article 19; Meyer, 2019; Tambini, 2019; Volpicelli, 2019). Conceptually, the UK government approached the issue of censoring harmful content online from a different angle than the Australian government: instead of defining what types of content were abhorrent to the general public and should be removed, UK policymakers focused on the users and categorized some of them as ‘vulnerable people’ who may be at risk of being abused online (Government of the UK, 2019a). In the case of violent extremism, the white paper defined these ‘vulnerable people’ as internet users who would be radicalized by terrorist groups (Government of the UK, 2019d: Summary, para. 3). As such, internet intermediaries were said to have a duty of care towards these vulnerable individuals and should ascertain that these users would not be ‘harmed’ by certain online content, even if such content was not necessarily illegal.

The concept of duty of care has long been established for ‘offline’ companies. It is a legal concept that centres on risk and tortious liability (Grantham, 1997; Woods and Perrin, 2019). For example, services provided to minors should have more safeguard mechanisms in place to mitigate risks than services aimed at adults. However, the white paper does not explain how internet intermediaries should identify the vulnerable individuals who are at risk of being radicalized. In practice, it might be impossible for any intermediary to identify these vulnerable users beforehand unless it knew the terrorists’ plan in the first place, or was provided with intelligence relating to national security on an ongoing basis.

In this light, the regulatory framework proposed in the white paper goes against the Special Rapporteur on FOE’s advice to internet intermediaries: since identifying specific groups of vulnerable users beforehand is either not feasible or involves state secrets, companies would be unable to be transparent to both the users and the wider public about the restrictive measures taken (cf. Recommendation 2); nor could the intermediaries issue alerts before executing any restrictive measures (cf. Recommendation 3). Nevertheless, this framework does allow judicial intervention before restrictions are implemented, and provides the possibility of appeal through procedures provided by the intermediary, which are in line with Recommendations 1 and 5, respectively. Moreover, the requirement that internet intermediaries distinguish between children and adults when providing access, as part of their online duty of care, implies that the restrictive effect on content will be specific (cf. Recommendation 4).

United States

As the first legislative effort to address violent extremism online, the National Commission on Online Platforms and Homeland Security Act received unanimous support from the House Committee on Homeland Security in October 2019, indicating Congress’ serious concern that the technology industry’s long-established self-regulatory regime might not align with national security interests.

A dedicated commission would be set up under the Department of Homeland Security, but not for the oversight of internet intermediaries. Rather, it would be given subpoena power to obtain all relevant communications about violent extremism from any internet intermediary, allowing it to gain a thorough understanding of the complexity of the issue. Specifically, a two-pronged study on how online platforms might affect national security would be conducted:

Comprehensive research with literature review into whether online platforms facilitate acts of violence, taking into account advancements in artificial intelligence (AI); and

A study with public hearings on how social media platforms may have been exploited in three areas: (a) covert foreign state influence campaigns; (b) international terrorism; and (c) domestic terrorist acts.

The commission’s task was to compile a final report with suggestions on how social media platforms could counter violent extremism, including new policies, technological solutions, and also ‘voluntary approaches’ for internet intermediaries to implement. Given that the restrictive measures would continue to be managed by the online platforms, that is, without judicial intervention, Recommendation 1 of the Special Rapporteur on FOE was not met.

When compared against the other four recommendations laid down by the Special Rapporteur, a major problem with the research proposed by the bill is that the three main areas of study are too disparate. For example, while a study on ‘covert foreign state influence campaigns online’ might look into operations led by Russia and China, an investigation on ‘international terrorism that leveraged on social media’ would involve research on existing terrorist organizations and fringe extremist groups, whereas a study on ‘domestic terrorism via online platforms’ would involve research into white supremacist and/or nationalist groups. Moreover, the online platforms involved could be very different (Facebook, Telegram, Twitter, Weibo, WhatsApp, 8chan, etc.). In this connection, the Center for Democracy and Technology commented that this commission ‘may be out of its depth’ to conduct a study with such a vast scope (Woolery, 2019). Since the nature of the content varies significantly, the moderation algorithm adopted by each platform in response to this bill would be unlikely to be transparent to the public, not least because it is proprietary (cf. Recommendation 2).

However, on addressing the responsibilities of internet intermediaries, the bill specifically acknowledges the Santa Clara Principles on Transparency and Accountability in Content Moderation (2018), which will likely serve as future guidelines for the US government to devise further actions in countering violent extremism online. These principles were proposed by a group of non-profit organizations, advocates and academic experts as the minimal steps for companies engaged in ‘content moderation’ and can be summarized using three keywords: (a) ‘#notice’: legitimate reasons pertaining to moderating the specified content and suspending user accounts should be given to all affected users; (b) ‘#numbers’: statistics about content moderation and account suspension should be reported publicly; and (c) ‘#appeal’: a responsive appeal mechanism should be available. In essence, (a), (b) and (c) of the Santa Clara Principles adhere to Recommendations 3, 4 and 5, respectively.

France

The French took a different approach to legislation from that of the common law jurisdictions in countering violent extremism online. Conceptually, instead of punishing people or companies that have already uploaded or streamed violent content, the French government wants to stop violent extremism before it happens, by eliminating content that incites hatred on grounds of race, religion, ethnicity, sex, sexual orientation or disability. Internet intermediaries are required to monitor and moderate content related to hate speech and can be fined up to 4 per cent of global annual turnover for failing to do so within 24 hours of notice. A special branch of ‘tech police’ dealing specifically with terrorist and child abuse content would be set up to handle the blocking of servers or other electronic means enabling access to content that has been declared illegal by the court. Failing to cooperate with law enforcement, for example by not preserving data that enable identification of those posting such content, would incur a fine of up to €250,000.

The Public Bill Committee of the National Assembly adopted the Countering Online Hatred Bill (Proposition de loi contre les contenus haineux sur Internet) on 19 June 2019, and a debate was held on 3 July in the National Assembly under a fast-track procedure (i.e. only one reading in each house of the parliament). The bill received cross-party support with 434 voting in support and 33 voting against it in the lower house.

Compared to the recommendations set out by the Special Rapporteur on FOE, the requirement that content be declared illegal by a court before moderation and the provision of an external appeal avenue are in line with Recommendations 1 and 5. By highlighting hate speech as the primary focus, the restrictive measures are arguably fairly transparent to the public (cf. Recommendation 2). However, the strict 24-hour time cap could lead to the use of filtering technology that results in over-removal of content without prior notice, thus violating Recommendation 3. In addition, the scope of the new law is overly broad (cf. Recommendation 4), both in terms of the companies covered (e.g. search engines) and the types of content that must be removed. In particular, the law requires monitoring of both public and private exchanges of content, and also covers intermediaries that host public forums as an ancillary activity. Such a vast scope of monitoring raises significant privacy concerns. Given the context-sensitive nature of hate speech, critics also consider it impossible for automatic content filters to distinguish the nuances between legal and illegal content (Berthélémy, 2019; Government of the UK, 2019b: Article 19; Turley, 2019).

China

To a certain extent, the Chinese government has taken a similar route to the French by focusing on preventing violent extremism (Xinhua, 2018). Yet the Chinese government has taken a much broader, mass surveillance approach: Article 28 of China’s new National Security Law (effective on 1 July 2015) declared that the ‘State is against all forms of extremism and terrorism’, and that it would enhance its capacity to stop and to prevent terrorist acts, which included surveillance and intelligence work as well as monitoring of capital flows. The scope of surveillance covered 16 key ‘national security areas’: political system, economic system, military system, homeland and border, society, culture, technology, cyberspace, outer space, deep sea, polar region, nuclear, energy and resources, animals, ecology, and overseas interests. More importantly, national security was placed as an essential element for achieving the ‘great rejuvenation of the Chinese nation’ (National Security Law, 2015). When the Standing Committee of the National People’s Congress passed this legislation in 2015, the bill received near-unanimous support, with only one abstention.

Furthermore, with respect to countering violent extremism online, the Anti-Terrorism Law that took effect on 1 January 2016 defines extremism as ‘any activities that distort religious teachings, or incite violence, hatred, discrimination’ (National Security Law, 2015: Article 4, para. 2). Specifically, internet users were required by law to report to law enforcement agencies if they found any online content that advocated terrorism or violent extremism, while telecommunication operators and ISPs needed to prevent transmission of any extremist content.

The extensive approach to online censorship adopted by the Chinese government, an authoritarian regime, is commonly known as the ‘Great Firewall’ (Yang and Liu, 2014) and violates all five recommendations laid down by the Special Rapporteur on FOE. Critics are of the view that the Chinese government’s definition of extremism is overly broad and vague, which raises human rights and privacy concerns. In practice, internet access in China is provided by eight ISPs that are essentially the proxy censors of the Ministry of Industry and Information Technology (MIIT). Nevertheless, internet censorship in China is often carried out not at the ISP level but at the corporate level, under the supervision of the Cyberspace Administration of China (CAC). The CAC has occasionally fined Tencent, Baidu and Weibo for failing to censor online content, ranging from pornography to fake news, or any content that ‘incites ethnic tension’ and ‘threatens social order’. In this connection, the Citizen Lab of the University of Toronto found that the most popular social app in China, WeChat, is constantly assisting the government in the surveillance of images and files shared on its platform through machine learning, using data collected from its users without any notification (Knockel et al., 2020).

Analysis

Analysing the political rhetoric of the government offered an opportunity to understand more about its intention, its expectations of the surrogate censor and, more importantly, the possible next moves. For example, in the case of Australia, the government’s word choice in drafting the title of the Act already suggested that the primary duty expected from the intermediaries in countering extremism is to delete ‘violent material’ swiftly. Priority is thus given to speed over accuracy. From a rhetorical standpoint, putting the adjective ‘abhorrent’ in the title of the bill revealed that the Australian government wanted to claim the moral high ground at the very beginning of the legislative process. It was a tactical move which made any suggestions that the bill should be delayed for further discussion look insensitive when compared against the Christchurch tragedy. Notice that the word ‘abhorrent’ would lead to the connotation that the online violent materials were so disgusting (as compared to violence in entertainment) that it was the obligation of the internet intermediaries to get rid of such content as quickly as possible – hence ‘time is of the essence’ from a legal point of view when enforcing this Act. This legislative intent also explains why the major requirements highlighted in the Act, that is, expeditious removal, reasonable time, alert process, all pointed towards the issues of ‘speed’ and ‘accuracy’. Thus, the Act as a symbol carries the message: ‘The State is acting quickly to deal with these abhorrent violent materials and we expect internet intermediaries to censor quickly as well’.

Similar political rhetoric is also found in the UK: parallel to the negative-positive or problem-solution pairing of ‘abhorrent materials-expeditious removal’ in the Australian bill, the UK government pairs the two nouns ‘harm’ and ‘care’ to achieve a similar rhetorical effect, namely that the state is ‘taking good care’ of British citizens and will push internet intermediaries as hard as it can to ensure that they fulfil their duty of care to keep the UK safe. Indeed, at the beginning of the white paper, internet intermediaries are criticized for not going ‘far enough and fast enough’. It is also worth noting that when this white paper was published, the Conservative government led by the then prime minister, Theresa May, had just suffered a historic defeat in Parliament in trying to pass a ‘Brexit Deal’, which makes the strong rhetoric chosen by her cabinet members when launching the public consultation more understandable. Emphasis is put on ‘speed’ and ‘accuracy’ again since the government is asking internet intermediaries to act swiftly and decisively as its proxy censors.

Notice that the French also stressed speed but added the concept of ‘scale’ in their rhetoric when promoting their new bill. While the digital affairs minister claimed that ‘protecting citizens in the virtual online world is the government’s highest priority’, the proponent of the bill, National Assembly member Laetitia Avia, went as far as portraying online hate speech as a ‘public health issue’ (AFP, 2019). The rhetorical strategy deployed here is to ride on fear, describing the spread of hatred in the online world as a plague. On this view, the vast scope of the new legislation is necessary because the government aims not merely at containing the ‘pandemic of hatred’ but at preventing it from spreading. In this connection, when the European Commission decided to use France as a reference for drafting a new bill to counter hate speech the following year, Vice-President Jourova adopted similar rhetoric: ‘Hate knows no borders. We need to respond to it together in a European way’ (Euronews, 2020). In response, Avia said that every European nation should have stronger regulations imposed on online platforms.

However, with the Chinese government’s successful demonstration to the world of using AI for mass surveillance as a means to control the COVID-19 pandemic (Lu, 2020), experience gained from dealing with this public health crisis will likely prompt knowledge transfer to both the technology and security sectors. From a rhetorical point of view, given that countering violent extremism is vital to the ‘great rejuvenation of the Chinese nation’, every Chinese citizen is called on to share the responsibility of preventing any type of threat (16 areas in total) to state sovereignty. Hence the law requires not only internet intermediaries but also individuals to act as the government’s proxy censors. As such, the focus of the Chinese political rhetoric goes beyond achieving scale in content moderation and emphasizes the importance of being ‘thorough’. Mass surveillance using technology to monitor violent extremism online is also expected to advance further.

Coincidentally, the US is taking a similar approach. Although Congress strikes a positive tone by acknowledging the Santa Clara Principles as ‘best practice’, turning these principles into law implies that politicians believe that online platforms should be held accountable for national security matters and subjected to a certain level of oversight, rather than ‘pure’ industry self-regulation (Hemphill and Longstreet, 2016). Internet intermediaries would conceptually be recognized as one of the surrogate censors of the government in countering violent extremism. As such, future legislation in this connection would also likely adopt a similar oversight approach, asking internet intermediaries to be both ‘thorough’ and ‘accurate’ when moderating content. The US political rhetoric that focuses on a ‘principle-driven’ AI reinforces the concept that machines can learn to serve humans better. This approach led to the technology sector’s call for a more thorough understanding of the social costs of adopting AI and machine learning in content moderation: industry representatives (Electronic Frontier Foundation, 2019) and the Special Rapporteur on Counter-Terrorism (Warner, 2019) both criticized automated content moderation systems as being shrouded in mystery as the technologies are proprietary. Rather than a genuine sense of duty of care towards users, online platforms could task AI to meet the minimum statutory requirements in order to evade potential penalties.

In summary, all these new restrictive measures resemble the legislation in many historical ‘symbolic’ censorship campaigns (Knox, 2014; Zurcher et al., 1971) in the sense that the passage of the legislation was mainly a response to external pressure that ‘something needs to be done’, without a thorough consideration of practical issues such as enforcement problems and effectiveness, not to mention human rights violations. The key difference between the measures examined in this paper and legislation in response to a moral crusade is that the former was conceived by the governments for political gain, whereas the latter involved public affirmation of social ideals after a longer-term movement led by a particular section of society (Gusfeld, 1968). This ‘top-down’ approach explains why many of the online censorship measures taken by liberal democracies and authoritarian regimes are similar in nature. Professor David Kaye, UN Special Rapporteur on FOE between August 2014 and July 2020, criticized this adversarial tone in countering violent extremism online, lamenting that ‘a rhetoric of danger is exactly the kind of rhetoric adopted in authoritarian environments to restrict legitimate debate, and we in the democratic world risk giving cover to that’ (Pomerantsev, 2019).

Discussion

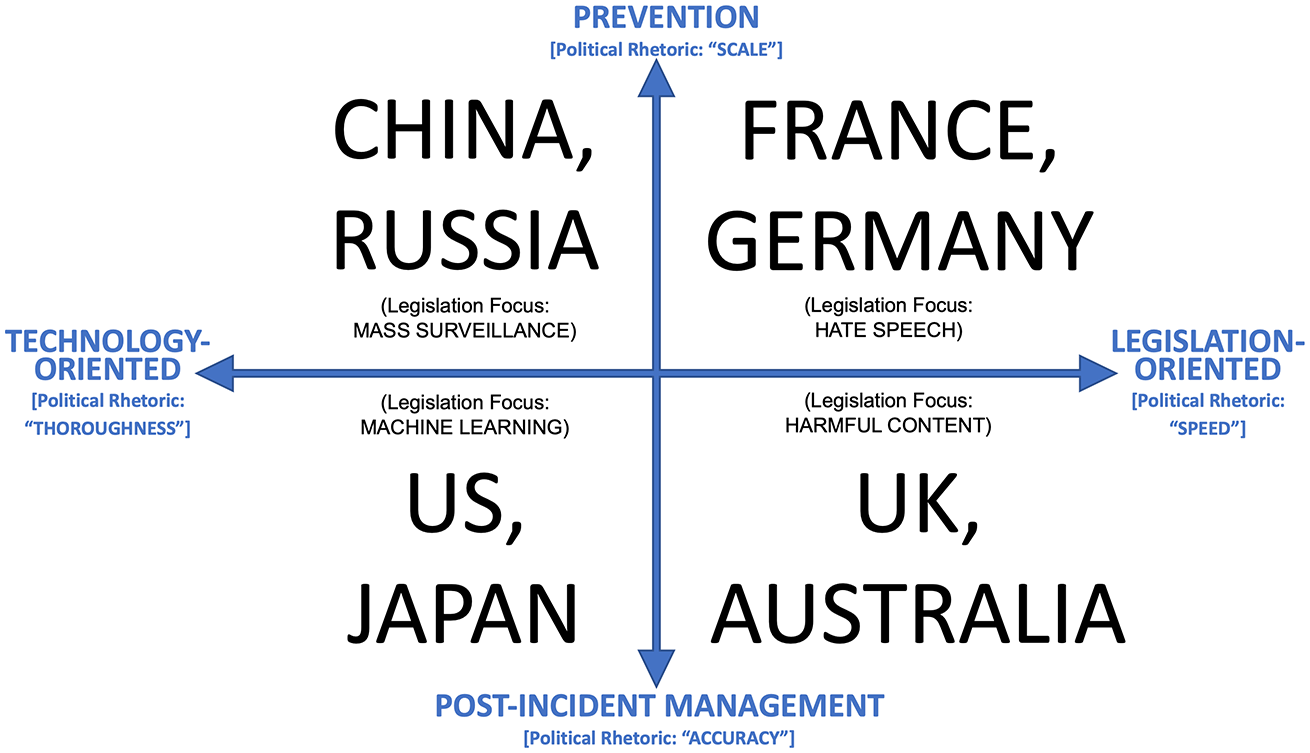

From a conceptual viewpoint, the countries studied in this paper can be seen as adopting four basic strategies in countering violent extremism online. These strategies can then be put under the proposed typology of two dimensions: (1) ‘technology- / legislation-oriented’; and (2) ‘prevention / post-incident management’.

Figure 2. Cross-country comparison under the proposed typological model.

As discussed above and also shown in Figure 2, countries that focused on adhering to Recommendations 1, 2 and 5 are categorized as ‘legislation-oriented’, whereas countries that put more emphasis on Recommendations 3 and 4 are considered ‘technology-oriented’. In the case of China, although its authoritarian measures do not meet any recommendations laid down by the Special Rapporteur on FOE, an analysis of its legal text and political rhetoric (see above) shows that China is clearly focusing on a technology-oriented strategy for preventing any threats to its national security. Using the rhetorical analysis approach, whether a government’s policy focus is aiming at ‘prevention’ or ‘post-incident management’ can also be identified.

A point of caution regarding this typology is that a strategy for countering online violent extremism usually takes a multifaceted approach, so probably no country would accept that it is simply ‘legislation-oriented’ and does not rely on technology for prevention. Nevertheless, this analytical framework can serve as a guide for gaining insights into the political mindset behind an individual country’s policy. Recent examples include:

In response to the far-right extremist shisha bar shootings on 19 February 2020, Germany approved amendments to the Network Enforcement Act to crack down on online hate speech, taking a similar approach to the French (DW, 2020).

Russian President Vladimir Putin personally proposed legal amendments to decriminalize first-time online extremism offences (passed by the State Duma on 15 November 2018). Scholars were of the view that this move reflected the Russian government’s confidence in its mass surveillance system (the ‘digital iron curtain’), which would match the Chinese ‘Great Firewall’ (Cheang, 2018; Gabdulhakov, 2020).

In preparing for the Olympic Games, the Japanese government introduced machine-learning technology in detecting terrorist threats (Rirou, 2019). Japan is also a major contributor to the UN’s 2020 research initiative to investigate the risk-benefit duality of AI in counter-terrorism (UN Office of Counter-Terrorism Centre, 2020), a research approach similar to the study conducted by the US Department of Homeland Security mentioned above.

Given that many online platforms are transnational enterprises, how will internet intermediaries adapt to different legal frameworks and shoulder the responsibilities imposed on them by various governments simultaneously? The above typology suggests two scenarios. One is that intermediaries will focus on the quadrant(s) in which they have the highest revenues or most business opportunities. Another likely scenario is that they will adopt a ‘common solution’ approach, i.e. leveraging technologies that allow them to fulfil minimum statutory duties in various jurisdictions at the same time, thus trying to satisfy the needs of all four quadrants in the above model through the adoption of AI. Future research may consider conducting empirical studies to investigate which approach is more common among online platforms and across different jurisdictions.

Conclusion

Against the above backdrop of a convergence in political rhetoric, a common solution for internet intermediaries to counter violent extremism online starts to emerge. This ideal solution should be able to: (1) satisfy the need for ‘speed’ in abhorrent content removal; (2) exercise a duty of care to a large ‘scale’ of internet users; and (3) demonstrate ‘thoroughness’ and ‘accuracy’ in the complex content moderation process. To many countries, the common answer to the problem, ‘Speed x Care x Thoroughness x Accuracy’, is the adoption of AI. The Special Rapporteur on FOE recognized this phenomenon in a more recent report to the UN General Assembly in August 2018, noting that ‘AI is often used as shorthand for the increasing independence, speed and scale connected to automated, computational decision-making’ (UN General Assembly, 2018: para. 3), and ‘pressure for increasing the role of AI comes from both the private and public sectors’ (UN General Assembly, 2018: para. 14). Yet, the Special Rapporteur on FOE warned that relying primarily on AI might lead to various human rights issues and incur heavy social costs, since AI is usually developed from data that carries discriminatory assumptions into its algorithms (Caliskan et al., 2017). At this stage, the Special Rapporteur believes that automated content moderation still has severe limitations, and actions determined by AI could risk discrimination and other human rights violations because of difficulties in addressing contextual knowledge, such as variation of language cues, as well as rhetorical and cultural particularities (UN Human Rights Council, 2011, 2018).

In other words, internet intermediaries acting as surrogate censors for governments may risk their users’ human rights to achieve high speed and massive scale as required by the increasing number of legislative measures across the world. The solution to this problem, as proposed by the Special Rapporteur on FOE, would be the involvement of human moderators with human rights knowledge and a ‘serious commitment to involve cultural, linguistic and other forms of expertise in every market where they operate’ (UN Human Rights Council, 2018: para. 57).

At the same time, policymakers will continue to be ‘out of their depth’ in understanding the complexity of the algorithms behind AI, but may be willing to stay ignorant as long as the transparency reports churned out by the online platforms contain ‘good results’ (i.e. a high removal rate) that would allow them to boast that the government has been keeping the public safe. This in turn would provide a ‘comfortable’ distance for internet intermediaries to handle their own business with minimal political interference. Such a downward spiral would create an illusion of care and accountability for both the internet intermediaries and the states.

In his 2018 report presented to the Human Rights Council, the Special Rapporteur on FOE stipulates: ‘National laws are inappropriate for companies that seek common norms for their geographically and culturally diverse user base’ (UN Human Rights Council, 2018: para. 41), and authorities should avoid passing new laws that lead to proactive filtering of online content (UN Human Rights Council, 2018: para. 67). To prevent a more stringent legislative regime of surrogate censorship, AI amalgamated with human intelligence in content moderation might provide the speed and transparency the states call for, while ensuring internet intermediaries respect human rights in countering violent extremism. Yet the major loophole in this new model is not the absence of duty of care from humans, but the lack of transparency in the system.

With this growing trend, more countries will likely migrate from the self-regulatory to the legislative regime, passing more laws to pressure their surrogate censors to remove content within tighter deadlines. Over time, the adoption of AI in content moderation would likely become the global standard for all intermediaries, which would use ‘big data’ and transparency reports to demonstrate that they have met their statutory duty in countering violent extremism.

Limitations and future research

One of the major limitations of this study is its focus on developments mostly after the Christchurch shooting. While the tragedy is considered a global incident that sparked international cooperation, future studies could benefit from a more historicized account of social media and terrorism, including how to empower users to access quality information instead of merely protecting people from harmful or violent content (the ‘rhetoric of danger’ commonly found in political discourse). Another limitation is the number of countries covered in this paper. Legislative measures adopted by prominent ‘tech powers’ like India and South Korea, as well as the different approaches to countering extremism taken by Muslim countries (which involve Sharia law or adopting a secular system), should also be examined to improve the proposed typological model. Finally, the use of AI to interfere with users’ rights to seek and receive ideas online would need to be further explored, as it will blur the line between liberal democracies and authoritarian states. Movies and novels often portray the human race as heading towards a ‘singularity’ with an AI that surpasses human intelligence in all domains, but one should never confuse singularity with uniformity, let alone political conformity.

Footnotes

Funding

The author disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The author is a recipient of the Hong Kong PhD Fellowship Scheme.