Abstract

Recent advances in artificial intelligence (AI) have renewed debates about the future of leadership, in particular the nature of the relationship between human leaders and intelligent machines. The literature tends to portray human-machine relations in complementary terms: the machine does the data analysis and the number crunching while the leader makes the decisions, guided by their unique vision, gut-feeling, and inner moral compass. Drawing on the historical metaphor of the Mechanical Turk, the paper complicates this division of labour in two main ways. First, we revisit the influence of game theory on business thinking in order to question whether leadership has ever relied solely on uniquely human faculties such as intuition, vision, or moral judgment. Instead, we suggest that leadership has long been shaped by the algorithmic logic of strategic optimization. Second, we show that AI is becoming adept at simulating human leadership, a capability that makes it tempting to outsource traditional leadership functions to a computerized system. We warn that this dynamic risks producing a reversal of the Mechanical Turk: not a machine concealing human intelligence, but rather a human concealing machine intelligence. We conclude by arguing that AI, understood as a socio-technical system, is currently reshaping what leadership is and how it is enacted in organizations.

Keywords

Introduction

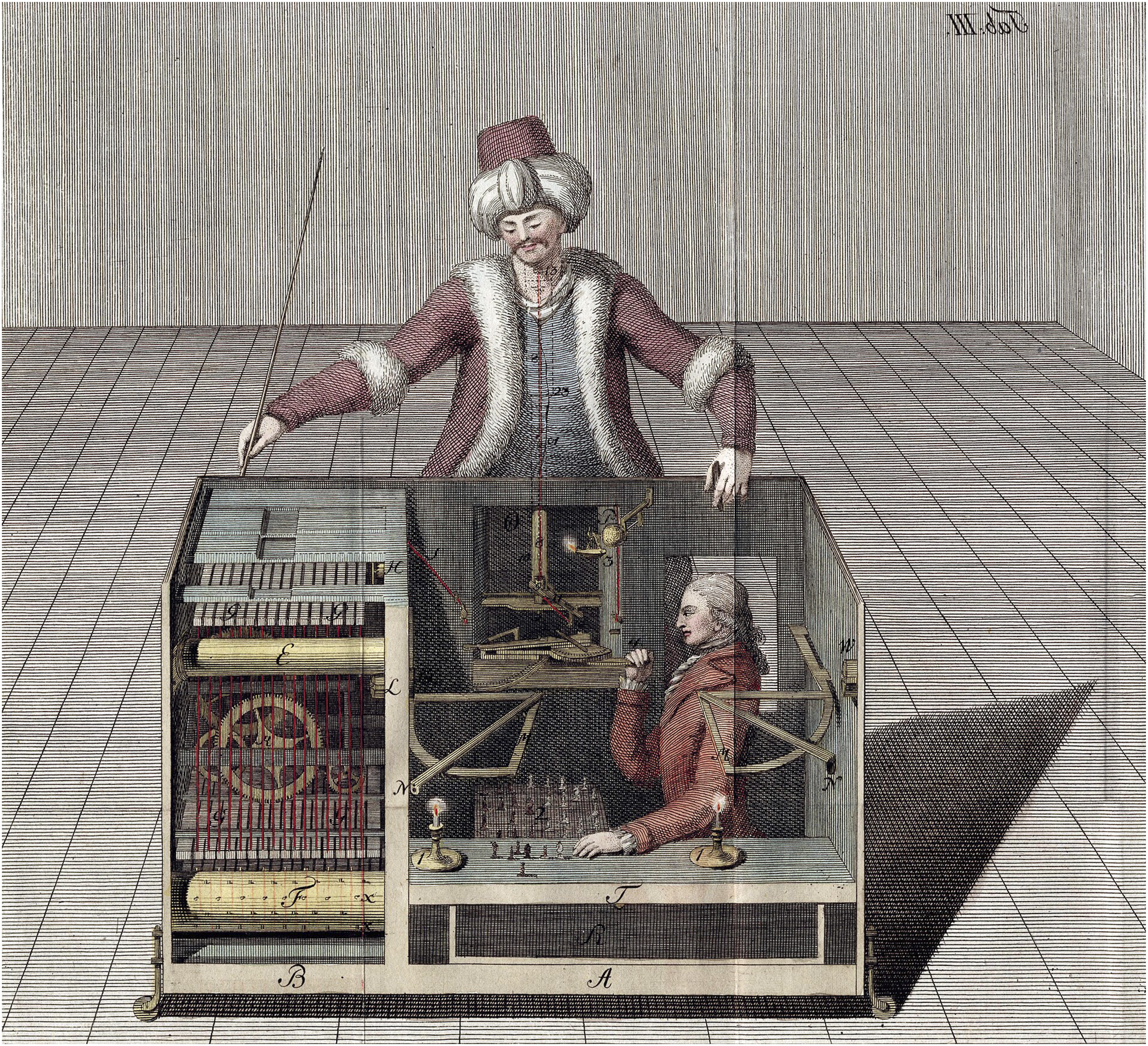

In 1770, Wolfgang von Kempelen first presented his chess-playing machine at the imperial court in Austria, hoping to impress Maria Theresa, the Empress of Austria. The machine consisted of a chessboard on top of a large cabinet, behind which sat a life-sized doll dressed in Ottoman clothes that appeared to play the game and beat its human opponents (see Figure 1). For almost a century, the machine – known as the ‘Mechanical Turk’ – travelled through Europe and the US, beating not only statesmen such as Napoleon Bonaparte and Benjamin Franklin, but also some of the best chess players of the time. Despite decades of speculation and theorizing, the secret of the Turk remained a mystery until Edgar Allan Poe’s family doctor, Kersley Mitchell, purchased the machine. Mitchell wanted to check whether Poe’s theory about the Turk’s functioning, which had been published in the form of a detective-like story, was correct and later established “a club whose members would each pay $5 or $10 to join, in return for being let in on the secret” (Standage, 2002: 189). Mitchell revealed what most people already suspected, namely, that the Mechanical Turk was not a true automaton; it was in fact an elaborate illusion, and the doll was operated by a human chess player hidden in the cabinet. Illustration of The Turk chess automaton (Racknitz, 1789). Public domain. Source: Wikimedia commons.

Historically, the Mechanical Turk marks the beginning of serious reflection about machine intelligence and its relation to human intelligence. This debate continues today, especially in terms of organizational decision-making in the age of artificial intelligence, or AI (Jarrahi, 2018; Neiroukh et al., 2025; Shrestha et al., 2019). In this context, the term ‘AI’ refers to a wide range of computer-based applications first conceptualized in the 1950s (Pasquinelli, 2023), encompassing everything from a system of algorithmic rules that lead to decisions (‘symbolic AI’) to programmes that are trained to recognize patterns in data (‘machine learning’) (Dyer-Witheford et al., 2019).

Recent advances in AI, driven in part by the availability of large datasets via the internet and the improved power of graphics processing units (GPUs) (Hao, 2025), mean that ‘intelligent machines’ are now capable of human-like cognitive tasks in organizational contexts (Kemp, 2024). As a result, some of the relatively simple work traditionally performed by managers, such as monitoring and control, is increasingly being turned over to computerized systems (Jarrahi et al., 2021; Stark and Vanden Broeck, 2024). But that is not all. More complicated tasks, including strategic planning and employee motivation, are also coming to depend on predictive analytics and auto-generated recommendations (Biloslavo et al., 2025; Malik et al., 2023). For example, tech companies like Neuronify and Sana offer a suite of bespoke ‘AI agents’ that are meant to augment various executive functions, from cross-functional coordination to organizational design support – a trend that indicates how human intelligence is becoming ever-more intertwined with machine intelligence in corporate settings.

These developments have led to conjecture about the future of leadership (Iansiti and Lakhani, 2020), which – in contrast to management – typically involves faculties that go beyond those needed for the functional and mechanistic side of organizing. Here, the paradigmatic example of the leader is a transformational ‘force for change’ (Kotter, 1990), someone who can fundamentally alter the culture and strategic direction of a company. Leadership has been considered an exclusively human domain because it is said to require special qualities – such as vision or authenticity (Avolio and Gardner, 2005; Nanus, 1995) – that go beyond mere planning, controlling, and problem-solving. However, recent leaps in AI technology have brought this assumption into question. For example, automated leadership agents may be perceived as more trustworthy and transparent than human leaders (Höddinghaus et al., 2021), which suggests, at a minimum, that the nature of leadership is already being reconfigured by intelligent machines.

There is a small but growing literature that explores the future of human leadership by examining the relation between leadership and AI (e.g. Bevilacqua et al., 2025; Quaquebeke and Gerpott, 2023; Wesche and Sonderegger, 2019). Of course, human-computer relations have been explored for many decades in fields such as cybernetics, information systems, and psychology (Card et al., 1983; Simon, 1960; Wiener, 1948), but our focus here is on the way these issues are addressed within the scope of leadership studies. This literature has given rise to several new leadership concepts, such as ‘algorithmic leadership’ (De Cremer, 2020; Harms and Han, 2019; Walsh, 2019), ‘AI leadership’ (Aziz et al., 2025), and ‘data-driven leadership’ (Fink, 2025). On offer in these accounts is a version of the narrative that human leaders and intelligent machines can complement one another, since they both possess unique qualities that can strengthen and support the other. The winning team, in other words, is neither the leader nor the machine, but the leader with the machine, each contributing complementary strengths that make the whole greater than the sum of its parts. The literature presents this division of labour as clear and unproblematic: the machine does the data analysis and the number crunching while the leader makes the decisions, guided by their unique vision, gut-feeling, and inner moral compass – characteristics that cannot be outsourced to chips, processors, and software.

In this paper, we complicate this division of labour in two ways. First, we question the extent to which corporate leaders have exercised a uniquely human capacity – even prior to the current wave of AI. We explore this issue by tracing the influence of game theory on business thinking. This history calls into question whether corporate leadership has ever relied solely on human faculties such as vision, gut feeling and moral judgement. We suggest that leadership has long been algorithmic in nature when viewed through the lens of business as a game, one that requires strategic optimization and efficiency maximization.

Second, we show that AI is becoming increasingly adept at simulating human leadership. While AI still lacks true human judgment – as the literature on algorithmic leadership rightly emphasizes – it is remarkably proficient at simulating such judgment by processing vast amounts of data derived from past human decisions. This capability makes it tempting to outsource leadership functions to a computerized system, even though the result can only ever be a semblance of human leadership. The risk is that human leaders become part of such a semblance, where the leader does not ‘humanize’ the organization but rather conceals the fact that it is run according to a logic of algorithmic calculation. This, we argue, would amount to a reversal of the Mechanical Turk: not a machine concealing human intelligence, but a human concealing machine intelligence.

This paper contributes to ongoing discussions about the future of leadership in increasingly algorithmic workplaces by challenging overly optimistic portrayals of human-machine collaboration. Specifically, we question the assumption that human leaders can simply be ‘augmented’ or ‘enhanced’ by AI in a straightforward, additive manner. Instead, we argue that technological advances do not merely support human leadership; they also serve to reshape it, influencing how leadership is understood and enacted. This leads us to conclude that any model of corporate leadership that envisions AI as a tool that can be seamlessly ‘bolted on’ to human capabilities is fundamentally flawed. In particular, such models risk obscuring the ways in which leadership itself is being redefined by algorithmic logics.

The paper is structured as follows. In the first section, we review the literature on algorithms in relation to management and leadership, focusing on the ‘new’ partnership between humans and machines in organizations. We continue by reflecting on game theory in business thinking and leadership practice, a strand of thought that invites us to see business as a game just like chess (even when it is not). Finally, we turn to the prospects for leadership in the ‘age of intelligent machines’ before offering some suggestions for further research in the field of leadership.

Algorithmic management and algorithmic leadership

In 1977, Abraham Zaleznik published a paper in the Harvard Business Review entitled ‘Managers and Leaders: Are They Different?’. His answer to this question was a resounding ‘yes’. Leaders, Zaleznik (1977: 3) claims, have “a personal and active attitude towards goals”, whereas managers passively pursue the goals that are “deeply embedded in their organization’s history and culture”. In setting new goals, and thereby setting a new direction for the organization, the leader draws on their personal values, their gut feelings, and their unique vision. The manager, by contrast, dedicates their time to optimizing processes that will achieve the established goals of the organization, leaving aside all emotional content.

Of course, Zaleznik’s thesis was not radically new. It echoed the call for charismatic and transformational leaders in business and politics that had become more prominent around the same time in the US (e.g. Burns, 1978; House, 1976), partly in response to the economic problems stemming from the energy crisis of the 1970s and the increasing competition from Japanese companies (Spector, 2014). Yet Zaleznik’s article nicely illustrates a break in leadership studies that remains to this day. In the 1950s and 1960s, ‘leadership’ was not easily distinguishable from ‘management’, and the two terms were often used interchangeably to describe the same activities (Spector, 2016). For instance, line managers or supervisors were often referred to as ‘group leaders’, a nomenclature that is far less common today. Since Zaleznik, we have become accustomed to the idea that managers perform a function within the organization whereas leaders bring something in from the outside (Spoelstra, 2018). Leaders provide the X-factor, the ‘special something’ that separates an ordinary business from an extraordinary business by initiating a process of radical change and renewal.

Zaleznik’s distinction between management and leadership, and the broader heroic leadership literature that it belongs to, has received well-deserved criticism, not least in the pages of this journal (e.g. Hughes, 2016; Tourish and Vatcha, 2005). These critiques have sparked a rich body of scholarship that adopts a collective perspective on leadership, including shared, relational, and distributed approaches (Bolden, 2011; Döös and Wilhelmson, 2021). However, traces of Zaleznik’s thesis persist even in some of these more ‘post-heroic’ forms of leadership. Such approaches often implicitly maintain the fundamental distinction between leadership and management, although the X-factor is now distributed among organizational members rather than the sole property of the individual leader (Spoelstra, 2018).

One way of thinking about the leadership/management dichotomy is in terms of the distinction between humans and machines. The manager acts in a machine-like manner – for example, they process information, set clear goals, delegate tasks, evaluate performance, provide feedback, optimize outcomes, recruit staff, and so on. These tasks mostly follow a computational logic of input and output, so it is no surprise that they are increasingly performed by algorithms (Dennis and Aizenburg, 2022). The leader, by contrast, taps into faculties beyond the merely mechanistic. Instead of managing organizational processes according to rationalized principles, the leader does something else: they establish an emotional bond with followers, articulate a compelling vision of an idealized future, stay close to their inner values, build trust within their community, set the organization on a fundamentally new course, and so on (e.g. Díaz-Sáenz, 2011; Kouzes and Posner, 2005). The leader is said to have qualities that cannot be replaced by machines, qualities that do not translate into a strictly computational framework.

The traditions of leadership studies and management science therefore differ in how they conceive the role of the human in relation to business. Traditionally, leadership scholars put their faith in the exceptional qualities of individuals and their ability to inspire employees and transform organizations, raising the corporate mission to a higher moral level (Bass, 1985; Bass and Steidlmeier, 1999). In the post-heroic tradition, leadership (understood as a social and relational process) is dispersed throughout the organization, ideally creating a more harmonious and less power-driven workplace (Crevani et al., 2010). In contrast to both these traditions, management science focuses on decision-making processes within the organization in order to achieve optimal productivity and efficiency. On this view, the individual is at the service of the organization and its goals, acting as little more than a cog in the machine or, better, an input in the computational infrastructure (Simon, 1960).

Consider Frederick Taylor’s (1911/1998: 15-16) principles of scientific management, one of the earliest examples of computational thinking in a pre-digital era: “The managers assume…the burden of gathering together all of the traditional knowledge which in the past has been possessed by the workmen and then of classifying, tabulating, and reducing this knowledge to rules, laws, and formulae” that will ultimately “replace the judgement of the individual workman”. Taylor reduces the task of management to purely mechanistic principles: supervisors need only provide workers with a set of de-personalized instructions for achieving the desired outcome. If leadership studies places the human at the top of the hierarchy – either as a lone genius in the heroic tradition, or an inspired collaborator in the post-heroic tradition – management science relegates the human to a mere function of the system of which they are a part (Butler and Spoelstra, 2025).

Today, ‘algorithmic management’ refers primarily to automated forms of management and supervision in digitalized work environments (Jarrahi et al., 2021; Stark and Vanden Broeck, 2024). For example, platform companies like Uber, Deliveroo, and Upwork use algorithms to control, incentivize, and evaluate workers with minimal human input (Bucher et al., 2021; Rosenblat and Stark, 2016). But algorithmic management is prominent not only in the gig economy, a sector that relies on a diffuse network of freelancers who are connected to the company via an app. Increasingly, algorithmic management is also coming to replace the tasks of management in more traditional work settings. At some of Amazon’s warehouses, for instance, data-driven algorithms dictate the nature and pace of work for employees, monitoring, quantifying, and assessing each of the actions performed by workers without the need for human oversight (Beverungen, 2021; Vallas et al., 2022). Similar processes can be observed in HR functions, too. For example, companies are now turning to hiring platforms – such as Recruit, Kronos, and Snagajob – that use predictive analytics to sort, screen, and appraise job candidates according to pre-determined criteria (Kellogg et al., 2020), replacing the need for human decision-making. Such forms of algorithmic management are strikingly similar to the recommendations made by Taylor more than one hundred years ago, leading some scholars to speak of ‘digital Taylorism’ or ‘Taylorism 2.0’ (Altenried, 2020; DeWinter et al., 2014) – a form of management mediated by computer-based processing rather than by human judgement. This suggests that, while middle management is being partly replaced by its non-human counterpart, the logic of efficiency and optimization that underpins them both is essentially the same.

Algorithmic management has been subject to sustained academic scrutiny in recent years, especially in relation to its ethics (or lack thereof) (Benlian et al., 2022). Some of this research has focused on the benefits of algorithmic management for improving organizations, such as enabling a “smarter and more responsive definition of tasks and allocation of resources and effort” (Schildt, 2017: 23) – key areas of focus for any managerial intervention. Other research has highlighted the negative aspects of algorithmic management, exposing job insecurity and poor working conditions among workers subject to AI supervision (Gal et al., 2020), forms of discrimination faced by those who are targeted by computer-mediated modes of evaluation (Köchling and Wehner, 2020), a lack of transparency and accountability in automated decision-making (Kim and Routledge, 2022), and increased levels of digital surveillance in algorithmically managed workplaces (Newlands, 2021). From a critical perspective, what is at issue is the way that new work technologies advance an exploitative agenda that undermines workers and their rights (De Stefano, 2019).

Less researched, however, is what some have called ‘algorithmic leadership’. The few studies that examine this phenomenon tend to approach the topic from a practitioner perspective, one that offers broad-brush recommendations for managers instead of in-depth qualitative or quantitative analysis (e.g. De Cremer, 2020; Walsh, 2019). In these studies, the human leader is seen as someone who contributes something important to the algorithmic age, something that machines lack. Luca et al. (2016: 124) express the problem as follows:

“Algorithms tend to be myopic. They focus on the data at hand – and that data often pertains to short-term outcomes. There can be a tension between short-term success and long-term profits and broader corporate goals. Humans implicitly understand this; algorithms don’t unless you tell them to.”

The authors do not explicitly draw on the language of leadership. But the distinction between short-term (operational) goals and long-term (strategic) goals echoes the management/leadership dichotomy that dates back to Zaleznik. Essentially, Luca and colleagues are saying that an algorithm is able to identify patterns and trends that are imperceptible to human observation, yet it lacks the ability to lead a company to success. For this reason, they argue, organizations need leaders who can use algorithms skilfully in order to “unlock their remarkable potential” (Luca et al., 2016: 125).

Similar sentiments are found in studies that outline the promise of algorithmic leadership (De Cremer, 2020; Harms and Han, 2019; McGuire and De Cremer, 2023; Walsh, 2019). The problem with algorithms, for De Cremer (2020), is that they are unable to inspire workers to unite around a shared corporate mission. An algorithm can optimize processes and predict outcomes, but it cannot articulate a compelling vision, listen to others, admit mistakes, or encourage a diversity of perspectives – key traits for any successful human leader. For this reason, algorithms are ideally suited to management but singularly incapable of leadership. As De Cremer (2020: 61) puts it, “the more algorithms take over management, the more we will need leadership to bring in human judgement to help set priorities”.

The literature tells us that, while machine intelligence can help organizations analyze data, predict trends, and simulate outcomes, leaders are required to exercise their unique cognitive skills such as intuition, curiosity, and critical questioning. At the same time, a leader can learn from algorithms and start to “think like a computer” (Walsh, 2019: 70) – for example, by breaking down problems, recognizing patterns, and establishing rules for decision making. On this view, algorithmic leadership is a fusion of human and computer, a synthesis that is said to result in organizational success and ethical outcomes.

This type of collaborative partnership between human and computer is articulated most clearly by Harms and Han (2019: 75):

“[H]umans need not consider algorithms to be rivals so much as a new type of partner, both for leaders and followers. [Algorithms] can enhance performance and well-being and they, in turn, will need humans to provide them with information and feedback in order to be effective at executing their core functions and improve over time.”

Authors such as these are reflecting on a world of work in which algorithms play an increasingly prominent role in the execution of organizational activities. They claim that, while algorithms may well come to replace the traditional tasks of salaried managers – such as assigning tasks and assessing outcomes – there is still a place for human leadership, one that is based on transformational, visionary, and ethical foundations. What we need, in other words, are algorithmic leaders who recognize that artificial intelligence needs to be supplemented by “authentic intelligence” (De Cremer, 2020: 63). In effect, this literature seeks to bridge the gap between leadership studies and management science. In line with the management science tradition, such authors highlight the role of algorithms as the key to business success in the digital age. Yet they also remain faithful to traditional leadership thinking by emphasizing the irreplaceable role of leaders in working with machines.

For theorists of algorithmic leadership, humans are required to ensure that business can remain – or become – ethical in a world that is increasingly data-driven. Indeed, problems start to occur when algorithms are left unsupervised, operating without any human oversight. For example, Walsh (2019) reflects on the case of a 2017 United Airlines flight that had been overbooked. An algorithm resolved the booking problem by selecting a 69-year-old man named David Dao as one of four individuals who would have to give up their seats. Dao refused to leave and was violently removed from the plane by security personnel, an event that was captured on video. The footage soon went viral, causing public outrage and negative media attention. The scandal damaged the reputation of United Airlines and cost CEO Oscar Munoz his expected promotion to company chairman. Walsh’s (2019: 4) point is that there is no room for human judgement in “a company driven by rules” and “governed by data and algorithms”. Of course, the algorithm is not responsible for the level of violence experienced by Dao – that is something for which the security personnel ought to be held responsible. But Walsh’s point is this: we need human leadership to steer the process of algorithmic decision-making, otherwise the risk of negative moral consequences (which may include, in the worst case, acts of violence) only increases. Such a view is shared by other theorists of algorithmic leadership: leaders are required because, without them, organizations would lose the capacity for moral reflection and so raise the spectre of ethical misconduct.

On the surface, the literature on algorithmic leadership tells a plausible story: the rigid rules we find in algorithmic decision-making pose a danger because they cannot account for exceptions, outliers, dilemmas, and other sticky situations – the kind of circumstances where finely tuned and ethically grounded human judgement is called for. Nor do algorithms allow for the kind of human creativity associated with vision and insight. However, in the following section, we will cast doubt on this all-too-smooth narrative. Drawing on the history of chess and the influence of game theory on business thinking, we will show that the equal division of labour between human and machine is in fact a mirage, and has been since the second half of the 20th century.

The game of business

There was a short period in the history of chess during which the combined power of a human and a computer was stronger than either a human or a computer alone. So-called ‘man + machine’ matches – also known as ‘advanced chess’, ‘cyborg chess’, or ‘centaur chess’ – had their heyday in the late 1990s and early 2000s. In this format, the human player was permitted to use a computer engine to help them decide which moves to make. The human player remained in control of the overall game strategy and used the engine primarily for ‘blunder-checking’, which ensured that a candidate move did not fail because of an overlooked tactical possibility.

Recent technological developments have put an end to this era of human-computer collaboration. The Google-owned chess engine AlphaZero is not only much stronger than the current human world champion (this is true for almost all of today’s chess engines); it also relies on ‘zero’ human chess knowledge – hence its name (for a critical discussion, see Larson, 2021: 161-162). AlphaZero is based on an algorithm that enabled it to learn and master chess by playing millions of games in a continuous learning process over a short period of time. This innovation caused a sensation in the chess world because it pushed strategic thinking down paths rarely trodden by human players (Sadler and Regan, 2019).

Chess is a context in which machine calculation has now surpassed human intelligence. As a result of this development, the human-machine partnership is redundant when it comes to playing chess at the highest level. Today, even the greatest human grandmaster of all time plays at a level below that of a computer. In short, there is no longer any need for human involvement – or, as De Cremer might say, ‘authentic intelligence’ – due to the overwhelming analytical strength of machine learning.

In Wolfgang von Kempelen’s time and the century that followed, the prospect of a chess machine that could beat a human player was often envisioned as the ultimate proof of the machine’s superior intelligence and wisdom in comparison with that of humans (Standage, 2002). That is why the Mechanical Turk enthralled audiences across the world. But we know today that this belief was unjustified. The ability of a computer to outperform human players in a game of chess – or indeed in any game – does not necessarily translate to performance in other contexts. After all, game environments are separated from real life and delineated by a set of rules that allows for the calculation of optimal strategies (which may or may not evolve during gameplay). On this view, game environments – especially those that involve closed systems and complete information like chess – are the perfect terrain for algorithmic learning, yet they are not immediately assimilable to the human experience of life more broadly. Chess showcases the processing power of computers because, like many games, it limits action within a rule-governed framework. By contrast, humans possess the unique ability to leave the game or reevaluate its importance in a wider social or environmental context, which may radically change their behaviour as a result. Consider the following example. A human is playing a computer at a game of chess in a building which catches fire during the match. The human will immediately leave the building, yet the computer will continue to contemplate its next move, going up in smoke as the flames engulf the room. Finding an emergency exit is obviously the ‘smarter’ move in this situation, even if it means abandoning the game at hand.

When authors such as De Cremer and Walsh write about the importance and prospects of algorithmic leadership, they have in mind similar situations, situations in which the computations of machines must be overruled by leaders. In other words, leaders recognize something that machines simply cannot: that other things must sometimes take precedence over normal business activities, such as prioritizing the safety of a customer instead of resolving a booking problem on a plane (as in the case of United Airlines). The literature on algorithmic leadership thus portrays corporate leaders as standing on a threshold of a new era in which algorithms become the motor of business success, but it is a threshold fraught with risk. Leaders must ensure that they cultivate their inimitable human capabilities in order to prevent the machines from taking over completely, an outcome that would have potentially disastrous consequences in terms of an organization’s ethical commitments to its employees, customers, and stakeholders.

We agree with proponents of algorithmic leadership that human qualities such as ethical judgment, empathy, and contextual awareness remain beyond the reach of intelligent machines. However, we challenge the assumption that corporate leaders operate outside of game-like environments. Unlike a chess player who can choose to leave the game, corporate leaders are often deeply embedded within the logic of business-as-game.

As we have seen, management science invites us to think of business algorithmically, with Taylorism as the paradigm case of this approach. Yet it was only with the development of game theory, decades after the birth of scientific management, that algorithmic decision-making was reframed by thinkers who explicitly linked business decisions to the type of strategies used in games with clearly defined rules (Erickson, 2015). Within this context, Von Neumann and Morgenstern’s game theory, set out in Theory of Games and Economic Behavior (1944), played an important role, especially once their mathematical work was taken up by scholars in the social and behavioral sciences, including business and management.

The link between mathematical game theory and actual games is not entirely superficial. Von Neumann was familiar with the chess scene in Central Europe and was an acquaintance of, among others, former chess world-champion Emanuel Lasker. Indeed, Von Neumann developed his game theory in the context of an intellectual debate about the mathematical nature of strategy games in general and of chess in particular. His first major academic paper on games, Leonard (2010: 72) notes, was part of “a rich contemporaneous discussion of the mathematics of chess and parlour games”. Von Neumann was not the first to tread this path. In fact, his earliest steps in the formulation of game theory were anticipated in a 1913 paper by Ernst Zermelo, which applied set theory to the game of chess (Erickson, 2015).

In reality, the world of business is fundamentally different from games like chess. In business, there are few boundaries, many conflicting objectives, no clear rules, and ever-changing circumstances. But management science has sought to re-make business as a game, proposing strategies that can guide organizational decision-making and optimize outcomes. In this light, management science is not simply a neutral method for analyzing data, predicting outcomes, and improving work processes. It is an attempt to change our orientation towards organizations and society in radical ways, according to its own inexorable economic logic (Solomon, 1999). In a fundamental way, management science encourages us to view the entire social body – and the market relations that constitute it – as a kind of game.

This framing has deeply influenced economic policy and corporate thinking. Consider, for example, Milton Friedman’s (1970) infamous New York Times Magazine article, in which he asserted that the only social responsibility of business is “to increase its profits so long as it stays within the rules of the game”. For Friedman, “the rules of the game” are the only constraints on business, effectively excluding moral considerations from the executive’s role. From this perspective, even ethically charged issues are reduced to optimizing strategies toward the fixed end of profit maximization, just as a chess player seeks only to checkmate an opponent. The enduring presence of game metaphors – ‘beating the competition’, ‘playing hardball’, the imagery of chess pieces on the front covers of strategy textbooks – not only reflects this mindset but reinforces it: business is not merely like a game, but is meant to be treated as one.

Despite the idealized narratives found in heroic leadership discourse, corporate leaders often follow this same game-like logic of optimization. To illustrate this point, let us return to the example of United Airlines. We might ask what a human leader would have been able to do differently if the airline booking system was not run solely by an algorithm. For instance, a human leader would probably have been able to find a non-coercive solution to the problem by determining that violently removing a passenger from the aircraft is not the best way forward in this situation. Even so, we might ask whether a leader would have exercised their uniquely human qualities, or whether they would simply have reached a ‘new and improved’ version of a decision that an algorithm would, in theory, also be capable of. Consider an algorithm that has access to more data points about the possible reputational damage to the company, David Dao’s past behaviour, Oscar Munoz’s promotion opportunities, and so on. In such a scenario, the algorithm would likely optimize the final decision in just the same way as a human leader – perhaps even more so. This suggests that leaders already try to act like intelligent machines, such as chess engines, in much of the decision making that we often consider to be quintessentially ‘human’.

When authors on algorithmic leadership claim that human leaders are more important than ever, and that they can ‘augment’ algorithmic optimization with their human qualities, they overlook the extent to which leadership is already a fusion of human insight and algorithmic thinking. In fact, they assume that human leadership is a pure counterbalance to inhuman algorithms. In reality, much of what we recognize as corporate leadership already is algorithmic in nature, even in its pre- or non-digital forms.

One critical shift that is happening today, therefore, is that machines are simply becoming better at playing the game that corporate leaders have been playing for decades. Yet this is only part of the issue. As we explore in the final section, not only is leadership in practice more machinic than we typically portray it to be, but machines have recently become more human-like. This makes it increasingly tempting to outsource even the most ‘human’ capacities to systems that, however convincingly, can only ever simulate them. Ultimately, we suggest that this illusion risks obscuring the algorithmic apparatus behind the human face of the leader, turning leadership into a performance that conceals rather than counters the logic of machine-driven calculation.

Leadership in the age of intelligent machines

In the preceding analysis, we used the history of computer chess to show how apparently unique human faculties of thought can in fact be supplanted by algorithms. Chess engines are capable of making the kind of ‘genius’ moves that a human player could only ever reach through deep insight or gut feeling because rational human thought simply cannot calculate the required number of options at the necessary speed. Similarly, we can imagine that machines could make ‘genius’ strategic business decisions, such as entering a new market or creating a new product, that are typically said to require human vision. As we have seen, machines are best able to demonstrate a capacity for making optimal decisions in game environments, so it is no surprise that games like chess are dominated by engines such as AlphaZero. To the extent that business is (or is treated as) a game, we can expect machines to demonstrate a similar dominance in the workplace of the future (Van Quaquebeke and Gerpott, 2023).

But it is not merely that machines are replacing those aspects of leadership that are calculative or algorithmic in nature. With the rise of large language models (LLMs), machines are increasingly capable of simulating the more elusive, affective, and interpretive dimensions of leadership – the very things that machines do not actually possess. Through exposure to large amounts of human-generated text, LLMs (in the form of generative AI) can convincingly mimic empathy, intuition, even moral reasoning (Constantinescu et al., 2022; Morton, 2025). This simulation does not reflect genuine understanding or judgment, but it can nonetheless produce outputs that appear strikingly human. This is why some critics characterize generative AI platforms like ChatGPT as algorithmically sophisticated ‘bullshit machines’ (Hicks et al., 2024), technologies that generate statistically probable lines of text rather than true or meaningful statements.

Take the example of ethical judgment. There are many different interpretations of what constitutes an ‘ethical judgment’ (Mudrack and Mason, 2013). Yet most commentators agree that the process of ethical decision-making goes beyond a rules-based, universally applicable calculation and must also incorporate a non-mechanical element based on careful consideration of the unique situation and context (Jones et al., 2006), an insight that applies equally to the ethics of leadership (Ciulla, 1995; Voegtlin, 2016). From this perspective, it is clear that intelligent machines cannot make ethical judgments (Vallor, 2024; Weizenbaum, 1976). Judging requires a certain freedom or openness, a moment of reflection in the face of an ethical dilemma that cannot be solved by blindly applying rules. Even when an ‘ethical code’ is in place, such as when algorithms are programmed to recognize and eliminate gender bias or racial discrimination (Ferrer et al., 2021), there is a need to reflect on the ethics of the ethical code in question, which cannot be outsourced to other bits of ‘code’ without creating an endless computational regress. Human experience, human cognition, and human judgment are always required to close the ethical loop (Lindebaum and Fleming, 2024; McQuillan, 2022).

Despite the fact that machines are incapable of genuine ethical judgment, they are increasingly adept at imitating it. Pose an ethical dilemma to ChatGPT (or to Grok, Elon Musk’s ‘anti-woke’ LLM, for a contrasting value base), and you will receive a coherent, seemingly thoughtful moral response within seconds. This is not because these systems understand the situation or possess the capacity for critical evaluation. On the contrary, it is because they simulate ethical reasoning by drawing on vast datasets of human discourse, identifying patterns in how people have responded to similar dilemmas in the past. What generative AI produces is not ethical judgment in any meaningful sense, but a plausible facsimile.

The trend is not only that machines are starting to dominate in areas where they are capable; they are also making inroads into domains where they are, by their very nature, incapable. Recall the film Her (2013), in which a man falls in love with an AI-driven operating system. That was pure science fiction at the time but now feels eerily prescient. Machines are clearly incapable of love and care in any genuine sense, yet this has not stopped millions of people from using platforms like Replika (branded as “the AI companion who cares”) to form ongoing relationships with an LLM-based bot, some romantic or sexual in nature. These AI companions are marketed as ‘empathetic’, ‘attentive’, and ‘always available’, but only the last of these is true.

We are not yet seeing the same thing in leadership. There are plenty of digital leadership tools on the market, such as ‘employee experience platforms’ offered by companies like CultureAmp and Lattice, but these typically do not offer a full replacement of the human leader in the same way that Replika offers ‘AI companions’ to users. Instead, such tools take the form of digital dashboards that track employee analytics, engagement scores, and performance metrics – sometimes integrated with generative AI functionality, sometimes not. We can expect these tools to advance rapidly. In this scenario, AI bots will become increasingly capable of imitating those qualities we traditionally associate with leadership: communication, decisiveness, empathy, even charisma.

Just as a Replika companion doesn’t ‘care’ about the user, an AI leader will never truly offer insight, ethical judgment, empathy, or authenticity, nor will it ever acquire the ability to interpret, pause, doubt, or reflect (Butler and Spoelstra, 2025). Digital objects are not capable of ‘true’ leadership as we usually understand it. And yet, they can still convince us they are. As AI bots become proficient at mimicking human values, contextual awareness, and even emotional expression, they also begin to encroach on the very qualities that have traditionally distinguished leadership from management. What was once considered the exclusive domain of human judgment is now increasingly being simulated by intelligent machines.

In 2023, the Polish beverage company Dictador announced it had replaced its human CEO with an AI-driven robot called Mika (Mann, 2023). In an interview with Reuters (2023), the bot said it works around the clock, always “ready to make executive decisions” in the interests of the company. Almost certainly a publicity stunt, Mika nonetheless signals the direction we are moving in, a direction in which we could easily mistake a non-human digital avatar for a living, breathing human leader. Some people already feel as if they are talking to a real person when they interact with chatbots like ChatGPT – asking for advice or seeking connection and, for a moment, forgetting they are communicating with a computerized system (Spencer-Elliott, 2025; Demopoulos, 2025). Similarly, as generative AI becomes better at playing Turing’s imitation game (Turing, 1950), it may become increasingly difficult to tell the human leader apart from the intelligent machine. Beyond Dictador, other companies are capitalizing on this blurring between the human and the non-human by outsourcing core leadership functions to AI tools, as evinced by platforms that build AI assistants to “automate executive-level work” (Neuronify) or “think alongside your teams” (Sana). In the future, we can expect the boundary between human judgment and machinic influence to further dissolve, even as employees, customers, and other stakeholders continue to valorize the image of the human leader (Lochner et al., 2025).

But what cannot be outsourced is the human need to account for oneself. As philosopher Korsgaard (2009: xi) reminds us, humans differ from animals in our “capacity to control and direct our beliefs and actions”. This capacity brings with it an inescapable demand: to ask what motivates our actions and, more broadly, to take a stance on what ought to be done. AI can assist in deliberating such questions, but the danger lies in allowing machinic recommendations to substitute for human self-constitution. This is where the idea of algorithmic leadership as a partnership of complementary strengths begins to unravel. When we uncritically adopt the values embedded in LLMs – values shaped by data, design choices, and commercial incentives – we begin to hollow out our subjectivity (Fischer, 2022). Instead of enhancing human leadership, the machine starts to replace it, without ever truly possessing it.

Such reflections suggest that the prospects for ‘algorithmic leadership’ are more problematic than commentators typically assume. There is indeed a new partnership between human leadership and algorithmic decision-making, but it is far from an equal split between two domains of responsibility. In the extreme case, the role of the human leader is reduced to maintaining the semblance that a human is indeed exercising oversight. In such cases, human leadership resembles the trick pulled by Wolfgang von Kempelen in the 1770s – except in reverse: not a machine that is secretly operated by a human player, but rather a machine that is hidden behind an ‘authentic’ (or ‘visionary’, or ‘charismatic’, or ‘responsible’) leader. In the same spirit as Edgar Allan Poe’s family doctor, we invite scholars in leadership studies (and beyond) to reveal the elaborate illusion by unveiling the machine beneath the human face whenever and wherever possible.

Conclusion

Leadership scholars tend to think of themselves as dealing with organizational problems that are distinct from the kind of problems that management science typically tries to solve. There are good reasons for this belief: management science is principally concerned with optimizing the system from the inside, whereas leadership studies is traditionally occupied with radically transforming the system from the outside. This outside force is what is typically referred to as ‘leadership’ – specifically, the heroic or transformational leader, someone who can enact change within the organization due to their extra-ordinary abilities. In an age in which intelligent machines have infiltrated the workplace, making us feel overwhelmed and disenchanted, it is only natural for leadership scholars to search for human leadership qualities that might offer a way out of this predicament.

What is easily missed, however, is that there is already an algorithmic component within leadership discourse and practice. Management science has long invited us to see the activities of human leaders in terms of an optimization game. If this approach is accepted, the once ‘extra-ordinary’ leader is no longer an external force but is reduced to one of the many functions within a system that must be optimized. As a ‘visionary strategist’, for example, the leader is instrumental to finding the optimal path to some defined goal. Viewed in this way, there is little space for human originality or decision-making. In fact, such visionary strategists simply perform a task for which algorithms are better suited (such as finding gaps in the market or seeing future possibilities) and so may find themselves supplanted. Similarly, as the ‘authentic’ face of an inhuman machine, the leader performs a representational function, one that is firmly embedded within an algorithmic logic of optimization – what we have referred to as a reversal of the illusion of the Mechanical Turk.

The complex interplay between human leadership and algorithmic processes, as discussed in this paper, has implications for the field of leadership. So far, discussions of leadership have focused on the possible and actual roles of leaders in corporate decision-making, emphasizing their vision, intuition, and judgment. But we are now moving into an era in which the leader-algorithm relationship itself becomes a primary subject for researchers. In this paper, we have suggested that this relationship cannot be understood as a binary one between human and machine. Matters are more complex. To wit: the ‘leadership’ side is already a human-machine assemblage, and the ‘algorithm’ side is increasingly emulating human modes of communication and behaviour (Esposito, 2022). For leadership studies, this complexity calls for empirical studies of the reciprocal influence between leaders and algorithms. For example, interpretivist researchers could further examine how digital leadership platforms mediate the relations between leaders and their teams, how senior managers interact with generative AI in their daily work, or how employees relate to anthropomorphized chatbots in leadership positions – a strand of research that is still in its infancy in the broader field of management and organization studies (see e.g. Einola et al., 2023; Scott and Orlikowski, 2025; Stollberger et al., 2025). It is likely that such studies will offer insight into the dilemmas and pressures created by an increasing reliance on algorithms in the workplace. Contrary to what the literature on algorithmic leadership suggests, leadership in this new reality does not offer an easy or clear way out. It is, rather, a concept that will continue to mutate – in surprising, unpredictable ways – alongside developments in those socio-technical systems we call ‘artificial intelligence’.

Footnotes

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Swedish Research Council (Vetenskapsrådet), project no. 2023-00986.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.