Abstract

While many are concerned about artificial intelligence (AI) enabled automation and the threat of mass technological unemployment, this paper contributes to a stream of organizational research that suggests semi-automation is a more probable scenario. A growing subtheme in this stream emphasizes ‘conjoined agency’, where employees collaborate with smart machines rather than being subordinated to them. In this paper, we argue that conjoined agency has a hitherto unexamined dark side. Building on an in-depth qualitative study of passenger train drivers and their managers in the United Kingdom, we demonstrate how drivers require taxing levels of stamina to successfully work with algorithms and semi-automated train systems. This is exacerbated by what we call an ideology of automation, notably threats from management that driverless trains are imminent. Drivers feel compelled to compete with these prospective robots. We term this human robotic mimesis, where workers attempt (but often fail) to perform like robots. By exploring this dark side of conjoined agency, we propose that it is not only the practical application of new automating technologies that is shaping organizational life but also their ideological evocation within specific power relationships.

Introduction

As advanced computational and cybernetic technologies reshape how we work, human agency and technology collide in ever more complex ways. An increasingly popular way of framing this nexus is Murray, Rhymer and Sirmon’s (2021) notion of conjoined agency. A good example is train drivers. These skilled workers express their expertise within a highly technical, semi-automated environment characterized by algorithms and onboard digital systems. Building on the concept of conjoined agency, we suggest that it has a hitherto unexplored dark side. Namely, that when working with smart machines, human beings themselves can take on machine-like qualities to perform the task successfully. This roboticization of human labour is exacerbated, we argue, in settings where managerial threats of future full automation are salient, something we term the ideology of automation. To illustrate our focus more succinctly, let’s begin with an empirical observation.

Outside London’s St Pancras International railway station – in areas only staff can see – bottles of urine litter the tracks. They have been covertly discarded there by drivers. What motivates them to do this? An obvious answer to this question – which was derived from an empirical study of passenger train drivers in the United Kingdom – is the lack of toilets on trains. This is often true. Additionally, perhaps the gruelling schedules imposed by management are to blame. This is also frequently the case, as coercive timetabling managed by digital algorithms reduces the time available for personal needs. But officially at least, comfort breaks are guaranteed in the enterprise agreement. Drivers ignore this concession, but not out of laziness; indeed, interviewees unanimously found urinating in bottles undignified. The conundrum thus deepens. In the following study, we demonstrate an association between this practice and occupational threats and anxieties around technological unemployment. Indeed, our results uncover a previously unexamined facet of conjoined agency with significant implications for workers affected by artificial intelligence (AI), unassisted robotics and machine learning in contemporary organizations.

Public debates about the future of work have been marked by predictions of enormous joblessness in the wake of AI, deep machine learning and unassisted robotic process automation (Frey, 2019). For example, some studies project that 47 per cent of current jobs in the United States might soon disappear (Frey & Osborne, 2017, 2018) and that 800 million jobs worldwide could be lost by 2030 (McKinsey Global Institute, 2017). However, a contrary argument is now emerging which suggests that despite years of computerization, the institution of paid employment shows little sign of disappearing (Benanav, 2022). Despite the promises of an AI revolution, jobs are more likely evolving than vanishing (Autor, Mindell & Reynolds, 2022). According to Fleming (2019), for example, new labour-saving technologies typically automate only parts of a job rather than replacing it in toto, resulting in semi-automated work systems.

While critics suggest that autonomy and skill can be seriously eroded by semi-automation (e.g. Altenried, 2022), a more optimistic stance is gaining ground in organization studies. It emphasizes the growing number of occupations that are enhanced and ‘augmented’ by high-tech innovations (Glaser, Pollock & D’Adderio, 2021; Kellogg, Valentine & Christin, 2020; Raisch & Krakowski, 2021). Here employees work with rather than against or in subservience to smart machines, creating more enriched and fulfilling jobs. A good example is commercial piloting and fly-by-wire technology. In an influential contribution, Murray et al. (2021) recently called this conjoined agency. Once routinized tasks are mechanized, humans are able to focus on higher-order competencies that require judgement and cognitive perspicacity – typically with the aid of sophisticated computational algorithms that assist rather than hinder them.

At first glance, passenger train drivers present a germane example of conjoined agency. Despite the extensive adoption of semi-automating technologies over the last twenty years, these jobs remain highly skilled and well remunerated. While on the surface drivers may seem in control, enjoying agentic unity with an interactive digital infrastructure, behind the scenes the situation is somewhat different. As our in-depth qualitative study will demonstrate, long shifts in the cabin interacting with computerized onboard systems are physically and mentally demanding. The situation is compounded by the aforementioned ideology of automation that pervades the occupation. By this we mean managerial threats of future technological unemployment – whether realistic or not – that inform present power relations between workers and management. This is different to related notions such as ‘technological imaginaries’ (Kazansky & Milan, 2021) in civil society or ‘technological pre-framing’ (Rezazade Mehrizi, 2023) in organizations where employees speculate about as-yet undeployed machine intelligence. Instead, the ‘ideology of automation’ is anchored in social domination at the point of production, fuelled by the divergent (often antagonistically so) interests of management and labour.

While the concept of conjoined agency is useful for conceptualizing how human labour intersects with semi-automating digitality in contemporary organizations, the influence of this ideology of automation unveils a dark side, which may help us explain why drivers secretly urinate in bottles – despite opportunities to avoid doing so. Building on our empirics, the argument is as follows: to signal (to management) their ability to perform as well as any robot, drivers tightly regulate their bodily functions, involving impressive feats of endurance. In short, they begin to behave like the very smart machines they fear might one day replace them, a phenomenon we term human robotic mimesis. But unlike machines, human beings have biological limits. So, when nature inevitably calls (i.e. with the need to urinate), drivers decide against taking a rest break because that would disrupt the ‘symbolic performance’ (also see Pachidi, Berends, Faraj & Huysman, 2021) and concede to management the superiority of driverless trains. Hence the necessity, for many drivers, of including an empty bottle in their work kit.

To substantiate this argument, our paper is structured as follows. After briefly discussing predictions of mass unemployment in the wake of astonishing technological breakthroughs, we focus on the concept of semi-automation, particularly those optimistic evaluations of conjoined agency and ‘augmentation’ where employees harmoniously collaborate with digitalized architectures. What we call the ideology of automation adds a disconcerting quality. To demonstrate this, our empirical section focuses on why passenger train drivers covertly urinate in bottles. When positing an explanation, the discussion examines the wider implications these findings have for organizational research and outlines possible avenues for future scholarship. We conclude by expounding the relevance of our study.

Automating Work in the Age of AI

Work and the ‘second machine age’

Startling media reports about smart machines performing complex human tasks – including unassisted laparoscopic surgery, pilotless military aerials and generative AI (e.g. ChatGPT) – might give the impression that the rise of job-stealing robots is something new. However, what was once called ‘mechanization’ has long characterized industrial capitalism (Aronowitz & DiFazio, 1994; Noble, 1986). According to many analysts of AI, neural nets and deep machine learning, this time might be different though. If first-wave computerization rendered routine-mental work vulnerable to automation, then the so-called ‘fourth industrial revolution’ (Neufeind, O’Reilly & Ranft, 2018; Schwab, 2016) will focus on replacing non-routine-manual and non-routine-mental jobs, including skilled professions once deemed safe from state-of-the-art robotics (Frey & Osborne, 2017, 2018; Susskind, 2020).

Already, numerous jobs have been (or soon will be) replaced by intelligent machines in various industries. For example, deep-sea maintenance divers on oil rigs have been displaced by robotic marine submersibles for safety reasons (Shukla & Karki, 2016). Document checking in the legal profession is increasingly conducted by learning algorithms due to their superior accuracy and speed (Lankester, 2018). And driverless or ‘unattended train operation systems’ now service cities such as London, San Francisco and Copenhagen (Kaka, Sonawane, Jani & Patel, 2018). According to Brynjolfsson and McAfee’s (2014) study of the ‘second machine age’, these escalating innovations may soon destroy many occupations as Moore’s Law accelerates AI’s capacity to simulate human cognitive and manual behaviour at ever cheaper rates. Considering these developments, ‘the rapidly emerging picture is that of an economy where far fewer people work because work is unnecessary’ (Russell, 2019, p. 120).

Yet, despite these predictions of joblessness as new technology enters the workplace, paid employment has not disappeared. Autor (2015a) poignantly asks why there are still so many jobs today, especially in countries that have embraced advanced industrial robotics and machine learning (e.g. Japan, Korea, the US and numerous parts of Europe). He concludes that ‘journalists and even expert commentators overstate the extent of machine substitution for human labor’ (Autor, 2015a, p. 5; also see Autor, 2015b). If it is true that human labour is still required or deemed preferable, notwithstanding the widespread utilization of deep structured learning robotics, then we must examine how that work changes when human and machine collide.

Conjoined agency, algorithmic management and symbolic mediation

Relevant to this discussion is an emergent stream of management scholarship that documents how many occupations are now collaborating and functioning in unison with deep machine learning and AI-equipped robotics. Unlike technological pessimists, this research suggests that deskilling and underpaid drudgery are not an inevitable corollary of semi-automation and the fourth industrial revolution. This is especially so when human judgement is still required to interpret the data produced by algorithms, for instance, or to assess the reliability and/or quality of outputs created by smart machines (also see Pakarinen & Huising, 2025; Waardenburg, Huysman & Sergeeva, 2022). This creates a significant skill premium. The diffusion of AI in medicine, to take an often-discussed example, has produced new competencies for clinicians using medical image diagnostic equipment in radiology, pathology, gastroenterology and ophthalmology (Rajpurkar, Chen, Banerjee & Topol, 2022). In banking and finance, neural networks assist analysts with market forecasting, including data sorting and quantitative categorizing. These computers augment and amplify human capabilities (see Cao, 2021). And as mentioned earlier, flight data monitoring software and AI-enabled autopilots did not displace commercial airline pilots but changed the way they performed their work (Soo, Mavin & Kikkawa, 2021).

Extending this line of research, Raisch and Krakowski (2021) reviewed three books that advocate AI-enabled augmentation over full automation in the workplace. Augmentation is favoured because technological integration becomes ‘a coevolutionary process during which humans learn from machines and machines learn from humans’ (Raisch & Krakowski, 2021, p. 195). Having said that, automation and augmentation cannot be neatly separated. For Raisch and Krakowski (2021) this presents a paradox. Emphasis on either side of the dualism might create problematic social consequences. They conclude that a more ethically aware managerial framework is required to blend contemporary jobs with sophisticated labour-saving devices: ‘if organizations adopt a broader perspective comprising both automation and augmentation, they could deal with the tension and achieve complementarities that benefit business and society’ (Raisch & Krakowski, 2021, p. 192).

To conceptualize this symbiosis between technology and human labour, Murray et al. (2021) coined the term conjoined agency. They define it as: . . . a shared capacity between humans and nonhumans to exercise intentionality. Humans have long worked alongside technologies—tools and artefacts—to practice organizational routines. Yet, agentic technologies—those that possess the capacity to intentionally constrain, complement, and substitute for humans in a routine’s practice—shift the locus of agency away from humans in protocol development and action selection. This results in new and distinct forms of conjoined agency. (Murray et al., 2021, p. 555)

They analyse conjoined agency in relation to organizational routines, which consist of repetitive behaviour based on protocol development and action selection. Routines and advanced technology may interact in four ways. The first radically reduces human agency: conjoined agency that mostly automates a dominant routine. However, a job may entail numerous routines, so this isn’t necessarily a recipe for human redundancy. The other three types of conjoined agency Murray et al. (2021) discussed actually empower human actors. Assisting technologies enable workers to extend an already given skill (e.g. cardiac surgery technology); arresting technologies help operators reduce error in their jobs (e.g. commercial aircraft cockpit warning systems that prevent stalling); and augmenting technologies, as Raisch and Krakowski (2021) also intimate, allow human operators to build new abilities (e.g. design software in the gaming industry).

While the concept of conjoined agency emphasizes the shared agentic features of semi-automated occupations, downplayed in this literature is the way new digital data surveillance systems – including advanced algorithms – can significantly influence the human agency required to successfully complete a task. Indeed, management by algorithm has received increased attention in the literature (Glaser, Sloan & Gehman, 2024; Kellogg et al., 2020; Walker, Fleming & Berti, 2021). Depending on the discretion required in a role, these surveillance systems can noticeably curtail the agency expressed in semi-automated jobs. By the same token, research examining the symbolic mediation of conjoined agency reveals a more complex picture whereby, for example, the added value of algorithmic technologies can be feigned to fulfil the social expectations of stakeholders. In other words, a degree of expressive symbolism and impression management can be involved. Pachidi et al.’s (2021) study of a sales team demonstrates this dynamic. Due to various agendas, workers gave the outward impression that positive sales figures were attributable to newly introduced data analytic models. But in fact, workers’ personal engagement with sales leads was the real cause. What Pachidi et al. (2021) term ‘symbolic conformity’ quietly underpinned algorithmic control in this organization. Such symbolic conformity indicates how a hidden (and very human) backstage realm can mediate this frontstage labour/technological interface and, in this case, proffer a misleading impression of digital supremacy. In some ways, this finding supports the concept of conjoined agency (also see Bucher, Schou & Waldkirch, 2021). Under certain circumstances workers may simply allow these digital systems to ‘appear’ useful and effective, while evoking undisclosed backstage labour – involving choices, decisions and compromises – to shore up this veneer (also see Berente, Gal & Yoo, 2010).

In summary, to help explain our introductory empirical observation (i.e. why drivers secretly urinate in bottles), we have identified three important theoretical touchstones regarding how advanced technologies impact on contemporary organizations. First is semi-automation and a resultant conjoined agency. Second is the role of management by algorithms that may circumscribe or at least regulate the human agency involved. And third is the importance of symbolic mediation, the frontstage impression management that persists in even the most technologically-laden settings. However, a fourth and final element is still missing, which we suggest is relevant for fully appreciating the dark side of this trend. How do these three dynamics – conjoined agency, management by algorithm and symbolic impression management – play out in contexts where the fear and threat of future full automation are salient? When addressing this question in the following study, we found that the labouring body itself becomes a contested terrain as workers compete with those envisaged smart machines and push their organism to its natural limits.

Setting, Methods and Theory-Building Process

Research setting

British passenger train drivers operate in a highly complex institutional environment managed by public and private organizations. The overall rail network is administered by the Department for Transport in conjunction with Network Rail, a public body that oversees the infrastructure and signalling system. Following the privatization of the UK rail network (1994–1998), drivers were employed by for-profit franchises. Privatization was marked by protracted conflict and antagonism (Jupe & Funnell, 2015). And even today, the relationship between the drivers’ union – the Associated Society of Locomotive Engineers and Firemen (ASLEF) – and management is hostile, with strikes and go-slows occurring frequently due to disputes over remuneration and conditions. This industrial unrest is often commented on by politicians and the news media. For example, during a London Underground strike, government officials announced that a driverless train network is being seriously considered (Macaulay, 2022).

The wider UK rail network would be difficult to fully automate compared to the underground metro. From the 1980s onwards, lack of investment by successive foreign multinational owners has rendered it ‘Victorian’. Nevertheless, the desire to automate is prominently broached by think tanks, the media and corporate managers when ASLEF announces strike action (de Quetteville, 2022). These are not empty threats. Parts of the UK rail network have already been automated using what is known as automatic train operation (ATO). And more generally, digital technology plays an increasingly prevalent role in the occupation. Inside the cabin, drivers are subjected to onboard semi-automated systems that regulate their bodily presence and attention throughout the shift. This includes driver safety devices (DSDs), a feature of the job that was regularly mentioned by interviewees. Train drivers are also algorithmically managed by ‘downloads’. Downloads produce precise data points that track the efficiency of a shift, including speed, braking, stops, rest breaks and so on.

This technological system creates a kind of digital sensorium that highly structures physical and mental activity in the train cabin (see Figure 1).

Figure 1. Inside the modern cabin.

The time drivers spend in the cabin is determined by an algorithm that coordinates specific shift patterns for any given day. A shift can extend up to ten hours, with a typical working week of 35–37 hours in total (and no more than 44 hours during a 7-day period). Train drivers often work unsociable hours, including weekends, at dawn and sometimes until midnight or later. A 20-minute break during a 6-hour shift is recommended by ASLEF and should preferably take place by the third or fifth hour at the latest. Shifts longer than 6 hours must include an additional 20-minute break between the fifth and eighth hours. As we will demonstrate, despite regulations intended to accommodate biological needs, train drivers still push their bodies to extreme limits to satisfy their shift timetables (otherwise known as ‘diagrams’).
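To make the arithmetic of these rules concrete, the following is a minimal sketch in Python. It is purely illustrative and does not represent the scheduling software used by any franchise. The function and constant names are hypothetical, and we assume (the rules above do not say) that shifts shorter than six hours also attract a single 20-minute break.

```python
# Illustrative sketch only: names are hypothetical, not taken from any rostering system.
MAX_SHIFT_HOURS = 10    # a shift can extend up to ten hours
MAX_WEEKLY_HOURS = 44   # ceiling over any 7-day period
BREAK_MINUTES = 20      # length of each rest break


def break_minutes_due(shift_hours: float) -> int:
    """Total rest-break minutes a shift of the given length should contain."""
    if not 0 < shift_hours <= MAX_SHIFT_HOURS:
        raise ValueError("shift length must be between 0 and 10 hours")
    breaks = 1  # first break (assumed to apply to shorter shifts as well)
    if shift_hours > 6:
        breaks += 1  # additional break between the fifth and eighth hours
    return breaks * BREAK_MINUTES


def week_within_limit(shift_lengths: list[float]) -> bool:
    """Check a 7-day run of shifts against the 44-hour ceiling."""
    return sum(shift_lengths) <= MAX_WEEKLY_HOURS


print(break_minutes_due(6))    # six-hour shift: one break
print(break_minutes_due(10))   # ten-hour shift: two breaks
```

On these assumptions, even the longest permitted shift contains only 40 minutes of scheduled rest across ten hours in the cabin.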

Data collection

The initial objective of this study was to understand how train drivers align efficiency demands (from management, safety protocols, timetables, and so on) with their everyday working lives. Over subsequent rounds of interviews the focus homed in on technology, automation and drivers’ physical/mental adaptations. After receiving ethics approval, which involved submitting a sample of interview questions, one author conducted semi-structured interviews (Charmaz, 2014) with train drivers and managers to better understand the pressures drivers face in this industry.

The first round of interviews involved ten drivers and managers from one rail franchise. In line with a grounded theoretic approach, the semi-structured interview questions were generally open-ended in their design. For example, interviews commenced by asking participants to describe a typical day from start to finish, then the kinds of trains they drive and the onboard technology they interact with. During this first round of interviews, drivers reported that their roles and workplace conditions were fairly uniform across the network. Additional interviews were sought through referral chains and snowball sampling (Robinson, 2014). Drivers reported that the onboard technology played a central role in mediating their activities in the cabin. For example, technological systems such as DSDs monitor both the physical and mental presence of drivers. For this reason, the interview schedule was amended to explore these themes in more depth, a process facilitated by a grounded theoretic approach (e.g. Muethel, Ballmann & Hollensbe, 2024).

The second round of interviews involved 35 train drivers and managers from multiple franchises across England and mainly focused on the driver/technology interface. The core questions asked were: How has the onboard technology changed over the years? Do these emergent digital systems affect the way a shift is performed? What is the physical and mental impact of DSDs during a shift? Do drivers manage or regulate their bodies and mental capacities accordingly? What does an efficient train driver look like in this digitized environment? In this round of interviews, one author embarked on a 1-day observational trip at a major franchise headquarters. During the visit, he was trained on a 700-class rail simulator – the most sophisticated simulator in the country – and shadowed the training of a new driver. This proved useful for empirically experiencing conjoined agency in the modern cabin. For example, the author experienced the challenge of keeping one’s foot on the DSD, to lift and re-apply it when prompted. On the same visit, this author was also shown an ATO mockup that simulated full automation on a major London through line, underscoring the deskilling propensities of prospective new technologies and the impending threat of automation. Notes and reflections from the trip became central for orientating the next data collection phase and ongoing theorization process.

As the research refocused on the technology/labour interface, especially during discussions about the prospect of driverless trains, several participants raised the subject of urinating in bottles, despite rest break opportunities built into their timetable. The phenomenon of driverless trains was also mentioned by managers when referring to the limitations of drivers. Hence an ideology of automation became an important piece of the puzzle for interpreting driver behaviour. An interest in this theme informed a third and final confirmatory interview with a train driver that lasted one hour and fifty-one minutes. Core questions asked were: How will the occupation change in the future? Will ATO be common across the network? Can human drivers compete with or perform the job better than these prospective ATOs? Do humans and the current technology ever conflict in this role?

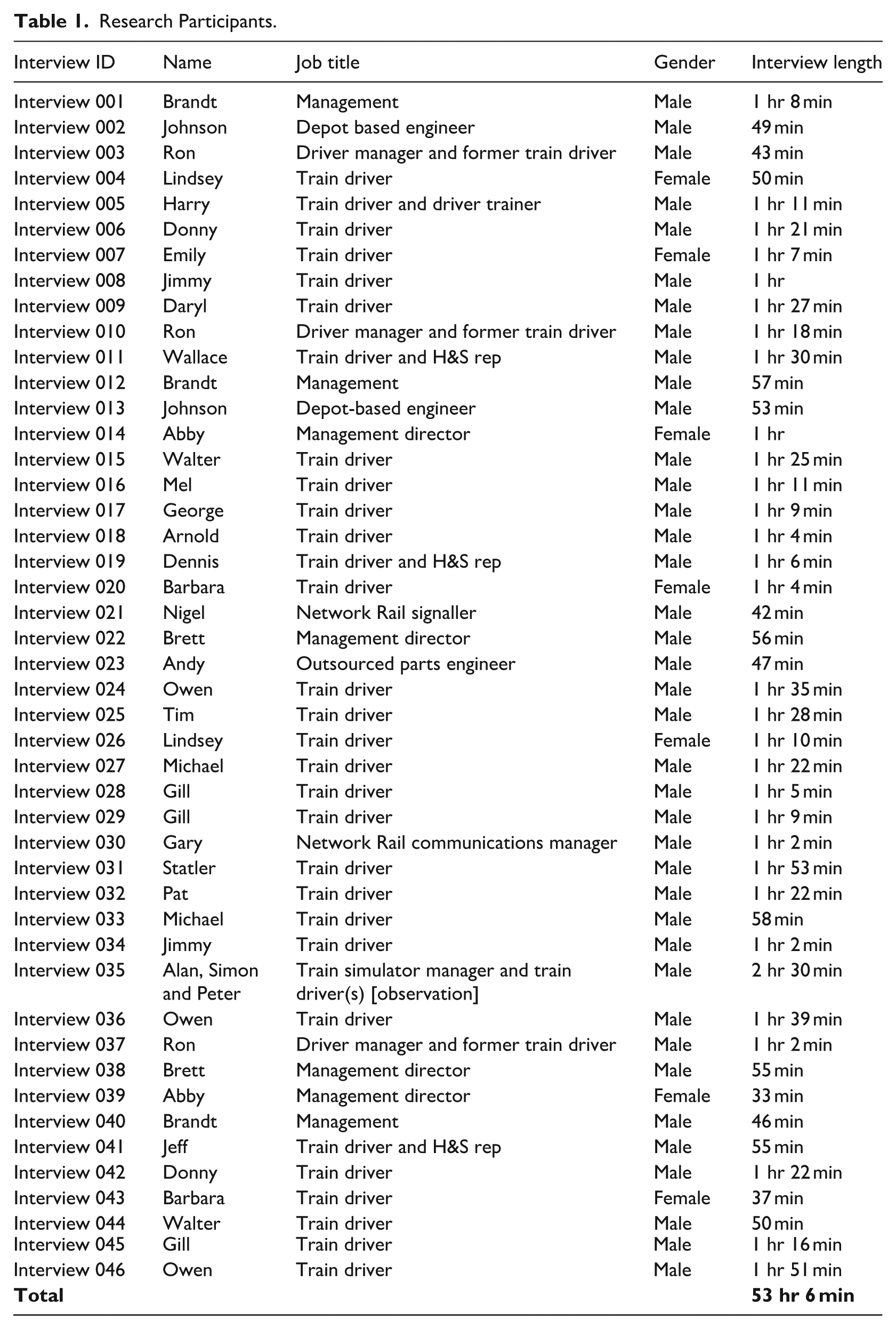

This resulted in a total of 46 interviews, averaging over an hour in length. The interviews were recorded and fully transcribed. The names of both drivers and managers have been anonymized (see Table 1).

Table 1. Research Participants.

Data analysis

We followed a grounded theory approach to our problem, beginning with an empirical observation (drivers urinating in bottles) and then developing a conceptual framework that contributes to wider knowledge in this domain (Corley, 2015; Edmondson & McManus, 2007; Locke, 2001). The grounded-theoretic analysis (Glaser & Strauss, 1967) of train drivers and management allowed us to posit several interesting insights about conjoined agency at work. For example, data mentioning how technology is linked to drivers’ bodies and minds became a central part of our analysis leading to open-coding themes on *bodily management*, *mind management* and *bodily limitations (e.g. urinating in bottles)*. Given the large corpus of interview data, we converged our analysis on bodily limits with codes concerning *conjoined agency* and *fear of impending automation*. More specifically, four interweaving phases were involved in our analysis.

Phase one – manual/prima facie data analysis. After completing the data collection process in late 2022, we focused on the bodily demands that train drivers experience in their work. The issue of drivers urinating in bottles was noteworthy for us, even during the data collection process. Importantly, the data indicated that when drivers spoke about this sensitive issue, it was regularly couched in wider fears about driverless trains. At this juncture we wondered if the data was indicating a fault line between human labour and technology, where collaborating with machines morphs into competing with them. With the open-coding process identifying themes linked to bodily limits, we began to connect these limitations to codes concerning the impact of technology, including the management algorithms used to determine comfort breaks.

Phase two – assessing possible explanations for drivers urinating in bottles. The most basic explanation for our problem is a lack of working toilets on a train. However, drivers have rest breaks built into their schedules, have the right to stop and delay a train to use a station toilet and can face disciplinary action if caught urinating in bottles. Thus, the practice seemed to have been normalized as a covert facet of the labour process, with drivers reporting they and others always carry empty bottles in their bags. Once again, the question of technology was prominent in our interviews. For example, drivers complained that timetables were algorithmically calculated in a manner that treated them like robots (i.e. the scheduled rest breaks were often considered insufficient). And managerial threats of full automation also arose. This gave us reason to believe that the ‘ideology of automation’ was somehow significant. Here our coding led to an additional analysis regarding how threats of automation may converge with bodily limits and onboard technological controls. This paved the way for phase three, which entailed a more systematic approach.

Phase three – systematic data analysis. With our hunch in mind, we undertook a deeper and more extensive analysis of the data. They were coded under the themes of *semi-automation*, *bodily needs*, *rest breaks*, *management conflict* and *driverless trains*. This more methodical examination of the interview material created a bank of 94 excerpts that were then scrutinized with theory building in mind. Given that the threat of automation was so prominent in the transcripts, it became clear at this juncture that this theme would be central to our theory-building process.

Phase four – theory construction. Finally, we developed our theory from the findings of the excerpt bank. This permitted us to develop our conceptual contribution in relation to conjoined agency, management by algorithm and symbolic impression management, which we overview in the discussion.

In light of the data analysis and our consultation with the emerging literature about automation in organization studies, a novel research problem presented itself: how do conjoined agency, management by algorithm and symbolic impression management play out among frontline workers when the threat of full automation is salient? Our inductive observations in the data collection process and subsequent grounded theory-building phases revealed a hitherto unexplored dark side of conjoined agency. In the findings below, we explore how train drivers are tightly controlled by the technology that encapsulates their role, how they test the biological limits of their bodies, how this is, partially at least, motivated by an ideology of automation and how drivers mimic the technology that they fear will one day replace them.

‘It’s Not Apple Juice’

Inside the modern train cabin

Modern passenger trains use extensive digital semi-automated technological systems that regulate the bodies and minds of train drivers. A good example is the aforementioned driver safety devices (DSDs). One type of DSD consists of a pedal that drivers must always press with their foot, except when a beep in the cabin prompts them to lift it. The foot pedal is colloquially known as ‘the dead man’s switch’:

Every minute, if you don’t do anything with any other control – this noise will go off and you have to lift your foot up and pull it back down again or sometimes it’s on the handle. (Michael, train driver)

Were a driver to become ill or suddenly lose consciousness, the DSD would detect the depressed pedal and automatically stop the train. Drivers swiftly reapply their foot when digitally prompted to prevent the train from halting. Any unscheduled stop between stations is a serious infringement, resulting in a ‘one-on-one’ meeting with management and the driver’s data being downloaded for close examination. This DSD entails an engrained bodily routine that governs how the train is controlled.

Another DSD is a dashboard button that drivers are regularly required to press following an electronic beep. This checks their bodily presence and their mental concentration as they cognitively recognize the beep (its tone differs from the ‘dead man’s switch’). These DSD systems are linked to a broader digital network that regulates train driver behaviour. This includes an automatic warning system (AWS; see Figure 2) and train protection and warning system (TPWS), among numerous others. The AWS and DSD systems are connected to magnets placed on the tracks that interact with the onboard technology to issue in-cabin alerts, while the TPWS uses transmission frequencies regarding track conditions. These technologies have been added in a piecemeal fashion and thus require substantial familiarity to navigate their specific setup:

Because we’ve got different companies that are building the trains, Siemens, Bombardier, GE, that have different software versions, because it’s not just the mechanical way now, where you put in a lever to isolate something. It’s buttons. And the problem with these buttons, some of them are soft touch buttons, some of them are hard touch buttons [which affects interactions between drivers and on-board technology]. (Donny, train driver)

Figure 2. AWS acknowledgement button.

This piecemeal admixture of technological suites can confuse drivers and cause disruptions. Moreover, the overall opinion among drivers is that each digital embellishment aims to semi-automate vigilance in their work: Until quite recently, we used to have a 150-class diesel train and whilst it had a DSD, a driver safety device or the pedal – it didn’t have vigilance [the DSD that involves pressing a button continuously to ensure presence and concentration]. So you didn’t have to raise your foot every 60 seconds, you didn’t get the alarm going off, and you have to take the foot off and put it back on. So quite a few drivers used to put their bags on the pedal. And then they didn’t have to worry about it. (Owen, train driver)

DSDs are intended to ensure safety. But they place significant demands on drivers, both physically and mentally. Some drivers find them challenging because the standardized interface often does not sit well with certain body shapes and sizes: The foot pedal – which is the DSD [driver safety device]. I sometimes have trouble with that because I’m a bit skinny and I haven’t got heavy legs. So I’ve really got to wedge myself underneath the desk a little bit to keep it there, whereas some of the men have less problems because they’re just heavier than me. (Barbara, train driver)

In the name of safety, management increasingly algorithmically manage and monitor drivers’ behaviour: how they approach or depart a platform, how quickly they stop when encountering a red signal, unscheduled stoppages and missed turnaround times. The collected data is called a ‘driver download’ and is collated by intelligent software and various algorithms (e.g. Arrowvale On-Train Monitoring Recorders). As manager Ron observes: We download the onboard data recorders on the trains, so they can get what they call ‘an unobtrusive ride’ – because we need to prove that we’re checking drivers’ performance and safety when they don’t know they’re being monitored.

However, these downloads have certain limitations, as train driver Donny argues: These algorithms that come with the driver downloads – I said [to my manager] – the circumstances [on the tracks] are different at any given time. Outside factors influence your driving technique on the day.

Such algorithmic management, alongside onboard technology that semi-automates the role, ends up placing pressure on drivers in terms of fatigue, attention span and also bodily needs, such as the use of toilets. As a driver explains, ‘a major problem in our industry with fatigue, is balancing it with technology – technology just wants one thing. . . it doesn’t care how tired you are’ (Donny, train driver).

‘Peeing in a bottle’

A driver describes the scene on a little-known section of tracks outside one of London’s biggest and most famous train stations, London St Pancras International: As you come into the station, you’ll see loads of bottles full of beverages – and it’s not apple juice! I carry a bottle, and hand sanitizer and wipes, so if I need to pee, I’ll pee in a bottle. I mean a large coffee cup can be filled up quite quickly. That’s what train drivers do – they’ll keep them in their work bag, and they’ll fill them up. (Tim, train driver)

The practice follows the evolution of contemporary cabin design. Older diesel engines had a urinal in the cabin and were double manned, making it easier for one driver to urinate without causing a delay. Some drivers would also stop the train and exit the cabin to urinate, which is extremely dangerous. In February 2022, a driver was killed by a passing train for this reason. On this same stretch of track, ‘there had been previous complaints regarding drivers discarding bottles full of urine on the track at West Worthing and threats of disciplinary action for those caught doing so’ (ASLEF, 2022). Yet the practice persists and, short of placing CCTV cameras in cabins, the electronic monitoring system is unable to detect it. The practice is puzzling given that drivers have comfort breaks built into their schedules that are ratified by ASLEF. And as mentioned earlier, drivers could be severely disciplined should they be caught urinating in a bottle.

In light of these factors, why does the practice continue? One reason is linked to the driver’s computerized shift schedule, or ‘diagram’. Diagrams are negotiated and agreed to by ASLEF and management. They dictate when a driver begins a shift, the turnaround time between services and rest break timings: You do get what’s called a turnaround time, so I’ll get six minutes to turn around, that doesn’t really include time for going to the toilet or getting a drink because it’s not accounted for – you shouldn’t be doing it then. (Dennis, train driver)

Because ASLEF ratified the diagram (with what they considered adequate toilet breaks), drivers are reluctant to complain about needing more time to attend to bodily needs. Doing so could risk attracting the ire of management and undermine their positive standing within ASLEF. As train driver Michael observes: They’re [management] gonna say, none of your colleagues complained about this so it’s something you need to be dealing with. Collectively, it’s difficult when you’ve accepted that train drivers can drive a train for four hours before a break – even though they will be pissing in a bottle or out the door, start to argue that all of a sudden it can’t be done.

However, other drivers appeared to find their schedules adequate for these bodily needs or at least did not explicitly raise it as a problem. This implies that other factors might also be causing them to urinate in bottles.

Who’s afraid of automation?

Themes of computerization (or semi-automation in the cabins) and the prospect of fully automated trains consistently appeared in the data. This constituted what we have termed an ideology of automation whereby the management/employee relationship is marked by the threat of future technological unemployment. For drivers, this is a topic of consternation and a degree of fatalism was evident. For management, safety is the primary justification evoked when celebrating the prospect of driverless trains. For example, drivers who desperately need to urinate make unsafe decisions, as the fatality mentioned earlier illustrates. A train driver gives another example: We have had a couple of incidents – there’s this guy who just couldn’t take it and so he goes through a red light because they don’t go to the toilet beforehand. They were concentrating on the toilet and missing a signal and things like that. (Emily, train driver)

Full automation is an obvious solution to these safety breaches, according to manager Ron: . . . of course, with automatic train operators (ATO), you’d eliminate all of those safety issues overnight because computers don’t make mistakes if it’s [sic] programmed properly to do exactly what to do – if you tell them to stop at the station, they’ll stop. Tell them to stop at red light, they’ll stop.

Ron mentions London’s driverless Docklands Light Railway (DLR) and believes the entire rail network will inevitably follow suit: ‘That’s the future – take the human element out of it altogether.’ The DLR uses a blend of remote human users (located in a centralized command unit) and deep learning algorithms (i.e. ‘Tracklink’) to control trains. Ron continues: I think automation is inevitable in the driver role. We have the ATO already in place. It just needs to be plugged in and switched on. Likewise, a lot of new trains now are built for ATO. But the system is just lying dormant, and at some stage in the future. Yeah, again, they just need to plug it in, flick a switch and the train’s ATO.

On the other hand, most managers also acknowledge the difficulty and high cost of automating the rail network: ‘[automation] is still a long way off and extraordinarily expensive. And open to all sorts of faults, failures, and it is far, far better to have someone in there until such times as you’ve created a train which is 100%, reliable and successful’ (Alan, management). Thus, in the context of combative industrial relations, the ideology of automation is often more of a threat than a realistic alternative. As this manager illustrates regarding driver passenger announcements: We’ve got some amazing drivers out there right now that do just the best announcements, we’ve got probably the majority that do [announcements] okay, and then we’ve got a minority that just don’t do it at all – in the future when there’s automatic train operation – it [announcements] is really important. (Abby, director of customer services, management)

Similar proclamations are repeated by media outlets when union action unfolds in the UK. The headline of a popular newspaper is indicative of this: ‘The impending rail strike misery highlights the case for automating our railways’ (de Quetteville, 2022). These narratives reinforce the ideological message that a semi-automated workforce will one day be replaced and that organized workplace dissatisfaction (e.g. strikes) simply strengthens the case for occupational obsolescence.

Which ‘robot’ is winning?

Manager Bret made a telling observation when speaking about the idea of fully automated trains transforming the network. He implied that drivers are now competing with autonomous machine technology (that might one day replace them) and are judged according to that standard (i.e. efficiency, safety compliance, and so on). But will human drivers ever outperform these fully automated train systems or even equal their capabilities? He is doubtful: We want people [drivers] to do proper safety checks. We want people to read their notices. We want to make sure they’re always prepared for duty under time pressure. We want them to do all of that stuff really well, but we always want to do it quickly. So the two things don’t necessarily match. (Bret, manager)

Drivers also reproduce this ideology of automation. Because much of the work in the cabin has already been semi-automated, drivers interpret this managerial enthusiasm for ATO (e.g. ‘it just needs to be plugged in and switched on’) with much trepidation. Add to this government calls for a ‘technological solution’ during periods of industrial unrest, and it is unsurprising that fear about the increasing use of computerization is rife among train drivers: Would they [management] change our role? Would we be train captains [passive attendants] or something? I’m a bit dubious about automation because it’s like they’re trying to get rid of jobs – it’s quite scary. (Emily, train driver)

Emily’s concerns were common among participants, who privately acknowledged that ATO would ultimately make them redundant. Not only drivers, but conductors and other auxiliary staff would be affected too: I think the worry [about ATO] is the conductors will be in the firing line. They’ll say [government and operators] ‘two people on the train is too expensive’ [train driver and conductor]. I know I’ve got a pension – but that’s something that has definitely been in the firing line previously. There’s more concern for the future. (Mel, train driver)

This looming threat of ATO also has important implications for the quality of the job. One of the authors had firsthand experience of a sophisticated ATO simulator involving a main trunk line through London. These are the notes s/he recorded during the encounter: The skill involved in approaching and departing platforms becomes almost completely irrelevant as you sit and watch the train perform the manoeuvre independently in the driver seat. ATO doesn’t just deskill driving techniques, but it takes away the fun of driving too. The experience of the ATO was far less satisfying than driving it myself. I can only imagine the threat of this workplace change as something I would dread.

Together with Emily’s fear of redundancy, Mel’s concerns about pensions and our experience with the ATO simulator, an interesting thematic is salient in the interviews. It is evident in Bret’s (manager) comment about drivers being compared to automated systems when assessing their productivity. While speaking about the threat of automation, drivers often transitioned into a discussion about what management – in the context of that perceived threat – now expect from them. According to Jimmy (train driver), most drivers now recognize that performing their roles efficiently today means acting as robotic as humanly possible: They [private rail companies] ultimately want the most out of the driver for what they can do in their day. If you are bums on seats, driving for as long as humanly possible, with the shortest break possible, they are getting the most efficiency out of you.

For these workers, the perceived threat of future automation and its intersection with unrealistic managerial expectations about appropriate human performance was often framed in terms of how the human body has inherent limitations (such as the need to urinate): It can make you actually quite resentful in the way the diagram has been done when you think, okay, I’m supposed to do four or five hours at a time here, and then another half hour break, and then do another three or four hours, and you’ve got the bare minimum times. And yes, the computer [which calculates the diagram] has made it happen. But it doesn’t look like a human. You look and think, I’d go to the toilet in that time, or I’d get a drink in that time. It just makes you a bit pissed off. You think, I’m not a robot. I’m afraid at the moment we haven’t got driverless trains. You’ve got a person there and they’ve got needs. Just because you can make a diagram, and use it to have a turn-around time of 9 minutes doesn’t mean the person wants to do that or can do that. (Mel, train driver)

Mel resented being forced to act as if he is a robot. And he clearly is not one. Furthermore, it is obvious that train drivers are at times unable to urinate within turnaround times, while driving or during breaks. But these turnaround times were negotiated by their union, so why aren’t they enough? Train driver Michael provided some key information: We gave a lie to how long the management felt that we could function driving a train without a toilet break. We gave them the lie, if you like, that we could do it longer than we could. So certainly, by hiding it [the need to urinate] in the first instance – I think continuous driving was something like three hours. It might have even been five hours – it was a ridiculous amount of time. So, peeing in a bottle was something that people did. More often than not, certainly for when I was driving the train. The service was such that there was never very long before we were still at a red light, stationary, and that was my cue if I ever wanted to go.

We believe this ‘lie’ aimed to give the impression that workers could perform as well as, if not better than, the ATO that ominously looms in almost every negotiation with management around pay and conditions. In addition to machine-like levels of physical and mental endurance, drivers also insist they bring to the job irreplaceable human judgement, which cannot be replicated by a robot, as driver Mel explained: There’s still a need [for a human driver] for when things go wrong [e.g. damaged tracks or engine], when a computer might go wrong, and you want a person there to say, ‘Okay, this is what we’re going to do.’

In the context of these institutional expectations and discursive narratives regarding automation, we suggest, drivers endeavour to regulate their bodies as if they were robots, bypassing or ‘hacking’ natural needs to avoid unscheduled stoppages: This is what I learned very quickly: that you have to kind of know your body. So for me, I’ll have water in the morning but I won’t drink too much. Because I have to make sure that my bladder is kind of empty before I go onto my train. But then I don’t want to dehydrate myself. So then halfway through the first half of my shift. I’ll drink some water so then on my break, I’ll be going to the toilet. It took me a couple of years to work that out. But it’s so you don’t . . . yeah, you’re not phoning up saying that I need to get to the toilet as quick as possible. (Emily, train driver)

Eating is self-regulated in the same manner, with varying degrees of success: I try and sort of time my mealtimes – so you’re not eating while you’re driving. But yeah, you can drive up to six hours before you have a break. And so, obviously, you got to be very careful with things like toilet breaks, because you’re gonna be very dehydrated because you’re sitting in a seat for three hours. (Tim, train driver)

Workers in other occupations regulate their bodily functions too. However, long periods in the cabin – combined with managerial expectations and the aforementioned ‘ideology of automation’ – see workers testing the boundaries of endurance. For example, Tim (train driver) told us in relation to toilet breaks, ‘it takes a big toll on the body, and I know drivers that have had bladder issues which have been directly attributed to the job’. For Owen (train driver), ‘The most common thing we get is carpal tunnel, or repetitive strain issues.’ Another driver remarked: When I’ve been sat down for long periods of time driving trains – I did end up with a trapped nerve in the bottom of my back. It was excruciating. I ended up having Diazepam to sort of relax the nerve and deal with the pain. (Emily, train driver)

Chronic fatigue is also a problem because shift work disrupts circadian rhythms: ‘that’s one of the biggest challenges, you know, and that has all kinds of effects on the body. It’s carcinogenic, it’s linked to occupational cancer’ (Wallace, train driver). Barbara (train driver) similarly mentioned that ‘9 times out of 10 – you speak to other drivers and they’ve not been able to sleep very well. But they’ve come into work, and they do the job. But it is hard.’ Robotically performing a semi-automated job also means that the mind can sometimes enter into ‘autopilot’, as this driver observed: Sometimes you stop at a station and you think, ‘Christ did I stop at the last station?’ You don’t even remember stopping at that station – you’ve just been on autopilot – in the zone. (Dennis, train driver)

Indeed, workers privately concur that performing in this mechanistic fashion is difficult (if not impossible) to sustain indefinitely. The human body is simply not designed to function like this. Nevertheless, the future of their jobs and livelihoods depends upon maintaining the performance. This is why drivers push their bodies to the limit and hide those limitations when nature calls: So you live your life by the times that are written on this piece of paper [‘diagram’] during the day for you to work off. Efficiency is how they’ve made that time for everything, sometimes as tightly as possible. I talked about it earlier, okay, I haven’t got time to use the toilet, that is ridiculous, they’ve been too efficient. It doesn’t work, it might be efficient for a computer to say this is possible. But a human being – that doesn’t really work as efficiently as they want us to. (Mel, train driver)

Discussion

Our theory section concluded by asking how three key concepts – conjoined agency, management by algorithm and symbolic impression management – play out in contexts where the fear and threat of future full automation is salient. By investigating this in our empirical setting, we can better study the dark side of the labour/technology interface and explain why workers push their organism to its limits. What we have termed the ‘ideology of automation’ is pivotal and sheds light on why drivers secretly urinate in bottles rather than use assigned rest breaks. In effect, they are competing with the robots that management claim will someday replace them. Let’s discuss each conceptual element – conjoined agency, management by algorithm and symbolic impression management – in relation to our data and explore the wider implications they have for organizational scholarship before outlining possible avenues for future research.

Conjoined agency and its discontents

The concept of conjoined agency holds much promise for exploring how human labour intersects with semi-automating digital technologies (Murray et al., 2021). For us, however, this and related ideas such as ‘augmentation’ (Glaser et al., 2021; Kellogg et al., 2020; Raisch & Krakowski, 2021) and ‘hybrid human–AI collaboration’ (Raisch & Fomina, 2025) present an overly optimistic interpretation of how human work is affected. For these scholars, full automation may transpire, but in many cases AI-led robotics establish a collaborative equilibrium with their human counterparts, ‘enriching’ jobs in the process. A case in point is Murray et al.’s (2021) reflections about the empowering effects of AI algorithms at The New Yorker. Computational intentionality assists human judgement and cognitive discretion, producing win–win outcomes for the editorial team.

At first glance, passenger train drivers also appear to exemplify a harmonious union between smart machines and human work. However, all is not what it seems. Behind the scenes drivers mentally and physically struggle to keep pace with this digitalized environment. And given the constant threats of technological unemployment from management, they feel compelled to signal their ability to perform as well as any robot. In short, drivers compete with both extant technologies and those they imagine will soon steal their jobs.

This finding has important implications for how we research partial-automating digital technologies in organizations. Power and domination are salient. What on the surface appears to be a positive human–AI symbiosis must be placed within the context of asymmetrical power relations (e.g. the employment relationship), specifically management hierarchies, labour process control, performance metrics, surveillance and uneven access to decision-making opportunities. Hence why we prefer the term ideology of automation as opposed to ‘technological imaginaries’ (Kazansky & Milan, 2021; Mager & Katzenbach, 2021) or ‘technological pre-framing’ (Rezazade Mehrizi, 2023) where future-orientated expectations about full automation are also formulated. The word ‘ideology’ connotes a struggle over variable socio-economic interests at the point of production and foregrounds structures of domination that so often mark contemporary workplaces. This is omitted, for example, in Rezazade Mehrizi’s (2023) study of radiologists framing the ‘promise’ of as-yet undeployed intelligent computational software. Pre-framing is treated as neutral mental schemas and bosses are conspicuously absent. Even when radiologists fear the worst – being replaced by AI – the trepidation is triggered by the endogenous potential of diagnostic deep-learning algorithms rather than top-down managerial pressure.

Power relations explain why we never heard either management or drivers mention the automation of office work in the rail industry, even though there is plenty of evidence suggesting that white collar and managerial work too is susceptible to AI-enabled displacement. Furthermore, this finding extends our understanding of conjoined agency by showing how power and domination significantly influence sense-making about what the techno-future may hold. This is crucial, we suggest, for gaining a more nuanced behind-the-scenes comprehension of semi-automation at work, even when all seems agreeable. Intermediated by an antagonistic ideology of automation, our approach explains why workers may interpret their clever machines not as allies in a collaborative enterprise but as potential rivals they must outsmart and outperform.

Embodied algorithms at work

Previous research suggests that organizational algorithms can be approached from several different angles. For example, Hillebrand, Raisch and Schad (2025, p. 343) differentiate between human–AI collaboration (HAIC) and algorithmic management (AM): ‘while HAIC explores how humans use AI to manage, AM describes how humans are managed by AI’. Our empirical study emphasized the latter (AM) once it became evident that drivers were struggling to keep up with the in-cabin digital infrastructures and meet management expectations about desirable work performances, expectations rendered problematic via the ideology of automation. While appearing concordant from the outside, our analysis reveals that employees will experience conjoined agency negatively when managerial algorithms are deployed for surveillance purposes. Of course, semi-automated systems already compel employees to behave like machines in such roles. But when algorithms micro-monitor the felicity of that performance (e.g. detecting mistakes, lapses in concentration, and so on) the roboticization of human labour is pointedly reinforced.

Important for understanding management by algorithm are the social textures that culturally embed them, conferring meaning and nuance (also see Kellogg et al., 2020). Once again, we found that power is an important mediating variable in this regard. The discursive threat and foreboding of technological unemployment imbued driver downloads with sinister import that exceeded the actual technical artefact. This ideological surplus instigated a kind of ‘algorithmic cruelty’ (Gray & Suri, 2019) since workers were constantly reminded of their potential obsolescence when interfacing with core elements of their workplace. This harmed not only the fatigued body as it wrestled with the technological assemblage but also workers’ sense of security, worth and dignity.

Our findings extend current knowledge of algorithms and their organizational outcomes in other ways. For example, a poignant irony is evident in the data that gives the notion of conjoined agency an unexpected twist. Unlike the algorithmic surveillance that delimits human agency (e.g. see Anteby & Chan, 2018; Newlands, 2021; Walker et al., 2021), we found that a covert agency among drivers was crucial for identifying ‘blind spots’ in the managerial datascape in order to maintain the pretence of their machine-like abilities. Driver downloads, for instance, couldn’t detect the practice of urinating in bottles or other physical workarounds inside the cabin. And that backstage agency was essential for sustaining the symbolic impression of seamless rationalization to managers. If, say, CCTV cameras were installed in cabins, then the charade would no longer be tenable, revealing the unreasonable nature of this digital regime to all, including management, who might relax their unrealistic performance expectations as a result.

In terms of advancing current research, our investigation highlights the importance of the human body in the age of digitalized work. Indeed, surveying the growing literature about management by algorithm in organization studies, we are struck by how disembodied these investigations are. For example, in Hillebrand et al.’s (2025) expansive review on the topic, the body isn’t mentioned once. Even Glaser et al.’s (2021) ‘biography’ of an algorithm features no concrete, embodied people. Given that algorithms are a digital abstraction, this lacuna is perhaps understandable. But our research demonstrates the unavoidable visceral, material and bodily dimension of these virtual control systems. We observed employees physically living with their algorithmic masters, adapting to their modulations, carefully regulating their bodies to match their expectations and demands. Consequently, we suggest that a kind of cyber corporeality or virtual viscerality must be kept in mind when studying digitality in the workplace because conjoined agency must inevitably enrol, engage and imbricate (often imperfect) embodied organisms.

Symbolic impression management: Human robotic mimesis

By exploring the dark side of conjoined agency, our empirical investigation offers germane insights into the automation/augmentation debate. As explained by Raisch and Krakowski (2021), AI-enabled augmentation results when non-demanding routine tasks are automated, permitting workers to focus on higher-order activities or what Pakarinen and Huising (2025) call ‘relational expertise’, the rich emotional and cognitive practices that machines are unable to perform. The implication is that technological augmentation may enhance workers’ unique humanness – especially the more cerebral, artisanal and relational attributes associated with non-routine higher-order interaction (also see Hillebrand et al., 2025).

Our study reveals a different dynamic in which ‘humanity’ at the AI/human interface does not fare well. Stark power relations, an aggressive ideology of automation and endemic conflict frame workers’ comportment to their smart machines, compelling them to engage in signalling activity regarding their ability to perform as well as or better than robots. Impression management thus symbolically mediated the enactment of these digital architectures (also see Pachidi et al., 2021). Echoing our earlier remarks concerning virtual viscerality, this may involve what Lawrence, Schlindwein, Jalan and Heaphy (2023) term body work (also see Harding et al., 2022) – regulating food and water intake, and covertly peeing in bottles. What makes this corporeal self-management different compared to other professions where workers cannot spontaneously take a bathroom break (e.g. theatre performers or surgeons) is the ominous and acrimonious threat of full automation that overshadows the situation. Consequently, unlike technological augmentation that sets humans free from routine tasks so that they can focus on higher-order cognition, drivers found themselves becoming machine-like instead to ward off managerial criticisms about their weaknesses.

This attempt to simulate technology with the aim of deterring (or at least postponing) high-tech automation we term human robotic mimesis. At least three dimensions are involved, we argue. First is the most basic dimension, what we call first-order mimesis. This is where workers behave robotically due to the intrinsic features of the labour process. We call it ‘basic’ because the rationalization of human labour has long been a feature of industrial capitalism, as Charlie Chaplin’s Modern Times wonderfully illustrates. Concerning our case, we observed this dimension of mimesis in relation to DSDs, onboard data management systems and other computer technologies in the cabin. Encouraged by managerial algorithms, driver behaviour inevitably reflects this hyper-mechanistic environment, especially when compared to traditional (i.e. pre-digital) cabins. Although judgement is still required for attending to unforeseen circumstances, computerized beeps and other cues demand a robotic-like response from drivers. This is not unusual and can be observed in many other organizational contexts. For example, studies of Amazon’s ‘fulfilment centers’ reveal how this semi-automated environment forces workers to follow processes dictated by storage/retrieval algorithms and digital tracking devices, including wearables (see Delfanti, 2021; Moore & Woodcock, 2021).

The second dimension of human robotic mimesis concerns the practice of regulating the body so as to effectively function within tightly managed temporal and spatial environments. We term this second-order mimesis and it tends to be self-imposed (by workers) rather than imposed by the technology per se. Our case provides strong evidence of this. For example, as they follow the timetable or diagram, train drivers must remain in the cabin for extended periods of time. Consequently, they approach their body as if it were a machine in order to cope. A kind of machinic bioregulation is required. Drivers monitor what they eat and drink and ensure adequate restroom breaks before each shift. Other occupations also see workers undertaking such ‘body work’. In the military, for example, fighter pilots are trained to regulate their sleeping, eating and bodily waste-management functions as if they were part of the equipment (what is termed ‘human performance optimization’) (Lattimore, 2017).

The third dimension of human robotic mimesis is closer to our research focus and empirical findings. We term this third-order mimesis. This roboticization of human labour is not engendered by a simple one-to-one technological interface per se but by extrinsic organizational or cultural factors, including expectations directed at workers by an audience such as management or customers. This is where the ideology of automation becomes important once again. Our case study provides ample evidence of this dimension of mimesis. Like other strike-prone industries where unions remain relatively strong, rail company managers use the threat of technological unemployment to try to subdue industrial unrest. They strategically parrot popular narratives around driverless trains, despite their implementation being many decades away. Drivers in turn tacitly signal their ability to perform as well as (or even better than) those prospective robots, mimicking ATO as much as possible by pushing their bodies to their natural limits – even when organizational designs and rules allow them to attend to those bodily needs.

Interestingly, we observed third-order mimesis mainly when a driver could no longer successfully act as if s/he were a robot (such as desperately needing to urinate). In that situation, they took measures to discreetly maintain the (now false) appearance of robotic efficiency for their audience (urinating in a bottle instead of using proper breaks or complaining to management/ASLEF about the inadequate turnaround time). Echoing Pachidi et al. (2021), we propose that this dimension of mimesis has a strong symbolic aspect. Workers aim to signal the advantages of having a human driver in the cabin vis-a-vis ATO (e.g. context-specific expertise and judgement, the ability to deal with unanticipated events, personable driver announcements) and de-emphasize the perceived disadvantages of human bodies (e.g. the need to urinate) and cognition (e.g. mental fatigue).

This way of understanding advanced digitization and its roboticization of living labour opens up a new problematic in organizational research. It centres on what happens to human beings within this labour/technology nexus, including their emotional, cognitive and corporeal makeup. Present research falls into two general camps. Optimistic ‘conjoined agency’ and ‘augmentation’ scholarship hopes that a fuller, more empowered and creative human being will be forged in the second machine age (e.g. Kober, 2025; Raisch & Krakowski, 2021). Pessimistic researchers, on the other hand, state that the opposite is happening – the negation of human potential as workers become the ‘machine’s machine’ in alienating, mechanical and lifeless jobs (e.g. Fleming, 2019; Moore & Woodcock, 2021). Our study reveals a third space. Drivers evinced neither a fully engaged nor a fully negated humanity. Instead, they embodied an unenviable way of life – very rich in anxiety, stress, mental fatigue, physical pain and unsavoury bodily mishaps. Indeed, successful human robotic mimesis required an impressive set of human capabilities after all, albeit underscored by suffering. In this third space of computational nesting, workers ultimately discover that they are human after all . . . all too human.

Potential future research areas

There are several future research areas in organization studies that could develop and extend the insights we have presented in this paper. First, our study argues that even if advanced digitalization, AI and robots do not completely replace an employee’s job, the person themselves will be altered nevertheless, including their subjective and cognitive qualities. This notion has already been broached in the philosophy of AI research. Smith (2019) argues, for example, that next-generation AI and robotics will still only have reckoning prowess as opposed to judgement – the latter of which is essential to train driving and other jobs that supposedly face the prospect of full automation (see also Aloisi & De Stefano, 2022). Whereas reckoning is calculative and quantitative, judgement instead deals with intuition, past experience, unforeseen contingencies, the difference between reality and appearances, and creative problem solving (e.g. lateral thinking). What ‘terrifies’ Smith (2019, pp. xix–xx), therefore, is not the idea that machines will outsmart human intelligence, but the opposite: ‘by being unduly impressed by reckoning prowess, we will shift our own human mental activity in a reckoning direction’. Organizational research has already touched on the question of how algorithmic reckoning may change human decision-making competencies (Moser et al., 2022). Our study invites a more extensive examination of this subject. For instance, how might semi-automation reshape political awareness, personal identity, ethical deliberation and intuition? Echoing Smith (2019), we suggest that this is where the real danger of next-generation smart machines probably lies.

Second, we believe that future research could provide more insights about how the ideology of automation functions as a mode of power. As mentioned earlier, recent studies of automation in the ‘second machine age’ largely concentrate on its practical feasibility. Our study suggests that this is only part of the picture. Threats about its potential uses (and perhaps abuses) are also important. Additional research is needed to understand how this ideology bolsters existing power relationships and/or becomes a unique source of governance in diverse organizational settings (see Aloisi & De Stefano, 2022). Relatedly, how do workers resist this ideological threat? We have indicated that one reason why workers – such as the drivers we studied – might push their bodies to the limit is to discredit or undermine the necessity of full automation. Further inquiry is required to better understand how these complex dynamics play out in various occupational roles.

And third, rather than simply catalogue the specific effects of automation – either as an ideological threat or a semi-enacted reality – organization studies scholars are well placed to ask important ethical questions about conjoined agency and the future of work in a more critical way. Ultimately, the impact of unassisted robotics, neural nets and deep machine learning is not simply a technical problem but a moral one. Who will be the winners (i.e. better pay, conditions and status) and losers (i.e. poorer pay, conditions and status) after the full implications of the ‘fourth industrial revolution’ unfold? By asking such questions, we must not only pragmatically address present-day ‘grand challenges’ (Ferraro, Etzion & Gehman, 2015) but also imagine what grand challenges await in the future. This, of course, requires informed speculation, which is unfortunately deemed methodologically unsound in the social sciences, not to mention organizational research (Gümüsay & Reinecke, 2022). However, if we hope to design organizational alternatives that resist the roboticization of living labour, then we must begin the process of theorizing better and more just futures of work now, by mapping the risks that potentially lie ahead.

Conclusion

We concur with an emergent stream of research that the chances of AI and advanced cybernetics completely replacing the human workforce have been grossly exaggerated. As in our empirical setting, semi-automation is a more realistic scenario. But this will not necessarily result in a symbiotic unity between human and machine as proponents of ‘conjoined agency’ and ‘augmented automation’ predict. While train drivers enjoy high levels of skill, prestige and income, our study shows that even high-status operators may feel at odds with the smart machines they work with, producing various unintended consequences. More specifically, workers are compelled to mirror – in terms of bodily movements, cognition and increasingly their emotional comportment – the technology with which they interface.

Train drivers are not alone. Indeed, our message holds much relevance for critically scrutinizing semi-automating AI innovations across a wide range of organizations. In short, the real danger is not that robots will steal our jobs but that working with them will radically alter what it means to be labouring human beings. We risk becoming more like them rather than their becoming more like us. We hope that this study will place these troubling developments at the centre of coming debates regarding new-generation technologies in contemporary organizations, perhaps including our own.

Footnotes

Acknowledgements

The authors would like to thank Professor André Spicer, Professor Bobby Banerjee, Dr Daisy Chung, Professor Jean-Pascal Gond, Dr Jukka Rintamäki, Professor Amit Nigam, The Centre for Responsible Enterprise (ETHOS) and The Sussex Business School Write Club. All played a part in giving helpful feedback and suggestions. We also appreciate the guidance and support of Professor Renate Meyer, Professor Paolo Quattrone and Professor Tammar Zilber and our three anonymous reviewers. Finally, we would also like to thank the many train drivers and members of the UK rail industry who contributed to this research.

Funding

The authors received no financial support for the research, authorship and/or publication of this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.