Abstract

This paper argues for a criminology of the future. This matters at a time when the accelerating use of technologically-supported and digitally-enhanced (techno-digital) policing methods outpaces our ability to take stock of their social and criminal justice effects. Criminology and policing studies have been swift to address the organisational and operational complexities of techno-digital transformations, and have raised critical questions of the politico-ethical implications of this qualitatively different paradigm of policing. However, this scholarship remains marginal to, and is eclipsed by, futures-facing technoscientific research agendas which continually bring the future into being through practices of building, inventing, designing and experimenting. Criminology steps lightly, if at all, into the future. Trapped by the conventions of retrospective analyses, the discipline has difficulty engaging with uncertainty and the unknown, and is reluctant to speculate on worlds-to-come. This paper works towards a criminology of the future, and does so by, firstly, drawing on Jasanoff’s notion of sociotechnical imaginaries to unpack the strategic, forward-looking discourse of contemporary techno-digital policing; and, secondly, using science fiction – specifically cyberpunk cinema – as an analytical tool for probing the possible futures of today’s techno-digital investments. The speculative fictions of cyberpunk films can guide, warn against, anticipate and inspire innovative frames of reference which not only raise difficult and incisive questions about the transformative complexities of techno-digital innovation, but also bring criminology into productive alliance with the sub-disciplinary fields of futures, cultural, film, policing and science and technology studies.

Introduction: A retrospective criminology

21st century policing is underpinned by an infrastructure of technological and digital innovations which have transformed how civil society is policed, surveilled, visualised, protected and rendered safe. From automated facial recognition technology, to machine-learning and crime-mapping tools, to the use of bots and Big Data, technologically-supported and digitally-enhanced solutions to law enforcement, counter-terrorism, search and rescue, organised crime, cybersecurity, intelligence-gathering, traffic flow and the surveillance of inter/national mobilities are assembled into what Holmes (2004) describes as an ‘imperial infrastructure’ of security. Drones, satellites, sensors, radio masts, locational tracking devices, apps, biometrics and scanners, ‘thick with network connectivity’ (Holmes, 2004: 2), not only forge a novel landscape of techno-digital securitisation, but also recalibrate conventional lines of democratic accountability and policing-public relations based on trust, consent, fairness, transparency and legitimacy.

Academic criminology and policing studies have been swift to address the organisational and operational complexities of techno-digital transformations, and have raised critical questions of the regulatory and ethical implications of this qualitatively different paradigm of policing (Coldren et al., 2013; Henry and Smith, 2007). In practical terms, studies have exposed a roll-out programme hampered by inadequate funding, skills development, technical support and infrastructural investment, alongside poorly-planned implementation, uncoordinated delivery schedules and lack of knowledge transfer between forces and non-policing partners (Ferguson, 2017; Marciniak, 2021; Ratcliffe et al., 2019; Tanner and Meyer, 2015). In terms of governance, commitments to ensure accountability, transparency and fairness in data-driven processes have not materialised in a way which secures and maintains public consent and trust in a continually changing techno-digital landscape. Moreover, the absence of a centralised ethical framework for data governance, the lack of agreement on core principles and protocols for data retention and analytics, the lack of clarity about lines of redress in case of complaints and malpractice, and the failure to establish an independent body with external oversight of governance issues are viewed as inherently problematic for policing legitimacy, the protection of privacy and the preservation of justice (Bennett Moses and Chan, 2018; Brayne and Christin, 2021; Ridgeway, 2018; Westendorf, 2022).

Taking a ‘digital turn in criminology’ (Smith et al., 2017: 263) has ushered in a novel conceptual vocabulary which, amongst other things, foregrounds the notion of ‘datafication’. First coined by Mayer-Schoenberger and Cukier (2013), ‘datafication’ involves more than the conversion of numerical, symbolic, behavioural and visuo-discursive phenomena into digital form; this, they argue, is to confuse digitisation with datafication, a process which makes digital records indexable, searchable and analysable, and converts people, events and actions into data-points. In their review of ‘Big Data’ analytics, these authors acknowledge how datafication embeds knowing in architectures of computation and networks of data capture, generating continuous flows of data (knowledge) which energise and feed into systems of surveillance, intelligence-gathering, information-sharing, investigation, crime control, decision-making, strategic planning and the anticipatory logics of predictive, precautionary modelling (Bennett Moses and Chan, 2018; Chan et al., 2022; Fussey and Sandhu, 2022; Lavorgna and Ugwudike, 2021; Lyon, 2014; Marciniak, 2021). As Mejias and Couldry (2019) note, datafication represents ‘a new form of extractivism’ (p. 7), a ‘view from nowhere’ rather than a ‘view from somewhere’ (Chan et al., 2022) which not only reproduces neo-liberal (and neo-colonial) political rationalities based on consumer- and market-led solutions (O’Malley and Smith, 2022), but also creates novel modes of governmentality which entrench and confound existing injustices and exclusions (Aradau and Blanke, 2017; Reeves and Packer, 2013; van Dijck, 2014).

Unfolding through a mixed economy of multi-sited, cross-sectoral, digitally-networked partnerships (Crawford et al., 2005; Jones and Newburn, 2006 – see also Special Issue on Visibilities and New Models of Policing, Surveillance and Society 17[3-4]), datafication – and attendant processes of real-time data-generation and Big Data analytics – has been introduced without (or with little) regulation or oversight of data-sharing practices, and with inadequate legal protections to guard against the commercialisation of knowledge, the violation of privacy and the encroachment of private companies into discretionary decision-making spaces, as well as their empowerment to control investment into new technologies (Bennett Moses and Chan, 2018; Burrell, 2016; Dencik et al., 2018; Hannah-Moffat, 2018; O’Grady, 2021; Ugwudike, 2020). At the same time, to make full use of techno-digital innovations, public policing embraces new forms and sources of expertise, evidenced by the expansion of specialist civilian units, such as intelligence analysts (Sheptycki, 2004), the outsourcing of digital labour to the private sector and the involvement of the public through participatory initiatives such as crowdsourcing and WhatsApp neighbourhood crime prevention schemes (Mols and Pridmore, 2019; Spiller and L’Hoiry, 2019a, 2019b). Thus, a myriad of ‘always-on’, interoperable socio-digital circuits, interfaces and platforms capture the minutiae of millions upon millions of credit card transactions, text messages, web profiles and dashcam footage; given ‘the ubiquitous quantification of social life’ (Baack, 2015: 2), disparate and variegated constituencies of interest are overtly and surreptitiously enrolled and embroiled into the task of policing through and by ‘the datafication of everything’ (Mayer-Schoenberger and Cukier, 2013: 94). In a techno-digital world it is difficult to know where the authority and responsibility for policing resides.

For all this, the burgeoning critical scholarship on techno-digital policing is overshadowed and outnumbered by an interdisciplinary research agenda which adopts an altogether more optimistic and positive tone. In their timely and wide-reaching review of the extant academic literature, Lavorgna and Ugwudike (2021) identify three pivotal frames of analysis which map across optimistic, neutral and oppositional political orientations to data-driven technologies. It is with some concern that they expose the prevalence – indeed, the dominance – of optimistic narratives which not only valorise the (assumed) objectivity, efficiency, reliability and utility of automated models of law enforcement and crime control, but also buy into the panacean claims of techno-digital approaches ‘despite well-documented concerns about data harms . . . . and regardless of their accompanying negative impacts’ (Lavorgna and Ugwudike, 2021: 12). While much of this optimism reflects the preponderance of computer science and engineering publications in this particular dataset (62% of reviewed articles), it speaks to broader questions of how an optimistic orientation is articulated, enacted and maintained; where does techno-digital optimism circulate and take hold; who is leading, participating in, adding to and aligning with this outlook; what are the terms and conditions of engagement with it; and how are the complexities, contradictions and challenges of advanced technologies presented, mitigated and managed.

To respond to these questions, the paper makes use of Jasanoff’s (2001) concept of ‘sociotechnical imaginaries’ – see also: Jasanoff (2004, 2005, 2015), Jasanoff and Kim (2009, 2015) and Special Issue on Sociotechnical Imaginaries, Social Studies of Science, 50(4). Jasanoff (2015) defines sociotechnical imaginaries as ‘collectively held, institutionally stabilised, and publicly performed visions of desirable futures, animated by shared understandings of forms of social life and social order attainable through, and supportive of, advances in science and technology’ (p. 4). Imaginaries, in this context, are not fantasies, modes of escape, an aesthetic preference or mere contemplation, but, as Appadurai (1990: 5) puts it, are a kind of labour, an organised field of social, cultural and political practices and a site of negotiation. Moreover, sociotechnical imaginaries are not policy agendas; they are less explicit, less issue-based, less goal-directed, less instrumental and less politically accountable than a policy programme (Jasanoff and Kim, 2009). Equally, they do not amount to a theory based on formal propositions, nor do they constitute a master narrative (Lyotard, 1984) grounded in historical memory, or accumulated knowledge. Rather, sociotechnical imaginaries are futuristic, flexible, contested, open-ended ‘visions of what is good, desirable and worth attaining for a political community; they articulate feasible futures’ (Jasanoff and Kim, 2009: 123). However, as Jasanoff (2015: 4) reminds us, this description privileges desirable futures, and not only buys into an optimism about technological invention and progress which is not always shared, but also glosses over alternative imaginaries which project a more sceptical, negative and resistant outlook. 
Sociotechnical imaginaries are not, then, inherently utopic or dystopic, but are situated imaginings of possible futures which reflect ethico-political standpoints and socio-cultural positionalities, and are always-already contestable. As heuristic frameworks which transport us to future worlds and open up new vistas of dispute, sociotechnical imaginaries bring an innovative temporal dynamic to the criminological table.

Criminology, as is the case for the social sciences more generally, steps lightly (if at all) into the future, has difficulty engaging with uncertainty and the unknown, and is reluctant to speculate on worlds-to-come (Selin, 2008). Rather, criminology and policing studies are wedded to a retrospective critique of events, processes and practices which have already occurred – or, at best, are contemporarily taking place. To be sure, criminological analyses of techno-digital policing – as set out above – look to the future in terms of policy recommendations and/or suggestions for further research. However, these are presented either as correctives to the negative outcomes and unintended consequences of extant policies, or as supplements to fill the gaps in our current knowledge. What is missing is a willingness to (creatively, imaginatively and speculatively) discover, invent or propose possible, probable or preferable futures, where the future(s) of techno-digital policing are the temporal point of departure. In short, the discipline is reluctant to engage in ‘transformative worldmaking’ (Bina et al., 2020: 1).

For Adam (2008), the future ‘emerges as a domain of possibility, as a realm of pure potential, which we influence, co-produce and realise in and for the present’ (p. 113). Where futures were once the province of prophets, gods and oracles who foretold of worlds to come (Adam and Groves, 2007: Chapter 2), in today’s secular societies, the future is not merely imagined, but is actively made (and unmade) through practices of building, inventing, designing and experimenting. Futures are argued for by a disparate and heterogeneous community of ‘experts’; inter alia, internet service providers, software developers, security consultants, cryptographers, computer scientists, business analysts and police strategists, in diverse and contradictory ways, coax the (a) techno-digital future into being. Whether and how criminology engages with and contributes to this ‘expert community’, or if the discipline is willing to do so, is a moot point and is a question which this paper seeks to explore.

In the next section I unpack the future(s) of techno-digital policing through the prism of UK police strategy documents, regarded here as the discursive expression of a sociotechnical imaginary. In so doing, I explore how UK policing – and other police jurisdictions – invests in and (enthusiastically) embraces the promise of nascent technologies, not only envisaging a future of enhanced, sustainable police efficiency and effectiveness and enriched police-public relationships, but also normalising a panoply of techno-digital possibilities. Of key interest is how this imaginary is embedded within, buttressed and promoted by a ‘technoepistemic network’ (Ballo, 2015: 10) of experts who not only shape and lead a particular vision of the future, but also exclude and marginalise the critical voices of the criminological academy and the dissenting views of a sceptical public. This lays the important groundwork for mapping out an entry point for a future-facing criminological analysis. More specifically, the paper acknowledges cyberpunk cinema – a sub-genre of science fiction – as a pivotal interlocutor in the imagination and exploration of alternative futures, and as a culturally-embedded and valuable medium for socio-temporal analysis. By framing cyberpunk films as an analytical tool for not only questioning the techno-optimism of policing’s strategic ambitions, but also probing the entanglements of social transformation and techno-digital innovation, the complex sociotechnical imaginaries of futuristic techno-digital worlds come into view and bring criminology into productive alliance with the sub-disciplinary fields of futures, cultural, film, policing, science and technology and post/transhumanist studies.

Sociotechnical imaginaries of techno-digital policing

In England and Wales, the National Policing Digital Strategy 2020–2030, launched in Manchester at the Police Digital Summit 2020, sets out a policing futures framework of emerging and embryonic technologies, at different stages of development and design, in support of ‘five key digital ambitions’ (Police Digital Service/National Police Technology Council [PDS/NPTC], 2020: 6) which seek to: provide seamless, digitally-enabled experiences to enhance police-citizen interactions; promote the proactive targeting of risk and early interventions in the physical and digital worlds; equip officers and leaders with the knowledge, skills and tools to optimise digital ways of working; embed a whole public system approach through data-sharing; and explore new ways in which digital technologies can empower the private sector to share responsibilities for public safety where it is appropriate and ethical. Similar strategy documents, responsive to an accelerated demand for critical digital capabilities, and committed to ‘next generation technologies’ and ‘game-changing resources’, are now commonplace across numerous jurisdictions including, for example, South Africa, Canada, Australia and New Zealand, and are the topic of focused discussions/learning workshops at international policing events, such as the Pearls in Policing Annual Conferences.

To be sure, policing services face novel and, some would say, intractable challenges, operating in a complex landscape of cyber-inspired, digitally-enabled offences which have reconfigured how criminality is enacted, experienced, organised, distributed, detected, investigated and controlled. Cyber-criminologists have been prolific in exposing how variegated forms of online abuse and virtual violences, identity thefts, scamming and phishing frauds, computer hacking and trades in illicit, unsafe and dangerous goods, open up and expand the criminogenic and victimogenic propensities of digital technologies and media (see, for example, Jaishankar, 2011; Jewkes and Yar, 2010; Wall, 2001, 2007; and the International Journal of Cyber Criminology [since 2007]). Equally, policing authorities acknowledge the scale of the problem, noting that ‘more than 90% of reported crime now has a digital element’ (PDS/NPTC, 2020: 3), with estimates that only 10% of digitally-generated crime is actually reported (Jewkes, 2010: 526). From this vantage point, it is little wonder that chief officers, police and crime commissioners, policing strategists and senior police management regard a techno-digital future as not only desirable but necessary. As the National Policing Digital Strategy (NPDS) states: ‘Policing is at a critical juncture. We either improve how we harness digital opportunities from existing and emerging technologies, or we are at risk of becoming overwhelmed by the demand they create’ (PDS/NPTC, 2020: 1).

In this sense, policing’s public articulation of ‘digital ambitions’ not only contributes to, strengthens and reproduces the optimistic discourse of the computer scientists, engineers and allied specialists such as software developers, data scientists, statisticians and mathematicians, but also expresses a sociotechnical imaginary in which techno-digital innovations will ‘come to the rescue’ (Smallman, 2018). Consulting on UK policing’s digital journey, Deloitte (2015: 3) spells out who else shares this particular vision of the future – utility companies, global entertainment corporations, self-service channels, consumer goods developers, insurance and financial services and the telecommunications sector – with the added promise that by joining this visionary community ‘police forces can transform the delivery of their services . . . . use their resources more intelligently, target criminal activity more proactively, and deliver a faster, more targeted response through real-time information-sharing and effective decision-making’ (Deloitte, 2015: 3). This establishes what Smallman (2020: 591) refers to as the ‘soft ties’ of shared norms, priorities, preferences and values which not only bring disparate constituencies of interest together, but also enrol them into a collectively held, networked and publicly articulated vision of what is good, desirable and worth striving for.

Building alliances with this robust company does not, in itself, make policing authorities ‘experts’, or grant them an expertise in techno-digital matters, especially those predicated on ‘the science’ which underpins the design, operability, application and capacity of emerging and advanced technologies. At best, policing occupies a nodal space within an ‘elite’ grouping (Smallman, 2020) which includes, on the one hand, a cross-sectoral spectrum of policy-makers, strategists, consultants and executives; but which is led, on the other, by the ‘experts’ – that is, the inventors, designers, engineers and technicians of the STEM industries. In Ballo’s (2015) words, this fluid coalition of (often) competing political agendas, commercial interests and cultural orientations nonetheless forms a heterogeneous ‘technoepistemic network’ (p. 10) of experts (and quasi-experts) able to inject their priorities and ideas into particular visions of the future, assess the risks and affordances of new and emerging technologies and gatekeep questions of regulation and governance (Hurlbut, 2015; Lavorgna and Ugwudike, 2021). Normative concerns about what is at stake in social, legal, political and ethical terms are regarded as ‘epiphenomenal’ (Hurlbut, 2015); as Smallman (2020) notes: ‘The uncertainties of science (can) be overcome with more science, and the social and ethical issues relating to science (are) spoken of as epiphenomena that (can) be separated from science itself and then minimized’ (p. 592). What is hinted at here is not so much the autonomy and purity of technoscience, but its claim to be ‘the institution most capable of governing technological emergence’ (Hurlbut, 2015: 129, original emphasis). As such, STEM thinking and discourse not only pronounces on what counts as a ‘matter of concern’ (Latour, 2005), and where such matters figure on a putative scale of importance, but also constructs and constrains who can meaningfully deliberate the efficacy of emerging technologies and how they serve the public good. Consider, for example, the UK’s Sciencewise public engagement programme, a series of dialogue events which bring together selected groups of citizens to discuss technoscientific innovations, with the aim of informing government policy. Reporting on the public dialogue on the ethics of data science, Sciencewise (2016) points out that: ‘Clearly, many members of the public are not as well-versed in data science as those working in the field and without accessible explanations of how data science works (e.g. why, in some circumstance a correlation between two sets of non-causal data could still be a useful tool for understanding) they are liable to pull data science projects apart and raise doubts about the value of using data science over other approaches’ (p. 30).

For a programme designed to elicit and take account of public views, concerns and aspirations for techno-digital investments, it works – at least in this example – to dismiss and discredit particular viewpoints which militate against, challenge or do not conform to the preferred sociotechnical imaginary (Macnaghten and Guivant, 2010; Smallman, 2020; Welsh and Wynne, 2013).

For some time, governments across the world have acknowledged the importance of taking seriously the ethical, legal and social implications (ELSI) of advances in science and technology, and require the completion of ELSI research to secure funding for new innovations and technologies, especially those concerned with nanotechnology and genomics (Hurlbut, 2015; Macnaghten et al., 2005; Selin, 2008; Smallman, 2020). On the face of it, then, a governmental imperative to incorporate social scientific knowledge into STEM research and development makes it a central component of innovative technoscientific endeavour. Yet within the narrower field of techno-digital policing studies, there is growing evidence that criminological concepts and ideas are poorly understood (Lavorgna and Ugwudike, 2020), ignored in practice (Bennett Moses and Chan, 2018) or omitted altogether from key policy and strategy documents (Westendorf, 2022). Perhaps this is to be expected given that the substantive and critical issues raised by criminological research are always-already cast into the marginalised domain of the epiphenomenal. However, some argue that we are entering a post-ELSI phase, and to be ‘more than a box-ticking exercise’ (Balmer et al., 2016: 73) social science effort in general, and criminology in particular, should aim to be more collaborative, co-productive and ‘neighbourly’, more willing to take risks, be less opaque and esoteric and more prepared to open up discussions on goals which are not shared (Balmer et al., 2016; Fitzgerald and Callard, 2014). Such an approach is commendable and is certainly preferable to shouting from the sidelines or withdrawing from the field altogether.
However, it leaves unaddressed how technoscientific and techno-digital research and innovation is not only riven with experimentation, uncertainties and failures to launch, but also moves at such a speed that deliberations about ethical, legal and social regulation are invariably impossible ahead of its commercial or industrial application (Selin, 2008). At the time of writing, over one thousand technoscience leaders, researchers and entrepreneurs, including Elon Musk, Steve Wozniak and Yoshua Bengio, have called for a moratorium on the further development of artificial intelligence (AI), citing ‘profound risks to society and humanity’ (Metz and Schmidt, 2023). In an open letter to the Future of Life Institute, they argued that AI developers are ‘locked in an out-of-control race to develop and deploy ever more powerful digital minds that no one – not even their creators – can understand, predict or reliably control’.

Perhaps now, we have reached a point when the imagined future(s) of techno-digital policing has become less desirable, less expressive of the public good and more fluid, uncertain and unknown than has hitherto been the case. Put another way, though AI is only one element of the techno-digital landscape, the demand for a temporary pause on advanced AI design brings the future of technoscience into view as an object of dispute, discussion, analysis and re-assessment. Here, then, is a moment when the future is being remade, reimagined and adjusted to accommodate present concerns of the Armageddon to come. In this ‘disruptive context’ (Inayatullah, 2008: 4), the putative boundaries between critical and optimistic orientations collapse, and open up a hybridised space for negotiation and collaboration between marginal (social scientific and public) and central (technoscientific and sectoral) interests. In such a hiatus, the future is up for grabs and different imaginaries of techno-digital policing can be forged, argued for and debated. In the remainder of the paper, I step into the future through the medium of science fiction cinema, specifically the sub-genre of cyberpunk film. Cyberpunk worlds are dirty, gritty, violent and dystopian. Signalling a distinct break from the (largely) techno-utopian dreams and conventions of science fiction cinema (Bina et al., 2020; Elyamany, 2023), contemporary cyberpunk movies chronicle the dystopic ecologies of urban societies transformed by a heterogeneity of advanced technologies. In these futures, the figure of the cyborg (and related humanoid forms) looms large and is central to how cyberpunk milieus are policed, surveilled and controlled.
In the analysis which follows, I introduce the notion of ‘embodied singularity’ to capture the self-sufficiency and operational detachment of cyborgian protagonists, and map out the contours of alternative sociotechnical imaginaries which, on the one hand, embrace innovative ways of re-imagining techno-digital police labour, but on the other, signal an unexpected shift in the socio-cultural dynamics of police organisational practices.

Techno-digital policing and speculative fictions

Science fiction . . . gets eyeballs. It gets widely read. In cinema it is an eminently popular genre that shapes the public imagination. It is powerful. Everyone knows science fiction (Lombardo and Ramos, 2015: 3).

Science fiction (SF) pervades contemporary culture in the form of novels, concept art, comic books, Japanese manga, animations, toys, video games, television drama and films, and is the most visible, commonly experienced and accessible mode of futuristic thinking. The storytelling, visualisation, characterisations, dialogue, aesthetics, music and dramaturgy of SF movies render the future meaningful, graspable and possible and are central to the communication and dissemination of the imagined (in)securities of worlds-to-come. SF cinema is not based on fantastical-thinking, or on truth-claims, but is an ‘epic vision of our present social reality’ (de Lauretis, 1980: 170). Indeed, some regard SF as the paradigmatic vehicle for engendering reflexive, provocative and prophetic cultural narratives which not only articulate a critical commentary on the possible futures of actually-existing technologies, but also signal, inspire and warn of the politics and ethics of their realisation (Bould, 2012; Lombardo, 2006; Roberts, 2016). SF cinema, then, sharpens and shapes collective imaginations, crystallises our hopes and fears for the futures of techno-digital securitisation and has ‘massive textual presence’ (Stone, 1991: 95) within a vibrant cultural public sphere, finding expression as much through technical reports, doctoral theses, patent applications, hardware designs, conference papers and strategy documents, as it does through film productions. In sum, SF cinema creates multiple spaces of encounter for navigating the tension between the speculative fictions of future worlds and the empirical realities of the present; as Bina et al. (2017) note ‘(science fiction) offers a wealth of “scenario-like” material . . . and provides an excellent service to decision makers engaged in prioritizing challenges and the research of solutions, by elaborating on the question of “what if”’ (p. 180).

Cyberpunk – sometimes known as neon-noir – is a sub-genre of SF, characterised by its dystopian futuristic settings and its ‘combination of low-life and high-tech’ (Sterling, 2016: 5). While the term ‘cyberpunk’ is usually attributed to Bruce Bethke’s (1983) short story of the same name, this sub-genre gained traction and popularity primarily through the novels of William Gibson, Bruce Sterling, Lewis Shiner and Pat Cadigan (Featherstone and Burrows, 1995), and later through a myriad of blockbuster films including Blade Runner (1982), The Terminator (1984), Total Recall (1990/2012), The Matrix Trilogy (1999, 2003a, 2003b), Minority Report (2002), Robocop (1987/2014), Ghost in the Shell (1995/2017) and Blade Runner 2049 (2017) (McFarlane et al., 2019). Many acknowledge that cyberpunk has become an important resource for social and cultural theory (Collie, 2011; Featherstone and Burrows, 1995), and cyberpunk cinema, in particular, generates a wealth of narrative, visual, performative and discursive data for experiencing, engaging with and exploring the futures of techno-digital policing environments through the prism of present matters of concern.

Cyberpunk scholars and enthusiasts draw attention to several tropes which characterise the sub-genre – an aesthetics of urban detritus and decay; the rise of mega-corporations and the concomitant weakening of legal-administrative, democratic government; dehumanised, alienated and stratified populations; the pervasiveness of centralised, asymmetrical surveillance; the absence of poetry, nature and beauty; the withering away of collective life; and the banality of violence, corruption, conflict and abuse (Bina et al., 2017; Collie, 2011; Gillis, 2005; Kellner, 2003; Lavigne, 2013; McFarlane et al., 2019). These themes serve as organising frameworks across a suite of different ‘imaginaries’ – such as ‘urban imaginaries’ (Bina et al., 2020), ‘aesthetic imaginaries’ (Jacobson, 2016), ‘legal imaginaries’ (Tranter, 2011; Travis and Tranter, 2014), ‘gendered imaginaries’ (Gillis, 2007; Hellstrand, 2017) and ‘politico-economic imaginaries’ (Alphin, 2021) – and provide an important cultural springboard for futures-thinking across several areas of interest. Here, though, I focus on the sociotechnical imaginaries of techno-digital policing, working through the post/transhumanist futures of operational officers to cast a spotlight on the transformative effects of techno-digitally enhanced ‘police bodies’. This positions the analysis within the theoretical terrain of post/transhumanist scholarship and its interrogation of the complex entanglements of the corporeal and the technological, the human and nonhuman, mind and matter.

Central to the post/transhumanist prospectus and, indeed, a pivotal character in cyberpunk narratives is the figure of the cyborg, and related humanoids such as the replicant, android, metamorph, clone and robot. These bio-engineered entities represent the fusion of the biological and the mechanical, invariably designed to restore, enhance and/or replicate human capabilities. Goicoechea (2008) rather eloquently writes that the cyborgian being is: (A) malleable material of amazing plasticity, penetrated by all sorts of instruments and substances. The shapeless batch of human parts seems to reach perfection only through its union with the machine, growing dangerously dependent on all its technological extensions, and letting its structure, through successive operations, to be slowly invaded by inorganic elements (p. 2).

In cyberpunk storyworlds, cyborgs/humanoids are more than cinematic props or a series of special effects; rather, they occupy the centre stage of the drama and are the conditions of possibility for plot development and narrative action. Though sometimes cast as the villain (The Terminator, 1984), the helper (I, Robot, 2004) or the dispatcher (The Machine, 2013), it is as the main protagonist that the cyborg/humanoid character speaks directly to the futures of not only techno-digital policing but also what it means to be a techno-digital police officer. As a complex entanglement of organic and inorganic materialities, the cyborgised police body is the symbiosis of human and machine – a technologically-sophisticated, digitally-networked, somatically-enriched, self-contained crime-fighting unit. I introduce the notion of ‘embodied singularity’ to capture this complexity, and to cast a spotlight on the sociotechnical imaginary of the ‘techno-digital cop’ of the future. Recasting the operational officer as an embodied singularity prompts a reworking and renegotiation of deep-seated philosophical and ethical questions about, for example, what it means to be human in an object-oriented world; who/what has the capacity and right to exercise free will, agency and autonomy; how do we make sense of the ‘cyberpunk police officer’ as a subjectivity, an identity and as a socio-legal figure of authority; and how far can modes of techno-digital securitisation deliver fairness, trust, consent and accountability in policing. In other words, in the speculative fictions of cyberpunk, the injustices, violences and inequalities of techno-digital policing are not marginal issues to be retrospectively debated, but are front and centre of lived experience and in the vanguard of routine law enforcement.
No longer confined to the realms of the epiphenomenal, ethico-political (and socio-cultural) matters of concern acquire form and content, and are co-emergent with, and mutually implicated in, the warp and weft of everyday life. In the remainder of the paper, I use the tensions of post/transhumanist futures as a lens for working through these issues, and focus on three cybernetic protagonists, RoboCop, Major and K, each of whom, in different ways, encapsulates the variegated contours of techno-digital policing in an embodied singularity.

Two cyborgs and a replicant

Set in 2028, RoboCop (2014) follows a Detroit detective, Alex Murphy (Joel Kinnaman), who is critically injured in a car bomb explosion orchestrated by crime boss Antoine Vallon (Patrick Garrow), as revenge for Murphy’s zealous investigation of his gunrunning activities. Murphy’s reputation as a grounded, stable, family man makes him a suitable candidate for OmniCorp’s ambition to develop a cyborg police officer as an extension of their globally successful deployment of robotic peacekeepers in militarised/war-torn regions. Though Murphy’s injuries are extensive and only his head (minus parts of his brain), lungs, heart and right hand are salvageable, with the written consent of his wife, Clara (Abbie Cornish), the inventive bio-engineer/scientist, Dr Dennett Norton (Gary Oldman), commits to an experimental and ground-breaking programme of advanced cybernetics, to rebuild Murphy and transform him into the world’s first operational cyborg, RoboCop. At the first simulation field test, much to the annoyance of OmniCorp’s CEO, Raymond Sellars (Michael Keaton), RoboCop displays hesitation, fear, empathy, an over-cautious sensibility and a heightened awareness of the dangers and risks his actions pose to bystanding others, all human propensities which dilute OmniCorp’s ideal of crime-fighting efficiency. For Sellars, this ideal only requires the semblance of RoboCop’s autonomy, not its actuality; as Rizov (2023) argues: ‘Discretion in policing appears to be a worthwhile façade for OmniCorp, but a nuisance in practice’ (p. 102). Norton’s solution is to alter RoboCop’s programming so that it overrides and bypasses his human judgement; at the next field test, he explains the reboot to Sellars thus (Figures 1 and 2).

When he engages in battle, the visor comes down and the software takes over, then the machine does everything. Alex is a passenger, just along for the ride . . . . . When the machine fights, the system releases signals into Alex’s brain making him think he’s doing what our computers are actually doing. I mean, Alex believes right now he is in control, but he’s not. It’s the illusion of free will.

RoboCop. Still taken from RoboCop (Director: José Padilha. MGM/Columbia Pictures, 2014).

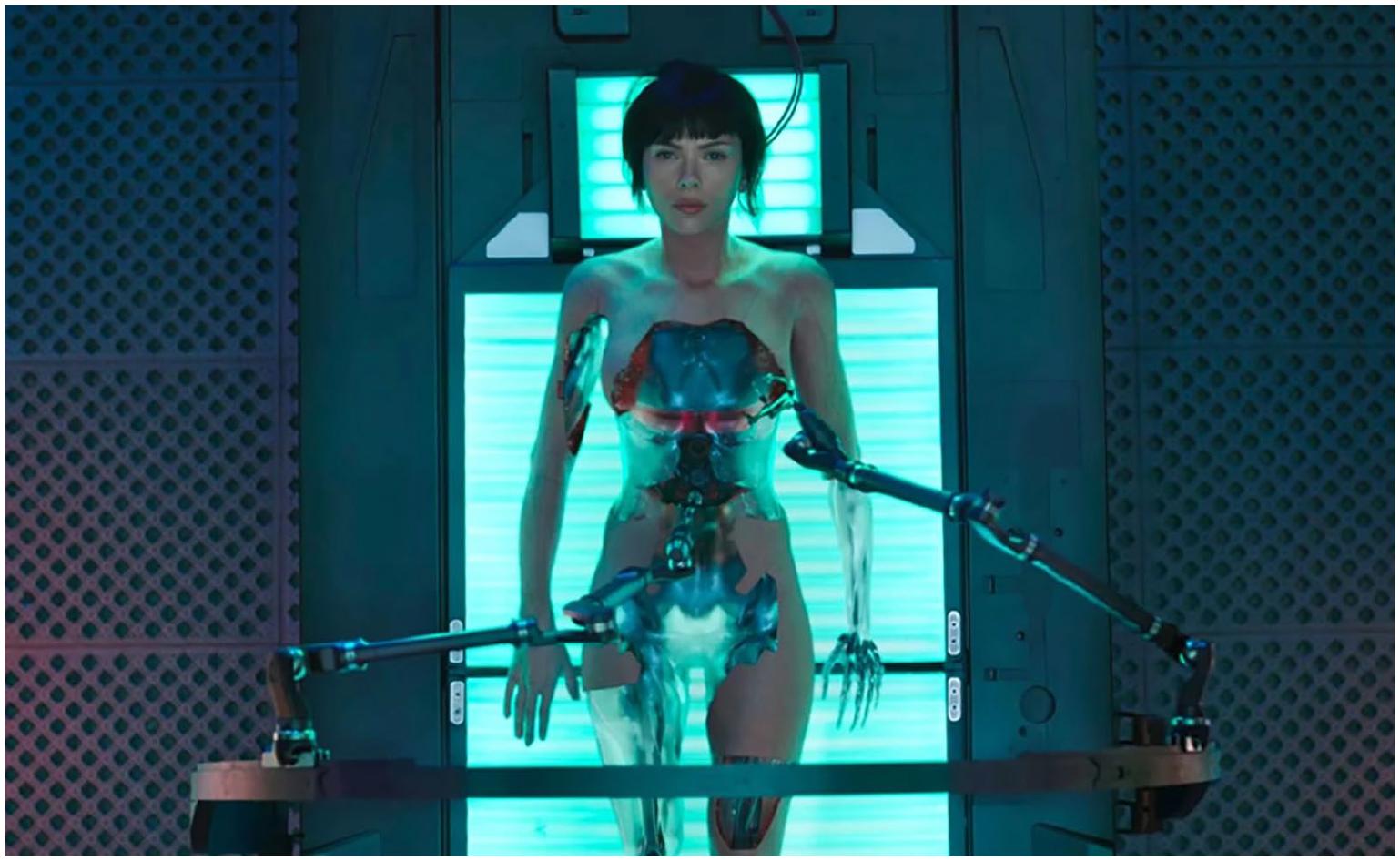

Major Mira Killian. Still taken from Ghost in the Shell (Director: Rupert Sanders. Paramount Pictures, 2017).

K (KD6-3.7). Still taken from Blade Runner 2049 (Director: Denis Villeneuve. Warner Brothers/Sony Pictures, 2017).

Managing the tension between nonhuman automation and human autonomy is a recurring theme across numerous films. For example, Ghost in the Shell’s (2017) protagonist cyborg, Major Mira Killian (Scarlett Johansson), though valued for her human attributes of care, intuition and imagination, is routinely monitored to ensure that any memories of her pre-cyborg life are blocked and erased. Indeed, the film’s narrative arc follows Major as she tries to make sense of, and distinguish between, intermittent hallucinations, implanted false memories, the effects of being ‘ghost-hacked’ (Lovins, 2019: 27) by the film’s antagonist, Kuze (Michael Carmen Pitt), and authentic memories of her human past – all of which are quickly explained away by Major’s designer, Dr Ouelet (Juliette Binoche), as ‘glitches in the code . . . sensory echoes from your mind’s shadows’ which must be permanently deleted from Major’s digital archive. Memory in Ghost in the Shell, rather than free will, emotion and empathy, is foregrounded as the key marker of being human and must be eliminated not only to preserve the efficient functioning of the mega-city’s cybersecurity ecosystem, but also to avoid exposure of the nefarious provenance of Hanka Robotics’ experimental cyborg programme. 15 With Major’s memory re-described as nothing more than cloud storage, Ouelet muses, by way of gentle reassurance: ‘We cling to memories as if they define us, but what we do defines us’.

Blade Runner 2049 unfolds in an advanced techno-digital world devastated by environmental collapse and mass species extinction, inhabited by a subsistent, deracinated human population reliant on the slave labour of bioengineered androids (replicants). KD6-3.7 (Ryan Gosling) – K for short – is a Nexus-9 series replicant blade runner employed by the Los Angeles Police Department (LAPD) to hunt down and ‘retire’ (kill) Nexus-8 models which had been declared illegal in 2022 for their rebellious, anti-authority predilections. After each hunt, K is required to take a Post-Traumatic Baseline Test (PTBT) which measures and records his bodily reactions – such as eye movements, pupil dilation, heart rate, respiration and flushing of the face – to a series of emotionally provocative questions. This bizarre interrogative exchange, with its strange script, 16 is designed to confirm his status as a replicant; that is, as a nonhuman entity who is obedient, compliant, has no self-determination, is incapable of doubt and critical thinking, and has no subjectivity, consciousness or moral compass – in short, K must demonstrate that he has no interiority and does not possess a soul. However, as Rizov (2023) points out, the very existence of the test ‘casts doubt on the Wallace Corporation’s claim that Nexus-9 models are incapable of turning on their masters’ (p. 192). Indeed, as the narrative develops, we encounter K not only failing the baseline test, but also deliberately lying to his (human) police boss, Lieutenant Joshi (Robin Wright). By crossing the threshold into personhood, K consigns himself to imminent ‘retirement’, and the film ends with his death – if, indeed, he had ever been alive – on the steps of the Wallace Corporation’s memory-design facility, Stelline Laboratories.

Through the prism of RoboCop, Major and K, these films set in motion a series of human vs machine oppositions which jostle, collide and usually collapse across the films’ action sequences and plot twists. To be sure, they create important filmic moments for audiences to reflect upon and ruminate on age-old philosophical questions about what makes us human, with free will, emotion, empathy, memory, consciousness and a soul all making an appearance. However, there is more to be gleaned from these moments than clichéd inventories of non/human traits, not least because such inventories do not take us very far down the road of a futures-thinking criminology. An alternative reading suggests that the fragile juxtaposition of people and things, and the entanglements of people as things, and things as people, provide a cinematic allegory of how we might chart a politico-ethical course towards advanced techno-digital futures. Two thematics come into view, and I address each in turn.

Re-imagining police labour

The (re)programming of RoboCop replays familiar debates on the value and merits of police discretion vis-à-vis the statistical abstractions of Big Data analytics. In their nuanced research of intelligence-led policing, Chan et al. (2022) make use of Jasanoff’s (2017) ‘regimes of sight’ framework to differentiate two competing regimes of intelligence-production – the experiential, subjective ‘view from somewhere’ (VFS), and the scientised, objective ‘view from nowhere’ (VFN). These are ideal types, as the authors are swift to acknowledge, but the point here is that, ideal or otherwise, Chan et al. (2022) give credence to a bifurcated landscape of intelligence work in which two separate domains of practice operate independently of each other – a ‘reactive, personalised, contextualised, and case/experience-based’ (p. 3) approach, on the one hand, and a ‘data-driven, depersonalised and decontextualised’ (Chan et al., 2022: 3) approach, on the other. It is a dichotomy which is perfectly mapped by the developmental phases of RoboCop discussed above. However, RoboCop’s evolution did not stop at the fully-automated, hyper-programmed, super-efficient version of the cyborg, and it is in the narrative denouement that the operational potential of RoboCop is revealed.

A third version of RoboCop emerges when he is confronted by his wife, who tells him of their son’s traumatised state of social withdrawal – ‘Alex, you need to come home . . . . You need to speak with your son. . . I know you’re in there’. This scene is overlaid with dialogue spoken by Norton’s assistant, Jae Kim (Aimee Garcia), who observes that RoboCop seems to be ‘overriding the system’s priorities’, initially by re-directing his surveillance energies onto his son’s everyday challenges but going on to access the sealed digital archives of his attempted murder and initiating an investigation of it. In other words, RoboCop 3 regains a human subjectivity which is not only capable of subverting the software and overwriting programmed protocols and directives, but also of instigating alternative plans of action based on different interests and imperatives. As Jones (2015) notes: ‘The film seems finally to suggest that there is an ideal melding of man with machine, and that just this amalgamation is reached at story’s end’ (p. 421). A similar fusing concludes Major’s narrative of self-discovery; despite recovering the memory of her past life as an anti-cyber-enhancement activist, Motoko Kusanagi, Major embraces her new identity as cyborg, reconnects with her mother and achieves a sense of belonging and community. Returning to work as a Section 9 counter-terrorist operative, in the closing sequence, Major offers her personal philosophy: ‘My mind is human. My body is manufactured. I am the first of my kind, but I won’t be the last. My ghost survived to remind the next of us that humanity is our virtue. I know who I am and what I’m here to do’. Of course, these are Hollywood-style ‘feelgood endings’, but they also inspire a rethinking of what might count as a desirable – at least, a promising – future for techno-digital policing.

Suppose we envisage the ‘happy marriage’ of human judgement and algorithmic logic, not in the sense of their co-existence as two separate spheres of knowing – Chan et al.’s (2022) VFS and VFN – but as interconnected, thoroughly enmeshed and interacting modes of everyday policing practice. The National Policing Digital Strategy (NPDS, 2020), discussed above, imagines a future where officers at all levels will be equipped ‘with the right knowledge, skills and tools to deal with increasingly complex crimes’ (p. 8). Moreover, the strategy document talks of developing a ‘digitally literate’ and ‘digitally enabled’ workforce, and of digitising core policing processes so as to remove the need for manual, repetitive and duplicative practices thereby ‘increasing our officers’ and staff’s operational efficiency’ (NPDS, 2020: 8). This is commendable, but as a plan for the future it is couched in the instrumental language of using, harnessing and deploying techno-digital resources as if they were neutral, passive and apolitical tools (Bennett Moses and Chan, 2018; Browning and Arrigo, 2021; Chan et al., 2022; Ferguson, 2017). A more forward-thinking approach might extend the scope of digital literacy to include the capacity to challenge, (re-)interpret and problematise techno-digital systems and their outputs. If we learn anything from RoboCop and Major, it is their human abilities to critique and override their own cybernetic powers which provide the checks and balances required for informed, even-handed decision-making. One route to achieving this capability might be to re-imagine the structure and organisation of police labour. Currently, digitally literate personnel are siloed into specialist, civilianised units and locked into practices of ‘intelligence-hoarding’ (Sheptycki, 2004: 320–321) which stymie more open, interactive and collaborative ways of working. 
One strategy for the future, then, might be to regard the data scientists, intelligence analysts and ICT technicians as policing practitioners rather than non-policing (or civilian) experts in digital technologies, and to embed them as pivotal team members within multiple operational, training and managerial policing milieux. Such a move has the potential to foster an integrated rather than a bifurcated policing workforce, and to encourage critical dialogue, the exchange of ideas and contextualised deliberations informed by both the intuitive, experiential knowledge of operational officers, and the calculative abstractions of computational officers, each providing a corrective for the other. Indeed, the story of K reflects the futility of dichotomised police labour. Trapped in a zero-sum game of disinterested detachment or death, K’s inclination to cross the threshold and blur the boundaries of subjective/objective decision-making is ultimately (and fatally) blocked.

Connectivities and/or collectivities

By focusing on the human/machinic entanglements of techno-digital labour, these cyberpunk films signal the desirability of a balanced and interlinked epistemological approach to decision-making, where the dualism of automated versus autonomous police analytical work is erased. It is a desirability, however, which does not emerge consensually through sober discussion, but is struggled for and fought over, sometimes violently; RoboCop, Major (and K to a certain extent) ‘succeed’ only by working against the police organisation, not with it. This tension serves to warn us of the potential dangers and uncertainties of a cyborgian future. In his perceptive and nuanced reading of RoboCop, Linnemann (2022) argues that: ‘In the posthumanist social science fiction of RoboCop, the flesh-and-blood cop and his titanium armor, cybernetic operating system, futuristic sidearms, and lethal “data spike” are all components of the same machine and, as such, a unitary embodiment of the police power’ (p. 138).

Linnemann (2022) theorises that this power circulates as horror and is always-already embedded in structures and relations of coercion, violence and threat. Police, he argues, are ‘monsters with badges’ (pp. 15–19), equipped with and enhanced by militarised techno-digital resources, and whose troubling familiarity as everyday figures of trust makes them all the more horrifying. Linnemann’s thesis is innovative, insightful and compelling, but I am not convinced that the ‘unitary embodiment’ he identifies in the cyborgised RoboCop is energised exclusively by the power of horror. Counterposing police force and police service is a well-worn debate in policing studies (Bowling et al., 2019; Brodeur, 2010; Stephens and Becker, 1994) with most accepting that ‘(public) policing is a multi-faceted activity not easily reduced to a simple formula’ (Loader, 2020: 2). Given policing’s omnibus mandate, Linnemann’s inclination to read policing practice only through the lens of crime-fighting, order maintenance and surveillance limits the extent to which he acknowledges service-oriented tasks and the alternative dispositions these involve. Here, then, I want to suggest that the embodied singularity of the corporeal and the techno-digital does not exclusively enhance the power of force and violence, but has implications across the policing portfolio of organisational and operational practices, most especially the capacity to work in collective, collegial ways.

The cyborg police officer of the future is not too unlike his/her contemporary counterpart; both are kitted out with an array of gadgets and prosthetics designed to increase efficiency and effectiveness in crime control. While we are some way away from K’s spinner (flying) car with its detachable voice-command drones and ground-penetrating scanners, or RoboCop’s ocular-operated fingerprint processor, or Major’s ability to navigate the internet as though it were a physical space, the image of a police officer bedecked in techno-digital accessories (body cameras, mobile phones, data processing devices, two-way radios), as well as analogue technologies such as taser guns, guns, truncheons and handcuffs, is very recognisable. These operational combos give today’s patrolling officers a certain level of flexibility to engage in proactive work such as vehicle checking and crime analysis (Lum et al., 2017), or to accomplish multiple tasks at the scene(s) of crime, including the inputting of information, co-ordinating the availability of other policing actors, organising additional vehicles, undertaking online fingerprint identification and criminal history searches and preparing and compiling statements and reports (Singh, 2017). But fast-forward into a cyberpunk future and we encounter policing operatives who not only have the flexibility to multi-task in the field, but also seem to work independently of a wider ecology of policing units, departments and personnel. For example, RoboCop’s orbit of action throughout the film moves to and from the cybernetics laboratory, the OmniCorp field simulation training centre and downtown Detroit; it is only his longstanding friendship with his former police partner, Jack Lewis (Michael K Williams), which connects RoboCop to policing as an occupational setting and a socio-cultural milieu. 
Indeed, apart from the film’s opening scenes, RoboCop’s presence inside a police station is limited to his confrontation with the corrupt officers who had betrayed him (as Alex Murphy) to Vallon.

Similarly, Major’s role within the specialist, cyborg-staffed Section 9 counter-terrorist unit positions her at some distance from the variegated hinterland of ‘policing proper’ and there is no glimpse of, or even reference to, other policing actors, sections or contexts at any point in the film. The same can be said of K, whose engagement with the LAPD writ large is limited to a few interactions in the office of his line manager, Lieutenant Joshi; and, more tellingly, his marginality to the Department is signalled when he leaves the precinct building following a PTBT – ‘Fuck off skin job’ is the derogatory comment of an officer who passes him in the corridor. There is, of course, a limited universe of characters and settings in any narrative (Propp, 1968); but we recognise in these protagonists not only the recurring figure of the hero who stands apart from, and seems to transcend, an erstwhile ‘pirate’s crew of losers, hustlers, spin-offs, cast-offs, and lunatics’ (Sterling, 2016: 3), but also the noir underpinnings of the cyberpunk genre. That is to say, the distancing of RoboCop, Major and K from the hubbub of routine policing, even if it is a question of narrative economy, seems to forewarn us of the dangers of aiming for a (semi-)detached, self-sufficient, techno-digitally equipped operational officer working at arm’s length from a heterogeneity of police colleagues.

This matters when strategy documents stress the importance of teamwork and partnerships, strong leadership and effective management, good communication and information-sharing, all couched in the language of integration, connectedness and collaboration (NZP, 2017; RCMP, 2020; SAPS, 2018 – see Footnote 4 for references). As the NPDS (2020) puts it: ‘We should foster a “one-team” mentality – encouraging collaboration across forces and with public sector partners’ (p. 14). Such aims are hardly controversial, but it is unclear how co-operative ways of working can be nurtured and delivered when they sit alongside ambitions to maximise the affordances of techno-digital investments. Consider, for example, how the South African Police Service predicate their digital journey to enhanced communication and collaboration on an increased usage of ‘instant messaging, group messaging (and) online workspaces’ (SAPS, 2018: 6); or how, in setting out their Policing Vision 2025, the UK’s College of Policing valorises a hands-off model of leadership ‘which equips leaders at all levels to meet the challenges of the future and . . . . . allows levels of supervision and checking to be reduced’ (College of Policing, 2016: 9). 17 Aspirations to foster ‘togetherness’ and ‘strong leadership’ in a digitally-enhanced working environment are a little hollow when team-building, management and communication rely exclusively on the remote connectivities of screens, up/downloads, emails, texts, message boards and live streaming. 
Perhaps, then, we are sleepwalking into a future where real-time automation and the shift to digital modes of communication displace both the need and the opportunity for different kinds of on-the-job interactions – from fleeting conversations to briefing meetings, case conferences and training workshops – all of which create the conditions of possibility for social networking, ad hoc supervisory guidance, storytelling and the circulation of experiential, organisational and legal-administrative know-how. Through a myriad of disparate interactions, socio-cultural identities, camaraderies and alliances, a sense of belonging and common cause are forged; while this invites its own critique (see, for example: Chan, 1997; Cockcroft, 2013; Loftus, 2009; Whelan, 2017), a techno-digital future which encourages and promotes individualised, self-reliant, remote practices decentres policing actors from collective goals, detaches them from meaningful deliberation and stymies their ability to form, negotiate and modify a cultural, social, ethical and political consciousness. Drawing from Virilio (1998), Kellner (1999) cautions against a fully-automated society, arguing that: ‘Losing control over its world, the human subject becomes a mere recording device, and the human body is reduced to functions in a technological system’ (p. 117).

Conclusion: Towards a criminology of the future

The use of science fiction cinema as an analytical resource fits easily into the sub-field of popular criminology and its focus on popular cultural media (Kohm and Greenhill, 2011; Rafter, 2006; Rafter and Brown, 2011; Wood, 2019). Yet, there is more on offer here than representational data and its interpretation. Kellner (2003), for example, suggests that ‘cyberpunk science fiction can be read as a sort of social theory’ (p. 299); while Davis (1990) regards the novels of William Gibson as ‘stunning examples of how realist “extrapolative” science fiction can operate as prefigurative social theory’ (p. 3). In this paper, I have steered away from the conceptual architecture of theorisation in favour of an approach which navigates the contours of our techno-digital futures through the lens of Jasanoff’s (2001) notion of sociotechnical imaginaries. As a way of engaging with the future, sociotechnical imaginaries do not offer a prescriptive prospectus or a detailed policy programme for techno-digital advancement, so much as articulate what is good, desirable and worth aiming for, and conversely, what is undesirable, troubling and should be resisted and avoided. I have mapped out the sociotechnical imaginary which circulates in and through the strategic ambitions of contemporary policing, noting how they are infused by an optimistic orientation to techno-digital investments, and subscribe to panacean expectations that the automation and speed of digital technologies will deliver an effective and efficient model of crime control and law enforcement. Despite a burgeoning portfolio of critical research, criminology remains peripheral to the strategic discourse of a ‘technoepistemic network’ (Ballo, 2015: 10) of experts who take the lead in, and set the terms and conditions of debate. 
Yet I have argued that criminology, as a discipline, is poorly equipped, if not reluctant, to engage with the future – a situation which is all the more pressing when the speed and impacts of technoscientific innovation outpace our ability to take stock of their ethico-political implications. Harnessing our sociotechnical imagination is one route to a futures-facing criminology, and using the cultural space of cyberpunk cinema is, in turn, an important entry point for exploring that imagination.

There is no claim here to comprehensiveness, but by focusing in on the embodied singularities of bio-engineered, cyborgian policing actors, we do get a glimpse of the kinds of futures which may be possible, desirable, dangerous and problematic. For example, the perennial juxtaposition of objective versus subjective modes of knowing implies a binary choice, as though epistemological approaches are not only siloed into separate spheres of knowledge production but are also judged solely on their comparative merits. However, this juxtapositioning is erased when machinic automation and human autonomy, and the integration of specialist IT and operational officers, are thoroughly enmeshed within decision-making practices. Moreover, this entanglement extends the concept of digital labour and digital literacy beyond the instrumental use of digital technologies, to incorporate an ability to critique, challenge and engage analytically with techno-digital output. At the same time, the drift to a digitally accessorised patrol officer may be highly conducive to multi-tasking and responsibilised remote working, but it comes at the expense of teamwork, collegiality, mentoring and supervision, and restricts the discursive opportunities and organisational spaces for co-present officers to moderate, clarify, dispute and dis/agree over how policing is practised, managed, regulated and accounted for.

This barely scratches the surface of the myriad sociotechnical imaginaries which circulate in and through cyberpunk cinema. These are futuristic worlds which gesture towards the limits, dangers and potentialities of techno-digital policing. As Slaughter (1998) has argued, cyberpunk fiction ‘gives us other, often divergent, images, options, arenas of possibility that lie beyond reason and instrumental analysis. These sources provide access to an entire “grammar” of future possibility’ (p. 1001). Criminology’s ‘digital turn’ (Smith et al., 2017) signals a developing and cumulative portfolio of critical research into the vagaries of techno-digital policing. This paper contributes to this effort but advocates an alternative temporal orientation, one which looks beyond today’s constitutive realities and the normative ambitions of strategy documents, to step boldly into the future. Such a move enjoins us to establish different points of departure from those which are routinely articulated in police policy statements and strategy documents. There is nothing especially contentious in aims to harness the power of techno-digital resources to provide a seamless citizen experience, identify harms and target interventions, deepen collaboration with public sector and criminal justice partners and to empower the private sector to appropriately share in public safety responsibilities (NPDS, 2020: 6–9) – though how far such aims are met, and with what effects, will certainly invite critical criminological attention. As presented, these ambitions attract the ‘least drama’ (Brysse et al., 2013) and appeal to the general public’s, governments’, business leaders’ and other decision-makers’ (perceived) sense of relevance, progress, affordability and feasibility in planning for the long term.

Suppose, though, we fast forward into the futuristic world of cyberpunk and find ourselves in a dystopian society where, as Baudrillard (1996) puts it, the catastrophe has already happened. If it is good enough for the world’s most celebrated technoscience pioneers to catastrophise the untrammelled roll-out of AI technologies, then we should take seriously the extreme scenarios of filmic futures and regard them as present-day springboards for reorienting our sociotechnical imagination. How do ‘frictionless digitally enabled citizen experiences’ (NPDS, 2020: 7) enhance public engagement with, and build trust in policing when a technologically saturated society is already harnessing privately circulating data, and exploiting it for surveillance and intelligence-gathering purposes? How does policing ‘empower the private sector’ (NPDS, 2020: 9) when techno-business logic has already displaced community priorities and infiltrated criminal justice agendas? Restated as an actually-existing state of affairs, techno-digital policing appears less as a solution to existential challenges, and more as a problem to be addressed. It inspires a criminology of the future and a sociotechnical imaginary in which the grammar of progress, enhanced efficiency, and real-time immediacy is jettisoned in favour of a more questioning and sceptical approach to techno-digital advancements. The speculative fictions of cyberpunk vividly illustrate the possible (and epic) futures of techno-digital policing and are especially effective at signposting eventualities which may well be good, desirable and worth aiming for, but more importantly, they set out a route map of outcomes we would do best to avoid or, at least, to navigate with extreme caution. This does not require us to adopt a Virilian-style technophobia, but neither does it leave us at the margins of technoscientific debate, trapped by and locked into the conventions of retrospective analyses. Bina et al. (2017) sum this up very neatly: ‘Fiction can be propaedeutic to ethics because it presents imaginary and plausible situations in which we can imagine ourselves facing dilemmas, options, having to envision possible solutions in adverse scenarios’ (pp. 168–169).

Footnotes

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The research for this paper was funded by the Leverhulme Trust, Grant No: RF-2022-111.