Abstract

This article explores the evolving role of artificial intelligence (AI) in K–12 educational leadership. We argue that AI is not merely a technological innovation but now a leadership imperative that can help schools personalize learning, streamline administrative tasks, and support data analysis and continuous improvement. AI tools will become increasingly embedded in both daily life and school systems, so school leaders must develop new leadership competencies to guide their ethical and effective use. The article introduces a framework of six AI Leadership Domains aligned with the Professional Standards for Educational Leaders, encompassing strategic vision, instructional innovation, equity, and stakeholder engagement. Developed through conceptual synthesis and practitioner validation, the framework draws on theories and research in leadership and technology adoption to provide actionable guidance. The article concludes with implications for policy, leadership preparation, and future research, emphasizing the need for human-centered, equity-driven approaches to AI in education.

Introduction

Artificial intelligence (AI) is quickly changing how schools function, teach, and make decisions. Tools such as adaptive learning platforms, predictive analytics, and generative AI (e.g. ChatGPT and Microsoft Copilot) are now commonplace in education. When implemented thoughtfully, these tools can help schools personalize learning, streamline administrative tasks, and generate data-informed insights that support continuous improvement. Principals must approach AI with thoughtful planning, ethical responsibility, and a commitment to equity. Without the necessary expertise and leadership, principals risk overlooking critical issues, perpetuating existing inequalities, or diminishing their own impact. This moment presents both opportunities and challenges for educational leaders and their communities.

So far, the field of educational leadership has struggled to keep up with the rapid technological changes brought by AI. In the United States, nearly 60% of principals reported using AI tools during the 2023-24 school year, yet only 18% indicated that their district provided guidance on AI use (Kaufman et al., 2025). Similarly, a survey of 200 school leaders in England found that, although AI is regarded as a promising technology, only 8% felt prepared to use it effectively, and nearly 75% reported lacking internal expertise (Browne Jacobson, 2025). These gaps between usage and preparedness highlight a critical leadership challenge: principals are expected to navigate complex AI tools without sufficient guidance, training, or frameworks to support responsible use. While considerable attention has been given to AI instructional applications (e.g. i-Ready, Socratic by Google, MagicSchool AI, and Duolingo), much less attention has been given to the leadership competencies required for the responsible and effective use of AI in schools.

The way principals lead is crucial to how AI is introduced, managed, and evaluated in schools. According to the Professional Standards for Educational Leaders (PSEL) (National Policy Board for Educational Administration, 2015), principals are expected to act ethically, maintain professional integrity, and continually seek improvement in all aspects of the school, including their own leadership. In the context of AI, researchers have begun to emphasize that leadership requires thoughtful decisions about data, algorithms, student privacy, and automation, while staying committed to student well-being, equity, and academic success (e.g. Arar et al., 2025b; Fullan et al., 2024; Karakose and Tülübaş, 2024).

To help meet these demands, we present six AI Leadership Domains that outline essential practices for using AI in schools in ethical, effective, and equitable ways. We begin by defining AI and clarifying common misconceptions. Next, we review key theories on technology adoption and educational leadership to provide a strong foundation for principals engaging with AI. Our framework is based on a comprehensive literature review and feedback from practicing principals and former principals now working in district leadership. We also used AI tools to structure and refine our ideas, while remaining transparent and careful in their use. The article's core sections introduce our AI Leadership Domains, which we later connect to the PSEL. We conclude with recommendations for school leaders, policymakers, and practitioners.

Defining artificial intelligence

AI is a broad and evolving idea with no single agreed-upon definition. The term “artificial intelligence” emerged in the 1950s as researchers worked to create systems capable of carrying out tasks resembling human thinking with some autonomy. For example, the European Commission's High-Level Expert Group on Artificial Intelligence (2018) describes AI as “systems that display intelligent behavior by analyzing their environment and taking actions—with some degree of autonomy—to achieve specific goals” (p. 2). AI pioneer Nils J. Nilsson calls AI a system that “functions appropriately and with foresight in its environment,” highlighting adaptability and goal-oriented actions (Sheikh et al., 2023, p. 16). Gil de Zúñiga et al. (2024) add that AI can be seen as the ability of non-human systems to perform tasks, solve problems, and interact in ways that echo human thought. These definitions stress AI's ability to act independently, respond to an environment, and imitate human reasoning. AI has developed from early rule-based programs and symbolic logic to more advanced machine learning and neural networks, which now power applications like language processing, image recognition, and decision support (Sheikh et al., 2023).

It is important for principals and educational leadership scholars to recognize that AI is fundamentally different from human intelligence, both in how it works and what it can do. AI systems are capable of processing large amounts of data, identifying patterns, and performing tasks more quickly and accurately than humans in some cases, but they lack consciousness, emotions, life experience, and moral judgment. Humans think and act based on their emotions, social backgrounds, and ethical values, which allows for creativity and empathy. Bewersdorff et al. (2023) point out that a common misunderstanding is to personify AI and treat it as though it thinks or feels like a human. In reality, AI relies on probability to produce information but does not truly comprehend the concepts it generates. Another misunderstanding is that AI systems are neutral or objective. AI tools can mirror and even amplify biases found in the data used to train them (Bewersdorff et al., 2023). In education, AI should be used to support and enhance human roles rather than take them over.

Theoretical underpinnings to artificial intelligence adoption

Theories of technology adoption

The way organizations adopt and adapt to new technologies is an important topic in social science research. Key theories like the Technology Acceptance Model (TAM), Unified Theory of Acceptance and Use of Technology (UTAUT), and Diffusion of Innovations (DOI) provide useful ways to understand how AI is taken up in schools (Al’kfairy, 2024; Venkatesh, 2022; Venkatesh et al., 2003). These models explore why some technologies are adopted while others are not, as well as the cultural, ethical, and structural influences that affect adoption. As AI becomes more common in education, these theories help highlight both its benefits and challenges. For instance, the TAM by Davis (1989) is often used to predict how likely people are to accept new technology. The model includes external variables, such as system design and user training, which trigger cognitive responses (perceived usefulness, perceived ease of use, intentions to use, and actual use). Recent studies show that factors like openness to new experiences and having a positive attitude toward AI influence individuals' willingness to use it (Ibrahim et al., 2025). In a study of human resource professionals engaged in employee recruitment efforts, Almeida et al. (2025) found that AI tools improved efficiency and helped optimize resource management, but users worried about the potential loss of personal interactions and changing job roles. These results show that while TAM is helpful, adopting AI also depends on wider psychological and situational factors.

Venkatesh's (2022) UTAUT expanded on TAM by including factors like social influence, available support, and expected performance. This model is widely used in education. For example, Patterson et al. (2024) found that students were more likely to adopt generative AI when their peers and mentors used it and when they saw productivity benefits. Factors like age and gender did not significantly affect AI use, which points to the importance of school culture and support systems in shaping AI use. UTAUT also highlights that leadership and organizational norms are key in deciding how AI is adopted and used. Schools with trusting, collaborative cultures and supportive principals might have advantages in AI adoption and usage.

DOI theory helps explain how new ideas and tools spread through groups and organizations over time (Rogers, 2003). Recent studies have used DOI to understand how AI is adopted in many fields, including education. The theory points to factors like the perceived benefits, how well the innovation fits with current systems, and how visible its results are as key reasons for adoption. Alka’awneh et al. (2025) suggest DOI is useful for explaining why some schools adopt AI faster than others, which can depend on leadership vision, peer influence, and how well AI fits with school goals. In online education, Almaiah et al. (2022) found that schools with a strong culture of innovation and clear communication were more successful at adopting AI.

These theories collectively underscore how AI adoption is both a technical process and a socio-organizational process shaped by individual perceptions and idiosyncrasies, cultural and social norms, and systemic and organizational conditions. TAM emphasizes individual perceptions of usefulness and ease of use, UTAUT adds the role of social influence and organizational support, and DOI highlights systemic factors such as relative advantage and compatibility. Together, these theories and frameworks suggest that successful AI integration depends on both personal attitudes and broader cultural and structural conditions, which underscores the need for leadership practices that address technical adoption drivers while also navigating ethical and equity concerns. Principals can and should play a key role in helping teachers and school personnel navigate both the technical and human sides of using AI, drawing on their skills in ethics, judgment, and social awareness. They can also use their professional experience to support successful AI adoption, a topic discussed in the next section.

From adoption to school leadership

Studies in educational leadership and technology adoption find that successful integration is shaped by more than just having access to new tools. Factors such as individual beliefs, skills, and the wider school environment and culture all play a role (Dexter, 2008; Dexter and Richardson, 2020; McLeod and Richardson, 2011). Leadership readiness comes from both personal qualities and system-level supports like professional learning, policies, and stakeholder involvement. Importantly, adopting technology is a social process, shaped by trust, relevance to teaching, and shared goals. For example, McLeod and Richardson (2011) emphasize the need for principals with vision and digital skills to drive innovation, while Dexter (2011) points to the value of sharing leadership and working together to sustain technology adoption initiatives.

A principal's success in introducing new technologies or policies often depends on both internal and external factors. Preparing principals to use and integrate AI goes beyond teaching them technical skills. Weiner et al. (2021) point out that emotions and workplace politics also affect how leaders respond to change. In the case of AI, concerns about ethics, job security, and teaching value can make principals and teachers hesitant to adopt new tools. This means leadership training and support systems need to be rethought, along with the stories and beliefs that influence how schools approach innovation (Dexter, 2008; McLeod and Richardson, 2011).

Different leadership theories offer insights into how principals might handle AI and other new technologies. Instructional leadership focuses on improving teaching and learning, so principals using this approach consider the extent to which AI tools fit with their instructional goals and support teacher growth (Hallinger, 2005). Transformational leadership is about vision and motivating change, so principals who encourage innovation and model ethical AI use can help their schools overcome adoption challenges (Leithwood and Jantzi, 2005). Distributed leadership values teamwork and shared responsibility, making it a good fit for implementing technology together (Spillane, 2006). Social justice leadership adds a focus on equity, ensuring that AI benefits all students, especially those from underserved backgrounds (Theoharis, 2007).

Process of framework development and artificial intelligence disclosure

To create our six AI Leadership Domains, we used a multi-step process grounded in both technology adoption and leadership theory. First, we reviewed existing research and frameworks about AI adoption and principal leadership. This work included identifying key practices and challenges as well as synthesizing insights from the adoption models discussed earlier (TAM, UTAUT, DOI) alongside leadership theories, such as instructional, transformational, distributed, and social justice leadership. Our synthesis helped ensure our framework reflected both individual-level adoption drivers (e.g. principals' perceived usefulness, social influence) and system-level conditions for change (e.g. compatibility, organizational culture). In the synthesis process, we recognized a tension between efficiency-oriented adoption approaches and equity-driven leadership principles, which in turn shaped our decision to prioritize ethical AI use and embed ethical and equity considerations throughout the framework.

Next, each author examined the leadership practices to begin creating domains. We also used AI tools to help organize the practices and clarify domains. Then, we engaged a convenience sample of 10 current principals or former principals working in district leadership positions across varied U.S. contexts, including urban, suburban, and rural schools. These individuals were selected based on their demonstrated leadership experience, including prior recognition in school, district, and state initiatives and involvement in technology integration efforts. We invited them to review the six initial domains and respond to questions about the relevance, clarity, ordering, and alignment with real-world contexts and experiences. Specifically, we asked the practitioner group to respond to the following questions:

- Which of the six domains feels most relevant to your current leadership work, and why?
- Are there any domains that seem unclear, less applicable, or missing key elements from your perspective as a school or district leader?
- How well do the domains reflect the challenges and opportunities you see in AI integration related to teaching, learning, and school operations?
- What kinds of supports (e.g. professional development, policy guidance) might help you lead across these domains?
- In what ways do these domains align and/or conflict with your school or district's mission, vision, and values?

Responses were provided through individual comments made on the draft, through email, and through follow-up conversations. We systematically reviewed all feedback from the participants by organizing comments by domain and collectively discussing feedback and recommendations. When we made revisions, we referred to the literature and theoretical models to ensure that adjustments were both evidence-based and aligned with the conceptual foundations of the framework. We then resubmitted the manuscript to the respondents for a final round of feedback. Notably, the responses prompted us to move ethical integration into the first domain and to consolidate two other domains because of their overlap and redundancy.

Finally, we matched our six-domain framework to the PSEL (National Policy Board for Educational Administration, 2015) so it could be used by principal preparation programs and other organizations. We considered the PSEL's structure and focused on how AI leadership fits into the larger goals of school improvement and student success. Throughout this process, we used generative AI tools like Microsoft Copilot, Grammarly, and Scribbr to help organize research, clarify our writing, and summarize feedback. These tools supported our work, but the ideas and analysis remained our own. In accordance with the ethical guidelines established by the Committee on Publication Ethics, AI tools were not credited as authors because they did not generate original research findings or interpret data. The authors assume full responsibility for the accuracy, originality, and scholarly integrity of all content, including that influenced by AI assistance (COPE, 2023). Our disclosure also aligns with the Oxford/Cambridge framework for ethical AI use in academic writing, which emphasizes human oversight, substantial human contribution, and transparent acknowledgment of AI involvement (Nature Machine Intelligence, 2023). All AI-generated content was reviewed, edited, and approved by the authors to ensure alignment with academic standards and ethical expectations.

Six domains for ethical and effective artificial intelligence school leadership

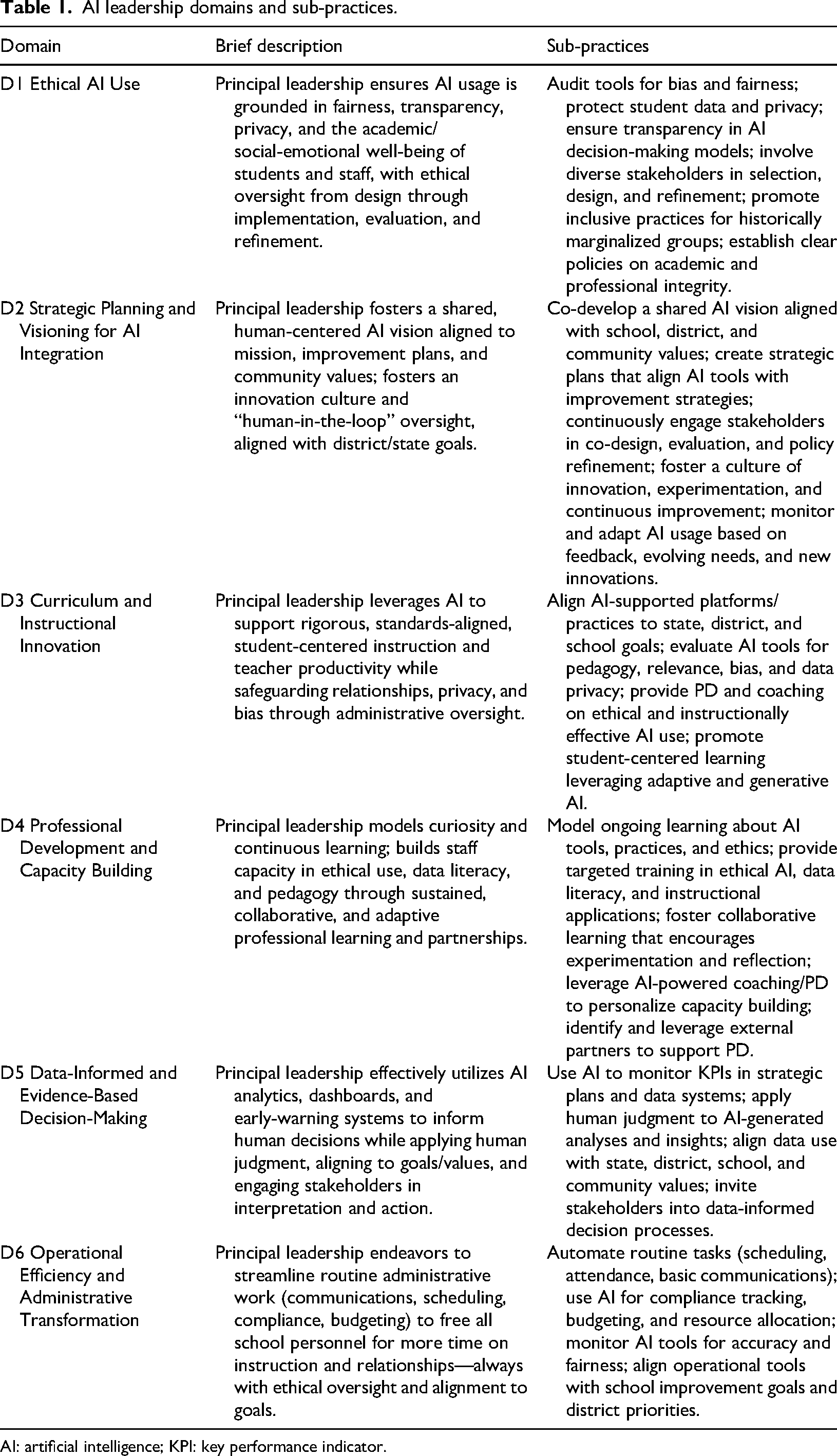

The six domains are arranged so that ethical AI use is the starting point for each domain. While Domain 1 serves as a foundational entry point, the six domains are intended to be interdependent and mutually reinforcing. Effective AI leadership necessitates integrative engagement across domains, with ethical oversight and stakeholder involvement present throughout (resulting in some minor repetition across domains). Each domain includes an overview and actionable steps drawn from research and theory (see Table 1). This structure offers a clear and adaptable framework suitable for diverse school contexts. The framework is designed to support principals in leading with intentionality and care while implementing ethical and effective AI use. Both the potential benefits of AI in schools and the essential role of human leadership in maintaining alignment with educational values, equity, and strong relationships are emphasized.

Table 1. AI leadership domains and sub-practices.

AI: artificial intelligence; KPI: key performance indicator.

Domain 1: ethical artificial intelligence use

Principals must ensure AI is used fairly, transparently, and with respect for privacy, always prioritizing the academic and social-emotional well-being of all students and staff. This requires ethical oversight at every step—from design to implementation and ongoing evaluation. If principals do not lead ethically, AI can reinforce bias, threaten privacy, and deepen inequities that already exist in schools (Arar et al., 2024; Arar et al., 2025b; Göçen and Döğer, 2025; Wang, 2021). Principals need training to understand how AI works, including the fact that AI tools are built from historical data and can unintentionally repeat biases—especially in student assessment, personalized supports, and teacher hiring (Bixler and Ceballos, 2025; Wang, 2021). For this reason, principals should regularly review algorithms and data sources to prevent AI from repeating or creating new harms for students or staff (Adams and Thompson, 2025; Aldighrir, 2024).

Protecting student privacy has always been important for principals, but AI brings even greater risks when it comes to handling private data. Principals must follow strict data protocols, make sure families and students give informed consent for any data collected, and be transparent about how AI systems work and make decisions (Fusarelli and Fusarelli, 2024; Polat et al., 2025). They should also use their skills to involve a wide range of stakeholders in designing and reviewing AI tools, with special attention to the needs of students from marginalized groups (Arar et al., 2025a; Marrone et al., 2025). To uphold ethical standards and integrity, principals need to collaborate with educators, district staff, and experts to report and address any concerns about unethical AI use (Kafa, 2025).

To foster ethical AI integration, principals need to be prepared to:

- Audit tools for bias and fairness
- Protect student data and privacy
- Confer with district IT about acceptable use policies
- Ensure transparency in AI decision-making models
- Involve diverse stakeholders in tool selection, design, and refinement
- Promote inclusive practices, particularly for historically marginalized groups
- Establish clear policies on academic and professional integrity

As schools increasingly integrate AI, principals need to stay updated on new technologies and understand how these changes affect students and schools. Fullan et al. (2024) stress that ethical AI use should be rooted in strong policy, sound research, and a focus on people. This might mean principals partner with districts or universities specializing in AI ethics, ask vendors for documentation on how AI tools were developed and trained, and/or treat AI adoption as an ongoing process rather than a one-time approval. Principals should lead with the understanding that AI is meant to support, not replace, the human relationships and efforts that are central to good teaching, care, and equity in schools.

Domain 2: strategic planning and visioning for artificial intelligence integration

In this domain, principals use AI to create a shared, people-centered vision that fits with the school's mission, improvement plans, and community values. They are responsible for building a culture of innovation while making sure there is always human oversight (the “human-in-the-loop” approach) and for aligning AI work with district and state policies (Fullan et al., 2024; Richardson et al., 2025). AI can help with tasks like updating master schedules and making budget decisions (Karakose and Tülübaş, 2024; Van Quaquebeke and Gerpott, 2023). Importantly, principals should help everyone see that AI is not just about saving time or cutting down on paperwork. Instead, AI can change how schools, teachers, students, and families connect and work together for everyone's benefit.

Principals, educators, and families may initially view AI integration as a threat to job security and a potential substitute for human leadership. Strategic planning for using AI should always keep people involved in decision-making—this “human-in-the-loop” idea builds trust, ensures oversight, and promotes ethical use (Ifenthaler et al., 2024). Principals will likely need to collaborate with staff to create new vision statements, develop policies for AI, and foster a school culture where it's safe to try out and learn from new technologies.

Principals also need to make sure their efforts to use AI match with district and state goals—this increases the chances of ethical and effective AI adoption. Tyson and Sauers (2021) found that leaders who connected their AI work with district goals and gained staff support saw better results. Marrone et al. (2025) point out that being strategic is key for getting buy-in, handling ethical questions, and shaping a school culture that welcomes AI.

To lead successful AI planning and visioning, principals should be prepared to:

- Collaboratively develop a shared AI integration vision aligned with the school vision, district goals, and community values
- Create strategic plans for AI integration and alignment of AI tools with school improvement plans and strategies
- Continuously engage stakeholders in co-design, evaluation, and refinement processes for AI usage and AI policies
- Foster a culture of innovation, experimentation, and continuous improvement
- Monitor and adapt AI usage based on feedback, evolving needs, and emerging innovations

To fully implement these efforts, principals need to collaborate with various stakeholders and allocate time and resources for ongoing learning and reflection.

Domain 3: curriculum and instructional innovation

When principals lead with ethics and careful planning, they can ensure AI tools enhance curriculum and instruction—boosting critical thinking, deep learning, and student agency and independence (Chiu, 2024; Pratschke, 2024). Still, principals and teachers must use their own expertise and judgment to ensure technology does not overshadow the human relationships vital for student growth and instructional quality. Generative AI tools like ChatGPT and Midjourney can help teachers create engaging, cross-disciplinary lessons and support challenging curricula that encourage students to explore and practice new skills. However, these innovative uses require ongoing oversight to keep instruction ethical, relational, and aligned with school standards and goals.

In this area, principals need to make sure all AI tools used for teaching are standards-aligned and match up with state, district, and school goals. Principals should oversee AI-powered chatbots, tutoring systems, and adaptive platforms to confirm they deliver accurate, differentiated instruction and helpful real-time feedback (Karakose and Tülübaş, 2024; Villegas-Ch et al., 2020). Although AI can reduce teachers’ workloads, principals should check that teachers are using their extra time to connect with students and boost learning. Using AI with students brings up important issues around data privacy, bias, and ethics. Principals need to set clear guidelines and actively monitor how teachers and students are using AI tools to prevent misuse, especially in the absence of national, state/regional, or local policies. Regular classroom observations and reviews of student data will be key responsibilities. Additionally, principals must familiarize themselves with the data privacy policies of AI tools they plan to adopt and use in school. In doing so, they can prepare all school personnel to engage with AI without breaching data privacy protections and while meeting district confidentiality policies. Principals should also make sure teachers get the support they need to use AI well, understand how it shapes instruction, and spot trends in student assessment and data analysis.

To foster curriculum and instructional innovation, principals need to be prepared to:

- Align AI-supported instructional platforms and practices to state, district, and school goals
- Regularly evaluate AI tools for pedagogical soundness, instructional relevance, algorithmic bias, and data privacy
- Provide professional development and coaching on ethical and instructionally effective AI integration
- Promote student-centered learning that utilizes best practices in adaptive and generative AI tools

Traditional instructional leadership practices, such as collaborative planning, coaching, and ongoing professional development, will still be important, but with a greater focus on how AI is changing education.

Domain 4: professional development and capacity building

For AI to be effectively utilized in schools, principals need to work alongside teachers in a culture of ongoing learning and growth—especially since AI will continue to evolve. Principals should be hands-on with AI themselves, showing a willingness to learn and reflect. Professional development in this area includes not just technical training, but also building skills in ethical decision-making, data literacy, and the ability to keep evaluating how AI is being used. Fullan et al. (2024) recommend focusing on developing people's social skills and teamwork to help everyone keep up with the changes that AI brings.

Principals should show curiosity and a commitment to learning, setting the tone for a culture where it is safe to experiment and ask questions about AI. Richardson et al. (2025) stress that principals create better results when they encourage professional learning environments that support trying new things and reflecting on what works. Tyson and Sauers (2021) found that principals who joined professional networks and learned with their staff were more effective at bringing in new technology like AI. Principals should remember that AI-related professional development should be timely, ongoing, and flexible, since technology changes faster than the typical school year or training schedule. To help everyone keep learning, principals should review their current systems and build new ones that support continuous growth—like peer mentoring, online courses, and partnerships with universities or technology companies. Aldighrir (2024) also suggests leadership training that builds long-term management skills and helps leaders at every level work together across the school and community.

To foster professional development and capacity building, principals need to be prepared to:

- Model curiosity and continuous learning about AI tools, practices, and ethical topics
- Provide targeted training on ethical AI use, data literacy, and instructional applications
- Foster collaborative learning environments that encourage AI experimentation, evaluation, and reflection
- Leverage AI-powered coaching and professional development platforms to personalize capacity building efforts
- Identify and leverage external partners to support professional development

Many principals can feel unprepared for AI technology (Kafa, 2025), and leadership programs are only beginning to focus on AI (Hejres, 2022; Richardson et al., 2025). This means that principals will need to learn and adapt in collaboration with teachers and other stakeholders.

Domain 5: data-informed and evidence-based decision-making

AI not only adds new tasks for principals and teachers but can also greatly improve their ability to make decisions informed by data. With the help of AI-powered tools like predictive analytics, dashboards, and early-warning systems, principals can quickly analyze complex data, monitor student progress in real time, identify students who may need extra help, and allocate resources more effectively (Adams and Thompson, 2025; Villegas-Ch et al., 2020). When used alongside school improvement plans and goals, these data-driven approaches can help schools better serve all students.

Wang (2021) describes AI as an “extended brain” for principals, helping them process information and make timely decisions. Osegbue et al. (2025) note that AI can improve school management by tracking compliance and performance in real time, especially when guided by ethical standards. For example, principals might use AI-created dashboards to spot trends in attendance or discipline, but they should also gather feedback from staff, students, and families to understand emerging patterns. Likewise, if AI identifies students at risk, principals should confirm these findings with teachers and counselors before acting on the identification. This approach ensures data is used ethically and with human judgment. As Göçen and Döğer (2025) point out, AI works best when paired with strong oversight.

To engage in data-informed and evidence-based decision-making, principals should:

- Use AI tools to monitor key performance indicators in strategic plans and relevant data systems
- Apply human judgment to all AI-generated data analysis and insights
- Align data use with state, district, school, and community goals and values
- Involve stakeholders in data-informed decision-making processes

These practices enable principals to make informed, timely decisions that support students and enhance the school. However, principals should also consider ethics, culture, vision, instruction, and professional development to ensure that data is used in ways that benefit the entire school community.

Domain 6: operational efficiency and administrative transformation

Principals often face growing workloads; AI tools can help by making daily operations more efficient and reducing manual tasks. For instance, AI can automate things like basic emails, meeting summaries, and budget tracking. With this extra time, principals can focus on building staff capacity for AI, leading instructional improvements, and strengthening relationships in their schools (Arar et al., 2025b; Dogan and Arslan, 2025).

AI tools can be especially helpful for principals in schools with limited staff. When engaging with AI tools, all school staff must be diligent in reviewing data privacy policies and, in doing so, not use publicly accessible tools (e.g. ChatGPT) for sensitive information. Generative AI can help with tasks like creating reports, analyzing student data, setting up professional development schedules, and tracking compliance (Adams and Thompson, 2025; Chiu, 2024). Before using generative AI to engage with student data or other sensitive information, principals and their staff should access appropriate, data-secure platforms through an institutional account with privacy protections in place (e.g. Google Gemini, Harvard AI Sandbox). This domain provides balance for the extra duties described elsewhere. AI scheduling tools, for example, can quickly create master schedules that consider teacher availability, student needs, and compliance requirements. As with all domains, using AI to improve operations must be done ethically and in line with school and district plans. Too much reliance on AI without human oversight can create ethical problems and data privacy risks, and it can weaken the trust and relationships that are essential for good leadership (Polat et al., 2025; Wang, 2021).

To enhance operational efficiency and engage in administrative transformation, school leaders should:

- Automate routine tasks such as scheduling, attendance reporting, and basic communications
- Utilize AI tools to support compliance tracking, budgeting, and resource allocations
- Monitor AI tools for accuracy and fairness
- Align operational AI tools to school improvement goals, district priorities, and data privacy standards.

These practices can reduce the time spent on administrative tasks, allowing principals to focus more on teaching and school culture. Still, it is essential to maintain strong ethical oversight that aligns with school and district goals.

Discussion

AI is becoming a common part of education, but this raises an important question: Are principals truly ready to adopt and manage AI? While frameworks like the one we present can offer direction, the field still shows uneven levels of preparedness. As Anysiadou and Gkliati (2025) report, many school leaders recognize AI's potential but do not feel confident or trained enough to use it well. This gap between awareness and practical readiness points to the need for leadership development programs that address AI literacy, ethical decision-making, and strategic planning. Without these supports, the benefits of AI may not reach many schools. Other barriers include resistance to change, mistrust of new technologies, and concerns about privacy or bias within AI-generated outputs (Polat et al., 2025; Wang, 2021). Some leaders worry that AI could replace human judgment or weaken the personal connections that are central to effective leadership (Adams and Thompson, 2025). The lack of clear policy and ethical guidance for AI use in education can also make it hard for principals to know what steps to take toward AI.

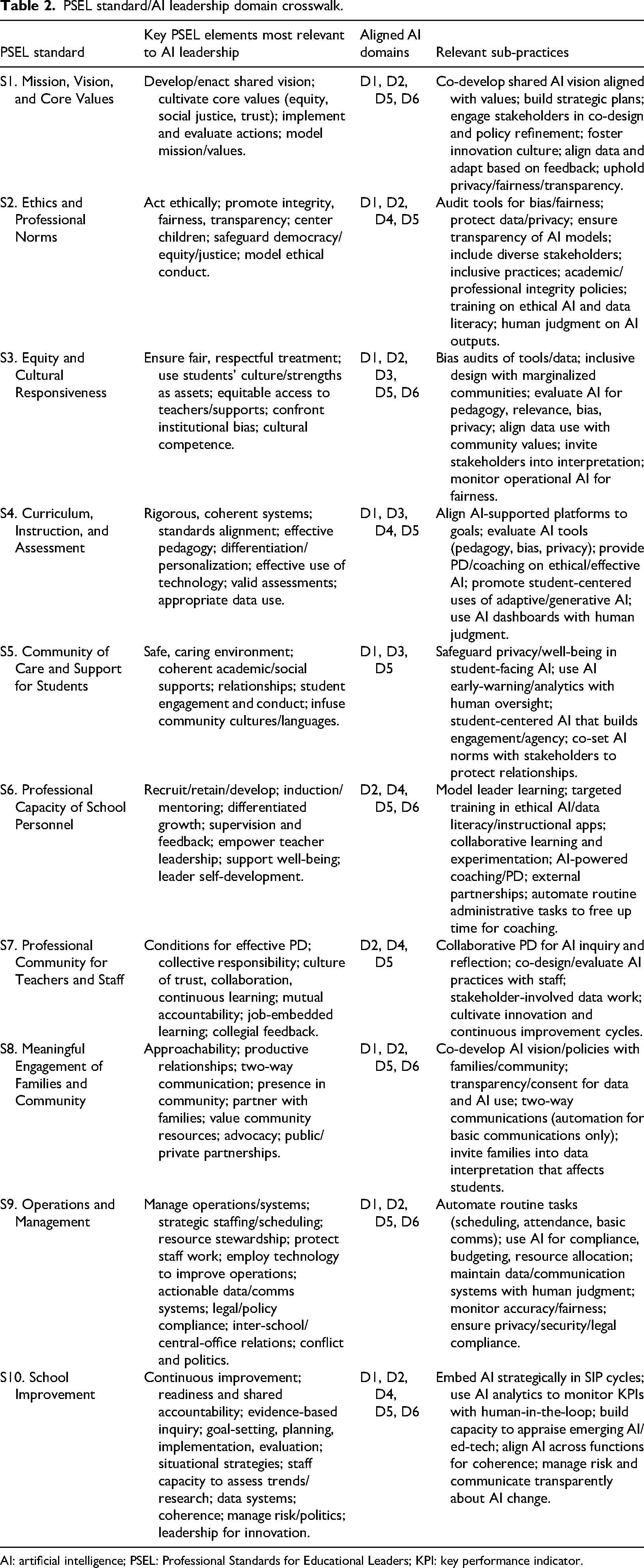

Moving ahead, educational leadership scholars and practitioners must take a proactive approach to AI use. This means building professional development focused on AI skills, encouraging teamwork in implementing new tools, and involving stakeholders in conversations about ethics, especially in relation to data privacy (Marrone et al., 2025; Richardson et al., 2025). Leadership training and district professional development should include AI-related content that aligns with standards like the PSEL or relevant state guidelines. Table 2 shows how our AI Leadership Domains connect to the PSEL standards, which have not been updated since 2015 and do not explicitly address AI or related emerging technologies. In addition, AI adoption may advance state and district goals related to improving instructional quality and operational efficiency. Yet each nation, state, and district operates within a unique policy environment, with its own resource constraints and public expectations, and this will require contextually responsive, multi-level approaches that consider local priorities alongside broader policy frameworks.

Table 2. PSEL standard/AI leadership domain crosswalk.

AI: artificial intelligence; PSEL: Professional Standards for Educational Leaders; KPI: key performance indicator.

In addition to aligning with PSEL, researchers should keep exploring how leadership theories like distributed and transformational leadership shape AI adoption in schools (Leithwood and Jantzi, 2005; Spillane, 2006). Tackling these issues will help make sure AI strengthens educational values and leadership, rather than disrupting them. The AI Leadership Domains we share here provide a foundation for rethinking and expanding these theories. For instance, our framework shows how AI can support teamwork, shared decision-making, and faster feedback, but it can also change how leadership works by adding new forms of digital collaboration and ethical responsibility. These changes deserve more research and practical attention.

Likewise, the Technological Pedagogical Content Knowledge (TPACK) framework by Mishra and Koehler (2006) offers a helpful way to think about how leaders guide teaching and learning changes with AI. While TPACK is usually used to describe teacher skills, it can also apply to principals, especially in areas like curriculum development and ethical AI use. Principals must understand how technology, teaching methods, and subject matter work together, and they need to address issues like bias, access, and cultural relevance within pedagogical practice. As AI tools become more common in schools, educational leadership research should look at how TPACK can be updated to include the strategic, ethical, and system-wide challenges of technology, helping to bridge the gap between teaching and organizational leadership today.

Our six-domain framework also helps to extend existing leadership theories, particularly distributed, transformational, instructional, and social justice leadership. For example, distributed leadership emphasizes shared responsibilities and collaboration, which AI tools can support by enabling more inclusive decision-making processes and real-time feedback loops. Transformational leadership, which centers on vision and change, aligns with the strategic planning and ethical oversight required for AI integration, while instructional leadership can now incorporate digital fluency and data literacy as core competencies. Social justice leadership theories can also be extended by embedding ethical governance protocols, stakeholder co-design, and data equity into core routines that AI tools can enable or support. These extensions present new opportunities for reframing educational leadership models and practices, particularly as AI use evolves in schools and society. Moreover, our framework aligns with and extends more recent AI frameworks, tenets, and taxonomies put forward in both the K-12 and higher education leadership spaces (e.g. Arar et al., 2025a; Ghamrawi and Tamim, 2023).

Conclusion

Our AI Leadership Domains help to reconfigure existing digital and educational leadership models by drawing attention to ethics, stakeholder co-design, and instructional equity in AI leadership practices. We recognize that our AI Leadership Domains are a starting point that will need to evolve as technology advances and as new research and experiences emerge. Our goal is not to offer solutions to every possible AI use or concern, but to contribute to the conversation and lay the groundwork for ethical, effective AI leadership that benefits students and educators. Our six-domain framework complements existing models of digital and AI-enabled leadership by integrating ethical governance, stakeholder co-design, and instructional equity into core leadership routines. While similarities exist between our model and others, our domains partly reflect a reconfiguration of Fullan et al.'s (2024) humanity-centered leadership, Sposato's (2025) AI taxonomy, and Ghamrawi and Tamim's (2023) 5D digital leadership by emphasizing AI as a socio-technical actor that can reshape decision-making, planning, and professional learning. Moreover, our domains advance the existing discourse by offering a structured, practice-oriented model that bridges leadership and technology-related theories with emerging realities of AI in schools.

Utilizing AI tools in the development of this framework (from literature review development to idea organization and language refinement) allowed us to engage with the technology in ways that mirror many of the leadership practices we propose. We approached the process with intentionality, aiming to interact with the technology in a thoughtful, ethical, and transparent way. Our goal was to use AI's strengths while maintaining strong academic and ethical standards, just as we hope principals will do. At times, using AI felt strange or even a little unsettling, especially when we saw how quickly it could help us organize our ideas or link our framework to the PSEL standards. These experiences made us reflect on what it means to work as scholars with access to such powerful tools, and whether AI supports or complicates our sense of identity and productivity. We share these reflections as part of an ongoing discussion, not as definitive answers. We encourage others in educational leadership to explore, question, and use AI thoughtfully to enhance integrity and leadership in rapidly changing schools and an evolving world.

Footnotes

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.