Abstract

This study examines how principals perceive the potential benefits and challenges of artificial intelligence (AI) for students’ learning and teachers’ work across 12 countries, utilizing data from the 2023 International Computer and Information Literacy Study (ICILS 2023). We utilized latent network models, which allow for a flexible, network-based understanding of latent constructs, to examine the structural relationships between variables related to principals’ perceptions of AI. The findings revealed that while many school leaders recognize AI's potential to enhance student engagement and support teaching, they also express worries about its impact on academic integrity, teacher workload, and instructional practices. Those who view AI as beneficial for students tend to see similar advantages for teachers, whereas concerns about increased workload often accompany negative perceptions of AI's role in education. These insights emphasize the importance of developing balanced AI integration strategies that optimize benefits while mitigating potential challenges for educators. We suggest that policymakers design professional learning opportunities for school leaders that address both the benefits and effective integration of AI in teaching and learning, as well as strategies to mitigate potential negative consequences, such as increased workload or unethical use (e.g. cheating).

Keywords

Introduction

The rapid advancement of artificial intelligence (AI), particularly with the emergence of groundbreaking Generative AI technologies, has significantly influenced education. A substantial amount of research on these technologies in educational settings has been conducted within a remarkably short timeframe (Arar et al., 2025; Berkovich and Eyal, 2025; Fullan et al., 2024; Harris et al., 2024; Richardson et al., 2025). Recent research on AI in education has focused on various educational stakeholders, including students and teachers in schools, as well as academic staff in higher education institutions (Celik et al., 2022; Chan and Hu, 2023; Mah and Groß, 2024; Mustafa et al., 2024; Taktak et al., 2024). Furthermore, research on the use of AI from the school leadership perspective is expanding (Pietsch and Mah, 2024; Marrone et al., 2025) with the majority of studies focusing on the opportunities and challenges of implementing AI technologies in school leadership practices (Adams and Thompson, 2025; Dai et al., 2024; Polat et al., 2025; Richardson et al., 2025; Tyson and Sauers, 2021; Wang, 2021). However, studies on how the attitudes of school leaders regarding technological orientation influence their leadership practices and drive technology integration in schools remain limited. For example, the technology acceptance model (TAM; Davis, 1986) has been widely used to explain individuals’ willingness to adopt new technologies, considering the roles of perceived usefulness and perceived ease of use. This model has influenced a substantial body of research on technology integration in educational settings, with a significant focus on teachers and students (Schepers and Wetzels, 2007). However, despite this growing interest, relatively little research has examined technology acceptance at the school level, particularly among school leaders who play a crucial role in guiding digital transformation efforts (Arar et al., 2025).

Against the backdrop of scarce empirical evidence, this study aims to further explore school leaders’ views and attitudes toward AI, particularly regarding its perceived impact on students and teachers. Understanding principals’ perceptions of AI is essential, as school leaders play a key role in driving educational innovation and shaping school-wide change efforts (Bryk, 2010). Their attitudes and beliefs regarding technology use can significantly influence the success or failure of digital transformation initiatives, by either facilitating or impeding meaningful integration (Dexter and Richardson, 2020; Tyson and Sauers, 2021). To this end, the study employs data from the International Computer and Information Literacy Study (ICILS) 2023. ICILS 2023 is a large-scale assessment designed to examine international differences in the computer and information literacy (CIL) and computational thinking (CT) of eighth-grade students. The first survey was conducted in 2013 (Fraillon et al., 2014), followed by ICILS 2018 (Fraillon et al., 2020) and the latest cycle, ICILS 2023 (Fraillon, 2024). ICILS 2023 includes an addendum on principals’ reports on the use of generative AI in schools. Principals in 12 of the countries participating in ICILS 2023 completed these optional questions. In this paper, we use the terms “ChatGPT” and “AI tools” interchangeably. Although ChatGPT was the primary tool explored, references to generative AI tools in general are occasionally included for conceptual clarity.

Theoretical background

AI in education

Research on AI has been conducted since the 1960s; however, interest in AI-based technologies in education has seen a notable rise in recent years, particularly following the emergence of generative AI in late 2022. Since then, generative AI, including large language models (LLMs) such as ChatGPT from OpenAI, has been adopted by students and educators in educational institutions. In general, AI in education is promising, with the potential to enhance learning, teaching, and educational administration (Bond et al., 2023; Celik et al., 2022; Crompton et al., 2022). Research and applications in the field of AI in education show a wide variety, including examples such as AI language translators, speech-to-text AI and text-to-speech AI, learning analytics for predictions, adaptive systems and greater personalization (Fengchun and Homes, 2023; Mougiakou et al., 2023; Tsai et al., 2020; Yusuf et al., 2024). Benefits of AI in education are numerous, including personalized learning (e.g. customized instructions, adaptive learning paths, immediate feedback), enhancing teaching efficiency and effectiveness (e.g. creation of initial drafts of lesson plans, educational materials, formative assessment), and the chance to redefine educational practices and assessment (e.g. rethinking assessment methods and developing more critical thinking and competency-based assessments) (Bond et al., 2023; Crompton and Burke, 2024; Pratschke, 2024).

Concurrently, there are several challenges of AI in education, such as ethical considerations including bias, discrimination, and lack of diversity (e.g. reinforcement of stereotypes), digital divide and educational inequality (e.g. high costs of some AI systems), privacy and data security (e.g. data privacy, data misuse) (Cotton et al., 2023; Crompton and Burke, 2024; Nguyen et al., 2023). Furthermore, AI literacy is a primary challenge, referring to the competencies that enable individuals to use and evaluate AI technologies in an informed, ethical, self-reflective, and responsible manner (Chiu et al., 2024; Chiu and Sanusi, 2024; Lintner, 2024; Long and Magerko, 2020). Recently, AI competency frameworks have been developed for teachers and students, such as those by UNESCO (UNESCO, 2024a, 2024b), to address the implications for teaching and learning in the age of AI and to develop the necessary competencies. In consideration of the aforementioned potential benefits and challenges, it is crucial to identify key areas for the utilization and implementation of AI in education. This will allow for the adequate outlining of research agendas and policy recommendations (Ifenthaler et al., 2024). This encompasses gaining a deeper understanding of stakeholders’ perspectives, including their expectations for the use of AI tools in education, as well as the ability to engage meaningfully with AI (OECD, 2025).

With a focus on generative AI in K-12, research has addressed, for example, AI learning tools in general (Yim and Su, 2024), the use of LLMs by students and teachers (Stanford University, 2024), academic integrity and the detection of AI-generated texts (Fleckenstein et al., 2024), and how generative AI might impact student engagement and learning (Abbas et al., 2024; Fan et al., 2024). This work includes preliminary meta-analyses (Deng et al., 2025; Heung and Chiu, 2025), which, given the early stage of incorporating generative AI in educational settings, may not yet give an accurate picture of the field. Regarding teachers, for instance, Sanusi et al. (2024) explore teachers’ perceptions of and behavioral intention to teach AI. Research addressing school leaders’ perspectives on (generative) AI in K-12 is limited. However, preliminary studies indicate that school leaders’ digital mindset is a crucial factor in the implementation of AI in schools (Pietsch and Mah, 2024). Hence, further research is necessary to gain insight into school leaders’ perspectives on AI, particularly with regard to their attitudes towards AI for teaching and learning in K-12 education in an international context.

The role of school leaders in AI integration

Research on the use of AI from a school leadership perspective is growing. Existing studies have been primarily concerned with how AI might help school leaders lead their schools effectively. They demonstrate that integrating AI into educational settings can facilitate decision-making processes by providing opportunities for extensive data analysis (Adams and Thompson, 2025; Dai et al., 2024; Fullan et al., 2024; Tyson and Sauers, 2021; Salha et al., 2025; Wang, 2021). For example, AI can enhance administrative efficiency by automating routine tasks like scheduling, attendance tracking, and budget management. It supports data-driven decision-making by analyzing large datasets to identify trends in student performance, teacher effectiveness, and resource utilization for evidence-based leadership (Adams and Thompson, 2025). Dai et al. (2024) suggested that AI can enhance the decision-making abilities of school leaders by processing and analyzing vast amounts of data, allowing them to make informed, data-driven decisions.

While the majority of available studies focused on the benefits of integrating AI tools and the ways to use them in educational leadership (Adams and Thompson, 2025; Dai et al., 2024; Fullan et al., 2024; Wang, 2021), little is known about whether and how the attitudes of school leaders towards technology influence their leadership practices and drive technology integration in schools. Only a few studies have addressed this topic to date (Marrone et al., 2025; Pietsch and Mah, 2024; Tyson and Sauers, 2021). The issue is critical, as scholars have indicated that school leaders are drivers of educational innovation and change (Bryk, 2010; Pietsch et al., 2025b). Their mindsets and attitudes towards technology integration in schools can be either major barriers or enablers to digital transformation (Dexter and Richardson, 2020; Tyson and Sauers, 2021).

The role of school leaders in integrating AI into schools could be particularly significant. For example, Cheng and Wang (2023) demonstrated that a positive attitude toward technology and digital tools can enhance learning, administration, and communication within educational settings, hence increasing the use of AI in schools. It could help reduce external barriers, such as a lack of clear curriculum guidance, complex AI systems, the absence of standardized tools, insufficient teacher training, ethical policy gaps, and time constraints, and internal barriers, including teachers’ limited AI knowledge, low confidence, and difficulty incorporating AI into instruction. On the other hand, a lack of knowledge and sufficient training regarding the integration of AI tools could lead to negative perceptions about the use of AI in classrooms (Kafa, 2025). The broader structural issues, such as the way the education system is structured, can also limit or influence principals’ attitudes toward promoting these technologies (Reyes, 2021). Moreover, while Pietsch and Mah (2024) report that principals’ tendencies to support new ideas and the adoption of new technologies led to higher AI technology integration in schools, Tyson and Sauers (2021) suggest that school leaders’ intentions to promote AI integration can be successful by leveraging interpersonal skills to align AI initiatives with district goals and address stakeholder needs, ensuring a structured and inclusive transition and learning. Marrone et al. (2025) suggest that most principals in their Australian sample had a limited understanding of the algorithm driving AI, which creates an obstacle to the integration of these tools in schools.

Technology acceptance in schools

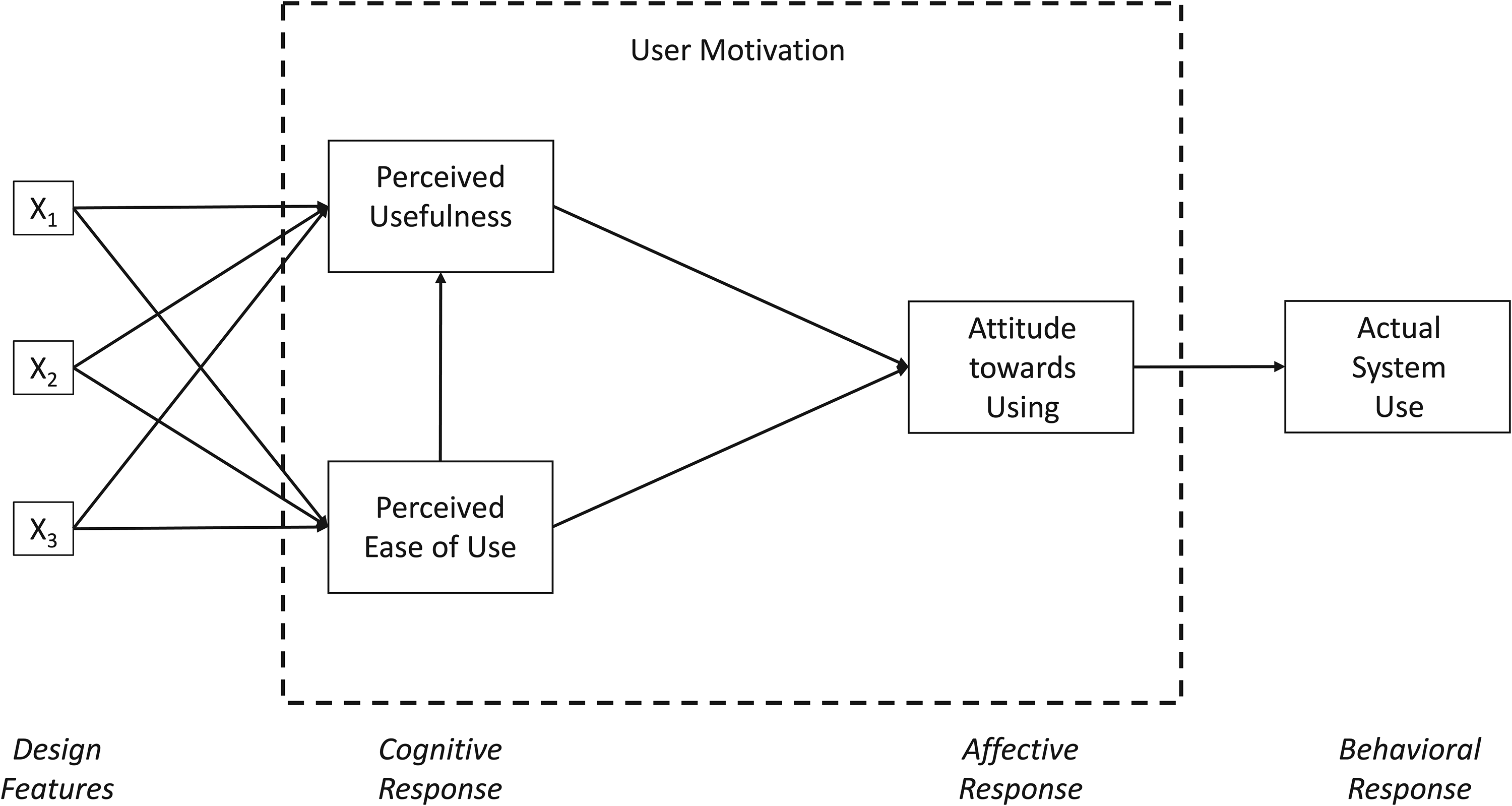

For about 40 years, the TAM, as depicted in Figure 1, has been used to analyze why technology is or is not used (Marangunić and Granić, 2015). At its core, this model is based on the assumption that the actual use of an information system or technology can be explained or predicted by a user's motivation, which in turn is directly influenced by external stimuli (Davis, 1986). The original model includes three facets of user motivation: perceived ease of use, perceived usefulness and attitude (Davis, 1986, 1989). Further, it posits that perceived usefulness and perceived ease of use serve as mediators in the influence of external factors on attitude and the intention to use a technology (see Figure S1). The model's simplicity makes it highly transferable, enabling its application across a wide variety of contexts and technological domains (Marangunić and Granić, 2015; Marikyan et al., 2023).

Technology acceptance model, TAM (Davis, 1986).

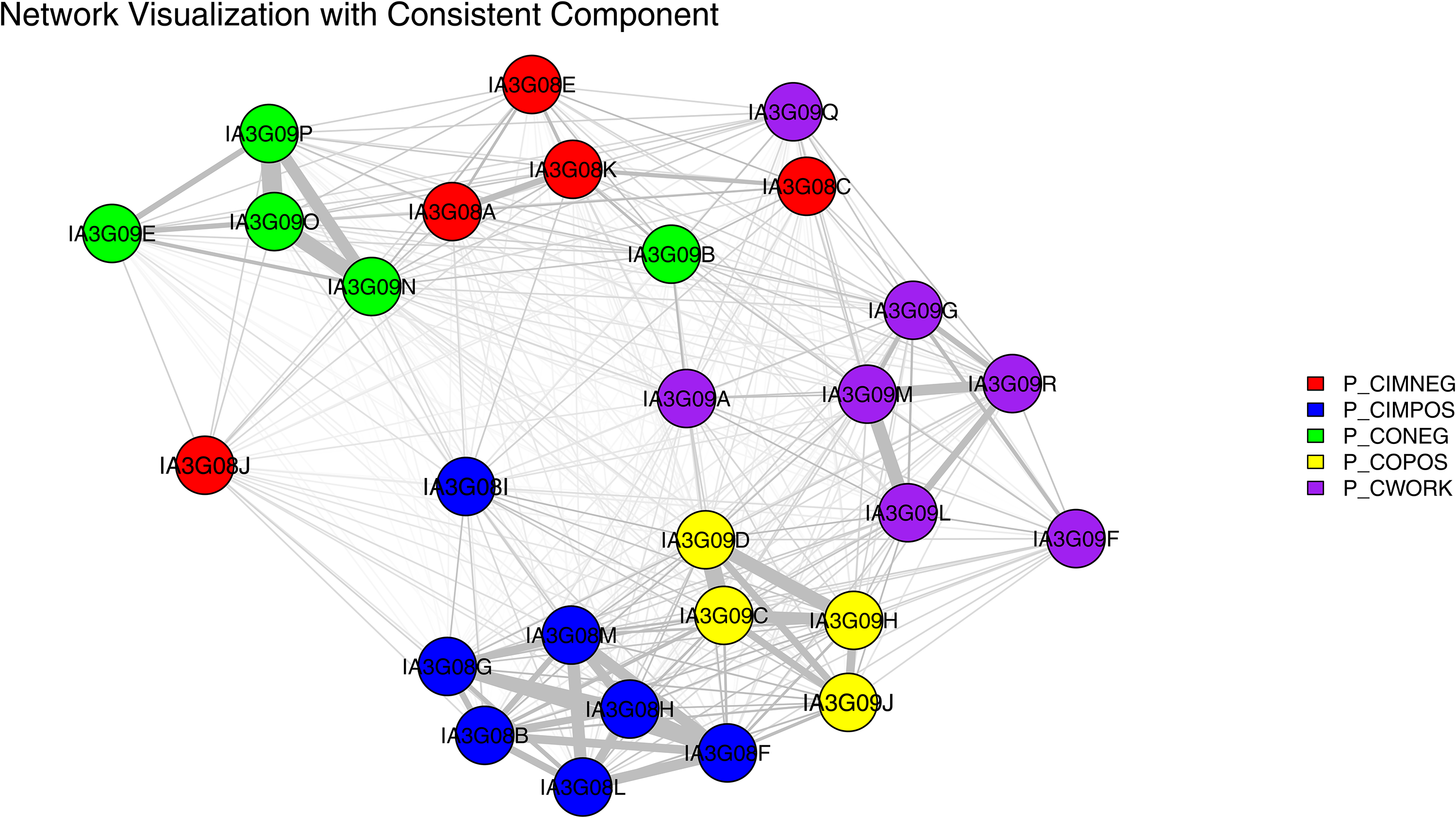

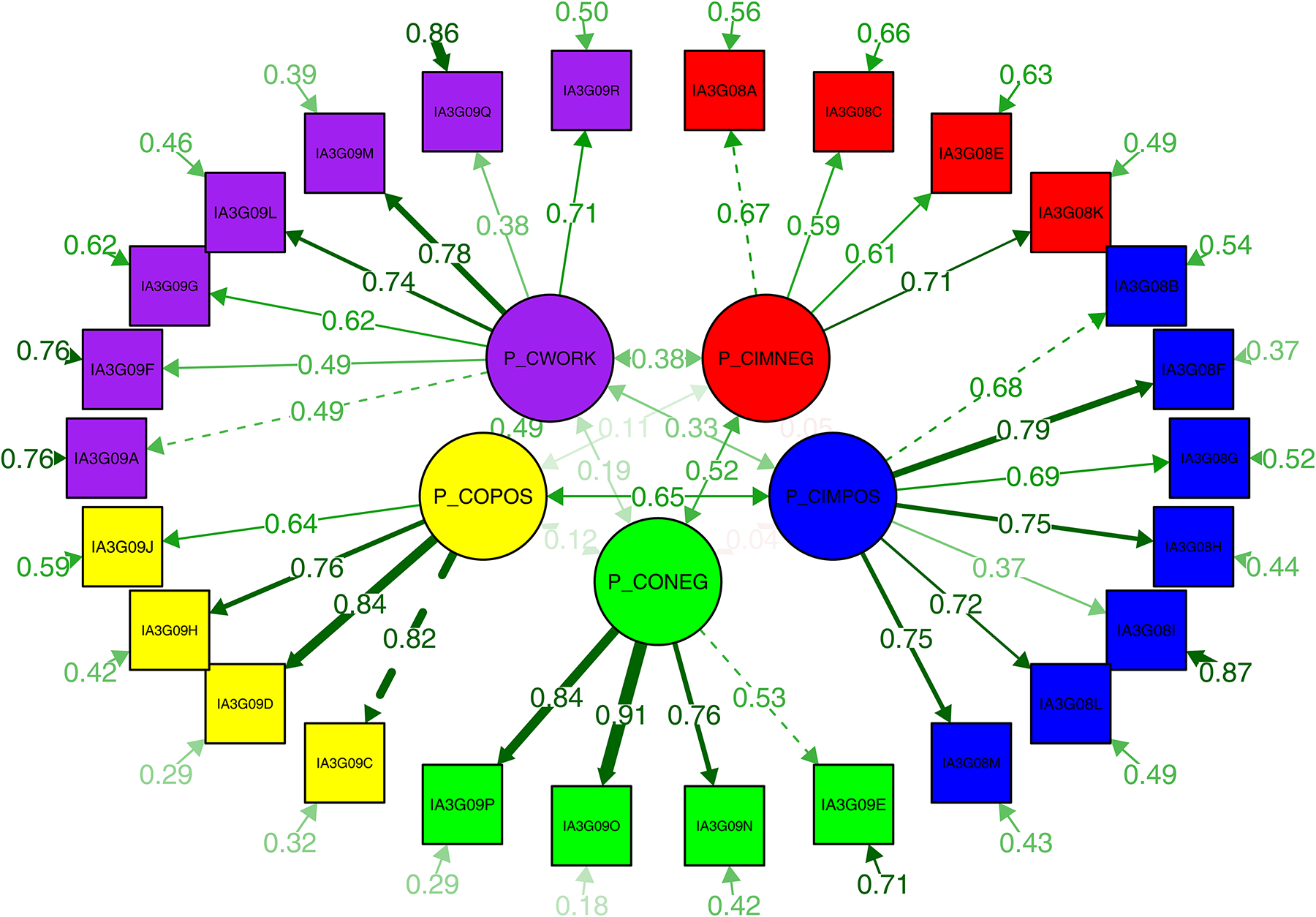

Latent network model of principals’ perceptions of ChatGPT: network structure of construct relationships. Note: P_CIMNEG = principals’ views on the negative impact of ChatGPT on students’ learning; P_CIMPOS = principals’ views on the positive impact of ChatGPT on students’ learning; P_CONEG = principals’ views on the negative consequences of ChatGPT on teachers’ work; P_COPOS = principals’ views on the positive consequences of ChatGPT on teachers’ work; P_CWORK = principals’ views on increased workload as a consequence of ChatGPT.

Despite its widespread use, TAM has faced notable criticism. Bagozzi (2007) argues that TAM suffers from key limitations. These include its failure to explain the origins of core beliefs like perceived usefulness and perceived ease of use, and the lack of a theoretical or methodological basis for identifying their determinants. Additionally, the model largely overlooks the social, cultural, and group-based contexts in which technology adoption occurs, simplifies the role of emotions in decision-making, and relies on a deterministic view that neglects self-regulation and intentional behavior.

With these criticisms in mind, TAM attracted the attention of many researchers. Schepers and Wetzels (2007) reviewed approximately 20 years of TAM application and combined the findings in a structural equation model. They found that both perceived usefulness and perceived ease of use significantly predicted attitudes towards technology use. In turn, these attitudes mediated the effect on the behavioral intention to use technology, which, again, predicted the actual use of an information and technology system. In a recent meta-analysis, Marikyan et al. (2023) indicated that the interrelations of the various TAM components vary depending on the context. For instance, the interrelations of the components are less pronounced with regard to educational technology than with regard to medical technology (but again stronger than with regard to e-banking technologies). An additional relevant finding was that the authors discovered a generally negative relationship between effort expectancy and perceived risks, as well as between these factors and both attitudes towards and the intention to use technology.

However, for schools, Scherer and Teo (2019), through the application of meta-analytical structural equation modeling (MASEM), have demonstrated that attitudes are a relevant predictor of teachers’ intention to use technology in schools (β=0.338), particularly regarding teachers’ utilization of computer-related technologies (Scherer et al., 2019). However, the relationship between the variables was less pronounced regarding other technologies. Consequently, in a third study, Scherer et al. (2020) have reported that the facets of the TAM do not constitute several clearly distinguishable constructs. Instead, they can be seen as a set of closely connected elements that cannot be clearly separated in practice.

Despite the extensive body of literature addressing technology leadership in schools (Dexter et al., 2016; Dexter and Richardson, 2020; Richardson and Sterrett, 2018), its antecedents remain an under-researched area (e.g. Zhang et al., 2023). Consequently, in contrast to studies comprising teachers, the technology acceptance of school leaders has so far been little investigated (e.g. Anysiadou and Gkliati, 2025; Weng and Tang, 2014), with only a small number of studies in general examining technology acceptance at the school level (Sun and Gao, 2019). In a qualitative study, Ruloff and Petko (2022) found that school principals’ views on technology use influence their leadership behaviors. Jang et al. (2024) obtained analogous results using multi-level latent profile analyses and showed that a principal's view on technology use influences their teachers’ technology acceptance.

Objectives and research questions

Against this backdrop and in line with the findings of Scherer et al. (2020), the first objective of our study is to examine whether principals’ perceptions of AI form a coherent syndrome, in which several aspects are so closely interconnected that they cannot be empirically distinguished. Our second objective is to explore the extent to which the expected consequences of AI for students and teachers are interrelated. Finally, the third objective is to investigate how principals perceive the interplay between the opportunities and challenges of AI integration in schools. Consequently, our study is guided by the following three research questions:

Addressing these research questions is important because, as previous research has shown, school leaders’ perceptions and expectations regarding AI shape the strategic decisions they make about its adoption in schools (Kafa, 2025; Pietsch and Mah, 2024). For example, knowing how they weigh benefits against challenges can help anticipate the kinds of support or resistance that might arise during implementation. Overall, these questions can provide valuable insights into shaping professional development, policy design, and capacity building for the integration of AI in schools.

Methods

Sample

This study utilizes data from the International Computer and Information Literacy Study (ICILS) 2023, a large-scale international assessment coordinated by the International Association for the Evaluation of Educational Achievement (IEA). ICILS 2023 is designed to examine how students develop key digital competencies, with a particular focus on CIL—the ability to use digital tools to investigate, create, participate, and communicate in various contexts—and, more recently, CT—problem-solving approaches related to programming and algorithmic reasoning (Fraillon et al., 2014; Fraillon et al., 2020; Fraillon and Rožman, 2024).

ICILS 2023 adopts a two-stage stratified cluster sampling design to yield nationally representative samples of Grade 8 students and their schools. In addition to student assessments in CIL and CT, ICILS 2023 collects extensive contextual data through questionnaires administered to students, teachers, school principals, and ICT coordinators. These questionnaires gather rich background information on digital learning environments, technology access, instructional practices, and leadership perspectives, thus enabling analyses of both individual-level and institutional-level factors influencing digital literacy (Fraillon et al., forthcoming). ICILS provides trend data across cycles—2013, 2018, and 2023—allowing for international comparisons and monitoring of progress over time.

ICILS 2023 also introduced an important innovation: an optional module on principals’ perceptions of generative AI tools, including ChatGPT. This module was implemented in 12 participating countries and offers the first internationally comparable dataset capturing school leaders’ expectations, concerns, and attitudes toward the integration of AI in schools. Given the growing importance of AI in education and the central role of school leadership in guiding technology adoption (Dexter and Richardson, 2020; Pietsch and Mah, 2024), this module provides a unique opportunity to examine an underexplored aspect of digital innovation in schools. The present study draws on these data to analyze school leaders’ expectations for AI across diverse national contexts.

A total of 35 countries participated in ICILS 2023; however, for this study, data from N = 1134 schools nested within 12 countries were available: Chile, Cyprus, Denmark, Greece, Korea, Norway, Romania, the Slovak Republic, Slovenia, Sweden, Taiwan, and Uruguay. Following ICILS 2023 procedures (Fraillon et al., forthcoming), sampling weights were computed to account for school-level and student-level selection probabilities, as well as non-response adjustments at both levels. The final analytic sample includes all schools from the 12 participating countries for which complete principal survey data on the AI module were available. The dataset comprises nationally representative samples, with survey responses weighted to reflect the sampling design, ensuring reliable cross-country comparisons.

For details on the construction and use of sampling weights, see Fraillon et al. (forthcoming), ICILS 2023 Technical Report. We rely on ICILS 2023 because it is the only large-scale, cross-national dataset that systematically captures principals’ perceptions of generative AI in schools. Unlike single-country or small-scale enquiries, ICILS 2023 provides nationally representative samples across 12 countries, standardized instruments, and rigorous quality-assurance procedures, enabling valid international comparisons. The optional principal AI module was specifically designed to assess school leaders’ expectations regarding the benefits and challenges of AI integration, making ICILS 2023 uniquely suited to our research questions. Rather than conducting our own survey, which could tailor items but would trade off representativeness, cross-national comparability, and survey-design documentation (weights, non-response adjustments) essential for system-level inference, we use ICILS 2023. Replicating ICILS 2023's scope across multiple countries within a single project would be logistically prohibitive, increase participant burden without clear added value, and yield results that are less comparable and less reproducible than those from an established international assessment. Accordingly, using ICILS 2023 is both methodologically appropriate and ethically efficient for the aims of this study.

Measures

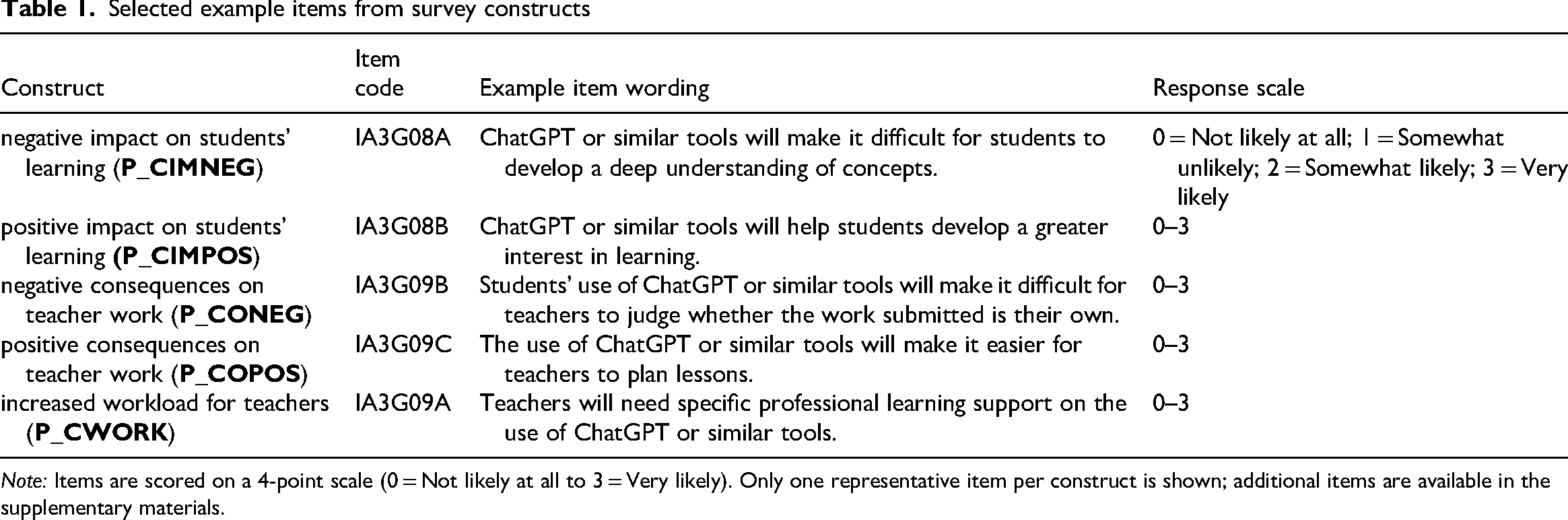

This study examines principals’ perspectives on the impact of ChatGPT and similar AI tools on students’ learning and teachers’ work. The data is drawn from the ICILS 2023 Principal Survey, where principals were asked to assess the likelihood of various outcomes related to AI tools in education (Fraillon et al., forthcoming). Responses were recorded on a four-point Likert scale: 0 = Not likely at all, 1 = Somewhat unlikely, 2 = Somewhat likely, 3 = Very likely. The study includes five key constructs, each measuring different aspects of ChatGPT's perceived impact.

Principals’ Views on the Negative Impact of ChatGPT on Students’ Learning (P_CIMNEG). This scale consists of five items and captures concerns about potential risks associated with ChatGPT use in education, such as its effects on students’ understanding, academic integrity, and dependency on AI tools. The Omega coefficient for this scale was 0.715, indicating acceptable internal consistency.

Principals’ Views on the Positive Impact of ChatGPT on Students’ Learning (P_CIMPOS). Comprising seven items, this scale assesses the perceived benefits of ChatGPT, including its role in fostering students’ interest, creativity, critical thinking, and overall learning experience. The Omega coefficient for this scale was 0.861, reflecting high reliability.

Principals’ Views on the Negative Consequences of ChatGPT on Teachers’ Work (P_CONEG). This scale includes five items and examines principals’ concerns about how AI tools might negatively impact teachers’ work, such as challenges in assessing students’ originality, reliance on inaccurate information, and misalignment with pedagogical best practices. The Omega coefficient for this scale was 0.822, indicating strong internal consistency.

Principals’ Views on the Positive Consequences of ChatGPT on Teachers’ Work (P_COPOS). Consisting of four items, this scale explores how ChatGPT might support teachers, including facilitating lesson planning, resource creation, and individualized learning support. The Omega coefficient for this scale was 0.854, showing high reliability.

Principals’ Views on Increased Workload as a Consequence of ChatGPT (P_CWORK). This scale includes seven items and focuses on the additional responsibilities AI tools may impose on teachers, such as the need for professional development, monitoring student reliance on AI, and adapting assessment strategies. The Omega coefficient for this scale was 0.801, indicating good reliability.

The ICILS 2023 instruments, including the Principal Survey, underwent international verification processes to ensure cross-cultural comparability, which included standardized translation procedures and psychometric quality control (see the ICILS 2023 Technical Report for more information). However, formal measurement invariance testing of the AI-related constructs across countries was beyond the scope of this study and remains an important area for future research. It should be noted that the constructs analyzed in this study are based on the pre-defined item sets developed within the ICILS 2023 Principal Survey and thus reflect both the strengths and limitations of the original instrument design. In line with the exploratory aim of this study, we did not conduct a formal confirmatory factor analysis (CFA) but focused instead on the network-based analysis of these constructs.

These scales provide a comprehensive framework for understanding principals’ perspectives on both the opportunities and challenges of integrating AI tools, such as ChatGPT, into educational settings. Example items are reported in Table 1; the full list of items is presented in the Appendix.

Selected example items from survey constructs

Note: Items are scored on a 4-point scale (0 = Not likely at all to 3 = Very likely). Only one representative item per construct is shown; additional items are available in the supplementary materials.

Analytical strategy

To assess the reliability of each construct, we computed Cronbach's alpha across countries to evaluate the internal consistency of the scales used in this study. This ensures that the measurement models accurately capture the underlying constructs and that the scales consistently measure the intended concepts.
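As an illustration of the reliability coefficient named above, the following minimal Python sketch computes Cronbach's alpha from a respondents-by-items score matrix. It is not the authors' code (the analyses were run in R), and the simulated five-item scale, the sample size, and the cut points used to create 0 to 3 responses are hypothetical stand-ins for one country's data on one construct.

```python
import numpy as np

def cronbach_alpha(items):
    """Cronbach's alpha for an (n_respondents, n_items) score matrix."""
    items = np.asarray(items, dtype=float)
    k = items.shape[1]                                # number of items in the scale
    item_variances = items.var(axis=0, ddof=1)        # variance of each item
    total_variance = items.sum(axis=1).var(ddof=1)    # variance of the sum score
    return (k / (k - 1)) * (1.0 - item_variances.sum() / total_variance)

# Hypothetical data: 500 principals, 5 items driven by one shared attitude.
rng = np.random.default_rng(0)
latent = rng.normal(size=500)
raw = latent[:, None] + rng.normal(scale=1.0, size=(500, 5))

# Cut the continuous responses into four ordered categories coded 0..3,
# mirroring the four-point response format (cut points are assumptions).
scores = np.digitize(raw, bins=[-1.0, 0.0, 1.0])

alpha = cronbach_alpha(scores)
print(f"alpha = {alpha:.3f}")
```

Running the same function separately on each country's responses would yield country-specific coefficients of the kind reported in the descriptive results.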

As our constructs were pre-defined, we only applied confirmatory analysis rather than exploratory methods. Following the approach of Belvederi Murri et al. (2022), we utilized latent network models (LNMs) to examine the structural relationships between constructs (Epskamp et al., 2017; Belvederi Murri et al., 2022). LNMs allow for a flexible, network-based understanding of latent constructs, providing insights into interdependencies between variables beyond traditional factor analysis.

In contrast to CFA, which assumes that correlations between items are explained by underlying latent factors, LNMs build a network of items based on the variance–covariance structure of the data. This approach enables the examination of how constructs are related without imposing directional assumptions, as is typically done in structural equation modeling (Epskamp et al., 2017).

A network consists of a set of nodes and a set of edges connecting these nodes. Here, the nodes represent variables, and the edges indicate statistical relationships between these variables, such as correlations. If two variables are not directly connected in the network, it indicates that their relationship can be explained by other variables in the model, meaning they are conditionally independent. Compared to CFAs, LNMs are also less sensitive to cross-loadings or residual correlations because they do not require strict assumptions about local independence (Ouyang et al., 2023).

To make this approach more intuitive, LNMs can be thought of as a map of how psychological aspects, that is, ideas, beliefs, or traits, are connected. Instead of assuming that all survey responses stem from a few unobserved underlying factors as in CFA, this method examines how individual indicators, that is, observable variables, relate to one another directly. For example, the model might show that agreeing with the statement “AI saves time” is directly linked to believing “AI increases efficiency,” and that “efficiency” in turn connects to “AI improves my work.” If there is no direct link detected between “saves time” and “improves my work,” the model suggests that the effect is mediated by “efficiency” rather than acting directly. This makes it possible to uncover indirect pathways empirically, rather than testing such relationships based on theory-driven assumptions. Visualizing these direct connections also allows for the identification of clusters of closely related psychological aspects, offering a clearer and more nuanced understanding of how psychological aspects operate as an interconnected system, rather than as separate or isolated constructs.
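The chain in this example can be reproduced with simulated data. The sketch below is a hedged illustration in Python, not the study's procedure: the three variable names, the path strength of 0.7, and the sample size are invented for the demonstration. It obtains partial correlations from the inverse covariance matrix, which is the standard way edges are defined in a Gaussian network.

```python
import numpy as np

def partial_correlations(data):
    """Partial correlation of each pair of columns given all other columns."""
    precision = np.linalg.inv(np.cov(data, rowvar=False))  # inverse covariance
    scale = np.sqrt(np.diag(precision))
    pcor = -precision / np.outer(scale, scale)              # standardize and flip sign
    np.fill_diagonal(pcor, 1.0)
    return pcor

# Hypothetical chain: saves_time -> efficiency -> improves_work.
rng = np.random.default_rng(1)
n = 20000
saves_time = rng.normal(size=n)
efficiency = 0.7 * saves_time + rng.normal(size=n)
improves_work = 0.7 * efficiency + rng.normal(size=n)
data = np.column_stack([saves_time, efficiency, improves_work])

marginal = np.corrcoef(data, rowvar=False)   # ordinary correlations
partial = partial_correlations(data)         # network edges

print("marginal r(saves_time, improves_work):", round(marginal[0, 2], 3))
print("partial  r(saves_time, improves_work):", round(partial[0, 2], 3))
```

The two end points are clearly correlated in the ordinary sense, yet their partial correlation is close to zero once "efficiency" is taken into account, so no edge would be drawn between them.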

To validate the internal structure of the constructs, we applied the Walktrap algorithm, which detects clusters based on network connectivity. This method optimizes the grouping of related indicators, helping to identify meaningful latent structures within the data (Epskamp et al., 2018). The Walktrap algorithm can be understood as a method for identifying groups of closely connected variables within the network — in this case, sets of AI-related perceptions that school leaders tend to associate with one another.
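To give a sense of what "closely connected" means for Walktrap, the sketch below computes the random-walk distance on which the algorithm builds: two nodes are close when short random walks starting from them end up in similar places. This is a simplified Python illustration under stated assumptions, not the igraph implementation used in the study. It stops at the distance matrix and omits the agglomerative merging step, it uses absolute edge weights, and the six-node network with two tight groups joined by one weak edge is hypothetical.

```python
import numpy as np

def walktrap_distances(weights, steps=4):
    """Random-walk distance between every pair of nodes after `steps` steps."""
    w = np.abs(np.asarray(weights, dtype=float))    # edge strength, sign ignored
    degree = w.sum(axis=1)                          # weighted degree of each node
    transition = w / degree[:, None]                # one-step walk probabilities
    p_t = np.linalg.matrix_power(transition, steps)
    diff = p_t[:, None, :] - p_t[None, :, :]        # walk profile of i minus j
    return np.sqrt((diff ** 2 / degree).sum(axis=2))

# Hypothetical network: nodes 0-2 and nodes 3-5 form two tight groups,
# linked only by a weak edge between node 2 and node 3.
w = np.zeros((6, 6))
for group in ([0, 1, 2], [3, 4, 5]):
    for i in group:
        for j in group:
            if i != j:
                w[i, j] = 0.5
w[2, 3] = w[3, 2] = 0.05

dist = walktrap_distances(w)

# Nearest other node for each node (the diagonal is masked out).
masked = dist + np.diag(np.full(6, np.inf))
nearest = masked.argmin(axis=1)
print(nearest)
```

Walktrap proper starts with every node as its own community and repeatedly merges the adjacent pair of communities that increases these distances least, which is why nodes whose nearest neighbours lie in the same group end up clustered together.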

Next, to test the robustness of the identified latent relationships, we employed the Partial Correlation Likelihood Test (PCLT). In simple terms, the PCLT assesses whether the observed relationships among variables are better explained by an underlying latent-variable structure or by direct network connections, which helps ensure that the results are not driven by spurious associations (Golino et al., 2020a, 2020b; Belvederi Murri et al., 2022; Yang et al., 2016). By integrating all the approaches above, this study applies a rigorous analytical framework to assess the reliability, structure, and validity of the measures used.

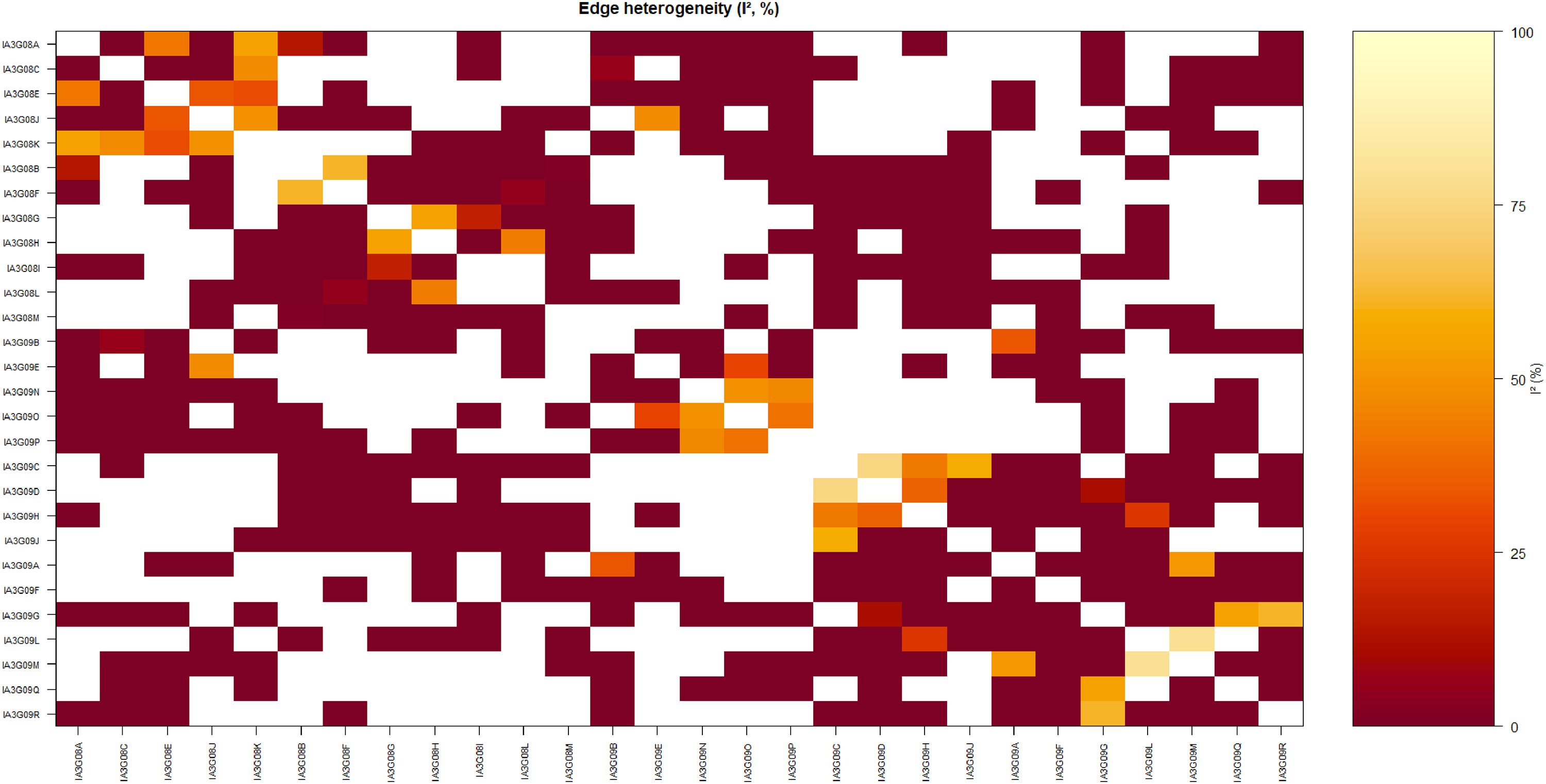

Finally, we estimated country-specific partial-correlation networks and then pooled each edge across countries with a random-effects model on Fisher-z-transformed coefficients, following Epskamp et al. (2022). To quantify between-country variation, we report I², the proportion of total variability attributable to heterogeneity rather than sampling error, following standard meta-analytic practice. In our case, I² is the percentage of the total variability observed in an item-to-item connection (that is, an edge) that reflects systematic differences between countries, or, put differently, the extent to which that association is inconsistent across countries. Following Higgins and Thompson (2002), we interpret I² < 25% as low, 25–50% as moderate, 50–75% as substantial, and > 75% as considerable edge heterogeneity. Here, edge heterogeneity means that the strength of the same item–item relation differs across countries; low I² indicates a broadly generalizable relation, whereas high I² signals context dependence.
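The pooling step for a single edge can be sketched as follows. This Python example is an assumption-laden illustration rather than the authors' implementation: the text does not name the between-country variance estimator, so the common DerSimonian-Laird estimator is used here, the sampling variance of a Fisher-z value is approximated by 1 / (n - 3 - number of conditioning variables), and the correlations and sample sizes are made up.

```python
import numpy as np

def pool_edge(r, n, n_conditioned=0):
    """Random-effects pooling of one edge across countries.

    Returns (pooled correlation, tau squared, I squared in percent).
    Assumes the DerSimonian-Laird estimator, which the source does not specify.
    """
    r = np.asarray(r, dtype=float)
    n = np.asarray(n, dtype=float)
    z = np.arctanh(r)                               # Fisher z transform
    v = 1.0 / (n - 3 - n_conditioned)               # approximate sampling variance
    w = 1.0 / v                                     # fixed-effect weights
    z_fixed = np.sum(w * z) / np.sum(w)
    q = np.sum(w * (z - z_fixed) ** 2)              # Cochran's Q
    df = len(z) - 1
    c = np.sum(w) - np.sum(w ** 2) / np.sum(w)
    tau2 = max(0.0, (q - df) / c)                   # between-country variance
    w_re = 1.0 / (v + tau2)                         # random-effects weights
    z_re = np.sum(w_re * z) / np.sum(w_re)
    i2 = max(0.0, (q - df) / q) * 100.0 if q > 0 else 0.0
    return float(np.tanh(z_re)), float(tau2), float(i2)

def label_i2(i2):
    """Verbal label for I squared using the cut-offs stated in the text."""
    if i2 < 25:
        return "low"
    if i2 < 50:
        return "moderate"
    if i2 <= 75:
        return "substantial"
    return "considerable"

# Hypothetical edge estimated in four countries with 100 schools each.
pooled_r, tau2, i2 = pool_edge([0.1, 0.5, 0.3, 0.6], [100, 100, 100, 100])
print(round(pooled_r, 3), round(tau2, 4), round(i2, 1), label_i2(i2))
```

Repeating this for every edge gives one pooled network plus an I² value per edge, so that stable and context-dependent connections can be told apart.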

All analyses were conducted in R using specialized packages for network modeling and data visualization. LNMs were implemented using the psychonetrics package (Epskamp, 2024), while network structures and relationships were analyzed using igraph (Csardi and Nepusz, 2006). The Walktrap algorithm was applied to detect communities within the network, optimizing the grouping of related indicators (Csardi and Nepusz, 2006; Newman, 2003). Network visualizations were generated using qgraph (Epskamp et al., 2012), facilitating the interpretation of latent structures. Model fit was assessed using standard fit indices, including the Akaike Information Criterion (AIC) and the Bayesian Information Criterion (BIC) (Golino and Epskamp, 2017; Golino et al., 2020a, 2020b), to ensure the robustness of the confirmatory model. These methodological approaches provided a structured framework for evaluating the reliability and validity of the measures used in this study.

Results

Descriptive results

The reliability of P_CIMNEG ranges from 0.580 (Slovenia) to 0.887 (Chinese Taipei), P_CIMPOS from 0.810 (Chile) to 0.946 (Greece), P_CWORK from 0.758 (Norway) to 0.926 (Chile), P_CONEG from 0.747 (Korea) to 0.918 (Romania), and P_COPOS from 0.789 (Chile) to 0.929 (Romania) across the countries that provided data.

The international average reliability values are 0.75 (P_CIMNEG), 0.909 (P_CIMPOS), 0.852 (P_CWORK), 0.836 (P_CONEG), and 0.895 (P_COPOS). P_CIMPOS and P_COPOS thus exhibit the highest overall reliability, while P_CIMNEG shows both the lowest average and the widest range across countries (0.580 to 0.887). It should also be noted that these reliability estimates are influenced by the length of the respective scales (i.e. number of items), in addition to their internal consistency.
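The dependence of reliability on scale length can be made concrete with a small Python sketch. The text does not state which reliability coefficient was used; the sketch assumes Cronbach's alpha in its standardized form, which depends only on the number of items and the mean inter-item correlation. The item counts and the correlation of 0.4 are hypothetical and are not taken from the ICILS scales.

```python
def standardized_alpha(n_items, mean_inter_item_r):
    """Standardized Cronbach's alpha from scale length and mean inter-item correlation."""
    return (n_items * mean_inter_item_r) / (1 + (n_items - 1) * mean_inter_item_r)


# Same internal consistency (mean inter-item r = 0.4), different scale lengths.
short_scale = standardized_alpha(3, 0.4)
long_scale = standardized_alpha(6, 0.4)
print(f"3 items: {short_scale:.3f}, 6 items: {long_scale:.3f}")
```

With identical inter-item correlations, the longer scale yields the higher coefficient, so a lower reliability value for a short scale does not by itself indicate weaker item coherence.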

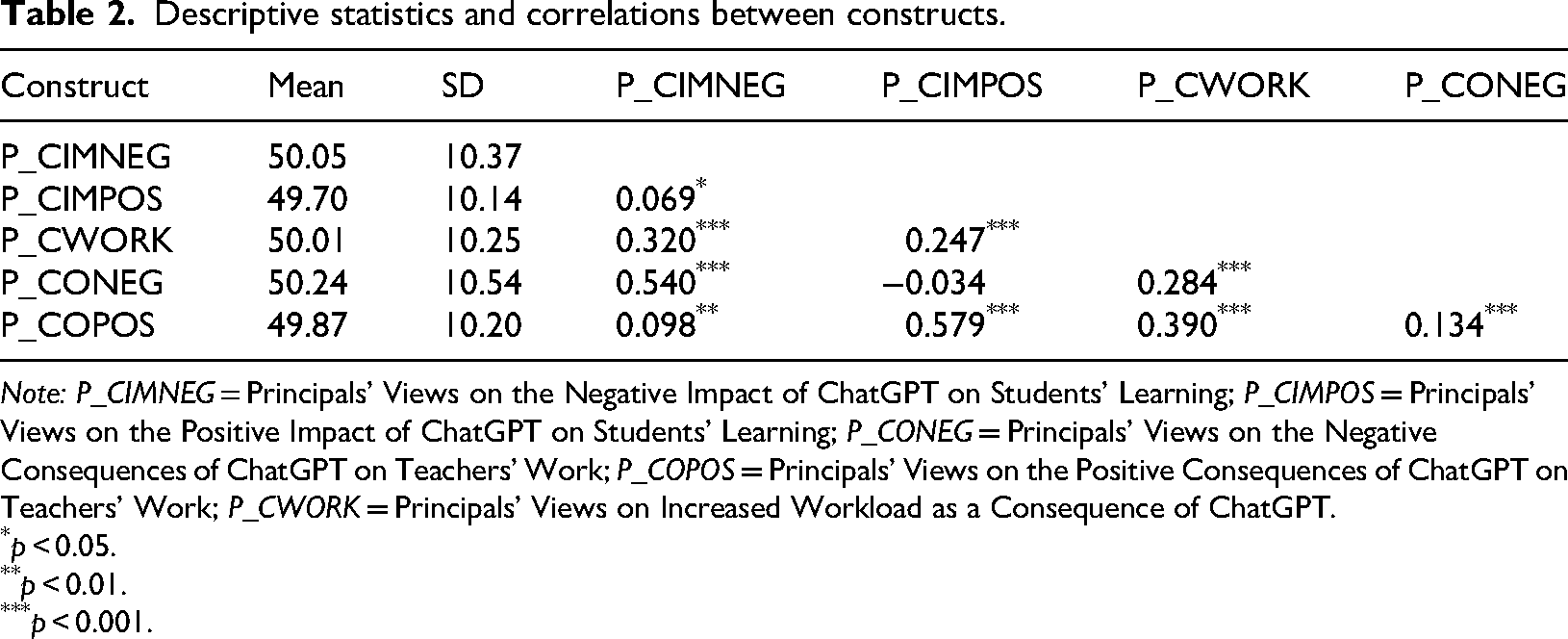

In Table 2, the findings reveal that principals’ views on the negative impact of ChatGPT on students’ learning (P_CIMNEG) and the negative consequences of ChatGPT on teacher work (P_CONEG) are strongly correlated (r = 0.540, p < 0.001), indicating that school leaders who perceive ChatGPT as harmful to student learning also tend to believe it negatively affects teachers’ work. Conversely, principals who recognize the positive impact of ChatGPT on students’ learning (P_CIMPOS) are also more likely to acknowledge its positive consequences for teachers’ work (P_COPOS), as indicated by a strong positive correlation (r = 0.579, p < 0.001). This suggests that principals’ attitudes toward ChatGPT's role in education tend to align across student and teacher experiences.

Table 2. Descriptive statistics and correlations between constructs.

Note: P_CIMNEG = Principals’ Views on the Negative Impact of ChatGPT on Students’ Learning; P_CIMPOS = Principals’ Views on the Positive Impact of ChatGPT on Students’ Learning; P_CONEG = Principals’ Views on the Negative Consequences of ChatGPT on Teachers’ Work; P_COPOS = Principals’ Views on the Positive Consequences of ChatGPT on Teachers’ Work; P_CWORK = Principals’ Views on Increased Workload as a Consequence of ChatGPT.

*p < 0.05; **p < 0.01; ***p < 0.001.

Furthermore, principals’ views on increased workload due to ChatGPT (P_CWORK) show significant positive correlations with both P_CIMNEG (r = 0.320, p < 0.001) and P_COPOS (r = 0.390, p < 0.001), implying that while some school leaders associate ChatGPT's integration with additional burdens, they may also recognize its potential benefits for teachers. Please see Supplementary Materials for each country's correlation results.

Interestingly, P_CIMPOS (positive impact on students) and P_CONEG (negative consequences for teachers) are not significantly correlated (r = −0.034). This suggests that school leaders’ positive expectations regarding students’ learning with AI do not necessarily imply lower concerns about potential negative effects on teachers’ work. Leaders may thus view impacts on students and teachers as relatively independent domains, which points to a nuanced perception of the technology's overall influence in education.

Latent network models (LNMs) results

The network visualization confirms that items cluster strongly according to the five predefined constructs. The red cluster (P_CIMNEG) shows that principals’ concerns about negative impacts on students’ learning are internally coherent and distinct from other domains. Similarly, the blue cluster (P_CIMPOS) forms a tight group, suggesting that positive expectations for students’ learning are perceived as a separate and consistent domain.

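The purple nodes (P_CWORK) are positioned centrally in the network, with multiple strong links to green (P_CONEG) and yellow (P_COPOS) items. This indicates that concerns about increased workload are closely tied to principals’ views of both the negative and the positive consequences of ChatGPT for teachers’ work.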

The relative separation between red (P_CIMNEG) and blue (P_CIMPOS) clusters indicates that principals clearly distinguish between benefits and risks for students. However, the moderate connections between student-focused and teacher-focused domains suggest that leaders still see these aspects as part of an interconnected system: perceptions of student outcomes influence, but do not fully determine, their views of teacher implications.

In short, the visualization demonstrates that principals’ perceptions are not random but organized in a structured way: clear clusters (validating the measurement model) combined with key bridging points (especially workload) that shape overall interpretations of AI in schools.

Cross-component relationships are represented by edges connecting nodes of different colors. These edges suggest interdependencies between components, such as the potential link between the negative consequences of ChatGPT on teachers’ work (P_CONEG, green) and the increased workload resulting from ChatGPT (P_CWORK, purple). The network reveals that while within-component relationships are generally stronger, there are meaningful cross-component connections, particularly between the positive and negative impacts of ChatGPT on students’ learning (P_CIMPOS and P_CIMNEG). These findings highlight the complex interplay of perceptions, where the impacts on students’ learning and teachers’ work are interrelated, emphasizing the need for a holistic approach to understanding and managing ChatGPT's role in education.

The overall model fit suggests a moderate to acceptable fit based on conventional fit criteria. The chi-square test is statistically significant (χ²(340) = 2082.433, p < 0.001), which is expected given the large sample size (N = 1134). The comparative fit index (CFI = 0.865) falls slightly below the conventional threshold of ≥0.90 for good fit, suggesting room for model improvement. The RMSEA (0.067, 90% CI: 0.064–0.070) indicates a reasonable fit, as values below 0.08 are generally considered acceptable. However, the SRMR (0.084) is slightly above the benchmark of ≤0.08 for a good fit, indicating some residual misfit. The factor loadings of IA3G08J (0.278) and IA3G09B (0.342) were low; after removing these items, the model met accepted fit thresholds (CFI = 0.905, RMSEA = 0.059, SRMR = 0.070). Overall, while the original model demonstrated reasonable fit, these refinements improved its comparative and absolute fit indices.
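As a plausibility check, the reported RMSEA can be approximately recovered from the chi-square statistic, its degrees of freedom, and the sample size. The Python sketch below assumes the common point-estimate formula with N − 1 in the denominator; software that uses N instead, or that applies survey weights or robust corrections, may give slightly different values. The function names are illustrative, and the cut-offs are those quoted in the text.

```python
import math


def rmsea(chi_square, df, n):
    """RMSEA point estimate: sqrt(max(chi2 - df, 0) / (df * (n - 1)))."""
    return math.sqrt(max(chi_square - df, 0.0) / (df * (n - 1)))


def meets_thresholds(cfi, rmsea_value, srmr):
    """Compare fit indices with the conventional cut-offs quoted in the text."""
    return {
        "CFI >= 0.90": cfi >= 0.90,
        "RMSEA < 0.08": rmsea_value < 0.08,
        "SRMR <= 0.08": srmr <= 0.08,
    }


# Values reported for the original model.
original_rmsea = rmsea(2082.433, 340, 1134)
original_fit = meets_thresholds(0.865, original_rmsea, 0.084)

# Values reported after removing IA3G08J and IA3G09B.
revised_fit = meets_thresholds(0.905, 0.059, 0.070)

print(f"RMSEA from chi-square: {original_rmsea:.3f}")
print("original:", original_fit)
print("revised: ", revised_fit)
```

Under these assumptions the recomputed RMSEA rounds to the reported 0.067, the original model passes only the RMSEA criterion, and the revised model passes all three.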

The latent network model reveals significant insights into principals’ perceptions of ChatGPT's impact across different domains. The latent variables (P_CIMNEG, P_CIMPOS, P_CONEG, P_COPOS, and P_CWORK) represent broad constructs related to the negative and positive impacts of ChatGPT on students’ learning, its consequences on teacher work, and increased workload (Figure 3). Each latent variable is connected to its respective observed indicators, with factor loadings indicating the strength of these relationships. For instance, P_CONEG demonstrates a strong connection with IA3G09N (loading = 0.90), signifying that this indicator significantly contributes to the negative consequences perceived for teacher work. Conversely, some indicators, such as those linked to P_COPOS, exhibit weaker loadings, suggesting they contribute less to the latent construct. These relationships validate the latent constructs while highlighting varying degrees of relevance among the observed variables.

Figure 3. Confirmatory latent network model of principals’ perceptions of ChatGPT. Note: P_CIMNEG = principals’ views on the negative impact of ChatGPT on students’ learning; P_CIMPOS = principals’ views on the positive impact of ChatGPT on students’ learning; P_CONEG = principals’ views on the negative consequences of ChatGPT on teachers’ work; P_COPOS = principals’ views on the positive consequences of ChatGPT on teachers’ work; P_CWORK = principals’ views on increased workload as a consequence of ChatGPT.

Additionally, the correlations between the latent variables provide insights into their interrelationships. Notably, P_CIMPOS (positive impacts on students) correlates moderately with P_CONEG (negative consequences for teachers) at 0.56, suggesting that principals who perceive positive impacts for students may also acknowledge challenges for teachers. Similarly, P_CIMPOS and P_CWORK (increased workload) exhibit a moderate correlation of 0.38, suggesting a connection between perceived benefits for students and concerns about workload. The model highlights overlap in principals’ perceptions across domains, indicating that the effects of ChatGPT on one aspect of education may also influence perceptions in other areas. The strong associations and moderate correlations provide a robust basis for further exploration of these interconnected perceptions. These findings emphasize the importance of addressing both the benefits and challenges of integrating AI tools, such as ChatGPT, in educational settings.

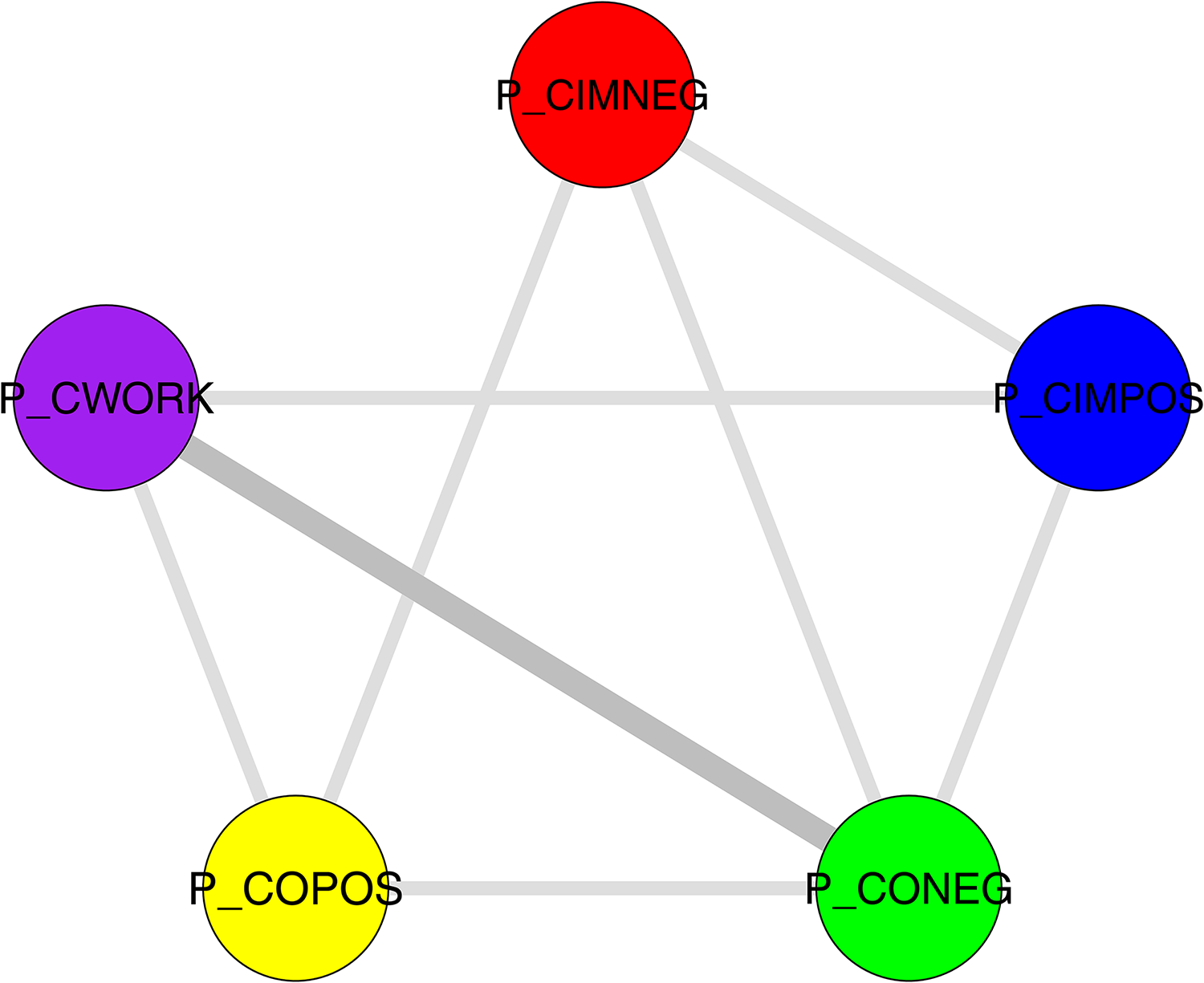

This network visualization highlights the relationships between principals’ perceptions of ChatGPT's impact across five key domains: negative and positive impacts on students, negative and positive consequences for teachers, and increased workload (Figure 4). The thicker edge between “Increased workload” and “Negative consequences for teachers” indicates a strong perceived link, suggesting that additional workload is closely associated with adverse effects on teachers. Meanwhile, the relationships between “Negative impact on students” and other constructs are weaker, showing a relatively independent perception of its effects. The visualization emphasizes that workload serves as a central factor connecting perceptions of ChatGPT's impacts on students and teachers, underlining its pivotal role in shaping overall attitudes toward its integration in education.

Figure 4. Diagram of network connections between key constructs on ChatGPT's impact. Note: P_CIMNEG = principals’ views on the negative impact of ChatGPT on students’ learning; P_CIMPOS = principals’ views on the positive impact of ChatGPT on students’ learning; P_CONEG = principals’ views on the negative consequences of ChatGPT on teachers’ work; P_COPOS = principals’ views on the positive consequences of ChatGPT on teachers’ work; P_CWORK = principals’ views on increased workload as a consequence of ChatGPT.

The central position of increased workload in the network suggests that concerns about additional demands on teachers may serve as a key lens through which school leaders interpret the broader impacts of AI integration. Increased workload is connected to both negative consequences for teachers and positive perceptions of AI benefits, indicating that even when principals recognize potential advantages, they simultaneously anticipate additional burdens on teaching staff. This suggests that practical implementation concerns may strongly shape leadership attitudes toward AI.

Our cross-country heterogeneity analysis helps contextualize the substantive findings. The I² heatmap (Figure 5) indicates that most edges are remarkably stable across systems: 88.4% fall below the low-heterogeneity threshold (I² < 25%), suggesting that core relationships (e.g. IA3G08A–IA3G08C, IA3G08C–IA3G08E) are broadly generalizable. In other words, most associations show little or no variation between countries and can inform guidance for school leaders and teachers across diverse contexts. By contrast, a small subset of edges (4.3%) exhibits substantial heterogeneity (I² ≥ 50%). For example, the link between IA3G09L and IA3G09M varies meaningfully across countries, indicating that the perceived connection between potential societal benefits and risks of generative AI depends strongly on national context. This underscores the influence of cultural or institutional factors on how these constructs relate. Practically, our findings suggest that systems can confidently act on stable edges (e.g. reinforcing consistently linked practices), while heterogeneous edges should be treated as targets for local analysis and policy adaptation. We highlight these areas as priorities for moderator analyses (e.g. region, average school SES, accountability regimes). Such variation is a natural feature of cross-national research and shows where tailored, context-sensitive interventions are more appropriate than one-size-fits-all recommendations.

Figure 5. Cross-national variability in item–item relations (I² heatmap); white = no connection observed.

Discussion

Despite the increasing body of research on AI in education, studies focusing on its role in educational leadership remain scarce. Leveraging a large-scale international survey, the first to capture school principals’ perceptions of AI, our study examines the following research questions: (R1) Do school leaders’ perceptions of AI form a coherent, empirically inseparable construct? (R2) How are school leaders’ expectations regarding AI's impact on students and teachers interrelated? (R3) How do school leaders balance perceived benefits for students with potential challenges for teachers (and vice versa) in the context of AI integration?

Regarding R1, our findings demonstrate that school leaders’ perceptions of AI in schools generally form empirically distinguishable constructs. The network visualization shows that variables within the same component, such as negative impacts on students (P_CIMNEG) or increased workload (P_CWORK), tend to form distinct clusters. This clustering indicates strong within-component relationships and validates the coherence of principals’ perceptions within these domains. For example, the strong internal coherence in P_CWORK underscores the consensus among principals regarding the impact of ChatGPT on workload. This finding thus only partially aligns with the results of Scherer et al. (2020): school leaders’ expectations regarding AI, while conceptually distinct and empirically distinguishable, are nevertheless closely interconnected, forming a practically inseparable set of perceptions in school leaders’ everyday practice.

Regarding R2, we found that the investigated constructs are indeed interrelated, with some showing stronger relationships than others, highlighting interdependencies across the components. Thus, cross-component edges in the network reveal meaningful interdependencies between domains, particularly between “Increased workload” (P_CWORK) and “Negative consequences for teachers” (P_CONEG). This connection suggests that principals view additional workload as a significant driver of adverse effects on teachers’ work. Similarly, the moderate correlation between “Positive impacts on students” (P_CIMPOS) and “Increased workload” (P_CWORK) highlights the complexity of perceptions, where concerns about teacher burden may accompany potential benefits for students. These findings clearly indicate that, when it comes to AI, principals adopt a whole-school perspective that takes all stakeholder groups into account, while viewing teachers and teaching as key mediators influencing students. This suggests that principals conceptualize their own leadership impact in terms of an indirect effects model (Ninković and Knežević Florić, 2024), whereby their actions influence teachers and instruction, which in turn shape student outcomes.

In terms of R3, the results indicate that school leaders adopt a balanced perspective on the benefits and challenges of AI. In essence, the latent network model reveals a nuanced perspective among principals, recognizing both the positive impacts of ChatGPT on students (P_CIMPOS) and the challenges it poses for teachers (P_CONEG and P_CWORK). The interplay between these domains emphasizes the need for a balanced approach to integrating ChatGPT in education, ensuring that the benefits for students do not come at the expense of increased teacher workload or negative outcomes for educators. Prior research on educational innovation (Prenger et al., 2022) and technology leadership in schools (Dexter and Richardson, 2020) highlights that sustainable implementation depends on school leaders’ ability to balance the demands of innovation with the professional workload of teachers, since instructional quality fundamentally relies on teachers’ capability to effectively adapt to and integrate new technologies. Consequently, these findings highlight the crucial role of leadership practices that promote the benefits of AI integration for students while safeguarding teachers’ capacity and well-being through proactive prevention of technostress (Pothugsanti, 2024).

What is additionally striking is the network centrality of the item “The use of [ChatGPT or similar tools] by students will make it difficult for teachers to judge whether or not the work submitted by students is their own” and its connectedness with the expected negative impacts on students (P_CIMNEG), increased workload for teachers (P_CWORK), and the expected negative consequences for teachers (P_CONEG). This underscores school leaders’ concern that AI-related cheating and plagiarism are central factors driving negative consequences for both students and teachers (Kovari, 2025; Lee et al., 2024). While the network visualization highlights the central role of increased workload, it is essential to note that the current latent network model does not formally test moderation effects. Exploring the potential moderating role of this construct would be a valuable direction for future research.

Finally, based on TAM (Davis, 1986), it is possible to argue that while principals recognize AI's potential to enhance learning outcomes, their simultaneous concern about teacher workload may create resistance to adoption (Marikyan et al., 2023; Scherer et al., 2019). Because they perceive these technologies as burdensome for teachers and a potential source of cheating, they might be less willing to use them and support their use in school. Our results therefore suggest that AI-induced workload concerns need to be explicitly incorporated into technology acceptance frameworks for school leaders. We propose an extended TAM-based model in which external factors (e.g. infrastructure, support, training) influence perceived usefulness and ease of use, but where workload mitigation factors act as a critical mediator shaping attitudes and behavioral intention toward AI adoption. This responds to Bagozzi's (2007) critique by embedding contextual variables, particularly workload, into the TAM structure, thereby aligning technology acceptance with leadership practice. This theoretical integration would position school leaders’ technology acceptance as a pivotal mediator in AI adoption within schools.

Limitations

In this study, we aimed to explore the latent network structure of principals’ views on ChatGPT using a latent network modeling approach. While our analysis provided insights into the relationships among key constructs, we acknowledge several limitations.

First, due to sample size constraints within individual countries, we were unable to conduct a formal multi-group latent network analysis (MG-LNM). A multi-group approach would have allowed us to statistically compare whether the network structures were invariant across countries. Future studies with larger, balanced samples across countries should aim to assess cross-national measurement invariance in latent network structures.

Second, although our study assesses network connectivity and structural relationships, it does not account for potential contextual differences (e.g. educational policies, digital infrastructure, or principals’ experience with AI tools), which may influence the interpretation of ChatGPT's impact. Future research could integrate contextual variables to better understand how these factors shape the structure of principals’ perceptions.

Third, psychometric network models generally face difficulties in capturing non-linear relationships between variables and constructs (Slipetz et al., 2024). Especially in the context of large-scale assessment data, as used in this study, validating findings by applying machine learning approaches (Aydin et al., 2025) and using items instead of aggregated scale scores (McClure et al., 2024) appears to be a promising strategy to investigate such associations, since both approaches model relationships directly from the data rather than relying on prior theoretical assumptions (Pietsch et al., 2025a).

A further limitation of this study concerns the scope of our cross-national analysis. National contexts are likely to play a significant role in shaping how AI is perceived and implemented in schools. However, the aim of this study was not to compare countries or explain results based on contextual differences, but rather to provide an overall picture of how school principals initially perceived AI when it was introduced. While this broad perspective offers valuable insights into emerging perceptions, it does not capture the full diversity of national contexts. Future research should therefore engage more deeply with country-level variations to better understand context-specific dynamics.

Finally, our study relies on cross-sectional data, meaning that our findings capture network structures at a single point in time. Longitudinal analyses could provide deeper insights into how these relationships evolve as AI-driven technologies, such as ChatGPT, become more integrated into educational settings.

Despite these limitations, our study provides a novel application of latent network analysis in the field of educational leadership and technology adoption. It highlights the need for further methodological advancements and cross-national comparisons to deepen our understanding of the evolving role of AI in education.

Conclusion, implications, and future research

Based on the data collected in the context of ICILS 2023 on generative AI from the perspective of school leaders, we found that principals worldwide have a nuanced understanding of the topic and carefully weigh its pros and cons. Nevertheless, concerns about potential negative consequences appear to prevail, with potential plagiarism emerging as a particularly pressing issue for school leaders. This means that they are aware of some ethical issues and challenges associated with these technologies, as discussed in previous research (Adams and Thompson, 2025; Cheng and Wang, 2023; Kesim et al., 2025). From the TAM perspective (Davis, 1986), these negative perceptions could be significant barriers to its usage by school leaders. However, such perceptions are reasonable, as most AI tools available today had not yet been introduced when the ICILS 2023 survey was conducted, nor had extensive knowledge about AI's benefits in schools been widely established. As principals develop a more in-depth understanding and acquire more skills concerning AI and scholars develop strategies to address some of these concerns, their openness to its adoption and potential advantages in education may increase in the future (Marrone et al., 2025).

Considering future research, network psychometrics remains a promising lens for unpacking complex psychological systems (Borsboom, 2022). In light of the international large-scale assessment data we analyze—and the meta-analytic Gaussian network aggregation (MAGNA; Epskamp et al., 2022) that we applied here—this framework is particularly advantageous for extending cross-national evidence. Building on our MAGNA results, subsequent work can (i) conduct IPD network meta-analyses (Brunner et al., 2023; Campos et al., 2023; Pietsch et al., 2023), widely regarded as the gold standard in evidence synthesis (Debray et al., 2015); (ii) test moderators (e.g. policy regimes, resource levels) to explain edge heterogeneity; (iii) examine temporal stability by aggregating networks across assessment cycles; and (iv) integrate measurement diagnostics (e.g. alignment/approximate invariance) to ensure that cross-system differences in edges reflect substantive variation rather than scale artefacts. Together, these steps would turn network-based synthesis from a one-off analysis into a cumulative, policy-relevant evidence base.

In this study, we focused on principals’ perceptions regarding the impact of AI on students and teachers; however, these perceptions are not formed in isolation. As Bagozzi (2007) argues, one of the key limitations of dominant models like TAM is their failure to theorize the origins and determinants of perceptions. Similarly, the broader institutional context, such as centralized governance structures, high-stakes accountability, and competing stakeholder interests, as highlighted by Reyes (2021), may significantly influence how school leaders perceive and respond to technological innovations. Therefore, we suggest that future research should move beyond measuring acceptance to examine the structural, social, emotional, and cultural factors that shape principals’ attitudes toward AI.

At the same time, it seems important to investigate the extent to which school leaders’ expectations and attitudes promote or hinder the use of AI in schools, as several studies found that how leaders think about such topics opens or closes opportunities and possibilities (McCarthy et al., 2023; Pietsch and Mah, 2024; Witthöft et al., 2024). Given the prevailing negative perceptions, our study calls for policymakers to develop appropriate professional learning opportunities through established programs or network activities that can help leaders enhance their perceived usefulness and ease of use, as well as address potential biases regarding AI integration. In particular, we consider it important to focus on schools’ ability to identify, interpret, and make use of AI-related knowledge (Fischer-Schöneborn et al., 2025), as well as the microfoundations of AI implementation in schools (Pietsch et al., 2025b; Witthöft et al., 2025), that is, how individual actions and interactions shape collective practices of implementing and using AI within schools. For example, introductory workshops on AI in education, certified courses on digital leadership, collaborative AI exploration groups, or hands-on training activities with AI tools for school management could help principals develop a better understanding and more positive perceptions of AI integration.

At the policy level, our findings underscore the need for systemic strategies to mitigate workload. For instance, some education systems have explored models that provide teachers with dedicated time for professional learning in digital competencies, which could serve as inspiration for integrating AI training more systematically. Similarly, Singapore has introduced an ‘AI for Leaders’ program focusing on managing workload through digital tools (Adams and Thompson, 2025). At the school level, leaders can adopt redesigned workflows that automate administrative tasks (e.g. AI-assisted formative assessment) and provide targeted training to increase perceived ease of use (e.g. lesson planning support).

For practitioners, we encourage school leaders to engage in ongoing professional development to build foundational AI literacy and develop an understanding of common misconceptions about its use in education. We also recommend that leaders reflect on their own attitudes and potential biases, as these shape the broader school response to innovation.

Supplemental Material

sj-docx-1-ema-10.1177_17411432251401487 - Supplemental material for School leaders’ expectations of AI’s impact on students and teachers: Insights from ICILS 2023

Supplemental material, sj-docx-1-ema-10.1177_17411432251401487 for School leaders’ expectations of AI’s impact on students and teachers: Insights from ICILS 2023 by Nurullah Eryilmaz, Dana-Kristin Mah, Mehmet Şükrü Bellibaş and Marcus Pietsch in Educational Management Administration & Leadership

Footnotes

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work is supported by the Alexander von Humboldt Foundation through a Senior Researcher Fellowship to Mehmet Şükrü Bellibaş (TUR—1227776-HFST-E) and by the German Research Foundation (DFG) through a DFG Heisenberg Professorship and project funding to Marcus Pietsch (451458391, 531146345).

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Supplemental material

Supplemental material for this article is available online.

Appendix

Item wording (students’ learning): How likely is it that the use of [ChatGPT or similar tools] will result in the outcomes listed below, with respect to students’ learning at your school?

Item wording (teachers’ work; R09, Table 4.39.1): How likely is it that the use of [ChatGPT or similar tools] will have the following consequences on the work of teachers at your school?

Response scale for all items: 0 = Not likely at all; 1 = Somewhat unlikely; 2 = Somewhat likely; 3 = Very likely

References
