Abstract

The U.S. Food and Drug Administration launched Project Optimus with the aim of shifting the paradigm of dose-finding and selection toward identifying the optimal biological dose that offers the best balance between benefit and risk, rather than the maximum tolerated dose. However, achieving dose optimization is a challenging task that involves a variety of factors and is considerably more complicated than identifying the maximum tolerated dose, both in terms of design and implementation. This article provides a comprehensive review of various design strategies for dose-optimization trials, including phase 1/2 and 2/3 designs, and highlights their respective advantages and disadvantages. In addition, practical considerations for selecting an appropriate design and planning and executing the trial are discussed. The article also presents freely available software tools that can be utilized for designing and implementing dose-optimization trials. The approaches and their implementation are illustrated through real-world examples.

Background

The conventional phase 1 dose-finding paradigm was developed during the era of cytotoxic therapies with the primary goal of identifying the maximum tolerated dose (MTD) based on dose-limiting toxicity (DLT). The underlying assumption of this more-is-better approach is that both efficacy and toxicity increase monotonically with the dose. However, concerns have been raised regarding the appropriateness of using this approach in the age of targeted therapies and immunotherapies.1–4 Many of these innovative therapies exhibit a shallow dose–response, meaning that the MTD may not be reached within a clinically effective dose range. Furthermore, efficacy may not increase monotonically with the dose and often reaches a plateau after a certain level is reached.5,6 Consequently, the MTD may provide minimal improvements in efficacy over a lower dose, while causing more adverse events (AEs). For these reasons, the focus of dose-finding and selection should be shifted from finding the MTD to the identification of the optimal biological dose (OBD).

In 2021, the U.S. Food and Drug Administration (FDA) Oncology Center of Excellence launched Project Optimus with the goal of reforming the dose optimization and selection paradigm in oncology drug development. 7 To facilitate this shift, the FDA also released draft guidance titled “Optimizing the Dosage of Human Prescription Drugs and Biological Products for the Treatment of Oncologic Diseases.” 8 Zirkelbach et al. 6 and Shah et al. 3 have offered valuable insights into the rationale, significance, and principles of dose optimization, along with a few drug approval examples from a regulatory agency perspective. Poor dose optimization has several negative consequences, such as failure to bring a drug to market, frequent dose modifications at the approved dose, and postmarketing requirements to further evaluate the dose. Shah et al. 3 provide examples of approved drugs whose dose was modified for safety or tolerability after approval.

Dose-optimization trials are more complex than conventional MTD-finding trials. 9 The latter mainly focuses on DLT and is guided by a simple decision rule: if the observed data suggest that the DLT probability of the current dose is unacceptably higher (or lower) than the target DLT rate, we de-escalate (or escalate) the dose. In contrast, dose-optimization trials are inherently multidimensional. By definition, they require the characterization and assessment of the benefit–risk of the doses, which involves various data, including toxicity and efficacy data, as well as pharmacokinetics, pharmacodynamics, and biomarker data. The decision of dose transition and selection must be based on the benefit–risk assessment of the doses. This increased dimensionality complicates the trial design, decision rule, and their implementation, and often requires larger sample sizes. As a result, it likely increases costs and prolongs the timeline for early phase drug development. Therefore, it is critically important to have efficient and novel statistical design strategies to address these challenges and meet the increasing regulatory requirements for oncology dose justification.

The aim of this article is to provide statistical and practical considerations related to the planning and execution of dose-optimization trials. To facilitate understanding, we classify dose-optimization trials conducted premarket into two types: phase 1/2 dose optimization, where dose optimization is performed in phase 1 and/or 2, and phase 2/3 dose optimization, where dose optimization is performed in phase 2 and/or 3. In what follows, we discuss the methods, challenges, and practical considerations for designing and implementing both types of trials. We also provide real-world trial examples to illustrate key considerations. Finally, we conclude with a brief discussion.

Phase 1/2 dose optimization

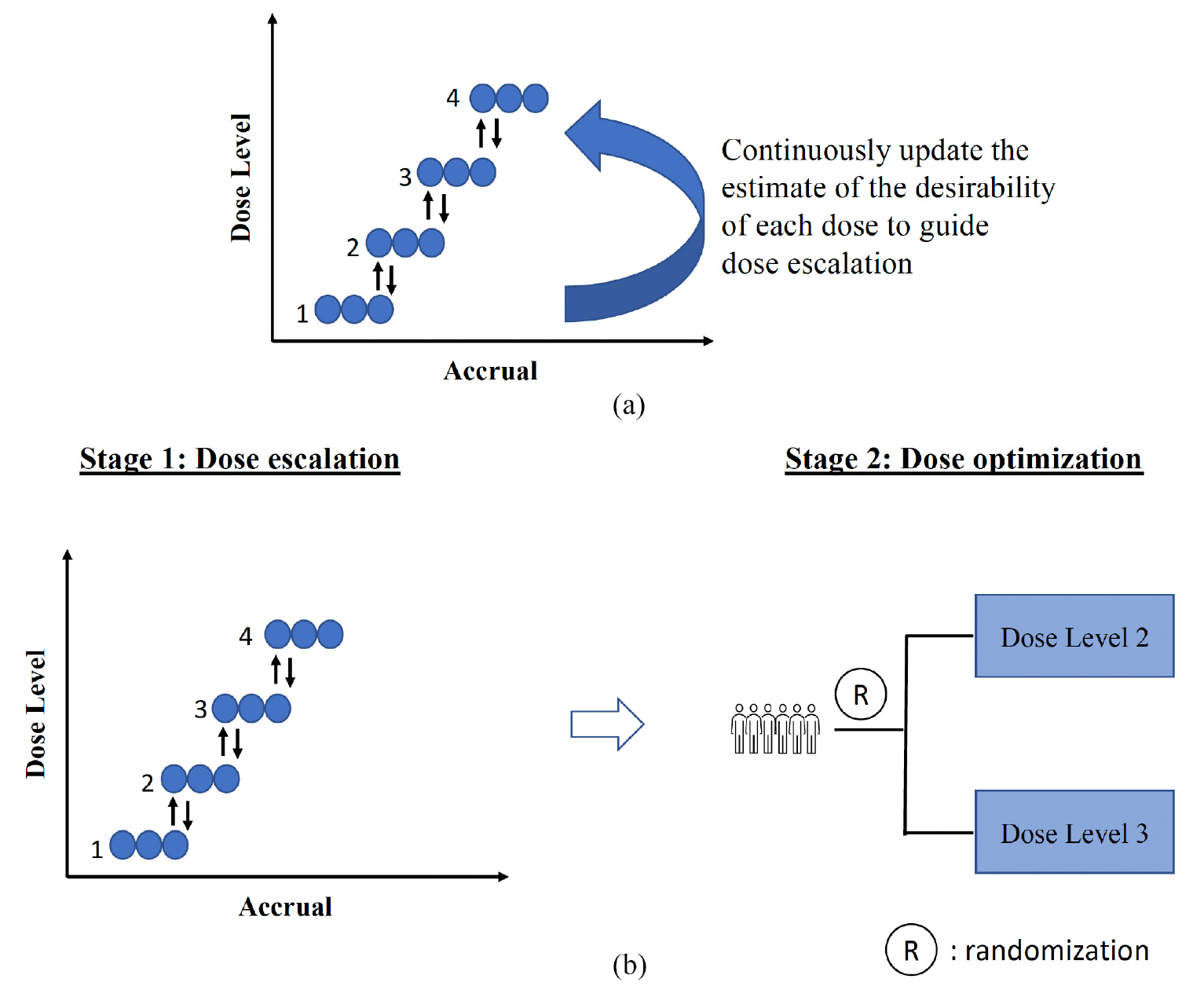

The topic of dose optimization has recently been propelled into the spotlight with the advent of Project Optimus, but the concept of finding the optimal dose based on a consideration of both risk and benefit is not a new one.10–12 Since Thall and Cook’s seminal work on the EffTox design, 13 a plethora of designs have been proposed for optimizing doses in phase 1/2 settings14–25 (see the book of Yuan et al. 9 for a comprehensive review). To aid understanding of this vast and ever-increasing number of designs and provide a roadmap for future development, we categorize them as efficacy-integrated designs and two-stage designs (Figure 1).

Figure 1. (a) Efficacy-integrated designs, which achieve dose optimization by continuously updating the estimate of the benefit–risk trade-off of each dose, based on the most recent data, to guide dose escalation, de-escalation, and selection. (b) Two-stage designs, where stage 1 dose escalation is performed to establish the MTD, and stage 2 conducts dose optimization often by randomization in multiple doses identified in stage 1. MTD: maximum tolerated dose.

Efficacy-integrated phase 1/2 designs, also known as fully sequential phase 1/2 designs, directly target the OBD by performing dose escalation or de-escalation based on the benefit–risk trade-off. The decision to escalate or de-escalate the dose is typically made by continuously updating the dose–toxicity and dose–efficacy model estimates based on interim data, in a similar fashion as the continual reassessment method. 26 In contrast, two-stage phase 1/2 designs first identify the MTD through conventional DLT-based dose escalation and then randomize patients among multiple doses to identify the OBD based on risk–benefit assessment. Examples of efficacy-integrated phase 1/2 designs include EffTox 13 and Bayesian optimal interval phase 1/2 (BOIN12) design, 25 while examples of two-stage phase 1/2 designs include the design by Hoering et al., 27 utility-based Bayesian optimal interval (U-BOIN) design 28 and dose-ranging approach to optimizing dose (DROID). 29 It is important to note that the purpose of differentiating the two classes is to aid understanding and not to provide a definitive definition. A design may incorporate features of both approaches. For instance, a design may use a benefit–risk trade-off to perform dose escalation while also including randomization.30,31

In the following sections, we first describe some essential elements of phase 1/2 designs, including efficacy and toxicity endpoints and benefit–risk trade-off, and then provide a more detailed description of efficacy-integrated and two-stage design strategies.

Efficacy and toxicity endpoints

Dose optimization involves assessing the risks and benefits of a new drug, which requires specifying efficacy and toxicity endpoints to characterize its potential outcomes. An example of a toxicity endpoint is DLT, which is often defined as a grade 3 or higher AE that occurs within the first treatment cycle according to the Common Terminology Criteria for Adverse Events. However, DLTs may not be sufficient to fully characterize the safety and tolerability of many novel targeted drugs. These drugs often cause low-grade toxicity but rarely result in DLTs. In such cases, more comprehensive toxicity endpoints that account for low-grade or cumulative toxicity, such as ordinal toxicity endpoint, 32 total toxicity burden,33–38 or equivalent toxicity score,37,38 may be preferred. To quantify the safety profile of the drug when cumulative toxicity is expected, the dose tolerability rate over multiple cycles can also be used. 39 The resulting toxicity endpoint can be binary, categorical, semicontinuous, or continuous. 38 Each type of toxicity endpoint has its advantages and limitations and should be chosen carefully with both clinical and statistical inputs. Alternatively, multiple constraints can be used to control different grades of toxicity at prespecified levels.40,41

Efficacy endpoints should be chosen based on clinical and logistical considerations. For early phase trials, objective response rate along with the duration of response is commonly used. However, the response often takes several cycles to be ascertained, which causes major logistical difficulties in making real-time dose assignment decisions. In this case, intermediate short-term endpoints, such as pharmacodynamic endpoints or target receptor occupancy, may be used instead. Alternatively, new designs are available to handle delayed responses.19,42,43 A potential issue with using short-term endpoints is that they may not be a reliable surrogate of clinical endpoints. To address this, one approach is to use short-term endpoints to guide real-time dose assignment decisions during the trial and then use the long-term clinical endpoint at the end of the trial to identify the OBD. 29 In some situations, more than one efficacy endpoint can be used to capture the multifaceted effects of the drug and improve design efficiency. It is more convincing if different pieces of evidence, such as pharmacokinetics, pharmacodynamics, and efficacy, point to the same dose. For instance, Agrawal et al. 44 considered receptor occupancy, pharmacokinetic parameters, and tumor shrinkage jointly for nivolumab dose selection. In some trials, patient-reported outcomes, such as quality of life, are important for determining the OBD.

Benefit–risk trade-off

The goal of dose optimization is to find the dose that achieves the optimal balance between benefit and risk. However, in current practice, the benefit–risk trade-off is rarely explicitly defined or used to guide dose optimization and selection. We believe that explicitly defining the benefit–risk trade-off, tailored to the trial’s objectives and characteristics, is advantageous and should be more widely adopted. By doing so, investigators can evaluate and fine-tune the design’s operating characteristics, resulting in more efficient dose optimization.

A straightforward method to define the benefit–risk trade-off is by considering the trade-off between the probability of efficacy and toxicity of a dose. 21

For example, benefit–risk trade-off

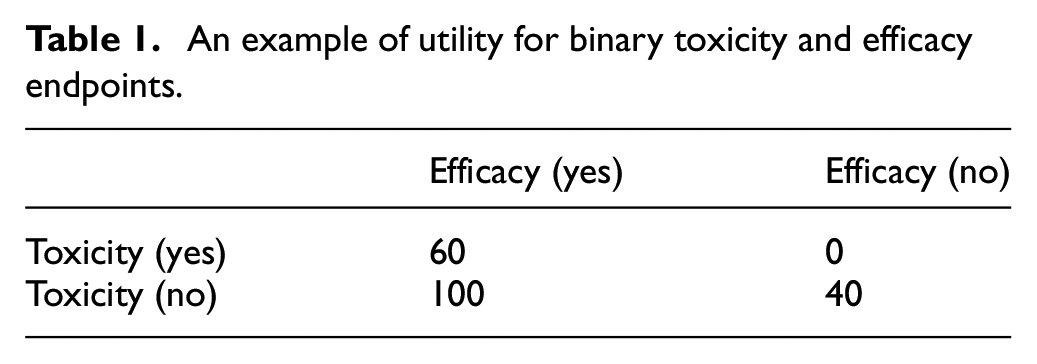

A more versatile and broadly applicable method of defining the benefit–risk trade-off is through utility,22,25,30,45,46 where clinicians are asked to provide utility scores for each potential patient-level outcome, thus quantifying the desirability of doses. For instance, with binary toxicity and efficacy endpoints, four potential outcomes exist for each patient: (efficacy, toxicity) = (yes, no), (yes, yes), (no, no), and (no, yes). We assign a score of 100 to the most desirable outcome (yes, no) and a score of 0 to the least desirable outcome (no, yes). For the remaining two outcomes, we elicit the scores from clinicians; for example, scores of 60 and 40 may be assigned to (yes, yes) and (no, no), respectively (see Table 1). The benefit–risk trade-off of a dose is then defined as the average of these scores, weighted by the probability of each potential outcome. That is, with the scores above, utility = 100 × Pr(yes, no) + 60 × Pr(yes, yes) + 40 × Pr(no, no) + 0 × Pr(no, yes).

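Table 1. An example of utility for binary toxicity and efficacy endpoints.

| | Efficacy: yes | Efficacy: no |
|---|---|---|
| Toxicity: no | 100 | 40 |
| Toxicity: yes | 60 | 0 |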

The utility-based benefit–risk trade-off is often a better option than the probability-based benefit–risk trade-off discussed earlier since clinicians typically have a better grasp of the relative desirability of patient outcomes than probabilities. Furthermore, research has shown that the utility approach encompasses the efficacy-and-toxicity-probability-based benefit–risk trade-off as a special case.25,28,47
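As a minimal sketch of the utility calculation, the snippet below computes the mean utility of a dose from the joint probabilities of the four (efficacy, toxicity) outcomes, using the example scores of 100, 60, 40, and 0. The function name `mean_utility` and the two sets of outcome probabilities are hypothetical and chosen only for illustration.

```python
# Utility scores for each (efficacy, toxicity) outcome, as in the example above.
UTILITY = {
    ("yes", "no"): 100,   # efficacy without toxicity: most desirable
    ("yes", "yes"): 60,   # efficacy with toxicity
    ("no", "no"): 40,     # neither efficacy nor toxicity
    ("no", "yes"): 0,     # toxicity without efficacy: least desirable
}


def mean_utility(outcome_probs, utility=UTILITY):
    """Average the utility scores, weighted by the probability of each outcome."""
    total = sum(outcome_probs.values())
    if abs(total - 1.0) > 1e-9:
        raise ValueError("outcome probabilities must sum to 1")
    return sum(utility[outcome] * p for outcome, p in outcome_probs.items())


# Hypothetical joint outcome probabilities for a lower and a higher dose.
low_dose = {("yes", "no"): 0.35, ("yes", "yes"): 0.05,
            ("no", "no"): 0.55, ("no", "yes"): 0.05}
high_dose = {("yes", "no"): 0.30, ("yes", "yes"): 0.20,
             ("no", "no"): 0.30, ("no", "yes"): 0.20}

print(round(mean_utility(low_dose), 2))
print(round(mean_utility(high_dose), 2))
```

Under these hypothetical probabilities, the lower dose has the higher mean utility (about 60 versus 54) even though the higher dose has the higher efficacy probability (0.50 versus 0.40), because its toxicity probability is also much higher (0.40 versus 0.10).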

The use of a benefit–risk trade-off to guide dose optimization may raise some concerns. The first concern is that the benefit–risk trade-off is subjective. However, we consider the need for elicitation from trial clinicians as a strength. This process encourages clinicians to carefully consider the benefit–risk trade-off and design the study accordingly, reducing subjectivity and variability. Leaving the decision process unspecified actually leads to more subjectivity and variability. In addition, specifying the benefit–risk trade-off enables the evaluation of the design’s operating characteristics through simulation, enhancing understanding and providing opportunities to improve the design before the trial begins. Finally, research indicates that many designs are quite robust to the specification of benefit–risk trade-off. To reduce the subjectivity of benefit–risk trade-off elicitation, open communication and active efforts among stakeholders to reach a consensus are recommended. Seeking regulatory input early on during the design stage is also essential.

The second common concern regarding the use of benefit–risk trade-off for dose optimization is that the benefit and risk of a treatment are multidimensional, making it challenging to capture all aspects with a single benefit–risk trade-off. However, it is important to note that the benefit–risk trade-off is primarily defined to facilitate and enhance the efficiency of the adaptive decision-making process for dose assignment and evaluation of the trial design’s operating characteristics. Ultimately, the final decision for dose selection, particularly at the end of the trial, should be based on both the design recommendation, which is determined by the prespecified benefit–risk trade-off, and the totality of the evidence.

Efficacy-integrated designs

The efficacy-integrated design is characterized by its use of toxicity and efficacy, which are combined simultaneously through the use of benefit–risk trade-off, to guide dose escalation and de-escalation, and ultimately determine the OBD (Figure 1(a)). This approach assumes a statistical model to update the dose–toxicity and dose–efficacy relationship based on interim data. This information is then utilized to make adaptive dose assignment decisions that prioritize doses with high benefit–risk trade-off for treating the next cohort of patients. The efficacy-integrated design operates similarly to the continual reassessment method, but uses the estimate of benefit–risk trade-off rather than DLT to make dose transition decisions. For ease of explanation, the terms benefit–risk trade-off and desirability are used interchangeably. Depending on the method and level of complexity of implementation, efficacy-integrated designs can be further differentiated into model-based designs and model-assisted designs.

Model-based designs

The model-based design assumes a statistical model to depict the dose–toxicity and dose–efficacy curves, which are used to guide dose transition. Examples of model-based designs include EffTox 13 and late-onset EffTox designs. 19 EffTox assumes the following logistic marginal dose–toxicity and dose–efficacy models:

Model-assisted designs

Model-assisted designs have been developed to address the limitations of model-based designs.48,49 Unlike model-based approaches, model-assisted approaches use simple models, such as binomial or multinomial models, at each dose, without assuming any specific shape for the dose–toxicity or dose–efficacy curves. As a result, the decision rule can be derived and tabulated before the trial begins. During the trial, users can simply consult the decision table to make dose assignment decisions. The book by Yuan et al. 49 provides a comprehensive review of model-assisted designs. Due to their simplicity and desirable operating characteristics, model-assisted designs have gained popularity in recent years. For instance, the Bayesian optimal interval (BOIN) design, 50 a model-assisted design, received the fit-for-purpose designation from the FDA as a tool for dose-finding in oncology. 51
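To illustrate how a model-assisted decision rule can be tabulated before the trial starts, the sketch below builds an escalation/de-escalation table for a DLT-based interval design. The boundary formulas are the ones commonly given for the BOIN design with default values φ1 = 0.6φ and φ2 = 1.4φ. They are not stated in this article and are an assumption here, as are the function names and the target DLT rate of 0.30. Dose-elimination rules are omitted.

```python
import math


def boin_boundaries(target, phi1=None, phi2=None):
    """Return (lambda_e, lambda_d), the escalation and de-escalation
    boundaries for the observed DLT rate (assumed BOIN-style formulas)."""
    phi1 = 0.6 * target if phi1 is None else phi1  # DLT rate regarded as too low
    phi2 = 1.4 * target if phi2 is None else phi2  # DLT rate regarded as too high
    lam_e = math.log((1 - phi1) / (1 - target)) / math.log(
        target * (1 - phi1) / (phi1 * (1 - target)))
    lam_d = math.log((1 - target) / (1 - phi2)) / math.log(
        phi2 * (1 - target) / (target * (1 - phi2)))
    return lam_e, lam_d


def decision(n_treated, n_dlt, lam_e, lam_d):
    """Compare the observed DLT rate at the current dose with the boundaries."""
    rate = n_dlt / n_treated
    if rate <= lam_e:
        return "escalate"
    if rate >= lam_d:
        return "de-escalate"
    return "stay"


lam_e, lam_d = boin_boundaries(target=0.30)
print(f"lambda_e = {lam_e:.3f}, lambda_d = {lam_d:.3f}")

# Pretabulate the decision for every possible DLT count at 3, 6, and 9 patients.
for n in (3, 6, 9):
    row = [decision(n, k, lam_e, lam_d) for k in range(n + 1)]
    print(n, row)
```

Because the boundaries depend only on the target DLT rate, the whole table can be printed into the protocol; for a target of 0.30, for example, one DLT among six patients leads to escalation and three among six to de-escalation.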

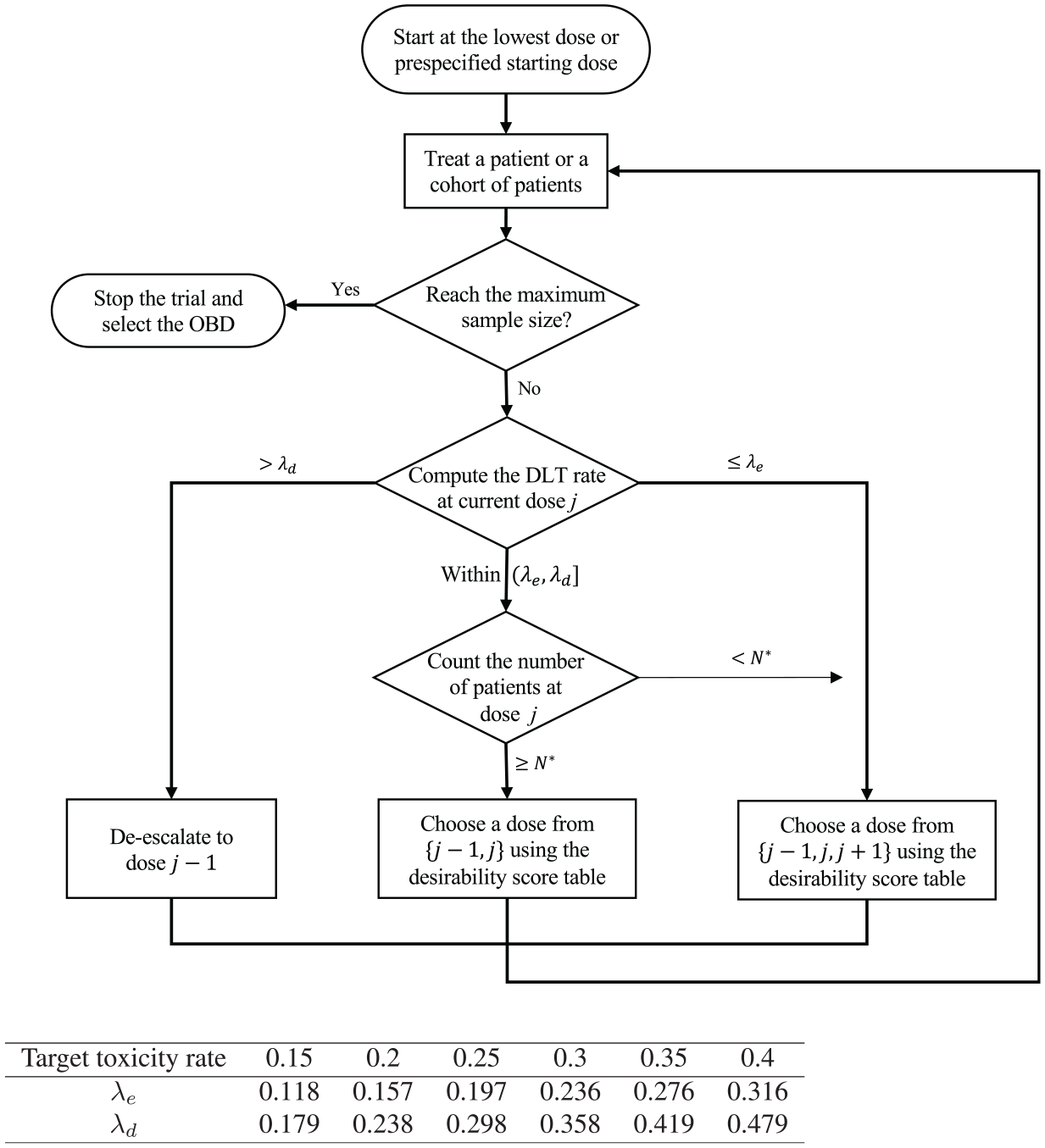

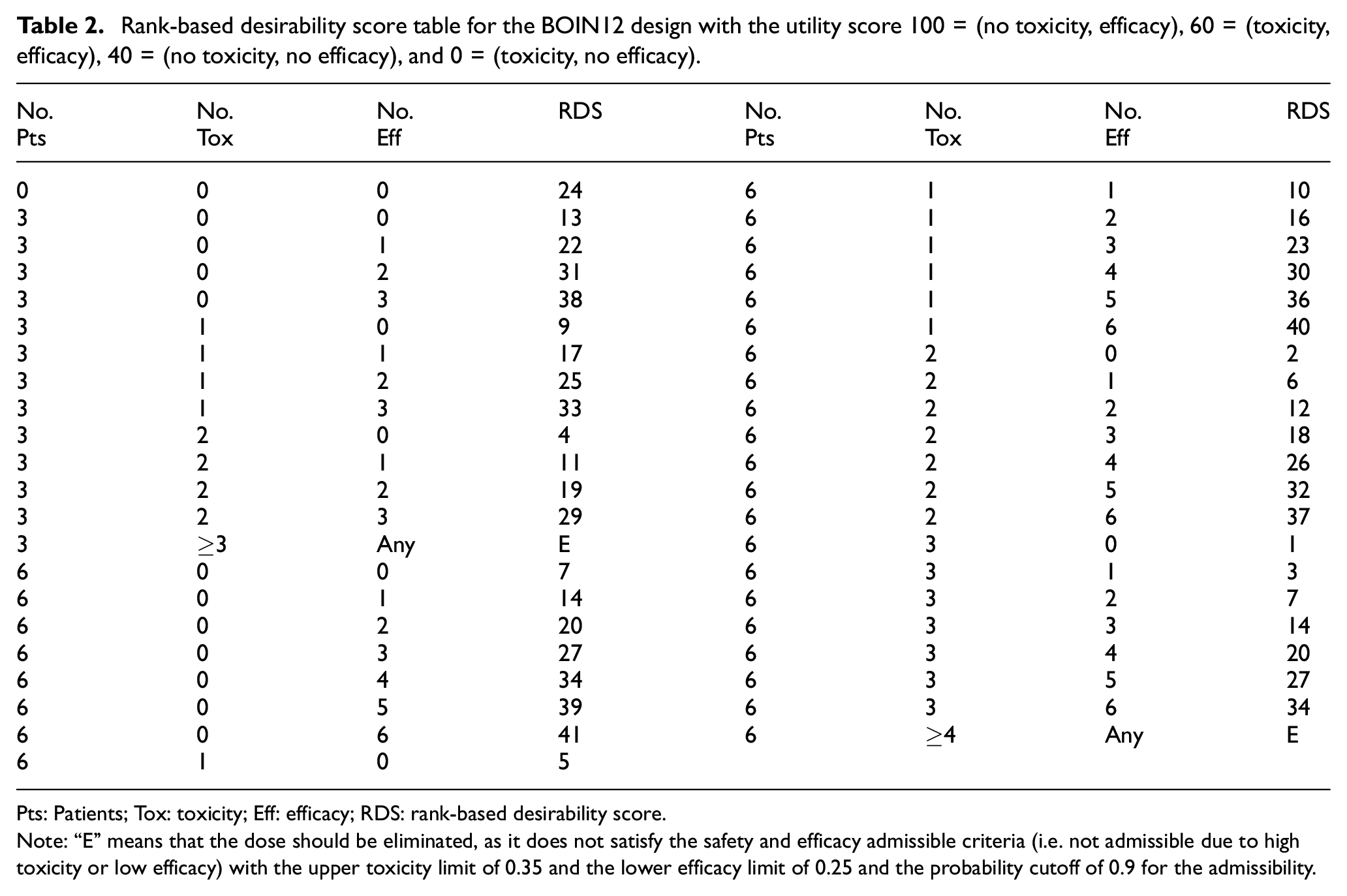

A number of model-assisted designs have been proposed for dose optimization.24,25,52–54 We here use BOIN12 to illustrate this approach. BOIN12 uses the utility to measure the toxicity–efficacy trade-off and models it using a simple beta-binomial model based on pseudolikelihood methodology. Based on interim toxicity and efficacy data, the BOIN12 design adaptively assigns patients to the dose with the highest estimated desirability. The dose-finding rule of BOIN12 is depicted in Figure 2. A key feature of this design is that dose desirability can be pretabulated and included in the trial protocol prior to the trial’s start (see Table 2), making implementation simple. During the trial, determining the desirability of a dose is straightforward: count the number of patients treated at a given dose, the number who experienced toxicity, and the number who experienced efficacy. This information is used to look up the dose’s rank-based desirability score in Table 2. The next cohort of patients is then assigned to the dose with the highest rank-based desirability score value. For instance, consider a scenario in which a trial has treated three, six, and three patients at the first three doses, respectively, and has observed toxicity and efficacy outcomes of (0, 1, 2) and (0, 3, 1), respectively. The current dose being administered is d = 2. In accordance with the dose-finding rule, we compare the observed toxicity rate

Figure 2. The schema of the BOIN12 design, where

Table 2. Rank-based desirability score table for the BOIN12 design with the utility score 100 = (no toxicity, efficacy), 60 = (toxicity, efficacy), 40 = (no toxicity, no efficacy), and 0 = (toxicity, no efficacy).

Pts: Patients; Tox: toxicity; Eff: efficacy; RDS: rank-based desirability score.

Note: “E” means that the dose should be eliminated, as it does not satisfy the safety and efficacy admissible criteria (i.e. not admissible due to high toxicity or low efficacy) with the upper toxicity limit of 0.35 and the lower efficacy limit of 0.25 and the probability cutoff of 0.9 for the admissibility.

It is worth noting that Table 2 assumes a cohort size of 3. To account for the possibility that the number of evaluable patients may not be a multiple of 3, a more comprehensive decision table can be generated using the software described later, which includes every possible number of patients up to the maximum number of patients that can be treated at a dose. The BOIN12 has been shown to have desirable operating characteristics through an extensive simulation study, often outperforming more complex model-based phase 1/2 designs, such as the EffTox design. 25
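The following sketch shows, under stated assumptions, how a dose's admissibility and desirability could be computed from the three counts used in the example above (patients treated, toxicities, efficacy responses). It assumes Beta(1, 1) priors, treats desirability as the posterior probability that the standardized mean utility exceeds a benchmark, and uses a hypothetical benchmark of 0.705. It is not a reproduction of Table 2 and does not implement the full dose-finding rule of Figure 2. The Beta distribution function is evaluated by simple numerical integration to keep the example free of third-party libraries.

```python
import math


def beta_cdf(x, a, b, steps=2000):
    """Pr(Beta(a, b) <= x) by Simpson's rule; intended for a, b >= 1."""
    if x <= 0.0:
        return 0.0
    if x >= 1.0:
        return 1.0
    norm = math.exp(math.lgamma(a + b) - math.lgamma(a) - math.lgamma(b))

    def pdf(t):
        return norm * t ** (a - 1) * (1 - t) ** (b - 1)

    h = x / steps
    total = pdf(0.0) + pdf(x)
    for i in range(1, steps):
        total += (4 if i % 2 else 2) * pdf(i * h)
    return total * h / 3


def is_admissible(n, n_tox, n_eff, tox_limit=0.35, eff_limit=0.25, cutoff=0.9):
    """A dose is eliminated if it is very likely too toxic or too ineffective."""
    p_too_toxic = 1 - beta_cdf(tox_limit, 1 + n_tox, 1 + n - n_tox)
    p_futile = beta_cdf(eff_limit, 1 + n_eff, 1 + n - n_eff)
    return p_too_toxic <= cutoff and p_futile <= cutoff


def desirability(n, n_tox, n_eff, u_eff_tox=60, u_noeff_notox=40, benchmark=0.705):
    """Posterior probability that the standardized mean utility exceeds the benchmark."""
    if u_eff_tox + u_noeff_notox != 100:
        # The marginal-count shortcut below holds only when these two scores sum to 100.
        raise ValueError("joint outcome counts are needed for these utility scores")
    # Total utility of the patients at this dose, rescaled from 0-100 to 0-1.
    x = (u_eff_tox * n_eff + u_noeff_notox * (n - n_tox)) / 100
    return 1 - beta_cdf(benchmark, 1 + x, 1 + n - x)


# Interim data from the example: dose -> (patients, toxicities, efficacy responses).
data = {1: (3, 0, 0), 2: (6, 1, 3), 3: (3, 2, 1)}
scores = {d: desirability(*obs) for d, obs in data.items() if is_admissible(*obs)}
best = max(scores, key=scores.get)
print({d: round(s, 3) for d, s in scores.items()}, "most desirable dose:", best)
```

With these inputs and assumptions, all three doses remain admissible and dose 2 has the highest desirability. Ranking the scores across all possible count combinations is what would turn them into a rank-based table.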

Two-stage designs

The two-stage design approach takes a staged approach to dose optimization.27–29,55 As illustrated in Figure 1(b), in stage 1, dose escalation is performed to establish the MTD. At the end of stage 1, typically, the MTD and one or two lower doses that demonstrate appropriate antitumor activities and pharmacokinetic/pharmacodynamic characteristics are selected and proceed to stage 2 for dose optimization often via randomization. Stage 1 often uses conventional MTD-targeted dose-escalation methods, such as model-based continual reassessment method or model-assisted Bayesian optimal interval design. Thus, the key question for this approach is how to design stage 2, especially in terms of sample size determination.

Yang et al.

56

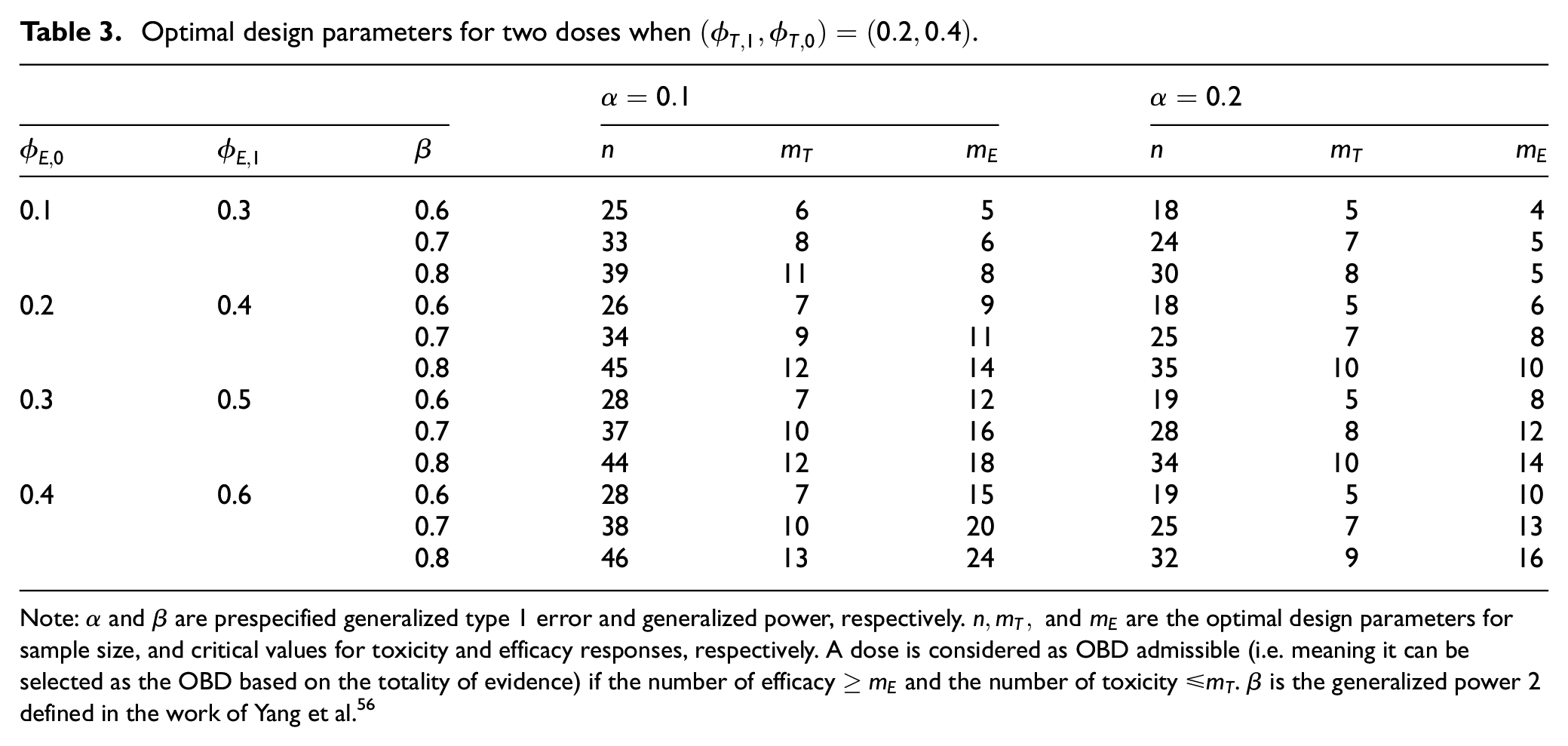

proposed the MERIT (Multiple-dosE RandomIzed Trial design for dose optimization based on toxicity and efficacy) design to provide a systematic approach to determining the sample size for stage 2 randomization. As in practice the final selection of the OBD involves both statistical and nonstatistical considerations, it is of limited value to control the statistical properties of the design solely in terms of the OBD selection. Thus, MERIT focuses on controlling the statistical properties, such as type 1 error and power, for identifying doses that are admissible to be the OBD. The OBD can only be selected from the admissible doses. To define the admissible doses, let

MERIT considers a null hypothesis

Table 3 shows the optimal sample size for a randomized trial with two doses, as well as the decision rule to determine whether a dose is OBD admissible. For example, suppose

Table 3. Optimal design parameters for two doses when

Note:

Regarding the method of randomization, equal randomization is the most commonly used approach due to its ease of implementation and unbiased comparison between doses. Although response-adaptive randomization may seem attractive as it allows for more patients to be allocated to a more desirable dose, it often provides little benefit for multiple-dose randomization trials with small sample sizes. In fact, accrual may be nearly complete before the data start to skew the randomization toward better doses. In addition, response-adaptive randomization is more logistically challenging and increases the likelihood of unbalanced patient characteristic distribution across arms, which can lead to biased estimates. 57 Equal randomization combined with safety and futility monitoring, such as using Bayesian optimal phase 2 design, 58 is often effective and allows for early stopping of overly toxic and futile doses during the trial.
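As a small illustration of equal randomization among multiple doses, the sketch below generates an allocation schedule with permuted blocks, a common way of keeping arm sizes balanced throughout accrual. Permuted blocks are a choice made for this example rather than something prescribed above, and the function name, dose labels, and sample size are hypothetical.

```python
import random
from collections import Counter


def permuted_block_schedule(doses, n_patients, seed=None):
    """Equal randomization: each block contains every dose once, in random order."""
    rng = random.Random(seed)
    schedule = []
    while len(schedule) < n_patients:
        block = list(doses)
        rng.shuffle(block)       # random order within the block
        schedule.extend(block)
    return schedule[:n_patients]


# Hypothetical stage 2 with three doses and 30 patients.
schedule = permuted_block_schedule(["dose 1", "dose 2", "dose 3"], 30, seed=2024)
print(schedule[:6])
print(Counter(schedule))
```

When the sample size is a multiple of the number of doses, every arm receives exactly the same number of patients. Dropping an overly toxic or futile dose during monitoring would amount to removing it from `doses` for subsequent blocks.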

The approach of randomizing patients among multiple doses for optimization is commonly used in nononcology drug development and is referred to as dose-ranging. However, well-established dose-ranging methods in nononcology therapeutic areas, such as the multiple comparison procedure—modeling method, 59 are rarely used in oncology due to the unique characteristics and challenges of cancer drug development. 29 To address this issue, Guo and Yuan 29 developed an oncology-specific dose-ranging design referred to as DROID, by combining the mature framework of nononcology dose-ranging with oncology dose-finding.

Design choice

The efficacy-integrated and two-stage strategies each have their own advantages and disadvantages, making them suitable for different scenarios. The two-stage approach is well-aligned with conventional develop-by-stage practices and can accommodate different populations for the dose-escalation and randomization stages. However, a potential drawback of the two-stage design is that the true optimal dose may be incorrectly excluded when transitioning from stages 1 to 2 due to unreliable toxicity and efficacy estimates based on a small stage 1 sample size. This issue can be partially addressed by backfilling patients during the dose-escalation stage to obtain more data and increase the reliability of dose selection. However, this approach may still be limited by a small sample size. In addition, the two-stage approach generally requires larger sample sizes than the efficacy-integrated approach.

In contrast, the efficacy-integrated approach continuously learns the toxicity and efficacy profile of all doses throughout the trial, making it more efficient to identify the optimal dose and requiring smaller sample sizes. One limitation of this approach is that it requires efficacy and toxicity endpoints to be observable quickly enough to make adaptive decisions. However, methods such as time-to-event BOIN12 (TITE-BOIN12) have been proposed to address this limitation and facilitate real-time decision-making in the presence of pending toxicity or efficacy data. 42 In addition, the efficacy-integrated approach requires that the population used for dose optimization is comparable to that for subsequent phase 2b or 3 trials, which could be challenging when the target population is not clear. In this case, after phase 1/2 dose-finding, we may conduct cohort expansion (e.g. basket trials) in potential target populations to confirm the OBD and establish the target population before proceeding to phase 3 trials. This strategy is also applicable to the two-stage approach.

The efficacy-integrated and two-stage approaches demand different sample sizes. For the two-stage approach, the recommended sample size is

The efficacy-integrated and two-stage design strategies can be combined to achieve more efficient dose optimization, and they are not mutually exclusive. For instance, a trial can begin with an efficacy-integrated design (e.g. BOIN12) to optimize the initial dose efficiently, and then progress to the second stage with multiple-dose randomization to refine optimization using the MERIT design. In the generalized phase 1/2 design, a third randomized stage is added to further optimize the dose based on long-term endpoints. 31

Phase 2/3 dose optimization

A phase 2/3 design offers an alternative strategy for dose optimization. This design type encompasses a broad range of designs and can serve various purposes, including treatment selection, population selection, and endpoint selection,60,61 and expediting the drug development process for accelerated approval. 62 Here, we focus on the phase 2/3 design for the purpose of dose optimization.

In this context, the phase 2 component involves the random assignment of patients to multiple doses, with or without a control, to evaluate the benefits and risks of each dose. The doses are typically selected based on factors such as toxicity, pharmacokinetics/pharmacodynamics, and preliminary efficacy data collected in the phase 1 dose-escalation study, which should demonstrate reasonable safety and antitumor activity. At the end of phase 2, an interim analysis is performed to determine the optimal dose that produces the most favorable benefit–risk trade-off for further investigation in the phase 3 component of the trial. The goal of phase 3 is to confirm the efficacy of the selected optimal dose with a randomized concurrent control or historical control.

Types of phase 2/3 designs

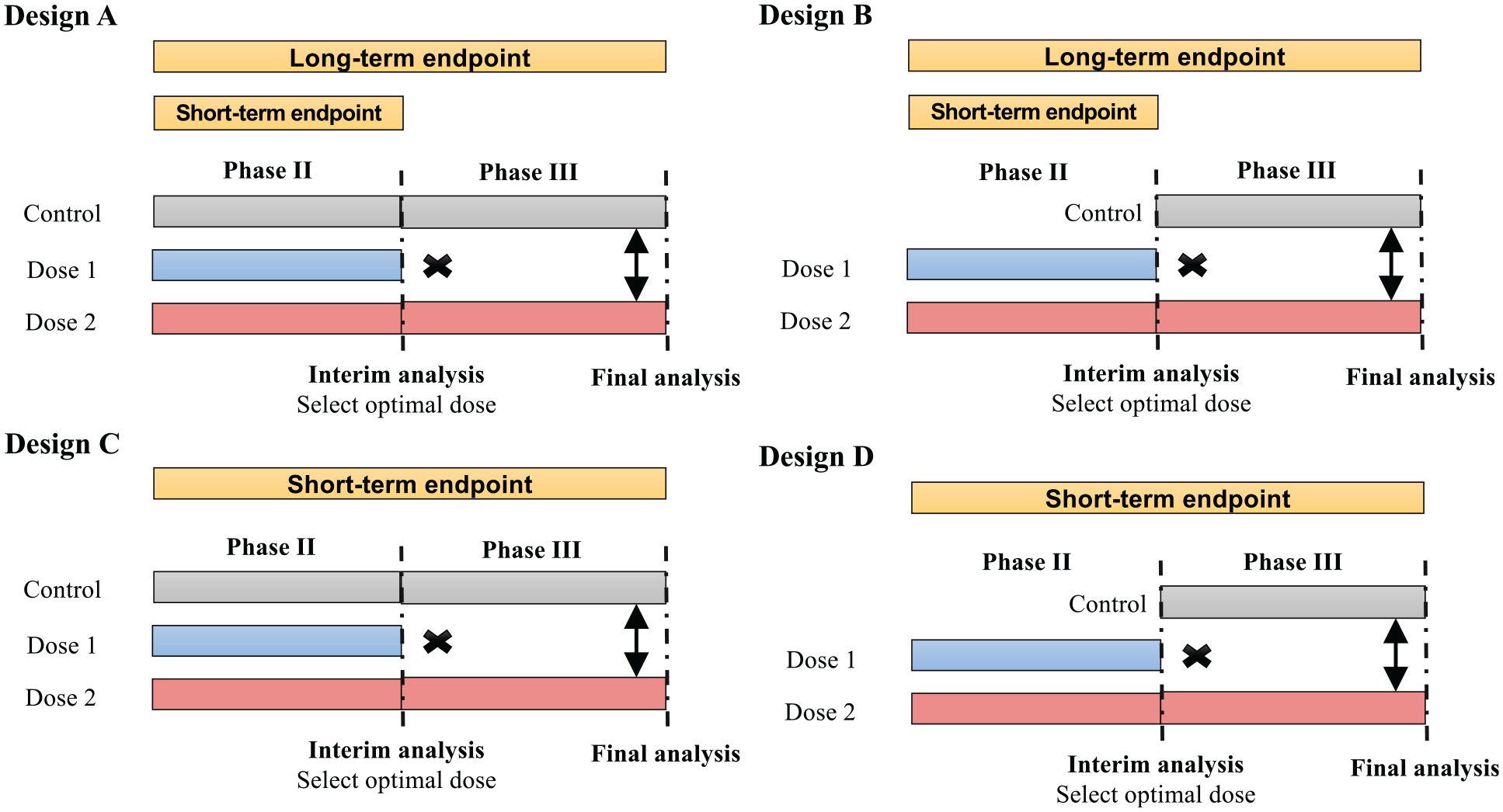

Depending on whether the concurrent control is included and the type of endpoints used in phases 2 and 3, Jiang and Yuan 63 distinguish four forms of phase 2/3 dose-optimization designs (Figure 3) that are suitable for different clinical settings.

Figure 3. Four types of phase 2/3 trial designs, varying in whether the concurrent control is included and the type of endpoints used in phases 2 and 3.

Design A incorporates a concurrent control in both stages and employs a short-term binary endpoint (e.g. objective response rate) in phase 2 to identify the optimal dose. In phase 3, a long-term time-to-event endpoint (e.g. progression-free survival (PFS) or overall survival) is used to assess the treatment’s therapeutic effect. The use of a short-term endpoint in phase 2 allows for a prompt selection of the optimal dose to progress to phase 3. Although not depicted in the schema, when appropriate, phase 3 may include an additional interim futility/superiority analysis akin to the standard group sequential design. An example of Design A is the HORIZON 3 [Cediranib Plus FOLFOX6 Versus Bevacizumab Plus FOLFOX6 in Patients With Untreated Metastatic Colorectal Cancer] trial for advanced metastatic colorectal cancer, 64 which will be further elaborated in the “Trial Examples” section.

Design B is a modification of Design A that includes the control only in phase 3. This can further reduce the sample size. However, Design B’s drawback is the lack of concurrent control in phase 2, making it difficult to combine phase 2 and 3 data and obtain an unbiased estimate of the treatment effect if there is a drift in the patient population and/or the treatment effect. Design B is a reasonable option when a drift is unlikely, for example, when the accrual is fast such that the patient population is unlikely to change, and the characteristics and performance of study centers remain stable over the trial period. Design B was used in several clinical trials, such as a randomized multicenter trial of SM-88 in patients with metastatic pancreatic cancer. 65

Design C is similar to Design A but simpler because it employs the same short-term endpoint (e.g. objective response rate) for both phases 2 and 3. This design is particularly useful in situations where demonstrating an effect on a long-term endpoint (e.g. survival or morbidity) requires lengthy and often large trials due to the disease’s prolonged course, and the short-term endpoint is reasonably likely to predict clinical benefit on the long-term endpoint. The seamless phase 2/3 trial of intravenous (IV) tenecteplase versus standard-dose IV alteplase for treating patients with acute ischemic stroke is an example of Design C. 66

Design D is a simplified version of Design C that does not include a control. This design is appropriate when there is a particularly acute unmet medical need (e.g. a refractory or resistant patient population), and/or the tumor under treatment is rare. Designs C and D are useful for drug development that targets accelerated approval from the FDA, which often relies on a short-term surrogate or intermediate clinical endpoint such as response. A limitation of Design D, like Design B, is the lack of concurrent controls, which may result in a biased estimate of the treatment effect if there is a drift in the patient population.

In addition to considering short-term phase 2 and long-term phase 3 endpoints, additional endpoints can be employed for making adaptive decisions regarding the transition from phase 2 to phase 3. One such approach is the 2-in-1 phase 2/3 design,67,68 which may provide additional flexibility for dose optimization.

Operational versus inferential

The phase 2/3 design can be categorized as either operational or inferential. In operational phase 2/3 designs, both phases are conducted under the same protocol to eliminate any gaps between them and reduce the overall trial cost. The data collected in phase 2 are not used in the phase 3 confirmatory analysis. Operational phase 2/3 designs are relatively simple to implement, and provide flexibility in dose selection and study design adjustments based on the results of the phase 2 portion while maintaining the integrity and reliability of the confirmatory phase 3 analysis.

Inferential phase 2/3 designs integrate both phase 2 and 3 data to evaluate the treatment effect. Because they incorporate additional phase 2 data, inferential phase 2/3 designs typically demand smaller sample sizes, shorten timelines of drug development, and exhibit greater statistical efficiency compared with operational phase 2/3 designs. However, for the same reason, they require careful consideration and specialized statistical methods to control the family-wise error rate (see Stallard and Todd 60 and Kunz et al. 61 for relevant methods). In addition, the implementation of inferential phase 2/3 designs is more logistically and operationally challenging compared with operational phase 2/3 designs, as detailed below.
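The need for error control in inferential designs can be seen in a small simulation. The sketch below assumes two doses and a concurrent control, normally distributed standardized arm means, equal stage sizes, selection of the better-looking dose at the end of phase 2, and a one-sided 2.5% test on the pooled data. All of these settings and the function name are illustrative assumptions. The Bonferroni threshold is used only as a deliberately simple stand-in for the specialized methods cited above, not as a representation of them.

```python
import math
import random
from statistics import NormalDist


def simulate_type1(crit, n_sims=20000, seed=1):
    """Rejection rate under no treatment effect when the selected dose's
    phase 2 data are pooled with its phase 3 data."""
    rng = random.Random(seed)
    rejections = 0
    for _ in range(n_sims):
        # Phase 2: standardized arm means for control and two doses (null is true).
        ctrl1, dose_a, dose_b = (rng.gauss(0, 1) for _ in range(3))
        selected1 = max(dose_a, dose_b)       # carry forward the better-looking dose
        # Phase 3: fresh data on the selected dose and the control.
        ctrl2, selected2 = rng.gauss(0, 1), rng.gauss(0, 1)
        z1 = (selected1 - ctrl1) / math.sqrt(2)   # phase 2 z-statistic
        z2 = (selected2 - ctrl2) / math.sqrt(2)   # phase 3 z-statistic
        z = (z1 + z2) / math.sqrt(2)              # equal-weight pooling of the stages
        rejections += z > crit
    return rejections / n_sims


alpha = 0.025
naive = simulate_type1(NormalDist().inv_cdf(1 - alpha))
bonferroni = simulate_type1(NormalDist().inv_cdf(1 - alpha / 2))
print(f"naive pooled test:    {naive:.4f}")
print(f"Bonferroni threshold: {bonferroni:.4f}")
```

Under these assumptions, the naive pooled test rejects more often than the nominal 2.5% because the selected dose's phase 2 result is biased upward by the selection, whereas the stricter Bonferroni threshold keeps the rate at or below the nominal level, at the cost of some power.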

Practical considerations

In operational phase 2/3 designs, the two phases are analyzed independently, and the data collected in phase 2 are not used in the phase 3 confirmatory analysis. Therefore, the phase 2 and 3 portions can be conducted using standard considerations for phase 2 and 3 trials, respectively, resulting in little additional complexity beyond what is expected for each individual phase.

The use of inferential phase 2/3 designs for dose optimization presents more logistical and operational challenges. Due to the complexity of the design, it is crucial that sponsors and regulatory agencies engage in discussions about the trial design as early as possible in the development process. This allows agencies to communicate their expectations and can lead to more efficient studies. Determining the doses to be studied in phase 2/3 trials requires knowledge of therapeutic properties, patient population heterogeneity, the need for additional dose exploration for a supplemental application, as well as communication between patients and providers. Similar considerations also apply to the selection of the optimal dose when stage 1 is complete.

For phase 2/3 trials to select the optimal dose, unblinded data access is necessary at the end of stage 1. However, this could compromise the trial’s integrity if not handled appropriately. The FDA guidance on adaptive designs recommends limiting access to comparative interim results to individuals with relevant expertise who are independent of the trial’s conducting and managing personnel and who have a need to know. An Independent Data Monitoring Committee or an independent adaptation body should make the interim dose selection decision. Procedures must be in place to ensure that personnel responsible for preparing and reporting interim analysis results to the Independent Data Monitoring Committee are physically and logistically separated from the trial’s managing and conducting personnel. This requires planned procedures to maintain and verify confidentiality, as well as documentation of monitoring and adherence to operating procedures. To maximize the trial’s integrity, investigators should prespecify design details, including the anticipated number and timing of interim analyses, criteria for dose selection, methods for controlling type 1 error and estimating treatment effects, and the data access plan.

Software

Designing dose-optimization trials is more challenging than conventional dose-finding trials and requires the use of more complicated statistical designs. It is critical to thoroughly evaluate and calibrate the operating characteristics before beginning the trial. Moreover, for certain designs, such as model-based designs, the trial conduct also requires real-time model fitting and calculation. Thus, easy-to-use software is a key to the success of dose-optimization trials. This is an area that requires immediate attention and development. The website www.trialdesign.org offers a dose-optimization module that includes several dose-optimization designs, such as BOIN12, TITE-BOIN12, MERIT, and DROID. In addition, software to implement the EffTox design is available from the software download website at The University of Texas MD Anderson Cancer Center.

Trial examples

Efficacy-integrated phase 1/2 dose optimization

An efficacy-integrated model-based late-onset EffTox design 19 was utilized to determine the optimal dose of sitravatinib in combination with a fixed dose of nivolumab for the treatment of clear cell renal cell carcinoma. 69 The trial had two primary endpoints: toxicity, defined as the time to DLT within 12 weeks of starting therapy, and early efficacy, defined as the absence of progressive disease at 6 weeks according to the Response Evaluation Criteria in Solid Tumors (RECIST) guideline by investigator assessment. Dose escalation/de-escalation was performed based on the benefit–risk trade-off constructed using marginal toxicity and efficacy probabilities. At the end of the trial, the 80 and 120 mg doses had almost the same estimated trade-off desirability scores; thus, additional criteria were used to compare the doses, including an evaluation of quality of life. The 120 mg dose was chosen as the optimal dose for sitravatinib and is currently being evaluated in ongoing phase 2 and 3 clinical trials for various malignancies. Implementing this model-based phase 1/2 design was logistically demanding and resource-intensive. It required a dedicated staff biostatistician to maintain frequent day-to-day communication between the clinical and data teams and to perform real-time calculations to determine the dose assignment for the next patients. Tidwell et al. 70 review this process and provide a summary of challenges and potential solutions, one of which is to use model-assisted designs such as BOIN12, as described next.

As an example, an optimal dose-finding trial of donor-derived CD5 chimeric antigen receptor (CAR) T cells in patients with relapsed or refractory T-cell acute lymphoblastic leukemia was based on the BOIN12 design. 71 The utility function shown in Table 1 was used to measure the benefit–risk trade-off of the treatment, and the rank-based desirability score in Table 2 was used to guide dose escalation. Patients were treated in cohorts of 3. Up to the time of reporting, a total of five patients who had CD7-negative relapse after CD7 CAR therapy were enrolled and received prior stem cell transplantation donor-derived CD5 CAR T cells at an initial dose of

Two-stage phase 1/2 dose optimization

Belantamab mafodotin, an antibody-drug conjugate targeting B-cell maturation antigen, was developed in a two-stage phase 1/2 study. In the DREAMM-1 first-in-human trial, 72 the dose escalation was performed based on the continual reassessment method design to explore doses ranging from 0.03 to 4.6 mg/kg. MTD was not reached. The 3.4 mg/kg dosage showed activity in the dose-expansion portion of DREAMM-1, but many patients experienced dose interruptions (71%) and reductions (66%). To improve tolerability, both 2.5 and 3.4 mg/kg doses were further evaluated in the DREAMM-2 trial in which patients were randomly assigned between the two dose arms. 73 Efficacy was similar between the 2.5 mg/kg cohort

Phase 2/3 dose optimization

The HORIZON 3 trial compared the efficacy of cediranib with that of bevacizumab when used in combination with chemotherapy mFOLFOX6 for first-line treatment of advanced metastatic colorectal cancer. 64 The trial employed a randomized, double-blind, inferential phase 2/3 design (Design A). During the phase 2 part, patients were randomly assigned 1:1:1 to receive cediranib 20 or 30 mg/day or bevacizumab 5 mg/kg IV infusion every 14 days, each combined with 14-day treatment cycles of mFOLFOX6. An Independent Data Monitoring Committee conducted the end-of-phase 2 data analysis after 225 patients had 3 months of follow-up and concluded that cediranib 20 mg met all predefined criteria for continuation. As a result, patients enrolled in the phase 3 part of the study were randomly assigned 1:1 to receive mFOLFOX6 with cediranib 20 mg or bevacizumab. All study personnel other than the Independent Data Monitoring Committee remained blinded to the data until the trial ended. Patients who received cediranib 30 mg in the phase 2 part were unblinded and given the option to continue on open-label cediranib (20 or 30 mg/day). The primary analysis of the primary endpoint, PFS, was planned to take place after 850 progression events had occurred and to be based on all data from patients recruited into both the phase 2 and phase 3 parts of the study, excluding data from the cediranib dose discontinued at the end of phase 2. Since PFS data from patients recruited into the phase 2 part of the study were used in the phase 3 analyses, and data from the phase 2 part were used to select the phase 3 dose, the method of Todd and Stallard 74 was used to adjust the type 1 error for the primary analysis. The primary analysis showed no significant difference in PFS between the arms. The estimated hazard ratio was 1.10 (95% CI = 0.97–1.25), and the median PFS was 9.9 months for cediranib 20 mg and 10.3 months for bevacizumab. However, because the upper limit of the 95% CI exceeded the predefined limit of 1.2, noninferiority of cediranib versus bevacizumab could not be concluded.
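The two conclusions drawn from the HORIZON 3 interval can be traced with a short sketch. It takes the reported hazard ratio, 95% CI, and noninferiority limit as given and does not reproduce the Todd and Stallard adjustment; the function name and the returned fields are hypothetical and serve only to illustrate the reasoning.

```python
import math


def summarize_hr(hr, ci_low, ci_high, ni_margin):
    """Interpret a reported hazard ratio and its 95% CI (HR > 1 favors the comparator)."""
    # Back out the standard error of log(HR) from the width of the reported CI.
    se_log_hr = (math.log(ci_high) - math.log(ci_low)) / (2 * 1.96)
    # A symmetric CI on the log scale is centered at the geometric mean of its limits.
    ci_center = math.sqrt(ci_low * ci_high)
    return {
        "se_log_hr": se_log_hr,
        "ci_center": ci_center,
        "center_matches_hr": abs(ci_center - hr) < 0.01,
        # A difference is significant only if the CI excludes HR = 1.
        "significant_difference": ci_low > 1.0 or ci_high < 1.0,
        # Noninferiority requires the whole CI to lie below the margin.
        "noninferior": ci_high < ni_margin,
    }


result = summarize_hr(hr=1.10, ci_low=0.97, ci_high=1.25, ni_margin=1.2)
for key, value in result.items():
    print(f"{key}: {value}")
```

Because the interval contains 1, the arms do not differ significantly; because its upper limit of 1.25 lies above 1.2, the data do not rule out a hazard increase larger than the prespecified limit, so noninferiority is not established.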

Discussion

We have reviewed design strategies and provided practical guidance on dose-optimization trials. For phase 1/2 designs, we contrast efficacy-integrated and two-stage phase 1/2 design strategies and discuss their pros and cons and key considerations for trial implementation. For phase 2/3 designs, we discuss and compare different types of designs based on the type of endpoint, whether the control is included, and whether phase 2 data are combined with phase 3 data for the primary analysis (inferential or operational).

In practice, the decision of whether to pursue a phase 1/2 or a phase 2/3 strategy should be made on a case-by-case basis, taking into account a variety of factors including clinical, statistical, logistic, and budgetary considerations. For instance, if a drug candidate has a fast readout of efficacy and pharmacodynamic parameters, an efficacy-integrated phase 1/2 design may be preferred to evaluate safety and efficacy simultaneously, starting early in the trial. Conversely, if a drug candidate has been tested in a sufficient number of patients at various dose levels and there is a good understanding of its therapeutic window, a seamless phase 2/3 design may be a more attractive approach for selecting a dose in phase 2 and bringing it seamlessly into the confirmatory phase 3.

We have focused on dose optimization in the early phases (e.g. phases 1 and 2) of drug development, which is generally preferred to postmarketing dose optimization. This approach increases the likelihood that the recommended dosage of the marketed product maximizes efficacy and minimizes toxicity, and avoids many issues associated with postmarketing dose optimization, such as the requirement for large sample sizes, long study durations, and difficulties in conducting the study as patients and investigators may be reluctant to be randomized to a dose of an approved product that differs from the approved dose. However, it is not uncommon that a dose has not been optimized at the time of marketing approval, and dose-optimization studies are conducted after the drug has been approved. In such cases, postapproval studies evaluating two or more doses may be planned as noninferiority trials. One may be concerned that performing dose optimization in the early phase will needlessly expose large numbers of patients to ineffective therapies and slow down drug development. 75 Nevertheless, novel statistical designs, such as BOIN12 and EffTox, can stop the trial early when the drug demonstrates little activity, alleviating these concerns. Further research is warranted to develop and implement better study designs to maximize the benefit of dose optimization and deliver safe and effective treatments to patients.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.