Abstract

Vision-based road lane detection plays a key role in driver assistance systems. Although existing lane recognition algorithms have demonstrated detection rates above 90%, they were usually validated on a limited set of scenarios, and significant gaps remain when they are applied to real-life autonomous driving. The goal of this article is to identify these gaps and to suggest research directions that can bridge them. A straight lane detection algorithm based on the linear Hough transform (HT) was used as an example to evaluate possible perception issues in challenging scenarios, including various road types, different weather conditions and shadows, and changing lighting conditions. The study found that the HT-based algorithm achieved an acceptable detection rate against simple backgrounds, such as highway driving or scenes with distinguishable contrast between the lane boundaries and their surroundings. However, it failed to recognize road dividing lines under varying lighting conditions. This failure was attributed to the binarization step, which failed to extract lane features before detection. In addition, the HT-based algorithm was misled by lane-like interference from guardrails, railways, bikeways, utility poles, pedestrian sidewalks, buildings, and similar structures. Overall, these findings support the need to improve current road lane detection algorithms so that they are robust against interference and illumination variations. Moreover, the widely used algorithm could achieve a higher lane boundary detection rate if an appropriate search range restriction and an illumination classification step were added.

Introduction

Driver inattention and visual interference are leading causes of accidents and road fatalities. 1 Advanced driver assistance systems (ADAS) can improve driving safety. 2,3 Several ADAS functions, such as lane departure warning, 4 lane keeping, 5 target localization, 6 and obstacle avoidance, 7 have gradually become commercial products. 8 However, existing systems are still far from satisfactory, especially in challenging scenarios. 9 In complex environments, ADAS road understanding usually suffers from a high false-positive rate and a low detection rate. Consequently, road and lane perception, especially lane detection, needs further improvement. 10,11 Lane marking recognition is one of the most important parts of road understanding. 12 For example, a more reliable path planning approach for mobile object navigation can be achieved by combining lane detection with obstacle avoidance. 13,14 Although ADAS may incorporate laser radar and global positioning sensors, 1 vision-based devices are usually used for lane perception. This is because lane markings were designed for the human visual system, and cameras can obtain equivalent cues through computation. 11 In addition, machine vision is a cost-effective solution for automotive applications compared with other sensors. 15 It should be noted, however, that in self-driving, stereo cameras and radar typically work together to enhance each other's effectiveness, much as humans combine their five senses to understand and perceive the world.

Vision-based lane detection locates the lane boundaries in an image without prior information about the road. Its output directly affects lane tracking, which follows the lane edges from frame to frame. 12 The literature describes several practical approaches to detecting lane markings. The first category, feature-based methods, 16,17 locates lanes using features such as line width, edges, color, intensity, texture, and gradient. 18 For example, lane edge features can be extracted by the Hough transform (HT). 19,20 Moreover, road lane markers, candidates, and obstacles can be segmented using the gray-level histogram of the road. 17 In addition, model-based 21 algorithms, which fit two-dimensional (2D) or three-dimensional (3D), straight or curved road models, 19 are widely used in practice. Although model-based approaches perform better when lane appearance features are weak, 22 they are less efficient than edge-based solutions. 10 Machine learning (ML), especially deep learning (e.g. convolutional neural networks), 23,24 is another technique for lane detection, 25,26 and much progress has been made in the past 5 years. However, such methods have difficulty with road conditions not included in the training set 27 and tend to overfit to noise in images and lane markers. 28 In addition, pixel-wise segmentation does not directly produce lane boundary parameters (i.e. position and direction) that a computer can use for decision making. As a result, traditional computer vision techniques remain important for road perception.

An essential requirement for a lane marker detection system is real-time operation on existing hardware and software. 29 Lane perception is also only one of several image processing tasks (including road understanding, obstacle tracking, and traffic sign detection) performed during semiautonomous driving. The lane detection technique therefore needs to be simple and efficient enough to meet short response times with limited computational resources. 30,31 The HT is such an algorithm and is therefore widely used to detect straight road lanes. 10 For this reason, this study focuses on the HT-based algorithm. However, HT-based detection suffers from weak lane markings, noise, and occlusions. 1,22 It has been reported that ADAS error rates would need to be several orders of magnitude lower to support a closed-loop autonomous driving system. 11 Consequently, the performance of lane detection in challenging scenarios must be improved. To do so, it is important to determine what causes detection failures in complex practical situations and which specific image processing step leads to the final lane misjudgment. However, the literature focuses mainly on proposing specific algorithms that achieve acceptable results only in limited scenarios. 11 Moreover, there is a lack of deep and detailed analysis of why the widely used HT-based algorithm fails. This knowledge gap can slow the improvement of the algorithm and may even waste researchers' effort and funding if work is focused in the wrong direction. The goal of this article is therefore to bridge this gap in a documented way, even though the causes of lane detection failures are probably well understood by experts in the field.
Specifically, the authors carefully selected several typical scenarios in which the HT-based algorithm may fail to detect road lines. A detailed diagnosis is then performed to identify the factors that cause the failure in each situation. Finally, directions for optimizing the algorithm are proposed; increasing computing capability is expected to allow the improved algorithm to be both robust and real time. Diagnosing the widely used lane detection algorithm and providing guidance for future research is urgently needed, as it can help accelerate vehicle automation, especially the development of more robust and reliable road perception algorithms.

Lane boundary detection theory

Since road markings in close-range images are straight in most situations of interest, this study uses a straight-line model rather than more complex curves to model the road. 12 Moreover, the straight lane model is sufficient to demonstrate the limitations of existing lane detection algorithms (i.e. the goal of this study), because perception failures are caused primarily by environmental interference rather than by the lane boundary model. This section uses one image representative of daytime highway conditions to describe the major steps of HT-based straight lane detection.

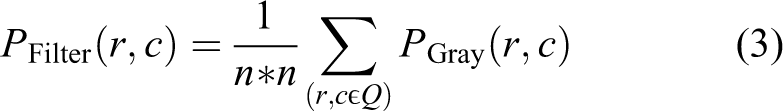

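The raw image used for lane detection is usually taken by a camera mounted in the middle of the vehicle, 11 as shown in Figure 1(a). Such images are usually represented in the RGB color model, an additive model in which a broad range of colors is reproduced by mixing different intensities of red, green, and blue, as shown in equation (1)

$$\mathbf{C}(r, c) = R(r, c)\,\mathbf{e}_R + G(r, c)\,\mathbf{e}_G + B(r, c)\,\mathbf{e}_B \qquad (1)$$

where $(r, c)$ are the row and column indices of a pixel; $R$, $G$, and $B$ are the red, green, and blue intensities (from 0 to 255 for an 8-bit image); and $\mathbf{e}_R$, $\mathbf{e}_G$, and $\mathbf{e}_B$ are the unit vectors of the three primary colors.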

Primary steps of straight lane detection algorithm based on Hough transform (highway daytime condition): (a) raw image, (b) grayscale image, (c) binary image, (d) edge image, (e) detected lane boundary candidates (marked with red points) after scan inside the polar Hough parameter space, and (f) finally detected lane boundaries superimposed on the original color image.

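To save computational effort, the RGB image is usually transformed into a grayscale image (Figure 1(b)) for preprocessing. Equation (2) shows the widely used weighted transformation

$$I(r, c) = 0.299\,R(r, c) + 0.587\,G(r, c) + 0.114\,B(r, c) \qquad (2)$$

where $I(r, c)$ is the grayscale intensity of pixel $(r, c)$.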

Choosing the maximum (i.e.
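The weighted grayscale conversion can be sketched in a few lines of plain Python. This is a minimal illustration, assuming images stored as nested lists of (R, G, B) tuples in the 0-255 range and assuming that the "widely used" transformation is the ITU-R BT.601 weighting (0.299, 0.587, 0.114); the function name `rgb_to_gray` is hypothetical.

```python
def rgb_to_gray(image):
    """Convert an RGB image to grayscale with ITU-R BT.601 luma weights.

    image: list of rows, each row a list of (R, G, B) tuples with values 0-255.
    Returns a list of rows of integer gray levels 0-255.
    """
    return [
        [round(0.299 * r + 0.587 * g + 0.114 * b) for (r, g, b) in row]
        for row in image
    ]


# A white lane pixel, a gray asphalt pixel, and a yellow marking pixel.
pixels = [[(255, 255, 255), (90, 90, 90), (230, 200, 40)]]
print(rgb_to_gray(pixels))  # -> [[255, 90, 191]]
```

Note that the yellow marking maps to a fairly bright gray level here, which is one reason both white and yellow lines can survive a single intensity threshold.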

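Then, image filtering and mathematical morphology are applied to remove noise. 32 The mean filter (equation (3)) and the median filter (equation (4)) are the simplest approaches

$$g(r, c) = \frac{1}{mn} \sum_{(s, t) \in S_{rc}} I(s, t) \qquad (3)$$

$$g(r, c) = \operatorname*{median}_{(s, t) \in S_{rc}} \big\{ I(s, t) \big\} \qquad (4)$$

In this case, $S_{rc}$ is the set of pixel coordinates in an $m \times n$ window centered at $(r, c)$, and $g(r, c)$ is the filtered intensity.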
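A minimal sketch of both filters in plain Python, assuming a grayscale image stored as a list of rows of integers and a square k×k window (k = 3 by default). The helper names and the border handling (the window is simply clipped at the image edge) are illustrative assumptions, not the authors' implementation.

```python
from statistics import median


def _window(img, r, c, k):
    """Values of the k x k neighbourhood S_rc centred at (r, c).

    Near the border the window is clipped to the image (an assumed border
    policy; zero padding or mirroring are common alternatives).
    """
    half = k // 2
    return [
        img[i][j]
        for i in range(max(0, r - half), min(len(img), r + half + 1))
        for j in range(max(0, c - half), min(len(img[0]), c + half + 1))
    ]


def mean_filter(img, k=3):
    """Replace each pixel by the average of its neighbourhood (mean filter)."""
    out = []
    for r in range(len(img)):
        row = []
        for c in range(len(img[0])):
            vals = _window(img, r, c, k)
            row.append(sum(vals) / len(vals))
        out.append(row)
    return out


def median_filter(img, k=3):
    """Replace each pixel by the median of its neighbourhood (median filter)."""
    return [[median(_window(img, r, c, k)) for c in range(len(img[0]))]
            for r in range(len(img))]


# A flat road patch with one "salt" noise pixel in the centre.
noisy = [[100, 100, 100],
         [100, 255, 100],
         [100, 100, 100]]
print(round(mean_filter(noisy)[1][1], 2))  # -> 117.22 (noise is smeared)
print(median_filter(noisy)[1][1])          # -> 100 (noise is removed)
```

The example shows why the median filter is often preferred for isolated impulse noise: the mean filter only spreads the outlier over its neighbours, whereas the median filter discards it.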

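The filtered grayscale image is then converted into a black-and-white binary image (Figure 1(c)) using an appropriate threshold, as expressed in equation (5)

$$b(r, c) = \begin{cases} 1, & g(r, c) \ge T \\ 0, & g(r, c) < T \end{cases} \qquad (5)$$

where $T$ is the binarization threshold. A common choice is the Otsu threshold, which maximizes the between-class variance of the two pixel classes

$$T^{*} = \arg\max_{T} \; \omega_0(T)\,\omega_1(T)\,\big[\mu_0(T) - \mu_1(T)\big]^2 \qquad (6)$$

where $\omega_0$ and $\omega_1$ are the fractions of pixels below $T$ and at or above $T$, and $\mu_0$ and $\mu_1$ are their mean intensities.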
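The sketch below implements the binarization rule of equation (5), assuming that pixels at or above T become white, together with Otsu's method for choosing T, the method the document later uses as its reference threshold. It is plain Python for illustration only; the function names are hypothetical.

```python
def otsu_threshold(gray):
    """Otsu's method: the T maximising w0 * w1 * (mu0 - mu1)**2
    for the pixel classes {v < T} and {v >= T}.

    gray: list of rows of integer gray levels 0-255.
    """
    hist = [0] * 256
    for row in gray:
        for v in row:
            hist[v] += 1
    total = sum(hist)
    sum_all = sum(i * n for i, n in enumerate(hist))
    best_t, best_var = 0, -1.0
    n0 = 0    # number of pixels with value < t
    sum0 = 0  # sum of their intensities
    for t in range(1, 256):
        n0 += hist[t - 1]
        sum0 += (t - 1) * hist[t - 1]
        n1 = total - n0
        if n0 == 0 or n1 == 0:
            continue  # one class is empty: no valid split at this t
        mu0, mu1 = sum0 / n0, (sum_all - sum0) / n1
        var_between = (n0 / total) * (n1 / total) * (mu0 - mu1) ** 2
        if var_between > best_var:
            best_t, best_var = t, var_between
    return best_t


def binarize(gray, t):
    """Equation (5): 1 (white) where the gray level is >= T, else 0 (black)."""
    return [[1 if v >= t else 0 for v in row] for row in gray]


frame = [[50, 50, 200],
         [50, 200, 200]]
T = otsu_threshold(frame)
print(T, binarize(frame, T))  # -> 51 [[0, 0, 1], [0, 1, 1]]
```

Because a single T is computed for the whole frame, the result depends on the global histogram; this is the property the later sections identify as the weak point under uneven illumination.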

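Finally, an edge detection algorithm extracts edge features (Figure 1(d)) from the binary image. The Roberts (equation (7)) and Sobel (equation (8)) operators are examples of simple methods. The Canny operator is more robust to noise because it applies a Gaussian filter before computing the intensity gradient

$$G_{\mathrm{R}}(r, c) = \big|b(r, c) - b(r+1, c+1)\big| + \big|b(r+1, c) - b(r, c+1)\big| \qquad (7)$$

$$G_x = \begin{bmatrix} -1 & 0 & 1 \\ -2 & 0 & 2 \\ -1 & 0 & 1 \end{bmatrix} * b, \quad G_y = \begin{bmatrix} -1 & -2 & -1 \\ 0 & 0 & 0 \\ 1 & 2 & 1 \end{bmatrix} * b, \quad G_{\mathrm{S}} = \sqrt{G_x^2 + G_y^2} \qquad (8)$$

where $*$ denotes two-dimensional convolution, and $G_{\mathrm{R}}$ and $G_{\mathrm{S}}$ are the Roberts and Sobel gradient magnitudes; pixels with a large gradient magnitude are marked as edges.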
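As an illustration of the Sobel operator, the sketch below computes the gradient magnitude on a binary image and marks pixels whose magnitude reaches a threshold as edges. It assumes nested-list images, leaves the one-pixel border at zero for simplicity, and uses a hypothetical `thresh` parameter; a practical system would more likely call an optimized library routine.

```python
import math

# Sobel kernels for horizontal (x) and vertical (y) intensity changes.
SOBEL_X = [[-1, 0, 1], [-2, 0, 2], [-1, 0, 1]]
SOBEL_Y = [[-1, -2, -1], [0, 0, 0], [1, 2, 1]]


def sobel_edges(binary, thresh=1.0):
    """Mark pixels whose Sobel gradient magnitude is at least `thresh`.

    The kernels are applied by correlation; flipping them (true convolution)
    only changes the sign of gx and gy, not the magnitude.
    Border pixels are left at 0 for simplicity.
    """
    h, w = len(binary), len(binary[0])
    edges = [[0] * w for _ in range(h)]
    for r in range(1, h - 1):
        for c in range(1, w - 1):
            gx = gy = 0
            for i in range(3):
                for j in range(3):
                    v = binary[r + i - 1][c + j - 1]
                    gx += SOBEL_X[i][j] * v
                    gy += SOBEL_Y[i][j] * v
            if math.hypot(gx, gy) >= thresh:
                edges[r][c] = 1
    return edges


# A white lane region (1s) to the right of dark road (0s).
frame = [[0, 0, 1, 1],
         [0, 0, 1, 1],
         [0, 0, 1, 1]]
for row in sobel_edges(frame):
    print(row)  # middle row -> [0, 1, 1, 0]; border rows stay 0
```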

These four steps form the preprocessing stage. The resulting edges are treated as road-marking candidates and analyzed in Hough space. The HT isolates features of a particular shape within an image 33 by mapping them into a parameter space; for straight lines, the mapping is given by equation (9)

$$\rho = r_i \cos\theta + c_i \sin\theta \qquad (9)$$

Each point $(r_i, c_i)$ in the r-c plane yields a sinusoid in the ρ-θ plane (i.e. the parameter space). The final result of the linear HT is a 2D array (i.e. the accumulator space): one dimension is the quantized angle θ and the other is the quantized distance ρ. Each cell counts the sinusoids passing through it, so peaks correspond to candidate lines (red points in Figure 1(e)).
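The voting procedure can be sketched as follows, assuming the normal form ρ = r cos θ + c sin θ for equation (9), 1° angle bins, and 1-pixel distance bins. The function `hough_lines` is hypothetical, and peak selection is deliberately naive (no non-maximum suppression).

```python
import math


def hough_lines(edges, theta_step=1, rho_step=1.0, top_k=2):
    """Linear Hough transform with rho = r*cos(theta) + c*sin(theta).

    edges: list of rows of 0/1 edge flags.
    Returns the top_k accumulator cells as (votes, theta_deg, rho).
    No non-maximum suppression is applied, so neighbouring cells of the
    same line may appear together in the result.
    """
    h, w = len(edges), len(edges[0])
    rho_max = math.ceil(math.hypot(h, w))       # |rho| never exceeds this
    n_rho = math.ceil(2 * rho_max / rho_step) + 1
    thetas = list(range(0, 180, theta_step))    # quantised angles (degrees)
    trig = [(math.cos(math.radians(t)), math.sin(math.radians(t))) for t in thetas]
    acc = [[0] * n_rho for _ in thetas]         # accumulator[theta][rho]
    for r in range(h):
        for c in range(w):
            if edges[r][c]:
                # Each edge point votes along its sinusoid in the rho-theta plane.
                for ti, (cos_t, sin_t) in enumerate(trig):
                    rho = r * cos_t + c * sin_t
                    acc[ti][round((rho + rho_max) / rho_step)] += 1
    cells = [(acc[ti][ri], thetas[ti], ri * rho_step - rho_max)
             for ti in range(len(thetas)) for ri in range(n_rho) if acc[ti][ri]]
    cells.sort(key=lambda cell: cell[0], reverse=True)
    return cells[:top_k]


# A vertical edge line in column 10 of a 50 x 50 edge map.
edges = [[1 if c == 10 else 0 for c in range(50)] for _ in range(50)]
print(hough_lines(edges, top_k=1))  # -> [(50, 90, 10.0)]
```

In this convention a vertical image line (constant column c) appears at θ = 90° with ρ = c, which is what the example recovers; all 50 edge points vote for that single cell.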

Scenario selection

The input videos used in this study were recorded while driving on typical roads in North America. The most widely used lane boundary detection algorithm (i.e. the one introduced in the previous section) was applied offline to locate lanes in indoor tests. Although the algorithm recognized the lane boundaries in approximately 90% of frames, this detection rate is still not acceptable in practice. The authors carefully selected several representative scenarios in which the HT-based algorithm is expected to fail; these are presented in the next section. The scenarios of greatest concern include a variety of road types under various lighting and shadowing conditions. The major causes of detection failure are analyzed in detail for each scenario in the next section, and optimization directions are discussed in the section that follows. Admittedly, the cases presented may not cover all possible causes of detection failure. However, working on these scenarios can at least indicate an optimization direction, and it should also be of strong interest to researchers who wish to improve road perception accuracy with high generality.

Limitation analysis

Figure 1 in the previous section shows that the HT-based algorithm successfully detected the road dividing lines, suggesting that it is effective in daytime highway conditions. Hillel et al. 11 reported that such an algorithm could solve the lane detection problem in nearly 90% of highway frames. However, road lane detection techniques tend to be robust only for specific road types and conditions. The objective of this study is to test the performance of the HT-based algorithm in challenging scenarios. This section therefore applies the algorithm to complex situations involving different lane types, road surfaces, nighttime driving, and other environmental factors (shadows, rain, etc.). Note that assumption (b) proposed in the previous section was removed during the image processing in this section, to better expose the potential limitations of the lane boundary detection technique.

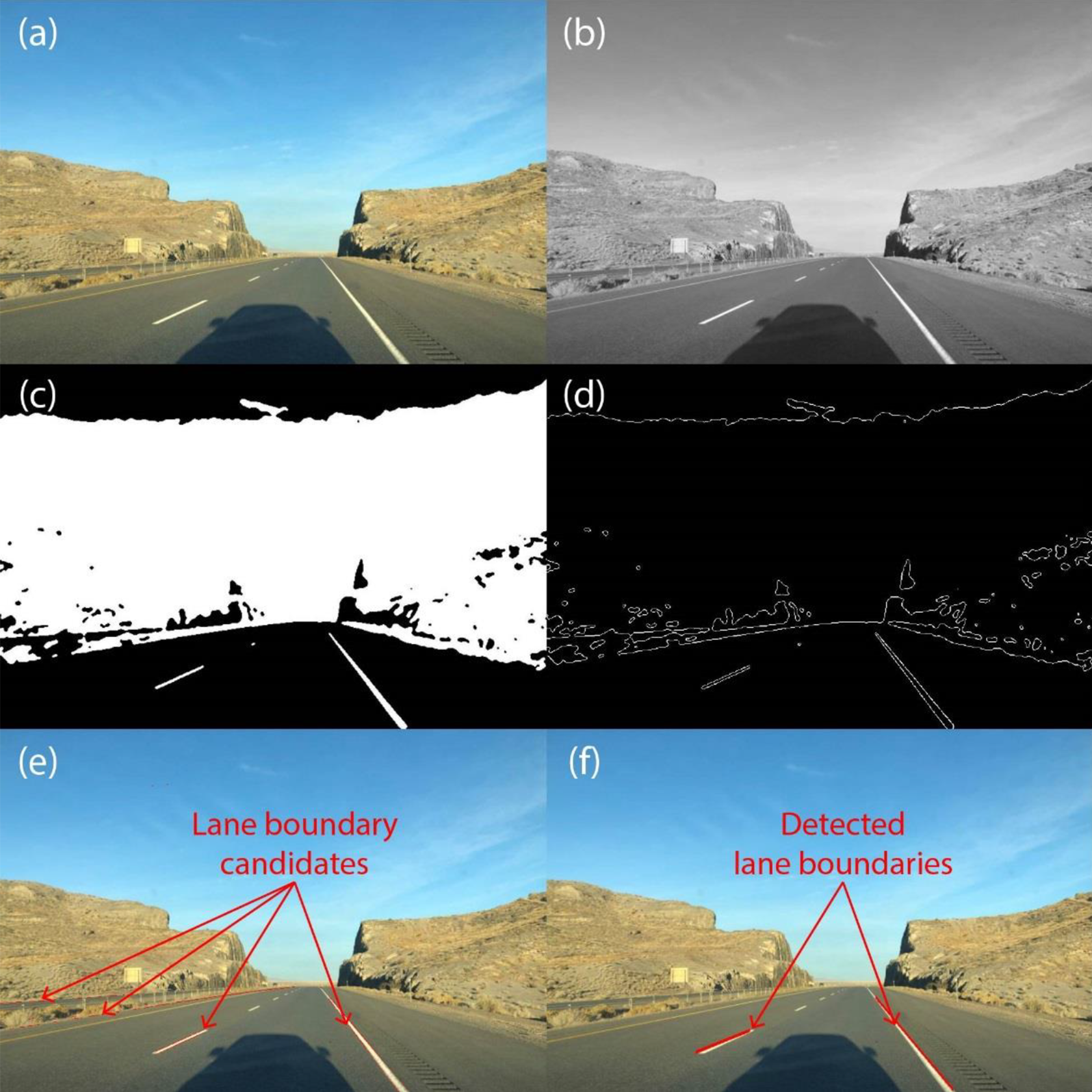

Figure 2 shows the lane detection performance of the HT-based algorithm at dusk on a highway. Despite the change in illumination, the road lanes can still be easily separated from the background without a gradient-enhancing method, as shown in Figure 2(b). The successful detection in Figure 2(c) suggests that the algorithm is robust against illumination variations in simple highway scenarios such as those in Figures 1 and 2. This is because the dividing lines contrast strongly with the background in these scenarios, which ensures that the road markings are correctly extracted from their surroundings during binarization.

Results of HT-based lane detection at dusk on highway: (a) raw image, (b) binary image, and (c) detected lane boundaries superimposed on the original color image. HT: Hough transform.

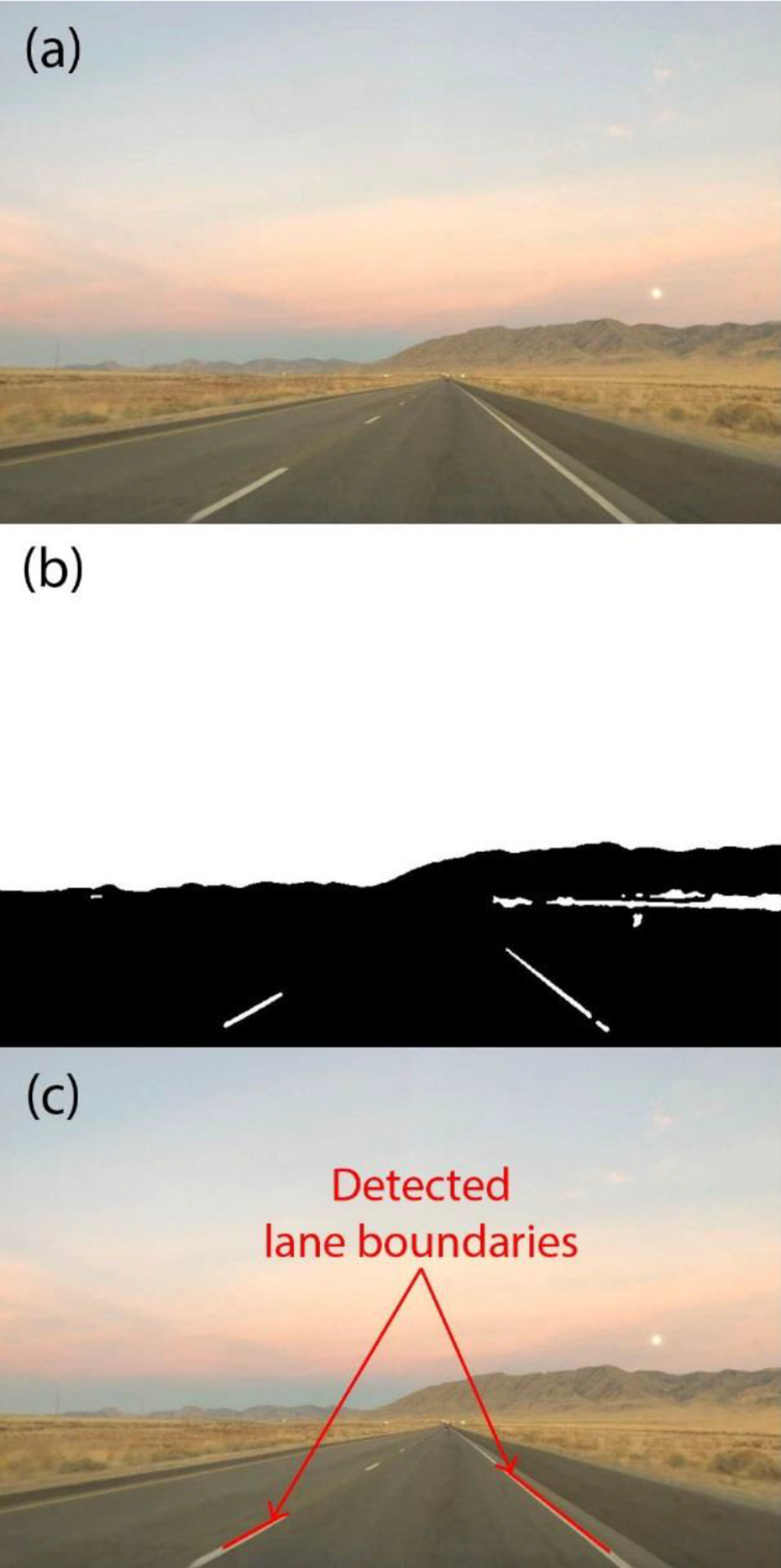

Figure 3 shows the lane detection performance of the HT-based algorithm at night on a highway. At night, the intensity in the area illuminated by the headlights is several orders of magnitude higher than that of the background. Although the lane markings contrast well with the road surface to the human eye, parts of the markings are overexposed in the image. As Figure 3(c) shows, the HT-based algorithm failed to detect the road markings. This failure is attributed to the binarization process (Figure 3(b)), which did not extract the lane features from the background. The authors believe that an inappropriate binarization threshold caused this failure. Similar issues may arise whenever illumination changes abruptly; for example, the algorithm may fail when the host vehicle enters or exits a tunnel, or drives under a bridge that casts a shadow on the road surface. 11

Results of HT-based lane detection at night on highway: (a) raw image, (b) binary image, and (c) detected lane boundaries superimposed on the original color image. HT: Hough transform.

The HT-based straight lane detection algorithm was also tested on a repaired road with asphalt patches. As shown in Figure 4(a), the road curves to the left and the left lane boundary is missing. Figure 4(c) shows that even though the road is patched and curved, the algorithm still correctly detects the right lane boundary. This supports the simplified line model, which assumes that road dividing lines in the near-front region are straight. Detection of the left lane boundary, however, failed (Figure 4(c)). A reasonable explanation is that the left lane boundary is covered by the asphalt patch. Although the contrast between the asphalt patch and the road surface is not low to the human eye (Figure 4(b)), the patch is closer to black than to white, which resulted in neither the left lane boundary nor the perimeter of the patch being detected.

Results of HT-based lane detection on the repaired road: (a) raw image, (b) binary image, and (c) detected lane boundaries superimposed on the original color image. HT: Hough transform.

A typical town road with various road markings, a pedestrian sidewalk, and guardrails makes HT-based lane detection more difficult. The scene in Figure 5 belongs to this category. Figure 5(c) shows that a different type of road line (a solid yellow line with a broken yellow line next to it) was successfully detected by the HT-based algorithm because of its strong color contrast against the road surface. However, Figure 5(c) also shows that the guardrails and the boundaries of the pedestrian sidewalk were incorrectly detected as lane boundaries. These errors could be avoided simply by assuming that lane boundaries must lie close to the host vehicle, but this assumption would prevent detection of all lanes other than the host lane (the lane in which the host vehicle is driving). In addition, misrecognizing a guardrail as a lane boundary increases the risk of lane-change maneuvers. This issue could probably be solved by real-time lane tracking, which uses the history of lane detections to reject interference in the current frame.

Results of HT-based lane detection on town road with guardrail and pedestrian sidewalk: (a) raw image, (b) binary image, and (c) detected lane boundaries superimposed on the original color image. HT: Hough transform.
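The lane-tracking idea mentioned above can be sketched as a simple gate-and-smooth filter on the (θ, ρ) parameters of one boundary. This is a hypothetical illustration, not a method evaluated in this study: the class name, the gate sizes, and the smoothing weight `alpha` are all assumptions, and a practical tracker would more likely use a Kalman filter and handle several lanes at once.

```python
class LaneTracker:
    """Hypothetical gate-and-smooth tracker for one lane boundary (theta, rho)."""

    def __init__(self, alpha=0.5, max_dtheta=5.0, max_drho=20.0):
        self.alpha = alpha            # weight given to the newest accepted detection
        self.max_dtheta = max_dtheta  # largest plausible angle change per frame (deg)
        self.max_drho = max_drho      # largest plausible rho change per frame (pixels)
        self.state = None             # current (theta, rho) estimate

    def update(self, candidates):
        """candidates: (theta_deg, rho) lines detected in the current frame."""
        if self.state is None:
            if candidates:
                self.state = candidates[0]  # initialise from the first detection
            return self.state
        theta0, rho0 = self.state
        # Gate: keep only candidates consistent with the detection history.
        gated = [(t, p) for t, p in candidates
                 if abs(t - theta0) <= self.max_dtheta
                 and abs(p - rho0) <= self.max_drho]
        if gated:
            # Closest gated candidate (mixing degrees and pixels: a crude metric).
            t, p = min(gated, key=lambda tp: abs(tp[0] - theta0) + abs(tp[1] - rho0))
            a = self.alpha
            self.state = (a * t + (1 - a) * theta0, a * p + (1 - a) * rho0)
        # If nothing passes the gate (e.g. only a guardrail), keep the old estimate.
        return self.state


tracker = LaneTracker()
print(tracker.update([(60.0, 100.0)]))                 # -> (60.0, 100.0)
print(tracker.update([(20.0, 300.0), (62.0, 104.0)]))  # -> (61.0, 102.0)
print(tracker.update([(20.0, 300.0)]))                 # -> (61.0, 102.0)
```

In the second and third updates, a guardrail-like candidate far from the tracked boundary is ignored, while the consistent candidate is blended into the estimate.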

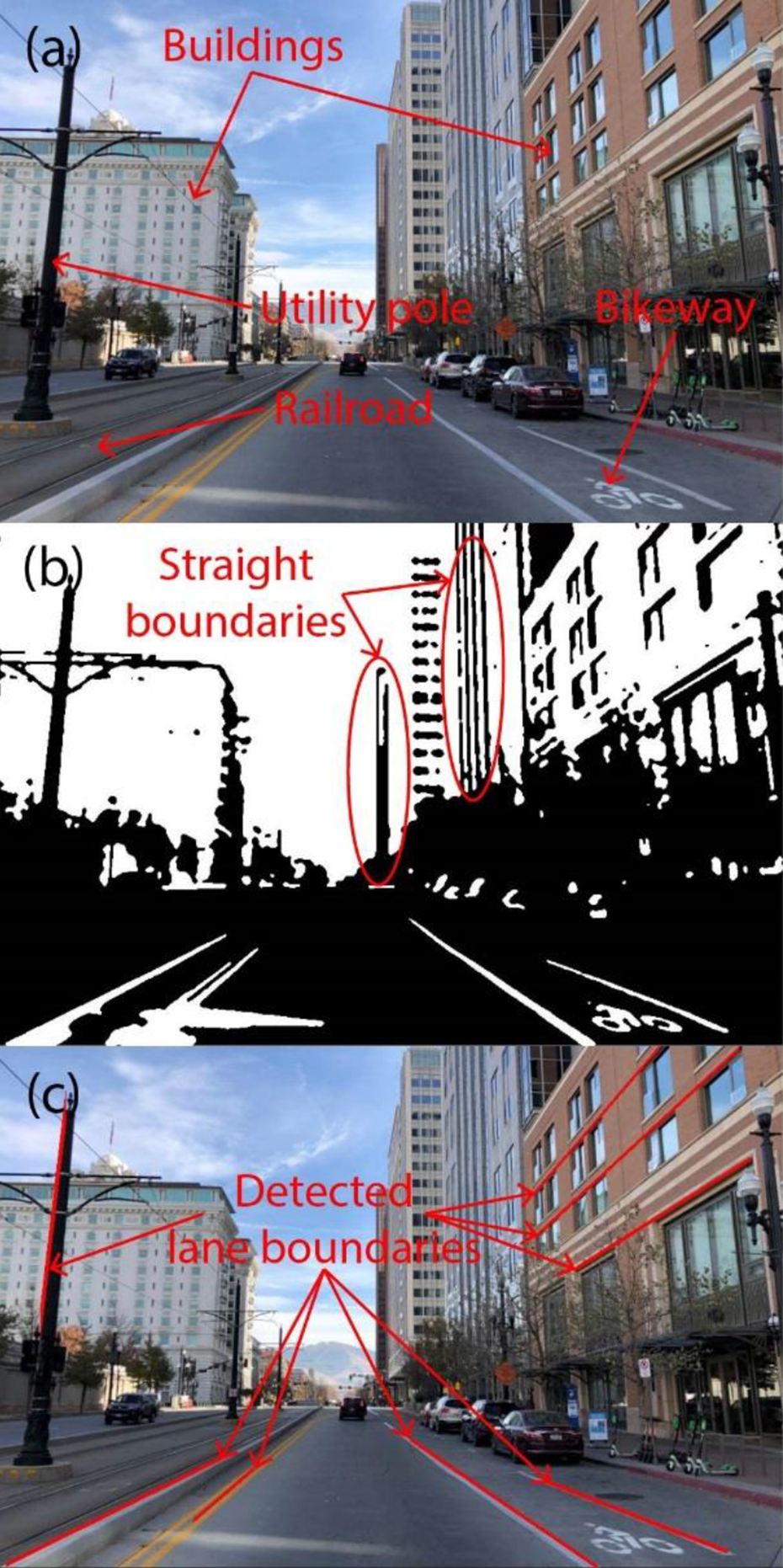

An urban street is an even more challenging scenario for HT-based lane detection. The typical urban street in Figure 6(a) has complex painted road surface markings, utility poles, and buildings, which may introduce misleading edges and textures into the HT-based algorithm. As shown in Figure 6(b), conventional preprocessing could not eliminate this interference. Although most of it was filtered out during the detection stage, some remained, as observed in Figure 6(c): the road edges, a utility pole, and window edges were erroneously identified as lane boundaries. One way to reduce the false-positive rate in such conditions is to use assumptions that eliminate misleading edges far from the host lane during preprocessing. Another is to use feature-based ML techniques, which is a potential area for future research.

Results of HT-based lane detection in urban street: (a) raw image, (b) binary image, and (c) detected lane boundaries superimposed on the original color image. HT: Hough transform.

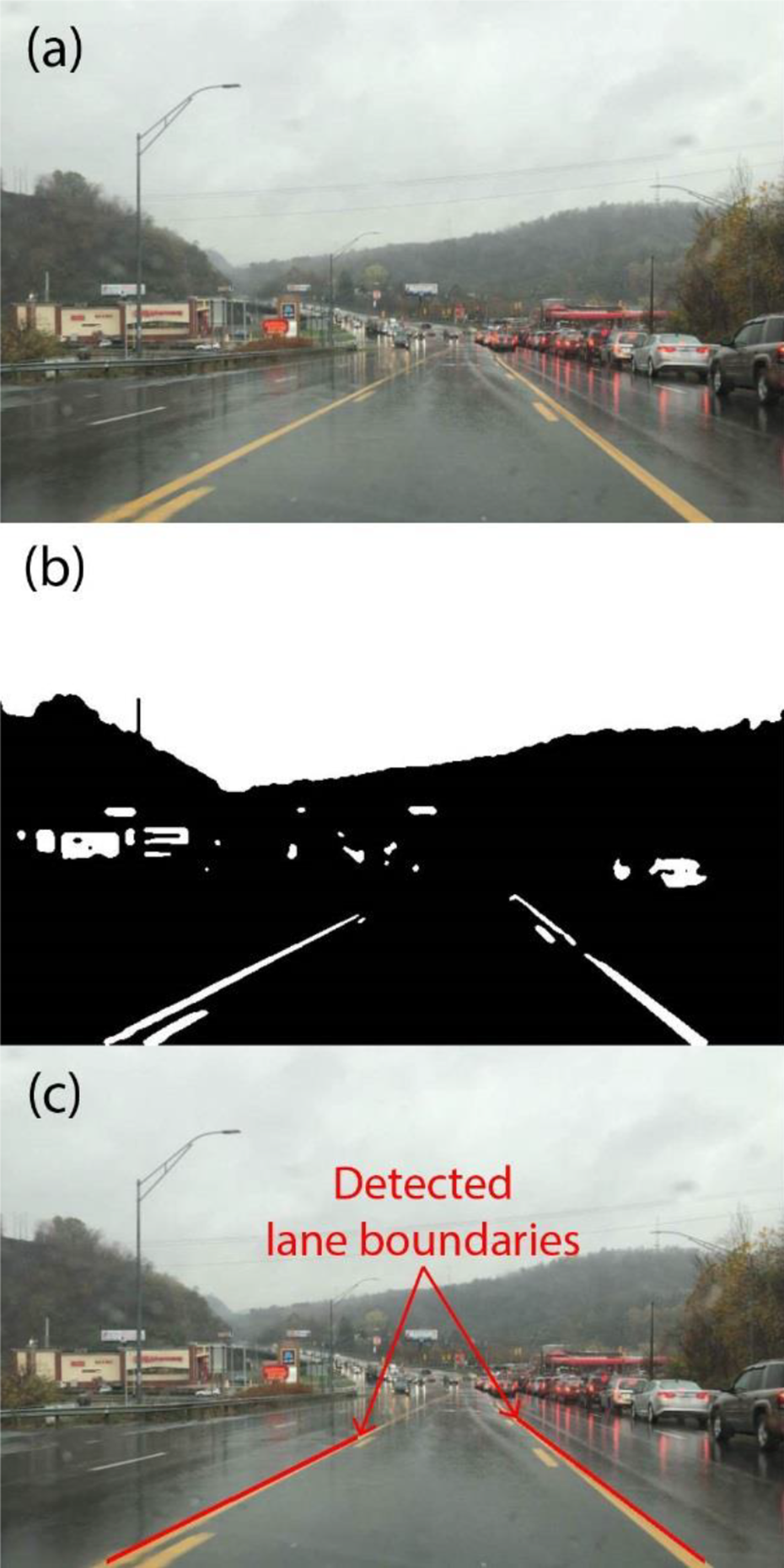

A good lane detection algorithm should also be robust against interference caused by bad weather. Figures 7 and 8 show the lane detection performance on rainy days. Because the rain did not greatly reduce the color contrast in the case in Figure 7(a), the lane boundaries were effectively extracted from the background during preprocessing (Figure 7(b)) and were detected precisely (Figure 7(c)). Although lane boundaries can be detected on rainy days in some scenarios, reflections from the wet road may cause glare and image overexposure, resulting in detection failure. In the example in Figure 8, the HT-based algorithm fails to detect the left lane boundary because of rain-induced overexposure. However, the region containing the right lane boundary retained acceptable contrast against the background, which allowed the algorithm to detect that boundary. This region was not affected by the reflection-induced overexposure because shadows from roadside trees reduced the light intensity. Comparing the two cases suggests that the binarization threshold is critical for effective detection, because it determines whether the lane dividing lines are extracted from the background. Because the threshold is optimized globally according to the illumination of the whole image, it may be inappropriate for individual regions of interest (ROIs), which makes lane boundaries difficult to detect in bad weather.

Results of HT-based lane detection under rainy condition: (a) raw image, (b) binary image, and (c) detected lane boundaries superimposed on the original color image. HT: Hough transform.

Results of HT-based lane detection on a rainy day with poor contrast: (a) raw image, (b) binary image, and (c) detected lane boundaries superimposed on the original color image. HT: Hough transform.

Note that all the cases discussed above (Figures 1 to 8) have at least one lane boundary captured by the camera. However, our results indicated that the vision-based algorithm cannot detect lane markings when they have completely faded or are fully covered by snow. In such circumstances, successful vision-based road understanding requires integrating information from other sources, such as the road boundaries. Moreover, as mentioned in the "Introduction" section, radar-based methods are also of great importance for autonomous navigation, 35 especially when vision-based techniques lose effectiveness.

Optimization directions

The previous section evaluated the performance of the HT-based lane detection algorithm in several of the most concerning scenarios. It can be concluded that this widely used algorithm can detect dividing lines in relatively simple scenarios, regardless of road marking type, weather, and time of day, as long as the lane boundaries contrast strongly with the road surface. This is because binarization plays a decisive role in extracting lane features from images: weak contrast can cause information loss during binarization and eventually lead to lane detection failure. In challenging scenes, the main difficulties arise from changing illumination and from lane-like interference caused by outliers. Existing studies have improved the HT-based algorithm in challenging environments by combining it with other methods. This section reviews these methods and discusses their practical shortcomings.

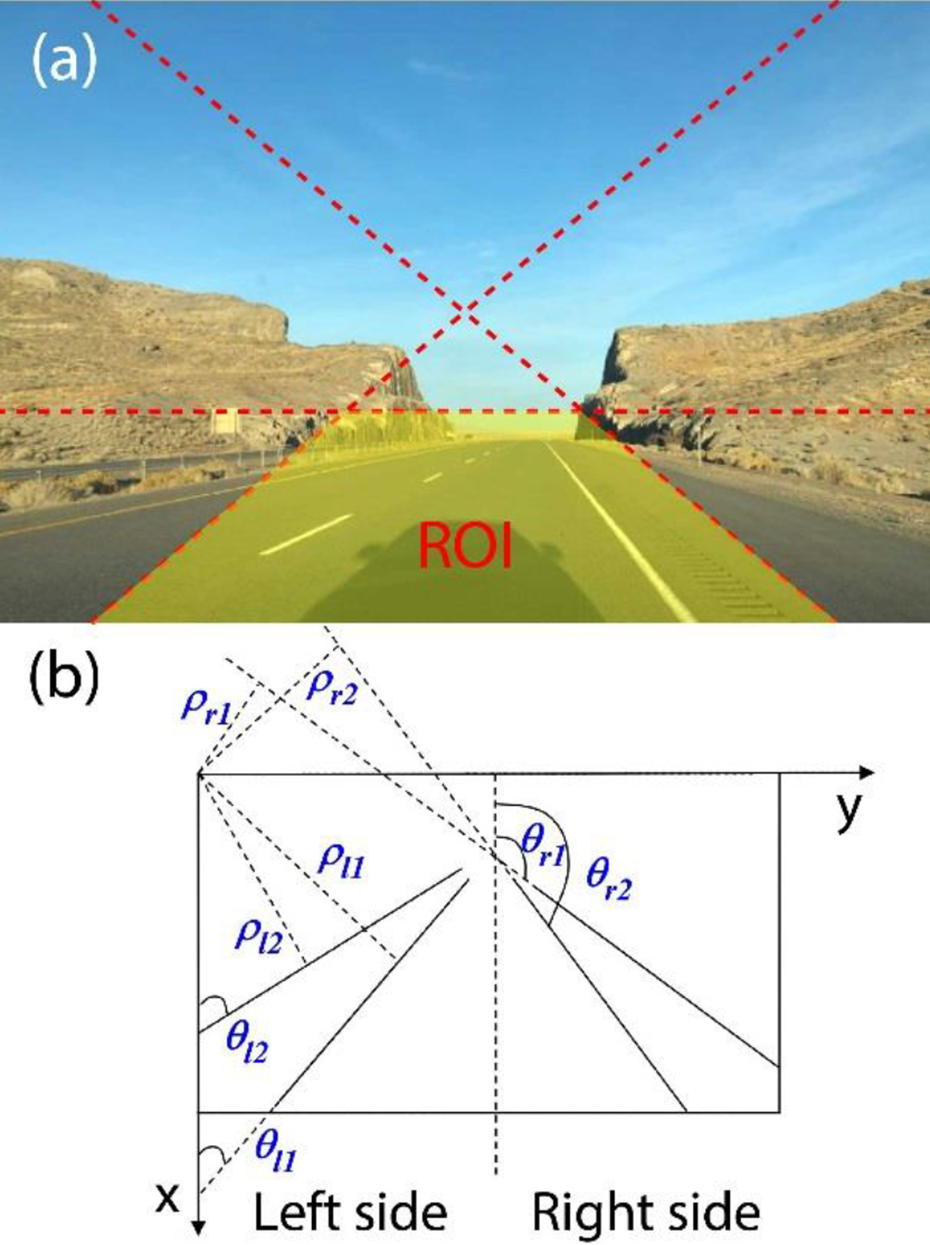

Complex environments introduce many types of lane-like interference, most of which cannot be filtered out during preprocessing. Because many misleading edges are passed to the detection step, both the false-positive rate and the computational burden of detection increase. Fan et al. 36 enhanced feature extraction with ML: lane features were extracted by an AdaBoost classifier trained on Haar-like rectangle features. This improved performance because most of the interference was eliminated, leaving relatively few edges for line detection in Hough space. However, the method requires a large number of features for supervised learning and still tends to fail on features not included in the training set. Interference can also be reduced by restricting processing to a selected subset of pixels, the region of interest (ROI), during preprocessing. Using an ROI can significantly increase the detection rate and reduce computational cost, but ROI sizes vary between studies because most researchers chose the ROI according to their own preference. For instance, Yi et al. 2 used one-third of each frame as the ROI, whereas Wang 37 and Xu 38 took the lower 3/5 and 7/12 of the image, respectively, as the region containing road lanes. Duan et al. 39 used a trapezoidal ROI, as shown in Figure 9(a). Because a fixed ROI may not suit all conditions, Wu et al. 40 and Marzougui et al. 41 employed adaptive ROIs. Analogously to restricting the r-c plane, some studies limited the polar angle range in Hough space.
Specifically, the polar angle search range can be defined as $(\theta_1, \theta_2)$, where the difference between $\theta_1$ and $\theta_2$ acts as a tolerance. 42 As shown in Figure 9(b), the left road boundary is assumed to lie within $(\theta_{l1}, \theta_{l2})$ and the right one within $(\theta_{r1}, \theta_{r2})$. Again, each study chose this range based on its own images: Zhou and Chen 43 assumed the left boundary to lie within [20°, 75°] and the right one within [105°, 165°], whereas Marzougui et al. 41 chose [15°, 70°] and [110°, 165°]. In the authors' view, the appropriate search range depends on the vehicle pose and the camera mounting location, which explains the variety of reported choices. Although image-splitting and angle-limiting schemes are effective to some extent, neither can be relied on to eliminate all interference. The next step of our research will combine the restricted-search-region approach with ML-assisted edge detection to test the resulting level of improvement.
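The two search restrictions discussed above can be sketched together: a trapezoidal ROI mask applied to the edge map, and a polar-angle filter applied to Hough peaks. The trapezoid parameters are hypothetical, and the default angle ranges simply reuse the numbers reported by Zhou and Chen for illustration; whether such ranges are meaningful depends on the angle convention and camera mounting, which this sketch does not reproduce.

```python
def apply_trapezoid_roi(edges, top_frac=0.4, top_width_frac=0.3):
    """Keep only edge pixels inside a centred trapezoidal ROI.

    The ROI spans rows from top_frac * height down to the last row; it is
    top_width_frac * width wide at its top row and the full width at the
    bottom row (hypothetical parameters, to be tuned per camera set-up).
    """
    h, w = len(edges), len(edges[0])
    top = top_frac * h
    centre = (w - 1) / 2
    out = [[0] * w for _ in range(h)]
    for r in range(h):
        if r < top:
            continue  # above the ROI: sky, buildings, distant clutter
        t = (r - top) / max(h - 1 - top, 1e-9)  # 0 at ROI top, 1 at bottom row
        half_width = 0.5 * w * (top_width_frac + (1 - top_width_frac) * t)
        for c in range(w):
            if edges[r][c] and abs(c - centre) <= half_width:
                out[r][c] = 1
    return out


def split_by_angle(lines, left=(20, 75), right=(105, 165)):
    """Keep Hough peaks (votes, theta_deg, rho) whose angle lies in the left
    or right search range; everything else is treated as interference."""
    left_lines = [ln for ln in lines if left[0] <= ln[1] <= left[1]]
    right_lines = [ln for ln in lines if right[0] <= ln[1] <= right[1]]
    return left_lines, right_lines


full = [[1] * 10 for _ in range(10)]
roi = apply_trapezoid_roi(full, top_frac=0.4, top_width_frac=0.4)
print(roi[4])  # -> [0, 0, 0, 1, 1, 1, 1, 0, 0, 0] (narrow top of the trapezoid)
print(split_by_angle([(10, 30, 5.0), (8, 150, -3.0), (6, 90, 10.0)]))
```

The second call drops the peak at 90°, which falls in neither range; as noted in the text, such fixed ranges cannot remove interference whose orientation happens to resemble a lane.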

Restricted search range: (a) region of interest and (b) polar angle range.

In addition to lane-like interference, illumination variations also make dividing line detection difficult. As discussed in the previous section, the algorithm failed to recognize lane features under artificial light at night (case 3) and on a wet road with strong reflections on a rainy day (case 8). These failures were attributed to the affected regions being lost during binarization in the preprocessing stage, so that they were never considered in the detection stage. Both Xu et al. 38 and Duan et al. 44 argued that a constant binarization threshold is appropriate only for a single lighting condition. Moreover, Aziz et al. 45 used different lane detection parameters for day and night and effectively detected all the lane markings investigated in their study. We tested this idea on case 3 (Figure 3) and case 8 (Figure 8); the results are shown in Figure 10. As Figure 10(a) shows, both the left and right lane boundaries were extracted from the background with a different threshold (a value larger than the one calculated by the Otsu method), despite large variations in lighting intensity across the image. This is because the lane boundaries already contrast strongly with their neighborhood, so detection succeeds once a more appropriate threshold is selected. However, as shown in Figure 10(b), a larger threshold only helps to extract the left lane boundary, while the right lane boundary is missed, which is the opposite of the result in Figure 8(b). These results suggest that the threshold should be modulated temporally and spatially according to variations in lighting intensity.
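One common way to modulate the threshold spatially is local-mean (adaptive) thresholding, sketched below. This is a swapped-in technique shown for illustration only, not the method used for Figure 10: each pixel is compared with the mean of its own k×k neighbourhood plus an `offset`, both hypothetical parameters, and an integral image keeps each window sum constant-time.

```python
def adaptive_binarize(gray, k=15, offset=10):
    """Local-mean thresholding: a pixel becomes 1 if it is brighter than
    the mean of its k x k neighbourhood by more than `offset` gray levels."""
    h, w = len(gray), len(gray[0])
    # Integral image S with a zero first row/column: S[r][c] = sum of gray[:r][:c].
    S = [[0] * (w + 1) for _ in range(h + 1)]
    for r in range(h):
        row_sum = 0
        for c in range(w):
            row_sum += gray[r][c]
            S[r + 1][c + 1] = S[r][c + 1] + row_sum
    half = k // 2
    out = [[0] * w for _ in range(h)]
    for r in range(h):
        r0, r1 = max(0, r - half), min(h, r + half + 1)
        for c in range(w):
            c0, c1 = max(0, c - half), min(w, c + half + 1)
            total = S[r1][c1] - S[r0][c1] - S[r1][c0] + S[r0][c0]
            local_mean = total / ((r1 - r0) * (c1 - c0))
            out[r][c] = 1 if gray[r][c] > local_mean + offset else 0
    return out


dark = [40] * 10
dark[4] = 90      # faint lane pixel on an unlit part of the road
bright = [200] * 10
bright[5] = 250   # lane pixel on a glare-washed part of the road
frame = [dark + bright for _ in range(5)]
mask = adaptive_binarize(frame, k=5, offset=10)
print(mask[2][4], mask[2][15])  # -> 1 1 (both lane pixels kept)
print(mask[2][2], mask[2][17])  # -> 0 0 (road pixels rejected)
```

In this toy frame no single global threshold works: keeping the lane pixel at 90 requires T ≤ 90, which also turns the glare-covered road at 200 white. A local threshold keeps both lane pixels, although a sharp illumination boundary can itself produce false responses with this approach.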

Because lane detection algorithms that adapt the threshold to different lighting conditions are highly needed, an ML-assisted approach is shown in Figure 11. After supervised training, ML algorithms (such as a Bayes classifier or a neural network) can recognize abnormal lighting conditions. Contrast enhancement (such as histogram equalization, linear contrast adjustment, or homomorphic filtering) can then be applied locally in the ROI to help with feature extraction. The results in Figure 10 suggest the potential of such an ML-based technique. Moreover, Zhang et al. 46 and Li et al. 47 showed that lane boundaries can be detected at night and even in dense fog with the help of image enhancement. Future work will test the effectiveness of the proposed algorithm by classifying image sequences into different lighting categories.
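The classify-then-enhance pipeline of Figure 11 can be sketched as follows. The trained classifier is replaced here by a hypothetical rule on mean brightness and the fraction of saturated pixels, purely as a stand-in, and histogram equalization is used as the enhancement step; all thresholds and function names are assumptions.

```python
def classify_lighting(gray, dark_mean=60, sat_level=250, sat_frac=0.10):
    """Rule-based stand-in for the trained lighting classifier (hypothetical
    thresholds). Returns "overexposed", "dark" or "normal"."""
    pixels = [v for row in gray for v in row]
    saturated = sum(1 for v in pixels if v >= sat_level) / len(pixels)
    if saturated > sat_frac:
        return "overexposed"  # e.g. headlight or wet-road glare
    if sum(pixels) / len(pixels) < dark_mean:
        return "dark"         # e.g. unlit road at night
    return "normal"


def equalize(values):
    """Histogram equalisation of a flat list of 0-255 gray levels."""
    hist = [0] * 256
    for v in values:
        hist[v] += 1
    cdf, running = [], 0
    for count in hist:
        running += count
        cdf.append(running)
    cdf_min = next(c for c in cdf if c > 0)
    n = len(values)
    if n == cdf_min:  # constant region: nothing to stretch
        return list(values)
    return [round((cdf[v] - cdf_min) * 255 / (n - cdf_min)) for v in values]


def enhance_roi(gray, roi_rows):
    """Equalise the ROI rows jointly, and only when lighting is abnormal."""
    if classify_lighting(gray) == "normal":
        return gray
    out = [list(row) for row in gray]
    flat = [v for r in roi_rows for v in gray[r]]
    equalized = iter(equalize(flat))
    for r in roi_rows:
        out[r] = [next(equalized) for _ in gray[r]]
    return out


night = [[10, 20], [30, 40]]
print(classify_lighting(night))              # -> dark
print(enhance_roi(night, roi_rows=[0, 1]))   # -> [[0, 85], [170, 255]]
```

Restricting the enhancement to the ROI mirrors the "locally in the ROI" step described above; in a real system the rule-based classifier would be replaced by the trained model.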

Schematic diagram of machine learning-assisted Hough transform-based lane boundary detection algorithm.

Some existing studies have also introduced other methods to assist the HT-based algorithm. For example, because white and yellow are the basic colors of lane markings, detection performance can be improved using color features. 48 Because lane boundary colors are easily extracted in the YCbCr color space, Li et al. 49 converted the raw image to YCbCr and applied the HT to the Y and Cb components separately. Similarly, structural characteristics of lanes, such as lane width 48 and lane shape, 50 can be used to extract lane boundaries. Zheng et al. 51 and Luo et al. 52 further proposed lane detection algorithms that assume road dividing lines satisfy multiple structural constraints (length, parallel, distribution, pair, and uniform width constraints). Beyond the structural features of the lane boundary itself, Gao et al. 53 also used the geometric relationship between the lane and the vehicle to help detect lane boundaries. Yuan et al. 54 combined the HT with the vanishing point to jointly identify lane boundaries. To improve robustness, Xing et al. 55 used a second lane detection approach (lane model fitting) as a "backup" to the primary HT-based algorithm, activated when detection confidence was low. In the authors' opinion, these combined techniques involve a trade-off between complexity and detection accuracy. However, increasing computational power is expected to allow such improved algorithms to be both robust and real time.
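A minimal sketch of the color-feature idea, assuming the full-range ITU-R BT.601 (JPEG/JFIF) RGB-to-YCbCr conversion: white markings are bright in Y and nearly neutral in Cb, while yellow markings have a low Cb because they contain little blue. The thresholds in `lane_color_masks` are hypothetical. Unlike Li et al., who applied the HT to the Y and Cb components directly, this sketch thresholds them into two masks that could each be passed to a Hough step.

```python
def rgb_to_ycbcr(pixel):
    """Full-range ITU-R BT.601 (JPEG/JFIF) conversion of one (R, G, B) pixel."""
    r, g, b = pixel
    y = 0.299 * r + 0.587 * g + 0.114 * b
    cb = 128 - 0.168736 * r - 0.331264 * g + 0.5 * b
    cr = 128 + 0.5 * r - 0.418688 * g - 0.081312 * b
    return y, cb, cr


def lane_color_masks(image, white_y=180, white_chroma=20, yellow_cb=100, yellow_y=100):
    """Hypothetical colour rules: white = bright and nearly achromatic,
    yellow = low Cb (little blue) and reasonably bright."""
    white, yellow = [], []
    for row in image:
        w_row, y_row = [], []
        for px in row:
            y, cb, _ = rgb_to_ycbcr(px)
            w_row.append(1 if y > white_y and abs(cb - 128) < white_chroma else 0)
            y_row.append(1 if cb < yellow_cb and y > yellow_y else 0)
        white.append(w_row)
        yellow.append(y_row)
    return white, yellow


# White marking, gray asphalt, yellow marking.
pixels = [[(255, 255, 255), (90, 90, 90), (230, 200, 40)]]
white, yellow = lane_color_masks(pixels)
print(white, yellow)  # -> [[1, 0, 0]] [[0, 0, 1]]
```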

Summary and conclusions

The HT-based lane detection technique is one of the most frequently used algorithms in ADAS. Because the existing literature lacks a deep analysis of its performance in practical scenarios, this article analyzed several of the most concerning situations (including changing lighting and weather conditions). Based on a detailed diagnosis of each failure, this article identified several key issues that must be solved to obtain a robust lane detection algorithm. In addition, an ML-assisted approach was proposed to overcome these bottlenecks. Overall, the results and discussion indicate an urgent need to improve road perception, which can help accelerate the development of autonomous vehicles.

Footnotes

Nomenclature

| Abbreviation | Meaning |
|---|---|
| 2D | Two-dimensional |
| 3D | Three-dimensional |
| ADAS | Advanced driver assistance systems |
| HT | Hough transform |
| ML | Machine learning |
| ROI | Region of interest |

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.